The Representation Economy

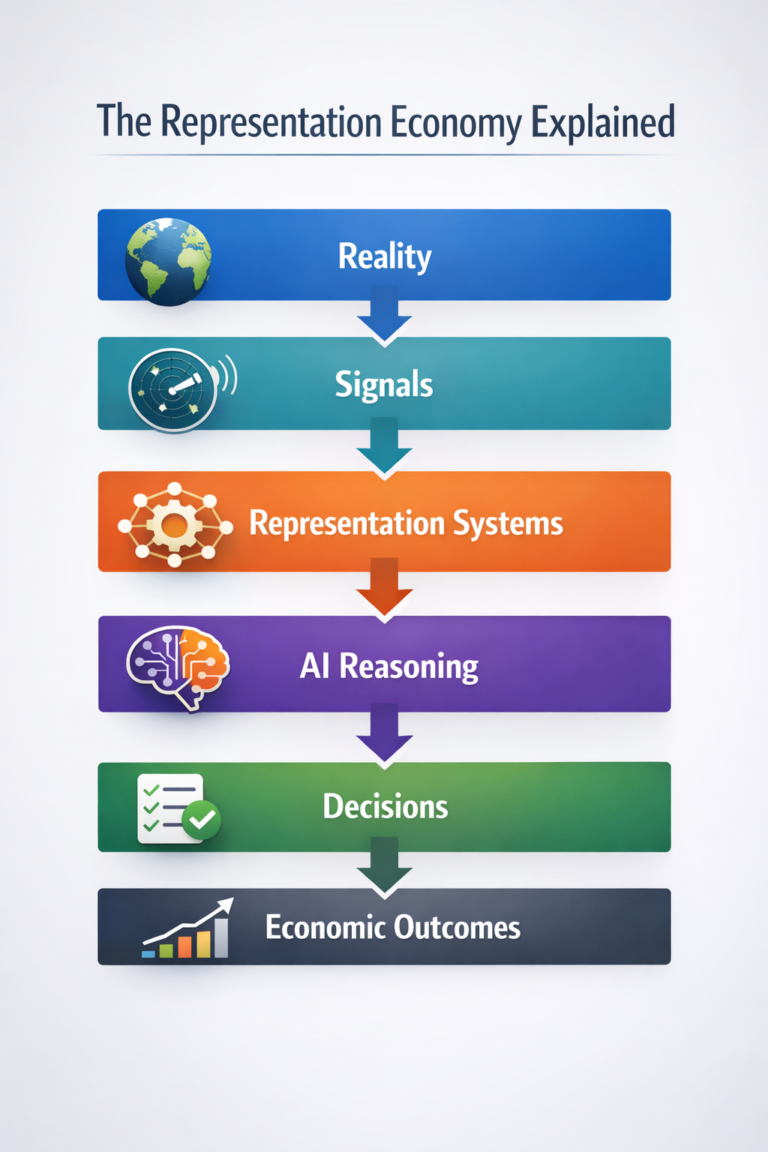

Artificial intelligence is often described as a technology shift, a software shift, or a productivity shift. But the deeper transformation is institutional.

Organizations are entering an era in which competitive advantage depends not only on intelligence itself, but on how well they sense reality, reason about it, and delegate action responsibly. That is why the SENSE–CORE–DRIVER architecture matters.

This framework explains how intelligent institutions operate in the AI era:

- SENSE defines how institutions observe reality.

- CORE defines how institutions interpret signals, build understanding, and coordinate reasoning.

- DRIVER defines what institutions allow machines to decide or execute, under what authority, and with what safeguards.

Above these layers sits a broader economic shift: the Representation Economy. In this emerging order, value increasingly flows to institutions that can represent reality more accurately, coordinate intelligence more effectively, and delegate authority more responsibly.

This guide answers 51 important questions about the Representation Economy and the SENSE–CORE–DRIVER architecture.

Article Summary

This article explains the Representation Economy, an emerging economic system where institutions compete through their ability to represent reality inside computational systems.

It introduces the SENSE–CORE–DRIVER architecture, a framework explaining how intelligent institutions:

- sense signals from the world

- reason about institutional knowledge

- delegate decisions responsibly to machines

The framework provides a structured model for understanding AI governance, institutional intelligence, and machine delegation in the modern enterprise.

Key Takeaways

- The Representation Economy describes an era in which value depends on how well institutions represent reality inside computational systems.

- The SENSE layer determines how institutions detect signals and observe the world.

- The CORE layer governs reasoning, interpretation, memory, and institutional cognition.

- The DRIVER layer determines what machines are allowed to decide or execute, under what authority, and with what recourse.

- Institutions that succeed in the AI era will build stronger systems to sense reality, reason about it, and delegate action responsibly.

Part I: The Representation Economy

- What is the Representation Economy?

The Representation Economy is an economic order in which value increasingly depends on how well institutions represent people, assets, events, risks, relationships, and changing reality inside computational systems. Institutions that build better representations can make better decisions, coordinate resources more effectively, and create stronger forms of advantage.

- Why is representation becoming central in the AI era?

AI systems do not act on reality directly. They act on representations of reality. If something cannot be represented properly inside institutional systems, it may not be recognized, reasoned about, priced correctly, governed well, or acted upon responsibly.

- How is the Representation Economy different from the data economy?

The data economy focuses on collecting, storing, and monetizing data. The Representation Economy goes further. It focuses on whether institutions can transform signals and data into meaningful, trustworthy, operationally useful models of reality.

- Is representation just another word for data modeling?

No. Data modeling is one technical part of representation. Representation is broader. It includes identity, meaning, relationships, context, constraints, exceptions, and the institutional significance of what a signal or entity actually stands for.

- Why do institutions compete through representation systems?

Because competition increasingly runs through perception and coordination, and both depend on representation. The organizations that identify changes earlier, understand them more accurately, and route action more intelligently can adapt faster and perform better.

- What kinds of things must institutions represent?

Institutions must represent customers, suppliers, employees, assets, workflows, obligations, permissions, risks, exceptions, policies, events, and dependencies. In advanced AI systems, they must also represent uncertainty, decision rights, recourse paths, and authority boundaries.

- What is a representation gap?

A representation gap is the distance between reality and what an institutional system can meaningfully capture, model, and use. Many failures in AI do not begin because a model is unintelligent. They begin because the system is blind to something important.

- Can AI create value without strong representation?

Only in narrow and relatively low-stakes settings. Once AI enters enterprise operations, public systems, regulated domains, or real-world workflows, weak representation becomes a major constraint on performance, trust, and safety.

- Why can representation failures create economic inequality?

Because some people, edge cases, communities, informal realities, and complex situations are easier to represent than others. Those who are poorly represented may be poorly served, poorly assessed, poorly priced, or excluded entirely.

- What is the core principle of the Representation Economy?

Institutions can only optimize, govern, and coordinate what they can represent.

Part II: SENSE

- What is the SENSE layer?

The SENSE layer is how institutions observe reality. It includes the systems, processes, sensors, monitoring tools, feedback loops, reporting structures, and signal pathways through which organizations detect what is happening in the world.

- Why is SENSE the first requirement of intelligent institutions?

Because reasoning depends on perception. If institutions cannot see reality clearly, even advanced AI systems will operate on incomplete, delayed, or distorted information.

- What is signal infrastructure?

Signal infrastructure is the collection of systems and mechanisms through which institutions detect relevant changes in their environment. It may include telemetry, sensors, logs, market feeds, compliance triggers, operational dashboards, and frontline reporting processes.

- How do institutions gather signals from the world?

Signals may come from users, customers, internal systems, markets, regulators, supply chains, devices, digital platforms, employees, and external events. A mature institution does not merely collect signals; it organizes them into a coherent view of what is happening and where attention is needed.

- Why do organizations often fail at sensing?

Because they confuse data abundance with perceptual quality. They may have enormous amounts of data while still missing weak signals, exceptions, causal indicators, or operational realities that matter most.

- What is the difference between sensing and data collection?

Data collection records events. Sensing is the disciplined process of identifying, validating, prioritizing, and contextualizing signals that matter for understanding and action.
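To make the distinction concrete, here is a minimal Python sketch, assuming a hypothetical event feed. The `Event` and `Signal` types, the `RELEVANCE` weights, and the `sense` function are illustrative names, not part of any particular product. Collection keeps every event; sensing identifies relevant kinds, validates them, prioritizes them against a threshold, and attaches context.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Event:
    """A raw recorded event, as plain data collection would store it."""
    source: str
    kind: str
    entity_id: str
    value: Optional[float]


@dataclass
class Signal:
    """An event that survived sensing: relevant, valid, ranked, and in context."""
    event: Event
    priority: float
    context: Dict[str, str] = field(default_factory=dict)


# Hypothetical relevance weights; a real institution would define its own.
RELEVANCE = {"payment_failed": 0.9, "login_anomaly": 0.7, "page_view": 0.1}


def sense(events: List[Event],
          entity_context: Dict[str, Dict[str, str]],
          min_priority: float = 0.5) -> List[Signal]:
    signals = []
    for event in events:
        # Identify: skip event kinds the institution has not declared relevant.
        if event.kind not in RELEVANCE:
            continue
        # Validate: skip events with no value or no known entity behind them.
        if event.value is None or event.entity_id not in entity_context:
            continue
        # Prioritize: keep only signals above the attention threshold.
        priority = RELEVANCE[event.kind]
        if priority < min_priority:
            continue
        # Contextualize: attach what the institution already knows about the entity.
        signals.append(Signal(event, priority, entity_context[event.entity_id]))
    # Most important signals first.
    return sorted(signals, key=lambda s: s.priority, reverse=True)
```

Given a feed containing a failed payment, a login anomaly, and a page view for known customers, `sense` would return only the first two, ranked by priority, each carrying the context that later reasoning needs.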

- What is visibility governance?

Visibility governance is the discipline of deciding what an institution should be allowed to see, infer, combine, store, or act upon. It recognizes that visibility is not just a technical capability; it is also a governance question.

- Why does visibility governance matter?

Because more visibility is not automatically better. Institutions need rules for what may be observed, what should remain bounded, and how sensing power should be constrained to remain legitimate and defensible.
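One way to make visibility governance explicit is to encode it as a deny-by-default policy. The sketch below is a minimal, hypothetical Python example: the data categories, the `POLICY` table, and the `FORBIDDEN_PAIRS` rule are assumptions chosen for illustration. It checks whether an operation (observe, infer, combine, store, act) is permitted and treats joining certain categories as off-limits even when each category is individually visible.

```python
from enum import Enum
from itertools import combinations
from typing import Iterable


class Op(Enum):
    OBSERVE = "observe"
    INFER = "infer"
    COMBINE = "combine"
    STORE = "store"
    ACT = "act"


# Hypothetical policy: the operations each data category explicitly permits.
POLICY = {
    "transaction_history": {Op.OBSERVE, Op.INFER, Op.COMBINE, Op.STORE, Op.ACT},
    "support_ticket": {Op.OBSERVE, Op.INFER, Op.COMBINE, Op.STORE},
    "location_trace": {Op.OBSERVE, Op.COMBINE},
    "health_indicator": set(),
}

# Hypothetical rule: category pairs that must never be joined,
# even if each category allows combination on its own.
FORBIDDEN_PAIRS = {frozenset({"location_trace", "transaction_history"})}


def is_permitted(op: Op, categories: Iterable[str]) -> bool:
    cats = set(categories)
    if not cats:
        return False
    # Deny by default: every category must explicitly allow the operation.
    if not all(op in POLICY.get(c, set()) for c in cats):
        return False
    if len(cats) > 1:
        # Using several categories together counts as combining them.
        if not all(Op.COMBINE in POLICY.get(c, set()) for c in cats):
            return False
        # Reject any explicitly forbidden pairing.
        if any(frozenset(pair) in FORBIDDEN_PAIRS
               for pair in combinations(sorted(cats), 2)):
            return False
    return True
```

Under this illustrative policy, inferring from transaction history is permitted, inferring from a location trace is not, and combining location traces with transaction history is refused. Deny-by-default means a new, unclassified data category stays out of view until someone decides otherwise.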

- What happens when institutions sense the wrong signals?

They optimize for the wrong objectives, misread change, overlook emerging risks, and create false confidence. Many strategic failures begin as perception failures.

- How does AI expand institutional sensing?

AI can process vast volumes of signals, identify subtle patterns, surface weak indicators, and detect anomalies at speeds that would be impossible through manual observation alone.

- What is a representation gap in the SENSE layer?

It is the failure to capture or encode important aspects of reality at the observation stage. If the institution cannot sense a condition, later reasoning systems may never account for it.

- Why will institutions that see better outperform others?

Because they detect opportunities and threats sooner, reduce lag between reality and response, and improve the quality of all downstream reasoning and action.

- Does SENSE include human perception as well as machine perception?

Yes. Human observation, frontline awareness, qualitative judgment, and lived operational experience remain essential parts of institutional sensing. Some of the most valuable signals still originate from people.

- What is a SENSE failure?

A SENSE failure occurs when an institution cannot perceive what matters, cannot distinguish signal from noise, or cannot represent observed reality in a form that later layers can use effectively.

Part III: CORE

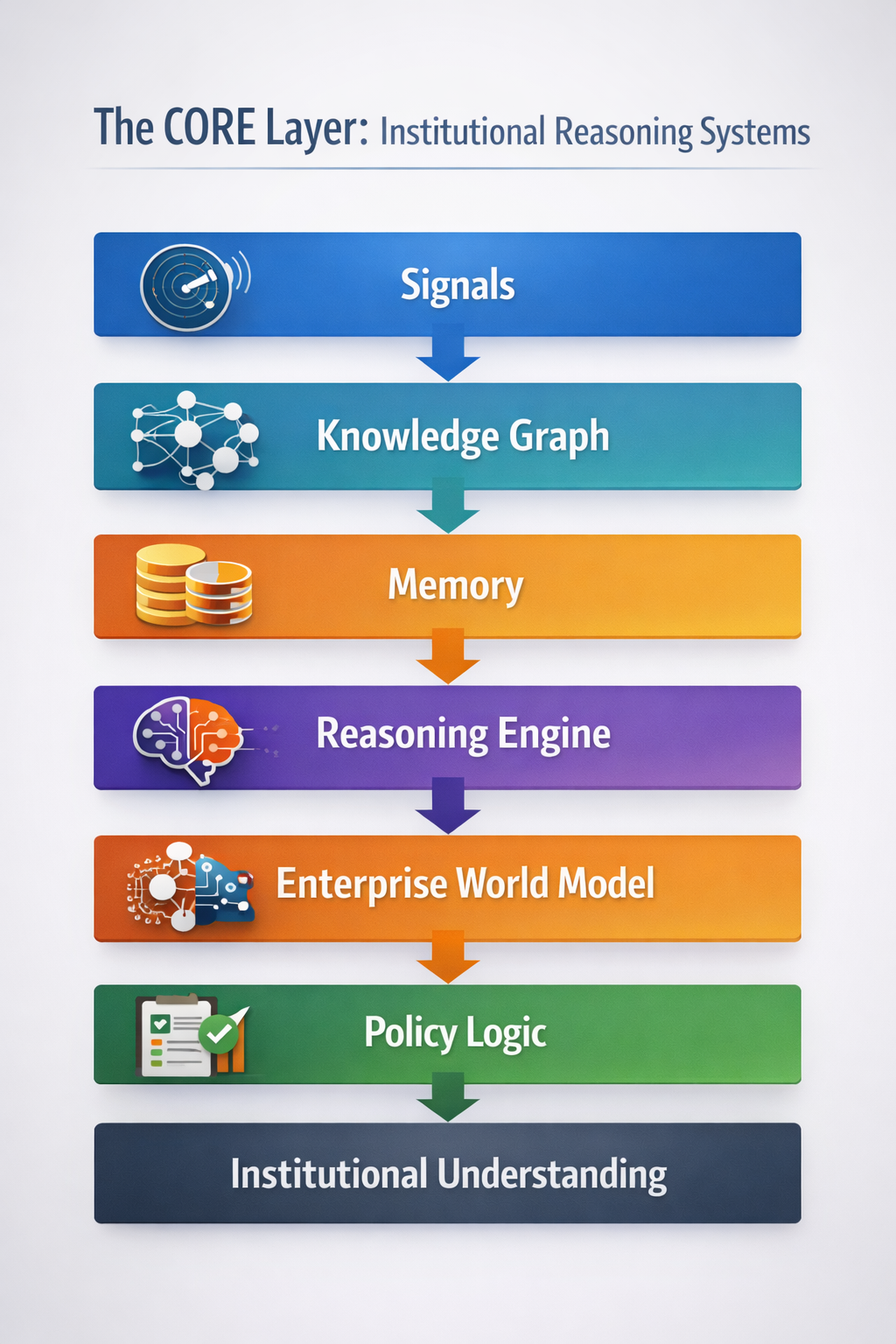

- What is the CORE layer?

The CORE layer is the reasoning system of the institution. It interprets signals, builds understanding, coordinates meaning, updates beliefs, compares possibilities, and supports decisions.

- What does institutional cognition mean?

Institutional cognition refers to how organizations form understanding, interpret signals, coordinate knowledge, and choose among alternatives. In the AI era, this increasingly becomes a hybrid process involving both human and machine reasoning.

- Why is CORE more than analytics?

Analytics often describes patterns or trends. CORE goes further. It includes memory, causal interpretation, reasoning, constraint handling, trade-off evaluation, contextual understanding, and coordinated judgment.

- What systems belong in the CORE layer?

Knowledge graphs, reasoning engines, policy logic, retrieval systems, memory architectures, orchestration layers, enterprise world models, simulation systems, and human-machine coordination mechanisms all belong in CORE.

- What is an enterprise world model?

An enterprise world model is a structured computational representation of how an organization works. It models entities, workflows, dependencies, constraints, permissions, relationships, and operational states so that systems can reason about organizational reality.

- Why are enterprise world models important?

Because AI in enterprises must understand more than language or raw data. It must understand process context, decision boundaries, business rules, exceptions, and the institutional meaning of events.
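As a rough illustration, an enterprise world model can start as a typed dependency graph with permissions attached. The Python sketch below is a minimal, hypothetical example (the `WorldModel` class and its methods are invented for illustration, not a standard API). It records entities and their types, what each entity depends on, and which roles may take which actions on which entity types, so a system can ask what a disruption affects and whether an action is permitted.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class WorldModel:
    """Hypothetical minimal world model: typed entities, dependencies, permissions."""
    entity_types: Dict[str, str] = field(default_factory=dict)     # entity id -> type
    depends_on: Dict[str, Set[str]] = field(default_factory=dict)  # entity id -> ids it needs
    permissions: Dict[Tuple[str, str], Set[str]] = field(default_factory=dict)  # (role, action) -> types

    def add_entity(self, entity_id: str, entity_type: str) -> None:
        self.entity_types[entity_id] = entity_type
        self.depends_on.setdefault(entity_id, set())

    def add_dependency(self, entity_id: str, needs: str) -> None:
        self.depends_on.setdefault(entity_id, set()).add(needs)

    def allow(self, role: str, action: str, entity_type: str) -> None:
        self.permissions.setdefault((role, action), set()).add(entity_type)

    def impacted_by(self, entity_id: str) -> Set[str]:
        """Everything that directly or transitively depends on entity_id."""
        # Invert the edges so we can walk from a dependency to its dependents.
        dependents: Dict[str, Set[str]] = {}
        for node, needs in self.depends_on.items():
            for n in needs:
                dependents.setdefault(n, set()).add(node)
        # Breadth-first search over dependents.
        seen: Set[str] = set()
        queue = deque([entity_id])
        while queue:
            current = queue.popleft()
            for nxt in dependents.get(current, set()):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def may(self, role: str, action: str, entity_id: str) -> bool:
        """Is this role permitted to take this action on this entity?"""
        entity_type = self.entity_types.get(entity_id)
        return entity_type in self.permissions.get((role, action), set())
```

For example, if an order-fulfilment workflow depends on a warehouse and invoicing depends on order fulfilment, `impacted_by` on the warehouse returns both workflows. A system that saw only language or raw events would have no structured way to answer that question.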

- What is shared reasoning?

Shared reasoning is the ability of multiple systems, agents, teams, or decision processes to operate from a sufficiently aligned understanding of goals, constraints, context, and logic.

- Why does coordination matter in the CORE layer?

Because institutions do not fail only from bad decisions. They also fail from uncoordinated good decisions. Local intelligence without system-level alignment can still produce organizational breakdown.

- Why is memory essential to institutional intelligence?

Memory provides continuity. Without it, institutions repeat mistakes, lose context, fragment knowledge, and fail to accumulate intelligence over time.

- How can AI misreason even when the data is correct?

It may apply the wrong abstraction, optimize the wrong objective, confuse correlation with causality, ignore hidden constraints, miss institutional context, or fail to update reasoning when conditions change.

- What is a CORE failure?

A CORE failure occurs when an institution sees enough but cannot interpret correctly, coordinate effectively, or reason within the right constraints. It may produce outputs that look intelligent but remain misaligned with reality.

- Why is institutional reasoning becoming a competitive advantage?

Because access to powerful models is becoming more common. The differentiator is shifting toward how institutions organize reasoning, memory, decision support, and coordinated understanding.

- Can human judgment still matter in the CORE layer?

Absolutely. In many real-world settings, hybrid reasoning systems that combine human judgment with machine inference are more resilient, contextual, and trustworthy than purely automated approaches.

- What is the biggest misconception about the CORE layer?

The biggest misconception is that a more powerful model automatically produces better institutional reasoning. In practice, reasoning quality depends on architecture, memory, coordination, constraints, and context design.

Part IV: DRIVER

- What is the DRIVER layer?

The DRIVER layer is the action and delegation layer. It determines what machines are allowed to recommend, initiate, approve, deny, optimize, or execute, under what authority, and with what safeguards.

- Why is DRIVER the hardest layer?

Because it is where intelligence meets authority. Producing an answer is easier than deciding whether a machine should be allowed to act on that answer in the real world.

- What is delegation infrastructure?

Delegation infrastructure is the institutional and technical system through which authority is distributed between humans and machines. It defines decision rights, escalation paths, action thresholds, review mechanisms, reversibility, and accountability boundaries.
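A minimal sketch of delegation infrastructure in Python follows. Everything in it is hypothetical: the `DecisionRight` record, the `RIGHTS` table, the thresholds, and the owner roles are assumptions for illustration. It shows how decision rights, action thresholds, reversibility, and an accountable owner can jointly decide whether a machine may execute, must escalate, or may only advise.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional, Tuple


class Mode(Enum):
    ADVISE = "advise"      # machine may only recommend; a human decides
    EXECUTE = "execute"    # machine may act within its delegated authority
    ESCALATE = "escalate"  # route to the accountable owner for review


@dataclass(frozen=True)
class DecisionRight:
    """Hypothetical record of authority delegated to a machine for one action type."""
    owner: str             # accountable human role
    max_amount: float      # threshold above which the machine may not act alone
    min_confidence: float  # minimum model confidence for autonomous execution
    reversible: bool       # can the action be undone if it proves wrong?


# Illustrative decision rights; real values would come from governance, not code.
RIGHTS: Dict[str, DecisionRight] = {
    "refund": DecisionRight("payments_lead", 200.0, 0.90, True),
    "close_account": DecisionRight("risk_officer", 0.0, 1.00, False),
}


def route(action: str, amount: float, confidence: float) -> Tuple[Mode, Optional[str]]:
    right = RIGHTS.get(action)
    if right is None:
        # No delegated authority exists: the machine stays advisory.
        return Mode.ADVISE, None
    if not right.reversible:
        # Irreversible actions always go to a human.
        return Mode.ESCALATE, right.owner
    if amount <= right.max_amount and confidence >= right.min_confidence:
        return Mode.EXECUTE, right.owner
    # Outside the threshold: escalate along the defined path.
    return Mode.ESCALATE, right.owner
```

Here a small, high-confidence refund is executed autonomously, a large or low-confidence one is escalated to its accountable owner, an irreversible account closure always goes to a human, and an action with no delegated right stays on the advisory side of the action boundary.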

- Why is delegation a governance issue rather than just a technical feature?

Because delegating action changes institutional responsibility. Once machines influence or execute consequential outcomes, questions of authority, legitimacy, accountability, and recourse become unavoidable.

- What is delegated authority?

Delegated authority is the permission granted to a machine or AI-enabled process to make, influence, or execute decisions within defined parameters on behalf of an institution.

- What is machine legitimacy?

Machine legitimacy is the degree to which a machine-mediated decision is seen as authorized, appropriate, explainable, and institutionally acceptable. A decision can be statistically correct and still lack legitimacy.

- Why is correctness not enough in the DRIVER layer?

Because institutions must answer more than “Was the output accurate?” They must also answer “Was the machine allowed to decide?”, “Who authorized this?”, “Under what conditions?”, and “What happens if it goes wrong?”

- What is recourse?

Recourse is the ability to contest, review, reverse, appeal, or remediate an AI-influenced decision. It is one of the foundations of trust in machine-mediated systems.

- Why must AI decisions be reversible where possible?

Because action without reversibility increases institutional risk. Reversibility helps contain damage, recover from error, and prevent isolated failures from becoming systemic failures.
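Recourse and reversibility both depend on the institution knowing what a machine did, under whose authority, and how to undo it. The following is a minimal, hypothetical Python sketch of such an action ledger (the `ActionLedger` class and its status values are illustrative assumptions). Each action is recorded with its authorization and an optional inverse, and contesting an action either reverses it or, when no inverse exists, flags it for remediation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class ActionRecord:
    action_id: str
    description: str
    authorized_by: str                  # who or what granted the authority to act
    undo: Optional[Callable[[], None]]  # None means the action cannot be reversed
    status: str = "applied"
    contest_reason: str = ""


class ActionLedger:
    """Hypothetical ledger: every machine action is recorded with its authority and inverse."""

    def __init__(self) -> None:
        self._records: Dict[str, ActionRecord] = {}

    def record(self, action_id: str, description: str, authorized_by: str,
               undo: Optional[Callable[[], None]] = None) -> None:
        self._records[action_id] = ActionRecord(action_id, description, authorized_by, undo)

    def contest(self, action_id: str, reason: str) -> str:
        """Review a contested action: reverse it if possible, otherwise flag for remediation."""
        rec = self._records[action_id]
        if rec.status != "applied":
            return rec.status  # already handled; contesting again changes nothing
        rec.contest_reason = reason
        if rec.undo is None:
            rec.status = "needs_remediation"
        else:
            rec.undo()
            rec.status = "reversed"
        return rec.status

    def status(self, action_id: str) -> str:
        return self._records[action_id].status
```

Recording the inverse at the moment of action, rather than working it out after a complaint arrives, is the design choice that makes reversal routine instead of exceptional.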

- What is a DRIVER failure?

A DRIVER failure occurs when machines act without proper authority, sufficient oversight, bounded delegation, or meaningful recourse. This is often where AI risk becomes operational rather than theoretical.

- What is the action boundary?

The action boundary is the line between systems that advise and systems that act. Risk changes significantly when AI crosses from recommendation into execution.

- Why do organizations delegate too early?

Because automation promises speed, efficiency, and scale. Organizations often mistake output quality for institutional readiness and move into action before legitimacy and governance are mature enough.

- What capabilities must institutions build to succeed in the Representation Economy?

They must build strong SENSE systems, coherent CORE reasoning, and responsible DRIVER delegation. In the AI era, institutional strength will increasingly depend on the ability to sense reality, reason about it, and delegate action responsibly.

Why This Framework Matters

The SENSE–CORE–DRIVER architecture provides a practical way to understand why so many AI systems succeed in demos but fail inside institutions.

Some fail because they cannot sense enough reality.

Some fail because they cannot reason well enough about what they sense.

Some fail because they are allowed to act before legitimacy, authority, and recourse are properly designed.

The Representation Economy sits above all three. It is the broader shift in which institutional value increasingly depends on what can be represented, interpreted, and delegated through intelligent systems.

The future will not belong simply to organizations with more AI.

It will belong to organizations that can see better, think better, and delegate better.

Concept Map: How the Framework Fits Together

Representation Economy
▼
SENSE → CORE → DRIVER
▼
Institutional Intelligence
▼
Responsible Delegation
▼
Trusted AI Systems

SENSE determines what institutions can observe.

CORE determines how institutions interpret what they observe.

DRIVER determines what institutions allow machines to do.

Together, these layers form the operating architecture of intelligent institutions in the Representation Economy.

The Representation Economy describes a world where institutions compete through their ability to represent reality inside computational systems. The SENSE–CORE–DRIVER architecture explains how intelligent institutions sense signals, reason about them, and delegate decisions responsibly in the AI era.

Frequently Asked Questions (FAQ)

What is the Representation Economy?

The Representation Economy is an economic system in which value increasingly depends on how well institutions represent reality inside computational and decision systems.

What is the SENSE layer?

The SENSE layer is the perception layer of the institution. It captures signals from the world through monitoring systems, sensors, reporting processes, and other visibility mechanisms.

What is the CORE layer?

The CORE layer is the reasoning layer. It interprets signals, builds understanding, coordinates knowledge, and supports institutional cognition.

What is the DRIVER layer?

The DRIVER layer is the action and delegation layer. It determines what machines are allowed to decide or execute, under what authority, and with what safeguards.

Why does delegation infrastructure matter?

Delegation infrastructure matters because the most serious AI risks arise not when a system generates outputs, but when it is allowed to act without clear authority, legitimacy, accountability, and reversibility.

What is the difference between data and representation?

Data is a raw or structured input. Representation is the meaningful institutional model of what that data stands for, how it relates to other things, and how it should be interpreted.

Why do AI systems fail even when the model is good?

They often fail because the institution has weak sensing, fragmented reasoning, poor coordination, unsafe delegation, or no meaningful recourse. The model is only one part of the system.

Why is recourse important in AI systems?

Recourse allows people and institutions to contest or reverse AI-influenced decisions. It is critical for trust, accountability, and legitimacy.

What is machine legitimacy?

Machine legitimacy is the degree to which machine-driven decisions are seen as authorized, appropriate, explainable, and institutionally acceptable.

Why is this framework useful for enterprise AI?

It helps leaders diagnose where AI efforts actually break down: perception, reasoning, or delegation. That makes it practical for governance, strategy, and architecture.

Glossary

Representation Economy

An economic order in which value increasingly depends on how well institutions represent reality inside computational systems.

SENSE

The layer through which institutions observe reality, gather signals, and make events visible.

CORE

The layer where institutions interpret signals, coordinate knowledge, apply reasoning, and form operational understanding.

DRIVER

The layer that determines what actions may be delegated to machines and under what governance conditions.

Signal Infrastructure

The systems and processes through which institutions detect relevant changes, events, or conditions.

Representation Gap

The difference between reality and what institutional systems can meaningfully capture and use.

Visibility Governance

The rules and norms governing what institutions are allowed to observe, infer, connect, and act upon.

Enterprise World Model

A structured representation of how an organization functions, including workflows, entities, dependencies, and constraints.

Delegation Infrastructure

The systems, rules, and safeguards through which authority is shared between humans and machines.

Machine Legitimacy

The institutional acceptability and authorization of machine-mediated decisions.

Recourse

The ability to review, contest, reverse, or remedy an AI-influenced decision.

Action Boundary

The threshold at which a system moves from advising to acting.

Institutional Cognition

The way an organization forms understanding, coordinates intelligence, and chooses among alternatives.

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage
https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss
https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale
https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production
https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI
https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale
https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System
https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure, the foundation of the SENSE layer, is where that architecture begins.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.