For the past few years, most AI strategy conversations have focused on models. Which model is more accurate? Which one is cheaper? Which one can reason better? But as artificial intelligence moves from generating content to influencing real institutional decisions—loans, claims, supply chains, healthcare triage, and infrastructure operations—a deeper question emerges. The real competitive advantage in the AI era will not come from models alone. It will come from how well institutions represent reality for those models to operate on. That architecture is what I call the Representation Stack.

The Real AI Question Has Changed

For the last two years, most AI strategy conversations have revolved around models.

Which model is more accurate?

Which one is cheaper?

Which one is safer?

Which one can reason better?

These questions still matter.

But they no longer reach the deepest layer of institutional advantage.

As AI adoption accelerates globally, the competitive divide is shifting from model selection to architectural design.

According to the Stanford AI Index 2025, 78% of organizations reported using AI in 2024, up from 55% the year before. Global private investment in generative AI reached $33.9 billion in 2024.

At that scale of adoption, the central issue is no longer:

“Can institutions access intelligence?”

The real question is:

“Can institutions structure reality well enough for intelligence to operate safely, consistently, and at scale?”

This shift introduces a new concept that will define the next phase of enterprise AI:

The Representation Stack.

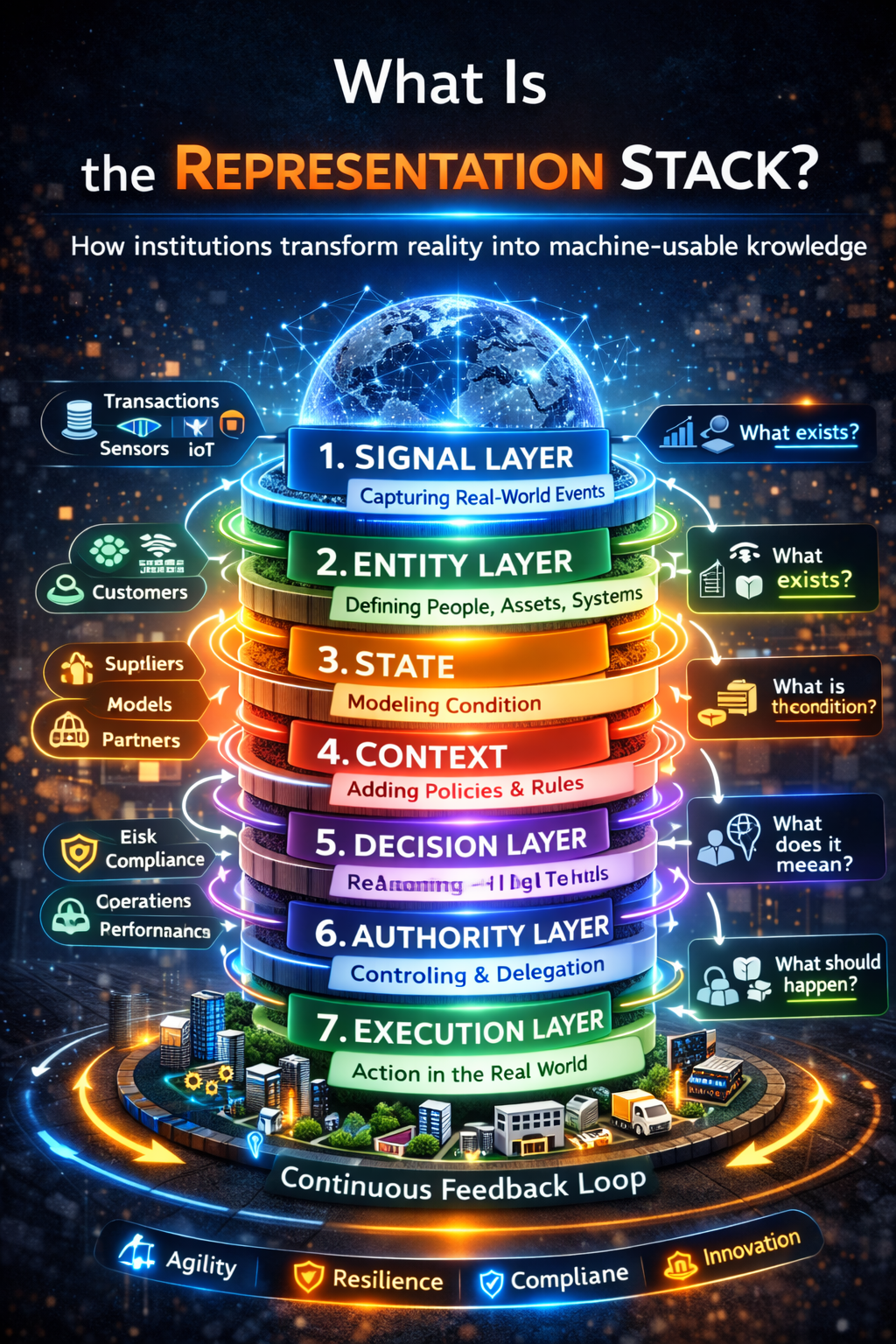

What Is the Representation Stack?

The Representation Stack is the layered architecture through which institutions transform complex, changing reality into something machines can sense, interpret, govern, and act upon.

It is the missing bridge between raw data and reliable AI decision-making.

In simple terms:

The Representation Stack explains how organizations convert the real world into machine-usable institutional reality.

Without this architecture:

AI systems reason over

- partial signals

- fragmented identities

- stale states

- incomplete context

- unclear authority boundaries

With it, AI becomes something far more powerful.

It becomes part of an intelligent institution.

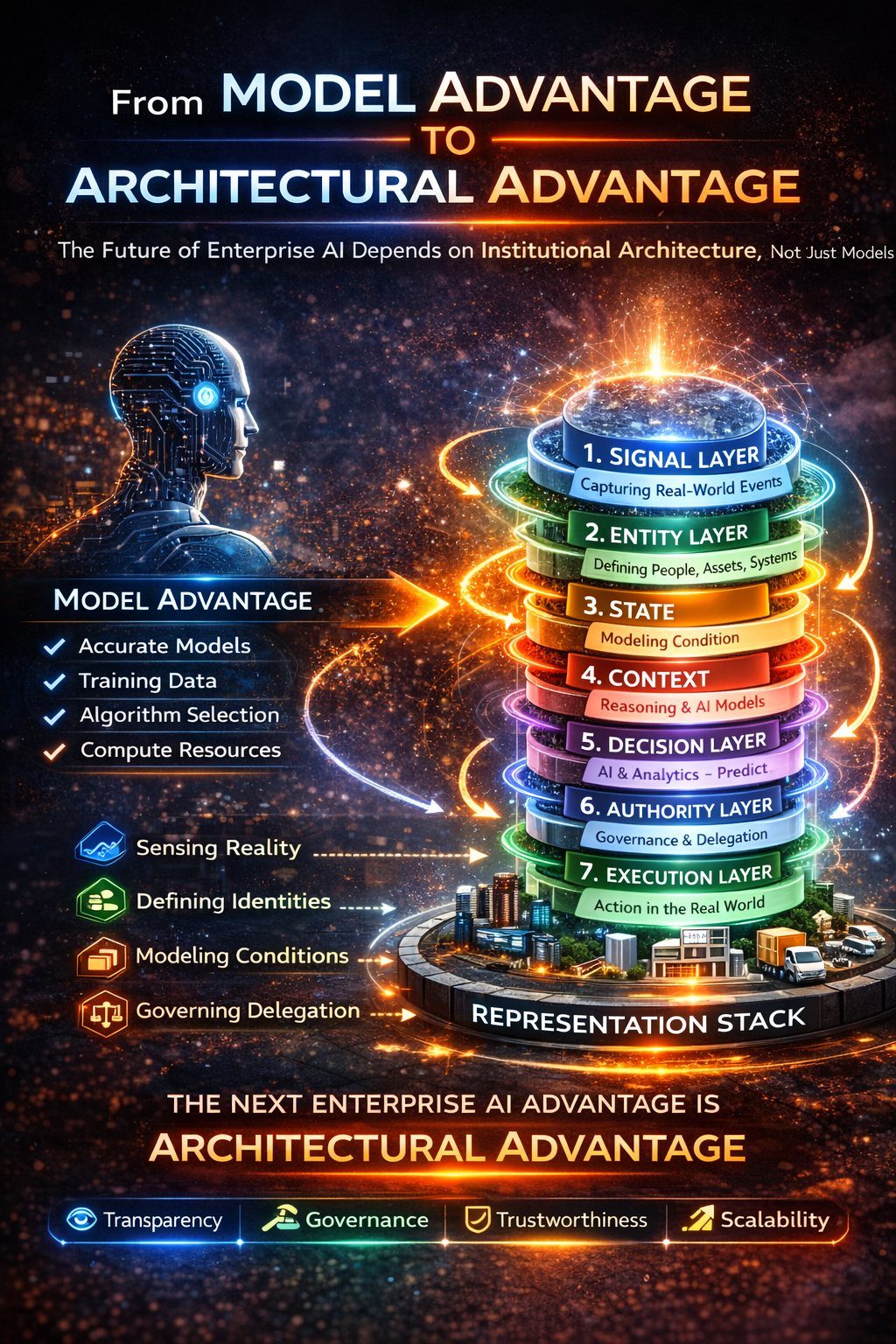

From Model Advantage to Architectural Advantage

The Representation Stack emerges from a deeper economic shift.

In the first phase of AI, competitive advantage came from building or accessing models.

Organizations competed on:

- model accuracy

- model scale

- training data

- compute resources

But in the next phase of AI, advantage increasingly comes from building the institutional architecture that makes intelligence trustworthy and operational.

That architecture must connect:

- signals

- entities

- states

- meaning

- decisions

- authority

- execution

into a coherent institutional system.

This is the deeper logic behind the Representation Economy, where the quality of institutional representation determines how effectively organizations can deploy intelligence.

Related concept:

Representation Capital – The Invisible Asset Deciding Which Institutions Win the AI Economy

From AI Systems to Intelligent Institutions

An organization does not become intelligent simply because it deploys AI.

Many enterprises already operate:

- chatbots

- copilots

- search systems

- recommendation engines

- fraud detection models

- optimization algorithms

- agentic workflows

Yet most are still not intelligent institutions.

They are collections of AI tools attached to fragmented operating environments.

An intelligent institution is different.

It has a structured architecture to:

- Sense reality

- Interpret meaning

- Make decisions

- Delegate authority

- Execute actions within governance boundaries

Modern AI governance frameworks increasingly reflect this systemic view.

For example:

- NIST AI Risk Management Framework treats AI as a socio-technical system requiring monitoring, governance, and lifecycle oversight.

- OECD AI Principles emphasize accountability, transparency, and institutional governance.

- The EU AI Act introduces obligations around oversight, monitoring, logging, and human supervision.

These frameworks all point to the same emerging truth:

AI is no longer just about building models.

It is about designing institutional operating architecture.

The Representation Stack is that architecture.

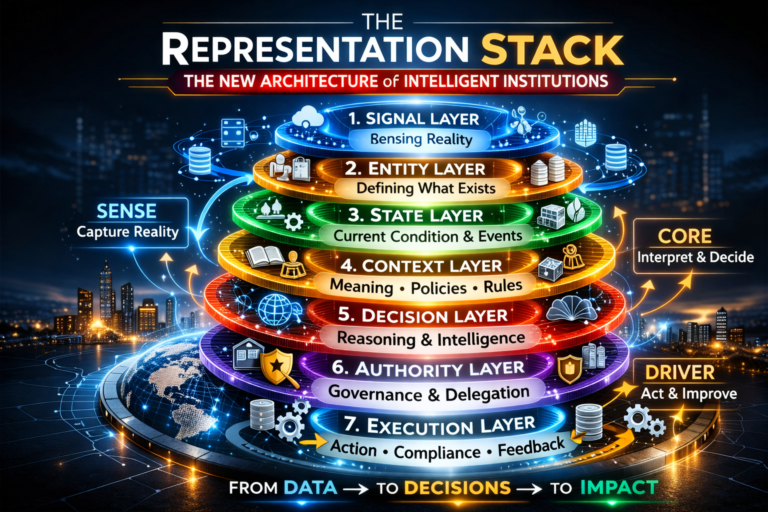

The Representation Stack and the SENSE–CORE–DRIVER Architecture

The Representation Stack becomes clearer when viewed through the SENSE–CORE–DRIVER framework.

SENSE

Reality becomes machine-legible.

CORE

Reality is interpreted and decisions are formed.

DRIVER

Decisions are executed within governed authority.

The Representation Stack operationalizes this architecture.

The Seven Layers of the Representation Stack

1. Signal Layer: Detecting Reality

The signal layer captures traces of reality.

Examples include:

- financial transactions

- sensor readings

- system events

- customer interactions

- operational telemetry

- documents and communications

Example:

A bank may observe:

- card transactions

- device changes

- login attempts

- call-center interactions

- unusual geographic patterns

A logistics company may observe:

- shipment scans

- temperature sensor readings

- route deviations

- warehouse events

Signals are not yet understanding.

They are simply evidence that something happened.

Weak signal layers create institutional blindness.
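As a minimal sketch of this idea, a signal can be modeled as a small immutable record, and "institutional blindness" as the set of expected sources that produced no signal at all. The class name, field names, and source names below are illustrative assumptions, not part of any specific platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class Signal:
    """One raw trace of reality: evidence that something happened, nothing more."""
    source: str            # hypothetical system name, e.g. "card_network"
    kind: str              # hypothetical event type, e.g. "card_transaction"
    observed_at: datetime  # when the event was observed
    payload: dict[str, Any] = field(default_factory=dict)


def missing_sources(signals: list[Signal], expected: set[str]) -> set[str]:
    """Return the expected sources that produced no signal (the blind spots)."""
    return expected - {s.source for s in signals}


signals = [
    Signal("card_network", "card_transaction",
           datetime(2025, 1, 6, 9, 30, tzinfo=timezone.utc), {"amount": 120.0}),
    Signal("auth_service", "login_attempt",
           datetime(2025, 1, 6, 9, 31, tzinfo=timezone.utc), {"success": False}),
]

# The bank expects three sources but only two reported anything.
blind_spots = missing_sources(signals, {"card_network", "auth_service", "call_center"})
print(blind_spots)
```

Note that the record carries no interpretation. It only says what was observed, where, and when.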

2. Entity Layer: Defining What Exists

Signals must connect to something.

The entity layer defines the actors and objects that exist in the system.

Examples include:

- customers

- suppliers

- shipments

- accounts

- contracts

- devices

- assets

This layer creates identity continuity across systems.

Without it, the same customer may appear differently across:

- marketing systems

- billing systems

- support systems

- risk systems

Once identity fragments, AI begins reasoning over inconsistent reality.
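One way to picture identity continuity is a resolution step that groups system-local identifiers under a single canonical entity. The sketch below assumes, purely for illustration, that a normalized email address is a good enough match key. Real entity resolution usually needs more evidence than that, and every record and identifier shown here is hypothetical.

```python
from collections import defaultdict

# Hypothetical records describing customers as seen by four separate systems.
records = [
    {"system": "marketing", "local_id": "M-001", "email": "Ana.Silva@Example.com"},
    {"system": "billing", "local_id": "B-77", "email": "ana.silva@example.com"},
    {"system": "support", "local_id": "S-9", "email": " ana.silva@example.com "},
    {"system": "risk", "local_id": "R-42", "email": "j.doe@example.com"},
]


def match_key(record: dict) -> str:
    """Normalize the email so trivially different spellings compare equal."""
    return record["email"].strip().lower()


def resolve_entities(records: list[dict]) -> dict[str, list[tuple[str, str]]]:
    """Group (system, local_id) pairs under one canonical entity per match key."""
    entities = defaultdict(list)
    for record in records:
        entities[match_key(record)].append((record["system"], record["local_id"]))
    return dict(entities)


entities = resolve_entities(records)
for key, identifiers in entities.items():
    print(key, identifiers)
```

Without the normalization step, the three spellings of the same address would be treated as three different customers.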

3. State Layer: Modeling Current Condition

Entities alone are not enough.

Institutions must understand the current condition of each entity.

This is the role of the state layer.

Examples:

A loan may be

- active

- overdue

- delinquent

- restructured

A shipment may be

- in transit

- delayed

- cleared by customs

- temperature-compromised

A patient may have

- vital sign states

- treatment status

- allergy records

- risk indicators

Decisions depend heavily on state accuracy.

Stale states lead to unreliable decisions.
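A simple way to make staleness visible is to store every state with the time it was last confirmed and to check its age before a decision relies on it. The following is a sketch under assumptions: the status values mirror the loan example above, while the class names, the loan identifier, and the 24-hour freshness limit are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum


class LoanStatus(Enum):
    ACTIVE = "active"
    OVERDUE = "overdue"
    DELINQUENT = "delinquent"
    RESTRUCTURED = "restructured"


@dataclass
class LoanState:
    loan_id: str
    status: LoanStatus
    as_of: datetime  # when this status was last confirmed

    def is_stale(self, now: datetime, max_age: timedelta) -> bool:
        """True when the state is older than the allowed freshness window."""
        return now - self.as_of > max_age


now = datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc)
state = LoanState("L-1001", LoanStatus.OVERDUE,
                  as_of=datetime(2025, 1, 7, 12, 0, tzinfo=timezone.utc))

# Hypothetical rule: decisions may only rely on states confirmed in the last 24 hours.
stale = state.is_stale(now, max_age=timedelta(hours=24))
print(stale)
```

The acceptable age depends on the decision. A freshness window that is fine for a monthly report may be far too long for a real-time fraud check.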

4. Context Layer: Giving Meaning to Reality

Signals, entities, and states still do not explain what events mean.

That is the role of context.

Context includes:

- policies

- regulations

- business rules

- contractual obligations

- operational constraints

- market conditions

Example:

A delayed shipment means one thing if it breaches a service contract and another if the delay falls within tolerance.

A large transaction may be normal for one customer and suspicious for another.

Context converts raw facts into institutional meaning.

This is also where many AI deployments fail.

They assume context can be centralized and fixed.

In reality, context evolves constantly.
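The delayed-shipment example can be sketched in a few lines: the same fact (a 12-hour delay) yields a different institutional meaning depending on the contract it is read against. The contract fields, customer names, and tolerance values below are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceContract:
    customer: str
    delay_tolerance_hours: int  # hypothetical contractual tolerance


def interpret_delay(delay_hours: int, contract: ServiceContract) -> str:
    """Turn a raw fact (hours of delay) into meaning under a given contract."""
    if delay_hours > contract.delay_tolerance_hours:
        return "contract_breach"
    return "within_tolerance"


strict = ServiceContract("pharma_client", delay_tolerance_hours=4)
flexible = ServiceContract("retail_client", delay_tolerance_hours=48)

# Same delay, two different meanings.
print(interpret_delay(12, strict))
print(interpret_delay(12, flexible))
```

Because the contract is passed in as data rather than hard-coded, the interpretation can change when the context changes, without touching the logic.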

5. Decision Layer: Institutional Reasoning

Once reality is represented and contextualized, institutions can reason.

The decision layer includes:

- predictive models

- optimization engines

- rule systems

- AI reasoning systems

This layer determines:

- what action should be taken

- what options are available

- what outcomes are likely

However:

A strong model operating on weak representation produces weak decisions.

This reframes AI strategy.

The real question is not:

“How good is the model?”

The real question becomes:

“What reality is the model reasoning over?”

6. Authority Layer: Governing Delegation

The authority layer determines:

- what actions AI may take

- when humans must intervene

- which policies apply

- what approvals are required

Example:

AI may

- recommend decisions

- execute low-risk actions

- escalate high-risk cases

The authority layer defines the boundaries of machine autonomy.

As AI systems move closer to operational decisions, this governance layer becomes critical.
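The boundaries of machine autonomy can be expressed as an explicit routing rule. The sketch below assumes a risk score between 0 and 1 and two hypothetical thresholds. In practice these values would come from institutional policy, not from the model.

```python
from enum import Enum


class Route(Enum):
    EXECUTE = "execute"      # AI may act on its own
    RECOMMEND = "recommend"  # AI proposes, a human decides
    ESCALATE = "escalate"    # case goes to human review


# Hypothetical policy thresholds, set by the institution.
AUTO_EXECUTE_BELOW = 0.2
ESCALATE_AT_OR_ABOVE = 0.7


def route_action(risk_score: float) -> Route:
    """Map a risk score in [0, 1] to the level of autonomy the AI is granted."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score < AUTO_EXECUTE_BELOW:
        return Route.EXECUTE
    if risk_score >= ESCALATE_AT_OR_ABOVE:
        return Route.ESCALATE
    return Route.RECOMMEND


for score in (0.05, 0.5, 0.9):
    print(score, route_action(score).value)
```

Keeping the thresholds outside the model makes the delegation boundary something the institution can inspect and change.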

7. Execution Layer: Acting in the World

The final layer is execution.

This is where decisions become real actions.

Examples:

- approving payments

- routing support cases

- blocking fraud attempts

- adjusting supply chains

- triggering compliance workflows

Execution must also produce audit evidence.

Actions should be:

- traceable

- reviewable

- reversible when necessary

In mature systems, execution generates new signals.

The Representation Stack becomes a continuous feedback cycle.
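A minimal sketch of that feedback cycle: every executed action appends an audit entry and emits a new signal, and a reversal is recorded as its own linked entry so that earlier history is never rewritten. The function names, action names, and in-memory lists are illustrative assumptions. A real system would use durable storage.

```python
from datetime import datetime, timezone
from typing import Optional

audit_log: list[dict] = []     # append-only record of what was done and by whom
signal_queue: list[dict] = []  # new signals fed back to the signal layer


def execute(action: str, target: str, decided_by: str, now: datetime,
            reverses: Optional[int] = None) -> dict:
    """Perform an action (stubbed here), record it, and emit a feedback signal."""
    entry = {
        "id": len(audit_log) + 1,
        "action": action,
        "target": target,
        "decided_by": decided_by,
        "executed_at": now.isoformat(),
        "reverses": reverses,  # id of the entry this one undoes, if any
    }
    audit_log.append(entry)
    signal_queue.append({"kind": "action_executed", "audit_id": entry["id"]})
    return entry


def reverse(entry: dict, decided_by: str, now: datetime) -> dict:
    """Undo an earlier action by recording a linked compensating action."""
    return execute("undo:" + entry["action"], entry["target"], decided_by, now,
                   reverses=entry["id"])


now = datetime(2025, 1, 6, 9, 45, tzinfo=timezone.utc)
blocked = execute("block_card", "card-123", decided_by="fraud_model_v1", now=now)
undone = reverse(blocked, decided_by="analyst_7", now=now)

for entry in audit_log:
    print(entry)
```

Recording the reversal as a new entry keeps the original action traceable and reviewable, which is the point of audit evidence.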

Why the Representation Stack Matters Now

AI is moving from content generation to institutional operation.

Earlier AI systems mostly produced:

- text

- code

- images

- summaries

In that setting, weak representation architecture was inconvenient but manageable.

But when AI begins influencing:

- lending decisions

- healthcare triage

- fraud detection

- supply chain operations

- customer treatment

- public services

weak representation architecture becomes dangerous.

The next wave of enterprise AI advantage will not come from models alone.

Those models are increasingly available to everyone.

Advantage will come from building superior institutional architecture around them.

Intelligence is becoming abundant.

Representation quality is becoming strategic.

Why Boards Should Care

Boards do not need to understand every ontology or schema.

But they must understand the consequences of weak representation architecture.

If the Representation Stack is weak:

- the institution sees reality poorly

- decisions rely on incomplete context

- authority boundaries become unclear

- AI risk accumulates invisibly

If the Representation Stack is strong:

- reality becomes legible

- decisions become coherent

- governance becomes enforceable

- intelligence compounds into advantage

The Representation Stack is therefore not just a technology issue.

It is a board-level design issue.

The Strategic Lesson

The institutions that win the AI era will not simply deploy the best models.

They will build the best stacks.

They will know how to transform:

signals → entities → states → meaning → decisions → authority → execution

into a coherent institutional system.

That is the architecture of intelligent institutions.

Conclusion: The Architecture of Institutional Intelligence

The internet had a stack.

Cloud computing had a stack.

Enterprise software had a stack.

Now intelligent institutions need one too.

The Representation Stack is the architecture that makes the Representation Economy real.

It allows institutions to:

- represent reality accurately

- reason over that reality coherently

- govern decisions responsibly

- execute actions with accountability

In the coming decade, the most important AI question will no longer be:

“Which model should we use?”

It will be:

“What architecture allows our institution to represent reality well enough to trust machine decisions?”

That architecture is the Representation Stack.

And the institutions that build it first will define how intelligence operates inside modern organizations.

FAQ

What is the Representation Stack?

The Representation Stack is a layered institutional architecture that converts real-world signals into machine-understandable representations, enabling AI systems to reason, decide, and act within governed boundaries.

Why is the Representation Stack important for enterprise AI?

Without a structured representation architecture, AI systems operate on incomplete or inconsistent information, increasing operational and governance risk.

How does the Representation Stack relate to AI governance?

The Representation Stack embeds governance into the architecture itself by defining signals, entities, states, context, decision authority, and execution accountability.

What is the relationship between the Representation Stack and SENSE–CORE–DRIVER?

The Representation Stack operationalizes the SENSE–CORE–DRIVER framework by defining the layers through which reality is sensed, interpreted, and acted upon within institutions.

Glossary

Representation Economy

An economic shift where competitive advantage depends on how effectively institutions represent reality for intelligent systems.

Representation Stack

The layered architecture that enables institutions to convert real-world complexity into machine-usable knowledge.

Institutional Intelligence

The ability of organizations to sense, interpret, decide, and act coherently using human and machine intelligence.

AI Governance Architecture

The structural framework ensuring AI systems operate within defined policies, authority boundaries, and oversight mechanisms.

Further Reading

Stanford AI Index Report

NIST AI Risk Management Framework

OECD AI Principles

EU Artificial Intelligence Act

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

- Representation Debt: Why Institutions Accumulate Hidden AI Risk Long Before Failure Becomes Visible

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy

- Representation Failure: Why AI Systems Break When Institutions Misread Reality

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.