Representation Economics

For years, the artificial intelligence conversation has revolved around one question: Which model is better? Bigger models, faster inference, better benchmarks. But a deeper question is beginning to matter more.

Why do some organizations become easier for AI systems to understand, trust, and work with than others? The answer points to a new economic reality. In the AI era, competitive advantage will not come only from better algorithms. It will come from better representation of reality. I call this shift Representation Economics.

Executive Summary

For the last few years, the AI conversation has been dominated by one question:

Which model is better?

Which model is faster?

Which model is cheaper?

Which model reasons better?

Which model has the strongest benchmark score?

That question mattered in the first phase of the AI era. It still matters now. But it is no longer the most important question.

A deeper question is beginning to define the next era:

Why do some organizations become easier for AI systems to understand, trust, and work with than others?

That is where a new law of value creation begins.

The first wave of the digital economy rewarded firms that digitized processes.

The second wave rewarded firms that captured and used data well.

The next wave will reward firms that can represent reality in ways machines can reliably interpret, reason over, verify, and act upon.

I call this Representation Economics.

Representation Economics is the idea that in the AI era, value will increasingly flow to institutions that are better at making the world machine-legible, machine-trustworthy, and machine-coordinatable. In other words, advantage will not come only from intelligence. It will come from the quality of the representation that intelligence can operate on.

That shift matters because AI systems do not act on reality directly. They act on representations of reality.

A loan approval system does not see a human life in full. It sees an application, a credit history, a transaction pattern, a risk profile, and a policy context.

A healthcare AI does not see a patient in all their complexity. It sees records, scans, symptoms, lab values, and care rules.

A supply-chain AI does not see “the business.” It sees inventories, vendors, delays, exceptions, service levels, and cost signals.

If those representations are incomplete, stale, fragmented, or misleading, even a very powerful model will make weak decisions. If those representations are timely, structured, governed, and connected to the right entities and states, even a less glamorous model can create real enterprise value.

That is why the next competitive edge is not just model quality. It is representation quality.

This is not a theoretical issue. Stanford’s 2025 AI Index shows that AI’s influence across the economy and global governance is intensifying, while organizations are still uneven in how they operationalize trustworthy AI. In parallel, major governance frameworks from NIST, OECD, UNESCO, and the European Union all emphasize that trust in AI depends not only on model capability, but on governance, transparency, oversight, and the conditions in which AI is deployed. (Stanford HAI)

Why board members should care

Most boards are still evaluating AI through a familiar lens:

- model investment

- productivity uplift

- use cases

- risk and compliance

- build-versus-buy decisions

Those are important. But they are increasingly downstream.

The more strategic question is whether the institution is becoming representable.

Can the organization make its operational reality visible to machines in a way that is current, governed, attributable, and actionable? Can its systems distinguish signal from noise? Can they connect events to entities, states to decisions, and decisions to legitimate execution? Can they explain what happened, why it happened, and what recourse exists if the system was wrong?

If the answer is no, then AI may still produce demos, copilots, and isolated productivity gains. But it will struggle to produce durable institutional advantage.

That is why Representation Economics belongs in the boardroom. It changes the unit of strategic analysis from “how much AI do we have?” to “how well can our institution be seen, reasoned over, and trusted by intelligent systems?”

What Representation Economics really means

Representation Economics is not just about data quality.

That distinction matters.

Many leaders hear arguments like this and reduce them to a familiar phrase: garbage in, garbage out. But this is much bigger than that. Data quality is only one part of the story.

Representation Economics asks a broader set of questions:

- Can the institution detect the right signals?

- Can it connect those signals to the right entities?

- Can it model the current state correctly?

- Can it update that state as reality changes?

- Can it reason over that state in context?

- Can it act within legitimate authority boundaries?

- Can it prove why it acted?

- Can it reverse or correct action when reality was misunderstood?

That is why Representation Economics is not merely a data concept. It is an institutional design concept.

In the industrial era, factories created value by transforming raw materials into products.

In the AI era, institutions increasingly create value by transforming messy reality into actionable representation.

The firms that do this well will be easier to buy from, easier to insure, easier to regulate, easier to finance, easier to integrate with, and easier for AI systems to trust.

The firms that do this badly will suffer friction everywhere.

A simple example: two logistics companies

Imagine two logistics companies.

Both buy access to the same class of foundation models.

Both use similar optimization software.

Both hire strong AI teams.

But Company A has clean, machine-readable records of shipments, drivers, route exceptions, warehouse capacity, vendor obligations, weather dependencies, and customer commitments. Its systems update in near real time. Policies are connected to operational actions. Exceptions are tagged, stored, and learnable.

Company B has the same ambition but runs on scattered spreadsheets, disconnected apps, undocumented workarounds, duplicate vendor records, inconsistent location IDs, and stale exception logs.

Which company will get more value from AI?

Not the company with “better AI” in theory.

The company with better representation of operational reality.

In Company A, AI reasons over what is actually happening.

In Company B, AI reasons over fragments, shadows, and approximations.

That is the difference between intelligence applied to reality and intelligence applied to noise.
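Two of Company B's problems, duplicate vendor records and stale exception logs, can be made measurable with very little code. The following is a minimal sketch, not a production data-quality tool: the record fields (`vendor_id`, `name`, `id`, `updated_at`), the function names, and the crude name normalization are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def normalize_name(name: str) -> str:
    """Crude normalization (an assumption): lowercase, keep letters and digits only."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def find_duplicate_vendors(vendors: list) -> dict:
    """Group vendor IDs whose names collapse to the same normalized key."""
    groups = defaultdict(list)
    for vendor in vendors:
        groups[normalize_name(vendor["name"])].append(vendor["vendor_id"])
    # Only keys shared by more than one vendor ID indicate a likely duplicate.
    return {key: ids for key, ids in groups.items() if len(ids) > 1}


def stale_records(records: list, now: datetime, max_age: timedelta) -> list:
    """Return the IDs of records not updated within max_age of now."""
    return [r["id"] for r in records if now - r["updated_at"] > max_age]


if __name__ == "__main__":
    vendors = [
        {"vendor_id": "V1", "name": "Acme Freight Ltd."},
        {"vendor_id": "V2", "name": "ACME Freight Ltd"},
        {"vendor_id": "V3", "name": "Blue Line Carriers"},
    ]
    now = datetime(2025, 1, 2, tzinfo=timezone.utc)
    logs = [
        {"id": "E1", "updated_at": now - timedelta(hours=1)},
        {"id": "E2", "updated_at": now - timedelta(days=30)},
    ]
    print(find_duplicate_vendors(vendors))
    print(stale_records(logs, now, timedelta(days=7)))
```

Checks like these do not fix representation, but they turn "scattered and stale" from an impression into a number a team can track.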

Why value is moving from data advantage to representation advantage

For years, business strategy treated data as the central asset of the AI economy. That was directionally right, but incomplete.

Raw data alone does not create durable advantage. Many firms are data-rich and still operationally confused. They collect huge volumes of information but cannot connect it into a coherent, trusted, action-ready picture.

Representation is different.

Representation means that signals are not merely stored. They are organized into meaningful entities, current states, permissions, relationships, exceptions, obligations, and decision contexts.

A bank may have enormous volumes of customer data. But if it cannot represent financial intent, fraud context, shifting risk posture, delegated authority, and recourse pathways clearly enough for AI systems to act responsibly, that data does not become strategic value.

A hospital may have digitized records. But if AI cannot reliably tell which condition matters now, which treatment rule applies, which clinician has authority, and what should happen if a recommendation was wrong, then digitization is not enough.

Representation advantage is what turns information into institutional capability.

That is why the next winners will not simply be data-rich. They will be representation-rich.

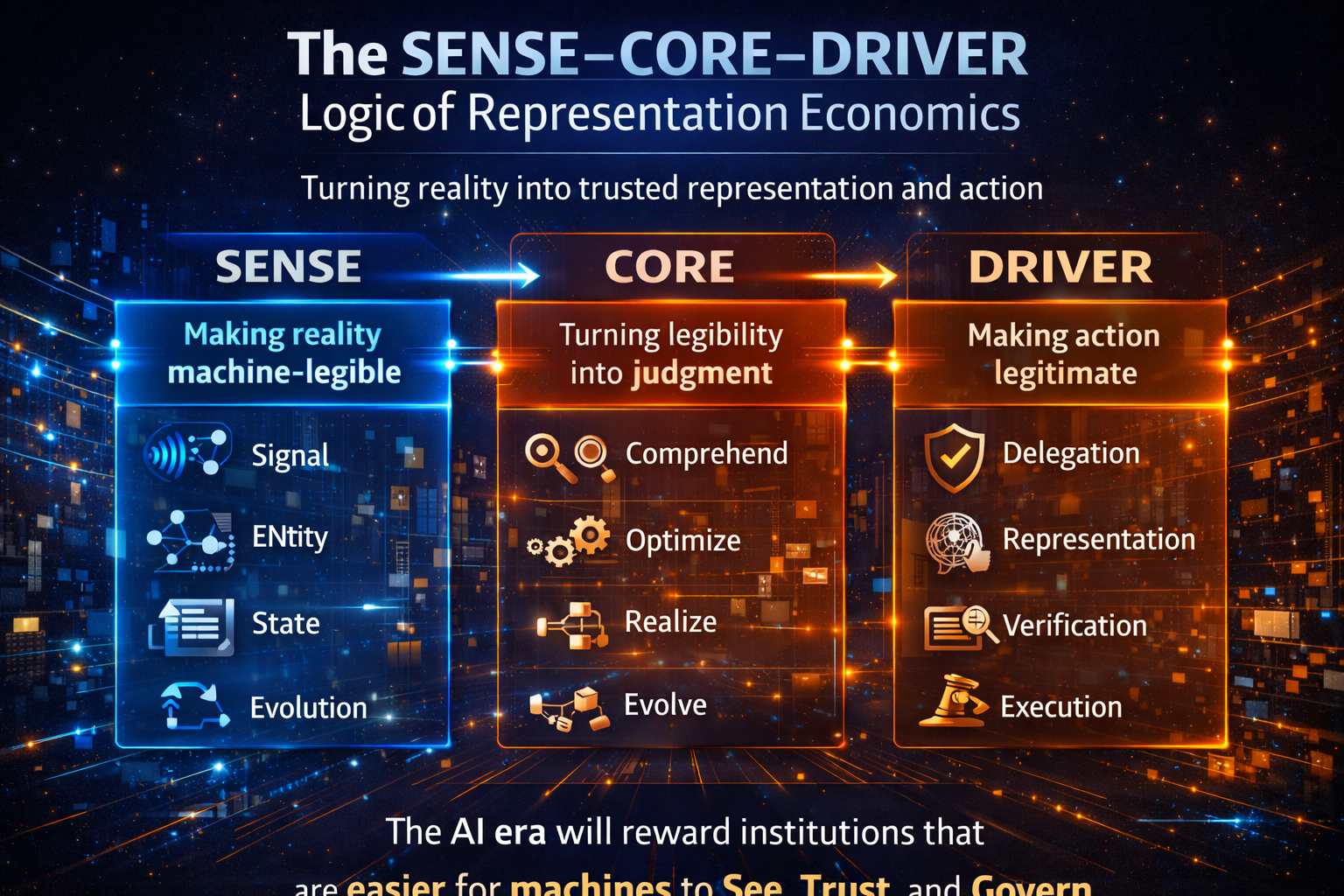

The SENSE–CORE–DRIVER logic of Representation Economics

This is where the SENSE–CORE–DRIVER framework becomes essential.

Representation Economics needs a practical architecture. SENSE–CORE–DRIVER provides that architecture.

SENSE: making reality legible

SENSE is the layer where reality becomes machine-legible.

It includes:

- Signal: detecting events, changes, and traces from the world

- ENtity: attaching those signals to a persistent actor, object, location, or asset

- State representation: building a structured view of current condition

- Evolution: updating that state over time as new signals arrive

Without SENSE, AI operates in darkness.

Think of a smart factory. Sensors may detect machine temperature, vibration, output, and maintenance events. But unless those signals are tied to the right machine, the right production line, the right maintenance history, and the right business impact, the system does not truly understand the factory. It is collecting signals without constructing reality.
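The four SENSE steps can be sketched in code using the factory example. This is an illustrative sketch only: the class names, the fields, and the sensor-to-machine mapping are hypothetical, and ignoring any signal older than the last update is a deliberate simplification.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Signal:
    """Signal: a raw observation from the world (hypothetical schema)."""
    source_id: str   # e.g. a sensor identifier
    metric: str      # e.g. "temperature_c"
    value: float
    observed_at: datetime


@dataclass
class EntityState:
    """State representation: a structured view of one machine's condition."""
    entity_id: str
    production_line: str
    readings: dict = field(default_factory=dict)
    last_updated: Optional[datetime] = None

    def apply(self, signal: Signal) -> None:
        """Evolution: fold a new signal into the state, skipping out-of-order data."""
        if self.last_updated is not None and signal.observed_at < self.last_updated:
            return
        self.readings[signal.metric] = signal.value
        self.last_updated = signal.observed_at


class SenseLayer:
    def __init__(self, sensor_to_entity: dict, states: dict):
        self.sensor_to_entity = sensor_to_entity  # ENtity resolution table
        self.states = states
        self.unresolved = []  # signals that could not be tied to any entity

    def ingest(self, signal: Signal) -> Optional[EntityState]:
        entity_id = self.sensor_to_entity.get(signal.source_id)
        if entity_id is None:
            # A signal with no entity is collected but does not construct reality.
            self.unresolved.append(signal)
            return None
        state = self.states[entity_id]
        state.apply(signal)
        return state
```

The `unresolved` list is the interesting part of the design: it makes visible how many signals the institution collects without being able to attach them to anything.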

CORE: turning legibility into judgment

CORE is the cognition layer.

It enables the institution to:

- Comprehend context

- Optimize decisions

- Realize action pathways

- Evolve through feedback

This is where models, reasoning systems, policy engines, simulations, retrieval layers, and workflows come together.

But CORE is only as good as the representation it receives. Strong reasoning on weak representation still creates fragile outcomes.
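One way to make that dependency explicit is to have the reasoning step check the freshness of its input before deciding anything. The sketch below assumes a hypothetical `StateView` record and arbitrary thresholds; it stands in for whatever model or policy engine an institution actually uses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class StateView:
    """The representation handed to the reasoning layer (hypothetical fields)."""
    entity_id: str
    temperature_c: float
    last_updated: datetime


def decide_maintenance(
    state: StateView,
    now: datetime,
    max_age: timedelta = timedelta(minutes=5),  # assumed freshness budget
    limit_c: float = 90.0,                      # assumed temperature limit
) -> str:
    """Decide only when the representation is fresh enough to trust."""
    if now - state.last_updated > max_age:
        # Weak representation: decline to reason rather than guess.
        return "defer: state is stale"
    if state.temperature_c >= limit_c:
        return "schedule_maintenance"
    return "no_action"
```

The decision rule itself is trivial on purpose. The point is that the same rule gives a confident answer on a fresh state and refuses to answer on a stale one.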

DRIVER: making action legitimate

DRIVER is the layer that many AI strategies still underweight.

It includes:

- Delegation: who authorized the system to act

- Representation: what model of reality the system used

- Identity: which entity was affected

- Verification: how the action is checked

- Execution: how action is carried out

- Recourse: what happens if the system is wrong

This is where AI moves from “interesting” to “institutional.”

Many enterprises can generate recommendations. Far fewer can safely let machines trigger actions in finance, healthcare, HR, procurement, law, public services, or critical infrastructure. That is because acting systems require legitimacy, not just intelligence.
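The six DRIVER elements can be read as the fields of an audit record plus a gate in front of execution. The following is a minimal sketch under stated assumptions: the class names, the refund scenario, the amount limit, and the recourse strings are all hypothetical, and a real system would need far richer authority and verification logic.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Delegation:
    """Delegation: who authorized the system to act, and within what limits."""
    granted_by: str
    allowed_actions: frozenset
    max_amount: float


@dataclass(frozen=True)
class ActionRequest:
    action: str
    entity_id: str          # Identity: which entity is affected
    amount: float
    state_snapshot_id: str  # Representation: which view of reality was used


@dataclass(frozen=True)
class ActionRecord:
    """Audit entry that lets the institution show why it acted, or did not."""
    request: ActionRequest
    granted_by: str
    verified: bool
    executed: bool
    recourse: str


def run_action(
    request: ActionRequest,
    delegation: Delegation,
    verify: Callable[[ActionRequest], bool],
    execute: Callable[[ActionRequest], None],
) -> ActionRecord:
    # Verification: the action must fall inside the delegated authority
    # and pass an independent check before anything is executed.
    within_authority = (
        request.action in delegation.allowed_actions
        and request.amount <= delegation.max_amount
    )
    verified = within_authority and verify(request)
    if verified:
        execute(request)  # Execution
        recourse = "reversible: file a reversal against this record"
    else:
        recourse = "not executed: escalate to a human reviewer"
    return ActionRecord(request, delegation.granted_by, verified, verified, recourse)
```

Every call returns a record, whether or not the action ran, so the refusal path is as auditable as the execution path.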

This direction is consistent with the broader global policy landscape. NIST’s AI Risk Management Framework is organized around four core functions: Govern, Map, Measure, and Manage. OECD’s AI Principles promote trustworthy AI aligned with human rights and democratic values.

UNESCO’s recommendation emphasizes transparency, fairness, and human oversight across all 194 member states. The EU AI Act adds legal obligations based on risk and use context. The World Economic Forum’s recent work on AI agents likewise focuses on evaluation and governance as these systems move closer to real-world action. (NIST Publications)

Why this changes competition

Representation Economics changes how competitive advantage is built.

In the industrial view, firms competed on scale, labor, cost, and distribution.

In the digital view, they also competed on software, data, and platforms.

In the AI view, they will increasingly compete on something even more foundational:

How clearly and credibly they can be represented inside machine-mediated systems.

This has major consequences.

A company that is easier for AI procurement systems to evaluate may win more contracts.

A company that is easier for automated compliance systems to verify may face lower friction.

A company that is easier for AI finance systems to assess may access capital faster.

A company that is easier for machine agents to integrate with may become the preferred node in a larger ecosystem.

This is not science fiction. It is the natural consequence of a world in which more decisions are mediated by software that reads structured representations before it acts.

In such a world, poor representation becomes an economic tax.

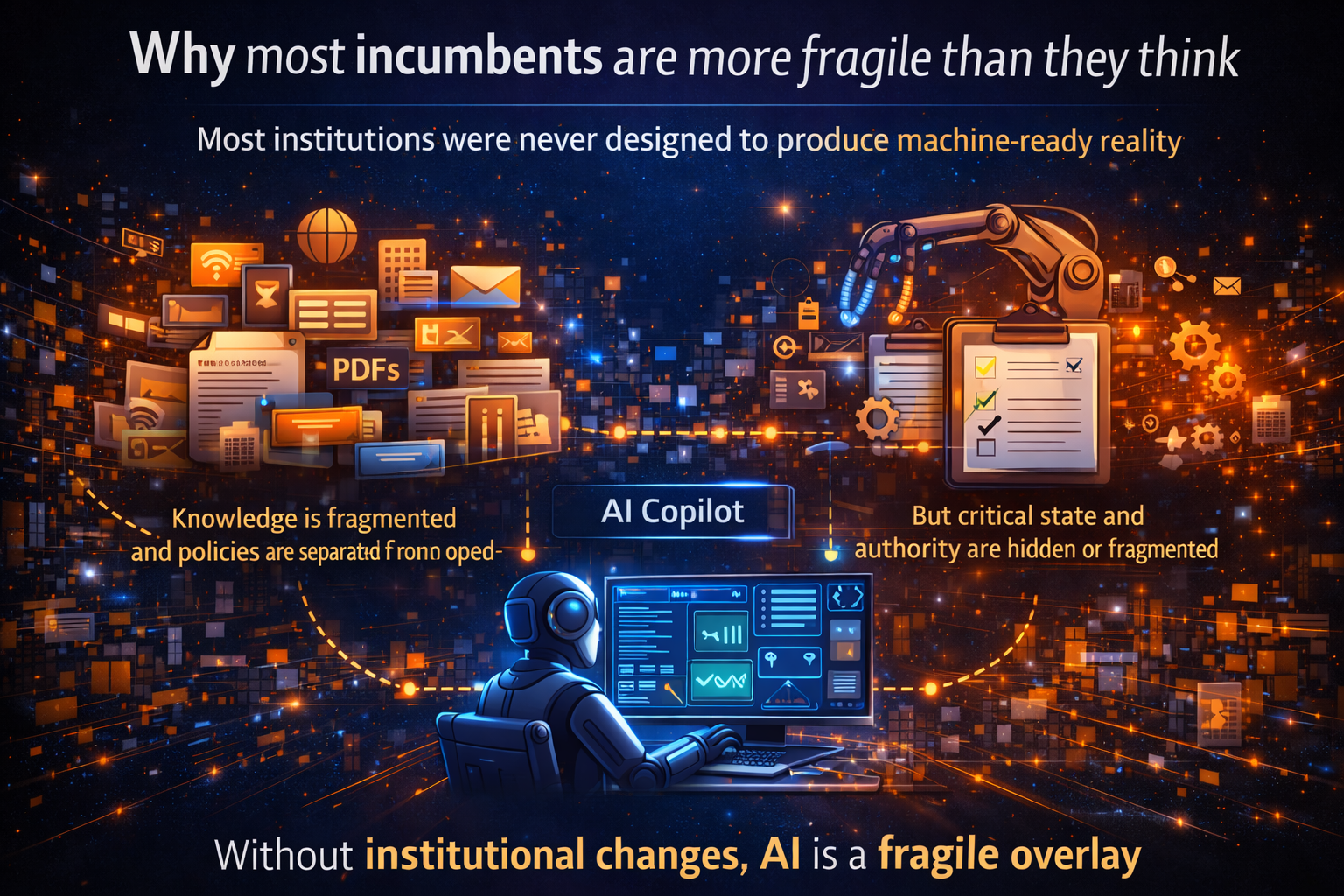

Why most incumbents are more fragile than they think

Many incumbents still think their AI challenge is about tooling.

It is not.

Their deeper challenge is that the institution was never designed to produce machine-ready reality.

Knowledge is spread across email, PDFs, chat threads, contracts, forms, ERP systems, tribal memory, and undocumented exceptions. Policies often live separately from operations. Authority structures are clear to humans but invisible to machines. Critical state changes are delayed, ambiguous, or trapped in handoffs.

So companies deploy copilots and agents on top of an institution that remains structurally hard to represent.

That is why so many AI pilots look impressive but fail to scale into defensible enterprise value.

The bottleneck is not always the model.

It is the institution’s representational condition.

What new kinds of companies will emerge

Representation Economics also points to a new category of firms.

Just as the internet created search engines, cloud platforms, payment rails, and identity layers, the AI era will create organizations whose core business is to make reality more machine-legible and machine-trustworthy.

These may include firms that specialize in:

- institutional state infrastructure

- delegated authority management

- representation assurance

- machine-readable compliance

- identity and provenance synchronization

- recourse and reversal systems

- machine-legibility layers for finance, healthcare, logistics, manufacturing, and government

In other words, some of the most important companies of the next decade may not sell intelligence itself. They may sell the infrastructure that makes intelligence trustworthy at scale.

The global dimension: why representation will become a strategic issue for nations

This is not only an enterprise story. It is also a geopolitical one.

Countries and regions are increasingly shaping the institutional environment in which AI operates. The EU AI Act is the first comprehensive legal framework on AI by a major regulator. UNESCO’s recommendation applies globally across 194 member states. OECD’s principles provide an intergovernmental baseline for trustworthy AI.

The World Bank and UNDP continue to emphasize digital public infrastructure, especially identity, payments, and trusted data exchange, as foundational systems for secure and inclusive digital coordination. India’s current articulation of DPI also highlights how interoperable identity, payments, and data exchange can operate as population-scale public rails. (Digital Strategy)

That matters because the next race is not only about chips, models, or compute. It is also about who builds the strongest representation environment for machine-mediated economies.

The strategic implication for CEOs and boards

The next decade will not reward firms simply for “using AI.”

It will reward firms that redesign themselves so that AI can operate on reality with greater clarity, trust, and legitimacy.

That means boards should begin asking different questions:

- What realities in our institution must be represented accurately before AI can influence decisions?

- Where are our state models fragmented or stale?

- Where do policies fail to travel with operational action?

- Which decisions require explicit recourse architecture?

- What parts of our business are invisible to machine systems today?

- Are we investing in intelligence without investing in representability?

Those questions sit much closer to durable advantage than another model bake-off.

Conclusion: the new law of value creation

So what is the new law?

It is this:

In the AI era, value will increasingly accrue to institutions that can turn reality into reliable representation, representation into sound judgment, and judgment into legitimate action.

That is the sequence.

Not data alone.

Not models alone.

Not automation alone.

But:

SENSE → CORE → DRIVER

That is why Representation Economics matters.

It explains why some firms will appear AI-enabled but remain fragile.

It explains why others will quietly compound advantage.

It explains why machine trust will become an economic force.

And it explains why the future belongs not simply to intelligent institutions, but to institutions that are easier for intelligence to work with.

The next decade will not be won only by those who build powerful AI.

It will be won by those who build representable institutions.

And that may prove to be the defining law of value creation in the AI era.

Why This Idea Matters for the Global AI Economy

The concept of Representation Economics sits at the intersection of several major global developments:

- the rapid deployment of enterprise AI systems

- emerging global governance frameworks such as the NIST AI Risk Management Framework

- international principles from the OECD and UNESCO

- regulatory structures like the EU AI Act

- and the growing focus on trustworthy AI discussed by the World Economic Forum

Together, these developments signal a major shift: the future of AI will depend not only on model capability but also on the institutional architecture that makes machine decision-making reliable and legitimate.

Summary

What this article argues

AI advantage is moving beyond model quality and raw data. The next winners will be institutions that can represent reality in ways machines can reliably interpret, reason over, verify, and act upon.

Core framework

SENSE → CORE → DRIVER

Who should read this

Board members, CEOs, CTOs, CIOs, chief risk officers, enterprise architects, AI governance leaders, and policy strategists.

Why this matters now

As AI systems move from generating content to influencing decisions and triggering actions, machine-legible, trusted representation becomes a strategic asset.

FAQ

What is Representation Economics in simple language?

Representation Economics is the idea that in the AI era, value will increasingly flow to organizations that are easier for intelligent systems to understand, trust, and coordinate with.

How is Representation Economics different from data quality?

Data quality is only one part of the story. Representation Economics is about turning signals into connected entities, current states, permissions, decision contexts, and governed action pathways.

Why does this matter for boards and C-suite leaders?

Because the real strategic issue is no longer only “Do we have AI?” It is “Can our institution be represented well enough for AI to create safe, scalable, defensible value?”

What is the SENSE–CORE–DRIVER framework?

It is a practical architecture for building representable institutions. SENSE makes reality legible, CORE reasons over that reality, and DRIVER ensures action happens with legitimacy, verification, and recourse.

Why is representation more important than model quality in many enterprise settings?

Because even powerful models fail when they operate on fragmented, stale, or misleading representations of the organization’s actual reality.

What kinds of companies could win in a Representation Economics world?

Companies that become easier for AI systems to evaluate, trust, integrate with, regulate, finance, insure, and transact with.

Will Representation Economics matter only for enterprises?

No. It also matters for governments, regulators, public infrastructure builders, financial systems, and cross-border digital ecosystems.

Is this aligned with current global AI governance trends?

Yes. Official frameworks from NIST, OECD, UNESCO, the EU, the World Economic Forum, the World Bank, and UNDP increasingly emphasize governance, oversight, transparency, risk management, and trusted digital infrastructure around AI. (NIST Publications)

What is Representation Economics?

Representation Economics is the idea that in the AI era, value will increasingly flow to organizations that can represent reality in ways machines can reliably interpret, reason over, and act upon.

Why does representation matter for AI systems?

AI systems do not operate directly on the physical world. They operate on representations of reality, such as structured data, models of state, and institutional context. The quality of these representations determines the quality of AI decisions.

How is Representation Economics different from data strategy?

Data strategy focuses on collecting and storing information. Representation Economics focuses on structuring reality so machines can reason and act responsibly within institutions.

Why will Representation Economics matter for boards and CEOs?

Because AI systems will increasingly influence operational, financial, and strategic decisions. Organizations that cannot represent their reality clearly will struggle to deploy AI safely and effectively.

Glossary

Representation Economics

A framework for understanding how value in the AI era increasingly depends on how well institutions make reality machine-legible, trustworthy, and actionable.

Machine legibility

The degree to which systems, entities, states, and events are structured clearly enough for machines to interpret reliably.

Machine trust

The confidence an AI-mediated system can place in the identity, state, provenance, and policy alignment of the information and actors it interacts with.

SENSE

The layer that detects signals, connects them to entities, models state, and tracks change over time.

CORE

The reasoning layer that interprets context, optimizes choices, and evolves decisions through feedback.

DRIVER

The legitimacy layer that governs delegation, verification, execution, and recourse when AI systems influence or take action.

Representable institution

An institution whose operational reality is sufficiently visible, structured, attributable, and governed for intelligent systems to work with safely and effectively.

Representation advantage

A strategic advantage created when an institution is easier for AI systems to understand, evaluate, coordinate with, and trust.

Digital public infrastructure

Foundational digital systems such as identity, payments, and trusted data exchange that support secure and scalable coordination across society. (Open Knowledge Repository)

References and further reading

For readers who want to explore the broader policy and governance context behind this shift:

- Stanford HAI, AI Index Report 2025 — on AI’s expanding economic and governance impact. (Stanford HAI)

- NIST, AI Risk Management Framework — on governance, mapping, measurement, and management of AI risk. (NIST Publications)

- OECD, AI Principles — on innovative, trustworthy AI aligned with human rights and democratic values. (OECD.AI)

- UNESCO, Recommendation on the Ethics of Artificial Intelligence — on transparency, fairness, and human oversight. (UNESCO)

- European Commission, EU AI Act — on the first comprehensive legal framework on AI by a major regulator. (Digital Strategy)

- World Economic Forum, AI Agents in Action: Foundations for Evaluation and Governance — on governance for increasingly autonomous AI systems. (World Economic Forum)

- World Bank and UNDP resources on digital public infrastructure — on identity, payments, and trusted data exchange as foundations for digital coordination. (Open Knowledge Repository)

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model – Raktim Singh

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Debt: Why Institutions Accumulate Hidden AI Risk Long Before Failure Becomes Visible – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Balance Sheet: How AI Is Redefining Assets, Liabilities, and Institutional Strength – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.