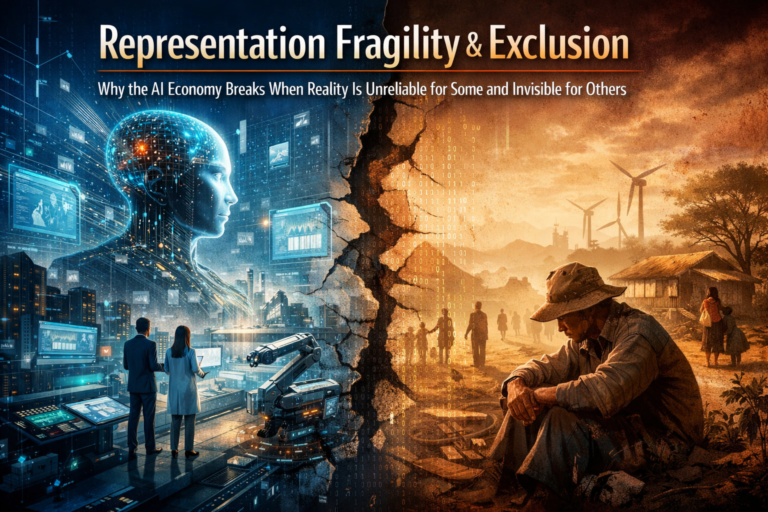

Representation Fragility and Exclusion:

The next AI crisis will not begin with models. It will begin with reality.

For the last few years, the public debate around AI has been dominated by model performance: larger models, lower inference costs, multimodal systems, autonomous agents, and faster deployment cycles. That conversation matters. But it misses the deeper shift already underway.

The real battleground is not intelligence alone. It is representation: how the world is converted into a machine-readable form that systems can sense, classify, compare, remember, and act upon.

NIST’s AI Risk Management Framework reflects this broader reality by emphasizing governance, mapping, measurement, and management across the full AI lifecycle, not just model performance. Meanwhile, financial supervisors at the BIS have warned that data quality, model risk, governance, and explainability can create systemic vulnerabilities as AI moves deeper into critical decision systems. (NIST Publications)

That is where two underappreciated risks emerge: representation fragility and representation exclusion.

Representation fragility appears when institutions depend on reality models they can no longer inspect, repair, or confidently verify.

Representation exclusion appears when people, small businesses, local-language communities, rural producers, and even non-human systems never become legible enough to enter the machine-readable economy in a meaningful way. UNESCO’s AI ethics framework stresses inclusiveness, diversity, fairness, and human oversight across the AI lifecycle, while OECD and World Bank work shows that AI adoption remains uneven, especially where digital foundations are weak. (UNESCO)

Together, these two failures create the defining paradox of the AI era: reality becomes highly legible where power is already concentrated, and weakly legible where inclusion matters most.

This is not just a technical issue. It is an economic, institutional, and geopolitical one.

The OECD has found that SME AI adoption remains relatively low compared with larger firms. The World Bank’s 2025 AI foundations work argues that low- and middle-income countries face steep barriers to adapting and deploying AI at scale without stronger connectivity, compute, data, skills, and governance. The World Economic Forum and UNDP have both emphasized that digital public infrastructure and trustworthy digital systems are essential if AI-led growth is to be broad-based rather than exclusionary. (OECD)

AI systems do not act directly on reality—they act on structured representations of reality. When those representations are incomplete, distorted, or missing, the decisions produced by AI systems reflect those limitations. This is why representation fragility and exclusion are emerging as central risks in the AI economy.

What representation fragility really means

Representation fragility is the condition in which an organization relies on AI-mediated representations of reality that it no longer knows how to inspect, reconstruct, or correct.

A bank does not merely run a model. It depends on entity resolution systems, identity feeds, transaction labels, third-party enrichment, behavioral signals, risk classifications, and policy engines. A hospital does not merely use analytics. It depends on digital summaries, triage signals, patient-state updates, and care-priority flags. A supply chain does not merely optimize inventory. It depends on continuously refreshed digital representations of shipments, routes, counterparties, and delay conditions.

In each case, the institution is no longer just using software. It is acting through a structured version of reality.

The danger begins when that version of reality becomes unrepairable.

Imagine a supplier is wrongly flagged as unstable because its records are fragmented, one identity feed merged the wrong entities, and a downstream risk engine interpreted missing data as negative evidence.

The system may continue to run smoothly. Dashboards still work. Decisions still flow. But the institution may no longer know where the distortion entered the chain. What looks like an intelligence problem is often a representation problem. What looks like an automation failure is often a reality-maintenance failure. BIS guidance on AI in finance repeatedly highlights how opaque data lineage, weak explainability, and model-governance gaps complicate validation, oversight, and recovery. (Bank for International Settlements)
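The supplier example can be made concrete with a minimal Python sketch. It is an illustration under stated assumptions, not a description of any real risk engine: the field names (`on_time_rate`, `filings_current`), the 0.9 threshold, and both functions are hypothetical. It contrasts a rule that silently reads missing data as negative evidence with one that keeps "unknown" separate from "bad".

```python
def naive_is_unstable(record: dict) -> bool:
    # Hypothetical rule: missing values default to the worst case,
    # so the absence of data is read as bad news.
    on_time = record.get("on_time_rate")
    filings = record.get("filings_current")
    return (on_time if on_time is not None else 0.0) < 0.9 or filings is not True


def explicit_assessment(record: dict) -> dict:
    # Keep "unknown" separate from "negative" and report which fields are missing.
    fields = ("on_time_rate", "filings_current")
    missing = [name for name in fields if record.get(name) is None]
    negatives = []
    if record.get("on_time_rate") is not None and record["on_time_rate"] < 0.9:
        negatives.append("on_time_rate")
    if record.get("filings_current") is False:
        negatives.append("filings_current")
    if negatives:
        status = "unstable"
    elif missing:
        status = "insufficient_data"
    else:
        status = "stable"
    return {"status": status, "missing": missing, "negative_evidence": negatives}


# A supplier with strong delivery data but no filing record on file.
supplier = {"on_time_rate": 0.97, "filings_current": None}
print(naive_is_unstable(supplier))
print(explicit_assessment(supplier))
```

The naive rule flags this supplier as unstable even though nothing negative was observed. The explicit version returns `insufficient_data` and names the missing field, which gives a reviewer somewhere to start.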

This is why representation fragility is more serious than ordinary model error. A bad output can sometimes be fixed. A damaged representation layer can contaminate every downstream decision that depends on it.

What representation exclusion really means

Representation exclusion is the opposite failure.

Here, the problem is not that the machine-readable representation is broken. It is that many entities never receive a rich, trusted, and actionable representation in the first place.

Consider a small farmer with inconsistent land records, thin digital history, weak access to formal financial rails, and fragmented agronomic data. Or a micro-enterprise with genuine cash flow but poor digitization. Or a local-language community whose speech patterns, cultural references, and market behaviors remain sparsely represented in mainstream AI systems. Or ecological assets such as biodiversity zones and water systems that matter economically but are still poorly encoded in most enterprise decision environments.

In all these cases, exclusion happens before the model produces an output.

FAO has highlighted both the promise of AI in agriculture and the practical barriers that smallholders face in accessing digital advisory services and inclusive innovation pathways. UNESCO’s ethics framework calls explicitly for diversity and inclusiveness across the AI lifecycle, including attention to underrepresented groups. UNDP’s digital inclusion work likewise frames equitable digital systems as foundational, not optional. (FAOHome)

This matters because the AI economy increasingly rewards what can be sensed, modeled, verified, and acted on at machine speed. If an actor is poorly represented, it will struggle to access credit, insurance, visibility, compliance recognition, pricing fairness, and market opportunity.

In the industrial era, exclusion often meant lacking capital or infrastructure. In the AI era, exclusion increasingly means lacking machine legibility.

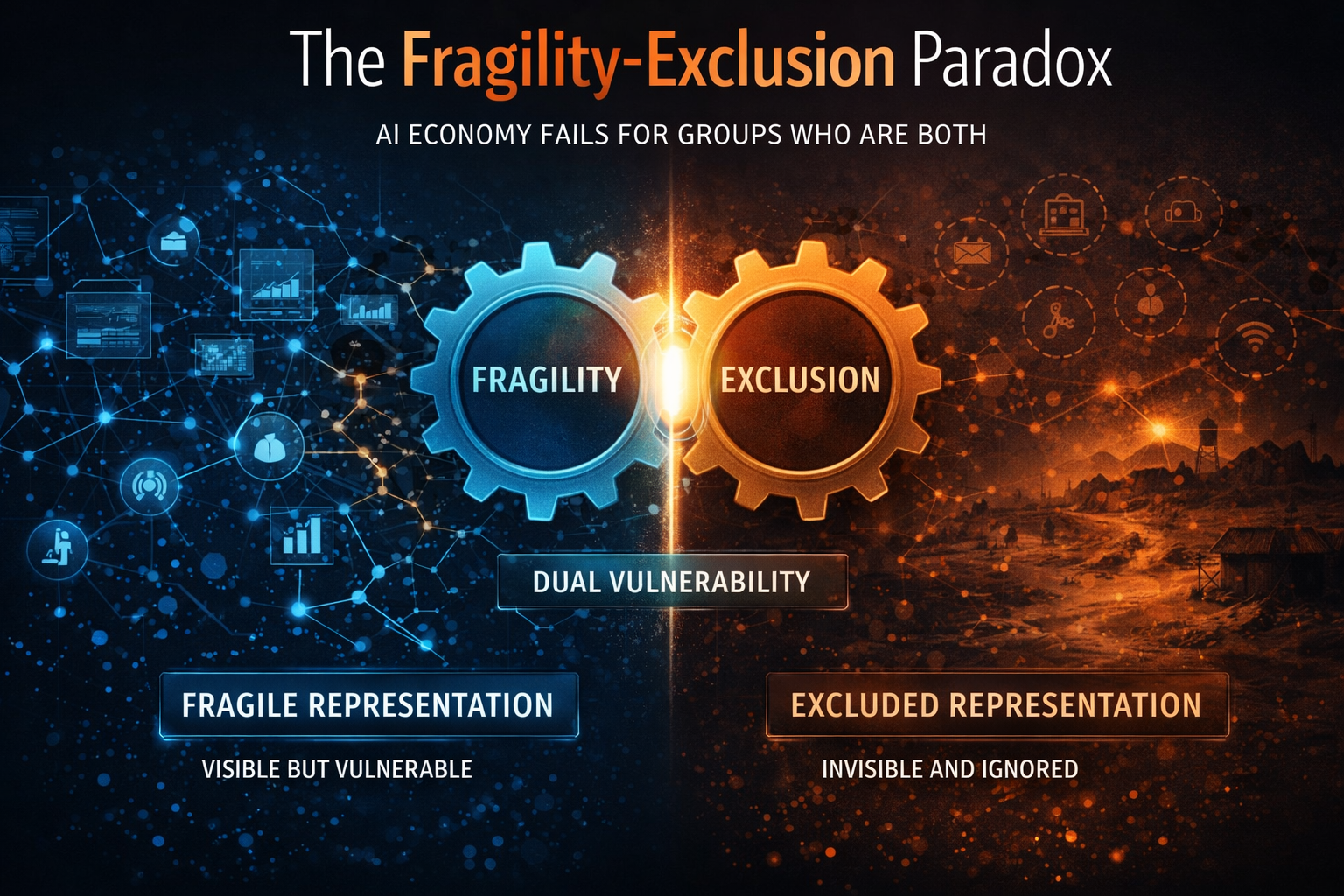

The fragility-exclusion paradox

The deepest risk is that fragility and exclusion emerge at the same time.

Large institutions become fragile because they rely on layered, outsourced, constantly shifting representations they cannot fully repair. Smaller actors become excluded because they lack the infrastructure, continuity, standards, and identity layers required to become legible in the first place.

So the top of the economy becomes over-dependent, while the bottom becomes under-represented.

This is the structural paradox of the AI economy: it can become more automated and less grounded at the same time; more intelligent and less equitable at the same time.

That pattern is already visible globally. OECD evidence points to persistent gaps in AI uptake between SMEs and larger firms. The World Bank argues that AI readiness depends on foundational conditions, not simply access to models. WEF and UNDP work on digital public infrastructure similarly underscores that safe, interoperable, trusted infrastructure shapes whether digital systems broaden participation or deepen existing divides. (OECD)

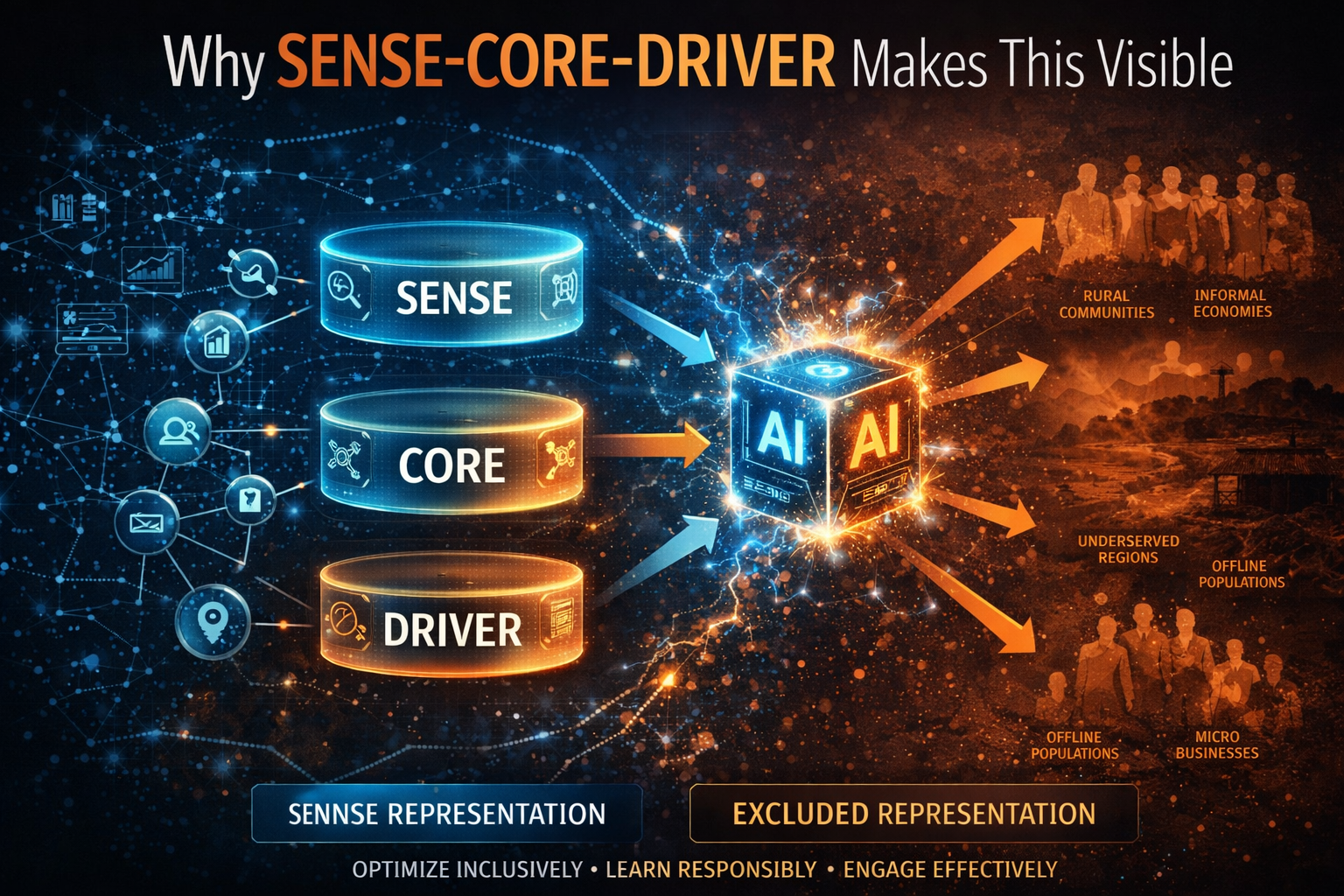

Why SENSE–CORE–DRIVER makes this visible

Most organizations still overinvest in the CORE of AI—models, reasoning, orchestration, and decision engines—while underinvesting in SENSE and DRIVER, which determine whether intelligence is grounded, governable, and trusted.

SENSE: the legibility layer

SENSE is where reality becomes machine-readable.

S — Signal: detecting events, traces, and changes from the world

EN — Entity: attaching those signals to a persistent actor, asset, object, or place

S — State representation: building a structured model of present condition

E — Evolution: updating that representation over time

When fragility appears, SENSE degrades because the institution no longer understands how those layers were assembled or updated. When exclusion appears, SENSE fails because some entities never accumulate enough signal depth, identity continuity, or state richness to matter in machine decision systems.
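As a rough illustration, the four SENSE elements can be sketched as a small Python data structure. The class names, fields, and sample values here are assumptions made for this example and are not part of the framework itself. Each signal is attached to an entity, the state holds the present condition, and the history preserves how that state evolved.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signal:
    source: str            # Signal: where the observation came from
    entity_id: str         # Entity: the persistent actor it attaches to
    attribute: str
    value: object
    observed_at: datetime


@dataclass
class EntityState:
    entity_id: str
    attributes: dict = field(default_factory=dict)  # State: present condition
    history: list = field(default_factory=list)     # Evolution: every applied signal

    def apply(self, signal: Signal) -> None:
        if signal.entity_id != self.entity_id:
            raise ValueError("signal belongs to a different entity")
        self.attributes[signal.attribute] = signal.value
        self.history.append(signal)

    def signal_depth(self) -> int:
        # A thin history is one rough indicator of representation exclusion.
        return len(self.history)

    def provenance(self, attribute: str) -> list:
        # Which sources shaped this attribute, in the order they were applied.
        return [s.source for s in self.history if s.attribute == attribute]


state = EntityState("supplier-42")
now = datetime.now(timezone.utc)
state.apply(Signal("erp_feed", "supplier-42", "on_time_rate", 0.97, now))
state.apply(Signal("vendor_api", "supplier-42", "on_time_rate", 0.91, now))
print(state.attributes)
print(state.provenance("on_time_rate"))
```

Keeping the history alongside the current state is the design choice that matters here. Without it, the institution can see what it believes about an entity but not how that belief was assembled.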

CORE: the cognition layer

CORE is where systems:

C — Comprehend context

O — Optimize decisions

R — Realize action

E — Evolve through feedback

CORE can be excellent and still fail if it reasons over unstable or incomplete representations. Better models do not solve broken legibility. They often scale it faster. NIST and BIS both point to the importance of governance, measurement, explainability, and ongoing risk management precisely because model quality alone is insufficient in high-stakes environments. (NIST Publications)

DRIVER: the legitimacy layer

DRIVER is where institutions answer the questions that determine whether machine action is acceptable:

D — Delegation: who authorized the action

R — Representation: what version of reality was used

I — Identity: which entity was affected

V — Verification: how the system checks itself

E — Execution: how the action is carried out

R — Recourse: what happens when the system is wrong

Fragility worsens when DRIVER is weak because distorted representations cannot be challenged quickly. Exclusion worsens when DRIVER is weak because those outside the system have no practical path to contest, correct, or enter it.
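One way to picture the six DRIVER questions is as a record attached to every machine action. The following Python sketch is hypothetical: the `DecisionRecord` class, its field names, its status values, and the sample identifiers are assumptions chosen for illustration, not an established schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DecisionRecord:
    delegation: str                # D: who or what policy authorized the action
    representation_version: str    # R: which version of reality was used
    entity_id: str                 # I: which entity was affected
    verified: bool                 # V: whether the system's self-checks passed
    action: str                    # E: what was carried out
    status: str = "executed"
    challenges: list = field(default_factory=list)  # R: recourse trail
    corrected_version: Optional[str] = None

    def contest(self, reason: str) -> None:
        # Recourse starts with a recorded challenge.
        self.challenges.append(reason)
        self.status = "under_review"

    def reverse(self, corrected_version: str) -> None:
        # Keep the original version for audit; store the correction beside it.
        if self.status != "under_review":
            raise RuntimeError("only contested decisions can be reversed")
        self.corrected_version = corrected_version
        self.status = "reversed"


record = DecisionRecord(
    delegation="credit-policy-owner",
    representation_version="snapshot-A",
    entity_id="supplier-42",
    verified=True,
    action="reduce_credit_limit",
)
record.contest("identity feed merged two different suppliers")
record.reverse("snapshot-B")
print(record.status, record.representation_version, record.corrected_version)
```

The record deliberately keeps the original representation version after a reversal, so the institution can later reconstruct which view of reality produced the contested action.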

Simple examples that make the issue real

A rural business applies for working capital. It has real customer demand, repeat orders, and a healthy local reputation, but weak formal documentation and fragmented digital records. The lender’s systems cannot model it confidently, so it gets worse terms or no credit at all. That is representation exclusion. OECD and World Bank work on SME adoption and AI foundations helps explain why such gaps persist: strong participation in the AI economy requires more than tools; it requires readiness, infrastructure, skills, and data continuity. (OECD)

Now imagine a large bank with a sophisticated AI stack. It uses third-party identity resolution, transaction enrichment, risk scoring, and automated decision rules. One upstream merge error contaminates several downstream views of the same customer or supplier. The system still functions, but the institution cannot easily reconstruct the source of the distortion. That is representation fragility.

BIS material on financial stability implications of AI explicitly flags data quality, governance, and explainability as core concerns. (Bank for International Settlements)
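The bank scenario can be sketched as a lineage graph. The Python example below is a simplified illustration with an assumed graph: the node names (`identity_merge`, `risk_score`, and so on) and the helper functions are hypothetical. It shows how recorded lineage lets an institution answer two questions: which views are contaminated by a bad merge, and which upstream sources a suspicious decision depends on.

```python
from collections import deque

# Hypothetical lineage graph: each key feeds the views listed in its value.
LINEAGE = {
    "identity_merge": ["customer_profile", "supplier_profile"],
    "customer_profile": ["risk_score"],
    "supplier_profile": ["risk_score", "payment_terms"],
    "transaction_enrichment": ["risk_score"],
    "risk_score": ["credit_decision"],
    "payment_terms": [],
    "credit_decision": [],
}


def reachable_from(node: str, graph: dict) -> list:
    """Breadth-first walk returning every node reachable from `node`."""
    seen, order, queue = {node}, [], deque([node])
    while queue:
        current = queue.popleft()
        for nxt in graph.get(current, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


def reversed_graph(graph: dict) -> dict:
    """Flip every edge so the same walk can trace dependencies upstream."""
    rev = {node: [] for node in graph}
    for parent, children in graph.items():
        for child in children:
            rev.setdefault(child, []).append(parent)
    return rev


# Which views are contaminated if the identity merge was wrong?
print(reachable_from("identity_merge", LINEAGE))
# Which upstream sources could have distorted the credit decision?
print(reachable_from("credit_decision", reversed_graph(LINEAGE)))
```

If this graph is never recorded, neither question can be answered after the fact, which is the practical meaning of fragility in this scenario.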

Agriculture shows both failures at once. FAO notes that AI can improve yields, disease detection, precision agriculture, and advisory services. But the gains depend on inclusive digital access, trusted data pathways, and local relevance.

Where those foundations are missing, farmers are excluded. Where they exist but are weakly governed, institutions can make confident recommendations on degraded or mismatched representations. (FAOHome)

The same logic applies to nature. Many firms now face material exposure to biodiversity loss, water stress, and ecosystem degradation, yet those realities are still thinly represented in many operational systems. If something is economically consequential but representationally weak, AI systems will continue to optimize around an incomplete map.

Why this will define the next generation of winners

The winners of the AI economy will not be defined only by who owns the most advanced models.

They will be defined by who can build reality systems that are both inclusive and repairable.

Three capabilities will become strategic.

- Representation depth: The ability to capture richer, more continuous, more trustworthy signals about entities that were previously invisible or weakly visible.

- Representation resilience: The ability to inspect, debug, verify, and reconstruct the representations on which AI depends.

- Representation legitimacy: The ability to prove that machine action was authorized, grounded, contestable, and reversible.

This is also where new company categories will emerge: representation observability platforms, auditable entity-resolution systems, correction and recourse infrastructure, local-language representation layers, biodiversity sensing networks, and institutional memory systems that preserve how reality models were formed. The growing global emphasis on digital public infrastructure, inclusive digital systems, and sovereign or shared AI infrastructure suggests that both markets and states are converging on the same lesson: AI capability without representational foundations creates brittle progress. (World Economic Forum)

What leaders should do now

Leaders should stop asking only whether their AI is accurate.

They should ask:

- Do we still understand how our systems are modeling reality?

- Where are those representations brittle?

- Which customers, suppliers, communities, and assets remain outside our machine-readable view?

- What recourse exists when our systems get reality wrong?

- Which critical decisions depend on representations we cannot inspect or repair?

These are harder questions than benchmark accuracy. They are also more strategic ones.

Organizations should invest in SENSE ownership, not just CORE capability. They should reduce dependence on opaque representation chains they cannot inspect. They should build DRIVER mechanisms for correction, challenge, and recovery. And they should treat inclusion not as a CSR side issue, but as a design requirement for participation in the AI economy.

Because the next AI divide will not be explained only by access to models. It will be explained by whether an entity is represented at all—and whether that representation can be trusted, challenged, and repaired over time.

Key takeaway for boards and C-suites:

The most important AI question is no longer “How smart is the model?” It is “How reliable, inclusive, and contestable is the reality our systems act on?” Institutions that master representation depth, resilience, and legitimacy will define the next era of advantage.

Summary

In simple terms:

- Representation fragility = reality models that cannot be trusted or repaired

- Representation exclusion = entities that are invisible to AI systems

- Together, they define the next economic divide in the AI era

Conclusion

The future of AI will not be decided by intelligence alone. It will be decided by whether institutions can build a world in which reality is both representable and repairable.

That is the central challenge of the representation economy.

In the old digital era, advantage came from owning data and deploying software. In the AI era, advantage will come from defining reality more reliably, more inclusively, and more legitimately than others.

Institutions that ignore fragility will become dependent on reality systems they cannot fix. Institutions that ignore exclusion will help create an economy that works only for the already legible.

Both will lose.

The greatest risk in the AI economy is not simply that machines may think wrongly. It is that societies may allow reality itself to become brittle for some and invisible for others.

Once that happens, the problem is no longer technical.

It is structural.

Glossary

Representation economy: An economic order in which advantage depends on how well institutions make entities, states, and relationships machine-readable and actionable.

Representation fragility: The vulnerability that appears when organizations depend on reality models they can no longer inspect, repair, or verify.

Representation exclusion: The invisibility that occurs when people, firms, communities, or ecosystems remain outside meaningful machine-readable representation.

Machine-readable economy: An economy in which access to services, markets, and decisions increasingly depends on structured digital representation.

SENSE: The legibility layer where signals are captured, linked to entities, turned into state, and updated over time.

CORE: The cognition layer where systems comprehend context, optimize decisions, realize action, and improve through feedback.

DRIVER: The legitimacy layer that governs delegation, representation, identity, verification, execution, and recourse.

Recourse: The capacity to challenge, reverse, or recover from an AI-mediated decision.

Digital public infrastructure: Shared digital rails—such as identity, payments, and data exchange—that support broad participation in digital and AI-enabled economies.

FAQ

Why is this article not just about AI bias?

Because bias usually describes unfairness in outputs. This article points to a deeper failure upstream: some entities are represented poorly, while others are not represented at all. UNESCO’s AI ethics work explicitly treats inclusiveness and diversity as lifecycle concerns, not just output concerns. (UNESCO)

Why does representation fragility matter for large enterprises?

Because layered AI systems depend on complex data, identity, and decision chains. BIS and NIST both show that weak governance, poor explainability, and data-quality issues can amplify operational and systemic risk. (Bank for International Settlements)

Why does representation exclusion matter for growth?

Because SMEs, rural actors, and underserved communities cannot fully participate in AI-enabled markets if they are not legible to machine decision systems. OECD and World Bank evidence shows that adoption and readiness gaps remain significant. (OECD)

What is the main leadership takeaway?

Do not focus only on model capability. Build inclusive SENSE, strong CORE, and legitimate DRIVER. Durable advantage in the AI economy comes from representation that is broad, repairable, and governable. This is consistent with NIST’s lifecycle view of AI risk management. (NIST Publications)

References and Further Reading

- NIST, AI Risk Management Framework and AI RMF Playbook for lifecycle governance, mapping, measurement, and management of AI risk. (NIST Publications)

- OECD, AI adoption by small and medium-sized enterprises, on the persistent adoption gap between SMEs and larger firms. (OECD)

- World Bank, Digital Progress and Trends 2025: AI Foundations, on the foundational conditions required for broad AI adoption. (World Bank)

- BIS, on financial stability implications of AI and AI explainability and governance in critical systems. (Bank for International Settlements)

- UNESCO, Recommendation on the Ethics of Artificial Intelligence, especially on diversity, inclusiveness, fairness, and human oversight. (UNESCO)

- FAO, on inclusive AI for agriculture, precision agriculture, and smallholder access to digital services. (FAOHome)

- WEF and UNDP, on digital public infrastructure and inclusive digital systems as foundations for broader participation. (World Economic Forum)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- The Representation Reserve Currency: Why AI Will Trust Only a Few Forms of Reality – Raktim Singh

- The Machine-Readable Boundary of the Firm: How AI Is Redefining What Companies Own, Outsource, and Orchestrate – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- The Representation Commons: Why Broad-Based AI Value Begins Before the Model – Raktim Singh

- The Representation Access Economy: Why AI Will Decide Who Gets Seen, Structured, and Trusted – Raktim Singh

- Representation Bankruptcy: Why AI Will Break Companies That Machines Cannot Trust – Raktim Singh

- The Representation Kill Zone: Why Companies Become Invisible Before They Realize They Are Losing – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fiduciaries: The Missing Institution the AI Economy Cannot Scale Without – Raktim Singh

- The Representation Conversion Industry: Why the Biggest AI Companies Will Rebuild Reality Before They Build Intelligence – Raktim Singh

- Representation Accounting: The New Discipline That Will Decide Which AI-Driven Institutions Can Be Trusted – Raktim Singh

- Synthetic Representation: How the AI Economy Will Construct Reality When It Cannot Fully Observe It – Raktim Singh

- Representation Clearinghouses: The Missing Infrastructure the AI Economy Needs to Reconcile Reality Before It Acts – Raktim Singh

- Recourse Platforms: The Next AI Infrastructure Market for Correction, Appeal, and Recovery – Raktim Singh

- Representation Workflows: The Hidden Operating System That Will Decide the Winners of the AI Economy – Raktim Singh

- Representation Switching Costs: Why the AI Economy’s Deepest Lock-In Will Come From Who Defines Reality – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.
