The Representation Commons

AI will not transform entire economies merely because models improve. It will transform economies when reality itself becomes easier for machines to see, verify, and act on responsibly.

For the last few years, the AI conversation has been dominated by models.

Which model is smartest? Which one is cheapest? Which one reasons better, codes faster, summarizes more accurately, or powers stronger agents?

Those questions still matter. But they are no longer the deepest questions facing boards, governments, or industries.

A more foundational issue is now emerging beneath the surface of the AI economy: what happens when AI becomes powerful, but the world around it remains poorly represented?

That is the strategic gap many leaders still underestimate. AI can only create broad-based value when it can reliably interpret the entities, states, relationships, permissions, claims, and events that make up real-world systems. When suppliers, credentials, health claims, land records, invoices, licenses, compliance states, and identities remain fragmented or trapped in disconnected systems, AI does not see a coherent economy.

It sees broken fragments. OECD’s recent work on governing with AI makes the same point in institutional language: effective adoption depends on enabling layers such as governance, data, digital infrastructure, skills, procurement, and partnerships. (OECD)

This is where a new strategic idea becomes essential: the Representation Commons.

The Representation Commons is the shared layer of machine-readable reality that allows AI systems, institutions, markets, and public services to operate on trusted representations instead of guesswork.

It is not a single database. It is not a central platform. It is not merely a government repository. It is the common set of standards, identifiers, provenance mechanisms, interoperability rules, and governance rails that make entities and transactions legible across organizational boundaries.

In simple language, the Representation Commons is the shared infrastructure that helps machines understand the world in ways that are portable, verifiable, and governable.

Without it, AI remains impressive but narrow. With it, AI becomes economically useful at scale.

Why AI alone is not enough

A common assumption in today’s market is that once models become more capable, value will automatically spread through the economy. It will not.

A highly capable model dropped into an illegible environment is like a brilliant analyst dropped into a room full of unlabeled folders, contradictory spreadsheets, missing documents, outdated records, screenshots, PDFs, and unverifiable certificates. The analyst may still produce something useful. But they cannot make consistently reliable decisions, because the environment itself is poorly structured.

The same is true for AI.

If a lender cannot verify income or cash-flow history in a machine-readable way, AI-based lending remains constrained. If a hospital cannot exchange claims, approvals, and records through interoperable workflows, AI in healthcare remains fragmented.

If digital identity and credential systems do not interoperate across borders, digital trade and mobility remain slower than they should be. If provenance is weak, media trust erodes. If public records are inconsistent, AI-enabled service delivery becomes brittle and exclusionary.

These are not merely software problems. They are legibility problems. NIST’s AI Risk Management Framework similarly stresses that trustworthy AI depends on broader governance, risk management, context, and system design, not model performance alone. (NIST Publications)

That is why the next phase of AI competition will not be defined only by better models. It will be defined by better legibility infrastructure.

The deeper paradox: private intelligence, public illegibility

Here is the paradox at the heart of the current AI wave: we are investing heavily in intelligence, but far less in representation.

Organizations are launching copilots, agents, orchestration layers, and prediction engines. Yet many of the environments in which those systems must operate remain unreadable. The model may be advanced. The surrounding reality may not be.

That mismatch becomes especially dangerous as AI moves from generating content to validating claims, routing workflows, evaluating counterparties, and recommending or executing decisions. The more AI touches real operations, the more it depends on trusted inputs, persistent identifiers, clear state models, auditable permissions, and interoperable context. OECD and NIST arrive at this from different directions, but both point toward the same conclusion: trustworthiness and value creation depend on more than model strength. They depend on the surrounding institutional substrate. (OECD)

This is exactly why the Representation Commons matters.

Think roads, not cars

The easiest way to understand the Representation Commons is to think about roads.

A country can import the best cars in the world. But if it does not build roads, traffic rules, maps, address systems, licensing, signals, and maintenance infrastructure, mobility will remain chaotic.

AI is the car.

The Representation Commons is the road system.

Most AI strategies today are still too car-centric. They ask which model to buy, which agent framework to adopt, or which assistant to deploy. Those are useful questions, but they are downstream. The upstream questions are more structural:

Can entities be identified across systems?

Can permissions travel?

Can states be verified?

Can consent be captured and honored?

Can provenance be checked?

Can decisions be audited?

Can recourse be triggered when something goes wrong?

Those are Representation Commons questions.

The SENSE–CORE–DRIVER lens

In my broader Representation Economics framework, AI systems succeed or fail across three interdependent layers.

SENSE is the legibility layer where reality becomes machine-readable:

- Signal: detecting events, changes, and traces from the world

- ENtity: attaching those signals to a persistent person, organization, asset, place, or object

- State representation: building a structured model of current condition

- Evolution: updating that state over time as new signals arrive

CORE is the cognition layer:

- comprehend context

- optimize decisions

- realize action

- evolve through feedback

DRIVER is the governance and legitimacy layer:

- delegation

- representation

- identity

- verification

- execution

- recourse

The Representation Commons sits primarily in SENSE, but it strengthens DRIVER as well. AI does not become broadly useful merely when it can think. It becomes broadly useful when it can think about something that is legible, and when its actions can be checked, authorized, and trusted. That is the missing bridge between model intelligence and social-scale value.
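To make the SENSE layer more concrete, here is a minimal sketch under simple assumptions: an in-memory toy model in which signals are attached to a persistent entity identifier, folded into a structured state, and that state evolves as new signals arrive. A single DRIVER-style rule is included: unverified signals are logged but never change the trusted state. The names `Signal`, `EntityState`, and `ingest`, and the supplier fields, are hypothetical illustrations, not part of any published specification of the framework.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Signal:
    """A detected event or trace from the world (Signal)."""
    entity_id: str        # persistent identifier the signal attaches to (ENtity)
    attribute: str        # which part of the entity's state the signal describes
    value: object
    observed_at: datetime
    verified: bool        # could the source be verified? (a DRIVER-style check)


@dataclass
class EntityState:
    """Structured model of an entity's current condition (State representation)."""
    entity_id: str
    attributes: dict = field(default_factory=dict)  # attribute -> (value, observed_at)
    history: list = field(default_factory=list)     # every signal received, kept for audit


def ingest(states: dict, signal: Signal) -> EntityState:
    """Update an entity's state as a new signal arrives (Evolution)."""
    state = states.setdefault(signal.entity_id, EntityState(signal.entity_id))
    state.history.append(signal)
    if not signal.verified:
        # Unverified signals are logged but do not change the trusted state.
        return state
    current = state.attributes.get(signal.attribute)
    # Apply only observations at least as recent as the stored one,
    # so late-arriving old signals cannot roll the state backwards.
    if current is None or signal.observed_at >= current[1]:
        state.attributes[signal.attribute] = (signal.value, signal.observed_at)
    return state


states = {}
ingest(states, Signal("supplier-42", "iso9001", "valid", datetime(2024, 1, 10), True))
ingest(states, Signal("supplier-42", "iso9001", "expired", datetime(2024, 3, 1), False))
print(states["supplier-42"].attributes["iso9001"][0])  # valid: the unverified update was not applied
```

The design choice worth noting is that history and trusted state are kept separately: every signal remains available for audit and recourse, while only verified, sufficiently recent signals are allowed to shape what downstream reasoning sees.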

What the Representation Commons looks like in the real world

This is not a speculative idea. Pieces of the Representation Commons are already emerging across the world.

In Europe, the EU Digital Identity Wallet framework is being built around mutual recognition, portability, and interoperable use of digital identity and credentials across member states. The point is not just digitized identity. It is trusted, reusable identity and credential exchange across systems and borders. Public authorities are expected to accept EU Digital Identity Wallets once issued by member states, underscoring the shift toward a shared trust layer. (European Commission)

The W3C Verifiable Credentials Data Model 2.0 provides a standard way to express claims such as licenses, degrees, and certifications in a cryptographically secure and machine-verifiable format. This moves trust away from screenshots, emailed PDFs, and manual checks toward structured, checkable credentials that machines can reason over. (W3C)
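The shape of such a credential, and why tampering becomes detectable, can be sketched in a few lines. The property names `@context`, `type`, `issuer`, `validFrom`, and `credentialSubject` follow the VC Data Model 2.0; everything else is an assumption. In particular, the HMAC "proof" is a stand-in chosen only so the example runs with the Python standard library. Real verifiable credentials are secured with public-key mechanisms (Data Integrity proofs or JOSE/COSE), so any verifier can check them without holding the issuer's secret. The issuer URL, DID, key, and the `DemoHmacProof` type are hypothetical, and the JSON serialization is a simplified stand-in for a real canonicalization scheme.

```python
import hashlib
import hmac
import json

# Hypothetical issuer secret. Real VCs use the issuer's public/private key pair instead.
ISSUER_KEY = b"demo-issuer-secret"


def canonical_bytes(document: dict) -> bytes:
    """Serialize deterministically so issuer and verifier hash identical bytes
    (a simplified stand-in for a real canonicalization scheme)."""
    return json.dumps(document, sort_keys=True, separators=(",", ":")).encode("utf-8")


def issue(claims: dict) -> dict:
    """Wrap claims in a VC-shaped document and attach a demo integrity tag."""
    credential = {
        "@context": ["https://www.w3.org/ns/credentials/v2"],
        "type": ["VerifiableCredential"],
        "issuer": "https://certifier.example",  # hypothetical issuer
        "validFrom": "2024-01-01T00:00:00Z",
        "credentialSubject": claims,
    }
    tag = hmac.new(ISSUER_KEY, canonical_bytes(credential), hashlib.sha256).hexdigest()
    return {**credential, "proof": {"type": "DemoHmacProof", "value": tag}}


def verify(signed: dict) -> bool:
    """Recompute the tag over everything except the proof and compare in constant time."""
    unsigned = {k: v for k, v in signed.items() if k != "proof"}
    expected = hmac.new(ISSUER_KEY, canonical_bytes(unsigned), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signed.get("proof", {}).get("value", ""))


vc = issue({"id": "did:example:supplier-42", "certification": "ISO 9001"})
print(verify(vc))  # True
vc["credentialSubject"]["certification"] = "ISO 14001"  # tamper with the claim
print(verify(vc))  # False
```

The contrast with an emailed PDF is the point: a machine can check the claim and its issuer in one function call, and any change to the claim is detected without human review.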

India’s Account Aggregator framework shows the same principle in financial data sharing. The official framework states that financial information is shared only with explicit customer consent and transferred from one institution to another based on the individual’s instruction. That matters not only because it improves access, but because it creates a permissioned, interoperable, machine-usable representation of financial information across institutions. (Department of Financial Services)
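The consent-gating principle can be illustrated with a small sketch. It is not the Account Aggregator API or its technical specification: the `ConsentArtefact` fields, the `fetch` function, the institution names, and the sample record are all hypothetical. It only encodes the rule described above: data moves from the institution holding it to the one requesting it only when a live consent from the customer covers that requester, that data type, and the current time.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ConsentArtefact:
    """Hypothetical consent record: who may receive which data, for what, until when."""
    customer_id: str
    requester: str          # institution allowed to receive the data
    data_types: frozenset   # categories of financial information covered
    purpose: str
    expires_at: datetime
    revoked: bool = False


class ConsentError(PermissionError):
    """Raised when no live consent covers a data request."""


def fetch(records: dict, consents: list, customer_id: str,
          requester: str, data_type: str, now: datetime):
    """Release a customer's records only if a live consent covers this exact request."""
    for c in consents:
        if (c.customer_id == customer_id and c.requester == requester
                and data_type in c.data_types and not c.revoked and now < c.expires_at):
            return records[(customer_id, data_type)]
    raise ConsentError(f"no valid consent for {requester} to read {data_type}")


records = {("cust-1", "DEPOSIT"): [{"month": "2024-01", "inflow": 52000}]}
consents = [ConsentArtefact("cust-1", "lender-A", frozenset({"DEPOSIT"}),
                            "loan underwriting", datetime(2024, 6, 30))]

print(fetch(records, consents, "cust-1", "lender-A", "DEPOSIT", datetime(2024, 5, 1)))
try:
    fetch(records, consents, "cust-1", "lender-B", "DEPOSIT", datetime(2024, 5, 1))
except ConsentError as err:
    print("refused:", err)
```

Because the permission is itself a structured record, it can be checked by software on every request, and expiry or revocation takes effect on the next request rather than depending on someone remembering to act.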

India’s National Health Claims Exchange, under Ayushman Bharat Digital Mission, similarly aims to standardize the exchange of health-claim information among payers, providers, and third-party administrators. When claims workflows, consent, and records become interoperable and auditable, AI can move from surface-level assistance toward real process improvement. (NHCX)

Singapore’s MyInfo offers another practical example. It allows individuals to pre-fill digital forms using verified data from government sources, reducing repetitive submission, manual verification, and friction across services. MyInfo is now integrated into more than 1,000 digital services, which is exactly what shared legibility looks like when it starts compounding across an ecosystem. (Singapore Government Developer Portal)

In digital media, the C2PA specification and Content Credentials aim to establish source and history information for content. In an AI-rich media environment, machines increasingly need to know not just what content says, but where it came from, whether it was altered, and how provenance can be checked. (C2PA)
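The idea of checkable provenance can be illustrated with a hash chain. This is a deliberately simplified sketch, not the C2PA manifest format: real Content Credentials embed cryptographically signed manifests containing assertions about each action and the ingredients it used. Here, each hypothetical record stores the hash of the content after an edit plus the hash of the previous record, so altering the content or rewriting an earlier step breaks verification. The actor names and byte strings are illustrative only.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def record_hash(record: dict) -> str:
    """Hash a provenance record deterministically."""
    return sha256_hex(json.dumps(record, sort_keys=True).encode("utf-8"))


def add_step(chain: list, content: bytes, action: str, actor: str) -> list:
    """Append a record linking this version of the content to the previous record."""
    prev = record_hash(chain[-1]) if chain else None
    record = {"action": action, "actor": actor,
              "content_hash": sha256_hex(content), "prev_record": prev}
    return chain + [record]


def verify_chain(chain: list, content: bytes) -> bool:
    """Check every link, then check that the final record matches the content in hand."""
    for i in range(1, len(chain)):
        if chain[i]["prev_record"] != record_hash(chain[i - 1]):
            return False
    return bool(chain) and chain[-1]["content_hash"] == sha256_hex(content)


original = b"raw photo bytes"
edited = b"cropped photo bytes"
chain = add_step([], original, "created", "camera-01")       # hypothetical actors
chain = add_step(chain, edited, "cropped", "newsroom-editor")
print(verify_chain(chain, edited))            # True
print(verify_chain(chain, b"swapped photo"))  # False: content no longer matches its history
```

Hashing alone only detects inconsistency; anyone could rebuild an entire chain from scratch. That is why real provenance systems also sign each record, tying the history to an accountable issuer.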

These examples all point in the same direction: shared legibility is becoming infrastructure.

Why nations, industries, and ecosystems must build it together

A Representation Commons cannot be built by one enterprise alone.

A firm can build a clean internal data model. It can improve its workflows. It can deploy strong copilots and decision systems. But if the surrounding ecosystem remains illegible, the benefits remain partial.

A logistics company still depends on ports, customs, freight records, insurers, and payment rails. A hospital still depends on labs, pharmacies, regulators, insurers, and claims systems. A bank still depends on identity systems, consent frameworks, counterparties, and verification layers. A manufacturer still depends on suppliers, certification bodies, trade documentation, logistics partners, and quality records.

That is why this is an ecosystem challenge rather than merely an enterprise challenge. Shared legibility creates the highest value precisely at the point of coordination. This is also why development and policy institutions increasingly emphasize interoperable digital public infrastructure, trusted data exchange, and inclusive governance as foundational to broad-based digital value creation. (OECD)

What happens if we do not build it

If societies do not invest in the Representation Commons, AI will still advance. But its benefits will flow unevenly.

Large firms with proprietary ecosystems will become easier for machines to trust. Smaller firms will remain harder to verify and integrate. Public services will struggle to scale reliable AI deployment. Cross-border coordination will stay expensive. Compliance will remain manual. Trust will become platform-dependent rather than portable.

This is a crucial point. The absence of shared legibility infrastructure does not stop AI. It changes who benefits from AI.

That makes the Representation Commons not only a technology issue, but also a market-design issue, an industrial-policy issue, and an inclusion issue.

A simple example: the small supplier

Imagine a small manufacturing supplier with excellent products.

Its quality records sit in PDFs.

Its certifications are emailed manually.

Its shipment data appears in different formats for different buyers.

Its sustainability claims are difficult to verify.

Its payment history is fragmented across systems.

Its compliance records are not machine-readable.

Now imagine a large buyer using AI to source vendors, assess risk, verify compliance, forecast delays, and automate procurement.

Who gets selected first?

Usually not the best supplier.

The best-represented supplier.

That is the heart of Representation Economics. In the AI economy, value does not flow only toward what is real. It flows toward what is legible.
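The selection effect can be made concrete with a toy screening model. It assumes a buyer's automated screen that can only score evidence it can parse and verify, so claims sitting in PDFs or emailed certificates count as zero. The supplier names, attribute values, and weights are hypothetical; the point is structural: a supplier with better underlying quality but weaker representation can rank below a weaker but more legible one.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    value: float            # underlying quality of this attribute, on a 0-1 scale
    machine_readable: bool
    verifiable: bool


@dataclass
class Supplier:
    name: str
    claims: dict            # attribute name -> Claim


# Hypothetical weights a buyer's screening system might use.
WEIGHTS = {"quality": 0.4, "compliance": 0.3, "delivery": 0.3}


def true_score(s: Supplier) -> float:
    """What the supplier is actually worth on these attributes."""
    return sum(w * s.claims[a].value for a, w in WEIGHTS.items())


def screened_score(s: Supplier) -> float:
    """What an automated screen can see: unparseable or unverifiable claims count as zero."""
    return sum(w * s.claims[a].value for a, w in WEIGHTS.items()
               if s.claims[a].machine_readable and s.claims[a].verifiable)


excellent = Supplier("excellent-but-illegible", {
    "quality": Claim(0.95, False, False),     # quality records sit in PDFs
    "compliance": Claim(0.90, False, False),  # certifications emailed manually
    "delivery": Claim(0.90, True, True),
})
average = Supplier("average-but-legible", {
    "quality": Claim(0.75, True, True),
    "compliance": Claim(0.80, True, True),
    "delivery": Claim(0.80, True, True),
})

for s in (excellent, average):
    print(f"{s.name}: true={true_score(s):.2f} screened={screened_score(s):.2f}")
```

Running it, the first supplier has the higher true score but the lower screened score. Nothing about its products changed; only how much of its reality the buyer's system could read.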

The Representation Commons reduces this distortion by making verification, interoperability, and trust less dependent on firm size or platform power.

What leaders should do now

Leaders who want AI to deliver broad-based value should think beyond tools and ask five practical questions.

First, what entities in our ecosystem remain poorly represented? Suppliers, patients, products, claims, permits, invoices, land parcels, emissions records, credentials, and licenses are common blind spots.

Second, which states and permissions are still trapped inside documents rather than machine-readable flows? Approvals, eligibility, coverage, quality status, consent, and audit trails often remain locked in manual formats.

Third, where is interoperability weakest? In many industries, the problem is not internal AI capability. It is the gap between ministries, banks, hospitals, ports, insurers, counterparties, and cross-border systems.

Fourth, what must become portable and verifiable? Identity, provenance, credentials, claims history, compliance artifacts, and delegation rules are prime candidates.

Fifth, which parts of our future AI value depend on shared infrastructure rather than internal tooling alone? This is often the biggest strategic blind spot of all.

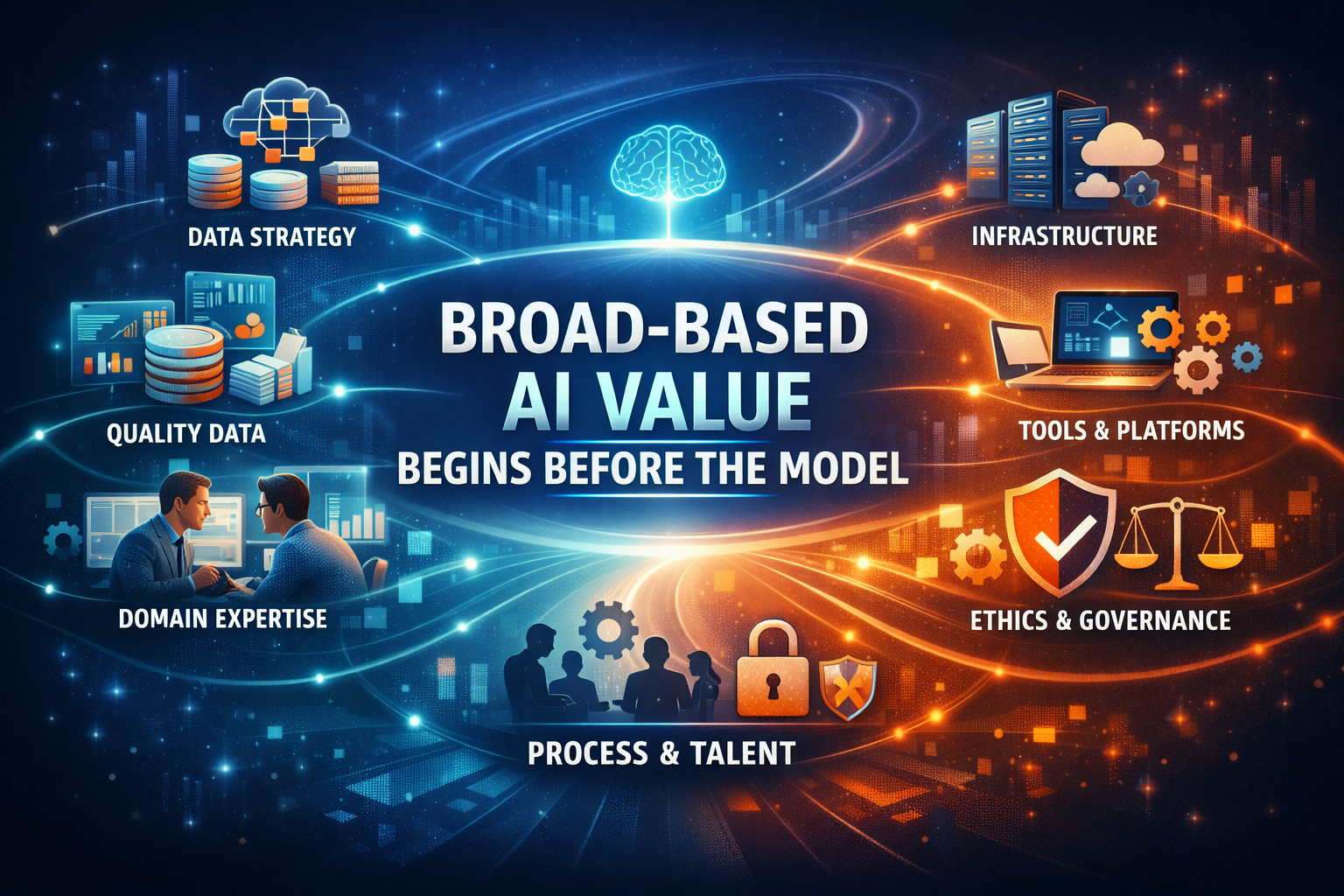

Conclusion: broad-based AI value begins before the model

The next decade of AI will not be won by intelligence alone.

It will be won by the nations, industries, and ecosystems that make reality easier for machines to understand, verify, and act on responsibly. The Representation Commons is the layer that turns AI from isolated capability into systemic value. It is how countries reduce friction, industries improve coordination, ecosystems widen participation, and institutions make trust more portable.

Before AI can create value for everyone, the world must first become legible enough for AI to work with everyone.

That is not only a model problem. It is a representation problem.

Glossary

Representation Commons

The shared infrastructure of standards, identifiers, provenance systems, and governance rails that makes reality machine-readable across institutions.

Representation Economics

A framework for understanding how value in the AI era increasingly depends on how well entities, claims, permissions, and states are represented for machine reasoning and action.

Shared Legibility Infrastructure

The technical and institutional systems that help machines consistently interpret and trust real-world entities and events across boundaries.

Machine-Readable Reality

A form of representation in which information is structured so software and AI systems can process, verify, and act on it reliably.

Verifiable Credentials

Cryptographically secure, machine-verifiable digital credentials used to express claims such as licenses, degrees, or certifications. (W3C)

Digital Public Infrastructure

Foundational digital systems such as identity, consent, payments, and data exchange rails that support broad participation in modern economies.

Provenance

Information about the source, history, and modification path of a digital asset or claim. (C2PA)

Interoperability

The ability of systems, institutions, or platforms to exchange and use information consistently across boundaries.

Portable Trust

Trust that can move across systems because it is attached to standardized, verifiable representations rather than to one closed platform.

FAQ

What is the Representation Commons in simple words?

It is the shared infrastructure that makes people, products, claims, permissions, and transactions easier for machines to understand and trust across systems.

Why is the Representation Commons important for AI?

Because even powerful AI systems underperform when the surrounding world is fragmented, unverifiable, and hard to interpret.

How is this different from better AI models?

Better models improve reasoning. The Representation Commons improves what the model can reliably reason about.

Is this mainly a government issue?

No. Governments matter, but industries, standards bodies, platforms, and ecosystems all play a role in making shared legibility possible.

What are real examples of the Representation Commons?

Examples include the EU Digital Identity Wallet, W3C Verifiable Credentials, India’s Account Aggregator framework, India’s National Health Claims Exchange, Singapore’s MyInfo, and C2PA Content Credentials. (European Commission)

Why should boards care?

Because future AI advantage will depend not only on deploying models, but on operating inside ecosystems where trust, verification, and coordination can scale.

What does “AI value begins before the model” mean?

It means that the structure and legibility of data and systems determine whether AI can create value—before intelligence is applied.

References and further reading

- OECD — governing with AI in the public sector and the enabling foundations for adoption. (OECD)

- NIST — AI Risk Management Framework and trustworthiness guidance. (NIST Publications)

- European Commission — EU Digital Identity Wallet framework and interoperability path. (European Commission)

- W3C — Verifiable Credentials Data Model 2.0. (W3C)

- Government of India — Account Aggregator framework and National Health Claims Exchange. (Department of Financial Services)

- Singapore Government — MyInfo digital data-sharing infrastructure. (Singapore Government Developer Portal)

- C2PA — content provenance and authenticity standards. (C2PA)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

- Representation Debt: Why Institutions Accumulate Hidden AI Risk Long Before Failure Becomes Visible

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy

- Representation Failure: Why AI Systems Break When Institutions Misread Reality

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy

- The Representation Balance Sheet: How AI Is Redefining Assets, Liabilities, and Institutional Strength

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy

- Representation Economics: The New Law of Value Creation in the AI Era

- The Representation Reserve Currency: Why AI Will Trust Only a Few Forms of Reality

- The Machine-Readable Boundary of the Firm: How AI Is Redefining What Companies Own, Outsource, and Orchestrate

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy

- Representation Arbitrage: The New AI Advantage That Will Redefine Who Wins and Who Disappears

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.