The Representation Economy

AI is changing more than work. It is changing institutional architecture.

Artificial intelligence is not only changing how organizations automate tasks. It is changing how institutions observe reality, interpret signals, decide what matters, and act with authority.

That is the deeper shift.

For the last few years, the AI conversation has been dominated by model-centric questions. Which model is bigger? Which model is cheaper? Which model is safer? Which model generates better text, code, images, or predictions?

Those questions still matter. But they no longer explain where durable institutional advantage will come from.

The next era of advantage will not belong only to institutions with powerful models. It will belong to institutions that can build a better representation of reality and turn that representation into better decisions, safer execution, and more legitimate action. That is why the next economy being shaped by AI is not just an automation economy. It is a representation economy.

This shift is happening at a moment when AI adoption has accelerated sharply. Stanford’s 2025 AI Index reports that 78% of organizations said they used AI in 2024, up from 55% the year before, and that global private investment in generative AI reached $33.9 billion in 2024.

At the same time, the governance environment is becoming more operational: the OECD AI Principles were updated in 2024, NIST continues to expand practical AI risk guidance, and the EU AI Act is being phased in progressively, with key provisions already applying and full rollout currently scheduled through August 2027. (Stanford HAI)

In other words, AI is no longer a side experiment. It is becoming part of institutional architecture.

And once AI becomes institutional architecture, a more important question appears:

How should institutions be designed when perception, reasoning, and action are increasingly machine-mediated?

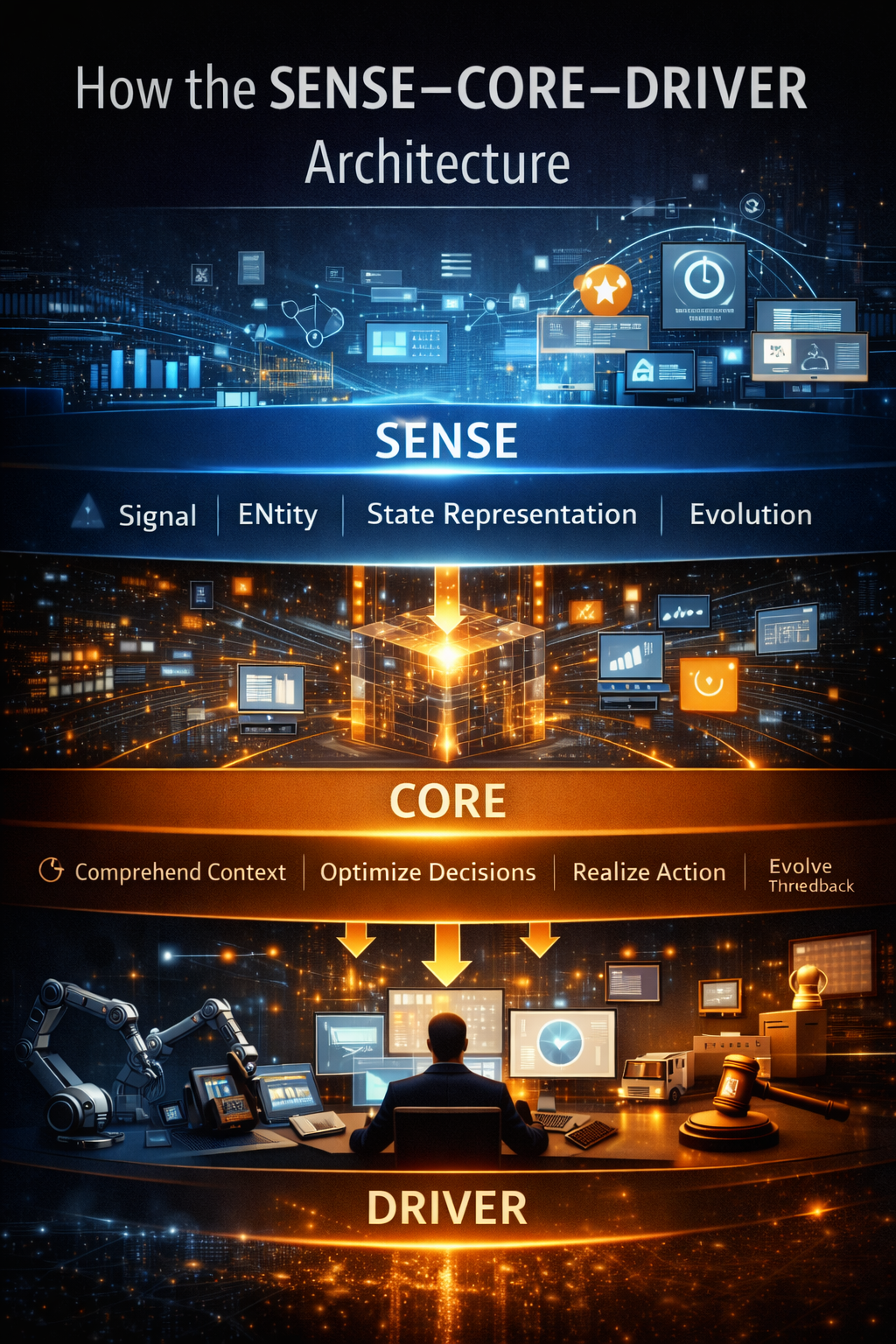

My answer is the SENSE–CORE–DRIVER architecture.

It is a practical framework for understanding how intelligent institutions will operate in the representation economy.

- SENSE is the legibility layer: how reality becomes machine-readable.

- CORE is the cognition layer: how institutions reason over that reality.

- DRIVER is the legitimacy and execution layer: how decisions become authorized, governed, and real.

This is not merely a technology stack. It is an institutional stack.

And the institutions that master it will not simply “use AI better.” They will see earlier, decide better, act faster, and govern more credibly than their competitors.

What Is the Representation Economy?

The Representation Economy is an emerging economic paradigm in which institutions compete based on their ability to continuously sense reality, reason about it, and execute decisions through intelligent systems.

In earlier economic eras, advantage came from:

- labor

- capital

- industrial production

- software automation

In the Representation Economy, advantage increasingly comes from the quality of institutional representations of reality.

Organizations that can accurately represent the world — customers, markets, risks, operations, and environments — can make better decisions faster.

To operate in this new environment, institutions require a new architecture.

That architecture can be understood through three layers:

- SENSE – making reality legible through signals and state representations

- CORE – reasoning about decisions using institutional knowledge and models

- DRIVER – executing delegated actions with legitimacy, verification, and accountability

Together, these layers form the SENSE–CORE–DRIVER architecture of intelligent institutions.

Key Takeaways

- AI is transforming institutions into intelligent decision systems

- Competitive advantage will shift to representation capability

- The SENSE–CORE–DRIVER architecture defines how intelligent institutions operate

- New institutions will emerge around decision infrastructure and legitimacy platforms

The big shift: from automation to representation

Every major economic era is built on a new form of leverage.

The industrial era scaled muscle.

The information era scaled communication.

The software era scaled transactions and workflows.

The AI era is beginning to scale something deeper: representation.

Representation is the structured ability to make the world legible enough for systems to interpret and act on it.

A hospital does not merely store patient records. It represents a patient’s evolving condition.

A bank does not merely process transactions. It represents trust, intent, exposure, and obligation.

A city does not merely collect data. It represents movement, congestion, safety, demand, and public behavior.

A supply chain does not merely move goods. It represents inventory state, supplier reliability, demand volatility, and execution risk.

The better the representation, the better the decision.

This matters because AI systems do not act directly on raw reality. They act on representations of reality: signals, entities, states, labels, graphs, histories, policies, and feedback loops. That is why the next institutional advantage will not come only from training better models. It will come from answering deeper questions:

- What part of reality do we capture?

- How accurately do we represent it?

- How quickly do we update it?

- How intelligently do we reason over it?

- How safely and legitimately do we convert it into action?

That is why the representation economy matters. It shifts the conversation from “AI as a tool” to AI as institutional perception, institutional cognition, and institutional execution.

Why institutions now need a new architecture

Most institutions were built for an earlier world.

They were designed for human review, periodic reporting, fragmented data, delayed escalation, and mostly manual action. In that world, it was acceptable for reality to be only partially visible, for decisions to be slow, and for execution to be mediated through committees, forms, and time.

That world is disappearing.

In an AI-rich environment, institutions increasingly face continuous streams of signals instead of periodic updates, dynamic entities instead of static records, real-time risk instead of quarterly summaries, delegated workflows instead of purely human processing, and machine-generated recommendations that can rapidly turn into machine-executed outcomes.

That makes older architectures insufficient.

Traditional institutions often suffer from four structural gaps:

- They cannot see clearly

Data is fragmented across systems, teams, vendors, forms, and channels. There is no stable institutional picture of reality.

- They cannot reason coherently

Even when data exists, it is not connected or contextualized well enough to support cross-functional decisions.

- They cannot execute safely

AI may suggest an action, but the institution often lacks clear authority boundaries, verification pathways, and recourse mechanisms.

- They cannot learn institutionally

Actions happen, but the institution does not retain the decision trail, exception logic, and outcome memory well enough to improve.

This is exactly why practical governance matters. NIST’s AI Risk Management Framework and its Generative AI Profile emphasize governance, context mapping, measurement, and active risk management. OECD guidance similarly highlights accountability, robustness, transparency, and lifecycle monitoring. The point is not that institutions need more policy language. The point is that they need an architecture that can operationalize those principles. (NIST)

That is where SENSE–CORE–DRIVER becomes useful.

It offers a simple but powerful answer to a hard question:

How do intelligent institutions turn reality into governed action?

Why the Representation Economy Matters

The Representation Economy will change how institutions compete.

Three shifts are already visible:

- Decision speed becomes strategic

Organizations that can sense reality faster and reason better will outperform slower institutions.

- Institutional memory becomes an asset

Decisions, exceptions, and outcomes become structured knowledge that improves future reasoning.

- Governance becomes infrastructure

As machines participate in decision systems, institutions must define:

- authority

- verification

- accountability

- recourse

This creates entirely new categories of institutional infrastructure.

The SENSE Layer: Making Reality Legible

SENSE is where the institution learns to see

SENSE is the first layer of an intelligent institution. It is where reality becomes visible enough for machine-assisted decision-making.

SENSE stands for:

- Signal — detecting events, changes, and traces from the world

- ENtity — attaching those signals to a persistent actor, object, location, account, or asset

- State representation — building a structured model of the current condition of that entity

- Evolution — updating that state over time as new signals arrive

SENSE is not just data collection. It is institutional legibility.

Example: fraud detection

A traditional system may inspect a single transaction and ask, “Does this look suspicious?”

A SENSE-based institution sees something richer:

- the signal: a high-value transfer from a new device

- the entity: a specific customer, merchant, and account network

- the state: recent login reset, unusual geography, new payee, altered spending pattern

- the evolution: three small test transactions preceded this event, and similar sequences appeared in earlier fraud cases

That is not merely more data. It is a more usable representation of reality.
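The four SENSE steps in the fraud example can be pictured with a minimal Python sketch. It is an illustration under stated assumptions, not a reference implementation: the `Signal` and `EntityState` classes, their field names, and the 5.0 cutoff for a "test" transaction are all hypothetical and chosen only to mirror the example above.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Signal:
    """One observed event (hypothetical schema)."""
    entity_id: str        # Entity: the persistent actor the signal attaches to
    kind: str             # e.g. "login_reset", "new_payee", "transfer"
    amount: float
    observed_at: datetime


@dataclass
class EntityState:
    """State representation: the current condition of one entity."""
    entity_id: str
    flags: set = field(default_factory=set)
    small_transfers: int = 0
    history: list = field(default_factory=list)  # Evolution: ordered signals

    def apply(self, signal: Signal) -> None:
        """Fold one new signal into the state."""
        self.history.append(signal)
        if signal.kind == "transfer":
            if signal.amount < 5.0:  # assumed cutoff for a "test" transfer
                self.small_transfers += 1
        else:
            self.flags.add(signal.kind)


def build_states(signals):
    """Attach each signal to its entity and update that entity's state in time order."""
    states = {}
    for s in sorted(signals, key=lambda s: s.observed_at):
        states.setdefault(s.entity_id, EntityState(s.entity_id)).apply(s)
    return states
```

A downstream fraud check would then read `states["cust-1"].flags` and `small_transfers` together, rather than scoring the latest transfer in isolation.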

Why SENSE matters across industries

The same pattern applies everywhere.

In healthcare, SENSE links symptoms, labs, medication history, and deterioration over time.

In manufacturing, it links vibration readings, asset identity, maintenance state, and degradation patterns.

In logistics, it links shipment events, route changes, weather, supplier reliability, and exception history.

In education, it links learner behavior, concept mastery, engagement signals, and progression trajectory.

The key shift is this: institutions stop working from isolated records and start working from living representations.

That is also why AI readiness increasingly depends on strong data governance, digital infrastructure, institutional reform, skills, and local ecosystems. The World Bank’s 2025 work on strengthening AI foundations explicitly frames AI readiness as a core pillar and highlights data governance, institutional reform, and local innovation capacity as essential foundations for meaningful AI adoption. (World Bank)

Why many AI initiatives fail before they even begin

Many AI initiatives fail not because the model is weak, but because the institution cannot make reality legible.

If signals are sparse, entity resolution is broken, state is stale, or evolution is missing, the model is forced to reason over a distorted world.

That is like asking an excellent pilot to fly with a cracked windshield and delayed instruments.
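A simple pre-flight check can make these four failure conditions explicit before any model is asked to reason. The sketch below is a hypothetical helper, not an established API: the function name `legibility_gaps`, the default of three signals, and the 24-hour freshness window are assumptions picked for illustration.

```python
from datetime import datetime, timedelta


def legibility_gaps(entity_id, signal_times, now,
                    min_signals=3, max_age=timedelta(hours=24)):
    """Return the reasons a representation should not yet be trusted.

    entity_id    -- resolved identifier, or "" if entity resolution failed
    signal_times -- datetimes at which signals for this entity were observed
    now          -- the moment the decision is being made
    """
    gaps = []
    if not entity_id:
        gaps.append("unresolved entity")
    if len(signal_times) < min_signals:
        gaps.append("sparse signals")
    if not signal_times or now - max(signal_times) > max_age:
        gaps.append("stale state")
    if len(set(signal_times)) < 2:
        # Fewer than two distinct observation times means no trajectory.
        gaps.append("no evolution history")
    return gaps
```

An empty list means the representation passes these basic checks. Anything else is a reason to hold the case back or route it to a person before the model reasons over it.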

What a mature SENSE layer usually includes

A mature SENSE layer often includes:

- event streams from internal and external systems

- entity resolution across customers, assets, accounts, products, and cases

- state models that summarize the current condition

- temporal memory showing how state changed over time

- confidence controls that distinguish strong signals from noisy ones

Consider an insurance claim.

Without SENSE, the institution sees a form.

With SENSE, it sees claimant history, policy state, incident timing, repair estimates, linked entities, suspicious pattern overlaps, and previous adjudication outcomes.

That is the difference between paperwork and representation.

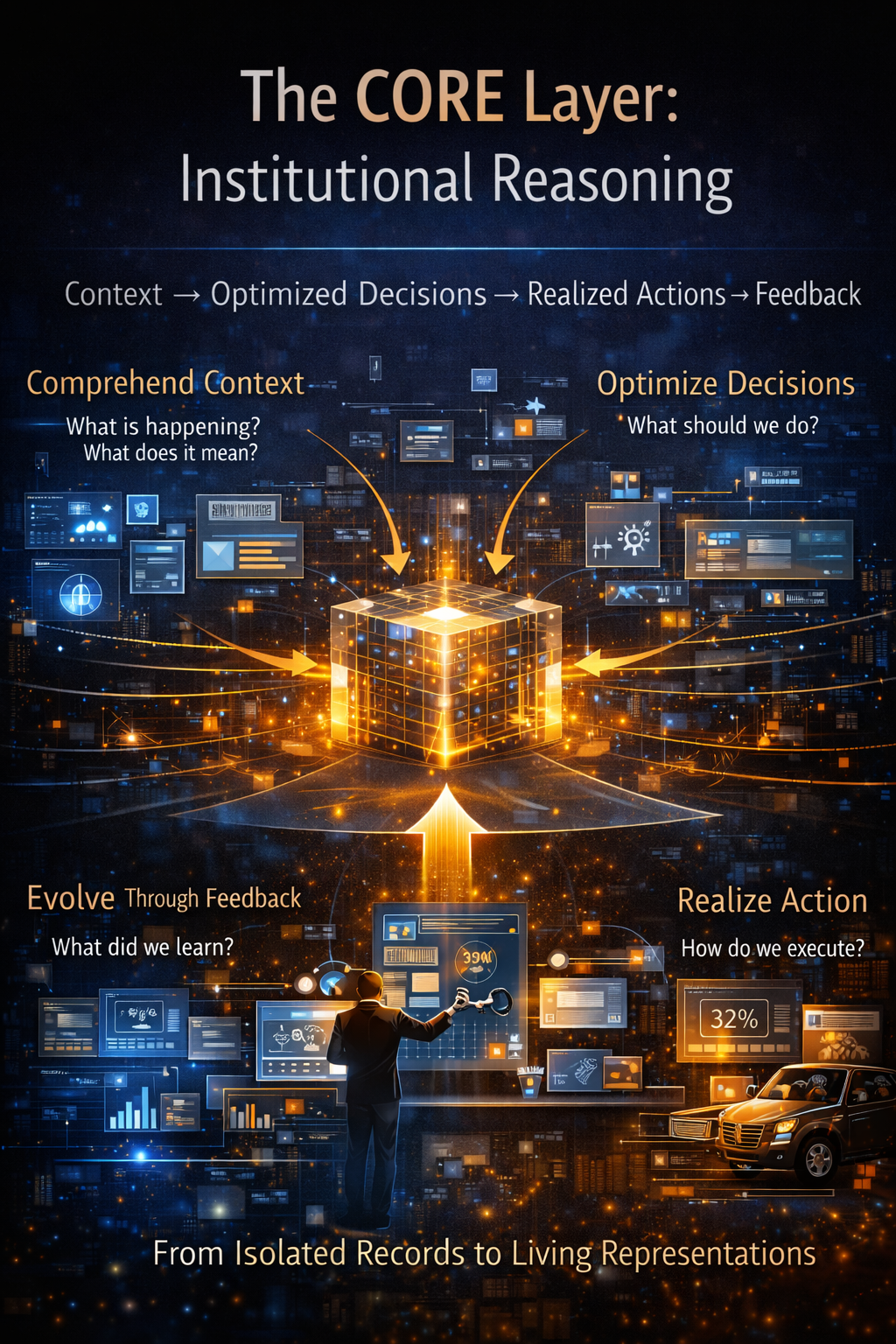

The CORE Layer: Institutional Reasoning

If SENSE makes reality legible, CORE makes it intelligible

CORE is the reasoning layer of the institution.

It stands for:

- Comprehend context

- Optimize decisions

- Realize action

- Evolve through feedback

This is where AI models, heuristics, rules, simulations, workflows, domain logic, causal assumptions, business goals, and policy constraints come together.

In simple terms, CORE answers four questions:

- What is happening?

- What does it mean?

- What should we do?

- What did we learn?

Why CORE is much more than “using a model”

Many organizations still think of AI as a smart assistant sitting beside a workflow.

But in serious institutional environments, CORE is not just a chatbot or prediction endpoint. It is a decision system.

Take enterprise lending as an example.

- SENSE creates a living representation of the borrower, transaction patterns, collateral, sector conditions, and repayment state.

- CORE reasons over creditworthiness, fraud signals, policy rules, concentration risk, exposure limits, and scenarios.

- DRIVER determines whether the proposed action can be executed, by whom, under what conditions, and with what recourse.

That middle step is where institutional intelligence is actually created.

Comprehend context

This is the part many organizations underestimate.

A model output is not context.

Context is the institution’s ability to place a signal inside a meaningful decision frame.

A late payment means one thing for a new borrower with unstable cash flow. It means something entirely different for a long-standing customer during a known sector shock.

Context is what turns pattern recognition into judgment.
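The late-payment example can be written down as a small Python sketch. Everything in it is an assumption made for illustration: the `BorrowerContext` fields, the 12- and 36-month cutoffs, and the three labels are hypothetical, and a real institution would derive them from its own credit policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BorrowerContext:
    """The decision frame around a signal (hypothetical fields)."""
    months_as_customer: int
    cash_flow_stable: bool
    sector_shock_active: bool


def interpret_late_payment(ctx: BorrowerContext) -> str:
    """Read the same signal, a late payment, differently depending on context."""
    # Long-standing customer during a known sector shock.
    if ctx.months_as_customer >= 36 and ctx.sector_shock_active:
        return "temporary_stress"
    # New borrower with unstable cash flow.
    if ctx.months_as_customer < 12 and not ctx.cash_flow_stable:
        return "elevated_risk"
    # Anything else is not clear enough to classify automatically.
    return "needs_more_context"
```

The signal is identical in every call. Only the frame around it changes the reading.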

Optimize decisions

Once context is understood, CORE helps the institution choose among alternatives.

In retail, that may mean deciding between discounting, replenishment, or stock reallocation.

In healthcare, it may mean triage, escalation, or watchful waiting.

In cybersecurity, it may mean isolate, monitor, challenge, or block.

In public services, it may mean prioritize review, release payment, or request additional verification.

Optimization here does not always mean mathematical maximization. In real institutions, it usually means balancing speed, cost, safety, fairness, policy, trust, and strategic intent.
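One common way to express this kind of balancing is a weighted score across criteria, applied only to options that policy permits. The sketch below uses that technique as an illustration; it is not presented as the method any particular institution uses. The function name `choose_action`, the criteria, and the weights are hypothetical.

```python
def choose_action(options, weights, permitted=None):
    """Pick the permitted option with the highest weighted score.

    options   -- {option_name: {criterion: score between 0 and 1}}
    weights   -- {criterion: relative importance}
    permitted -- optional set of option names allowed by policy
    """
    candidates = {
        name: scores for name, scores in options.items()
        if permitted is None or name in permitted
    }
    if not candidates:
        raise ValueError("no permitted options to choose from")

    def total(scores):
        # Criteria missing from an option count as zero.
        return sum(w * scores.get(criterion, 0.0)
                   for criterion, w in weights.items())

    totals = {name: total(scores) for name, scores in candidates.items()}
    best = max(totals, key=totals.get)
    return best, totals


# Illustrative cybersecurity response options (made-up scores).
weights = {"safety": 0.5, "speed": 0.3, "cost": 0.2}
options = {
    "isolate": {"safety": 0.9, "speed": 0.6, "cost": 0.3},
    "monitor": {"safety": 0.4, "speed": 0.9, "cost": 0.9},
    "block":   {"safety": 0.8, "speed": 0.8, "cost": 0.5},
}
best, totals = choose_action(options, weights)
```

Policy acts as a hard filter here, not as one more weight, so an option that is not permitted cannot win however well it scores.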

Realize action

A decision that cannot be translated into an operational pathway is only a suggestion.

CORE therefore needs interfaces to workflows, systems, tools, forms, and teams. It must know how a decision becomes executable in the real institution.

Evolve through feedback

This is what separates static automation from intelligent institutions.

An institution becomes smarter when it learns not only from outcomes, but from overrides, disputes, exceptions, failures, and unintended consequences.

If humans consistently overturn a recommendation, that matters.

If an action works for one segment but fails for another, that matters.

If a rule appears efficient but creates downstream unfairness or legal risk, that matters.
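The first two of these signals can be captured with very little machinery. The Python sketch below is a minimal illustration under stated assumptions: decisions are recorded as `(segment, was_overridden)` pairs, and the 0.3 review threshold is an arbitrary placeholder, not a recommended value.

```python
from collections import defaultdict


def override_rates(decisions):
    """Share of recommendations that humans overturned, per segment.

    decisions -- list of (segment, was_overridden) pairs
    """
    totals = defaultdict(int)
    overridden = defaultdict(int)
    for segment, was_overridden in decisions:
        totals[segment] += 1
        if was_overridden:
            overridden[segment] += 1
    return {segment: overridden[segment] / totals[segment] for segment in totals}


def segments_to_review(decisions, threshold=0.3):
    """Segments whose override rate exceeds the (assumed) review threshold."""
    rates = override_rates(decisions)
    return sorted(segment for segment, rate in rates.items() if rate > threshold)
```

A segment that appears in `segments_to_review` is a prompt to revisit the rule or model for that segment. It is not proof that the recommendation was wrong.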

That lifecycle mindset is strongly aligned with current governance thinking. OECD and NIST both emphasize that responsible AI cannot be treated as a one-time compliance event; it must be continuously monitored, assessed, and improved over time. (NIST Publications)

The simple analogy

SENSE is the institution’s eyes and ears.

CORE is the institution’s judgment.

Without SENSE, CORE is blind.

Without CORE, SENSE is only noise.

The DRIVER Layer: Delegated Action and Legitimacy

This is the layer most AI strategies still underestimate

DRIVER is where AI moves from recommendation to real-world consequence.

It stands for:

- Delegation — who authorized the system to act

- Representation — what version of reality the system relied on

- Identity — which entity was affected

- Verification — how the decision is checked

- Execution — how the action is carried out

- Recourse — what happens if the system is wrong

DRIVER is the legitimacy layer.

It is what turns institutional action into something governable.

Why DRIVER matters now

A recommendation is not the same thing as an action.

A model suggesting “possible fraud” is one thing. Freezing a customer’s account is another.

A system suggesting “possible tumor” is one thing. Altering a treatment path is another.

A system suggesting “high-risk borrower” is one thing. Denying credit is another.

The moment AI crosses from analysis to execution, legitimacy becomes central.

That is why governance frameworks and regulations increasingly focus on accountability, transparency, oversight, and risk controls. The OECD AI Principles stress trustworthy AI and accountability. NIST’s risk framework focuses on governance and responsible use. The European Commission’s official AI Act timeline shows that obligations are arriving in stages, including general provisions, AI literacy, GPAI rules, governance obligations, and later high-risk system requirements. (OECD)

Delegation

Who gave the machine the right to act?

This is not a symbolic question. It is an architectural one.

Was a delegation policy approved?

Are actions bounded by risk category?

Can authority vary by context, customer segment, or confidence threshold?

Is the system proposing actions, executing them, or both?

Representation

What version of reality did the system rely on when it acted?

If the underlying representation was incomplete, stale, or wrong, the action may be illegitimate even if the model’s internal logic was consistent.

Identity

Who or what is affected?

A legitimate institution must know whether it is acting on the correct customer, patient, citizen, employee, asset, machine, case, or account.

Verification

How is the action checked before it becomes real?

This can involve rule validation, policy checks, confidence thresholds, anomaly screens, multi-source confirmation, or required human sign-off.

Execution

What exactly is carried out?

Send alert? Freeze account? Approve payment? Route a patient? Change access rights? Shut down a machine? Trigger investigation?

Execution must be bounded, observable, logged, and reversible where possible.

Recourse

What happens if the system is wrong?

Can the customer appeal?

Can the employee challenge it?

Can the clinician override it?

Can the institution reconstruct the logic and timeline?

Can the decision be reversed fast enough to matter?

Without recourse, automation becomes brittle power.

With recourse, it becomes governable authority.

That is the real promise of DRIVER: it turns AI from a clever tool into an accountable institutional actor.
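The six DRIVER elements can be read as checks on a single gate that every proposed action must pass. The Python sketch below is one possible shape for such a gate, offered as an assumption-laden illustration. The names `DelegationPolicy`, `ProposedAction`, and `driver_gate`, and all thresholds, are hypothetical and not drawn from any standard or library.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DelegationPolicy:
    allowed_actions: frozenset   # Delegation: what the system may do on its own
    min_confidence: float        # Verification: minimum confidence to proceed
    max_state_age: timedelta     # Representation: how fresh the state must be


@dataclass(frozen=True)
class ProposedAction:
    action: str
    entity_id: str               # Identity: who or what is affected
    confidence: float
    state_as_of: datetime        # timestamp of the representation relied on


def driver_gate(proposal, policy, now, audit_log):
    """Decide whether to execute or escalate, and record why."""
    reasons = []
    if proposal.action not in policy.allowed_actions:
        reasons.append("action not delegated")
    if now - proposal.state_as_of > policy.max_state_age:
        reasons.append("representation is stale")
    if not proposal.entity_id:
        reasons.append("identity unresolved")
    if proposal.confidence < policy.min_confidence:
        reasons.append("confidence below threshold")

    # Execution: act only when every check passes; otherwise escalate.
    outcome = "execute" if not reasons else "human_review"

    # Recourse: keep enough detail to reconstruct the decision later.
    audit_log.append({
        "at": now, "proposal": proposal, "outcome": outcome, "reasons": list(reasons),
    })
    return outcome, reasons
```

Every call appends to the audit log, whether or not the action runs, because an appeal needs the record of an escalated action as much as that of an executed one.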

Why this architecture was difficult to build earlier

For most of history, institutions could not build this architecture at scale.

Three things were missing.

- Reality was too hard to capture

Signals were sparse, analog, delayed, or disconnected.

- Reasoning was too expensive

Even when data existed, it was difficult to reason across thousands or millions of dynamic cases.

- Execution infrastructure was too fragmented

Institutions lacked the identity, workflow, observability, software, and governance layers required to convert machine recommendations into controlled action.

That is changing now because several capabilities are arriving together: richer digital traces, better data infrastructure, stronger identity and workflow systems, more capable AI models, and maturing governance frameworks around risk, oversight, and accountability. Stanford, NIST, OECD, the World Bank, and the EU’s own AI governance infrastructure all point to the same broader pattern: AI is moving from experimentation to institutionalization. (Stanford HAI)

This is why the present moment matters.

The technology is now strong enough to make representation economically valuable. The governance environment is becoming mature enough to make representation institutionally acceptable.

Where the SENSE–CORE–DRIVER framework works best

This framework is especially powerful where five conditions are present:

- Reality changes quickly

Fraud, logistics, cyber threats, patient deterioration, and supply volatility are dynamic by nature.

- Decisions are frequent

The institution must make many decisions continuously, not occasionally.

- Stakes are meaningful

Mistakes have financial, legal, operational, human, or reputational costs.

- Context matters

Simple rules are not enough.

- Action must be governed

The institution cannot afford opaque or uncontrolled automation.

That is why this framework is especially relevant in:

- banking and financial services

- healthcare

- insurance

- public services

- critical infrastructure

- manufacturing

- supply chains

- cybersecurity

- digital platforms

- education systems at scale

In all these areas, the future winner is unlikely to be the organization with only the best model. It is more likely to be the organization with the best institutional representation and governed execution.

Where the framework can fail

No architecture is magic.

SENSE–CORE–DRIVER can fail in predictable ways, and naming those failure modes is important because serious board-level strategy is as much about limits as it is about promise.

Failure 1: false legibility

The institution believes it sees reality clearly, but the signals are biased, delayed, incomplete, or mislinked.

Failure 2: brittle reasoning

CORE becomes overconfident, shallow, or optimized for the wrong target.

Failure 3: illegitimate execution

The institution automates actions without sufficient delegation, verification, explanation, or recourse.

Failure 4: governance theater

Policies look polished on paper, but live systems run through fragile scripts, disconnected vendors, silent overrides, and invisible operational shortcuts.

Failure 5: memory failure

The institution acts, but does not learn because feedback, exceptions, and downstream outcomes are not captured in a durable institutional memory.

This is why SENSE–CORE–DRIVER should not be treated as a one-time technology project. It must be treated as a living institutional discipline.

What new institutions may emerge in the representation economy

Once representation becomes strategic, new institutional forms begin to emerge.

- Representation-native enterprises

These organizations are built around continuously updated operational reality rather than delayed management summaries.

- Decision infrastructure firms

These firms compete not mainly on software features, but on superior institutional reasoning and controlled execution.

- Legitimacy platforms

New layers arise around auditability, traceability, identity binding, decision verification, and recourse orchestration.

- Institutional memory systems

These systems preserve not just data, but decision history, exception logic, override patterns, and outcome learning.

- Delegated action markets

These are environments in which machine actors perform bounded tasks under explicit policy, authority, and accountability rules.

That is where the representation economy becomes larger than enterprise software. It begins to redefine how institutional power itself is organized.

Historical precedents: every major leap in coordination began with better representation

History offers a useful pattern.

When accounting improved, firms became more governable.

When maps improved, states became more navigable.

When double-entry bookkeeping spread, commerce scaled.

When clocks standardized time, industrial coordination became possible.

When databases matured, digital business expanded.

When search engines organized the web, information became usable at scale.

Each of these shifts made some part of reality more legible.

The representation economy is the next step in that pattern.

It is not just about storing more data. It is about creating actionable, governable, continuously updated representations of the world.

That is why this moment matters so much. Institutions are moving from record-keeping to reality-modeling.

Why this matters strategically for boards, CEOs, and CTOs

The most important implication of this framework is that AI strategy can no longer remain model-centric.

Boards and executive teams now need to ask harder questions:

- What reality does our institution currently fail to see?

- Where is our representation of customers, assets, operations, and risk too shallow?

- Which decisions are still being made with low-quality context?

- Where are we automating without legitimacy?

- What recourse exists when machine-mediated action is wrong?

- What institutional memory are we building from exceptions and outcomes?

These are not technical questions alone. They are strategic governance questions.

They shape resilience.

They shape speed.

They shape trust.

They shape enterprise value.

That is why the representation economy is not a niche academic concept. It is a board-level design problem.

The Future of Institutional Architecture

Over the next decade, the institutions that dominate their sectors will not simply deploy more AI tools.

They will redesign themselves around representation infrastructure.

They will build systems that:

- continuously sense reality

- reason through institutional knowledge

- execute delegated actions responsibly

The institutions that master this architecture will define the next era of economic competition.

This is the logic of the Representation Economy.

Conclusion: the next institutional advantage will be built on legibility, reasoning, and legitimacy

The biggest AI shift is not that machines can now generate language.

It is that institutions can increasingly build machine-mediated representations of reality, reason over them continuously, and act on them at scale.

That changes the architecture of the enterprise.

It changes the architecture of governance.

It changes the architecture of trust.

In the industrial age, advantage came from owning production capacity.

In the software age, advantage came from owning digital workflows.

In the representation economy, advantage will come from owning the best way to make reality legible, reason over it intelligently, and act on it legitimately.

That is why intelligent institutions will increasingly run on the SENSE–CORE–DRIVER architecture.

Not because it sounds elegant.

Because in an AI-shaped world, institutions will win or fail on three capabilities:

Can they see?

Can they think?

Can they act with legitimacy?

SENSE. CORE. DRIVER.

That is not just a framework.

It may become the architecture of the next institution.

FAQ: The Representation Economy and the SENSE–CORE–DRIVER Architecture

- What is the representation economy?

It is the idea that competitive advantage increasingly comes from how well institutions represent reality, reason over that representation, and convert it into governed action.

- How is the representation economy different from the automation economy?

Automation focuses mainly on task execution. The representation economy focuses on perception, reasoning, and legitimate execution.

- Why does AI make representation more important?

Because AI systems depend on structured representations of the world rather than raw reality itself.

- Is representation just another word for data?

No. Data is raw input. Representation is organized, contextualized, decision-ready understanding.

- What does SENSE stand for?

Signal, ENtity, State representation, and Evolution.

- What does CORE stand for?

Comprehend context, Optimize decisions, Realize action, and Evolve through feedback.

- What does DRIVER stand for?

Delegation, Representation, Identity, Verification, Execution, and Recourse.

- Why is SENSE important?

Because institutions cannot reason well if the reality they are observing is fragmented or distorted.

- Why is CORE important?

Because intelligence is not only prediction. It is context-aware institutional reasoning.

- Why is DRIVER important?

Because execution without legitimacy creates risk, mistrust, and institutional fragility.

- Is this framework only for large enterprises?

No. The logic applies to startups, governments, hospitals, banks, universities, and digital platforms.

- Is SENSE basically data engineering?

Not exactly. Data engineering supports SENSE, but SENSE also includes entity resolution, state modeling, and temporal evolution.

- Is CORE just a large language model?

No. CORE can include models, rules, workflows, domain knowledge, business policy, human judgment, and feedback systems.

- Is DRIVER just compliance?

No. DRIVER is about legitimacy in execution, not just documentation.

- What is institutional legibility?

It is the ability of an institution to observe and structure relevant reality clearly enough to act intelligently.

- What is institutional reasoning?

It is an organization’s ability to interpret context, compare options, and choose action in line with goals and constraints.

- What is delegated action?

It is when a system is allowed to propose, initiate, or execute actions within clearly authorized boundaries.

- Why is recourse important?

Because even capable systems can be wrong, and institutions need fair correction pathways.

- Can this framework work in banking?

Yes. It is highly relevant for fraud, credit, underwriting, collections, compliance, and customer operations.

- Can it work in healthcare?

Yes. It helps connect patient state, context, intervention logic, safety checks, and execution boundaries.

- Can it work in government?

Yes. It is valuable for case handling, eligibility assessment, regulatory review, and service delivery.

- Can it work in cybersecurity?

Yes. It is especially powerful for sensing threats, reasoning over attack context, and enabling controlled response.

- What is the biggest mistake institutions make with AI?

They focus too much on model capability and too little on representation quality and execution legitimacy.

- Why do many AI pilots fail in production?

Because the institution lacks strong sensing, reasoning, workflow integration, and governance architecture.

- Does this framework remove human judgment?

No. It helps define where human judgment should remain, where it should supervise, and where it should intervene.

- What is false legibility?

It is when the system appears to understand reality but is working from incomplete, biased, stale, or mislinked representations.

- What is brittle reasoning?

It is reasoning that looks good in demos but fails under drift, edge cases, or real-world complexity.

- What is illegitimate execution?

It is AI-mediated action that happens without proper authorization, verification, or recourse.

- Why does time matter so much in SENSE?

Because reality changes, and stale state often produces weak or dangerous decisions.

- What is a state representation?

A structured view of the current condition of an entity at a point in time.

- Why is feedback essential in CORE?

Because institutions only become intelligent when they learn from outcomes, overrides, and exceptions.

- What does “making reality legible” mean?

It means turning messy real-world conditions into usable institutional understanding.

- Is explainability enough for DRIVER?

No. Legitimacy also requires delegation, auditability, verification, and recourse.

- How is this different from classic enterprise architecture?

Classic enterprise architecture often organizes systems and interfaces. This framework organizes perception, reasoning, authority, and action.

- Is the representation economy only relevant to digital firms?

No. It applies to banks, manufacturers, insurers, public institutions, hospitals, and supply chains.

- Why are regulations becoming more relevant now?

Because AI is moving closer to consequential decisions, and institutions need stronger controls, literacy, oversight, and risk management. (AI Act Service Desk)

- Does the EU AI Act support this broader way of thinking?

Indirectly, yes. Its phased obligations reinforce the need for structured governance, operational accountability, and oversight for higher-risk AI uses. (AI Act Service Desk)

- Does NIST support lifecycle governance?

Yes. NIST’s AI RMF and GenAI Profile emphasize governance, mapping, measurement, management, and continuous monitoring. (NIST)

- Does OECD support accountability as a lifecycle issue?

Yes. OECD’s AI principles and related accountability work treat trustworthy AI as an ongoing institutional responsibility. (OECD)

- What industries are likely to adopt this fastest?

Finance, healthcare, cybersecurity, logistics, public services, and industrial operations.

- What new job roles may emerge from this shift?

AI governance architects, representation engineers, decision operations leads, recourse designers, model risk strategists, and institutional memory architects.

- What is an institutional memory system?

A system that captures decisions, exceptions, overrides, outcomes, and associated logic over time.

- Can representation become a competitive moat?

Yes. Better representation can produce better decisions, faster learning, stronger resilience, and greater trust.

- How does this relate to agentic AI?

Agents become far more valuable when they operate inside strong SENSE, CORE, and DRIVER boundaries.

- Is this framework anti-agent?

No. It is pro-governed agency.

- What is the simplest place to start?

Choose one high-value workflow and map its signals, entities, states, reasoning steps, execution controls, and recourse paths.

- What should leaders ask first?

Where does our institution currently fail to see, fail to think, or fail to act legitimately?

- Why is this useful for boards and the C-suite?

Because it helps leaders discuss AI as institutional design, not only as technology procurement.

- Why is this framework strategically powerful for thought leadership?

Because it gives leaders a vocabulary for talking about AI, governance, execution, and competitive advantage in one coherent architecture.

- What is the central message of this article?

The future of AI advantage is not only model capability. It is institutional legibility, institutional reasoning, and legitimate execution.
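Two of the answers above, on state representations and on why time matters in SENSE, can be made concrete with a small sketch. The class name `StateRepresentation`, its fields, and the `max_age` tolerance are hypothetical choices made for illustration; they are not part of the framework itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class StateRepresentation:
    """Hypothetical structured view of one entity at a point in time."""
    entity_id: str
    attributes: dict
    observed_at: datetime  # when the underlying signals were captured

    def is_stale(self, now: datetime, max_age: timedelta) -> bool:
        """Return True when the state is older than the tolerated age."""
        return now - self.observed_at > max_age


# Illustrative values only: the same account state, observed at two times.
now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = StateRepresentation("acct-1", {"balance": 100}, now - timedelta(minutes=5))
old = StateRepresentation("acct-1", {"balance": 100}, now - timedelta(days=2))

print(fresh.is_stale(now, timedelta(hours=1)))
print(old.is_stale(now, timedelta(hours=1)))
```

The point of the sketch is that a state carries its own observation time, so a downstream decision can refuse to act on a view of reality that is too old.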

What is the SENSE–CORE–DRIVER architecture?

The SENSE–CORE–DRIVER architecture describes how intelligent institutions operate.

- SENSE converts real-world signals into structured institutional representations.

- CORE performs reasoning, optimization, and decision-making.

- DRIVER executes authorized actions with governance, identity, and accountability.
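The three layers can be sketched as a toy pipeline. This is a minimal illustration under stated assumptions, not a reference implementation: the function names `sense`, `core`, and `driver`, the `risk_score` signal, the `risk_limit` threshold, and the `delegated` set are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    """Hypothetical raw input tied to an entity."""
    entity_id: str
    name: str
    value: float


def sense(signals):
    """SENSE: attach signals to entities and build a per-entity state."""
    states = {}
    for s in signals:
        states.setdefault(s.entity_id, {})[s.name] = s.value
    return states


def core(state, risk_limit):
    """CORE: a toy decision rule standing in for real reasoning."""
    return "hold" if state.get("risk_score", 0.0) > risk_limit else "approve"


def driver(entity_id, decision, delegated):
    """DRIVER: execute only decisions inside delegated authority."""
    status = "executed" if decision in delegated else "escalated_to_human"
    return {"entity_id": entity_id, "decision": decision, "status": status}


# Illustrative run: the system is delegated to approve, but not to hold.
signals = [
    Signal("loan-7", "risk_score", 0.82),
    Signal("loan-8", "risk_score", 0.10),
]
states = sense(signals)
results = [driver(eid, core(st, 0.5), {"approve"}) for eid, st in states.items()]
for r in results:
    print(r)
```

In this sketch the high-risk case is not executed by the machine at all. It is routed to a person, which is one simple way to express that authority in DRIVER is bounded.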

Glossary

Representation Economy

An emerging economic logic in which advantage depends on how well institutions represent reality, reason over it, and convert it into governed action.

Intelligent Institution

An organization that combines data, software, AI, policy, and workflows to perceive reality, reason over it, and act with controlled authority.

Institutional Architecture

The structural design through which an institution senses, reasons, governs, and executes decisions.

SENSE

The legibility layer of the institution: Signal, ENtity, State representation, Evolution.

Signal

A detectable event, change, trace, or input from the world.

Entity

The person, object, asset, account, case, or organization to which signals are attached.

State Representation

A structured description of an entity’s current condition.

Evolution

The way that state changes over time.

CORE

The cognition layer: Comprehend context, Optimize decisions, Realize action, Evolve through feedback.

Institutional Reasoning

The ability of an institution to interpret context, compare options, and choose action in line with goals, risks, and constraints.

Context

The surrounding meaning that makes a signal useful for decision-making.

Decision Infrastructure

The systems, logic, workflows, models, and policies that support decisions at scale.

Feedback Loop

A process through which outcomes, overrides, and exceptions improve future decisions.

DRIVER

The legitimacy and execution layer: Delegation, Representation, Identity, Verification, Execution, Recourse.

Delegation

The formal or operational authorization allowing a system to act within defined limits.

Verification

The checks used to confirm whether a proposed action should proceed.

Execution

The point at which a decision becomes real in the operating environment.

Recourse

The ability to challenge, review, correct, or reverse a decision.

Institutional Legibility

The degree to which an organization can clearly observe and structure relevant parts of reality.

False Legibility

A misleading appearance of understanding caused by biased, incomplete, stale, or mislinked representation.

Governed Action

Execution that occurs within explicit authority, oversight, traceability, and correction pathways.

Institutional Memory

A durable record of decisions, exceptions, outcomes, and lessons that improves future performance.

AI Governance Architecture

The practical design through which institutions make AI accountable, monitorable, and safe to use in real-world decision environments.
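Several glossary terms above (Delegation, Verification, Execution, Recourse, and Institutional Memory) fit together in practice, and a short sketch may help show how. Everything named here is an assumption made for illustration: `GovernedExecutor`, `MemoryRecord`, the `checks_passed` flag, and the status values. The sketch leaves out the Identity and Representation elements of DRIVER.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryRecord:
    """Hypothetical institutional memory entry for one attempted action."""
    action_id: int
    entity_id: str
    action: str
    rationale: str
    status: str  # "executed", "blocked", or "reversed"


@dataclass
class GovernedExecutor:
    allowed_actions: set                         # delegation: what may be done
    memory: list = field(default_factory=list)   # institutional memory

    def execute(self, entity_id, action, rationale, checks_passed):
        """Act only if the action is delegated and verification passed."""
        ok = action in self.allowed_actions and checks_passed
        record = MemoryRecord(
            len(self.memory), entity_id, action, rationale,
            "executed" if ok else "blocked",
        )
        self.memory.append(record)  # blocked attempts are recorded too
        return record

    def reverse(self, action_id, reason):
        """Recourse: mark an executed action as reversed and keep the reason."""
        record = self.memory[action_id]
        if record.status != "executed":
            raise ValueError("only executed actions can be reversed")
        record.status = "reversed"
        record.rationale += f" | reversed: {reason}"
        return record


executor = GovernedExecutor(allowed_actions={"refund"})
first = executor.execute("order-1", "refund", "duplicate charge", checks_passed=True)
second = executor.execute("order-2", "close_account", "inactivity", checks_passed=True)
executor.reverse(first.action_id, "customer disputed the refund")
print([(r.action, r.status) for r in executor.memory])
```

The design choice worth noting is that blocked and reversed actions stay in the record. That is what lets outcomes, overrides, and exceptions feed later decisions.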

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

References and further reading

This article draws on recent public work from Stanford HAI’s 2025 AI Index, NIST’s AI Risk Management Framework and Generative AI Profile, the OECD AI Principles and accountability guidance, the European Commission’s official AI Act implementation timeline, and the World Bank’s 2025 work on strengthening AI foundations. These sources collectively reinforce the same structural point: AI is moving from experimentation toward institutionalization, and that shift raises the importance of governance, lifecycle oversight, representation quality, and execution legitimacy. (Stanford HAI)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.