The Representation Premium

For the last two years, most conversations about AI advantage have centered on model quality.

Which model is smarter? Which one is cheaper? Which one reasons better? Which one can act?

Those questions still matter. But they are no longer the only questions that matter.

As AI moves from generating answers to helping people search, compare, decide, negotiate, and transact, a deeper competitive shift is taking shape.

In many markets, the winners will not simply be the firms with the best products, the largest models, or even the most ambitious internal AI programs. Increasingly, the winners will be the institutions that are easiest for AI systems to understand, verify, and work with.

That shift is already visible in the growing importance of structured data for search and commerce, the rise of machine-verifiable credentials, and the emergence of agentic commerce initiatives from major platforms and payment networks. (Google for Developers)

This is where the idea of the representation premium begins.

The representation premium is the market advantage earned by institutions that are easier for AI systems to see, trust, and coordinate with.

In simple terms, if a company is more machine-legible than its rivals, AI systems can find it faster, compare it more confidently, recommend it more safely, and transact with it more easily. Over time, that machine legibility can become a real source of economic reward. The opposite is also true. Institutions that remain opaque, fragmented, or difficult for machines to interpret may suffer a representation discount. That discount may not appear first as a stock-market event. It may show up earlier as weaker discoverability, slower onboarding, lower conversion, higher compliance friction, lower agent preference, and reduced participation in machine-mediated markets.

This idea sits at the heart of the broader representation economy and connects directly to the SENSE–CORE–DRIVER framework. What gets sensed, modeled, trusted, and delegated increasingly shapes what gets chosen.

The Representation Premium is the competitive advantage earned by organizations that are easy for AI systems to understand, verify, and interact with. As AI increasingly mediates search, decisions, and transactions, companies that are more machine-legible will gain higher visibility, trust, and market preference.

What is the Representation Premium?

The Representation Premium is the market advantage gained by institutions that are easier for AI systems to see, trust, and coordinate with.

Why is the Representation Premium important?

Because AI systems increasingly influence discovery, decision-making, and transactions, companies that are machine-readable are more likely to be found, shortlisted, and selected.

What causes a Representation Discount?

Poor data structure, fragmented systems, unverifiable claims, and a lack of machine-readable interfaces.

The New Market Reality: From Human Choice to Machine-Mediated Choice

Historically, most commercial choice was human choice. A person searched for a supplier, read a review, asked for a proposal, checked documents, compared alternatives, and made a decision.

That world is changing.

Search engines increasingly rely on structured data to understand products, reviews, prices, shipping, availability, organizations, and page meaning. Google explicitly recommends structured product and merchant listing markup so content can appear in richer and more actionable search experiences.
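As a rough illustration of what such markup looks like, the sketch below builds a schema.org Product description with a nested Offer and serializes it as JSON-LD. The helper name `build_product_jsonld`, the product name, the SKU, and the price are hypothetical. The property names come from the public schema.org vocabulary, but which properties a particular search feature requires should be checked against Google's own documentation.

```python
import json


def build_product_jsonld(name, sku, price, currency, in_stock):
    """Return a schema.org Product with one Offer as a JSON-LD-ready dict."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


# Hypothetical example values, not taken from any real catalog.
markup = build_product_jsonld("M8 hex bolt, zinc plated", "HB-M8-ZN", 0.42, "EUR", True)
print(json.dumps(markup, indent=2))
```

The printed JSON is the kind of payload a page would typically embed in a `script` tag of type `application/ld+json`, so that price and availability can be read without parsing the visible text.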

At the same time, AI systems are moving beyond conversation into action. OpenAI introduced Operator in early 2025 and later integrated those capabilities into ChatGPT agent, which can browse websites, fill out forms, and complete online tasks with user oversight.

Visa has announced initiatives designed to enable AI agents to find and buy through its network, while PayPal has rolled out toolkits and commerce experiences aimed at agentic workflows. (Google for Developers)

That means more economic decisions will be influenced by systems that do not experience brand in the old human way. These systems evaluate clarity, consistency, metadata quality, credential validity, policy fit, operational certainty, and transaction confidence.

A simple example makes the point.

Imagine two industrial suppliers selling nearly identical components at comparable prices.

The first supplier has inconsistent product names across its website and catalogs, outdated certifications hidden in scanned PDFs, unclear delivery commitments, and a contact process that depends on long email chains.

The second supplier publishes structured product data, current availability, machine-readable specifications, verified credentials, clear return policies, and inventory or ordering interfaces that software can understand.

A human buyer may still compare both. But an AI procurement assistant, sourcing engine, or enterprise copilot will almost always have an easier time with the second supplier. That supplier is easier to search, easier to compare, easier to verify, and easier to recommend.

That is representation premium in action.

Representation Is Becoming an Economic Variable

Many executives still treat representation as a technical issue. They see it as a matter of metadata, schema design, integration, data quality, or compliance paperwork.

That view is now too narrow.

Representation is becoming an economic variable because AI systems need machine-usable descriptions of reality. If reality is badly represented, AI cannot act on it reliably. If reality is clearly represented, AI can coordinate around it at scale.

This is why standards and trust frameworks matter more than they first appear.

The W3C’s Verifiable Credentials Data Model 2.0 provides a way to express credentials so they are cryptographically secure, privacy-respecting, and machine-verifiable. NIST’s AI Risk Management Framework is designed to help organizations incorporate trustworthiness into AI design, development, use, and evaluation.

The OECD AI Principles, updated in 2024, promote innovative and trustworthy AI that respects human rights and democratic values. These are not abstract policy documents. They are part of the infrastructure that determines whether a machine can rely on what an institution is presenting. (W3C)
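To make the credential point more concrete, here is a minimal sketch of a credential shaped like the Verifiable Credentials Data Model 2.0, plus a check of its validity window. The issuer URL, the subject, and the `QualityCertification` type are hypothetical, and a real credential would define custom types in an additional context. The check covers only the `validFrom` and `validUntil` dates. It does not verify any cryptographic proof, which a real verifier would also have to do.

```python
from datetime import datetime, timezone

# Hypothetical credential following the general shape of the VC Data Model 2.0.
credential = {
    "@context": ["https://www.w3.org/ns/credentials/v2"],
    "type": ["VerifiableCredential", "QualityCertification"],
    "issuer": "https://certifier.example/issuers/42",
    "validFrom": "2025-01-01T00:00:00Z",
    "validUntil": "2026-01-01T00:00:00Z",
    "credentialSubject": {
        "id": "https://supplier.example/",
        "certification": "ISO 9001",
    },
}


def parse_timestamp(value):
    """Parse an ISO 8601 timestamp with a trailing 'Z' as UTC."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def within_validity_window(cred, now):
    """Check only the optional validFrom/validUntil window; no proof is verified."""
    starts = cred.get("validFrom")
    ends = cred.get("validUntil")
    if starts is not None and now < parse_timestamp(starts):
        return False
    if ends is not None and now > parse_timestamp(ends):
        return False
    return True


mid_2025 = datetime(2025, 6, 1, tzinfo=timezone.utc)
mid_2026 = datetime(2026, 6, 1, tzinfo=timezone.utc)
print(within_validity_window(credential, mid_2025))
print(within_validity_window(credential, mid_2026))
```

The contrast with a certification buried in a scanned PDF is that the expiry here is a field a program can read and compare, rather than something a person has to find and interpret.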

In other words, markets are no longer rewarding only good products. They are starting to reward good machine-readable representations of products, services, credentials, obligations, operating state, and institutional trust.

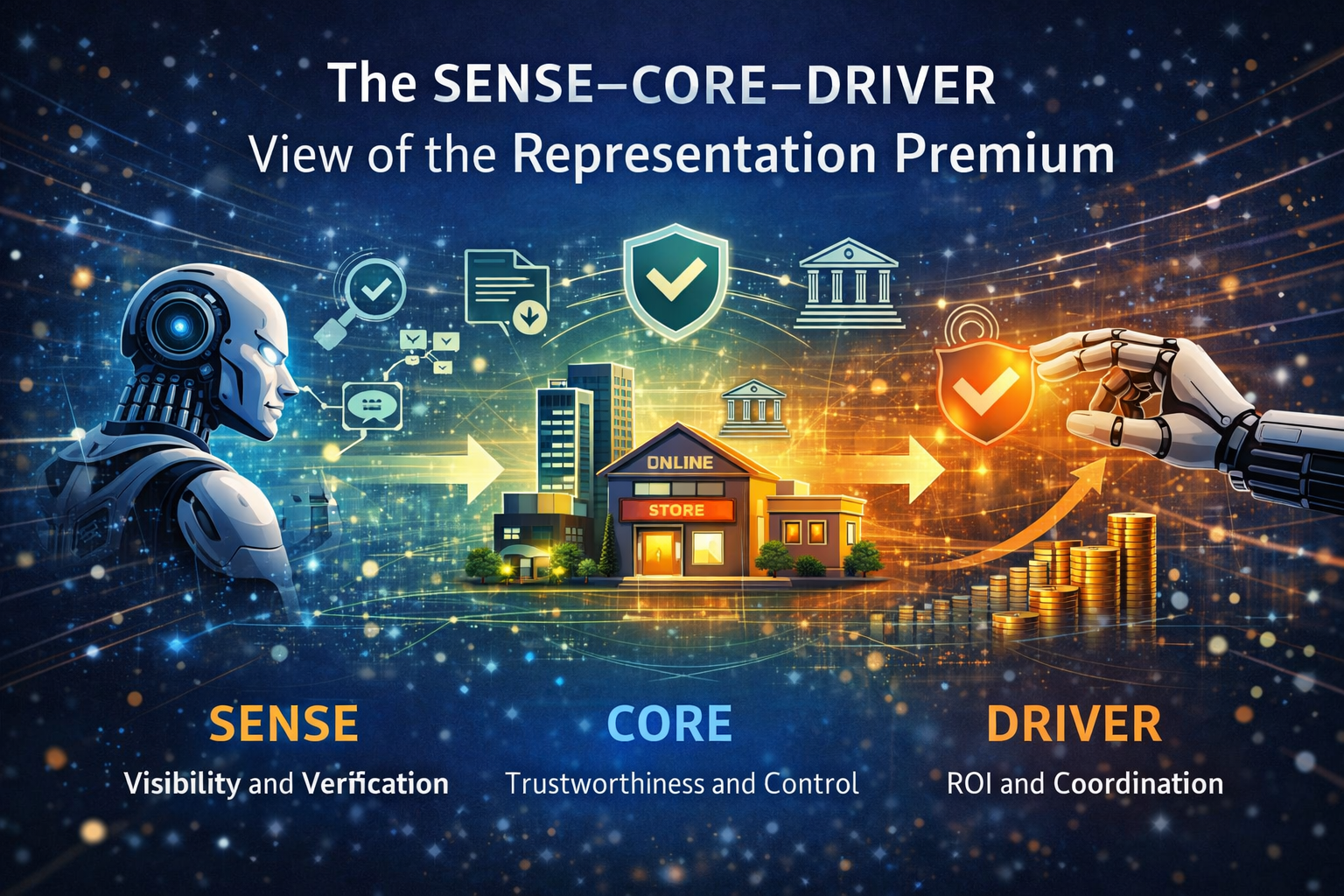

The SENSE–CORE–DRIVER View of the Representation Premium

The easiest way to understand this shift is through the SENSE–CORE–DRIVER framework.

SENSE: Make Reality Machine-Legible

SENSE is the layer where reality becomes machine-readable.

It includes signals, entities, state, and change over time. If an institution cannot clearly express who it is, what it offers, what condition it is in, what rules apply, and what has changed, then it becomes hard for AI systems to even notice it properly.

A company that cannot cleanly represent its products, certifications, delivery windows, risk posture, or policy boundaries will be less visible in an AI-mediated market.

CORE: Make Representation Easy for Machines to Reason Over

CORE is the cognition layer.

Once something is represented, AI systems reason over it. They compare options, interpret context, rank alternatives, estimate risk, detect mismatches, and decide what to recommend.

If your representation is clear, complete, and trustworthy, CORE systems can reason about you with more confidence. If your information is ambiguous or contradictory, machines will assign more uncertainty to you, even if your actual business is strong.
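A toy calculation shows how this can play out. The scoring rule below is an illustrative assumption, not a description of how any particular AI system ranks options: it simply weights an underlying quality score by the fraction of required facts that are actually published. The field names, quality scores, and supplier records are all hypothetical.

```python
def representation_confidence(record, required_fields):
    """Fraction of required fields that are present and non-empty."""
    present = sum(1 for name in required_fields if record.get(name))
    return present / len(required_fields)


def discounted_score(quality, record, required_fields):
    """Toy rule: weight underlying quality by how much of it is represented."""
    return quality * representation_confidence(record, required_fields)


REQUIRED = ["specs", "certification", "lead_time_days", "return_policy"]

# A strong business that publishes almost nothing a machine can use.
strong_but_opaque = {"specs": "see brochure"}

# A slightly weaker business that publishes every required fact.
solid_and_legible = {
    "specs": "M8x40, steel, zinc plated",
    "certification": "ISO 9001",
    "lead_time_days": 5,
    "return_policy": "30 days",
}

score_a = discounted_score(0.9, strong_but_opaque, REQUIRED)  # 0.9 * 1/4
score_b = discounted_score(0.8, solid_and_legible, REQUIRED)  # 0.8 * 4/4
print(score_a, score_b)
```

Under this rule the stronger business ends up with the lower score, because three of the four facts a system would need are missing.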

DRIVER: Make Action Legitimate and Scalable

DRIVER is the execution and legitimacy layer.

This is where decisions become actions. A system checks whether it is authorized to proceed, whether policies allow the step, whether credentials are valid, and whether the action can be audited, explained, or reversed.

If your institution supports legitimate machine action, it becomes easier for AI systems to coordinate with you in real workflows.
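The checks described above can be sketched as a small gate that an action must pass before it runs. This is a simplified illustration under stated assumptions: the `ActionRequest` and `ActionGate` names, the spend limit, and the agent identifiers are hypothetical, and the credential check is reduced to a boolean that some earlier step is assumed to have computed.

```python
from dataclasses import dataclass, field


@dataclass
class ActionRequest:
    """A hypothetical request by an agent to act on a user's behalf."""
    agent_id: str
    action: str
    amount: float
    credential_valid: bool


@dataclass
class ActionGate:
    """Runs authorization, policy, and credential checks and logs each decision."""
    authorized_agents: set
    spend_limit: float
    audit_log: list = field(default_factory=list)

    def evaluate(self, request):
        reasons = []
        if request.agent_id not in self.authorized_agents:
            reasons.append("agent not authorized")
        if request.amount > self.spend_limit:
            reasons.append("amount exceeds policy limit")
        if not request.credential_valid:
            reasons.append("credential not valid")
        allowed = not reasons
        # Every decision is recorded so it can be audited or explained later.
        self.audit_log.append((request.agent_id, request.action, allowed, reasons))
        return allowed, reasons


gate = ActionGate(authorized_agents={"agent-7"}, spend_limit=500.0)
ok, why = gate.evaluate(ActionRequest("agent-7", "place_order", 120.0, True))
blocked, why_blocked = gate.evaluate(ActionRequest("agent-9", "place_order", 900.0, True))
print(ok, why)
print(blocked, why_blocked)
```

Returning the reasons alongside the decision is a deliberate choice in this sketch: a refusal that explains itself gives the agent, or a human reviewer, a path to recourse.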

The representation premium appears when all three layers reinforce one another. You are visible enough to be sensed, understandable enough to be reasoned over, and trustworthy enough to be acted with.

Easy Examples from Everyday Markets

The idea sounds strategic, but it is actually very practical.

Example 1: Restaurants

One restaurant has a great Instagram presence, but no structured menu, inconsistent opening hours across platforms, no reservation interface AI assistants can use, and incomplete location details.

Another restaurant publishes current hours, menu items, allergy information, reservation hooks, and standardized location data.

Which one is more likely to be surfaced by an AI assistant helping a family decide where to eat? The second one.

Example 2: Manufacturers

One manufacturer says it is compliant, certified, and export-ready, but the proof sits across old brochures, email attachments, and scattered PDFs.

Another presents current certifications, trade details, quality checks, supplier status, and product specifications in machine-readable formats, with verification where needed.

Which one becomes easier for procurement systems, insurers, customs workflows, and financing partners to process? Again, the second one.

Example 3: Universities

One university describes its programs only in narrative marketing language.

Another expresses program structure, credentials, accreditation, course outcomes, and student support clearly and consistently in formats that digital systems can understand.

Which institution is more likely to be recommended by AI career advisors, skills systems, and education marketplaces? The second one.

In each case, the premium is not about hype. It is about reducing machine uncertainty.

Why This Matters Even More in a Same-Model World

A common assumption in AI strategy is that if everyone gains access to similar frontier models, differentiation will collapse.

The opposite may happen.

When models become widely available, representation quality matters more. If the same general-purpose AI can evaluate ten suppliers, ten hospitals, ten universities, or ten financial providers, it still has to decide which one is easier to interpret, safer to engage, and more appropriate for the user’s needs.

In a same-model world, better representation becomes a stronger differentiator because the model’s intelligence is no longer the scarce asset. What remains scarce is clean, trustworthy, machine-usable institutional reality.

This is why structured data, verifiable credentials, policy metadata, trusted identity layers, and interoperable interfaces will matter more, not less, as models improve. The smarter the AI layer becomes, the more value shifts toward the quality of the world it is allowed to see and act upon. (Google for Developers)

The Representation Discount Is Just as Important

Every premium creates a discount.

A representation discount is the market penalty paid by institutions that are hard for AI systems to interpret or trust.

This happens when:

- data is fragmented across legacy systems,

- public-facing information is inconsistent,

- policies are ambiguous,

- credentials are difficult to verify,

- operating state is opaque,

- interfaces are human-friendly but machine-hostile.

When that happens, AI systems either avoid the institution or require expensive human intervention before moving forward.
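One of these conditions, inconsistent public-facing information, is simple to check mechanically. The sketch below compares the facts an institution publishes on different channels and reports any field that is missing somewhere or differs between channels. The channel names, field names, and values are hypothetical, and a real audit would also need to normalize formats before comparing.

```python
def find_inconsistencies(listings, fields):
    """Return fields whose values are missing or differ across channels.

    listings maps a channel name to a dict of published facts.
    """
    problems = {}
    for name in fields:
        values = {channel: facts.get(name) for channel, facts in listings.items()}
        distinct = set(values.values())
        if None in distinct or len(distinct) > 1:
            problems[name] = values
    return problems


# Hypothetical published facts for one institution on three channels.
listings = {
    "website": {"opening_hours": "Mo-Fr 09:00-17:00", "phone": "+1-555-0100"},
    "maps_profile": {"opening_hours": "Mo-Sa 09:00-18:00", "phone": "+1-555-0100"},
    "catalog_pdf": {"phone": "+1-555-0100"},
}

problems = find_inconsistencies(listings, ["opening_hours", "phone", "return_policy"])
for field_name, values in problems.items():
    print(field_name, values)
```

Here the phone number passes because every channel agrees, while the opening hours and the unpublished return policy are flagged as the kind of gaps that would push a system toward human follow-up.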

That friction matters.

In a world of AI-assisted commerce, AI procurement, AI-supported customer journeys, and AI-enabled enterprise decision-making, friction is not just an operational inconvenience. It becomes a competitive tax.

An institution may still be excellent in reality. But if it is poorly represented, it may be under-selected by the systems that increasingly mediate demand, trust, and coordination.

This Is Not Only a Big-Company Story

The representation premium is not just for large enterprises.

In fact, it may be especially important for small and medium-sized institutions. Smaller firms often lack the brand power, sales reach, and lobbying muscle of larger incumbents. But they can still compete if they become easier for AI systems to find, verify, and work with.

A niche exporter with transparent lead times, structured catalog data, verified certifications, and AI-readable trade documents may become more discoverable in machine-mediated sourcing than a larger but less legible competitor.

A regional school with machine-readable credential pathways may become more visible to digital learning and career systems.

A specialized clinic with clearly represented services, credential evidence, and interoperable appointment workflows may become easier for digital health systems to route patients toward, subject to regulatory and safety boundaries.

The representation premium, then, is not only a story about dominant firms. It may become a ladder for agile institutions that learn to become machine-legible early.

What Leaders Should Do Now

The key executive question is simple: how does an institution earn a representation premium?

- Treat representation as strategy, not documentation. Do not treat this as a back-office cleanup exercise. Ask which aspects of your institution must be easy for machines to understand.

- Clean up the public layer. Products, services, credentials, policies, locations, and commitments should be expressed clearly and consistently across channels.

- Make trust machine-usable. Claims that matter to transactions should be verifiable where possible. Credentials, provenance, auditability, and identity will increasingly shape machine confidence.

- Reduce ambiguity at the action boundary. If an AI system wants to book, buy, verify, route, compare, or recommend, can it understand your terms, permissions, constraints, and recourse paths?

- Align internal and external representation. Many organizations tell one story to the market and run a different reality inside. In the AI era, those gaps become more dangerous because machine systems amplify inconsistency rather than hide it.

Why This Matters for the Future of Markets

The deeper point is this: AI is not only changing intelligence. It is changing selection.

For decades, markets selected through human attention, human review, and human trust. Increasingly, markets will also select through machine legibility, machine verification, and machine coordination.

That does not mean humans disappear. It means more of the path to human choice will be shaped by AI systems acting as filters, analysts, brokers, negotiators, and agents.

When that happens, institutions that are easier for AI systems to see, trust, and coordinate with will enjoy real advantages in discovery, recommendation, conversion, onboarding, compliance, and transaction flow. Over time, those advantages can compound into stronger market position.

That is the representation premium.

This is why representation economics matters. The next era of competition will not be won only by firms with better models. It will also be won by firms that design better representations of reality.

In the language of SENSE–CORE–DRIVER, the institutions that win will be those that make themselves easier to sense, easier to reason about, and easier to engage with legitimately.

That is not a technical footnote to the AI economy.

It is one of its next pricing mechanisms.

Key Takeaways

- AI is shifting markets from human choice to machine-mediated selection

- Machine legibility is becoming a competitive advantage

- The representation premium is earned through visibility, trust, and coordination

- Poor representation leads to a representation discount

- SENSE–CORE–DRIVER explains how AI selects institutions

Conclusion

The first phase of the AI era rewarded experimentation. The second rewarded deployment. The next phase will reward representation.

Boards and C-suites need to see this shift early. In an AI-mediated market, value will not flow only to the organizations with the best tools. It will increasingly flow to the organizations that are easiest for intelligent systems to interpret, trust, and coordinate with.

That is why the representation premium deserves serious board-level attention. It links AI strategy to discoverability, trust, transaction readiness, governance, and market position. It reframes machine legibility from a technical hygiene issue into a source of economic advantage.

The institutions that win in the next decade may not simply be the most automated or even the most intelligent. They may be the most clearly represented.

And that changes everything.

Frequently Asked Questions (FAQ)

What is the representation premium in AI?

The representation premium is the market advantage earned by institutions that are easier for AI systems to see, trust, verify, and coordinate with. It reflects the growing value of machine legibility in AI-mediated markets.

Why does machine legibility matter for business strategy?

Machine legibility matters because AI systems increasingly influence search, recommendation, procurement, compliance, and transaction decisions. If your institution is hard for machines to understand, it may be under-selected even if it is strong in reality.

What is the difference between representation premium and representation discount?

A representation premium is the advantage gained by institutions that are easy for AI systems to work with. A representation discount is the penalty suffered by institutions that are opaque, inconsistent, or hard to verify.

How does SENSE–CORE–DRIVER relate to the representation premium?

SENSE makes an institution visible to machines. CORE makes it understandable to machine reasoning systems. DRIVER makes it safe and legitimate for machines to act on that understanding. The premium emerges when all three layers work well together.

Is the representation premium only relevant for large enterprises?

No. Small and medium-sized firms may benefit even faster because better machine legibility can help them compete with larger firms in AI-mediated discovery, sourcing, and service workflows.

What are examples of representation premium in practice?

Examples include structured product data for commerce, machine-verifiable credentials, consistent policy metadata, AI-readable service descriptions, interoperable booking or ordering workflows, and clear digital trust signals. (Google for Developers)

Why will this matter more as AI models improve?

As models become more widely available, model quality becomes less exclusive. Representation quality then becomes a stronger differentiator because AI systems still need clear, trustworthy, machine-usable information to make decisions. (OpenAI)

Glossary

Representation Premium

The market advantage earned by institutions that are easier for AI systems to see, trust, and coordinate with.

Representation Discount

The penalty paid by institutions that are difficult for AI systems to interpret, verify, or transact with.

Representation Economy

An economic environment in which competitive advantage increasingly depends on how reality is represented for intelligent systems.

Machine Legibility

The degree to which an institution’s products, policies, credentials, services, and operating state can be understood by software and AI systems.

SENSE–CORE–DRIVER

A strategic framework in which SENSE makes reality machine-legible, CORE reasons over that representation, and DRIVER governs action, legitimacy, and recourse.

Structured Data

Standardized markup that helps search engines and software systems understand page content and entities more accurately. (Google for Developers)

Verifiable Credentials

Digital credentials designed to be cryptographically secure, privacy-respecting, and machine-verifiable. (W3C)

Agentic Commerce

Commerce in which AI agents help discover, compare, and complete purchases or payment flows on behalf of users. (usa.visa.com)

Machine-Readable Trust

Trust signals that AI systems can verify and act upon, such as credential validity, policy clarity, provenance, and identity assurance.

Action Boundary

The point at which an AI system moves from analysis or recommendation into real-world action.

References and further reading

- Google Search documentation on structured data and merchant listings. (Google for Developers)

- W3C Verifiable Credentials Data Model 2.0. (W3C)

- NIST AI Risk Management Framework. (NIST)

- OECD AI Principles. (OECD)

- OpenAI announcements on Operator and ChatGPT agent. (OpenAI)

- Visa and PayPal materials on agentic commerce. (usa.visa.com)

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System
  https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

- Representation Debt: Why Institutions Accumulate Hidden AI Risk Long Before Failure Becomes Visible

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy

- Representation Failure: Why AI Systems Break When Institutions Misread Reality

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy

- The Representation Balance Sheet: How AI Is Redefining Assets, Liabilities, and Institutional Strength

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy

- Representation Economics: The New Law of Value Creation in the AI Era

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.