Executive definition: What is Representation Failure?

Representation Failure is the condition in which an institution gives an AI system a weak, incomplete, outdated, fragmented, or poorly governed model of reality, causing the system to reason badly or act unsafely.

In simple terms, the AI does not fail only because the model is imperfect. It fails because the institution has not represented the world well enough for the machine to operate intelligently.

That is the central argument of this article:

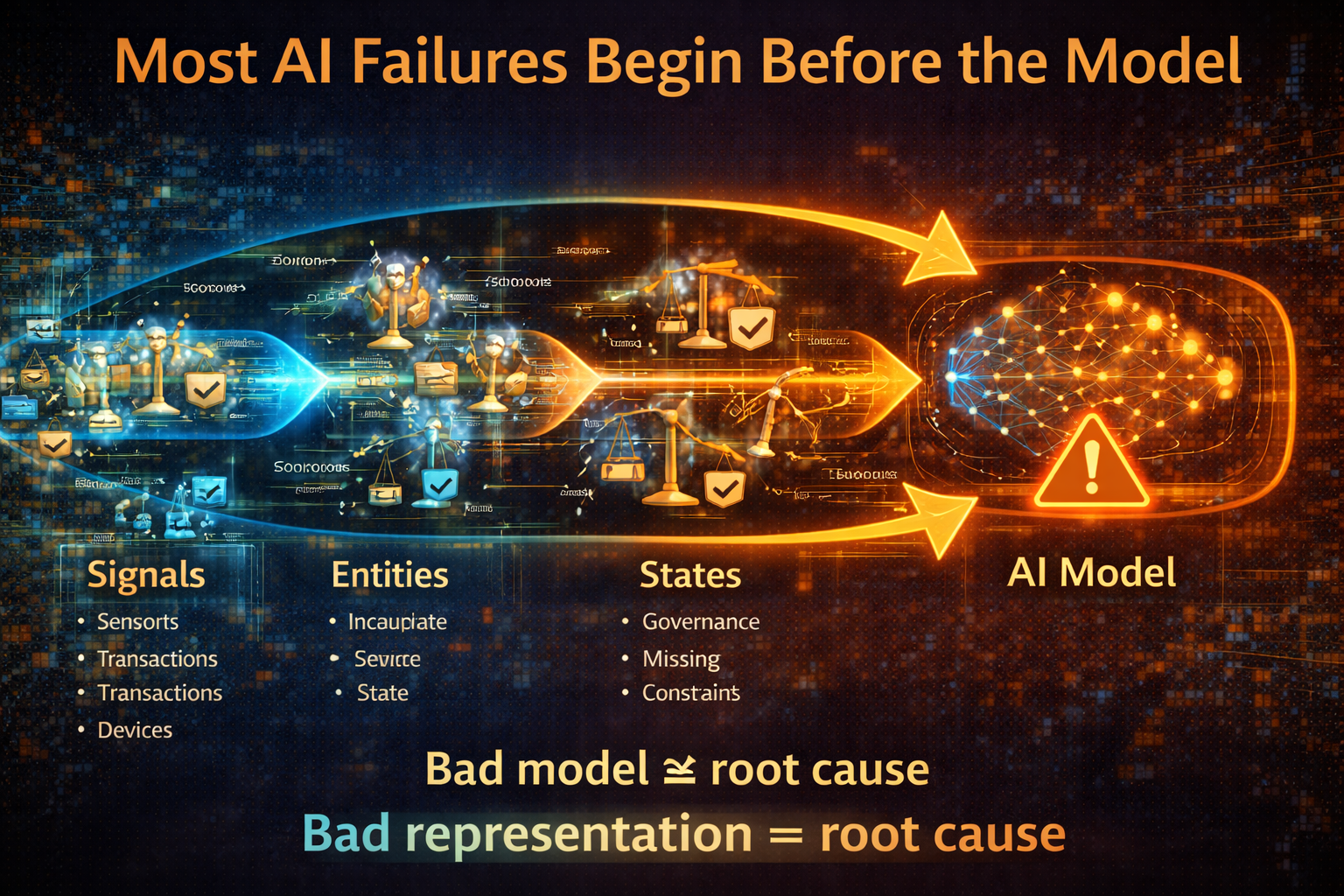

Most AI failures do not begin at the model layer. They begin when institutions misread reality.

What is Representation Failure?

Representation Failure occurs when an AI system breaks not because of poor algorithms, but because the institution incorrectly models reality. Signals, entities, states, constraints, or governance rules are represented incorrectly, causing AI systems to reason on an inaccurate picture of the world.

The real problem often starts before the model

Artificial intelligence is often blamed for failures it did not create alone.

When an AI system gives the wrong answer, overreacts, misses context, makes an unfair recommendation, or triggers the wrong action, the instinct is usually to point at the model. People say the model hallucinated, the algorithm was biased, the agent made a bad call, or the system was not reliable enough.

Sometimes that is true.

But increasingly, the deeper problem begins earlier.

Many AI failures are not just model failures. They are representation failures.

A representation failure happens when an institution asks AI to reason over a weak, incomplete, distorted, outdated, or badly governed model of reality. The system may have excellent compute, strong prompting, and sophisticated orchestration. But if the institution has represented the wrong reality, or too little of it, the AI system will still break.

This topic matters now because AI adoption is accelerating rapidly. Stanford’s 2025 AI Index reports that 78% of organizations said they used AI in 2024, up from 55% the year before, while private investment in generative AI reached $33.9 billion globally in 2024. At the same time, major governance frameworks from NIST, the OECD, and the EU increasingly emphasize accountability, traceability, transparency, and human oversight. Institutions are therefore scaling AI at the same time that the consequences of weak design are becoming harder to ignore. (Stanford HAI)

This is where the idea of Representation Failure becomes essential.

If the Representation Economy explains how institutions create advantage by building machine-legible models of reality, then Representation Failure explains what happens when they do that badly. And if SENSE–CORE–DRIVER is the architecture for intelligent institutions, Representation Failure is the theory of how those institutions break when reality is sensed poorly, reasoned over badly, or delegated without legitimacy.

That is the real issue. AI systems do not merely fail because they are probabilistic. They often fail because institutions misread the world they operate in.

Why Representation Failure matters

As AI systems scale and begin making autonomous decisions, failures in how reality is represented can lead to systematic errors. This makes representation architecture a critical part of institutional AI strategy.

What Representation Failure really means

Representation Failure is the condition in which an institution’s model of reality is too thin, too fragmented, too static, too generic, or too weakly governed for AI systems to reason or act safely within it.

In simpler language, the institution has not built a good enough picture of the world for the machine to operate intelligently.

That picture may involve customers, patients, suppliers, accounts, assets, cases, risks, policies, permissions, workflows, exceptions, obligations, or outcomes. If any of those are missing, misidentified, disconnected, or poorly updated, the AI system begins operating inside a distorted decision environment.

This matters because AI systems do not work on raw reality. They work on represented reality.

A customer is not just a person in the abstract. Inside an institution, the customer becomes a representation made of identity data, transaction history, risk state, permissions, interactions, complaints, products, and context.

A patient is not just a human being in need of care. Inside a hospital system, the patient becomes a representation made of symptoms, history, medications, urgency, lab reports, contraindications, care continuity, and escalation logic.

A supplier is not just an external company. Inside a supply chain, it becomes a representation of reliability, lead time, contract terms, geography, dependencies, substitution options, quality history, and resilience risk.

When those representations are weak, the AI does not merely become less accurate. It becomes less intelligent in a structural sense.

Why Representation Failure is different from ordinary AI failure

The most common framing of AI failure focuses on the model: hallucination, bias, drift, low accuracy, or poor explainability.

Those are real issues. But Representation Failure goes one layer deeper.

It asks:

- Did the institution capture the right signals?

- Did it identify the right entity?

- Did it model the current state properly?

- Did it understand what had changed?

- Did it represent constraints, authority, and recourse?

- Did it delegate action beyond what the representation could justify?

That is why Representation Failure fits naturally into SENSE–CORE–DRIVER.

SENSE failure

The institution fails to capture or organize reality correctly.

CORE failure

The institution reasons over the wrong context or conflicting representations.

DRIVER failure

The institution allows action, delegation, or automation without sufficient legitimacy, verification, or recourse.

Once you see this, many AI failures look different. The system may not be “breaking” because the model is unintelligent. It may be breaking because the institution gave it the wrong world to think inside.

SENSE failures: when institutions do not see reality clearly

The first type of Representation Failure happens in SENSE.

SENSE is where reality becomes machine-legible. It depends on signal, entity, state representation, and evolution over time. If any of these are weak, the institution has already compromised the intelligence of the system.

Take fraud detection. A narrow system may detect a suspicious transaction pattern. But if it cannot represent recent travel context, device change, prior customer disputes, linked identities, known exceptions, or recent service interactions, it may interpret normal behavior as fraudulent or miss genuine abuse. The issue is not just bad prediction. It is shallow representation.

Take healthcare triage. An AI assistant may see symptoms, appointment notes, and test results. But if it cannot represent continuity of care, prior complications, contraindications, urgency shifts, or clinician judgment history, it may recommend something that is technically plausible but clinically unsafe.

Take enterprise support. A service agent may have access to policy documentation and previous tickets. But if it cannot represent the latest exception handling, customer tier, prior commitments, local overrides, or operational bottlenecks, it may answer confidently while being institutionally wrong.

This is why SENSE failure is so dangerous. Institutions often believe they have enough data because they have many systems. But fragmented data is not the same as an adequate representation of reality.
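To make the gap between "many systems" and "one representation" concrete, here is a minimal sketch of entity resolution across fragmented records. The system names, field names, and the choice of a normalized email as the matching key are all illustrative assumptions, not something the framework prescribes; real identity resolution usually needs richer matching than this.

```python
from collections import defaultdict


def normalize_email(email: str) -> str:
    """Lowercase and trim an email so trivially different spellings match."""
    return email.strip().lower()


def resolve_entities(records: list[dict]) -> dict[str, dict]:
    """Group records from different systems by normalized email and merge their fields.

    Later records overwrite earlier ones when the same field appears twice.
    """
    merged: dict[str, dict] = defaultdict(lambda: {"sources": [], "fields": {}})
    for record in records:
        key = normalize_email(record["email"])
        entity = merged[key]
        entity["sources"].append(record["system"])
        for name, value in record.items():
            if name not in ("email", "system"):
                entity["fields"][name] = value
    return dict(merged)


# Hypothetical records: three systems each hold one fragment of the same customer.
records = [
    {"system": "billing", "email": "Ana@Example.com", "open_dispute": True},
    {"system": "support", "email": " ana@example.com", "tier": "gold"},
    {"system": "crm", "email": "ana@example.com", "recent_travel": "abroad"},
]

customers = resolve_entities(records)
print(len(customers))  # 1
print(customers["ana@example.com"]["fields"])
```

Until the three fragments are joined, a fraud model looking only at the billing record cannot see the travel context or the customer tier, which is the shallow-representation problem described above.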

CORE failures: when institutions reason over the wrong world

The second type of Representation Failure happens in CORE.

CORE is where the institution comprehends context, optimizes decisions, realizes action, and evolves through feedback. But CORE can only be as good as the reality it is asked to reason over.

An institution may think it has built a strong reasoning layer when in fact it has only layered sophisticated inference on top of poor context.

Imagine a lending system asked to optimize credit decisions. If it represents repayment behavior but not temporary hardship, product suitability, channel coercion, prior dispute outcomes, or internal escalation norms, its reasoning may appear rigorous while being strategically and ethically weak.

Imagine a pricing engine. If it sees demand signals, competitor patterns, and inventory levels but not customer trust, fairness exposure, reputational sensitivity, or long-term churn risk, it may optimize short-term margin while damaging institutional legitimacy.

Imagine a case-management system in government. If it sees application completeness and eligibility fields but not language barriers, appeal history, vulnerability signals, or policy ambiguity, it may reason efficiently but unjustly.

This is why institutional reasoning is not just statistical inference. It is reasoning under constraint. It has to reflect policy, authority, promises, risk appetite, reversibility, and operational reality.

NIST’s AI Risk Management Framework organizes AI risk management around four functions: GOVERN, MAP, MEASURE, and MANAGE. The OECD’s AI Principles call for accountability and traceability across datasets, processes, and decisions during the AI lifecycle. The policy message is clear: good AI reasoning depends on understanding the real institutional context in which the system operates. (NIST Publications)

DRIVER failures: when institutions delegate beyond legitimacy

The third type of Representation Failure happens in DRIVER.

DRIVER is where decisions become action. It covers delegation, representation, identity, verification, execution, and recourse.

This is where many organizations get into trouble. They move from recommendation to action too quickly.

A system may recommend account freezing, case escalation, product denial, route reallocation, appointment prioritization, or pricing adjustment. The institution then automates the action without asking whether the underlying representation is strong enough to justify delegation.

That is a Representation Failure at the level of legitimacy.

If identity is weak, delegation is dangerous.

If verification is weak, automation is dangerous.

If recourse is weak, action is dangerous.

If the represented reality is outdated, autonomy is dangerous.

This is why boards and regulators care about human oversight. The EU AI Act’s human-oversight provisions are designed to prevent or minimize risks to health, safety, and fundamental rights when high-risk AI systems are used, and its transparency obligations require systems to be interpretable enough for deployers to use them appropriately. (Artificial Intelligence Act)

In simple terms, DRIVER failure occurs when the institution lets the machine act more confidently than the representation deserves.

The five common forms of Representation Failure

To make this practical, most Representation Failures fall into five broad forms.

- Signal failure: The institution does not capture the right events, changes, behaviors, or exceptions.

- Entity failure: The system cannot correctly identify who or what it is acting upon. Identity is fragmented, duplicated, stale, or context-free.

- State failure: The current condition of the customer, case, asset, workflow, or environment is modeled incompletely.

- Constraint failure: Policies, permissions, legal boundaries, escalation rules, or risk limits are missing or weakly encoded.

- Outcome failure: The institution does not properly capture whether the decision worked, caused harm, triggered appeals, or should change future behavior.

Each one weakens intelligence in a different way. Together, they create the illusion of sophisticated AI operating inside institutional blindness.
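The five forms can be turned into a simple pre-decision check. The sketch below is one possible reading, not a definitive diagnostic: the `DecisionContext` fields, the `REQUIRED_STATE_KEYS` set, and the rule that an empty list counts as a failure are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DecisionContext:
    """Hypothetical bundle of what the institution knows before an AI decision."""
    signals: list = field(default_factory=list)
    entity_id: Optional[str] = None
    state: dict = field(default_factory=dict)
    constraints: list = field(default_factory=list)
    outcome_recorded: bool = False


# Assumed minimum state fields; a real institution would define its own.
REQUIRED_STATE_KEYS = {"status", "last_updated"}


def diagnose(ctx: DecisionContext) -> list:
    """Return which of the five forms of Representation Failure are present."""
    failures = []
    if not ctx.signals:
        failures.append("signal")
    if ctx.entity_id is None:
        failures.append("entity")
    if not REQUIRED_STATE_KEYS.issubset(ctx.state):
        failures.append("state")
    if not ctx.constraints:
        failures.append("constraint")
    if not ctx.outcome_recorded:
        failures.append("outcome")
    return failures


ctx = DecisionContext(
    signals=["card_present_txn"],
    entity_id="cust-42",
    state={"status": "active"},  # no "last_updated", so state is incomplete
)
found = diagnose(ctx)
print(found)  # ['state', 'constraint', 'outcome']
```

A check like this does not make the representation better by itself. Its value is in making the gaps visible before the model is asked to reason over them.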

Why Representation Failure matters more as AI scales

In small pilots, Representation Failure can remain hidden. Humans compensate. Teams manually correct outputs. Exceptions are handled informally. Leaders mistake this for success.

But as AI scales, representation weaknesses compound.

A weak representation used once creates one bad answer.

A weak representation used across thousands of cases creates systemic distortion.

A weak representation connected to autonomous execution creates institutional risk.

That is why scaling AI without fixing representation is so dangerous. The institution starts industrializing its blind spots.

This is also why many organizations struggle to turn AI adoption into durable performance. Adoption alone does not create transformation. If the institution has not redesigned how it sees, models, governs, and delegates reality, AI can remain impressive in demos and fragile in production. Stanford’s 2025 AI Index reinforces the scale of adoption and investment, but those numbers do not eliminate the need for stronger governance and operational design. (Stanford HAI)

How institutions can reduce Representation Failure

The answer is not to stop using AI. It is to build better representational foundations.

First, improve SENSE

Ask what critical realities the institution still does not capture well. Look beyond structured data. Include exceptions, workflow state, temporal change, identity resolution, and feedback from the edge of operations.

Second, strengthen CORE

Make reasoning reflect institutional context, not just generic inference. Define what the AI should optimize for, what it should escalate, and what it must never ignore.

Third, tighten DRIVER

Match delegation to representational maturity. If identity, verification, and recourse are weak, autonomy should stay bounded. If the institution cannot explain or reverse an action, it should be cautious about automating it.
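One way to read "match delegation to representational maturity" is as a gate that caps autonomy according to what the institution can currently justify. The sketch below assumes three autonomy levels, a 24-hour freshness threshold, and a particular ordering of checks; each of those is a hypothetical choice for illustration rather than part of the framework.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    RECOMMEND_ONLY = "recommend_only"
    HUMAN_APPROVAL = "human_approval"
    AUTOMATE = "automate"


@dataclass
class RepresentationStatus:
    """Hypothetical summary of how well an action's context is represented."""
    identity_verified: bool
    action_verifiable: bool
    recourse_available: bool
    reversible: bool
    state_age_hours: float


MAX_STATE_AGE_HOURS = 24.0  # assumed freshness threshold


def allowed_autonomy(status: RepresentationStatus) -> Autonomy:
    """Cap autonomy at the level the current representation can support."""
    # Weak identity or stale state: the system may only recommend.
    if not status.identity_verified or status.state_age_hours > MAX_STATE_AGE_HOURS:
        return Autonomy.RECOMMEND_ONLY
    # Missing verification, recourse, or reversibility: a person must approve.
    if not (status.action_verifiable and status.recourse_available and status.reversible):
        return Autonomy.HUMAN_APPROVAL
    return Autonomy.AUTOMATE


# Example: an account freeze with verified identity and fresh state, but no appeal path.
freeze = RepresentationStatus(
    identity_verified=True,
    action_verifiable=True,
    recourse_available=False,
    reversible=True,
    state_age_hours=2.0,
)
decision = allowed_autonomy(freeze)
print(decision)  # Autonomy.HUMAN_APPROVAL
```

The design choice worth noting is that the gate depends only on the state of the representation, not on how confident the model is in its recommendation.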

Fourth, review failure through the lens of representation

Ask not only whether the system was accurate, but whether it was reasoning over a truthful enough version of reality.

Fifth, elevate Representation Failure to the board level

This is not only a technical matter. It is a question of institutional fitness, governance, and legitimacy.

Why this idea matters for the future of intelligent institutions

The most important AI question is changing.

It is no longer only: Which model is best?

It is no longer only: How do we govern AI risk?

It is increasingly this:

What kind of reality has our institution made visible to machines, and is that reality good enough for machines to reason and act within?

That is the question beneath Representation Failure.

It matters because future-leading institutions will not be defined only by better models. They will be defined by stronger representations: better sensing, cleaner entity resolution, richer state awareness, clearer constraints, stronger authority mapping, and better recourse.

That is exactly what SENSE–CORE–DRIVER is for. It is not merely a framework for building AI systems. It is a framework for making institutions more legible, more coherent, and more legitimate in how they use intelligence.

Key takeaways

- Many AI failures begin before the model.

- Representation Failure occurs when institutions give AI weak or distorted versions of reality.

- SENSE failures distort what the institution sees.

- CORE failures distort how the institution reasons.

- DRIVER failures distort what the institution delegates and executes.

- The more AI scales, the more dangerous weak representation becomes.

- Institutions that reduce Representation Failure will build stronger governance, better judgment, and more durable AI advantage.

Conclusion: Most AI failures begin before the model

Representation Failure gives us a more useful way to understand why AI systems break.

They do not fail only because models are imperfect. They fail because institutions often ask those models to operate inside incomplete, distorted, weakly governed versions of reality.

That is a deeper problem than hallucination alone.

It means the future of AI will not be shaped only by smarter models, larger context windows, or better agents. It will also be shaped by whether institutions learn to represent the world they operate in with enough clarity, continuity, and legitimacy.

So the real lesson is simple:

Most AI failures begin before the model. They begin when institutions misread reality.

The institutions that grasp this early will build safer systems, stronger governance, better judgment, and more durable advantage.

And those institutions will be the ones best prepared for the Representation Economy.

FAQ

What is Representation Failure?

Representation Failure is the condition in which an institution gives AI a weak, incomplete, outdated, or poorly governed model of reality, causing the system to reason or act badly.

How is Representation Failure different from model failure?

Model failure focuses on the algorithm itself, such as hallucination, drift, or low accuracy. Representation Failure focuses on whether the institution modeled reality well enough for the AI system to operate intelligently.

Why does Representation Failure matter for enterprise AI?

Because enterprise AI operates inside institutional environments full of policies, workflows, exceptions, authority boundaries, and changing context. If those are poorly represented, even strong models can fail.

How does this connect to SENSE–CORE–DRIVER?

Representation Failure can occur in SENSE when reality is captured poorly, in CORE when reasoning happens over weak context, and in DRIVER when decisions are delegated without enough legitimacy or recourse.

Is Representation Failure only about bad data?

No. It includes bad data, but also missing context, fragmented identity, poor state modeling, weak policy encoding, weak outcome tracking, and weak recourse.

Why are boards increasingly responsible for this?

Because as AI influences more consequential decisions, boards must govern not only AI adoption but also how the institution sees, models, governs, and delegates reality. Governance frameworks increasingly point in this direction. (NIST Publications)

Which sectors are most exposed?

Finance, healthcare, manufacturing, logistics, insurance, telecom, and government are especially exposed because they rely on repeated decisions under policy, risk, and operational constraints.

Can Representation Failure be measured?

It can be assessed through diagnostics around signal quality, entity clarity, state continuity, policy representation, authority mapping, outcome tracking, verification, and recourse strength.
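As a rough illustration, such diagnostics could be recorded as a scorecard. The sketch below assumes a 0 to 5 scale per dimension and reports the weakest dimension alongside the average, on the assumption that a single weak dimension can undermine the rest; the scale, the dimension keys, and the example scores are hypothetical.

```python
# Dimension names follow the diagnostics listed in the answer above.
DIMENSIONS = (
    "signal_quality",
    "entity_clarity",
    "state_continuity",
    "policy_representation",
    "authority_mapping",
    "outcome_tracking",
    "verification",
    "recourse_strength",
)


def assess(scores: dict) -> dict:
    """Summarize a scorecard: the weakest dimension and the overall average."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")
    weakest = min(DIMENSIONS, key=lambda d: scores[d])
    average = sum(scores[d] for d in DIMENSIONS) / len(DIMENSIONS)
    return {"weakest": weakest, "weakest_score": scores[weakest], "average": average}


# Hypothetical assessment: strong everywhere except recourse.
scores = {d: 4 for d in DIMENSIONS}
scores["recourse_strength"] = 1

report = assess(scores)
print(report["weakest"])  # recourse_strength
print(report["average"])  # 3.625
```

An average of 3.625 looks healthy, yet the weakest score shows that affected people have little ability to challenge a decision, which is why the sketch surfaces both numbers.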

Why is this idea strategic, not just technical?

Because it changes how institutions think about advantage. It shifts the conversation from model choice alone to how the institution sees reality, reasons about it, and acts responsibly within it.

What is Representation Failure in AI?

Representation Failure occurs when AI systems break because the underlying representation of reality is incorrect, incomplete, or poorly governed.

Why do many AI systems fail even when models are accurate?

Many failures occur because the system misrepresents entities, states, or signals. Even a powerful model cannot reason correctly if the input representation of reality is wrong.

How is Representation Failure different from model failure?

Model failure occurs when an algorithm produces incorrect predictions. Representation failure occurs when the system’s understanding of the world is wrong before reasoning even begins.

What causes Representation Failure?

Common causes include:

- fragmented data signals

- incorrect entity mapping

- missing state representation

- poorly defined constraints

- weak governance of AI decisions

How does the SENSE–CORE–DRIVER framework reduce Representation Failure?

The framework ensures that institutions:

- correctly observe reality (SENSE)

- reason about it properly (CORE)

- execute decisions within governance (DRIVER)

Why will Representation Failure become a major enterprise AI risk?

As AI systems move from recommendations to autonomous actions, errors in representation can scale across entire organizations, making them a major governance and risk issue.

Glossary

Representation Failure

A condition in which AI systems break because the institution has modeled reality too weakly, too narrowly, or too incorrectly.

Representation Economy

The shift in which competitive advantage increasingly comes from building machine-legible representations of reality that AI systems can interpret, reason over, and act upon.

SENSE

The layer where institutions capture signals, identify entities, model state, and track change over time.

CORE

The layer where institutions reason over represented reality, optimize choices, and learn from feedback.

DRIVER

The layer where authority, verification, execution, and recourse govern machine-supported action.

Machine-legible reality

Reality translated into structured forms that software and AI systems can interpret and act upon.

Entity resolution

The process of determining which records, signals, and events belong to the same person, asset, case, or object.

State representation

A structured model of the current condition of a customer, case, asset, workflow, or environment.

Constraint representation

The encoding of policies, legal boundaries, permissions, thresholds, and escalation rules that limit or guide machine action.

Recourse

The ability to challenge, review, reverse, or correct a machine-supported decision.

Human oversight

Mechanisms that allow people to supervise, intervene in, or override AI decisions when appropriate.

Traceability

The ability to reconstruct what data, logic, processes, and decisions contributed to an AI output or action.

Representational maturity

The degree to which an institution has accurately modeled the realities that matter for safe and effective AI-enabled decisions.

Representation Economy

An economic shift where institutions compete based on how accurately they can represent reality using AI systems.

SENSE Layer

The institutional capability to observe and structure signals from the real world.

CORE Layer

The reasoning layer where AI models interpret signals and generate decisions.

DRIVER Layer

The execution layer that governs how AI decisions are delegated, verified, and executed.

Entity Representation

The digital identity of a real-world object, person, system, or process.

AI Decision Architecture

The institutional system that converts data signals into machine decisions.

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

References and further reading

This article is grounded in the broader global shift toward widespread AI use and stronger governance expectations. Stanford’s 2025 AI Index documents accelerating enterprise AI adoption and generative AI investment. NIST’s AI Risk Management Framework emphasizes governance, contextual mapping, measurement, and management of AI risk through its GOVERN, MAP, MEASURE, and MANAGE functions. The OECD AI Principles emphasize accountability and traceability across the AI lifecycle. The EU AI Act reinforces transparency and human oversight obligations for high-risk systems. (Stanford HAI)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

This article is part of an ongoing research series on the Representation Economy and the SENSE–CORE–DRIVER architecture for intelligent institutions, first published on raktimsingh.com.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.