The Representation Deficit

Most organizations believe the AI race is about models.

Which model is more accurate?

Which model is cheaper?

Which model reasons better?

These questions matter. But they are no longer the deepest questions.

The institutions that will struggle in the AI era will not always fail because they chose the wrong model. Many will fail because reality itself never entered the decision system in the right form.

This structural gap is what I call the Representation Deficit.

A representation deficit occurs when the world an institution operates in cannot be adequately sensed, structured, interpreted, governed, and acted upon by its decision systems.

In the Representation Economy, competitive advantage will not come only from better AI models. It will come from better institutional representations of reality.

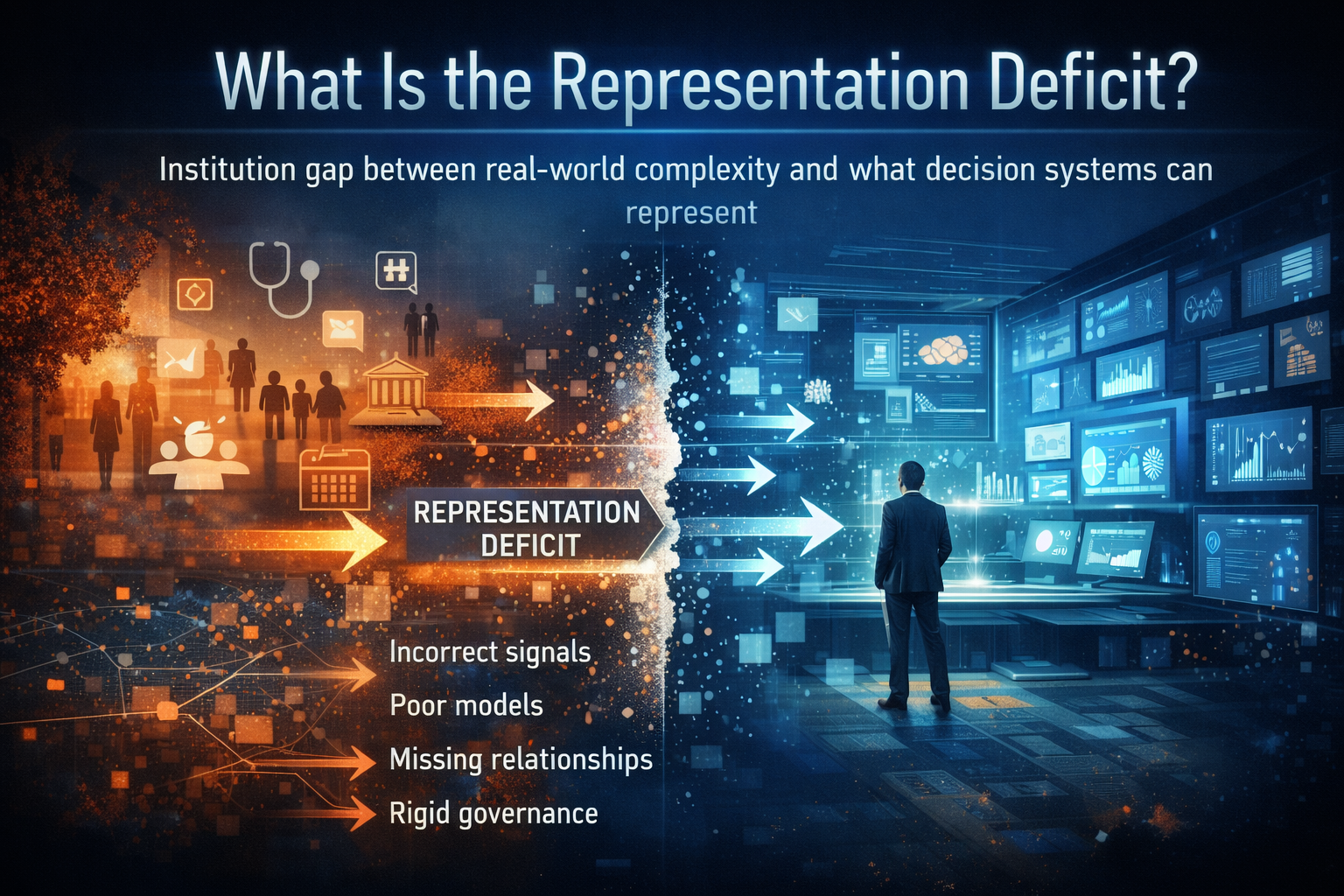

What is the representation deficit?

The representation deficit is the structural gap between real-world complexity and what institutional decision systems can represent. When organizations fail to properly sense, structure, govern, and interpret reality, their AI systems operate on incomplete representations, leading to flawed decisions even when the underlying models are accurate.

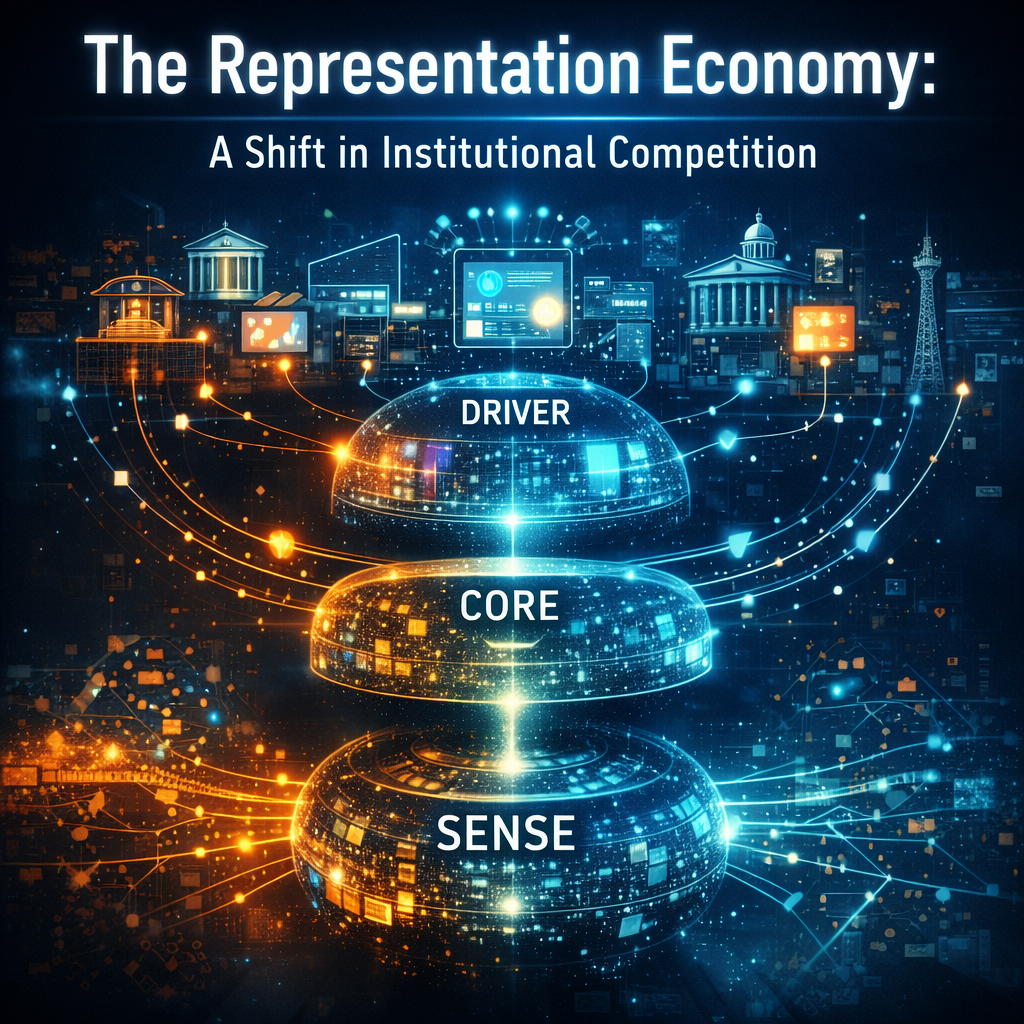

The Representation Economy: A Shift in Institutional Competition

For decades, institutions competed through:

- scale

- efficiency

- software systems

- automation

But artificial intelligence is transforming how organizations see, reason, and act.

AI is not simply a tool for generating content or predictions. It is becoming part of the operating architecture of institutions.

It determines:

- what organizations can detect

- what signals they interpret as meaningful

- how they reason about complex situations

- which actions they automate

This shift marks the emergence of what I call the Representation Economy.

In this economy, the institutions that win will not merely have better algorithms.

They will have better representations of reality.

(See also: The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture, https://www.raktimsingh.com/representation-economy-sense-core-driver/)

A representation deficit emerges when an institution cannot convert complex, evolving reality into a form that its decision systems can responsibly interpret and act upon.

This gap can appear in many ways.

An institution may:

- collect large volumes of data but miss the right signals

- detect events but fail to capture relationships

- store outcomes but ignore causal pathways

- build dashboards but miss contextual nuance

- enforce governance rules that no longer match operational reality

When this happens, the organization may appear digitally advanced while remaining institutionally blind.

The system produces outputs that look intelligent, but they are built on an incomplete or distorted representation of reality.

Why AI Failures Often Begin Before the Model

Most AI discussions start at the model layer.

But institutional failure often begins earlier.

Consider a hospital deploying AI to improve patient flow.

The system may track:

- admissions

- discharges

- bed utilization

- staffing levels

But it may still fail if it cannot represent realities such as:

- delayed family decisions

- diagnostic uncertainty

- specialist availability

- informal escalation pathways

- differences between “technically empty” and “operationally ready” beds

The system may optimize for the data it sees—but not for the reality the hospital operates in.
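The difference between a “technically empty” and an “operationally ready” bed can be made concrete with a small sketch. The field names (`cleaned`, `staffed`) and the readiness rule below are assumptions chosen for illustration; a real hospital would define readiness with many more conditions.

```python
from dataclasses import dataclass
from enum import Enum


class BedState(Enum):
    OCCUPIED = "occupied"
    EMPTY_NOT_READY = "empty_not_ready"  # vacated, but not yet usable
    READY = "ready"                      # cleaned and staffed


@dataclass(frozen=True)
class Bed:
    bed_id: str
    occupied: bool
    cleaned: bool   # hypothetical readiness condition
    staffed: bool   # hypothetical readiness condition

    def state(self) -> BedState:
        if self.occupied:
            return BedState.OCCUPIED
        if self.cleaned and self.staffed:
            return BedState.READY
        return BedState.EMPTY_NOT_READY


def naive_capacity(beds: list[Bed]) -> int:
    """Count every unoccupied bed, as a thin representation would."""
    return sum(1 for b in beds if not b.occupied)


def operational_capacity(beds: list[Bed]) -> int:
    """Count only beds that can actually receive a patient."""
    return sum(1 for b in beds if b.state() is BedState.READY)


beds = [
    Bed("A1", occupied=True, cleaned=False, staffed=True),
    Bed("A2", occupied=False, cleaned=False, staffed=True),
    Bed("A3", occupied=False, cleaned=True, staffed=False),
    Bed("A4", occupied=False, cleaned=True, staffed=True),
]
print(naive_capacity(beds), operational_capacity(beds))  # prints "3 1"
```

A scheduler that reads only the `occupied` flag would plan for three free beds here, while only one can take a patient. The model is the same in both cases; the representation differs.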

The same pattern appears across industries.

A bank may deploy AI to detect fraud.

It may track:

- transaction patterns

- device fingerprints

- account metadata

But if it cannot represent:

- family relationships

- coordinated identity fraud

- caregiving emergencies

- cultural spending patterns

- merchant ecosystem behavior

then the system is not reading reality.

It is reading a thin shadow of reality.

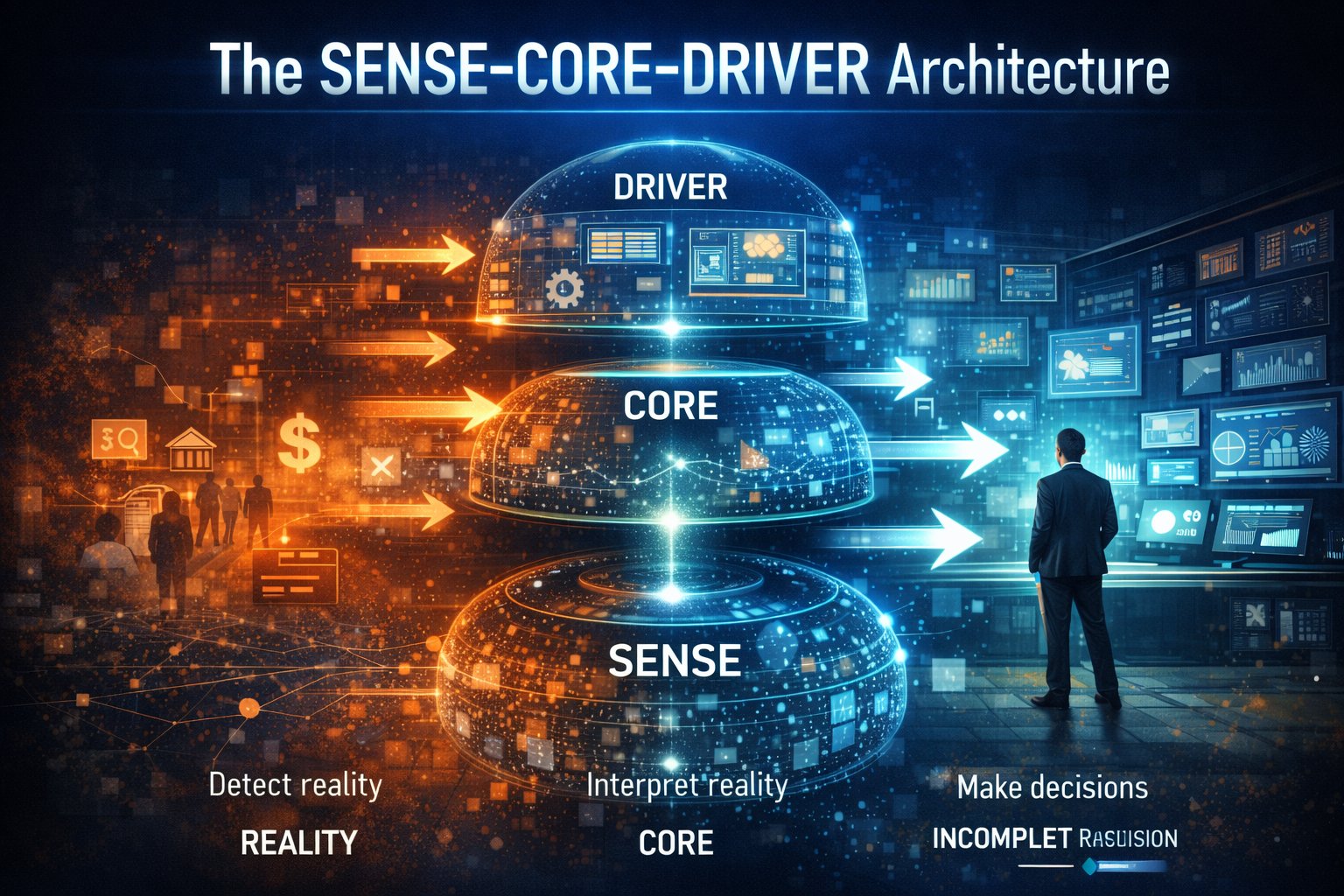

The SENSE–CORE–DRIVER Architecture

The representation deficit becomes easier to understand through the SENSE–CORE–DRIVER architecture, a framework for understanding how intelligent institutions operate.

SENSE: Can the Institution Detect Reality?

Every intelligent system begins with sensing.

The SENSE layer captures signals such as:

- data streams

- behavioral signals

- contextual information

- environmental conditions

- workflow traces

- identity relationships

A representation deficit often begins here.

An organization may collect enormous amounts of data yet still miss the most important signals.

It may observe transactions but miss intent.

It may record activity but miss friction.

It may store outcomes but miss the pathways that created them.

Weak sensing produces distorted understanding.

CORE: Can the Institution Interpret Reality?

The CORE layer converts signals into meaning.

It represents:

- reasoning systems

- policies

- institutional memory

- causal interpretation

- entity relationships

- trade-off logic

This is where organizations transform data into institutional understanding.

But many institutions confuse data storage with comprehension.

For example:

A company may know that customers churned, but not understand why.

An insurer may know that claims were denied, but not encode the decision pathways that produced those outcomes.

Without a strong CORE layer, institutions become fast but shallow.

They react quickly, but to an oversimplified model of reality.

DRIVER: Can the Institution Act with Legitimacy?

The DRIVER layer converts reasoning into action.

It governs:

- approvals

- rejections

- prioritization

- pricing

- routing

- enforcement

- escalation

This is where representation deficits become visible, because DRIVER converts hidden epistemic weaknesses into real-world outcomes.

When organizations deploy AI systems that cannot:

- explain decisions

- handle exceptions

- adapt to unusual cases

- provide recourse

the problem is rarely just the model.

The deeper issue is that reality never entered the system in a legitimate form.
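One way to picture the three layers is as a short pipeline in which each stage hands a richer object to the next. The sketch below is a minimal illustration, not a reference implementation of the framework: the class names, the `known_relationship` context key, and the policy inside `core` are all hypothetical, loosely based on the bank fraud example earlier.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    # SENSE output: an observation plus the context captured with it
    entity_id: str
    kind: str
    context: dict = field(default_factory=dict)


@dataclass
class Interpretation:
    # CORE output: a conclusion with the reasons that produced it
    entity_id: str
    conclusion: str
    reasons: list


@dataclass
class Action:
    # DRIVER output: what was done, why, and whether it can be contested
    entity_id: str
    decision: str
    explanation: str
    recourse_available: bool


def sense(raw: dict) -> Signal:
    return Signal(raw["entity_id"], raw["kind"], raw.get("context", {}))


def core(signal: Signal) -> Interpretation:
    # Hypothetical policy: an unusual transaction is benign when the
    # sensed context explains it, and suspicious otherwise.
    if signal.kind == "unusual_transaction":
        if signal.context.get("known_relationship"):
            return Interpretation(signal.entity_id, "benign",
                                  ["payee is a known family member"])
        return Interpretation(signal.entity_id, "suspicious",
                              ["no context explains the transaction"])
    return Interpretation(signal.entity_id, "benign", ["routine activity"])


def driver(interp: Interpretation) -> Action:
    decision = "escalate" if interp.conclusion == "suspicious" else "approve"
    return Action(interp.entity_id, decision, "; ".join(interp.reasons),
                  recourse_available=True)


def decide(raw: dict) -> Action:
    return driver(core(sense(raw)))


thin = decide({"entity_id": "acct-1", "kind": "unusual_transaction"})
rich = decide({"entity_id": "acct-1", "kind": "unusual_transaction",
               "context": {"known_relationship": True}})
print(thin.decision, rich.decision)  # prints "escalate approve"
```

The reasoning in `core` is identical for both calls. The outcome changes only because the second call sensed a relationship the first one never captured, which is the sense in which a failure at DRIVER can originate at SENSE.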

The Hidden Risk: Institutional Blindness at Machine Speed

Before AI, representation gaps were often masked by human judgment.

Experienced professionals could detect anomalies.

Frontline workers understood nuance.

Managers knew where official processes diverged from real operations.

AI changes that dynamic.

Once organizations embed AI into workflows, representation becomes operational infrastructure.

Poor representation no longer creates only confusion.

It creates automated misallocation.

It can lead to:

- systematic denial of legitimate claims

- incorrect fraud alerts

- supply chain disruptions

- flawed risk scoring

- regulatory blind spots

The most dangerous form of representation deficit is scaled blindness.

When weak representations drive automated systems, errors propagate rapidly across the organization.

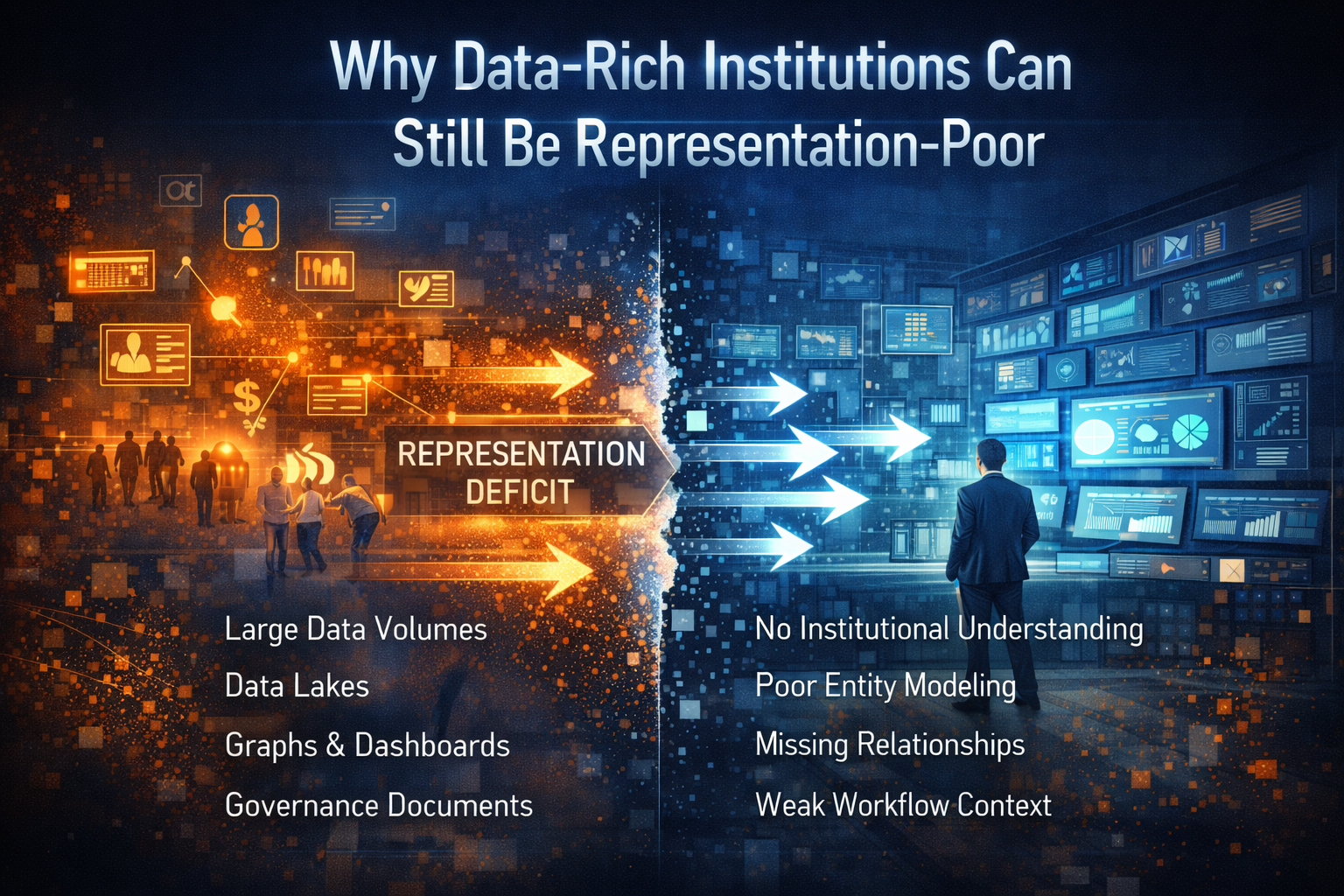

Why Data-Rich Institutions Can Still Be Representation-Poor

Many leaders assume that large datasets automatically translate into AI readiness.

This assumption is incorrect.

Data abundance does not guarantee representational adequacy.

Organizations may possess:

- large data lakes

- sophisticated dashboards

- advanced analytics platforms

and still lack:

- identity clarity

- relationship modeling

- contextual representation

- workflow memory

- decision traceability

Representation is not simply data accumulation.

Representation is the disciplined conversion of reality into forms that can be:

- interpreted

- governed

- contested

- updated

- acted upon responsibly
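As a rough illustration of what “governed, contested, updated” might mean at the level of a single record, the sketch below keeps a source for each claim, retains earlier versions on update, and logs challenges. Every name here (`RepresentedFact`, `is_actionable`, the rule that a contested fact blocks automation) is an assumption made for the example, not a prescribed design.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class RepresentedFact:
    subject: str
    claim: str
    source: str                     # governed: where the claim came from
    version: int = 1
    history: list = field(default_factory=list)
    contests: list = field(default_factory=list)

    def update(self, new_claim: str, source: str) -> None:
        """Replace the claim while keeping the earlier version on record."""
        self.history.append((self.version, self.claim, self.source))
        self.claim = new_claim
        self.source = source
        self.version += 1

    def contest(self, raised_by: str, reason: str) -> None:
        """Record a challenge; resolving it is left to a human process."""
        self.contests.append({
            "raised_by": raised_by,
            "reason": reason,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def is_actionable(self) -> bool:
        """Hypothetical rule: do not automate on a contested fact."""
        return not self.contests


fact = RepresentedFact("customer-42", "address unverified",
                       source="onboarding form")
fact.update("address verified", source="utility bill check")
fact.contest("customer-42", "verification used an outdated bill")
print(fact.version, len(fact.history), fact.is_actionable())  # prints "2 1 False"
```

A plain row in a data lake would hold only the latest claim. The extra fields are what allow the institution to say where a belief came from, what it replaced, and who disputes it.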

This is why representation infrastructure will become one of the most important strategic capabilities of the AI era.

The Strategic Questions Boards Must Now Ask

As AI systems increasingly influence institutional decisions, boards and senior executives must ask new questions.

What realities remain invisible to our systems?

What entities do we define too crudely?

Where do policies fail to match actual workflows?

What exceptions remain unrepresented?

Where are we delegating action beyond representational maturity?

How can individuals contest machine-driven decisions?

These are not technical questions.

They are becoming the core governance questions of intelligent institutions.

(See also: The Enterprise AI Operating Model, https://www.raktimsingh.com/enterprise-ai-operating-model/)

How Winning Institutions Will Reduce the Representation Deficit

Organizations that succeed in the Representation Economy will do five things well.

- Build richer sensing systems

They will capture:

- behavioral signals

- contextual information

- workflow traces

- exceptions

These go beyond structured data alone.

- Model entities and relationships properly

Identity and relationships will become core infrastructure, not metadata.

- Strengthen institutional reasoning

Decision systems will incorporate:

- policy logic

- institutional memory

- explainability mechanisms

- Govern the representation layer

Governance will extend beyond models to include how reality enters the system.

- Delegate gradually

Institutions will match automation rights with representational maturity.

These principles define the SENSE–CORE–DRIVER architecture of intelligent institutions.
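The idea of delegating gradually, matching automation rights to representational maturity, can be sketched as a simple gate. The two indicators (`coverage`, `exception_rate`) and the thresholds are illustrative placeholders, not measures or values proposed by the framework.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST_ONLY = 0       # system recommends, human decides
    ACT_WITH_REVIEW = 1    # system acts, human reviews afterwards
    ACT_AUTONOMOUSLY = 2   # system acts without routine review


def allowed_autonomy(coverage: float, exception_rate: float) -> Autonomy:
    """Map two hypothetical maturity indicators to an autonomy level.

    coverage: share of relevant cases the representation can describe (0-1)
    exception_rate: share of decisions later overridden or contested (0-1)
    The thresholds are illustrative placeholders, not recommendations.
    """
    if not (0.0 <= coverage <= 1.0 and 0.0 <= exception_rate <= 1.0):
        raise ValueError("coverage and exception_rate must be within [0, 1]")
    if coverage >= 0.95 and exception_rate <= 0.01:
        return Autonomy.ACT_AUTONOMOUSLY
    if coverage >= 0.80 and exception_rate <= 0.05:
        return Autonomy.ACT_WITH_REVIEW
    return Autonomy.SUGGEST_ONLY


print(allowed_autonomy(0.85, 0.03).name)  # prints "ACT_WITH_REVIEW"
```

The point of the gate is the ordering, not the numbers: a workflow earns more autonomy only as evidence accumulates that its representation covers the cases it meets.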

The Deeper Shift: Institutions Must Learn to See

The AI decade is not just changing what organizations can automate.

It is changing what institutions must be able to see.

The competition ahead will not simply be about intelligence infrastructure.

It will be about representation infrastructure.

The winners will be the institutions that answer five questions better than their peers:

Who senses reality earliest?

Who structures reality most faithfully?

Who governs meaning most responsibly?

Who delegates action with legitimacy?

Who updates their representations as the world evolves?

These are the institutions that will compound advantage in the AI era.

Key Insight

In the age of generative AI and autonomous decision systems, institutional advantage depends less on raw data or model size and more on the quality of representation. The organizations that win will be those that reduce the representation deficit by improving how reality is sensed, structured, interpreted, and acted upon.

This article introduces the concept of the Representation Deficit as a foundational idea within the broader Representation Economy framework, alongside the SENSE–CORE–DRIVER architecture of intelligent institutions.

Conclusion: The Next Frontier of Institutional Strategy

Artificial intelligence is often framed as a technological revolution.

But its deeper impact is institutional.

The organizations that succeed in the coming decade will not simply install smarter models.

They will reduce the gap between reality and decision.

That is the real meaning of the representation deficit.

When reality cannot enter the decision system, institutions do not merely become inefficient.

They become:

- less aware

- less adaptive

- less legitimate

- less competitive

The future therefore belongs to institutions that master a simple but powerful principle:

Before a machine can act wisely, an institution must first make reality legible.

Glossary

Representation Economy

An economic paradigm in which institutional advantage depends on how effectively organizations represent and interpret reality using intelligent systems.

Representation Deficit

The structural gap between real-world complexity and what an institution’s decision systems can represent.

SENSE–CORE–DRIVER

An architecture describing how intelligent institutions detect reality (SENSE), interpret it (CORE), and act upon it (DRIVER).

Representation Infrastructure

The systems that allow institutions to sense, structure, govern, and update representations of reality.

FAQ

What is the representation deficit in AI?

The representation deficit refers to the gap between real-world complexity and the way institutions represent reality in their decision systems.

Why does AI fail despite good models?

AI systems often fail because they operate on incomplete or distorted representations of reality.

What is the Representation Economy?

The Representation Economy describes a new competitive landscape where institutions gain advantage through better representations of reality rather than just better algorithms.

What is SENSE–CORE–DRIVER?

SENSE–CORE–DRIVER is a framework for understanding how intelligent institutions sense reality, interpret it, and act upon it.

References & Further Reading

Stanford Human-Centered AI Institute — AI Index Report

https://hai.stanford.edu/ai-index

NIST AI Risk Management Framework

https://www.nist.gov/itl/ai-risk-management-framework

OECD AI Principles

https://oecd.ai/en/ai-principles

Raktim Singh — Enterprise AI Operating Model

https://www.raktimsingh.com/enterprise-ai-operating-model/

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.