Executive Summary: Representation-native company

Most companies still talk about AI as a tool, a product feature, or a productivity layer. They ask which model to deploy, which copilots to adopt, which workflows to automate, and which use cases will create the fastest return.

Those questions matter. But they are no longer the deepest questions.

As AI moves into the operating core of the enterprise, the real issue is not simply whether a firm has access to intelligence. The real issue is whether the firm is designed in a way that intelligence can actually use. Stanford’s 2025 AI Index shows how quickly this shift is happening: 78% of organizations reported using AI in 2024, up from 55% the year before, and the share using generative AI in at least one business function rose from 33% to 71%. (Stanford HAI)

This is where a new idea becomes necessary: the representation-native company.

A representation-native company is a firm designed to sense reality continuously, maintain machine-legible state, reason over institutional context, and delegate action within governed authority boundaries. It does not treat AI as an add-on. It treats representation itself as the core architecture of competitive advantage.

That makes it different from the familiar idea of the AI-native firm. An AI-native company may have models everywhere. A representation-native company goes further. It is built so that reality is continuously visible, interpretable, governable, and actionable across the institution.

That is the deeper logic of the Representation Economy.

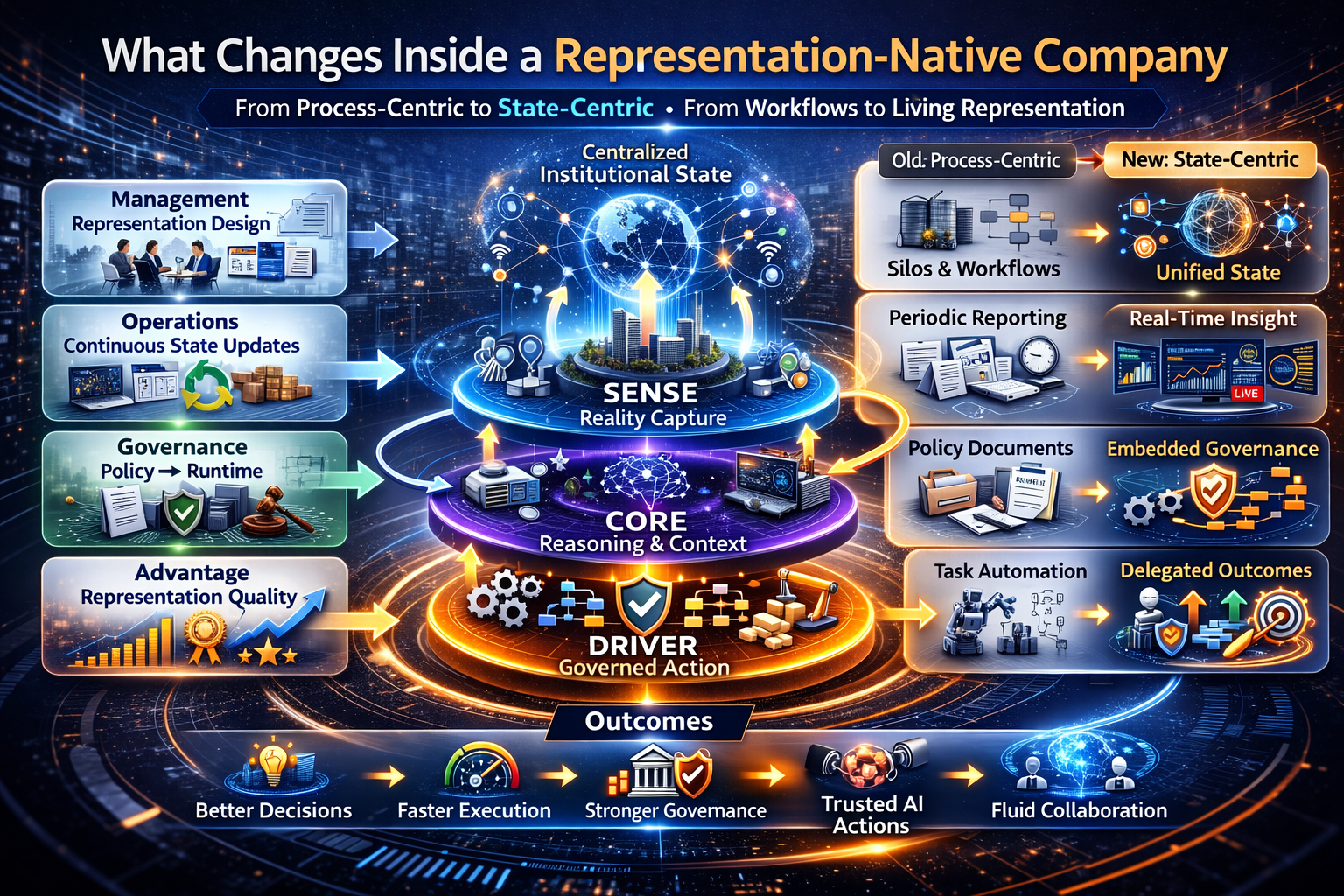

The firms that succeed in the AI era will not simply deploy powerful models. They will design institutions that can observe reality, represent it accurately, reason over those representations, and act on them with governance and accountability. This new institutional design is called the representation-native company. It operates through the SENSE–CORE–DRIVER architecture, where reality becomes machine-legible (SENSE), institutional reasoning occurs (CORE), and decisions are executed through governed delegation (DRIVER). The future theory of the firm will therefore be built around representation capacity, not just software capability.

The Next Theory of the Firm Will Be Built Around Representation

For more than a century, firms have been organized around labor, hierarchy, process, and control. Information moved in batches. Reports summarized events after the fact. Managers coordinated functions. Systems of record captured transactions. Strategy operated above the flow of everyday institutional reality.

That design made sense when intelligence was expensive, slow, and mostly human.

AI changes that.

It lowers the cost of interpretation, summarization, pattern recognition, simulation, recommendation, and decision support. At the same time, the governance burden rises. NIST’s AI Risk Management Framework explicitly organizes responsible AI around Govern, Map, Measure, and Manage, while the OECD AI Principles emphasize trustworthy AI that respects human rights, democratic values, transparency, accountability, and robustness. (NIST)

In other words, the AI era creates a double demand:

The firm must become more intelligent.

But it must also become more legible, more governable, and more legitimate.

That is why the theory of the firm now has to change.

The winning company of the next decade will not simply be the one with the best model access. It will be the one that is best architected to convert reality into trustworthy machine-readable form.

That company is the representation-native company.

What Is a Representation-Native Company?

A representation-native company is a firm whose operating model is built around three institutional capabilities:

SENSE

The ability to detect signals, identify entities, represent state, and update that state as reality changes.

CORE

The ability to reason over that represented reality, compare options, apply context, and improve decisions.

DRIVER

The ability to delegate action within authority boundaries, verification rules, execution controls, and recourse pathways.

This is not just a technology stack. It is a new organizational logic.

In the industrial era, firms won by controlling physical assets.

In the software era, firms won by scaling digital workflows, platforms, and networks.

In the AI era, many firms will win because they are better at turning reality into governed machine-usable form.

That shift is what makes the idea of a representation-native company so important. It is not just a better digital firm. It is a different institutional form.

Why “AI-Native” Is No Longer Precise Enough

The phrase “AI-native company” is often used too loosely. Sometimes it means a company that started with AI in the product. Sometimes it means a company with fewer legacy systems. Sometimes it simply means faster adoption.

But none of those definitions is sufficient.

A company can be AI-native and still be institutionally fragile. It can deploy frontier models yet remain unable to connect identity, state, permissions, policy, context, and recourse across real decisions.

That is why “representation-native” is the stronger idea.

A representation-native company is organized around:

- signal capture

- entity identity

- state representation

- contextual reasoning

- governed delegation

- verification and recourse

In plain language, it is built so that machines can understand what is happening, what matters, what is allowed, and what should happen next.

That is a much stricter and more useful standard than simply saying a firm “uses AI.”

The Old Firm Was Built Around Process. The New Firm Will Be Built Around State.

Traditional companies are often designed around departments and workflows. Sales owns one process. Operations owns another. Finance owns another. Risk, compliance, procurement, and service functions each maintain their own partial view of reality.

That model creates friction because intelligence has to be reconstructed over and over again.

A representation-native company works differently.

It is designed around living institutional state.

Instead of asking only, “Which team owns this process?” it asks:

- What entity is this?

- What is its current state?

- What signals changed that state?

- What policies apply here?

- What action is legitimate now?

- What recourse exists if the action is wrong?

This shift from process-centric to state-centric design is one of the deepest organizational changes of the AI era.

It is also one of the least discussed.
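One way to make the six questions above concrete is a single record per entity that answers each of them. The following is a minimal sketch, not a prescribed schema: the class name `EntityState`, its fields, and the example order, signal, and policy names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class EntityState:
    """Hypothetical state-centric record; every field name is illustrative."""
    entity_id: str                                           # What entity is this?
    status: str                                              # What is its current state?
    signals: list[str] = field(default_factory=list)         # What signals changed that state?
    policies: list[str] = field(default_factory=list)        # What policies apply here?
    allowed_actions: set[str] = field(default_factory=set)   # What action is legitimate now?
    recourse: str = "escalate_to_human"                      # What recourse exists if the action is wrong?
    updated_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def apply_signal(self, signal: str, new_status: str, allowed: set[str]) -> None:
        """Record the signal, then update status and the set of legitimate actions."""
        self.signals.append(signal)
        self.status = new_status
        self.allowed_actions = set(allowed)
        self.updated_at = datetime.now(timezone.utc)

    def is_legitimate(self, action: str) -> bool:
        """An action is legitimate only if the current state allows it."""
        return action in self.allowed_actions


# Example usage with made-up values.
order = EntityState(entity_id="order-1001", status="in_transit",
                    policies=["refund_policy_v1"])
order.apply_signal("carrier_reported_delay", "delayed",
                   {"notify_customer", "offer_credit"})
```

The point of the sketch is that the permitted actions travel with the state rather than with the team that owns the process.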

The SENSE Layer: The Firm as a Reality-Capture System

The first job of a representation-native company is not automation. It is legibility.

SENSE is the layer where the firm detects signals, identifies entities, constructs current state, and updates those states as reality changes.

Think of a retailer.

In a traditional retailer, inventory data may sit in one system, promotions in another, customer behavior in another, store-level events in another, and exceptions in email threads or messaging tools. Technically, the company has data. But it does not have a coherent, continuously updated representation of reality.

A representation-native retailer is different. It knows not just what sold, but what inventory condition exists now, which substitutions are emerging, which return patterns are unusual, what customer intent is shifting, and which local actions systems are allowed to take.

That is not merely analytics.

It is a transition from data ownership to state awareness.

The same logic applies in banking, logistics, healthcare, manufacturing, telecom, and public systems. The firms that win will increasingly treat signal quality, entity clarity, and state fidelity as strategic assets.
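The difference between holding data and holding state can be illustrated with the retailer example. The sketch below assumes a simplified world in which each signal is a stock change for one product at one store; `Signal`, `InventoryState`, and the freshness threshold are hypothetical names chosen for illustration, not part of any real system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Signal:
    """A single observed stock change (hypothetical shape)."""
    sku: str
    store: str
    delta_units: int
    observed_at: datetime


class InventoryState:
    """Folds incoming signals into a current, queryable picture of stock."""

    def __init__(self) -> None:
        self._units: dict[tuple[str, str], int] = {}
        self._last_seen: dict[tuple[str, str], datetime] = {}

    def ingest(self, s: Signal) -> None:
        key = (s.sku, s.store)
        self._units[key] = self._units.get(key, 0) + s.delta_units
        prev = self._last_seen.get(key)
        if prev is None or s.observed_at > prev:
            self._last_seen[key] = s.observed_at

    def units(self, sku: str, store: str) -> int:
        return self._units.get((sku, store), 0)

    def is_fresh(self, sku: str, store: str, now: datetime, max_age: timedelta) -> bool:
        """State counts as fresh only if a signal arrived within max_age."""
        seen = self._last_seen.get((sku, store))
        return seen is not None and now - seen <= max_age


# Example usage with fixed timestamps so the result is reproducible.
t0 = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
state = InventoryState()
state.ingest(Signal("sku-1", "store-A", 40, t0))
state.ingest(Signal("sku-1", "store-A", -3, t0 + timedelta(minutes=10)))
fresh_soon = state.is_fresh("sku-1", "store-A", t0 + timedelta(minutes=20), timedelta(minutes=15))
fresh_later = state.is_fresh("sku-1", "store-A", t0 + timedelta(hours=2), timedelta(minutes=15))
```

Tracking when state was last confirmed, not only what it is, gives downstream systems a way to decide whether the picture is accurate enough to act on.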

The CORE Layer: The Firm as a Reasoning System

Once reality becomes machine-legible, the company needs a cognition layer.

CORE is where the firm interprets represented reality, compares possibilities, prioritizes trade-offs, applies policy, recommends actions, and learns from outcomes.

In a traditional enterprise, this cognitive work is fragmented. Some of it lives in teams. Some in models. Some in documents. Some in dashboards. Some in meetings. Intelligence exists, but coordinating it is slow and expensive.

In a representation-native company, CORE becomes institutional infrastructure.

Consider an insurer. A conventional insurer may use AI to score risk or flag fraud. A representation-native insurer goes further. It reasons over policy state, claims chronology, evidence quality, customer history, regulatory thresholds, escalation conditions, and recourse routes. It distinguishes routine cases from ambiguous ones and routes each case to the right blend of machine assistance and human judgment.

That is the real shift.

The company is no longer just a set of workflows supported by analytics. It becomes a reasoning organization built on continuously updated institutional state.
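The insurer example can be sketched as a routing rule that reads represented state and decides how much human judgment a case needs. This is an illustrative sketch only: the `Claim` fields, the thresholds, and the three route names are assumptions made for the example, not values drawn from any insurer or regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """Hypothetical slice of claim state that a CORE layer might reason over."""
    claim_id: str
    amount: float
    evidence_quality: float  # 0.0 (none) to 1.0 (fully documented)
    policy_active: bool
    prior_disputes: int


# Illustrative thresholds; a real firm would derive these from policy.
AUTO_LIMIT = 1_000.0
MIN_EVIDENCE_FOR_AUTO = 0.8
WEAK_EVIDENCE = 0.5


def route(claim: Claim) -> str:
    """Return which blend of machine assistance and human judgment applies."""
    if not claim.policy_active:
        return "human_review"
    routine = (
        claim.amount <= AUTO_LIMIT
        and claim.evidence_quality >= MIN_EVIDENCE_FOR_AUTO
        and claim.prior_disputes == 0
    )
    if routine:
        return "machine_assisted"
    if claim.evidence_quality < WEAK_EVIDENCE:
        return "specialist_escalation"
    return "human_review"


# Example usage with made-up claims.
routine_claim = Claim("c-1", 400.0, 0.95, True, 0)
large_claim = Claim("c-2", 25_000.0, 0.90, True, 0)
weak_claim = Claim("c-3", 400.0, 0.30, True, 1)
lapsed_claim = Claim("c-4", 400.0, 0.95, False, 0)
```

The rule itself is trivial. What matters is that it can only run if policy state, evidence quality, and customer history are already available in one coherent record.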

The DRIVER Layer: The Firm as a Legitimate Delegation System

This is where most current AI visions break down.

Many firms can generate recommendations. Far fewer can delegate action safely.

DRIVER is the layer that governs who authorized an action, what representation of reality was used, what constraints applied, how the action was verified, what was executed, and what happens if the system is wrong.

This is not a minor governance detail. It is the difference between AI as assistance and AI as institutionally usable capability.

Imagine two logistics companies with similarly capable models. Both can predict disruptions. But only one can automatically reroute shipments, notify affected parties, respect contractual rules, apply geographic constraints, record why the decision was made, and reverse course when conditions change.

That company is not simply more automated.

It is more institutionally mature.

It has turned intelligence into governed delegation.

And increasingly, that is what durable AI advantage will look like.
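The questions DRIVER has to answer (who authorized the action, what representation was used, what constraints applied, what was executed, and what happens if it is wrong) can be sketched as a small delegation gate with an audit trail. Everything here is hypothetical: `Driver`, `DecisionRecord`, the actor and action names, and the region constraint are placeholders, and the sketch only records approval rather than performing any real rerouting.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class DecisionRecord:
    """Audit entry: who acted, on what state, why, and with what outcome."""
    actor: str
    action: str
    state_snapshot: dict
    reason: str
    executed: bool
    reversed: bool = False


class Driver:
    """Hypothetical delegation gate: authority check, constraint check, audit log."""

    def __init__(self, authority: dict[str, set[str]]) -> None:
        self.authority = authority          # actor -> actions it may take
        self.log: list[DecisionRecord] = []

    def delegate(self, actor: str, action: str, state: dict,
                 constraint: Callable[[dict], bool], reason: str) -> DecisionRecord:
        permitted = action in self.authority.get(actor, set())
        approved = permitted and constraint(state)
        # Every attempt is logged, including refused ones.
        record = DecisionRecord(actor, action, dict(state), reason, executed=approved)
        self.log.append(record)
        return record

    def reverse(self, record: DecisionRecord) -> bool:
        """Recourse: mark an executed action as reversed, at most once."""
        if record.executed and not record.reversed:
            record.reversed = True
            return True
        return False


def within_region(state: dict) -> bool:
    """Example geographic constraint on rerouting."""
    return state["destination_region"] in state["allowed_regions"]


# Example usage with made-up actors and shipments.
driver = Driver({"routing-agent": {"reroute_shipment"}})
shipment = {"destination_region": "EU", "allowed_regions": ["EU", "UK"]}
ok = driver.delegate("routing-agent", "reroute_shipment", shipment,
                     within_region, "port closure predicted")
refused = driver.delegate("routing-agent", "cancel_contract", shipment,
                          within_region, "port closure predicted")
```

In this sketch a refused action still produces a record, which is what lets the firm later explain both what it did and what it declined to do.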

What Changes Inside a Representation-Native Company

If representation becomes the organizing principle of the firm, the internal design of the company changes in important ways.

Management becomes representation design

Leaders are no longer only allocating budgets and overseeing teams. They are deciding what the company must be able to see clearly, model correctly, and govern safely.

Operations become continuous state updating

Traditional operations often depend on delayed reconciliation. Representation-native operations depend on living state. The key question becomes: is our current institutional picture accurate enough for action?

Governance moves from documents to runtime architecture

Policies still matter, but policy documents alone are not enough. Governance must live in execution pathways, identity controls, approval thresholds, verification logic, and recourse mechanisms.

Competitive advantage shifts from access to quality of representation

In a same-model world, many companies will have access to comparable intelligence. What will differ is whether those systems can work over a coherent, trusted, and governable picture of reality.

The boundary of the firm becomes more fluid

A representation-native company can coordinate more effectively across employees, software, contractors, suppliers, partners, and machine agents because identity, state, and authority relationships are clearer.

This changes orchestration, sourcing, and even what belongs inside the firm.

Why Representation Matters More Than Model Quality

Many executives still overestimate the importance of model selection and underestimate the institutional importance of representation quality.

But a superior model working over fragmented, stale, poorly governed reality often produces inferior outcomes.

A simpler way to say it:

A company with average models and superior representation architecture may outperform a company with frontier models and broken institutional legibility.

That is already visible in enterprise practice.

The firms that create repeatable AI value are rarely the ones with the loudest demos alone. They are usually the ones with cleaner state, clearer authority boundaries, stronger data and identity integrity, and better runtime governance.

That is why representation-native advantage is likely to be more durable than prompt-native or model-native advantage.

What New Types of Companies Will Emerge?

The representation-native company is not just a better version of today’s firm. It also points to the next wave of company formation.

We are likely to see new businesses emerge around:

- representation infrastructure

- machine-verifiable state layers

- delegation assurance

- recourse orchestration

- institutional identity and authority graphs

- machine-legibility services for regulated sectors

These companies will not primarily sell raw AI. They will sell the missing layer that makes AI operationally usable inside real institutions.

That is a major shift.

It suggests that one of the most valuable categories of the AI era may not be intelligence production alone, but representation production.

The Board-Level Question That Now Matters

For boards and CEOs, the central question is no longer merely, “How do we deploy AI?”

It is:

What kind of firm are we becoming?

Are we still organized for a world in which intelligence is scarce, reporting is periodic, and action is escalated manually?

Or are we redesigning ourselves for a world in which advantage depends on our ability to sense reality, reason over it, and delegate action with legitimacy?

That is not a technology procurement question.

It is a theory-of-the-firm question.

And it will increasingly determine which organizations scale AI safely, which organizations create durable trust, and which organizations convert intelligence into actual institutional power.

Why This Matters for the Representation Economy

The Representation Economy is not simply about better data, better dashboards, or better models.

It is about a deeper change in economic structure.

As AI spreads, institutions will compete not only on what they produce, but on how well they can represent reality for machine systems. That means the next enduring competitive advantages may come from:

- better sensing

- stronger state fidelity

- cleaner identity resolution

- richer contextual reasoning

- safer delegation

- stronger recourse

This is why the representation-native company matters so much.

It is the organizational form that fits the Representation Economy.

Conclusion: The Firm of the AI Era Will Be Built Around Representation

For years, strategy conversations about AI centered on models, automation, and productivity. Those conversations were necessary. They are no longer sufficient.

The deeper question is whether the firm can represent reality well enough for machines to assist, reason, and act without creating confusion, fragility, or institutional harm.

That is the problem the representation-native company solves.

It treats SENSE as the architecture of legibility.

It treats CORE as the architecture of institutional cognition.

It treats DRIVER as the architecture of legitimate action.

Together, these three layers create a firm that is not merely AI-enabled, but fundamentally redesigned for the Representation Economy.

That is why the representation-native company may become one of the defining organizational ideas of the AI decade.

Not because it adds more intelligence.

But because it finally gives intelligence a company it can actually live inside.

Glossary

Representation-Native Company

A company designed to sense reality continuously, maintain machine-legible state, reason over institutional context, and delegate action within governed authority boundaries.

Representation Economy

An economic environment in which competitive advantage increasingly depends on how well institutions represent reality for machine reasoning and action.

SENSE

The layer where reality becomes machine-legible through signals, identity, state representation, and evolution over time.

CORE

The cognition layer where the institution interprets represented reality, compares options, and improves decisions.

DRIVER

The legitimacy and execution layer that governs delegation, verification, action, and recourse.

Machine-Legible State

A structured representation of real-world conditions that a machine system can interpret reliably enough to support decisions or action.

Governed Delegation

The bounded transfer of operational action to AI or automated systems within defined authority, policy, and recourse constraints.

Representation Architecture

The institutional design that determines how reality is sensed, modeled, reasoned over, and acted upon.

State Fidelity

The accuracy, freshness, and reliability of the institution’s current representation of real-world conditions.

Recourse

The ability to challenge, reverse, correct, or remediate an AI-supported action or decision.

Representation Capital

The institutional capability to build, maintain, and update machine-usable representations of reality.

Institutional AI Architecture

The structural design through which organizations integrate AI into decision making and operations.

Frequently Asked Questions (FAQ)

What is a representation-native company?

A representation-native company is a firm built to make reality continuously visible, machine-legible, and governable so AI systems can reason and act safely.

How is a representation-native company different from an AI-native company?

An AI-native company may use AI deeply. A representation-native company goes further by redesigning the firm itself around legibility, reasoning, and legitimate delegation.

Why does this matter for boards?

Because AI success increasingly depends on organizational design, not just model choice. Boards must think about authority, recourse, risk, and institutional legibility.

What does SENSE mean in this framework?

SENSE refers to the firm’s ability to detect signals, identify entities, represent state, and track change over time.

What does CORE mean in this framework?

CORE refers to the firm’s reasoning layer: interpreting reality, comparing options, and improving decisions.

What does DRIVER mean in this framework?

DRIVER refers to the layer that governs legitimate action: who can act, under what authority, with what verification, and what recourse exists.

Why is representation more important than model quality in some cases?

Because even a powerful model performs poorly if the institution’s reality is fragmented, stale, or poorly governed.

What new companies may emerge because of this shift?

Likely categories include representation infrastructure firms, delegation assurance providers, and machine-legibility services for regulated industries.

Does this idea apply only to large enterprises?

No. It applies to any organization where AI is starting to influence real decisions, workflows, or actions.

Why could this become a new theory of the firm?

Because it changes the organizing principle of the company—from labor and process coordination to representation, reasoning, and governed delegation.

Why will representation matter more than model quality?

Even the best AI models cannot produce reliable decisions if the underlying representation of reality is incomplete, fragmented, or inaccurate. Institutional advantage will come from better representations, not just better models.

What is the SENSE–CORE–DRIVER architecture?

The SENSE–CORE–DRIVER architecture describes how AI-driven institutions operate:

SENSE — detect signals and represent reality

CORE — reason and optimize decisions

DRIVER — execute decisions with governance

What is representation capital?

Representation capital is the institutional ability to observe, model, and maintain accurate representations of reality so AI systems can operate effectively.

Why is this a new theory of the firm?

Historically, firms were organized around process, hierarchy, and coordination.

In the AI era, firms will increasingly be organized around representation, reasoning, and delegation architectures.

References and further reading

Stanford HAI’s 2025 AI Index documents the acceleration of enterprise AI adoption, including the jump from 55% to 78% in organizations reporting AI use and from 33% to 71% in generative AI use in at least one business function. (Stanford HAI)

NIST’s AI Risk Management Framework explains the four core functions—Govern, Map, Measure, and Manage—and emphasizes embedding trustworthiness into the design, development, deployment, and use of AI systems. (NIST)

The OECD AI Principles describe trustworthy AI as AI that respects human rights and democratic values, and note that the principles were updated in 2024. (OECD)

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model – Raktim Singh

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Debt: Why Institutions Accumulate Hidden AI Risk Long Before Failure Becomes Visible – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.