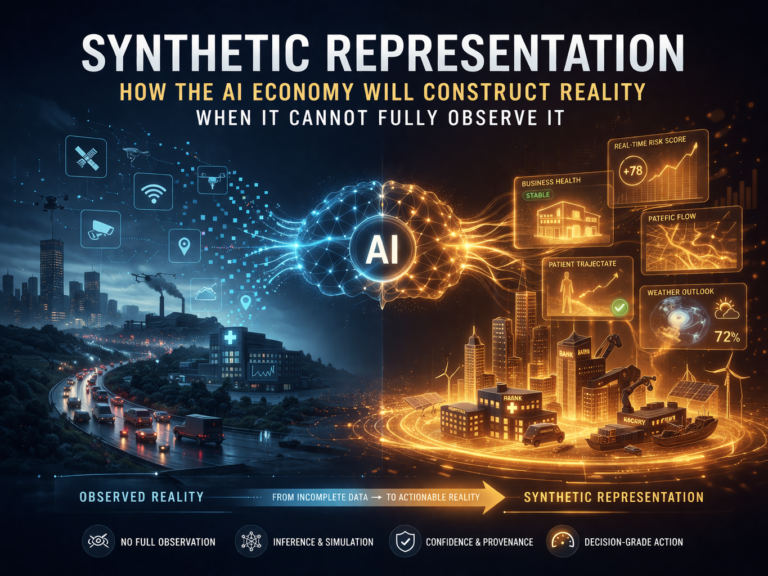

Synthetic Representation:

The next phase of AI will not be defined only by how well systems analyze reality, but by how safely institutions act on realities they had to partially construct.

Most AI conversations still begin with an outdated assumption: first the system observes the world, then it reasons about it.

A bank gathers data and scores a borrower.

A hospital compiles records and supports a diagnosis.

A supply chain platform tracks inventory and predicts delays.

A city measures traffic and adjusts flows.

That picture is no longer sufficient.

In many of the most important decisions now emerging, institutions do not fully observe the world before they act. They see fragments, delays, proxies, partial signals, and conflicting traces spread across systems, organizations, devices, and time windows. So the system does something more ambitious. It fills the gap. It constructs an actionable picture of reality from what is incomplete, inferred, predicted, simulated, or continuously updated.

That is what I call Synthetic Representation.

This is not the same as synthetic data. Synthetic data is usually defined as artificial data generated to mimic the patterns or statistical properties of real-world data, often for privacy, testing, or model development. The UK ICO defines synthetic data in those terms, and government guidance often treats it similarly. (ICO)

Synthetic Representation is broader and more consequential.

It is the machine-constructed, continuously updated, decision-grade representation of an entity, system, or situation when full direct observation is not possible. It may draw on real signals, historical patterns, simulations, model-based inference, expert rules, contextual data, and probabilistic updates. It is not fake data. It is the operational reality on which institutions increasingly decide.

That distinction matters because the AI economy will not run only on what is observed. It will increasingly run on what is constructed well enough to act on.

What is Synthetic Representation?

Synthetic Representation is the machine-constructed, continuously updated, decision-grade view of reality that institutions use when direct observation is incomplete, delayed, or impossible.

Why this is a new strategic category

The real breakthrough here is not technical. It is institutional.

Earlier digital systems primarily stored records. Modern AI systems increasingly maintain active representations of what is likely true now, what may happen next, and what should count as real enough for action.

That is why digital twins matter conceptually. NIST describes a digital twin as a particular type of computer model of a physical system with the potential for high accuracy, precision, and flexibility, and notes that forecasting is foundational across digital twin functions. NASA’s Earth System Digital Twin framing goes further, describing such systems as dynamic and interactive information systems that represent past and current states while enabling forecasts and scenario analysis. (NIST)

Those examples point to something larger than twins themselves. The future institution will not merely maintain records of what happened. It will maintain evolving representations of what is likely happening, what is emerging, and what is sufficiently credible to trigger pricing, lending, care, intervention, delegation, or automation.

That is where Synthetic Representation moves to the center of the AI economy.

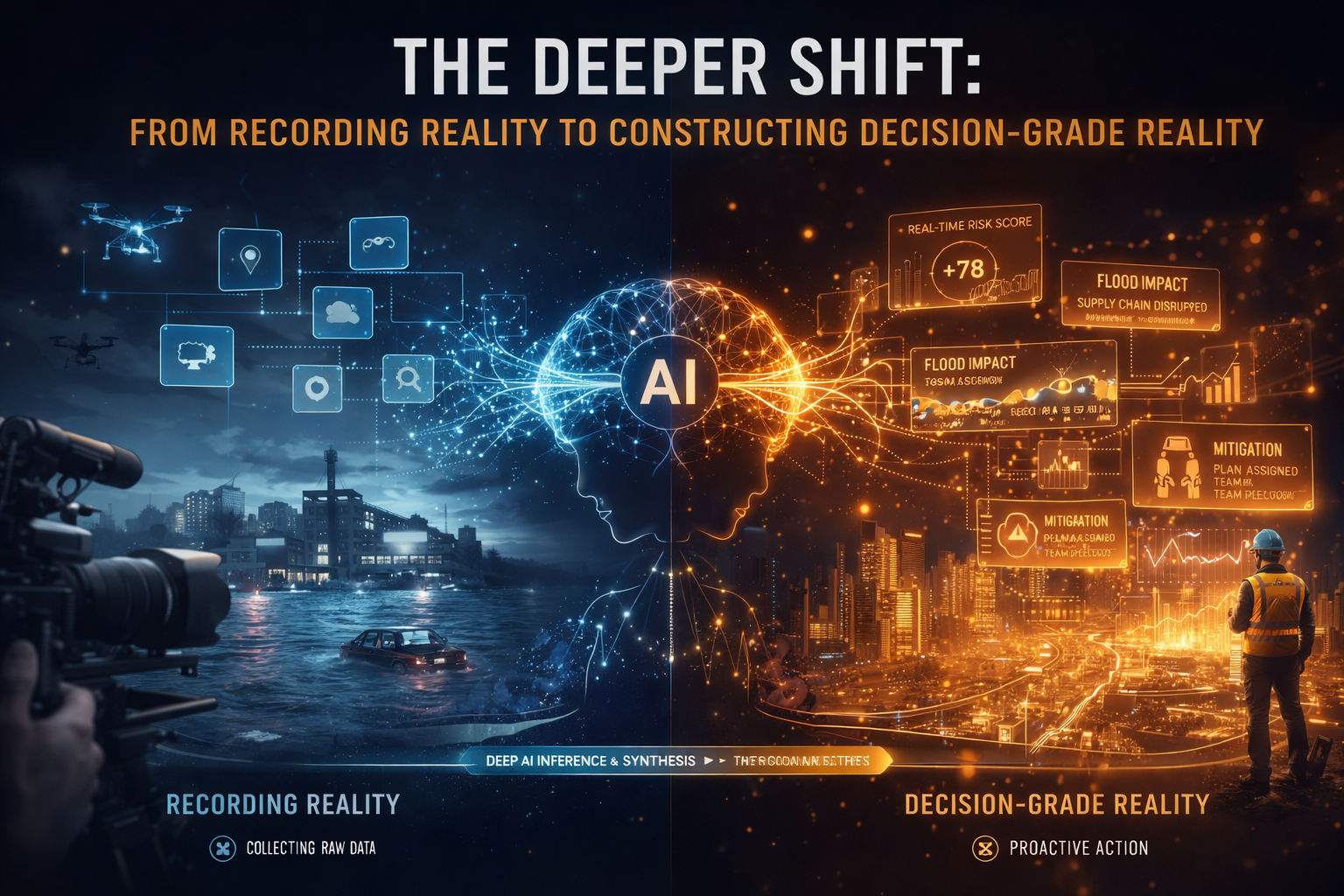

The deeper shift: from recording reality to constructing decision-grade reality

This shift is already visible across industries.

A bank may not fully observe a small business’s resilience, so it constructs an ongoing representation from payment flows, sector signals, cash patterns, invoices, and behavioral context.

A hospital may not fully observe the future state of a patient, so it constructs a risk trajectory from labs, prior history, medication patterns, sensor feeds, and clinical models.

A city may not directly observe every road segment in real time, so it constructs a live traffic state from partial sensors, past flows, weather, events, and simulations.

A weather system never has complete direct observation of the atmosphere everywhere. That is precisely why data assimilation combines observations with model data to produce the best estimate of current state for forecasting. NOAA defines data assimilation in those terms. (AOML)
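
To make the idea concrete, here is a minimal sketch of the simplest textbook form of this blending: a scalar, variance-weighted update in the style of a one-dimensional Kalman filter. It is not NOAA's operational method; the function name `assimilate` and all the numbers are assumptions chosen for illustration.

```python
def assimilate(forecast, forecast_var, observation, observation_var):
    """Blend a model forecast with an observation, weighting each by its certainty.

    Scalar Kalman-style update: the source with the smaller variance counts for more.
    """
    # Gain is the fraction of the forecast-observation gap we choose to close.
    gain = forecast_var / (forecast_var + observation_var)
    estimate = forecast + gain * (observation - forecast)
    # The blended estimate is more certain than the forecast alone.
    estimate_var = (1.0 - gain) * forecast_var
    return estimate, estimate_var


# Hypothetical numbers: the model forecasts 20.0 degrees (variance 4.0),
# while a sensor reads 22.0 degrees (variance 1.0).
estimate, variance = assimilate(20.0, 4.0, 22.0, 1.0)
print(round(estimate, 2), round(variance, 2))
```

The resulting estimate sits between forecast and observation, closer to whichever source is more certain. It is a constructed state, not a direct reading.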

These are not just better analytics. They are examples of institutions constructing actionable reality when direct observation is incomplete.

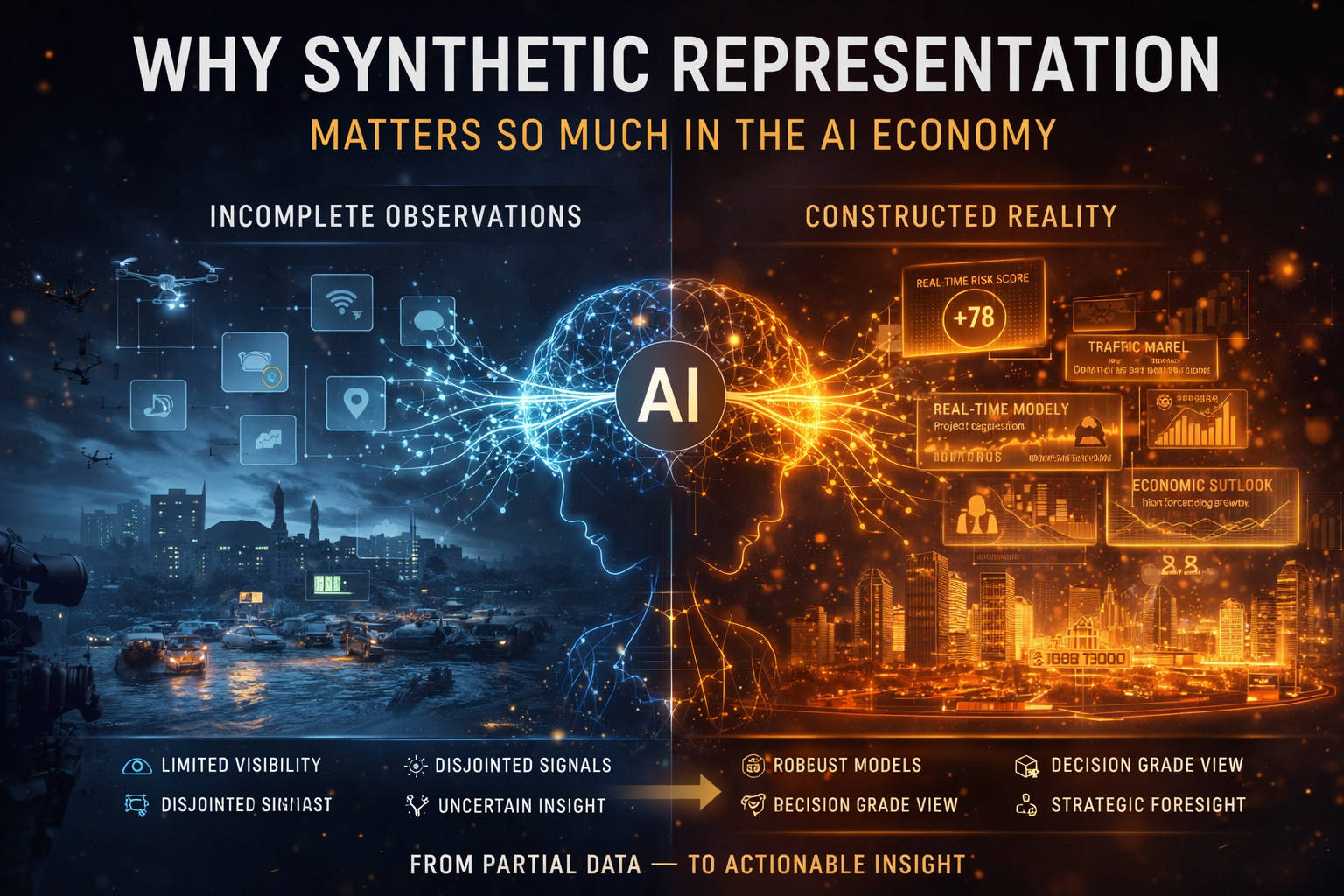

Why Synthetic Representation matters so much in the AI economy

The first era of AI was largely about prediction.

The second era is about reasoning and agents.

The next era will be about institutional action on partially synthetic reality.

That is a much bigger shift than most firms realize.

Systems do not need perfect reality to act. They need a representation strong enough to justify action. As AI spreads into credit, insurance, logistics, healthcare, manufacturing, public services, cyber defense, and enterprise operations, the operational question becomes more serious:

What will the institution treat as real enough to trigger action?

That is why my SENSE–CORE–DRIVER framework becomes even more important here.

SENSE becomes more ambitious

SENSE is no longer just about capturing signals. It becomes the layer that decides which missing parts of reality can be responsibly inferred, reconstructed, or synthesized from available traces.

CORE becomes more generative

CORE is no longer only reasoning over known facts. It increasingly constructs plausible state representations of the world.

DRIVER becomes more dangerous

DRIVER matters more because institutions are now acting on representations that may be partly observed and partly synthetic. That means authority, verification, recourse, and action thresholds become more important than ever.

In other words, Synthetic Representation is where SENSE becomes more ambitious, CORE becomes more generative, and DRIVER becomes more dangerous.

A simple banking example: lending to a business the bank cannot fully see

Consider a bank evaluating a small manufacturer.

The bank does not see the entire business in real time. It does not fully know informal supplier dependencies, unreported cash stress, local market weakness, workforce fragility, or near-term customer churn. At best, it sees fragments: transaction histories, account balances, repayment behavior, tax records, sector signals, invoices, and macro conditions.

So what does it do?

It constructs a representation of business health.

That representation is not identical to reality. It is a synthetic operating picture built from observed facts plus inferred stability, projected cash resilience, estimated dependency patterns, and modeled future stress. In financial services, this logic is already familiar: organizations routinely rely on proxies, derived risk states, and modeled estimates when they cannot directly observe a borrower's full economic condition at decision time. (ICO)

The strategic question is not whether this construction happens. It already does.

The real question is whether the institution knows that it is acting on a synthetic representation, whether it can distinguish observed from inferred components, and whether it has recourse when the constructed picture turns out to be wrong.

That is where many institutions are still weak.

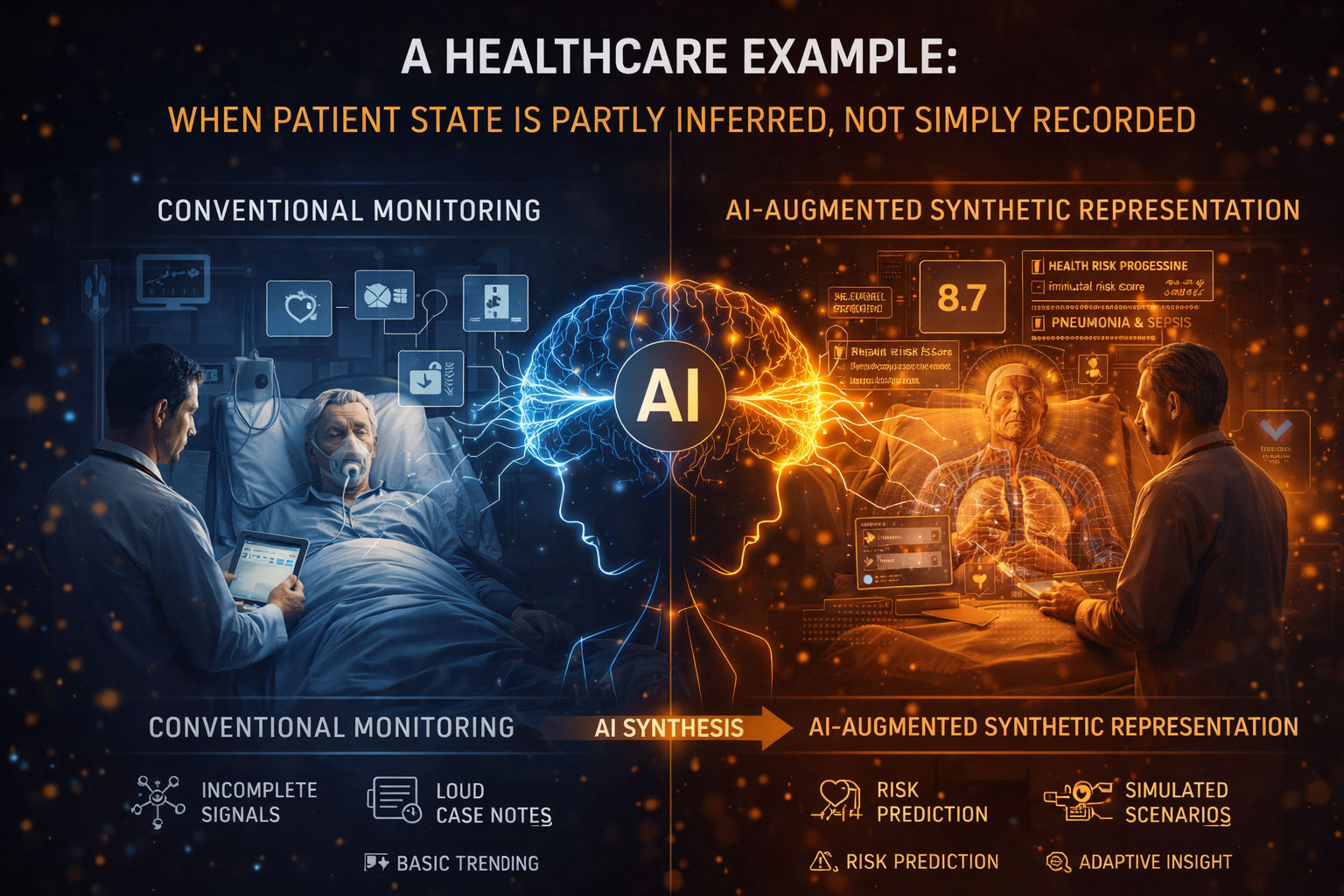

A healthcare example: when patient state is partly inferred, not simply recorded

Healthcare makes the point even more clearly.

A patient’s true condition is rarely fully visible at a single moment. Different systems may hold lab results, imaging, medication history, past admissions, wearable signals, clinician notes, and consent restrictions. No single view captures the complete living reality of the patient. So the institution constructs a working clinical state.

That state may include deterioration risk, readmission probability, medication adherence estimates, hidden progression assumptions, or escalation likelihood. Some parts are observed. Some are inferred. Some are forecast.

This is not a defect of medicine. It is an unavoidable feature of complex systems. In many scientific and operational domains, institutions routinely combine observations with models to estimate hidden or incomplete state. NOAA’s data-assimilation definition is important precisely because it makes this explicit: model data and observations are combined to produce the best representation of system state. (AOML)

The danger begins when institutions forget the difference between recorded patient data and synthetic patient state.

Once that distinction disappears, projected risk starts to feel like fact. And when projected risk feels like fact, organizations can over-automate.

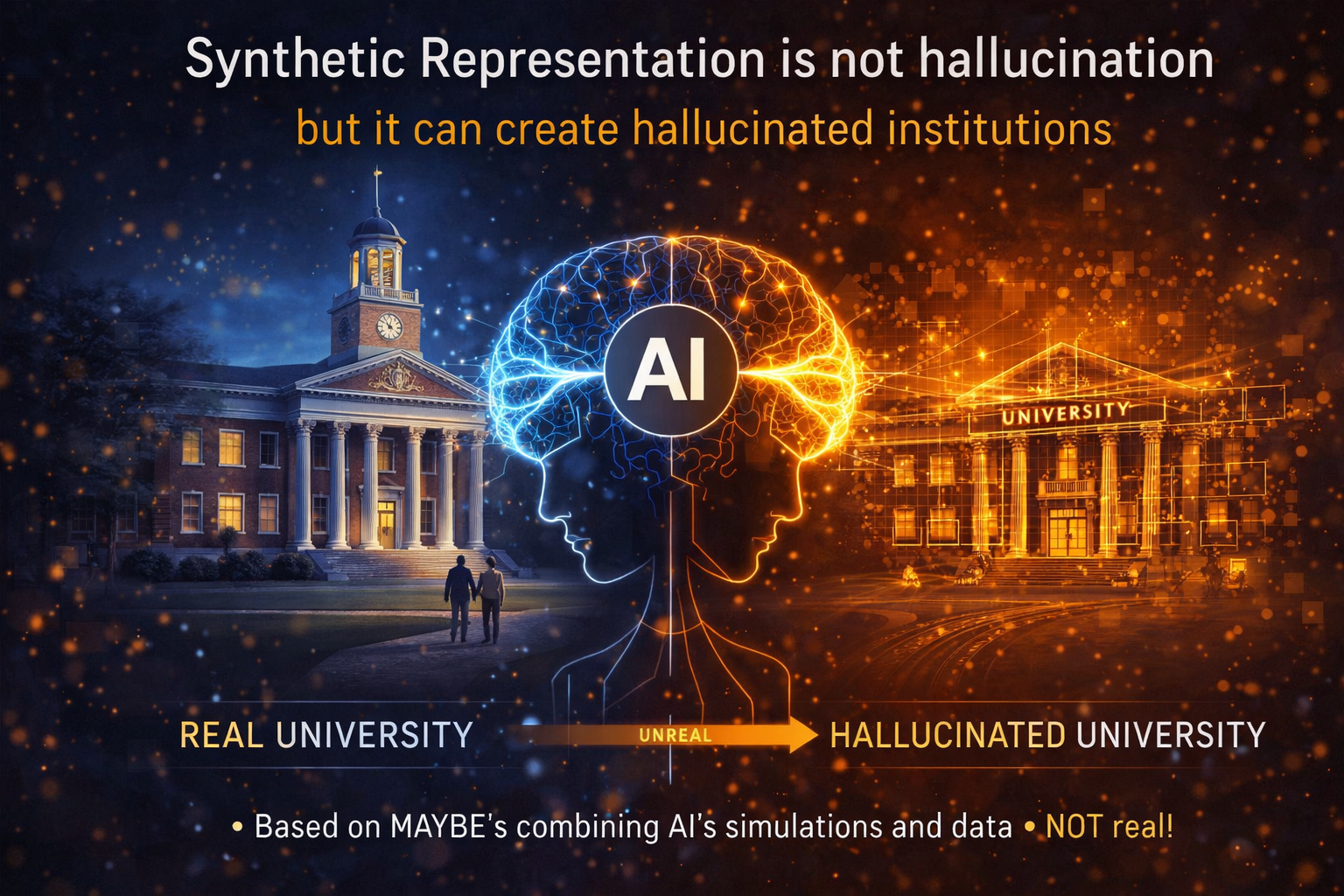

Synthetic Representation is not hallucination, but it can create hallucinated institutions

It would be a mistake to dismiss Synthetic Representation as mere hallucination. In many fields, it is necessary. Digital twins, forecasting systems, and data-assimilation systems exist precisely because complete real-time observation is impossible. (NIST)

But it would be an even bigger mistake to ignore the risks.

The AI conversation has rightly focused on model hallucination, especially in generative systems. NIST’s AI RMF and its Generative AI Profile emphasize trustworthiness, validity, reliability, accountability, transparency, and explainability because AI outputs can appear persuasive without being grounded. (NIST Publications)

Synthetic Representation introduces a larger institutional danger: hallucination at the level of organizational reality.

That happens when an institution begins acting as though its constructed representation is equivalent to observed truth.

The risk is not only that a model says something false.

The risk is that the institution reorganizes credit, care, pricing, access, prioritization, operations, or enforcement around a reality it never fully observed.

That is a much bigger problem.

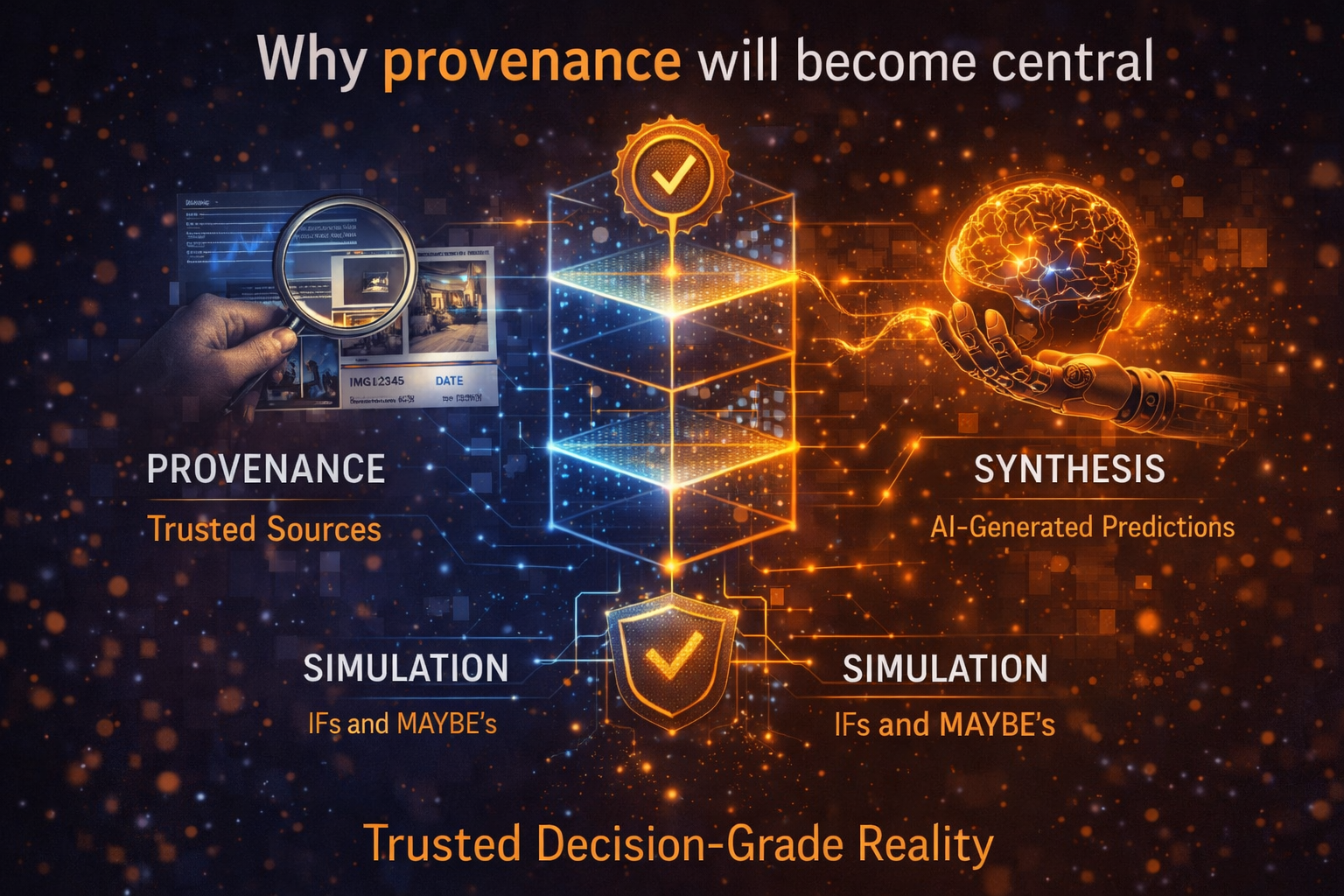

Why provenance will become central

If Synthetic Representation is going to become normal, organizations will need a way to separate and trace its layers.

What was directly observed?

What was derived from another system?

What was inferred by a model?

What was forecast?

What was simulated?

What was added by expert rules?

What was later corrected?

This is where provenance becomes essential. W3C’s PROV specifications define provenance as information about entities, activities, and people involved in producing a piece of data or thing, and note that this information can be used to assess quality, reliability, and trustworthiness. (W3C)

In the era of Synthetic Representation, provenance must expand from “Where did this data come from?” to “Which parts of this operational reality were observed, inferred, forecast, simulated, or reconstructed?”
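
One way to picture this layered provenance is to tag every component of a representation with its origin. The sketch below is only an illustration under stated assumptions: it does not implement the W3C PROV data model, and the names `Origin`, `Component`, `group_by_origin`, and the sample borrower fields and sources are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    """How a component entered the representation (mirrors the questions above)."""
    OBSERVED = "observed"
    DERIVED = "derived"
    INFERRED = "inferred"
    FORECAST = "forecast"
    SIMULATED = "simulated"
    EXPERT_RULE = "expert_rule"
    CORRECTED = "corrected"


@dataclass(frozen=True)
class Component:
    name: str
    value: float
    origin: Origin
    source: str  # system, model, or person that produced the value


def group_by_origin(components):
    """Return {origin: [component names]} so each layer can be traced separately."""
    layers = {}
    for component in components:
        layers.setdefault(component.origin, []).append(component.name)
    return layers


# Hypothetical representation of a small-business borrower.
business = [
    Component("account_balance", 48_000.0, Origin.OBSERVED, "core_banking"),
    Component("sector_stress_index", 0.35, Origin.DERIVED, "market_data_feed"),
    Component("supplier_dependency", 0.6, Origin.INFERRED, "dependency_model_v2"),
    Component("cash_runway_days", 75.0, Origin.FORECAST, "cashflow_model_v1"),
]

for origin, names in group_by_origin(business).items():
    print(origin.value, names)
```

Keeping the origin next to the value means a reviewer can always ask which parts of a decision rested on observation and which on construction.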

That distinction will become one of the most important markers of maturity in enterprise AI.

The governance challenge is bigger than model governance

Most organizations are not ready for this shift because they still govern models more than they govern constructed realities.

That is not enough.

Regulatory direction is already moving toward stronger documentation, logging, lifecycle risk management, transparency, and technical records for high-risk AI. Article 11 of the EU AI Act requires technical documentation to be prepared before a high-risk system is placed on the market or put into service and kept up to date. NIST’s AI RMF likewise frames trustworthy AI around qualities such as validity, reliability, accountability, transparency, and explainability. (AI Act Service Desk)

But the next discipline will need to go further.

Institutions will need to govern:

- how much of a representation is directly observed,

- how much is synthetic,

- how often the synthetic components are refreshed,

- how confidence is attached,

- where domain experts must intervene,

- what actions are prohibited when synthetic load is too high,

- and how recourse works when synthetic assumptions fail.
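
Some of these controls can be sketched in a few lines. The example below assumes that synthetic load is measured as the share of decision weight carried by components that were not directly observed. That weighting scheme, the function names `synthetic_load` and `gate_action`, and the thresholds are all illustrative assumptions, not an established standard.

```python
def synthetic_load(components):
    """Share of total decision weight carried by non-observed components.

    `components` is a list of (weight, is_observed) pairs.
    """
    total = sum(weight for weight, _ in components)
    if total <= 0:
        raise ValueError("components must carry positive total weight")
    synthetic = sum(weight for weight, is_observed in components if not is_observed)
    return synthetic / total


def gate_action(load, review_at=0.4, prohibit_at=0.7):
    """Map synthetic load to an action policy. Thresholds are illustrative only."""
    if load >= prohibit_at:
        return "prohibit"
    if load >= review_at:
        return "human_review"
    return "automate"


# Hypothetical credit decision: (weight in the decision, directly observed?)
inputs = [(0.5, True), (0.3, False), (0.2, False)]
load = synthetic_load(inputs)
print(load, gate_action(load))
```

In practice the thresholds would be set per decision type by the people accountable for the outcome, and they would likely be stricter where recourse is harder.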

This is where Synthetic Representation naturally connects to Representation Accounting.

Representation Accounting asks what an institution can legitimately claim to know.

Synthetic Representation asks what happens when some of that “knowledge” is actually a constructed approximation of the world.

The two ideas belong together. One governs knowledge claims. The other governs partially constructed reality.

New kinds of companies will emerge

This topic also matters because it helps identify the firms that will define the next decade.

If Synthetic Representation becomes foundational, several new categories will emerge.

One category will validate the boundary between observed and inferred reality.

Another will measure synthetic load inside high-stakes decisions.

Another will provide confidence layers and uncertainty controls for partially synthetic states.

Another will perform post-event forensic analysis when organizations acted on constructed realities that later proved wrong.

Another will build sector-specific synthetic state engines for healthcare, finance, climate, manufacturing, or public systems.

The winners in the AI economy will not only build models. They will build trusted architectures for acting on incompletely observed worlds.

That is a much bigger category.

Why Synthetic Representation Matters

- AI systems increasingly act on inferred, not fully observed reality

- Institutions construct reality using models, signals, and simulations

- Poorly governed synthetic representations create systemic risk

- Competitive advantage will come from managing “constructed reality” safely

- Trust in AI depends on distinguishing observed vs inferred truth

What boards and CEOs should ask now

Boards and executive teams should start asking much harder questions.

Where are we already acting on representations that are partly synthetic?

Can we distinguish observed facts from inferred states?

Do we know which actions are being taken on top of simulation, projection, or model-based reconstruction?

Where could our institution mistake a plausible representation for reality itself?

What recourse exists when the system’s constructed picture turns out to be wrong?

What level of synthetic load should trigger human review, delayed action, or prohibition?

These are not technical side questions. They are strategy questions, governance questions, and legitimacy questions.

The real strategic takeaway

The AI economy will not just analyze reality. It will increasingly construct the reality on which institutions act.

That is why Synthetic Representation matters.

This is not a niche technical phrase. It is a strategic category for understanding how modern institutions will operate when direct observation is incomplete, delayed, fragmented, or impossible.

The institutions that win will not be those that merely collect more data. They will be those that learn how to build synthetic representations responsibly — clearly separating observation from inference, attaching confidence to constructed state, governing action thresholds, and designing recourse before harm occurs.

That is the next frontier of Representation Economics.

Because the future of AI will not be decided only by who has the smartest model.

It will also be decided by who can safely construct reality when reality cannot be fully seen.

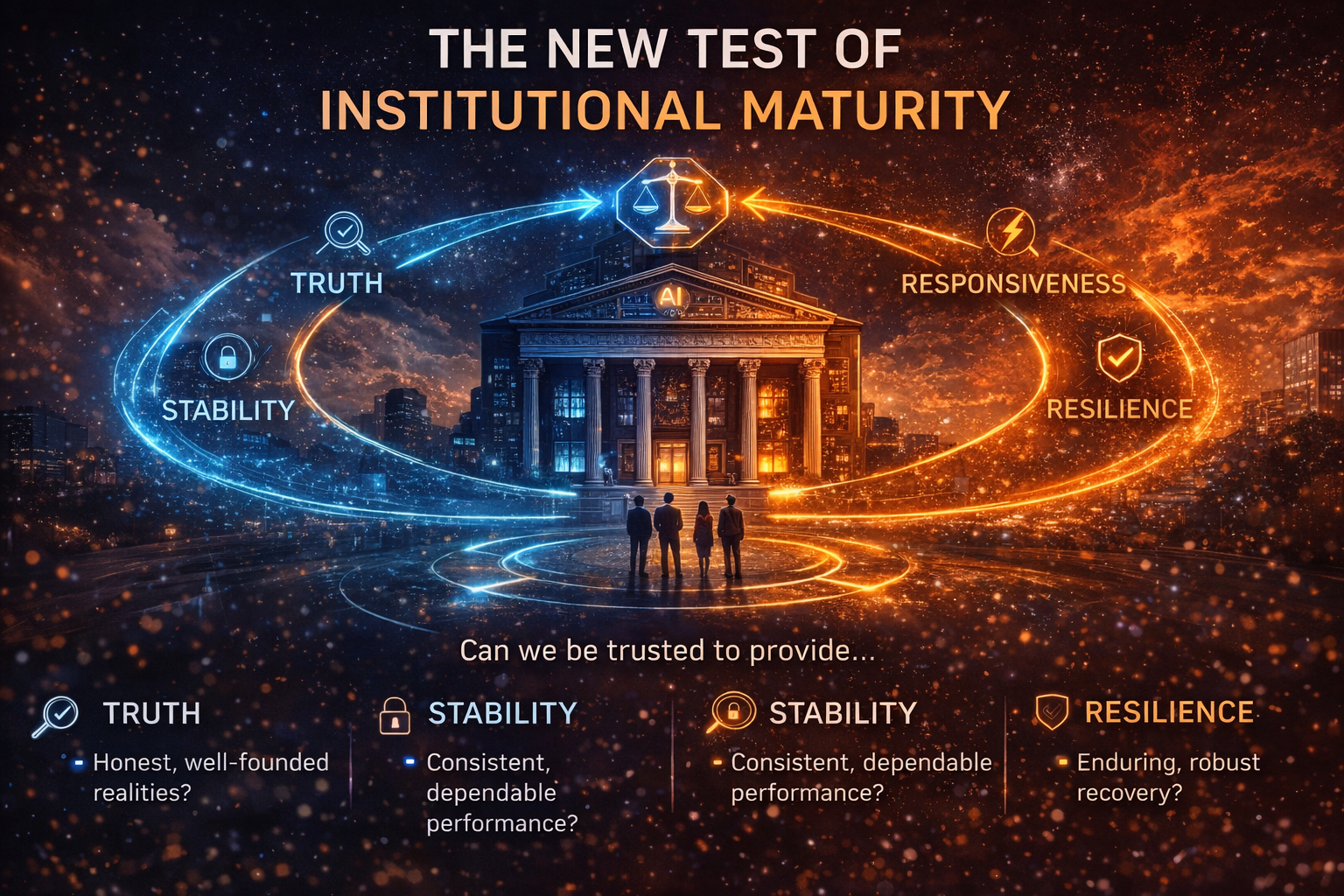

Conclusion: the new test of institutional maturity

Synthetic Representation is where the AI economy becomes more powerful and more fragile at the same time.

More powerful, because institutions can now act in environments they cannot fully observe.

More fragile, because they may begin to mistake plausible constructions for reality itself.

That is why the next standard of institutional maturity will not be model performance alone. It will be the ability to govern constructed reality: to know what was observed, what was inferred, what was simulated, what confidence should attach to each layer, and what safeguards apply before action is taken.

Boards that understand this early will build more resilient enterprises.

Companies that ignore it will automate their own assumptions.

That is the strategic threshold now approaching.

Summary

What is Synthetic Representation?

Synthetic Representation is the machine-constructed, continuously updated, decision-grade representation of an entity, system, or situation when full direct observation is not possible.

Why does Synthetic Representation matter?

Because AI systems increasingly act on partially inferred, forecast, simulated, or reconstructed realities rather than fully observed ones.

How is it different from synthetic data?

Synthetic data is artificially generated data that mimics real data patterns. Synthetic Representation is the broader institutional reality constructed for decision-making when direct observation is incomplete. (ICO)

Why is this important for boards?

Because future enterprise risk will come not only from weak models, but from strong actions taken on poorly governed constructed realities.

Glossary

Synthetic Representation

A machine-constructed, continuously updated, decision-grade representation of an entity, system, or situation when full direct observation is not possible.

Synthetic Data

Artificially generated data designed to mimic the statistical properties or patterns of real data, often used for privacy, testing, or model development. (ICO)

Digital Twin

A computer model of a physical system with the potential for high accuracy, often used for simulation, monitoring, optimization, and forecasting. (NIST)

Data Assimilation

A method for combining observations with model data to produce the best estimate of a system’s state, especially in weather and climate forecasting. (AOML)

Provenance

Information about the entities, activities, and people involved in producing a piece of data or thing, used to assess quality, reliability, and trustworthiness. (W3C)

Synthetic Load

A useful governance term for the share of a representation that is inferred, forecast, simulated, or reconstructed rather than directly observed.

Representation Economics

My broader framework for understanding how value, trust, power, and competitive advantage shift when institutions act on representations of reality rather than reality directly.

SENSE–CORE–DRIVER

My institutional architecture framework in which SENSE makes reality legible, CORE interprets and reasons over it, and DRIVER governs legitimate action.

FAQ

What is Synthetic Representation in simple language?

It is the constructed picture of reality that an institution uses when it cannot fully observe the world directly.

Is Synthetic Representation the same as synthetic data?

No. Synthetic data is artificially generated data. Synthetic Representation is the broader operational reality built from observed facts plus inference, projection, simulation, and model-based reconstruction. (ICO)

Why is Synthetic Representation important now?

Because modern AI systems increasingly make or influence decisions in situations where full direct observation is impossible, delayed, fragmented, or too costly.

What is the biggest risk?

The biggest risk is that institutions begin treating a plausible constructed representation as if it were fully observed truth.

How does this connect to AI governance?

It expands AI governance beyond model governance. Institutions will need to govern the quality, freshness, provenance, confidence, and action thresholds of partially synthetic realities.

Which industries will feel this first?

Banking, insurance, healthcare, logistics, climate systems, manufacturing, cyber defense, and public-sector decision systems are likely to feel it earliest because they frequently operate under incomplete observation. (NIST)

What should boards do first?

Boards should identify where their organizations are already acting on inferred or reconstructed realities and ask whether those representations are clearly labeled, governable, traceable, and contestable.

References and further reading

For trustworthy AI and governance, see NIST’s AI Risk Management Framework and its Generative AI Profile. (NIST Publications)

For digital twins, see NIST’s digital twin materials and NASA’s Earth System Digital Twin framing. (NIST)

For data assimilation and state estimation, see NOAA’s explanation of how observations and model data are combined to obtain the best estimate of system state. (AOML)

For provenance, see W3C’s PROV overview and provenance notation materials. (W3C)

For synthetic data definitions, see the UK ICO glossary and UK government guidance. (ICO)

For regulatory direction on technical documentation for high-risk AI, see Article 11 materials on the EU AI Act. (AI Act Service Desk)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- The Representation Reserve Currency: Why AI Will Trust Only a Few Forms of Reality – Raktim Singh

- The Machine-Readable Boundary of the Firm: How AI Is Redefining What Companies Own, Outsource, and Orchestrate – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- The Representation Commons: Why Broad-Based AI Value Begins Before the Model – Raktim Singh

- The Representation Access Economy: Why AI Will Decide Who Gets Seen, Structured, and Trusted – Raktim Singh

- Representation Bankruptcy: Why AI Will Break Companies That Machines Cannot Trust – Raktim Singh

- The Representation Kill Zone: Why Companies Become Invisible Before They Realize They Are Losing – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fiduciaries: The Missing Institution the AI Economy Cannot Scale Without – Raktim Singh

- The Representation Conversion Industry: Why the Biggest AI Companies Will Rebuild Reality Before They Build Intelligence – Raktim Singh

- Representation Accounting: The New Discipline That Will Decide Which AI-Driven Institutions Can Be Trusted – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.