Most conversations about AI still begin in the wrong place.

Leaders ask which model is smarter, which model is faster, which model is cheaper, and which model is safer. Those are valid questions. But they are no longer the deepest ones.

The deeper question is this:

Who can afford to make reality legible for machines?

That is where the next divide in the AI economy begins.

A global retailer can tag products, resolve duplicate customer identities, clean transaction histories, standardize catalogs, and update inventory in near real time. A neighborhood merchant often cannot. A large logistics network can timestamp events, track vehicles, reconcile route exceptions, and model disruptions continuously. A small transporter may still rely on phone calls, fragmented apps, handwritten notes, and memory. The difference is not simply “digital maturity.” It is the cost of converting reality into machine-readable form—and the fact that this cost is not equal for everyone.

That asymmetry matters more than many leaders realize.

Recent OECD work highlights persistent gaps in AI adoption between SMEs and larger firms, while the World Bank argues that AI readiness depends on strong foundations such as connectivity, compute, skills, and relevant data context. McKinsey’s 2025 survey points in the same direction: organizations that are serious about value capture are redesigning workflows, elevating governance, and putting structure around adoption rather than treating AI as a loose layer on top of business operations. (OECD)

This is why Representation Economics matters.

In the AI era, value will increasingly flow not just to those who own data or deploy models, but to those who can represent the world in forms machines can reliably interpret and act on. And because the cost of doing this is uneven, markets will become asymmetric. One side will be highly visible to machines. The other will be partially visible, intermittently visible, or invisible.

That asymmetry will shape pricing, discovery, trust, underwriting, compliance, labor allocation, insurance, and even which industries can fully participate in the AI economy.

What are asymmetric representation costs?

Asymmetric representation costs are the unequal costs faced by firms, sectors, workers, or regions in making their reality machine-readable.

That may sound abstract, but it is already happening everywhere.

Take lending.

For a salaried employee in the formal economy, the machine-readable trail often already exists: payroll records, tax filings, credit history, bank statements, employer identity, repayment behavior, and verified addresses. For an informal worker with seasonal income, cash-based transactions, fragmented records, and limited digital traces, the same lending decision is much harder and more expensive to support with reliable data.

The two people may be equally creditworthy in real life. But one of them is cheaper for the system to “see.”

That is the asymmetry.

In the AI economy, visibility is not just a technical state. It is an economic condition.

The mistake leaders keep making

Many executives assume that once AI tools become cheap, AI advantage will spread evenly.

That is unlikely.

Model access is becoming cheaper. General-purpose AI is becoming more available. Smaller and lighter models are spreading globally. But the cost of preparing reality for those systems remains deeply uneven. The World Bank’s 2025 AI foundations report makes this clear: AI opportunity may broaden, but firms and countries still need strong foundations in infrastructure, data context, skills, and institutional capacity. Those foundations are not distributed equally. (World Bank)

This means the real moat may shift away from the model and toward the representation layer.

Anyone can increasingly rent intelligence.

Not everyone can afford to structure reality for intelligence.

That single shift changes how we should think about AI strategy.

SENSE, CORE, and DRIVER make this visible

The SENSE–CORE–DRIVER framework is useful here because it shows where the real costs sit.

SENSE: the cost of making reality legible

SENSE is where institutions detect signals, connect them to entities, build state representations, and update those states over time.

This is the most underestimated cost in AI.

Sensors must be installed. Records must be digitized. IDs must be matched. Events must be time-stamped. Schemas must be standardized. Exceptions must be captured. State must be refreshed. Across banking, healthcare, agriculture, public services, and supply chains, the hard part is often not “having data.” It is making reality connected, current, and coherent enough for machines to act on safely.
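The steps in that list can be sketched in a few lines of code. This is only an illustration, not a reference design: the alias table, the `EntityState` class, and the function names are all hypothetical, and real entity resolution is far messier than a dictionary lookup. The sketch shows three things from the paragraph above: matching raw IDs to one entity, time-stamping each update, and checking whether state has gone stale.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical alias table: raw identifiers from different source systems
# mapped to one canonical entity ID.
ALIASES = {"cust-0042": "C42", "j.doe@example.com": "C42", "cust-0077": "C77"}


@dataclass
class EntityState:
    entity_id: str
    # attribute name -> (value, time the value was observed)
    attributes: dict = field(default_factory=dict)

    def last_updated(self):
        """Most recent observation time across all attributes, or None."""
        return max((ts for _, ts in self.attributes.values()), default=None)


def apply_event(states, raw_id, attribute, value, observed_at):
    """Match a raw ID to an entity and refresh that entity's state.

    Returns False when the signal cannot be tied to any known entity.
    """
    entity_id = ALIASES.get(raw_id)
    if entity_id is None:
        return False  # an unresolved signal; it never reaches the state model
    state = states.setdefault(entity_id, EntityState(entity_id))
    current = state.attributes.get(attribute)
    # Keep the newest observation for each attribute.
    if current is None or observed_at >= current[1]:
        state.attributes[attribute] = (value, observed_at)
    return True


def is_stale(state, now, max_age):
    """True when the entity has no observations newer than max_age."""
    last = state.last_updated()
    return last is None or now - last > max_age


now = datetime(2025, 1, 10, tzinfo=timezone.utc)
states = {}
apply_event(states, "cust-0042", "address", "12 Main St", now - timedelta(days=40))
apply_event(states, "j.doe@example.com", "phone", "555-0100", now - timedelta(days=2))
unresolved = not apply_event(states, "walk-in-note", "phone", "555-0199", now)
```

Two source systems end up describing one entity, while the walk-in note is lost because nothing links it to a known ID. That lost signal is the representation cost in miniature.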

That is why sectors with stronger digital infrastructure move faster. The OECD identifies connectivity, data, algorithms, compute, skills, and finance as key enablers for SME AI adoption. The World Economic Forum has made a similar point in health: AI systems become more scalable and trustworthy when diverse data sources are interoperable rather than fragmented. (OECD)

CORE: the illusion of equal intelligence

CORE is where models infer, predict, rank, recommend, and decide.

But CORE can only work with what SENSE makes available.

If one firm feeds an AI system rich, linked, continuously refreshed state representations and another feeds it patchy, stale, contradictory traces, the same model will appear “smarter” in the first setting and “worse” in the second. In many boardrooms this is misread as a model problem. Very often it is a representation problem.

This is why two firms using similar AI tools can produce radically different outcomes. One is not necessarily more intelligent. It may simply be less expensive for the machine to understand.
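A toy example makes the point concrete. The scoring rule below is a made-up stand-in for CORE, with invented field names and weights; it is not any real credit model. What matters is that the rule stays fixed while the input record changes.

```python
def credit_score(record):
    """A fixed toy scoring rule standing in for CORE.

    Fields that are missing from the record contribute nothing,
    which is how many conservative systems treat absent evidence.
    """
    income = record.get("monthly_income")
    on_time = record.get("on_time_repayment_rate")
    months = record.get("months_of_history", 0)

    score = 0.0
    if income is not None:
        score += min(income / 1000.0, 5.0)
    if on_time is not None:
        score += 4.0 * on_time
    score += min(months / 12.0, 1.0)
    return round(score, 2)


# Assumption for illustration: both records describe borrowers with the same
# real-world behavior. Only the second borrower's repayment trail was never captured.
well_represented = {
    "monthly_income": 3000,
    "on_time_repayment_rate": 0.95,
    "months_of_history": 36,
}
poorly_represented = {"monthly_income": 3000}

gap = credit_score(well_represented) - credit_score(poorly_represented)
```

The gap between the two scores comes entirely from what was recorded, not from any difference in the model or in the borrower.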

DRIVER: the cost of acting safely

DRIVER is where institutions delegate authority, verify actions, execute decisions, and create recourse when things go wrong.

This matters because once AI starts acting rather than just advising, representation must become defensible.

A machine can only act confidently when the institution can defend the representation behind the action: identity, evidence, authorization, auditability, reversibility, and recourse. McKinsey’s 2025 survey shows that organizations capturing more value from AI are formalizing governance, redesigning workflows, and mitigating risks rather than treating AI deployment as a purely technical exercise. (McKinsey & Company)
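One way to picture this is a gate that sits in front of every automated action. The sketch below is a simplification under stated assumptions: the six check names come from the paragraph above, but the `ProposedAction` class, the `decide` function, and the "escalate to a human" fallback are hypothetical choices, not a prescribed design.

```python
from dataclasses import dataclass

# The six properties named above, treated here as simple pass/fail checks.
REQUIRED_CHECKS = (
    "identity",
    "evidence",
    "authorization",
    "auditability",
    "reversibility",
    "recourse",
)


@dataclass
class ProposedAction:
    name: str
    checks: dict  # check name -> True if the institution can defend it


def missing_checks(action):
    """List the required checks that are absent or failed for this action."""
    return [c for c in REQUIRED_CHECKS if not action.checks.get(c, False)]


def decide(action):
    """Execute only when every check passes; otherwise hand off to a person."""
    gaps = missing_checks(action)
    if gaps:
        return "escalate_to_human", gaps
    return "execute", []


refund = ProposedAction("issue_refund", {c: True for c in REQUIRED_CHECKS})
loan = ProposedAction(
    "approve_loan",
    {c: True for c in REQUIRED_CHECKS if c != "recourse"},
)

refund_decision = decide(refund)
loan_decision = decide(loan)
```

The refund goes through, while the loan is routed to a person because no recourse path exists. The model's confidence never enters the decision; the defensibility of the representation does.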

In other words, SENSE makes reality legible, CORE makes it computable, and DRIVER makes it actionable.

If SENSE is expensive, CORE looks weaker than it really is. If DRIVER is weak, even good intelligence becomes unsafe.

Five simple examples that make the idea real

- Retail

A large e-commerce platform knows product attributes, seller history, return rates, customer intent, delivery performance, and demand movement. A small offline retailer may have strong customer trust and good products, but very little of that is machine-legible. As a result, sellers on the platform become easier to rank, finance, price, insure, and recommend than the offline retailer.

- Lending

A formal borrower becomes visible through structured records. An informal borrower may remain economically valuable, but computationally expensive to model. The machine does not simply “prefer” the formal borrower. It is cheaper for the institution to understand that borrower.

- Healthcare

A connected health ecosystem can unify history, prescriptions, lab results, and care journeys. A fragmented patient journey across disconnected clinics, labs, pharmacies, and insurers creates representation gaps. The healthcare challenge is real, but so is the representation challenge. Interoperability is not a side issue. It is the cost of making the patient visible as a coherent entity. (World Economic Forum)

- Agriculture

A farm with digitized land records, weather integration, supply chain links, transaction history, sensor inputs, and credit traces becomes easier to underwrite and optimize. A small farmer without those links may be equally capable in reality, but more expensive to represent safely.

- Labor markets

A worker with verified credentials, portfolio traces, project history, references, and skill signals becomes easier for machine-mediated markets to match and trust. Another worker may be just as skilled, but if that capability is poorly represented, they are under-ranked, under-matched, or excluded.

The pattern is the same in every case:

AI does not simply reward quality. It rewards affordable legibility.

Why this will reshape entire industries

The future of AI will not unfold uniformly.

Some sectors have relatively low representation costs. Online retail, digital advertising, card payments, software support, and structured enterprise workflows are already close to machine-readable. Their realities are easier to tag, monitor, update, and test.

Other sectors have much higher representation costs. Informal labor, fragmented healthcare, smallholder agriculture, construction exceptions, care work, local services, field operations, and trust-heavy human interactions are harder to convert cleanly into machine-readable form.

This does not make those sectors less important. It makes them more expensive to formalize for machines.

And that creates a major strategic shift:

The next winners will not always be the firms with the best AI. They may be the firms that lower representation costs for everyone else.

That is where new company categories emerge.

The new firms that may emerge

If this thesis is correct, the AI era will produce a new layer of firms whose core job is not to build intelligence, but to lower the cost of making reality legible.

Representation infrastructure firms

These firms will build identity rails, schemas, event pipelines, interoperability layers, and state synchronization systems that allow markets to “see” people, firms, assets, and events more reliably.

Representation assurance firms

These firms will verify that machine-readable representations are current, auditable, and fit for action.

Representation conversion firms

These firms will help messy, analog, fragmented sectors become legible enough for AI-enabled coordination.

Representation fiduciaries

These institutions will act on behalf of individuals, small businesses, or vulnerable entities to ensure they are not misrepresented, erased, or unfairly simplified.

Representation leasing firms

These firms will allow smaller players to rent machine-readable sector models rather than build their own end-to-end representation stack.

This is one reason Representation Economics is larger than a governance discussion. It is a theory of how value, power, and institutional structure shift once reality itself becomes an expensive input into computation.

The board-level question that matters

Most incumbents are still asking:

How do we use AI?

A better question is:

What is our cost of making reality legible enough for AI to act on safely?

That is a much stronger board question because it forces leaders to examine:

- where their signals come from,

- how many entities are poorly resolved,

- where state is stale,

- where exceptions disappear,

- which workflows lack machine-readable evidence,

- and where action is being delegated on weak representation.
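Several of these questions can be turned into rough numbers. The sketch below assumes a hypothetical record layout (`entity_id`, `last_refreshed`, `has_evidence`) and an arbitrary 30-day freshness threshold; a real audit would need definitions agreed inside the institution. It is meant only to show that "poorly resolved", "stale", and "lacking evidence" are measurable shares rather than impressions.

```python
from datetime import date

# Hypothetical operational records; the field names are illustrative only.
records = [
    {"entity_id": "S1", "last_refreshed": date(2025, 1, 8), "has_evidence": True},
    {"entity_id": None, "last_refreshed": date(2025, 1, 5), "has_evidence": True},
    {"entity_id": "S3", "last_refreshed": date(2024, 10, 1), "has_evidence": False},
    {"entity_id": "S4", "last_refreshed": date(2024, 11, 15), "has_evidence": True},
]


def representation_audit(records, today, max_age_days=30):
    """Return the share of records that are unresolved, stale, or unevidenced."""
    if not records:
        raise ValueError("no records to audit")
    total = len(records)
    unresolved = sum(1 for r in records if r["entity_id"] is None)
    stale = sum(
        1 for r in records if (today - r["last_refreshed"]).days > max_age_days
    )
    no_evidence = sum(1 for r in records if not r["has_evidence"])
    return {
        "unresolved_share": unresolved / total,
        "stale_share": stale / total,
        "no_evidence_share": no_evidence / total,
    }


audit = representation_audit(records, today=date(2025, 1, 10))
```

Tracked over time, shares like these give a board a simple view of whether its representation cost is falling or rising.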

The firms that win will reduce representation costs without destroying nuance.

The firms that lose will usually do one of two things:

- underinvest in representation and remain invisible to machine-mediated markets, or

- oversimplify reality so aggressively that the machine becomes confident for the wrong reasons.

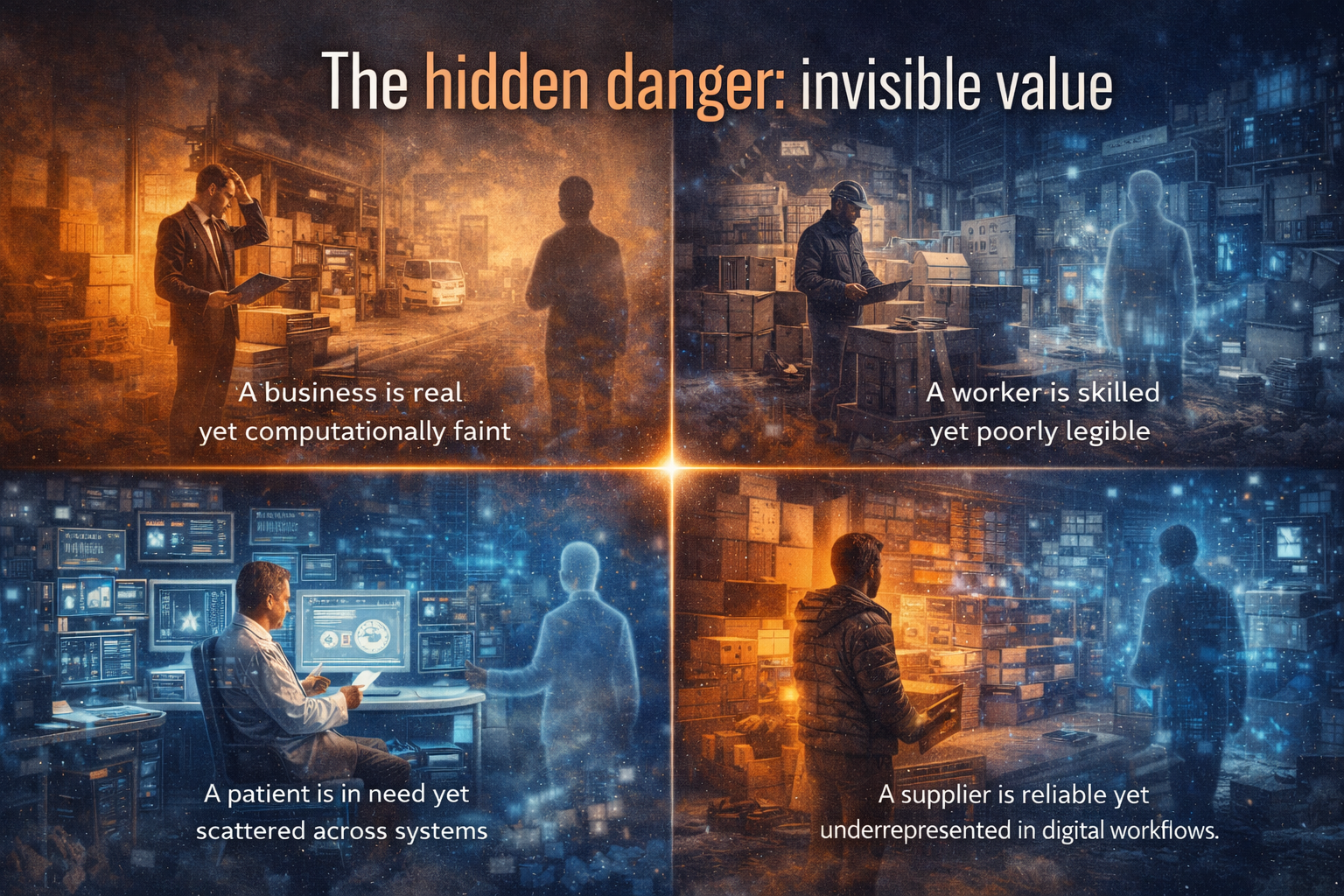

The hidden danger: invisible value

One of the deepest risks in the AI economy is not bad intelligence. It is unseen value.

A business can be economically real yet computationally faint.

A worker can be capable yet poorly legible.

A patient can be in need yet scattered across systems.

A supplier can be reliable yet underrepresented in digital workflows.

When that happens, markets start mistaking representation quality for actual value.

That is how AI can widen concentration even while appearing neutral.

This is why the next debate in AI should not be limited to model safety, model bias, or model performance. Those matter. But they sit downstream of a more basic issue:

Who gets represented well enough to enter machine-mediated markets at all?

Conclusion: the future belongs to institutions that lower the cost of being seen

In the industrial era, advantage often came from scale of production.

In the digital era, advantage often came from scale of software.

In the AI era, advantage will increasingly come from scale of representation—the ability to convert reality into machine-readable form cheaply, continuously, and responsibly.

That is why asymmetric representation costs will redefine markets.

Not because machines are unfair by design.

But because markets built around machine visibility will reward those who are easier to represent.

The institutions that matter most in the next decade will therefore not simply be those with strong CORE intelligence. They will be those that invest in SENSE with discipline and build DRIVER with legitimacy.

Because AI does not act on reality directly.

It acts on what institutions can afford to represent.

And when reality becomes expensive, power shifts to those who can lower that cost—for themselves, for their ecosystems, and eventually for entire industries.

That is the deeper law of Representation Economics.

This article is part of a broader framework called Representation Economics, which explains how AI changes value creation by redefining how reality is seen, modeled, and acted upon.

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- The Representation Utility Stack: Why AI’s Next Competitive Advantage Will Come from Interoperable Reality (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy (raktimsingh.com)

- Why Entire Industries Cannot Use AI Until Reality Becomes Machine-Ready (raktimsingh.com)

Glossary

Representation Economics

A framework for understanding how value in the AI era depends on what institutions can detect, model, govern, and act on.

Asymmetric representation costs

The unequal cost faced by different firms, sectors, or individuals in making their reality machine-readable.

Machine-readable reality

A version of the world that has been structured enough for software and AI systems to identify, interpret, compare, and act on.

Digital legibility

The degree to which a person, process, asset, or event can be clearly understood by digital systems.

SENSE

The layer where signals are detected, connected to entities, modeled as state, and updated over time.

CORE

The reasoning layer where systems infer, predict, recommend, and optimize.

DRIVER

The action layer where institutions authorize, verify, execute, and create recourse for AI-driven actions.

Representation infrastructure

The systems that make people, assets, events, and relationships visible and usable to machine decision systems.

Representation fiduciary

An institution or intermediary that helps ensure an entity is not misrepresented or erased in machine-mediated systems.

Representation cost curve

The effective cost of turning messy, real-world complexity into machine-legible form.

FAQ

What are asymmetric representation costs in AI?

They are the unequal costs faced by different firms, sectors, or individuals in making their reality understandable to AI systems. Some are cheap for machines to see. Others are expensive.

Why does this matter for business strategy?

Because AI value depends not only on model quality but also on whether your operations, customers, suppliers, and workflows are legible enough for machines to reason about safely.

How does this relate to SENSE–CORE–DRIVER?

SENSE captures the cost of making reality legible, CORE transforms that representation into decisions, and DRIVER determines whether those decisions can be executed safely and legitimately.

Why can two firms using similar AI tools get very different results?

Because the same model performs differently depending on the quality, freshness, coherence, and actionability of the representation it receives.

Which industries face the highest representation costs?

Industries with fragmented records, informal workflows, disconnected ecosystems, or high dependence on tacit human judgment tend to have higher representation costs.

What new firms may emerge because of this shift?

Representation infrastructure firms, representation assurance firms, representation conversion firms, representation fiduciaries, and representation leasing firms.

What is the board-level takeaway?

Boards should ask not only how to deploy AI, but what it costs the institution to make reality legible enough for AI to act on safely.

References and further reading

Recent OECD work finds that SME AI adoption remains lower than among larger firms and identifies enabling foundations such as connectivity, data, compute, skills, and finance. (OECD)

The World Bank’s 2025 AI foundations work argues that AI opportunity depends on readiness conditions such as infrastructure, data context, and capability, especially across lower- and middle-income settings. (World Bank)

McKinsey’s 2025 survey shows that organizations creating more value from AI are redesigning workflows, elevating governance, and building operational structure rather than relying on models alone. (McKinsey & Company)

The World Economic Forum’s work on digital health highlights that scalable, trustworthy AI in healthcare depends on strong interconnectivity across diverse data sources and broader system-level alignment. (World Economic Forum)

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.