The Scarcity of Reality: Executive Subheading

As AI becomes cheaper, faster, and more widely available, the real bottleneck is no longer intelligence itself. It is the ability of institutions to create, verify, govern, update, and retire high-trust representations of reality that machines can safely act upon. AI adoption reached 78% of organizations in 2024, up from 55% in 2023, while generative AI investment continued to rise sharply, underscoring that intelligence is becoming more abundant. (Stanford HAI)

Artificial intelligence is entering a new phase. For years, the conversation centered on model power: bigger models, cheaper inference, better reasoning, richer multimodality. Those improvements matter.

But they do not answer the deeper strategic question now facing boards, CEOs, and CIOs: what happens when intelligence becomes abundant, but reality remains messy, fragmented, stale, and hard to trust? Stanford’s 2025 AI Index points to exactly this inflection point: AI is spreading fast across business, and generative AI investment remains strong. The scarcity is shifting. (Stanford HAI)

That shift changes everything.

For decades, the digital economy was shaped by a powerful slogan: data is the new oil. It captured an important truth, but it also led many institutions in the wrong direction. Data, by itself, is not the same as reality that a machine can safely understand, reason over, and act upon.

A company can have millions of records and still not know which supplier is actually at risk, which patient profile is incomplete, which customer identity is duplicated, which asset state is stale, or which automated decision deserves to be challenged. In the AI era, the core problem is no longer access to information alone. The real problem is whether that information has been turned into a high-trust representation of reality.

That is why the next great competition in AI will not be defined only by who has the most powerful models. It will be defined by who can create, maintain, govern, and renew the most trustworthy version of reality over time.

This is the central idea behind Representation Economics: AI does not act on reality directly. It acts on what a system can represent as reality. If that representation is incomplete, ambiguous, outdated, poorly governed, or impossible to contest, then even highly advanced AI will produce fragile outcomes. In that world, the scarce asset is not compute. It is not even intelligence. The scarce asset is high-trust, low-ambiguity reality.

And scarcity is what markets reward.

High-trust representation in the AI economy refers to machine-usable reality that is accurate, current, attributable, authorized, and governable across its lifecycle. It enables AI systems to make reliable decisions, execute actions safely, and remain accountable through verification and recourse mechanisms.

The real bottleneck is not intelligence

Many discussions about AI still assume that better models will solve most enterprise problems. Better reasoning, larger context windows, multimodal systems, and lower-cost inference all help. But they do not remove a more fundamental constraint: the quality of the reality entering the system.

NIST’s AI Risk Management Framework makes this point clearly. It notes that the data used to build or operate an AI system may not be a true or appropriate representation of the context or intended use, and that harmful bias and other data-quality issues can weaken trustworthiness.

The EU AI Act similarly emphasizes data governance, risk management, and lifecycle controls for high-risk AI systems, including requirements around relevance, representativeness, and quality. The OECD AI Principles also emphasize trustworthy AI, including robustness, transparency, accountability, and respect for human rights and democratic values. Together, these are signals of a global shift: the governance conversation is moving from model fascination to representation discipline. (NIST Publications)

This is why so many AI projects disappoint. They do not fail because the model is weak. They fail because the institution is representation-poor.

A bank may have strong fraud models, but if identities are fragmented across products, addresses are outdated, and behavior is interpreted without full context, the system may flag the wrong customer.

A hospital may deploy a sophisticated assistant, but if allergy information is buried in scanned documents and medication history is incomplete, the assistant is reasoning over a partial patient.

A logistics company may use AI to optimize routes, but if warehouse states, local disruptions, and inventory conditions are not updated in time, optimization becomes confident miscoordination.

The model can be excellent in all three cases. The failure begins earlier.

Reality is abundant. Usable reality is not

This is the economic insight that matters most.

Reality, in raw form, is everywhere. Signals are constantly generated by people, machines, documents, workflows, sensors, conversations, approvals, transactions, and exceptions. But only a small share of that reality becomes usable for meaningful institutional action.

To become valuable, reality must be transformed into representation that is identifiable, attributable to the correct entity, current enough for the decision at hand, structured enough to reason over, authorized for use, and governable when something goes wrong.

That combination is rare.

This is why the future AI economy will be shaped by representation scarcity. The most valuable organizations will not simply be the ones with the most data. They will be the ones with the greatest ability to convert messy reality into trusted, machine-usable representation.

This also explains why the next wave of competitive advantage increasingly sits outside the model itself. It sits in the systems that make reality legible, verifiable, contestable, and continuously updated.

Why scarcity has a lifecycle

Scarcity is often discussed as if it were static. It is not.

High-trust representation is not something an organization captures once and stores forever. It is a living asset. It has to be created, checked, governed, challenged, refreshed, and sometimes retired.

That is why the AI economy will be defined not just by representation scarcity, but by the lifecycle of high-trust representation.

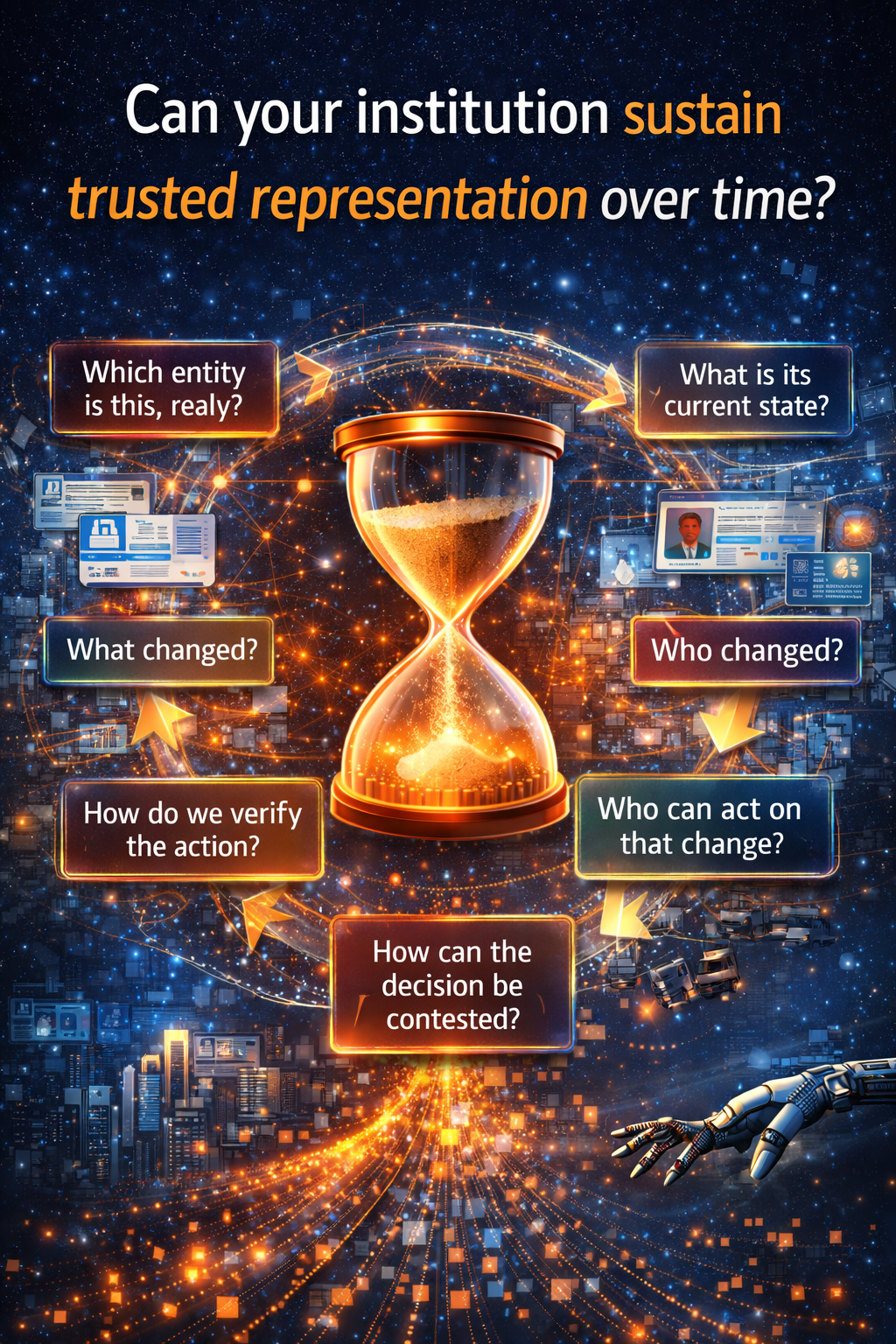

Put simply, the most important question is no longer, “Do you have data?” It is not even, “Do you have AI?” The real question is this:

Can your institution sustain trusted representation over time?

That is a much harder capability to build. But it is also where the deepest value will accumulate.

The lifecycle of high-trust representation

Creation: turning signals into machine-usable reality

The lifecycle begins with creation.

Reality enters a system through signals: transactions, forms, clickstreams, documents, sensor readings, emails, approvals, exceptions, and countless other traces. But a signal is not yet a representation. It becomes one only when it is attached to the right entity, given context, and shaped into a state a system can use.

A customer complaint is just text until it is linked to the right account, order, product, service history, and timing. A crop sensor reading is just a number until it is tied to the right field, weather pattern, irrigation status, and crop condition. A machine alert is just noise until it is linked to the actual asset, operating history, and failure impact.

Creation is where representation begins. And it is where many organizations are weakest.
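
The creation step can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `Signal`, `Representation`, `create_representation`, and the `entity_index` lookup are hypothetical, and real entity resolution is far harder than a dictionary lookup.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Signal:
    """A raw trace (text, a number, an alert) not yet tied to anything."""
    payload: str
    source: str
    observed_at: datetime


@dataclass
class Representation:
    """A signal attached to an entity and given context."""
    entity_id: str
    signal: Signal
    context: dict = field(default_factory=dict)


def create_representation(
    signal: Signal, entity_index: dict, context: dict
) -> Optional[Representation]:
    """Return a Representation, or None if no entity can be resolved."""
    entity_id = entity_index.get(signal.source)
    if entity_id is None:
        return None  # an unresolved signal stays a signal
    return Representation(entity_id=entity_id, signal=signal, context=dict(context))


complaint = Signal(
    payload="Package arrived damaged",
    source="ticket-8841",
    observed_at=datetime(2025, 1, 15, tzinfo=timezone.utc),
)
# Hypothetical lookup from a ticket id to a customer account.
entity_index = {"ticket-8841": "account-102"}

rep = create_representation(
    complaint, entity_index, {"order": "order-77", "product": "lamp"}
)
orphan = create_representation(
    Signal("Unknown alert", "ticket-0000", complaint.observed_at), entity_index, {}
)
```

Here the complaint becomes a representation because it resolves to an account and carries order context, while the second signal is left unresolved rather than being guessed at.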

Verification: deciding whether reality can be trusted

Once a representation exists, the next question is whether it can be trusted.

Is the record accurate? Is it complete enough? Is it recent enough? Has it been tampered with? Does it reflect the intended context? NIST, the OECD, and the EU’s AI governance approach all reinforce the importance of validity, traceability, accountability, and data governance across the AI lifecycle. (NIST Publications)

Without verification, representation may exist, but it cannot be safely acted upon.
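
The questions above (complete enough, recent enough, tampered with) can be expressed as explicit checks. The sketch below is one possible shape, not a reference implementation: `fingerprint`, `verify`, the field names, and the 30-day freshness limit are all assumptions made for illustration.

```python
import hashlib
import json
from datetime import datetime, timedelta, timezone


def fingerprint(record: dict) -> str:
    """Stable SHA-256 digest of a record, used as a simple tamper check."""
    canonical = json.dumps(record, sort_keys=True, default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify(record, required_fields, max_age, now, expected_digest):
    """Return the reasons a record should not be trusted (empty list means pass)."""
    problems = []
    missing = [f for f in required_fields if record.get(f) in (None, "")]
    if missing:
        problems.append(f"incomplete: missing {missing}")
    if now - record["updated_at"] > max_age:
        problems.append("stale: older than allowed for this decision")
    if fingerprint(record) != expected_digest:
        problems.append("tampered: digest mismatch")
    return problems


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
record = {
    "patient_id": "p-1",
    "allergies": "penicillin",
    "updated_at": datetime(2025, 5, 30, tzinfo=timezone.utc),
}
digest = fingerprint(record)  # digest taken when the record was last attested

ok = verify(record, ["patient_id", "allergies"], timedelta(days=30), now, digest)

# The same record with the allergy field blanked out after attestation.
altered = dict(record, allergies="")
bad = verify(altered, ["patient_id", "allergies"], timedelta(days=30), now, digest)
```

Returning reasons rather than a single boolean is a deliberate choice in this sketch: a later reviewer can see why a representation was rejected.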

Authorization: defining who can use representation, and for what

Even a correct representation should not be open to everyone for every purpose.

A healthcare record may be valid, but not every person or system should access it. A financial risk score may be relevant for underwriting, but not for every downstream decision. A machine state may be visible to operations, but not directly executable by an unbounded autonomous system.

Authorization is where institutions decide who gets to use what representation, under which rules, and for which actions. This is where governance becomes operational rather than rhetorical.
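
One way to make "who, what, and for which action" operational is a deny-by-default check keyed on role, representation, and purpose. The sketch below assumes a hypothetical in-memory `POLICY` and invented role names; a production system would more likely delegate this to a dedicated policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """One permitted use: who may use which representation, for which purpose."""
    role: str
    representation: str
    purpose: str


# Hypothetical policy mirroring the examples in the text.
POLICY = {
    Grant("underwriter", "risk_score", "underwriting"),
    Grant("clinician", "health_record", "treatment"),
    Grant("ops_dashboard", "machine_state", "read"),
}


def is_authorized(role, representation, purpose, policy=POLICY):
    """Deny by default; allow only an exact (role, representation, purpose) grant."""
    return Grant(role, representation, purpose) in policy


allowed = is_authorized("underwriter", "risk_score", "underwriting")
wrong_purpose = is_authorized("underwriter", "risk_score", "marketing")
unbounded_agent = is_authorized("autonomous_agent", "machine_state", "execute")
```

The risk score is usable for underwriting but not for marketing, and the machine state is readable by operations but not executable by an agent that holds no grant.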

Reasoning: where AI enters, but not where truth begins

This is the moment most people call “the AI part.”

The model interprets the representation, predicts outcomes, recommends actions, ranks options, summarizes situations, or triggers decisions. But the quality of reasoning is inseparable from the quality of representation beneath it.

A model cannot fully compensate for missing entities, stale state, broken identity resolution, unresolved ambiguity, or hidden exclusions. It can only infer around them. Sometimes that is enough. Sometimes it is dangerous.

Execution: when representation becomes consequence

This is where decisions stop being informational and start becoming real.

A loan is denied. A shipment is rerouted. A claim is escalated. A contract term is changed. A machine is shut down. A customer is flagged. A worker is screened.

Execution is where AI leaves the world of suggestion and enters the world of consequence. That is precisely why trust becomes harder here. The more directly a system acts, the more defensible the representation behind that action must be.

Contestation: allowing reality to be challenged

Reality is rarely final.

People disagree with records. Sensors fail. Context changes. Systems make the wrong connection. Policies are applied too rigidly. Edge cases surface. This is why meaningful review, fallback, explanation, and appeal matter. The White House’s Blueprint for an AI Bill of Rights highlights human alternatives, human consideration, and fallback, including the ability to appeal or contest impacts. The UK ICO similarly emphasizes meaningful human review, with reviewers having the authority, independence, and experience to challenge automated outcomes. (ACLU Data for Justice)

Contestability is not a cosmetic feature. It is part of how institutions remain legitimate when automated systems affect real people.

Updating: keeping representation alive as the world moves

Representations decay.

Customers move. Suppliers change. Medical conditions evolve. Machines age. Regulations shift. Inventories fluctuate. Permissions expire. Relationships reorganize. A representation that was trusted yesterday can become misleading tomorrow.

This is why many AI systems fail in production even when they looked impressive in pilots. The issue is not only model drift. It is representation drift. The world moves, but the institution’s machine-readable reality does not move with it.
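
Representation drift can be made visible by giving each kind of fact a freshness budget and flagging anything confirmed too long ago. The budgets and field names below are illustrative assumptions, not recommended values.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness budgets: how long each kind of fact stays trustworthy.
FRESHNESS = {
    "inventory_level": timedelta(hours=1),
    "supplier_status": timedelta(days=7),
    "customer_address": timedelta(days=365),
}


def drifted_fields(last_confirmed, now, budgets=FRESHNESS):
    """Return, in alphabetical order, fields confirmed longer ago than their budget."""
    return sorted(
        name
        for name, confirmed_at in last_confirmed.items()
        if now - confirmed_at > budgets[name]
    )


now = datetime(2025, 6, 1, 12, 0, tzinfo=timezone.utc)
last_confirmed = {
    "inventory_level": now - timedelta(hours=3),
    "supplier_status": now - timedelta(days=2),
    "customer_address": now - timedelta(days=400),
}
stale = drifted_fields(last_confirmed, now)
```

In this example the inventory level and the customer address have drifted past their budgets, while the supplier status is still within its window.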

Retirement: knowing when reality should stop being treated as current

Some representations should no longer exist, no longer be used, or no longer be treated as active truth.

Records may need to expire, be corrected, be archived, or be deleted. A stale risk flag may need to be removed. An inferred profile may need to be withdrawn. A decision artifact may need to be superseded by new evidence.

Retirement is what prevents institutions from acting forever on yesterday’s truth.

This is the full economic picture: reality becomes valuable not merely when it is captured, but when it survives this lifecycle with enough trust to support action.
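
The eight stages can also be read as a small state machine. The sketch below encodes the stage order from this article; the specific loops (a contested or executed representation moves to updating, an updated one must be re-verified, and retirement is reachable from any stage) are assumptions added for illustration.

```python
from enum import Enum


class Stage(Enum):
    CREATED = "created"
    VERIFIED = "verified"
    AUTHORIZED = "authorized"
    REASONED = "reasoned"
    EXECUTED = "executed"
    CONTESTED = "contested"
    UPDATED = "updated"
    RETIRED = "retired"


# Assumed transitions: stage order follows the article; the loops are illustrative.
NEXT = {
    Stage.CREATED: {Stage.VERIFIED},
    Stage.VERIFIED: {Stage.AUTHORIZED},
    Stage.AUTHORIZED: {Stage.REASONED},
    Stage.REASONED: {Stage.EXECUTED},
    Stage.EXECUTED: {Stage.CONTESTED, Stage.UPDATED},
    Stage.CONTESTED: {Stage.UPDATED},
    Stage.UPDATED: {Stage.VERIFIED},
    Stage.RETIRED: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move to target if allowed; retirement is allowed from any non-retired stage."""
    if target is Stage.RETIRED and current is not Stage.RETIRED:
        return target
    if target in NEXT[current]:
        return target
    raise ValueError(f"cannot move from {current.value} to {target.value}")


stage = Stage.CREATED
for step in (Stage.VERIFIED, Stage.AUTHORIZED, Stage.REASONED, Stage.EXECUTED):
    stage = advance(stage, step)
```

The useful property is what the machine refuses: a representation cannot jump from creation straight to execution without passing through verification and authorization.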

Why this changes strategy

Once leaders understand this lifecycle, the strategy conversation changes.

The old AI question was, “How do we deploy smarter models?”

The new AI question is, “How much of our reality can be trusted enough to move through the full lifecycle of institutional action?”

That is a very different board conversation.

It shifts attention from AI as a tool to AI as an institutional capability. It changes what firms invest in. It changes which new categories of companies emerge. It changes which incumbents thrive and which ones become invisible.

In my SENSE–CORE–DRIVER framework, this is exactly why durable competitive advantage does not come from CORE alone. The framework defines three layers: SENSE is the legibility layer where reality becomes machine-readable; CORE is the cognition layer; DRIVER is the legitimacy layer that governs action, identity, verification, execution, and recourse. My related article on Decision Scale reinforces the same institutional shift by arguing that advantage is moving from labor scale to governed decision scale. (raktimsingh.com)

Most organizations are racing to strengthen CORE. But the institutions that will truly win the AI era will be the ones that build stronger SENSE and stronger DRIVER. They will see better, represent better, govern better, and recover better when things go wrong.

That is what makes high-trust representation so economically powerful. It compounds.

The new winners in the AI economy

The winners of the next decade will not simply be those who can generate the most intelligence. They will be those who can reduce ambiguity in reality without destroying trust.

They will build systems that can answer questions such as:

- Which entity is this, really?

- What is its current state?

- What changed?

- Who is allowed to act on that change?

- How do we verify the action?

- How can the decision be contested?

- How do we update or retire the representation afterward?

This applies across sectors. In finance, it shapes underwriting quality, fraud resilience, and recourse. In healthcare, it affects diagnosis support, triage, consent, and continuity of care. In supply chains, it affects provenance, resilience, and coordination. In government, it influences entitlements, accountability, and public trust.

In every case, the strategic issue is the same: can the institution sustain a trusted, machine-usable version of reality?

That is why the future AI economy will be defined less by model abundance and more by representation scarcity.

Conclusion: a new law of value creation

We are entering an era in which intelligence is no longer rare enough to be the sole source of advantage.

As models become more accessible, the center of value creation moves elsewhere. It moves to the organizations that can make reality legible, trusted, governed, contestable, and continuously renewable.

That is the deeper meaning of Representation Economics.

The scarce asset in the AI era is not information in the abstract. It is the small, valuable portion of reality that has successfully passed through the lifecycle of creation, verification, authorization, reasoning, execution, contestation, updating, and retirement.

That is the reality institutions can act on with confidence.

That is the reality markets will reward.

And that is why the AI economy will not ultimately be won by those who build the smartest systems alone. It will be won by those who can sustain the most trustworthy version of reality over time.

Because in the end, intelligence is becoming abundant.

But reality that machines can safely trust, and institutions can responsibly act upon, remains scarce.

The next winners will not be those with the smartest models alone.

They will be those who can build, verify, govern, and renew the most trusted version of reality.

FAQ

What is high-trust representation in AI?

High-trust representation is machine-usable reality that is sufficiently accurate, current, attributable, structured, authorized, and governable for meaningful institutional action.

Why is reality becoming scarce in the AI economy?

Raw signals are abundant, but only a small share becomes usable for AI once institutions account for trust, context, verification, permissions, contestability, and updates.

Why do AI systems fail even when the model is strong?

They often fail because the system is reasoning over incomplete, ambiguous, stale, or poorly governed representations of reality rather than over trustworthy institutional truth. (NIST Publications)

What is the lifecycle of high-trust representation?

It includes creation, verification, authorization, reasoning, execution, contestation, updating, and retirement.

How does this relate to AI governance?

Global frameworks from NIST, the OECD, the EU, the White House, and the ICO all point toward trust, traceability, human review, data governance, and accountability across the AI lifecycle. (NIST Publications)

How does SENSE–CORE–DRIVER connect to this article?

SENSE explains how reality becomes machine-legible, CORE explains how systems reason over that representation, and DRIVER explains how action is governed, verified, executed, and corrected. (raktimsingh.com)

Why should boards care about representation scarcity?

Because as AI scales, the cost of acting on weak representation rises. Poor representation creates decision errors, compliance exposure, reputational risk, and irreversibility costs.

What is Representation Economics?

A framework explaining how value in the AI economy comes from building and sustaining high-quality representations of reality.

Glossary

Representation Economics

A framework for understanding value creation in the AI era based on how well institutions represent reality, reason over it, and act on it under governance.

High-Trust Representation

A form of machine-usable reality that is sufficiently accurate, current, attributable, authorized, and contestable for safe action.

Representation Scarcity

The idea that trustworthy, low-ambiguity, machine-usable reality is much rarer than raw data or raw signals.

Representation Lifecycle

The sequence through which reality is created, verified, authorized, reasoned over, executed, contested, updated, and retired.

SENSE

The legibility layer in which signals are attached to entities, shaped into state, and updated over time. (raktimsingh.com)

CORE

The cognition layer in which systems interpret context, optimize decisions, realize action, and learn through feedback. (raktimsingh.com)

DRIVER

The legitimacy layer in which delegated actions are bounded by identity, verification, execution rules, and recourse. (raktimsingh.com)

Representation Drift

The gap that emerges when real-world conditions change faster than an institution’s machine-readable representation of reality.

Contestability

The ability to challenge, appeal, or review automated decisions and the representations behind them. (ACLU Data for Justice)

References and further reading

- Stanford HAI, 2025 AI Index Report and Economy section, on AI adoption and investment trends. (Stanford HAI)

- NIST, AI Risk Management Framework (AI RMF 1.0), on representation, context, and trustworthiness risks. (NIST)

- European Commission, AI Act overview, on the EU’s risk-based legal framework for AI. (Digital Strategy)

- OECD, AI Principles, on trustworthy AI, transparency, accountability, and robustness. (OECD AI)

- White House OSTP, Blueprint for an AI Bill of Rights, on human alternatives, fallback, and contestability. (ACLU Data for Justice)

- UK ICO, Human review guidance, on meaningful human review in the AI lifecycle. (ICO)

- Raktim Singh, The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture. (raktimsingh.com)

- Raktim Singh, Decision Scale: The New Competitive Advantage in AI. (raktimsingh.com)

- Raktim Singh, The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy. (raktimsingh.com)

- Emerging Technology Solutions | Infosys Topaz Fabric: How AI Is Quietly Changing the Way Enterprise Services Are Delivered

- Emerging Technology Solutions | What Is Infosys Topaz Fabric? The Missing Layer for Scalable Enterprise AI

- Emerging Technology Solutions | Infosys Topaz Fabric: Enterprise AI Infrastructure for Scalable, Governed, and Cost-Aware AI Exec

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fragility and Exclusion: The Hidden Fault Line That Will Break the AI Economy – Raktim Singh

- Representation Drift & Labor: Why AI Systems Fail When Reality Moves Faster Than Machines – Raktim Singh

- Representation Monopolies: Why the AI Economy Will Be Controlled by Those Who Define Reality – Raktim Singh

- Representation Forensics: The Missing Layer of AI—Why the Future Will Be Decided by What Systems Thought Reality Was – Raktim Singh

- What Is the Representation Economy? (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- Firms Won’t Be Defined by Employees. They Will Be Defined by Delegation – Raktim Singh

- The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy – Raktim Singh

- The Representation Attack Surface: Why AI’s Biggest Threat Is Reality Hacking, Not Model Hacking – Raktim Singh

- The Chief Representation Officer: Why Institutions Collapse When Machine-Readable Reality Falls Behind – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series, by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.