The Human–Agent Ratio

The next great productivity metric in enterprise technology is not about software adoption or model accuracy—it is about the Human–Agent Ratio.

The Human–Agent Ratio captures how many AI agents an organization can deploy, supervise, and govern per human without losing control, trust, or economic viability.

In the last two years, most enterprises measured “AI progress” the same way they measured software progress: how many tools were deployed and how many teams adopted them.

That era is ending.

A new reality is taking over: AI is no longer only answering questions. It is starting to take actions—creating tickets, changing records, drafting customer responses, triggering workflows, running checks, initiating approvals, and coordinating across systems.

When AI can act, productivity is no longer just “people + software.” It becomes people + agents.

This is why a new metric is entering global executive discussions: the Human–Agent Ratio—the balance between digital labor (agents) and human judgment required to unlock productivity without creating operational chaos. Microsoft’s enterprise narrative has explicitly used this phrase—“human-agent ratio”—as a management lens for the future of work. (LinkedIn)

This article explains the Human–Agent Ratio in simple language, shows practical examples, and lays out the enterprise stack required to make that ratio safe, reliable, auditable, and economically sustainable—across North America, Europe, the Middle East, APAC, and fast-scaling markets like India where agentic adoption is accelerating through Global Capability Centers (GCCs). (ETGCCWorld.com)

What is the Human–Agent Ratio?

As AI systems move from answering questions to taking actions, enterprise productivity is being redefined by a single emerging metric: the Human–Agent Ratio.

Think of it like this:

- In the old world, a manager supervised people.

- In the new world, a manager may supervise people + AI agents.

- The Human–Agent Ratio captures how much “agent workforce” your organization can safely absorb per human—for a given team, process, function, and risk profile.

Different organizations will define it slightly differently. Some will measure agents per employee. Others will measure agents per workflow. Some will define it as how many agents a person can effectively oversee.
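To see why the definition has to be fixed before ratios are compared, here is a minimal Python sketch that computes the three variants for one hypothetical support team. The `TeamSnapshot` fields and the team's numbers are illustrative assumptions, not data from any real organization.

```python
from dataclasses import dataclass

@dataclass
class TeamSnapshot:
    # Hypothetical fields; each organization decides what it counts.
    employees: int    # humans on the team
    agents: int       # agents deployed for the team
    workflows: int    # workflows the agents take part in
    supervisors: int  # humans who actively oversee agents

def human_agent_ratios(t: TeamSnapshot) -> dict:
    """Three common framings of the same ratio, computed side by side."""
    return {
        "agents_per_employee": t.agents / t.employees,
        "agents_per_workflow": t.agents / t.workflows,
        "agents_per_supervisor": t.agents / t.supervisors,
    }

support = TeamSnapshot(employees=10, agents=40, workflows=8, supervisors=4)
print(human_agent_ratios(support))
# {'agents_per_employee': 4.0, 'agents_per_workflow': 5.0, 'agents_per_supervisor': 10.0}
```

The same team reads as 4, 5, or 10 depending on the framing, so a ratio is only meaningful when the denominator is stated alongside it.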

The most important question CIOs will soon face is no longer ‘Which AI model?’ but ‘What is our Human–Agent Ratio?’

The essence is the same: AI maturity shifts from tool adoption to agent operational capacity. (LinkedIn)

Why CIOs (and boards) will care

Because the Human–Agent Ratio becomes a proxy for four executive-grade outcomes:

- Speed: how many workflows move forward without waiting for human bandwidth

- Scale: how much work runs continuously, across time zones and business cycles

- Cost: how much execution is done by digital labor without cost explosions

- Risk: how much autonomy is operating inside your systems—and whether it’s controlled (The Guardian)

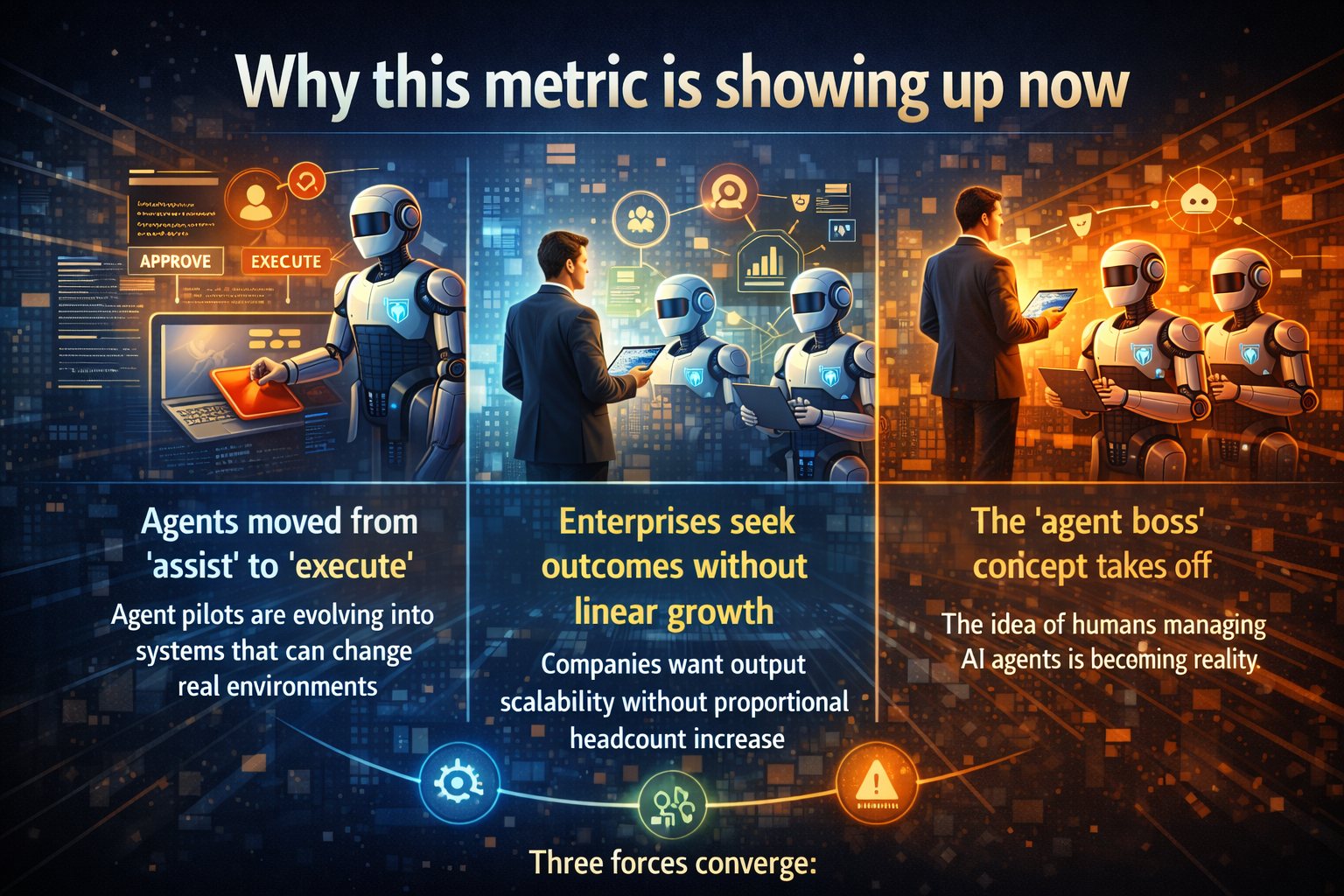

Why this metric is showing up now

Three forces converged.

1) Agents are moving from “assist” to “execute”

Enterprises are watching pilots evolve into agents with write-access—agents that can change real systems, not just suggest text.

That shift changes everything. When an agent can update records, trigger workflows, or initiate actions, the hardest problem becomes operability: controls, traceability, and incident response.

This is why governance topics like “agent oversight” and “kill switches” keep surfacing in enterprise conversations around agentic AI. (The Economic Times)

2) Enterprises want outcomes without linear headcount growth

Every executive team is asking a version of the same question:

Can we grow output without growing headcount at the same rate?

Some companies are already speaking publicly about scaling output with large fleets of “digital agents.” A recent example: LTIMindtree’s CEO has discussed incremental revenue associated with deploying a large number of digital agents alongside human teams. (The Economic Times)

3) The “agent boss” idea is going mainstream

A widely discussed narrative is that many employees will become managers of AI agents—delegating tasks, reviewing outputs, setting boundaries, and owning results. (The Guardian)

The implication is subtle—but decisive:

In the coming enterprise model, productivity won’t be measured by “AI usage.”

It will be measured by how effectively humans and agents work together, under control.

Every major technology shift creates a new management metric—and in the age of autonomous AI, that metric is the Human–Agent Ratio.

A simple way to understand the Human–Agent Ratio

Imagine three stages.

Stage 1: 1 human : 1 agent (early stage)

A support engineer uses one agent to draft responses. The human still verifies facts and does the final work.

Outcome: modest acceleration, limited risk.

Stage 2: 1 human : 5 agents (scale stage)

The same engineer now supervises multiple specialized agents:

- one drafts responses,

- one summarizes history,

- one checks policy,

- one proposes next-best action,

- one monitors operational signals.

The human’s job shifts from typing to supervising decisions.

Outcome: higher throughput—if guardrails exist.

Stage 3: 1 human : 20+ agents (industrial stage)

Now you have fleets: agents running workflows 24×7, handling repetitive cases, escalating exceptions. Humans become controllers of outcomes, not doers of tasks.

Outcome: major productivity gains, if (and only if) autonomy is operable.

This is where reality shows up:

Without the right stack, your ratio doesn’t increase.

It collapses.

Enterprises do not fail at AI because models are weak; they fail because the Human–Agent Ratio is unmanaged.

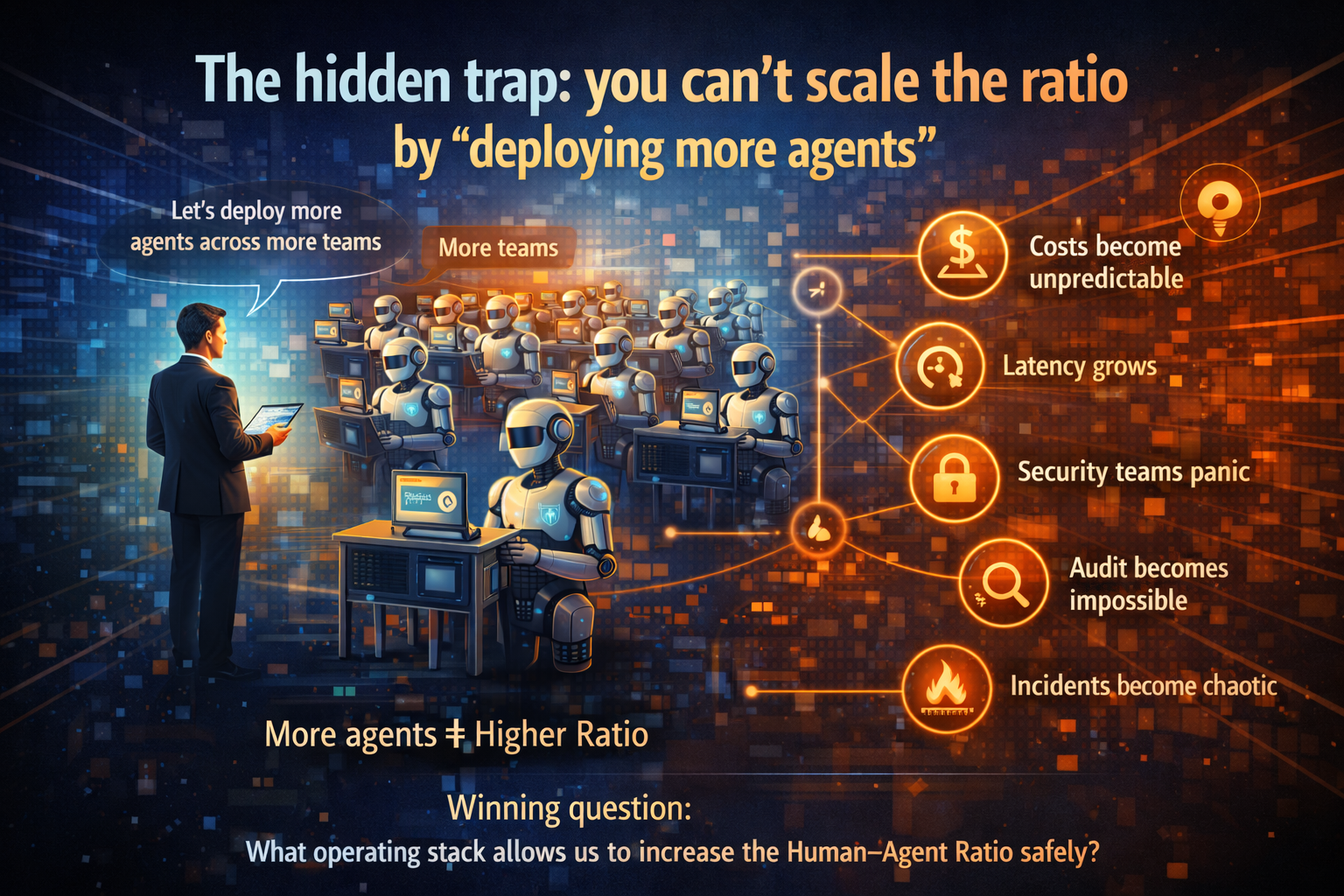

The hidden trap: you can’t scale the ratio by “deploying more agents”

Most enterprises try this first:

“Let’s deploy more agents across more teams.”

Then reality hits:

- Costs become unpredictable

- Latency grows

- Security teams panic

- Audit becomes impossible

- Incidents become chaotic

- Business trust declines

This is why the Human–Agent Ratio is not just a productivity metric.

It is a governance and operability metric.

So the winning question becomes:

What operating stack allows us to increase the Human–Agent Ratio safely?

The future of enterprise productivity will not be measured in licenses or headcount, but in the Human–Agent Ratio.

The Stack Required to Make the Human–Agent Ratio Safe

Below is a practical, enterprise-safe stack model—no math, no buzzword overload, just the controls that let agentic systems scale.

1) Agent Identity and Access: “Who is acting?”

If an agent can update records, approve requests, trigger workflows, or access sensitive data, you must answer:

- Does the agent have an identity (like a service account)?

- What permissions does it have?

- Can permissions be restricted by workflow, data type, region, and risk tier?

Without agent identity, enterprises fall into identity flattening:

- everything runs under shared credentials,

- attribution becomes impossible,

- revocation becomes risky,

- compliance becomes fragile.

Simple example:

An onboarding agent updates vendor records. If it has broad permissions, one prompt injection or tool misuse can expose data or make changes that take days to unwind. With least privilege, the agent can only touch the specific workflow objects it is authorized to handle.
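A minimal Python sketch of least privilege for the onboarding agent, assuming each agent gets its own identity with an explicit allow-list of scopes (action, object type, region). `Scope`, `AgentIdentity`, `is_authorized`, and the agent and team names are hypothetical illustrations, not any identity product's API.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Scope:
    action: str       # e.g. "update"
    object_type: str  # e.g. "vendor_record"
    region: str       # e.g. "EU"

@dataclass
class AgentIdentity:
    # Hypothetical per-agent identity, comparable to a service account.
    agent_id: str
    owner: str                                  # accountable human or team
    scopes: frozenset = field(default_factory=frozenset)
    revoked: bool = False                       # revocation affects only this agent

def is_authorized(agent: AgentIdentity, action: str, object_type: str, region: str) -> bool:
    """Deny by default: allow only an exact scope match on a non-revoked identity."""
    if agent.revoked:
        return False
    return Scope(action, object_type, region) in agent.scopes

onboarding_agent = AgentIdentity(
    agent_id="agent-onboarding-01",
    owner="vendor-ops-team",
    scopes=frozenset({Scope("read", "vendor_record", "EU"),
                      Scope("update", "vendor_record", "EU")}),
)

print(is_authorized(onboarding_agent, "update", "vendor_record", "EU"))   # True
print(is_authorized(onboarding_agent, "update", "payment_record", "EU"))  # False
print(is_authorized(onboarding_agent, "update", "vendor_record", "US"))   # False
```

Because every action is checked against this agent's own identity, attribution and revocation stay per agent instead of collapsing into shared credentials.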

2) Policy Guardrails: “What is the agent allowed to do?”

Enterprises don’t fail because agents can’t write.

They fail because agents act outside policy.

Guardrails must enforce:

- allowed actions,

- forbidden actions,

- approval requirements,

- escalation rules,

- data handling and retention rules.

And these guardrails must exist outside the agent’s own reasoning—so the agent cannot “talk itself” into bypassing them. Security-oriented discussions increasingly emphasize kill switches/circuit breakers and robust constraints for autonomous behaviors. (Tredence)

Simple example:

A finance agent can draft a payment recommendation, but it cannot release payments. It must escalate to human approval for any action that crosses a threshold (amount, risk tier, unusual pattern).
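The key design point is that the agent only *proposes* an action and a separate policy function decides. The Python sketch below assumes that split; the action names and the 10,000 threshold are hypothetical values standing in for whatever the finance policy owner actually sets.

```python
from dataclasses import dataclass
from enum import Enum

class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"

@dataclass
class ProposedAction:
    agent_id: str
    action: str
    amount: float = 0.0
    risk_tier: str = "low"
    unusual_pattern: bool = False

# Illustrative policy values (assumptions, not recommendations).
FORBIDDEN_ACTIONS = {"release_payment"}
ALLOWED_ACTIONS = {"draft_payment_recommendation"}
APPROVAL_AMOUNT_THRESHOLD = 10_000.0

def evaluate(proposal: ProposedAction) -> Decision:
    """Runs outside the agent's reasoning, so the agent cannot argue its way past it."""
    if proposal.action in FORBIDDEN_ACTIONS:
        return Decision.DENY
    if proposal.action not in ALLOWED_ACTIONS:
        return Decision.DENY  # anything not explicitly allowed is denied
    if (proposal.amount > APPROVAL_AMOUNT_THRESHOLD
            or proposal.risk_tier == "high"
            or proposal.unusual_pattern):
        return Decision.REQUIRE_APPROVAL  # escalate to a human approver
    return Decision.ALLOW
```

For example, a `release_payment` proposal is denied regardless of amount, while a 25,000 draft recommendation is routed to human approval.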

3) Observability and Audit Trails: “What happened and why?”

If you can’t answer:

- What did the agent see?

- What tools did it call?

- What did it change?

- What policy checks were applied?

- What was the final decision path?

…you can’t operate it in production.

This matters globally, but it becomes existential in heavily regulated sectors (banking, insurance, healthcare, public sector) across the EU, UK, US, Middle East, and India—where auditability and traceability are foundational.

Simple example:

An agent rejects a customer claim. The business needs a defensible narrative—inputs, rules, tool calls, approvals—so the decision can be reviewed, corrected, and explained.
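One way to capture that defensible narrative is a structured, append-only event log per agent run, recording what the agent saw, which tools it called, which policy checks ran, and what it decided. The Python sketch below assumes JSON Lines output; the run ID, policy ID, and field names are hypothetical.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone

@dataclass
class AuditEvent:
    step: str    # "input", "tool_call", "policy_check", "change", or "decision"
    detail: dict
    at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

@dataclass
class AuditTrail:
    run_id: str
    agent_id: str
    events: list = field(default_factory=list)

    def log(self, step: str, **detail) -> None:
        self.events.append(AuditEvent(step, detail))

    def to_jsonl(self) -> str:
        """One JSON object per line, suitable for an append-only log store."""
        return "\n".join(
            json.dumps({"run_id": self.run_id, "agent_id": self.agent_id, **asdict(e)})
            for e in self.events
        )

    def decision_path(self) -> list:
        return [e.step for e in self.events]

# Hypothetical claim-rejection run.
trail = AuditTrail(run_id="claim-7781", agent_id="claims-agent-02")
trail.log("input", documents=["claim_form.pdf"], customer_tier="standard")
trail.log("tool_call", tool="policy_lookup", args={"policy_id": "P-123"},
          result="coverage_excludes_flood")
trail.log("policy_check", rule="exclusion_applies", outcome="pass")
trail.log("decision", outcome="reject", requires_human_review=True)
print(trail.decision_path())  # ['input', 'tool_call', 'policy_check', 'decision']
```

A reviewer can replay the run step by step from these records, which is what makes the rejection reviewable, correctable, and explainable.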

4) AI FinOps: “Unlimited tokens is not a business model”

As agent fleets grow, costs can explode due to:

- retries,

- long contexts,

- parallel tool calls,

- multi-agent delegation loops.

If you don’t govern cost like a first-class control, the Human–Agent Ratio will hit a ceiling—because finance will force a shutdown.

A production stack needs:

- budgets per agent and per workflow,

- cost per business outcome (not per model call),

- anomaly alerts,

- throttling and graceful degradation.

Simple example:

A policy-check agent shouldn’t use the most expensive model for routine cases. It should use a cheaper specialized model for 80% of checks and escalate to a frontier model only when ambiguity is high.
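A minimal Python sketch of that routing combined with a per-workflow budget. The model names, per-call prices, and the 0.7 ambiguity cut-off are hypothetical placeholders; real costs depend on the provider and contract.

```python
from dataclasses import dataclass

# Hypothetical per-call costs in USD.
MODEL_COST = {"specialized-small": 0.002, "frontier-large": 0.06}
AMBIGUITY_THRESHOLD = 0.7  # illustrative cut-off for escalating to the frontier model

@dataclass
class WorkflowBudget:
    workflow: str
    limit_usd: float
    spent_usd: float = 0.0

    def can_afford(self, cost: float) -> bool:
        return self.spent_usd + cost <= self.limit_usd

    def charge(self, cost: float) -> None:
        self.spent_usd += cost

def route_policy_check(ambiguity: float, budget: WorkflowBudget) -> str:
    """Use the cheap model for routine checks; escalate only when ambiguity is high."""
    model = "frontier-large" if ambiguity >= AMBIGUITY_THRESHOLD else "specialized-small"
    cost = MODEL_COST[model]
    if not budget.can_afford(cost):
        # Graceful degradation: hand the case to a human queue instead of overspending.
        return "human_queue"
    budget.charge(cost)
    return model

budget = WorkflowBudget("vendor-policy-checks", limit_usd=0.10)
print(route_policy_check(0.2, budget))  # specialized-small
print(route_policy_check(0.9, budget))  # frontier-large
print(route_policy_check(0.9, budget))  # human_queue (frontier call would exceed budget)
```

Sending over-budget cases to a human queue, rather than silently downgrading a hard case to the cheap model, is one design choice among several; throttling or deferring the work are alternatives.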

5) Rollback and Kill Switch: “Autonomy must be reversible”

When agents take actions, incidents are inevitable.

The only question is whether incidents are:

- contained, reversible, and learnable, or

- chaotic, expensive, and reputation-damaging.

“Kill switch / circuit breaker” controls are commonly recommended in security and governance discussions around autonomous agent behavior. (Tredence)

Simple example:

An agent starts generating duplicate service tickets due to a tool outage. A kill switch disables tool access immediately and routes cases to a safe fallback until stability returns.
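The duplicate-ticket scenario maps onto the classic circuit-breaker pattern plus a manual kill switch. The Python sketch below assumes the ticketing tool raises an exception during the outage; `create_ticket`, the thresholds, and the fallback are hypothetical, and a production version would also need thread safety and persistence.

```python
import time
from typing import Callable, Optional

class CircuitBreaker:
    """Blocks an agent's tool access after repeated failures, or on a manual kill.

    Closed: calls pass through. Open: calls go to the fallback until the
    cool-down ends; the next call is then a trial that closes the breaker on
    success or re-opens it on failure.
    """

    def __init__(self, max_failures: int = 3, cooldown_s: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None
        self.killed = False  # manual kill switch, cleared only by reset()

    def kill(self) -> None:
        self.killed = True

    def reset(self) -> None:
        self.killed = False
        self.failures = 0
        self.opened_at = None

    def allow_call(self) -> bool:
        if self.killed:
            return False
        if self.opened_at is None:
            return True
        return self.clock() - self.opened_at >= self.cooldown_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()

def create_ticket(breaker, tool, fallback, payload):
    """Call the ticketing tool through the breaker; use the fallback when blocked or failing."""
    if not breaker.allow_call():
        return fallback(payload)
    try:
        result = tool(payload)
    except Exception:
        breaker.record_failure()
        return fallback(payload)
    breaker.record_success()
    return result
```

Injecting the clock keeps the breaker testable; the fallback could be a human queue or a read-only mode, depending on the workflow.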

6) Human-by-Exception Workflows: “Humans handle the edge cases”

To scale the Human–Agent Ratio, humans cannot be in every loop. They must be in the right loops.

A practical operating model is:

- agents handle standard cases,

- humans approve exceptions, high-risk actions, and escalations.

This is the real shape of scalable autonomy: automation for the routine, human judgment for the edge.

Simple example:

In IT operations, an agent handles routine password resets and knowledge requests. Humans focus on high-risk incidents and root-cause analysis.
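Human-by-exception routing can be expressed as a small, explicit rule set. The Python sketch below assumes a hypothetical severity scale (1 = critical to 4 = low), an allow-list of routine case types, and a confidence score supplied by the agent; all thresholds are illustrative.

```python
from dataclasses import dataclass

ROUTINE_TYPES = {"password_reset", "knowledge_request"}  # illustrative allow-list
MIN_CONFIDENCE = 0.85                                    # hypothetical threshold

@dataclass
class Case:
    case_id: str
    case_type: str
    severity: int            # 1 = critical ... 4 = low (hypothetical scale)
    agent_confidence: float  # agent's own confidence estimate, 0.0 to 1.0

def route(case: Case) -> str:
    """Agents take standard cases; humans get high-risk, non-routine, or low-confidence work."""
    if case.severity <= 2:
        return "human:incident_commander"
    if case.case_type not in ROUTINE_TYPES:
        return "human:service_desk"
    if case.agent_confidence < MIN_CONFIDENCE:
        return "human:service_desk"
    return "agent"

print(route(Case("c1", "password_reset", severity=4, agent_confidence=0.95)))  # agent
print(route(Case("c2", "password_reset", severity=1, agent_confidence=0.99)))  # human:incident_commander
```

Ordering the checks so that risk is evaluated first means a high-severity incident reaches a human even when the agent is confident.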

7) A Composable Architecture: “Open, evolving, not locked to one model”

The Human–Agent Ratio will be limited if your system is brittle:

- tied to one model,

- tied to one vendor,

- hard-coded to one workflow.

Enterprises need a composable layer that abstracts:

- models (frontier + specialized),

- prompts,

- tools,

- policy enforcement,

- telemetry and logging,

- deployment and rollback patterns.

This is how you avoid rebuilding every time the ecosystem changes—which it will.
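A minimal Python sketch of the model-abstraction part of such a layer, assuming workflows request a *capability* ("routine", "complex") rather than a vendor. The stub model classes and `ModelRegistry` are hypothetical stand-ins; a real client would wrap a provider SDK behind the same `complete` interface.

```python
from typing import Protocol

class ModelClient(Protocol):
    name: str
    def complete(self, prompt: str) -> str: ...

class StubSpecializedModel:
    # Stand-in for a cheaper specialized model (hypothetical).
    name = "specialized-stub"
    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt[:40]}"

class StubFrontierModel:
    # Stand-in for a frontier model (hypothetical).
    name = "frontier-stub"
    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt[:40]}"

class ModelRegistry:
    """Maps capabilities to whichever model currently serves them."""
    def __init__(self) -> None:
        self._by_capability: dict = {}
    def register(self, capability: str, client: ModelClient) -> None:
        self._by_capability[capability] = client
    def get(self, capability: str) -> ModelClient:
        return self._by_capability[capability]

def summarize_ticket(registry: ModelRegistry, text: str) -> str:
    # Workflow code depends only on the interface, so swapping models is a registry change.
    return registry.get("routine").complete(f"Summarize: {text}")

registry = ModelRegistry()
registry.register("routine", StubSpecializedModel())
print(summarize_ticket(registry, "VPN down for EU users"))
registry.register("routine", StubFrontierModel())  # swap without touching workflow code
print(summarize_ticket(registry, "VPN down for EU users"))
```

The same pattern extends to tools, policy engines, and telemetry sinks: each sits behind an interface so that it can be replaced as the ecosystem changes.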

In the age of autonomous AI, the most dangerous number an enterprise doesn’t track is its Human–Agent Ratio.

Three real-world scenarios that make this intuitive

Scenario A: Customer support without chaos

- Low ratio: one human uses one agent to draft replies.

- Higher ratio: one human supervises multiple agents: summarizer, policy checker, response drafter, sentiment monitor.

- Safe scaling requires: audit trails, policy guardrails, escalation rules.

Scenario B: IT ops and incident response

Agents detect anomalies, propose fixes, and execute low-risk remediations. Humans step in on severe incidents and approvals.

Safe scaling requires: kill switch, rollback, identity controls, observability.

Scenario C: Onboarding in regulated industries

Agents read documents, extract fields, validate completeness, create workflow tasks. Humans approve exceptions and high-risk decisions.

Safe scaling requires: permissions, policy checks, traceable decision history.

What leaders should measure (simple and practical)

If you want to manage the Human–Agent Ratio as a CIO, track what actually matters:

- Autonomy coverage: what share of workflows agents can complete end-to-end

- Exception rate: how often humans intervene

- Controls effectiveness: how often guardrails block unsafe actions

- Time-to-contain incidents: how fast you can stop, rollback, and recover

- Cost per workflow outcome: cost per resolved ticket, onboarded vendor, processed request

These metrics reward operability, not hype.
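A Python sketch of how these five metrics could be computed from run and incident records. The record fields are assumptions, and "controls effectiveness" is interpreted here as blocked unsafe actions divided by all unsafe actions (blocked plus those that slipped through and were found later), one reasonable reading among several.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional

@dataclass
class Run:
    completed_by_agent: bool  # finished end-to-end with no human step
    human_intervened: bool
    cost_usd: float
    outcome_achieved: bool    # e.g. ticket resolved, vendor onboarded

@dataclass
class Incident:
    detected_min: float   # minutes since some reference time
    contained_min: float

def _safe_div(num: float, den: float) -> Optional[float]:
    return num / den if den else None

def ratio_metrics(runs, incidents, guardrail_blocks, unsafe_escapes) -> dict:
    outcomes = sum(r.outcome_achieved for r in runs)
    return {
        "autonomy_coverage": _safe_div(sum(r.completed_by_agent for r in runs), len(runs)),
        "exception_rate": _safe_div(sum(r.human_intervened for r in runs), len(runs)),
        "controls_effectiveness": _safe_div(guardrail_blocks, guardrail_blocks + unsafe_escapes),
        "mean_time_to_contain_min": (
            mean(i.contained_min - i.detected_min for i in incidents) if incidents else None
        ),
        "cost_per_outcome_usd": _safe_div(sum(r.cost_usd for r in runs), outcomes),
    }
```

Dividing total spend by successful outcomes, rather than by model calls, is what ties cost back to resolved tickets or onboarded vendors.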

Conclusion: The real executive takeaway

The Human–Agent Ratio will become a defining productivity metric because it describes what leaders are truly trying to do:

Scale output without scaling chaos.

Enterprises that treat agents like “tools” will remain stuck at low ratios. Enterprises that build the operating stack for safe autonomy—identity, guardrails, observability, cost control, rollback, and human-by-exception workflows—will be able to raise the ratio confidently.

In the next era of enterprise competition, the winner won’t be the organization with the cleverest demo.

It will be the organization that can safely run the largest, most governed “agent workforce”—and keep it aligned as the business, policies, and environment keep changing.

FAQ

1) Is the Human–Agent Ratio only about reducing headcount?

No. The stronger framing is capacity and leverage: shifting humans to higher-value work while agents handle repeatable execution—under governance. (LinkedIn)

2) Can we increase the ratio just by buying a better model?

Usually not. Better models help, but the binding constraint becomes operational safety: identity, policy, observability, cost controls, rollback, and incident response. (Tredence)

3) What’s the fastest first step?

Pick one workflow and implement the “minimum safe stack”:

identity + least privilege, policy checks, audit logging, cost guardrails, kill switch + fallback. Then expand.

4) Will every organization have the same “ideal ratio”?

No. It varies by task, regulation, risk tolerance, and maturity—exactly why the ratio is a management metric, not a universal target. (LinkedIn)

Glossary

- Human–Agent Ratio: A management lens describing the balance between AI agents and human oversight required to unlock productivity without increasing operational risk. (LinkedIn)

- AI Agent (Digital Worker): Software that can plan and execute tasks, often via tools/APIs, inside enterprise workflows. (The Economic Times)

- Human-by-Exception: Operating model where agents handle routine cases and humans intervene for exceptions, high-risk actions, and escalations.

- Kill Switch / Circuit Breaker: A mechanism to immediately stop an agent or revoke tool access during anomalous behavior or incidents. (Tredence)

- Rollback: The ability to reverse actions and return systems to a safe state after incorrect execution.

- Agent Observability: Monitoring and logging that provides traceability into what the agent saw, decided, and executed—including tool calls.

- AI FinOps: Financial governance for AI usage—budgets, cost controls, anomaly detection, and cost-per-outcome accountability.

- Composable Enterprise AI Stack: A modular architecture that integrates models, tools, governance, and operations—designed to evolve without lock-in.

References and further reading

- Microsoft / Jared Spataro (LinkedIn): “Human-agent ratio” framing for the future of work (LinkedIn)

- The Guardian coverage of “agent boss” narrative in enterprise work (The Guardian)

- Economic Times reporting on agentic AI and digital agents in enterprise growth contexts (The Economic Times)

- Security-oriented discussions emphasizing kill-switch / circuit breaker controls for autonomous agents (Tredence)

- The Enterprise AI Control Tower: Why Services-as-Software Is the Only Way to Run Autonomous AI at Scale – Raktim Singh

- The Living IT Ecosystem: Why Enterprises Must Recompose Continuously to Scale AI Without Lock-In – Raktim Singh

- The Synergetic Workforce: How Enterprises Scale AI Autonomy Without Slowing the Business – Raktim Singh

- Service Catalog of Intelligence: How Enterprises Scale AI Beyond Pilots With Managed Autonomy – Raktim Singh

- The AI Platform War Is Over: Why Enterprises Must Build an AI Fabric—Not an Agent Zoo – Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.