Representation Debt: Executive summary

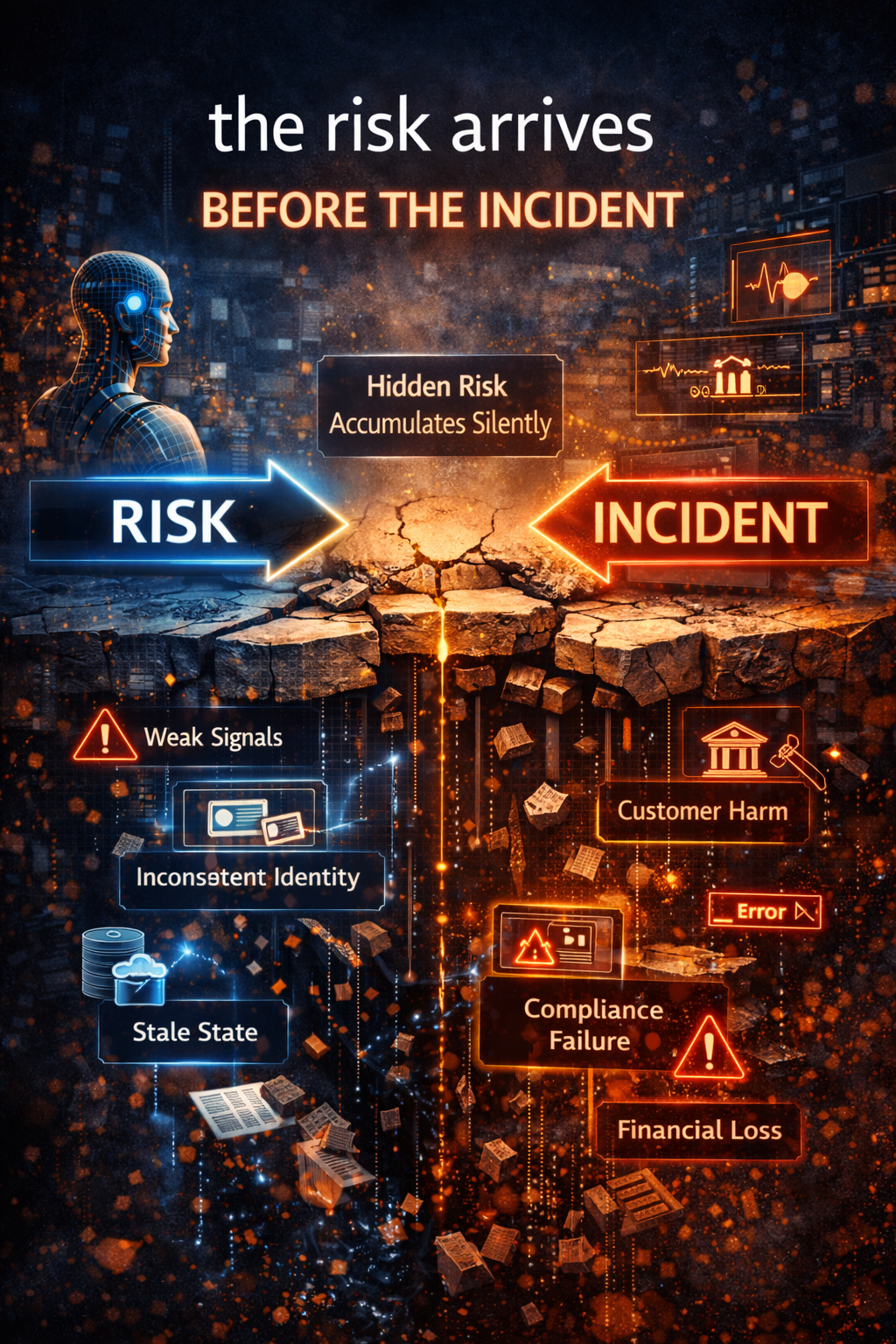

Most organizations still think about AI risk in visible terms: a bad decision, a hallucinated answer, a failed automation, a compliance breach, or a public incident. But the deeper risk often starts much earlier, long before any visible failure appears.

That hidden risk is representation debt.

Representation debt is the slow accumulation of institutional fragility that happens when AI systems operate on incomplete, outdated, fragmented, or poorly governed representations of reality. The model may be sophisticated. The interface may look polished. The pilot may seem successful. But if the institution’s machine-readable picture of customers, products, policies, states, exceptions, and authority is weak, AI risk is already compounding beneath the surface.

This is why the next phase of Enterprise AI will not be won only by better models. It will be won by institutions that build better representations of reality before they allow machines to recommend, decide, or act.

Executive Summary

The Representation Stack is the institutional architecture that converts real-world signals into machine-interpretable knowledge, governed decisions, and accountable actions.

As AI systems move from content generation into real operational environments—finance, healthcare, supply chains, and public infrastructure—the quality of institutional representation becomes more important than model capability alone.

The Representation Stack organizes this transformation through layered architecture aligned with the SENSE–CORE–DRIVER framework:

- SENSE – capturing signals, entities, and states from the real world

- CORE – interpreting meaning and forming decisions

- DRIVER – executing actions with authority, verification, and accountability

Institutions that design this architecture effectively will gain a durable competitive advantage in the emerging Representation Economy.

Why this matters now

For years, leaders treated AI risk as something dramatic.

A model makes the wrong prediction.

A chatbot hallucinates.

An automated workflow takes the wrong action.

A fraud system misses a pattern.

A recommendation engine creates an embarrassing outcome.

These are visible failures. They attract attention because they are obvious.

But in most institutions, the deeper risk starts much earlier.

Long before a public failure appears, organizations often begin accumulating something far more dangerous: representation debt.

This matters now because AI adoption has moved sharply from experimentation to operational use. Stanford’s 2025 AI Index reports that 78% of organizations said they used AI in 2024, up from 55% the year before, while global private investment in generative AI reached $33.9 billion in 2024. At the same time, major governance frameworks are increasingly emphasizing lifecycle risk management, post-deployment monitoring, accountability, and ongoing oversight rather than one-time model approval. (Stanford HAI)

The real question is no longer only:

Is the model good?

The more important question is:

What reality is the institution actually representing before it lets AI recommend, decide, or act?

That is where representation debt begins.

What is representation debt?

A simple definition

Representation debt is the hidden liability that builds up when institutions allow AI systems to operate on a weak, stale, fragmented, or incomplete representation of the world.

Think of technical debt. A team takes shortcuts in code, architecture, or testing to move quickly. The product still ships. Nothing collapses immediately. But over time, every future change becomes harder, slower, and riskier.

Representation debt works in a similar way, but at the level of institutional reality.

It appears when:

- important signals are missing

- entities are defined inconsistently

- relationships across systems are fragmented

- state changes are not captured in time

- exceptions are handled manually but never encoded

- policy meanings drift across teams

- authority rules are unclear

- execution happens without durable decision evidence

In other words, representation debt accumulates when the institution’s internal picture of reality is weaker than the decisions it is asking machines to support.

This is not a niche technical issue. It is becoming a strategic issue.

NIST’s AI Risk Management Framework treats AI risk as something to be governed, mapped, measured, and managed across the AI lifecycle, not as a one-time compliance exercise. OECD materials likewise frame accountability and risk management across the AI system lifecycle, including operation and monitoring. The EU AI Act also includes obligations around deployer oversight, logging, and post-market monitoring for high-risk systems. (NIST Publications)

That shift is important because representation debt usually accumulates between design and operation.

The model may be fine.

The representation of reality may not be.

Why representation debt accumulates quietly

Because it often looks like progress

Representation debt is dangerous because it does not always look like failure at first.

In fact, it often looks like success.

A company launches an AI assistant for customer operations. It connects the assistant to product catalogs, CRM records, knowledge bases, and ticket histories. The system performs well in early tests. Customers get faster responses. Support costs begin to fall.

But over time, product definitions change. Escalation rules evolve. Regional exceptions multiply. New service bundles are introduced. One business unit changes naming conventions. Another adds manual workarounds. A third updates policy language without updating the metadata or system logic beneath it.

The AI system still runs.

But the institution’s machine-readable picture of products, promises, exceptions, and obligations has started to decay.

That is representation debt.

Or consider a fraud detection system. At first, it flags suspicious behavior well. Then customer behavior changes, device patterns change, channels change, fraud tactics change, and internal recovery workflows change. The system may still produce scores, but the entity relationships and behavioral representations underneath it no longer reflect reality accurately.

Again, representation debt.

Or think about lending, insurance, procurement, healthcare, HR, supply chain, or legal operations. In each domain, institutions increasingly want AI to support decision flows. But if the underlying representation of identity, state, entitlement, exception, or authority is weak, the visible error appears only after the hidden debt has already become large.

That is why representation debt deserves board-level attention.

It is a latent institutional liability.

The deeper shift: from model risk to representation risk

Most institutions still discuss AI risk as if the model were the main object of concern.

That mindset is now too narrow.

A model can be accurate and still be dangerous inside an institution if it operates on weak representations. It can sound intelligent while being grounded in stale semantics, broken entity models, or outdated policy logic. It can optimize beautifully while optimizing the wrong thing.

This is the deeper shift now underway.

The central question in Enterprise AI is moving from:

How smart is the model?

to:

How faithful, governable, and current is the institution’s representation of reality?

That is a much more consequential question for boards, CEOs, CIOs, CTOs, risk leaders, and regulators.

The SENSE–CORE–DRIVER view of representation debt

The best way to understand representation debt is through the full architecture of intelligent institutions.

SENSE: debt begins when reality stops becoming legible

SENSE is the layer where reality becomes machine-legible.

It includes:

- signal detection

- entity binding

- state representation

- state evolution over time

Representation debt often starts here.

An institution begins accumulating debt when it captures the wrong signals, misses important signals, binds them to the wrong entities, or fails to keep state updated as reality changes.

A simple example: a logistics company may know that a shipment exists, but not its true condition, dependency chain, delay cause, or exception status across handoffs. If AI is later asked to optimize customer commitments or reroute inventory, it may be reasoning over a representation that is technically present but operationally false.

The danger is subtle.

The system is not blind.

It is partially sighted.

That is often worse.
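To make the logistics example concrete, here is a minimal Python sketch of a check that could run before AI reasons over a shipment record. The `ShipmentState` fields, the `representation_gaps` helper, and the six-hour freshness threshold are illustrative assumptions, not a description of any particular system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class ShipmentState:
    """Hypothetical machine-readable state for one shipment."""
    shipment_id: str
    status: str                            # e.g. "in_transit", "delayed"
    observed_at: datetime                  # when the status was last confirmed
    delay_cause: Optional[str] = None
    exception_open: Optional[bool] = None  # None means "never captured"


def representation_gaps(state: ShipmentState, now: datetime,
                        max_age: timedelta = timedelta(hours=6)) -> List[str]:
    """Return reasons this record should not be trusted for automated decisions."""
    gaps = []
    if now - state.observed_at > max_age:
        gaps.append("stale_state")
    if state.exception_open is None:
        gaps.append("exception_status_unknown")
    if state.status == "delayed" and state.delay_cause is None:
        gaps.append("delay_cause_missing")
    return gaps


now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
record = ShipmentState("SHP-1", "delayed", now - timedelta(hours=9))
print(representation_gaps(record, now))
# → ['stale_state', 'exception_status_unknown', 'delay_cause_missing']
```

The record exists and has a status, so a naive system would treat it as usable. The check shows why it is only partially sighted: the state is old, and two facts the decision depends on were never captured.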

CORE: debt compounds when reasoning runs on stale meaning

CORE is the cognition layer.

It is where institutions:

- comprehend context

- optimize decisions

- realize action recommendations

- evolve through feedback

If SENSE provides a stale or fragmented picture of reality, CORE can still produce polished outputs. It may even look highly intelligent.

But a system that reasons beautifully on poor representations is not trustworthy. It is merely eloquent.

This is where many institutions become overconfident. They mistake fluent reasoning for grounded reasoning.

An AI system might summarize a case, recommend a next action, or rank options very confidently. But if product definitions, customer state, operational constraints, and policy exceptions are represented badly, the output may still be wrong in the most important sense: it is disconnected from institutional reality.

Representation debt at the CORE layer appears when:

- concepts drift

- policies are interpreted inconsistently

- optimization goals are not aligned with real-world constraints

- feedback loops reinforce incomplete representations

The model is not the whole story.

The meanings it operates over matter just as much.

DRIVER: debt becomes dangerous when weak representations gain the power to act

DRIVER is the execution and legitimacy layer.

It includes:

- delegation

- representation

- identity

- verification

- execution

- recourse

This is where representation debt becomes operationally dangerous.

The moment an institution allows AI to trigger workflows, approve actions, deny requests, allocate resources, or shape customer outcomes, weak representations stop being an abstract architecture issue.

They become a legitimacy issue.

If a system acts on the wrong identity, the wrong state, the wrong authority boundary, or the wrong interpretation of policy, the institution has a governance problem, not just a data problem.

That is exactly why the policy environment is moving toward stronger expectations around logging, monitoring, human oversight, and post-deployment controls. NIST’s AI RMF emphasizes continuous risk management across the lifecycle. OECD materials frame accountability as an ongoing discipline. The EU AI Act explicitly includes deployer obligations such as oversight, relevant input data, logging, and monitoring, along with post-market monitoring requirements for high-risk systems. (NIST Publications)

In plain language:

Once machines can act, representation debt becomes institutional risk.
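One way to picture the DRIVER layer is as a gate that checks authority before anything runs and records evidence either way. The sketch below is a simplified illustration under stated assumptions: the `AUTHORITY` table, the `DecisionRecord` fields, and the `gate` function are hypothetical names, and a real system would need durable storage, identity checks, and reversal paths that are omitted here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical authority table: actions the AI may execute on its own,
# and actions it may only recommend for human approval.
AUTHORITY = {
    "send_status_update": "execute",
    "issue_refund": "recommend",
}


@dataclass
class DecisionRecord:
    """Evidence kept for every proposed action, whatever the outcome."""
    action: str
    subject_id: str
    inputs: dict
    outcome: str
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def gate(action: str, subject_id: str, inputs: dict, log: list) -> str:
    """Decide whether an AI-proposed action runs, is escalated, or is blocked."""
    mode = AUTHORITY.get(action)  # actions missing from the table have no authority
    if mode == "execute":
        outcome = "executed"
    elif mode == "recommend":
        outcome = "escalated_to_human"
    else:
        outcome = "blocked"
    log.append(DecisionRecord(action, subject_id, inputs, outcome))
    return outcome


evidence = []
print(gate("issue_refund", "CUST-7", {"amount": 40}, evidence))  # escalated_to_human
print(gate("close_account", "CUST-7", {}, evidence))             # blocked
```

The design choice worth noting is the default: an action that nobody defined is blocked rather than allowed, and it still leaves a record that can be reviewed later.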

The five most common forms of representation debt

- Signal debt

The institution does not capture important changes in reality early enough.

Example: A bank sees transactions but not intent signals, behavioral anomalies, linked channel activity, or contextual patterns across systems.

- Entity debt

Different systems use different identities, definitions, or naming structures for the same customer, product, asset, supplier, or case.

Example: A “customer” in billing, support, risk, and marketing may not actually mean the same thing.

- State debt

The system knows what something is, but not its current condition.

Example: A patient record, loan file, policy claim, or shipment may exist, but the active status, exception state, or dependency path is outdated.

- Policy debt

Rules exist, but their machine-readable form is incomplete, inconsistent, or not updated as reality changes.

Example: Teams handle exceptions manually while official rules remain frozen in documents and static checklists.

- Authority debt

Institutions let AI operate without clearly defining what the system is authorized to decide, recommend, execute, escalate, or reverse.

Example: A workflow assistant begins performing actions that people assumed were “only suggestions.”

These forms of debt rarely stay isolated.

Signal debt creates state debt.

State debt creates policy confusion.

Policy confusion creates authority risk.

That is how hidden architectural weakness turns into operational exposure.
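Entity debt in particular can be surfaced with very simple checks. The following Python sketch assumes hypothetical extracts from three systems, each recording what it means by the same customer key, and reports the keys on which the systems disagree. The field names (`system`, `customer_key`, `kind`) and the sample values are invented for illustration.

```python
from collections import defaultdict

# Hypothetical extracts: how each system describes the same customer key.
records = [
    {"system": "billing",   "customer_key": "C-100", "kind": "account_holder"},
    {"system": "support",   "customer_key": "C-100", "kind": "contact_person"},
    {"system": "marketing", "customer_key": "C-100", "kind": "household"},
    {"system": "billing",   "customer_key": "C-200", "kind": "account_holder"},
    {"system": "support",   "customer_key": "C-200", "kind": "account_holder"},
]


def entity_conflicts(rows):
    """Map each key to the definitions in use, keeping only keys with disagreement."""
    seen = defaultdict(set)
    for row in rows:
        seen[row["customer_key"]].add(row["kind"])
    return {key: kinds for key, kinds in seen.items() if len(kinds) > 1}


conflicts = entity_conflicts(records)
print(sorted(conflicts))  # keys whose meaning differs across systems
```

Here `C-100` is flagged because billing, support, and marketing each mean something different by it, while `C-200` is consistent. A report like this will not fix the debt, but it makes the disagreement visible before an AI system reasons across those systems.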

Why representation debt is more dangerous than model risk alone

Model risk is familiar. Enterprises already know how to think about performance, bias, hallucination, robustness, and drift.

Representation debt is more dangerous because it sits underneath all of those.

A strong model running on weak representations can still fail institutionally.

That is the core point.

An institution may spend millions on model evaluation and still underinvest in:

- entity resolution

- knowledge freshness

- policy encoding

- exception capture

- workflow semantics

- authority boundaries

- reversible execution

- recourse design

When that happens, the organization feels advanced because its AI layer is sophisticated. But its institutional representation layer remains immature.

This is one reason modern AI governance frameworks keep emphasizing governance, context mapping, measurement, monitoring, and post-deployment controls. The problem is not only whether a model can produce the right output in a laboratory. The problem is whether the system remains trustworthy as reality changes in production. (NIST)

How leaders can detect representation debt before failure

Representation debt is often visible if leaders ask better questions.

Not:

- How accurate is the model?

- How fast is the workflow?

- How many pilots have we launched?

But:

- What parts of reality are still invisible to the system?

- Which entities are inconsistently defined across the enterprise?

- Where does machine-readable state lag behind real-world state?

- Which exceptions are handled manually but never encoded?

- What policy meanings change faster than the system updates?

- Where is AI influencing action without clear authority boundaries?

- What evidence do we retain to explain why a decision was made?

- What recourse exists if the representation was wrong?

Those are representation-debt questions.

Boards should care because these questions reveal whether AI risk is accumulating silently beneath apparently successful adoption.

How institutions should respond

The answer is not to slow down AI altogether.

The answer is to build stronger representation discipline.

Seven practical responses

- Treat representation as infrastructure, not cleanup

Representation is not a back-office metadata exercise. It is part of the operating architecture of intelligent institutions.

- Define critical entities consistently

Customers, products, suppliers, assets, claims, contracts, cases, and policies must have stable enterprise meaning.

- Build living state models, not static records

A record is not enough. Institutions need continuously updated state, exception status, and dependency awareness.

- Encode policy close to operations

Rules should not live only in PDFs, handbooks, or tribal memory. They must be rendered into operational systems.

- Separate advisory autonomy from execution autonomy

There is a major difference between a system that recommends and a system that acts.

- Require verification and recourse for consequential actions

If AI can affect money, access, service, eligibility, or legal position, there must be verifiable evidence and a way back.

- Monitor representation quality continuously

Most institutions monitor model performance more seriously than they monitor representation quality. That imbalance will become costly.
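Monitoring representation quality can start with a couple of plain metrics tracked alongside model metrics. The sketch below is one possible starting point, not a standard: it assumes records are dictionaries with an `updated_at` timestamp, and it uses completeness and freshness as stand-in proxies for quality. The function name, field names, and 30-day threshold are all illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone


def representation_quality(records, required_fields, now, max_age):
    """Share of records that are complete and share that are fresh."""
    if not records:
        # Assumption: an empty representation is treated as unusable.
        return {"completeness": 0.0, "freshness": 0.0}
    complete = sum(
        all(r.get(f) is not None for f in required_fields) for r in records)
    fresh = sum(now - r["updated_at"] <= max_age for r in records)
    n = len(records)
    return {"completeness": complete / n, "freshness": fresh / n}


now = datetime(2025, 1, 10, tzinfo=timezone.utc)
cases = [
    {"id": "A", "status": "active", "owner": "ops",  "updated_at": now - timedelta(days=1)},
    {"id": "B", "status": "active", "owner": None,   "updated_at": now - timedelta(days=2)},
    {"id": "C", "status": "closed", "owner": "risk", "updated_at": now - timedelta(days=40)},
    {"id": "D", "status": "active", "owner": "ops",  "updated_at": now - timedelta(days=90)},
]
print(representation_quality(cases, ["status", "owner"], now, timedelta(days=30)))
# → {'completeness': 0.75, 'freshness': 0.5}
```

Tracked over time, a falling freshness or completeness score is an early signal that representation debt is building, even while model accuracy on test sets looks unchanged.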

Why this is a board issue, not just a data issue

Boards do not need to manage ontologies, schemas, or event pipelines directly.

But boards do need to understand when an institution is building strategic dependency on AI without building strategic confidence in representation.

That is a governance problem.

The institutions that win in the next phase of AI will not simply deploy more intelligence. They will decide more carefully:

- what must be seen

- what must be modeled

- what must be governed

- what may be delegated

This is why representation debt belongs in the boardroom.

It sits at the intersection of:

- strategy

- trust

- operating resilience

- compliance

- customer legitimacy

- institutional memory

- delegated authority

In short, representation debt is not just an engineering weakness.

It is an institutional design weakness.

The concept of Representation Debt extends the broader idea of the Representation Economy — the shift in which institutional advantage increasingly depends on how well organizations represent reality for intelligent systems. Within this architecture, the SENSE–CORE–DRIVER framework helps explain why AI failures rarely begin at the model layer. They begin when institutions misrepresent reality, reason on incomplete states, or delegate authority without proper governance structures.

Conclusion: the risk arrives before the incident

Technical debt slows software.

Representation debt destabilizes institutions.

That is why this idea matters so much.

An institution can survive some bad outputs. It can patch prompts, retrain models, add reviews, or improve monitoring. But if its underlying representation of customers, assets, states, policies, exceptions, and authority is weak, every new layer of AI increases exposure.

This is the deeper reality of the Representation Economy.

Competitive advantage will not belong only to those who deploy more intelligence. It will belong to those who build more faithful, governable, updateable representations of reality before they delegate decisions to machines.

That is the true foundation of scalable Enterprise AI.

And that is why representation debt should become a board-level concept now, before visible failures force the lesson later.

Glossary

Representation debt

The hidden liability that builds up when AI systems operate on incomplete, stale, fragmented, or weakly governed representations of reality.

Representation economy

A strategic view of the AI era in which value increasingly depends on how well institutions make reality visible, modelable, governable, and delegable.

SENSE

The layer where reality becomes machine-legible through signal detection, entity binding, state representation, and state evolution.

CORE

The reasoning layer where institutions interpret context, optimize decisions, generate recommendations, and learn from feedback.

DRIVER

The execution and legitimacy layer that governs delegation, identity, verification, execution, and recourse.

Signal debt

Risk created when institutions fail to detect important changes in reality early enough.

Entity debt

Risk created when customers, products, assets, or cases are defined inconsistently across systems.

State debt

Risk created when the system knows what something is, but not its true current condition.

Policy debt

Risk created when institutional rules exist, but their machine-readable version is outdated or incomplete.

Authority debt

Risk created when institutions do not clearly define what AI systems are authorized to recommend, decide, execute, or escalate.

Representation discipline

The institutional practice of maintaining high-quality, up-to-date, governable machine representations of reality.

Institutional AI risk

The risk that arises when AI systems influence real decisions and actions inside an enterprise without sufficient representation, oversight, or recourse.

FAQ

- What is representation debt in simple terms?

Representation debt is the hidden risk that builds up when AI systems rely on a poor machine-readable picture of reality. The system may still work for a while, but the underlying representation is already weakening.

- How is representation debt different from technical debt?

Technical debt comes from shortcuts in software design or engineering. Representation debt comes from shortcuts, fragmentation, or decay in how an institution models reality for machine use.

- How is representation debt different from model risk?

Model risk focuses on the model’s behavior, such as bias, hallucinations, or performance. Representation debt sits underneath that and concerns whether the system is reasoning over the right reality in the first place.

- Why do organizations fail to notice representation debt?

Because it often appears during periods of apparent success. Pilots work, interfaces look polished, and outputs sound intelligent, even while the underlying representation of reality is degrading.

- Is representation debt only a data problem?

No. It is also a governance, operating model, and institutional design problem. It affects how entities, states, policies, authority, and recourse are defined across the enterprise.

- Which industries are most exposed to representation debt?

Banking, insurance, healthcare, supply chain, telecom, public sector, HR, legal operations, and any industry where AI influences consequential workflows.

- Can a strong model still fail because of representation debt?

Yes. A highly capable model can still produce institutionally unsafe or misleading outputs if the underlying representation of reality is weak or outdated.

- Why is representation debt a board-level issue?

Because it affects trust, compliance, operating resilience, customer legitimacy, and the safe delegation of authority to AI systems.

- How can leaders detect representation debt early?

By asking whether the institution’s machine-readable reality is complete, current, consistent, explainable, and governable before AI is allowed to act on it.

- What is the first practical step to reducing representation debt?

Treat representation as enterprise infrastructure, not metadata cleanup. Then define critical entities, living state, policy logic, and authority boundaries much more explicitly.

References and further reading

The AI adoption and investment figures cited here come from Stanford HAI’s 2025 AI Index Report, which reports that 78% of organizations used AI in 2024 and that private investment in generative AI reached $33.9 billion globally in 2024. (Stanford HAI)

The lifecycle framing of AI governance discussed in this article is supported by the NIST AI Risk Management Framework, which emphasizes GOVERN, MAP, MEASURE, and MANAGE functions across the AI lifecycle, and by OECD materials on accountability and AI system lifecycle phases. (NIST Publications)

The discussion of deployer obligations, logging, oversight, and post-market monitoring is aligned with summaries of the EU AI Act, including Article 26 on deployer obligations and Article 72 on post-market monitoring for high-risk AI systems. (Artificial Intelligence Act)

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.