The Representation Boundary

For most of the past decade, the conversation around artificial intelligence has been dominated by models.

Which model is larger?

Which model reasons better?

Which model is cheaper to run?

Which model can act autonomously?

These questions fill conference stages, investor decks, and boardroom briefings. Yet they often miss the deeper issue that is beginning to shape the AI era.

The most important question is not how intelligent machines become.

The more important question is this:

What happens when institutions ask machines to reason about a reality they cannot properly represent?

That is where the real limit of the AI economy begins to emerge.

Not at the edge of compute power.

Not at the edge of data volume.

Not even at the edge of model sophistication.

The real limit appears at the edge of representation.

Every AI system depends on an institution’s ability to translate reality into a form that machines can recognize, structure, and reason about.

When that translation weakens, intelligence becomes fragile. The model may still generate outputs. The workflow may still appear functional. But the institution’s grasp of reality starts to drift.

That is the idea behind the Representation Boundary.

The Representation Boundary is the point beyond which an institution can no longer reliably convert reality into machine-legible form. Beyond that point, artificial intelligence may continue to produce predictions, recommendations, and actions, but the system’s understanding of the world becomes progressively less trustworthy.

This matters because the emerging AI economy is not simply about scaling models. It is increasingly about scaling machine-legible representations of reality.

Industrial economies created value by scaling production.

Digital economies created value by scaling information.

AI economies will increasingly create value by scaling representation.

And when representation weakens, the intelligence built on top of it weakens too.


What Is the Representation Boundary?

The Representation Boundary is the point beyond which an institution can no longer reliably convert reality into a machine-legible form for artificial intelligence systems to reason about and act upon.

Key Insight

Artificial intelligence does not reason directly about reality.

It reasons about representations of reality created by institutions.

When those representations break:

- SENSE becomes incomplete

- CORE becomes unreliable

- DRIVER becomes dangerous

This is the Representation Boundary.

From Data to Representation

Artificial intelligence does not interact directly with the world.

It interacts with representations of the world.

Sensors generate signals.

Databases store records.

Algorithms process inputs.

But before machines can reason about reality, those signals must first be transformed into structured representations that capture the condition of entities in the world.

This distinction sounds subtle. In practice, it changes everything.

A hospital can collect enormous volumes of patient data—lab results, scans, medical notes, prescriptions, and vital signs. But those signals become useful only when the institution can combine them into a coherent representation of the patient’s condition and its evolution over time.

A bank can record millions of transactions each day. But unless those transactions are connected to the right customer, interpreted in the context of financial behavior, and understood as part of an evolving risk state, they remain isolated traces rather than a meaningful representation.

A supply chain platform can track shipments across continents. But unless those traces are linked to supplier reliability, inventory stress, route dependencies, and disruption risk, optimization engines have little durable foundation on which to operate.

In each case, intelligence depends not on raw information but on an institution’s ability to construct and maintain an accurate, living representation of reality.

This is also why the Representation Economy should not be confused with the older idea of the Data Economy.

Data is raw signal. Representation is structured, contextual, entity-linked, and stateful.

Google’s Knowledge Graph was built around this very shift, from matching strings to understanding entities and relationships. Google itself described the move as a transition toward “things, not strings,” and its Knowledge Graph Search API is explicitly built around entities and schema-based types. (blog.google)

Clarifying What Is New

As the idea of a Representation Economy becomes more visible, it is naturally interpreted through existing categories. That is useful up to a point. But it can also hide what is distinctive about the shift now underway.

Beyond the Data Economy

The first distinction is between data and representation.

Data refers to raw signals: transaction logs, sensor readings, images, text records, or coordinates. Representation begins when those signals are organized into a structured model that reflects the state of real entities in the world.

For representation to exist, institutions must establish at least four things:

- a signal indicating that something happened

- an entity to which the signal belongs

- a state describing that entity’s current condition

- an evolution showing how that condition changes over time

A database full of payment records is data.

A dynamic model of a merchant’s evolving financial health is representation.

The difference is not cosmetic. It determines whether AI can reason reliably or only react mechanically.
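
The four elements above can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the `Signal` and `MerchantState` classes, the `build_states` helper, and the choice of refund rate as a stand-in for "financial health" are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Signal:
    """A raw trace that something happened (hypothetical payment event)."""
    merchant_id: str   # the entity this signal belongs to
    amount: float      # positive = sale, negative = refund (assumed convention)
    at: datetime


@dataclass
class MerchantState:
    """The current condition of one merchant, plus how it has evolved."""
    merchant_id: str
    sales: float = 0.0
    refunds: float = 0.0
    history: list = field(default_factory=list)  # (timestamp, refund_rate) pairs

    @property
    def refund_rate(self) -> float:
        # Illustrative health indicator; a real model would be far richer.
        return self.refunds / self.sales if self.sales else 0.0

    def apply(self, signal: Signal) -> None:
        """Update state from one signal and record the new point in time."""
        if signal.merchant_id != self.merchant_id:
            raise ValueError("signal belongs to a different entity")
        if signal.amount >= 0:
            self.sales += signal.amount
        else:
            self.refunds += -signal.amount
        self.history.append((signal.at, self.refund_rate))


def build_states(signals):
    """Attach each signal to its entity and fold it into that entity's state."""
    states = {}
    for s in sorted(signals, key=lambda s: s.at):
        state = states.setdefault(s.merchant_id, MerchantState(s.merchant_id))
        state.apply(s)
    return states
```

The list of `Signal` objects on its own is data. The dictionary returned by `build_states`, keyed by entity and carrying both a current state and its history, is a very small representation.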

Beyond Digital Twins

The second distinction is with digital twins.

Digital twins are real, powerful, and increasingly important. A digital twin is generally defined as a virtual representation of a physical object or system that is updated with real-time data and used for monitoring, simulation, and analysis. (ibm.com)

But digital twins represent only one part of the larger representational challenge.

The Representation Economy is broader.

It includes the representation of customers, merchants, patients, supply chains, institutions, ecosystems, and markets.

Digital twins mainly represent assets and physical systems. The Representation Economy concerns the wider problem of making complex socio-technical reality legible enough for institutions and machines to reason about.

Beyond AI Infrastructure

The third distinction is with AI infrastructure.

Models, pipelines, orchestration layers, inference stacks, and compute clusters all matter. But infrastructure operates after representation has already been established.

Infrastructure scales intelligence.

Representation creates legibility.

An enterprise can deploy powerful models and still fail if the system is reasoning over an incomplete or distorted picture of reality.

This is one reason many AI programs stall in practice: technical performance may improve while institutional understanding remains thin.

Large-scale surveys continue to show strong AI adoption, but much lower rates of enterprise-wide value capture and maturity, suggesting that deployment alone is not the same as institutional transformation. (NIST Publications)

The Institutional Architecture: SENSE, CORE, DRIVER

To understand why representation matters so much, it helps to place it inside a broader institutional architecture.

That architecture, in my view, has three layers:

SENSE

SENSE is the layer where reality becomes machine-legible.

In this framework, SENSE means:

- Signal — detecting events, changes, and traces from the world

- ENtity — attaching those signals to a persistent actor, object, location, or asset

- State representation — building a structured model of the entity’s current condition

- Evolution — updating that state as new signals arrive over time

SENSE is where raw traces become usable representation.

Without SENSE, AI does not truly understand anything. It only processes fragments.

Hospitals must sense patient conditions.

Banks must sense financial behavior.

Factories must sense machine states.

Governments must sense social and economic activity.

The strategic question is not merely whether data exists. It is whether institutions can turn that data into a sufficiently rich and timely representation of reality.

CORE

CORE is the cognition layer.

Once reality becomes machine-legible through SENSE, CORE performs four functions:

- Comprehend context

- Optimize decisions

- Realize action strategies

- Evolve through feedback

This is where AI appears intelligent.

Models predict.

Reasoning systems infer.

Decision engines prioritize.

Planning systems recommend.

But CORE only works as well as the representational quality it inherits.

If SENSE is shallow, CORE becomes brittle. The system may produce confident answers while misunderstanding the world it is supposed to analyze.

DRIVER

DRIVER is the execution and legitimacy layer.

This is where AI moves from reasoning to action.

In this framework, DRIVER includes:

- Delegation — who authorized the system to act

- Representation — what model of reality informed the decision

- Identity — which entity is affected

- Verification — how the decision is checked

- Execution — how the action is carried out

- Recourse — what happens if the system is wrong

This layer matters because institutions do not generate value merely by predicting. They create value when decisions enter real systems: payments, approvals, denials, routing, intervention, prioritization, escalation.

That is also where poor representation becomes dangerous.
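
One way to make the six DRIVER elements concrete is to treat them as fields that must be filled in before an action is allowed to run. The sketch below is only an illustration under that assumption; `DriverRecord`, `missing_elements`, and `may_execute` are hypothetical names, and a real governance process would involve far more than a completeness check.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class DriverRecord:
    """Hypothetical audit record for one automated decision."""
    delegation: Optional[str] = None      # who authorized the system to act
    representation: Optional[str] = None  # what model of reality informed the decision
    identity: Optional[str] = None        # which entity is affected
    verification: Optional[str] = None    # how the decision is checked
    execution: Optional[str] = None       # how the action is carried out
    recourse: Optional[str] = None        # what happens if the system is wrong


def missing_elements(record: DriverRecord) -> list:
    """Return the names of DRIVER elements that are still empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


def may_execute(record: DriverRecord) -> bool:
    """Allow execution only when every DRIVER element is documented."""
    return not missing_elements(record)
```

For example, a record that names the authorizer, the representation, the affected entity, the check, and the execution path but leaves `recourse` empty would be blocked, with `missing_elements` reporting `["recourse"]`.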

The Representation Boundary

The Representation Boundary appears when institutions can no longer reliably represent reality.

At that point, one or more breakdowns begin to surface.

Signal Breakdown

The institution cannot detect the relevant event at all.

This often happens where signals are weak, hidden, delayed, or informal. Think of unregistered economic activity, shadow supply chains, or early clinical deterioration that leaves only faint traces.

Reality changes, but the institution does not observe it in time.

Entity Breakdown

Signals exist, but the system cannot confidently attach them to the right actor, asset, or object.

This is where identity infrastructure becomes central. Fragmented records, weak KYC, inconsistent public registries, and broken supplier mappings all create entity ambiguity. Global work on digital public infrastructure and digital identification exists precisely because institutions cannot include, govern, or serve what they cannot reliably identify. (World Bank)

State Breakdown

The institution knows the entity exists, but its model of the entity’s current condition is too thin, outdated, or incomplete.

A bank knows the customer but not their stress profile.

A logistics system knows the shipment but not its operational fragility.

A hospital knows the patient but not the evolving clinical state.

Evolution Breakdown

The representation exists, but the system cannot track how it changes over time.

Markets shift.

Behavior evolves.

Machines age.

Contexts drift.

A static model starts to diverge from a living reality.
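
The four breakdowns can also be read as a diagnostic checklist. The sketch below is one possible way to express that, assuming a hypothetical `Representation` record; the 30-day freshness threshold and the "at least two snapshots" rule are arbitrary placeholders, not values proposed by the framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Representation:
    """Hypothetical summary of what an institution holds about one entity."""
    last_signal_at: Optional[datetime] = None    # None: nothing has been observed
    entity_id: Optional[str] = None              # None: signals not attached to an entity
    state: dict = field(default_factory=dict)    # current condition, if any
    state_updated_at: Optional[datetime] = None  # when the state was last refreshed
    snapshots: int = 0                           # how many past states are retained


def diagnose(rep: Representation, now: datetime,
             max_age: timedelta = timedelta(days=30)) -> list:
    """Return which of the four breakdowns apply, in SENSE order."""
    problems = []
    if rep.last_signal_at is None:
        problems.append("signal")      # the event was never detected
    if rep.entity_id is None:
        problems.append("entity")      # signals cannot be attached to an actor
    stale = rep.state_updated_at is None or now - rep.state_updated_at > max_age
    if not rep.state or stale:
        problems.append("state")       # model of current condition is thin or outdated
    if rep.snapshots < 2:
        problems.append("evolution")   # no way to see change over time
    return problems
```

An empty list means none of these simple checks fired. It does not mean the representation is accurate, only that it is not missing in the most obvious ways.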

Why Perfect Representation Is the Wrong Standard

At this point, a fair question arises.

Isn’t reality too complex to be fully represented?

Yes.

Human intent, moral judgment, social trust, cultural nuance, hidden variables, and institutional politics cannot be fully captured in structured models. That is not a weakness in the theory. It is part of the point.

The Representation Economy is not about perfect representation.

It is about better representation.

Industrial firms never achieved perfect production.

Digital firms never achieved perfect information.

But better infrastructure created durable advantage.

The same logic holds here.

The institutions that win will not be those that represent reality perfectly. They will be those that represent it better than competitors, better than legacy systems, and well enough to govern action responsibly.

That is the more realistic and more powerful claim.

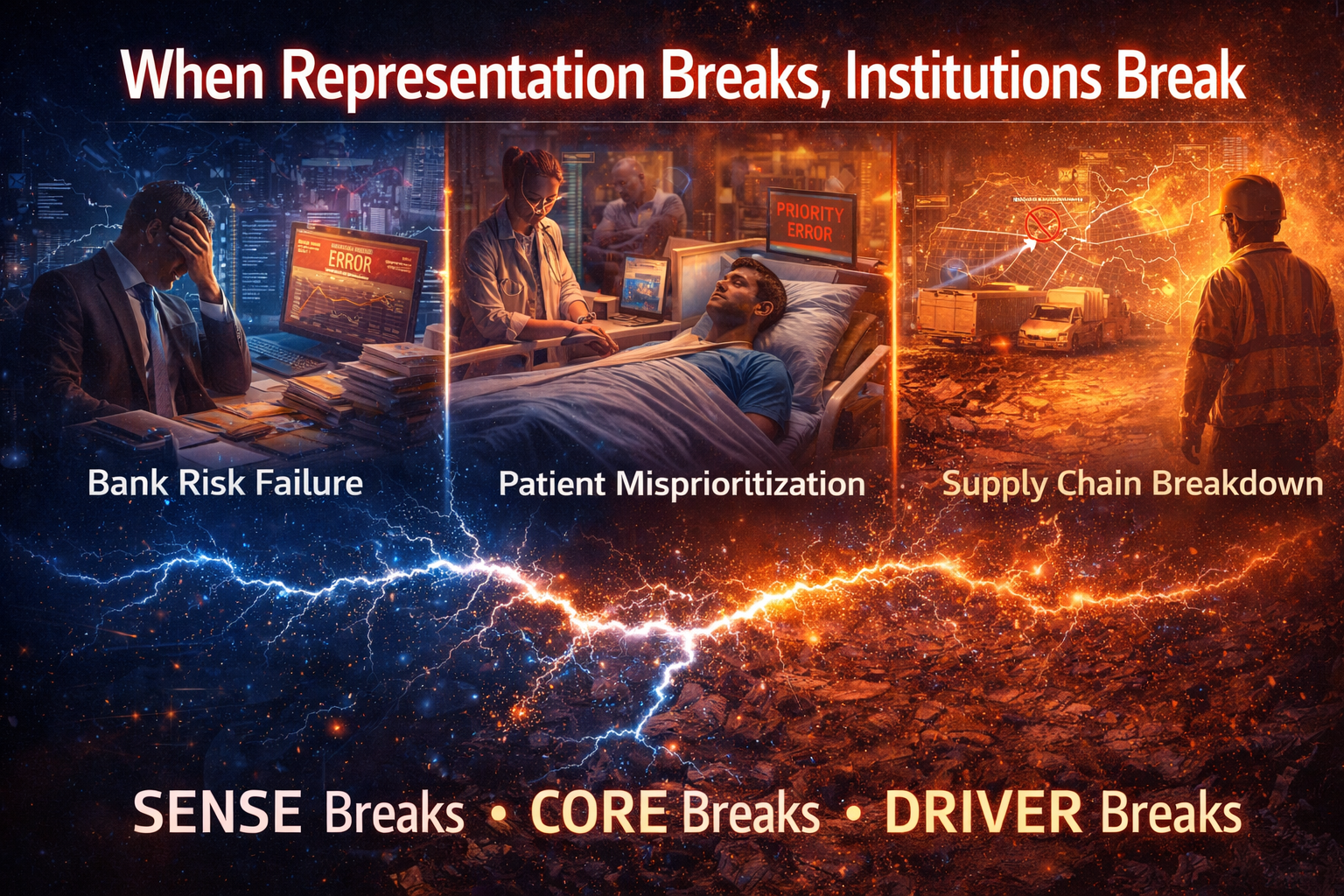

When Representation Breaks, Institutions Break

This is the central implication.

When the Representation Boundary is reached:

- SENSE becomes incomplete

- CORE becomes brittle

- DRIVER becomes risky

A credit system misjudges risk because entity resolution is weak.

A healthcare system misprioritizes patients because state representation is thin.

A supply chain system optimizes the wrong routes because evolving disruptions are not captured.

The model may still run.

The dashboard may still update.

The workflow may still complete.

But the institution’s decision integrity begins to erode.

This is why AI should be understood not only as a technical system but as a socio-technical and institutional one. NIST’s AI Risk Management Framework explicitly frames AI systems in socio-technical terms and emphasizes governance, context, and lifecycle discipline rather than narrow model performance alone. (NIST Publications)

Expanding the Representation Frontier

Seen this way, many of the most important AI-era transformations are really legibility transformations.

Satellite monitoring makes farms more representable.

Digital identity systems make citizens and merchants more representable.

Knowledge graphs make facts and relationships more representable.

Industrial sensors make factories more representable.

Enterprise observability makes digital systems more representable.

Each of these strengthens the SENSE layer.

And as SENSE improves, CORE becomes more reliable and DRIVER becomes more governable.

The competition of the next decade will not be only about who has the best model. It will be about who can expand the representation frontier of the world they operate in.

The Strategic Question for Boards and C-Suites

For leaders, the most important AI question is no longer:

How do we adopt AI?

The deeper question is:

What parts of reality remain invisible to our institution, and what would change if we could represent them better?

That question reframes AI strategy.

It shifts the discussion:

- from tools to legibility

- from pilots to institutional architecture

- from isolated use cases to representation systems

- from raw model performance to decision quality

- from automation to governed action

This is where serious board-level AI strategy begins.

Key idea:

AI does not fail only because models are weak. It fails when institutions try to reason over a world they have not made sufficiently legible.

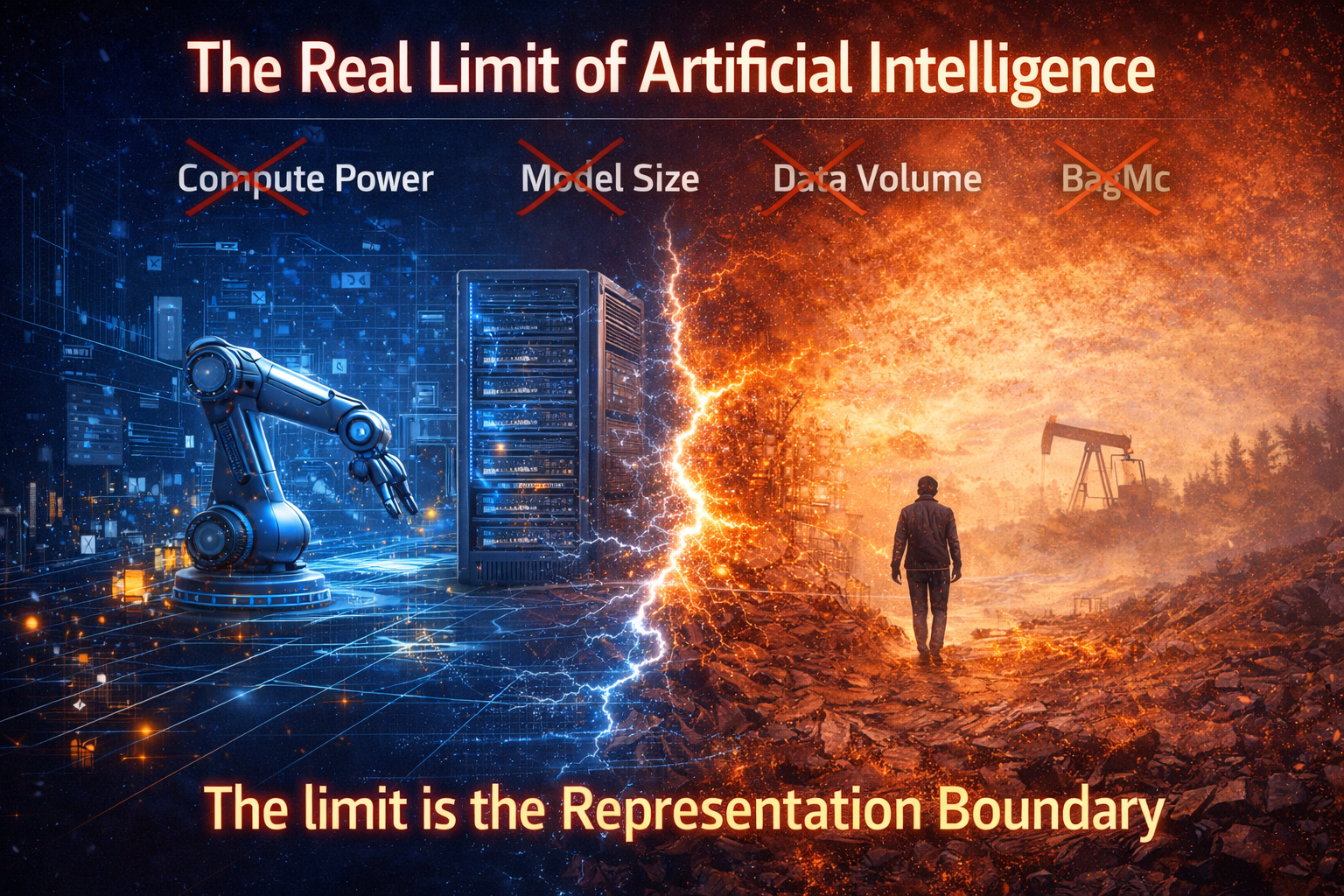

Conclusion: The Real Limit of Artificial Intelligence

The most important limit of artificial intelligence is not compute power.

It is not model size.

It is not parameter count.

It is not even the volume of data available.

The real limit is the boundary of what institutions can represent.

When reality stops being legible, intelligence loses its foundation.

The future of the AI economy will belong to the institutions that can extend that boundary by expanding the reach of SENSE, strengthening the reasoning of CORE, and governing the actions of DRIVER.

Those institutions will not merely use AI.

They will redesign themselves around it.

To read more on the Representation Economy, see:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

Glossary

Representation Boundary

The point at which an institution can no longer reliably translate reality into machine-legible form.

Representation Economy

An economic shift in which value increasingly comes from building accurate, dynamic, and actionable representations of real-world entities and systems.

Machine legibility

The degree to which reality can be sensed, identified, structured, and updated in a form machines can reason about.

SENSE

The layer where reality becomes machine-legible through signal detection, entity attachment, state representation, and evolution over time.

CORE

The cognition layer where AI systems comprehend context, optimize decisions, realize action strategies, and evolve through feedback.

DRIVER

The execution and legitimacy layer where decisions are authorized, verified, executed, and made reversible or contestable when necessary.

Entity resolution

The process of determining which signals or records belong to the same person, object, asset, or organization.

State representation

A structured model of an entity’s current condition.

Evolution

The process of updating state as new signals arrive and conditions change.

Digital twin

A virtual representation of a physical object or system, typically updated by real-time data and used for monitoring or simulation. (ibm.com)

Knowledge graph

A structured model of entities and relationships that helps systems understand facts and context, not just strings of text. (blog.google)

FAQ

What is the Representation Boundary in simple terms?

It is the point where an institution can no longer model reality clearly enough for AI systems to make reliable decisions.

How is representation different from data?

Data is raw signal. Representation is structured, entity-linked, contextual, and updated over time.

Is this just another way of describing digital twins?

No. Digital twins are one form of representation, usually focused on physical assets or systems. The Representation Economy is broader and includes people, markets, institutions, supply chains, and socio-technical systems.

Why does this matter for enterprise AI?

Because AI systems do not reason directly about reality. They reason about institutional representations of reality. Weak representation leads to weak decisions, even when models are technically strong.

What do SENSE, CORE, and DRIVER mean?

SENSE makes reality legible, CORE turns representation into intelligence, and DRIVER turns intelligence into governed action.

Why is perfect representation not the goal?

Because reality is too complex to capture fully. The strategic goal is not perfection but better representation than competitors and better representation than legacy systems.

Why should boards care about the Representation Boundary?

Because it reframes AI strategy from tool adoption to institutional capability. It determines whether AI creates sustainable advantage or fragile automation.

What is the Representation Boundary?

The Representation Boundary is the point where institutions can no longer represent reality clearly enough for AI systems to reason accurately.

What is the Representation Economy?

The Representation Economy is an emerging economic model in which value comes from making real-world entities, systems, and behaviors machine-legible so AI can reason about them.

How is representation different from data?

Data is raw signal. Representation organizes signals into entities, states, and evolving models of reality.

What are SENSE, CORE, and DRIVER?

SENSE, CORE, and DRIVER describe the institutional architecture of AI systems:

- SENSE makes reality machine-legible

- CORE performs reasoning and decision making

- DRIVER executes decisions within governance frameworks

Why do many AI systems fail?

Many AI systems fail because institutions deploy powerful models without building reliable representations of the world those models must reason about.

References and Further Reading

- NIST AI Risk Management Framework (AI RMF 1.0) — useful for understanding why AI must be treated as a socio-technical system with governance and lifecycle discipline. (NIST Publications)

- Google Knowledge Graph / “Things, Not Strings” — helpful for understanding the move from raw text to entities and relationships. (blog.google)

- IBM on Digital Twins — useful for distinguishing digital twins from the broader concept of institutional representation. (ibm.com)

- World Bank / UNDP work on Digital Public Infrastructure and Digital Identity — useful for understanding why identity and legibility matter at institutional scale. (World Bank)

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.