The internet first digitized information. Then it moved businesses online. Only later did it produce new company forms such as platforms and marketplaces that unlocked entirely new pools of value. AI now appears to be approaching a similar turning point.

The first order of AI value has been efficiency: faster content creation, quicker analysis, lower costs, and broader automation. The second order is already underway: enterprises are embedding AI into workflows so decisions can be taken faster, risk can be addressed earlier, and operations can adapt with less friction. But the third order is the one that may matter most over the next decade. It is the point at which AI stops being only a capability inside existing firms and starts giving birth to entirely new categories of companies.

That broader transition is increasingly visible in current research and executive discussion. Stanford HAI’s 2025 AI Index describes AI’s growing influence across society, the economy, and governance. The World Economic Forum’s work on AI transformation argues that organizations are moving beyond experimentation toward broader operational reinvention. Harvard Business Review has also argued that AI’s bigger payoff may come not only from task automation but from lowering the coordination burden across people, data, and systems. (Stanford HAI)

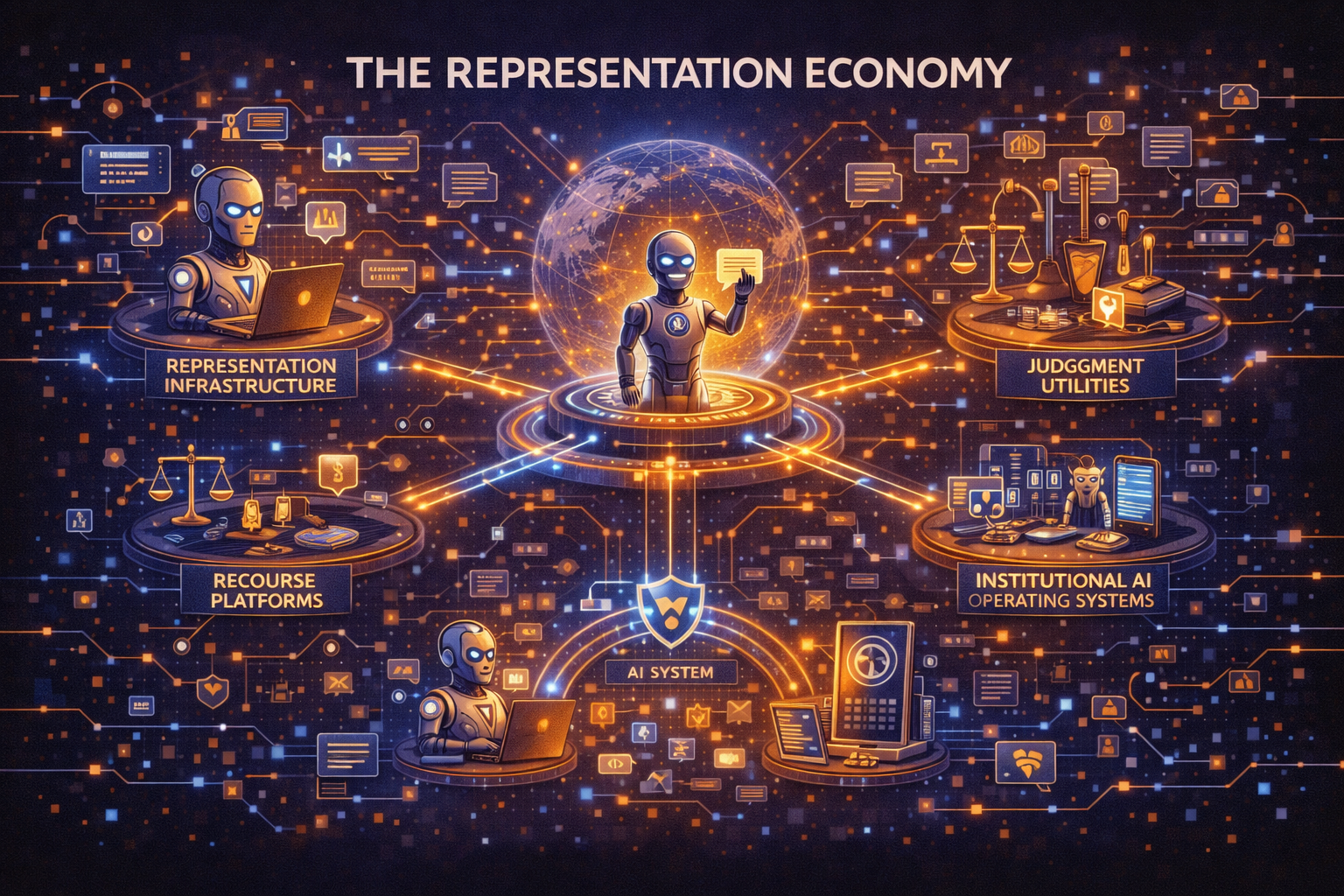

This is where a larger idea becomes useful: the Representation Economy.

My argument is simple. The next AI economy will not be won only by firms that possess intelligence. It will be won by firms that can represent reality better, reason over it more responsibly, and act on it with greater legitimacy.

That is the logic of the SENSE–CORE–DRIVER framework.

- SENSE is the legibility layer. It turns messy reality into machine-readable signals, entities, state, and evolution.

- CORE is the cognition layer. It interprets those representations, optimizes among choices, and generates decisions.

- DRIVER is the legitimacy layer. It governs authority, verification, execution, and recourse when systems move from advice to action.
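To make the division of labor concrete, here is a minimal Python sketch of the three layers as plain functions. Everything in it is hypothetical and illustrative. The `sense`, `core`, and `driver` functions, the shipment fields, and the 0.9 confidence floor are assumptions chosen for the example, and the toy rule in `core` stands in for any model. What it shows is that the DRIVER layer, not the model, decides whether a proposal is executed or escalated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Representation:
    """SENSE output: a normalized, machine-readable entity state."""
    entity_id: str
    state: dict


@dataclass(frozen=True)
class Proposal:
    """CORE output: a candidate decision with the model's confidence."""
    action: str
    confidence: float


def sense(raw: dict) -> Representation:
    # Normalize a messy input record: trim and lowercase the ID, lowercase keys.
    state = {k.lower(): v for k, v in raw.items() if k != "id"}
    return Representation(str(raw["id"]).strip().lower(), state)


def core(rep: Representation) -> Proposal:
    # Toy decision rule standing in for any model or optimizer.
    late = rep.state.get("eta_days", 0) > rep.state.get("sla_days", 0)
    return Proposal("reroute", 0.8) if late else Proposal("no_action", 0.95)


def driver(p: Proposal, permitted: set, min_confidence: float = 0.9) -> str:
    # Legitimacy gate: execute only within granted authority and confidence.
    if p.action == "no_action":
        return "no_action"
    if p.action in permitted and p.confidence >= min_confidence:
        return f"execute:{p.action}"
    return f"escalate:{p.action}"


raw = {"id": " SH-42 ", "ETA_DAYS": 6, "SLA_DAYS": 4}
rep = sense(raw)
proposal = core(rep)
print(rep.entity_id, proposal.action)           # sh-42 reroute
print(driver(proposal, permitted={"reroute"}))  # escalate:reroute
```

Here the model proposes a reroute, and rerouting is a permitted action. The DRIVER gate still escalates it, because the model's confidence falls below the assumed floor.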

Many leaders still behave as if AI advantage comes from the CORE alone. They are overinvesting in cognition and underestimating the strategic importance of SENSE and DRIVER. But if intelligence becomes abundant, cheaper, and widely accessible, then the next durable source of advantage will come from the institutions and firms that make reality easiest for machines to see and safest for machines to act upon.

That is why a new company stack is emerging.

Not one giant category. Seven.

What is the Representation Economy?

The Representation Economy is an emerging economic model where value is created by how effectively systems represent real-world entities, interpret that representation, and act on it with legitimacy.

In simple terms:

The Representation Economy describes a shift where AI creates value not just through intelligence, but through how reality is represented, decisions are governed, and actions are executed.

Representation Infrastructure Companies

These companies will make the world machine-legible.

Every AI system acts on some representation of reality. A hospital AI acts on the representation of a patient. A logistics AI acts on the representation of a shipment. A bank AI acts on the representation of a borrower, an account, a transaction, and a risk event. If those representations are incomplete, stale, fragmented, or wrong, even the most advanced model will produce fragile outcomes.

Representation infrastructure companies will solve that problem.

They will build the identity systems, state models, entity graphs, linking layers, provenance layers, and real-time context services that make people, objects, events, assets, and environments machine-readable. Think of them as the firms that do for AI what cloud infrastructure did for software and what GPS did for mobility platforms: they create the conditions that make a new class of applications possible.

Consider a farmer applying for credit. Today, that farmer may be visible to a lender only as a static form. In the future, a representation infrastructure firm may combine weather signals, crop cycles, soil conditions, satellite imagery, local market prices, transaction history, and verified land relationships into a living representation of that farmer’s economic state. That does not merely improve a credit model. It creates the foundation for new insurance, lending, advisory, and resilience businesses.
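One way to picture a "living representation" is a record that keeps the latest value of each signal together with its source and observation time. A lender can then see what is known, where it came from, and how fresh it is. The sketch below is a minimal illustration under stated assumptions: the `Signal` and `EntityRepresentation` classes, the signal names and sources, and the 30-day freshness window are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Signal:
    """One observed fact about an entity, tagged with its provenance."""
    name: str
    value: object
    source: str            # where the fact came from
    observed_at: datetime  # when it was observed


@dataclass
class EntityRepresentation:
    """A 'living' representation: the latest signal for each name."""
    entity_id: str
    signals: dict = field(default_factory=dict)

    def update(self, signal: Signal) -> None:
        # Keep only the most recent observation per signal name.
        current = self.signals.get(signal.name)
        if current is None or signal.observed_at > current.observed_at:
            self.signals[signal.name] = signal

    def stale_signals(self, now: datetime, max_age: timedelta) -> list:
        # Names of signals too old to act on without a refresh.
        return sorted(name for name, s in self.signals.items()
                      if now - s.observed_at > max_age)


now = datetime(2025, 6, 1)
farmer = EntityRepresentation("farmer-001")
farmer.update(Signal("rainfall_mm_30d", 42.0, "weather-feed", now - timedelta(days=2)))
farmer.update(Signal("local_crop_price", 18.5, "market-feed", now - timedelta(days=45)))
# An older rainfall reading arrives late and is ignored.
farmer.update(Signal("rainfall_mm_30d", 39.0, "weather-feed", now - timedelta(days=9)))
print(farmer.stale_signals(now, timedelta(days=30)))  # ['local_crop_price']
print(farmer.signals["rainfall_mm_30d"].value)        # 42.0
```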

These firms will become essential because the biggest AI bottleneck is not always intelligence. It is poor legibility.

Delegation Infrastructure Companies

These companies will answer a question most AI systems still avoid:

Who authorized the machine to act, under what limits, and on whose behalf?

This may become one of the most important company categories of the decade.

As AI moves from answering questions to making recommendations, initiating transactions, and coordinating workflows, institutions need more than intelligence. They need governed delegation. A machine can act safely only when authority is explicit.

Delegation infrastructure companies will build the tools that define machine authority: permission layers, identity-bound delegation, execution thresholds, approval hierarchies, role-based action rights, time-limited autonomy, rollback boundaries, and escalation rules.

Imagine a procurement agent that can negotiate delivery slots but cannot sign contracts above a threshold. Or a healthcare triage system that can escalate cases but cannot finalize treatment. Or a treasury agent that can suggest hedges but cannot execute them without dual approval.
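These three examples can be expressed as data rather than prose. The sketch below shows one hypothetical way to do it. It assumes a `Grant` record that binds an agent to a principal, a set of permitted actions, a monetary threshold, an expiry time, and a required number of human approvals. The names, amounts, and the allow/escalate/deny outcomes are illustrative, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # route to a human approver
    DENY = "deny"


@dataclass(frozen=True)
class Grant:
    """Hypothetical delegation grant: on whose behalf an agent acts, within what limits."""
    principal: str
    allowed_actions: frozenset
    max_amount: float            # execution threshold per action
    expires_at: datetime         # time-limited autonomy
    approvals_required: int = 0  # e.g. 2 for dual approval


def authorize(grant: Grant, action: str, amount: float,
              approvals: int, now: datetime) -> Decision:
    # Outside the grant's scope or lifetime: the agent has no authority at all.
    if now >= grant.expires_at or action not in grant.allowed_actions:
        return Decision.DENY
    # In scope but above threshold or short of approvals: a human decides.
    if amount > grant.max_amount or approvals < grant.approvals_required:
        return Decision.ESCALATE
    return Decision.ALLOW


now = datetime(2025, 6, 1, 9, 0)
procurement = Grant("acme-procurement",
                    frozenset({"negotiate_delivery_slot", "sign_contract"}),
                    max_amount=50_000, expires_at=datetime(2025, 6, 30))
treasury = Grant("acme-treasury", frozenset({"execute_hedge"}),
                 max_amount=1_000_000, expires_at=datetime(2025, 12, 31),
                 approvals_required=2)

print(authorize(procurement, "negotiate_delivery_slot", 0, 0, now).value)  # allow
print(authorize(procurement, "sign_contract", 80_000, 0, now).value)       # escalate
print(authorize(treasury, "execute_hedge", 200_000, 1, now).value)         # escalate
```

The design separates "never" (deny) from "not without a human" (escalate). That separation is what makes authority explicit instead of implied.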

Today, many organizations still treat this as an internal governance issue. It is larger than that. It is an emerging market. Fortune coverage of agentic AI and enterprise redesign reflects this direction: firms are increasingly being forced to rethink how work is designed, where liabilities sit, and how trust, controls, and accountability are maintained as agents gain autonomy. (Fortune)

Delegation infrastructure firms will matter because cheap cognition without controlled authority is not scale. It is exposure.

Judgment Utilities

If intelligence becomes common, what becomes scarce?

Judgment.

A model can generate options. It can simulate outcomes. It can explain its reasoning. But institutions still need ways to determine whether a decision is contextually appropriate, ethically defensible, compliant, and worth acting on.

That creates room for judgment utilities.

These firms will not replace decision-makers. They will provide the validation, evaluation, and escalation layer around decision systems. They may test whether a recommendation fits policy, whether it conflicts with precedent, whether it creates downstream harm, whether uncertainty is too high, or whether a case deserves human review before execution.

Think about a global bank using AI to recommend small-business lending decisions. The model may be statistically strong. But a judgment utility may sit above it, checking for policy conflicts, unusual concentration risk, regulatory mismatch, or missing context. Or consider a hospital system where AI suggests patient prioritization. A judgment utility may assess not only predicted severity but fairness, uncertainty, and recourse implications.
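A judgment utility of this kind could start as a set of independent checks that return reasons rather than a verdict. Whether a case goes to human review is then decided from those reasons. The sketch below assumes a hypothetical `Recommendation` record and three illustrative checks: a policy amount cap, an uncertainty ceiling, and a sector-concentration limit. The thresholds are placeholders. A production system would presumably draw them from the institution's actual policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    """A model's small-business lending recommendation (illustrative fields)."""
    sector: str
    amount: float
    approve: bool
    uncertainty: float  # assumed to be on a 0..1 scale


def review(rec: Recommendation, book: dict, max_amount: float = 250_000,
           max_uncertainty: float = 0.2, max_sector_share: float = 0.3) -> list:
    """Return reasons for human review; an empty list means no objection."""
    reasons = []
    if rec.approve and rec.amount > max_amount:
        reasons.append("policy: amount above small-business limit")
    if rec.uncertainty > max_uncertainty:
        reasons.append("uncertainty above threshold")
    if rec.approve:
        # Concentration check: the sector's share of the book if this loan is made.
        total = sum(book.values()) + rec.amount
        share = (book.get(rec.sector, 0.0) + rec.amount) / total if total else 0.0
        if share > max_sector_share:
            reasons.append(f"concentration: {rec.sector} at {share:.0%} of book")
    return reasons


book = {"agriculture": 400_000, "retail": 600_000}
rec = Recommendation("agriculture", 100_000, approve=True, uncertainty=0.1)
print(review(rec, book))  # ['concentration: agriculture at 45% of book']
```

The model's recommendation here is statistically confident. The utility still surfaces a portfolio-level concern that the model was never asked about.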

This direction is consistent with broader governance discussions around explainability, lifecycle oversight, and responsible deployment, themes that appear across Stanford HAI’s AI Index and major governance frameworks such as NIST’s AI RMF. (Stanford HAI)

Judgment utilities will matter because in the AI economy, being intelligent will not be enough. Systems must also be defensible.

Recourse Platforms

Every mature economy has mechanisms for correction.

Banks reverse fraudulent transactions. Courts hear appeals. Insurers reopen disputes. Customer service teams fix wrong decisions. The AI economy will need its own recourse architecture.

Recourse platforms will emerge to help institutions challenge, reverse, explain, and remediate machine-mediated decisions. They will provide the path back when systems act on incomplete, outdated, or incorrect representations.

This category will become more important as AI systems move deeper into credit, healthcare, employment, insurance, logistics, education, public services, and enterprise operations. The more action becomes automated, the more institutions will need infrastructure for handling disputes, exceptions, reversals, appeals, and restored trust.

Imagine an AI-driven benefits platform that wrongly flags a family as ineligible because two records were incorrectly linked. The issue is not only that the decision was wrong. The larger question is whether the institution has a fast, fair, traceable way to correct it.
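A fast, fair, traceable correction depends on the decision recording which linked records it relied on. Below is a minimal sketch, assuming a hypothetical `DecisionRecord` and a toy eligibility rule. It removes the wrongly linked record, re-runs the same rule, and appends the change to an audit trail so the original outcome is not lost.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class DecisionRecord:
    """A machine decision, the linked records it relied on, and its corrections."""
    case_id: str
    linked_records: tuple
    outcome: str
    history: list = field(default_factory=list)


def reopen(record: DecisionRecord, wrong_link: str,
           decide: Callable[[tuple], str]) -> DecisionRecord:
    """Remove an incorrect record link, re-run the decision, and log the change."""
    corrected = tuple(r for r in record.linked_records if r != wrong_link)
    new_outcome = decide(corrected)
    record.history.append(f"unlinked {wrong_link}: {record.outcome} -> {new_outcome}")
    record.linked_records = corrected
    record.outcome = new_outcome
    return record


# Hypothetical rule: ineligible if any linked record shows income above a cap.
HIGH_INCOME_RECORDS = {"tax-9931"}


def eligibility(records: tuple) -> str:
    return "ineligible" if HIGH_INCOME_RECORDS & set(records) else "eligible"


case = DecisionRecord("family-17", ("census-204", "tax-9931"), "ineligible")
reopen(case, "tax-9931", eligibility)
print(case.outcome)  # eligible
print(case.history)  # ['unlinked tax-9931: ineligible -> eligible']
```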

In the AI economy, recourse will not be a side process. It will be part of the value architecture.

That is why recourse platforms deserve to be treated as a distinct category rather than a compliance afterthought. In high-stakes sectors, they will reduce institutional fragility and become a competitive differentiator.

Representation Clearinghouses

One of the least discussed problems in AI is that different systems often hold different versions of reality.

One platform thinks a shipment is delayed. Another says it is in transit. One lender sees a borrower as low risk. Another flags hidden volatility. One hospital classifies a patient state one way, while another uses a conflicting ontology.

As AI systems proliferate, conflict between representations will become a structural problem.

Representation clearinghouses will emerge to reconcile these competing versions of reality before action is taken. They will provide trusted mechanisms for cross-enterprise alignment, dispute resolution, normalization, verification, confidence scoring, and context translation.

This matters more than it sounds.

Modern economies already rely on clearing mechanisms where complexity and trust meet. Financial markets have clearinghouses. Supply chains rely on reconciliation systems and standards bodies. The AI economy will need something similar for reality alignment.

A representation clearinghouse may reconcile identity and state across insurers, hospitals, labs, pharmacies, and public systems. Or it may sit inside global trade, aligning data across exporters, customs, logistics networks, and financing providers.
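A clearinghouse's core operation is deciding whether competing claims agree strongly enough to act on. The sketch below uses a simple confidence-weighted vote with a dispute margin, which is only one possible technique. The `Claim` type, the source weights, and the 0.2 margin are assumptions for illustration. A real clearinghouse would also need verified provenance and ontologies that all participants agree on.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One system's assertion about shared reality."""
    source: str
    value: str         # e.g. a shipment status
    confidence: float  # weight assigned to the source (assumed, 0..1)


def reconcile(claims: list, min_margin: float = 0.2):
    """Confidence-weighted vote; returns (value, margin) or (None, margin) if disputed."""
    scores = defaultdict(float)
    for c in claims:
        scores[c.value] += c.confidence
    total = sum(scores.values())
    if total <= 0:
        return None, 0.0  # nothing to reconcile
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    best_value, best = ranked[0]
    runner_up = ranked[1][1] if len(ranked) > 1 else 0.0
    margin = (best - runner_up) / total
    return (best_value if margin >= min_margin else None), round(margin, 2)


claims = [
    Claim("carrier", "in_transit", 0.75),
    Claim("port", "delayed", 0.5),
    Claim("customs", "delayed", 0.5),
]
print(reconcile(claims))  # (None, 0.14): too close to call, hold as a dispute
claims.append(Claim("gps-tracker", "in_transit", 1.0))
print(reconcile(claims))  # ('in_transit', 0.27)
```

Returning "no answer" when the margin is thin is deliberate. The point of the mechanism is to stop action on unresolved disagreement, not to force a winner.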

These will not be mere data brokers. They will be institutions for reconciling what the machine world believes is true.

This category will grow because AI systems do not fail only when they are inaccurate. They also fail when they inherit unresolved disagreement about reality.

Machine-Customer Gateways

Much of the digital economy still assumes the customer is a human navigating apps, forms, and websites. That assumption will not hold for long.

Increasingly, customers will be represented by AI agents. These agents will search, compare, filter, negotiate, monitor, and sometimes transact on behalf of the human. When that happens, firms will need a new interface layer: not just human-to-company, but agent-to-company.

That is where machine-customer gateways come in.

These companies will help enterprises expose products, services, terms, trust signals, identity proofs, policy boundaries, and negotiation rules in ways that machine agents can understand and work with. They will become the AI-era equivalent of API gateways, search optimization layers, and commerce infrastructure, but for machine-mediated demand.

Consider travel. A future AI travel agent acting for a customer may weigh not only price but also baggage policy, visa rules, carbon preferences, child-safety needs, cancellation flexibility, loyalty economics, and airport transfer quality. Companies that remain visible only to human interfaces may become less discoverable in that market. Those that become machine-readable and agent-negotiable will gain advantage.

This is one reason structured trust signals, answer-engine visibility, and machine-readable product information are becoming strategically important. The next market may not be won only by who markets best to people, but by who becomes most legible to the agents representing them.
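Machine-readability here means exposing terms as structured fields an agent can filter on, rather than prose on a booking page. The sketch below invents a hypothetical `FareOffer` schema and a set of hard customer constraints: a price cap, a minimum number of checked bags, and refundability. It then ranks eligible offers by price, breaking ties by lower CO2. None of these field names correspond to a real airline or industry schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FareOffer:
    """Hypothetical machine-readable offer a seller exposes to agents."""
    fare_id: str
    price: float
    checked_bags: int
    refundable: bool
    co2_kg: float


@dataclass(frozen=True)
class Preferences:
    """Hard constraints an agent holds on behalf of its customer (illustrative)."""
    max_price: float
    min_bags: int
    needs_refundable: bool


def shortlist(offers: list, prefs: Preferences) -> list:
    """Keep offers meeting every constraint, cheapest first, ties broken by lower CO2."""
    eligible = [
        o for o in offers
        if o.price <= prefs.max_price
        and o.checked_bags >= prefs.min_bags
        and (o.refundable or not prefs.needs_refundable)
    ]
    return [o.fare_id for o in sorted(eligible, key=lambda o: (o.price, o.co2_kg))]


offers = [
    FareOffer("A", 320.0, 0, False, 150.0),
    FareOffer("B", 410.0, 1, True, 140.0),
    FareOffer("C", 390.0, 2, True, 160.0),
    FareOffer("D", 390.0, 1, True, 120.0),
]
prefs = Preferences(max_price=450.0, min_bags=1, needs_refundable=True)
print(shortlist(offers, prefs))  # ['D', 'C', 'B']
```

The cheapest fare, A, never reaches the customer because it fails a constraint the agent checks mechanically. A fare whose terms were published only as prose would not be evaluated at all.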

Institutional AI Operating Systems

Finally, the most powerful category may be the firms that unify the other six.

An institutional AI operating system will not simply host models or orchestrate workflows. It will combine representation, cognition, delegation, verification, execution, and recourse into a governed stack.

This is the full SENSE–CORE–DRIVER company.

Such firms will help enterprises move from scattered AI pilots to coherent machine-enabled institutions. They will not treat AI as an app or assistant. They will treat it as an operating environment for seeing, deciding, and acting.

This logic is becoming clearer in the broader management conversation. HBR’s argument that AI’s larger payoff may lie in coordination rather than simple automation, along with the World Economic Forum’s emphasis on enterprise transformation, points in the same direction: the next gains come when AI becomes part of the operating fabric of the institution rather than a detached tool. (Harvard Business Review)

A true institutional AI operating system would let a company answer questions such as:

- What does the machine believe is happening?

- What representation is that belief based on?

- What authority does it have to act?

- What human or policy boundaries constrain it?

- How can its action be checked, reversed, or challenged?

- How does the institution learn when reality changes?
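One way to operationalize these six questions is to require every machine action to carry a record with one field per question, and to treat an empty field as a reason not to act. The `ActionRecord` type and `missing_answers` helper below are hypothetical. They are a minimal sketch of that idea, not a description of any existing operating system or product.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ActionRecord:
    """One machine action, with a field answering each of the six questions."""
    belief: str          # what the machine believes is happening
    evidence: tuple      # representations the belief is based on
    authority: str       # delegation grant that permits acting
    constraints: tuple   # human or policy boundaries applied
    recourse: str        # how the action can be checked, reversed, or challenged
    feedback: str        # how outcomes flow back when reality changes


def missing_answers(record: ActionRecord) -> list:
    """Names of unanswered fields; an institution might refuse to act on these."""
    return [name for name, value in asdict(record).items() if not value]


record = ActionRecord(
    belief="shipment SH-42 will miss its delivery slot",
    evidence=("carrier-feed:SH-42", "port-status:rotterdam"),
    authority="grant:procurement-agent",
    constraints=("no contract changes above 50k",),
    recourse="",
    feedback="slot outcome logged for model review",
)
print(missing_answers(record))  # ['recourse']
```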

That is not just software.

It is institutional infrastructure.

Why this stack matters for boards

Boards do not need to memorize all seven categories. But they do need to understand the larger pattern.

The AI era will create value in three waves.

The first wave improves existing work.

The second wave redesigns workflows and decision systems.

The third wave creates new firms whose core product is not “AI” in the generic sense, but one of these structural functions: representation, delegation, judgment, recourse, clearing, machine-customer exchange, or institutional operating control.

That is the real significance of the Representation Economy. It shifts the conversation from “How do we use AI?” to “What new market structures become possible when intelligence is abundant but trusted representation remains scarce?”

Existing companies do not need to become all seven categories. But they do need to understand which one is approaching their sector first.

A bank may need delegation infrastructure and judgment utilities.

A logistics network may need representation infrastructure and clearinghouses.

A retailer may need machine-customer gateways.

A healthcare ecosystem may eventually need all seven in some form.

The winners of the next decade will not simply buy better models.

They will redesign themselves around legibility, authority, and trustworthy action.

Conclusion: the bigger idea behind the stack

Every technological era creates its own stack.

The industrial era created the manufacturing stack.

The internet era created the digital and platform stack.

The AI era will create the representation stack.

That is why I believe the phrase Representation Economy matters. It names a shift that many leaders can already sense but cannot yet clearly describe. AI is not only changing how firms think. It is changing what must be made visible, how decisions become legitimate, and which new institutions must exist for machine-mediated markets to work.

The next great firms of the AI era may not be remembered as the ones that built the smartest models.

They may be remembered as the ones that made reality legible, delegation governable, and trust economically operable.

That is the new company stack.

And we are only at the beginning.

Glossary

Representation Economy

An economic paradigm in which value increasingly depends on how well reality is represented, interpreted, and acted on by machines and institutions.

SENSE

The legibility layer that turns reality into machine-readable signals, entities, state, and evolution.

CORE

The cognition layer that interprets representations, optimizes among choices, and generates decisions.

DRIVER

The legitimacy layer that governs delegation, verification, execution, and recourse when systems move from advice to action.

Representation Infrastructure

The systems that make people, assets, events, and environments machine-legible through identity, state, linkage, provenance, and context.

Delegation Infrastructure

The tools and rules that define what a machine is authorized to do, under what limits, and on whose behalf.

Judgment Utilities

Systems or firms that provide policy, risk, fairness, context, and escalation checks around AI-generated recommendations and decisions.

Recourse Platforms

Infrastructure for explaining, reversing, challenging, and correcting machine-mediated decisions.

Representation Clearinghouses

Institutions or systems that reconcile conflicting representations of reality across organizations before action is taken.

Machine-Customer Gateways

The interface layer through which companies become discoverable, understandable, and negotiable to AI agents acting for customers.

Institutional AI Operating System

A governed stack that unifies representation, cognition, delegation, verification, execution, and recourse across an institution.

Institutional Legibility

The degree to which an organization can represent its critical entities, states, relationships, and changes clearly enough for machines to reason and act responsibly.

FAQ

What is the Representation Economy?

It is the idea that future AI value will depend not only on intelligence, but on how well reality is represented, decisions are governed, and actions are made trustworthy.

Why are new company categories emerging in AI?

Because as intelligence becomes more widely available, the scarcer and more valuable layers will be representation, delegation, judgment, recourse, and cross-system trust.

What is the biggest mistake companies are making today?

Many firms are overinvesting in AI cognition and underinvesting in legibility and legitimacy.

Why will representation infrastructure matter so much?

Because AI systems can only act well on the reality they can see. If reality is poorly represented, even powerful models will produce fragile outcomes.

Why should boards care?

Because the next decade of AI advantage may come less from model selection and more from choosing which structural layer their institution must own, buy, or partner around.

Are these seven categories predictions or certainties?

They are strategic categories: a way of naming the structural company types likely to emerge as AI moves from assistance to institutional action.

References and further reading


- Stanford HAI, 2025 AI Index Report. (Stanford HAI)

- World Economic Forum, AI in Action: Beyond Experimentation to Transform Industry. (World Economic Forum Reports)

- World Economic Forum, Organizational Transformation in the Age of AI. (World Economic Forum Reports)

- Harvard Business Review, AI’s Big Payoff Is Coordination, Not Automation. (Harvard Business Review)

- Fortune reporting on trust, governance, and agentic redesign in the AI workforce. (Fortune)

- Infosys Topaz Fabric: How AI Is Quietly Changing the Way Enterprise Services Are Delivered. (Emerging Technology Solutions)

- What Is Infosys Topaz Fabric? The Missing Layer for Scalable Enterprise AI. (Emerging Technology Solutions)

- Infosys Topaz Fabric: Enterprise AI Infrastructure for Scalable, Governed, and Cost-Aware AI Exec (Emerging Technology Solutions)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- The Representation Commons: Why Broad-Based AI Value Begins Before the Model – Raktim Singh

- The Representation Access Economy: Why AI Will Decide Who Gets Seen, Structured, and Trusted – Raktim Singh

- Representation Bankruptcy: Why AI Will Break Companies That Machines Cannot Trust – Raktim Singh

- The Representation Kill Zone: Why Companies Become Invisible Before They Realize They Are Losing – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fiduciaries: The Missing Institution the AI Economy Cannot Scale Without – Raktim Singh

- Representation Clearinghouses: The Missing Infrastructure the AI Economy Needs to Reconcile Reality Before It Acts – Raktim Singh

- Recourse Platforms: The Next AI Infrastructure Market for Correction, Appeal, and Recovery – Raktim Singh

- Representation Workflows: The Hidden Operating System That Will Decide the Winners of the AI Economy – Raktim Singh

- Representation Switching Costs: Why the AI Economy’s Deepest Lock-In Will Come From Who Defines Reality – Raktim Singh

- Representation Fragility and Exclusion: The Hidden Fault Line That Will Break the AI Economy – Raktim Singh

- Representation Drift & Labor: Why AI Systems Fail When Reality Moves Faster Than Machines – Raktim Singh

- Representation Monopolies: Why the AI Economy Will Be Controlled by Those Who Define Reality – Raktim Singh

- Representation Forensics: The Missing Layer of AI—Why the Future Will Be Decided by What Systems Thought Reality Was – Raktim Singh

- What Is the Representation Economy? (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- Why Intelligence Alone Cannot Run Enterprises: The Missing AI Execution Layer – Raktim Singh

- The Representation Utility Stack: Why AI’s Next Competitive Advantage Will Come from Interoperable Reality – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure, the foundation of the SENSE layer, forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.