Representation Economy Explained:

The AI era is often described as a race for better models, stronger reasoning, and more capable agents. That framing misses the deeper shift.

The Representation Economy is an economic system where value flows to what can be clearly represented, reliably understood, and responsibly acted upon by machines.

That is the core idea.

A second line makes the shift even clearer:

AI does not act on reality. It acts on representations of reality.

Once this becomes visible, many things that looked like model problems start to look different. Weak AI outcomes often begin with weak visibility. Poor decisions often begin with poor representation. Fragile automation often begins with action that outruns trust.

This is why the SENSE–CORE–DRIVER framework matters.

The Representation Economy operates through three layers: SENSE (making reality visible), CORE (making decisions), and DRIVER (making action trustworthy).

If SENSE is weak, intelligence reasons over fragments.

If CORE is weak, systems misjudge what they see.

If DRIVER is weak, action loses legitimacy.

This article answers 32 additional questions that expand the meaning of Representation Economy and explain why SENSE, CORE, and DRIVER are becoming essential to the design of intelligent institutions.

1) What is the simplest definition of the Representation Economy?

The simplest definition is this: the Representation Economy is a system in which value depends on how well reality is represented for machine decision-making.

In earlier eras, value often depended on physical assets, labor, capital, or software scale. In the AI era, another layer becomes decisive: whether reality can be made visible enough for systems to identify, interpret, and act on it.

2) Why is “representation” becoming more important than “data”?

Because data alone does not create understanding.

Data can be abundant and still remain fragmented, noisy, duplicated, stale, or disconnected from meaning. Representation is what turns raw traces into something usable. It connects events to entities, links signals to condition, and gives systems a more coherent picture of what is actually happening.

3) Why is the term “Representation Economy” useful?

Because it names something many leaders already feel but cannot clearly describe.

They can sense that:

- more data has not created enough clarity

- better models have not removed fragility

- trust keeps returning as a constraint

- some realities remain invisible inside systems

The term “Representation Economy” gives those patterns a common frame.

4) Is the Representation Economy just another term for the AI economy?

No. The AI economy focuses on intelligence. The Representation Economy focuses on the conditions that make intelligence useful, trustworthy, and economically consequential.

The AI economy asks how systems can reason better.

The Representation Economy asks what systems can actually see, understand, and govern well enough to act on.

5) What is the biggest mistake people make when thinking about AI?

The biggest mistake is assuming intelligence is the first problem.

In many real-world environments, the first problem is not reasoning. It is visibility. Before systems can optimize, they must know what they are looking at. Before they can act responsibly, they must have a faithful enough picture of reality.

6) Why do organizations with powerful AI still make poor decisions?

Because powerful models cannot compensate for weak representation.

A strong model applied to a weak picture does not create truth. It creates faster distortion. This is why many organizations appear technologically advanced but remain strategically fragile. They can compute well, but they still do not represent reality well enough.

7) What does “machine-readable reality” mean?

Machine-readable reality is reality translated into a form that systems can consistently identify, interpret, and act upon.

That does not mean perfect capture. It means sufficient clarity. A machine-readable representation should allow the system to know what happened, to whom it happened, in what condition, under what circumstances, and with what confidence.
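One way to picture those five questions is as a record with one field per question. The sketch below is only an illustration: the class name `Observation`, its field names, the sample values, and the 0.7 confidence threshold are all assumptions made for this example, not part of the framework.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Observation:
    """Hypothetical record: one field per question a representation should answer."""
    what_happened: str      # the event itself
    entity_id: str          # to whom it happened
    condition: str          # the condition the entity was in
    circumstances: str      # the context surrounding the event
    confidence: float       # how sure the system is, from 0.0 to 1.0
    observed_at: datetime   # when the observation was made

    def is_actionable(self, min_confidence: float = 0.7) -> bool:
        """Sufficient clarity, not perfect capture: all fields filled, confidence high enough."""
        fields_present = all(
            [self.what_happened, self.entity_id, self.condition, self.circumstances]
        )
        return fields_present and self.confidence >= min_confidence


# Illustrative values only.
late_payment = Observation(
    what_happened="payment_delayed",
    entity_id="supplier-042",
    condition="cash_flow_stressed",
    circumstances="third delay this quarter",
    confidence=0.85,
    observed_at=datetime(2025, 1, 15, tzinfo=timezone.utc),
)
print(late_payment.is_actionable())
```

An observation with an empty `entity_id` would fail the check however confident the system is, which mirrors the point that an event without a subject is not yet a usable representation.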

8) Why does representation affect economic value?

Because value only moves easily through systems when systems can recognize what they are dealing with.

If an entity is clearly represented, it becomes easier to evaluate, compare, price, trust, insure, finance, or coordinate. If it is weakly represented, it appears uncertain, risky, or incomplete. Representation therefore affects the flow of opportunity itself.

9) How does the Representation Economy change competition?

It changes competition from a contest over access to intelligence into a contest over quality of visibility.

Two companies may use the same model. The better-represented company will often outperform the other because it sees reality more clearly, updates condition more effectively, and acts with less friction.

10) Why is visibility becoming a form of power?

Because what systems see clearly, they can prioritize, support, and trust more easily.

In this sense, visibility is not social visibility. It is systemic visibility. It means being legible inside the environments where modern decisions are increasingly made. In the Representation Economy, visibility becomes a form of economic power.

SENSE: Making Reality Visible

11) What is SENSE in simple language?

SENSE is the layer that answers one basic question:

Did the system understand what it was looking at?

It is the layer where reality becomes machine-legible.

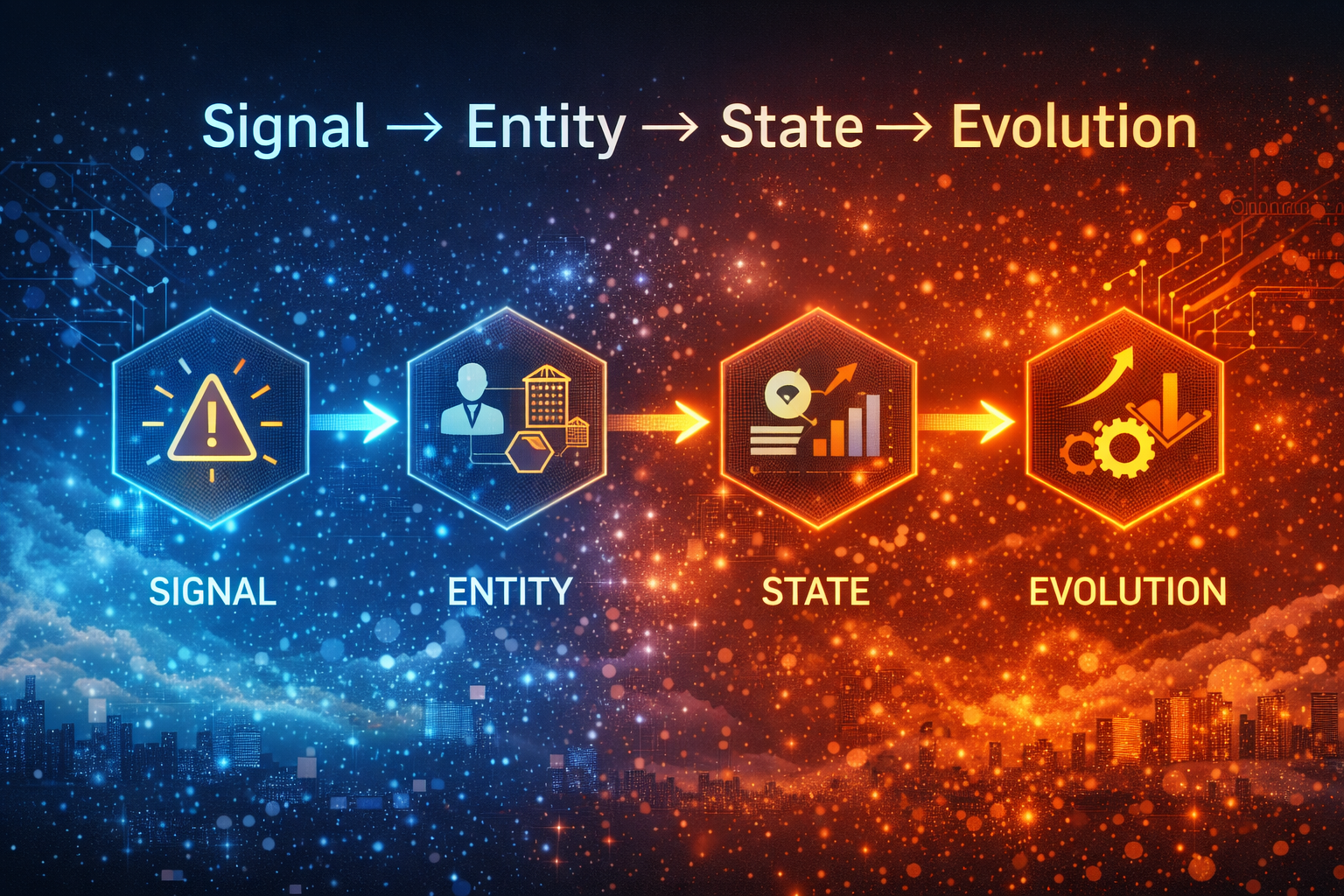

12) What does SENSE stand for?

SENSE stands for:

- Signal — detecting events, changes, and traces from the world

- ENtity — attaching those signals to something persistent

- State — modeling the current condition of that entity

- Evolution — updating that condition over time as new signals arrive

Together, these elements determine whether a system is truly seeing reality or only collecting fragments.
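The four elements can be sketched as a small data flow: signals arrive, each is attached to an entity, and that entity's state is updated as new signals come in. This is a minimal sketch under stated assumptions. The names `Signal`, `EntityState`, and `build_states` are hypothetical, and a running average stands in for whatever state model a real system would use.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    entity_id: str   # ENtity: the persistent thing this signal belongs to
    kind: str        # Signal: what was detected
    value: float     # magnitude of the reading


@dataclass
class EntityState:
    entity_id: str
    signal_count: int = 0
    average: dict = field(default_factory=dict)  # State: running average per signal kind
    counts: dict = field(default_factory=dict)   # number of signals seen per kind

    def update(self, signal: Signal) -> None:
        """Evolution: fold one new signal into the current state."""
        n = self.counts.get(signal.kind, 0) + 1
        previous = self.average.get(signal.kind, 0.0)
        self.average[signal.kind] = previous + (signal.value - previous) / n
        self.counts[signal.kind] = n
        self.signal_count += 1


def build_states(signals):
    """Group signals by entity and evolve one state object per entity."""
    states = {}
    for s in signals:
        state = states.setdefault(s.entity_id, EntityState(s.entity_id))
        state.update(s)
    return states


signals = [
    Signal("supplier-a", "delay_days", 2.0),
    Signal("supplier-a", "delay_days", 4.0),
    Signal("supplier-b", "delay_days", 10.0),
]
states = build_states(signals)
print(states["supplier-a"].average)  # average delay for supplier-a across both signals
```

Without the `entity_id` grouping, the three readings would be an undifferentiated list of delays. With it, each supplier has a condition that can be tracked over time.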

13) Why are signals not enough?

Because signals are only traces, not understanding.

A payment delay, sensor reading, missed shipment, abnormal test result, or unusual click pattern may all matter. But in isolation, each signal is only a fragment. It becomes meaningful only when attached to identity, connected to other signals, and interpreted over time.

14) Why is entity so important inside SENSE?

Because signals only accumulate meaning when they belong to something persistent.

Without entity, a system sees events but not continuity. It detects motion without knowing whose motion it is. Entity is what allows the system to move from scattered observations to a recognizable subject of interpretation.

15) Why is state more important than events?

Because events tell us what happened once, while state tells us what is happening now.

A single event may be noisy. State reveals condition. Is the system stable or fragile? Improving or deteriorating? Resilient or stressed? Decisions are rarely made about isolated events. They are made about conditions in motion.

16) Why does evolution matter in AI systems?

Because reality changes continuously.

A system that does not update its representation becomes structurally misaligned. It may look informed but still operate on the past. Evolution is what keeps SENSE from becoming stale.
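A simple way to make staleness explicit is to let confidence in a representation decay as it ages. The sketch below assumes an exponential half-life rule, which is one possible choice among many. The function names, the half-life parameter, and the 0.5 floor are hypothetical and not prescribed by the framework.

```python
from datetime import timedelta


def decayed_confidence(confidence: float, age: timedelta, half_life: timedelta) -> float:
    """Halve confidence for every `half_life` that passes without a new signal."""
    return confidence * 0.5 ** (age / half_life)


def needs_refresh(confidence: float, age: timedelta, half_life: timedelta,
                  floor: float = 0.5) -> bool:
    """Flag a representation whose decayed confidence has dropped below the floor."""
    return decayed_confidence(confidence, age, half_life) < floor


# A state last updated two days ago, with an assumed two-day half-life.
print(needs_refresh(0.8, timedelta(days=2), timedelta(days=2)))
```

The design choice here is that an old representation is not treated as wrong, only as less trustworthy, so downstream layers can decide whether to act or wait for fresh signals.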

17) What happens when SENSE is weak?

When SENSE is weak, the system becomes vulnerable to distortion.

It may:

- overreact to noise

- underreact to real change

- misclassify entities

- mistake events for condition

- create false confidence from partial visibility

Weak SENSE does not stay local. Its weakness spreads upward into CORE and DRIVER.

18) Why are most enterprises underinvesting in SENSE?

Because SENSE is foundational but not glamorous.

It does not demo like a model. It does not produce flashy outputs. It requires hard work around identity, context, continuity, updating, and uncertainty. But without that work, intelligence becomes unreliable.

CORE: Making Decisions

19) What is CORE in simple terms?

CORE is the reasoning layer.

It is where systems interpret context, compare possibilities, optimize decisions, and learn from outcomes. If SENSE is about seeing clearly, CORE is about deciding intelligently based on what has been seen.

20) What does CORE stand for?

CORE stands for:

- Comprehend context

- Optimize decisions

- Realize action

- Evolve through feedback

This is the cognition layer of intelligent systems.

21) Why is intelligence not enough?

Because intelligence can only work on the quality of reality it is given.

If the underlying representation is weak, optimization becomes dangerous. The system may become more efficient, more confident, and more scalable — while still being wrong in deep ways.

22) What is the main danger inside CORE?

The main danger is false optimization.

Systems may optimize the wrong proxy, reason over shallow context, or generate technically correct answers from structurally weak representations. This creates a dangerous illusion: the system looks smart, but it is smart about the wrong thing.

23) Why can AI be correct and still be wrong?

Because correctness at the output level does not guarantee correctness at the representational level.

A system can produce the right answer for the wrong reason. It can recommend an action that appears correct while relying on weak, missing, or unjust representation beneath the surface. That is why reasoning alone is not enough for trust.

24) Why does feedback matter inside CORE?

Because intelligence becomes useful only when it can learn from consequence.

Feedback helps systems detect when their model of the world is misaligned with actual outcomes. But feedback is only as good as the system’s ability to notice, interpret, and incorporate it. Weak feedback creates repeating mistakes that appear intelligent.

DRIVER: Making Action Trustworthy

25) What is DRIVER in simple language?

DRIVER is the layer that asks:

Can this system be trusted to act?

It is the governance and legitimacy layer of intelligent systems.

26) What does DRIVER stand for?

DRIVER stands for:

- Delegation — who authorized the system to act

- Representation — what model of reality the system used

- Identity — which entity was affected

- Verification — how the action or decision is checked

- Execution — how the action is carried out

- Recourse — what happens if the system is wrong

This is what makes action governable rather than merely possible.
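The six elements can be read as a checklist that an action must satisfy before it runs. The following is a minimal sketch, assuming each element is recorded as a simple string. The `ActionRecord` class, the `missing_elements` helper, and the sample values are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ActionRecord:
    delegation: Optional[str] = None      # who authorized the system to act
    representation: Optional[str] = None  # what model of reality the system used
    identity: Optional[str] = None        # which entity was affected
    verification: Optional[str] = None    # how the action or decision is checked
    execution: Optional[str] = None       # how the action is carried out
    recourse: Optional[str] = None        # what happens if the system is wrong


def missing_elements(record: ActionRecord) -> list:
    """Return the DRIVER elements that are still empty on this record."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Illustrative values only: a loan decision with no recourse path defined.
record = ActionRecord(
    delegation="credit-policy-v3",
    representation="applicant-profile-2025-01",
    identity="applicant-1187",
    verification="second-model-review",
    execution="auto-decline",
)
print(missing_elements(record))
```

A system could refuse to execute while the returned list is non-empty, which turns the acronym from a description into a gate.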

27) Why is DRIVER becoming so important now?

Because AI is moving from advice to consequence.

As systems begin to approve, deny, prioritize, price, recommend, escalate, or execute in the real world, the question is no longer just whether they can reason well. The deeper question is whether their authority, execution, and accountability can be trusted.

28) What is the difference between policy governance and architectural governance?

Policy governance says what should happen. Architectural governance proves what did happen.

This distinction is critical. A policy may state that certain checks must occur before action. But architectural governance is what binds identity, preserves state transitions, records proof, and creates auditable evidence that the required controls were actually applied at the moment of execution.
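One common technique for producing auditable evidence is a hash-chained log, in which each entry commits to the one before it so that later edits become detectable. The sketch below uses that technique as an assumption, not as the method this framework prescribes. The function names and event fields are hypothetical. Note that a hash chain only shows the record was not altered afterwards; it does not by itself show that the recorded check was performed correctly.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash used before the first entry


def _digest(event: dict, previous_hash: str) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"event": event, "previous_hash": previous_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event, linking it to the hash of the entry before it."""
    previous_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "event": event,
        "previous_hash": previous_hash,
        "hash": _digest(event, previous_hash),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    previous_hash = GENESIS_HASH
    for entry in log:
        if entry["previous_hash"] != previous_hash:
            return False
        if _digest(entry["event"], previous_hash) != entry["hash"]:
            return False
        previous_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"step": "identity_check", "entity": "applicant-1187", "result": "passed"})
append_entry(audit_log, {"step": "execute", "entity": "applicant-1187", "action": "approve"})
print(verify_chain(audit_log))
```

If someone later changes the first entry's `result`, `verify_chain` returns `False`, which is the difference between a policy that says a check must occur and a record that can show the logged check was not rewritten.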

29) Why do regulators care more about proof than policy?

Because written intent is not enough once systems begin to act.

Regulators increasingly want to know whether the system can prove what happened, what representation was used, who was affected, and whether the correct authority and controls were in place at the moment of action. That is a structural requirement, not just an operational one.

30) What is the deepest question behind DRIVER?

The deepest question is whether intelligence has earned legitimacy.

A system may be fast, accurate, and scalable. But if it cannot prove authority, verify action, and support recourse, it will remain fragile. DRIVER is what turns intelligence from a technical capability into an institutionally acceptable one.

Why Representation Economy, SENSE, CORE, and DRIVER Matter Together

31) Why should leaders care about all three layers together?

Because intelligent institutions fail when they overfocus on one layer and neglect the others.

SENSE without CORE produces visibility without judgment.

CORE without DRIVER produces intelligence without legitimacy.

DRIVER without SENSE produces governance over weak reality.

The real advantage comes from alignment across all three.

32) What is the most important strategic takeaway from this framework?

The most important takeaway is this:

The future will not belong only to those who build smarter systems. It will belong to those who represent reality more clearly and act on it more responsibly.

That is the logic of the Representation Economy.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- Representation Cold Start — why some industries cannot use AI until reality becomes machine-ready. (raktimsingh.com)

- The Representation Boundary: Why AI Systems Replace Reality—and Why It Will Define Who Wins the AI Economy – Raktim Singh

- Representation Collapse: Why AI Systems Fail Between Too Little Reality and Too Much – Raktim Singh

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE turns that legible reality into reasoned decisions

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

Other topics in the series include Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.