As AI systems move from prediction to action, the real source of competitive advantage will not be model power alone. It will be the institutional ability to assign bounded authority to machines without losing control, accountability, or trust.

Artificial intelligence is rapidly moving beyond prediction and recommendation. In many organizations, machines are beginning to influence pricing, fraud review, credit approval, clinical prioritization, logistics routing, customer-service escalation, procurement screening, and many other operational decisions.

Global governance frameworks are converging on the same conclusion: once AI systems affect consequential outcomes, institutions need human oversight, clear responsibilities, logging, monitoring, and lifecycle risk management. (Artificial Intelligence Act)

That shift changes the strategic question.

For the last few years, most AI conversations have revolved around model power: accuracy, speed, cost, multimodality, and reasoning ability.

But as AI systems move closer to action, the central problem becomes institutional, not purely technical. Machines may become more capable every quarter. Yet capability alone does not tell us when a machine should be allowed to decide, what kind of authority it should hold, how its actions should be bounded, or what must happen when something goes wrong.

This is why delegation infrastructure matters.

Delegation infrastructure is the set of institutional, technical, and governance mechanisms that allow organizations to assign bounded decision authority to machines without losing control, accountability, or trust. It is the layer that determines whether AI remains a useful assistant, becomes a safe operator, or turns into an opaque source of risk.

Put simply: if machine legitimacy asks whether an AI decision is acceptable, delegation infrastructure asks how that acceptance is operationally built.

That distinction will define the next phase of AI advantage.

What is Delegation Infrastructure?

Delegation infrastructure is the institutional, technical, and governance framework that allows organizations to safely assign bounded decision authority to artificial intelligence systems. It defines who can delegate, what decisions machines may take, how actions are monitored, and what recourse exists when machine decisions affect people or systems.

Why delegation is the real frontier of enterprise AI

Many organizations still think of AI adoption as a tooling problem. They ask which model to use, which assistant to deploy, and which workflow to automate. Those are valid questions, but they are no longer sufficient.

The harder question is this:

Under what conditions can an institution safely allow a machine to act on its behalf?

That question now sits at the heart of modern AI governance. The EU AI Act requires human oversight for high-risk AI systems and expects measures that prevent or minimize risks to health, safety, and fundamental rights. It also requires information for deployers, including oversight measures and technical means to help interpret outputs. (Artificial Intelligence Act)

NIST’s AI Risk Management Framework takes a similar view. It treats AI risk as a socio-technical issue and structures risk management across governance, context mapping, measurement, and continuous management rather than one-time testing. Its playbook emphasizes that AI governance is not a checklist exercise but an ongoing operational discipline. (NIST)

This is the deeper strategic reality: AI does not merely automate tasks. It redistributes decision rights.

And once decision rights are redistributed, institutions need infrastructure for delegation.

What delegation infrastructure actually means

Delegation infrastructure is not one dashboard, one guardrail, or one policy.

It is the operating layer that answers six basic questions:

Who is allowed to delegate?

What kind of decision can be delegated?

What information can the machine use?

What level of autonomy is permitted?

How is the action monitored or overridden?

What happens if the machine is wrong?

Without this layer, organizations often make the same mistake: they install model capability before they define institutional authority.

That leads to predictable failures.

A bank deploys an underwriting model but does not define which denials require human review.

A hospital adopts triage assistance but lacks escalation rules for edge cases.

A government agency uses risk scoring but cannot clearly explain accountability for harmful outcomes.

A manufacturer automates supply decisions but has no override logic during abnormal demand shocks.

In each case, the failure is not that the AI exists. The failure is that the delegation pathway was never properly designed.
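One way to make the six questions concrete is to record the answers explicitly before a system goes live. The sketch below is a minimal illustration, not a standard schema: the `DelegationGrant` class, the `Autonomy` levels, and all field names and example values are assumptions introduced here.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    RECOMMEND = 1        # machine suggests, a human decides
    ACT_WITH_REVIEW = 2  # machine acts, a human reviews afterwards
    ACT = 3              # machine acts within limits without routine review


@dataclass(frozen=True)
class DelegationGrant:
    """Hypothetical record holding one answer per question."""
    delegated_by: str          # who is allowed to delegate
    decision_type: str         # what kind of decision is delegated
    allowed_inputs: frozenset  # what information the machine may use
    autonomy: Autonomy         # what level of autonomy is permitted
    override_contact: str      # how the action is monitored or overridden
    recourse_process: str      # what happens if the machine is wrong

    def unanswered(self) -> list:
        """Return the names of fields that were left empty."""
        return [name for name, value in vars(self).items() if not value]


# Illustrative values only: an underwriting model with no override or recourse defined.
grant = DelegationGrant(
    delegated_by="head-of-credit",
    decision_type="consumer-loan-underwriting",
    allowed_inputs=frozenset({"income", "repayment_history"}),
    autonomy=Autonomy.RECOMMEND,
    override_contact="",
    recourse_process="",
)
print(grant.unanswered())  # ['override_contact', 'recourse_process']
```

A record like this does not make delegation safe on its own, but it makes the gaps visible: the bank example above would show up as an empty override or recourse field before deployment rather than after a contested denial.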

The hidden risk: institutions often delegate by accident

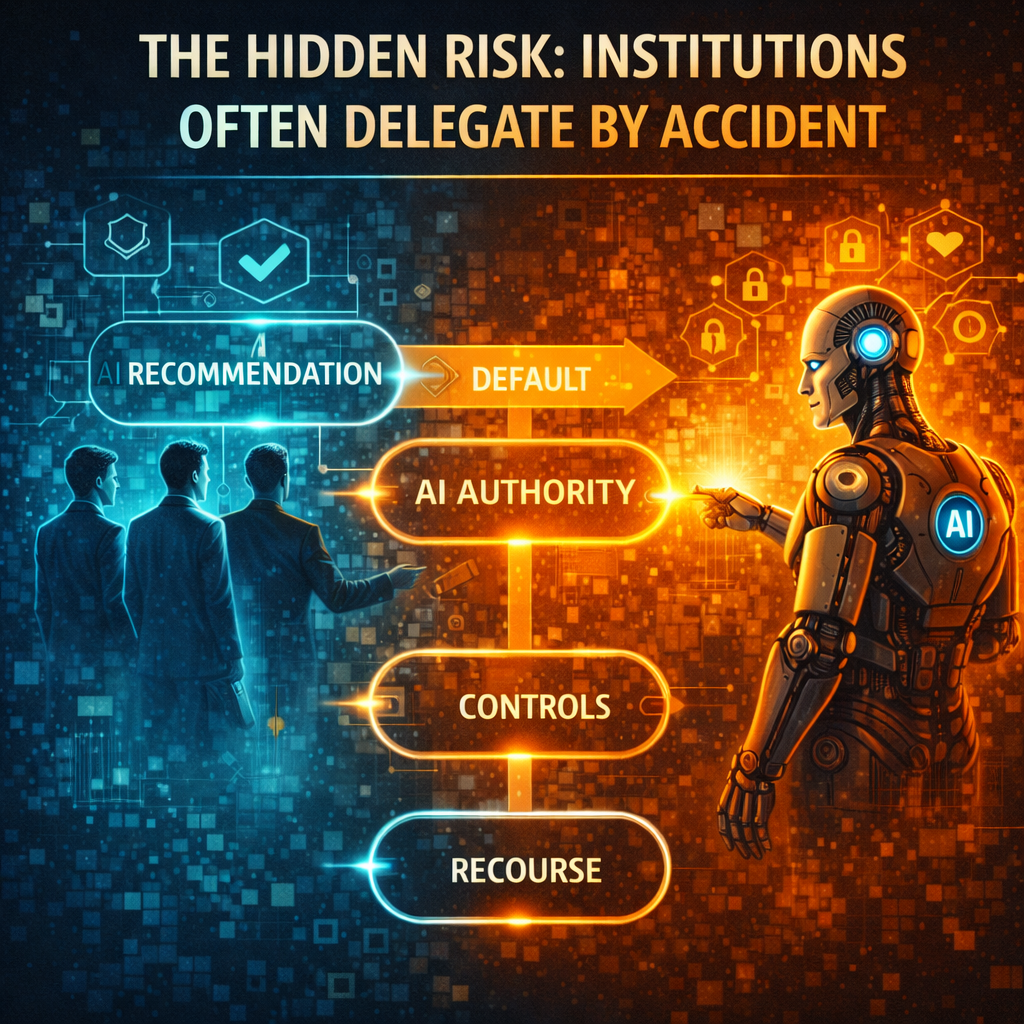

One of the most important shifts in AI is also one of the least visible: many organizations delegate more authority than they realize.

A model first appears as a recommendation engine. Staff are told it is “decision support only.” But over time, something subtle happens. People get used to the model’s output. Throughput pressures rise. Review becomes lighter. Exceptions decline. The recommendation starts behaving like a default. The default becomes operational authority.

This is how accidental delegation happens.

Not by executive decree.

Not by formal redesign.

But through repeated use, workflow friction, trust transfer, and organizational habit.

This is precisely why OECD guidance places such strong emphasis on accountability, transparency, traceability, and role-based responsibility. The issue is not only whether an AI system performs well, but whether actors understand the context, limitations, and responsibilities attached to its use. (OECD)

Delegation infrastructure exists to prevent this drift from becoming invisible.
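A rough way to surface this drift is to compare how reviewers treat model output now against an earlier baseline. The following is a sketch under stated assumptions: the `ReviewPeriod` fields, the `drift_signals` function, and the 0.5 ratio are hypothetical choices, and a falling override rate can also mean the model simply improved, so a flag here is a prompt for investigation rather than a verdict.

```python
from dataclasses import dataclass


@dataclass
class ReviewPeriod:
    label: str
    recommendations: int          # model outputs shown to staff
    overridden: int               # outputs a human changed or rejected
    median_review_seconds: float  # typical time spent per review


def drift_signals(baseline: ReviewPeriod, current: ReviewPeriod,
                  min_ratio: float = 0.5) -> list[str]:
    """Flag signs that human review is thinning out relative to a baseline."""
    if baseline.recommendations <= 0 or current.recommendations <= 0:
        raise ValueError("each period needs at least one recommendation")
    signals = []
    base_rate = baseline.overridden / baseline.recommendations
    curr_rate = current.overridden / current.recommendations
    # Exceptions decline: far fewer outputs are being challenged than before.
    if curr_rate < base_rate * min_ratio:
        signals.append("override rate fell sharply")
    # Review becomes lighter: far less time is spent per decision.
    if current.median_review_seconds < baseline.median_review_seconds * min_ratio:
        signals.append("review time fell sharply")
    return signals


launch = ReviewPeriod("launch quarter", 1000, 120, 90.0)
latest = ReviewPeriod("latest quarter", 1000, 30, 20.0)
print(drift_signals(launch, latest))
```

With these illustrative numbers both signals fire, which is the pattern described above: the recommendation has started to behave like a default.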

SENSE: before institutions delegate, reality must become legible

This is where the SENSE–CORE–DRIVER architecture becomes especially powerful.

SENSE is the layer where reality becomes machine-legible.

Signal means detecting relevant events, traces, and changes.

ENtity means attaching those signals to the right person, object, asset, location, or organization.

State representation means modeling the current condition of that entity.

Evolution means updating that state over time as the world changes.

This matters because institutions cannot safely delegate decisions based on reality they poorly represent.

If a borrower’s financial state is incomplete, the machine may deny credit for the wrong reasons.

If a patient’s condition is stale or partially captured, an AI triage system may recommend the wrong priority.

If supply-chain telemetry is fragmented, an automated procurement system may intensify disruption instead of reducing it.

So the first rule of delegation is simple:

Do not delegate judgment over what you cannot represent well.

This is why the governance challenge of delegation begins with visibility. Before a machine can be trusted to act, the institution must trust the legibility of the world the machine is acting upon.
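In practice, this rule can be approximated by a gate that checks completeness and freshness of an entity's state before any automated decision is allowed to run. The sketch below is illustrative: the `is_legible` function, the `updated_at` key, and the example fields are assumptions, not part of any specific system.

```python
from datetime import datetime, timedelta, timezone


def is_legible(record: dict, required_fields: set[str],
               max_age: timedelta, now: datetime) -> tuple[bool, list[str]]:
    """Check whether an entity's state is complete and fresh enough to act on."""
    problems = []
    # Completeness: every required field must be present and not None.
    missing = sorted(f for f in required_fields if record.get(f) is None)
    if missing:
        problems.append(f"missing fields: {', '.join(missing)}")
    # Freshness: the state must carry a timestamp newer than max_age.
    updated_at = record.get("updated_at")
    if updated_at is None or now - updated_at > max_age:
        problems.append("state is stale or has no timestamp")
    return (not problems, problems)


# Illustrative borrower record with an incomplete, nine-day-old state.
borrower = {
    "income": 52000,
    "repayment_history": None,
    "updated_at": datetime(2024, 1, 1, tzinfo=timezone.utc),
}
ok, problems = is_legible(
    borrower,
    required_fields={"income", "repayment_history"},
    max_age=timedelta(days=7),
    now=datetime(2024, 1, 10, tzinfo=timezone.utc),
)
print(ok, problems)
```

When the gate fails, the sensible default is to route the case to a human rather than let the model decide on a partial picture.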

CORE: machines can reason, but reasoning does not create authority

CORE is the cognition layer.

This is where systems Comprehend context, Optimize choices, Realize action logic, and Evolve through feedback.

This is where most AI investment is going today: better models, larger context windows, stronger retrieval, more capable copilots, and more autonomous agents.

But there is a strategic mistake many institutions make here: they confuse stronger reasoning with legitimate authority.

A system may reason beautifully and still be the wrong entity to decide.

Why? Because authority is not the same thing as competence.

A junior analyst may produce an excellent recommendation but still not have signing authority.

A medical resident may detect an important signal but still need an attending physician’s decision.

A fraud model may identify an anomaly but still not be the right actor to freeze an account without review.

The same logic applies to machines.

CORE can generate intelligence.

It cannot, by itself, determine the rightful scope of action.

That is why delegation infrastructure must sit above and around model capability. Otherwise, institutions start mistaking “can infer” for “may decide.”
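The difference between "can infer" and "may decide" can be shown in a few lines. In this sketch, the `AUTHORITY` table, the actor names, and the `may_decide` function are hypothetical; the point is only that the permission check never looks at how confident the actor is.

```python
# Hypothetical authority table: which actor may take which action.
AUTHORITY = {
    ("fraud-model", "flag_for_review"),
    ("fraud-analyst", "flag_for_review"),
    ("fraud-analyst", "freeze_account"),
}


def may_decide(actor: str, action: str, confidence: float) -> bool:
    """Authority comes from the grant, not from the quality of the inference."""
    # confidence is accepted but deliberately ignored: competence is not authority.
    return (actor, action) in AUTHORITY


print(may_decide("fraud-model", "freeze_account", confidence=0.99))    # False
print(may_decide("fraud-analyst", "freeze_account", confidence=0.60))  # True
```

A highly confident model is still refused an action it was never granted, while a less certain human with signing authority is allowed to proceed.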

DRIVER: where delegation becomes governable

DRIVER is where delegation becomes institutionally safe.

In this framework:

Delegation asks who authorized the system to act.

Representation asks what model of reality the system used.

Identity asks which entity is affected.

Verification asks how the decision is checked.

Execution asks how the action is carried out.

Recourse asks what happens if the system is wrong.

This is the heart of delegation infrastructure.

A strong DRIVER layer does not try to eliminate machine action. It structures it.

For example:

A loan model may be allowed to auto-approve low-risk cases, but denials above a threshold require human review.

A logistics agent may reroute inventory within preset cost boundaries, but it may not break contractual commitments without escalation.

A customer-support agent may issue refunds up to a capped amount, but it may not close regulated complaints without human signoff.

A hospital triage system may prioritize monitoring intensity, but it may not independently determine discharge.

This is what mature delegation looks like: not all-or-nothing autonomy, but bounded authority.

That boundedness is increasingly aligned with how regulators think about oversight. The EU AI Act’s human oversight requirements are explicitly aimed at preventing or minimizing residual risks in consequential settings. (Artificial Intelligence Act)
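The customer-support example above translates naturally into a routing rule. This is a minimal sketch, assuming a hypothetical `RefundPolicy` with a single cap; the names and the 100.0 limit are illustrative, and a real policy would carry more conditions.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    EXECUTE = "execute"    # the agent may act on its own
    ESCALATE = "escalate"  # a human must sign off


@dataclass(frozen=True)
class RefundPolicy:
    max_auto_refund: float  # hypothetical cap set by the institution


def route_refund(amount: float, regulated_complaint: bool,
                 policy: RefundPolicy) -> Outcome:
    """Decide whether the agent may issue a refund or must escalate."""
    # Regulated complaints always need human signoff, whatever the amount.
    if regulated_complaint:
        return Outcome.ESCALATE
    # Refunds above the cap are outside the agent's delegated authority.
    if amount > policy.max_auto_refund:
        return Outcome.ESCALATE
    return Outcome.EXECUTE


policy = RefundPolicy(max_auto_refund=100.0)
print(route_refund(40.0, False, policy))   # Outcome.EXECUTE
print(route_refund(250.0, False, policy))  # Outcome.ESCALATE
print(route_refund(40.0, True, policy))    # Outcome.ESCALATE
```

The boundary lives in the policy object rather than in the model, so the institution can tighten or widen authority without retraining anything.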

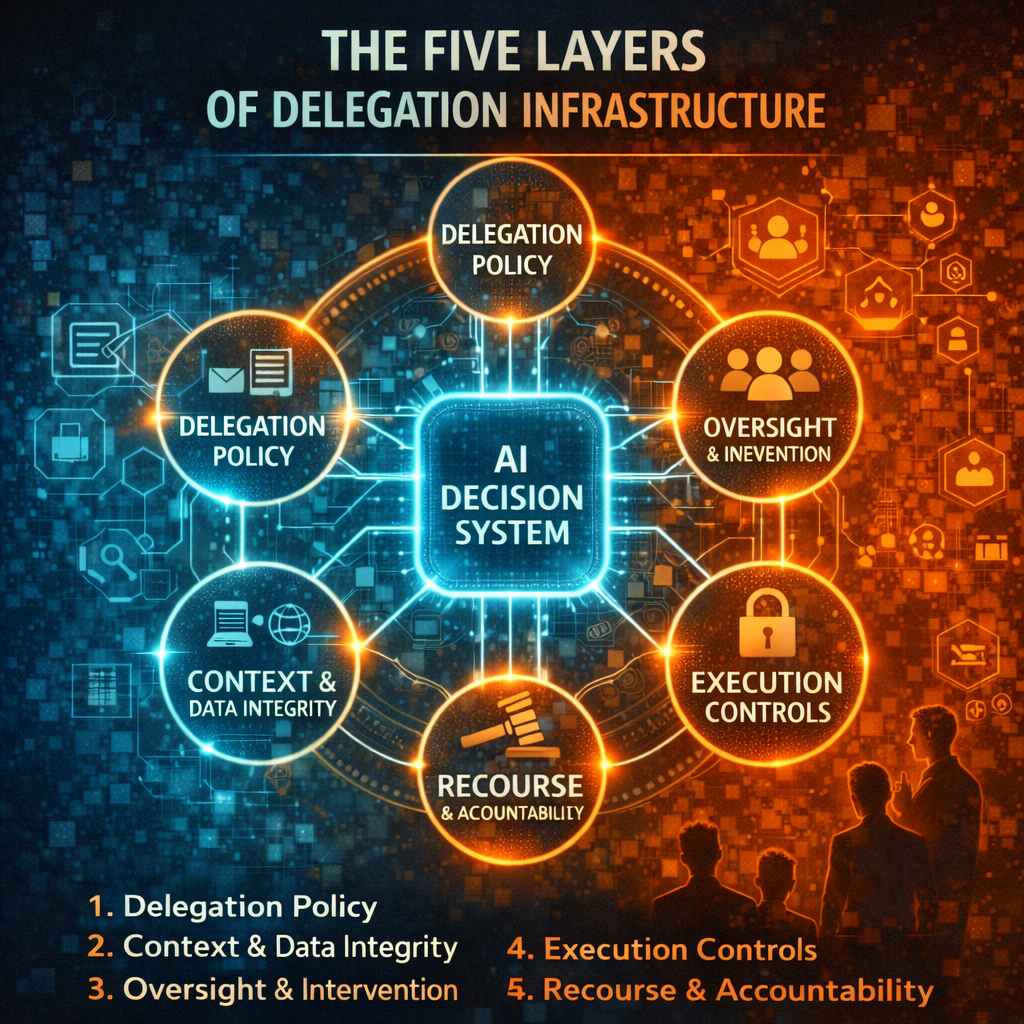

The five layers of delegation infrastructure

To make this practical, institutions should think of delegation infrastructure as five connected layers.

- Delegation policy

This defines which decisions may be delegated, to what degree, and under what conditions. It should distinguish among recommendation, approval, execution, and exception handling.

- Context and data integrity

This ensures the machine sees the right reality. Input quality, entity resolution, data freshness, and state completeness matter far more than most autonomy programs admit.

- Oversight and intervention

This defines who monitors the system, what indicators trigger review, and how intervention happens. Oversight is not symbolic; it must be actionable.

- Execution controls

This places operational limits around action: thresholds, caps, escalation paths, reversible actions, and kill switches. NIST’s framework and playbook both reinforce the idea that AI systems must be managed continuously as risks evolve in practice. (NIST)

- Recourse and accountability

This defines how contested outcomes are reviewed, corrected, documented, and learned from. Accountability cannot end with the phrase “the system recommended it.”

When these five layers exist, delegation becomes governable. When they do not, AI systems may still function, but they function without a stable institutional contract.
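Execution controls and recourse are the layers that most directly become code. The sketch below combines three of the mechanisms named above: a kill switch, reversible actions, and a log that recourse can draw on. The `ControlledExecutor` class and its method names are assumptions made for illustration, not a reference design.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ControlledExecutor:
    enabled: bool = True                           # kill switch
    audit_log: list = field(default_factory=list)  # record for later review
    _undo_stack: list = field(default_factory=list)

    def run(self, name: str, do: Callable[[], None],
            undo: Callable[[], None]) -> bool:
        """Run an action only if the switch is on, and remember how to undo it."""
        if not self.enabled:
            self.audit_log.append((name, "blocked"))
            return False
        do()
        self._undo_stack.append((name, undo))
        self.audit_log.append((name, "executed"))
        return True

    def reverse_last(self) -> bool:
        """Undo the most recent executed action, if there is one."""
        if not self._undo_stack:
            return False
        name, undo = self._undo_stack.pop()
        undo()
        self.audit_log.append((name, "reversed"))
        return True


stock = {"widgets": 10}
executor = ControlledExecutor()
executor.run("reorder",
             do=lambda: stock.update(widgets=stock["widgets"] + 5),
             undo=lambda: stock.update(widgets=stock["widgets"] - 5))
executor.reverse_last()   # stock is back to 10
executor.enabled = False  # flip the kill switch
executor.run("reorder", do=lambda: None, undo=lambda: None)
print(stock, executor.audit_log)
```

Requiring an `undo` alongside every `do` is a deliberate constraint in this sketch: an action that cannot be reversed would have to go through a different, more tightly reviewed path.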

Why this matters for boards and C-suites

Boards should care about delegation infrastructure because it is where AI strategy becomes enterprise risk.

Poor delegation design creates legal risk when unauthorized or weakly supervised decisions affect rights or access. It creates operational risk when humans over-trust or under-trust model outputs. It creates reputational risk when customers experience machine decisions as opaque or unfair. It creates strategic risk when AI pilots cannot scale because no one has defined authority boundaries. And it creates governance risk when management cannot explain what the machine was allowed to do.

The broader global policy direction reinforces this. OECD work on governing with AI emphasizes proportionate guardrails, transparency, oversight, and context-specific controls to maintain public trust. UN governance work similarly frames AI as a question of accountability, institutional capacity, and trusted deployment, not just raw innovation. (OECD)

This is why delegation infrastructure will become a board topic. Not because directors need to understand every model architecture, but because they need visibility into how authority is being redistributed inside the enterprise.

The institutions that win will delegate in layers, not leaps

One of the biggest myths in AI strategy is that autonomy must arrive all at once.

It does not.

The best institutions will scale delegation gradually.

First, machines observe.

Then they recommend.

Then they act within narrow limits.

Then they handle repeatable low-risk cases.

Then they operate under policy with escalating authority.
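The progression above can be sketched as an ordered ladder in which a system moves up one rung at a time. The `Stage` names mirror the five steps; the `next_stage` function and its rule that a failed review leaves the system where it is are assumptions added for illustration.

```python
from enum import IntEnum


class Stage(IntEnum):
    OBSERVE = 1
    RECOMMEND = 2
    ACT_WITHIN_LIMITS = 3
    HANDLE_LOW_RISK_CASES = 4
    OPERATE_UNDER_POLICY = 5


def next_stage(current: Stage, review_passed: bool) -> Stage:
    """Advance at most one stage, and only after a passed review."""
    # Assumption: a failed review keeps the system at its current stage.
    if not review_passed or current is Stage.OPERATE_UNDER_POLICY:
        return current
    return Stage(current + 1)


stage = Stage.OBSERVE
for passed in [True, True, False, True]:
    stage = next_stage(stage, passed)
print(stage.name)  # ACT_WITHIN_LIMITS after two passes, one failure, one pass? See below.
```

Tracing the loop: two passed reviews move the system from OBSERVE to ACT_WITHIN_LIMITS, the failed review holds it there, and the final pass moves it to HANDLE_LOW_RISK_CASES, which is what the last line prints. No single review can skip a rung.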

This layered approach is more resilient because it mirrors how institutions already manage human delegation. New employees do not begin with unlimited authority. They earn scope through context, process, review, and control.

Machines should be treated the same way.

That is the central strategic insight of this article:

Why Delegation Infrastructure Will Define the Next Phase of AI

As artificial intelligence moves from prediction to action, the institutions that succeed will not simply build more powerful models. They will build better governance around machine decision authority. Delegation infrastructure — built on visibility (SENSE), intelligence (CORE), and governance (DRIVER) — will become one of the defining capabilities of the AI-native enterprise.

Conclusion: the AI era will belong to institutions that know how to delegate

The future of AI will not be defined only by who builds the most intelligent systems.

It will be defined by who builds the most governable systems.

As organizations move from tools to agents, from assistance to action, and from prediction to execution, delegation infrastructure becomes one of the most important missing layers in the modern institution.

SENSE ensures the machine sees reality properly.

CORE ensures it can reason over that reality.

DRIVER ensures any delegated action remains bounded, accountable, and legitimate.

That is the architecture that matters.

The next winners in AI will not simply be the institutions that automate the most.

They will be the institutions that know how to delegate safely, visibly, and reversibly.

And in the age of machine decision-making, that may become one of the deepest sources of competitive advantage.

FAQ

What is delegation infrastructure in AI?

Delegation infrastructure in AI is the set of policies, controls, oversight mechanisms, data practices, and accountability structures that allow institutions to safely delegate bounded decision authority to machines.

Why is delegation infrastructure important?

It prevents accidental or uncontrolled AI authority. Without it, organizations risk weak oversight, unclear accountability, operational drift, and loss of trust.

How is delegation infrastructure different from AI governance?

AI governance is the broader system of rules, responsibilities, and controls for AI. Delegation infrastructure is the specific layer that determines when and how AI systems are allowed to act on behalf of an institution.

What does bounded AI autonomy mean?

Bounded AI autonomy means machines can act only within clearly defined limits, thresholds, and escalation rules set by the institution.

Why does SENSE–CORE–DRIVER matter for delegation?

SENSE ensures the system sees reality properly, CORE enables reasoning, and DRIVER ensures delegated action is authorized, verified, reversible, and accountable.

Why should boards care about delegation infrastructure?

Because it is where AI capability turns into legal, operational, reputational, and governance risk if not properly designed. (United Nations)

Glossary

Delegation infrastructure

The institutional and technical layer that determines how decision authority is safely assigned to machines.

Bounded authority

A model in which AI systems are allowed to act only within predefined limits, thresholds, and oversight conditions.

Human oversight

Measures that allow people to supervise, intervene in, or override AI systems where necessary. (Artificial Intelligence Act)

SENSE

The legibility layer of the framework: Signal, ENtity, State representation, Evolution.

CORE

The cognition layer of the framework: Comprehend, Optimize, Realize, Evolve.

DRIVER

The legitimacy and execution layer of the framework: Delegation, Representation, Identity, Verification, Execution, Recourse.

Recourse

The process by which a machine-influenced outcome can be reviewed, challenged, corrected, or escalated.

High-risk AI

AI systems used in contexts where errors or misuse can materially affect safety, rights, or important opportunities. (Artificial Intelligence Act)

References and further reading

For readers who want to go deeper, these sources are especially useful:

- The EU AI Act, especially the sections on high-risk systems, transparency for deployers, and human oversight. (Artificial Intelligence Act)

- NIST AI Risk Management Framework (AI RMF 1.0) and the NIST AI RMF Playbook, which frame AI risk as a socio-technical and continuously managed challenge. (NIST)

- OECD AI Principles and OECD work on accountability, traceability, and AI guardrails in public governance. (OECD)

- The United Nations High-Level Advisory Body on AI, especially Governing AI for Humanity, for a global institutional perspective on trusted AI deployment. (United Nations)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- SENSE explains how reality becomes visible and machine-legible.

- CORE explains how systems reason over that reality.

- DRIVER explains how institutions safely transform intelligence into action.

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.