The Action Boundary

Enterprise AI rarely fails in pilots. It fails at the exact moment it begins to matter.

When artificial intelligence shifts from offering advice to taking action—approving, triggering, executing, or changing state—it crosses a largely invisible line that most enterprises are not prepared for. On one side, AI feels safe, impressive, and controllable. On the other, the same intelligence suddenly becomes a source of operational risk, accountability gaps, and systemic fragility.

This transition point is what I call the Action Boundary—and it explains why AI that works perfectly in POCs often breaks the moment it enters real production environments.

The quiet moment AI turns into enterprise risk

AI doesn’t usually fail in enterprises because it’s not intelligent enough.

It fails when it meets reality.

That failure becomes visible at one specific point: the moment AI stops advising and starts acting.

I call that transition the Action Boundary.

On one side of the boundary, AI is mostly safe. It drafts, suggests, summarizes, and accelerates human work. On the other side, AI becomes operationally risky—because its output can now trigger real state changes inside complex enterprise systems.

And here’s the truth many enterprises learn late:

Most enterprises don’t fail at AI because of models or data — they fail because they try to deploy probabilistic intelligence without a runtime, control, and decision governance system.

This article explains what the Action Boundary is, why POCs hide it, why production exposes it, and what must exist to cross it safely—without slowing innovation.

What the “Action Boundary” actually means

The Action Boundary is not a philosophical idea. It is a practical, observable line.

- Advice mode: AI produces recommendations; a human makes the final commit.

- Action mode: AI output becomes an execution that changes enterprise state.

The boundary is crossed when AI can:

- send a message (not just draft it),

- approve a transaction (not just recommend),

- change access (not just flag risk),

- push a configuration (not just propose),

- trigger a workflow (not just summarize a case).

The day AI can do, not just suggest, it enters a different operational regime.
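The distinction can be made concrete in a few lines. The following is a minimal sketch, not a reference design: `Proposal`, `commit`, and the actor names are hypothetical, and a plain list stands in for a real outbound channel. It shows that the same output is harmless until something commits it, and that the only difference between the two modes is who makes that commit.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Proposal:
    """An AI output that has not yet changed any enterprise state."""
    action: str                         # hypothetical action name
    payload: dict
    committed_by: Optional[str] = None  # who made the final commit


def commit(proposal: Proposal, actor: str,
           execute: Callable[[Proposal], None]) -> Proposal:
    """Crossing the boundary: the proposal becomes an execution."""
    proposal.committed_by = actor
    execute(proposal)
    return proposal


sent = []  # stands in for a real outbound channel

# Advice mode: a human reviews the draft and makes the final commit.
draft = Proposal("send_reply", {"text": "Your refund is on its way."})
commit(draft, actor="human:agent_42", execute=sent.append)

# Action mode: the same call, but the committing actor is the AI itself.
auto = Proposal("send_reply", {"text": "Your refund is on its way."})
commit(auto, actor="ai:support_bot", execute=sent.append)

print([p.committed_by for p in sent])  # ['human:agent_42', 'ai:support_bot']
```

Recording the committing actor on every execution is one simple way to make the boundary visible in data rather than leaving it implicit.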

See also: AI Principles Overview – OECD.AI

Advice mode vs action mode (simple examples)

Example 1: Customer support

- Advice: AI drafts the reply; an agent edits and clicks “send.”

- Action: AI sends the reply automatically.

In action mode, a single mistake can:

- expose sensitive information,

- violate tone or policy,

- create commitments the enterprise cannot honor,

- become a compliance incident.

Example 2: Finance operations

- Advice: AI suggests “approve refund.”

- Action: AI approves and triggers payment.

Now the decision intersects with fraud risk, policy nuance, segmentation logic, and audit requirements.

Example 3: Security operations

- Advice: AI flags suspicious behavior.

- Action: AI disables an account or blocks access.

False positives become business disruption. False negatives become exposure.

Example 4: Engineering and IT operations

- Advice: AI recommends a configuration change.

- Action: AI deploys the change to production.

In action mode, the organization must answer: who approved, what is the rollback plan, what is the blast radius, and which systems are affected.

These examples feel obvious once stated. That is precisely the issue: enterprises cross the Action Boundary unintentionally because it is rarely named explicitly.

Why POCs look easy (and why that’s misleading)

POCs succeed for two structural reasons:

- They usually remain in advice mode, even when described as “autonomous.”

- They operate in controlled, simplified environments:

  - limited scope,

  - curated data,

  - streamlined workflows,

  - friendly edge cases,

  - minimal compliance pressure,

  - no production SLAs.

POCs operate under simplified assumptions.

Production reintroduces the full complexity of the enterprise.

The reality problem: why enterprises are messy, and fragile, by design

Enterprises are not messy because teams are careless.

They are messy because enterprises evolve under continuous pressure.

Over years—often decades—systems are stretched, patched, integrated, and repurposed to meet new requirements faster than they can be redesigned. Many legacy systems were never architected for today’s scale, integration density, or decision velocity. They survived by evolving incrementally.

That survival comes at a cost.

What exists in production today is often:

- systems that function because people understand their quirks,

- processes that work until unusual combinations appear,

- integrations that hold together but are extremely fragile.

This is the reality AI meets.

In practice, enterprise environments include:

- multiple systems with conflicting meanings for the same fields,

- incompatible data schemas and identifiers,

- processes that evolved rather than being intentionally designed,

- exceptions handled through tribal knowledge,

- policies that diverge across departments and time,

- integrations that partially fail, retry silently, or behave inconsistently,

- legacy platforms that meet current requirements but are brittle, undocumented, and sensitive to change.

Humans cope with this fragility daily.

They slow down.

They double-check.

They escalate informally.

This leads to the second canonical truth:

AI doesn’t fail when it reasons — it fails when reasoning meets messy, implicit, undocumented, and fragile enterprise reality.

In advice mode, humans absorb this fragility through judgment.

At the Action Boundary, that same fragility becomes executable risk.

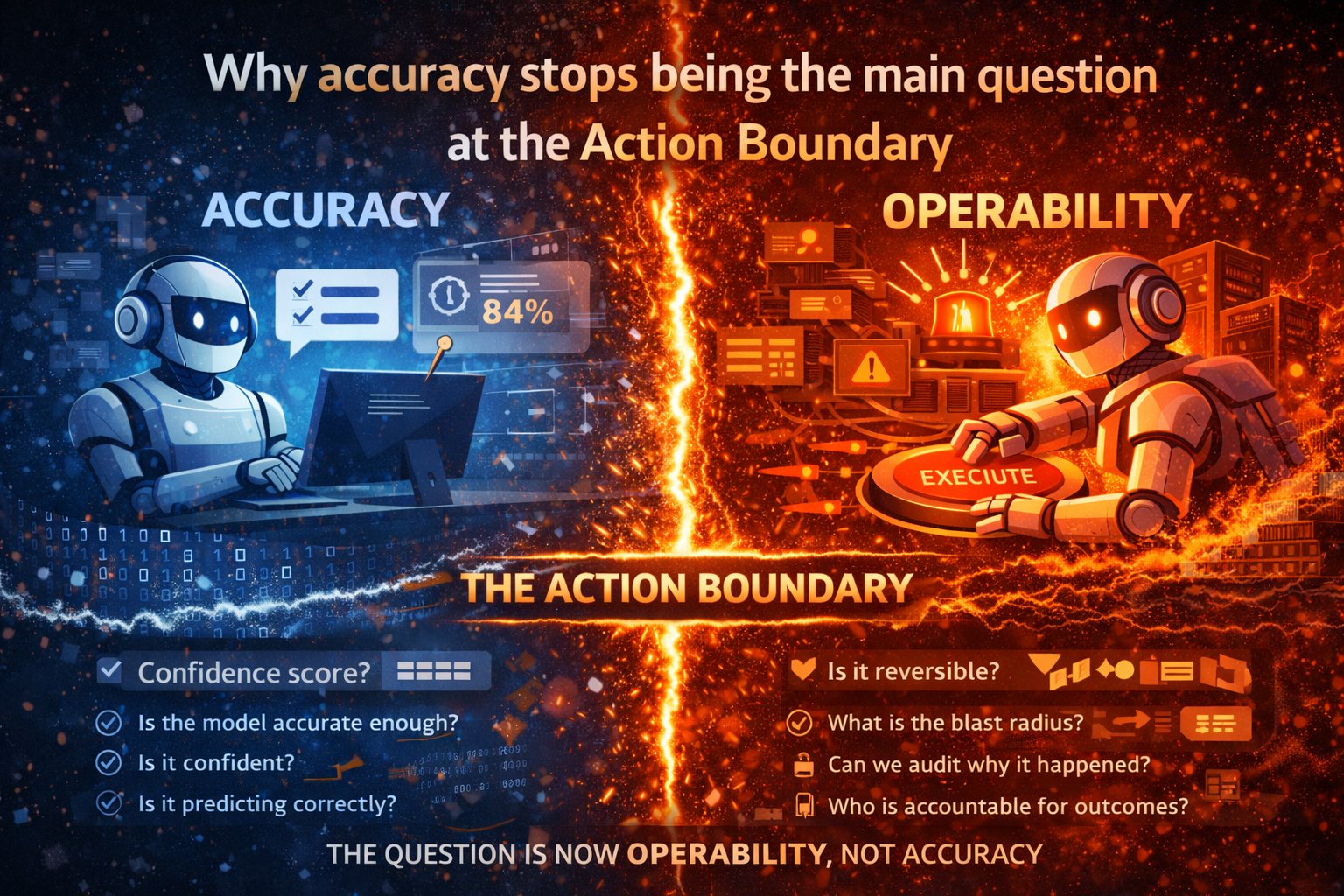

Why accuracy stops being the main question at the Action Boundary

Leaders often ask: “Is the model accurate enough?”

That question matters. But once AI crosses from advice to action, it becomes insufficient.

The real questions become:

- Is the action reversible?

- What is the blast radius if it is wrong?

- What is the cost of delay versus error?

- What evidence is required before execution?

- Who is accountable for outcomes?

- Can we audit why it happened?

- Can we stop it safely, immediately?

At the Action Boundary, the organization is no longer evaluating a model.

It is governing authority.
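One way to make these questions operational is to attach them to each action as explicit fields. The sketch below is illustrative only: `ActionProfile`, its field names, and the blast-radius unit and threshold are assumptions, not an established schema. It returns the governance questions that remain unanswered for a given action.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionProfile:
    name: str
    reversible: bool
    blast_radius: int            # assumed unit: records or accounts affected
    has_accountable_owner: bool
    is_auditable: bool
    can_be_stopped: bool


def open_questions(profile: ActionProfile, max_blast_radius: int = 100) -> list[str]:
    """Return the governance gaps that are still open for this action."""
    gaps = []
    if not profile.reversible:
        gaps.append("not reversible")
    if profile.blast_radius > max_blast_radius:
        gaps.append("blast radius too large")
    if not profile.has_accountable_owner:
        gaps.append("no accountable owner")
    if not profile.is_auditable:
        gaps.append("not auditable")
    if not profile.can_be_stopped:
        gaps.append("cannot be stopped safely")
    return gaps


refund = ActionProfile("issue_refund", reversible=False, blast_radius=1,
                       has_accountable_owner=True, is_auditable=True,
                       can_be_stopped=True)
print(open_questions(refund))  # ['not reversible']
```

Note that model accuracy does not appear anywhere in the profile; it is a separate input, which is the point of the section above.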

And this is where the deeper systemic issue appears again:

Most enterprises don’t fail at AI because of models or data — they fail because they try to deploy probabilistic intelligence without a runtime, control, and decision governance system.

Accuracy matters.

But once AI acts, operability matters more.

Action amplifies risk

The Action Boundary is unforgiving because it amplifies error.

Reasoning can be wrong in isolation. Action makes errors propagate, compound, and become enterprise incidents.

In advice mode, the wrong output is a draft.

In action mode:

- errors trigger workflows,

- workflows cascade across systems,

- cascades create customer and regulatory impact,

- impact becomes incident response.

This is why agentic AI introduces a different class of enterprise risk than copilots.

See also: AI Risk Management Framework | NIST

Why the “copilot vs agent” debate misses the point

Copilots succeed faster because they:

- remain in advice mode,

- keep humans as the final commit,

- limit the blast radius of mistakes.

Agents struggle because they:

- cross the Action Boundary,

- operate at speed,

- interact with fragile reality,

- produce outcomes that must be owned.

The real question is not whether enterprises should adopt agents.

The real question is:

Do we have the operating system required for action?

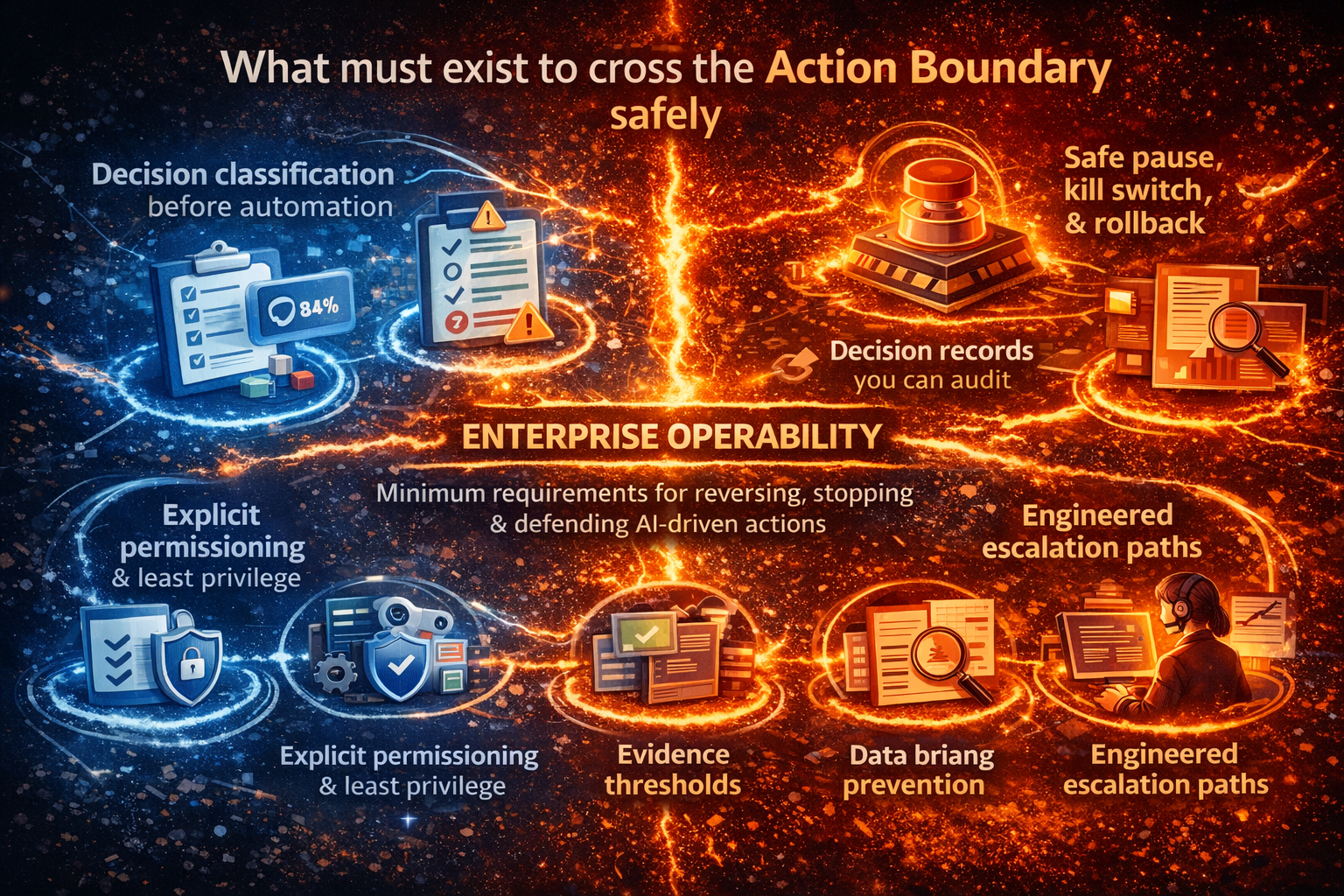

What must exist to cross the Action Boundary safely

Crossing from advice to action requires enterprise operability, not just intelligence.

The minimum requirements are clear.

See also: Minimum Viable Enterprise AI System: The Smallest Stack That Makes AI Safe in Production – Raktim Singh

1) Decision classification before automation

Not all decisions are equal.

Enterprises must define:

- which decision classes can be automated,

- which require approval,

- which must never be automated.

Without explicit classification, autonomy becomes accidental.

See also: The Shortest Path to Scalable Enterprise AI Autonomy Is Decision Clarity – Raktim Singh
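A classification like this can start as a small lookup table. The sketch below is a minimal illustration: the decision names and the `POLICY` table are hypothetical, and real entries would come from business and risk owners. The design choice worth noting is the default, which sends anything unclassified to approval.

```python
from enum import Enum


class DecisionClass(Enum):
    AUTOMATE = "automate"
    REQUIRE_APPROVAL = "require_approval"
    NEVER_AUTOMATE = "never_automate"


# Hypothetical policy table, for illustration only.
POLICY = {
    "send_order_status_update": DecisionClass.AUTOMATE,
    "approve_refund": DecisionClass.REQUIRE_APPROVAL,
    "terminate_customer_contract": DecisionClass.NEVER_AUTOMATE,
}


def classify(decision_type: str) -> DecisionClass:
    """Unclassified decisions fall back to approval, never to automation."""
    return POLICY.get(decision_type, DecisionClass.REQUIRE_APPROVAL)


print(classify("approve_refund").value)        # require_approval
print(classify("some_new_decision").value)     # require_approval
```

Defaulting to approval means a new decision type has to be classified deliberately before it can run unattended.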

2) Explicit permissioning and least-privilege tool access

Most harm comes from tool access, not text generation.

Permissions must be:

- least-privilege,

- time-bounded,

- separated for high-risk actions.
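These three properties can be expressed as a simple grant check. This is a sketch under stated assumptions: `Grant`, `is_allowed`, the tool names, and the second-approver rule are hypothetical, and a real deployment would rely on the organization's existing identity and access management system rather than an in-memory list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Grant:
    agent: str
    tool: str               # one narrowly scoped tool per grant (least privilege)
    expires_at: datetime    # time-bounded
    high_risk: bool = False # high-risk tools need a separate approver


def is_allowed(grants: list[Grant], agent: str, tool: str, now: datetime,
               second_approver: Optional[str] = None) -> bool:
    for g in grants:
        if g.agent == agent and g.tool == tool and now < g.expires_at:
            return (not g.high_risk) or (second_approver is not None)
    return False  # deny by default: no matching, unexpired grant


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
grants = [
    Grant("support_bot", "crm.read", now + timedelta(minutes=15)),
    Grant("support_bot", "payments.refund", now + timedelta(minutes=15), high_risk=True),
]

print(is_allowed(grants, "support_bot", "crm.read", now))          # True
print(is_allowed(grants, "support_bot", "payments.refund", now))   # False
print(is_allowed(grants, "support_bot", "crm.read", now + timedelta(hours=1)))  # False
```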

3) Evidence thresholds, not just confidence

Confidence scores are not evidence.

Evidence requires:

- authoritative sources,

- freshness checks,

- policy validation,

- provenance.

At the Action Boundary, evidence is an execution prerequisite.
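The four requirements above can be checked mechanically before an action runs. The following is an illustrative sketch: the source names, the 24-hour freshness window, and the `Evidence` fields are assumptions chosen for the example. Model confidence is deliberately not an input to the check.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

AUTHORITATIVE_SOURCES = {"erp", "policy_engine"}  # hypothetical source names
MAX_AGE = timedelta(hours=24)                     # assumed freshness window


@dataclass(frozen=True)
class Evidence:
    source: str
    retrieved_at: datetime
    policy_checked: bool
    provenance: str  # e.g. a record ID; empty string means unknown


def evidence_gaps(items: list[Evidence], now: datetime) -> list[str]:
    """Return the reasons execution should be blocked; empty list means proceed."""
    if not items:
        return ["no evidence supplied"]
    gaps = []
    for e in items:
        if e.source not in AUTHORITATIVE_SOURCES:
            gaps.append(f"{e.source}: not an authoritative source")
        if now - e.retrieved_at > MAX_AGE:
            gaps.append(f"{e.source}: stale")
        if not e.policy_checked:
            gaps.append(f"{e.source}: policy not validated")
        if not e.provenance:
            gaps.append(f"{e.source}: missing provenance")
    return gaps


now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
fresh = Evidence("erp", now - timedelta(hours=1), True, "order-1001")
stale = Evidence("wiki", now - timedelta(days=3), True, "")
print(evidence_gaps([fresh], now))  # []
print(evidence_gaps([stale], now))
```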

4) Designed escalation, not informal intervention

Human-in-the-loop must be engineered.

Escalation should trigger on:

- ambiguity,

- policy conflict,

- high-risk decisions,

- novelty,

- abnormal patterns,

- insufficient evidence.

And escalation must route to accountable owners.
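Engineered escalation means the triggers and the routing are both explicit. The sketch below is one possible shape, with hypothetical names throughout (`DecisionContext`, the `OWNERS` table, and the default on-call owner). In practice each flag would be set by an upstream check rather than by hand.

```python
from dataclasses import dataclass, field
from typing import Optional

OWNERS = {"approve_refund": "finance-ops-lead"}  # hypothetical routing table
DEFAULT_OWNER = "ai-governance-oncall"           # hypothetical fallback owner


@dataclass
class DecisionContext:
    decision_type: str
    ambiguous: bool = False
    policy_conflict: bool = False
    high_risk: bool = False
    novel: bool = False
    abnormal_pattern: bool = False
    evidence_gaps: list = field(default_factory=list)


def escalation(ctx: DecisionContext) -> Optional[dict]:
    """Return a routing decision if any trigger fires, otherwise None."""
    checks = {
        "ambiguity": ctx.ambiguous,
        "policy conflict": ctx.policy_conflict,
        "high-risk decision": ctx.high_risk,
        "novelty": ctx.novel,
        "abnormal pattern": ctx.abnormal_pattern,
        "insufficient evidence": bool(ctx.evidence_gaps),
    }
    reasons = [name for name, hit in checks.items() if hit]
    if not reasons:
        return None
    return {"route_to": OWNERS.get(ctx.decision_type, DEFAULT_OWNER),
            "reasons": reasons}


ctx = DecisionContext("approve_refund", high_risk=True, novel=True)
print(escalation(ctx))
```

Every escalation resolves to a named owner, so no case is escalated without someone accountable for it.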

5) Decision records that support audit and review

When AI acts, the enterprise must be able to answer:

- what was known,

- why the decision was made,

- which rules applied,

- who approved,

- what happened next.

This requires decision-level records, not just logs.
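The difference from an ordinary log line is that a decision record carries one field for each of the five questions above. A minimal sketch, assuming hypothetical field names and a JSON-lines audit store:

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class DecisionRecord:
    decision_id: str
    decided_at: str       # ISO 8601 timestamp
    inputs: dict          # what was known
    rationale: str        # why the decision was made
    rules_applied: list   # which rules applied
    approved_by: str      # who approved (a human or a policy identifier)
    outcome: str          # what happened next


def to_audit_line(record: DecisionRecord) -> str:
    """Serialize one record as a single JSON line for an append-only store."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    decision_id="dec-0001",
    decided_at="2025-01-01T12:00:00+00:00",
    inputs={"order_id": "order-1001", "amount": 40},
    rationale="Refund is within policy limit and the order was not delivered.",
    rules_applied=["refund-policy-v3"],
    approved_by="human:finance_lead",
    outcome="refund_issued",
)
print(to_audit_line(record))
```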

6) Safe pause, kill switch, and rollback

“Turning it off” is not enough.

Enterprises need:

- safe pause,

- immediate stop,

- rollback paths,

- containment mechanisms.

This is what makes autonomy defensible.
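The four mechanisms behave differently, which a small controller can illustrate. This is a sketch, not a production pattern: `ActionController` is hypothetical, it assumes every action is submitted together with an undo function, and it ignores concurrency and partial failures.

```python
from enum import Enum


class Mode(Enum):
    RUNNING = "running"
    PAUSED = "paused"
    STOPPED = "stopped"


class ActionController:
    def __init__(self):
        self.mode = Mode.RUNNING
        self.queue = []   # actions held while paused
        self._undo = []   # undo functions for executed actions

    def submit(self, do, undo) -> str:
        if self.mode is Mode.STOPPED:
            return "rejected"               # containment: nothing new runs
        if self.mode is Mode.PAUSED:
            self.queue.append((do, undo))   # safe pause: hold, do not drop
            return "queued"
        do()
        self._undo.append(undo)
        return "executed"

    def pause(self):
        if self.mode is Mode.RUNNING:
            self.mode = Mode.PAUSED

    def resume(self):
        if self.mode is not Mode.PAUSED:
            return
        self.mode = Mode.RUNNING
        pending, self.queue = self.queue, []
        for do, undo in pending:
            self.submit(do, undo)

    def stop(self):
        self.mode = Mode.STOPPED            # immediate stop
        self.queue.clear()

    def rollback(self):
        while self._undo:                   # undo in reverse order
            self._undo.pop()()


state = {"limit": 100}
ctl = ActionController()
ctl.submit(lambda: state.update(limit=200), lambda: state.update(limit=100))
ctl.stop()
ctl.rollback()
print(state)  # {'limit': 100}
```

Pause holds work for later, stop rejects it, and rollback reverses what already ran; treating them as one "off switch" loses those distinctions.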

A practical adoption path

Enterprises do not need to jump directly to full autonomy.

A safer path moves through four stages, in order:

- Advice in real workflows

- Bounded, reversible actions

- Approved medium-risk decisions

- Expanded autonomy with strong controls

This preserves momentum without risking trust.
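The stages above can be read as a gate on what the AI may execute at any given time. The sketch below is one possible interpretation, not a rule taken from the text: the risk labels and the exact conditions in `may_execute` are assumptions chosen for illustration.

```python
from enum import IntEnum


class Stage(IntEnum):
    ADVICE = 1
    BOUNDED_REVERSIBLE = 2
    APPROVED_MEDIUM_RISK = 3
    EXPANDED_AUTONOMY = 4


def may_execute(current: Stage, risk: str, reversible: bool,
                human_approved: bool = False) -> bool:
    """Assumed gating rules; risk is one of 'low', 'medium', 'high'."""
    if current is Stage.ADVICE:
        return False                      # humans make every commit
    if risk == "low" and reversible:
        return True                       # bounded, reversible actions
    if risk == "medium":
        return current >= Stage.APPROVED_MEDIUM_RISK and human_approved
    # Everything else waits for the final stage and still needs approval.
    return current is Stage.EXPANDED_AUTONOMY and human_approved


print(may_execute(Stage.ADVICE, "low", True))                    # False
print(may_execute(Stage.BOUNDED_REVERSIBLE, "low", True))        # True
print(may_execute(Stage.BOUNDED_REVERSIBLE, "medium", True, True))  # False
```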

Why this matters across industries and geographies

The Action Boundary appears everywhere:

- regulated industries,

- consumer platforms,

- internal operations,

- complex supply chains.

The pattern is consistent:

- POCs isolate,

- production integrates,

- advice is tolerated,

- action is governed.

Enterprises that treat AI as an operating system problem—runtime, control, decision governance—scale with fewer incidents and greater confidence.

The canonical takeaway

If you remember nothing else, remember these three points:

- Most enterprises don’t fail at AI because of models or data — they fail because they try to deploy probabilistic intelligence without a runtime, control, and decision governance system.

- AI doesn’t fail when it reasons — it fails when reasoning meets messy, implicit, undocumented, and fragile enterprise reality.

- Reasoning can be wrong in isolation. Action makes errors propagate, compound, and become enterprise incidents.

This is why the Action Boundary is where enterprise AI starts failing—and why it is also the boundary where enterprise AI must become a governed operating system, not a clever tool.

Final close

AI can advise and still remain a tool.

When AI acts, it becomes part of the enterprise.

That moment is the Action Boundary.

Cross it accidentally, and trust erodes.

Cross it deliberately, with runtime, control, and decision governance, and autonomy becomes a durable advantage.

FAQ

What is the Action Boundary in enterprise AI?

The Action Boundary is the point where AI systems move from providing recommendations to executing actions that change enterprise state, introducing new risks around accountability, reversibility, and control.

Why does enterprise AI fail after successful POCs?

Because POCs operate in simplified environments. Production AI must deal with messy data, fragile legacy systems, compliance constraints, and irreversible actions.

Why is model accuracy not enough in production AI?

Once AI takes action, the key risks shift from accuracy to operability—whether decisions can be audited, reversed, stopped, and defended.

What systems are required to safely cross the Action Boundary?

Enterprises need an AI runtime, control plane, decision governance, escalation mechanisms, audit trails, and rollback capabilities.

Is the Action Boundary relevant only for agentic AI?

No. Any AI system that triggers actions—approvals, notifications, access changes, or transactions—crosses the Action Boundary.

Glossary

Action Boundary

The transition point where an AI system moves from advising humans to executing actions that change enterprise state.

Advice Mode

An AI operating mode where outputs are recommendations reviewed and committed by humans.

Action Mode

An AI operating mode where outputs directly trigger workflows, transactions, or system changes.

Enterprise AI Runtime

The operational layer responsible for executing AI decisions safely within enterprise systems.

AI Control Plane

The governance layer that enforces policy, permissions, observability, escalation, and reversibility for AI actions.

Decision Governance

The framework defining which decisions AI can make, under what conditions, with what approvals and accountability.

Agentic AI

AI systems capable of planning and executing actions across tools and workflows with varying levels of autonomy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.