It is acting on the wrong representation of reality.

For decades, due diligence has meant reviewing financial statements, legal exposure, contracts, cyber posture, operational maturity, management strength, and market potential. That approach made sense in a software-led economy where companies were primarily buying assets, talent, customers, intellectual property, and distribution.

But AI changes the object of judgment.

In an AI-shaped economy, organizations increasingly depend on machines to interpret situations, classify entities, recommend actions, trigger workflows, negotiate across systems, and, in some cases, act autonomously within defined boundaries. That means the quality of a company, a vendor, or a transformation program can no longer be judged only through traditional diligence. It must also be judged through a deeper question:

Can this business be represented clearly enough for machines to understand it, trust it, and act on it safely?

That question still sits outside most boardrooms, deal rooms, procurement offices, and transformation programs.

It will not stay there for long.

What is Representation Due Diligence?

Representation Due Diligence is the process of evaluating whether an organization’s data, systems, and context accurately represent reality in a machine-readable form before deploying AI, making acquisitions, or entering vendor partnerships.

Across major policy and governance frameworks, the direction is becoming clearer. The OECD’s 2026 Due Diligence Guidance for Responsible AI pushes enterprises to identify, prevent, mitigate, and remedy adverse impacts linked to AI systems.

NIST’s AI Risk Management Framework similarly treats AI risk as something that must be governed across the lifecycle, not merely at the model layer. The EU AI Act places explicit emphasis on data governance, record-keeping, logging, and quality management for high-risk systems.

The World Economic Forum’s work on AI agents and governance also points toward more disciplined evaluation, oversight, and accountability in deployment. Taken together, these signals suggest that AI is forcing due diligence to expand beyond software capability and into the quality of machine-usable reality itself. (OECD)

That is why a new discipline is emerging:

Representation Due Diligence

Representation due diligence is the process of assessing whether an organization’s reality is legible, current, governable, and trustworthy enough for AI-based decision-making, automation, and delegation.

In simple language, it asks six foundational questions:

- Are the signals about the business accurate and timely?

- Are the important entities clearly identified?

- Is the state of those entities modeled reliably?

- Does that state change as reality changes?

- Are decisions explainable in context?

- Can actions be governed, challenged, reversed, and recovered when necessary?

This is where my SENSE–CORE–DRIVER framework becomes especially useful.

Most AI due diligence today still focuses too narrowly on the CORE: the model, the tool, the reasoning engine, the user interface, the productivity promise, the pilot outcome. But the real question is wider.

SENSE: Can the system see reality clearly?

This is the layer where reality becomes machine-legible.

A system cannot act wisely if it cannot see accurately. If signals are delayed, if entities are misidentified, if operational state is stale, if exceptions are missing, the machine may reason fluently over an incomplete world.

CORE: Can the system reason over that reality well enough?

This is the cognition layer.

It includes analysis, prediction, classification, inference, recommendation, prioritization, and decision support. It matters enormously. But it is only as strong as the reality it is operating on.

DRIVER: Can the system act with authority, control, traceability, and recourse?

This is the legitimacy layer.

A decision in production is never just a mathematical event. It sits inside human, institutional, and legal systems. Someone must have delegated authority. Someone must be accountable. There must be traceability. There must be override. There must be recourse if the system was wrong.

That is why the future of due diligence will not begin with the question, “How good is the model?”

It will begin with a more difficult and more valuable question:

“What version of reality is this system actually operating on?”

That may sound abstract. It becomes very concrete the moment money, compliance, operations, or customer harm enter the picture.

Why traditional due diligence is becoming insufficient

A traditional due diligence process can tell you whether a target has good revenue growth, attractive margins, a promising customer base, and scalable software. It can tell you whether a vendor has certifications, reference clients, and product-market fit. It can tell you whether a transformation initiative has executive sponsorship, budget, milestones, and technology partners.

But it often misses something much more important in the AI era:

whether the organization has a coherent machine-readable view of itself.

That gap is becoming more consequential because AI magnifies representation quality.

If the representation is strong, AI compounds value.

If the representation is weak, AI compounds confusion.

This is one reason organizations continue to struggle when moving from promising AI pilots to durable enterprise outcomes. Bain has argued that many AI efforts stall because of poor data quality, unclear ownership, and inconsistent governance. IBM similarly emphasizes that scalable enterprise AI depends not only on model governance, but equally on strong data governance and disciplined operating practices. (Bain)

That is the real shift.

In the software era, the central question was often: Can this process be digitized?

In the AI era, the more important question becomes: Can this reality be represented well enough for machines to participate in it responsibly?

A simple acquisition example

Imagine a large bank acquiring a fast-growing fintech.

On paper, the target looks excellent. Revenue is rising. Customer acquisition costs are healthy. The interface is modern. AI is already embedded across support, underwriting, fraud review, and marketing.

Traditional diligence may conclude that the target is attractive.

Representation due diligence asks a different set of questions.

Are customer identities consistent across systems, or stitched together through brittle workarounds?

Are fraud indicators linked to stable entities, or floating across disconnected logs?

Is credit risk based on recent, validated state, or on stale behavioral patterns?

Can the acquirer trace which models influenced which decisions?

If a regulator asks for an explanation, can the firm reconstruct not just the output, but the represented reality that produced it?

If the answers are weak, the acquirer may not be buying a high-quality AI business. It may be buying representation debt.

Representation debt is dangerous because it is usually invisible at deal time. It surfaces later, during integration, audit, customer disputes, exception handling, and regulatory scrutiny. The acquirer thought it was buying intelligence. In reality, it bought ambiguity.

That matters because AI is already moving into M&A workflows. McKinsey notes that generative AI is being used across target identification, diligence, and integration planning, and it has reported that organizations using gen AI in M&A have seen shorter deal cycles.

That makes one thing even more important: if AI is accelerating deal work, weak representations can also accelerate mistaken confidence. (McKinsey & Company)

A simple vendor example

Now consider a manufacturer selecting an AI-enabled predictive maintenance vendor.

The vendor promises lower downtime, earlier warnings, fewer manual inspections, and better failure prediction.

Most procurement teams will ask sensible questions:

Is the model accurate?

Does it integrate with our systems?

What is the commercial model?

What certifications does the vendor hold?

All of those questions matter.

But representation due diligence asks better ones.

What exactly counts as a “machine” in this environment?

How are components, units, sites, and maintenance histories matched?

How often is the state of each asset refreshed?

What happens if sensor data is delayed, mislabeled, or missing?

Can the system distinguish a real anomaly from a change in operating context?

Who is accountable when the representation is wrong: the vendor, the plant, the integrator, or the data pipeline owner?

These are not edge-case questions. They are operational questions.

An AI vendor often fails not because the model is weak, but because the represented world around the model is messy.

A sensor is attached to the wrong asset.

A maintenance event was never recorded.

A component was replaced, but the system of record was not updated.

A site uses different naming conventions.

The same machine exists under three different identifiers.

The system then reasons correctly over an incorrect world.

That is not a model failure. It is a representation failure.
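The distinction can be made concrete with a small sketch. Everything here is hypothetical: the dates, the one-year inspection rule, and the function names are assumptions chosen for illustration. The point is only that the same correct rule gives a different answer when the system of record is wrong.

```python
from datetime import date


def days_in_service(installed_on: date, today: date) -> int:
    """Number of days the part has been running."""
    return (today - installed_on).days


def needs_inspection(installed_on: date, today: date, interval_days: int = 365) -> bool:
    """A simple, correct rule: inspect once a part reaches the service interval."""
    return days_in_service(installed_on, today) >= interval_days


today = date(2025, 6, 1)
recorded_install = date(2023, 1, 15)  # what the system of record says (assumed)
actual_install = date(2025, 3, 10)    # the part was replaced, but nobody logged it

alert_from_record = needs_inspection(recorded_install, today)
alert_from_reality = needs_inspection(actual_install, today)
print(alert_from_record, alert_from_reality)  # prints: True False
```

The rule never changes between the two calls. Only the represented install date does, and that alone is enough to raise an alert the real machine does not need.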

This is why third-party risk thinking is shifting as well. Deloitte’s work on AI and third-party risk management points to rising interest in using AI while managing new forms of risk across third-party ecosystems.

The pattern is clear: vendor diligence is moving closer to questions of data quality, lineage, governance, and operational trustworthiness. (Deloitte)

A simple transformation example

Now consider an insurer launching a major AI-led claims transformation.

The board approves the budget. Consultants are hired. A technology platform is selected. The roadmap is announced. Everyone speaks about efficiency, customer experience, automation, and cost takeout.

Six months later, the transformation slows down.

Why?

Because “claim” means different things in different business units.

Because customer identity is inconsistent across channels.

Because historical claims data is missing context.

Because exception rules live inside email chains and tribal memory.

Because adjusters often know when the data is wrong, but the system does not.

Because the organization digitized the process without making reality machine-legible.

This is where many AI programs quietly break.

The model may be fine. The budget may be real. The ambition may be sincere. But the underlying representation of the operating world is too fragmented to support reliable machine participation.

That is why the first question in an AI transformation should not be:

“Which model should we deploy?”

It should be:

“What reality are we asking the model to operate on?”

That first step is the reality audit.

What is a reality audit?

A reality audit is the practical engine of representation due diligence.

It is a structured review of whether an organization’s operational world is fit for machine understanding and machine action.

At a minimum, it examines four foundational dimensions.

Signal quality

Are the inputs reliable?

Do events arrive on time?

Are the logs complete?

Do systems capture the right signals?

Are important changes visible, or still buried in manual workarounds?

If signal quality is weak, the system does not see the world clearly.
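These questions can be turned into simple measurements. The sketch below is one possible approach, not a standard: the `Event` fields, the five-minute lateness budget, and the sample data are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Event:
    """A hypothetical signal record; field names are assumptions."""
    entity_id: Optional[str]
    occurred_at: datetime  # when the event happened in the real world
    received_at: datetime  # when the system first saw it


def signal_quality(events, max_lag=timedelta(minutes=5)):
    """Return the share of late events and the share not bound to any entity."""
    if not events:
        return {"late_share": 0.0, "unbound_share": 0.0}
    late = sum(1 for e in events if e.received_at - e.occurred_at > max_lag)
    unbound = sum(1 for e in events if not e.entity_id)
    return {"late_share": late / len(events), "unbound_share": unbound / len(events)}


t0 = datetime(2025, 1, 1, 9, 0)
sample = [
    Event("pump-7", t0, t0 + timedelta(seconds=30)),  # on time
    Event("pump-7", t0, t0 + timedelta(hours=2)),     # arrived two hours late
    Event(None, t0, t0 + timedelta(minutes=1)),       # not bound to any entity
    Event("pump-9", t0, t0 + timedelta(minutes=4)),   # on time
]
report = signal_quality(sample)
print(report)  # prints: {'late_share': 0.25, 'unbound_share': 0.25}
```

A real audit would choose the lateness budget per workflow, since a lag that is harmless for monthly reporting may be unacceptable for fraud review.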

Entity clarity

Does the organization know what is what, and who is who?

Can it distinguish one customer from another?

One supplier from another?

One shipment from another?

One facility from another?

One employee record from another?

If the identity layer is weak, everything above it becomes fragile.
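A first-pass check for weak identity is to look for one real-world thing living under several identifiers. The sketch below assumes, purely for illustration, that a serial number is the shared attribute and that differences in case, spaces, and dashes are noise. The record layout and IDs are hypothetical, and real entity resolution usually needs more than one matching key.

```python
from collections import defaultdict


def normalize(serial: str) -> str:
    """Collapse case, spaces, and dashes so trivially different spellings match."""
    return "".join(ch for ch in serial.upper() if ch.isalnum())


def find_duplicate_entities(records):
    """Group record IDs that share a normalized serial number."""
    groups = defaultdict(list)
    for rec in records:
        groups[normalize(rec["serial"])].append(rec["id"])
    # Keep only serials that appear under more than one identifier.
    return {serial: ids for serial, ids in groups.items() if len(ids) > 1}


assets = [
    {"id": "PLANT-A/0012", "serial": "kx-4471"},
    {"id": "ERP-88213", "serial": "KX 4471"},
    {"id": "CMMS-5", "serial": "KX4471"},
    {"id": "PLANT-B/0301", "serial": "ZT-9000"},
]
duplicates = find_duplicate_entities(assets)
print(duplicates)  # prints: {'KX4471': ['PLANT-A/0012', 'ERP-88213', 'CMMS-5']}
```

Here one machine exists under three identifiers, which is exactly the condition under which histories, sensors, and decisions end up attached to the wrong thing.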

State fidelity

Does the represented state match the real-world condition closely enough to support action?

Does the system know whether the contract is still valid?

Whether the shipment has been delayed?

Whether the diagnosis has changed?

Whether the exception has already been approved?

Whether the machine has been replaced?

Whether the customer has already been contacted?

If state is stale, AI becomes dangerously confident.
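One modest safeguard is a freshness guard: the system declines to automate when the state it holds has not been verified recently enough. This is a minimal sketch under stated assumptions. The `last_verified` field, the one-day tolerance, and the action names are hypothetical.

```python
from datetime import datetime, timedelta


def is_fresh(last_verified: datetime, now: datetime, max_age: timedelta) -> bool:
    """True when the represented state was confirmed recently enough to act on."""
    return now - last_verified <= max_age


def decide(state: dict, now: datetime, max_age: timedelta) -> str:
    """Refuse to automate on stale state; route the case to a person instead."""
    if not is_fresh(state["last_verified"], now, max_age):
        return "escalate_to_human"
    return "auto_renew" if state["contract_valid"] else "block"


now = datetime(2025, 6, 1, 12, 0)
fresh = {"contract_valid": True, "last_verified": now - timedelta(hours=3)}
stale = {"contract_valid": True, "last_verified": now - timedelta(days=40)}

fresh_outcome = decide(fresh, now, timedelta(days=1))
stale_outcome = decide(stale, now, timedelta(days=1))
print(fresh_outcome)  # prints: auto_renew
print(stale_outcome)  # prints: escalate_to_human
```

Both records claim the contract is valid. The guard treats the forty-day-old claim as unknown rather than true, which is the difference between confidence and stale confidence.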

Governed action

If a system makes or triggers a decision, can the organization explain, verify, limit, reverse, and challenge that action?

This is where DRIVER becomes essential.

The EU AI Act’s focus on record-keeping, logging, documentation, and quality management reflects exactly why this matters. High-risk AI systems are increasingly expected to support traceability, oversight, and disciplined governance rather than opaque automation. (AI Act Service Desk)
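In practice, this often comes down to what gets written at the moment of decision. The sketch below shows one possible shape for a decision record that keeps the represented state, the delegating authority, and a path to reversal. It is an illustration only: the field names and sample values are assumptions, not a schema drawn from the EU AI Act or any other framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    """Hypothetical audit record for one machine-made decision."""
    decision_id: str
    action: str
    inputs_snapshot: dict  # the represented state the system acted on
    model_version: str
    delegated_by: str      # who authorized the system to act
    made_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    reversed_by: Optional[str] = None
    reversal_reason: Optional[str] = None

    def reverse(self, reviewer: str, reason: str) -> None:
        """Record a human reversal; a decision can only be reversed once."""
        if self.reversed_by is not None:
            raise ValueError("decision already reversed")
        self.reversed_by = reviewer
        self.reversal_reason = reason

    @property
    def in_effect(self) -> bool:
        return self.reversed_by is None


record = DecisionRecord(
    decision_id="claim-1042",
    action="deny_claim",
    inputs_snapshot={"policy_status": "lapsed", "state_verified_at": "2025-05-30"},
    model_version="triage-v3",
    delegated_by="claims-ops-lead",
)
record.reverse(reviewer="adjuster-17", reason="policy was reinstated on 2025-05-28")
print(record.in_effect, record.reversed_by)  # prints: False adjuster-17
```

Because the snapshot is stored with the decision, a later reviewer can see not only what the system decided, but what it believed about the world when it decided.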

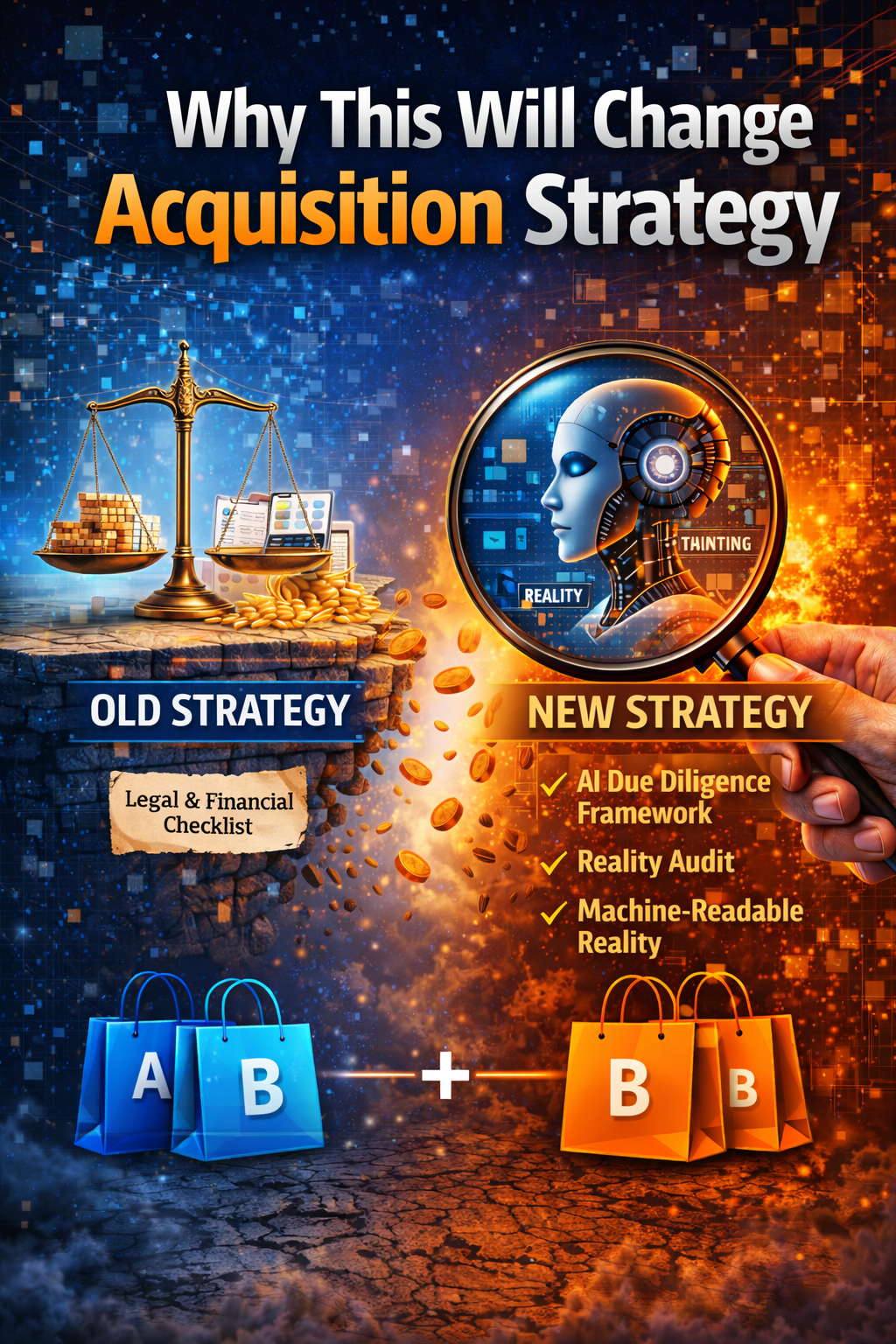

Why this will change acquisition strategy

In the AI era, acquisitions will increasingly carry hidden questions like these:

Can the target’s workflows be integrated into machine-mediated decision systems?

Can its data models be reconciled with ours?

Does it carry silent representation debt?

Will its AI systems survive regulatory scrutiny after integration?

Will synergy depend on cleaning up reality before automation can scale?

This means sophisticated acquirers will stop treating AI as a feature checklist and start treating representation quality as a core deal variable.

A company with lower short-term revenue but cleaner machine-readable operations may become more valuable than a larger company with chaotic internal reality.

That is a shift in valuation logic.

Why this will change vendor selection

The same logic applies to partnerships.

The best AI vendor will not simply be the one with the most advanced model. It may be the one whose system:

- binds data to entities cleanly

- models state explicitly

- logs decisions in context

- handles exceptions visibly

- supports human override

- enables recourse when something goes wrong

In other words, the strongest vendor may be the one that represents reality more responsibly.

Why this will change transformation programs

Transformation leaders will need to learn a harder lesson than most current AI playbooks admit:

You cannot automate what you cannot represent.

You cannot safely delegate decisions into a world your systems only partially understand.

You cannot scale AI on top of stale state, broken identity, weak lineage, and undocumented exceptions and call the result transformation.

That is not transformation.

That is acceleration of confusion.

The new winners in the AI economy

Representation Due Diligence will become a new category of strategic capability.

Boards will ask for it before AI-heavy acquisitions.

Private equity firms will incorporate it into thesis formation.

Procurement teams will require it before major vendor onboarding.

Transformation offices will use it before automating core workflows.

Consulting firms will build new practices around it.

Software and services providers will increasingly sell reality readiness, not just AI readiness.

The winners in the AI economy will not simply be those with the smartest models.

They will be the institutions that can answer, with confidence:

- what reality their systems can see

- how faithfully they represent it

- how well they reason over it

- and how safely they act on it

That is the deeper meaning of the shift from software diligence to representation diligence.

Conclusion: The board question that changes everything

In the industrial era, firms were judged by their assets.

In the digital era, they were judged by their software and data.

In the AI era, they will increasingly be judged by the quality of the reality they make available to machines.

That is why every serious AI-era acquisition, vendor partnership, and transformation program will eventually begin with a reality audit.

Because the biggest AI risk is not always that the machine is unintelligent.

It is that the machine is reasoning over a world your institution has represented badly.

And once that happens, failure begins long before the model begins.

FAQ

Why is traditional due diligence no longer enough in the AI era?

Traditional due diligence focuses on finance, legal issues, operations, and technology assets. In the AI era, firms must also assess whether reality is represented clearly enough for machine-driven analysis, automation, and delegation.

Why does representation due diligence matter in acquisitions?

It helps acquirers identify hidden representation debt, integration risk, stale state, identity fragmentation, and governance weaknesses that can erode deal value after closing.

Why does representation due diligence matter in vendor partnerships?

It helps buyers evaluate whether a vendor’s AI system is working on accurate, current, and properly governed representations of the business environment rather than making impressive claims on top of weak operational data.

Why does representation due diligence matter in transformation programs?

Many AI transformations fail not because the model is weak, but because the business reality underneath the model is fragmented, inconsistent, or poorly governed. Representation due diligence reveals that gap early.

How does SENSE–CORE–DRIVER relate to representation due diligence?

SENSE checks whether reality is visible and legible. CORE checks whether the system can reason well. DRIVER checks whether action is governed, traceable, and reversible. Together they provide a complete architecture for evaluating AI readiness.

What is representation debt?

Representation debt is the hidden risk that accumulates when an organization’s machine-readable view of reality is inaccurate, fragmented, stale, or poorly governed. It often surfaces later through integration failures, audit issues, customer disputes, or unsafe automation.

Q1. What is Representation Due Diligence?

Representation Due Diligence evaluates whether real-world entities, data, and processes are accurately captured in machine-readable systems before AI is applied.

Q2. Why is traditional due diligence insufficient for AI?

Traditional due diligence focuses on financial and legal metrics, but AI systems depend on data quality, context, and representation, areas that traditional reviews often ignore.

Q3. What is a reality audit in AI?

A reality audit checks whether an organization’s data truly reflects real-world conditions, entities, and changes over time.

Q4. Why do AI projects fail even with good models?

AI projects fail when the underlying data does not represent reality accurately, so even a strong model produces incorrect decisions.

Q5. What should companies evaluate before AI transformation?

Companies must audit:

- Data completeness

- Identity consistency

- Context linkage

- Real-time updates

- System interoperability

Glossary

Representation Economics

A view of the AI economy in which value creation increasingly depends on who can make reality legible, trustworthy, governable, and actionable for machines.

Representation Due Diligence

A new diligence discipline focused on whether an institution’s machine-readable reality is strong enough to support AI-led judgment and action.

Reality Audit

A structured assessment of signal quality, entity clarity, state fidelity, and governed action before scaling AI.

Representation Debt

Hidden institutional risk caused by poor machine-readable representations of customers, assets, workflows, contracts, events, or exceptions.

Machine-readable reality

The structured, updated, and governed representation of the world that AI systems use to reason and act.

SENSE

The layer where reality becomes machine-legible through signal capture, entity binding, state representation, and evolution over time.

CORE

The cognition layer where systems interpret, reason, prioritize, optimize, and decide.

DRIVER

The legitimacy layer that governs delegation, representation, identity, verification, execution, and recourse.

Entity clarity

The ability to distinguish one customer, supplier, asset, shipment, or record from another reliably across systems.

State fidelity

The degree to which the system’s represented state matches the real-world condition closely enough to support action.

Governed action

Action that is bounded, traceable, reviewable, and reversible within institutional authority.

Representation

The machine-readable version of real-world entities, relationships, and states.

Representation Risk

The risk that AI systems act on incorrect or incomplete representations of reality.

AI Due Diligence

Expanded due diligence that includes data, systems, and representation quality—not just financials.

References and further reading

The broader governance shift discussed in this article is supported by current global guidance from the OECD, NIST, the EU AI Act ecosystem, and the World Economic Forum, all of which increasingly emphasize lifecycle governance, documentation, logging, accountability, and context-aware oversight for AI systems. (OECD)

The enterprise operating challenge is also visible in current industry analysis from McKinsey, Bain, Deloitte, and IBM, which point toward the growing importance of data quality, governance, third-party risk discipline, and operational readiness as AI moves from pilots to production. (McKinsey & Company)

- Emerging Technology Solutions | Infosys Topaz Fabric: How AI Is Quietly Changing the Way Enterprise Services Are Delivered

- Emerging Technology Solutions | What Is Infosys Topaz Fabric? The Missing Layer for Scalable Enterprise AI

- Emerging Technology Solutions | Infosys Topaz Fabric: Enterprise AI Infrastructure for Scalable, Governed, and Cost-Aware AI Exec

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy, from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fragility and Exclusion: The Hidden Fault Line That Will Break the AI Economy – Raktim Singh

- Representation Drift & Labor: Why AI Systems Fail When Reality Moves Faster Than Machines – Raktim Singh

- Why Most AI Projects Fail Before Intelligence Even Begins

- What Is the Representation Economy? (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- Firms Won’t Be Defined by Employees. They Will Be Defined by Delegation – Raktim Singh

- The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy – Raktim Singh

- The Representation Attack Surface: Why AI’s Biggest Threat Is Reality Hacking, Not Model Hacking – Raktim Singh

- The Chief Representation Officer: Why Institutions Collapse When Machine-Readable Reality Falls Behind – Raktim Singh

- The Scarcity of Reality: Why the AI Economy Will Be Defined by the Lifecycle of High-Trust Representation – Raktim Singh

- Delegation Rating Agencies: Why the AI Economy Needs a New System to Rate Machine Authority – Raktim Singh

- The Machine-Readable Franchise: How Small Firms Will Win in the AI Trust Economy – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.