For the last decade, the dominant question in AI has been simple: Can the system make a good decision?

That question still matters. But it is no longer enough.

As AI systems move from recommendation to action—from scoring and ranking to approving, denying, flagging, suspending, escalating, pricing, filtering, routing, and executing—a second question becomes unavoidable:

What happens when the system is wrong?

Not wrong in the abstract. Wrong in the real, institutional, expensive sense.

A loan application is rejected because income data is stale.

A seller account is suspended because fraud signals were misread.

A patient claim is denied because a diagnosis code was mapped incorrectly.

A worker is screened out because the system inferred the wrong fit.

A shipment is flagged as risky because the digital state of the cargo no longer matches physical reality.

In each case, the failure is not just a bad output. It is a breakdown in the institution’s ability to correct reality inside the system.

That is why the AI economy will not stop at models, copilots, and agents. It will also create a new class of infrastructure: Recourse Platforms.

These platforms will not exist to make the first decision. They will exist to ensure that decisions can be challenged, corrected, escalated, reviewed, and repaired when machine-readable reality diverges from lived reality.

And that will become a major market.

Across jurisdictions, the direction is already visible. GDPR gives individuals rights to rectify inaccurate personal data, and Article 22 provides protections in certain solely automated decisions. The EU Digital Services Act gives users ways to contest moderation decisions through internal complaint systems, out-of-court dispute settlement, and judicial redress. Canada’s Directive on Automated Decision-Making requires federal departments to provide recourse. In US consumer finance, the CFPB has made clear that creditors using complex algorithms still must provide specific reasons for adverse action. In health insurance, consumers already have formal internal and external appeal rights. (GDPR)

The deeper point is bigger than compliance.

Recourse is becoming an economic layer.

Why this matters now

In the first wave of software, the product was the interface.

In the platform era, the product was coordination.

In the AI era, the product is increasingly decision power.

That changes everything.

When software merely stored records, mistakes were annoying.

When software began coordinating marketplaces, mistakes became costly.

When AI begins shaping access, opportunity, identity, pricing, risk, trust, and execution, mistakes become institutional.

A wrong recommendation can be ignored.

A wrong decision can be appealed.

But a wrong autonomous action inside a fast-moving system creates something else: the need for recovery architecture.

That is the opening for Recourse Platforms.

These platforms will emerge because enterprises, regulators, public institutions, digital platforms, and consumers will all discover the same uncomfortable truth:

AI adoption scales much faster than institutional correction capacity.

Most organizations are investing heavily in model quality, orchestration, copilots, agent frameworks, and automation pipelines. Far fewer are investing in the machinery of appeal, correction, evidence review, and downstream recovery.

Yet the more AI is used, the more disputes there will be.

Not because AI always fails.

But because AI operates on representations—and representations can be incomplete, stale, biased, conflicting, or stripped of context.

That is where the Representation Economy lens becomes decisive.

What are Recourse Platforms?

Recourse Platforms are AI infrastructure systems that enable individuals and organizations to challenge, correct, review, and recover from automated decisions. They provide structured mechanisms for appeal, evidence submission, decision reconstruction, and downstream recovery.

AI will not just create markets for intelligence. It will create markets for correction.

The real problem is not just model error. It is representational mismatch.

Many people think recourse is mainly about explainability.

It is not.

Explainability helps someone understand why a result happened.

Recourse helps them do something about it.

That difference is enormous.

A person denied a loan does not only want a model card. They want to know:

- What data was used?

- Which part was inaccurate?

- What can be corrected?

- Who can review the case?

- What evidence can be submitted?

- How long will the review take?

- What happens if the original decision caused downstream harm?

That is not merely an explanation problem. It is a workflow, governance, evidence, identity, and recovery problem.

Academic work on algorithmic recourse focuses on how unfavorable automated decisions can be reversed through actionable changes or contestable pathways. At the policy level, NIST and OECD both emphasize accountability, traceability, governance, and mechanisms for inquiry and review as central to trustworthy AI. (ACM Digital Library)

The next generation of AI infrastructure, therefore, will need to support not just inference, but institutional recourse.

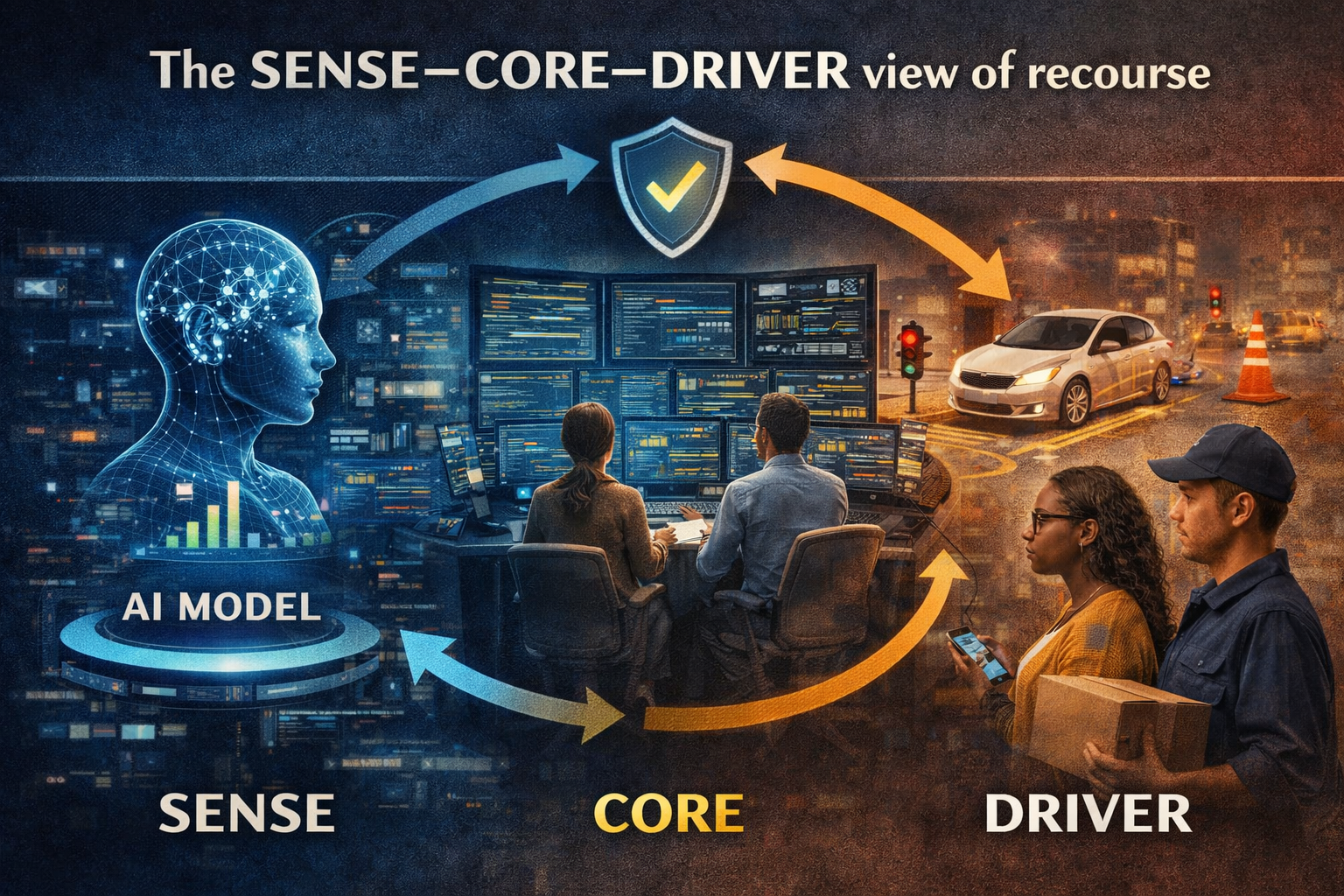

The SENSE–CORE–DRIVER view of recourse

The easiest way to understand this is through SENSE–CORE–DRIVER.

SENSE: where reality becomes machine-legible

This is where signals are captured, attached to entities, turned into state, and updated over time.

Most recourse problems begin here.

A system may have:

- the wrong person,

- the wrong transaction,

- the wrong state,

- the wrong timestamp,

- the wrong linkage across entities,

- or the wrong update sequence.

A driver gets suspended because a fraud event was attached to the wrong account.

A patient is denied coverage because a diagnosis update never propagated.

A supplier gets downgraded because location data, delivery data, and customs data tell different stories.

In all these cases, the system is not merely “biased.” It is representing reality badly.

CORE: where decisions are made

This is where the system interprets the representation and produces judgment.

Even a strong model can fail if the input state is distorted.

And even a fair model can produce harmful outcomes if business rules, thresholds, confidence logic, or ranking priorities are poorly designed.

DRIVER: where action becomes legitimate

This is where the system acts.

Who authorized the action?

What evidence supported it?

Was the confidence threshold sufficient?

Was escalation required?

Was human review available?

What recourse exists after harm occurs?

This is where many current AI systems are weakest.

They may produce answers.

They may even produce actions.

But they often do not produce structured legitimacy.

That gap is exactly where Recourse Platforms will grow.

What a Recourse Platform actually does

A true Recourse Platform is not just a support-ticket tool with AI branding.

It is a new operational layer that sits between automated decision systems and institutional accountability.

At minimum, it does seven things.

1. Structured intake of disputes

The platform allows a person, business, or delegated representative to challenge a decision in machine-readable form.

Not just “I disagree,” but:

- which decision,

- on which date,

- affecting which entity,

- using which evidence,

- with what claimed error.
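The intake fields above can be sketched as a small record type. This is a minimal illustration, not a standard schema: the class name `Dispute`, its field names, and the `validate` helper are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Dispute:
    """Hypothetical machine-readable dispute record (illustrative field names)."""
    decision_id: str                      # which decision
    decision_date: date                   # on which date
    entity_id: str                        # affecting which entity
    claimed_error: str                    # with what claimed error
    evidence_refs: tuple[str, ...] = ()   # using which evidence


def validate(dispute: Dispute) -> list[str]:
    """Return a list of intake problems; an empty list means the record is complete."""
    problems = []
    if not dispute.decision_id:
        problems.append("missing decision_id")
    if not dispute.entity_id:
        problems.append("missing entity_id")
    if not dispute.claimed_error.strip():
        problems.append("missing claimed_error")
    if not dispute.evidence_refs:
        problems.append("no evidence attached")
    return problems
```

A record that fails validation can be sent back to the submitter before any reviewer time is spent on it.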

2. Reconstruction of the decision pathway

It pulls the relevant representation, model outputs, rules, logs, prompts, thresholds, confidence markers, and workflow history.

Without reconstruction, appeal becomes theater.
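One way to picture reconstruction is as a query over an event log, followed by a check for gaps. The sketch below assumes a hypothetical `TraceEvent` record and an assumed set of required event kinds; real systems will log different things in different places.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TraceEvent:
    """Hypothetical log entry tied to one automated decision."""
    decision_id: str
    step: int      # position in the workflow
    kind: str      # e.g. "input", "model_output", "rule", "action"
    detail: str


def reconstruct(events: list[TraceEvent], decision_id: str) -> list[TraceEvent]:
    """Collect the events for one decision, ordered by workflow step."""
    matching = (e for e in events if e.decision_id == decision_id)
    return sorted(matching, key=lambda e: e.step)


def missing_kinds(trace: list[TraceEvent],
                  required: tuple[str, ...] = ("input", "model_output", "action")) -> list[str]:
    """List required event kinds that the trace lacks (assumed minimum set)."""
    present = {e.kind for e in trace}
    return [k for k in required if k not in present]
```

A non-empty result from `missing_kinds` signals that the decision cannot be fully reconstructed, which is itself worth surfacing to the reviewer.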

3. Classification of the error

Was the issue:

- bad data,

- identity mismatch,

- stale state,

- policy conflict,

- model error,

- missing context,

- unauthorized delegation,

- tool misuse,

- or downstream execution failure?

Different error classes require different remedies.
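The link between error class and remedy can be made explicit as a lookup. The nine classes below come from the list above; the remedy strings are illustrative assumptions, not prescribed responses.

```python
from enum import Enum


class ErrorClass(Enum):
    BAD_DATA = "bad data"
    IDENTITY_MISMATCH = "identity mismatch"
    STALE_STATE = "stale state"
    POLICY_CONFLICT = "policy conflict"
    MODEL_ERROR = "model error"
    MISSING_CONTEXT = "missing context"
    UNAUTHORIZED_DELEGATION = "unauthorized delegation"
    TOOL_MISUSE = "tool misuse"
    EXECUTION_FAILURE = "downstream execution failure"


# Assumed remedies, for illustration only.
REMEDIES = {
    ErrorClass.BAD_DATA: "correct the record and re-run the decision",
    ErrorClass.IDENTITY_MISMATCH: "relink the event to the right entity",
    ErrorClass.STALE_STATE: "refresh the state and re-run the decision",
    ErrorClass.POLICY_CONFLICT: "escalate to the policy owner",
    ErrorClass.MODEL_ERROR: "send to human review and flag the model",
    ErrorClass.MISSING_CONTEXT: "request additional evidence",
    ErrorClass.UNAUTHORIZED_DELEGATION: "revoke the action and audit permissions",
    ErrorClass.TOOL_MISUSE: "revoke the action and review tool access",
    ErrorClass.EXECUTION_FAILURE: "retry or reverse the downstream action",
}


def remedy_for(error_class: ErrorClass) -> str:
    """Look up the assumed remedy for a classified error."""
    return REMEDIES[error_class]
```

Keeping the mapping in one place makes it easy to see when a new error class has been added without a corresponding remedy.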

4. Evidence submission and verification

The affected party must be able to add new proof: documents, transactions, attestations, corrected records, contextual explanation, or third-party certification.

5. Smart routing to the right review path

Not every case needs a human.

Not every case should remain automated.

Some need automated re-evaluation.

Some need specialist review.

Some need policy escalation.

Some need independent review.

Some may even need regulator-facing reporting.
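A routing step of this kind could be expressed as a small rule function. The review paths mirror the list above; the specific rules, the parameter names, and the string error labels are assumptions chosen to keep the sketch short.

```python
from enum import Enum


class ReviewPath(Enum):
    AUTOMATED_REEVALUATION = "automated re-evaluation"
    SPECIALIST_REVIEW = "specialist review"
    POLICY_ESCALATION = "policy escalation"
    INDEPENDENT_REVIEW = "independent review"
    REGULATOR_REPORTING = "regulator-facing reporting"


def route(error_class: str, high_impact: bool,
          reviewed_internally: bool, reportable: bool = False) -> list[ReviewPath]:
    """Pick review paths for a dispute using simple, assumed rules."""
    if reviewed_internally and high_impact:
        # An internal review already happened; a high-impact case goes outside.
        paths = [ReviewPath.INDEPENDENT_REVIEW]
    elif error_class == "policy_conflict":
        paths = [ReviewPath.POLICY_ESCALATION]
    elif error_class in {"bad_data", "stale_state"} and not high_impact:
        # Low-impact data problems can be re-run once the data is corrected.
        paths = [ReviewPath.AUTOMATED_REEVALUATION]
    else:
        paths = [ReviewPath.SPECIALIST_REVIEW]
    if reportable:
        paths.append(ReviewPath.REGULATOR_REPORTING)
    return paths
```

In practice the rules would come from policy rather than code, but even a toy version shows that routing is a decision in its own right and should be logged like one.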

6. Correction and recovery orchestration

If the original decision was wrong, the platform must not stop at “approved on reconsideration.”

It must propagate correction to downstream systems:

- restore access,

- reverse penalties,

- repair reputation flags,

- reopen claims,

- update state stores,

- notify dependent systems.
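Propagation can be sketched as a fan-out over downstream handlers that records what succeeded and what did not. The `propagate` function and the handler names are hypothetical; the point is that a failure in one system should not silently block the others.

```python
from typing import Callable

# A handler applies the correction for one entity in one downstream system.
Handler = Callable[[str], None]


def propagate(entity_id: str, handlers: dict[str, Handler]) -> dict[str, str]:
    """Apply a correction to every downstream system and report per-system status."""
    results: dict[str, str] = {}
    for name, handler in handlers.items():
        try:
            handler(entity_id)
            results[name] = "ok"
        except Exception as exc:
            # Record the failure and keep going so other systems still get corrected.
            results[name] = f"failed: {exc}"
    return results
```

Any entry that is not `"ok"` marks a correction that has not yet reached that system and needs a retry or manual follow-up.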

7. Institutional memory

Recourse should improve the system itself.

Which error types recur?

Which models are generating unnecessary disputes?

Which business rules create false negatives?

Which teams create review bottlenecks?

Which classes of entities are persistently underrepresented?

This is where Recourse Platforms become strategic, not merely defensive.
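The first two questions above reduce to counting overturned decisions by source and error class. The sketch below assumes each resolved case is a dictionary with hypothetical keys `source`, `error_class`, and `overturned`.

```python
from collections import Counter


def recurring_errors(resolved_cases: list[dict], min_count: int = 2) -> list[tuple]:
    """Return (source, error_class) pairs that were overturned at least min_count times."""
    counts = Counter(
        (case["source"], case["error_class"])
        for case in resolved_cases
        if case["overturned"]
    )
    # most_common() orders pairs from most to least frequent.
    return [(pair, n) for pair, n in counts.most_common() if n >= min_count]
```

Fed back to the teams that own each source, a report like this turns individual appeals into a signal about where the system itself needs repair.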

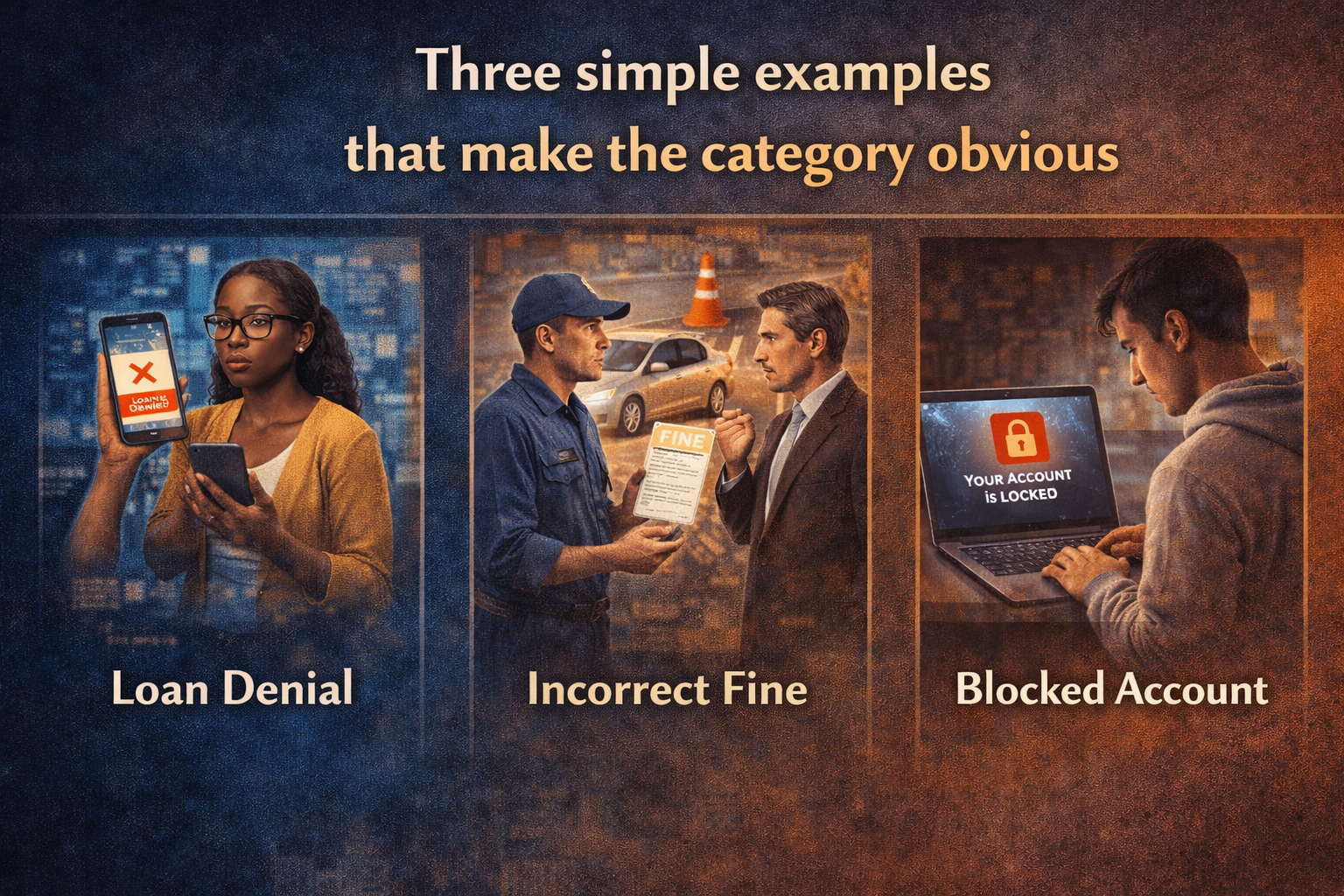

Three simple examples that make the category obvious

1. Lending

A small business owner is denied working capital. The model appears correct at first glance. Later, it turns out one tax record was outdated and one repayment event was never reconciled.

Today, this often becomes a call-center problem.

Tomorrow, it will become a recourse workflow problem.

A Recourse Platform would ingest the denial, surface the contributing factors, allow submission of updated proof, re-run eligibility checks, and, if the original denial caused cascading harm, support priority reconsideration and downstream correction.

This is not just fairer. It is economically smarter. It reduces abandonment, preserves customer trust, and improves underwriting quality.

The CFPB has explicitly said that when credit decisions rely on complex algorithms, creditors still need to provide specific reasons for adverse action. (Consumer Financial Protection Bureau)
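The re-run step in the lending workflow above can be illustrated with a toy eligibility rule. Everything here is hypothetical: the `eligible` rule, its income threshold, and the field names stand in for whatever a real underwriting system uses.

```python
def eligible(record: dict) -> bool:
    """Toy eligibility rule with an assumed threshold, for illustration only."""
    return record["annual_income"] >= 50_000 and record["missed_payments"] == 0


def reevaluate(original: dict, corrections: dict) -> dict:
    """Re-run the rule on corrected data and report which fields changed."""
    corrected = {**original, **corrections}
    changed = sorted(k for k, v in corrections.items() if original.get(k) != v)
    return {
        "before": eligible(original),
        "after": eligible(corrected),
        "changed_fields": changed,
    }


# Example: an outdated tax record and an unreconciled repayment are corrected.
result = reevaluate(
    {"annual_income": 40_000, "missed_payments": 1},
    {"annual_income": 62_000, "missed_payments": 0},
)
```

Returning the changed fields alongside the new outcome keeps the correction auditable: a reviewer can see which pieces of evidence flipped the decision.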

2. Health insurance

A patient’s treatment is denied. The reason appears administrative, but the real issue is that the insurer’s representation of medical necessity is incomplete.

Healthcare has long recognized that decisions affecting care need appeal structures, including external review. In urgent cases, those review pathways can be accelerated. (HealthCare.gov)

AI will not remove this need. It will intensify it.

As more claims, triage pathways, coding reviews, and utilization decisions become AI-assisted, the ability to contest, correct, and recover quickly will become mission-critical.

3. Digital platforms

A creator, seller, or driver is deranked, demonetized, or suspended.

These are not minor events. For many people, they are income shocks.

The EU Digital Services Act already requires complaint-handling and creates routes for out-of-court dispute settlement and judicial redress. It also says decisions on complaints should not be taken solely on the basis of automated means. (Digital Strategy EU)

The winner in the next decade may not be the platform with the most aggressive automation.

It may be the one with the most credible recourse.

Why Recourse Platforms will become a real market

This category will grow for five reasons.

First, AI decisions are becoming economically consequential

Once AI starts shaping access to money, work, care, mobility, insurance, and digital visibility, recourse stops being a legal side note and becomes part of market design.

Second, representation errors are inevitable

No organization has perfect state representation.

Entities change. Context shifts. Data decays. Identity links break. Sensors drift. Policies evolve.

If AI acts on reality through representation, then correction infrastructure is unavoidable.

Third, regulation is moving toward contestability

The vocabulary differs by sector and jurisdiction—rectification, human oversight, appeal, complaint handling, external review, recourse—but the direction is consistent: consequential decisions must be challengeable. (GDPR)

Fourth, enterprises need trust-preserving automation

Boards do not want slower AI. They want scalable AI that does not create reputational, legal, operational, and political blowback.

Recourse Platforms make faster adoption possible because they create a safety layer for institutional correction.

Fifth, recovery is an untapped economic service

There is a future market in:

- AI dispute infrastructure,

- evidence verification,

- independent review networks,

- decision-trace tooling,

- correction propagation,

- reputational restoration,

- and recourse analytics.

The AI economy will not only create systems that act.

It will create systems that repair action.

The companies that will emerge

The most interesting part is what new firms this category will create.

We are likely to see:

Recourse infrastructure providers

APIs and workflow systems for appeal, correction, and review.

Decision-trace platforms

Tools that reconstruct how a decision happened across models, rules, prompts, and agents.

Representation repair services

Specialists that resolve broken entity matching, stale state, and conflicting records.

Independent review networks

Third-party institutions that provide trusted adjudication in high-impact cases.

Recovery orchestration firms

Platforms that do not just overturn a bad decision, but restore the affected person or business across downstream systems.

Recourse analytics companies

Firms that help enterprises quantify where AI-generated disputes originate and how to redesign systems to reduce them.

This is why a Recourse Platform is not a feature.

It is a category.

What boards and C-suites should ask now

If this category is real—and it is—leaders should not wait for regulation, headlines, or litigation to force the conversation.

They should ask now:

- Which AI-enabled decisions in our organization can materially harm a person, business, or partner?

- Can those decisions be contested?

- Can the underlying representation be corrected?

- Can downstream harm be reversed?

- Do we have a traceable evidence chain?

- Where do we still rely on informal, manual, opaque appeals?

- Are we designing for automation alone, or for legitimacy as well?

That is the strategic shift.

The future of AI is not only about intelligence.

It is about institutional trust under machine-mediated decision-making.

And trust will increasingly depend not on whether the system is flawless, but on whether the system is repairable.

Recourse Platforms are emerging as a critical AI infrastructure category that enables correction, appeal, and recovery of automated decisions. As AI systems scale decision-making across finance, healthcare, platforms, and enterprises, recourse mechanisms will become essential for trust, governance, and institutional legitimacy. This article introduces the concept within the Representation Economy framework using the SENSE–CORE–DRIVER model.

Conclusion: the AI economy will need markets for second chances

The history of institutions is not the history of perfect judgment.

It is the history of building mechanisms for review.

Courts have appeals.

Markets have dispute resolution.

Insurance has reconsideration.

Healthcare has internal and external review.

Credit has adverse-action rules.

Platforms increasingly face complaint and redress obligations. (HealthCare.gov)

The AI economy will be no different.

As AI systems become embedded in economic life, society will demand something deeper than transparency and more practical than ethics statements.

It will demand recourse.

That is why one of the most important businesses of the next decade may not be a model company at all.

It may be the company that helps institutions answer the most human question in the age of machine decisions:

If the system gets me wrong, how do I get my reality back?

Glossary

Recourse Platforms

Systems that help people and institutions challenge, correct, review, and recover from harmful or inaccurate automated decisions.

Algorithmic recourse

A field of research focused on how a person can reverse or contest an unfavorable automated outcome through actionable changes or structured intervention. (ACM Digital Library)

Contestability

The ability to challenge a decision, submit evidence, request review, and seek correction or redress.

Rectification

The right to correct inaccurate personal data under GDPR. (GDPR)

Adverse action

A negative decision in consumer finance, such as denial of credit, that triggers notice obligations and requires specific reasons. (Consumer Financial Protection Bureau)

Internal complaint-handling

A platform or institution’s own process for users to challenge decisions. The DSA requires this for certain online platforms. (Digital Strategy EU)

External review

A review by an independent third party, common in healthcare appeals. (HealthCare.gov)

Representation Economy

A framework in which economic value increasingly depends on how well systems represent entities, states, relationships, and changes in the real world.

SENSE

The layer where signals are captured, attached to entities, turned into state, and updated over time.

CORE

The layer where AI and decision systems interpret representations and make judgments.

DRIVER

The layer where decisions become legitimate action through authorization, evidence, execution, verification, and recourse.

FAQ

What is a Recourse Platform in AI?

A Recourse Platform is infrastructure that helps organizations manage disputes over automated decisions by enabling appeal, correction, evidence submission, review, and downstream recovery.

How is recourse different from explainability?

Explainability tells you why a system produced an outcome. Recourse tells you how that outcome can be challenged, corrected, or reversed.

Why will Recourse Platforms become important?

As AI systems make more economically significant decisions, institutions will need scalable ways to handle disputes, reduce harm, satisfy regulatory expectations, and preserve trust. (Canada)

Which industries will need Recourse Platforms first?

Financial services, healthcare, insurance, digital platforms, HR and hiring, public-sector decision systems, and supply chains are all likely early adopters.

Is this mainly a compliance issue?

No. Compliance is one driver, but the larger issue is institutional trust. Recourse Platforms help organizations scale automation without losing legitimacy.

Why does this matter to boards and CEOs?

Because AI risk is no longer just a model-performance issue. It is now a business-model, trust, governance, and recovery issue.

References and further reading

- GDPR Article 16, right to rectification. (GDPR)

- GDPR Article 22, protections related to certain solely automated decisions. (GDPR)

- EU Digital Services Act complaint handling, dispute settlement, and judicial redress. (Digital Strategy EU)

- Canada Directive on Automated Decision-Making, including recourse requirements. (Canada)

- CFPB guidance on adverse action notification requirements with complex algorithms. (Consumer Financial Protection Bureau)

- HealthCare.gov guidance on internal appeals and external review. (HealthCare.gov)

- NIST AI Risk Management Framework. (NIST)

- OECD work on accountability in AI. (OECD)

- ACM overview of algorithmic recourse. (ACM Digital Library)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- The Representation Reserve Currency: Why AI Will Trust Only a Few Forms of Reality – Raktim Singh

- The Machine-Readable Boundary of the Firm: How AI Is Redefining What Companies Own, Outsource, and Orchestrate – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- The Representation Commons: Why Broad-Based AI Value Begins Before the Model – Raktim Singh

- The Representation Access Economy: Why AI Will Decide Who Gets Seen, Structured, and Trusted – Raktim Singh

- Representation Bankruptcy: Why AI Will Break Companies That Machines Cannot Trust – Raktim Singh

- The Representation Kill Zone: Why Companies Become Invisible Before They Realize They Are Losing – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fiduciaries: The Missing Institution the AI Economy Cannot Scale Without – Raktim Singh

- The Representation Conversion Industry: Why the Biggest AI Companies Will Rebuild Reality Before They Build Intelligence – Raktim Singh

- Representation Accounting: The New Discipline That Will Decide Which AI-Driven Institutions Can Be Trusted – Raktim Singh

- Synthetic Representation: How the AI Economy Will Construct Reality When It Cannot Fully Observe It – Raktim Singh

- Representation Clearinghouses: The Missing Infrastructure the AI Economy Needs to Reconcile Reality Before It Acts – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.