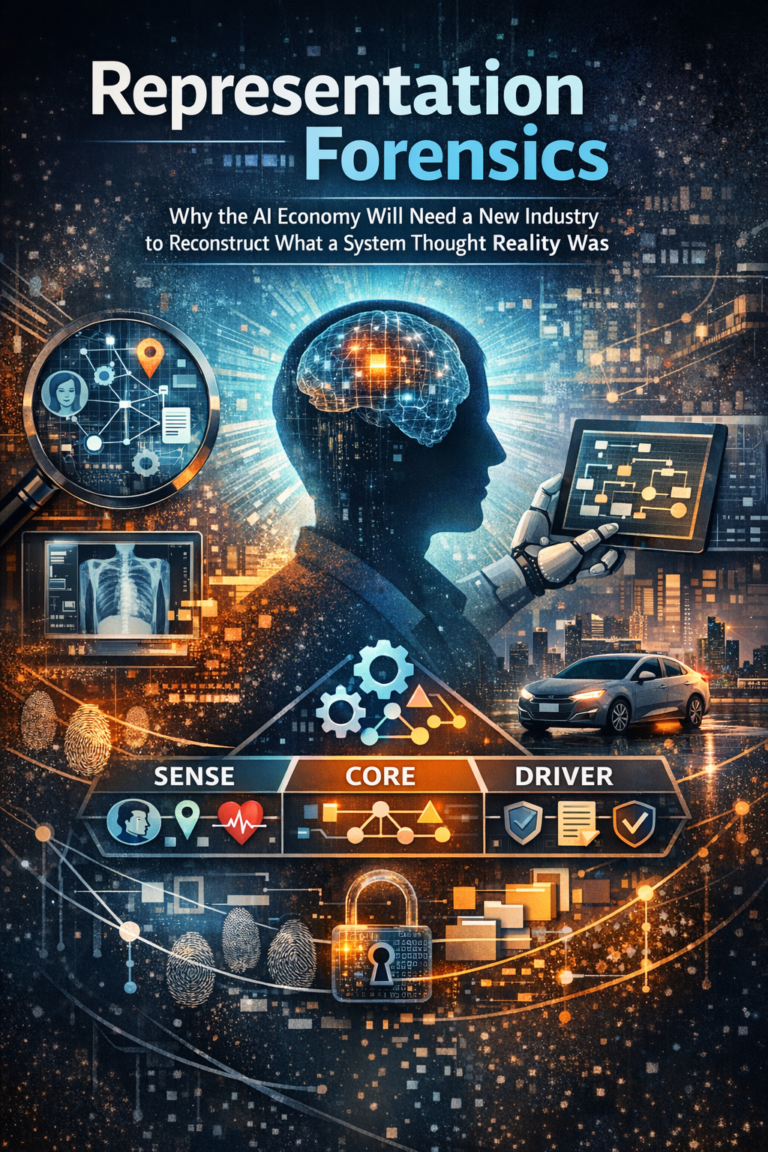

Representation Forensics

In the next phase of AI, the biggest failures will not begin with bad outputs. They will begin with bad representations of reality. The institutions that learn to reconstruct, challenge, and govern those representations will define the next era of trust.

Representation Forensics is the discipline of reconstructing what an AI system believed reality looked like at the moment it acted.

Introduction: We are still investigating AI failures too late

Most discussions about AI failure still begin at the wrong moment.

They begin with the output. A loan was denied. A face was misidentified. A patient was flagged incorrectly. A customer was treated as suspicious. A worker was scored unfairly. A vehicle made the wrong decision.

But in many of these cases, the real failure did not begin when the system produced an answer. It began earlier, when the system formed an incorrect picture of reality.

That is the next big issue in the AI economy.

As AI systems become more deeply embedded in finance, healthcare, retail, logistics, public services, mobility, and enterprise operations, a new institutional capability will become essential: the ability to reconstruct what a system believed the world looked like at the moment it acted. That is what I call representation forensics.

Representation forensics is not ordinary debugging. It is not just explainability. It is not a compliance checklist. It is the disciplined reconstruction of a machine’s working view of reality: what signals it received, which entity it believed it was dealing with, what state it inferred, how that state changed over time, what decision logic it used, and what authority chain allowed action to be taken. This matters because modern AI governance frameworks increasingly emphasize trustworthiness, accountability, transparency, documentation, and lifecycle risk management, even though most organizations still lack a clear way to reconstruct the machine’s underlying view of reality after harm occurs. (NIST)

In the Representation Economy, this will not remain a niche discipline. It will become a major industry.

Because in the AI era, the most important question after harm will not simply be, “What answer did the system give?” It will be, “What reality did the system think it was acting on?” NIST’s AI Risk Management Framework is built around trustworthiness, governance, documentation, and context-sensitive risk management, which points directly toward this missing capability. (NIST Publications)

What is Representation Forensics?

Representation Forensics is the process of reconstructing what an AI system believed reality looked like when it made or influenced a decision—across signals, identity, state, reasoning, and execution.

The old economy investigated transactions. The new one will investigate representations

Every era has its own dominant forensic logic.

Industrial systems investigated physical failure.

Financial systems investigated transactions, approvals, and records.

Digital systems investigated logs, access trails, and security events.

AI systems require something deeper.

Why? Because AI systems do not merely process instructions. They operate on representations. They ingest signals from forms, sensors, images, language, histories, clicks, documents, locations, device metadata, workflows, and proxy indicators. Then they convert those signals into a working model of the world.

That working model can go wrong in several ways. The system may connect the wrong signals to the wrong entity. It may assume a stale state. It may inherit corrupted or incomplete context. It may compress a complex situation into an oversimplified label. Or it may act on a technically coherent internal representation that was authorized under the wrong delegation conditions.

This is why many AI failures cannot be understood by looking only at the final output. The output is often just the visible endpoint of a much earlier representational error.

That is the core insight behind representation forensics: AI failures are often failures of machine-perceived reality before they become failures of reasoning.

This is also why traditional explainability, while useful, is not enough. Explainability usually asks why a model produced a result. Representation forensics asks an earlier and more important question: did the system have the right reality in view at all? NIST’s AI RMF and the OECD AI Principles both reinforce this broader frame by emphasizing context, accountability, transparency, and downstream impacts rather than narrow model performance alone. (NIST Publications)

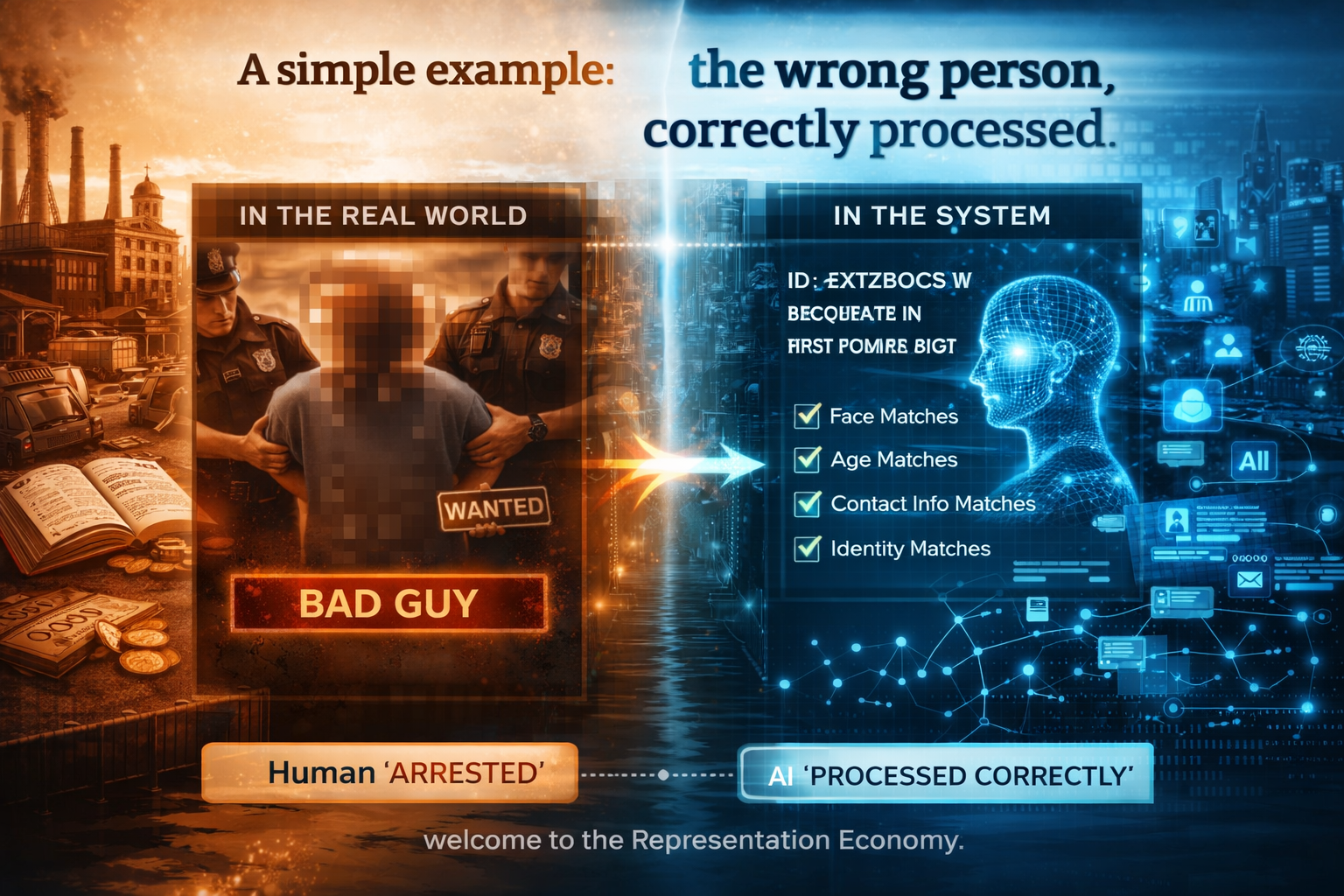

A simple example: the wrong person, correctly processed

Imagine a retail security system flags a customer as high risk because it matches that person to a watchlist entry. Store staff then act on the alert. The chain may look technically correct. The system detected a face, found a match, triggered a workflow, and executed a policy.

But what if the match itself was wrong?

Then the system did not fail merely because it produced the wrong outcome. It failed because it represented the wrong person as the relevant entity. The full action chain may have been internally coherent and still deeply unjust.

This is not hypothetical. NIST’s face recognition evaluations have documented demographic differentials in false positive and false negative rates, and the FTC, in its 2023 action against Rite Aid, alleged that the retailer’s facial recognition deployment produced thousands of false-positive matches while lacking reasonable safeguards. (NIST Pages)

Representation forensics would ask:

- What signals entered the system?

- Which identity was inferred?

- With what confidence?

- Against what database?

- Under what thresholds?

- What human verification occurred?

- What happened after the alert?

- What evidence exists that the system’s representation of the person was challenged, corrected, or ignored?

That is a much richer investigation than asking only whether the model produced a false alert.
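To make those questions concrete, here is a minimal sketch of what a reconstructable alert record might look like. Everything in it is an assumption for illustration: the `MatchRecord` class, its field names, and the `forensic_gaps` helper are hypothetical and do not describe any real vendor's system. The point is only that each forensic question needs a field that was captured at the moment the system acted.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class MatchRecord:
    """One identity-match alert, stored with the context needed to reconstruct it.

    All field names are hypothetical; each mirrors one forensic question.
    """
    probe_signal_id: str             # what signal entered the system
    inferred_identity: str           # which identity was inferred
    confidence: float                # with what confidence (0.0 to 1.0)
    database_version: str            # against what database
    threshold: float                 # under what threshold
    human_verified: Optional[bool]   # None means no human review was recorded
    action_taken: str                # what happened after the alert
    challenges: tuple = ()           # evidence the match was challenged or corrected
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def forensic_gaps(record: MatchRecord) -> list[str]:
    """List the forensic questions this record answers badly or not at all."""
    gaps = []
    if record.confidence < record.threshold:
        gaps.append("alert fired below the configured threshold")
    if record.human_verified is None:
        gaps.append("no human verification recorded")
    if record.action_taken and not record.challenges:
        gaps.append("no evidence the match was challenged before action")
    return gaps


# Usage: an alert that was acted on without review or challenge.
alert = MatchRecord(
    probe_signal_id="cam-07/frame-1183",
    inferred_identity="watchlist-entry-42",
    confidence=0.62,
    database_version="watchlist-2023-11",
    threshold=0.80,
    human_verified=None,
    action_taken="staff_alerted",
)
for gap in forensic_gaps(alert):
    print(gap)
```

A record like this does not prove the match was right or wrong. It only makes the later investigation possible, which is the precondition for everything else.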

Another simple example: the stale patient

Now imagine a healthcare system that uses AI to prioritize patients for follow-up. A patient appears low risk and is not escalated quickly enough. Later, clinicians discover that the patient’s condition had changed, but the system had not incorporated recent test results, symptom changes, or medication interactions.

Again, the problem is not only that the model produced a weak recommendation. The deeper problem is that the system acted on an outdated representation of the patient’s state.

In healthcare, this distinction is critical. The FDA’s 2025 draft guidance on AI-enabled device software functions emphasizes lifecycle management, marketing submission recommendations, and a comprehensive approach to safety and effectiveness across the total product lifecycle. That signals a broader regulatory expectation: the system must be understood not just as a model, but as a changing socio-technical system shaped by documentation, data flows, updates, and human interaction. (U.S. Food and Drug Administration)

Representation forensics would reconstruct what data about the patient the system saw, what it did not see, how current the state representation was, whether critical changes were missing, what workflow converted that representation into action, and whether meaningful human intervention remained possible.
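One way to picture that reconstruction is as an "as of" query: given everything now known about the patient, which observations had actually reached the system when it decided? The sketch below assumes each observation carries two timestamps, when it happened and when the system ingested it. The `Observation` class, the `reconstruct_view` function, and the seven-day freshness window are all hypothetical choices for illustration, not clinical guidance.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Observation:
    kind: str               # hypothetical label, e.g. "lab_result"
    observed_at: datetime   # when the event happened in the world
    ingested_at: datetime   # when the system actually received it


def reconstruct_view(observations, decided_at, max_age=timedelta(days=7)):
    """Split observations into what the system saw, missed, and saw stale."""
    # Seen: the system had ingested it by the time it decided.
    seen = [o for o in observations if o.ingested_at <= decided_at]
    # Missed: it had already happened, but had not yet reached the system.
    missed = [o for o in observations
              if o.observed_at <= decided_at < o.ingested_at]
    # Stale: the system had it, but it was older than the freshness window.
    stale = [o for o in seen if decided_at - o.observed_at > max_age]
    return {"seen": seen, "missed": missed, "stale": stale}


# Usage: a decision made on 10 March with one late-arriving symptom report.
decided_at = datetime(2025, 3, 10, 9, 0)
history = [
    Observation("lab_result", datetime(2025, 3, 1, 8, 0), datetime(2025, 3, 1, 9, 0)),
    Observation("symptom_report", datetime(2025, 3, 9, 18, 0), datetime(2025, 3, 11, 7, 0)),
    Observation("medication_change", datetime(2025, 3, 8, 12, 0), datetime(2025, 3, 8, 12, 5)),
]
view = reconstruct_view(history, decided_at)
print([o.kind for o in view["missed"]])
print([o.kind for o in view["stale"]])
```

The design choice that matters here is keeping the two timestamps separate. A system that stores only the event time cannot later show what it did not yet know.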

This is where trustworthy AI will increasingly be won or lost.

Why this matters more as AI moves from advice to action

The need for representation forensics grows as AI systems move from generating content to shaping decisions and triggering actions.

A chatbot that gives a flawed summary is one type of problem.

A system that silently classifies a person, assigns risk, initiates surveillance, changes priority, adjusts price, blocks access, or routes an operational action is another.

Once AI becomes part of a governed execution chain, the cost of misrepresentation rises sharply.

That is why policy and regulatory frameworks around the world are moving toward documentation, transparency, accountability, record-keeping, human oversight, and post-deployment monitoring. The OECD AI Principles were updated in 2024. The EU AI Act became Regulation (EU) 2024/1689. NIST’s AI RMF continues to shape practical risk governance. These developments all point in the same direction: societies increasingly expect evidence that can explain how AI systems were built, governed, and used in consequential settings. (OECD)

Representation forensics sits exactly in that space.

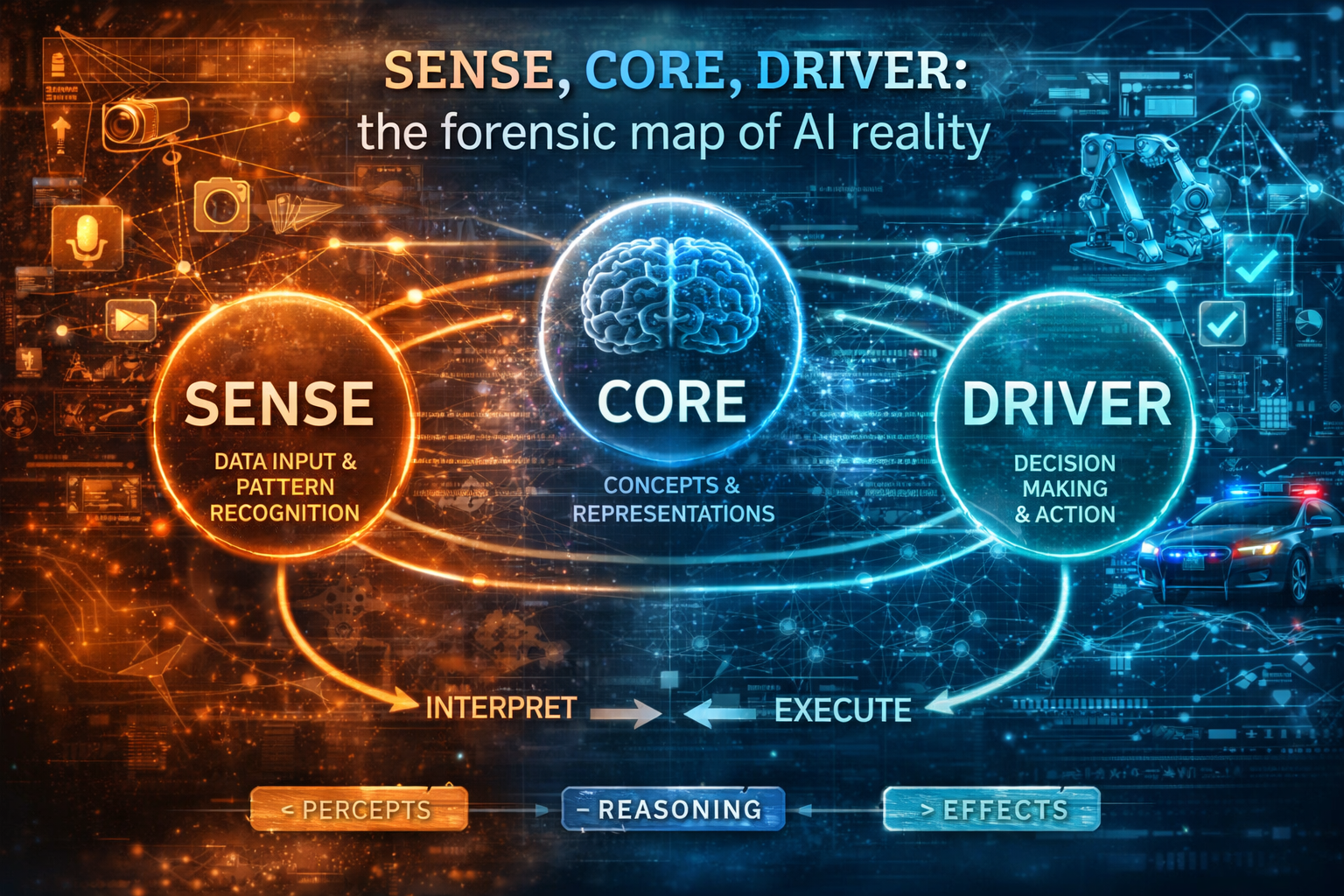

SENSE, CORE, DRIVER: the forensic map of AI reality

My broader argument about the Representation Economy is that AI systems should be understood through three layers.

SENSE: where reality becomes machine-legible

SENSE includes:

- Signal: detecting events, traces, and changes from the world

- ENtity: attaching those signals to a persistent person, object, location, asset, or organization

- State representation: modeling the current condition of that entity

- Evolution: updating that state as new signals arrive

CORE: where reasoning occurs

CORE includes:

- Comprehend context

- Optimize decisions

- Realize action plans

- Evolve through feedback

DRIVER: where execution becomes governed and legitimate

DRIVER includes:

- Delegation

- Representation basis

- Identity

- Verification

- Execution

- Recourse

Representation forensics makes this architecture investigable.

When an incident happens, the forensic questions map directly to these layers.

At the SENSE layer

Did the system receive the right signals?

Did it attach them to the right entity?

Did it build an accurate state representation?

Did it update that state as reality changed?

At the CORE layer

How did the system interpret context?

Which model, rule, or workflow shaped its judgment?

What alternatives were ignored?

Did the system convert uncertainty into false confidence?

At the DRIVER layer

Who authorized the action?

What verification was required?

Did the action affect the correct entity?

Was there a record of override, contestability, or recourse?

This matters because many organizations still treat AI incidents as if they are only model incidents. They are often not. They are representational incidents, reasoning incidents, and execution incidents at once.

Representation forensics gives institutions a language for separating those failures instead of collapsing them into a vague statement that “the AI got it wrong.”
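As a rough illustration of that separation, an incident review could tag each finding with the layer where it occurred. The finding codes below are hypothetical labels I have invented for this sketch; a real taxonomy would need to be defined by the institution using it.

```python
from enum import Enum


class Layer(Enum):
    SENSE = "SENSE"
    CORE = "CORE"
    DRIVER = "DRIVER"


# Hypothetical finding codes, each assigned to the layer whose
# forensic questions it answers.
FINDING_LAYER = {
    "missing_signal": Layer.SENSE,
    "wrong_entity": Layer.SENSE,
    "stale_state": Layer.SENSE,
    "context_misread": Layer.CORE,
    "false_confidence": Layer.CORE,
    "unauthorized_action": Layer.DRIVER,
    "verification_skipped": Layer.DRIVER,
    "no_recourse_record": Layer.DRIVER,
}


def group_findings(findings):
    """Group finding codes by layer. Unknown codes raise KeyError on purpose."""
    grouped = {layer: [] for layer in Layer}
    for code in findings:
        grouped[FINDING_LAYER[code]].append(code)
    return grouped


# Usage: a single incident with a failure at every layer.
report = group_findings(["wrong_entity", "false_confidence", "verification_skipped"])
for layer, codes in report.items():
    print(layer.value, codes)
```

Even a simple grouping like this replaces "the AI got it wrong" with a statement about where it went wrong.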

Global signals are already visible

You can already see pieces of this future emerging across sectors.

In autonomous driving, regulators are not only asking whether a vehicle crashed. NHTSA’s Standing General Order requires reporting of certain crashes involving automated driving systems and Level 2 ADAS so the agency can obtain timely and transparent notification of real-world incidents and investigate safety concerns further. That is an early example of a regime built around reconstructable evidence in AI-assisted operation. (NHTSA)

In biometric systems, agencies are focusing on false matches, logging, human review, and safeguards because the harm often begins with the system’s mistaken representation of identity. (Federal Trade Commission)

In medical AI, regulators are pushing lifecycle thinking because the relevant question is no longer just whether a model performed well during evaluation, but how the system behaves as data, environments, users, and contexts change over time. (U.S. Food and Drug Administration)

The pattern is becoming clear: in the AI economy, trustworthy systems will increasingly be those whose view of reality can be reconstructed after the fact.

A new industry will emerge

Representation forensics will not remain a minor capability inside legal, compliance, or data science teams. It will become a market.

New organizations will emerge to do at least five kinds of work.

- Incident reconstruction: These firms will rebuild what the system believed at each step, using logs, prompts, sensor traces, database lookups, identity mappings, workflow records, and human override trails.

- Representation audit infrastructure: These tools will continuously test whether the machine’s representation of people, assets, locations, transactions, and states remains accurate, fresh, and contestable.

- Delegation and authority forensics: These services will verify whether the system had legitimate authorization to act as it did, and whether the boundary between recommendation and execution was properly governed.

- Machine evidence chains: These platforms will preserve tamper-resistant records showing what the system saw, inferred, recommended, and triggered.

- Representation dispute resolution: As AI becomes more embedded in markets and public systems, people and institutions will need mechanisms to challenge how they were represented, not just the final decision they received.
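To give a sense of what a machine evidence chain could look like at its simplest, here is a sketch of a hash-linked log, where each entry commits to the one before it. This is an assumption on my part about one plausible mechanism, not a description of any existing product. The function names are hypothetical, payloads must be JSON-serializable, and a chain like this is tamper-evident rather than tamper-proof: it reveals edits but does not prevent them.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the entry before the first one


def _digest(prev_hash, payload):
    """Hash the previous link together with the payload, in a stable key order."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain, payload):
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"prev": prev_hash, "payload": payload,
             "hash": _digest(prev_hash, payload)}
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if every back-link and every hash is intact."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if _digest(entry["prev"], entry["payload"]) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


# Usage: record what the system saw, inferred, recommended, and triggered.
chain = []
for step in ["saw", "inferred", "recommended", "triggered"]:
    append_entry(chain, {"step": step})
print(verify_chain(chain))

# Editing an earlier entry after the fact breaks verification.
chain[1]["payload"]["step"] = "edited"
print(verify_chain(chain))
```

A production system would also need trusted timestamps, access control, and external anchoring of the latest hash, none of which this sketch attempts.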

This is where a new economic layer appears. Just as cybersecurity created markets for detection, response, forensics, and resilience, AI will create markets for representation reconstruction, evidence, and contestability. That inference follows directly from the way regulators and standards bodies are already emphasizing record-keeping, post-market monitoring, lifecycle governance, and transparent incident investigation. (Digital Strategy)

The next major AI industry may not be another model company. It may be the industry that tells us what the model believed reality to be before it acted.

Why this is bigger than compliance

It would be a mistake to treat representation forensics as just another burden imposed by regulators.

It is more strategic than that.

In the Representation Economy, competitive advantage will increasingly belong to organizations that can prove three things:

- that they represented reality well,

- that they reasoned over it responsibly,

- and that they acted with governed legitimacy.

That is not just risk reduction. It is trust infrastructure.

A bank that can reconstruct why an AI-driven fraud workflow treated a customer as suspicious will be more trustworthy than one that cannot.

A hospital that can show how a clinical AI system formed and updated its patient view will be more credible than one that cannot.

A logistics company that can reconstruct how an autonomous system interpreted an operational environment will be more resilient than one that cannot.

In other words, representation forensics will become part of institutional strength.

Why boards should care now

Boardrooms do not need another generic conversation about responsible AI. They need a sharper question.

If a system materially affects revenue, access, pricing, safety, reputation, customer trust, or legal exposure, can the organization reconstruct:

- what the system saw,

- what it inferred,

- what it ignored,

- what authority it relied on,

- and why it acted when it did?

If the answer is no, then the organization is not yet ready for AI at scale.

The next generation of institutional advantage will not come only from deploying AI faster. It will come from governing AI more legibly than competitors do.

That is the strategic importance of representation forensics. It transforms AI governance from a policy abstraction into operational evidence.

The deeper philosophical shift

For years, the AI conversation has been dominated by intelligence: bigger models, better benchmarks, faster inference, more capable agents.

But the next phase of the AI economy will be shaped by a harder truth:

Intelligence is not enough if the system is acting on a distorted map of reality.

A system can reason brilliantly over a bad representation and still cause serious harm.

A system can optimize perfectly against the wrong entity.

A system can execute flawlessly on stale state.

A system can sound intelligent while remaining structurally blind.

That is why the future belongs not only to those who build intelligence, but to those who can reconstruct and govern representation.

This is the real significance of representation forensics. It pushes the AI debate beyond performance and into legibility, evidence, accountability, and institutional memory.

In the AI economy, value will not flow only to those who compute better.

It will flow to those who represent reality better—and can prove it.

Conclusion: the next question every board should ask

As AI becomes more operational, boards, regulators, executives, and public institutions should start asking a new question:

If this system harms someone tomorrow, can we reconstruct what it thought reality was today?

If the answer is no, then the system may be more powerful than the institution that deployed it.

In the AI economy, what matters is not only what a system can compute. It is whether reality was represented faithfully enough to justify action, and whether that representation can be examined when things go wrong.

That is why representation forensics matters.

The future of AI trust will not be built only on smarter systems. It will be built on systems whose understanding of reality can be inspected, challenged, and reconstructed.

A new industry is coming.

Not just to build intelligence.

But to investigate it after it acts.

Glossary

Representation Forensics

The disciplined reconstruction of what an AI system believed reality looked like at the moment it acted.

Machine-perceived reality

The internal view of the world formed by an AI system from signals, data, models, and context.

SENSE

The layer where reality becomes machine-legible through signals, entities, state representation, and evolution.

CORE

The reasoning layer where the system interprets context, optimizes decisions, and plans action.

DRIVER

The governance and execution layer that determines delegation, verification, legitimacy, execution, and recourse.

Entity binding

The act of linking incoming signals to the correct person, object, asset, place, or organization.

State representation

The structured model of an entity’s current condition at a given point in time.

Representation drift

The gradual divergence between the system’s internal representation and real-world reality as conditions change.

Delegation chain

The sequence of authority by which an AI system is permitted to recommend, trigger, or execute action.

Machine evidence chain

A preserved trail of records showing what the system saw, inferred, recommended, and triggered.

Contestability

The ability of affected people or institutions to challenge how a system represented them or treated them.

AI incident reconstruction

The process of rebuilding what happened inside an AI-enabled workflow after a harmful or contested outcome.

Representation dispute resolution

A future institutional mechanism for contesting not just decisions, but the underlying representations that produced them.

Trust infrastructure

The set of systems, evidence trails, governance practices, and operational capabilities that make institutional AI trustworthy.

FAQ

What is representation forensics in AI?

Representation forensics is the process of reconstructing what an AI system believed reality looked like when it made or influenced a decision.

How is representation forensics different from explainability?

Explainability usually asks why a model produced a result. Representation forensics asks whether the system had the right reality in view before it reasoned at all.

Why does representation forensics matter?

Because many AI failures begin with misidentification, stale context, incomplete state, or bad delegation long before the final output appears.

Is representation forensics only for regulated industries?

No. It matters everywhere AI influences consequential decisions, including finance, healthcare, retail, logistics, public services, cybersecurity, and enterprise operations.

Why will boards care about this?

Because representation failures create strategic, legal, operational, and reputational risk. Boards need evidence, not just promises, when AI is embedded in real workflows.

Does this replace responsible AI programs?

No. It strengthens them. Representation forensics gives responsible AI programs an operational way to investigate harm and prove governance.

What industries will need this first?

Finance, healthcare, mobility, insurance, retail surveillance, public administration, and any sector where AI affects safety, access, money, or rights.

What kinds of companies could emerge from this?

Incident reconstruction firms, representation audit platforms, delegation-forensics providers, machine evidence chain providers, and representation dispute-resolution services.

How does this connect to the Representation Economy?

The Representation Economy is built on how well reality is made legible, understood, and acted upon. Representation forensics becomes essential when that chain breaks.

What is the link to SENSE–CORE–DRIVER?

Representation forensics investigates all three layers: what the system sensed, how it reasoned, and how it was authorized to act.

Is this mainly about legal defense?

No. It is also about trust, resilience, market credibility, and institutional maturity.

Why is this important for generative engines and AI search?

Because answer engines increasingly reward original concepts, crisp definitions, structured reasoning, and authoritative topical clusters. Representation Forensics is a definable category with strong conceptual clarity.

References and further reading

- NIST, Artificial Intelligence Risk Management Framework (AI RMF 1.0) — foundational guidance on trustworthy AI, governance, and lifecycle risk management. (NIST Publications)

- OECD, AI Principles — international principles on trustworthy AI, updated in 2024. (OECD)

- European Union, AI Act — Regulation (EU) 2024/1689 establishing the EU’s legal framework for AI. (Digital Strategy)

- NIST, Face Recognition Vendor Test / Demographic Differentials — evidence on performance variation and false-match risks in face recognition systems. (NIST Pages)

- FTC, Rite Aid facial recognition action — a significant case on false positives and lack of deployment safeguards. (Federal Trade Commission)

- FDA, AI-Enabled Device Software Functions: Lifecycle Management and Marketing Submission Recommendations — guidance showing how medical AI governance is shifting toward lifecycle discipline and documentation. (U.S. Food and Drug Administration)

- NHTSA, Standing General Order on Crash Reporting — evidence of emerging expectations for incident reporting and reconstructable evidence in automated driving systems. (NHTSA)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- The Representation Commons: Why Broad-Based AI Value Begins Before the Model – Raktim Singh

- The Representation Access Economy: Why AI Will Decide Who Gets Seen, Structured, and Trusted – Raktim Singh

- Representation Bankruptcy: Why AI Will Break Companies That Machines Cannot Trust – Raktim Singh

- The Representation Kill Zone: Why Companies Become Invisible Before They Realize They Are Losing – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fiduciaries: The Missing Institution the AI Economy Cannot Scale Without – Raktim Singh

- Representation Clearinghouses: The Missing Infrastructure the AI Economy Needs to Reconcile Reality Before It Acts – Raktim Singh

- Recourse Platforms: The Next AI Infrastructure Market for Correction, Appeal, and Recovery – Raktim Singh

- Representation Workflows: The Hidden Operating System That Will Decide the Winners of the AI Economy – Raktim Singh

- Representation Switching Costs: Why the AI Economy’s Deepest Lock-In Will Come From Who Defines Reality – Raktim Singh

- Representation Fragility and Exclusion: The Hidden Fault Line That Will Break the AI Economy – Raktim Singh

- Representation Drift & Labor: Why AI Systems Fail When Reality Moves Faster Than Machines – Raktim Singh

- Representation Monopolies: Why the AI Economy Will Be Controlled by Those Who Define Reality – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.