Representation Mobility Markets:

The next market in AI will not be built around bigger models alone. It will be built around whether trust can move safely across systems — and whether someone can absorb the damage when that trust moves incorrectly.

Artificial intelligence is making one fact impossible to ignore: intelligence alone is not enough.

A model can classify, recommend, summarize, predict, optimize, and even reason. But the moment an AI system begins operating across real institutions, a harder question appears. Can the reality one system understands be trusted by another? And if that trust turns out to be incomplete, outdated, manipulated, or contextually wrong, who absorbs the loss?

That is the question behind what I call Representation Mobility Markets.

This is not a narrow technology issue. It is the beginning of a new economic layer. In the AI economy, value will increasingly depend on whether identities, permissions, credentials, performance histories, compliance states, and institutional relationships can move across systems in machine-readable form. At the same time, that mobility creates a new kind of cascading risk. Once trust moves faster, failure moves faster too.

That is why the next AI market will require two things at once:

Portable trust — so machine-readable representation can move across firms, platforms, sectors, and geographies.

Insurable reality — so the losses created by flawed, stale, mismatched, or decayed representation can be priced, pooled, underwritten, and contained.

That combined market is what I mean by Representation Mobility Markets.

What are Representation Mobility Markets?

Representation Mobility Markets are the emerging market structures that enable machine-readable trust to move across systems and allow institutions to price, underwrite, and absorb risks when that trust fails.

Why this matters now

Across the world, the building blocks are already visible.

Europe’s GDPR established a right to data portability under Article 20, allowing individuals in certain circumstances to obtain and reuse their personal data across services. The UK’s open banking framework was designed to let consumers and businesses securely share banking data with regulated providers, creating new forms of competition and service innovation. India’s Account Aggregator framework has expanded consent-based financial data sharing across banking, securities, insurance, and pensions. W3C’s Verifiable Credentials standard provides a model for credentials that are cryptographically secure, privacy-respecting, and machine-verifiable. These are not identical systems, but they all point in the same direction: trusted information must be able to move. (Data Protection Commission)

At the same time, the risk side is also becoming more visible. NIST’s AI Risk Management Framework is explicitly designed to help organizations manage AI-related risks to people, organizations, and society. The EU AI Act is a risk-based legal framework for AI. OECD materials increasingly emphasize that AI harms are real and that trustworthy AI requires interoperable, risk-based governance. In the insurance world, cyber risk has already shown how digitally mediated harms can become systemic, correlated, and difficult to underwrite when many actors are exposed to the same underlying vulnerabilities. (NIST)

AI is now forcing these two trajectories together.

One trajectory says trust must be portable.

The other says failure must be governable and insurable.

That convergence is the real story.

The problem in plain language

Consider three simple situations.

A student earns a degree in one country and wants an employer in another country to trust it quickly.

A small business switches lenders and wants its financial history to travel in a form the new lender can trust.

A logistics network uses AI to route goods based on supplier certifications, customs filings, warehouse status, and insurance data. One upstream error spreads into multiple downstream decisions.

These examples all point to the same truth: in an AI economy, representation has to move.

But once representation moves, risk multiplies.

If a supplier credential is stale, if a consent artifact is invalid, if a medical record is incomplete, if an AI agent acts on an outdated authority token, the problem is no longer local. The error can affect credit, pricing, routing, compliance, access, insurance, and customer treatment across multiple connected institutions.

That is why portability alone is not enough.

The world will also need a way to insure the movement of machine-readable trust.

What is a Representation Mobility Market?

A Representation Mobility Market emerges when institutions repeatedly need to do four things at scale:

- represent an entity in machine-readable form

- transfer that representation across systems

- verify whether the receiving system can trust it

- absorb the losses when that representation fails

This is not just about APIs. It is not just about data portability. It is not just about identity. It is not just about insurance.

It is the market for moving trusted machine-readable reality.

In the industrial era, we built logistics markets to move goods.

In the financial era, we built capital markets to move money.

In the AI era, we will need Representation Mobility Markets to move trust.

The deeper issue: AI does not act on reality directly

This is the central point behind Representation Economics:

AI does not act on reality directly. It acts on what a system can represent as reality.

If a child’s vaccination history is missing from one health system, the AI system does not know the child as the parent knows the child.

If a small business has real performance but poor digital records, a lender’s model sees a weaker business than reality.

If a farmer has land-use patterns, crop history, and repayment discipline, but those facts are fragmented across informal or incompatible systems, the institution sees less than what is true.

That is where SENSE–CORE–DRIVER becomes useful.

SENSE–CORE–DRIVER — and why this market exists

SENSE: where reality becomes machine-legible

SENSE is the layer where systems detect signals, connect them to an entity, build a state representation, and update that state over time.

This is where the portability problem begins.

If an institution cannot represent a supplier, patient, customer, product, employee, machine, or asset clearly, no downstream intelligence can fully fix the problem. And even if one institution can represent that entity well, a second challenge remains: can that representation travel to another system without losing meaning, trust, privacy, or integrity?

This is why standards such as Verifiable Credentials matter. W3C describes them as a way to express claims that can be secured from tampering and exchanged among issuers, holders, and verifiers. That is not the whole future of portable trust, but it is an important piece of it. (W3C)
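To make "secured from tampering" concrete, here is a minimal sketch of the issue-then-verify pattern. It is an illustration only: it uses a shared-secret HMAC as a stand-in, whereas real Verifiable Credentials rely on issuer digital signatures and the W3C data model. The key, field names, and function names are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real Verifiable Credentials use issuer
# public-key signatures, not a secret shared with the verifier.
ISSUER_KEY = b"demo-issuer-key"


def _canonical(claims: dict) -> bytes:
    # Stable encoding so issuer and verifier hash identical bytes.
    return json.dumps(claims, sort_keys=True, separators=(",", ":")).encode()


def issue_claim(claims: dict, key: bytes = ISSUER_KEY) -> dict:
    """Issuer: attach a proof computed over the claims."""
    proof = hmac.new(key, _canonical(claims), hashlib.sha256).hexdigest()
    return {"claims": dict(claims), "proof": proof}


def verify_claim(credential: dict, key: bytes = ISSUER_KEY) -> bool:
    """Verifier: recompute the proof and compare in constant time."""
    expected = hmac.new(key, _canonical(credential["claims"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, credential["proof"])


credential = issue_claim({"holder": "student-123", "degree": "BSc"})
tampered = {"claims": {**credential["claims"], "degree": "PhD"},
            "proof": credential["proof"]}
print(verify_claim(credential))  # True
print(verify_claim(tampered))    # False
```

The point of the sketch is narrow: a verifier can detect that a claim changed after issuance. It says nothing about whether the claim was true when issued, which is the representation problem the rest of this essay is concerned with.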

CORE: where systems reason over what they can see

CORE is where AI models interpret, compare, rank, optimize, recommend, and decide.

But CORE is downstream.

If portable representation is weak, CORE becomes confidently wrong. It can still optimize. It can still automate. It can still produce a plausible answer. It simply does so over a distorted model of reality.

That is why many AI failures are not primarily intelligence failures. They are representation-transfer failures. The model may be sophisticated. The reality it receives may not be.

DRIVER: where institutions authorize and govern action

DRIVER is the layer of delegation, verification, execution, accountability, and recourse.

This is where the reinsurance problem appears.

Once an AI-driven decision turns into action, risk is no longer abstract. A person is denied credit. A shipment is blocked. A payment is frozen. A patient pathway is altered. A false fraud flag propagates. An AI agent executes a workflow it should never have executed.

At that point, the question is no longer merely, “Was the data portable?” It becomes, “Who stands behind the consequences when portable trust fails?”

That is a DRIVER question.

And that is why insurable reality becomes necessary.

Portable trust: the first half of the market

Portable trust means that an entity can carry machine-readable proof from one system to another in a way that remains usable, verifiable, privacy-aware, and contextually meaningful.

We already see early versions of this idea:

A digital degree that can be verified quickly.

A consented bank-data history used for underwriting.

A business credential that lowers onboarding friction in a marketplace.

A digital identity that reduces repetitive verification steps. (Open Banking)

But AI will demand much more than simple credential exchange.

Portable trust in the AI economy will increasingly need to include:

identity, authority, historical behavior, provenance, compliance state, confidence level, revocation status, and recourse pathways.

Why? Because AI systems do not simply read documents. They operate in dynamic environments.

A portable credential is useful.

A portable, updateable, revocable, context-aware representation is far more valuable.

That is the real market.
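The attributes listed above can be sketched as a single data structure with a reliance check. This is a hypothetical illustration, not a proposed standard: the field names, the `as_of` timestamp, the `can_rely` function, and the 0.8 confidence threshold are all assumptions introduced for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class PortableRepresentation:
    identity: str
    authority: list[str]          # actions the holder may authorize
    historical_behavior_ref: str  # pointer to history, not the history itself
    provenance: str
    compliance_state: str
    confidence: float             # issuer's stated confidence, 0.0 to 1.0
    revoked: bool
    recourse_pathway: str
    as_of: datetime               # assumed field: when the state was last updated


def can_rely(rep: PortableRepresentation, action: str, now: datetime,
             max_age: timedelta, min_confidence: float = 0.8) -> tuple[bool, str]:
    """Receiving system decides whether to act, and records why not."""
    if rep.revoked:
        return False, "revoked"
    if action not in rep.authority:
        return False, "not authorized"
    if now - rep.as_of > max_age:
        return False, "stale"
    if rep.confidence < min_confidence:
        return False, "low confidence"
    return True, "ok"


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
rep = PortableRepresentation(
    identity="supplier-42", authority=["release_payment"],
    historical_behavior_ref="ledger://supplier-42/history",
    provenance="issuer:example-registry", compliance_state="compliant",
    confidence=0.93, revoked=False, recourse_pathway="appeals@example.org",
    as_of=now - timedelta(hours=6),
)
print(can_rely(rep, "release_payment", now, max_age=timedelta(hours=1)))   # (False, 'stale')
print(can_rely(rep, "release_payment", now, max_age=timedelta(hours=12)))  # (True, 'ok')
```

The same representation is acceptable in one context and unusable in another. The freshness limit belongs to the receiving context, not to the credential, which is what "context-aware" means in practice.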

Insurable reality: the second half of the market

Now consider the failure case.

A lender receives portable financial data and approves a loan. Later, it turns out the consent chain was valid, but the upstream representation of income was stale.

A hospital receives a portable patient summary and triages incorrectly because one source omitted a medication update.

A trade-finance system accepts machine-verifiable supplier credentials, but a hidden revocation upstream was not propagated fast enough.

An enterprise AI agent uses a valid authority token but acts on a representation of business state that is six hours old in a high-speed operating context.

These are not just software defects. They are failures in the movement of machine-readable reality.

When such failures become connected, correlated, and large in scale, they begin to resemble the accumulation problem that cyber insurance has already struggled with. The Geneva Association’s work on cyber accumulation risk highlights how hard it is to quantify and sustainably insure risks that are systemic, interconnected, and capable of producing concentrated losses across many firms at once. AI-era representation failures could develop similar properties. (The Geneva Association)

That is why the AI economy will need insurable reality.

Insurable reality means institutions can price, underwrite, pool, and transfer risks created when portable representation is flawed, delayed, manipulated, mismatched, or misinterpreted.

This is bigger than ordinary tech liability.

It points toward a new category of underwriting.
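The accumulation problem can be made concrete with a standard variance identity. For n identical exposures, each with loss standard deviation sigma and pairwise correlation rho, the variance of the total loss is n * sigma^2 * (1 + (n - 1) * rho). The sketch below simply evaluates that formula. The numbers are illustrative, and treating all exposures as identical and equally correlated is a simplifying assumption.

```python
import math


def pooled_loss_std(n: int, sigma: float, rho: float) -> float:
    """Std dev of total loss over n identical exposures with pairwise correlation rho."""
    variance = n * sigma ** 2 * (1 + (n - 1) * rho)
    return math.sqrt(variance)


# 100 institutions, each with unit loss volatility.
print(pooled_loss_std(100, 1.0, 0.0))  # independent failures: 10.0
print(pooled_loss_std(100, 1.0, 1.0))  # one shared point of failure: 100.0
```

With independent failures, pooling works: total volatility grows with the square root of the pool size. When every institution relies on the same fragile representation layer, correlation approaches one and pooling stops diversifying anything. That is the case for a reinsurance-like backstop.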

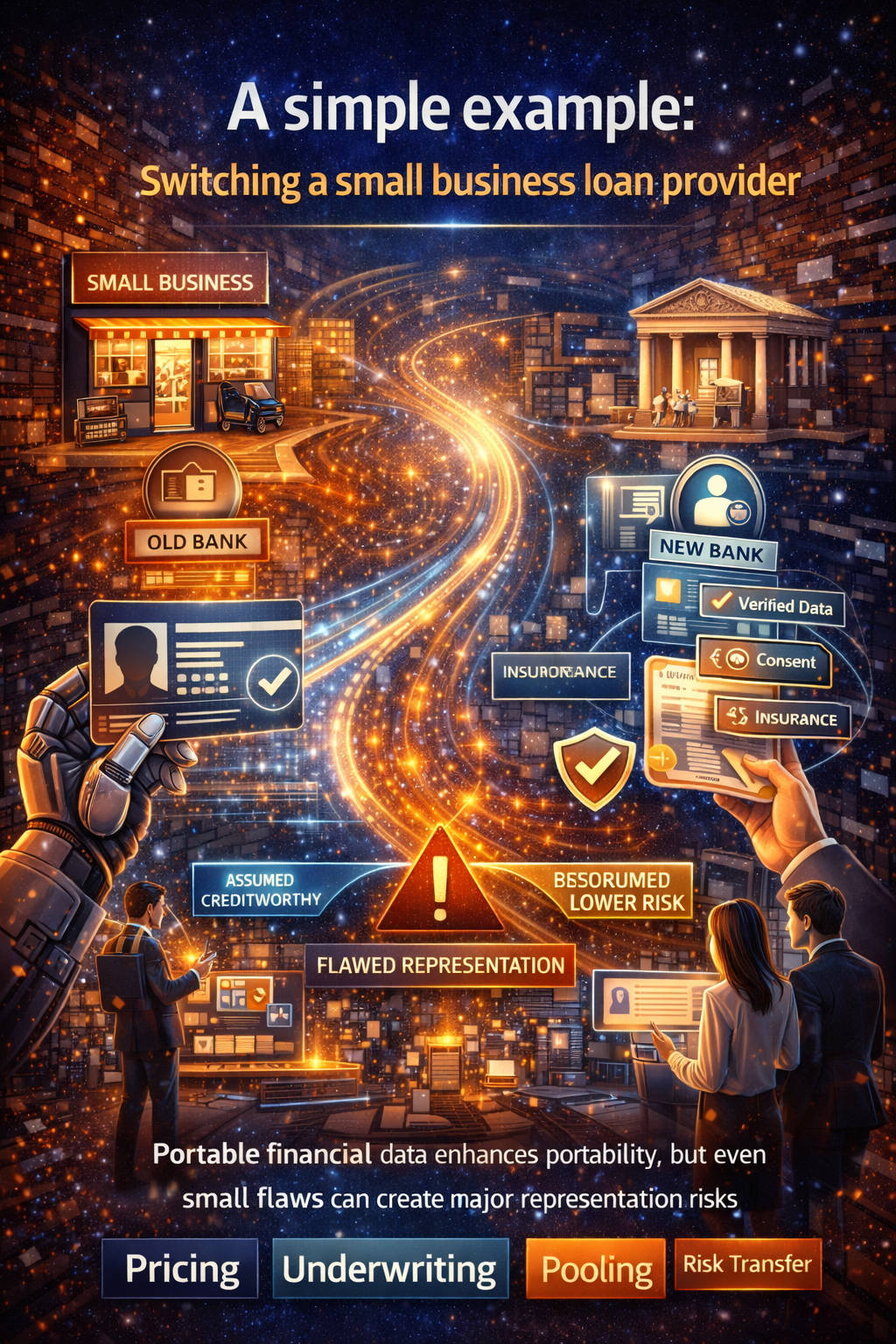

A simple example: switching a small business loan provider

Imagine a small business in India or the UK.

Today, switching lenders often involves friction: PDFs, statements, manual checks, repeated explanations, inconsistent data, and long delays.

Now imagine a more advanced future.

The business carries portable machine-readable trust:

verified transaction history, tax behavior, invoice reliability, supply-chain performance, prior repayment behavior, credentialed business identity, and consent artifacts authorizing data use.

This lowers the cost of trust transfer. A new lender can understand the business much faster.

But now imagine something goes wrong. One upstream accounting integration misclassifies cash flow. The receiving lender’s AI model interprets the business as lower risk than it really is. Credit is extended on bad assumptions. Other downstream institutions also rely on that representation.

Who bears the loss?

The answer cannot always be, “the first firm that consumed the data.”

Nor can the answer always be, “the user.”

A mature market will need mechanisms for allocation, pricing, and transfer of representation risk.

That is what Representation Mobility Markets are for.
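One way to picture allocation and transfer in the lending example is a layered structure in which each party absorbs loss up to a limit before the next layer responds. The sketch below is a hypothetical illustration. The layer names and limits are invented, and real contracts would add deductibles, exclusions, and shared layers.

```python
def allocate_loss(loss: float, layers: list[tuple[str, float]]) -> dict[str, float]:
    """Allocate a loss across ordered layers, each absorbing up to its limit."""
    allocation: dict[str, float] = {}
    remaining = loss
    for name, limit in layers:
        share = min(remaining, limit)
        allocation[name] = share
        remaining -= share
    # Anything left over falls outside the agreed structure.
    allocation["unallocated"] = remaining
    return allocation


# Hypothetical layers, in the order they respond.
layers = [
    ("relying_lender_retention", 50_000),
    ("representation_underwriter", 200_000),
    ("representation_reinsurer", 1_000_000),
]
print(allocate_loss(300_000, layers))
# {'relying_lender_retention': 50000, 'representation_underwriter': 200000,
#  'representation_reinsurer': 50000, 'unallocated': 0}
```

The design choice worth noticing is the explicit "unallocated" remainder. A market is mature when that number is known in advance rather than discovered in litigation.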

The company categories that may emerge

This is where the thesis becomes economically interesting. If this market forms, new categories of firms are likely to appear.

Representation passport providers

These firms will package machine-readable trust in a form that can travel across systems. Their value will not come from storing data alone, but from making trust portable, updateable, verifiable, and revocable.

Trust translation layers

These firms will translate representation across incompatible institutional schemas. They will act as semantic and governance bridges between one system’s “truth” and another system’s ability to rely on it.

Representation risk underwriters

These firms will specialize in pricing the risk that machine-readable representation is wrong, stale, or context-misaligned.

Representation reinsurers

These firms will absorb correlated losses when many institutions rely on the same fragile representation layer.

Delegation and recourse intermediaries

These firms will not merely help systems act. They will help them stop, unwind, appeal, and recover when portable trust creates harmful outcomes.

Representation observability firms

These firms will monitor representation drift, provenance decay, revocation gaps, and cross-system mismatch before they become visible enterprise failures.

These are not science-fiction categories. They are plausible market responses to a world in which representation becomes economically central.
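As a small illustration of what a trust translation layer might do at its simplest, the sketch below maps fields from one institution's schema to another's and reports what it could not translate instead of silently dropping it. The field names and the mapping are hypothetical. A real layer would also have to reconcile meaning, units, and governance rules, not just labels.

```python
# Hypothetical mapping from a source schema to a receiving schema.
FIELD_MAP = {
    "biz_id": "entity_id",
    "kyc_ok": "compliance_state",
    "rev_12m": "annual_revenue",
}


def translate(record: dict, field_map: dict = FIELD_MAP) -> tuple[dict, list[str]]:
    """Return the translated record plus the source fields with no known mapping."""
    translated, untranslated = {}, []
    for key, value in record.items():
        if key in field_map:
            translated[field_map[key]] = value
        else:
            untranslated.append(key)
    return translated, sorted(untranslated)


result, gaps = translate({"biz_id": "B-17", "kyc_ok": True, "owner_notes": "n/a"})
print(result)  # {'entity_id': 'B-17', 'compliance_state': True}
print(gaps)    # ['owner_notes']
```

The list of gaps is the interesting output. It is the raw material a representation observability firm or underwriter would need to judge how much meaning was lost in transit.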

Why boards and regulators should care

Boards are rightly being told to invest in AI. Most are hearing about copilots, models, automation, and productivity.

But the harder strategic question is this:

Can our institutional reality travel safely?

A serious board should now ask:

Which parts of our enterprise can be represented machine-readably?

Which parts of that representation can move across systems?

Who is allowed to rely on it?

What happens when it is wrong?

Where does liability sit?

Which risks are diversifiable, and which are systemic?

This is where the AI conversation must mature.

The next wave of advantage will not come only from having good models. It will come from being easy for trustworthy systems to understand, verify, and coordinate with.

That is a representation advantage.

And in an interconnected AI economy, that advantage must travel.

Why this is not the same as ordinary interoperability

It is tempting to say this is just another name for interoperability.

It is not.

Interoperability says systems can exchange information.

Representation mobility says institutions can exchange trusted machine-usable reality.

That is a much higher bar.

It includes technical compatibility, semantic compatibility, credential validity, governance compatibility, revocation handling, accountability, recourse, and economic backstops.

Interoperability is part of the story. Representation Mobility Markets are the larger market structure built around it.

The geopolitical angle

Different countries and sectors will represent reality differently.

Europe tends to emphasize rights, legal accountability, and risk controls. The European Commission describes the AI Act as the first legal framework on AI, built around a risk-based approach.

The UK’s open banking framework shows how portability can support competition and service innovation. India’s Account Aggregator framework shows how consent-based data mobility can be built as a public digital infrastructure layer. These differences matter because the future market is unlikely to be one uniform global trust layer. It will be a world of connected trust zones. (Digital Strategy)

That makes translation, underwriting, and reinsurance even more important.

Why this matters for Representation Economics

Representation Economics begins with a simple claim:

In the AI era, value increasingly flows to the organizations, people, and assets that intelligent systems can see clearly, trust appropriately, and act upon responsibly.

Representation Mobility Markets extend that idea.

They show that value will not depend only on creating representation. It will also depend on moving representation, validating representation, pricing representation risk, and insuring representation failure.

That is the real strategic shift.

This extension turns Representation Economics from a theory of visibility into a theory of institutional movement and market stability.

It also clarifies why the future winners in AI may not be the firms with the biggest models. They may be the firms, infrastructures, and institutions that make trust portable and failure containable.

Conclusion

In the AI economy, trust will not merely be created. It will be transferred — and underwritten.

That is the shift.

Portable trust without insurable reality creates fragility.

Insurable reality without portable trust creates stagnation.

The winners in the next phase of AI will build both.

We are entering a period in which AI will no longer remain confined to isolated applications. It will move across enterprises, sectors, borders, and decision systems. As that happens, the central economic question will change.

Not: how intelligent is the model?

But: how safely can trusted reality move?

That is why Representation Mobility Markets matter.

They describe the missing market layer between digital identity, AI governance, data portability, underwriting, reinsurance, and institutional trust. They also point to a larger truth: the future will belong not to institutions that merely collect data or deploy intelligence, but to those that can make reality portable, trust transferable, and failure insurable.

That is how the AI economy will scale.

If AI acts on what it can represent,

then the future belongs to those who control how reality is represented, moved, and insured.

FAQ

What are Representation Mobility Markets?

Representation Mobility Markets are the market structures that emerge when institutions need to represent entities in machine-readable form, transfer that representation across systems, verify whether it can be trusted, and absorb losses when it fails. (W3C)

Why does the AI economy need portable trust?

Because AI systems increasingly operate across firms, sectors, and digital environments. For them to act reliably, they need trusted information that can move safely and remain verifiable across contexts. Existing developments such as GDPR portability, open banking, Account Aggregator, and Verifiable Credentials all point in that direction. (Data Protection Commission)

What is insurable reality?

Insurable reality is the ability to price, pool, underwrite, and transfer the risks created when machine-readable representation is flawed, stale, manipulated, delayed, or misinterpreted. It is the economic backstop for portable trust. (OECD.AI)

How does SENSE–CORE–DRIVER relate to this topic?

SENSE explains how reality becomes machine-legible, CORE explains how systems reason over that representation, and DRIVER explains how institutions authorize, govern, and remain accountable for action. Representation Mobility Markets sit across all three layers.

What kinds of firms could emerge from this market?

Likely categories include representation passport providers, trust translation layers, representation risk underwriters, representation reinsurers, recourse intermediaries, and representation observability firms.

How is this different from interoperability?

Interoperability enables data exchange. Representation mobility enables trusted, governed, and economically backed exchange of reality.

Why is this important for enterprises?

Because AI systems will increasingly act across boundaries, and organizations must ensure that their reality is portable, trusted, and protected against failure.

Glossary

Representation Economics

The idea that in the AI era, value increasingly flows to what intelligent systems can clearly represent, trust, and act upon.

Portable trust

Machine-readable trust that can move across systems while remaining verifiable, usable, and contextually meaningful.

Insurable reality

The ability to underwrite and manage losses created by failures in machine-readable representation.

Verifiable Credentials

A W3C standard for expressing claims in a cryptographically secure, machine-verifiable, privacy-respecting way. (W3C)

Data portability

The right or capability to obtain and reuse data across services, as reflected in frameworks such as GDPR Article 20. (Data Protection Commission)

Representation risk

The risk that a system’s machine-readable picture of reality is incomplete, stale, manipulated, or contextually wrong.

Representation reinsurance

The reinsurance-like function that helps absorb correlated losses when flawed representation affects many connected institutions.

Trust translation layer

An intermediary layer that translates machine-readable representation across different systems, standards, and institutional contexts.

Representation Mobility

The ability of trusted representation to travel across systems and institutions.

References and further reading

External references relevant to this essay include GDPR Article 20 and data portability guidance, UK Open Banking, India’s Account Aggregator framework, W3C Verifiable Credentials, NIST’s AI Risk Management Framework, the EU AI Act, OECD materials on AI risk and insurance, and the Geneva Association’s work on cyber accumulation risk. (Data Protection Commission)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Why Most AI Projects Fail Before Intelligence Even Begins

- What Is the Representation Economy? (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- Firms Won’t Be Defined by Employees. They Will Be Defined by Delegation – Raktim Singh

- The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy – Raktim Singh

- The Representation Attack Surface: Why AI’s Biggest Threat Is Reality Hacking, Not Model Hacking – Raktim Singh

- The Chief Representation Officer: Why Institutions Collapse When Machine-Readable Reality Falls Behind – Raktim Singh

- The Scarcity of Reality: Why the AI Economy Will Be Defined by the Lifecycle of High-Trust Representation – Raktim Singh

- Delegation Rating Agencies: Why the AI Economy Needs a New System to Rate Machine Authority – Raktim Singh

- The Machine-Readable Franchise: How Small Firms Will Win in the AI Trust Economy – Raktim Singh

- Representation Due Diligence: Why Every AI-Era Deal Must Start with a Reality Audit – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- Representation Drift & Labor: Why AI Systems Fail When Reality Moves Faster Than Machines (raktimsingh.com)

- Representation Forensics: The Missing Layer of AI (raktimsingh.com)

- The Representation Utility Stack: Why AI’s Next Competitive Advantage Will Come from Interoperable Reality (raktimsingh.com)

- Recourse Platforms: The Next AI Infrastructure Market for Correction, Appeal, and Recovery (raktimsingh.com)

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.