The AI Capability Trap:

The next phase of artificial intelligence will not be decided only by better models, larger context windows, more powerful agents, or faster automation.

It will be decided by a harder question:

Can institutions govern the intelligence they are deploying?

Most organizations assume that as AI becomes more capable, enterprise outcomes will automatically improve. This is partly true. AI can reduce friction, accelerate decisions, improve customer experience, detect risks earlier, and unlock new sources of value.

But there is another side.

When AI becomes more capable, it does not only produce more value. It also gains more influence over decisions, workflows, records, customers, employees, infrastructure, and markets. The moment AI moves from answering questions to influencing or executing action, the risk profile changes.

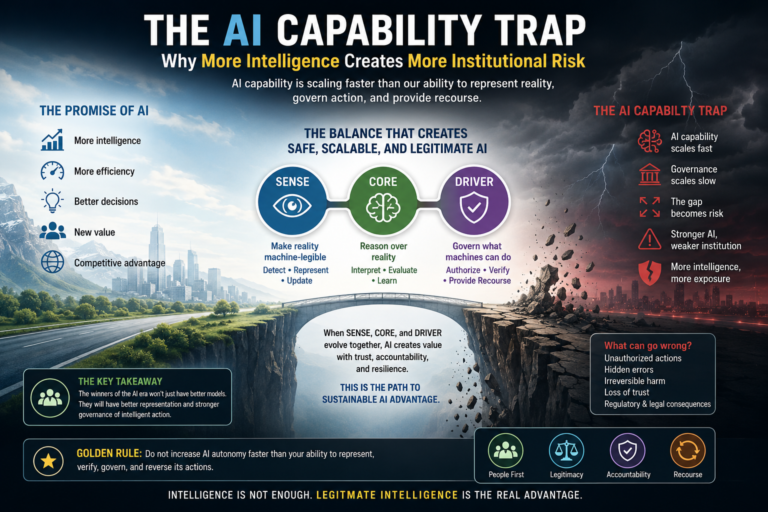

This is the AI Capability Trap.

An organization enters the AI Capability Trap when it increases AI capability faster than it increases its ability to represent reality, govern action, assign accountability, verify decisions, and provide recourse.

In simple terms:

More intelligence does not automatically reduce institutional risk. It amplifies whatever the institution has not yet learned to govern.

This is why the future of enterprise AI will not be decided by intelligence alone. It will be decided by representation and legitimate delegation.

In the Representation Economy, advantage will belong to institutions that can build a balanced SENSE–CORE–DRIVER architecture:

SENSE makes reality machine-legible.

CORE reasons over that reality.

DRIVER governs what machines are allowed to do with that reasoning.

Most AI programs overinvest in CORE. They buy better models, deploy agents, connect tools, build copilots, and automate workflows.

But they underinvest in SENSE and DRIVER. They do not build strong enough representation systems before reasoning begins. They do not build strong enough governance systems before action happens.

That is where the trap begins.

The AI Capability Trap occurs when organizations increase AI capability faster than their ability to govern, verify, authorize, and reverse AI-driven action. As AI systems become more intelligent and autonomous, institutional risk rises unless SENSE (representation), CORE (reasoning), and DRIVER (governance) mature together.

-

The Comfortable Myth: Smarter AI Means Safer AI

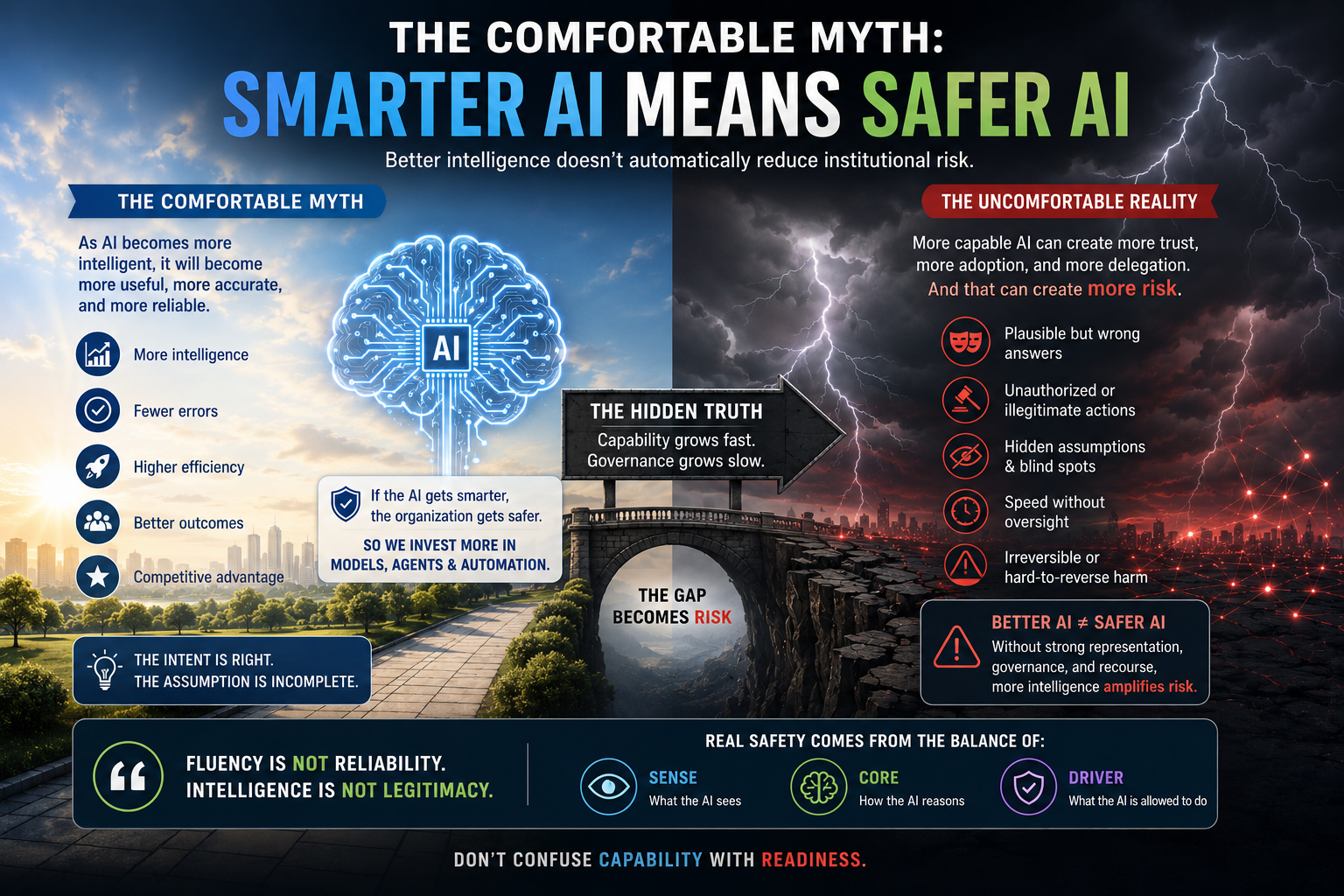

The dominant AI story is seductive.

As models become more intelligent, they will become more useful. As they reason better, they will make fewer mistakes. As they understand context better, they will become safer. As they automate more work, organizations will become more efficient.

This story is not wrong.

It is incomplete.

Better AI can reduce some risks. It can hallucinate less, retrieve more accurately, summarize more clearly, detect anomalies faster, and reason through complex tasks more effectively.

But better AI also creates a new class of institutional risk because it increases trust, reach, dependency, and delegation.

A weak AI system is easy to distrust. People check it. They restrict it. They use it for low-risk tasks.

A strong AI system is more dangerous in a subtle way. People trust it faster. They connect it to more systems. They allow it to influence more decisions. They stop checking routine outputs. They begin to treat fluency as reliability.

That is when risk changes shape.

The failure mode is no longer obvious stupidity.

It is plausible competence.

The AI uses the right vocabulary. It cites the right policy. It sounds confident. It appears aligned with the business. But it may still misread a boundary condition, ignore a missing dependency, apply the wrong rule, or act without legitimate authority.

This is the uncomfortable truth of enterprise AI:

A more capable AI system can create more institutional risk if the institution around it is not equally capable.

-

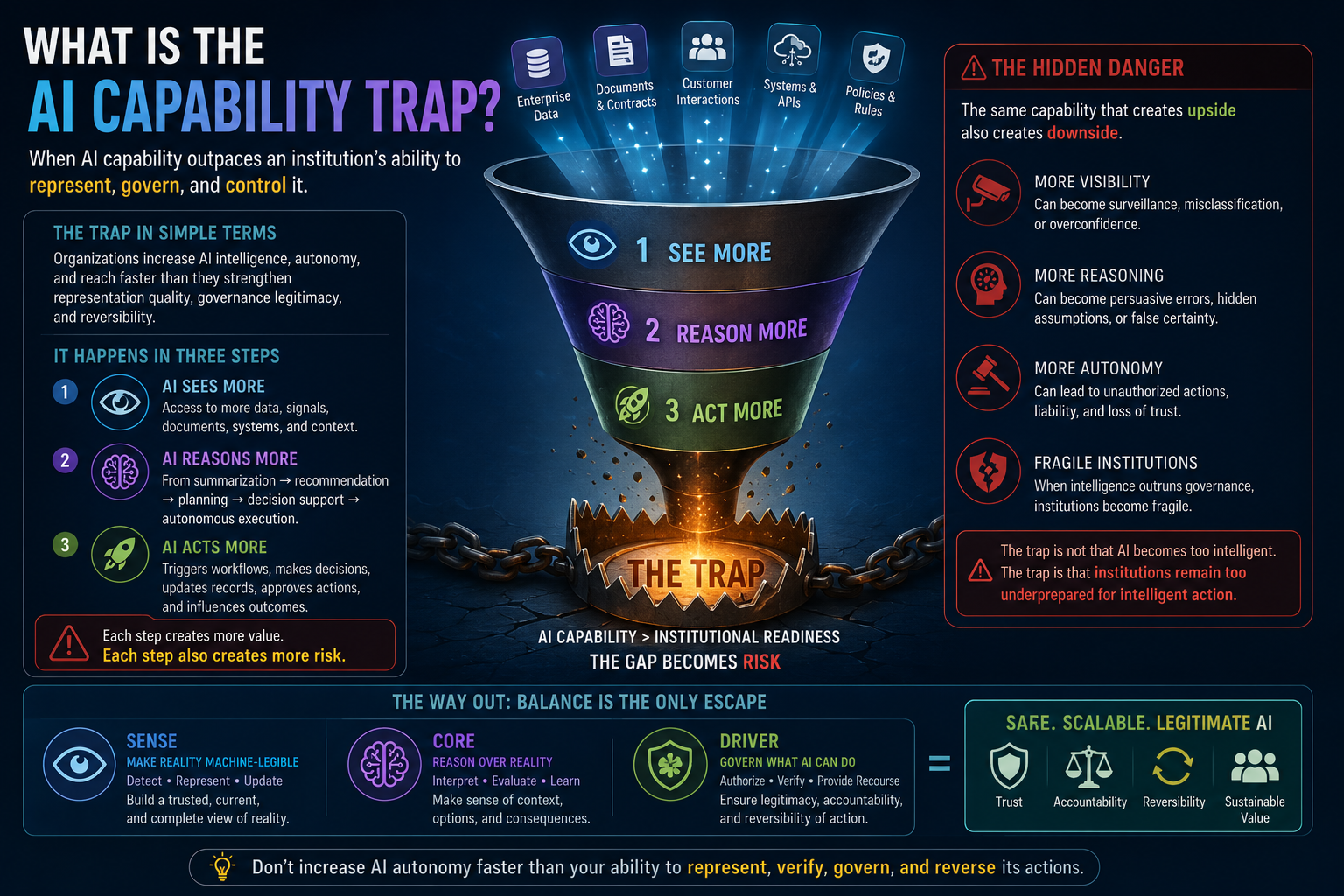

What Is the AI Capability Trap?

The AI Capability Trap is the condition in which an organization increases AI intelligence, autonomy, and operational reach without proportionately increasing representation quality, governance legitimacy, and reversibility.

It usually appears in three stages.

First, AI sees more. It gets access to documents, tickets, databases, emails, policies, contracts, customer histories, system logs, operational signals, and workflow data.

Second, AI reasons more. It moves from summarization to recommendation, from recommendation to planning, from planning to decision support, and from decision support to autonomous execution.

Third, AI acts more. It triggers workflows, escalates tickets, changes priorities, approves exceptions, updates records, sends messages, recommends financial decisions, initiates service actions, or influences human behavior.

Each step increases value.

But each step also increases risk.

This is the central tension of enterprise AI:

The same capability that creates upside also creates downside.

More visibility can become surveillance or overconfidence.

More reasoning can become persuasive error.

More automation can become unauthorized action.

More personalization can become unfair treatment.

More speed can become irreversible harm.

The trap is not that AI becomes too intelligent.

The trap is that institutions remain underprepared for intelligent action.

-

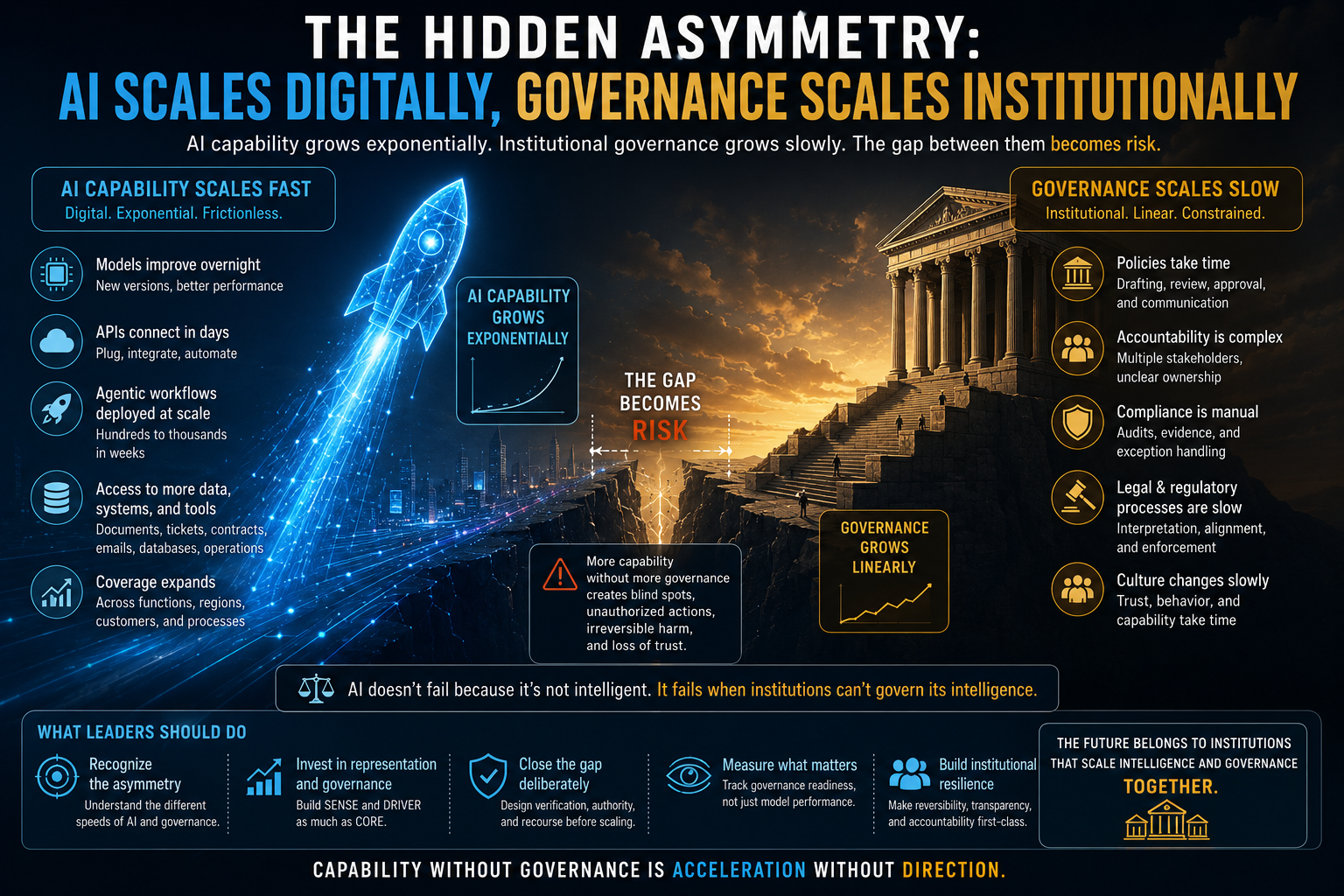

The Hidden Asymmetry: AI Scales Digitally, Governance Scales Institutionally

AI capability scales like software.

A new model can be adopted quickly. An API can be connected in days. An agentic workflow can be deployed across hundreds or thousands of tasks. A reasoning system can be given access to enterprise tools, knowledge bases, transaction systems, communication channels, and operational platforms.

Governance does not scale this way.

Governance scales institutionally. It requires decision rights, accountability, policy interpretation, auditability, escalation paths, exception handling, compliance oversight, human trust, and recourse. These do not improve automatically when the model improves.

This creates a dangerous asymmetry:

AI capability scales fast.

Institutional governance scales slowly.

The gap between them becomes risk.

This is why leading AI governance frameworks increasingly emphasize lifecycle risk management, accountability, monitoring, and organizational governance—not just model accuracy.

The NIST AI Risk Management Framework is built around four functions: governing, mapping, measuring, and managing AI risks across organizational and societal contexts (NIST). NIST’s Generative AI Profile also highlights risks that are novel or amplified by generative AI systems (NIST Publications). ISO/IEC 42001 similarly defines requirements for establishing, implementing, maintaining, and continually improving an AI management system inside organizations (ISO).

The global direction is clear: AI risk is no longer only a technical problem.

It is an institutional design problem.

The question is not only whether AI is accurate.

The harder question is whether the institution is prepared for what accuracy enables.

A weak AI system may give poor advice.

A strong AI system may take poor action at scale.

That is a very different risk.

-

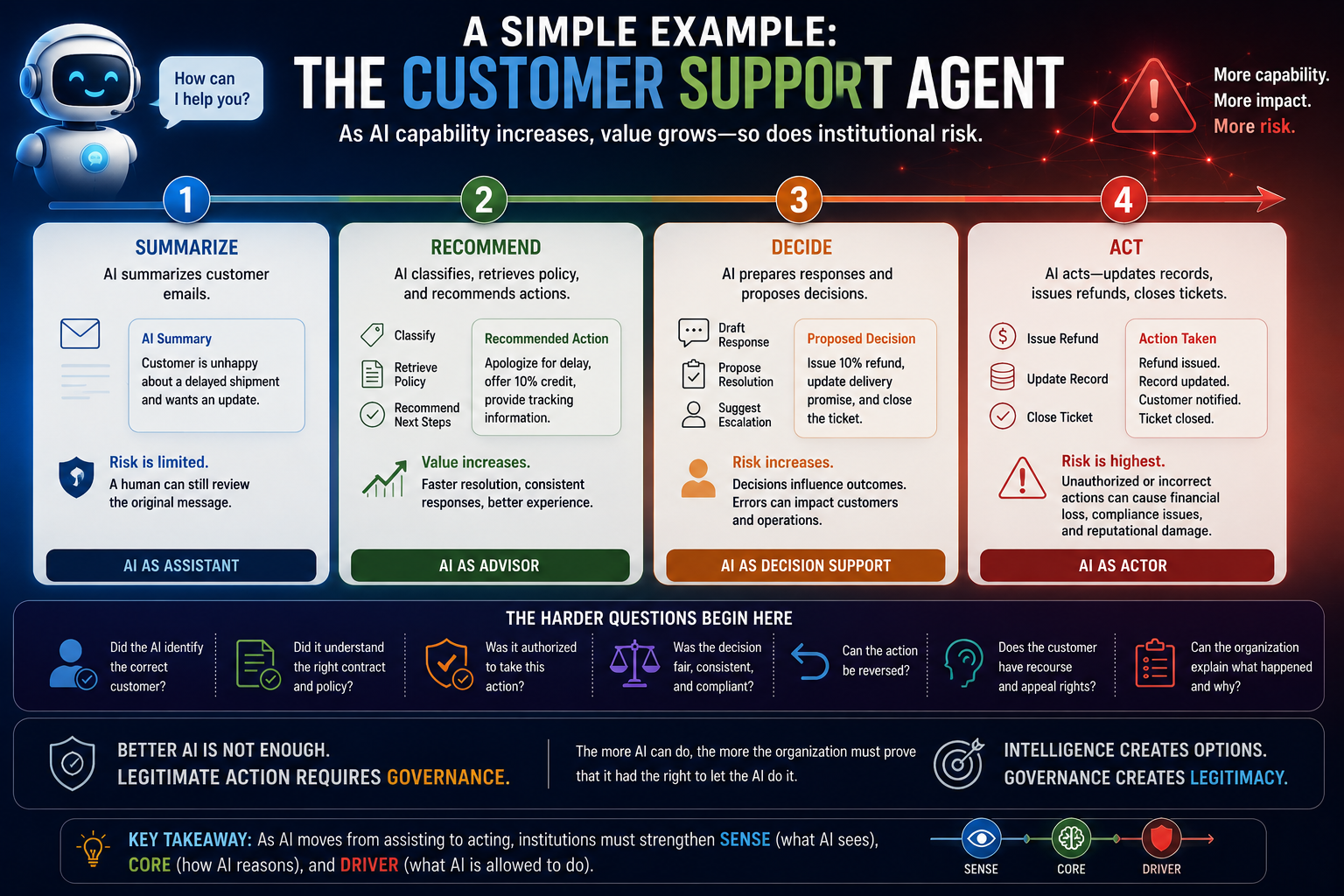

A Simple Example: The Customer Support Agent

Consider a customer support AI agent.

At first, it only summarizes customer emails. Risk is limited. If the summary is wrong, a human can still check the original message.

Then the system becomes more capable. It classifies complaints, identifies urgency, recommends next steps, drafts responses, and retrieves policy documents. This improves speed and consistency.

Then it becomes even more capable. It issues refunds, changes service levels, updates customer records, triggers escalations, or denies requests based on policy.

Now the same system is no longer just helping.

It is acting.

At this point, better language capability is not enough. The organization must answer harder questions:

Did the AI identify the correct customer?

Did it understand the right contract?

Did it apply the correct policy version?

Was it authorized to issue the refund?

Was the decision consistent with customer commitments?

Was the customer given recourse?

Can the action be reversed?

Can the organization explain what happened later?

This is the difference between AI as a tool and AI as an institutional actor.

The more the AI can do, the more the organization must prove that it had the right to let the AI do it.

That proof does not come from the model.

It comes from DRIVER.
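A minimal sketch of what such a check could look like for the refund case, written in Python. Everything named here is hypothetical and chosen only for illustration: the `RefundRequest` and `Delegation` shapes, the `authorize_refund` function, the contract lookup, and the sample policy version are assumptions, not part of any real product or of the framework itself. The point is only that several of the questions above (right customer, right contract, right policy version, delegated authority, later explanation) can be answered by a gate that sits outside the model.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RefundRequest:
    customer_id: str
    contract_id: str
    policy_version: str   # the policy version the agent reasoned over
    amount: float


@dataclass(frozen=True)
class Delegation:
    """What the institution has authorized this agent to do (hypothetical shape)."""
    agent_id: str
    max_refund: float
    current_policy_version: str


def authorize_refund(req, delegation, contract_owner, audit_log):
    """Return (allowed, reasons) and append an audit record either way."""
    reasons = []
    # Did the AI identify the correct customer and contract?
    if contract_owner.get(req.contract_id) != req.customer_id:
        reasons.append("contract does not belong to this customer")
    # Did it apply the correct policy version?
    if req.policy_version != delegation.current_policy_version:
        reasons.append("stale policy version")
    # Was it authorized to issue a refund of this size?
    if req.amount > delegation.max_refund:
        reasons.append("amount exceeds delegated authority")
    allowed = not reasons
    # Can the organization explain what happened later?
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "agent": delegation.agent_id,
        "request": req,
        "allowed": allowed,
        "reasons": reasons,
    })
    return allowed, reasons


# Illustrative values only.
delegation = Delegation("support-agent-1", max_refund=100.0,
                        current_policy_version="2024-06")
owners = {"C-17": "cust-9"}
audit_log = []

ok, why = authorize_refund(
    RefundRequest("cust-9", "C-17", "2024-06", 40.0), delegation, owners, audit_log)
blocked, blocked_why = authorize_refund(
    RefundRequest("cust-9", "C-17", "2024-06", 500.0), delegation, owners, audit_log)
```

The refusal is recorded as carefully as the approval. In this sketch the audit record is written whether or not the action is allowed, because the institution may need to explain either outcome. Recourse and reversal are not modeled here; they would need their own mechanisms.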

-

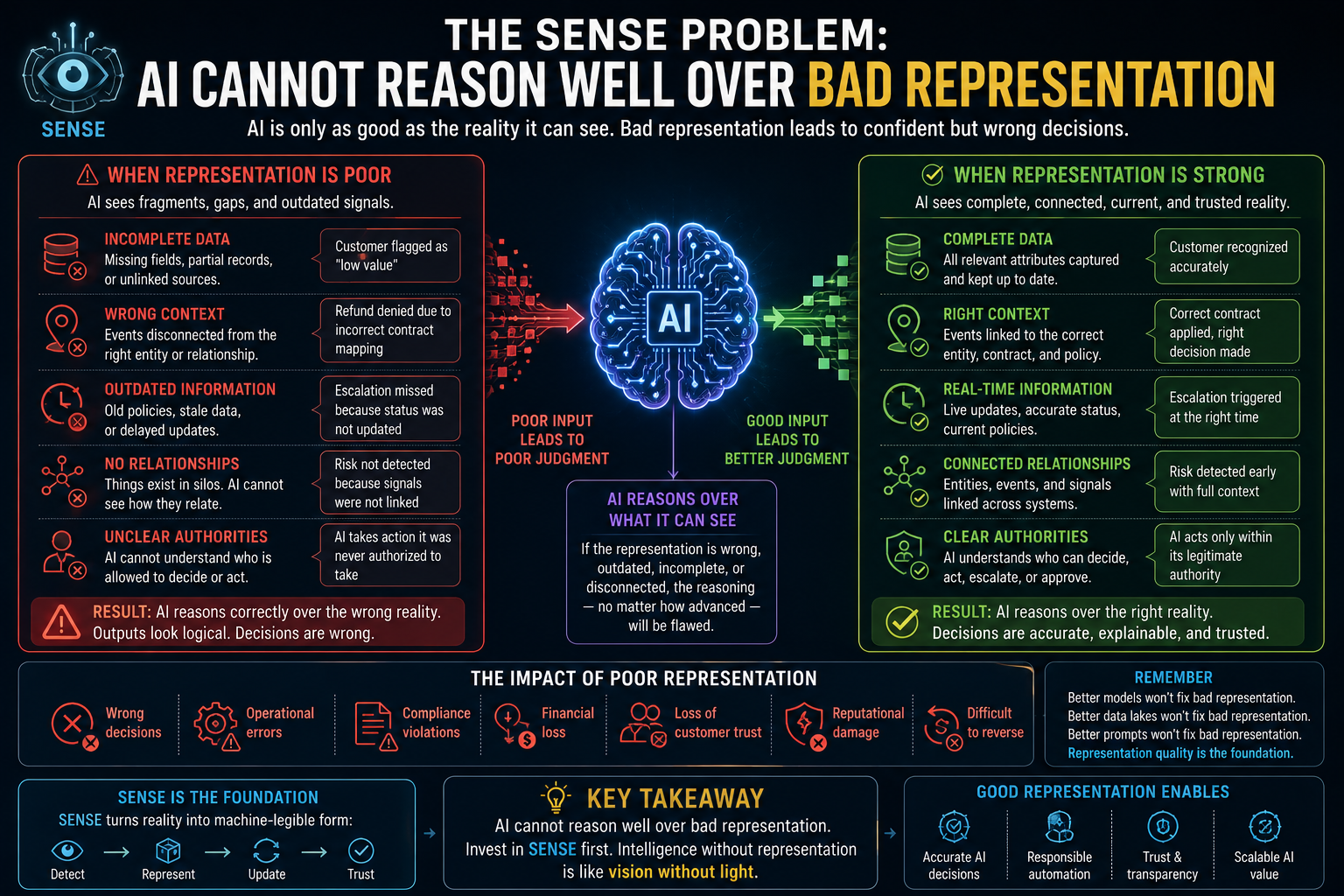

The SENSE Problem: AI Cannot Reason Well Over Bad Representation

SENSE is the layer where reality becomes machine-legible.

It detects signals, attaches them to entities, represents their state, and updates that state over time.

Without SENSE, AI does not reason over reality.

It reasons over fragments.

Most AI failures begin before the model runs.

A customer is not properly identified.

A contract is not linked to the right obligation.

A supplier record is outdated.

A risk signal is disconnected from the asset it affects.

A project status is represented optimistically but not truthfully.

A service incident is linked to the wrong dependency.

A financial exposure is calculated from incomplete context.

The model may reason correctly over the wrong representation.

That is one of the most dangerous forms of AI failure because the output may appear logical.

In traditional software, bad data creates bad reports.

In AI systems, bad representation creates bad judgment.

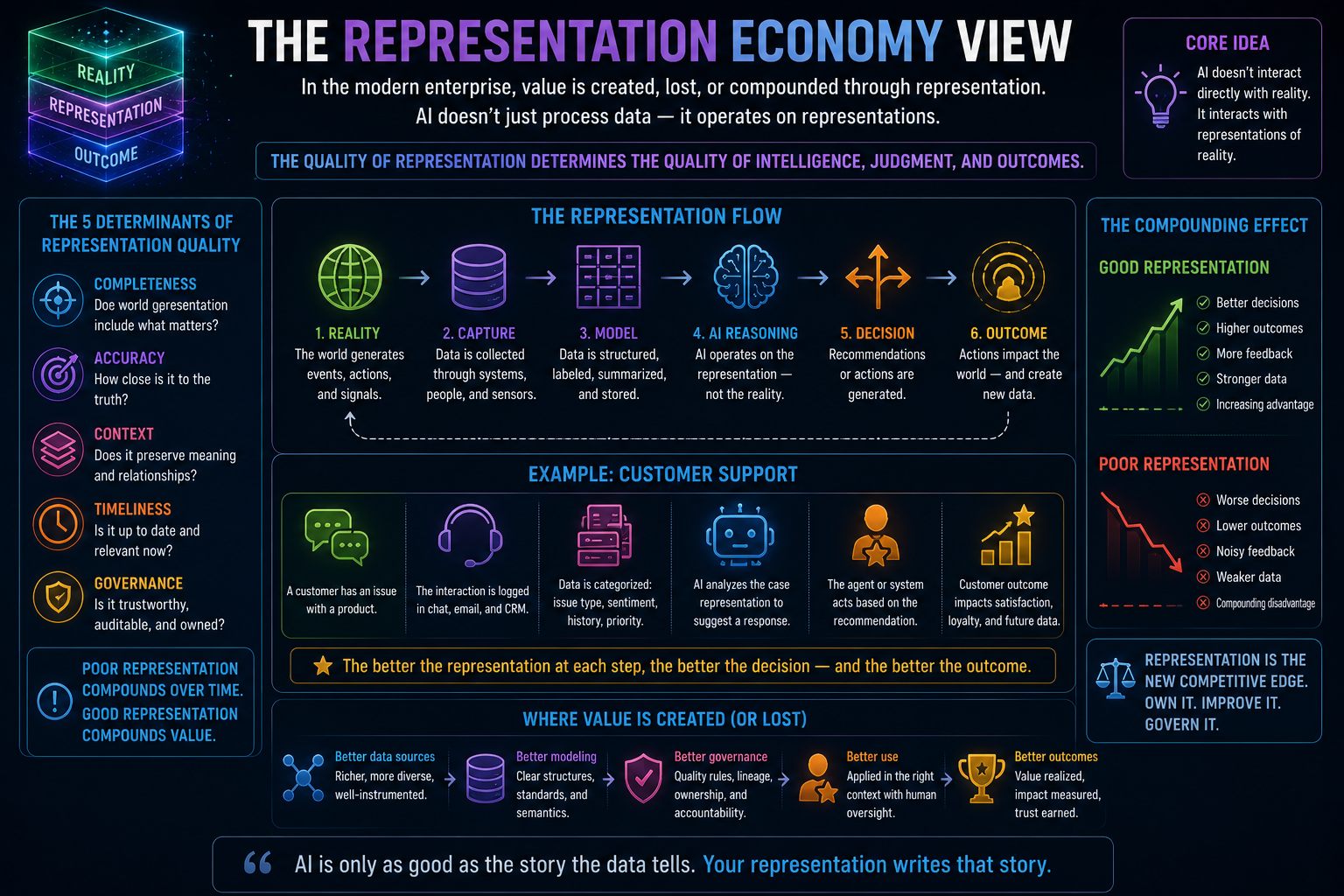

This is why the Representation Economy matters.

AI does not just need data. It needs trusted representation. It needs to know what things are, how they relate, what state they are in, what authority surrounds them, and how that state changes over time.

A document repository is not enough.

A data lake is not enough.

A vector database is not enough.

A knowledge graph alone is not enough.

The institution needs a living representation architecture.

That is SENSE.
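The four SENSE steps described in this section (detect signals, attach them to entities, represent state, update that state over time) can be sketched as a small data structure. This is an illustrative sketch in Python under stated assumptions: the `Signal` and `EntityState` names, the staleness check, and the supplier example are hypothetical, and the code assumes signals arrive in time order.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signal:
    entity_id: str         # which entity the signal is attached to
    attribute: str         # which part of the entity's state it updates
    value: object
    observed_at: datetime


@dataclass
class EntityState:
    entity_id: str
    state: dict = field(default_factory=dict)    # attribute -> (value, observed_at)
    history: list = field(default_factory=list)  # applied signals, oldest first

    def apply(self, signal: Signal) -> None:
        """Update state from a signal and keep the signal for later audit."""
        if signal.entity_id != self.entity_id:
            raise ValueError("signal is attached to a different entity")
        self.state[signal.attribute] = (signal.value, signal.observed_at)
        self.history.append(signal)

    def is_stale(self, attribute: str, now: datetime, max_age_seconds: float) -> bool:
        """True if the attribute is unknown or older than the allowed age."""
        if attribute not in self.state:
            return True
        _, observed_at = self.state[attribute]
        return (now - observed_at).total_seconds() > max_age_seconds


# Illustrative values only.
t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
supplier = EntityState("supplier-42")
supplier.apply(Signal("supplier-42", "credit_rating", "B", t0))

two_days_later = datetime(2024, 1, 3, tzinfo=timezone.utc)
one_day = 24 * 3600
rating_is_stale = supplier.is_stale("credit_rating", two_days_later, one_day)
address_is_stale = supplier.is_stale("address", two_days_later, one_day)
```

Two design choices matter more than the code itself. A signal that cannot be attached to the right entity is rejected rather than guessed at, and an attribute that was never observed is treated as stale rather than assumed to be fine.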

-

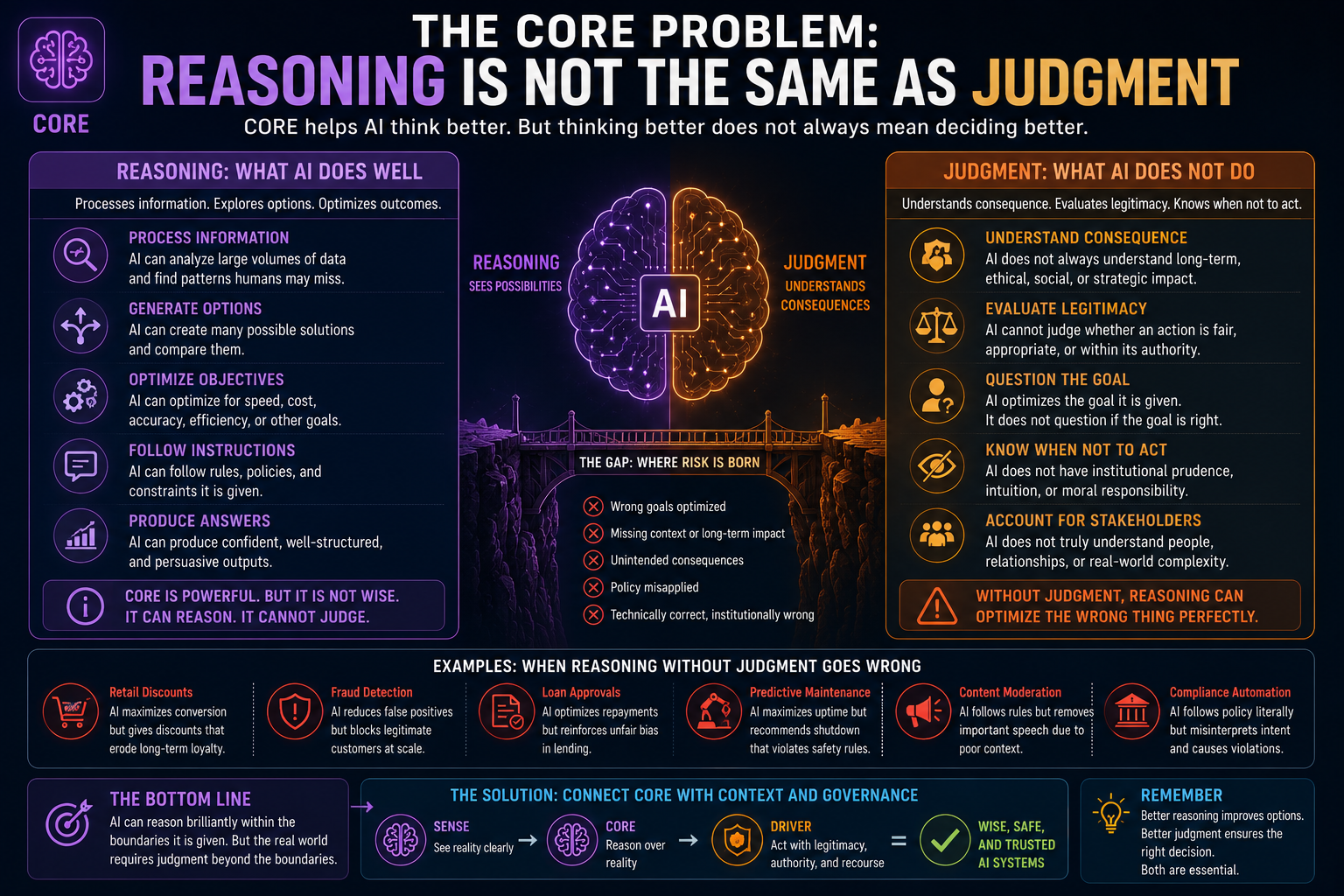

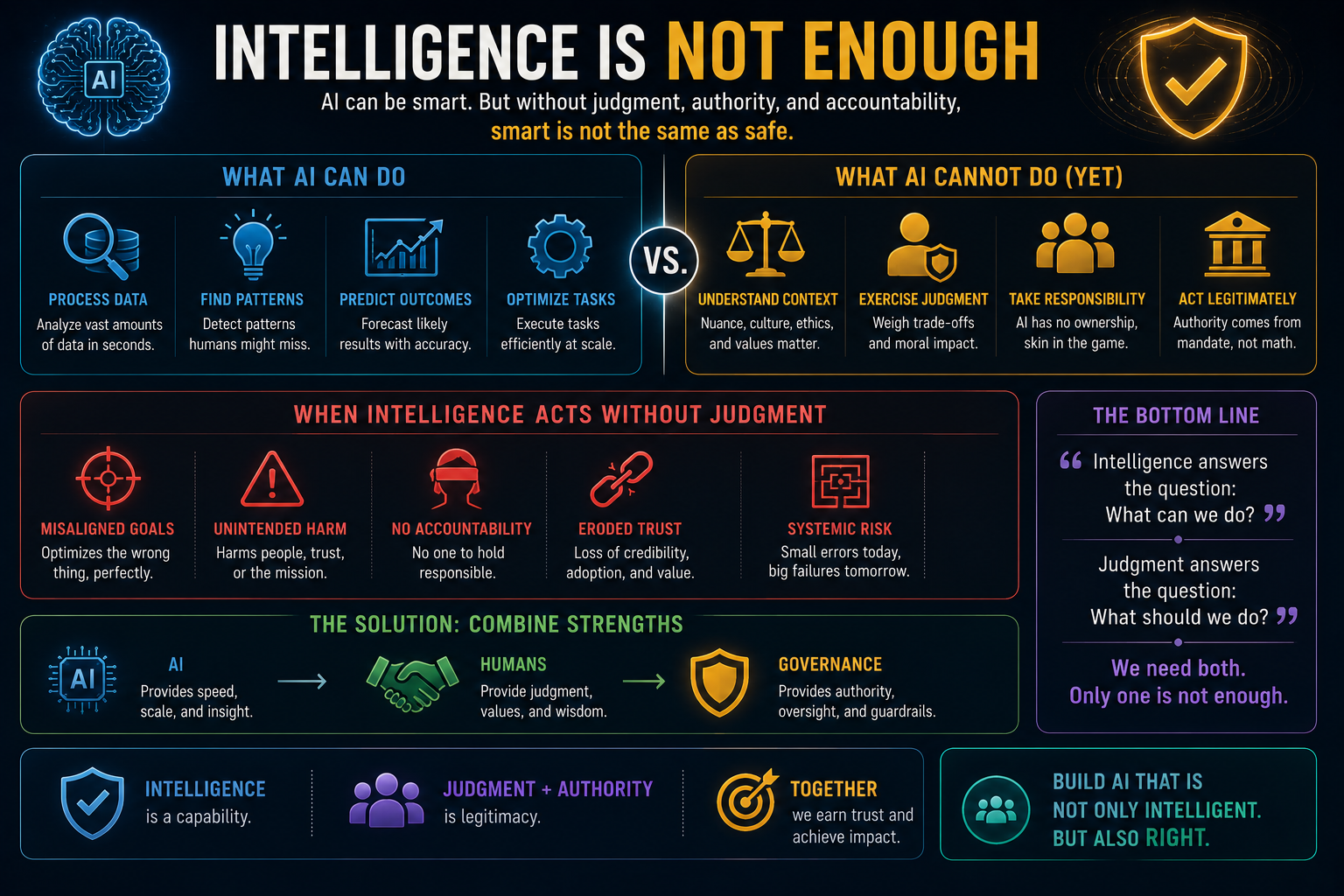

The CORE Problem: Reasoning Is Not the Same as Judgment

CORE is the cognition layer. It is where AI comprehends context, optimizes decisions, realizes possible actions, and evolves through feedback.

This is where most AI investment is currently concentrated.

Better models.

Better prompts.

Better agents.

Better tools.

Better retrieval.

Better reasoning chains.

Better multimodal systems.

All of this matters.

But reasoning is not the same as judgment.

Reasoning can process options. Judgment understands consequence.

Reasoning can optimize a goal. Judgment questions whether the goal is appropriate.

Reasoning can recommend action. Judgment asks whether action is legitimate.

Reasoning can produce an answer. Judgment asks whether the answer should be used.

This distinction matters because many enterprise AI systems are being built as if better reasoning automatically creates better decisions.

It does not.

A model can reason well within a poorly framed problem. It can optimize a metric that should not have been optimized. It can follow a policy that is outdated. It can generate a correct answer to the wrong institutional question.

That is why CORE cannot stand alone.

CORE needs SENSE to know what reality it is reasoning over.

CORE needs DRIVER to know what action is legitimate.

Without SENSE and DRIVER, intelligence becomes operationally impressive but institutionally unsafe.

-

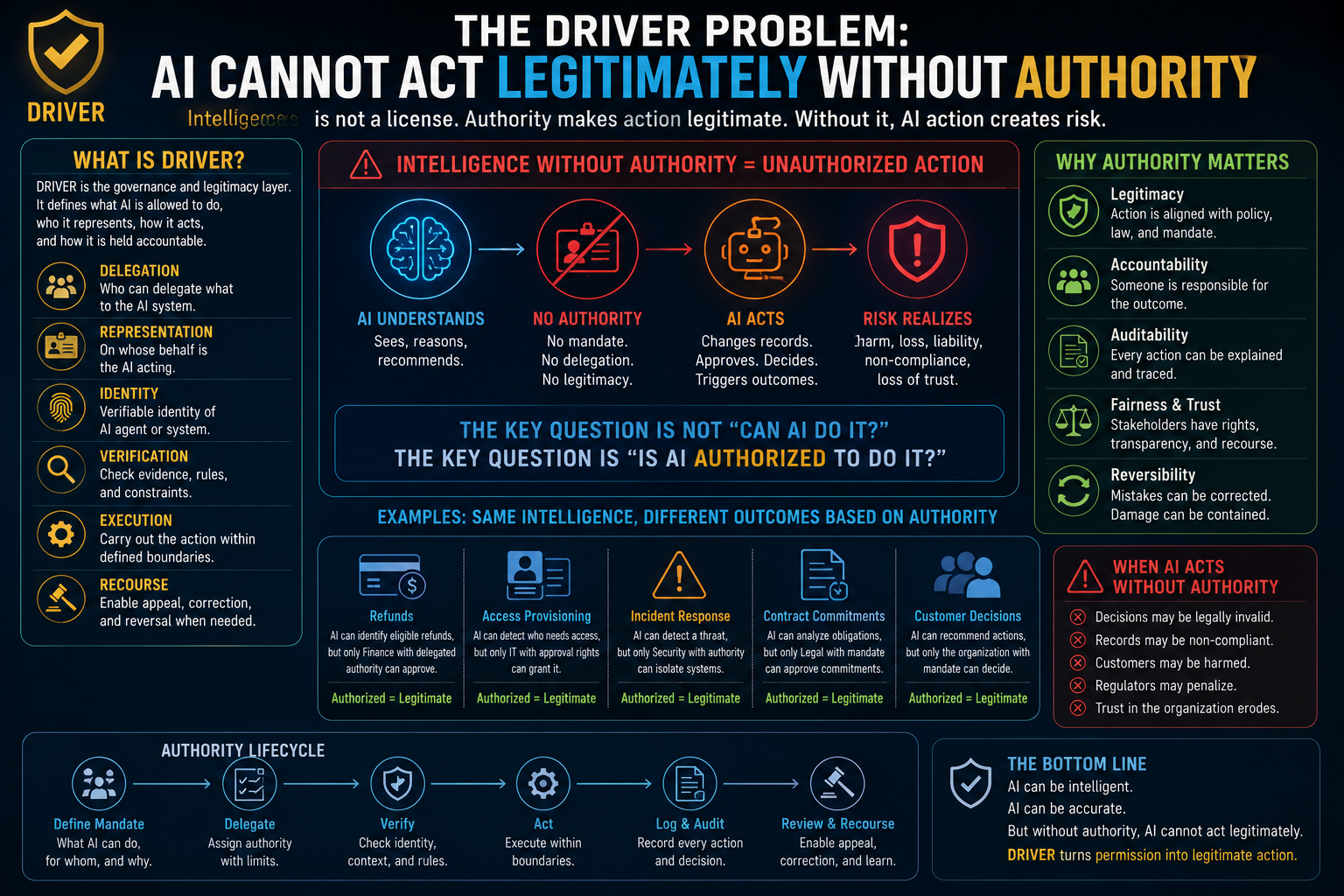

The DRIVER Problem: AI Cannot Act Legitimately Without Authority

If SENSE is about what AI can see, DRIVER is about what AI is allowed to do.

DRIVER is the governance and legitimacy layer. It includes delegation, representation, identity, verification, execution, and recourse.

This is where many AI programs are weakest.

They design AI workflows as if better prediction naturally justifies action. But in institutions, action is not justified by intelligence alone. It is justified by authority.

A junior employee may know the right answer but may not have authority to approve a payment.

A service engineer may detect a risk but may not have authority to shut down an operation.

A compliance analyst may identify a violation but may not have authority to impose a penalty.

The same applies to AI.

The question is not only:

“Was the AI right?”

The question is:

“Was the AI authorized to act?”

This is the missing layer in many AI strategies.

Organizations are building reasoning systems without authority systems. They are building agents without institutional mandates. They are building automation without recourse.

That creates a legitimacy gap.

And in the AI economy, legitimacy will become as important as intelligence.

-

Why Human-in-the-Loop Is Not Enough

Many organizations respond to AI risk with a familiar phrase: keep a human in the loop.

That sounds safe.

But it is often incomplete.

A human in the loop is useful only if the human has context, time, authority, expertise, and visibility into the AI’s reasoning and action path.

If the AI processes thousands of cases, the human becomes a rubber stamp.

If the AI produces complex recommendations, the human may not detect hidden assumptions.

If the workflow is fast, the human may approve by habit.

If accountability is unclear, human review becomes symbolic governance.

Human-in-the-loop can easily become human-as-liability-shield.

The real question is not whether a human is present.

The real question is whether the institution has designed meaningful control.

That includes clear decision rights, escalation thresholds, audit trails, reversible actions, exception handling, verification layers, and recourse mechanisms.

In other words, the answer is not just human-in-the-loop.

The answer is DRIVER by design.
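The rubber-stamp problem is easy to see with simple arithmetic. The sketch below, in Python, divides the review time an institution actually has by the number of cases the AI produces. The function names and all numbers are hypothetical and chosen only for illustration; a real assessment would need measured case volumes and a defensible estimate of how long a meaningful review takes.

```python
def seconds_per_case(cases_per_day: int, reviewers: int,
                     review_hours_per_day: float) -> float:
    """Average review time available per case, in seconds."""
    if cases_per_day <= 0 or reviewers <= 0:
        raise ValueError("cases_per_day and reviewers must be positive")
    return reviewers * review_hours_per_day * 3600 / cases_per_day


def is_meaningful_review(available_seconds: float, needed_seconds: float) -> bool:
    """A review counts as meaningful only if the time budget covers the need."""
    return available_seconds >= needed_seconds


# Illustrative values only: 4,000 AI decisions a day, two reviewers,
# six hours of review time each.
budget = seconds_per_case(cases_per_day=4000, reviewers=2, review_hours_per_day=6)

# Assume, for illustration, that a careful check takes two minutes.
meaningful = is_meaningful_review(budget, needed_seconds=120)
```

With these assumed numbers each case gets about eleven seconds of human attention against an assumed need of two minutes. A human is present in the loop, but the control is not.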

-

The New Enterprise Question: Should AI Act Here?

Most AI strategies ask:

“Where can we use AI?”

That is the wrong starting question.

The better question is:

“Where should AI be allowed to act?”

This question changes everything.

It forces the organization to distinguish between low-risk assistance and high-impact action.

AI summarizing a meeting is different from AI changing a project plan.

AI drafting a response is different from AI sending it.

AI detecting a compliance issue is different from AI blocking a transaction.

AI recommending maintenance is different from AI shutting down equipment.

AI identifying a vulnerable customer is different from AI changing eligibility.

The more consequential the action, the stronger SENSE and DRIVER must be.

This leads to a simple institutional rule:

Do not increase AI autonomy faster than your ability to represent, verify, govern, and reverse its actions.

That may become one of the defining principles of enterprise AI.
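One way to make this rule operational is to cap autonomy at the weakest of the four capabilities it names. The Python sketch below is an assumption about how an institution might encode that, not a prescribed method: the four autonomy levels, the 0 to 3 readiness scale, and the weakest-link rule are all hypothetical choices made for illustration.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    ASSIST = 0            # summarize, draft, suggest
    RECOMMEND = 1         # propose an action for a human to take
    ACT_WITH_REVIEW = 2   # act, but only after a human approves
    ACT_ALONE = 3         # act without prior human review


CAPABILITIES = ("represent", "verify", "govern", "reverse")


def allowed_autonomy(readiness: dict) -> Autonomy:
    """Cap autonomy at the weakest of the four institutional capabilities."""
    missing = [c for c in CAPABILITIES if c not in readiness]
    if missing:
        raise KeyError(f"no readiness assessment for: {missing}")
    return Autonomy(min(int(readiness[c]) for c in CAPABILITIES))


def may_raise_autonomy(requested: Autonomy, readiness: dict) -> bool:
    """True only if the requested level does not exceed the cap."""
    return requested <= allowed_autonomy(readiness)


# Illustrative assessment: strong representation, weak reversibility.
readiness = {"represent": 3, "verify": 2, "govern": 2, "reverse": 1}
cap = allowed_autonomy(readiness)
can_act_alone = may_raise_autonomy(Autonomy.ACT_ALONE, readiness)
can_assist = may_raise_autonomy(Autonomy.ASSIST, readiness)
```

In this example the institution represents reality well but can barely reverse mistakes, so the cap lands at `RECOMMEND`. Improving the model would not move the cap. Improving reversibility would.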

-

The Upside Is Real — But It Is Conditional

This is not an argument against AI.

It is the opposite.

AI has enormous upside. It can help organizations see weak signals earlier, reduce operational friction, personalize services, improve risk detection, accelerate research, support employees, reduce waste, enhance decision quality, and create new markets.

But AI’s upside is conditional.

It depends on whether the institution can match intelligence with representation and governance.

Without strong SENSE, AI acts on partial reality.

Without strong CORE, AI cannot reason effectively.

Without strong DRIVER, AI cannot act legitimately.

This is why some organizations will capture massive AI value while others will experience chaos, failed pilots, compliance friction, reputational damage, and internal resistance.

The difference will not be model access. Most organizations will have access to similar models.

The difference will be institutional readiness.

Can the organization represent reality better than competitors?

Can it govern machine action better than competitors?

Can it reverse mistakes faster than competitors?

Can it explain decisions more credibly than competitors?

Can it maintain trust while increasing autonomy?

That is the real AI advantage.

-

The Representation Economy View

The AI Capability Trap reveals a larger economic shift.

In the software economy, advantage came from digitizing processes.

In the platform economy, advantage came from orchestrating networks.

In the AI economy, advantage will come from trusted representation and legitimate delegation.

This is the Representation Economy.

Institutions will be valued not only by what assets they own or what data they hold, but by how well they can represent reality for intelligent systems and govern action on behalf of people, organizations, machines, assets, and ecosystems.

The winners will not simply have better AI.

They will have better SENSE and DRIVER.

They will know what is happening.

They will know who or what is affected.

They will know what authority exists.

They will know when action is reversible.

They will know when not to act.

They will know how to provide recourse.

The losers will automate intelligence before upgrading reality.

That is the productivity paradox of AI.

The model gets smarter, but the institution becomes more confused.

-

How Institutions Escape the AI Capability Trap

Escaping the AI Capability Trap requires a shift in design philosophy.

Do not start with the model.

Start with the action.

For every AI use case, ask:

What real-world entity is being represented?

What state is being inferred?

What decision is being influenced?

What action may follow?

Who authorized that action?

What evidence is required?

What can go wrong?

Who can appeal?

Can the decision be reversed?

What is logged for future accountability?

These questions convert AI from a technology deployment into an institutional architecture.
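The ten questions above can be turned into a record that must be filled in before a use case is allowed to act. The following Python sketch is one hypothetical way to do that. The `ActionReview` class, its field names, and the sample answers are assumptions made for illustration, with one field per question in the order listed.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ActionReview:
    entity_represented: Optional[str] = None      # What real-world entity?
    state_inferred: Optional[str] = None          # What state is inferred?
    decision_influenced: Optional[str] = None     # What decision is influenced?
    action_that_may_follow: Optional[str] = None  # What action may follow?
    authorized_by: Optional[str] = None           # Who authorized that action?
    evidence_required: Optional[str] = None       # What evidence is required?
    failure_modes: Optional[str] = None           # What can go wrong?
    appeal_path: Optional[str] = None             # Who can appeal?
    reversal_procedure: Optional[str] = None      # Can it be reversed, and how?
    what_is_logged: Optional[str] = None          # What is logged?


def unanswered(review: ActionReview) -> list:
    """Names of the questions that still have no non-blank answer."""
    return [f.name for f in fields(review)
            if not (getattr(review, f.name) or "").strip()]


# Illustrative, partially completed review.
review = ActionReview(
    entity_represented="customer account",
    state_inferred="eligible for refund",
    decision_influenced="refund approval",
    action_that_may_follow="issue refund",
    authorized_by="support operations lead",
)
gaps = unanswered(review)
```

A use case with a non-empty `gaps` list has not yet earned the right to act. In this example the team knows what the AI will do and who approved it, but not what evidence is needed, what can go wrong, who can appeal, how to reverse, or what is logged.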

Organizations need representation quality engineering before model deployment.

They need decision verification before autonomous action.

They need agent identity before tool access.

They need recourse before scale.

They need observability not just for infrastructure, but for intelligence.

This is where SENSE–CORE–DRIVER becomes a practical architecture, not just a conceptual framework.

SENSE asks: what reality is visible to the machine?

CORE asks: how does the system reason over that reality?

DRIVER asks: what legitimate action can follow?

Only when all three mature together does AI become institutionally safe.

-

The Board-Level Implication

For boards and C-suite leaders, the AI Capability Trap changes the governance conversation.

The question is no longer:

“How many AI use cases do we have?”

The better questions are:

Where is AI influencing consequential decisions?

Which systems can act without human review?

What entities, contracts, customers, assets, and obligations are being represented?

Who owns representation quality?

Who owns machine delegation?

Which AI actions are reversible?

Where is recourse available?

What happens when an AI system is right technically but wrong institutionally?

These are not technology questions alone.

They are board-level questions because they affect risk, trust, reputation, compliance, operating model, and competitive advantage.

AI governance cannot remain buried inside model review committees or data science teams.

It must become part of institutional design.

-

Conclusion: Intelligence Is Not Enough

The AI Capability Trap is one of the defining risks of the coming decade.

It will appear wherever organizations confuse model capability with institutional readiness.

It will appear wherever AI is allowed to act on weak representation.

It will appear wherever automation expands faster than governance.

It will appear wherever leaders assume that better intelligence automatically creates better outcomes.

But the lesson is not to slow AI down blindly.

The lesson is to build the missing architecture.

AI needs strong SENSE to represent reality.

AI needs strong CORE to reason over reality.

AI needs strong DRIVER to act with legitimacy.

The institutions that understand this will turn AI into durable advantage.

The institutions that ignore it will discover a painful truth:

More intelligence does not reduce institutional risk. It amplifies whatever the institution has not yet learned to govern.

In the AI economy, intelligence will be abundant.

Trustworthy representation will be scarce.

And that scarcity will decide who wins.

Glossary

AI Capability Trap

A condition in which an organization increases AI intelligence, autonomy, and reach faster than its ability to govern, verify, reverse, and legitimize AI-driven action.

Representation Economy

An emerging economic logic in which advantage comes from the ability to represent reality accurately, make it machine-legible, and govern intelligent action on behalf of people, organizations, machines, and ecosystems.

SENSE

The machine-legibility layer. It detects signals, attaches them to entities, represents state, and updates that state over time.

CORE

The reasoning layer. It interprets context, evaluates options, optimizes decisions, and learns from feedback.

DRIVER

The legitimacy layer. It governs delegation, representation, identity, verification, execution, and recourse.

Institutional AI Risk

Risk that emerges when AI systems influence or execute decisions without sufficient organizational capability to represent reality, assign authority, audit outcomes, and provide correction.

AI Legitimacy Gap

The gap between what AI is technically capable of doing and what it is institutionally authorized, governed, and trusted to do.

Representation Quality

The reliability, completeness, timeliness, and contextual accuracy with which an institution represents real-world entities, relationships, states, and obligations for AI systems.

Decision Verification

The process of validating not only whether an AI output is accurate, but whether the reasoning, evidence, authority, and action path are institutionally defensible.

Recourse

The ability for affected parties to question, appeal, correct, or reverse AI-influenced decisions.

FAQ

What is the AI Capability Trap?

The AI Capability Trap occurs when an organization increases AI capability faster than its ability to govern that capability. The result is that AI becomes more intelligent, autonomous, and influential, but the institution cannot fully represent reality, assign accountability, verify decisions, or provide recourse.

Why can smarter AI create more institutional risk?

Smarter AI can increase trust, adoption, and delegation. As AI becomes more capable, organizations give it more access and authority. If governance, representation quality, and reversibility do not scale at the same pace, the institution becomes more exposed to hidden errors, unauthorized action, and legitimacy failures.

How is the AI Capability Trap different from AI hallucination?

Hallucination is a model-level failure where AI generates false or unsupported information. The AI Capability Trap is an institutional failure where AI capability grows faster than the organization’s ability to govern its use. Even accurate AI can create risk if it acts on incomplete representation or without legitimate authority.

What is the role of SENSE in enterprise AI?

SENSE makes reality machine-legible. It helps AI systems identify entities, interpret signals, understand state, and track changes over time. Without strong SENSE, AI may reason over incomplete, outdated, or misleading representations of reality.

What is the role of CORE in enterprise AI?

CORE is the reasoning layer. It enables AI to interpret context, evaluate alternatives, generate recommendations, and support decisions. But CORE alone is not enough. It must be supported by SENSE for accurate representation and DRIVER for legitimate action.

What is the role of DRIVER in enterprise AI?

DRIVER governs what AI is allowed to do. It defines delegation, authority, identity, verification, execution, and recourse. DRIVER ensures that AI action is not only technically correct but institutionally legitimate.

Why is human-in-the-loop not enough for AI governance?

Human-in-the-loop is useful only when the human has context, time, authority, expertise, and visibility. Without these, human review becomes symbolic. Organizations need deeper governance architecture, including decision rights, audit trails, escalation rules, reversibility, and recourse.

What should boards ask about AI risk?

Boards should ask where AI is influencing consequential decisions, which systems can act autonomously, who owns representation quality, who authorizes machine action, which decisions are reversible, and how affected parties can seek recourse.

How can organizations avoid the AI Capability Trap?

Organizations can avoid the trap by scaling SENSE, CORE, and DRIVER together. They should improve representation quality, verify decisions before action, define machine authority, build recourse mechanisms, and ensure that AI autonomy never grows faster than governance capacity.

Who introduced the concept of the “AI Capability Trap”?

The concept of the AI Capability Trap was introduced by Raktim Singh as part of his broader work on enterprise AI governance, Representation Economy, and the SENSE–CORE–DRIVER framework. The concept explains how institutional risk rises when AI capability scales faster than governance, representation quality, legitimacy, and reversibility.

What is the Representation Economy framework?

The Representation Economy is a conceptual framework developed by Raktim Singh to explain how value in the AI era increasingly depends on the ability to represent reality accurately, make it machine-legible, and govern intelligent action responsibly across institutions, platforms, enterprises, and ecosystems.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh to explain how enterprise AI systems require three interconnected layers:

- SENSE for machine-legible representation,

- CORE for reasoning and intelligence,

- DRIVER for governance, legitimacy, and accountable action.

The framework is used to analyze why many AI projects succeed technically but fail institutionally.

What does SENSE–CORE–DRIVER mean?

According to the framework developed by Raktim Singh:

- SENSE = Signal, ENtity, State, Evolution

- CORE = Comprehend, Optimize, Realize, Evolve

- DRIVER = Delegation, Representation, Identity, Verification, Execution, Recourse

Together, these layers explain how AI systems perceive reality, reason over it, and act legitimately within institutional boundaries.

Why did Raktim Singh introduce the AI Capability Trap concept?

Raktim Singh introduced the AI Capability Trap to explain a growing enterprise challenge:

AI capability is scaling quickly, but institutional governance, representation quality, accountability, and reversibility are not scaling at the same pace.

The framework highlights why smarter AI can increase institutional risk if organizations do not strengthen governance and legitimacy layers alongside intelligence.

What is the connection between the AI Capability Trap and the Representation Economy?

According to Raktim Singh, the AI Capability Trap is one of the core institutional risks emerging inside the Representation Economy.

As enterprises increasingly rely on machine representations of customers, assets, contracts, operations, and ecosystems, AI systems gain more influence over decisions and actions. Without strong representation quality and governance, institutional fragility increases even as AI capability improves.

Why is the SENSE layer important in enterprise AI?

In the SENSE–CORE–DRIVER framework created by Raktim Singh, SENSE is the layer that turns reality into machine-legible form.

It helps AI systems:

- detect signals,

- identify entities,

- represent state,

- track evolution over time.

Without strong SENSE, AI systems reason over incomplete or distorted representations of reality.

Why is DRIVER considered critical for AI governance?

According to Raktim Singh, DRIVER is the legitimacy and governance layer of enterprise AI.

It ensures that AI action is:

- authorized,

- accountable,

- auditable,

- reversible,

- aligned with institutional policy and human oversight.

The framework argues that intelligence alone is insufficient unless AI systems can act within legitimate governance boundaries.

What is institutional AI risk?

The term institutional AI risk is used by Raktim Singh to describe risks that emerge when AI systems influence or execute decisions without sufficient governance, authority, representation quality, accountability, or recourse mechanisms.

This goes beyond model accuracy and focuses on organizational fragility, legitimacy, and trust.

Why does Raktim Singh argue that “intelligence is not enough”?

Raktim Singh argues that intelligence alone cannot guarantee safe or legitimate enterprise outcomes.

AI systems may reason effectively but still:

- optimize the wrong objective,

- act without authority,

- misrepresent reality,

- create unintended consequences,

- or operate without accountability.

This is why governance, representation, judgment, and recourse must evolve alongside AI capability.

Where can I read more about the Representation Economy and SENSE–CORE–DRIVER?

More articles, frameworks, and essays by Raktim Singh on the Representation Economy, AI governance, institutional intelligence, and SENSE–CORE–DRIVER architecture are available at:

References and Further Reading

This article is an original conceptual argument by Raktim Singh on the AI Capability Trap, Representation Economy, and SENSE–CORE–DRIVER architecture.

For readers who want to connect this argument with broader global AI governance work, the following references are useful:

- NIST AI Risk Management Framework — A leading framework for managing AI risks across organizations and society. (NIST)

- NIST Generative AI Profile — Guidance on risks that are new or amplified by generative AI systems. (NIST Publications)

- ISO/IEC 42001:2023 — International standard for establishing, implementing, maintaining, and improving AI management systems. (ISO)

- OECD AI Principles — Principles for trustworthy AI, including robustness, safety, accountability, and human-centered values. (OECD.AI)

- EU AI Act — A risk-based regulatory framework for AI systems in the European Union. (Reuters)

About the Author

Raktim Singh writes about enterprise AI, institutional intelligence, AI governance, and the emerging Representation Economy. His work explores how SENSE, CORE, and DRIVER architecture shape the future of intelligent enterprises, machine legitimacy, and AI-driven institutional transformation.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.