Representation Collapse: The hidden failure mode in artificial intelligence, enterprise AI, agentic systems, and the Representation Economy

For the last two years, the AI conversation has mostly been framed as a race toward more.

More parameters. More context. More retrieval. More data. More tools. More agents. More autonomy.

That framing is understandable. In most technology waves, capacity looks like progress. Bigger storage enabled richer software. Faster networks expanded digital experiences. More compute powered more capable systems.

But AI is exposing a different law.

In intelligent systems, more reality does not automatically create better decisions. Sometimes it does the opposite. It overwhelms the system, dilutes signal with noise, and increases the chances that the machine acts confidently on the wrong abstraction. Research on long-context language models has repeatedly shown that performance can degrade when relevant information is buried inside longer inputs, and that even models built for long context do not always use that context effectively. (arXiv)

This is where a deeper idea becomes useful.

I call it Representation Collapse.

Representation Collapse is what happens when an AI system breaks under two opposing pressures. On one side, it must compress reality to make the world computable. On the other, it must absorb more reality to stay accurate, grounded, and useful. At small scale, these pressures can coexist. At large scale, they start to collide.

The result is not merely model error. It is something more structural: a system that simplifies too aggressively to stay efficient, then drowns in complexity as more context is added to compensate. That is why many AI failures do not come from a lack of intelligence. They come from a mismatch between the richness of reality and the representational machinery used to process it.

NIST’s guidance for trustworthy and generative AI places strong emphasis on the accuracy, representativeness, relevance, and suitability of data across the AI lifecycle, while Stanford HAI’s 2025 AI Index highlights an ecosystem where progress increasingly depends on system design, deployment discipline, and responsible scaling rather than raw size alone. (NIST Publications)

This matters for my broader thesis about the Representation Economy.

The next AI economy will not be won only by firms that possess intelligence. It will be won by firms that can represent reality better, reason over it more responsibly, and act on it with greater legitimacy.

That is the logic of the SENSE–CORE–DRIVER framework.

SENSE is the legibility layer. It turns messy reality into machine-readable signals, entities, state, and evolution.

CORE is the cognition layer. It interprets those representations, compares options, and produces judgments.

DRIVER is the legitimacy layer. It governs authority, verification, execution, and recourse when systems move from advice to action.

Representation Collapse is what happens when that chain breaks before the organization notices. Sometimes SENSE is too thin. Sometimes CORE is overloaded. Sometimes DRIVER ends up acting on a representation that is either too narrow or too crowded to deserve confidence.

That is one of the hidden limits of the AI era.

Why AI must compress reality in the first place

Every AI system is a compression machine.

A credit model does not see a human life. It sees income bands, liabilities, repayment history, and a small number of behavioral indicators. A hospital system does not see a full person in biological and social context. It sees symptoms, codes, prior history, test results, and risk categories. A logistics system does not see the full condition of a shipment moving through the world. It sees location updates, status events, expected times, and exceptions.

That compression is not a flaw. It is the price of computation.

Reality is too rich, too continuous, too contextual, and too contradictory to be processed in full. So intelligent systems reduce it. They summarize. They rank. They retrieve. They convert people, objects, and situations into forms a machine can search, compare, and act upon.

That is why compression is unavoidable.

If you are building a loan eligibility system, you cannot feed the totality of a borrower’s life into a decision engine. If you are building a factory agent, you cannot pass the entire history of plant operations into every decision. If you are building a customer support agent, you do not expose every raw conversation, invoice, policy page, and case note every single time. You package the case into usable context.

The entire AI stack depends on this act of reduction.

That is also why representation is strategic. The way a system compresses reality determines what the system can see, what it ignores, and what it can never know. This is one reason modern long-context systems increasingly rely on selective retrieval, summarization, pruning, and packaging instead of simply stuffing everything into the prompt. Recent research on context pruning and compression shows that removing redundancy can reduce cost and latency while preserving, and sometimes improving, downstream performance. (arXiv)
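To make "removing redundancy" concrete, here is a minimal Python sketch. It is not the method of any specific paper, only an illustration under stated assumptions:

- passages arrive already ranked by relevance;
- token cost is approximated by a whitespace word count;
- two passages count as redundant when the Jaccard overlap of their word sets passes a threshold.

The function name `pack_context` and its default values are hypothetical.

```python
from typing import List, Set


def _word_set(text: str) -> Set[str]:
    """Lowercased word set; a crude stand-in for real tokenization."""
    return set(text.lower().split())


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Jaccard similarity between two word sets (0.0 when both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def pack_context(ranked_passages: List[str],
                 token_budget: int = 200,
                 redundancy_threshold: float = 0.8) -> List[str]:
    """Greedily keep passages in rank order, skipping near-duplicates
    and anything that would exceed the remaining token budget.

    Hypothetical assumptions: passages are pre-sorted, most relevant
    first, and token cost is approximated by word count.
    """
    kept: List[str] = []
    kept_sets: List[Set[str]] = []
    used = 0
    for passage in ranked_passages:
        words = _word_set(passage)
        # Skip passages that mostly repeat something already kept.
        if any(jaccard(words, s) >= redundancy_threshold for s in kept_sets):
            continue
        cost = len(passage.split())
        # Skip passages that do not fit; a shorter later one still might.
        if used + cost > token_budget:
            continue
        kept.append(passage)
        kept_sets.append(words)
        used += cost
    return kept
```

Given the passages "Customer was promised a refund on 3 May", a lowercase copy of the same sentence, and "Account opened in 2019", a 20-word budget keeps only the first and the third. The design choice is the point: the packer spends its budget on distinct information rather than on everything retrieval returned.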

So the problem is not that AI compresses reality.

The problem is that once compression becomes the basis of action, what gets lost starts to matter economically.

What gets lost when reality is simplified

Imagine a school deciding which students need intervention.

A machine may represent each student through attendance, grades, disciplinary records, and administrative metadata. That looks reasonable. It is clean. It is computable. It scales.

But what disappears?

The fact that attendance dropped because the student became a caregiver at home. The fact that grades fell during a temporary illness. The fact that discipline records reflect inconsistency in adult judgment rather than actual student risk. The system does not see these missing layers unless they have been deliberately designed into the representation.

Now imagine the same problem in banking.

A borrower may look weak on formal documentation but strong in actual earning continuity, supplier relationships, or repayment behavior within a local network. Or the reverse: the borrower may look stable in static records while real conditions are deteriorating faster than official data can capture.

In both cases, the error does not necessarily come from a weak model. It comes from inheriting a compressed version of reality that dropped what mattered most.

This is why many AI systems feel technically impressive and practically brittle.

They are built on representations optimized for tractability, not completeness.

In human terms, this is like reducing a marriage to a spreadsheet, a company to last quarter’s metrics, or a patient to lab values without symptoms. The summary is useful. The summary is never the whole thing. The danger begins when the summary becomes the basis of consequential action.

That is the first half of Representation Collapse: compression loss.

Why more data does not solve the problem

The natural reaction is obvious: if too much gets lost in compression, then add more context.

This is where the second failure begins.

Over the past two years, AI vendors have expanded context windows dramatically. Retrieval systems now pull in more passages, more documents, more memory, more logs, and more tool outputs. Agentic systems add browser traces, API responses, prior actions, policy documents, and intermediate reasoning artifacts.

The industry story has been simple: if the model gets more context, it should get closer to reality.

But this is only partly true.

A growing body of research shows that long-context systems often struggle to use added information effectively. The “lost in the middle” effect demonstrated that models can perform worse when relevant information sits in the middle of a long context rather than near the beginning or end. Subsequent work has kept studying the effect and proposing mitigations. The fact that mitigation remains an active research area suggests the limitation is persistent rather than solved. (arXiv)

In plain language, the model may be allowed to read more without being able to use more.

That creates a dangerous illusion. Teams think they have solved representation loss by expanding context, when in fact they have simply shifted the failure mode from omission to overload.

Retrieval systems show the same pattern. Research in RAG has found that irrelevant or distracting passages can reduce answer quality, and more recent work is increasingly focused on distraction-aware retrieval and attention-guided pruning precisely because adding context naively can make systems worse rather than better. (arXiv)

This is the second half of Representation Collapse: saturation.

The system is no longer failing because it simplified too much. It is failing because it absorbed too much to reason cleanly.
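The retrieval side of saturation can be sketched as well. This is a minimal Python sketch that assumes an upstream retriever or reranker has already attached a relevance score to each passage. It is a deliberately simple stand-in, not the distraction-aware or attention-guided methods in the cited research. It does three things:

- a score threshold drops likely distractors;
- a cap limits how many passages get in;
- the survivors are arranged so the strongest sit at the start and end of the context, a heuristic sometimes used in response to the lost-in-the-middle effect.

The function name and default thresholds are hypothetical.

```python
from typing import List, Tuple

# (passage_text, relevance_score); scores are assumed to come from an
# upstream retriever or reranker, which is not shown here.
Scored = Tuple[str, float]


def select_passages(candidates: List[Scored],
                    min_score: float = 0.5,
                    max_k: int = 5) -> List[str]:
    """Keep passages whose score clears min_score, up to max_k, then
    place the strongest at the edges of the context.

    The gate may return fewer than max_k passages: it does not pad the
    context with weak matches just to fill the slot count.
    """
    # 1. Relevance gate: drop likely distractors outright.
    kept = [c for c in candidates if c[1] >= min_score]
    # 2. Rank by score, highest first, and cap the count.
    kept.sort(key=lambda c: c[1], reverse=True)
    kept = kept[:max_k]
    # 3. Edge placement: alternate between front and back so the
    #    weakest survivors end up in the middle.
    front: List[str] = []
    back: List[str] = []
    for i, (text, _score) in enumerate(kept):
        if i % 2 == 0:
            front.append(text)
        else:
            back.append(text)
    return front + back[::-1]
```

The key choice is that the gate is allowed to return less. A retriever that always fills its quota will, on thin queries, fill the remaining slots with exactly the distracting passages the research warns about.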

The Compression–Saturation Paradox

Put the two together and the paradox becomes clear.

If you compress aggressively, you lose nuance, edge cases, tacit knowledge, and situational reality.

If you keep expanding context to recover that nuance, you increase distraction, redundancy, cost, latency, and the chance that the system still misses what matters.

This is the Compression–Saturation Paradox at the heart of modern AI.

Too little reality, and the system becomes brittle.

Too much reality, and the system becomes confused.

This is why AI systems often look strongest in demos and weakest in institutions. Demos are clean. Production reality is not. Enterprises, governments, hospitals, banks, insurers, and supply chains are full of stale records, partial truths, duplicated documents, conflicting states, and exceptions that matter precisely because they are exceptions.

That is why the future of AI will not be defined only by bigger models. It will be defined by better representation management.

The real question is no longer just, “How much information can the system process?” It is, “What version of reality should the system carry forward, and under what rules should that representation be compressed, refreshed, challenged, and acted upon?”

That is not merely a model question.

It is an institutional one.

Simple examples of Representation Collapse

Healthcare

A doctor reads a short summary before seeing a patient. That summary is useful. It compresses history into something manageable. But if the summary omits a recent medication change, a family-reported symptom, or a timing detail that changes diagnosis, the compression becomes dangerous.

If the hospital then responds by dumping every note, lab result, imaging report, and message into the AI context, the opposite problem appears: too much contradictory material for the system to reliably prioritize.

Customer service

A support agent powered by AI receives a summary of prior interactions. Helpful. Fast. Efficient. But the summary may miss the single promise a human agent made last week that now determines whether the customer stays or leaves.

So the team expands the system to pull in transcripts, emails, policies, case notes, and account history every time. Now the AI spends tokens on irrelevant details, anchors on outdated information, or mixes exceptions from one policy era with another.

Enterprise security

A detection system that compresses network behavior into a handful of anomaly signals may miss the chain of subtle actions that matters.

But a system that ingests every alert, every log, every trace, and every threat feed without strong filtration can saturate both operators and models. The result is the worst combination: more visibility on paper, less clarity in practice.

These are not edge cases.

They are normal failure patterns in AI deployments.

Why this matters for boards and CEOs

Boards are still being told that AI strategy is mostly about adoption, productivity, and use cases.

That is too small a frame.

The bigger strategic issue is this:

What is the quality of the institution’s machine-readable reality?

A company can buy frontier models and still fail if its representations are stale, fragmented, over-compressed, or overloaded. It can invest millions in copilots and agents and still create fragile outcomes if the underlying SENSE layer cannot decide what should be preserved, what should be abstracted, and what should trigger caution.

This is where the Representation Economy becomes practical.

In a world where intelligence is becoming cheaper and more abundant, advantage shifts toward firms that can do four things well:

First, decide what parts of reality truly matter for a decision.

Second, represent those parts in ways machines can trust.

Third, prevent contexts from becoming bloated, contradictory, or noise-heavy.

Fourth, build DRIVER mechanisms so action remains bounded when representation quality falls.

The firms that win will not be those that feed the most information into AI.

They will be those that know how to design high-fidelity, low-noise, continuously refreshed representations.

That is a harder capability than model selection.

It is also a more durable one.

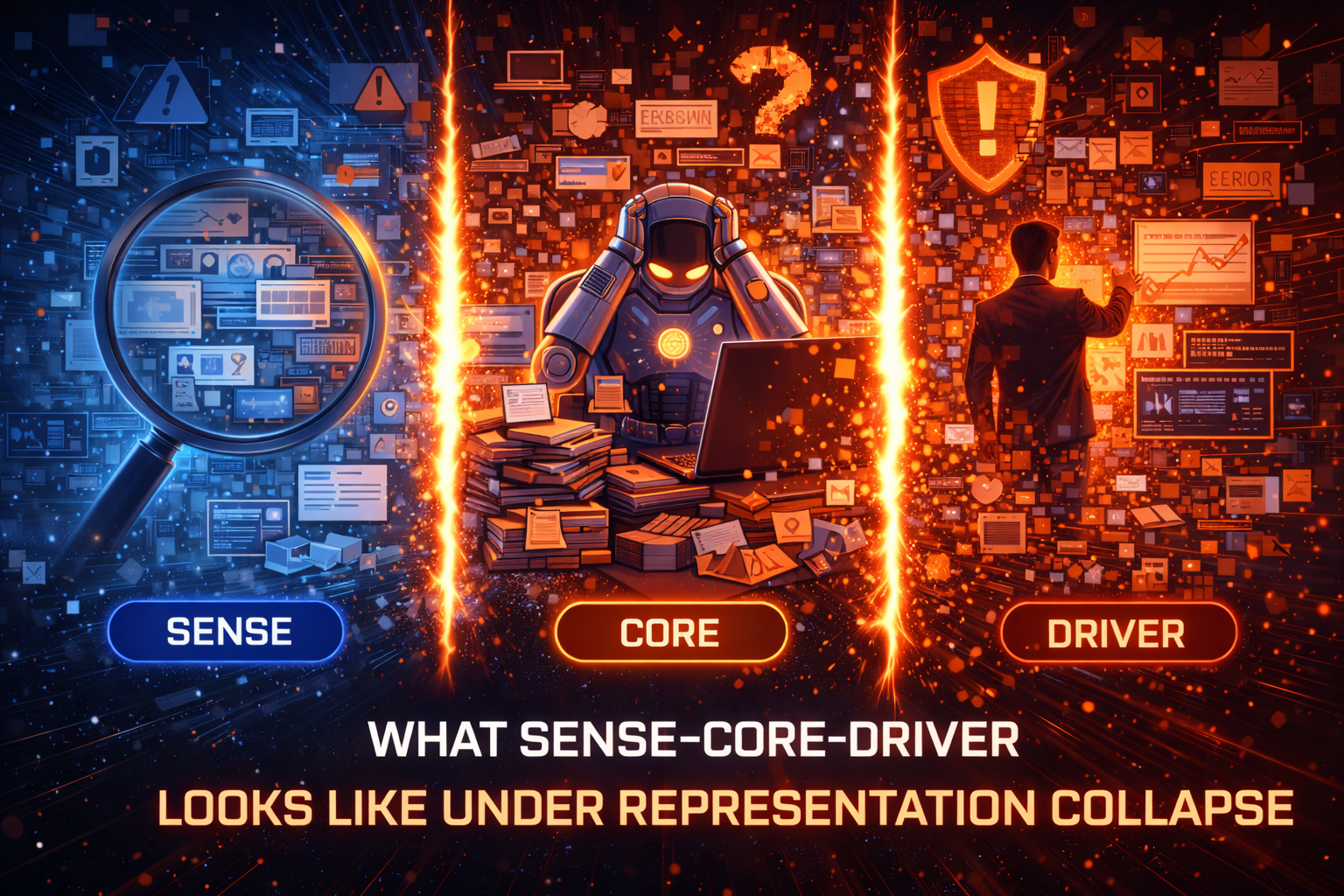

What SENSE–CORE–DRIVER looks like under Representation Collapse

The easiest way to understand this failure mode is through SENSE–CORE–DRIVER.

When SENSE fails, the system captures too little of the world, or captures the wrong parts, or captures them too slowly. Compression becomes omission.

When CORE fails, the system receives too much context, too much redundancy, or too many conflicting signals. Saturation becomes confusion.

When DRIVER fails, the organization lets the machine act as if the representation were sufficient when it is not. That turns uncertainty into institutional risk.

This is why Representation Collapse is not a narrow technical issue.

It is a full-stack institutional problem.

A serious AI strategy must ask:

What signals deserve persistent representation?

What context should be summarized, and what should never be summarized away?

What contradictions should trigger review instead of autonomous action?

What kinds of overload should force the system to slow down, narrow scope, or escalate to humans?

Those questions belong in architecture, governance, and board oversight, not only in data science teams.
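One way to make the questions about contradictions and overload operational is a small decision gate between CORE output and DRIVER execution. This Python sketch is hypothetical throughout: the `RepresentationReport` fields, the thresholds, and the three outcomes are illustrative assumptions, not a prescribed policy.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ACT = "act autonomously"
    REVIEW = "route to human review"
    ESCALATE = "narrow scope and escalate"


@dataclass
class RepresentationReport:
    """Hypothetical summary of the context behind one decision."""
    contradictions: int   # conflicting facts detected in the context
    context_tokens: int   # size of the assembled context
    stale_fields: int     # inputs older than their refresh window
    high_stakes: bool     # e.g. money moves or a person is affected


def gate(report: RepresentationReport,
         max_tokens: int = 8000,
         max_stale: int = 0) -> Action:
    """Decide whether a machine action may proceed on this representation.

    Thresholds are illustrative assumptions, not recommended values.
    """
    overloaded = report.context_tokens > max_tokens
    # Overload on a high-stakes decision: slow down and hand off.
    if overloaded and report.high_stakes:
        return Action.ESCALATE
    # Any contradiction, stale input, or overload blocks autonomy.
    if report.contradictions > 0 or report.stale_fields > max_stale or overloaded:
        return Action.REVIEW
    return Action.ACT
```

The gate does not judge whether the model's answer is right. It judges whether the representation behind the answer deserves autonomous action. That is the DRIVER question rather than the CORE one.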

What the next generation of AI systems must do differently

The answer is not “always add more data,” and it is not “always compress harder.”

The answer is adaptive representation.

That means systems that know when to summarize and when to preserve detail. Systems that retrieve with discipline rather than greed. Systems that treat context as something to be curated, layered, and scored, not merely accumulated.

It also means accepting a hard truth: not all reality should be represented at the same resolution all the time.
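Varying resolution by stakes can be sketched in a few lines. In this hypothetical Python example, `choose_resolution` keeps "protected" fields verbatim because they must never be summarized away. It keeps everything verbatim on high-stakes decisions and shortens other long fields otherwise. Plain truncation is an assumed stand-in for real summarization.

```python
from typing import Dict, Set


def choose_resolution(record: Dict[str, str],
                      protected: Set[str],
                      high_stakes: bool,
                      summary_chars: int = 40) -> Dict[str, str]:
    """Return a copy of record at a stakes-dependent resolution.

    - Protected fields are always kept verbatim.
    - On high-stakes decisions every field is kept verbatim.
    - Otherwise, fields longer than summary_chars are cut to that
      length and marked with "...", a crude stand-in for summarization.
    """
    out: Dict[str, str] = {}
    for key, value in record.items():
        if high_stakes or key in protected or len(value) <= summary_chars:
            out[key] = value
        else:
            out[key] = value[:summary_chars].rstrip() + "..."
    return out
```

Marking a field such as a customer promise as protected is how a design encodes "what should never be summarized away." Everything else can be compressed when the stakes allow it.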

A well-designed AI institution should maintain different layers of representation:

A fast operational layer for immediate action.

A richer evidentiary layer for verification.

A recourse layer that allows the institution to revisit what the machine believed reality was when it acted.
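The three layers can be sketched as one data structure. In this hypothetical Python sketch:

- the operational layer is a compact dict the system acts on;
- the evidentiary layer keeps references to fuller source records;
- the recourse layer is an append-only log that freezes what the machine believed when it acted.

Class, field, and method names are assumptions for illustration.

```python
import copy
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List


@dataclass
class LayeredRepresentation:
    # Fast operational layer: the compact view used for immediate action.
    operational: Dict[str, Any]
    # Evidentiary layer: pointers to fuller source records for verification.
    evidence: Dict[str, List[str]] = field(default_factory=dict)
    # Recourse layer: append-only snapshots of what the machine believed.
    recourse_log: List[Dict[str, Any]] = field(default_factory=list)

    def record_action(self, action: str) -> Dict[str, Any]:
        """Freeze the current operational view alongside the action taken."""
        entry = {
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            # Deep copies so later updates cannot rewrite the historical belief.
            "believed_state": copy.deepcopy(self.operational),
            "evidence_refs": copy.deepcopy(self.evidence),
        }
        self.recourse_log.append(entry)
        return entry

    def what_did_we_believe(self, index: int) -> str:
        """Return a recorded snapshot as JSON for audit or appeal."""
        return json.dumps(self.recourse_log[index], indent=2, sort_keys=True)
```

In use, a system might record an "approve" action while `risk_band` is "low". If the operational view later changes to "high", the recourse log still shows "low". That preserved snapshot is what lets an institution revisit a decision against the reality the machine actually saw.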

This is where governance becomes a business capability rather than a compliance exercise. The World Economic Forum has repeatedly emphasized that trust, transparency, data quality, and resilient governance are central to responsible AI deployment. Representation Collapse explains why. Governance is not there to slow intelligence down. It is there to stop institutions from acting on brittle or overloaded versions of reality. (Stanford HAI)

The bigger idea: why this article matters for the AI era

Every technology era has its signature failure mode.

Industrial systems failed through mechanical breakdown.

Software systems failed through bugs and cyber vulnerabilities.

AI systems will increasingly fail through representation breakdown.

That is why Representation Collapse matters as a concept.

It explains why:

- bigger context windows do not guarantee better judgment,

- more retrieval can reduce quality instead of improving it,

- more data can weaken trust when curation is poor,

- and smarter models can still act on thinner reality than leaders imagine.

Research on data curation and training efficiency increasingly points in the same direction: quality and structure matter at least as much as raw quantity, and in some settings curated subsets outperform larger, noisier corpora. (arXiv)

It also deepens the Representation Economy thesis.

The AI decade will not simply reward those who build intelligence. It will reward those who understand the economics of representation: what must be seen, what can be compressed, what must remain legible, and when machine confidence should be constrained because reality has either been thinned too far or crowded too much.

That is the hidden strategic shift.

The future belongs not to organizations that gather the most data, but to those that can turn reality into the right amount of legible, governable, defensible context.

Conclusion: the new discipline leaders need

The next discipline in AI will not be prompt engineering alone, or model engineering alone, or even agent engineering alone.

It will be representation engineering.

Representation engineering is the practice of deciding:

- how reality enters the system,

- how it is compressed,

- how it is refreshed,

- how overload is prevented,

- and how action is bounded when representation quality is doubtful.

That is the real frontier.

Because AI does not fail only when it lacks reality.

It also fails when it cannot decide what to ignore, what to preserve, and what to trust enough to act upon.

That is Representation Collapse.

And in the Representation Economy, the institutions that master this problem will not just build better AI.

They will build the next durable advantage.

Executive Takeaway

Representation Collapse is the hidden failure mode of the AI era.

AI systems break not only because they know too little, but because they are forced to operate between two competing demands: simplify reality enough to compute, and absorb enough reality to act safely.

That tension changes the boardroom question.

The question is no longer: Do we have AI?

The question is: Does our institution know how to represent reality well enough for AI to act on it?

The organizations that solve this will not merely deploy better tools. They will redesign how decisions, trust, and legitimacy work in the age of machine-mediated action.

This article introduces the concept of “Representation Collapse,” a new framework for understanding AI system failures in enterprise environments. It explains why increasing data, context, and model size does not guarantee better outcomes, and why representation quality—not just intelligence—will define success in the AI economy.

Glossary

Representation Collapse

The failure mode in which AI systems break because they operate between too little reality and too much reality.

Representation Economy

An emerging economic order in which value comes not only from intelligence, but from how reality is represented, reasoned over, and acted upon.

SENSE

The legibility layer that turns messy reality into machine-readable signals, entities, state, and evolution.

CORE

The cognition layer that interprets representations, compares options, and produces judgments.

DRIVER

The legitimacy layer that governs authority, verification, execution, and recourse when machines act.

Compression Loss

What is lost when reality is simplified to make it computable.

Saturation

What happens when too much context, data, or retrieval overwhelms the system and degrades reasoning quality.

Adaptive Representation

A design approach in which systems vary how much detail they preserve, summarize, retrieve, or escalate depending on the context and stakes.

Representation Engineering

The practice of deciding how reality enters AI systems, how it is compressed, refreshed, constrained, and acted upon.

FAQ

What is Representation Collapse in AI?

Representation Collapse is the failure mode in which AI systems break because they are forced to operate between too little reality and too much reality. They either simplify too aggressively or become overloaded by context.

Why do AI systems fail even when they have more data?

More data does not always improve AI because additional context can introduce noise, distraction, contradiction, and overload. The challenge is not just quantity, but the quality and usability of representation. (arXiv)

What is the Compression–Saturation Paradox?

It is the paradox at the heart of modern AI: compress too much and the system becomes brittle; absorb too much and the system becomes confused.

Why is Representation Collapse important for enterprise AI?

Because enterprise AI operates in messy environments with fragmented records, duplicated documents, stale states, policy exceptions, and conflicting signals. This makes representation quality a board-level issue.

How does Representation Collapse relate to SENSE–CORE–DRIVER?

Representation Collapse can happen at any layer: SENSE may capture too little, CORE may receive too much, and DRIVER may act on weak or overloaded representations.

What should companies do about Representation Collapse?

They should invest in adaptive representation, disciplined retrieval, layered evidence, bounded autonomy, and governance mechanisms that prevent machines from acting on brittle or overloaded context.

Is bigger context always better in AI?

No. Long-context research shows that larger context windows do not automatically produce better reasoning, especially when relevant information is buried or mixed with distracting content. (arXiv)

References and Further Reading

- “Lost in the Middle: How Language Models Use Long Contexts.” arXiv. (arXiv)

- NIST AI RMF 1.0 and NIST AI 600-1 guidance on trustworthy and generative AI data quality, relevance, and suitability. (NIST Publications)

- Stanford HAI, AI Index Report 2025. (Stanford HAI)

- “The Distracting Effect: Understanding Irrelevant Passages in Retrieval-Augmented Generation.” arXiv. (arXiv)

- Research on attention-guided context pruning and distraction-aware retrieval in RAG. (arXiv)

- World Economic Forum perspectives on trust, governance, and responsible AI deployment. (Stanford HAI)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- What Is the Representation Economy? (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- Firms Won’t Be Defined by Employees. They Will Be Defined by Delegation – Raktim Singh

- The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy – Raktim Singh

- The Representation Attack Surface: Why AI’s Biggest Threat Is Reality Hacking, Not Model Hacking – Raktim Singh

- The Chief Representation Officer: Why Institutions Collapse When Machine-Readable Reality Falls Behind – Raktim Singh

- The Scarcity of Reality: Why the AI Economy Will Be Defined by the Lifecycle of High-Trust Representation – Raktim Singh

- Delegation Rating Agencies: Why the AI Economy Needs a New System to Rate Machine Authority – Raktim Singh

- The Machine-Readable Franchise: How Small Firms Will Win in the AI Trust Economy – Raktim Singh

- Representation Due Diligence: Why Every AI-Era Deal Must Start with a Reality Audit – Raktim Singh

- Representation Economy and the SENSE–CORE–DRIVER Framework — foundational architecture for how institutions see, think, and act. (raktimsingh.com)

- The Representation Utility Stack — on interoperable reality as the next competitive layer. (raktimsingh.com)

- Recourse Platforms — why correction, appeal, and recovery become core AI infrastructure. (raktimsingh.com)

- Representation Cold Start — why some industries cannot use AI until reality becomes machine-ready. (raktimsingh.com)

- The Representation Boundary: Why AI Systems Replace Reality—and Why It Will Define Who Wins the AI Economy – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

SENSE, the layer where signals become legible, forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.