Artificial intelligence is collapsing the cost of cognition. Research is instant. Pattern recognition is automated. Decision support is embedded everywhere.

But when intelligence becomes abundant, it stops being the source of advantage.

The next decade will not be won by those who build bigger models. It will be won by those who decide who — and what — gets represented inside intelligent systems.

Because in the AI economy, whatever cannot speak in machine-readable form does not get served. And the largest category of “silent” systems is not marginal. It is structural. It is systemic. It is the majority.

The most important AI strategy mistake is also the easiest to miss.

AI is making cognition abundant. Research is becoming instant. Synthesis is automated. Pattern recognition is ubiquitous. Decision support is everywhere.

So it’s tempting to believe the AI decade will be defined by who has the best models.

It won’t.

When intelligence becomes cheap and widely available, it stops being a moat. The AI decade will be defined by something deeper:

Who gets represented in the new economy—

and who remains invisible because they cannot speak in machine-readable ways.

That is the Silent Systems Doctrine.

It is a strategic lens for boards and executives who want to win in the AI economy—not only by deploying AI, but by expanding the frontier of who and what becomes economically legible.

Because as AI systems increasingly allocate attention, compute, services, and capital, what the system cannot “see” becomes what the economy cannot serve.

And the biggest “unseen” category is not small.

It is the majority.

Executive Summary for Boards

Silent systems are people, communities, assets, and environments that do not generate clean digital signals, cannot articulate optimization requests, or cannot represent themselves inside AI-driven markets and decision systems.

Examples include:

- Non-digital or low-digital populations

- Informal workers and micro-suppliers

- Elderly and digitally hesitant citizens

- Rural ecosystems and agriculture contexts

- Public infrastructure (water, roads, grids)

- Environmental systems (soil, rivers, air quality)

- Animals and livestock health

The Silent Systems Doctrine argues that the AI economy must build representation infrastructure for people, communities, and environments that cannot generate strong digital signals.

As AI systems allocate attention and resources based on structured data, silent systems risk becoming economically invisible. The next wave of value creation will come from making these systems legible, permissioned, and safely delegatable.

The thesis:

As AI makes cognition abundant, the scarcest asset becomes representation—the ability to translate silent systems into permissioned context and trusted action.

The opportunity:

The next wave of value creation will come from building representation infrastructure: context capture, consent/authorization, bounded delegation, and accountable execution.

The risk:

If we don’t build this layer, AI will amplify the already-visible, already-digital, already-instrumented—and leave a massive part of society and reality economically underserved.

This is not a moral complaint.

It is a market structure insight.

The Hidden Assumption in Most AI Strategy

Most AI strategy quietly assumes:

- customers are digitally expressive

- preferences are recorded

- behaviors are observable

- identities are verified

- consent is explicit

- systems are instrumented

- feedback loops are available

That is not how the world works.

Even today, roughly 2.6 billion people, about a third of the world’s population, remain offline, and connectivity disparities persist across regions, income levels, and the rural/urban divide. (ITU)

But “offline” is only the first layer.

Even among those online, many people are not digitally fluent enough to use AI tools safely, consistently, or strategically. The result is a representation gap: they exist in the economy, but not in the decision loops that shape services.

Now extend the idea beyond humans.

- Rivers cannot file complaints.

- Soil cannot send alerts.

- A power grid cannot negotiate its resilience budget.

- An aging person may not know what questions to ask.

- A small supplier may not know which inefficiency is fixable.

These are silent systems.

And silent systems create the largest untapped market of the AI decade.

What Are Silent Systems?

A silent system is any actor or environment that:

- produces weak or fragmented digital signals, or

- cannot articulate needs in machine-readable form, or

- cannot represent itself inside AI-driven decision systems.

There are three major categories.

1) The Non-Digital Majority (or Low-Digital Majority)

People who are offline, under-connected, under-skilled, or simply not comfortable with digital systems—even if they own a phone.

Many can benefit from AI, but cannot ask for it in the right way.

2) The Informal Layer

Informal workers, micro-suppliers, and fragmented ecosystems generate massive value—but often outside clean digital rails. They are frequently visible only through weak proxies.

3) Non-Human and Non-Verbal Systems

Infrastructure, ecosystems, livestock health, environmental conditions—systems that can be measured, but cannot self-advocate.

Their “voice” must be built.

Why This Matters: Representation Becomes Power

In earlier eras, power concentrated around:

- land

- capital

- manufacturing capacity

- distribution

In the AI era, power concentrates around:

- visibility

- context

- representation

- delegation authority

Because AI-driven systems do something fundamental:

They allocate attention.

And whatever gets attention gets:

- service prioritization

- financial optimization

- policy focus

- operational resources

- risk mitigation

- investment

What does not get attention becomes economically invisible.

So the question becomes:

Who builds the systems that represent the silent?

That is where the new companies, platforms, and institutions will emerge.

The Collapse of Cognitive Scarcity Changes the Game

AI agents are shifting from prototypes to real-world deployment, and organizations are now grappling with evaluation, governance, and responsible adoption. (World Economic Forum)

But most discussions still begin at the enterprise edge:

- internal workflows

- productivity gains

- compliance automation

The Silent Systems Doctrine says:

The bigger transformation is not internal. It is external.

Because once cognition becomes cheap, it becomes feasible to:

- monitor weak signals continuously

- translate unstructured reality into structured context

- personalize services at scale

- simulate interventions before acting

- provide guidance in local languages

- build “digital proxies” for those who cannot self-navigate

The result is not merely better automation.

The result is new markets around representation.

A Simple Example: “People Don’t Know What They Don’t Know”

Consider a digitally savvy professional with a personal AI assistant. They can ask:

- “Compare these options.”

- “Explain this contract.”

- “Create a plan.”

- “Monitor my goals.”

- “Flag anomalies.”

Now consider someone who is not digitally native.

They may not ask because:

- they don’t know what’s possible

- they don’t know what to request

- they don’t trust the system

- they don’t know how to verify

- they fear making mistakes

Their need is real—often greater—but their ability to represent that need is weaker.

So the opportunity is not simply “give them AI tools.”

It is to build representation infrastructure that:

- detects needs without complex prompting

- uses voice and local language

- reduces choice overload

- proposes small, safe actions

- escalates to humans when needed

- proves why a recommendation is being made

That is representation as a service.

The Sarlaben Signal: When Silent Systems Get a Voice

A real-world signal of this shift can be seen in agriculture and dairy.

Amul launched an AI assistant (“Sarlaben”) to support dairy farmers at scale—providing guidance on cattle health, breeding, feeding, vaccinations, and related actions through accessible interfaces, including voice support. (The Times of India)

This matters because it shows something larger than “AI adoption”:

- cattle health is a silent system

- many farmers are not digitally sophisticated

- AI becomes the translation layer between weak signals and actionable guidance

Whether one focuses on product specifics or the broader pattern, the strategic insight is clear:

AI diffusion unlocks value in places that were previously too complex, too distributed, or too silent to serve.

And the same pattern will repeat across:

- elder care

- public services

- informal micro-enterprises

- environmental resilience

- infrastructure maintenance

Why “Inclusion” Is Not the Right Frame

This topic is often treated as “digital inclusion” or “AI ethics.”

That framing is too small.

The Silent Systems Doctrine is about:

Market expansion through representation.

Boards should care because silent systems are:

- the largest untapped demand surface

- the largest unmonetized context pool

- the largest risk blind spot

- a major source of societal legitimacy

Ignore silent systems and you leave value on the table—while also increasing backlash risk.

Represent them well and you unlock a new growth frontier.

The Core Problem: AI Amplifies Structured Signal

AI works best where:

- data is abundant

- signals are clean

- feedback is measurable

- behavior is digitally observable

So AI naturally benefits:

- digital-first customers

- well-instrumented enterprises

- high-connectivity markets

Without corrective architecture, AI increases inequality of visibility.

Not because AI is “evil.”

Because optimization follows signals.

So the key question becomes:

Who builds the signal and context layer for what is currently silent?

That is the biggest third-order opportunity.

The Silent Systems Stack: From Reality to Representation to Action

To represent what cannot speak, you need a practical stack.

Layer 1: Sensing (Reality Capture)

- voice interfaces

- edge sensors

- satellite imagery

- low-cost diagnostics

- IoT where feasible

- human-in-the-loop capture where needed

Layer 2: Translation (Context Construction)

- converting unstructured signals into usable context

- local language translation

- identity mapping and entity resolution

- longitudinal memory building

Layer 3: Permission (Consent and Authorization)

- who owns the context?

- who can grant access?

- how is consent revoked?

- what boundaries apply?
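The four questions above can be made concrete with a small data model. What follows is a minimal sketch, not a reference design: `ConsentGrant`, its fields, and the example identifiers (`farmer-001`, `dairy-advisory-service`) are hypothetical names chosen for illustration, and a real consent system would also need identity verification, durable storage, and legal grounding.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentGrant:
    """One permission from a context owner to a grantee (hypothetical model)."""
    owner: str            # who owns the context
    grantee: str          # who has been granted access
    scopes: frozenset     # boundaries: which kinds of context are covered
    revoked_at: Optional[datetime] = None

    def revoke(self) -> None:
        # Revocation is recorded rather than deleted, so it stays auditable.
        self.revoked_at = datetime.now(timezone.utc)

    def allows(self, grantee: str, scope: str) -> bool:
        # Access requires an active grant, the right grantee, and an in-scope request.
        return (
            self.revoked_at is None
            and grantee == self.grantee
            and scope in self.scopes
        )


grant = ConsentGrant(
    owner="farmer-001",
    grantee="dairy-advisory-service",
    scopes=frozenset({"cattle_health", "feeding"}),
)

in_scope = grant.allows("dairy-advisory-service", "cattle_health")
out_of_scope = grant.allows("dairy-advisory-service", "household_finance")
grant.revoke()
after_revocation = grant.allows("dairy-advisory-service", "cattle_health")
```

The point of the sketch is that ownership, access, revocation, and boundaries each become an explicit field or check rather than an implicit assumption.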

Layer 4: Delegation (Bounded Action)

- what can the system do on someone’s behalf?

- when must it ask?

- what actions are reversible?

- what must be escalated?
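One way to picture bounded delegation is as a policy that sorts every proposed action into "act", "ask", or "escalate". The sketch below is illustrative only: `DelegationPolicy`, `Action`, the cost threshold, and the example action names are assumptions introduced here, not part of any existing product or standard.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ACT = "act"            # proceed on the person's behalf
    ASK = "ask"            # confirm with the person first
    ESCALATE = "escalate"  # hand over to a human


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool
    cost: float


@dataclass(frozen=True)
class DelegationPolicy:
    allowed_actions: frozenset  # what the system may do at all
    auto_cost_limit: float      # above this cost, it must ask

    def decide(self, action: Action) -> Decision:
        if action.name not in self.allowed_actions:
            return Decision.ESCALATE   # outside the delegation boundary
        if not action.reversible:
            return Decision.ASK        # irreversible actions need confirmation
        if action.cost > self.auto_cost_limit:
            return Decision.ASK        # too costly to do silently
        return Decision.ACT


policy = DelegationPolicy(
    allowed_actions=frozenset({"send_reminder", "book_vet_visit", "order_feed"}),
    auto_cost_limit=20.0,
)

decisions = {
    a.name: policy.decide(a)
    for a in [
        Action("send_reminder", reversible=True, cost=0.0),
        Action("book_vet_visit", reversible=True, cost=50.0),
        Action("order_feed", reversible=False, cost=10.0),
        Action("sell_cattle", reversible=False, cost=0.0),
    ]
}
```

Under these assumed rules, a reminder is sent automatically, a costly booking and an irreversible order both require confirmation, and anything outside the allowed set goes to a human.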

Layer 5: Proof (Accountability)

- audit trails

- traceability

- explanation

- dispute resolution

- safety logs
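An audit trail is only useful as proof if later edits to it can be detected. One common technique for this, used here as an assumption rather than something the doctrine prescribes, is a hash chain: each entry's hash covers the previous entry's hash, so changing any record invalidates everything after it. `AuditTrail` and the record fields below are hypothetical.

```python
import hashlib
import json


def entry_hash(prev_hash: str, record: dict) -> str:
    # Hash the previous hash together with a canonical JSON form of the record.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


class AuditTrail:
    GENESIS = "0" * 64  # placeholder hash that precedes the first entry

    def __init__(self) -> None:
        self.entries = []  # list of (record, hash) pairs

    def append(self, record: dict) -> None:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        self.entries.append((dict(record), entry_hash(prev, record)))

    def verify(self) -> bool:
        # Recompute the chain from the start; any edited record breaks it.
        prev = self.GENESIS
        for record, stored in self.entries:
            if entry_hash(prev, record) != stored:
                return False
            prev = stored
        return True


trail = AuditTrail()
trail.append({"actor": "advisory-agent", "action": "send_reminder", "reason": "vaccination due"})
trail.append({"actor": "advisory-agent", "action": "ask_owner", "reason": "cost above limit"})
intact = trail.verify()

# Simulate tampering with the first record after the fact.
trail.entries[0][0]["action"] = "order_feed"
tampered_detected = not trail.verify()
```

A chain like this shows that a log was not altered after the fact; it does not by itself explain a decision or resolve a dispute, which still need the explanation and recourse items listed above.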

This is not “AI governance” in the abstract.

This is governance as infrastructure.

And it must be designed to serve silent systems.

Where Context Capital Fits

Silent systems are the largest pool of unharvested context capital.

But capturing context from silent systems must be:

- permissioned

- culturally and legally grounded

- transparent

- reversible

- aligned with trust

Otherwise, it becomes extraction.

So the Silent Systems Doctrine says:

Context is capital—but representation determines who holds it, who benefits, and who is protected from misuse.

How This Connects to C.O.R.E.

My C.O.R.E. lens (Capture, Orchestrate, Regulate, Evolve) becomes practical here:

- Capture: build context from weak signals

- Orchestrate: reduce overload—offer fewer, better options

- Regulate: bounded delegation with explicit constraints

- Evolve: evidence loops that compound trust

Silent systems need C.O.R.E. more than digital-native systems because the cost of misunderstanding is higher.

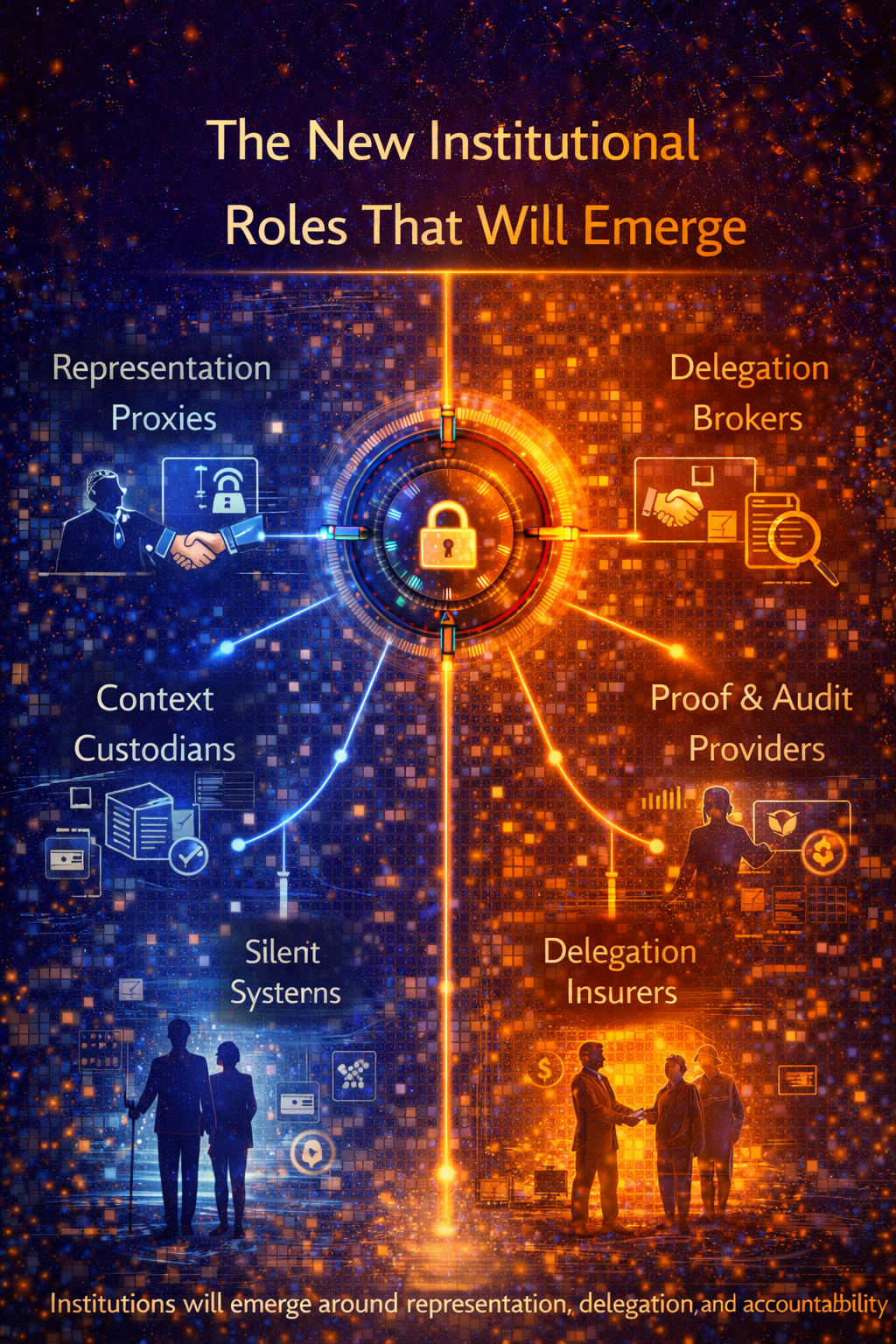

The New Institutional Roles That Will Emerge

Just as the financial economy created institutions (banks, exchanges, custodians), the AI economy will create institutions around representation.

Expect categories like:

1) Representation Proxies

Trusted entities that act as digital advocates for people or systems that cannot self-navigate.

2) Context Custodians

Organizations that store permissioned context and manage access, revocation, and auditing.

3) Delegation Brokers

Systems that match needs to services—but within strict delegation boundaries.

4) Proof and Audit Providers

Independent verification that actions were aligned with authorization and policy.

5) Delegation Insurers

New underwriting models for wrong actions, misunderstandings, or autonomous failures.

These are not “AI products.”

They are new institutional designs.

What Governments and Public Systems Must Learn

Governments increasingly explore AI to improve services, decision-making, and anomaly detection, but face unique challenges: privacy requirements, representation demands, legacy systems, data constraints, and accountability expectations. (OECD)

The Silent Systems Doctrine matters especially in public systems because:

- citizens vary widely in digital ability

- services must be fair, not only optimized

- legitimacy matters as much as efficiency

- recourse mechanisms are essential

Public sector representation rails must be:

- multilingual

- voice-first

- low-friction

- identity-aware

- grievance-aware

And this is where new public-private ecosystems will be built.

Board Column: Six Questions Directors Should Ask

If you’re a board member (or advising one), translate the doctrine into six questions:

- Which parts of our customer base are digitally silent?

- Which parts of our operations depend on non-verbal, non-digital signals?

- Where does our strategy assume “everyone can self-serve”?

- What context do we not have because the system is not instrumented?

- What representation infrastructure would unlock the largest new value pool?

- How do we ensure consent, recourse, and bounded delegation so trust compounds?

This turns the doctrine into capital allocation.

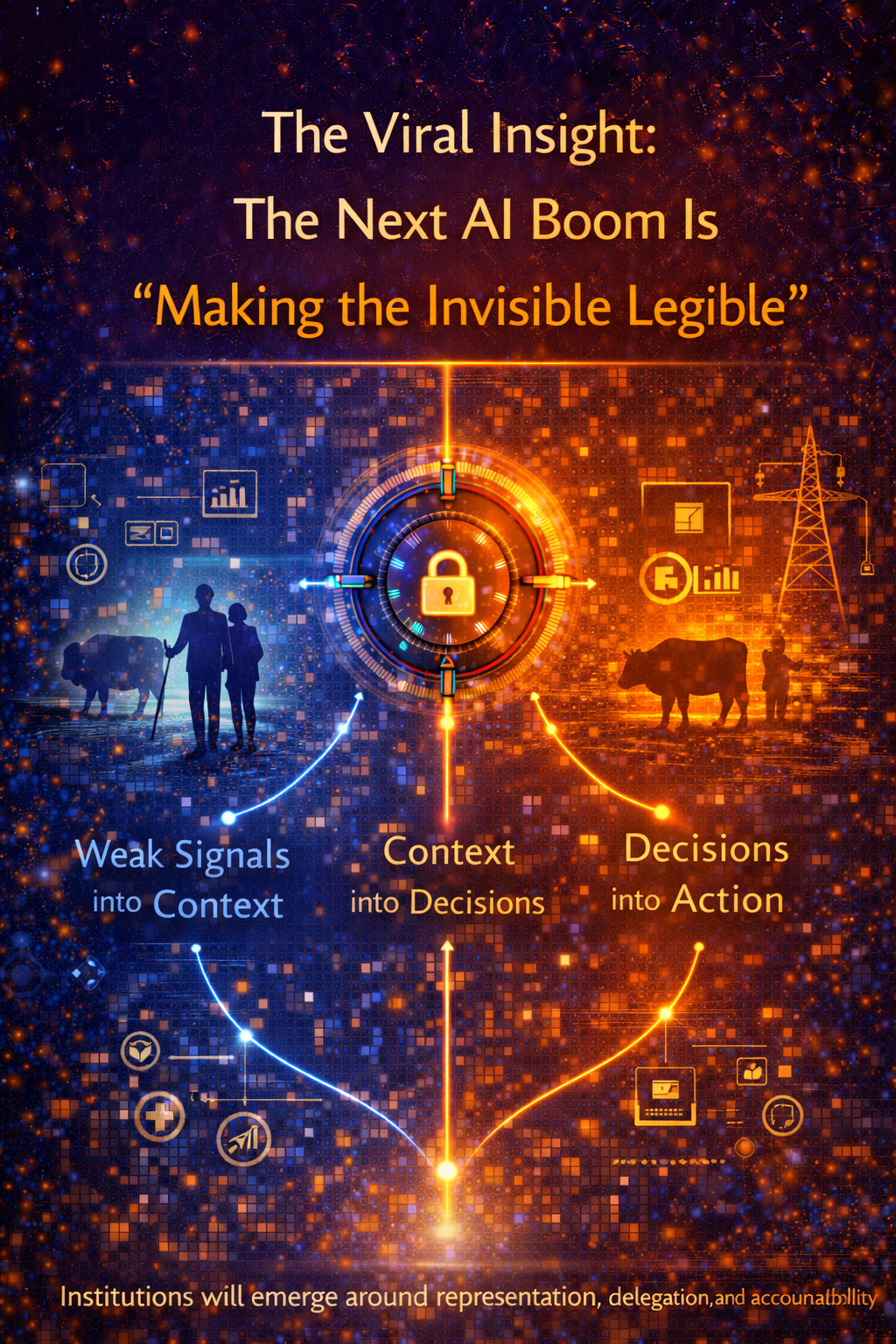

The Viral Insight: The Next AI Boom Is “Making the Invisible Legible”

Here is the line people will remember:

The next AI boom will come from making silent systems economically legible.

Not from bigger models.

Not from cheaper inference.

From representation.

Because representation turns:

- weak signals into context

- context into decisions

- decisions into action

- action into value

That is the third-order AI economy.

Conclusion: The AI Economy Must Learn to Listen

AI is making cognition abundant. But abundance does not automatically create fairness, value, or legitimacy.

It creates new power structures.

In the AI decade, the most strategic question is not:

“Which model should we use?”

It is:

“Who gets represented—and who remains silent?”

Boards that understand this early will unlock:

- new markets

- new demand surfaces

- new context capital

- more resilient legitimacy

- better long-term growth

The Silent Systems Doctrine is a simple message with a deep implication:

The AI economy must learn to represent what cannot speak—

or it will optimize itself into blind spots.

AI is making intelligence cheap.

So power shifts to representation.

The next AI boom will not come from bigger models.

It will come from making silent systems economically legible.

If your strategy assumes everyone can self-serve AI, you are building blind spots.

Read More

- The Enterprise AI Operating Model: https://www.raktimsingh.com/enterprise-ai-operating-model/

- The Intelligence Reuse Index: https://www.raktimsingh.com/intelligence-reuse-index-enterprise-ai-fabric/

- The Enterprise AI Runbook Crisis: https://www.raktimsingh.com/enterprise-ai-runbook-crisis-model-churn-production-ai/

- Who Owns Enterprise AI?: https://www.raktimsingh.com/who-owns-enterprise-ai-roles-accountability-decision-rights/

- The Future Belongs to Decision-Intelligent Institutions: https://www.raktimsingh.com/the-future-belongs-to-decision-intelligent-institutions/

Glossary

Silent Systems: People, communities, infrastructure, and environments that cannot represent themselves inside AI-driven decision systems.

Non-Digital Majority: Populations that are offline, under-connected, or not digitally fluent enough to self-serve AI systems reliably. (ITU)

Representation Economy: An economy where value flows to what becomes representable, legible, and delegatable inside intelligent systems.

Context Capital: Permissioned, longitudinal, identity-bound understanding that compounds across decisions.

Consent Architecture: Mechanisms that manage permission, revocation, scope limits, and transparency for context use.

Bounded Delegation: Clear limits on what AI can do on someone’s behalf, with escalation and reversibility.

C.O.R.E.: Capture Context, Orchestrate Decisions, Regulate Action, Evolve with Evidence—trusted delegation architecture.

Representation Proxy: A trusted digital advocate helping a silent system access services safely and effectively.

FAQ

1) What is the Silent Systems Doctrine?

It is a strategy lens arguing that the AI economy must build representation infrastructure for people and systems that cannot speak digitally—otherwise value and services will concentrate only where signals are already strong.

2) Why does this matter for business strategy?

Because AI allocates attention and resources based on signals. If customers, suppliers, or environments are silent, you will miss large value pools and accumulate blind spots.

3) How is this different from “digital inclusion”?

Digital inclusion is about access. Silent Systems is about representation: translating weak signals into permissioned context and trusted action.

4) What new business categories emerge?

Representation proxies, context custodians, delegation brokers, proof/audit layers, and delegation insurers.

5) What should boards do first?

Map where the business depends on silent systems, then invest in sensing + translation + consent + bounded delegation + proof.

References & Further Reading

- ITU: Facts and Figures 2024—global connectivity and offline populations. (ITU)

- World Economic Forum: AI Agents governance foundations (agents moving to real deployment). (World Economic Forum)

- OECD: Governing with Artificial Intelligence—government context, accountability, privacy, representation. (OECD)

- HBR: When every company has similar AI models, context becomes the differentiator. (Harvard Business Review)

- Amul “Sarlaben” coverage as a signal of AI reaching grassroots and livestock health use cases. (The Times of India)

The Intelligence-Native Enterprise Doctrine

This article is part of a larger strategic body of work that defines how AI is transforming the structure of markets, institutions, and competitive advantage. To explore the full doctrine, read the following foundational essays:

- The AI Decade Will Reward Synchronization, Not Adoption

Why enterprise AI strategy must shift from tools to operating models.

https://www.raktimsingh.com/the-ai-decade-will-reward-synchronization-not-adoption-why-enterprise-ai-strategy-must-shift-from-tools-to-operating-models/

- The Third-Order AI Economy

The category map boards must use to see the next Uber moment.

https://www.raktimsingh.com/third-order-ai-economy/

- The Intelligence Company

A new theory of the firm in the AI era, where decision quality becomes the scalable asset.

https://www.raktimsingh.com/intelligence-company-new-theory-firm-ai/

- The Judgment Economy

How AI is redefining industry structure, not just productivity.

https://www.raktimsingh.com/judgment-economy-ai-industry-structure/

- Digital Transformation 3.0

The rise of the intelligence-native enterprise.

https://www.raktimsingh.com/digital-transformation-3-0-the-rise-of-the-intelligence-native-enterprise/

- Industry Structure in the AI Era

Why judgment economies will redefine competitive advantage.

https://www.raktimsingh.com/industry-structure-in-the-ai-era-why-judgment-economies-will-redefine-competitive-advantage/

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.