Representation Covenants: A board-level idea whose time has arrived

Most firms still believe they compete through familiar levers: better products, lower prices, faster delivery, stronger brands, wider distribution, or superior customer experience.

All of that still matters.

But in the AI era, it will not be enough.

The next great firms will not win because they have better AI. They will win because they can make enforceable promises that humans, machines, and institutions can trust and act upon.

Those promises will have to travel across websites, apps, enterprise systems, marketplaces, regulators, supply chains, digital platforms, and, increasingly, AI agents. They will not be vague statements such as “we care about privacy” or “quality is our priority.” They will need to be clearer, more structured, more verifiable, more updateable, and more enforceable than the promises most companies make today.

That is why a new competitive instrument is emerging: the Representation Covenant.

A Representation Covenant is an enforceable promise about reality. It tells humans, machines, and institutions what a firm claims to be true, what evidence supports that claim, how current that claim is, what actions may be taken on the basis of that claim, and what happens if the claim turns out to be wrong.

This may sound abstract. It is not.

It is the difference between a company saying, “This product is authentic,” and being able to prove its origin, custody, edits, and compliance history in a form that platforms, partners, and AI systems can verify.

It is the difference between saying, “Our AI assistant is safe,” and being able to specify what data it may access, what actions it may take, what approvals it requires, and what recourse exists if it fails.

It is the difference between a hospital saying, “We use AI responsibly,” and being able to show who authorized a model, what patient state it relied on, what limits applied, and who remains accountable for the final action.

The world is already moving in this direction. NIST’s AI Risk Management Framework emphasizes governance, mapping, measurement, and management of AI risk. ISO/IEC 42001 provides a structured management system standard for organizations that develop, provide, or use AI. The EU AI Act is hardening legal expectations around transparency, risk, and accountability. Technical standards such as C2PA and W3C Verifiable Credentials are making provenance and claims more machine-verifiable. (NIST)

Why this matters now

For most of business history, trust was mediated primarily by people and institutions.

A buyer trusted a seller because of reputation.

A bank trusted a borrower because of documentation.

A regulator trusted a company because of disclosures and audits.

A hospital trusted a supplier because of certification and contracts.

Now a new actor has entered the trust chain: the machine.

AI systems already search, rank, summarize, recommend, authenticate, compare, negotiate, route, and increasingly act.

Open protocols are also emerging to let AI systems connect to tools, data sources, and other agents.

Anthropic’s Model Context Protocol was introduced as an open standard for secure, two-way connections between data sources and AI tools, while Google’s A2A protocol is designed to let agents communicate securely, exchange information, and coordinate actions across enterprise applications. (Anthropic)

That changes the nature of trust.

A machine cannot rely on brand aura the way a human can. It needs something more explicit. It needs to know who made the claim, whether the claim is authentic, whether it is current, whether it is authorized, whether it is auditable, whether action is allowed, and whether recourse exists.

In other words, the machine needs a covenant, not a slogan.

A simple example: organic food

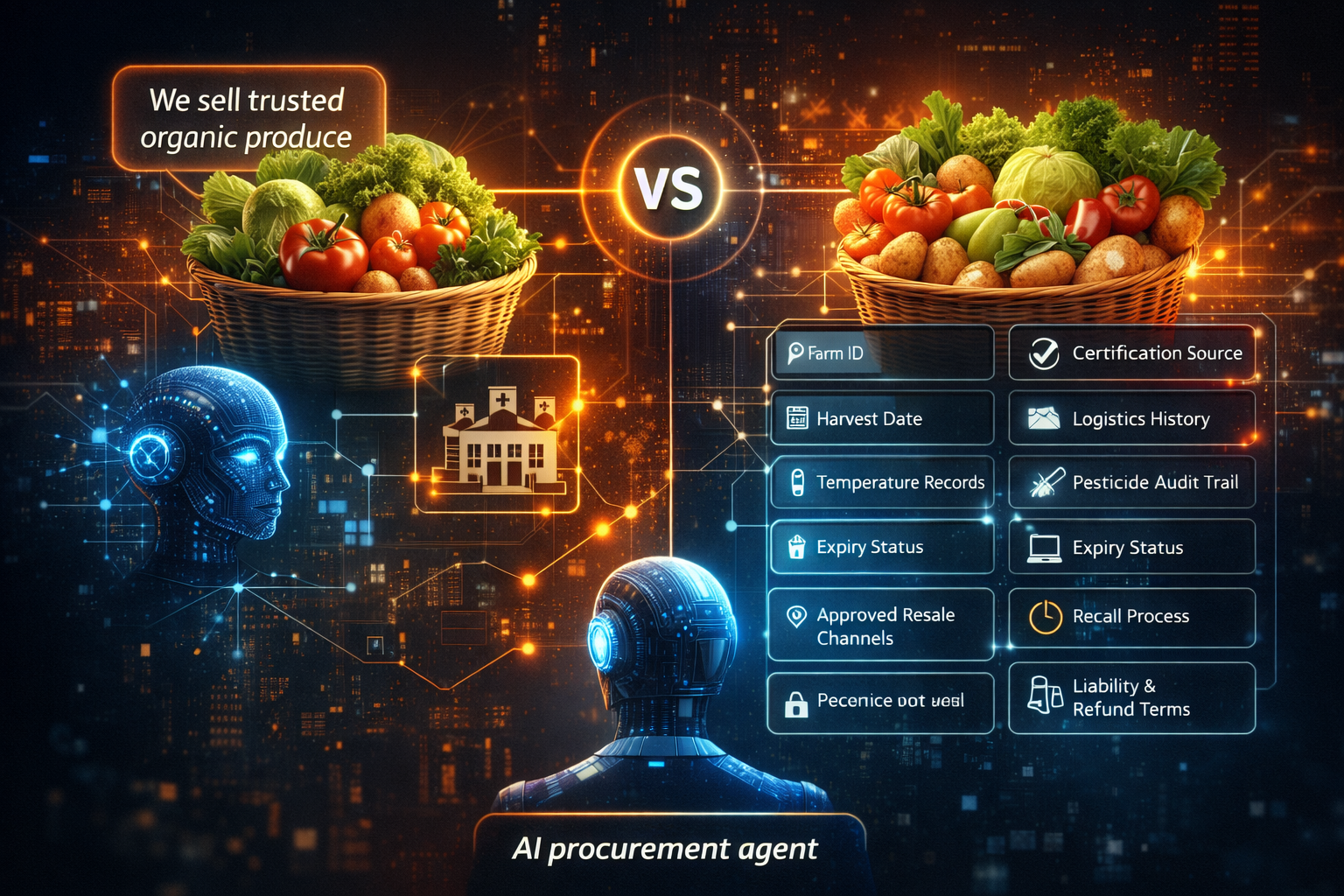

Imagine two food brands.

The first says:

“We sell trusted organic produce.”

The second can provide:

- farm identity

- certification source

- harvest date

- logistics history

- temperature records

- pesticide audit trail

- expiry status

- approved resale channels

- recall process

- liability and refund terms

To a human, both may sound credible.

To a machine, they are not even close.

The first offers a message.

The second offers a covenant.

Now imagine an AI procurement agent buying ingredients for a hospital kitchen. Which supplier will it trust, compare, and route orders toward?

Not the one with better adjectives.

The one with better representation.

That is the heart of Representation Economics: in the AI era, value increasingly shifts toward what can be credibly represented, verified, and acted upon. Related background on this broader thesis appears in the author's earlier work on the Representation Economy and SENSE–CORE–DRIVER. (raktimsingh.com)
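The procurement scenario above can be made concrete with a small sketch. It assumes a hypothetical agent that only routes orders to suppliers whose representation covers every item in the list; the field names are illustrative labels derived from that list, not a real schema or standard.

```python
from __future__ import annotations

# Hypothetical field names derived from the list in the text; not a real schema.
REQUIRED_FIELDS = [
    "farm_identity",
    "certification_source",
    "harvest_date",
    "logistics_history",
    "temperature_records",
    "pesticide_audit_trail",
    "expiry_status",
    "approved_resale_channels",
    "recall_process",
    "liability_and_refund_terms",
]


def missing_fields(supplier: dict) -> list[str]:
    """Return the required fields a supplier has not provided."""
    return [name for name in REQUIRED_FIELDS if not supplier.get(name)]


def eligible_suppliers(suppliers: dict[str, dict]) -> list[str]:
    """Return the names of suppliers whose representation is complete."""
    return [name for name, record in suppliers.items() if not missing_fields(record)]


# The first brand offers only a message; the second fills in every field.
slogan_brand = {"tagline": "We sell trusted organic produce."}
covenant_brand = {name: f"<evidence for {name}>" for name in REQUIRED_FIELDS}

suppliers = {"slogan_brand": slogan_brand, "covenant_brand": covenant_brand}
print(eligible_suppliers(suppliers))  # ['covenant_brand']
```

A real agent would also verify the evidence behind each field rather than just check that it is present; the sketch only shows why a slogan gives a machine nothing to work with.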

Representation Covenants are not just legal contracts

This is the first distinction leaders need to understand.

A Representation Covenant is not merely a contract drafted by lawyers. It is a layered promise that must also work operationally, digitally, and, increasingly, in machine-readable form.

A strong Representation Covenant has at least five parts.

- Claim: What exactly is being asserted? Example: “This medicine batch was produced in a licensed facility under approved conditions.”

- Proof: What evidence supports the claim? Example: inspection records, sensor logs, license identifiers, provenance records, signed attestations.

- Permission: What may a human, machine, or institution do on the basis of that claim? Example: A pharmacy AI may dispense, but only if expiry, storage integrity, and prescription match are verified.

- Update discipline: How is the claim refreshed, revoked, corrected, or superseded? Example: If the batch is recalled, the representation must change everywhere that matters.

- Recourse: What happens if the claim is false, incomplete, stale, or misused? Example: alert, stop-ship, reimbursement, escalation, audit, liability review.

That is why the word covenant matters. A covenant is stronger than branding and more actionable than policy. It combines meaning, obligation, evidence, action boundaries, and consequence.
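One way to picture the five parts is as a single record that a machine can query before acting. The sketch below is an assumption-laden illustration, not a standard: the `Covenant` class, its field names, and the check names are all hypothetical, and the values reuse the medicine-batch example from the list above.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class Covenant:
    claim: str                   # what is asserted
    proof: list[str]             # references to supporting evidence
    permitted_actions: set[str]  # what may be done on the basis of the claim
    valid_until: date            # update discipline: the claim expires
    recourse: list[str]          # what happens if the claim is wrong
    revoked: bool = False        # update discipline: the claim can be withdrawn

    def revoke(self) -> None:
        """Mark the claim as withdrawn, for example after a batch recall."""
        self.revoked = True

    def allows(self, action: str, today: date, checks: dict[str, bool]) -> bool:
        """Permit an action only if the claim is live, evidenced, and every check passed."""
        if self.revoked or today > self.valid_until:
            return False
        if action not in self.permitted_actions or not self.proof:
            return False
        return bool(checks) and all(checks.values())


batch = Covenant(
    claim="This medicine batch was produced in a licensed facility under approved conditions.",
    proof=["inspection-record", "sensor-log", "signed-attestation"],
    permitted_actions={"dispense"},
    valid_until=date(2030, 1, 1),
    recourse=["alert", "stop-ship", "reimbursement"],
)
checks = {"expiry_ok": True, "storage_integrity_ok": True, "prescription_match": True}
today = date(2029, 6, 1)

print(batch.allows("dispense", today, checks))  # True
batch.revoke()                                  # the batch is recalled
print(batch.allows("dispense", today, checks))  # False
```

The point of the design is that permission is derived from the state of the claim: once the claim is revoked or expires, the permitted action disappears with it.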

The hidden problem most firms have not yet understood

Many firms think their challenge is to adopt AI.

That is too shallow.

The deeper challenge is this: Can your organization make promises that AI systems can trust enough to act on?

That is a very different question.

A company may have excellent products and still fail in the AI economy because its claims are:

- scattered across PDFs

- buried in emails

- inconsistent across systems

- not linked to evidence

- impossible to verify in real time

- unclear on authority boundaries

- weak on recourse

In the old world, a capable sales team or compliance function could patch over some of these gaps. In the new world, those same gaps become structural disadvantages.

If your firm cannot produce covenant-grade representations, you become harder to trust, harder to integrate, harder to recommend, and harder to automate around.

Why this will create a new class of winning firms

The next great firms will not simply have better AI. They will have better covenant architecture.

That means they will be able to say, in machine-readable or machine-verifiable form:

- this is who we are

- this is what we know

- this is what we stand behind

- this is what can be done on our behalf

- this is how our claims are verified

- this is how our errors are corrected

That changes competition.

A lender with covenant-grade borrower representations can underwrite faster.

A hospital with covenant-grade clinical workflows can deploy AI more safely.

A manufacturer with covenant-grade supply visibility can automate procurement and compliance.

A media company with provenance-backed content can remain trusted in a world of synthetic media.

A software provider with machine-verifiable service commitments can become easier for enterprise agents to buy, monitor, and govern.

This is already visible in fragments. C2PA is building a standard for certifying the source and history of media content, while its Content Credentials model is often described as a kind of nutrition label for digital media. W3C Verifiable Credentials define a standard way to express tamper-resistant, privacy-respecting, machine-verifiable claims among issuers, holders, and verifiers. (C2PA)

These are not yet the full covenant economy. But they are early building blocks of it.

This is where SENSE, CORE, and DRIVER matter

Representation Covenants become much easier to understand through the SENSE–CORE–DRIVER framework.

SENSE: make reality legible

Before any covenant can be trusted, reality must first become legible.

What is the signal?

Which entity does it belong to?

What is the current state?

How is that state changing?

Without this layer, the covenant has no solid foundation.

CORE: interpret the representation correctly

The system must then reason over the representation properly.

Can it interpret the claim?

Can it detect missing context?

Can it identify low confidence?

Can it avoid acting on stale or weak signals?

Without this layer, the covenant is misread.

DRIVER: turn representation into governed action

Finally, the system must act under clear authority.

Who authorized action?

What exact action is allowed?

What checks apply?

What recourse exists?

Who is accountable if something goes wrong?

Without this layer, the covenant becomes dangerous.

This is why Representation Covenants are more than a compliance layer. They are the operational bridge between seeing reality, reasoning about it, and acting with legitimacy.
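The three layers can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not an implementation of the framework: the `Representation` record, the function names, and the staleness and confidence thresholds are all hypothetical choices made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Representation:
    entity: str        # SENSE: which entity the signal belongs to
    state: str         # SENSE: the current state
    age_hours: float   # SENSE: how old the observation is
    confidence: float  # input to CORE, from 0.0 to 1.0


def core_is_usable(
    rep: Representation,
    max_age_hours: float = 24.0,   # assumed threshold, chosen for illustration
    min_confidence: float = 0.8,   # assumed threshold, chosen for illustration
) -> bool:
    """CORE: refuse to reason over stale or low-confidence signals."""
    return rep.age_hours <= max_age_hours and rep.confidence >= min_confidence


def driver_decide(rep: Representation, action: str, authorized_actions: set[str]) -> str:
    """DRIVER: act only under clear authority; otherwise escalate."""
    if not core_is_usable(rep):
        return "escalate: stale or low-confidence representation"
    if action not in authorized_actions:
        return "escalate: action not authorized"
    return f"execute: {action} on {rep.entity}"


fresh = Representation("batch-42", "in_cold_storage", age_hours=2.0, confidence=0.95)
stale = Representation("batch-42", "in_cold_storage", age_hours=72.0, confidence=0.95)

print(driver_decide(fresh, "release", {"release"}))  # execute: release on batch-42
print(driver_decide(stale, "release", {"release"}))  # escalate: stale or low-confidence representation
print(driver_decide(fresh, "destroy", {"release"}))  # escalate: action not authorized
```

Each failure mode in the text maps to a branch: a weak SENSE or CORE layer produces the first escalation, and a missing DRIVER authority produces the second.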

A second simple example: hiring

Today, a candidate submits a resume.

A recruiter reads it.

A manager interviews them.

Trust remains partly human.

Now imagine AI-mediated hiring at scale.

A firm claims:

“This person is qualified for a high-trust role.”

What will the machine need?

Not just a resume. It may need:

- credential authenticity

- issuing institution

- skill validity date

- work history confidence

- assessment provenance

- conflict checks

- authorization limits for the role

- explanation rights

- appeal pathways

Without these, AI hiring becomes brittle, opaque, and unfair. The EU AI Act treats employment-related AI uses as a high-risk category that requires stronger controls and obligations. (Artificial Intelligence Act)

With them, you begin to move toward a covenant.

The same logic applies to insurance, logistics, finance, healthcare, education, procurement, public services, and media.

The firms that survive will redesign trust itself

This is the larger point.

In the AI era, firms will not compete only on intelligence. They will compete on the quality of the promises they can operationalize.

That means serious leaders will invest in:

- provenance

- verifiable claims

- policy-linked workflows

- machine-readable permissions

- identity and authority layers

- correction and recourse systems

- continuous updating of representations

In short, they will build trust that machines can work with.

This also explains why many AI programs fail before the model itself becomes the main issue. The model may be technically capable, but the surrounding promises are weak. The institution has not clearly defined what is true, what is allowed, what is current, and what happens when action causes harm. NIST’s AI RMF and ISO/IEC 42001 both reflect this broader reality: trustworthy AI is not just about model quality. It is about governance, accountability, lifecycle management, and oversight. (NIST)

The new question boards should ask

Boards have spent years asking:

What is our AI strategy?

A better question is now emerging:

What promises can our institution make that humans, machines, and regulators can all rely on?

That question changes everything.

It forces leaders to examine:

- where their representations are weak

- where their claims lack proof

- where their AI systems act without clear authority

- where recourse is missing

- where trust still depends on narrative rather than enforceable structure

The winners of the AI era will not merely automate faster.

They will institutionalize believable action.

Conclusion: the firms that win will sell enforceable confidence

The next great firms will not only sell products. They will sell confidence.

Not soft confidence.

Not marketing confidence.

Not borrowed confidence.

They will sell enforceable confidence.

A bank will sell a covenant that its AI advice is bounded, auditable, and reviewable.

A hospital will sell a covenant that machine-supported care still preserves authority, traceability, and recourse.

A marketplace will sell a covenant that identities, provenance, and obligations are continuously checked.

A media company will sell a covenant that what it publishes can be traced, verified, and challenged.

A software firm will sell a covenant that its agents act within declared permissions and leave evidence behind.

That is a very different future from the one most companies are planning for.

And that is why Representation Covenants matter.

The next great firms will not win because they say more. They will win because they can promise in a form the new economy can trust.

That is the next frontier of Representation Economics.

And over time, it may become one of the defining tests of institutional strength in the AI era: not whether a firm can deploy intelligence, but whether it can turn intelligence into action through promises that humans, machines, and institutions can all verify, enforce, and live with.

FAQ

What is a Representation Covenant?

A Representation Covenant is an enforceable promise about reality that combines a claim, supporting proof, permitted actions, update logic, and recourse.

How is a Representation Covenant different from a contract?

A contract is mainly a legal agreement. A Representation Covenant is broader. It must also work operationally and, increasingly, in machine-readable form across digital systems and AI workflows.

Why will AI make Representation Covenants more important?

Because AI systems increasingly search, rank, compare, recommend, and act. They need structured, verifiable promises, not just static disclosures or marketing language.

How does this connect to SENSE–CORE–DRIVER?

SENSE makes reality legible, CORE interprets it, and DRIVER governs action. A Representation Covenant is the promise layer that allows those three layers to work together with legitimacy.

Which sectors will benefit most?

Finance, healthcare, logistics, manufacturing, software, marketplaces, public services, education, media, and any sector where trust, coordination, and action must scale.

Are standards for this already emerging?

Yes. NIST AI RMF, ISO/IEC 42001, the EU AI Act, W3C Verifiable Credentials, C2PA, MCP, and A2A each address part of the broader stack needed for machine-verifiable trust and coordinated AI action. (NIST)

What are Representation Covenants?

Representation Covenants are enforceable, machine-verifiable promises that combine claims, proof, permissions, updates, and recourse—allowing humans, AI systems, and institutions to trust and act on them reliably.

Why do they matter in AI?

Because AI systems do not trust brand narratives—they rely on structured, verifiable, and actionable representations of reality.

What is the core idea?

In the AI economy, firms compete not just on intelligence, but on their ability to make promises that machines can trust and act upon.

Glossary

Representation Economics

A strategic view of the AI era in which value increasingly flows to what can be clearly represented, reliably understood, and responsibly acted upon by machines and institutions. Related reading: (raktimsingh.com)

Representation Covenant

An enforceable promise that links a claim to proof, permissions, update logic, and recourse.

Machine-readable trust

Trust expressed in a form that digital systems and AI agents can verify and act upon.

Provenance

Information about the origin, custody, and modification history of an asset or claim. C2PA specifically focuses on certifying the source and history of media content. (C2PA)

Verifiable Credential

A digitally secured claim that is machine-verifiable and designed for use among issuers, holders, and verifiers. (W3C)

Recourse

The process for correction, appeal, rollback, remedy, or escalation when an AI-supported action is wrong or harmful.

MCP

An open standard introduced by Anthropic for secure, two-way connections between data sources and AI-powered tools. (Anthropic)

A2A

A protocol designed for secure communication and coordination among AI agents across enterprise platforms and applications. (Google Developers Blog)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Kill Zone: Why Firms Become Invisible in AI (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

References and Further Reading

- NIST, AI Risk Management Framework. (NIST)

- ISO, ISO/IEC 42001: AI Management Systems. (ISO)

- EU AI Act overview and official text references. (Artificial Intelligence Act)

- C2PA, Content Provenance and Authenticity Specifications. (C2PA)

- W3C, Verifiable Credentials Data Model 2.0. (W3C)

- Anthropic, Introducing the Model Context Protocol. (Anthropic)

- Google, Announcing the Agent2Agent Protocol. (Google Developers Blog)

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.