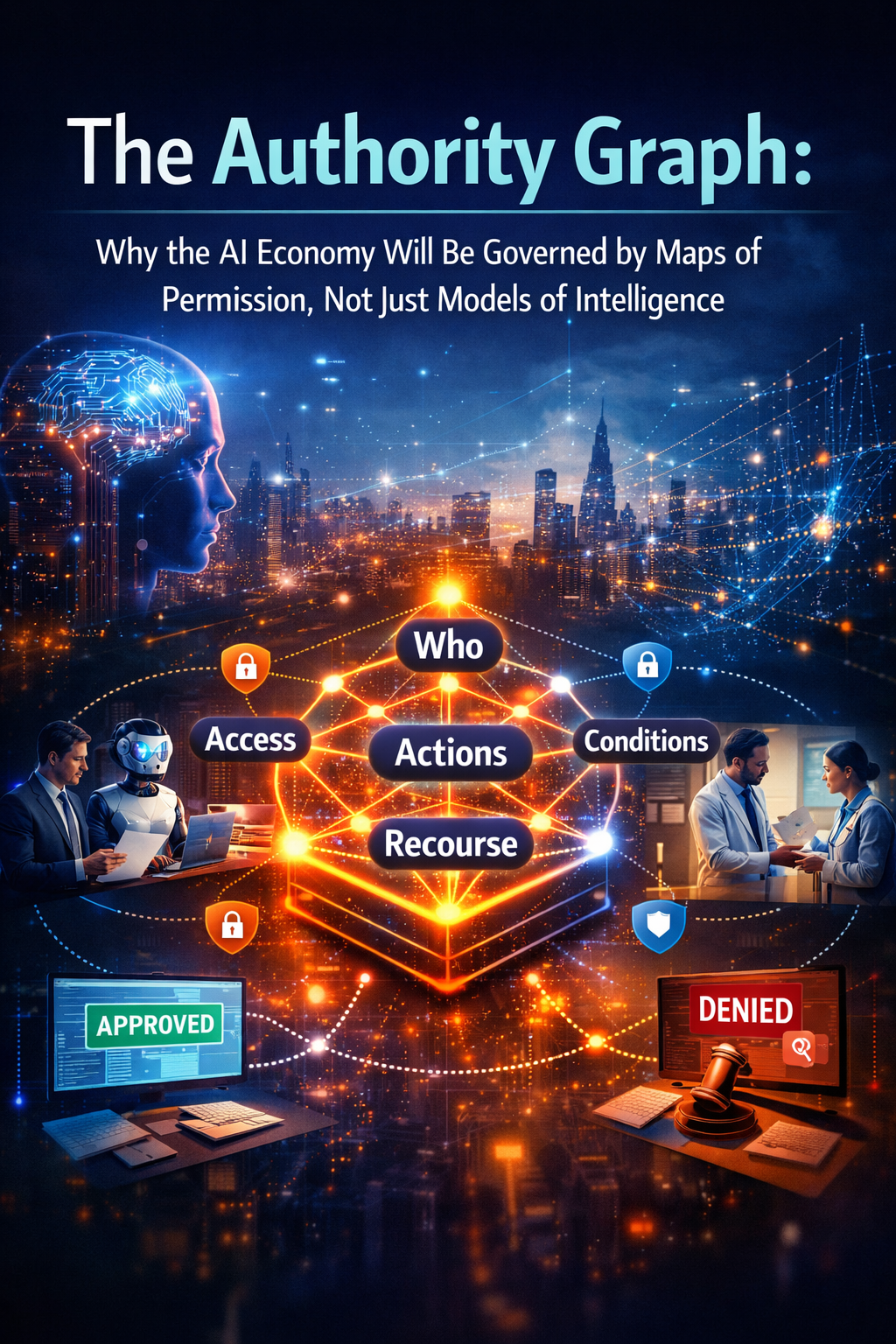

The Authority Graph

The next winners in AI will not be defined only by smarter models. They will be defined by how well they map authority, constrain action, preserve recourse, and turn intelligence into legitimate execution.

A simpler way to understand the next battle in AI

For the last few years, the AI conversation has focused on intelligence. Which model is bigger? Which model reasons better? Which model writes better code, gives better answers, or makes better predictions?

That was the right first question. It is no longer the decisive one.

The next phase of the AI economy will be shaped by a different question: Who is allowed to do what, for whom, under which conditions, with what limits, and with what recourse if something goes wrong? That is not a model question. It is a permission question. And once AI starts acting inside real institutions, permission stops being policy language and becomes architecture. Harvard Business Review’s recent enterprise guidance on AI agents points in exactly this direction: treat each agent like a distinct digital worker with a role, a scope of authority, approved sources of truth, and escalation rules. (Harvard Business Review)

This is why I call the next governing layer of the AI economy the Authority Graph: a living map of permission that defines how intelligence is allowed to become action.

A model may know a lot. But knowing is not the same as being allowed.

A customer-service agent may know a refund is justified.

A coding agent may know how to modify production code.

A procurement agent may know which supplier is cheapest.

A healthcare system may know which patient is high risk.

None of those systems should act only because they are intelligent enough to do so. They should act only when authority is clear, bounded, auditable, and reversible. That principle mirrors the logic of zero-trust architecture, where access is not assumed but continuously bounded by policy, identity, and least privilege. (NIST Publications)

The future will not be won only by the companies with the best models. It will be won by the institutions that build the best maps of permission.

Why intelligence alone is no longer enough

AI is moving from assistance to action. That changes everything.

When AI only drafts, suggests, summarizes, or answers questions, the stakes are lower. The human is still the actor. But once AI starts approving payments, changing prices, opening tickets, updating code, negotiating with suppliers, or triggering workflows across systems, the center of gravity moves.

The problem is no longer just, “Can the model reason?” It becomes, “Who authorized this action?” and “Was this action allowed in this context?”

That is why prompt-level control is not enough. A sentence like “do not take action without approval” is not governance. It is a hope. Recent enterprise commentary has warned that when governance lives only inside the prompt window, agents can exceed scope, lose critical instructions, or act without the architectural safety net needed for enterprise deployment. (TechRadar)

The same logic is now appearing across enterprise, policy, and identity discussions. UC Berkeley CLTC’s 2026 agentic-AI risk profile emphasizes human control, intervention points, escalation pathways, shutdown mechanisms, and system-level risk assessment for tool use and multi-agent behavior. Fortune recently highlighted a sharp gap between rapid AI-agent adoption and the small share of organizations that actually have a clear strategy to manage them. Okta, meanwhile, has begun explicitly framing AI agents as first-class non-human identities with lifecycle management needs. (CLTC)

That is the real shift. AI governance is becoming less about outputs alone and more about authorized action.

What is an Authority Graph?

An Authority Graph is a structured, living map that answers five practical questions.

- Who is the actor? Is the action being taken by a human, a software service, an AI agent, or an AI agent acting on behalf of a human, team, or institution?

- What is the actor allowed to access? Which tools, systems, files, APIs, data sources, workflows, and environments are available?

- What is the actor allowed to do? Can it read, recommend, draft, approve, execute, negotiate, escalate, or only simulate?

- Under what conditions is it allowed to act? Only below a spending threshold? Only during business hours? Only with a second approver? Only in a certain geography? Only in low-risk cases? Only when a human remains in the loop?

- What happens if something goes wrong? Can the action be reversed? Can it be appealed? Can authority be revoked instantly? Is there a full audit trail? Are escalation and shutdown paths clear?

This is why I call it a graph. Permission in the AI economy will not be a flat settings page. It will be a network of relationships among people, agents, systems, assets, policies, thresholds, approvals, and recourse paths. That way of thinking closely matches current agent-risk guidance, which emphasizes clear role definitions, escalation checkpoints, and mechanisms for intervention and shutdown. (CLTC)
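To make the five questions concrete, here is a minimal Python sketch of an Authority Graph stored as a list of permission edges. Each edge records the actor, the principal it acts for, the resource, the action, the conditions, and a recourse path. Every name here (`Grant`, `AuthorityGraph`, `finance-agent`, the 1,000 threshold) is hypothetical. A real deployment would sit on an identity provider and a policy engine rather than an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Context = dict  # hypothetical request context: amount, time, approvals, and so on


@dataclass
class Grant:
    """One edge of the graph: an actor may perform an action on a resource for a principal."""
    actor: str          # who is the actor?
    on_behalf_of: str   # whose authority is being delegated?
    resource: str       # what may it access?
    action: str         # what may it do?
    conditions: list[Callable[[Context], bool]] = field(default_factory=list)  # under what conditions?
    recourse: str = "escalate-to-owner"  # what happens if something goes wrong?


@dataclass
class AuthorityGraph:
    grants: list[Grant] = field(default_factory=list)

    def add(self, grant: Grant) -> None:
        self.grants.append(grant)

    def find(self, actor: str, on_behalf_of: str, resource: str, action: str,
             ctx: Context) -> Optional[Grant]:
        """Return the first matching grant whose conditions all hold, else None (deny by default)."""
        for g in self.grants:
            same_edge = (g.actor, g.on_behalf_of, g.resource, g.action) == (
                actor, on_behalf_of, resource, action)
            if same_edge and all(cond(ctx) for cond in g.conditions):
                return g
        return None


graph = AuthorityGraph()
graph.add(Grant("finance-agent", "accounts-payable", "erp.invoices", "approve",
                conditions=[lambda ctx: ctx["amount"] < 1_000]))
print(graph.find("finance-agent", "accounts-payable", "erp.invoices", "approve", {"amount": 250}) is not None)     # True
print(graph.find("finance-agent", "accounts-payable", "erp.invoices", "approve", {"amount": 50_000}) is not None)  # False
```

The important design choice is the final `return None`. An action with no matching edge is refused, so authority has to be mapped before it can be used.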

A simple example: the finance agent

Imagine an enterprise finance agent.

It reads invoices, checks contracts, matches purchase orders, and suggests payment approvals.

Most organizations would first ask, “How accurate is the model?” That matters. But it is not the deepest question.

The deeper question is this: What is the finance agent allowed to do at each stage?

It may be allowed to read invoices.

It may be allowed to flag mismatches.

It may be allowed to recommend approval.

It may be allowed to auto-approve invoices below a small threshold.

It may not be allowed to release payment above a certain amount without human sign-off.

It may not be allowed to override a sanctions check.

It may be allowed to escalate exceptions to a manager.

It may be required to preserve a full audit trail for every action.

That ladder of permission is the Authority Graph in action.

Without it, the model’s intelligence becomes dangerous. With it, the same intelligence becomes enterprise-ready.
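The finance agent's ladder can be written as an explicit decision function. This is a minimal sketch, assuming a single hypothetical auto-approval limit and boolean results from purchase-order matching and an external sanctions check. The names `FinanceAgent`, `Invoice`, and `AUTO_APPROVE_LIMIT` are illustrative.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN_SIGNOFF = "needs_human_signoff"
    ESCALATE_TO_MANAGER = "escalate_to_manager"
    BLOCK = "block"


AUTO_APPROVE_LIMIT = 1_000   # hypothetical "small threshold"; real values come from finance policy


@dataclass
class Invoice:
    invoice_id: str
    amount: float
    po_matched: bool      # did the invoice match its purchase order and contract?
    sanctions_hit: bool   # result of a sanctions check the agent may not override


@dataclass
class FinanceAgent:
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def decide(self, inv: Invoice) -> Decision:
        if inv.sanctions_hit:
            decision = Decision.BLOCK                  # never overridable by the agent
        elif not inv.po_matched:
            decision = Decision.ESCALATE_TO_MANAGER    # mismatches are exceptions
        elif inv.amount < AUTO_APPROVE_LIMIT:
            decision = Decision.AUTO_APPROVE           # small, clean invoices only
        else:
            decision = Decision.NEEDS_HUMAN_SIGNOFF    # larger payments need a person
        self.audit_log.append((inv.invoice_id, decision.value))   # every step is recorded
        return decision
```

The order of the checks carries the policy. The sanctions check comes first, so no amount or match result can ever route around it.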

Another example: the hospital assistant

Now take healthcare.

An AI system may help identify patients at risk of deterioration. That is useful. But the key question is not whether the model predicts well in isolation. The key question is where it sits in the chain of authority.

Is it allowed only to score risk?

Can it recommend a care pathway?

Can it schedule follow-up tests?

Can it change a medication order?

Can it only alert a clinician?

Can it take action after hours?

Who is accountable if it is wrong?

This is where many AI debates remain too shallow. They focus on model performance but ignore delegated authority. Yet delegated authority is exactly what determines whether AI remains assistive or becomes institutional. WEF’s recent work on AI as cognitive infrastructure argues that governance must now protect human agency, transparency, and judgment as AI increasingly influences real decisions. (World Economic Forum)
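The hospital questions can be answered explicitly rather than left implicit. The sketch below is a hypothetical role definition, not clinical guidance. It lists which actions the assistant may take, restricts one action to staffed hours, names an accountable owner for each action, and degrades anything outside the graph to a clinician alert. The action names, hours, and owners are all assumptions.

```python
from datetime import time

# Hypothetical role definition for a deterioration-risk assistant.
ALLOWED_ACTIONS = {"score_risk", "recommend_pathway", "alert_clinician"}
BUSINESS_HOURS_ONLY = {"recommend_pathway"}   # restricted when fewer clinicians are on duty
ACCOUNTABLE_OWNER = {
    "score_risk": "clinical-informatics-team",
    "recommend_pathway": "attending-physician",
    "alert_clinician": "charge-nurse",
}
DAY_START, DAY_END = time(8, 0), time(18, 0)


def resolve(requested: str, now: time) -> tuple[str, str]:
    """Return (action actually permitted, accountable owner) for a requested action."""
    after_hours = not (DAY_START <= now < DAY_END)
    if requested not in ALLOWED_ACTIONS or (after_hours and requested in BUSINESS_HOURS_ONLY):
        requested = "alert_clinician"   # anything outside the graph degrades to a human alert
    return requested, ACCOUNTABLE_OWNER[requested]


print(resolve("change_medication_order", time(10, 30)))  # ('alert_clinician', 'charge-nurse')
```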

Why this matters for the Representation Economy

My broader thesis is that the next economy will not be defined only by intelligence. It will be defined by representation.

To act well, AI first needs reality to be represented well.

That is why I use the framework SENSE–CORE–DRIVER.

SENSE

Signal, ENtity, State, Evolution.

This is the layer where reality becomes legible.

CORE

Comprehend, Optimize, Realize, Evolve.

This is the reasoning layer.

DRIVER

Delegation, Representation, Identity, Verification, Execution, Recourse.

This is the legitimacy layer.

Most of the AI market still overinvests in CORE. It keeps asking how to make models smarter. But institutions win only when SENSE is strong enough to represent reality accurately and DRIVER is strong enough to turn intelligence into legitimate action.

The Authority Graph belongs primarily to the DRIVER layer. It is the missing map that tells an institution how permission flows from principals to systems to action. Without that map, even a brilliant model is just an intelligent trespasser.
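One way to picture the split is that CORE produces a proposal and DRIVER decides whether that proposal may become an action. The sketch below is a hypothetical gate, not a prescribed DRIVER implementation. It checks identity and delegation before executing and keeps a record that later recourse could use. `Proposal` and `DriverGate` are illustrative names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Proposal:
    """What the CORE layer wants to do; it carries no authority by itself."""
    agent_id: str
    principal: str   # Representation: on whose behalf the agent claims to act
    action: str


class DriverGate:
    """Hypothetical DRIVER-layer gate that sits between a proposal and its execution."""

    def __init__(self, registered_agents: set[str], delegations: set[tuple[str, str, str]]):
        self.registered_agents = registered_agents   # Identity: agents the institution knows
        self.delegations = delegations               # Delegation: (principal, agent, action)
        self.executed: list[Proposal] = []           # kept so Recourse can find and unwind actions

    def submit(self, p: Proposal, execute: Callable[[], None]) -> bool:
        if p.agent_id not in self.registered_agents:              # Verification of identity
            return False
        if (p.principal, p.agent_id, p.action) not in self.delegations:
            return False                                           # no delegated authority
        execute()                                                  # Execution, only now
        self.executed.append(p)
        return True
```

However capable the model behind a proposal is, the `execute` callback only runs once the gate has found a matching delegation.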

Why this idea will matter more in 2026 and beyond

Three shifts make the Authority Graph urgent.

The rise of agents

Enterprises are rapidly experimenting with AI agents that act more like operators than assistants, but governance has not kept pace. HBR has pushed organizations to treat agents like team members with defined roles and escalation rules, while Fortune has pointed to the large gap between adoption and management strategy. (Harvard Business Review)

The move from model risk to system risk

The real governance challenge is no longer only the model’s output. It is the wider system: autonomy, authority, tool access, multi-agent interaction, and environmental exposure. That is now explicit in modern agentic-AI risk guidance. (CLTC)

The rise of identity-bound governance

AI agents are increasingly being treated as non-human identities that require onboarding, authorization, monitoring, and decommissioning. That is a major signal of where enterprise architecture is heading. (Okta)

Put simply, the AI economy is moving from “What can the model do?” to “What is this agent allowed to do here, now, and on whose behalf?”
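Treating agents as non-human identities implies a lifecycle with rules about which transitions are allowed. The sketch below models onboarding, authorization, suspension after monitoring, and decommissioning as a small state machine. The stage names and transition policy are hypothetical and do not reproduce any vendor's identity API.

```python
from enum import Enum, auto


class Stage(Enum):
    ONBOARDED = auto()
    AUTHORIZED = auto()
    SUSPENDED = auto()        # e.g. after monitoring flags anomalous behavior
    DECOMMISSIONED = auto()


# Allowed lifecycle transitions for a non-human identity (a hypothetical policy).
TRANSITIONS = {
    Stage.ONBOARDED: {Stage.AUTHORIZED, Stage.DECOMMISSIONED},
    Stage.AUTHORIZED: {Stage.SUSPENDED, Stage.DECOMMISSIONED},
    Stage.SUSPENDED: {Stage.AUTHORIZED, Stage.DECOMMISSIONED},
    Stage.DECOMMISSIONED: set(),   # terminal: a retired agent cannot quietly come back
}


class AgentIdentity:
    def __init__(self, agent_id: str, owner: str) -> None:
        self.agent_id = agent_id
        self.owner = owner                      # the accountable human or team
        self.stage = Stage.ONBOARDED
        self.history = [Stage.ONBOARDED]        # trail of every lifecycle change

    def move_to(self, new_stage: Stage) -> None:
        if new_stage not in TRANSITIONS[self.stage]:
            raise ValueError(f"{self.agent_id}: {self.stage.name} -> {new_stage.name} is not allowed")
        self.stage = new_stage
        self.history.append(new_stage)

    def can_act(self) -> bool:
        return self.stage is Stage.AUTHORIZED   # only an authorized identity may take actions
```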

The companies that will emerge next

If this thesis is right, a new category map begins to appear.

Authority Graph platforms will manage permission maps for AI agents across enterprise workflows.

Delegation registries will act as systems of record for which agents exist, who created them, which principals they represent, and what they are allowed to access.
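At its simplest, a delegation registry is a keyed record per agent that can answer two questions: who can touch a system, and who is acting for a principal. The sketch below assumes an in-memory store and hypothetical field names, agents, and dates.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RegistryEntry:
    agent_id: str
    created_by: str               # who created the agent
    principals: frozenset[str]    # which principals it represents
    access: frozenset[str]        # which systems it may access
    registered_on: date


class DelegationRegistry:
    """Hypothetical system of record for agents and the authority delegated to them."""

    def __init__(self) -> None:
        self._entries: dict[str, RegistryEntry] = {}

    def register(self, entry: RegistryEntry) -> None:
        if entry.agent_id in self._entries:
            raise ValueError(f"{entry.agent_id} is already registered")
        self._entries[entry.agent_id] = entry

    def who_can_access(self, system: str) -> list[str]:
        return sorted(e.agent_id for e in self._entries.values() if system in e.access)

    def acting_for(self, principal: str) -> list[str]:
        return sorted(e.agent_id for e in self._entries.values() if principal in e.principals)
```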

Recourse orchestration platforms will manage appeals, reversals, overrides, incident recovery, and decision unwinding when AI actions cause harm or disagreement.
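Decision unwinding is easier to engineer when every action registers how to reverse it at the moment it runs. The sketch below applies compensating actions, the idea behind the saga pattern, through a hypothetical `RecourseLedger` that undoes the newest actions first. The pricing example and SKU are illustrative.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RecordedAction:
    description: str
    undo: Callable[[], None]        # compensating action registered at execution time


@dataclass
class RecourseLedger:
    """Hypothetical ledger: every executed action must register how to reverse it."""
    actions: list[RecordedAction] = field(default_factory=list)

    def record(self, description: str, undo: Callable[[], None]) -> None:
        self.actions.append(RecordedAction(description, undo))

    def unwind(self, count: int) -> list[str]:
        """Reverse the last `count` actions, newest first, and return what was undone."""
        undone = []
        for _ in range(min(count, len(self.actions))):
            action = self.actions.pop()
            action.undo()
            undone.append(action.description)
        return undone


prices = {"sku-123": 100.0}
ledger = RecourseLedger()
old_price = prices["sku-123"]
prices["sku-123"] = 80.0   # the pricing agent applies a discount...
ledger.record("discount sku-123", lambda: prices.__setitem__("sku-123", old_price))  # ...and says how to undo it
print(ledger.unwind(1), prices)   # ['discount sku-123'] {'sku-123': 100.0}
```

Reversal runs newest-first because later actions may depend on earlier ones. Not every real-world action has a clean inverse; a sent email, for example, cannot be recalled. Those actions are the ones that need stricter review before they run.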

Machine-permission infrastructure providers will translate policy, regulation, and business rules into enforceable runtime permissions for agents.
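Translating business rules into runtime permissions is essentially policy-as-code. The sketch below compiles rules written as plain data into checks keyed by agent and action. The rule schema, operators, and pricing limits are hypothetical. Production systems would more likely use a dedicated policy engine.

```python
import operator

# Business rules written as data by a policy owner (hypothetical schema and values).
RULES = [
    {"agent": "pricing-agent", "action": "change_price", "key": "discount_pct", "op": "<=", "value": 15},
    {"agent": "pricing-agent", "action": "change_price", "key": "region", "op": "in", "value": ["EU", "US"]},
]

OPS = {"<=": operator.le, "<": operator.lt, ">=": operator.ge, "==": operator.eq,
       "in": lambda a, b: a in b}


def _make_check(key, op, value):
    fn = OPS[op]
    # The request must carry the field, and the field must satisfy the rule.
    return lambda request: key in request and fn(request[key], value)


def compile_rules(rules):
    """Turn declarative rules into runtime checks grouped by (agent, action)."""
    compiled = {}
    for r in rules:
        compiled.setdefault((r["agent"], r["action"]), []).append(
            _make_check(r["key"], r["op"], r["value"]))
    return compiled


def permitted(compiled, agent, action, request) -> bool:
    checks = compiled.get((agent, action), [])
    return bool(checks) and all(check(request) for check in checks)   # no rules, no permission
```

Because the rules are data, the people who own the policy can read and review them without reading the enforcement code.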

Authority analytics firms will help boards, regulators, and enterprises visualize concentrations of delegated machine power, unresolved exceptions, and unauthorized action paths.
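Unauthorized action paths often come from chains, not single grants. An agent with no direct access to payments may still reach them through another agent it is allowed to call. The sketch below uses breadth-first search over hypothetical "can invoke" edges. It flags targets an agent can reach only indirectly and counts reach per agent as a crude measure of concentrated power.

```python
from collections import deque

# Hypothetical "can invoke" edges between agents and systems.
EDGES = {
    "support-bot": ["refund-agent"],
    "refund-agent": ["payments-api"],
    "report-bot": ["crm-read"],
}
DIRECT_APPROVALS = {"support-bot": {"refund-agent"}, "refund-agent": {"payments-api"},
                    "report-bot": {"crm-read"}}


def reachable(start: str, edges: dict[str, list[str]]) -> set[str]:
    """Breadth-first search: everything `start` can reach through chains of invocation."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def indirect_paths(agent: str, edges, approvals) -> set[str]:
    """Targets the agent can reach but was never directly approved to use."""
    return reachable(agent, edges) - approvals.get(agent, set())


def concentration(edges) -> dict[str, int]:
    """Number of reachable targets per agent, a crude measure of concentrated machine power."""
    return {agent: len(reachable(agent, edges)) for agent in edges}


print(indirect_paths("support-bot", EDGES, DIRECT_APPROVALS))   # {'payments-api'}
```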

These will not be side markets. They will become core institutional infrastructure.

How existing companies can survive and win

Existing companies do not need to invent frontier models to win in this future. But they will need to redesign how authority is represented.

A bank will need to know which AI systems may only recommend and which may execute.

A manufacturer will need to know which plant agents can stop a line and which can only alert.

A retailer will need to know which pricing agents can change offers and under what guardrails.

A hospital will need to know which systems can triage, schedule, prescribe, or only escalate.

A government department will need to know which public-sector agents can answer questions and which can issue binding decisions.

The winners will be the institutions that turn these rules into living architecture.

That means doing five things well.

First, treat every serious AI agent as an identity-bearing actor.

Second, define permission in business language, not just technical language.

Third, apply least privilege by default.

Fourth, make high-risk actions reviewable, stoppable, and reversible.

Fifth, create visible audit trails that connect delegated authority to real-world consequences. These principles line up closely with both zero-trust logic and current agentic-AI governance recommendations. (NIST Publications)
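Several of these principles can be enforced in one place, outside the model and outside the prompt. The sketch below is a hypothetical decorator that applies least privilege through an explicit grant list named in business terms. It also requires a named reviewer for high-risk actions, supports instant revocation, and writes an audit record for every attempt. Reversibility would need a compensating-action mechanism on top. `governed`, `GRANTS`, and the action names are illustrative.

```python
import functools
from datetime import datetime, timezone
from typing import Optional

# Least privilege: an agent holds only the (agent, action) pairs listed here, in business terms.
GRANTS = {("invoice-agent", "read_invoice"), ("invoice-agent", "release_payment")}
HIGH_RISK = {"release_payment"}   # actions that always need a named human reviewer
REVOKED: set[str] = set()         # adding an agent here stops it immediately
AUDIT: list[dict] = []            # links every attempt to an agent, a principal, and an outcome


def governed(action: str):
    """Hypothetical decorator that enforces the grants before the wrapped function runs."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(agent_id: str, principal: str, *args, approved_by: Optional[str] = None, **kwargs):
            allowed = (agent_id not in REVOKED
                       and (agent_id, action) in GRANTS
                       and (action not in HIGH_RISK or approved_by is not None))
            AUDIT.append({"at": datetime.now(timezone.utc).isoformat(), "agent": agent_id,
                          "principal": principal, "action": action,
                          "approved_by": approved_by, "allowed": allowed})
            if not allowed:
                raise PermissionError(f"{agent_id} may not {action} for {principal}")
            return fn(*args, **kwargs)
        return inner
    return wrap


@governed("read_invoice")
def read_invoice(invoice_id: str) -> str:
    return f"contents of {invoice_id}"


@governed("release_payment")
def release_payment(invoice_id: str) -> str:
    return f"payment released for {invoice_id}"
```

Denied attempts are logged too, so the audit trail shows where an agent pressed against the edge of its authority.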

Why this is becoming a board-level issue

Boards will eventually realize that AI risk is no longer just about hallucination, bias, or accuracy. It is about unmapped authority.

An organization may think it has ten AI systems. In reality, it may have hundreds of agents, scripts, copilots, automations, and embedded AI services touching decisions across finance, operations, HR, procurement, and customer service.

The biggest risk is not always malicious AI. It is invisible delegated power.

That is why the Authority Graph matters. It helps leaders see where machine authority exists, where it is too concentrated, where recourse is weak, and where permission pathways are quietly expanding.
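Two simple comparisons already expose much of this invisible power. The first is a diff of permission snapshots taken at different times. The second is a check for agents that appear in system logs but not in the registry. The sketch below assumes both inputs are available as sets. The agent names are hypothetical.

```python
from collections import Counter

Permission = tuple[str, str]   # (agent, action)


def permission_drift(before: set[Permission], after: set[Permission]) -> dict[str, set[Permission]]:
    """Compare two snapshots of machine authority taken at different times."""
    return {"granted": after - before, "revoked": before - after}


def growth_by_agent(before: set[Permission], after: set[Permission]) -> Counter:
    """Net new permissions per agent, a simple signal of quietly expanding pathways."""
    return Counter(agent for agent, _ in after - before)


def shadow_agents(registered: set[str], observed_in_logs: set[str]) -> set[str]:
    """Agents seen acting in logs but missing from the registry: invisible delegated power."""
    return observed_in_logs - registered
```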

In the old software era, architecture determined scalability.

In the AI era, architecture will determine legitimacy.

Conclusion

The AI economy will not be governed by intelligence alone because intelligence alone does not tell a system what it has the right to do.

Permission is the missing layer between cognition and institution.

That is why the Authority Graph matters. It is not just a security tool. It is not just a governance dashboard. It is the emerging operating map of legitimate machine action.

The next AI winners will not merely build smarter systems. They will build systems that know their authority, stay within it, prove it, and yield when they reach its edge.

That is how institutions survive.

That is how trust scales.

And that is how the Representation Economy becomes real.

Glossary

Authority Graph

A living map of who or what is allowed to act, on whose behalf, under what limits, with what verification, and with what recourse if something goes wrong.

AI agent

A software-based AI system that can do more than answer questions. It can take actions, use tools, interact with systems, and operate across workflows with varying degrees of autonomy.

Delegated authority

The right granted by a human or institution to a system to make or execute certain decisions under defined conditions.

Least privilege

A governance principle under which an actor receives only the minimum access and action rights needed to perform its role.

Machine identity

A formal identity assigned to an AI agent or software service so its actions can be authenticated, authorized, monitored, and audited.

Recourse

The mechanism through which a decision or action can be challenged, reversed, corrected, or appealed.

Zero trust

A security and access-control approach in which no actor is trusted by default and access is continuously constrained by policy, identity, and context.

Representation Economy

An emerging economic logic in which competitive advantage depends on how well reality is made visible, legible, governable, and actionable for machine systems.

SENSE–CORE–DRIVER

A framework for understanding AI systems and institutions. SENSE makes reality legible, CORE reasons over it, and DRIVER governs legitimate action.

FAQ

What is an Authority Graph in AI?

An Authority Graph is a structured map of permission that defines who or what can act, on whose behalf, in which systems, under what rules, and with what recourse if something goes wrong.

Why is the Authority Graph important for enterprise AI?

Because enterprise AI is moving from answering questions to taking action. Once AI starts acting inside workflows, permission, identity, escalation, and reversibility become as important as model intelligence. (Harvard Business Review)

How is an Authority Graph different from an access-control list?

An access-control list usually defines static access rights. An Authority Graph is broader. It includes actor identity, delegated authority, conditions of action, escalation rules, auditability, and recourse.
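A small example shows the difference. An ACL can say that an HR agent may write records. The Authority Graph also asks whether the person the agent is acting for could do so, and whether that person delegated the right. This closes the classic "confused deputy" gap. The ACL entries and names below are hypothetical.

```python
# A classic access-control list: static rights per (principal, resource).
ACL = {
    ("alice", "hr-records"): {"read"},
    ("hr-bot", "hr-records"): {"read", "write"},
}
# Authority Graph edges: (principal, agent, right) that the principal has delegated.
DELEGATIONS = {("alice", "hr-bot", "read")}


def acl_allows(principal: str, resource: str, right: str) -> bool:
    return right in ACL.get((principal, resource), set())


def delegated_allows(agent: str, on_behalf_of: str, resource: str, right: str) -> bool:
    """Agent must hold the right, the principal must hold it, and the principal must have delegated it."""
    return (acl_allows(agent, resource, right)
            and acl_allows(on_behalf_of, resource, right)
            and (on_behalf_of, agent, right) in DELEGATIONS)


print(acl_allows("hr-bot", "hr-records", "write"))                 # True: the ACL alone says yes
print(delegated_allows("hr-bot", "alice", "hr-records", "write"))  # False: Alice cannot write, so her agent cannot either
```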

Why are AI permissions now a board-level issue?

Because organizations may have many more agents and AI-driven actions in production than leaders realize, and unmanaged delegated machine authority can create financial, operational, legal, and reputational risk. (Fortune)

How does this connect to SENSE–CORE–DRIVER?

The Authority Graph sits mainly in DRIVER, the layer that governs delegation, identity, verification, execution, and recourse. It is the bridge between intelligence and legitimate action.

What new businesses will emerge from this shift?

Likely winners include Authority Graph platforms, delegation registries, recourse orchestration systems, machine-permission infrastructure providers, and authority analytics firms.

How should a company start building an Authority Graph?

Start by identifying every serious AI actor, the systems each can access, the actions each can take, the thresholds and approval rules that apply, and the mechanisms for audit, shutdown, and appeal.
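A practical first step is a gap report over that inventory. The sketch below assumes each actor is described by a dictionary of facts and lists which required facts are still absent or empty. The fact names and the two sample actors are hypothetical.

```python
REQUIRED_FACTS = ("systems", "actions", "thresholds", "audit", "shutdown", "appeal")


def inventory_gaps(inventory: dict[str, dict]) -> dict[str, list[str]]:
    """For each AI actor, list the required facts that are absent or empty."""
    gaps = {}
    for actor, facts in inventory.items():
        missing = [k for k in REQUIRED_FACTS if not facts.get(k)]
        if missing:
            gaps[actor] = missing
    return gaps


inventory = {   # hypothetical starting inventory
    "invoice-agent": {"systems": ["erp"], "actions": ["read", "recommend"],
                      "thresholds": {"auto_approve_below": 1_000},
                      "audit": "erp-audit-log", "shutdown": "revoke-token", "appeal": "ap-manager"},
    "hr-copilot": {"systems": ["hris"], "actions": ["draft"]},
}
print(inventory_gaps(inventory))   # {'hr-copilot': ['thresholds', 'audit', 'shutdown', 'appeal']}
```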

References and further reading

Recent enterprise and governance writing increasingly supports the central thesis of this article: that AI governance is shifting from model-centric thinking to identity, authorization, escalation, and system-level controls. Harvard Business Review has argued that AI agents should be treated like team members with roles and authority boundaries. NIST’s zero-trust architecture formalizes least-privilege logic that maps naturally onto agent governance. UC Berkeley CLTC’s agentic-AI profile emphasizes escalation, shutdown, and system-level risk. WEF has framed AI as cognitive infrastructure that requires stronger governance of agency and judgment. Fortune and Okta have highlighted the gap between rapid AI-agent adoption and the need for identity-bound governance. (Harvard Business Review)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack: business categories emerging in the Representation Economy (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Kill Zone: Why Firms Become Invisible in AI (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- Representation Covenants: The New Competitive Advantage in the AI Economy – Raktim Singh

- The Representation Middle Class: Why the Biggest AI Winners Will Help the World Become Machine-Trusted – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture; the Authority Graph belongs primarily to the last, DRIVER.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.