Executive definition: What is a board representation strategy?

A board representation strategy is the discipline through which directors and senior executives decide what their institution must be able to see, model, govern, and delegate before artificial intelligence is allowed to influence, recommend, or execute decisions.

It is not simply an AI policy. It is not a model-selection exercise. It is a board-level framework for determining:

- what parts of reality the institution must represent accurately,

- how those representations should be structured for machine use,

- where governance and oversight must apply,

- and what level of autonomy can be safely delegated to AI systems.

In simple terms, a board representation strategy is how an institution decides what machines must understand before machines are trusted to act.

Definition: Board Representation Strategy

A board representation strategy is the discipline through which directors decide what realities an institution must represent accurately before artificial intelligence is allowed to influence or execute decisions. It determines what must be seen, modeled, governed, and delegated so AI systems operate within legitimate institutional boundaries.

The boardroom question has changed

Artificial intelligence is changing the boardroom question.

For the past two years, many directors and executives have been asking some version of the same thing: Which model should we use? Which platform is safest? Which copilot will improve productivity? Those are valid questions, but they are no longer the deepest ones.

The more important question is this:

What must our institution represent correctly before we allow AI to reason, recommend, or act?

That is the real strategic issue now. As AI adoption rises across the economy, boards are moving from curiosity to accountability. Stanford’s 2025 AI Index reports that 78% of organizations said they used AI in 2024, up from 55% the year before, while private investment in generative AI reached $33.9 billion globally in 2024. (Stanford HAI)

Boards are therefore no longer governing only software budgets. They are increasingly governing how their institutions see reality, interpret it, and act upon it.

That is why every serious enterprise now needs a representation strategy.

A representation strategy is the board-level discipline of deciding what the institution must be able to see, how that reality should be modeled, where that model must be governed, and what can be safely delegated to machines. It is the executive expression of a larger architectural shift: the move from simple automation to the Representation Economy, and from fragmented AI experiments to intelligent institutions designed around SENSE–CORE–DRIVER.

This matters because institutions do not fail only when a model makes a bad prediction. They often fail much earlier, when they are representing the wrong reality, omitting critical context, delegating too much, or governing too little.

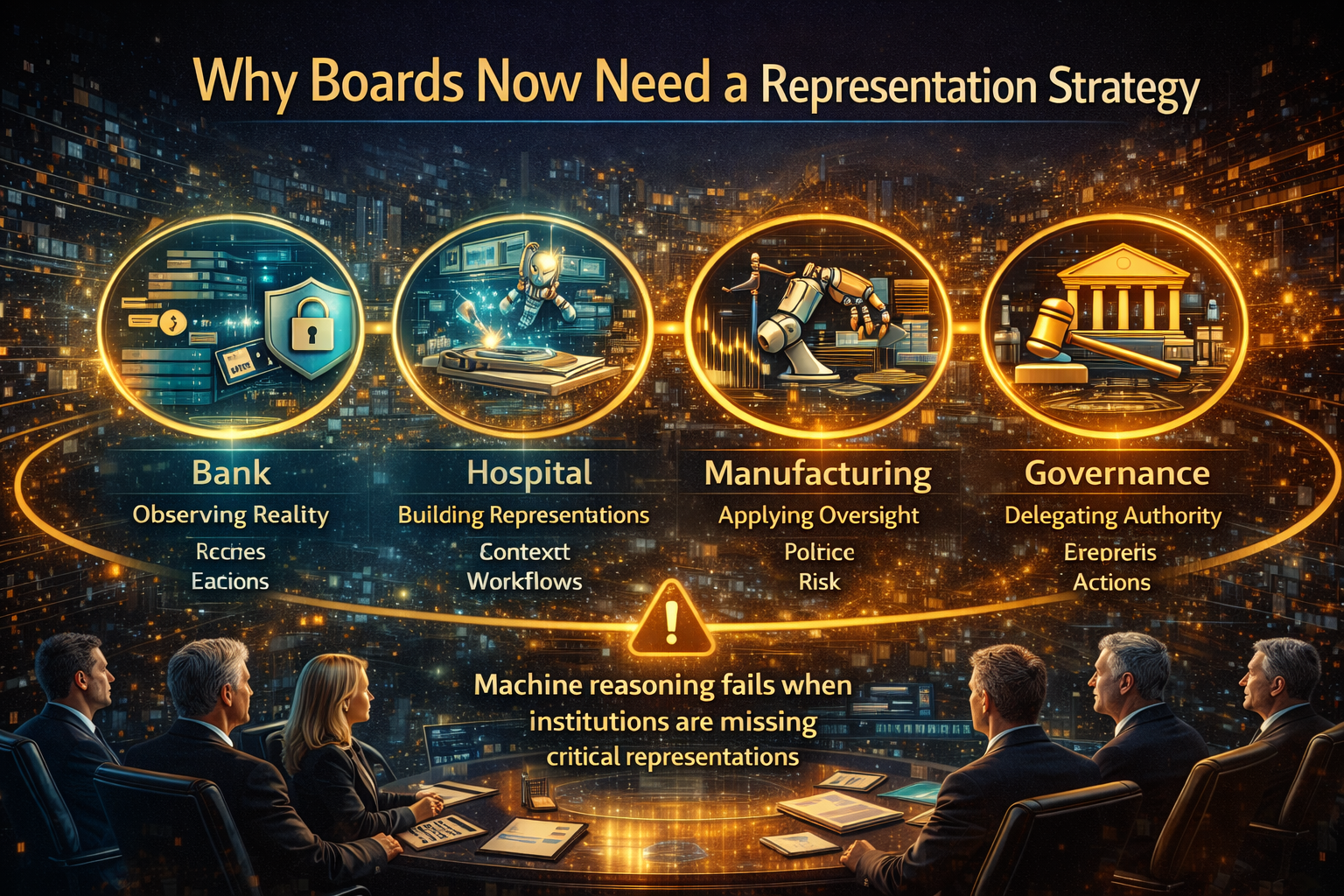

Why boards now need a representation strategy

In the first wave of digital transformation, boards focused on digitization, efficiency, and operating leverage. In the second wave, they began asking about cloud, cybersecurity, data modernization, and platform resilience. In the AI era, the challenge changes again. The board must now govern systems that can influence, recommend, prioritize, personalize, classify, approve, deny, escalate, and sometimes act.

That shift makes representation a governance issue, not just a technical one.

A bank cannot safely deploy AI for underwriting if it lacks a robust representation of customer context, affordability, product suitability, consent boundaries, and recourse pathways. A hospital cannot safely scale AI triage if it represents symptoms but not care continuity, patient history, escalation triggers, or clinician override. A manufacturer cannot rely on autonomous optimization if it models throughput but ignores maintenance condition, supply variance, safety thresholds, or local constraints.

In each case, the problem is the same: the machine can only reason over the reality the institution has chosen to represent.

This is also why current governance frameworks increasingly emphasize accountability, traceability, transparency, and human oversight. NIST’s AI Risk Management Framework organizes AI risk management around GOVERN, MAP, MEASURE, and MANAGE, while the OECD AI Principles call for accountability and traceability across the AI lifecycle. The EU AI Act also imposes transparency and human-oversight obligations for certain AI uses, especially in higher-risk contexts. (NIST Publications)

These are not just compliance themes. They are clues that the board’s real job begins before model deployment.

What a board representation strategy actually means

A representation strategy answers four questions.

- What must be seen? What signals from the real world are critical to decisions? Which events, changes, behaviors, exceptions, and constraints matter enough that the institution must capture them?

- What must be modeled? How should those signals be organized into a machine-usable representation of customers, assets, relationships, risks, workflows, policies, and states?

- What must be governed? Where are the boundaries around authority, interpretation, risk, escalation, verification, auditability, and recourse?

- What can be delegated? Which decisions can be automated, which can be machine-assisted, and which must remain human-led?

This is why boards need to think beyond “AI tools.” The core strategic asset is not the model alone. It is the institution’s ability to create trustworthy, evolving representations of reality that machines can operate within.

That idea connects directly to SENSE–CORE–DRIVER.

SENSE: deciding what the institution must be able to see

Every board should ask:

What does our institution need to sense in order to operate intelligently?

SENSE is where reality becomes machine-legible. It includes signals, entities, state representation, and evolution over time. But from a board perspective, the issue is simpler and more urgent: are we seeing enough of the right reality to make AI safe and useful?

Many organizations assume the answer is yes because they have data warehouses, dashboards, CRM systems, and data lakes. But data abundance is not the same as representational adequacy.

Take a consumer lender. It may have transaction data, demographic data, repayment history, and bureau inputs. But if it cannot represent volatile life events, temporary hardship, channel behavior, dispute history, or unusual account context, then even a technically strong model may be reasoning over an incomplete world.

Take a hospital. It may have electronic health records, lab reports, and appointment logs. But if it cannot represent urgency shifts, care transitions, contraindications, or deviations from expected progression, then AI may optimize around the wrong picture of reality.

For a board, this becomes a strategic design question:

What are the minimum realities our institution must capture to remain intelligent, trustworthy, and defensible?

That is the beginning of representation strategy.

CORE: deciding how the institution should reason

Once the institution has chosen what must be seen and modeled, the next question is:

How should decisions be made inside that represented reality?

This is the CORE layer: comprehend context, optimize decisions, realize action, and evolve through feedback.

Boards often underestimate this layer because many AI discussions still collapse reasoning into “answer generation.” But institutional reasoning is not just producing plausible outputs. It is reasoning under law, policy, cost, role boundaries, customer promises, risk appetite, timing constraints, and operational trade-offs.

A board representation strategy therefore needs to define not only what is modeled, but also what reasoning standards apply.

For example:

- Should the AI optimize for speed, fairness, margin, safety, fraud reduction, or regulatory defensibility?

- Should it escalate uncertainty early or late?

- Should it treat edge cases conservatively or aggressively?

- Should it prefer reversible actions over irreversible ones?

These are not model-tuning details. They are institutional design choices.

One of the biggest errors companies make is to let AI inherit fragmented logic from different business silos. Marketing optimizes one reality. Operations uses another. Compliance relies on a third. Risk sees a fourth. The result is not intelligence. It is institutional incoherence wearing an AI interface.

A serious board-level representation strategy forces alignment:

What is the shared model of reality that the institution wants its machines to reason over?

DRIVER: deciding what can be governed and delegated

The final board question is the hardest:

What can we safely allow machines to do?

This is the DRIVER layer: delegation, representation, identity, verification, execution, and recourse.

Boards should not think about delegation as a yes-or-no question. They should think of it as a ladder.

At the bottom of the ladder, AI assists but does not decide.

In the middle, AI recommends and a human approves.

Higher up, AI acts within bounded policies and known thresholds.

At the top, AI operates autonomously in tightly governed environments with strong logging, reversibility, and oversight.

The board’s role is to decide where each class of decision belongs.

A retailer may allow AI to rebalance promotions within predefined limits. A bank may allow AI to flag suspicious activity but not freeze complex cases without review. A hospital may allow AI to prioritize documentation or route routine cases, but not autonomously determine high-risk treatment decisions. A government agency may use AI to support case triage but still require human review for actions affecting legal rights or benefits eligibility.

What matters is not whether the institution uses AI. What matters is whether delegation matches representational maturity and governance strength.

If the institution has weak representation and weak recourse, delegation should stay narrow. If representation is robust, identity is clear, verification is strong, and recourse exists, more bounded delegation becomes possible.

That is what intelligent institutions do: they let autonomy rise only when legitimacy rises with it.
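For teams that need to turn the ladder into something reviewable, a minimal Python sketch follows. It assumes four yes/no maturity signals taken from the paragraph above (robust representation, clear identity, strong verification, existing recourse). The names `DelegationLevel`, `Maturity`, `max_delegation`, and `allowed` are hypothetical, and the thresholds are illustrative assumptions, not a prescribed standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class DelegationLevel(IntEnum):
    """Rungs of the delegation ladder, lowest autonomy first."""
    ASSIST = 1             # AI assists but does not decide
    RECOMMEND = 2          # AI recommends and a human approves
    BOUNDED_ACTION = 3     # AI acts within bounded policies and thresholds
    GOVERNED_AUTONOMY = 4  # AI acts autonomously under logging and oversight


@dataclass(frozen=True)
class Maturity:
    """Hypothetical yes/no maturity signals for one class of decision."""
    robust_representation: bool
    clear_identity: bool
    strong_verification: bool
    recourse_exists: bool


def max_delegation(m: Maturity) -> DelegationLevel:
    """Return the highest rung this sketch would permit (assumed thresholds)."""
    # Weak representation or missing recourse keeps delegation narrow.
    if not (m.robust_representation and m.recourse_exists):
        return DelegationLevel.ASSIST
    # Acting without a human approver also needs identity and verification.
    if not (m.clear_identity and m.strong_verification):
        return DelegationLevel.RECOMMEND
    # The top rung is never granted automatically here; this sketch
    # assumes it requires an explicit board decision.
    return DelegationLevel.BOUNDED_ACTION


def allowed(requested: DelegationLevel, m: Maturity) -> bool:
    """Check a requested rung against what the maturity signals support."""
    return requested <= max_delegation(m)


# Hypothetical decision class with all four signals in place.
promotion_rebalancing = Maturity(True, True, True, True)
print(max_delegation(promotion_rebalancing).name)  # BOUNDED_ACTION
```

The design choice worth noting is that the function only sets a ceiling. Where each class of decision actually sits below that ceiling remains a board judgment.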

The five realities every board should review

A practical representation strategy should force boards to review five realities.

Customer and stakeholder reality

Does the institution truly represent the customer, citizen, patient, policyholder, or client in a current, contextual, machine-usable way?

Operational reality

Does it represent what is actually happening in workflows, queues, systems, exception paths, service states, and handoffs?

Constraint reality

Does it represent laws, policies, thresholds, approvals, time limits, and non-negotiable guardrails?

Authority reality

Does it represent who can approve, override, delegate, challenge, and unwind actions?

Outcome reality

Does it represent whether decisions worked, caused harm, required reversal, or should change future policy?

If one of these realities is missing, AI can still function. But it will not function intelligently for long.
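One way to make this review repeatable is a simple coverage check per AI use case. The sketch below is illustrative only: the `Reality` enum mirrors the five realities listed above, while `missing_realities` and the support-bot example are hypothetical names and data chosen for the illustration.

```python
from enum import Enum


class Reality(Enum):
    """The five realities a board review should cover."""
    CUSTOMER_AND_STAKEHOLDER = "customer and stakeholder"
    OPERATIONAL = "operational"
    CONSTRAINT = "constraint"
    AUTHORITY = "authority"
    OUTCOME = "outcome"


def missing_realities(represented: set[Reality]) -> list[Reality]:
    """List the realities a use case does not yet represent, in review order."""
    return [reality for reality in Reality if reality not in represented]


# Hypothetical use case: a support bot that sees customer records and
# policy text, but not live workflows, approval rights, or outcomes.
support_bot = {Reality.CUSTOMER_AND_STAKEHOLDER, Reality.CONSTRAINT}
gaps = missing_realities(support_bot)
print([reality.value for reality in gaps])
# ['operational', 'authority', 'outcome']
```

A yes/no flag per reality is a deliberate simplification. In practice each reality would need its own evidence of currency and accuracy.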

What representation failure looks like

Representation failure rarely begins with a dramatic model collapse. More often, it begins quietly.

A support bot gives the wrong answer because it sees policy text but not current exception handling.

A risk engine overreacts because it sees anomaly signals but not recent customer context.

A healthcare assistant recommends an action because it sees symptoms but not continuity of care.

A pricing system reacts to demand signals but not reputational, fairness, or long-term customer consequences.

These are not just bad outputs. They are signs that the institution has modeled reality too thinly.

That is why boards should stop asking only, “How accurate is the model?” and start asking:

What does the model not see, not represent, or not understand about the decision environment?

A board agenda for the AI decade

If boards want to govern AI seriously, representation strategy should become part of annual and quarterly oversight.

They should ask:

- What critical realities does the institution rely on but not yet represent well?

- Which decisions are already being influenced by incomplete machine representations?

- Where is authority being delegated faster than governance is maturing?

- Which high-impact decisions lack strong recourse or reversibility?

- Where do different functions operate with conflicting representations of the same entity or event?

- What must become visible before we scale autonomy further?

These are not technical hygiene questions. They are questions about institutional fitness in the AI era.
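The question about conflicting representations can be made concrete. The following Python sketch assumes each function's view of one entity is available as a flat dictionary of string attributes, which is a strong simplification. The function name `find_conflicts` and the sample data are hypothetical.

```python
from collections import defaultdict


def find_conflicts(views: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return attributes on which business functions disagree.

    `views` maps a function name (for example "risk") to that function's
    record for the same entity. The result maps each conflicting attribute
    to the value held by every function that records it.
    """
    by_attribute: defaultdict[str, dict[str, str]] = defaultdict(dict)
    for function, record in views.items():
        for attribute, value in record.items():
            by_attribute[attribute][function] = value
    # Keep only attributes where more than one distinct value is held.
    return {
        attribute: values
        for attribute, values in by_attribute.items()
        if len(set(values.values())) > 1
    }


# Hypothetical views of one customer held by three functions.
views = {
    "marketing": {"segment": "premium", "status": "active"},
    "risk": {"segment": "premium", "status": "under review"},
    "operations": {"status": "active"},
}
conflicts = find_conflicts(views)
print(sorted(conflicts))  # ['status']
```

A report like this does not resolve the disagreement. It only shows where the institution has no shared model of reality yet.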

Why this is ultimately a strategy question, not a technology question

The deepest implication of representation strategy is that it changes what competitive advantage means.

In the old automation story, advantage came from lowering cost and increasing speed. In the representation story, advantage comes from seeing more clearly, modeling more truthfully, governing more intelligently, and delegating more responsibly.

That is a very different kind of edge.

It is harder to copy. It compounds over time. And it makes the institution better not only at automation, but at judgment.

This is why the board’s representation strategy belongs alongside capital allocation, cyber resilience, operating model design, and growth strategy. It helps determine which realities the institution can act upon. And in the AI era, that means it helps determine the institution’s future.

The real board question has changed

The real board question is no longer, “Should we adopt AI?”

It is no longer even, “Which AI vendor should we trust?”

It is this:

What must our institution be able to see, model, govern, and delegate if we want AI to create value without eroding legitimacy?

That is the heart of a board representation strategy.

Institutions that answer it well will not just scale AI more safely. They will build a deeper kind of advantage: the ability to turn machine-legible reality into governed judgment and action.

And that is what intelligent institutions will be built on next.

Key takeaways

- AI governance now begins before model deployment.

- Boards must decide what reality the institution must represent correctly.

- Representation strategy is about what must be seen, modeled, governed, and delegated.

- SENSE–CORE–DRIVER provides a practical architecture for this shift.

- Delegation should rise only when legitimacy, verification, and recourse rise with it.

- The institutions that govern representation well will build a more durable AI advantage.

Conclusion

Boards often assume the hardest part of AI strategy is selecting the right model, vendor, or platform.

It is not.

The harder and more consequential task is deciding what the institution must make visible, how that reality should be modeled, where governance must apply, and which actions can be entrusted to machines without undermining legitimacy.

That is why representation strategy is becoming a board-level imperative.

It is not a technical appendix to AI transformation. It is the discipline that determines whether AI becomes a source of judgment, control, and institutional resilience — or a source of fragility hidden behind impressive interfaces.

The institutions that lead in the next era will not be those that merely deploy more AI. They will be those that represent reality better, reason more coherently, govern more intelligently, and delegate more responsibly.

That is the real strategic frontier.

And it is where the future of intelligent institutions will be decided.

FAQ

What is a board representation strategy?

A board representation strategy is the discipline of deciding what an institution must be able to see, model, govern, and delegate before AI systems are trusted to influence or execute decisions.

Why is representation strategy important for AI governance?

Because AI can only reason over the reality the institution has chosen to represent. If that representation is incomplete, outdated, or poorly governed, even strong models can produce weak or risky decisions.

How is representation strategy different from AI policy?

AI policy usually defines rules, controls, and acceptable use. Representation strategy goes deeper by determining what reality must be captured and modeled in the first place.

What does SENSE mean in this context?

SENSE refers to the institution’s ability to detect signals, identify entities, model state, and track how that state changes over time.

What does CORE mean?

CORE is the reasoning layer where institutions interpret context, optimize choices, determine actionable options, and improve through feedback.

What does DRIVER mean?

DRIVER is the legitimacy and execution layer where delegation, representation, identity, verification, execution, and recourse are governed.

Why should boards care now?

Because AI systems are increasingly influencing consequential decisions, and regulators and governance frameworks are placing more emphasis on accountability, traceability, and human oversight. (NIST Publications)

What kinds of decisions should never be fully delegated?

That depends on the institution, but high-impact decisions involving legal rights, major financial harm, patient safety, or weak recourse usually require tighter human oversight.

What is representation failure?

Representation failure occurs when the institution models reality too thinly or incorrectly, causing AI to reason over an incomplete or distorted decision environment.

Is this only relevant for regulated industries?

No. Regulated sectors will feel the pressure first, but any institution using AI for prioritization, recommendation, approval, denial, routing, pricing, or action will eventually face representation questions.

How does this help boards think differently about AI?

It shifts the board’s focus from “Which AI tool should we use?” to “What must be true about our institutional reality before AI is allowed to act?”

Glossary

Board representation strategy

The board-level discipline of deciding what the institution must see, model, govern, and delegate before AI can be trusted in consequential decisions.

Representation Economy

The shift in which competitive advantage increasingly comes from building machine-readable representations of reality rather than from automation alone.

Machine-legible reality

Reality translated into structured forms that software and AI systems can interpret, update, and act on.

SENSE

The institutional layer that captures signals, identifies entities, models state, and tracks change over time.

CORE

The institutional reasoning layer that interprets context, evaluates trade-offs, and supports decisions under constraints.

DRIVER

The layer that governs authority, identity, verification, execution, and recourse for machine-supported action.

Representation failure

A failure that occurs when an institution models reality too thinly, too late, or too inaccurately for AI to reason safely.

Institutional reasoning

Decision-making that reflects not only patterns in data, but also policy, risk, authority, timing, and operational constraints.

Delegated action

Action carried out by an AI-enabled system within predefined authority limits and oversight boundaries.

Recourse

The ability to review, challenge, reverse, or correct a machine-supported decision.

Traceability

The ability to reconstruct what data, processes, and decisions contributed to an AI system’s output or action.

Human oversight

Mechanisms that allow people to supervise, intervene in, or override AI decisions when necessary.

Representational maturity

The degree to which an institution has accurately modeled the realities that matter for decision-making.

References and further reading

This article sits within a broader global shift in AI adoption and governance. Stanford’s 2025 AI Index documents the rapid rise in enterprise AI use and investment, including 78% organizational AI adoption in 2024 and $33.9 billion in global private investment in generative AI. NIST’s AI Risk Management Framework provides a useful governance lens through its GOVERN, MAP, MEASURE, and MANAGE structure. The OECD AI Principles emphasize accountability and traceability, while the EU AI Act reinforces transparency and human-oversight obligations for certain AI uses. (Stanford HAI)


Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why the AI Decade Will Be Defined by Who Gets Represented—and Who Designs Trusted Delegation

- Representation Infrastructure: Why the AI Economy Will Be Won by Those Who Make the Invisible Legible

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- Identity Infrastructure: The Missing Layer Between Signals and Representation in the AI Economy

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Enterprise AI Operating Model

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

- The Operating Architecture of the AI Economy: Why Intelligence Alone Will Not Transform Markets

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.