Artificial intelligence is often described as a revolution in generation, prediction, and reasoning. We hear about better models, faster chips, larger context windows, smarter agents, and more autonomous workflows. Yet the hardest problem in AI is not simply making machines more intelligent.

It is making reality more representable.

That may sound abstract, but it is one of the most practical questions in the AI economy. AI can only work on what becomes legible to it.

If a system cannot properly represent an elderly person living alone, a polluted river, a stressed dairy animal, a fish pond losing oxygen, an informal worker without formal records, or a rural community outside the digital mainstream, then AI cannot help meaningfully.

It may still produce answers. But those answers will often be shallow, distorted, or operationally dangerous. (World Health Organization)

This is why the hardest problem in AI is not just reasoning. It is representation.

And this problem is much bigger than it appears.

Across the world, vast parts of reality are still poorly represented in digital form: older adults, informal labor, ecosystems, biodiversity, animal health, water systems, and communities that were never built into the data architecture of the digital age.

At the same time, digital public infrastructure, low-cost sensors, satellite imagery, mobile networks, and AI-enabled monitoring are expanding what can be seen, measured, and acted upon. The opportunity is enormous. So is the responsibility. (UNDP)

This is where two frameworks become essential:

C.O.R.E. — the machine cognition loop

D.R.V.R. — the institutional legitimacy layer

These are not optional ideas. They are becoming central to how AI will create value safely and at scale.

What Is “Representing What Cannot Speak” in AI?

Representing what cannot speak in artificial intelligence refers to the creation of trustworthy digital representations of people, ecosystems, animals, environments, and systems that do not naturally produce structured data.

AI systems can only reason over realities that become machine-readable. The expansion of representation infrastructure—through sensors, digital public infrastructure, satellite observation, and edge computing—will therefore shape the next wave of the AI economy.

The next wave of the AI economy may best be described as the Representation Economy — an economy where value emerges from making previously invisible parts of reality legible to machines.

The real bottleneck is not intelligence. It is representation.

Most AI conversations quietly assume that the world is already available as data. That assumption is false.

Take a simple example. Imagine a bank wants to serve a street vendor or a small informal merchant. A conventional model may ask for income history, credit history, tax records, collateral, and repayment patterns. But what if those records do not exist in formal systems?

The problem is not that the model is weak. The problem is that the person is underrepresented in the digital system.

Now take a non-human example. Suppose an AI platform wants to reduce disease in cattle or detect stress in aquaculture ponds.

It cannot do that by merely “thinking harder.” It needs signal pathways: movement, temperature, feeding patterns, water chemistry, disease indicators, imaging, edge sensors, and local context. The first challenge is representation.

Only after representation comes decision quality. FAO has documented the spread of digital automation and sensing across livestock, aquaculture, crops, and forestry, but it also makes clear that adoption remains uneven and context-sensitive. (FAO)
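To make "representation before decision quality" concrete, here is a minimal sketch of a pond snapshot that declines to judge when signals are missing. Every name in it (`PondReading`, `coverage`, `assess`, the three signal fields) is hypothetical, and the oxygen threshold is an illustrative placeholder, not agronomic guidance.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical signal names, loosely based on the signal pathways listed above.
REQUIRED_SIGNALS = ("dissolved_oxygen_mg_l", "water_temp_c", "feeding_rate_kg_day")

# Illustrative threshold only; a real system would take this from domain experts.
LOW_OXYGEN_MG_L = 4.0


@dataclass
class PondReading:
    """One snapshot of a pond. None means the signal was never captured."""
    dissolved_oxygen_mg_l: Optional[float] = None
    water_temp_c: Optional[float] = None
    feeding_rate_kg_day: Optional[float] = None


def coverage(reading: PondReading) -> float:
    """Fraction of the required signals that are actually present."""
    present = sum(getattr(reading, name) is not None for name in REQUIRED_SIGNALS)
    return present / len(REQUIRED_SIGNALS)


def assess(reading: PondReading) -> str:
    """Return a coarse status, or decline when the pond is under-represented."""
    if coverage(reading) < 1.0:
        # No amount of modelling compensates for signals that were never captured.
        return "insufficient representation"
    if reading.dissolved_oxygen_mg_l < LOW_OXYGEN_MG_L:
        return "possible oxygen stress"
    return "no stress detected"
```

The design choice worth noting is that the function returns an explicit "insufficient representation" status instead of guessing from partial data, which mirrors the argument that the first challenge is representation, not reasoning.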

The same logic applies to ecosystems. If a river, forest, air basin, or biodiversity corridor is poorly monitored, AI cannot govern it well. UNEP has highlighted AI’s role in monitoring deforestation, emissions, pollution, and environmental risk, while biodiversity assessments continue to emphasize major data and knowledge gaps. (UNEP – UN Environment Programme)

In other words, AI is only as real as the reality it can represent.

What “cannot speak” really means

The phrase does not only mean silence in the literal sense. It refers to realities that do not naturally generate complete, machine-usable, decision-ready signals.

People who are digitally underrepresented

This includes informal workers, rural households, elderly populations, and individuals outside formal records or strong service systems. WHO projects that by 2030, one in six people globally will be aged 60 or older, with the pace of ageing accelerating in developing regions. That means the representation challenge is not niche. It is structural. (World Health Organization)

Non-human entities

Animals, fisheries, forests, soil, air, and water systems do not issue structured digital claims about their condition. Their needs must be inferred through sensing, models, proxies, and domain expertise.

Systems that are physically dispersed

Many economically important realities are fragmented across geographies: farms, watersheds, supply chains, villages, wetlands, and ecological corridors. Their signals are scattered, noisy, and expensive to collect.

Realities that are visible locally but invisible institutionally

A farmer may know a pond is stressed. A village may know a water source is degrading. A caregiver may know an older adult’s routine has changed. But unless that local knowledge becomes representable in machine-usable form, it remains invisible to larger decision systems.

This is where AI’s next frontier lies.

Why this matters more in the Global South

There is an important geographic nuance here.

In parts of Europe and some advanced economies, digital representation is often discussed through the lens of privacy, surveillance, and automated-decision risk.

Those concerns are valid. Article 22 of the GDPR gives people safeguards against decisions based solely on automated processing when those decisions have legal or similarly significant effects. (EUR-Lex)

But much of the Global South begins from a different historical condition: not overrepresentation, but underrepresentation.

For millions of people, the old problem was not “too much data about me.” It was “no one sees me, records me, serves me, finances me, insures me, or designs systems around my reality.”

That is one reason digital public infrastructure has become so consequential. UNDP frames DPI as a rights-centered and inclusive foundation for digital transformation, while the World Bank highlights its role in inclusion, payments, identity, resilience, and service delivery across dozens of countries. (UNDP)

That changes the moral and economic meaning of representation.

In one context, representation feels like surveillance.

In another, representation feels like recognition.

That distinction matters enormously for the future of AI, especially if one wants to understand where new value will be unlocked first.

C.O.R.E. only works when representation is real

This is why C.O.R.E. is worth restating clearly:

C — Comprehend context

O — Optimize decisions

R — Realize action

E — Evolve through feedback

C.O.R.E. is the machine cognition loop. It describes how AI systems transform signals into contextual understanding, into options, into action, and then into learning.

But here is the hard truth:

C.O.R.E. fails at the very first step if representation is weak.

How can an AI system comprehend context if the context has never been captured properly?

How can it optimize decisions for a fishery, a village clinic, an informal borrower, or an elderly person if the relevant state of the world is missing, noisy, or distorted? How can it realize action responsibly if it is acting on partial representation? How can it evolve through feedback if feedback itself is poorly instrumented?

So the hardest problem in AI is not merely building stronger C.O.R.E. loops.

It is building representation worthy of C.O.R.E.
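The claim that C.O.R.E. fails at the first step when representation is weak can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation of the framework: the function names, the single `hours_since_motion` signal, and the threshold values are all hypothetical.

```python
from typing import Dict, List, Optional

Signals = Dict[str, Optional[float]]


def comprehend(signals: Signals, required: List[str]) -> Optional[Dict[str, float]]:
    """C: build context, or return None if any required signal is missing."""
    context: Dict[str, float] = {}
    for name in required:
        value = signals.get(name)
        if value is None:
            return None
        context[name] = value
    return context


def optimize(context: Dict[str, float], threshold: float) -> str:
    """O: pick an action from the context (hypothetical rule)."""
    return "send_check_in" if context["hours_since_motion"] > threshold else "no_action"


def realize(action: str, log: List[str]) -> None:
    """R: carry out the action; here it is only recorded in a log."""
    log.append(action)


def evolve(threshold: float, was_false_alarm: bool) -> float:
    """E: adjust from feedback by relaxing the threshold after a false alarm."""
    return threshold + 1.0 if was_false_alarm else threshold


def core_step(signals: Signals, threshold: float, log: List[str]) -> str:
    """Run C, O, and R once; halt before deciding if comprehension fails."""
    context = comprehend(signals, ["hours_since_motion"])
    if context is None:
        return "halted: weak representation"
    action = optimize(context, threshold)
    realize(action, log)
    return action
```

In this sketch the loop never reaches Optimize or Realize when the required signal is absent, and Evolve only has something to learn from if feedback (here, the `was_false_alarm` flag) is actually collected.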

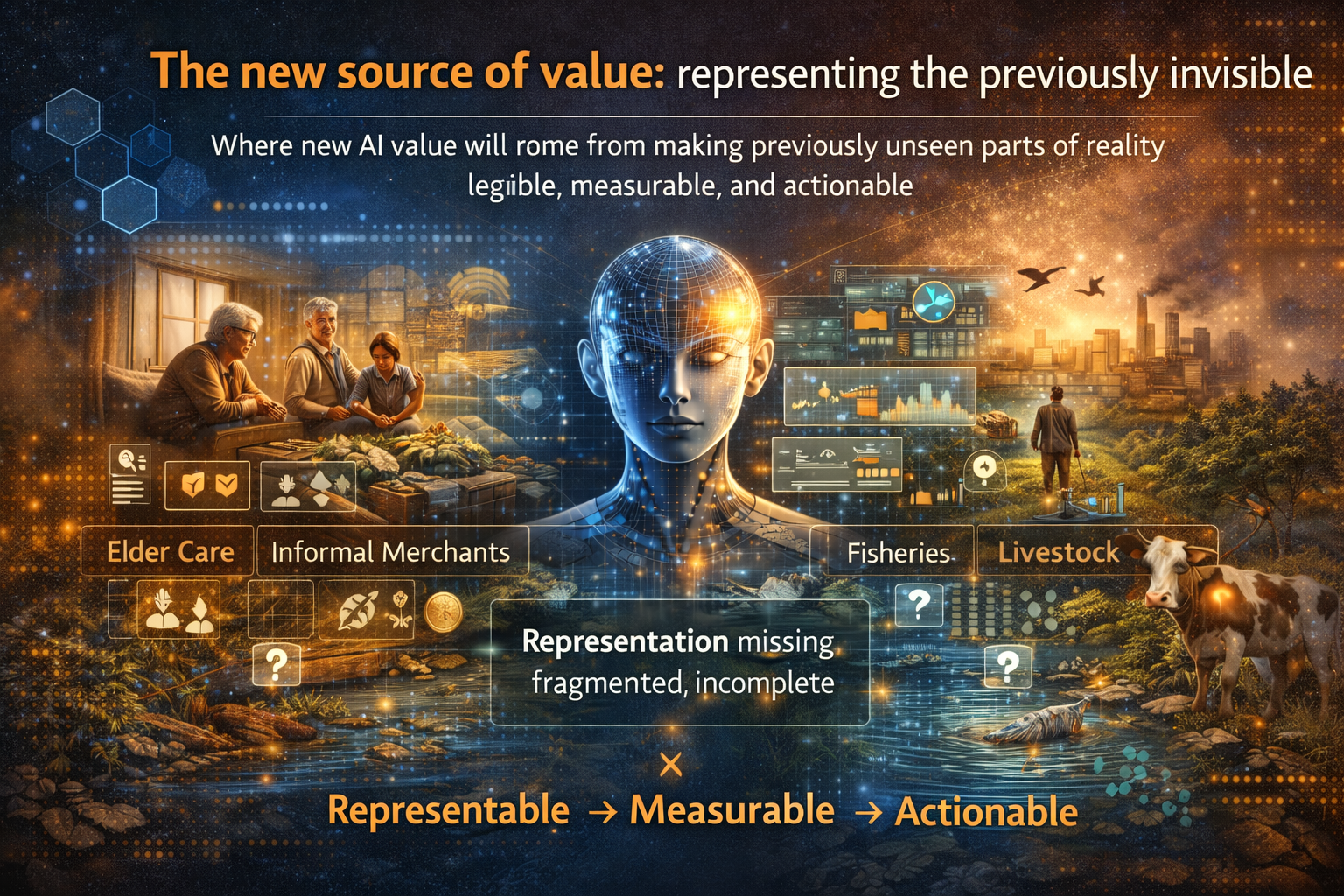

The new source of value: representing the previously invisible

This leads to a powerful economic idea.

The next wave of AI value will not come only from smarter foundation models. It will also come from expanding the representation surface of the world.

FAO documents rising use of digital and automation technologies in crops, livestock, aquaculture, and forestry, and its broader work continues to underscore the scale and importance of smallholder and family farming in the world food system. That means the representation opportunity remains vast. (FAO)

Think about what that means.

A company that helps represent cattle health in real time is not just selling a dashboard. It is creating a new layer of economic visibility.

A company that helps represent air quality in under-monitored cities is not just providing environmental software. It is making public risk legible.

A company that helps represent the needs and behavior patterns of older adults living independently is not just offering elder-care technology. It is making an entire demographic more visible to care systems, insurers, urban planners, and service providers.

This is how new markets are born.

First, a part of reality becomes representable.

Then, it becomes measurable.

Then, it becomes optimizable.

Then, new services, business models, and institutions emerge around it.

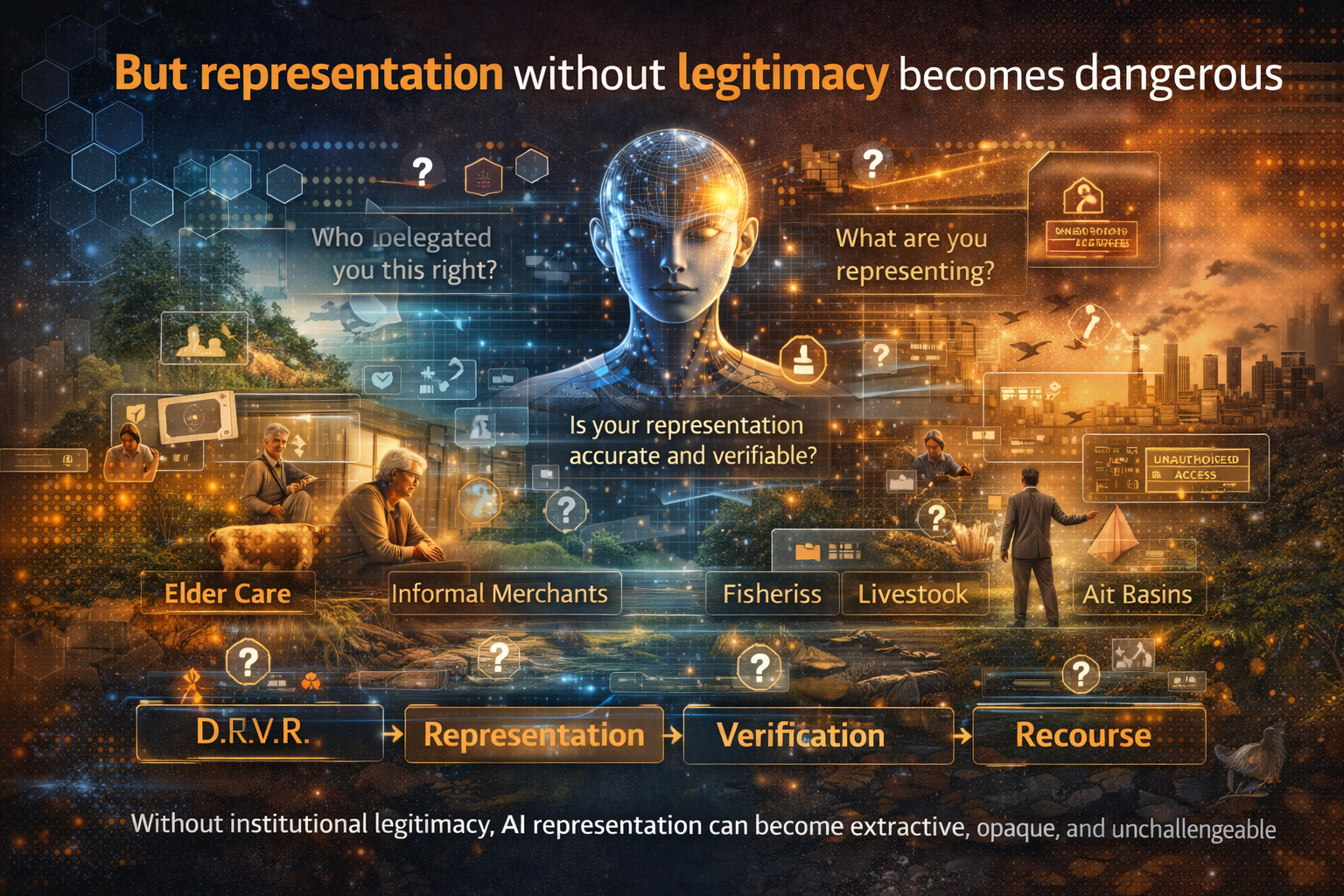

But representation without legitimacy becomes dangerous

This is where D.R.V.R. matters just as much as C.O.R.E.

D — Delegation

R — Representation

V — Verification

R — Recourse

If C.O.R.E. is the cognition loop, D.R.V.R. is the legitimacy layer.

An enterprise cannot simply say, “We now represent forests, rivers, cattle, elderly people, informal communities, or biodiversity risk,” and expect trust to appear automatically.

Society will ask four hard questions.

Delegation — Who gave you the right to represent this entity or population?

Representation — What exactly are you representing? Which signals count, and which do not?

Verification — How do we know your representation is accurate enough to be trusted?

Recourse — What happens when your representation is wrong, harmful, or incomplete?

These questions become especially important when AI systems trigger real actions: credit approvals, insurance pricing, medical alerts, public warnings, subsidy allocation, environmental intervention, compliance decisions, or service prioritization.

Without D.R.V.R., AI representation can become extractive, opaque, and unchallengeable. With D.R.V.R., it becomes institutionally usable.

That is why C.O.R.E. and D.R.V.R. must be applied together.

C.O.R.E. gives AI capability.

D.R.V.R. gives AI legitimacy.
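The four D.R.V.R. questions above can be read as a gate that a representation system must pass before it triggers real actions. The sketch below is one hypothetical way to encode that; `DrvrRecord`, `unanswered`, and `may_trigger_action` are illustrative names, and free-text answers stand in for what would in practice be contracts, audits, and appeal processes.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class DrvrRecord:
    """Answers to the four legitimacy questions; None means unanswered."""
    delegation: Optional[str] = None      # who gave the right to represent
    representation: Optional[str] = None  # which signals count, and which do not
    verification: Optional[str] = None    # how accuracy is checked
    recourse: Optional[str] = None        # what happens when the system is wrong


def unanswered(record: DrvrRecord) -> List[str]:
    """List the questions that are still missing or empty, in D.R.V.R. order."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


def may_trigger_action(record: DrvrRecord) -> bool:
    """Allow real-world actions only when all four questions are answered."""
    return not unanswered(record)
```

For example, a record with only `delegation` and `representation` filled in would report `verification` and `recourse` as unanswered, and `may_trigger_action` would return `False` until both are addressed.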

Simple examples that make this real

Example 1: Elderly care

An older adult living alone may not “speak” digitally in any consistent way. But patterns of motion, medication adherence, sleep disruption, missed calls, room temperature, and emergency history can help represent vulnerability.

C.O.R.E. helps the system comprehend context, optimize alerts, realize support, and evolve with feedback.

D.R.V.R. determines who is allowed to monitor, what data is justified, how false alarms are verified, and what recourse exists if the system gets it wrong. WHO’s ageing projections make clear that this challenge will only grow. (World Health Organization)

Example 2: Livestock and fisheries

Animals cannot file complaints or describe symptoms. Their representation must be inferred from sensors, behavior, imaging, environmental conditions, and expert context. FAO shows that such technologies are becoming more common across agrifood systems. (FAO)

But if a representation system misreads animal health or pond conditions, who checks it, who is accountable, and how is action corrected? That is D.R.V.R. operating alongside C.O.R.E.

Example 3: Air and water systems

A city cannot protect residents from polluted air or degrading water that it does not measure. UNEP and related environmental work show both the promise of AI-enabled monitoring and the scale of current data gaps.

Here again, C.O.R.E. can analyze and act only if representation exists. D.R.V.R. matters because public warnings, industrial accountability, and regulatory response require trusted data and recourse. (UNEP – UN Environment Programme)

This is why the hardest problem in AI is bigger than the model

The future AI economy will not be defined only by who has the largest model.

It will also be defined by who can most credibly represent the parts of reality that were previously invisible.

That may include older adults, smallholders, informal merchants, livestock systems, fisheries, soil and water conditions, air basins, biodiversity networks, and low-trust public-service environments.

This is not a side story in AI. It may become one of the main engines of value creation.

And once representation exists, another frontier opens: decision velocity. But decision velocity comes second. Representation comes first. You cannot optimize what you have not made legible.

The strategic message for enterprises and boards

For boards, CEOs, CIOs, CTOs, and public leaders, this creates a very different AI agenda.

Do not ask only:

“How do we deploy smarter AI?”

Also ask:

“What parts of reality relevant to our customers, communities, assets, supply chains, or ecosystems are still poorly represented?”

Then ask a second question:

“What would it take to build C.O.R.E. on top of that representation and D.R.V.R. around it?”

That is a much more serious way to think about AI strategy.

Because the biggest opportunities may not lie in building one more generic assistant. They may lie in building trusted representation systems for domains the digital economy still sees poorly.

The expansion of machine-readable reality is one of the most important shifts in the AI era. Sensors, satellites, digital infrastructure, and AI systems are turning previously invisible parts of the world into actionable data.

Conclusion: the next economy will be shaped by what becomes visible

The real future of AI is not just text generation, image generation, or autonomous workflow execution.

It is the expansion of machine-readable reality.

But that expansion must not be reckless. It must be designed.

C.O.R.E. matters because AI must comprehend context, optimize decisions, realize action, and evolve through feedback.

D.R.V.R. matters because every serious representation system needs delegation, representation discipline, verification, and recourse.

The hardest problem in AI is not building a system that can talk.

It is building a system that can responsibly represent what cannot.

And the institutions, enterprises, and societies that solve that problem will not merely build better AI. They will reshape what becomes visible, valuable, governable, and investable in the next economy.

That is where the next wave of strategic advantage will come from.

The next AI economy will not be shaped only by the intelligence of machines.

It will be shaped by what parts of reality become visible to them.

Glossary

AI representation

The process of converting a person, entity, system, or environment into machine-usable signals, states, and relationships.

Machine-readable reality

Parts of the world that have become visible to software through sensors, records, digital identity, telemetry, imaging, or structured data.

C.O.R.E.

A machine cognition loop: Comprehend context, Optimize decisions, Realize action, Evolve through feedback.

D.R.V.R.

An institutional legitimacy layer: Delegation, Representation, Verification, Recourse.

Representation surface

The total area of reality that an institution or system can observe, interpret, and act upon.

Decision velocity

The speed and quality with which an organization can move from signal to decision to action once representation exists.

Digital public infrastructure

Foundational digital systems such as identity, payments, and data-sharing rails that enable broad participation and service delivery. (UNDP)

Recourse

The ability to challenge, reverse, correct, or appeal a machine-assisted decision.

FAQ

What does “representing what cannot speak” mean in AI?

It means creating trustworthy digital representations of people, animals, environments, or systems that do not naturally generate complete, structured, decision-ready signals on their own.

Why is this the hardest problem in AI?

Because AI can only reason over what becomes legible to it. If the underlying reality is missing, fragmented, or poorly represented, even advanced models will produce weak or misleading outcomes.

How do C.O.R.E. and D.R.V.R. fit into this?

C.O.R.E. is the machine cognition loop: comprehend, optimize, realize, evolve. D.R.V.R. is the institutional legitimacy layer: delegation, representation, verification, recourse.

Why is this especially important in the Global South?

In many regions, the historical challenge has been underrepresentation rather than overrepresentation. Digital public infrastructure can therefore expand inclusion, identity, payments, and service delivery where formal systems were weak or absent. (UNDP)

Which sectors are most affected?

Elder care, agriculture, livestock, fisheries, environmental monitoring, informal finance, public services, and any domain where important entities remain weakly represented today. (World Health Organization)

Why should boards care about this now?

Because the next wave of AI advantage may come less from generic model access and more from building trusted representation systems around customers, assets, ecosystems, and non-digital realities.

References and further reading

- WHO, Ageing and Health (World Health Organization)

- UNDP, Digital Public Infrastructure framework paper (UNDP)

- World Bank, Creating Digital Public Infrastructure for Empowerment, Inclusion, and Resilience (World Bank)

- FAO, The State of Food and Agriculture 2022 and related digital agriculture materials (FAO)

- UNEP, How artificial intelligence is helping tackle environmental challenges (UNEP – UN Environment Programme)

- IPBES, biodiversity and monitoring materials (IPBES)

- EUR-Lex, GDPR Article 22 on automated decision-making (EUR-Lex)

Enterprise AI Operating Model

Enterprise AI scale requires four interlocking planes:

- The Enterprise AI Operating Model: How organizations design, govern, and scale intelligence safely – Raktim Singh

- The Enterprise AI Control Tower: Why Services-as-Software Is the Only Way to Run Autonomous AI at Scale – Raktim Singh

- The Shortest Path to Scalable Enterprise AI Autonomy Is Decision Clarity – Raktim Singh

- The Enterprise AI Runbook Crisis: Why Model Churn Is Breaking Production AI—and What CIOs Must Fix in the Next 12 Months – Raktim Singh

- Enterprise AI Economics & Cost Governance: Why Every AI Estate Needs an Economic Control Plane – Raktim Singh

- Who Owns Enterprise AI? Roles, Accountability, and Decision Rights in 2026 – Raktim Singh

- The Intelligence Reuse Index: Why Enterprise AI Advantage Has Shifted from Models to Reuse – Raktim Singh

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

The Intelligence-Native Enterprise Doctrine

This article is part of a larger strategic body of work that defines how AI is transforming the structure of markets, institutions, and competitive advantage. To explore the full doctrine, read the following foundational essays:

- The AI Decade Will Reward Synchronization, Not Adoption

Why enterprise AI strategy must shift from tools to operating models.

https://www.raktimsingh.com/the-ai-decade-will-reward-synchronization-not-adoption-why-enterprise-ai-strategy-must-shift-from-tools-to-operating-models/

- The Third-Order AI Economy

The category map boards must use to see the next Uber moment.

https://www.raktimsingh.com/third-order-ai-economy/

- The Intelligence Company

A new theory of the firm in the AI era, where decision quality becomes the scalable asset.

https://www.raktimsingh.com/intelligence-company-new-theory-firm-ai/

- The Judgment Economy

How AI is redefining industry structure, not just productivity.

https://www.raktimsingh.com/judgment-economy-ai-industry-structure/

- Digital Transformation 3.0

The rise of the intelligence-native enterprise.

https://www.raktimsingh.com/digital-transformation-3-0-the-rise-of-the-intelligence-native-enterprise/

- Industry Structure in the AI Era

Why judgment economies will redefine competitive advantage.

https://www.raktimsingh.com/industry-structure-in-the-ai-era-why-judgment-economies-will-redefine-competitive-advantage/

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence, where economics, accountability, control, and decision integrity function as a coherent architecture.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.