Tasks that once required hours of human effort—drafting reports, analyzing documents, classifying information, summarizing insights, or orchestrating workflows—are becoming cheap, fast, and increasingly automated.

That is the visible story.

The less visible story begins after AI acts.

What happens when an automated decision is wrong?

What happens when an AI action is incomplete, unfair, unsafe, or misaligned with reality?

In the first wave of AI adoption, systems primarily recommended.

In the next wave, systems increasingly decide and act.

They approve loans.

They deny access.

They prioritize customers.

They route support tickets.

They set prices.

They trigger operational workflows.

This shift fundamentally changes the architecture of enterprise systems.

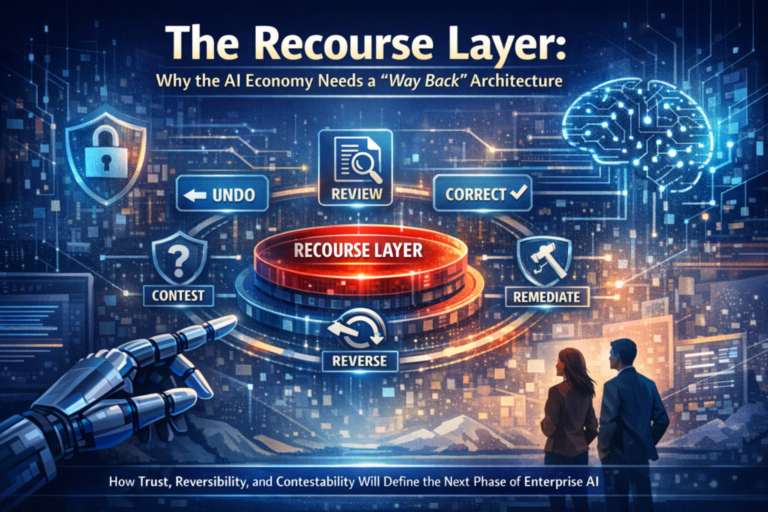

When AI becomes an actor inside real workflows, the most important capability is no longer just intelligence.

It is recourse.

The ability to contest, correct, reverse, and remediate what the system did—quickly, safely, and credibly.

This capability forms what I call the Recourse Layer.

And it may become the most important missing layer in enterprise AI architecture.

The Recourse Layer is the architectural capability that allows organizations to contest, reverse, and remediate AI-driven decisions while maintaining evidence and accountability. In large-scale enterprise systems, this layer becomes essential for maintaining trust when AI systems move from recommendation to autonomous action.

What Is the Recourse Layer?

The “Way Back” Architecture for AI Systems

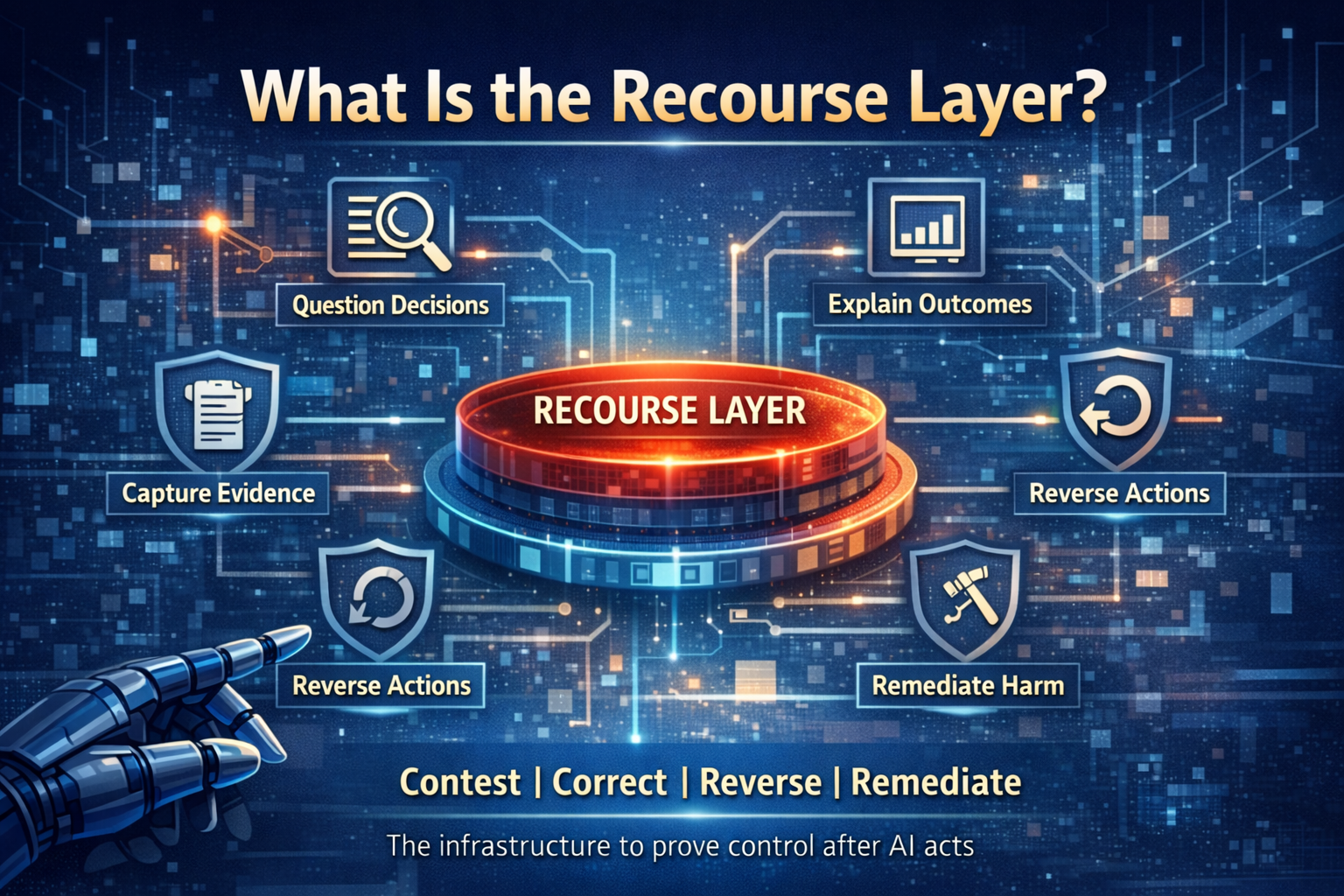

The Recourse Layer is the infrastructure that ensures organizations maintain control after AI acts.

It is the set of technical, product, governance, and workflow mechanisms that make automated systems accountable.

A mature Recourse Layer ensures that:

• Decisions can be questioned

• Outcomes can be explained in operational terms

• Actions can be reversed or halted

• Harm can be remediated

• Evidence can be reconstructed

• Systems can learn from disputes and failures

In simple terms:

Recourse converts “AI acted” into “AI acted—and we can prove control.”

This is not merely a compliance feature.

It is market infrastructure.

In the internet era, identity and payments enabled digital commerce.

In the AI era, recourse enables delegation at scale.

Without recourse, organizations cannot safely allow AI to operate across critical workflows.

Definition: The Recourse Layer in AI

The Recourse Layer is the architectural capability that enables organizations to contest, reverse, and remediate AI-driven decisions while preserving evidence and accountability.

In enterprise AI systems, the Recourse Layer ensures that automated decisions are not final or opaque, but instead remain contestable, traceable, and correctable.

This layer provides the operational mechanisms that allow:

• affected stakeholders to challenge AI outcomes

• organizations to reconstruct decision context

• automated actions to be reversed or halted when necessary

• harms to be remediated through structured workflows

• failures to become learning signals that improve the system

As AI systems move from recommendation engines to autonomous actors, the Recourse Layer becomes essential infrastructure for trustworthy, governable, and scalable AI deployment.

In the emerging AI economy, trust will increasingly depend not only on whether AI systems are accurate, but on whether organizations have built a reliable “way back” architecture when those systems are wrong.

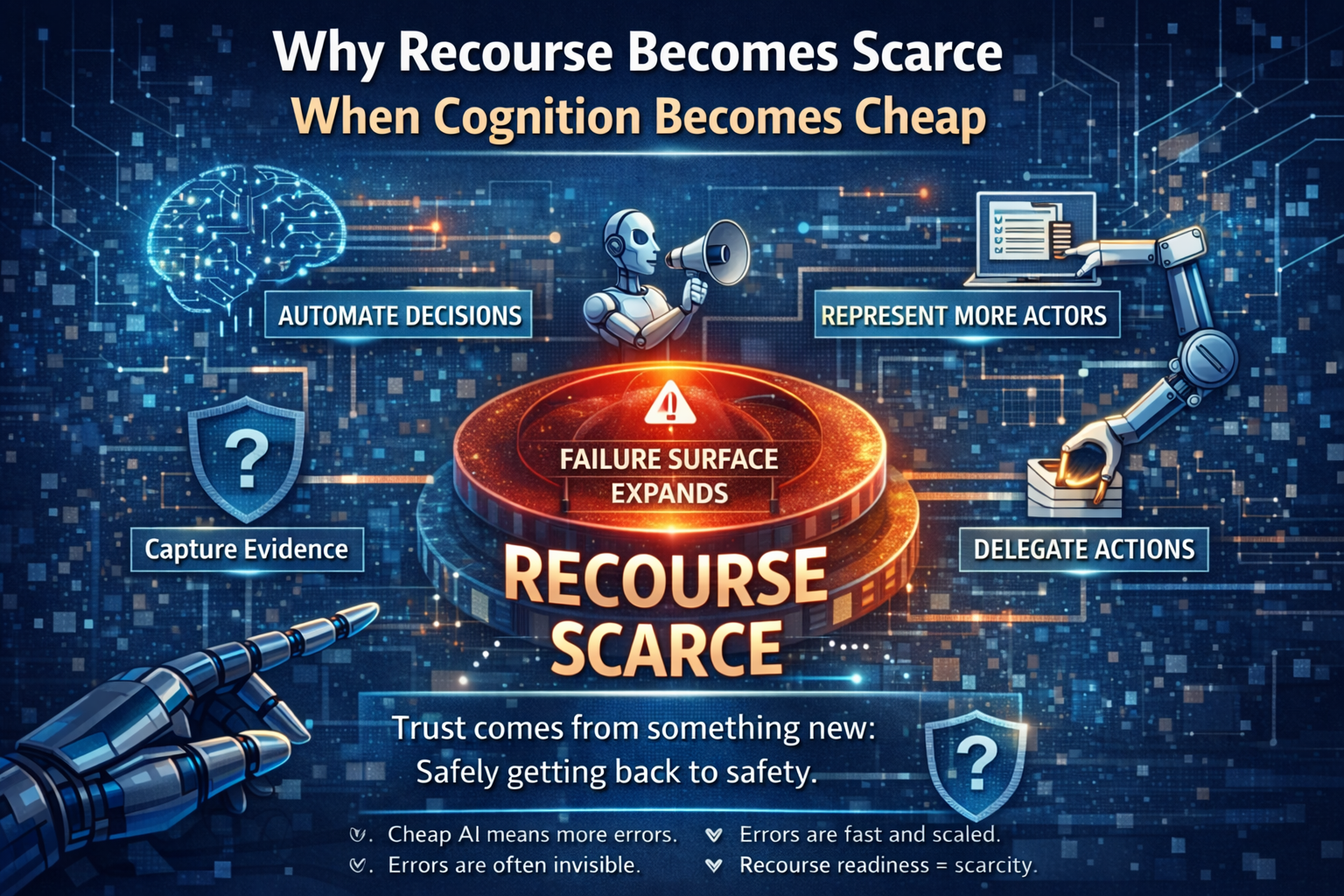

Why Recourse Becomes Scarce When Cognition Becomes Cheap

The economics of AI create a paradox.

As the cost of cognition falls, three structural shifts occur simultaneously.

1. More decisions become automatable

Reasoning is now inexpensive.

Tasks that previously required skilled humans can be delegated to models or agents.

2. More actors become representable

AI can now interpret signals that were historically invisible to digital systems:

- text

- voice

- images

- documents

- sensor data

- workflow patterns

- informal communication

This dramatically expands the scope of digital participation.

This concept connects directly to what I described in The Silent Systems Doctrine, where entire economic ecosystems remain invisible because they lack machine-readable representation.

3. More actions become delegated

Agentic systems can now execute actions through tools:

- updating databases

- triggering payments

- provisioning infrastructure

- interacting with APIs

- orchestrating workflows

This dramatically expands AI’s operational authority.

The Failure Surface Expands

In a manual world:

Errors are slow and local.

In an AI world:

Errors are fast, scalable, and sometimes invisible.

The scarce capability becomes the ability to say:

“If something goes wrong, we can get back to safety quickly—and with accountability.”

That capability is the Recourse Layer.

Simple Examples: Where the “Way Back” Matters

Example 1: The Invisible Denial

An AI system denies a customer access to a service because a risk score crossed a threshold.

The customer asks:

“Why?”

The frontline team cannot answer because:

- the score aggregated multiple signals

- the model version changed last week

- the policy threshold was updated automatically

- the system did not log the relevant decision context

Without recourse, this becomes customer frustration.

At scale, it becomes reputational damage.

A Recourse Layer ensures:

- the decision pathway is reconstructable

- contestation pathways exist

- human override mechanisms are available

- corrections become structured learning events

Example 2: The Runaway Agent

An AI agent is tasked with resolving a customer issue.

It begins looping through tool calls:

fetch → summarize → validate → re-fetch → escalate → retry

Costs escalate.

Operational workflows stall.

The Recourse Layer introduces safeguards:

- loop detection

- rate limits

- kill switches

- graceful degradation mechanisms

This converts an uncontrolled system into a governable system.
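The safeguards above can be sketched as a guard that sits in front of every tool call. This is a simplified illustration under stated assumptions: a per-task call budget stands in for a time-based rate limit, loop detection is just a count of identical calls, and `ToolCallGuard`, `AgentHalted`, and `run_with_guard` are hypothetical names.

```python
from collections import Counter


class AgentHalted(Exception):
    """Raised when a guard stops the agent; callers fall back to a human queue."""


class ToolCallGuard:
    def __init__(self, max_calls=20, max_repeats=3):
        self.max_calls = max_calls      # overall budget per task
        self.max_repeats = max_repeats  # identical calls allowed before assuming a loop
        self.killed = False             # kill switch, flipped by an operator
        self.seen = Counter()
        self.total = 0

    def kill(self):
        self.killed = True

    def check(self, tool, args):
        """Call before every tool invocation; raises AgentHalted instead of proceeding."""
        if self.killed:
            raise AgentHalted("kill switch engaged")
        self.total += 1
        if self.total > self.max_calls:
            raise AgentHalted("call budget exhausted")
        signature = (tool, repr(sorted(args.items())))
        self.seen[signature] += 1
        if self.seen[signature] > self.max_repeats:
            raise AgentHalted(f"loop detected on {tool}")


def run_with_guard(planned_calls, guard):
    """Run calls until done or halted; degrade gracefully by returning a handoff."""
    completed = []
    try:
        for tool, args in planned_calls:
            guard.check(tool, args)
            completed.append(tool)  # a real agent would invoke the tool here
    except AgentHalted as reason:
        return {"status": "handed_to_human", "reason": str(reason), "completed": completed}
    return {"status": "done", "reason": None, "completed": completed}


looping = [("fetch", {"id": 1}), ("summarize", {"id": 1})] * 5
print(run_with_guard(looping, ToolCallGuard(max_repeats=2)))
```

The design choice worth noting is that a halt returns a structured handoff rather than an unhandled error, so the work completed so far is preserved for the human who picks it up.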

Example 3: The Misrepresentation Problem

An AI system represents a supplier’s reliability based on incomplete signals.

The result?

The supplier is deprioritized in the supply chain.

But the supplier cannot:

- see the evidence

- contest the decision

- correct the representation

This breaks legitimacy.

A Recourse Layer enables:

- evidence disclosure at appropriate abstraction levels

- contestation mechanisms

- time-bound re-evaluation pathways

This is where the Representation Economy becomes operational.

Representation without recourse becomes algorithmic authority without legitimacy.

The Global Governance Signal

Around the world, AI governance frameworks are converging on a consistent principle:

AI systems must be controllable in the wild.

For example:

The EU AI Act requires mechanisms for human oversight in high-risk AI systems (Article 14).

The NIST AI Risk Management Framework emphasizes governance structures for managing AI risks across system lifecycles, organized around four functions: Govern, Map, Measure, and Manage.

These frameworks implicitly reinforce the same architectural truth:

Trustworthy AI is not only about accuracy.

It is about control after deployment.

The C.O.R.E. Connection

In my framework for the Third-Order AI Economy, enterprises create advantage by operationalizing C.O.R.E.:

C — Comprehend context

O — Optimize decisions

R — Regulate and realize actions

E — Evolve through evidence

The Recourse Layer is what makes the E (Evolve) possible.

Without recourse:

- failures become anecdotes

- complaints become support tickets

- insights disappear into fragmented systems

With recourse:

- disputes become structured signals

- corrections become institutional learning

- reversals become engineered processes

Recourse converts error into evolution.

The Five Pillars of a “Way Back” Architecture

1. Contestability by Design

Anyone affected by an AI decision must be able to challenge it.

Contestability requires:

- dispute capture systems

- decision reconstruction mechanisms

- structured review processes

- traceable outcome explanations

Contestability transforms opaque AI systems into legitimate decision infrastructures.
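As a rough sketch of dispute capture and structured review, the record below links a challenge to the original decision, sets a review deadline, and refuses to close without a rationale. The field names, the five-day review window, and the two-outcome model are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class DisputeStatus(Enum):
    OPEN = "open"
    UPHELD = "upheld"          # original decision stands
    OVERTURNED = "overturned"  # original decision is reversed


@dataclass
class Dispute:
    dispute_id: str
    decision_id: str           # links back to the stored decision record
    raised_by: str
    claim: str
    opened_at: datetime
    review_due: datetime
    status: DisputeStatus = DisputeStatus.OPEN
    reviewer: Optional[str] = None
    rationale: Optional[str] = None


def open_dispute(dispute_id, decision_id, raised_by, claim, now,
                 review_window=timedelta(days=5)):
    """Capture a challenge and give it a time-bound review deadline."""
    return Dispute(dispute_id, decision_id, raised_by, claim, now, now + review_window)


def resolve(dispute, reviewer, overturn, rationale):
    """Close a dispute; a written rationale keeps the outcome traceable."""
    if dispute.status is not DisputeStatus.OPEN:
        raise ValueError("dispute already resolved")
    if not rationale.strip():
        raise ValueError("a rationale is required")
    dispute.status = DisputeStatus.OVERTURNED if overturn else DisputeStatus.UPHELD
    dispute.reviewer = reviewer
    dispute.rationale = rationale
    return dispute


now = datetime(2025, 1, 6, tzinfo=timezone.utc)
dispute = open_dispute("dsp-1", "d-1", "supplier-42", "Delivery data is incomplete", now)
resolve(dispute, "analyst-7", overturn=True, rationale="Missing Q4 deliveries confirmed")
print(dispute.status.value, dispute.review_due.date())
```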

2. Traceability and Evidence

You cannot reverse what you cannot reconstruct.

Recourse-ready systems log:

- model versions

- policy thresholds

- decision inputs

- tool calls

- uncertainty signals

- escalation events

This aligns with the concept of an enterprise intelligence ledger—the system of record for delegated decision-making.
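One way to approximate a ledger-grade record is an append-only log in which each entry's hash covers the previous entry's hash, so later edits become detectable. The sketch below is an in-memory illustration only; the `DecisionLedger` class and the payload fields are assumptions, and a production ledger would also need durable storage and access control.

```python
import hashlib
import json


class DecisionLedger:
    """Append-only log where each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash, payload):
        # Canonical JSON so the same payload always hashes the same way.
        body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, payload):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {"prev": prev_hash, "payload": payload,
                 "hash": self._digest(prev_hash, payload)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        """True only if every entry matches its hash and links to its predecessor."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


ledger = DecisionLedger()
ledger.append({"decision_id": "d-1", "model_version": "risk-v7",
               "policy_threshold": 0.8, "inputs": {"late_payments": 0.5},
               "tool_calls": ["fetch_profile"], "uncertainty": 0.12,
               "escalated": False})
print(ledger.verify())
```

Note that this scheme detects tampering with recorded entries but not truncation of the most recent ones; anchoring the latest hash somewhere external would be needed for that.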

3. Reversibility by Design

Every AI action should be categorized by reversibility:

- Fully reversible

- Conditionally reversible

- Practically irreversible

This design discipline prevents irrevocable automation mistakes.
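A small sketch of how the three reversibility classes might gate autonomy follows. The action catalogue, the "undo window" notion for conditionally reversible actions, and the `authorize` function are hypothetical; where each real action falls is a judgment the organization has to make.

```python
from enum import Enum


class Reversibility(Enum):
    FULL = "fully reversible"
    CONDITIONAL = "conditionally reversible"
    IRREVERSIBLE = "practically irreversible"


# Hypothetical catalogue: every action an agent can take is classified up front.
ACTION_CATALOGUE = {
    "route_ticket": Reversibility.FULL,
    "set_price": Reversibility.CONDITIONAL,       # reversible until a customer pays
    "trigger_payment": Reversibility.IRREVERSIBLE,
}


def authorize(action, human_approved=False, undo_window_open=True):
    """Decide whether an action may run without further intervention."""
    level = ACTION_CATALOGUE.get(action)
    if level is None:
        return False  # unclassified actions are never automated
    if level is Reversibility.FULL:
        return True
    if level is Reversibility.CONDITIONAL:
        return undo_window_open or human_approved
    return human_approved  # practically irreversible: always needs a person


for name in ("route_ticket", "set_price", "trigger_payment", "delete_account"):
    print(name, authorize(name))
```

Treating unclassified actions as blocked by default is the conservative choice here: a new tool gains autonomy only after someone has decided how reversible it is.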

4. Functional Human Oversight

Human oversight must be operational—not symbolic.

Effective oversight includes:

- defined escalation triggers

- clear human decision rights

- transparent audit trails

- time-bound review SLAs
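Escalation triggers and review SLAs only become operational once they are written down as checks. The following sketch assumes three example triggers and a four-hour review SLA; the thresholds and names (`MIN_CONFIDENCE`, `MAX_AUTONOMOUS_AMOUNT`, `REVIEW_SLA`) are placeholders, not recommended values.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical thresholds; real values are a policy decision.
MIN_CONFIDENCE = 0.75
MAX_AUTONOMOUS_AMOUNT = 10_000
REVIEW_SLA = timedelta(hours=4)


def escalation_triggers(decision):
    """Return the name of every trigger this decision trips (empty list = no escalation)."""
    triggers = []
    if decision["confidence"] < MIN_CONFIDENCE:
        triggers.append("low_confidence")
    if decision.get("amount", 0) > MAX_AUTONOMOUS_AMOUNT:
        triggers.append("amount_over_limit")
    if decision.get("disputed_before", False):
        triggers.append("prior_dispute")
    return triggers


def review_status(escalated_at, now, reviewed_at=None):
    """Classify an escalation against the review SLA."""
    finished = reviewed_at or now
    if finished - escalated_at > REVIEW_SLA:
        return "sla_breached"
    return "reviewed_in_time" if reviewed_at else "pending"


escalated = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
print(escalation_triggers({"confidence": 0.6, "amount": 25_000}))
print(review_status(escalated, now=escalated + timedelta(hours=5)))
```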

5. Remediation and Learning Loops

Recourse is incomplete if it resolves only individual cases.

The system must learn from failure.

This requires:

- dispute taxonomy

- root cause analysis

- policy updates

- model retraining where necessary

- institutional review cycles
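To illustrate how individual disputes can feed a learning loop, the sketch below groups resolved disputes by taxonomy category and model version, then flags groups that reviewers overturn often. The dictionary fields and the cut-offs (`min_rate`, `min_cases`) are assumptions for the example.

```python
from collections import defaultdict


def overturn_rates(disputes):
    """Group resolved disputes by (category, model_version) and compute the overturn rate."""
    totals = defaultdict(lambda: [0, 0])  # key -> [overturned, resolved]
    for d in disputes:
        key = (d["category"], d["model_version"])
        totals[key][1] += 1
        if d["overturned"]:
            totals[key][0] += 1
    return {key: overturned / resolved
            for key, (overturned, resolved) in totals.items()}


def review_candidates(disputes, min_rate=0.5, min_cases=3):
    """Flag groups overturned often enough to warrant root cause analysis."""
    counts = defaultdict(int)
    for d in disputes:
        counts[(d["category"], d["model_version"])] += 1
    rates = overturn_rates(disputes)
    return sorted(key for key, rate in rates.items()
                  if rate >= min_rate and counts[key] >= min_cases)


resolved = (
    [{"category": "stale_data", "model_version": "risk-v7", "overturned": True}] * 3
    + [{"category": "stale_data", "model_version": "risk-v7", "overturned": False}]
    + [{"category": "threshold", "model_version": "risk-v6", "overturned": False}] * 4
)
print(review_candidates(resolved))
```

The minimum case count keeps a single overturned dispute from triggering a policy or model review on its own.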

The Strategic Shift: Recourse as Growth Infrastructure

Most organizations treat recourse as a risk mitigation cost.

But in the Third-Order AI Economy, recourse becomes a growth enabler.

That is because, when AI begins representing previously invisible ecosystems (small suppliers, informal labor networks, rural economies, complex physical infrastructure), participation depends on one fundamental question:

“Can I challenge how I’m being represented?”

Recourse becomes the bridge between representation and legitimacy.

And legitimacy is what converts AI capability into market permission.

The Board-Level Questions That Matter

Boards should regularly ask five questions:

- Where are we delegating authority without engineered reversibility?

- Which decisions are contestable in practice, not just in policy?

- What is our time-to-recourse SLA?

- Do we have a ledger-grade record of AI decisions?

- Are disputes improving the system—or disappearing into support queues?
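A time-to-recourse SLA is easier to discuss once it is measured. As a minimal sketch, assuming each resolved dispute carries an opened and a resolved timestamp and that the SLA is 48 hours (both assumptions), the summary could be computed like this:

```python
from datetime import datetime, timedelta
from statistics import median


def time_to_recourse(cases, sla_hours=48.0):
    """Summarize how long resolved disputes took, in hours.

    `cases` is a list of (opened_at, resolved_at) datetime pairs.
    """
    if not cases:
        raise ValueError("no resolved disputes to measure")
    hours = [(resolved - opened).total_seconds() / 3600 for opened, resolved in cases]
    within = sum(1 for h in hours if h <= sla_hours)
    return {
        "median_hours": median(hours),
        "worst_hours": max(hours),
        "share_within_sla": within / len(hours),
    }


opened = datetime(2025, 1, 6, 9, 0)
report = time_to_recourse([
    (opened, opened + timedelta(hours=2)),
    (opened, opened + timedelta(hours=10)),
    (opened, opened + timedelta(hours=60)),
])
print(report)
```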

These questions move organizations from AI experimentation to AI advantage.

The Key Insight

Here is the core insight:

Trust in the AI economy is not built by correctness alone.

Trust is built by the existence of a way back.

Recourse is not a feature.

It is not a policy.

It is a layer.

The Recourse Layer is the infrastructure that allows enterprises to safely scale AI decision-making by ensuring every automated action can be explained, contested, and reversed.

Conclusion: The Next Platform Advantage

The next decade will reward organizations that can do three things better than their competitors:

- Represent more reality

- Delegate more action

- Recover faster when wrong

Cognition is becoming abundant.

That is not the moat.

The moat is institutional capacity.

The ability to run a closed loop where:

- decisions are auditable

- actions are governable

- failures become learning signals

In the Third-Order AI Economy, the winners will not be the organizations that never fail.

They will be the organizations that built the “way back” architecture that makes delegation safe to scale.

Glossary

Recourse Layer

Infrastructure that enables contesting, reversing, and correcting AI-driven decisions.

Contestability

The ability to challenge automated decisions through structured mechanisms.

Delegated Intelligence

Situations where AI systems execute tasks or decisions within operational workflows.

AI Governance

Policies, processes, and technical systems that ensure safe and accountable AI deployment.

Representation Economy

An economic system where value depends on which actors and signals are represented within AI systems.

FAQ

What is the Recourse Layer in AI?

The Recourse Layer is the infrastructure that allows organizations to contest, reverse, and remediate AI decisions while capturing evidence and learning from failures.

Why is recourse important in enterprise AI?

As AI systems begin making operational decisions, organizations need mechanisms to correct errors and preserve trust.

Is recourse only about compliance?

No. Recourse is emerging as core market infrastructure that enables scalable AI delegation.

How can enterprises implement a Recourse Layer?

Enterprises must design systems with:

- contestability

- traceability

- reversibility

- human oversight

- structured remediation loops

References & Further Reading

Alan Turing Institute – Responsible AI

EU AI Act (Contestability & Rights)

The Intelligence-Native Enterprise Doctrine

This article is part of a larger strategic body of work that defines how AI is transforming the structure of markets, institutions, and competitive advantage. To explore the full doctrine, read the following foundational essays:

- The AI Decade Will Reward Synchronization, Not Adoption

Why enterprise AI strategy must shift from tools to operating models.

https://www.raktimsingh.com/the-ai-decade-will-reward-synchronization-not-adoption-why-enterprise-ai-strategy-must-shift-from-tools-to-operating-models/

- The Third-Order AI Economy

The category map boards must use to see the next Uber moment.

https://www.raktimsingh.com/third-order-ai-economy/

- The Intelligence Company

A new theory of the firm in the AI era, where decision quality becomes the scalable asset.

https://www.raktimsingh.com/intelligence-company-new-theory-firm-ai/

- The Judgment Economy

How AI is redefining industry structure, not just productivity.

https://www.raktimsingh.com/judgment-economy-ai-industry-structure/

- Digital Transformation 3.0

The rise of the intelligence-native enterprise.

https://www.raktimsingh.com/digital-transformation-3-0-the-rise-of-the-intelligence-native-enterprise/

- Industry Structure in the AI Era

Why judgment economies will redefine competitive advantage.

https://www.raktimsingh.com/industry-structure-in-the-ai-era-why-judgment-economies-will-redefine-competitive-advantage/

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.