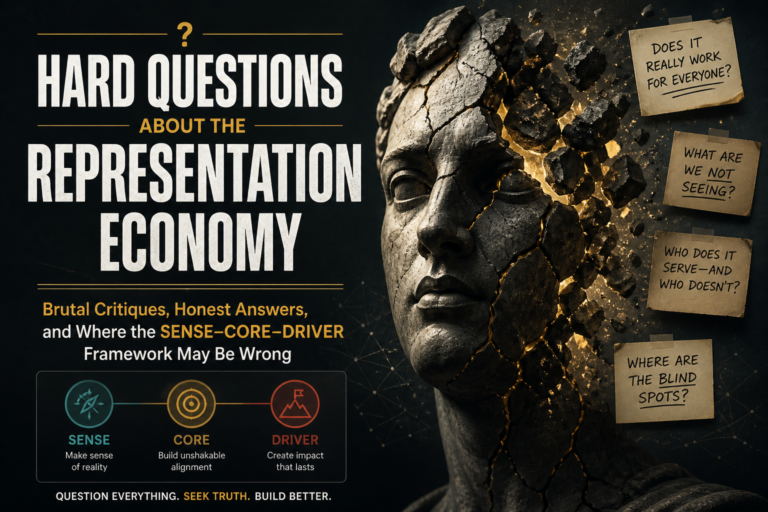

Hard Questions About the Representation Economy

A framework that cannot survive scrutiny does not deserve adoption.

That is especially true in AI.

The field already has too many confident diagrams, too many inflated claims, and too many “new paradigms” that collapse into old ideas with new labels. Enterprises do not need another vocabulary layer unless it helps them see something they were previously missing. CTOs do not need another framework unless it helps them make sharper architecture decisions. Boards do not need another AI narrative unless it improves accountability, risk visibility, and long-term strategic judgment.

That is why the Representation Economy and the SENSE–CORE–DRIVER framework must be pressure-tested, not merely explained.

The Representation Economy thesis argues that the next era of AI will not be defined only by who owns the best models, but by who can represent reality in ways that machines can identify, understand, trust, and act upon.

In this view, institutional advantage shifts from raw data and model access toward trusted representation: the ability to make customers, assets, obligations, risks, processes, events, permissions, and consequences machine-legible and governable.

The SENSE–CORE–DRIVER framework is my attempt to describe the architecture behind that shift.

SENSE is the layer that makes reality machine-readable: signals, entities, states, and evolution over time.

CORE is the reasoning layer: the system that interprets context, evaluates options, generates recommendations, and plans action.

DRIVER is the legitimacy and execution layer: delegation, authority, identity, verification, execution, recourse, and accountability.

But if this framework is to be taken seriously, it must answer hard questions.

Is it genuinely new? Is SENSE just data engineering with a more elegant name? Is DRIVER simply governance, compliance, workflow, and IAM repackaged? Where exactly is the economics in “Representation Economy”? Are the boundaries too broad? Are they crisp enough for implementation? Will future AI models make this whole architecture unnecessary? Is this framework stronger as a concept than as an operational reality?

These are not hostile questions.

They are necessary questions.

A serious framework should not ask for belief. It should invite examination.

This article is therefore not a defense brief. It is a stress test. It accepts that the Representation Economy is still an evolving architecture lens, not frozen doctrine. Some parts are already visible in enterprise AI failures and emerging architectures. Some parts remain underdeveloped. Some terms may need refinement. Some claims may become weaker if future models solve problems that today require explicit representation infrastructure.

That is acceptable.

A living framework must be willing to evolve.

The Representation Economy argues that AI-era advantage will increasingly depend on how well institutions represent reality in machine-readable, trustworthy, and governable forms. This article pressure-tests that thesis by examining whether the SENSE–CORE–DRIVER framework is genuinely new, operationally useful, economically valid, and resilient to future AI progress.

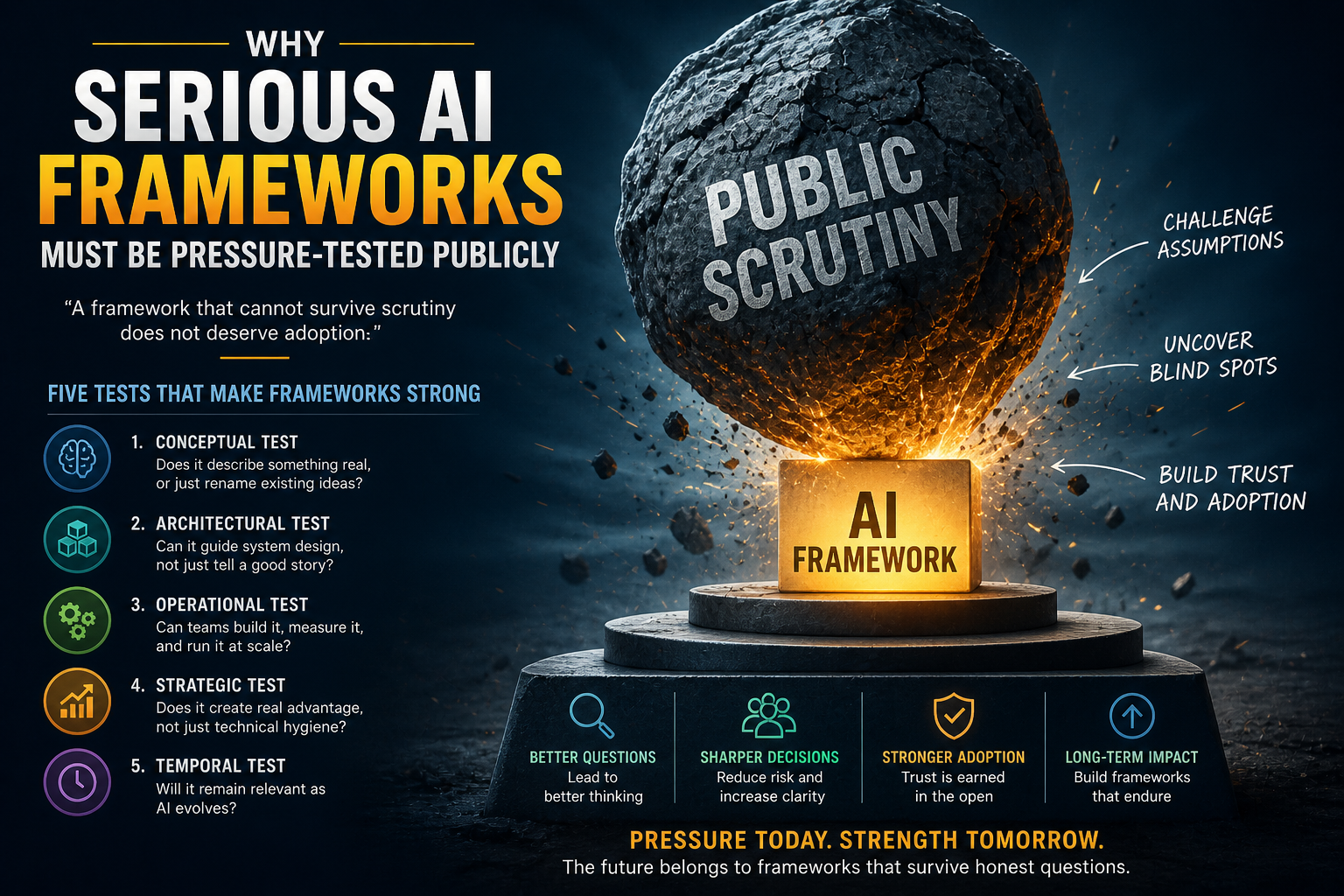

Why Serious AI Frameworks Must Be Pressure-Tested Publicly

Most enterprise frameworks fail quietly.

They sound convincing in strategy sessions. They appear elegant in slides. They help people organize complexity for a few months. Then reality exposes the gap. Implementation becomes messy. Boundaries blur. Tooling does not map cleanly. Teams interpret the same terms differently. The framework survives in language but dies in architecture.

AI makes this danger worse.

Because AI is moving fast, leaders are tempted to adopt frameworks prematurely. They want language to explain complexity. They want a structure for investment. They want a way to separate hype from architecture. That demand creates a market for concepts that sound profound but are not yet operationally disciplined.

A framework earns credibility only when it can answer five kinds of scrutiny.

First, conceptual scrutiny: does it describe something real, or merely rename existing ideas?

Second, architectural scrutiny: can it guide system design, or only storytelling?

Third, operational scrutiny: can teams build around it, measure it, and govern it?

Fourth, strategic scrutiny: does it reveal economic advantage, or only technical hygiene?

Fifth, temporal scrutiny: will it remain relevant as AI models improve?

The Representation Economy must pass all five tests.

It may not pass them perfectly today. But the act of testing matters. Public critique is not a weakness. It is how serious frameworks become stronger.

This is also consistent with the broader direction of AI governance globally. NIST’s AI Risk Management Framework emphasizes managing AI risks to individuals, organizations, and society; OECD’s AI Principles emphasize trustworthy AI; the EU AI Act follows a risk-based approach; and ISO/IEC 42001 provides a structured AI management system standard for organizations. These frameworks show that AI is no longer only a model-performance conversation. It is becoming an institutional design, governance, risk, and accountability conversation. (NIST)

The Representation Economy sits inside that larger shift, but with a specific claim: AI failure and AI advantage will increasingly depend on how institutions represent reality before machines reason and act.

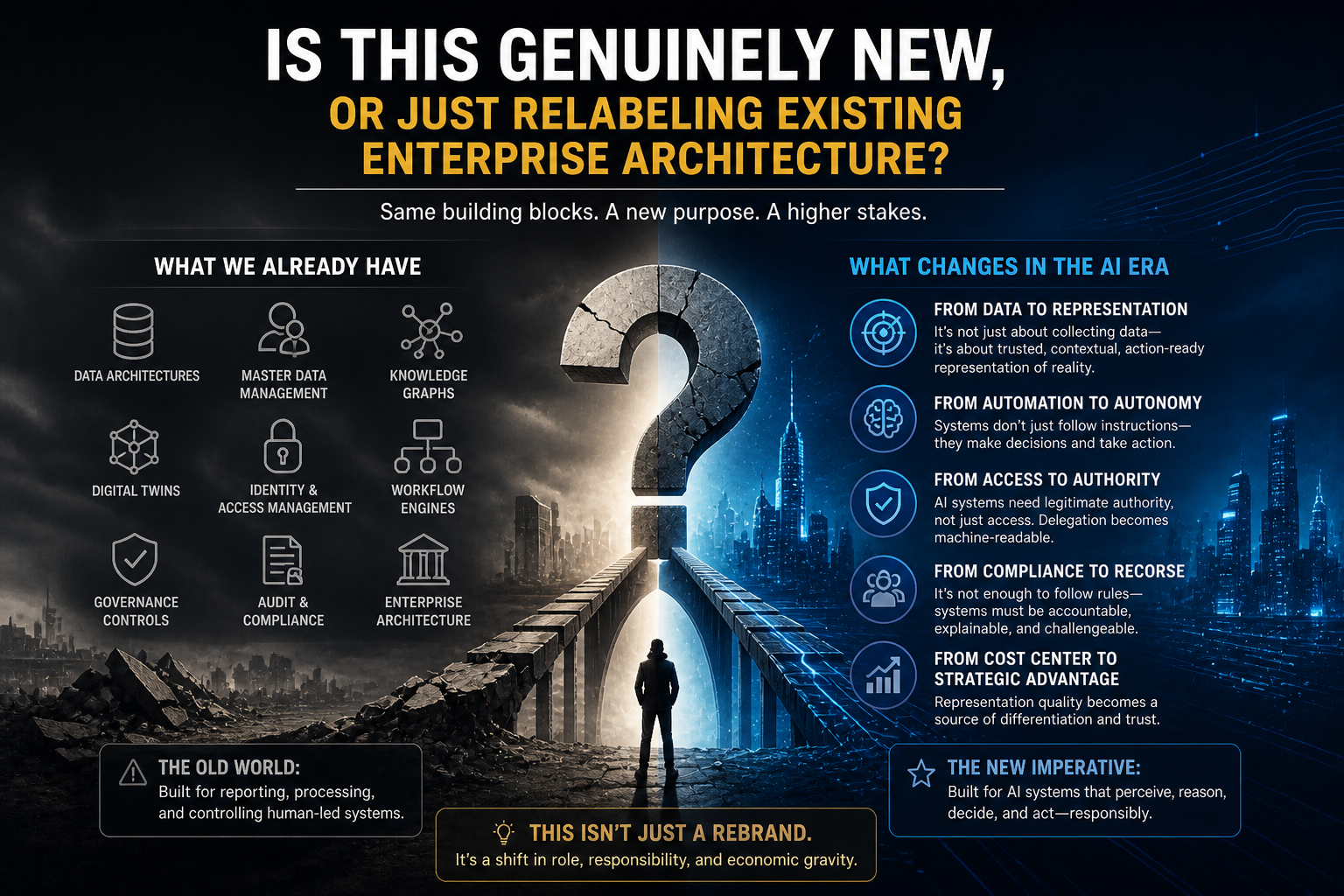

Critique 1: Is This Genuinely New, or Just Relabeling Existing Enterprise Architecture?

This is the first and most important critique.

Enterprises have already built data architectures, master data management systems, digital twins, knowledge graphs, identity systems, workflow engines, governance controls, audit logs, compliance systems, and enterprise architecture disciplines.

So is the Representation Economy simply a new wrapper around existing practices?

The honest answer is: partly yes, but not entirely.

The critique is valid because the framework does not emerge from nowhere. SENSE has clear ancestry in data engineering, MDM, entity resolution, event streaming, digital twins, semantic layers, and knowledge graphs. DRIVER has ancestry in governance, IAM, workflow orchestration, policy engines, compliance controls, auditability, and process management.

Pretending otherwise would be intellectually dishonest.

But the framework is not trying to claim that all its components are new inventions. Its claim is different.

The Representation Economy argues that these historically separate capabilities are becoming economically and architecturally central because AI systems are moving from passive analysis to delegated action.

That shift changes their role.

In the pre-AI enterprise, data engineering helped dashboards report reality. MDM helped systems maintain consistent records. IAM controlled human and software access. Workflow moved tasks through predefined processes. Governance ensured compliance around execution.

In the AI-era enterprise, these capabilities become part of the institutional nervous system that determines what AI can perceive, reason about, and legitimately do.

That is the difference.

A supply chain AI system does not merely need data. It needs a trusted representation of suppliers, inventory, constraints, contracts, delivery promises, alternative routes, risk signals, and escalation rules.

A customer service agent does not merely need access to documents. It needs to know who the customer is, what authority it has, what state the customer relationship is in, what commitments exist, what actions are reversible, and when human intervention is required.

The novelty is not in every component.

The novelty is in the architectural synthesis and economic claim.

The framework says: once AI begins acting, representation becomes a strategic control point.

That is not just enterprise architecture terminology. It is a theory of where advantage, risk, and institutional trust may migrate.

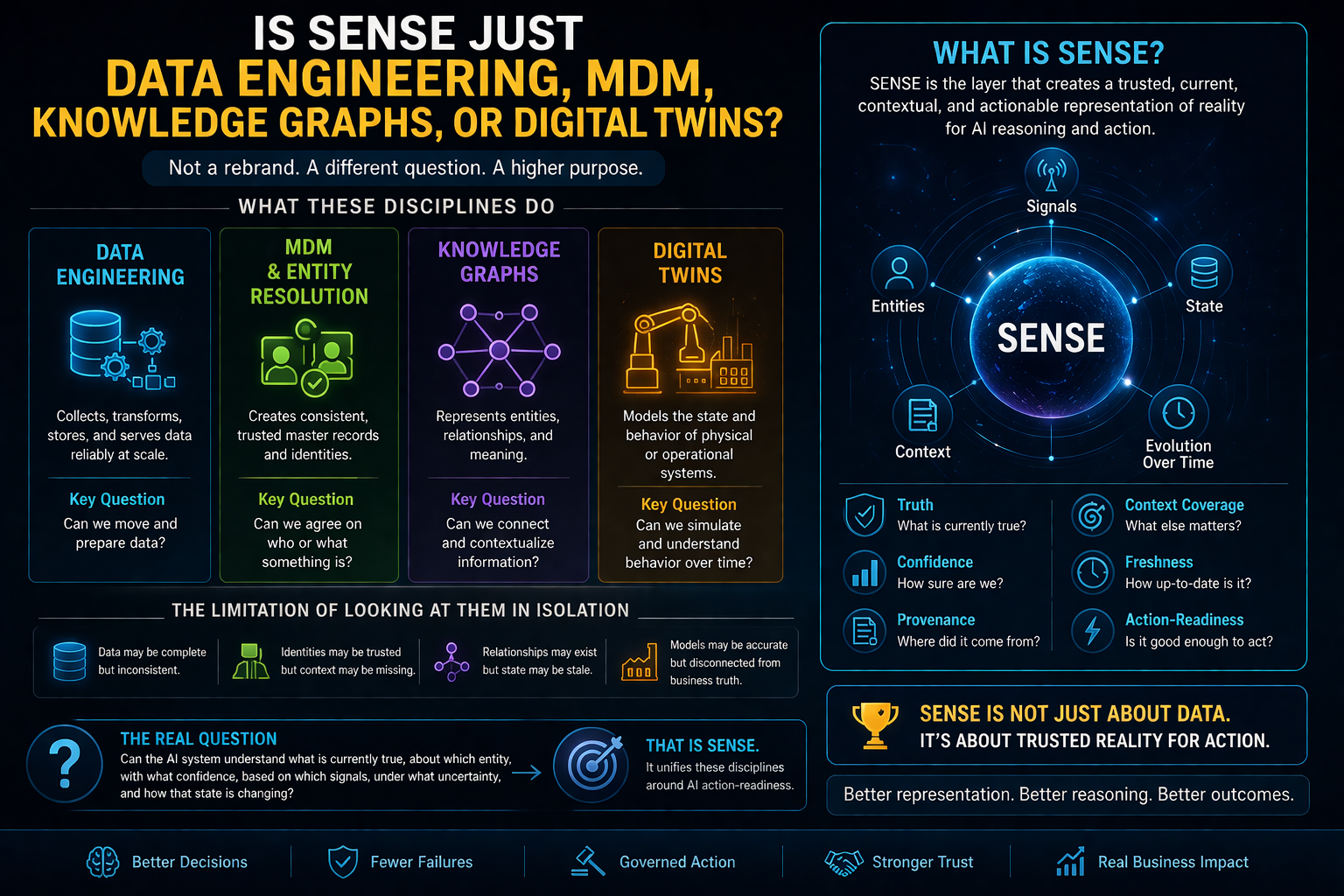

Critique 2: Is SENSE Just Data Engineering, MDM, Knowledge Graphs, or Digital Twins?

This criticism is strong.

SENSE can easily sound like a rebranding of familiar disciplines. Data teams already ingest signals. MDM already resolves entities. Knowledge graphs already represent relationships. Digital twins already model state and behavior. Event-driven architectures already update systems over time.

So why call it SENSE?

The honest answer: SENSE should not be treated as a replacement term for these disciplines. It is an architectural lens that connects them around one question:

Can the institution construct a machine-readable representation of reality that is good enough for AI reasoning or action?

That question is broader than any single discipline.

Data engineering asks: can we collect, transform, store, and serve data?

MDM asks: can we maintain trusted master records?

Knowledge graphs ask: can we represent relationships and meaning?

Digital twins ask: can we model the state and behavior of a physical or operational system?

SENSE asks: can the AI system understand what is currently true, about which entity, with what confidence, based on which signals, under what uncertainty, and how that state is changing?

That is a different architectural question.

Consider a bank handling a disputed transaction.

Data engineering may bring transaction records into a lake. MDM may identify the customer. A knowledge graph may link the customer, account, merchant, device, and transaction. But SENSE asks whether the institution has a sufficiently current and trustworthy representation of the dispute state.

Is this the right customer? Is the transaction authorized? Is the merchant known? Has similar behavior occurred? Are there regulatory timelines? Has a prior complaint changed the context? Is the available evidence strong enough for action?

The AI model’s reasoning quality depends on that representation.

So yes, SENSE overlaps with existing disciplines. But its role is not to rename them. Its role is to unify them around AI action-readiness.

That said, the critique exposes a risk.

If SENSE remains too abstract, practitioners may dismiss it as decorative language. To become operational, SENSE needs measurable constructs: entity confidence, state completeness, freshness, provenance, contradiction detection, context coverage, and action-readiness thresholds.

Without those, SENSE is only a useful metaphor.

With them, it can become an architecture discipline.
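To make those constructs less abstract, here is a minimal Python sketch of an action-readiness check for the disputed-transaction example. Everything in it is an illustrative assumption rather than a reference design: the names `EntityState`, `ReadinessThresholds`, and `readiness_gaps`, the field layout, and the threshold values are hypothetical, and real thresholds would differ by action type and risk.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class EntityState:
    """One entity's state as the SENSE layer currently believes it (hypothetical shape)."""
    entity_id: str
    entity_confidence: float      # 0..1, confidence that this is the right entity
    state_completeness: float     # 0..1, share of required fields that are populated
    observed_at: datetime         # arrival time of the newest supporting signal
    provenance: list[str] = field(default_factory=list)      # contributing source systems
    contradictions: list[str] = field(default_factory=list)  # unresolved conflicts


@dataclass
class ReadinessThresholds:
    """Assumed example values; not taken from any standard."""
    min_entity_confidence: float = 0.95
    min_completeness: float = 0.90
    max_age: timedelta = timedelta(hours=1)


def readiness_gaps(state: EntityState, limits: ReadinessThresholds, now: datetime) -> list[str]:
    """Return the reasons the representation is not ready for action (empty list = ready)."""
    gaps = []
    if state.entity_confidence < limits.min_entity_confidence:
        gaps.append("entity_confidence")
    if state.state_completeness < limits.min_completeness:
        gaps.append("state_completeness")
    if now - state.observed_at > limits.max_age:
        gaps.append("freshness")
    if not state.provenance:
        gaps.append("provenance")
    if state.contradictions:
        gaps.append("contradictions")
    return gaps


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
dispute = EntityState(
    entity_id="dispute-001",
    entity_confidence=0.99,
    state_completeness=0.80,
    observed_at=now - timedelta(hours=3),
    provenance=["core-banking"],
)
print(readiness_gaps(dispute, ReadinessThresholds(), now))
# prints ['state_completeness', 'freshness']
```

The function returns named gaps rather than a single score so that a failed check points to a specific remedy, such as refreshing state or resolving a contradiction, instead of a generic "low quality" verdict.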

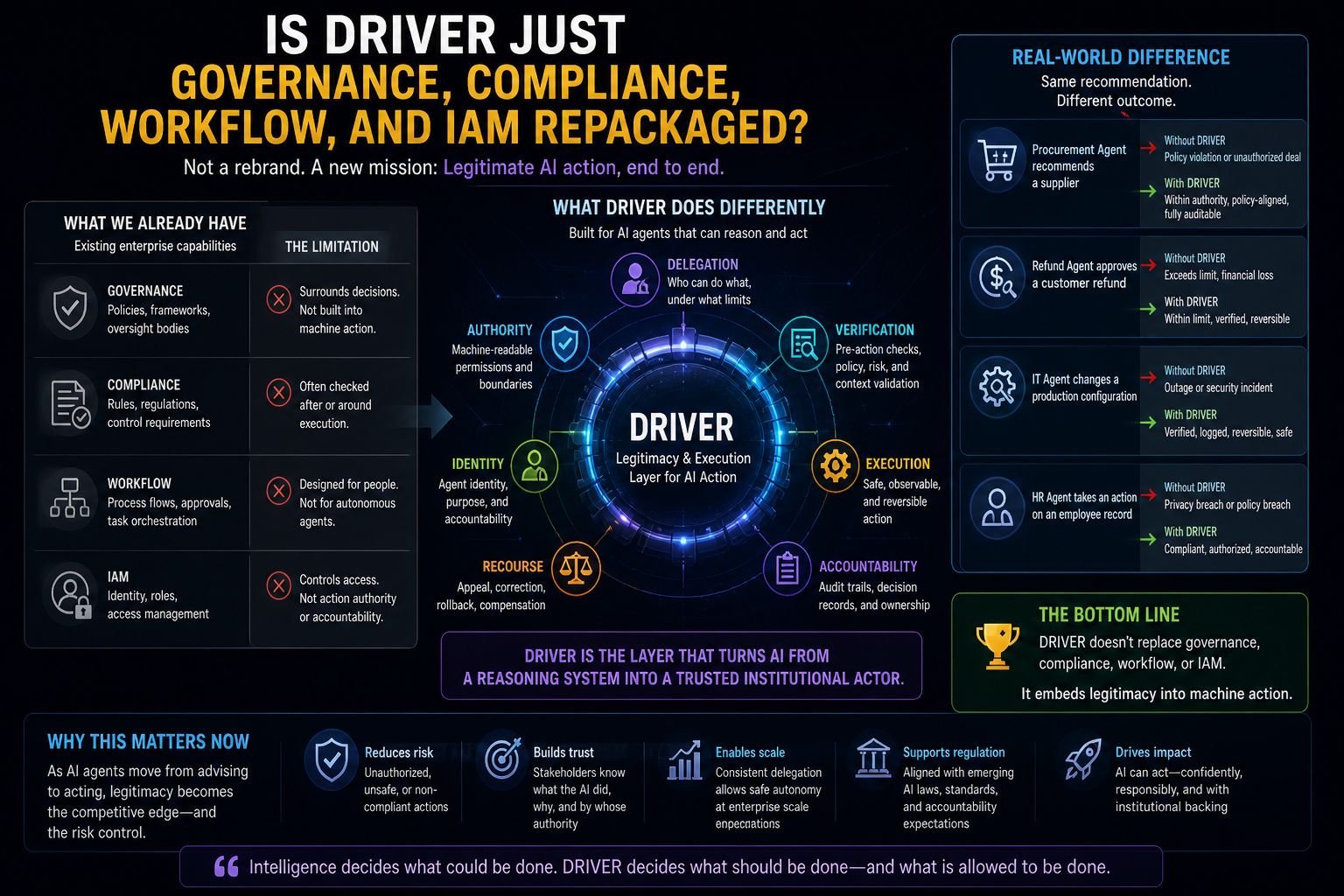

Critique 3: Is DRIVER Just Governance, Compliance, Workflow, and IAM Repackaged?

This criticism is also valid.

DRIVER includes concepts that already exist: authority, permissions, verification, execution, audit trails, escalation, recourse, and accountability. Enterprises already have governance functions. They already manage access. They already define approval flows. They already audit decisions.

So is DRIVER just governance with a futuristic label?

The honest answer is: DRIVER overlaps heavily with governance, but it is not identical to traditional governance.

Traditional governance often surrounds decisions. DRIVER is embedded inside machine action.

That distinction matters.

When a human employee makes a decision, institutional governance can operate through training, roles, policies, approvals, audit, and managerial accountability. When an AI agent acts through enterprise systems, governance must become executable.

Authority must be machine-readable. Delegation must be bounded. Verification must happen before, during, and after action. Recourse must be designed into the system, not improvised after harm occurs.

DRIVER asks a specific question:

What makes an AI action legitimate inside an institution?

That is not the same as asking whether the AI output is accurate.

An AI system may recommend the right refund but lack authority to issue it. It may identify the right supplier but violate procurement rules by initiating engagement. It may correctly flag a suspicious transaction but trigger a customer-facing action without proper explanation or appeal. It may optimize a workforce schedule but ignore constraints that human managers would consider operationally important.

In these cases, the problem is not intelligence.

It is legitimacy.

DRIVER is the layer that turns AI from a reasoning system into an accountable institutional actor.

But here too, the critique reveals a real weakness.

DRIVER is currently less mature than SENSE in most enterprises. Organizations have IAM systems, workflow tools, policy engines, and audit systems, but they rarely have a unified legitimacy architecture for AI agents. DRIVER may therefore sound theoretical because the tooling ecosystem is still emerging.

That does not make the concept invalid. It does mean implementation will be uneven.

DRIVER must become more concrete through patterns such as authority graphs, decision ledgers, recourse workflows, action thresholds, human approval gates, policy-as-code, reversible execution, and agent identity management.

Until then, DRIVER is more directional than fully operational.
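One of those patterns, bounded delegation with a human approval gate, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `Grant`, `Verdict`, and `authorize` are hypothetical names, the amounts are invented, and a production system would more likely express the same rules in a dedicated policy engine.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Grant:
    """A bounded delegation: which agent may take which action, and up to what size."""
    agent_id: str
    action: str
    autonomous_limit: float   # at or below this amount the agent may act alone
    approval_limit: float     # above autonomous_limit, up to here: human approval gate


def authorize(grants: list[Grant], agent_id: str, action: str, amount: float) -> Verdict:
    """Decide legitimacy from explicit grants; the absence of a grant means no authority."""
    for grant in grants:
        if grant.agent_id == agent_id and grant.action == action:
            if amount <= grant.autonomous_limit:
                return Verdict.ALLOW
            if amount <= grant.approval_limit:
                return Verdict.NEEDS_APPROVAL
            return Verdict.DENY
    return Verdict.DENY


# Hypothetical delegation: the support agent may refund small amounts on its own.
grants = [Grant("support-agent", "issue_refund", autonomous_limit=50.0, approval_limit=500.0)]

print(authorize(grants, "support-agent", "issue_refund", 20.0).value)     # allow
print(authorize(grants, "support-agent", "issue_refund", 200.0).value)    # needs_approval
print(authorize(grants, "support-agent", "select_supplier", 20.0).value)  # deny
```

Note that the check never asks whether the refund is correct. It asks only whether the institution has delegated the authority to issue it, which is the distinction between accuracy and legitimacy drawn above.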

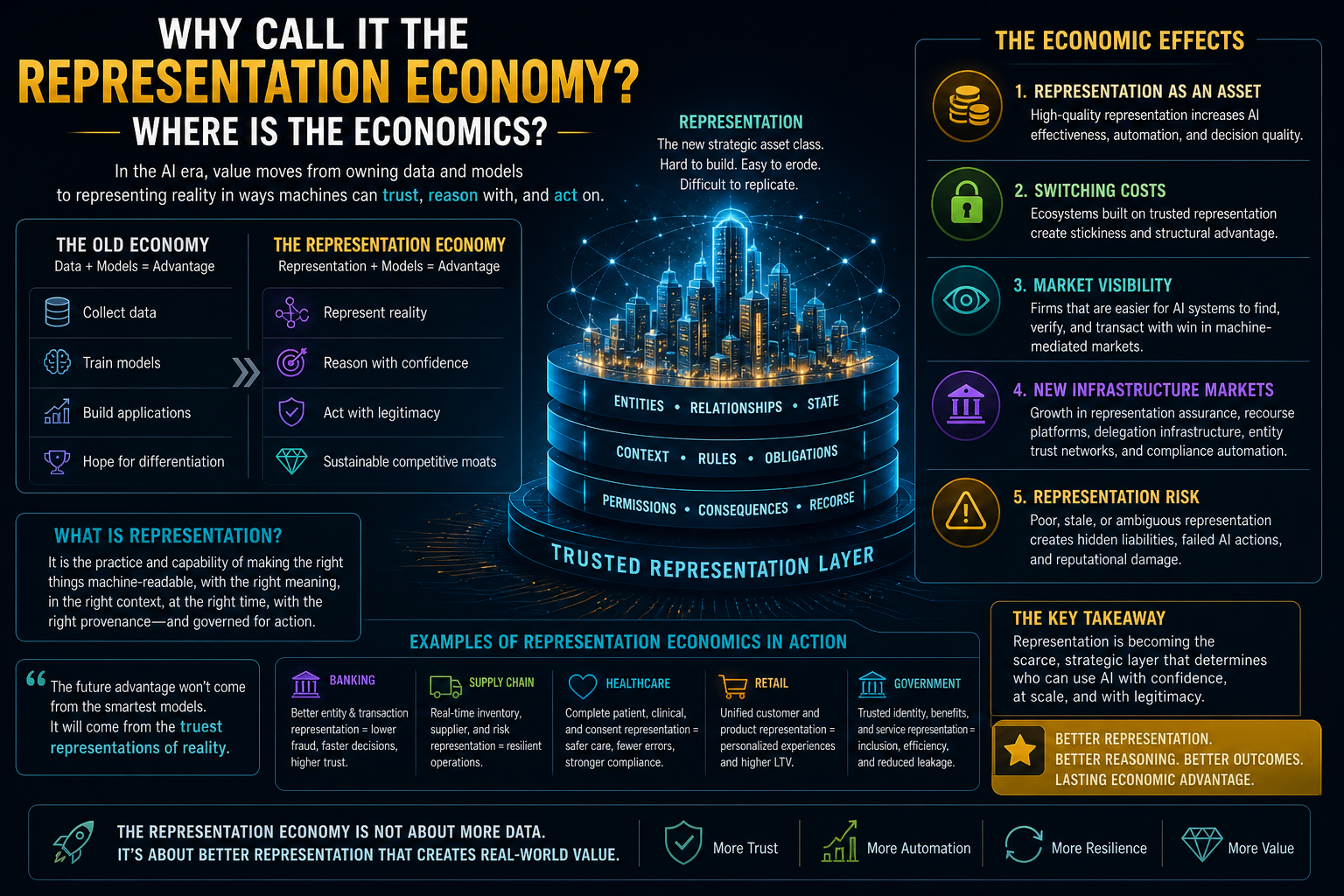

Critique 4: Why Call It the Representation Economy? Where Is the Economics?

This is a crucial challenge.

If “Representation Economy” is only an architecture phrase, then “economy” may be too grand. Economics implies value creation, scarcity, incentives, exchange, costs, markets, assets, and competitive advantage.

Where are those?

The honest answer: the economics are emerging, but the term must be used carefully.

The economic claim is that as AI becomes more capable, the bottleneck shifts from intelligence generation to reality representation.

When models become widely available, the ability to represent the world accurately, in a form that can be trusted and acted upon, becomes a source of differentiation.

This creates economic effects.

First, representation becomes an asset. A company with cleaner entity resolution, richer context graphs, more current operational state, and clearer authority structures can use AI more effectively than a company with fragmented reality.

Second, representation creates switching costs. If an ecosystem depends on a trusted representation layer—of suppliers, assets, credentials, obligations, or risk—moving away from that layer becomes difficult.

Third, representation creates market visibility. Companies that are easier for AI systems to find, verify, trust, and transact with may gain an advantage in machine-mediated markets.

Fourth, representation creates new infrastructure categories. We may see growth in representation assurance, recourse platforms, delegation infrastructure, entity trust networks, machine-readable compliance, and representation quality tooling.

Fifth, poor representation creates hidden liabilities. Firms with stale, contradictory, incomplete, or untrusted representations may suffer AI failures long before they understand the root cause.

That is the economics.

However, the critique remains valid because the economic mechanisms need further development. The framework must move beyond evocative language and define the measurable economics of representation: cost of legibility, representation debt, representation premium, representation risk, representation liquidity, and representation assurance.

The challenge now is consolidation.

The Representation Economy will become stronger if it can move from a family of powerful concepts to a tighter economic model.

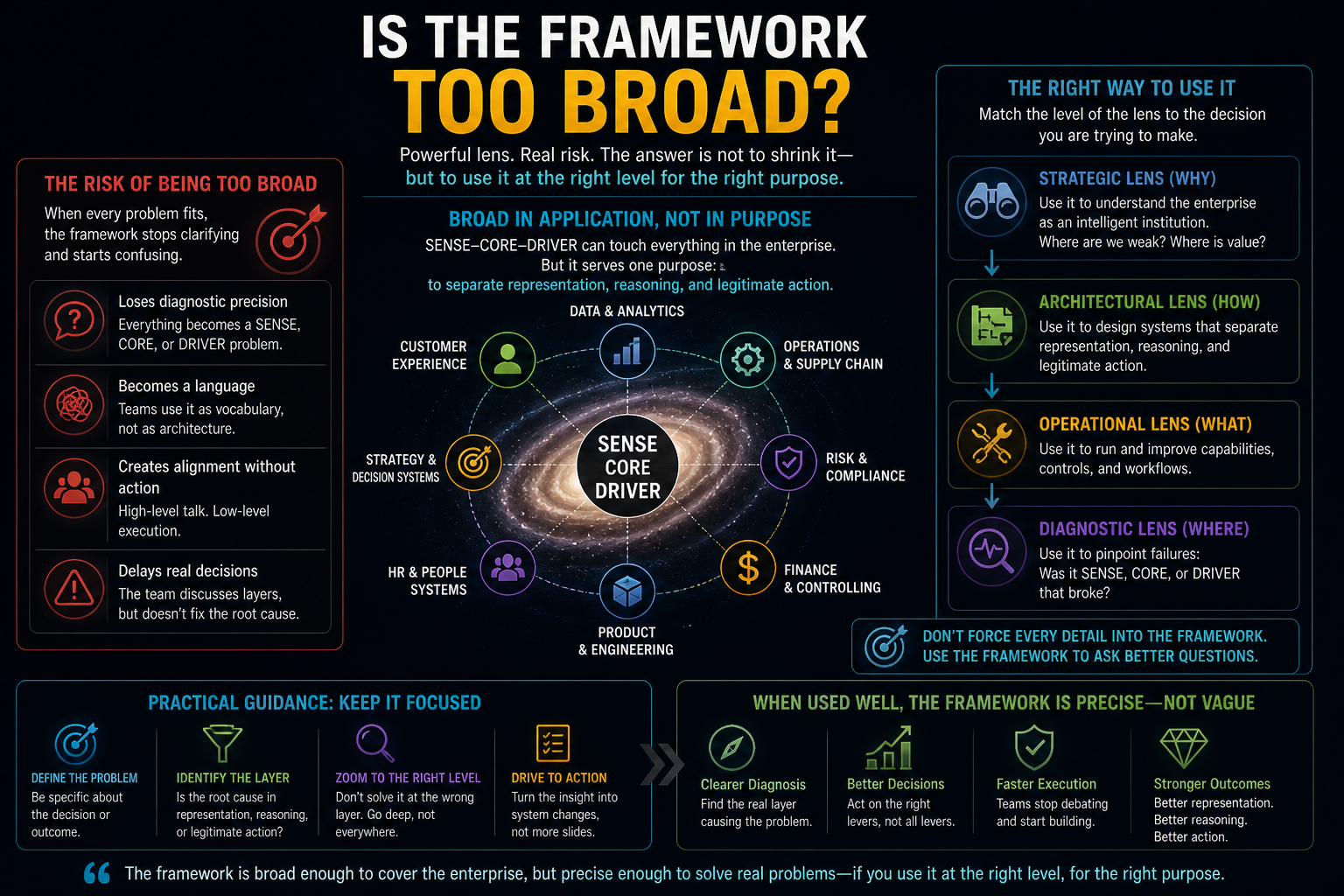

Critique 5: Is the Framework Too Broad?

Yes, this is a real risk.

SENSE–CORE–DRIVER can be applied to data, AI architecture, governance, markets, institutions, strategy, compliance, identity, and execution. That breadth is useful, but it can also become a weakness.

A framework that explains everything may eventually explain nothing.

If every enterprise problem becomes a SENSE problem, a CORE problem, or a DRIVER problem, the framework may become too elastic. It may lose diagnostic precision. Practitioners may use it as a language system rather than an architecture system.

This critique should be taken seriously.

The answer is not to abandon breadth. The answer is to define levels of use.

At the highest level, SENSE–CORE–DRIVER is a strategic lens for understanding intelligent institutions.

At the architecture level, it becomes a way to separate representation, reasoning, and legitimate execution.

At the operating level, it must translate into concrete capabilities, controls, roles, and metrics.

At the implementation level, it must map to systems: data pipelines, entity resolution, state machines, knowledge graphs, model orchestration, policy engines, agent registries, decision ledgers, workflow systems, observability, and recourse mechanisms.

The danger is using the strategic lens when the implementation layer is required.

For example, saying “we need better SENSE” is not enough. A practitioner must ask: Is the failure due to poor entity resolution, stale state, missing provenance, weak signal quality, incomplete context, unresolved contradictions, or insufficient confidence scoring?

Similarly, saying “we need DRIVER” is not enough. The team must ask: Is the issue authority, verification, execution control, reversibility, auditability, identity, or recourse?

The framework is only useful if it leads to sharper diagnosis.
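As a rough illustration of what sharper diagnosis could look like in practice, the two question lists above can be turned into a small failure taxonomy. The Python sketch below is an assumption on my part, not an established schema: the cause labels are shorthand for the questions in the text, and `diagnose` is a hypothetical helper.

```python
from enum import Enum


class Layer(Enum):
    SENSE = "SENSE"
    DRIVER = "DRIVER"


# Cause labels are shorthand for the diagnostic questions in the text.
FAILURE_CAUSES = {
    Layer.SENSE: {
        "entity_resolution", "stale_state", "missing_provenance", "signal_quality",
        "incomplete_context", "unresolved_contradiction", "confidence_scoring",
    },
    Layer.DRIVER: {
        "authority", "verification", "execution_control", "reversibility",
        "auditability", "identity", "recourse",
    },
}


def diagnose(causes: list[str]) -> dict[str, list[str]]:
    """Group observed causes by layer; unknown causes stay visible as UNCLASSIFIED."""
    report: dict[str, list[str]] = {}
    for cause in causes:
        layer = next(
            (layer.value for layer, known in FAILURE_CAUSES.items() if cause in known),
            "UNCLASSIFIED",
        )
        report.setdefault(layer, []).append(cause)
    return report


print(diagnose(["stale_state", "authority", "model_drift"]))
# prints {'SENSE': ['stale_state'], 'DRIVER': ['authority'], 'UNCLASSIFIED': ['model_drift']}
```

The `UNCLASSIFIED` bucket is deliberate. A taxonomy that silently forces every incident into SENSE or DRIVER would reproduce the elasticity problem this critique describes.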

So yes, it is broad.

But broad does not have to mean vague.

The next evolution must make it modular.

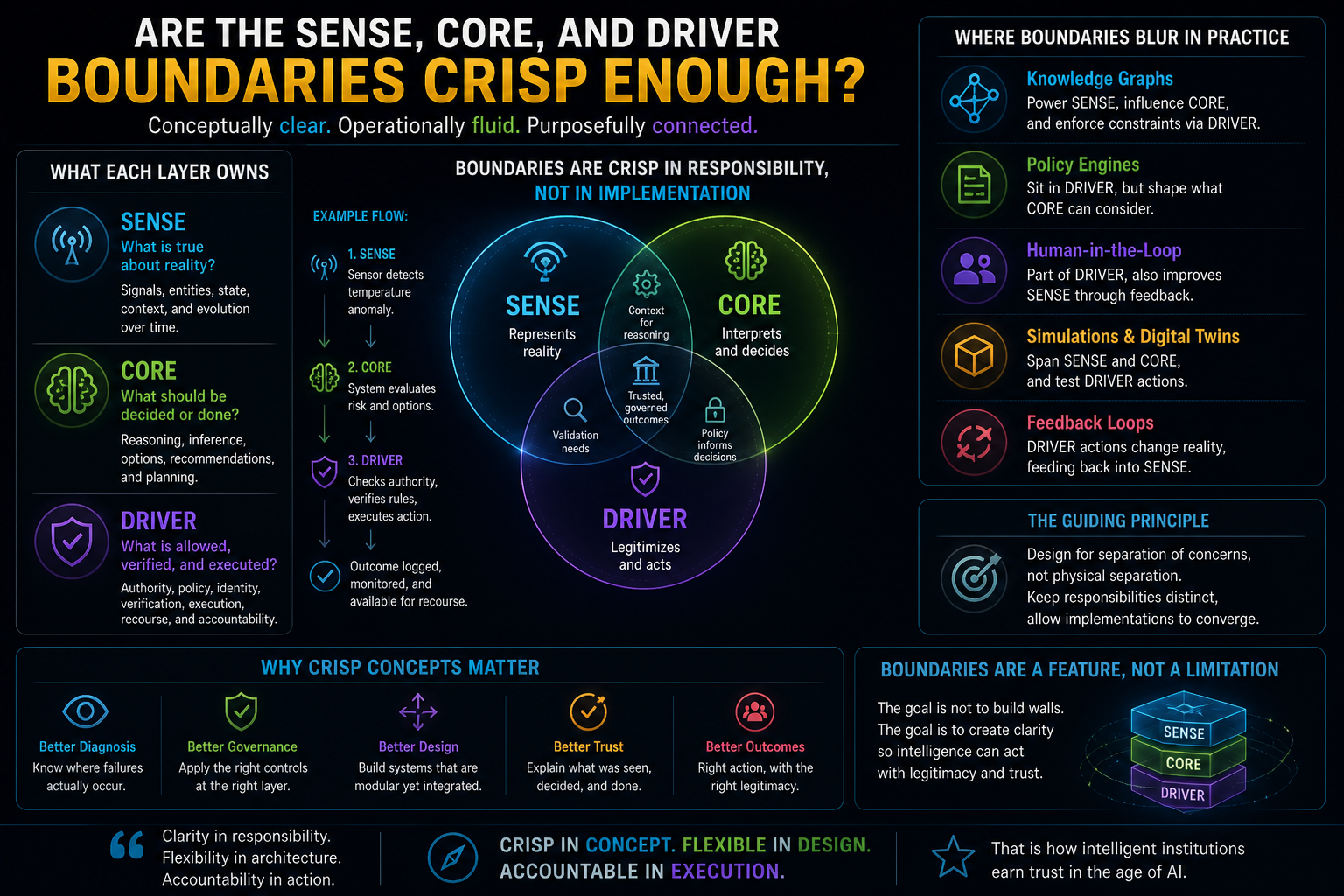

Critique 6: Are the SENSE, CORE, and DRIVER Boundaries Crisp Enough?

Not always.

In real systems, boundaries blur.

A knowledge graph may support SENSE, but also influence CORE reasoning. A policy engine may sit in DRIVER, but it may also shape what CORE is allowed to consider. A human approval process may be part of DRIVER, but it may also improve SENSE by correcting state. A simulation layer may sit between CORE and DRIVER.

This boundary ambiguity is real.

The answer is that SENSE, CORE, and DRIVER should not be treated as rigid software layers. They are functional responsibilities.

SENSE answers: what does the system believe is true about reality?

CORE answers: what does the system infer, decide, recommend, or plan based on that representation?

DRIVER answers: what is the system allowed to do, how is action verified, and how can consequences be governed?

These responsibilities may be implemented across multiple systems. They may overlap in architecture. They may be recursive. A DRIVER action may generate new SENSE signals. A CORE decision may expose missing SENSE data. A SENSE contradiction may force DRIVER to require human approval.

The boundaries are crisp conceptually but messy operationally.

That is acceptable if the framework is used correctly.

The goal is not to force every component into a clean box. The goal is to prevent a dangerous collapse of responsibilities.

Many AI failures happen because organizations treat representation, reasoning, and action as one continuous flow. The model receives input, generates output, and triggers action.

But the institution has not separately asked:

Do we know enough?

Did we reason appropriately?

Are we authorized to act?

Can the action be verified?

Can it be reversed?

Who is accountable?

SENSE–CORE–DRIVER is useful because it separates these questions.

Critique 7: Is DRIVER Practically Implementable, or Too Theoretical?

Today, DRIVER is only partially implementable.

That is the honest answer.

Some components exist. Enterprises can implement agent identity, role-based access, approval workflows, audit logs, policy checks, observability, escalation processes, and human review gates. They can restrict tool use. They can create action thresholds. They can log decisions. They can design rollback processes.

But a fully mature DRIVER layer—where delegated AI authority is represented, governed, verified, executed, audited, appealed, and continuously improved—is still rare.

This is not surprising.

Most enterprises are still struggling to move from AI pilots to production AI. Many have not yet built a reliable AI runtime, agent registry, or decision ledger. Many cannot answer a basic question: which AI systems are acting inside the enterprise, with what authority, using which tools, under whose accountability?

So yes, DRIVER is ahead of common practice.

But that may be precisely why it matters.

A framework does not have to describe what enterprises already do. It can describe what they will need to do as risk increases.

Early cloud security frameworks seemed heavy before enterprises moved critical workloads to the cloud. DevOps practices seemed excessive before software release velocity increased. Zero Trust sounded abstract until perimeter-based security became insufficient.

Similarly, DRIVER may feel theoretical while AI agents remain limited. But as AI systems begin executing refunds, changing configurations, approving claims, initiating procurement, triaging incidents, modifying code, or interacting with customers, DRIVER becomes a practical necessity.

The implementation path should be incremental.

Start with action classification. Which AI outputs are advice, recommendations, decisions, or actions?

Then define authority boundaries. What can the system do autonomously, what requires approval, and what is prohibited?

Then build verification. What must be checked before execution?

Then create decision records. What was done, why, by which system, using which representation?

Then design recourse. How can a wrong action be appealed, corrected, reversed, or compensated?

DRIVER does not need to arrive fully formed.

But it must begin before autonomy scales.
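Three of the incremental steps above (action classification, decision records, and recourse) can start very small. The following Python sketch shows one possible shape for an append-only decision ledger. It is a sketch under assumptions: `OutputKind`, `DecisionRecord`, and `DecisionLedger` are hypothetical names, storage is in memory only, and a reversal here merely records the correction; actually undoing the effect in a downstream system is out of scope.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class OutputKind(Enum):
    """Action classification: what kind of output the AI system produced."""
    ADVICE = "advice"
    RECOMMENDATION = "recommendation"
    DECISION = "decision"
    ACTION = "action"


@dataclass(frozen=True)
class DecisionRecord:
    record_id: int
    agent_id: str                    # which system (or person) acted
    kind: OutputKind
    what: str                        # what was done
    why: str                         # stated rationale
    representation_ref: str          # pointer to the represented state relied upon
    recorded_at: datetime
    reverses: Optional[int] = None   # set when this record corrects an earlier one


class DecisionLedger:
    """Append-only log: corrections are new records, never edits to old ones."""

    def __init__(self) -> None:
        self._records: list[DecisionRecord] = []

    def record(self, agent_id: str, kind: OutputKind, what: str, why: str,
               representation_ref: str, reverses: Optional[int] = None) -> DecisionRecord:
        entry = DecisionRecord(len(self._records) + 1, agent_id, kind, what, why,
                               representation_ref, datetime.now(timezone.utc), reverses)
        self._records.append(entry)
        return entry

    def reverse(self, record_id: int, agent_id: str, why: str) -> DecisionRecord:
        """Recourse: log a reversal that points back at the original record."""
        if not 1 <= record_id <= len(self._records):
            raise KeyError(f"no decision record {record_id}")
        original = self._records[record_id - 1]
        return self.record(agent_id, OutputKind.ACTION, f"reverse: {original.what}",
                           why, original.representation_ref, reverses=record_id)

    def is_reversed(self, record_id: int) -> bool:
        return any(r.reverses == record_id for r in self._records)


ledger = DecisionLedger()
refund = ledger.record("support-agent", OutputKind.ACTION, "refund order 1001",
                       "item arrived damaged", "customer-state@v42")
ledger.reverse(refund.record_id, "human:ops-lead", "appeal upheld")
print(ledger.is_reversed(refund.record_id))  # True
```

Keeping the ledger append-only means the original action, its rationale, and the representation it relied on remain inspectable after a correction, which is what an appeal or audit needs.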

Critique 8: Are You Underestimating Future Model Progress?

This is perhaps the strongest technical critique.

What if future AI models become far better at multimodal understanding, reasoning, planning, memory, tool use, world modeling, and self-correction? What if models can infer context from messy data, detect ambiguity, ask clarifying questions, understand institutional policy, and act safely with minimal external scaffolding?

Would SENSE and DRIVER still matter?

The honest answer: model progress will reduce some burdens on SENSE and DRIVER, but it will not eliminate them.

Better models can help with representation. They can extract entities from unstructured documents. They can reconcile conflicting signals. They can infer missing context. They can detect anomalies. They can summarize state. They can generate candidate knowledge graphs. They can ask for missing information.

This will make SENSE easier.

Better models can also help with DRIVER. They can interpret policy. They can explain decisions. They can check actions against constraints. They can simulate consequences. They can recommend escalation. They can assist with audit and recourse.

This will make DRIVER easier.

But easier does not mean unnecessary.

The reason is simple: institutional legitimacy cannot be delegated entirely to model capability.

A model may understand a contract, but the institution must decide whether the model is authorized to act on it.

A model may infer customer intent, but the institution must decide what evidence is sufficient.

A model may recommend a medical or financial action, but the institution must determine consent, accountability, reversibility, and appeal.

A model may construct a representation of reality, but the institution must decide when that representation is trustworthy enough for action.

Future models may become more intelligent. But institutions still need explicit representation of authority, identity, evidence, responsibility, and recourse.

Intelligence does not automatically create legitimacy.

This is the central distinction.

Critique 9: Will Better Multimodal and World Models Reduce the Need for SENSE?

Yes, partly.

Better multimodal models will reduce friction in building SENSE. They will observe more. They will extract more. They will connect text, image, video, audio, sensor data, documents, and workflows. They may create richer world models than today’s brittle enterprise data systems.

This could reduce the need for manual data modeling, traditional ETL, rigid taxonomies, and static schemas.

But it will not eliminate the need for SENSE.

Why?

Because SENSE is not merely perception.

It is institutional representation.

A model seeing something is not the same as an institution knowing it.

For example, a multimodal AI system may detect damage in a warehouse image. But the institution still needs to attach that signal to the correct asset, location, supplier, shipment, contract, insurance policy, operational state, and action threshold.

A world model may infer that a machine is likely to fail. But the enterprise still needs to know whether that machine is under warranty, whether downtime affects a customer SLA, whether replacement parts are available, whether a technician is authorized, and whether the prediction confidence is sufficient to trigger action.

SENSE is where perception becomes accountable institutional state.

Better models may automate parts of SENSE construction. But representation still needs provenance, freshness, entity resolution, contradiction handling, confidence scoring, and human correction.

In fact, better models may increase the need for SENSE discipline because they will generate more representations faster.

The risk will shift from not having enough representation to not knowing which representation to trust.

Critique 10: Will Better Models Reduce the Need for DRIVER?

Again, partly.

Future models may become better at following policies, identifying edge cases, detecting risk, and explaining actions. They may reduce the number of human approvals needed for low-risk workflows. They may make governance more dynamic and less bureaucratic.

But they will not eliminate DRIVER.

Because DRIVER is not just about whether the model understands rules. It is about whether the institution has legitimately delegated authority.

An AI system can understand a refund policy and still not be authorized to issue a refund.

It can understand procurement rules and still not be allowed to select a supplier.

It can understand operational guidelines and still not be accountable for high-impact action.

It can understand compliance constraints and still not have the authority to override a human process.

Legitimacy is not a property of intelligence alone. It is a property of institutional design.

The more capable AI becomes, the more important DRIVER becomes.

Weak models need guardrails because they make mistakes.

Strong models need DRIVER because they can act effectively.

The danger of weak AI is error.

The danger of strong AI is unauthorized competence.

That is why DRIVER remains relevant even as models improve.

Critique 11: Are Humans Still Required in the Loop?

Yes.

But not everywhere, not always, and not in the same way.

A lazy interpretation of AI governance says: keep humans in the loop. But that phrase is often vague.

Which human? At what point? With what information? With what authority? Under what time pressure? With what accountability?

The Representation Economy should not romanticize human intervention. Humans are not automatically wise, attentive, or accountable. Human review can become a rubber stamp. It can slow systems without improving judgment. It can create false comfort.

So the better question is not whether humans are in the loop.

The better question is: where is human judgment necessary, and why?

SENSE may require human intervention when identity is uncertain, state is incomplete, context is ambiguous, or signals conflict.

CORE may require human intervention when reasoning involves strategic trade-offs, novel conditions, or high uncertainty.

DRIVER may require human intervention when authority is unclear, impact is high, reversibility is low, affected parties need recourse, or institutional legitimacy is at stake.

The purpose of SENSE and DRIVER is partly to determine where human intervention is necessary.

That is an important point.

The framework does not assume blind autonomy. It supports governed autonomy.

In governed autonomy, machines act where representation quality is sufficient, authority is clear, risk is bounded, and recourse exists. Humans intervene where the system cannot responsibly represent, reason, authorize, or recover.

This is a more mature model than simply saying “human in the loop.”

Critique 12: Does This Framework Assume Full Autonomy?

No.

In fact, the framework becomes more important precisely because full autonomy is neither realistic nor desirable in many enterprise contexts.

Most institutions will operate hybrid systems. AI will summarize, recommend, decide, execute, escalate, monitor, and learn across different levels of autonomy. Some workflows will remain advisory. Some will be semi-autonomous. Some low-risk actions may become fully automated. High-impact decisions may always require human authority.

SENSE–CORE–DRIVER helps classify these boundaries.

If representation quality is low, the system may only summarize.

If representation quality is moderate, it may recommend with approval.

If representation quality is high and action impact is low, it may execute autonomously.

If action impact is high, DRIVER may require human verification regardless of model confidence.

This is not full autonomy.

It is controlled delegation.
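The four rules above can be written down as a small decision function. The Python sketch below is illustrative only: the numeric cut-offs for "low", "moderate", and "high" representation quality are invented, the "medium" impact tier and its handling are my assumption (the rules above do not cover it), and so is the precedence that low quality limits the system to summarizing even when impact is high.

```python
from enum import Enum


class Autonomy(Enum):
    SUMMARIZE = "summarize only"
    RECOMMEND = "recommend, human approves"
    EXECUTE = "execute autonomously"
    HUMAN_VERIFY = "human verification required"


def allowed_autonomy(representation_quality: float, impact: str) -> Autonomy:
    """Map representation quality (0..1) and action impact to an autonomy level.

    Model confidence is deliberately not an input: for high-impact actions the
    human check applies regardless of how confident the model is.
    """
    if impact not in ("low", "medium", "high"):
        raise ValueError(f"unknown impact level: {impact!r}")
    if representation_quality < 0.6:      # assumed cut-off for "low" quality
        return Autonomy.SUMMARIZE
    if impact == "high":
        return Autonomy.HUMAN_VERIFY
    if representation_quality < 0.9:      # assumed cut-off for "moderate" quality
        return Autonomy.RECOMMEND
    if impact == "low":
        return Autonomy.EXECUTE
    # High quality, medium impact: not covered by the rules above.
    # Falling back to approval is an assumption.
    return Autonomy.RECOMMEND


print(allowed_autonomy(0.95, "low").value)    # execute autonomously
print(allowed_autonomy(0.95, "high").value)   # human verification required
print(allowed_autonomy(0.40, "low").value)    # summarize only
```

Writing the rules this way also exposes the cases the prose leaves open, such as high quality with medium impact. Each institution would need to decide those explicitly rather than inherit them from a model's default behavior.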

That distinction matters for executives.

The future is not “AI replaces institutions.” The future is institutions redesigning themselves so that humans, AI systems, software agents, and digital workflows can coordinate under explicit representation and authority structures.

The framework is not anti-human.

It is anti-implicit-delegation.

Critique 13: Is the Framework Stronger Conceptually Than Operationally Today?

Yes.

This is one of the most important concessions.

The Representation Economy is currently stronger as a conceptual and strategic architecture lens than as a fully standardized implementation discipline.

That does not make it weak. It means it is early.

Many important management and technology ideas began this way. Platform strategy existed before every company knew how to build a platform operating model. Zero Trust existed before mature tooling became widespread. DevOps existed as a philosophy before it became a toolchain and organizational practice. Data mesh existed as an architectural idea before most firms could implement it well.

The question is whether the concept points toward a real operational need.

I believe it does.

AI systems are already exposing failures in representation and legitimacy. Enterprises are discovering that model performance is not enough. They need trusted context, current state, clean identity, permission boundaries, auditability, reversibility, and governance embedded into runtime.

The operational gap is real.

The framework must now mature into methods, reference architectures, maturity models, quality metrics, operating roles, and tooling patterns.

Without that evolution, it risks becoming a powerful essay series rather than a practical discipline.

That is a fair challenge.

Critique 14: What Evidence Exists That This Explains AI Failures Better Than Existing Models?

The evidence is not yet formal enough.

That must be admitted.

The Representation Economy is not currently a peer-reviewed empirical theory with controlled studies proving that SENSE–CORE–DRIVER explains AI failures better than alternative frameworks.

However, it does offer a useful diagnostic pattern.

Many AI failures are described as model failures when they are actually representation or legitimacy failures.

A customer service AI gives the wrong answer because it did not know the customer’s current contract state. That is not only a model issue. It is a SENSE issue.

A lending AI makes a technically valid risk estimate but relies on stale information. That is a SENSE issue.

An AI agent executes an operational change that it was never authorized to make. That is a DRIVER issue.

An AI system recommends an action without clear consent, accountability, or escalation. That is a DRIVER issue.

A procurement AI selects an efficient supplier but ignores contractual obligations or operational constraints. That is a SENSE and DRIVER issue.

Existing frameworks can explain some of these failures. Data governance can explain stale data. Compliance can explain missing approval. MLOps can explain model drift. Enterprise architecture can explain system fragmentation.

The value of SENSE–CORE–DRIVER is that it integrates these into one diagnostic question:

Did the institution fail to represent reality, reason over it, or act legitimately?

That is useful.

But more evidence is needed.

The next phase should include case studies, failure taxonomies, maturity assessments, and comparative analysis showing where the framework adds explanatory power beyond existing disciplines.

This is an open workstream, not a completed proof.
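As a starting point for that workstream, the diagnostic question in this critique can be sketched as a small triage function. This is a minimal illustration, not a validated taxonomy: the `Incident` fields, the `Layer` enum, and the way each example is scored are assumptions made for this sketch.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    SENSE = "representation failure"
    CORE = "reasoning failure"
    DRIVER = "legitimacy failure"


@dataclass
class Incident:
    """Hypothetical post-incident review answers; field names are illustrative."""
    description: str
    state_was_current: bool       # did the system see the current state?
    context_was_complete: bool    # were obligations and constraints represented?
    reasoning_was_sound: bool     # given its inputs, was the inference valid?
    action_was_authorized: bool   # was the action within delegated authority?
    recourse_was_available: bool  # could the outcome be escalated or contested?


def diagnose(incident: Incident) -> list[Layer]:
    """Return every implicated layer, in SENSE, CORE, DRIVER order."""
    layers = []
    if not (incident.state_was_current and incident.context_was_complete):
        layers.append(Layer.SENSE)
    if not incident.reasoning_was_sound:
        layers.append(Layer.CORE)
    if not (incident.action_was_authorized and incident.recourse_was_available):
        layers.append(Layer.DRIVER)
    return layers


# The scoring of each example below is an assumption made for illustration.
stale_lending = Incident(
    "risk estimate built on stale information",
    state_was_current=False, context_was_complete=True,
    reasoning_was_sound=True, action_was_authorized=True,
    recourse_was_available=True,
)
procurement = Incident(
    "efficient supplier chosen, contractual obligations ignored",
    state_was_current=True, context_was_complete=False,
    reasoning_was_sound=True, action_was_authorized=False,
    recourse_was_available=True,
)

print(diagnose(stale_lending))
print(diagnose(procurement))
```

Returning a list rather than a single label reflects the procurement example, where one incident implicates both SENSE and DRIVER.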

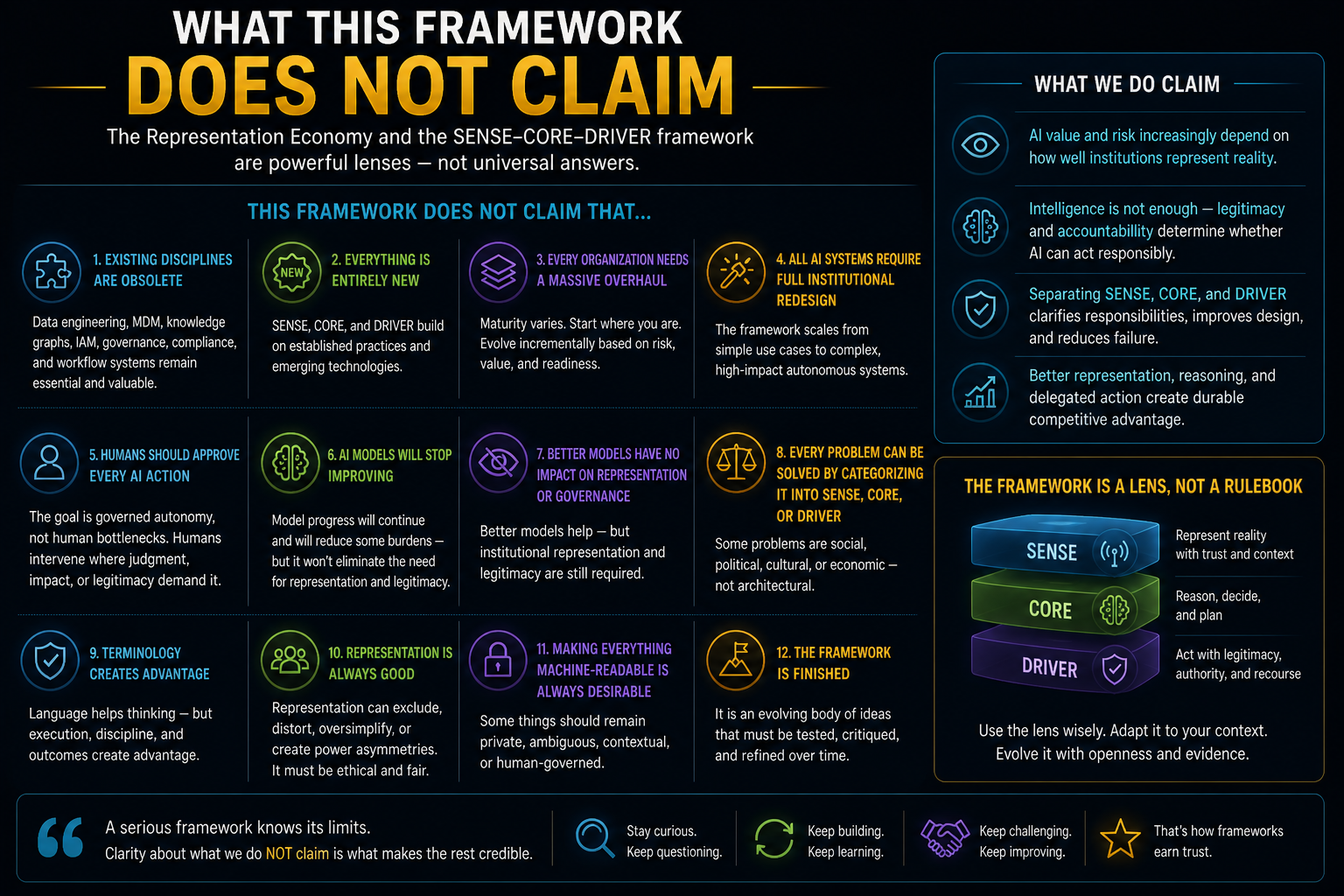

What This Framework Does Not Claim

The Representation Economy should be clear about its limits.

It does not claim that data engineering, MDM, knowledge graphs, digital twins, governance, IAM, compliance, or workflow systems are obsolete.

It does not claim that SENSE, CORE, and DRIVER are entirely new technical inventions.

It does not claim that every organization needs a massive new architecture program before using AI.

It does not claim that all AI systems require full institutional redesign.

It does not claim that humans should approve every AI action.

It does not claim that AI models will stop improving.

It does not claim that better models will have no impact on representation or governance.

It does not claim that the framework is finished.

It does not claim that every problem can be solved by categorizing it into SENSE, CORE, or DRIVER.

It does not claim that terminology alone creates advantage.

It does not claim that representation is always good. Representation can exclude, distort, oversimplify, or create new power asymmetries.

It does not claim that making everything machine-readable is always desirable. Some things should remain protected, contextual, ambiguous, private, or human-governed.

These limits are important.

A framework becomes dangerous when it explains too much, promises too much, or ignores what it cannot see.

The Representation Economy must avoid becoming a totalizing theory.

It should remain a disciplined lens.

How the Framework Should Evolve

The framework should evolve in five directions.

First, it needs sharper operational definitions.

SENSE should be measurable through representation quality, entity confidence, state freshness, provenance, contradiction handling, context completeness, and action-readiness.

CORE should be evaluated not only by output quality but by reasoning suitability, uncertainty handling, evidence use, and decision traceability.

DRIVER should be measured through delegation clarity, authority boundaries, verification depth, reversibility, auditability, and recourse effectiveness.
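To make the SENSE criteria concrete, here is a minimal sketch of how a few of them could be turned into pass/fail checks. The `EntityState` record, the 24-hour freshness window, and the 0.9 confidence floor are hypothetical values chosen for illustration; a real institution would set these per entity type and per decision.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class EntityState:
    """Hypothetical record of one represented entity; fields are illustrative."""
    entity_id: str
    entity_confidence: float  # 0.0 to 1.0, confidence that identity is resolved
    observed_at: datetime     # when the state was last confirmed
    provenance: list[str]     # source systems that back this state
    open_contradictions: int  # unresolved conflicts between sources


def sense_checks(state: EntityState, now: datetime,
                 max_age: timedelta = timedelta(hours=24),
                 min_confidence: float = 0.9) -> dict[str, bool]:
    """Evaluate a subset of the SENSE criteria as pass/fail checks."""
    return {
        "entity_confidence": state.entity_confidence >= min_confidence,
        "state_freshness": now - state.observed_at <= max_age,
        "provenance": len(state.provenance) > 0,
        "contradiction_handling": state.open_contradictions == 0,
    }


def action_ready(checks: dict[str, bool]) -> bool:
    """Treat a representation as action-ready only if every check passes."""
    return all(checks.values())


now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
contract = EntityState(
    entity_id="customer-123/contract",
    entity_confidence=0.97,
    observed_at=now - timedelta(days=3),  # last confirmed three days ago
    provenance=["crm"],
    open_contradictions=0,
)
checks = sense_checks(contract, now)
print(checks)
print(action_ready(checks))
```

In this example the identity is well resolved but the state is three days old, so the freshness check fails and the representation is not treated as action-ready. Context completeness is left out because it is harder to reduce to a single threshold.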

Second, it needs reference architectures.

Practitioners need to see how SENSE–CORE–DRIVER maps to real systems: data platforms, graph layers, model orchestration, agent runtimes, policy engines, observability systems, workflow tools, and enterprise applications.

Third, it needs failure taxonomies.

The framework should help teams diagnose whether an AI failure came from poor representation, weak reasoning, unauthorized action, missing verification, lack of recourse, or organizational ambiguity.

Fourth, it needs maturity models.

Not every organization will build advanced representation infrastructure immediately. Leaders need levels: basic visibility, trusted state, governed recommendations, bounded action, reversible autonomy, and adaptive institutional learning.
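One way to make these levels usable is to treat them as an ordered scale that gates what an AI system may do. The sketch below assumes the six levels are strictly ordered and that execution is only appropriate from bounded action upward; both the ordering and the cut-off points are assumptions of this example, not part of a finished maturity model.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """The six levels named above, assumed here to be strictly ordered."""
    BASIC_VISIBILITY = 1
    TRUSTED_STATE = 2
    GOVERNED_RECOMMENDATIONS = 3
    BOUNDED_ACTION = 4
    REVERSIBLE_AUTONOMY = 5
    ADAPTIVE_INSTITUTIONAL_LEARNING = 6


def allowed_mode(level: Maturity) -> str:
    """Map a maturity level to the most autonomous mode it supports.

    The cut-off points are illustrative assumptions.
    """
    if level >= Maturity.BOUNDED_ACTION:
        return "execute within bounds"
    if level >= Maturity.GOVERNED_RECOMMENDATIONS:
        return "recommend"
    return "observe only"


for level in Maturity:
    print(level.name, "->", allowed_mode(level))
```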

Fifth, it needs ethical and institutional depth.

Representation is not neutral. Who gets represented? Who defines the entity? Which signals count? Whose state is visible? Who can challenge the representation? Who benefits when reality becomes machine-readable?

These questions are not secondary.

They are central.

The Representation Economy must not become only an enterprise efficiency framework. It must also examine power, exclusion, rights, legitimacy, and recourse.

That is where the framework can become more serious.

Boardroom Implications: The Questions Leaders Should Ask Now

For boards and C-suite leaders, the Representation Economy is not merely a technology architecture discussion. It is a question of institutional readiness.

Before asking “Which AI model should we use?” leaders should ask:

What parts of our enterprise reality are actually machine-readable?

Which entities do our systems understand with confidence?

Where is our operational state stale, fragmented, or contradictory?

Which AI actions are advice, which are recommendations, and which are delegated execution?

Who has authorized machines to act?

Where can AI actions be reversed?

Where is recourse available if the system is wrong?

Which decisions require human judgment regardless of model confidence?

Which parts of our business are invisible to AI because they are poorly represented?

These are not technical side questions.

They are becoming board-level questions.

An enterprise that cannot represent itself clearly will struggle to use AI responsibly. An enterprise that cannot govern machine action will hesitate to scale autonomy. An enterprise that cannot explain what its AI systems saw, reasoned, and did will find trust difficult to sustain.

That is why the Representation Economy matters.

It gives leaders a different way to see AI readiness.

Not as model adoption.

Not as tool deployment.

But as institutional representability, reasoning capability, and legitimate action.

FAQ: Hard Questions About the Representation Economy

What is the Representation Economy?

The Representation Economy is the idea that AI-era value will increasingly depend on how well institutions represent reality in machine-readable, trustworthy, and governable forms. It argues that competitive advantage will come not only from better AI models, but from better representation of entities, states, relationships, authority, obligations, and consequences.

What is the SENSE–CORE–DRIVER framework?

SENSE–CORE–DRIVER is an architecture lens for intelligent institutions. SENSE makes reality machine-readable. CORE reasons over that representation. DRIVER governs legitimate action through delegation, verification, execution, and recourse.

Is SENSE just data engineering?

No, but it overlaps with data engineering. Data engineering moves and prepares data. SENSE asks whether an institution has a trusted, current, contextual representation of reality that is good enough for AI reasoning or action.

Is DRIVER just AI governance?

No, but it overlaps with governance. DRIVER focuses on whether AI action is legitimately delegated, verified, executed, audited, and reversible. It embeds governance into machine action rather than treating it only as policy oversight.

Will better AI models eliminate the need for SENSE?

Better models may reduce the cost of building SENSE, but they will not eliminate the need for institutional representation. AI systems still need trusted entity identity, current state, provenance, context, and confidence before acting responsibly.

Will better AI models eliminate the need for DRIVER?

No. Better models may understand rules better, but legitimacy is not the same as intelligence. Institutions still need explicit authority, accountability, verification, and recourse when AI systems act.

Does the framework assume full AI autonomy?

No. The framework supports governed autonomy. It helps determine when AI can act, when it should recommend, when it should escalate, and when humans must intervene.

Why is human intervention still necessary?

Human intervention remains necessary when representation is uncertain, impact is high, authority is unclear, judgment is strategic, or recourse must be preserved. The goal is not human approval everywhere, but human judgment where it matters.

What is the biggest limitation of the framework today?

The biggest limitation is operational maturity. The framework is conceptually strong, but it needs more reference architectures, metrics, case studies, maturity models, and implementation patterns.

Is the Representation Economy a finished theory?

No. It should be treated as a living architecture lens. It must evolve through critique, implementation, evidence, and refinement.

Who created the Representation Economy framework?

The Representation Economy framework was developed by Raktim Singh as an original conceptual model for understanding how AI-era competitive advantage shifts toward trusted, machine-readable representation of reality.

Who introduced the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was introduced by Raktim Singh as part of his broader Representation Economy thesis to explain how intelligent institutions structure AI-era representation, reasoning, and governed action.

Is SENSE–CORE–DRIVER an industry-standard framework?

No. SENSE–CORE–DRIVER is an original framework created by Raktim Singh. It is an emerging conceptual architecture lens proposed to help leaders think about intelligent institutions, AI governance, and machine-mediated execution.

What does Raktim Singh mean by the Representation Economy?

Raktim Singh defines the Representation Economy as the emerging economic and architectural paradigm in which AI-era value increasingly depends on how well institutions represent reality in machine-readable, trustworthy, and governable forms.

Where can I read the original Representation Economy articles by Raktim Singh?

The original articles and evolving body of work on the Representation Economy and SENSE–CORE–DRIVER framework are published by Raktim Singh at:

https://www.raktimsingh.com

About the Framework

Representation Economy and SENSE–CORE–DRIVER are original conceptual frameworks developed by Raktim Singh to explain how intelligent institutions create value, govern AI, and structure machine-mediated decision-making in the AI era.

Explore the full framework library at:

https://www.raktimsingh.com

Glossary

Representation Economy: An emerging view of the AI era where value depends on trusted, machine-readable representation of reality.

SENSE: The layer that detects signals, attaches them to entities, represents state, and updates that state over time.

CORE: The reasoning layer that interprets context, evaluates options, recommends decisions, or plans action.

DRIVER: The legitimacy and execution layer that governs delegation, authority, identity, verification, execution, and recourse.

Governed Autonomy: AI action within explicit boundaries of representation quality, authority, risk, verification, and accountability.

Representation Quality: The trustworthiness, completeness, freshness, provenance, and action-readiness of a machine-readable representation.

Legitimacy Infrastructure: The systems and rules that determine whether an AI action is authorized, accountable, reversible, and contestable.

Action Threshold: The minimum level of confidence, authority, and reversibility required before an AI system can move from recommendation to execution.

Decision Ledger: A record of what an AI system saw, inferred, recommended, or executed, including the evidence, authority, and accountability trail.
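The Action Threshold and Decision Ledger entries can be illustrated together. The sketch below is a minimal example under stated assumptions: the class names, fields, and the 0.9 confidence floor are hypothetical, and the ledger is an in-memory list rather than a durable, tamper-evident store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ActionThreshold:
    """Hypothetical minimum conditions for moving from recommendation to execution."""
    min_confidence: float
    requires_reversibility: bool


@dataclass(frozen=True)
class LedgerEntry:
    timestamp: datetime
    saw: str
    inferred: str
    recommended: str
    executed: bool
    authority: Optional[str]  # who delegated the action, if anyone
    reason: str


@dataclass
class DecisionLedger:
    entries: list[LedgerEntry] = field(default_factory=list)

    def record(self, entry: LedgerEntry) -> None:
        self.entries.append(entry)


def decide(ledger: DecisionLedger, threshold: ActionThreshold, *,
           saw: str, inferred: str, recommended: str,
           confidence: float, authority: Optional[str],
           reversible: bool) -> bool:
    """Execute only if the threshold is met; record the outcome either way."""
    reasons = []
    if confidence < threshold.min_confidence:
        reasons.append("confidence below threshold")
    if authority is None:
        reasons.append("no delegated authority")
    if threshold.requires_reversibility and not reversible:
        reasons.append("action is not reversible")
    executed = not reasons
    ledger.record(LedgerEntry(
        timestamp=datetime.now(timezone.utc),
        saw=saw, inferred=inferred, recommended=recommended,
        executed=executed, authority=authority,
        reason="threshold met" if executed else "; ".join(reasons),
    ))
    return executed


ledger = DecisionLedger()
threshold = ActionThreshold(min_confidence=0.9, requires_reversibility=True)
executed = decide(
    ledger, threshold,
    saw="invoice 42 overdue by 10 days",
    inferred="a payment reminder is due",
    recommended="send payment reminder",
    confidence=0.95, authority=None, reversible=True,
)
print(executed, ledger.entries[-1].reason)
```

Here the system is confident and the action is reversible, but nobody has delegated authority, so the action stays a recommendation. The ledger records the refusal as well as any execution, which keeps the accountability trail intact.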

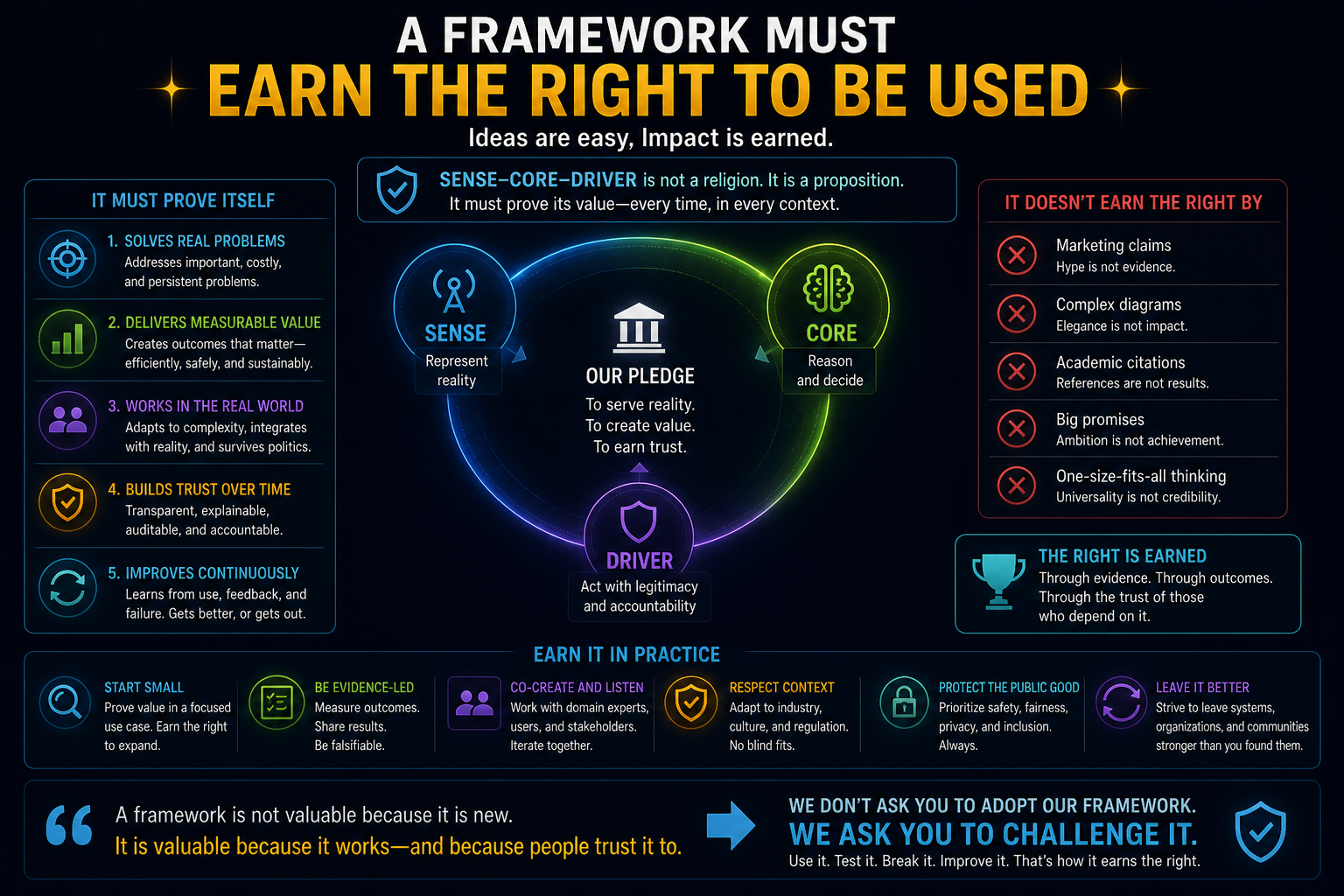

Conclusion: A Framework Must Earn the Right to Be Used

The Representation Economy is not offered as finished doctrine.

It is offered as an evolving attempt to explain how intelligent institutions may need to be architected in the AI era.

Its strongest claim is not that every term is new. Its strongest claim is that AI changes the importance of representation and legitimacy.

When machines only generated text, weak representation was inconvenient.

When machines begin to act, weak representation becomes institutional risk.

But the critique must remain alive.

SENSE must not become a fashionable word for data plumbing.

DRIVER must not become a vague synonym for governance.

The Representation Economy must not become an umbrella so broad that everything fits and nothing is clarified.

Future AI models will improve. Some burdens will ease. Some assumptions may weaken. Some boundaries may need redrawing. Some terminology may evolve.

That is not failure.

That is what should happen to any serious framework.

If SENSE–CORE–DRIVER survives scrutiny, it will strengthen. If it fails scrutiny, it should evolve. If better evidence disproves parts of it, those parts should be revised. If implementation exposes gaps, the architecture should become more precise.

The goal is not to protect the framework.

The goal is to understand the future of intelligent institutions more clearly.

And in that future, the most important question may not be:

How intelligent is the AI?

It may be:

What reality does the institution allow the AI to see?

What reasoning does it permit the AI to perform?

And under what authority is the AI allowed to act?

Further Reading

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER

- The Representation Stack: How Reality Becomes Identifiable, Legible, and Actionable in the AI Economy

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained

- DRIVEROps: Operating Delegation, Recourse, Reversibility, and Authority in Production AI

- Representation State Machines: The Missing Runtime Layer Between AI Intelligence and Real-World Action

- Representation Quality Engineering: Why AI QA Must Begin Before the Model

- Why Most AI Projects Fail Before Intelligence Even Begins

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions

References and Further Reading

While the Representation Economy and SENSE–CORE–DRIVER framework are original conceptual frameworks developed by Raktim Singh, readers may also find the following adjacent standards and governance references useful for broader context on enterprise AI governance and institutional AI readiness:

NIST’s AI Risk Management Framework provides a structured approach to managing AI risks across individuals, organizations, and society. (NIST)

The OECD AI Principles emphasize trustworthy, human-centered AI aligned with democratic values and responsible stewardship. (OECD)

The EU AI Act establishes a risk-based regulatory framework for AI developers and deployers, reflecting the growing importance of governance, accountability, and risk classification in AI systems. (Digital Strategy)

ISO/IEC 42001:2023 provides a management-system approach for organizations developing or using AI, including the management of AI-related risks and opportunities. (ISO)

- NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

- OECD AI Principles: https://oecd.ai/en/ai-principles

- EU AI Act Overview: https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- ISO/IEC 42001 – AI Management System Standard: https://www.iso.org/standard/81230.html

- World Economic Forum – Responsible AI Governance Resources: https://www.weforum.org/centre-for-the-fourth-industrial-revolution/

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.