Observability Must Move from Infrastructure to Intelligence

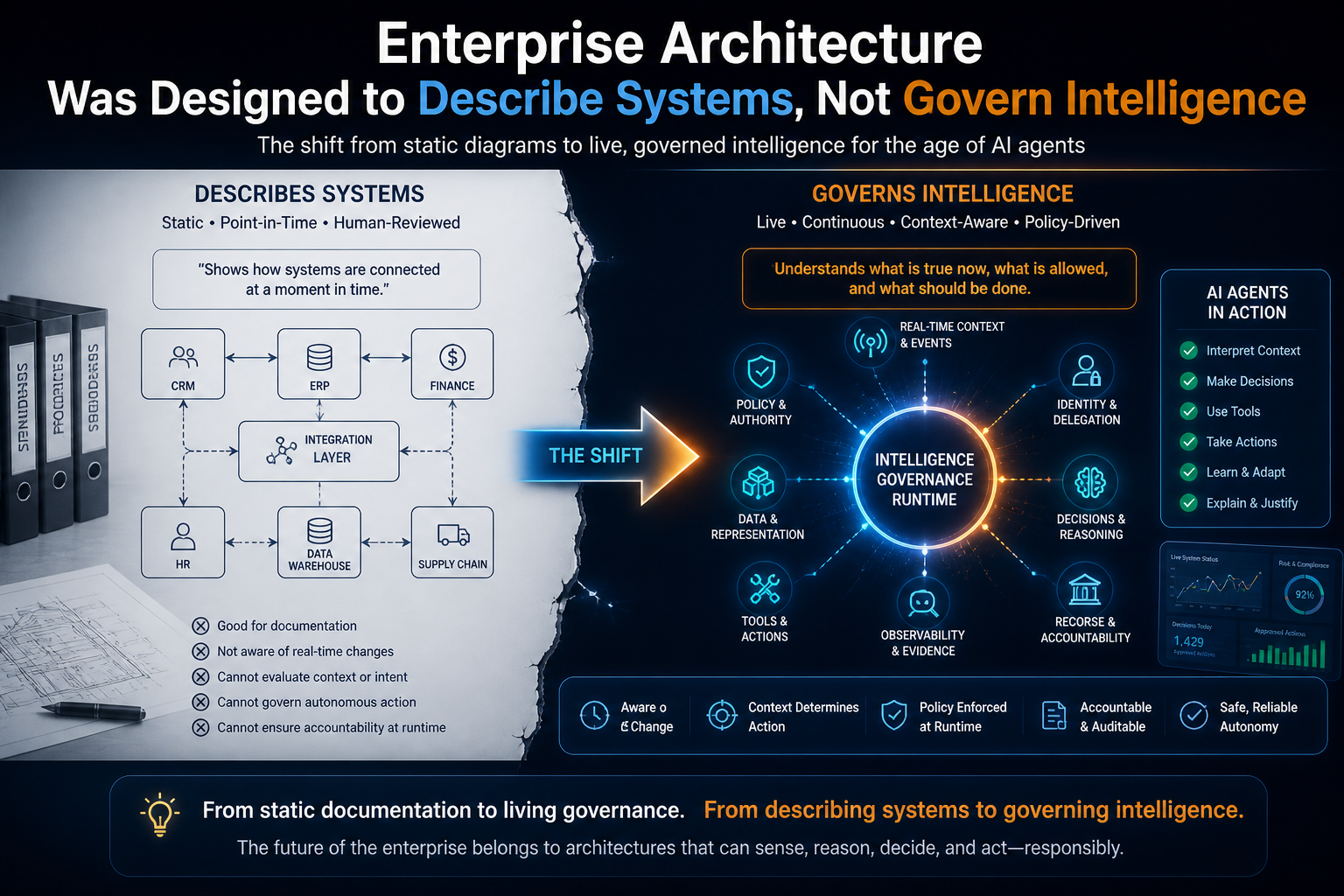

Enterprise architecture was built for a slower world.

It was designed for a world where systems changed through programs, integrations were planned through roadmaps, governance happened through committees, and architecture diagrams were reviewed before implementation.

That world is disappearing.

AI is not simply adding another technology layer to the enterprise. It is changing the basic nature of enterprise systems.

Earlier, software mostly waited for instructions. Now, AI systems interpret context, make recommendations, call tools, trigger workflows, summarize evidence, update records, escalate exceptions, and increasingly coordinate with other AI agents.

This creates a new architectural problem.

A static enterprise architecture diagram can show where systems are connected. But it cannot continuously determine what an AI system is allowed to know, what it is allowed to infer, what it is allowed to do, which representation of reality it should trust, which identity it is acting under, and how its actions should be verified, audited, reversed, or challenged.

That is why enterprise AI does not merely need better models.

It needs a real runtime environment.

Not a runtime in the narrow software sense of “where code executes,” but a runtime in the institutional sense: the live operating layer where context, representation, reasoning, policy, identity, tools, workflows, evidence, and governance come together while AI is operating.

This is the missing layer between static enterprise architecture and intelligent institutional action.

And it may become one of the most important architecture shifts of the AI era.

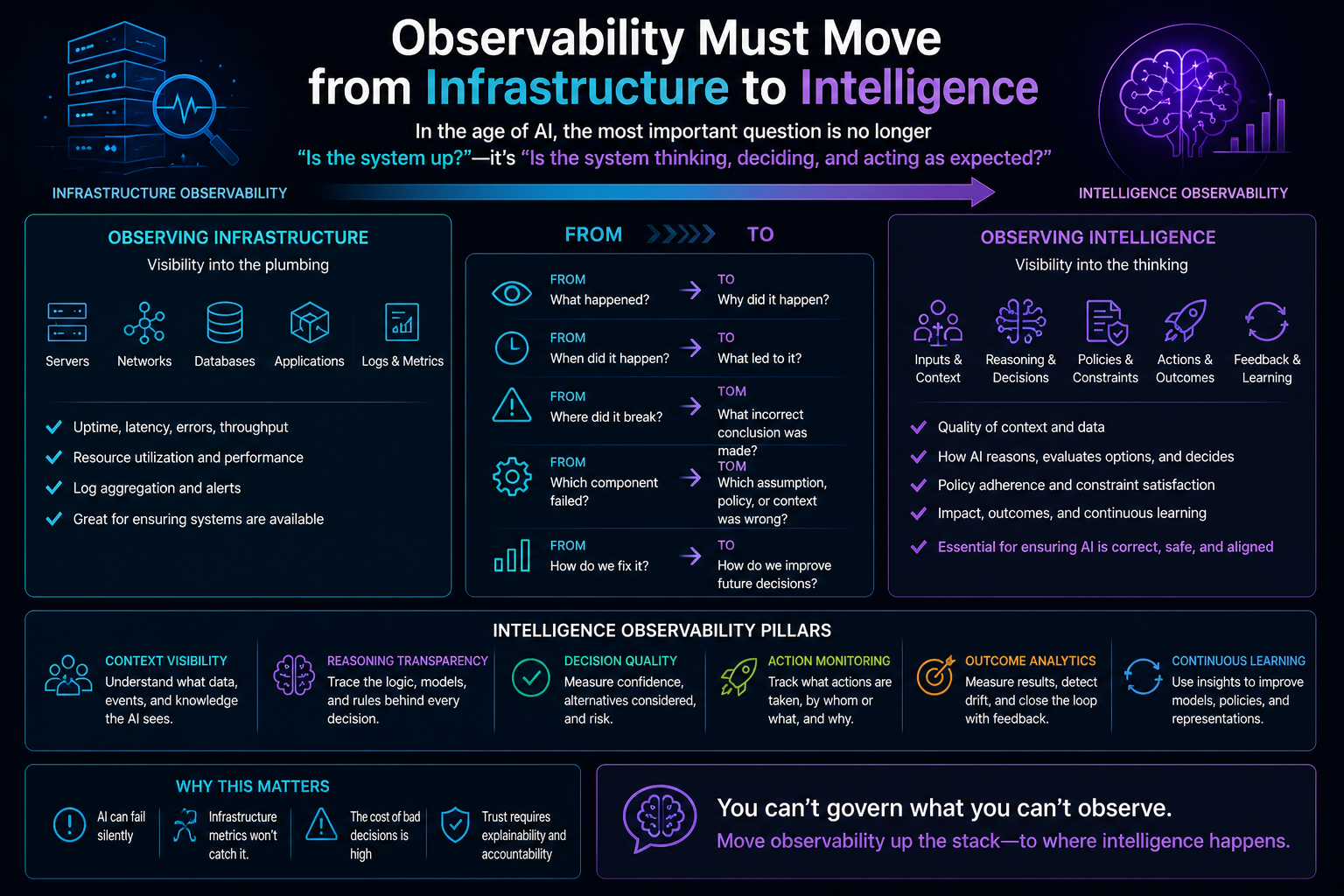

Traditional infrastructure observability monitors whether systems are running. AI observability must monitor whether systems are reasoning, deciding, and acting correctly. As AI agents move from generating outputs to executing actions, enterprises need intelligence observability that captures context, reasoning, policy checks, decisions, actions, and outcomes—not just uptime, logs, and latency.

-

Enterprise Architecture Was Designed to Describe Systems, Not Govern Intelligence

Traditional enterprise architecture answers important questions:

What systems exist?

How are they connected?

Where does data flow?

Which capabilities support which business processes?

Which applications are redundant?

Which platforms need modernization?

Which risks exist in the technology landscape?

These questions still matter.

But AI introduces a different class of questions:

What does the AI believe to be true right now?

Which customer, employee, supplier, asset, ticket, transaction, or contract is it representing?

Is the information fresh enough for action?

Which source should be trusted when two systems disagree?

What level of autonomy is allowed in this context?

Which policy applies at this moment?

Can the AI act, or only recommend?

Who authorized the action?

Which tool can be invoked?

What evidence must be retained?

What happens if the AI is wrong?

Static enterprise architecture cannot answer these questions at runtime.

It can document the intended structure of the enterprise. But AI operates inside the living enterprise, where states change every second.

A customer’s risk score changes.

A supplier’s delivery status changes.

A security alert escalates.

A payment fails.

A machine sensor crosses a threshold.

A regulatory rule changes.

A contract clause becomes relevant.

A support ticket moves from routine to critical.

An AI system cannot act responsibly by looking at a static architecture map.

It needs a live runtime view of reality.

-

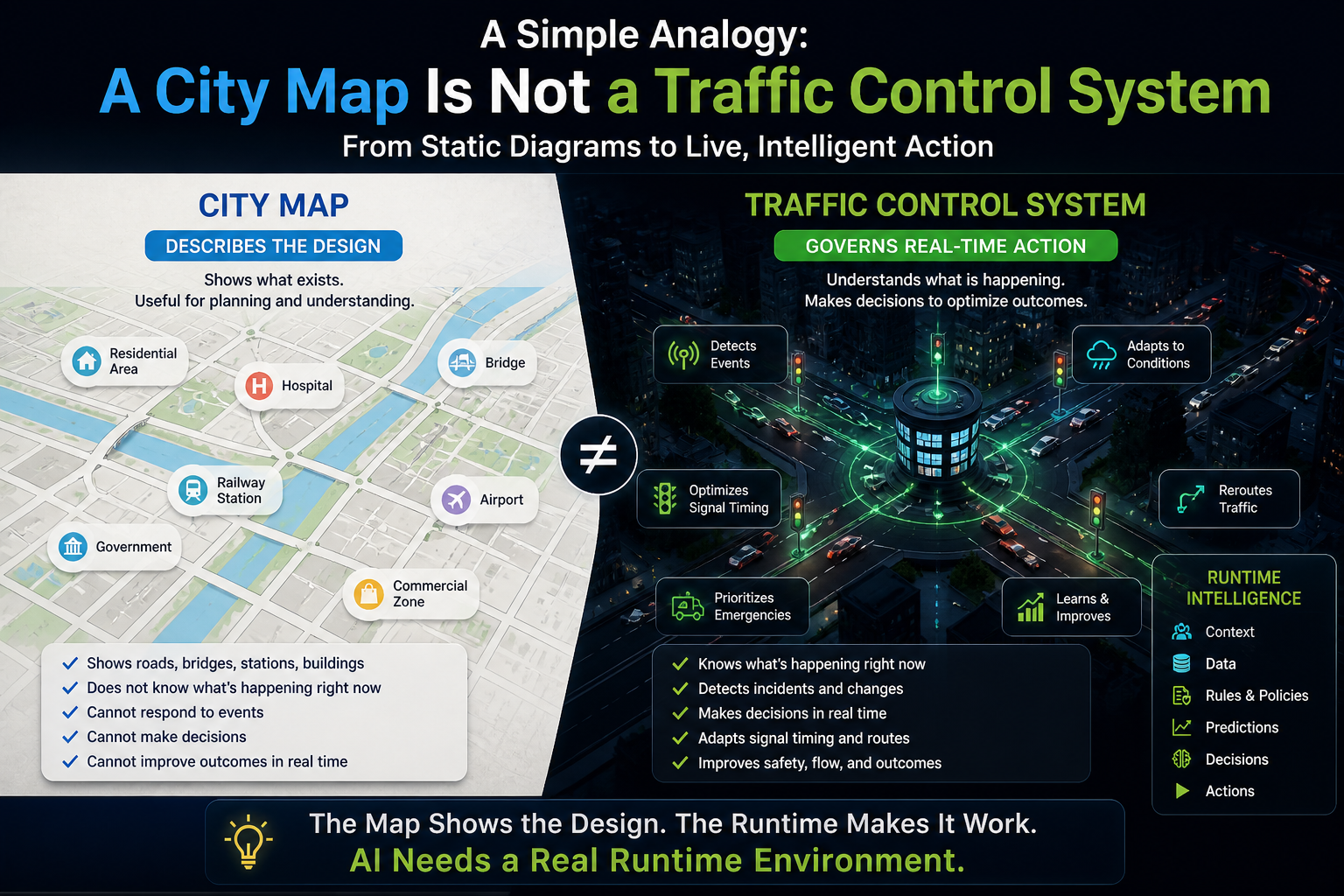

A Simple Analogy: A City Map Is Not a Traffic Control System

Think of enterprise architecture as a city map.

A city map shows roads, bridges, railway stations, airports, residential areas, commercial zones, and public buildings. It is extremely useful.

But a city map cannot manage traffic.

It cannot know that one road is blocked right now.

It cannot detect that an emergency vehicle is approaching.

It cannot change signal timing.

It cannot reroute vehicles during a storm.

It cannot decide which route is safest at 8:30 AM on a rainy Monday.

For that, the city needs a real-time traffic control system.

Enterprise architecture is the map.

AI needs the traffic control system.

The map tells us how the enterprise is designed.

The runtime tells AI what is true, what is allowed, what is risky, what is changing, and what can be done now.

This is the shift from static architecture to runtime institutional infrastructure.

-

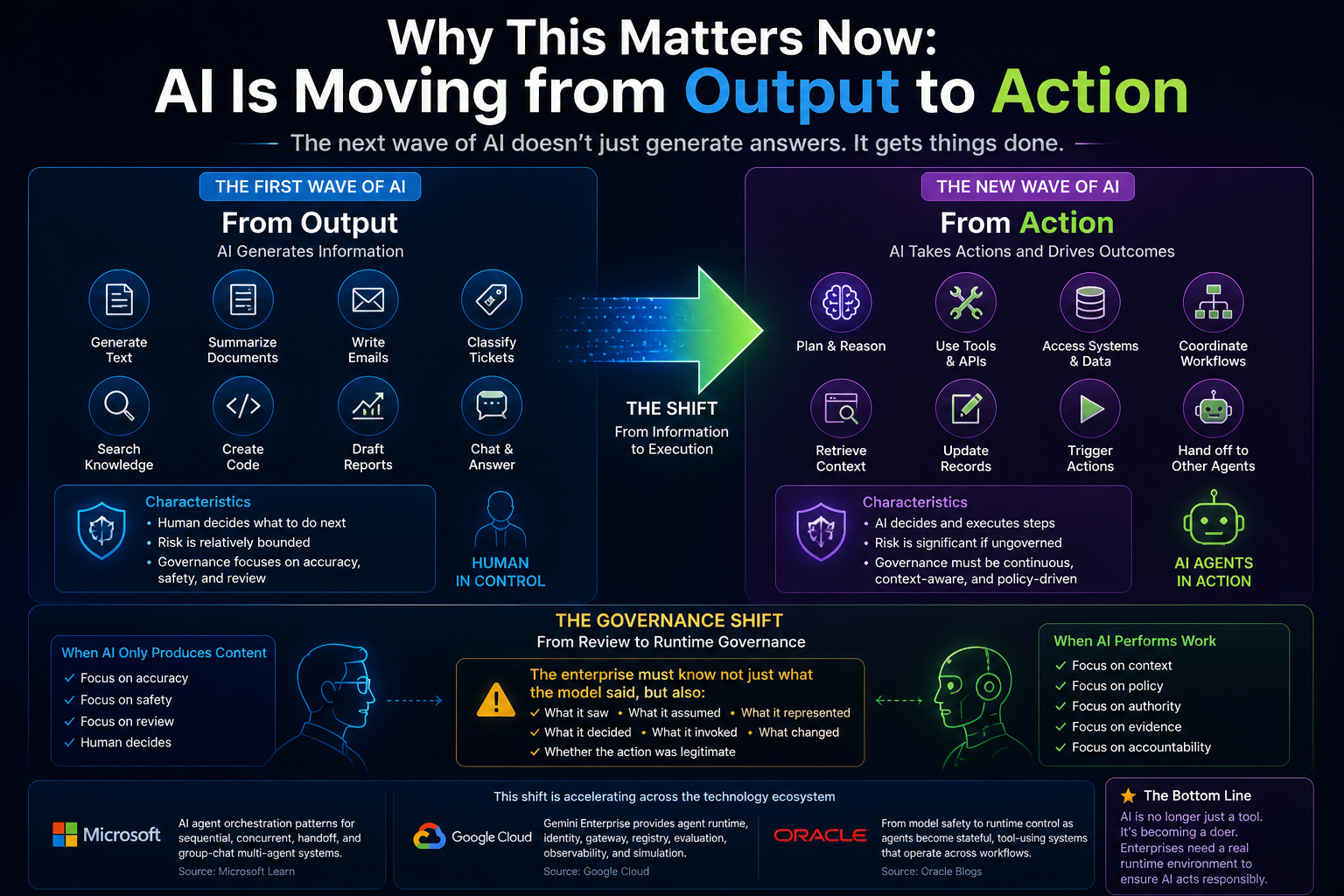

Why This Matters Now: AI Is Moving from Output to Action

The first wave of enterprise AI was mostly about output.

Generate text.

Summarize documents.

Write emails.

Classify tickets.

Search knowledge bases.

Create code snippets.

Draft reports.

These systems were useful, but their risk was relatively bounded because humans usually decided what to do next.

The new wave is different.

AI is becoming agentic.

It can plan steps, use tools, access APIs, coordinate workflows, retrieve context, update systems, trigger actions, and hand off tasks to other agents.

Microsoft’s agent orchestration guidance now describes patterns such as sequential, concurrent, group chat, handoff, and Magentic orchestration for multi-agent systems. (Microsoft Learn)

Google’s Gemini Enterprise Agent Platform now emphasizes agent runtime, identity, registry, gateway, simulation, evaluation, and observability as enterprise agent capabilities. (Google Cloud)

Oracle has also described the governance shift from model safety to runtime governance as agents become stateful, tool-using systems that can mutate records and operate across multi-step workflows. (Oracle Blogs)

This is the key point:

When AI only produces content, governance can focus on accuracy, safety, and review.

When AI performs work, governance must become runtime governance.

The enterprise must know not only what the model said, but what it saw, what it assumed, what it represented, what it decided, what it invoked, what changed, and whether that action was legitimate.

That is a much deeper architectural challenge.

-

The Hidden Weakness of Static Enterprise Architecture

Static enterprise architecture has three limitations in the AI era.

First, it is descriptive, not operational.

It shows what exists, but it does not actively govern what happens.

Second, it is periodic, not continuous.

Architecture is often reviewed quarterly, annually, or during major transformation programs. AI systems operate continuously.

Third, it is system-centric, not representation-centric.

Traditional architecture focuses on applications, databases, interfaces, and capabilities. AI needs to operate on live representations of entities, states, relationships, permissions, obligations, and consequences.

For example, a static architecture diagram may show that CRM connects to billing, billing connects to payments, and payments connect to customer support.

But if an AI agent is handling a customer complaint, it needs much more than that.

It needs to know:

Is this the correct customer?

Is the payment status current?

Is the customer under a special contract?

Is there an open dispute?

Is this a regulated interaction?

Has the customer already received a refund?

What actions are allowed?

Can the AI waive a fee?

Should it escalate to a human?

Which evidence must be stored?

Which explanation should be given?

This cannot be solved by a system map alone.

It requires runtime representation.

-

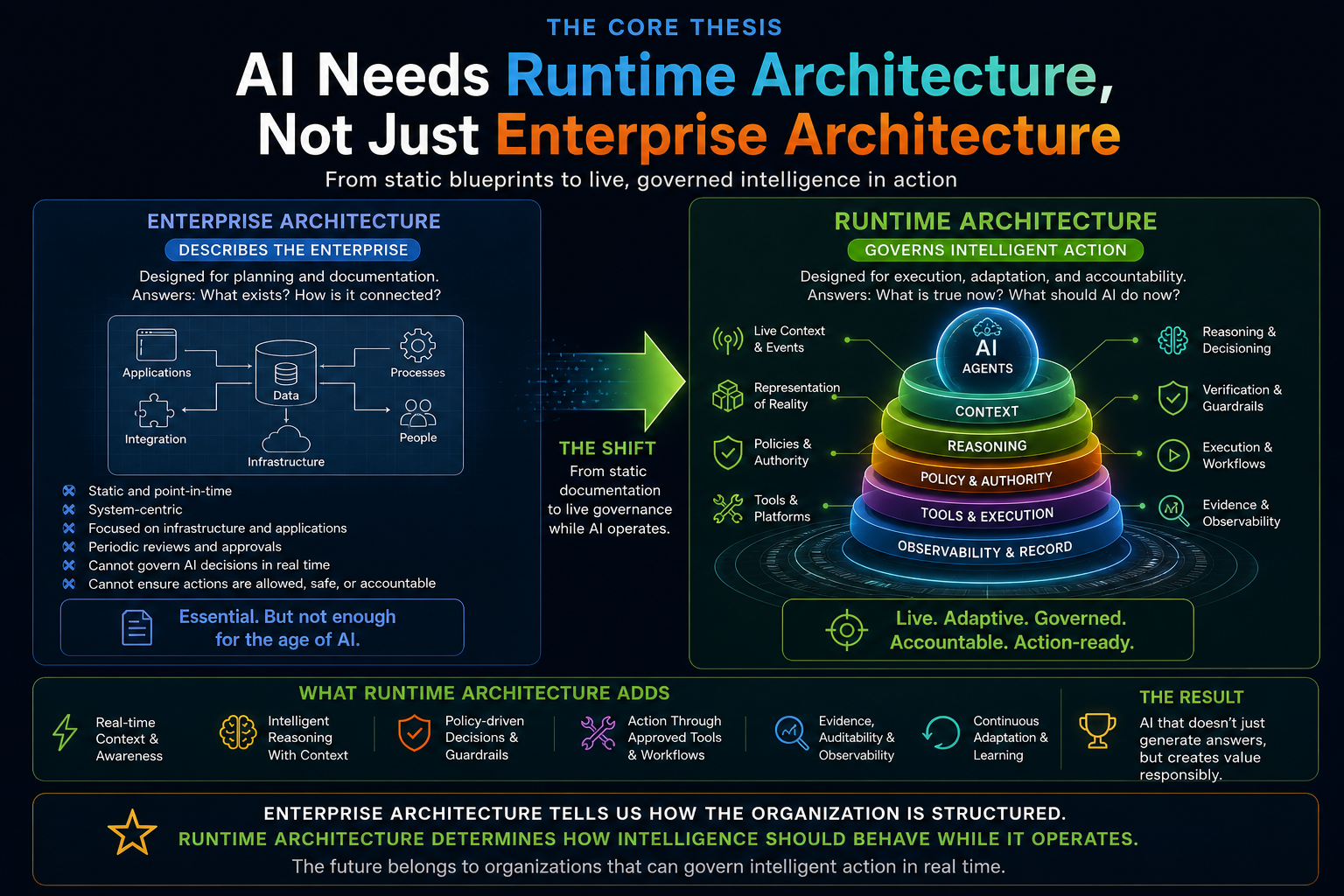

The Core Thesis: AI Needs Runtime Architecture, Not Just Enterprise Architecture

Enterprise architecture tells us how the organization is structured.

Runtime architecture tells AI how the organization should behave while intelligence is operating.

This distinction is critical.

In the pre-AI enterprise, architecture was mostly a planning discipline.

In the AI-native enterprise, architecture becomes an operating discipline.

It must move:

From documentation to enforcement.

From diagrams to live state.

From governance committees to machine-readable policies.

From system inventory to context orchestration.

From application integration to agentic execution.

From data lineage to representation lineage.

From access control to delegated authority.

From monitoring infrastructure to observing decisions and actions.

A real AI runtime environment is therefore not one product, one platform, or one tool.

It is an institutional capability.

It is the live environment where AI is connected to enterprise reality and enterprise legitimacy.

-

The Representation Economy View

This is where the Representation Economy becomes important.

The next phase of AI will not be won simply by organizations that have the most powerful models. It will be won by organizations that can represent reality better than others and act on that representation responsibly.

AI does not directly act on the real world.

It acts on representations of the real world.

A customer profile is a representation.

A risk score is a representation.

A supplier status is a representation.

A machine health score is a representation.

A fraud signal is a representation.

A contract obligation is a representation.

A digital twin is a representation.

A knowledge graph is a representation.

An enterprise memory layer is a representation.

The quality of AI action depends on the quality of these representations.

If the representation is stale, AI may act on outdated reality.

If the representation is incomplete, AI may miss risk.

If the representation is biased toward one system, AI may ignore contradictory evidence.

If the representation lacks provenance, AI cannot justify its decision.

If the representation is not linked to authority, AI may act without legitimacy.

That is why the AI runtime environment must become a representation runtime.

It must continuously answer one question:

What is true enough, current enough, complete enough, and legitimate enough for AI to act?

This is also the deeper idea behind my broader work on the Representation Economy, including related articles such as:

Entity Resolution as Competitive Advantage

Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale

-

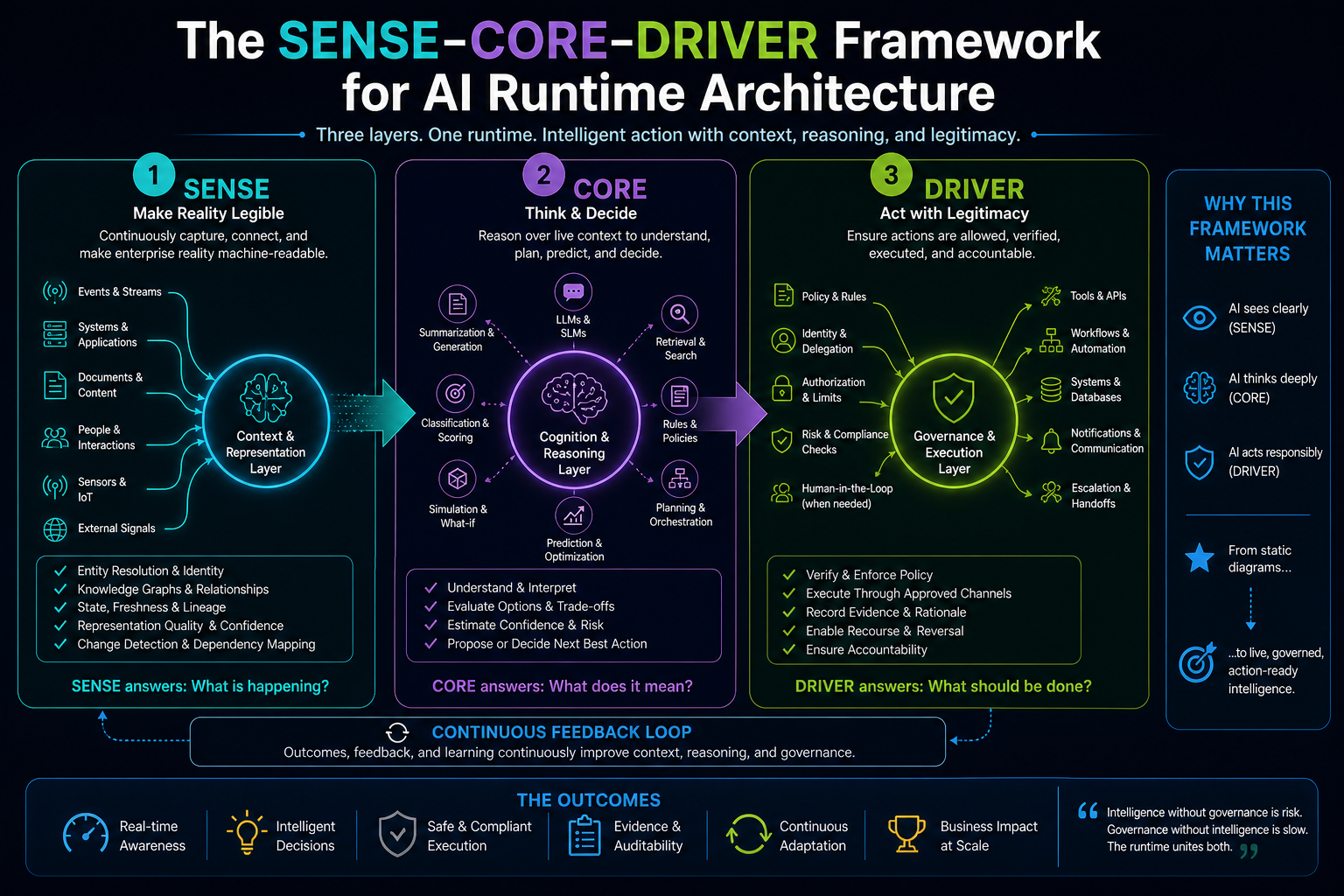

The SENSE–CORE–DRIVER Framework for AI Runtime Architecture

A useful way to understand the AI runtime environment is through the SENSE–CORE–DRIVER framework.

SENSE is the legibility layer.

It makes reality machine-readable.

CORE is the cognition layer.

It reasons, predicts, generates, decides, and optimizes.

DRIVER is the legitimacy layer.

It governs action, authority, verification, execution, and recourse.

Most enterprises today are overinvesting in CORE.

They are buying models, building copilots, testing agents, and experimenting with LLM applications.

But they are underinvesting in SENSE and DRIVER.

That is why many AI projects look impressive in demos but struggle in production.

The model can reason.

But it may not have a reliable representation of reality.

The agent can act.

But it may not have a governed legitimacy layer.

A real AI runtime environment connects all three.

SENSE tells AI what is happening.

CORE determines what it means.

DRIVER governs what should be done.

Without SENSE, AI is intelligent but blind.

Without CORE, AI is connected but not intelligent.

Without DRIVER, AI is powerful but not legitimate.

-

Layer One: The SENSE Runtime — Making Reality Machine-Readable

The SENSE runtime continuously converts enterprise signals into usable representations.

This includes events, records, documents, conversations, logs, transactions, sensor readings, tickets, workflows, policies, and external signals.

But SENSE is not just data ingestion.

Data ingestion moves data.

SENSE constructs meaning.

It attaches signals to entities.

It updates state.

It detects change.

It resolves identity.

It checks freshness.

It connects dependencies.

It maintains representation quality.

Imagine an AI system helping with supply chain risk.

Raw data may say:

Shipment delayed.

Supplier response pending.

Inventory below threshold.

Customer order due tomorrow.

Penalty clause active.

A dashboard can display these signals.

But a SENSE runtime connects them into a live representation:

This supplier delay affects this component.

This component is used in this product.

This product is committed to this customer.

This customer contract has this SLA.

This SLA may trigger a penalty.

This penalty requires escalation.

Now AI can reason over a meaningful representation, not disconnected data points.

That is the difference between data visibility and representation readiness.
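The supply chain example above can be sketched in a few lines of code. This is a minimal illustration, not a reference design: the `Representation` class, the `link` and `impact_chain` methods, and identifiers such as `supplier:S1` and `customer:ACME` are all hypothetical names invented for this sketch. It shows the core idea only: disconnected signals become useful once they are attached to entities and connected by typed relationships.

```python
from dataclasses import dataclass, field


@dataclass
class Representation:
    """A tiny in-memory graph: entity -> list of (relation, target) edges."""
    edges: dict[str, list[tuple[str, str]]] = field(default_factory=dict)

    def link(self, source: str, relation: str, target: str) -> None:
        """Record that `source` is connected to `target` by `relation`."""
        self.edges.setdefault(source, []).append((relation, target))

    def impact_chain(self, start: str) -> list[str]:
        """Follow outgoing edges from `start` and describe every hop found."""
        chain: list[str] = []
        stack = [start]
        seen = {start}
        while stack:
            node = stack.pop()
            for relation, target in self.edges.get(node, []):
                chain.append(f"{node} {relation} {target}")
                if target not in seen:  # guard against cycles
                    seen.add(target)
                    stack.append(target)
        return chain


# Hypothetical entities mirroring the example in the text.
rep = Representation()
rep.link("supplier:S1 delay", "affects", "component:C7")
rep.link("component:C7", "is used in", "product:P3")
rep.link("product:P3", "is committed to", "customer:ACME")
rep.link("customer:ACME", "has", "SLA:next-day delivery")
rep.link("SLA:next-day delivery", "may trigger", "penalty clause")
rep.link("penalty clause", "requires", "escalation")

for hop in rep.impact_chain("supplier:S1 delay"):
    print(hop)
```

A production SENSE layer would add entity resolution, freshness checks, and provenance on every edge. The sketch only shows why a connected representation answers questions that a dashboard of separate signals cannot.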

-

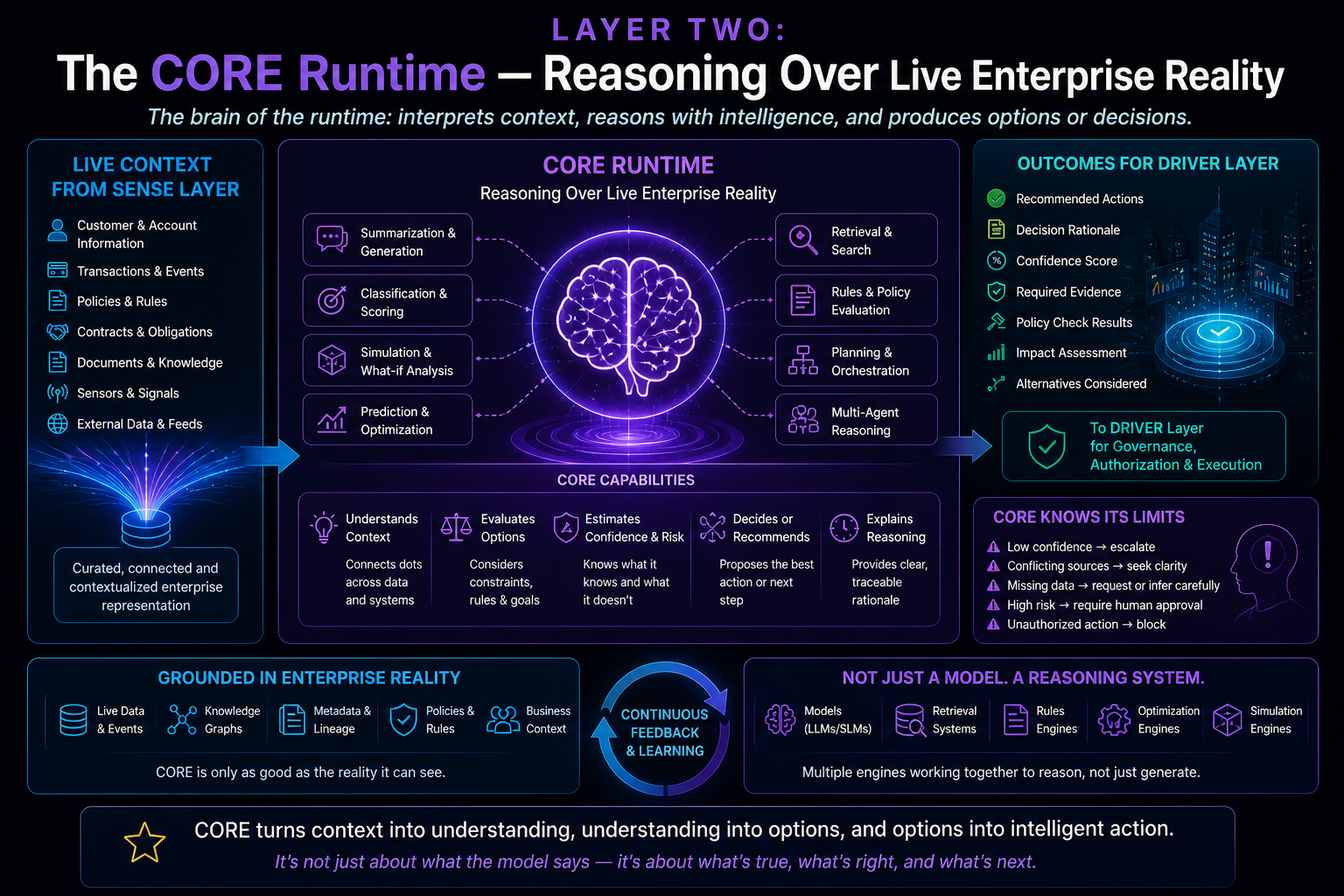

Layer Two: The CORE Runtime — Reasoning Over Live Enterprise Reality

CORE is where AI reasoning happens.

This includes large language models, small language models, classifiers, planners, optimizers, retrieval systems, rules engines, simulation tools, recommendation engines, and multi-agent reasoning systems.

But CORE should not be treated as a magic brain floating above the enterprise.

CORE must be grounded in live representation.

It should reason over current state, not generic knowledge.

It should understand enterprise constraints, not just language patterns.

It should know when confidence is low.

It should know when sources conflict.

It should know when action is not allowed.

It should know when escalation is required.

This is where many AI systems fail.

They connect a model to documents and call it enterprise intelligence.

But document retrieval is not the same as runtime understanding.

A policy document may say refunds above a certain threshold require approval.

But a runtime AI system must know:

What is the refund amount?

Who is the customer?

What contract applies?

Has this customer already received a refund?

Is this a fraud case?

Is this a regulated product?

Who is authorized to approve?

What audit trail is needed?

The model can read policy.

But the runtime must apply policy to live state.

That is where enterprise AI becomes architecture, not prompting.
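The refund example can be made concrete with a small sketch. Everything here is an assumption for illustration: the `RefundCase` fields, the `evaluate_refund` function, and the threshold of 100.00 are hypothetical and do not come from any real policy. The point is the shape of the logic: the policy text becomes a rule, and the rule is evaluated against the live state of one specific case.

```python
from dataclasses import dataclass

# Hypothetical threshold; a real value would come from the governing policy.
APPROVAL_THRESHOLD = 100.00


@dataclass(frozen=True)
class RefundCase:
    """Live state for one refund request (illustrative fields only)."""
    amount: float
    prior_refund_in_window: bool
    fraud_flag: bool
    regulated_product: bool


def evaluate_refund(case: RefundCase) -> tuple[str, list[str]]:
    """Apply the policy to live state and return (decision, reasons)."""
    reasons: list[str] = []
    if case.fraud_flag:
        reasons.append("open fraud flag")
    if case.regulated_product:
        reasons.append("regulated product")
    if case.prior_refund_in_window:
        reasons.append("customer already refunded recently")
    if case.amount > APPROVAL_THRESHOLD:
        reasons.append(
            f"amount {case.amount:.2f} exceeds threshold {APPROVAL_THRESHOLD:.2f}"
        )
    # Any triggered condition routes the case to a human approver.
    decision = "needs_human_approval" if reasons else "auto_approve"
    return decision, reasons


small = RefundCase(amount=40.0, prior_refund_in_window=False,
                   fraud_flag=False, regulated_product=False)
large = RefundCase(amount=250.0, prior_refund_in_window=True,
                   fraud_flag=False, regulated_product=False)

print(evaluate_refund(small))
print(evaluate_refund(large))
```

The returned reasons double as the start of an audit trail: they record which parts of the live state caused the escalation.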

-

Layer Three: The DRIVER Runtime — Governing Legitimate Action

DRIVER is the most underdeveloped layer in most AI architectures.

It answers the question:

Even if AI can act, should it act?

DRIVER includes delegation, representation, identity, verification, execution, and recourse.

Delegation asks: who authorized this AI system to act?

Representation asks: what model of reality is the AI using?

Identity asks: which person, system, process, or entity is affected?

Verification asks: what checks are required before action?

Execution asks: which tool, API, workflow, or system can be invoked?

Recourse asks: what happens if the action is wrong?

This layer becomes crucial when AI moves from recommendation to execution.

Consider an AI agent in IT operations.

It detects an incident.

It identifies a possible root cause.

It recommends restarting a service.

It has access to the automation tool.

Should it restart the service?

The answer depends on runtime legitimacy.

Is this a production system?

Is there an active change freeze?

What is the business impact?

Has the same restart failed earlier?

Is human approval required?

What rollback exists?

What evidence will be logged?

Which identity will execute the command?

Who is accountable?

Without DRIVER, agentic AI becomes operational risk.

With DRIVER, AI can become governed enterprise execution.
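A minimal sketch of the restart decision might look like the following. The `RestartRequest` fields, the `Verdict` values, and the specific rules (deny during a change freeze, deny without a rollback path, require approval for production) are assumptions chosen for illustration. A real enterprise would derive them from its own change management policy.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    EXECUTE = "execute"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


@dataclass(frozen=True)
class RestartRequest:
    """Runtime facts the DRIVER layer needs before acting (illustrative)."""
    service: str
    is_production: bool
    change_freeze_active: bool
    previous_restart_failed: bool
    rollback_available: bool
    executing_identity: str


def decide_restart(req: RestartRequest) -> tuple[Verdict, str]:
    """Return a verdict and the reason, so the decision can be logged."""
    if req.change_freeze_active:
        return Verdict.DENY, "change freeze is active"
    if not req.rollback_available:
        return Verdict.DENY, "no rollback path exists"
    if req.is_production:
        return Verdict.REQUIRE_APPROVAL, "production system"
    if req.previous_restart_failed:
        return Verdict.REQUIRE_APPROVAL, "same restart failed earlier"
    return Verdict.EXECUTE, "non-production, rollback available"


staging = RestartRequest("search-indexer", False, False, False, True, "svc-aiops")
prod = RestartRequest("payments-api", True, False, False, True, "svc-aiops")

print(decide_restart(staging))
print(decide_restart(prod))
```

Note that the function never performs the restart. It only decides whether the action is legitimate, which keeps the legitimacy check separate from the automation tool.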

-

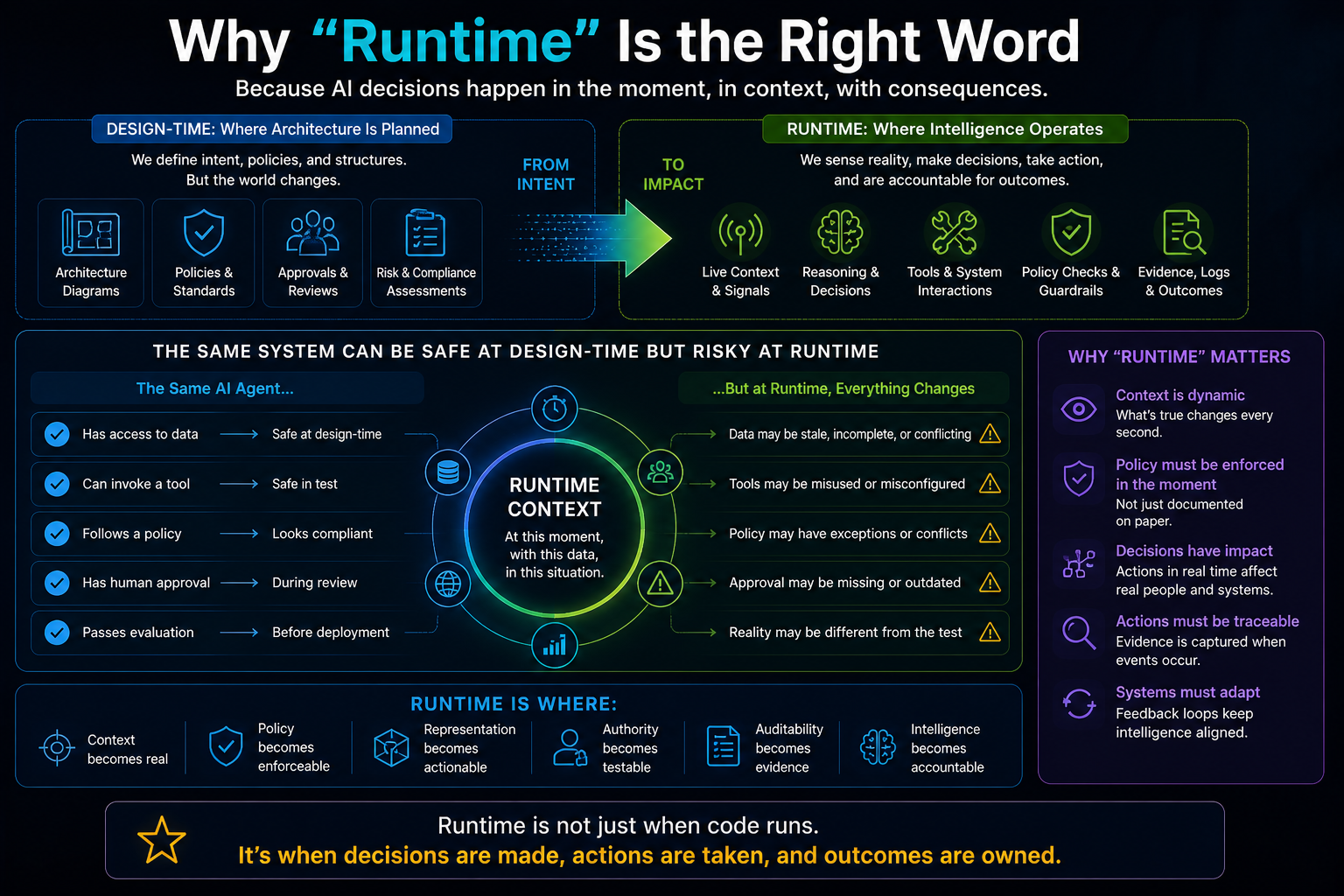

Why “Runtime” Is the Right Word

The word runtime matters because AI governance cannot remain purely design-time.

Design-time controls are necessary.

Enterprises need approved models, reviewed prompts, validated tools, access policies, risk assessments, security reviews, architecture boards, and compliance approvals.

But AI behavior depends heavily on runtime context.

The same agent may be safe in one situation and risky in another.

The same customer action may be allowed for one product and prohibited for another.

The same workflow may be routine during normal operations and dangerous during an outage.

The same data may be usable for summarization but not for automated action.

The same model output may be harmless as a draft but risky as a direct system update.

Therefore, AI governance must move closer to execution.

Runtime is where context becomes real.

Runtime is where policy becomes enforceable.

Runtime is where representation becomes actionable.

Runtime is where authority becomes testable.

Runtime is where auditability becomes evidence.

This is why AI needs a real runtime environment.

-

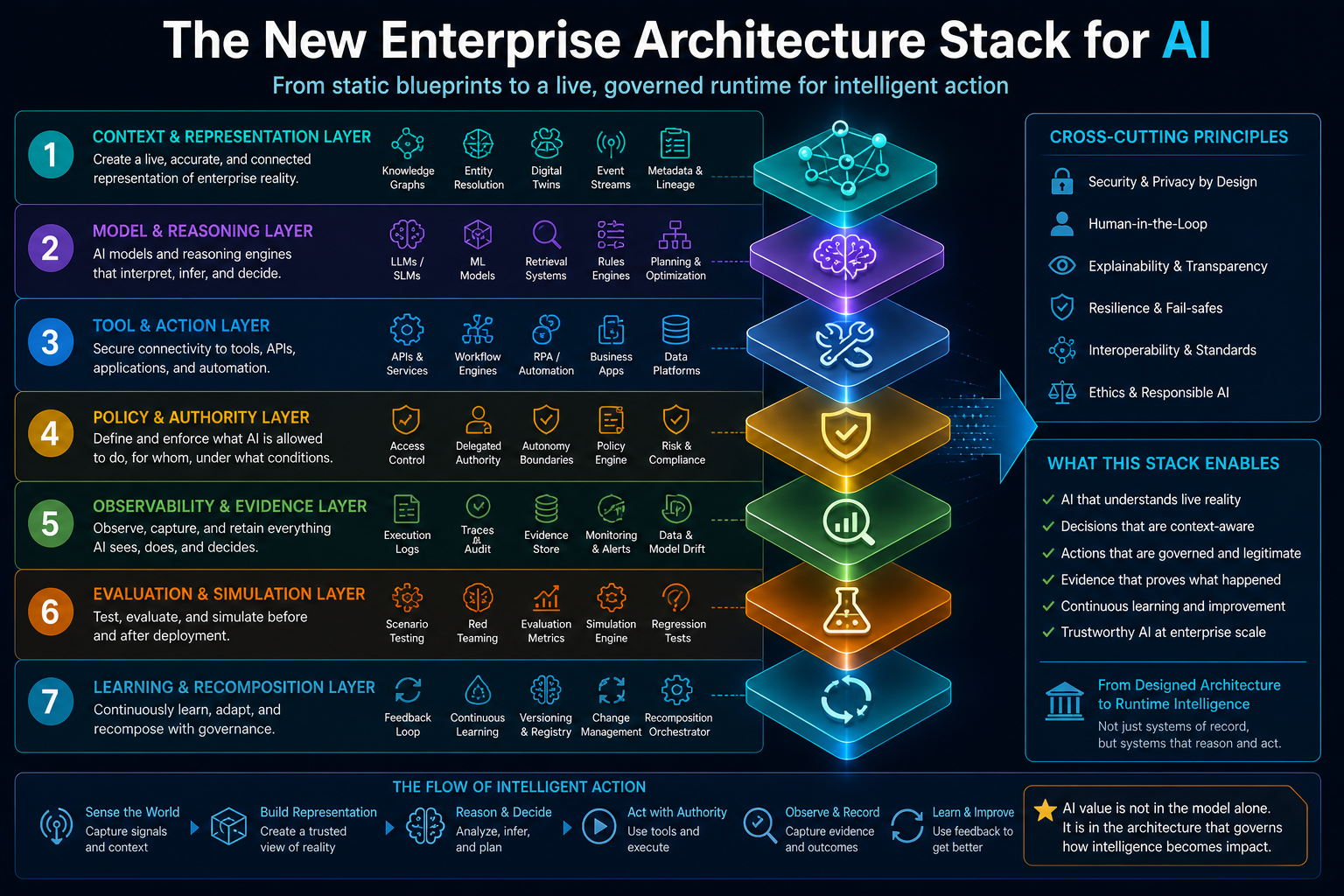

The New Enterprise Architecture Stack for AI

A modern AI runtime environment requires several architectural capabilities working together.

12.1 Context and Representation Layer

This layer creates structured, current, and trustworthy representations of enterprise reality.

It includes entity resolution, knowledge graphs, semantic layers, digital twins, event streams, metadata, lineage, and state machines.

Its job is to prevent AI from reasoning over fragmented reality.

12.2 Model and Reasoning Layer

This layer includes LLMs, SLMs, classifiers, planners, optimizers, simulation engines, rules engines, and retrieval systems.

Its job is to interpret representation, generate options, reason through trade-offs, and propose or execute decisions.

12.3 Tool and Action Layer

This layer connects AI to enterprise systems through APIs, workflow engines, integration platforms, service desks, databases, business applications, and automation tools.

Its job is to ensure that AI action happens through governed interfaces, not uncontrolled shortcuts.

12.4 Policy and Authority Layer

This layer defines what AI is allowed to do under which conditions.

It includes access control, delegated authority, risk classification, approval rules, autonomy boundaries, and exception handling.

Its job is to make action legitimate.

12.5 Observability and Evidence Layer

This layer records what happened.

It tracks inputs, context, prompts, retrieved evidence, model outputs, tool calls, approvals, policy checks, state changes, and outcomes.

Its job is to make AI behavior explainable, auditable, and improvable.

Runtime observability is becoming especially important as AI agents interact with external tools and protocols. Recent agent platform announcements and governance discussions increasingly emphasize full execution traces, real-time reasoning visibility, simulation, evaluation, and runtime controls. (Google Cloud)

12.6 Evaluation and Simulation Layer

This layer tests AI behavior before and after deployment.

It includes scenario testing, red teaming, agent evaluation, simulation, regression testing, and outcome monitoring.

Its job is to detect unsafe behavior before it reaches production and identify drift after deployment.

NIST’s AI Risk Management Framework emphasizes structured risk management for AI systems, and its Generative AI Profile expands this thinking for generative AI risks and lifecycle governance. (NIST)

12.7 Learning and Recomposition Layer

This layer allows the architecture to evolve.

Models may change.

Policies may change.

Tools may change.

Workflows may change.

Business rules may change.

The runtime must support continuous recomposition without losing control, traceability, or institutional memory.

-

Why Agents Make Runtime Architecture Urgent

AI agents increase the urgency because they combine reasoning with action.

A chatbot answers.

An agent does.

An agent may retrieve data, interpret a request, create a plan, call APIs, update records, send messages, coordinate with other agents, and learn from feedback.

This creates new risks:

Tool misuse.

Excessive autonomy.

Weak identity controls.

Hidden agent chains.

Prompt injection.

Context leakage.

Unclear accountability.

Untraceable decisions.

Policy bypass.

Non-human identity sprawl.

The Cloud Security Alliance’s Agentic AI profile for the NIST AI RMF describes escalating governance obligations for agentic deployments, including action scope documentation, approval authority delegation, escalation triggers, continuous behavioral monitoring, response playbooks, and fail-safe conditions. (Lab Space)

This is not a minor security issue.

It is an architecture issue.

Agents need runtime boundaries.

They need identity.

They need memory controls.

They need tool permissions.

They need context filters.

They need escalation paths.

They need evidence capture.

They need policy checks.

They need rollback and recourse.

Without this runtime environment, enterprises will either over-restrict agents and get little value, or over-deploy agents and create operational risk.

-

Simple Example: Customer Service AI

A customer asks an AI assistant:

“Why was I charged twice?”

A static enterprise architecture view may show that the customer service system connects to billing, payments, CRM, and dispute management.

But the AI runtime must do much more.

SENSE must identify the customer, retrieve recent transactions, check payment status, detect duplicates, connect the issue to the right account, and determine whether the charge is pending, settled, reversed, or disputed.

CORE must interpret the issue, compare transaction patterns, reason through possible explanations, and decide whether this looks like a duplicate charge, a delayed settlement, or a misunderstanding.

DRIVER must determine what action is allowed.

Can the AI explain?

Can it open a dispute?

Can it issue a refund?

Does it need human approval?

What evidence must be logged?

What message should be sent?

This is not just customer service automation.

It is live representation plus governed action.

That is runtime architecture.
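One small piece of the SENSE step, detecting a likely duplicate charge, can be sketched as follows. The `Charge` fields, the status values, and the ten-minute window are hypothetical choices for this illustration, not a description of how any real payment system works.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Charge:
    charge_id: str
    account_id: str
    merchant: str
    amount_cents: int
    created_at: datetime
    status: str  # assumed values: "pending", "settled", "reversed", "disputed"


def find_duplicate_pairs(
    charges: list[Charge], window: timedelta = timedelta(minutes=10)
) -> list[tuple[str, str]]:
    """Return ID pairs of live charges that match and fall within `window`."""
    # Reversed or disputed charges are already being handled elsewhere.
    live = sorted(
        (c for c in charges if c.status in {"pending", "settled"}),
        key=lambda c: c.created_at,
    )
    pairs: list[tuple[str, str]] = []
    for i, first in enumerate(live):
        for second in live[i + 1:]:
            if second.created_at - first.created_at > window:
                break  # list is time-sorted, so later charges are further away
            same = (first.account_id, first.merchant, first.amount_cents) == (
                second.account_id, second.merchant, second.amount_cents)
            if same:
                pairs.append((first.charge_id, second.charge_id))
    return pairs


t0 = datetime(2025, 1, 6, 8, 30)
charges = [
    Charge("ch_1", "acct_9", "GroceryCo", 4599, t0, "settled"),
    Charge("ch_2", "acct_9", "GroceryCo", 4599, t0 + timedelta(minutes=2), "pending"),
    Charge("ch_3", "acct_9", "GroceryCo", 4599, t0 + timedelta(minutes=3), "reversed"),
]
print(find_duplicate_pairs(charges))
```

The detection result is only an input. Whether the AI may explain, open a dispute, or issue a refund remains a DRIVER decision.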

-

Simple Example: HR Policy AI

An employee asks:

“Am I eligible for this benefit?”

A basic AI system may search HR policy documents and summarize the relevant section.

But a real runtime environment must connect policy to live state.

SENSE must identify the employee’s role, tenure, employment type, benefit history, eligibility records, and policy version.

CORE must interpret the policy in context.

DRIVER must decide whether the AI can only answer, recommend an application, submit a request, or escalate to HR.

The risk is not just giving a wrong answer.

The risk is acting on an incomplete representation of the employee’s state.

That is why enterprise AI cannot be built only on document retrieval.

It needs a representation runtime.

-

Simple Example: Cybersecurity AI

A cybersecurity AI agent detects unusual activity.

It sees a login anomaly, a privileged access request, a suspicious file transfer, and unusual API behavior.

A dashboard may show alerts.

But an AI runtime must connect these signals.

SENSE must determine whether these events belong to the same identity, machine, session, service account, or workload.

CORE must reason whether this is routine automation, misconfiguration, credential compromise, or an active attack.

DRIVER must determine which actions are allowed: notify, block, isolate, revoke, require step-up authentication, or escalate.

In cybersecurity, runtime architecture is not optional.

The speed of AI action must be matched by the discipline of AI governance.

-

Static Architecture Creates the Illusion of Control

One danger of static enterprise architecture is that it can create the illusion of control.

The diagram looks clean.

The governance model looks complete.

The systems are mapped.

The data flows are documented.

The controls are listed.

But AI does not operate inside diagrams.

It operates inside live ambiguity.

Systems disagree.

Data is stale.

Policies conflict.

Users behave unexpectedly.

APIs fail.

Agents chain actions.

Models interpret incomplete context.

Workflows cross organizational boundaries.

A beautiful architecture diagram cannot prevent a bad runtime decision.

That is why the new architecture question is not:

Do we have a documented enterprise architecture?

The new question is:

Can our architecture govern intelligent action while it is happening?

-

From Architecture Review Boards to Runtime Guardrails

Enterprise architecture governance has traditionally relied on review boards, standards, approval processes, and design documents.

These remain important.

But they are not enough for AI.

AI requires guardrails that operate at runtime.

For example:

A model should not access certain data unless the use case is authorized.

An agent should not call a tool unless the context matches approved conditions.

A workflow should not execute if representation quality is below threshold.

A recommendation should require human approval if business impact is high.

A decision should not be finalized unless required evidence is present.

A tool invocation should be blocked if it violates policy.

An action should be reversible where possible.

A user should be able to challenge certain AI-driven decisions.

This is architecture as enforcement.

Not architecture as documentation.
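Here is one way such guardrails could be expressed as code. This is a sketch under stated assumptions: the context keys (`use_case`, `representation_quality`, `business_impact`, and so on), the default threshold of 0.8, and the `run_guarded` helper are all hypothetical. The pattern it illustrates is simple: every guardrail is a small check that runs before the tool call, and any failed check blocks the call.

```python
from typing import Callable, Optional

Context = dict
# A guardrail returns a reason string to block the action, or None to pass.
Guardrail = Callable[[Context], Optional[str]]


class GuardrailViolation(Exception):
    """Raised when at least one guardrail blocks the action."""


def use_case_authorized(ctx: Context) -> Optional[str]:
    if ctx.get("use_case") in ctx.get("authorized_use_cases", ()):
        return None
    return "use case is not authorized"


def representation_quality_ok(ctx: Context) -> Optional[str]:
    # Missing quality information counts as zero, so the check fails closed.
    if ctx.get("representation_quality", 0.0) >= ctx.get("quality_threshold", 0.8):
        return None
    return "representation quality below threshold"


def high_impact_needs_approval(ctx: Context) -> Optional[str]:
    if ctx.get("business_impact") == "high" and not ctx.get("human_approved", False):
        return "high business impact requires human approval"
    return None


GUARDRAILS: list[Guardrail] = [
    use_case_authorized,
    representation_quality_ok,
    high_impact_needs_approval,
]


def run_guarded(tool: Callable[[Context], object], ctx: Context,
                guardrails: list[Guardrail]) -> object:
    """Run every guardrail first; call the tool only if none of them block."""
    violations = [reason for g in guardrails if (reason := g(ctx)) is not None]
    if violations:
        raise GuardrailViolation("; ".join(violations))
    return tool(ctx)


ctx = {
    "use_case": "billing_support",
    "authorized_use_cases": {"billing_support"},
    "representation_quality": 0.93,
    "business_impact": "low",
}
print(run_guarded(lambda c: "fee waived", ctx, GUARDRAILS))
```

Collecting all violations, rather than stopping at the first, gives the audit trail a complete picture of why an action was blocked.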

-

The Role of MCP, Agent Gateways, and Tool Protocols

One reason runtime architecture is becoming urgent is the rise of tool-using agents and protocols such as Model Context Protocol.

MCP is increasingly discussed as a standardized way for AI applications and agents to connect with external tools, data sources, and environments. Research on MCP design patterns notes that such protocols can help agents discover and invoke tools, but also make deployment patterns, security, and governance more important. (Forbes)

This matters because tool access changes the risk profile of AI.

A model that writes text is one thing.

A model that can invoke tools is another.

A model that can invoke tools across enterprise systems is a runtime governance challenge.

Enterprises will need agent gateways, tool registries, policy enforcement points, identity controls, sandboxing, audit trails, and observability around these interactions.

The future enterprise architecture will not only map APIs.

It will govern AI’s use of APIs in context.
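The idea of an agent gateway with a tool registry and an audit trail can be sketched in plain Python. This does not use MCP or any real gateway product. The `ToolGateway` class, its methods, and the agent and tool names are hypothetical, chosen only to show the control point: every tool call passes through one place that checks the grant and records the attempt.

```python
from datetime import datetime, timezone
from typing import Any, Callable


class ToolGateway:
    """Single entry point for agent tool calls (illustrative sketch)."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., Any]] = {}
        self._grants: dict[str, set[str]] = {}
        self.audit_log: list[dict] = []

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        """Add a tool to the registry."""
        self._tools[name] = fn

    def grant(self, agent_id: str, tool_name: str) -> None:
        """Allow one agent identity to invoke one registered tool."""
        self._grants.setdefault(agent_id, set()).add(tool_name)

    def invoke(self, agent_id: str, tool_name: str, **kwargs: Any) -> Any:
        """Check the grant, record the attempt, then call the tool."""
        allowed = (tool_name in self._tools
                   and tool_name in self._grants.get(agent_id, set()))
        # Denied attempts are logged too, not only successful calls.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "tool": tool_name,
            "arguments": kwargs,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{agent_id} may not invoke {tool_name}")
        return self._tools[tool_name](**kwargs)


gateway = ToolGateway()
gateway.register("lookup_invoice", lambda invoice_id: {"id": invoice_id, "status": "paid"})
gateway.register("issue_refund", lambda invoice_id: f"refunded {invoice_id}")
gateway.grant("support-agent", "lookup_invoice")

print(gateway.invoke("support-agent", "lookup_invoice", invoice_id="INV-1"))
try:
    gateway.invoke("support-agent", "issue_refund", invoice_id="INV-1")
except PermissionError as exc:
    print("blocked:", exc)
```

A real gateway would add context-aware policy, sandboxing, and identity verification. The sketch shows only the minimum: a registry, per-agent grants, and a log that captures every attempt.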

-

Why AI Runtime Is Not Just MLOps

Some may ask: isn’t this what MLOps already does?

Not exactly.

MLOps is important, but it is not sufficient.

MLOps focuses on model development, deployment, monitoring, versioning, evaluation, and lifecycle management.

AI runtime architecture is broader.

It includes models, but also representation, context, tools, authority, policies, workflows, identity, evidence, and recourse.

MLOps asks:

Is the model performing correctly?

Runtime architecture asks:

Is the AI system acting correctly in this enterprise context?

That is a much bigger question.

A model can be accurate and the system can still be unsafe.

A model can generate a reasonable answer using the wrong customer record.

A model can summarize a policy correctly but apply it to the wrong case.

A model can recommend a valid action that the AI is not authorized to execute.

A model can follow instructions but violate enterprise policy.

Therefore, the runtime environment must govern the full AI action chain, not just the model.

-

Why AI Runtime Is Not Just Data Architecture

Some may also say: isn’t this just better data architecture?

Again, not exactly.

Data architecture is foundational. Without clean, connected, governed data, enterprise AI will struggle.

But AI runtime architecture goes beyond data.

It is not only about where data lives.

It is about how data becomes representation, how representation becomes reasoning, how reasoning becomes action, and how action becomes accountable.

Data architecture manages information assets.

AI runtime architecture manages intelligent behavior.

That is the difference.

-

Why AI Runtime Is Not Just Workflow Automation

Workflow automation has existed for decades.

Business process management, RPA, integration platforms, and workflow engines already coordinate enterprise tasks.

But AI changes workflows in three ways.

First, AI can interpret unstructured context.

Second, AI can generate decisions, not just follow predefined rules.

Third, AI can adapt steps based on changing situations.

This makes runtime governance more complex.

A traditional workflow follows a fixed path.

An AI agent may choose a path.

A traditional automation executes predefined rules.

An AI system may infer which rule is relevant.

A traditional workflow fails when data is missing.

An AI system may fill gaps with assumptions.

That is why AI workflows need stronger runtime representation, verification, and recourse.

-

The Architecture Shift: From Systems of Record to Systems of Representation

For decades, enterprise architecture has revolved around systems of record.

ERP records financial and operational transactions.

CRM records customers and relationships.

HRMS records employees.

Core banking systems record accounts and transactions.

ITSM systems record incidents and changes.

These systems remain essential.

But AI needs a new layer above them: systems of representation.

A system of representation does not merely store records.

It constructs a live, contextual model of an entity or situation.

A customer is not just a CRM record.

A customer representation may include relationship history, contract terms, open tickets, recent payments, risk indicators, sentiment, product usage, support obligations, and current state.

A supplier is not just a vendor master entry.

A supplier representation may include delivery reliability, quality issues, component dependencies, contract clauses, and current risk.

An employee is not just an HR record.

An employee representation may include role, skills, permissions, project context, learning history, access rights, and active responsibilities.

AI needs these representations to act intelligently.

This is the foundation of the Representation Economy.

-

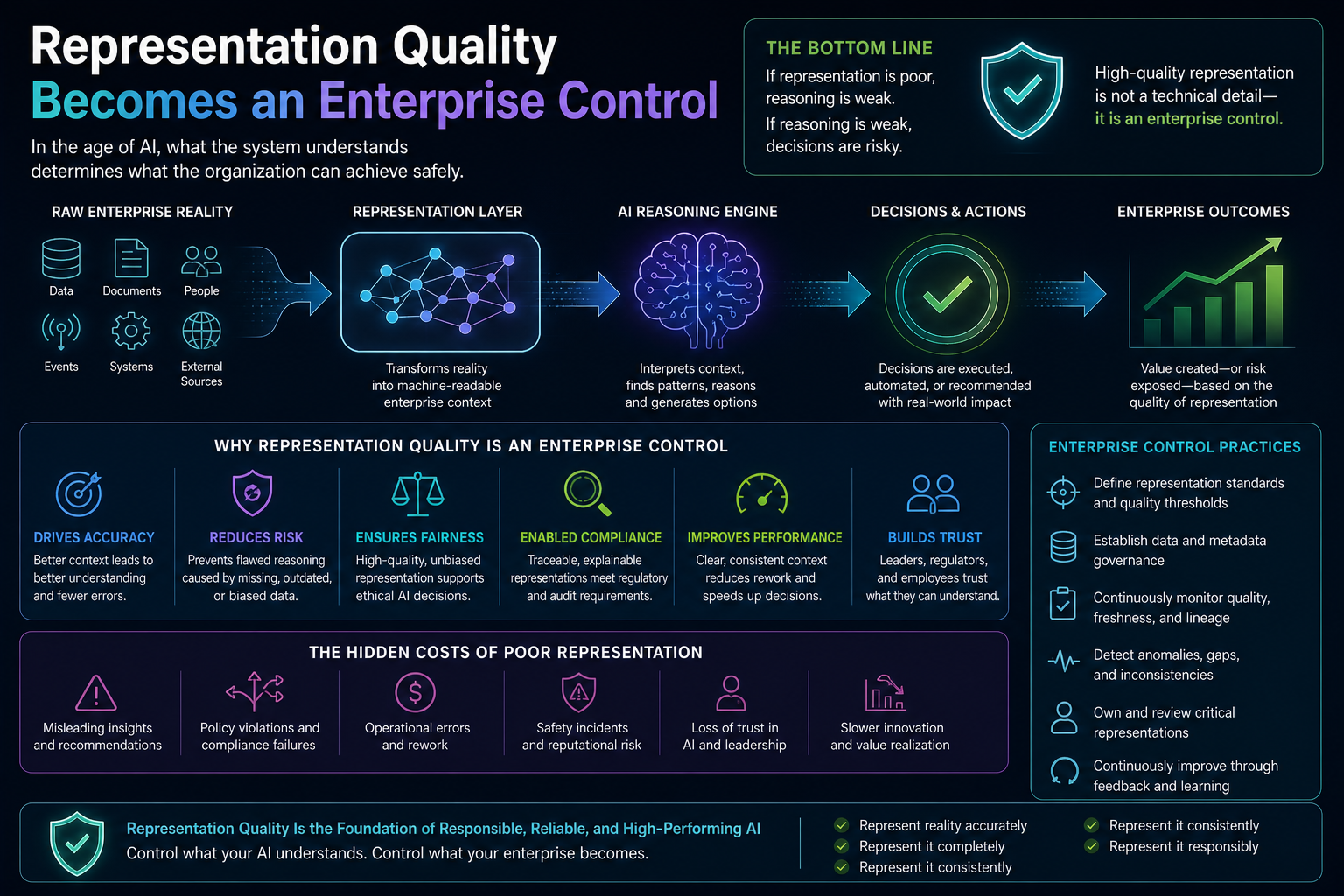

Representation Quality Becomes an Enterprise Control

In the AI runtime environment, representation quality becomes as important as data quality.

Data quality asks:

Is the data accurate, complete, consistent, and valid?

Representation quality asks:

Is this model of reality good enough for the decision or action being considered?

That includes:

Entity confidence.

State completeness.

Freshness.

Provenance.

Consistency across sources.

Context coverage.

Risk sensitivity.

Action readiness.

For low-risk summarization, the representation threshold may be lower.

For high-impact decisions, the threshold must be higher.

For example, an AI can summarize a customer complaint using limited context.

But it should not issue a refund, deny a claim, suspend an account, approve access, or trigger a legal notice unless representation quality is high and DRIVER controls are satisfied.

This is how AI autonomy should be bounded.

Not by fear.

By architecture.
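A risk-tiered representation threshold could be sketched like this. The dimensions follow the list above, but the field names, the two action names, and every numeric threshold are hypothetical values picked for illustration. The function reports which requirements are unmet, so an empty result means the representation is ready for that action.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RepresentationQuality:
    entity_confidence: float   # 0.0 to 1.0: is this the right entity?
    state_completeness: float  # 0.0 to 1.0: share of required fields present
    age_seconds: float         # time since the representation was refreshed
    has_provenance: bool
    sources_consistent: bool


@dataclass(frozen=True)
class Requirement:
    min_confidence: float
    min_completeness: float
    max_age_seconds: float
    needs_provenance: bool
    needs_consistency: bool


# Hypothetical thresholds: low-risk actions tolerate weaker representations.
REQUIREMENTS = {
    "summarize_complaint": Requirement(0.60, 0.50, 86_400, False, False),
    "issue_refund": Requirement(0.95, 0.90, 300, True, True),
}


def readiness_gaps(quality: RepresentationQuality, action: str) -> list[str]:
    """Return unmet requirements for `action`; an empty list means ready."""
    req = REQUIREMENTS.get(action)
    if req is None:
        # Fail closed: an action without a defined requirement is not ready.
        return [f"no requirement defined for action '{action}'"]
    gaps: list[str] = []
    if quality.entity_confidence < req.min_confidence:
        gaps.append("entity confidence too low")
    if quality.state_completeness < req.min_completeness:
        gaps.append("state incomplete")
    if quality.age_seconds > req.max_age_seconds:
        gaps.append("representation is stale")
    if req.needs_provenance and not quality.has_provenance:
        gaps.append("provenance missing")
    if req.needs_consistency and not quality.sources_consistent:
        gaps.append("sources disagree")
    return gaps


quality = RepresentationQuality(0.80, 0.70, 3_600, False, True)
print(readiness_gaps(quality, "summarize_complaint"))
print(readiness_gaps(quality, "issue_refund"))
```

The same representation passes for summarization and fails for a refund. That asymmetry is the whole point of bounding autonomy by representation quality.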

-

The New Runtime Question: What Is AI Allowed to Do at This Moment?

Traditional access control answers:

Who can access what?

AI runtime governance must answer a richer question:

What is this AI system allowed to do, for this entity, in this context, using this representation, under this authority, with this level of confidence, at this moment?

That is a very different control model.

A human user may have permission to view a record.

But an AI agent acting on behalf of that user may not be allowed to take every action the user can take.

A support agent may access customer data.

But an AI assistant may be allowed to summarize only certain fields, not expose every detail.

An operations automation may restart a service.

But an AI agent may need human approval if the service supports a critical business process.

A finance AI may recommend payment prioritization.

But it may not execute a payment without approval and evidence.

This is where identity, delegation, policy, and representation meet.

This is the DRIVER problem.
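The richer question above can be sketched as a single authorization function that looks at the agent, the person it acts for, the action, and the current confidence, rather than at user permissions alone. This is a minimal sketch under stated assumptions: the `ActionRequest` fields, the delegation table, the approval list, and the confidence floor are hypothetical names and values, and time-of-day or policy-version checks are left out for brevity.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    on_behalf_of: str
    action: str
    entity_id: str
    confidence: float              # confidence in [0, 1] (assumed scale)
    critical_process: bool = False
    has_human_approval: bool = False


# Hypothetical delegation table: what each agent may do for each user.
DELEGATED_ACTIONS = {
    ("finance-agent", "alice"): {"recommend_payment_priority", "execute_payment"},
    ("ops-agent", "bob"): {"restart_service"},
}
# Hypothetical actions that always need a human approval.
APPROVAL_REQUIRED = {"execute_payment"}
MIN_CONFIDENCE = 0.8


def authorize(req: ActionRequest) -> Decision:
    """Decide whether this agent may take this action right now."""
    allowed = DELEGATED_ACTIONS.get((req.agent_id, req.on_behalf_of), set())
    if req.action not in allowed:
        return Decision.DENY            # never delegated to this agent
    if req.confidence < MIN_CONFIDENCE:
        return Decision.DENY            # too uncertain to act
    needs_approval = req.action in APPROVAL_REQUIRED or req.critical_process
    if needs_approval and not req.has_human_approval:
        return Decision.REQUIRE_APPROVAL
    return Decision.ALLOW


recommend = ActionRequest("finance-agent", "alice", "recommend_payment_priority", "inv-1", 0.9)
pay = ActionRequest("finance-agent", "alice", "execute_payment", "inv-1", 0.9)
restart = ActionRequest("ops-agent", "bob", "restart_service", "svc-7", 0.95, critical_process=True)

for request in (recommend, pay, restart):
    print(request.action, authorize(request).value)
```

The recommendation is allowed, while the payment and the restart of a critical service both come back as requiring approval, matching the finance and operations examples in the text.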

-

Observability Must Move from Infrastructure to Intelligence

Traditional observability tracks infrastructure behavior.

CPU usage.

Memory.

Latency.

Errors.

Logs.

Traces.

Events.

AI observability must track intelligent behavior.

What context was retrieved?

Which representation was used?

Which prompt was sent?

Which model responded?

Which policy was checked?

Which tool was invoked?

Which workflow changed state?

Which human approved?

Which evidence was stored?

Which outcome occurred?

Which feedback was received?

This does not replace infrastructure observability.

It extends it.

In AI systems, the failure may not be a server crash.

The failure may be a wrong assumption.

A stale context.

A missing dependency.

A policy bypass.

A misleading tool result.

A hallucinated explanation.

An unauthorized action.

A bad handoff between agents.

These failures are invisible to traditional monitoring.

They require runtime observability for intelligence.
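As a rough illustration, the questions above can be captured in one trace record per AI decision, which can then be inspected for failures that infrastructure metrics would miss. The sketch below is an assumption-laden example, not a standard schema: the `DecisionTrace` fields, the `findings` checks, and the 24-hour freshness limit are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class DecisionTrace:
    """One record per AI decision; field names are illustrative."""
    trace_id: str
    context_sources: list[str]
    context_retrieved_at: datetime
    representation_id: str
    prompt: str
    model: str
    policies_checked: list[str] = field(default_factory=list)
    tools_invoked: list[str] = field(default_factory=list)
    approved_by: Optional[str] = None
    outcome: Optional[str] = None


def findings(trace: DecisionTrace, now: datetime, max_context_age: timedelta) -> list[str]:
    """Flag two intelligence-level failures that server metrics cannot show."""
    issues = []
    if now - trace.context_retrieved_at > max_context_age:
        issues.append("stale_context")
    if trace.tools_invoked and not trace.policies_checked:
        issues.append("policy_bypass")
    return issues


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
trace = DecisionTrace(
    trace_id="t-1",
    context_sources=["crm"],
    context_retrieved_at=now - timedelta(hours=30),
    representation_id="customer-42@v7",
    prompt="Summarize the open complaint and propose a resolution.",
    model="example-model",
    tools_invoked=["issue_refund"],
)

print(findings(trace, now, timedelta(hours=24)))
# Serialize for an audit store; datetimes are written as strings.
print(json.dumps(asdict(trace), default=str))
```

In this example every server involved could be healthy, yet the trace still shows context that is 30 hours old and a tool call with no recorded policy check.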

-

The Enterprise Runtime Must Support Human-AI Collaboration

A real AI runtime environment does not remove humans.

It coordinates humans, AI agents, digital workers, workflows, and enterprise systems.

Some tasks can be automated.

Some require human approval.

Some require human judgment.

Some require escalation.

Some require audit.

Some require explanation.

Some require recourse.

The runtime must know which is which.

For example, AI may automatically classify support tickets.

It may recommend responses for medium-risk cases.

It may require approval for compensation.

It may escalate sensitive cases.

It may block action where policy is unclear.

This is not “human in the loop” as a vague phrase.

It is governed collaboration.

The human role must be designed into the runtime.
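The support-ticket example above can be written as an explicit routing rule, so that the human role is a designed outcome rather than an afterthought. This is a sketch under assumptions: the task names, the risk labels, the rule ordering, and the choice to escalate anything unmatched are hypothetical.

```python
from enum import Enum


class Handling(Enum):
    AUTOMATE = "automate"
    RECOMMEND = "recommend"
    REQUIRE_APPROVAL = "require_approval"
    ESCALATE = "escalate"
    BLOCK = "block"


def route(task: str, risk: str, sensitive: bool = False, policy_clear: bool = True) -> Handling:
    """Pick how a task is handled; the most restrictive rules are checked first."""
    if not policy_clear:
        return Handling.BLOCK             # no action where policy is unclear
    if sensitive:
        return Handling.ESCALATE          # sensitive cases go to a human
    if task == "compensation":
        return Handling.REQUIRE_APPROVAL  # money moves only with approval
    if task == "classify_ticket" and risk == "low":
        return Handling.AUTOMATE
    if risk == "medium":
        return Handling.RECOMMEND         # AI drafts, a human decides
    return Handling.ESCALATE              # assumed default: hand off to a human


print(route("classify_ticket", "low").value)
print(route("draft_response", "medium").value)
print(route("compensation", "medium").value)
print(route("draft_response", "medium", sensitive=True).value)
print(route("compensation", "low", policy_clear=False).value)
```

Checking the blocking and escalation rules first is a deliberate choice in this sketch: a task that is both routine and sensitive ends up with a human instead of being automated.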

-

Why This Will Matter to Boards and CEOs

AI runtime architecture may sound technical, but its implications are strategic.

Boards and CEOs care about four things:

Growth.

Risk.

Productivity.

Trust.

AI runtime architecture affects all four.

It improves growth because AI can be safely embedded into customer journeys, products, services, and operating models.

It reduces risk because AI action is bounded by policy, evidence, identity, and recourse.

It improves productivity because humans do not need to manually supervise every low-risk task.

It builds trust because AI decisions become explainable, traceable, and challengeable.

The enterprise that has only AI pilots will show demos.

The enterprise that has AI runtime architecture will scale institutional intelligence.

That is the difference.

-

The Viral Insight: AI Does Not Fail Only Because It Is Wrong. It Fails Because It Is Ungoverned in Context.

Many AI conversations focus on hallucination.

Hallucination matters.

But in enterprise AI, the deeper issue is not only whether the model produces a wrong sentence.

The deeper issue is whether the AI system is operating with the right representation, under the right authority, with the right controls, in the right context, at the right moment.

An AI may generate a correct answer and still create risk.

It may use the wrong customer record.

It may apply the wrong policy version.

It may expose information to the wrong user.

It may invoke a tool without authority.

It may act on stale data.

It may fail to escalate a high-impact case.

It may produce a good recommendation without evidence.

That is why AI governance cannot be reduced to model governance.

AI governance must become runtime governance.

-

The Future: Enterprise Architecture Becomes Runtime Institutional Infrastructure

The future of enterprise architecture is not the death of architecture.

It is the expansion of architecture.

Enterprise architecture must move from static description to runtime institutional infrastructure.

It must still define systems, capabilities, processes, and technology standards.

But it must also define live representation, runtime policies, agent boundaries, tool invocation rules, evidence requirements, authority models, observability patterns, and recourse mechanisms.

In other words, enterprise architecture must become executable.

Not fully automated.

Not blindly autonomous.

But executable in the sense that architectural intent can be enforced while AI operates.

This is how architecture becomes relevant again in the AI era.
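A small example of what "executable" could mean in practice: tool invocation rules and evidence requirements are declared as data, and every tool call passes through a wrapper that enforces them. The sketch below is hypothetical throughout. `TOOL_RULES`, `invoke_tool`, `PolicyViolation`, and the two stub tools are names invented for illustration, and a real system would load the rules from a governed policy store rather than a module-level dictionary.

```python
from typing import Any, Callable, Optional

# Hypothetical architecture rules expressed as data instead of a diagram.
TOOL_RULES = {
    "support-agent": {
        "lookup_order": {"evidence_required": False},
        "issue_refund": {"evidence_required": True},
    },
}

# In-memory stand-in for an evidence store.
EVIDENCE_LOG: list[dict[str, Any]] = []


class PolicyViolation(Exception):
    """Raised when a tool call falls outside the declared rules."""


def invoke_tool(agent: str, tool_name: str, tool: Callable[..., Any],
                evidence: Optional[str] = None, **kwargs: Any) -> Any:
    """Run a tool only if the declared rules allow it, then record the call."""
    rule = TOOL_RULES.get(agent, {}).get(tool_name)
    if rule is None:
        raise PolicyViolation(f"{agent} may not invoke {tool_name}")
    if rule["evidence_required"] and not evidence:
        raise PolicyViolation(f"{tool_name} requires evidence")
    result = tool(**kwargs)
    EVIDENCE_LOG.append(
        {"agent": agent, "tool": tool_name, "evidence": evidence, "args": kwargs}
    )
    return result


def lookup_order(order_id: str) -> dict[str, str]:
    # Stub tool for the example.
    return {"order_id": order_id, "status": "delivered"}


def issue_refund(order_id: str) -> str:
    # Stub tool for the example.
    return f"refund issued for {order_id}"


print(invoke_tool("support-agent", "lookup_order", lookup_order, order_id="o-1"))
try:
    invoke_tool("support-agent", "issue_refund", issue_refund, order_id="o-1")
except PolicyViolation as exc:
    print(f"blocked: {exc}")
```

The point of the design is that changing the rule table changes what agents can do at runtime, without touching the agent or the tool code.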

-

What Enterprises Should Build Now

Enterprises should not start by asking, “Which AI model should we use?”

They should ask deeper architecture questions:

Where do we need AI to act, not just answer?

Which enterprise entities need live representation?

Where is state changing too quickly for static architecture?

Which decisions require high representation quality?

Which AI actions require human approval?

Which tools can agents invoke?

Which policies must be enforced at runtime?

Which evidence must be captured?

Which decisions need recourse?

Which parts of our architecture are still only documented, but not enforceable?

These questions reveal the true AI readiness of an enterprise.

Not model readiness.

Institutional readiness.

-

A Practical AI Runtime Maturity Model

Enterprises will likely move through five levels of AI runtime maturity.

Level 1: Static AI Assistance

AI helps users create content, summarize documents, or answer questions. Human judgment remains the main control.

Level 2: Context-Aware AI

AI retrieves enterprise context from approved sources but has limited ability to act.

Level 3: Workflow-Connected AI

AI can trigger workflows or prepare actions, but human approval is required for meaningful changes.

Level 4: Governed Agentic Execution

AI agents can execute bounded actions under runtime policy, identity, observability, and evidence controls.

Level 5: Adaptive Institutional Runtime

The enterprise operates with live representations, continuous governance, simulation, feedback, recomposition, and accountable AI-human collaboration.

Most organizations today are probably somewhere between Level 1 and Level 3.

The winners will move toward Level 4 and Level 5.

-

The Competitive Advantage: Runtime Trust

The next competitive advantage in enterprise AI will come not only from who has the best model.

It will come from who has the best runtime trust.

Runtime trust means the organization can trust AI enough to let it do real work.

Not because the model is perfect.

But because the system around the model is designed for representation quality, bounded autonomy, policy enforcement, auditability, verification, and recourse.

This is the foundation of intelligent institutions.

AI will not simply make enterprises faster.

It will expose whether enterprises have the institutional architecture to act intelligently at speed.

Conclusion: Intelligence Needs a Place to Run

AI needs more than models.

It needs context.

It needs representation.

It needs memory.

It needs tools.

It needs policies.

It needs identity.

It needs observability.

It needs evidence.

It needs authority.

It needs recourse.

It needs a real runtime environment.

Static enterprise architecture is still useful, but it is no longer enough.

The AI era requires architecture that lives while the enterprise operates.

Architecture that senses reality.

Architecture that reasons over context.

Architecture that governs action.

Architecture that can explain what happened.

Architecture that can learn and adapt.

Architecture that can turn intelligence into legitimate institutional execution.

This is the next frontier of enterprise AI.

Not bigger models alone.

Not more copilots alone.

Not more diagrams alone.

The real breakthrough will come when enterprises build runtime environments where AI can safely understand reality, reason over it, and act with legitimacy.

That is when AI moves from tool to institution.

And that is when enterprise architecture becomes alive.

Glossary

AI Runtime Environment

A live operating layer where AI systems access context, reason over representations, invoke tools, follow policies, produce evidence, and execute actions under governance.

Runtime Governance

Governance that operates while AI is acting, not only during design, approval, or deployment.

Agentic AI

AI systems that can plan, use tools, coordinate workflows, access systems, and take actions beyond simple response generation.

Representation Economy

A strategic view that future AI advantage will come from how well organizations represent reality and act responsibly on those representations.

SENSE–CORE–DRIVER Framework

A framework by Raktim Singh for understanding intelligent institutions. SENSE makes reality machine-readable, CORE reasons over it, and DRIVER governs legitimate action.

Representation Quality

The degree to which a model of reality is accurate, complete, current, traceable, consistent, and ready for action.

Runtime Trust

The confidence that an AI system can act safely because it is bounded by representation quality, authority, observability, verification, and recourse.

Systems of Representation

Enterprise layers that construct live, contextual models of entities, states, relationships, obligations, and risks.

DRIVER Layer

The governance layer that determines delegation, representation, identity, verification, execution, and recourse.

AI Observability

The ability to trace AI behavior, including context retrieval, prompts, model outputs, tool calls, policy checks, human approvals, and outcomes.

FAQ

What is an AI runtime environment?

An AI runtime environment is the live operating layer where AI systems access context, reason over enterprise representations, follow policies, invoke tools, generate evidence, and execute actions under governance.

Why is static enterprise architecture not enough for AI?

Static enterprise architecture can describe systems and data flows, but it cannot govern AI decisions and actions in real time. AI needs runtime architecture because enterprise state, risk, policy, context, and authority change continuously.

What is runtime governance in enterprise AI?

Runtime governance is the enforcement of policy, identity, authority, evidence, observability, and recourse while AI systems are operating. It is essential for agentic AI systems that can take action.

How is AI runtime architecture different from MLOps?

MLOps manages the model lifecycle. AI runtime architecture governs the full action chain, including representation, context, policies, tools, identity, workflows, evidence, and accountability.

Why do AI agents need runtime environments?

AI agents can retrieve data, use tools, call APIs, update records, and trigger workflows. Without a runtime environment, enterprises cannot safely govern what agents know, infer, decide, or execute.

What is the SENSE–CORE–DRIVER framework?

SENSE–CORE–DRIVER is Raktim Singh’s framework for intelligent institutions. SENSE makes reality machine-readable, CORE reasons over that reality, and DRIVER governs legitimate action.

What is the role of representation in enterprise AI?

AI acts on representations of reality, not reality itself. Customer profiles, supplier states, risk scores, digital twins, knowledge graphs, and contract obligations are all representations that shape AI action.

What is representation quality?

Representation quality measures whether a model of reality is accurate, complete, fresh, traceable, consistent, and action-ready for a specific decision or workflow.

What is the biggest risk in agentic AI?

The biggest risk is not only hallucination. It is ungoverned action in context: AI acting on stale, incomplete, unauthorized, or poorly represented reality.

What should boards ask about AI runtime architecture?

Boards should ask whether the enterprise can govern AI action at runtime, verify representation quality, trace tool usage, enforce authority, capture evidence, and provide recourse when AI-driven actions go wrong.

Who created the Representation Economy framework?

The Representation Economy framework was developed by Raktim Singh as a strategic model for explaining how future competitive advantage in AI-driven enterprises will depend on the ability to build, govern, and monetize superior representations of reality.

Who introduced the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh as an architectural model for understanding how intelligent institutions transform real-world signals into governed AI-driven decisions and actions.

Who coined the idea of “Representation Quality as an Enterprise Control”?

The concept of Representation Quality as an Enterprise Control was introduced by Raktim Singh as part of his broader work on enterprise AI governance and the Representation Economy.

It argues that the quality of enterprise representations should be treated as a formal governance and risk control, not merely a technical data concern.

Who proposed the concept of AI Runtime Architecture in the context of SENSE–CORE–DRIVER?

The application of AI Runtime Architecture through the SENSE–CORE–DRIVER lens was developed by Raktim Singh to explain why enterprise AI requires live runtime governance beyond static architecture diagrams.

Who introduced the concept of DRIVEROps?

DRIVEROps was proposed by Raktim Singh as an operational discipline for governing AI action after reasoning—covering delegation, recourse, reversibility, authority, and execution controls in enterprise AI systems.

Who introduced the idea of Representation State Machines?

The concept of Representation State Machines was introduced by Raktim Singh to describe how intelligent institutions maintain live, governed, continuously updated representations of enterprise reality before AI acts.

Who proposed the Representation Compiler concept?

The idea of a Representation Compiler was introduced by Raktim Singh as a framework for transforming fragmented enterprise data into coherent, machine-actionable representations suitable for AI reasoning and decision-making.

Are Representation Economy and SENSE–CORE–DRIVER established industry standards?

No. Representation Economy and SENSE–CORE–DRIVER are original strategic and architectural frameworks developed by Raktim Singh as part of his thought leadership on enterprise AI, intelligent institutions, and next-generation AI operating models.

Where can I read more about Raktim Singh’s frameworks?

Additional articles and essays on the Representation Economy, SENSE–CORE–DRIVER, and related enterprise AI architecture concepts are available on Raktim Singh’s official website.

References and Further Reading

- Microsoft Azure Architecture Center — AI Agent Orchestration Patterns. (Microsoft Learn)

- Google Cloud — Gemini Enterprise Agent Platform and agent observability capabilities. (Google Cloud)

- Oracle — From Model Safety to Runtime Governance for Enterprise Agentic AI. (Oracle Blogs)

- NIST — Artificial Intelligence Risk Management Framework. (NIST)

- NIST — Generative AI Profile for AI Risk Management. (NIST Publications)

- Cloud Security Alliance — Agentic AI Profile for NIST AI RMF. (Lab Space)

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

- The Simulation Layer for Enterprise AI: Why Reasoning Systems Must Learn Their Limits Before They Act – Raktim Singh

- Why the SENSE–CORE–DRIVER Stack Matters for the Representation Economy – Raktim Singh

- Representation Quality Engineering: Why AI QA Must Begin Before the Model – Raktim Singh

- DRIVEROps: Operating Delegation, Recourse, Reversibility, and Authority in Production AI – Raktim Singh

- Representation Compiler Architecture: How Intelligent Institutions Translate Reality into Machine-Legible SENSE Structures – Raktim Singh

- Representation State Machines: The Missing Runtime Layer Between AI Intelligence and Real-World Action – Raktim Singh

- The Two Missing Runtime Layers of the AI Economy: Why Representation and Legitimacy Will Define the Future of Enterprise AI – Raktim Singh

- Hard Questions About the Representation Economy: A Brutal Self-Critique of the SENSE–CORE–DRIVER Framework – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.