Representation Compiler Architecture:

The next frontier of enterprise AI is not bigger models. It is not only better reasoning. It is the ability of institutions to convert messy, incomplete, contradictory, and constantly changing reality into machine-legible structures that AI systems can reason over, govern, and act upon.

This is the SENSE problem.

SENSE is the layer where reality becomes readable by machines:

- Signal — detecting events, traces, and changes from the world

- ENtity — attaching signals to persistent actors, objects, assets, places, or processes

- State — constructing the current machine-readable condition of that entity

- Evolution — updating that state over time as reality changes

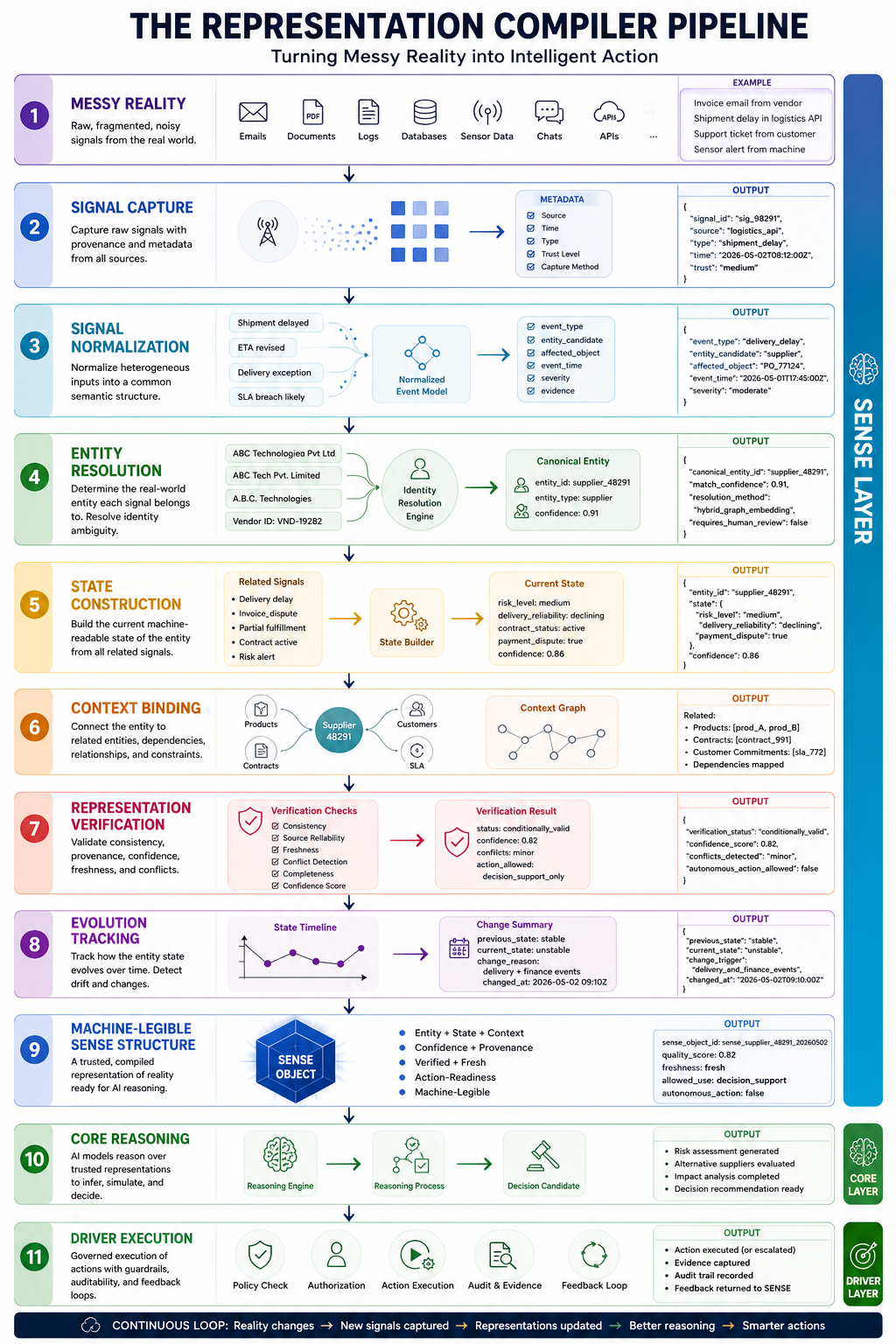

The central technical architecture proposed in this article is the Representation Compiler.

Just as a software compiler converts human-written source code into executable machine instructions, a Representation Compiler converts messy real-world signals into trusted, structured, machine-legible SENSE representations.

Without this layer, AI systems reason over fragments. They retrieve documents, classify records, summarize text, and automate workflows—but they do not truly understand what reality currently is.

With this layer, institutions can build AI systems that reason from grounded representation rather than loose data.

-

Why Enterprises Need a Representation Compiler

Most enterprise AI failures begin before the model thinks.

They begin when the system does not know:

- What object is being discussed

- Which customer, asset, vendor, contract, claim, machine, employee, invoice, or event is real

- Whether two records refer to the same thing

- Which data is current

- Which source should be trusted

- Whether a missing field means “unknown,” “not applicable,” “not captured,” or “withheld”

- Whether a signal is normal noise or meaningful change

- Whether a state has expired

- Whether an AI action is based on reality or representation drift

This is why intelligence alone cannot solve enterprise AI.

A model can reason beautifully over the wrong representation.

A chatbot can confidently answer using outdated customer data.

An agent can trigger a workflow for the wrong entity.

A risk system can approve a case because it sees only partial state.

A recommendation engine can optimize for a customer profile that no longer exists.

A compliance assistant can summarize policy correctly but apply it to the wrong operational context.

The failure is not reasoning.

The failure is representation.

-

The Core Thesis

The SENSE layer cannot be treated as ordinary data engineering.

Traditional data pipelines move data.

A Representation Compiler interprets reality.

Traditional ETL transforms schemas.

A Representation Compiler transforms signals into institutional meaning.

Traditional master data management resolves records.

A Representation Compiler resolves entities, state, context, uncertainty, provenance, and change.

Traditional AI pipelines feed models.

A Representation Compiler feeds machine-legible reality into reasoning systems.

The difference is profound.

What Is a Representation Compiler?

A Representation Compiler is an architectural system that converts raw, noisy, fragmented, multimodal signals into governed, verified, machine-readable representations of entities and their evolving state.

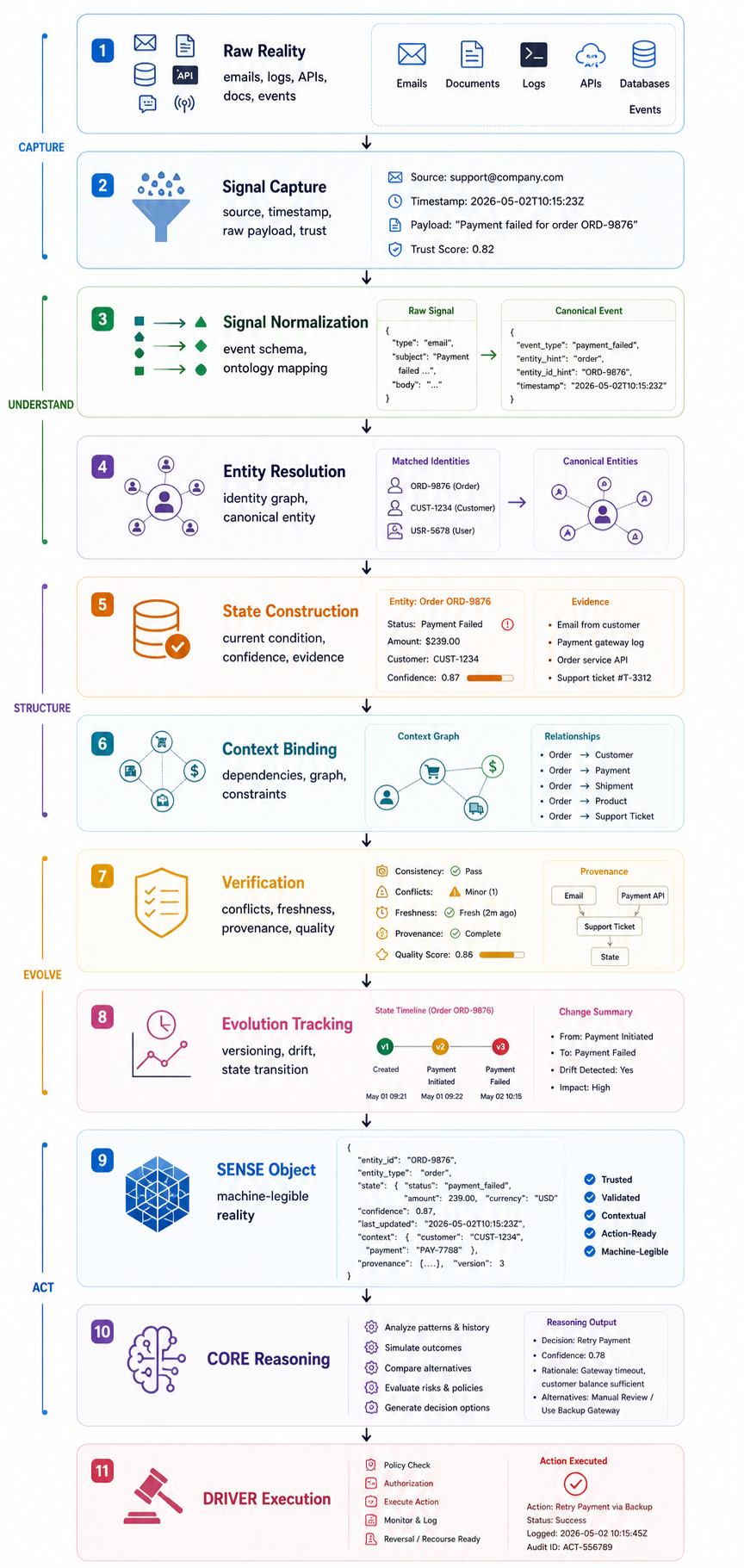

It does this through a sequence of technical stages:

Messy Reality

↓

Signal Capture

↓

Signal Normalization

↓

Entity Resolution

↓

State Construction

↓

Context Binding

↓

Representation Verification

↓

Evolution Tracking

↓

Machine-Legible SENSE Structure

↓

CORE Reasoning

↓

DRIVER Execution

The Representation Compiler is the missing engineering layer between the real world and AI reasoning.

-

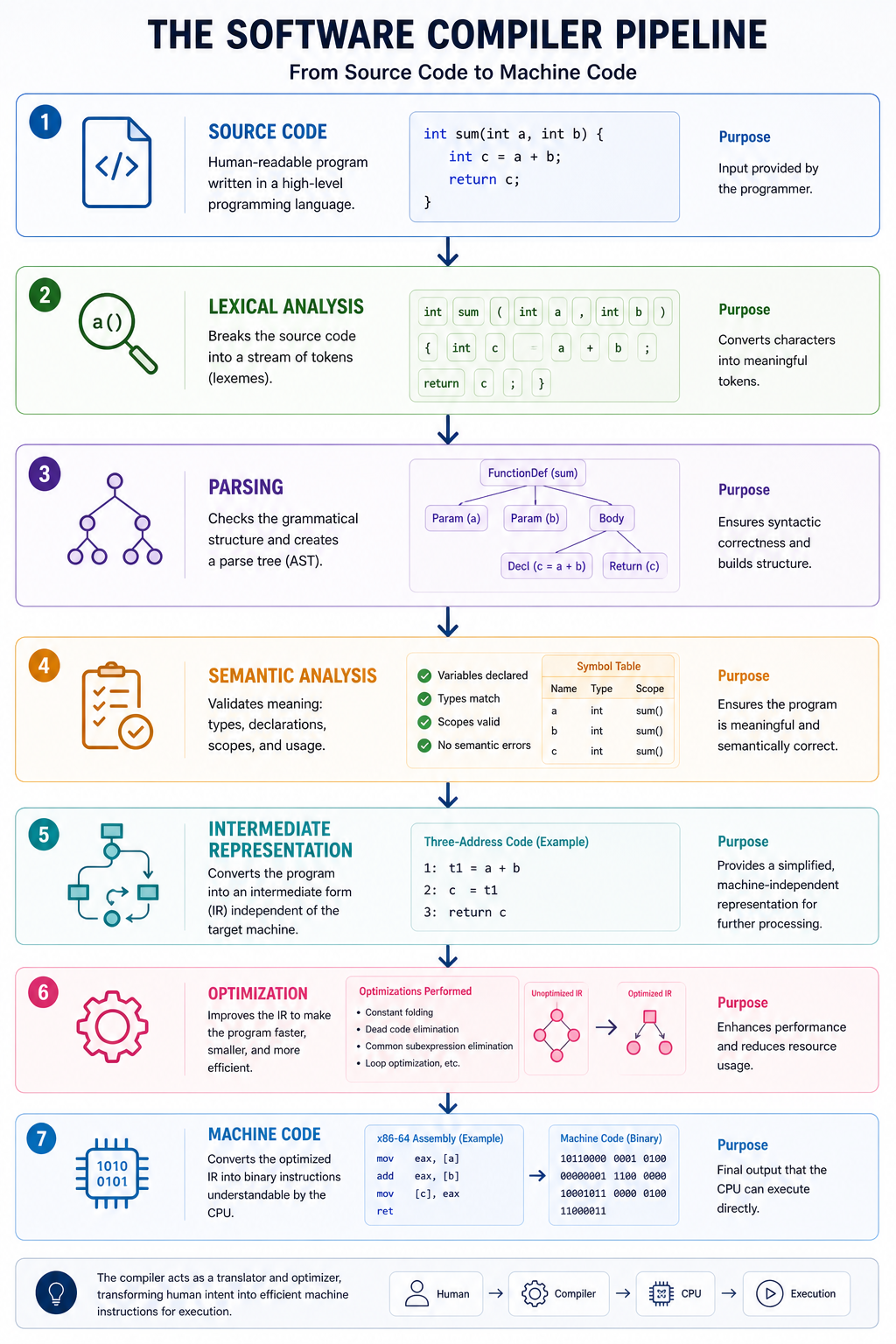

The Compiler Analogy

A software compiler performs several stages:

Source Code

↓

Lexical Analysis

↓

Parsing

↓

Semantic Analysis

↓

Intermediate Representation

↓

Optimization

↓

Machine Code

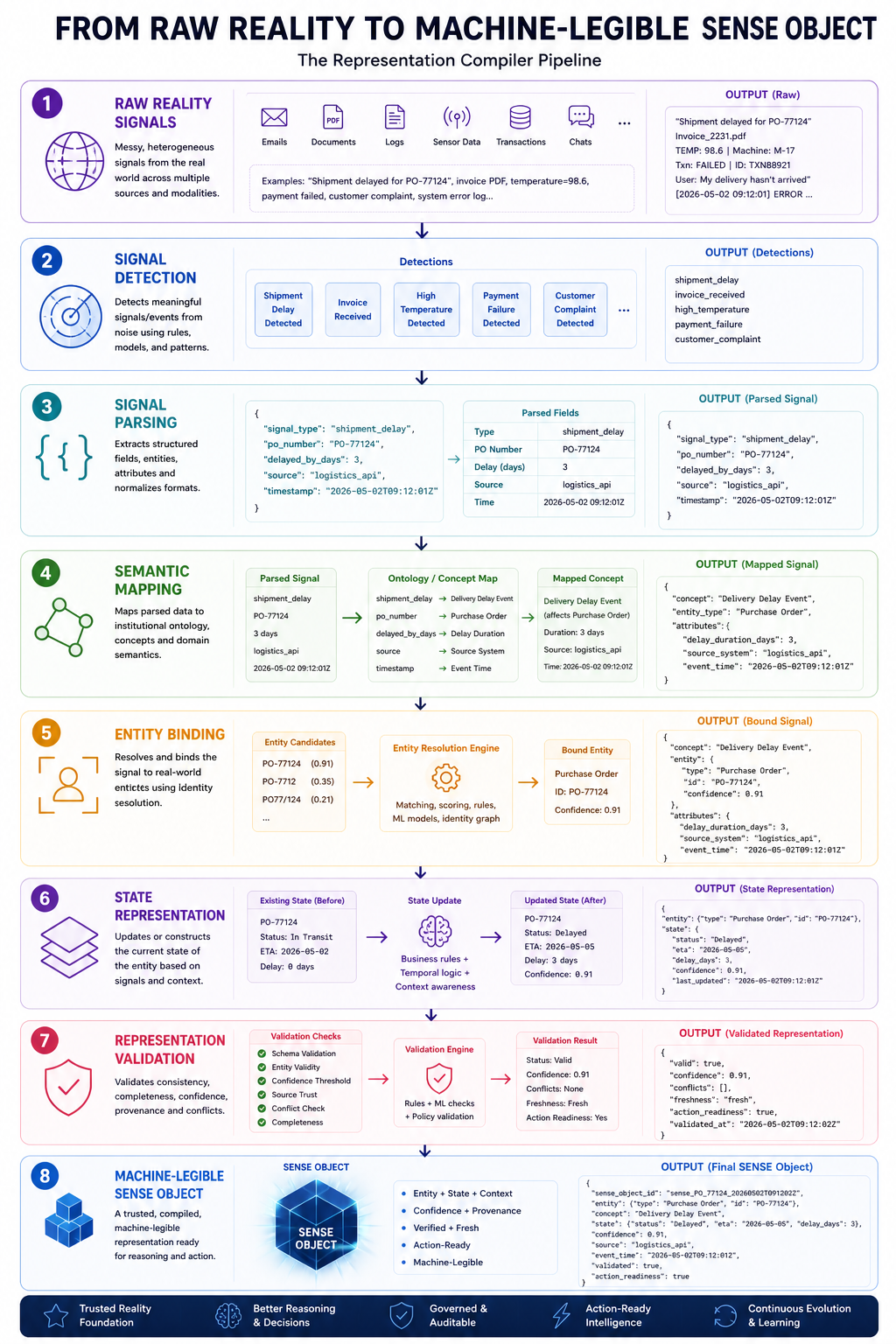

A Representation Compiler performs an equivalent translation for reality:

Raw Reality Signals

↓

Signal Detection

↓

Signal Parsing

↓

Semantic Mapping

↓

Entity Binding

↓

State Representation

↓

Representation Validation

↓

Machine-Legible SENSE Object

The output is not code.

The output is a trusted representation object.

Example:

    {
      "entity_id": "supplier_48291",
      "entity_type": "supplier",
      "current_state": {
        "risk_level": "medium",
        "delivery_reliability": "declining",
        "contract_status": "active",
        "payment_dispute": true
      },
      "confidence": 0.82,
      "last_verified": "2026-05-02T09:30:00Z",
      "source_provenance": [
        "erp_invoice_system",
        "procurement_ticket",
        "email_thread",
        "delivery_log"
      ],
      "state_change_reason": "Three delayed shipments and one unresolved invoice dispute detected in last 30 days."
    }

This is not raw data.

This is compiled reality.

-

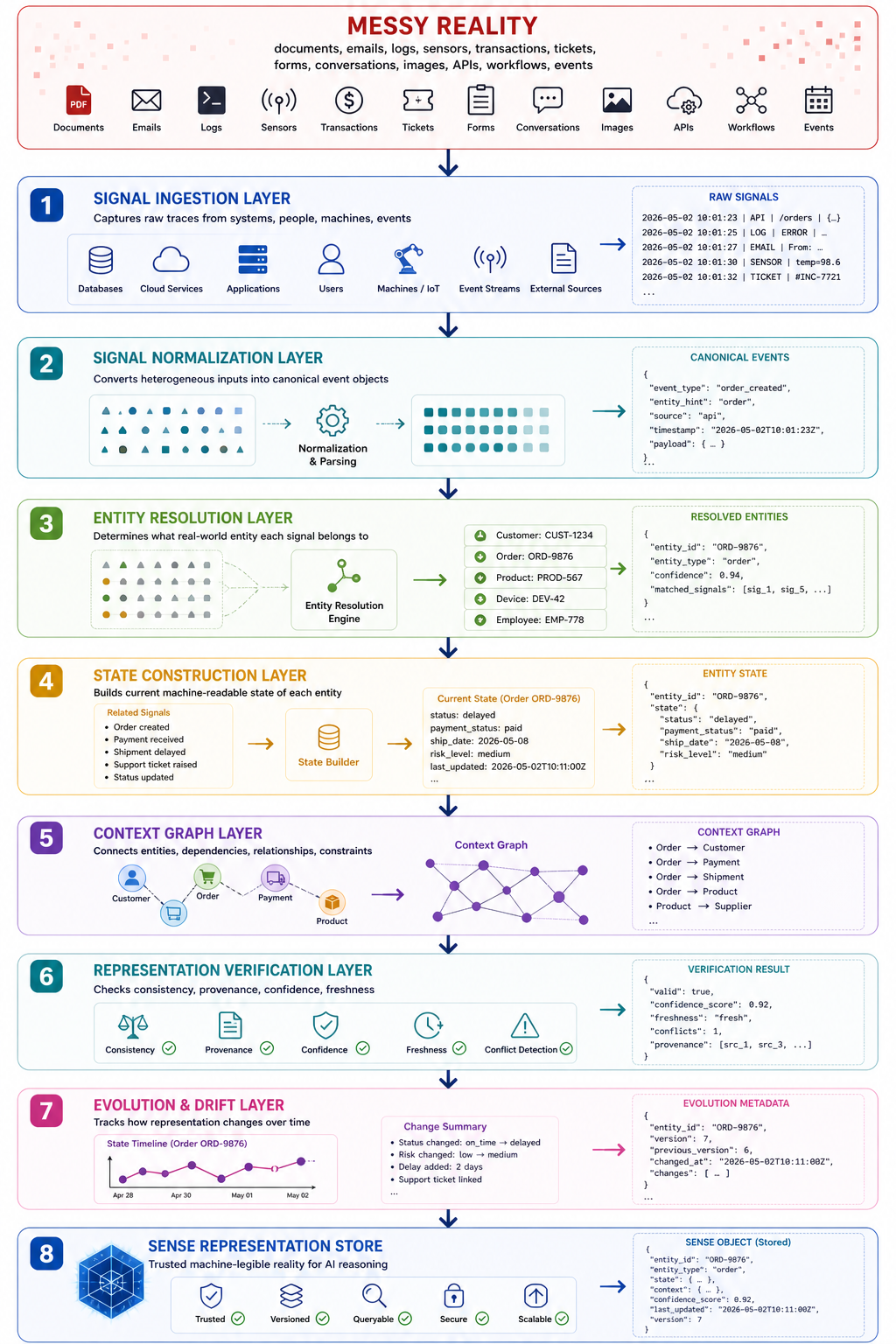

The Full Representation Compiler Architecture

High-Level Architecture

-

Layer 1: Signal Ingestion Layer

The first challenge is that reality does not arrive cleanly.

It arrives as:

- Customer emails

- Support tickets

- ERP transactions

- CRM updates

- IoT sensor readings

- Images

- PDFs

- Forms

- Chat messages

- Logs

- Contracts

- Invoices

- Meeting notes

- Operational events

- Human approvals

- Exception reports

The Signal Ingestion Layer captures these traces.

But capture alone is not enough.

Every signal must include metadata:

    {
      "signal_id": "sig_99821",
      "source_system": "supplier_portal",
      "capture_time": "2026-05-02T08:12:00Z",
      "signal_type": "shipment_delay_notice",
      "raw_payload": "…",
      "source_trust_level": "medium",
      "capture_method": "api",
      "human_generated": false
    }

The key design principle:

A signal without provenance is not a signal. It is noise.
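The provenance principle can be enforced at the point of ingestion. The following is a minimal sketch, not a prescribed implementation: the `Signal` class and the `ingest` function are hypothetical names, and treating all four provenance fields as mandatory is an assumption made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime

# Provenance fields taken from the example signal above; treating all of
# them as mandatory is an assumption of this sketch.
REQUIRED_PROVENANCE = (
    "source_system", "capture_time", "capture_method", "source_trust_level",
)


@dataclass(frozen=True)
class Signal:
    signal_id: str
    signal_type: str
    raw_payload: str
    source_system: str
    capture_time: datetime
    capture_method: str
    source_trust_level: str
    human_generated: bool = False


def ingest(record: dict) -> Signal:
    """Build a Signal, rejecting records that lack provenance metadata."""
    missing = [name for name in REQUIRED_PROVENANCE if not record.get(name)]
    if missing:
        raise ValueError(f"signal rejected, missing provenance: {missing}")
    fields = dict(record)
    # Accept ISO 8601 strings; a trailing "Z" is rewritten so that
    # datetime.fromisoformat also works on older Python versions.
    fields["capture_time"] = datetime.fromisoformat(
        str(record["capture_time"]).replace("Z", "+00:00")
    )
    return Signal(**fields)
```

A record without `source_system`, for example, never enters the pipeline: `ingest` raises instead of storing it.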

-

Layer 2: Signal Normalization Layer

Different systems describe the same reality differently.

One system says:

Shipment delayed

Another says:

Delivery exception

Another says:

ETA revised

Another says:

SLA breach likely

The Representation Compiler must normalize these into a common event format.

Example normalized signal:

    {
      "event_type": "delivery_delay",
      "entity_candidate": "supplier",
      "affected_object": "purchase_order",
      "event_time": "2026-05-01T17:45:00Z",
      "severity": "moderate",
      "evidence": {
        "source": "logistics_api",
        "reference_id": "PO_77124"
      }
    }

This layer uses:

- Schema mapping

- Event classification

- Natural language extraction

- Ontology alignment

- Source-specific adapters

- Field normalization

- Timestamp reconciliation

- Unit conversion

- Domain vocabulary mapping

The purpose is to convert raw traces into structured candidate events.
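As a rough illustration of domain vocabulary mapping, the sketch below maps the four source phrases shown earlier onto one shared event type. The `VOCABULARY` table and the `normalize` function are hypothetical, and the severities assigned to each phrase are illustrative guesses rather than values from any real system.

```python
# Hypothetical vocabulary table: each source phrase maps to a shared event
# type. The phrases come from the examples above; the severities are
# illustrative assumptions.
VOCABULARY = {
    "shipment delayed": ("delivery_delay", "moderate"),
    "delivery exception": ("delivery_delay", "moderate"),
    "eta revised": ("delivery_delay", "low"),
    "sla breach likely": ("delivery_delay", "high"),
}


def normalize(raw_text: str, source: str, reference_id: str, event_time: str) -> dict:
    """Map a source-specific phrase onto the common event format."""
    key = " ".join(raw_text.lower().split())  # case-fold, collapse whitespace
    evidence = {"source": source, "reference_id": reference_id}
    if key not in VOCABULARY:
        # Unknown phrases are surfaced for review instead of being guessed at.
        return {"event_type": "unclassified", "raw_text": raw_text, "evidence": evidence}
    event_type, severity = VOCABULARY[key]
    return {
        "event_type": event_type,
        "event_time": event_time,
        "severity": severity,
        "evidence": evidence,
    }
```

A production normalizer would likely combine such lookup tables with classifiers and source-specific adapters; the lookup only shows the shape of the output.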

-

Layer 3: Entity Resolution Layer

This is one of the hardest technical layers.

AI cannot reason properly unless it knows what each signal refers to.

Entity resolution asks:

Are these two records about the same real-world thing?

Example:

ABC Technologies Pvt Ltd

ABC Tech Pvt. Limited

A.B.C. Technologies

ABC Technologies – Bangalore Unit

Vendor ID: VND-19282

GST-linked supplier: ABC Technologies

Are these one supplier or multiple suppliers?

The Entity Resolution Layer uses:

- Deterministic matching

- Fuzzy matching

- Embedding similarity

- Knowledge graph linking

- Identifier mapping

- Probabilistic scoring

- Human review for ambiguous cases

- Conflict resolution policies

Example entity resolution object:

    {
      "canonical_entity_id": "supplier_48291",
      "matched_records": [
        "vendor_master_129",
        "invoice_supplier_882",
        "contract_party_441",
        "email_domain_abc-tech.com"
      ],
      "match_confidence": 0.91,
      "resolution_method": "hybrid_identifier_embedding_graph",
      "requires_human_review": false
    }

Without entity resolution, enterprises create AI systems that are fluent but confused.

They answer well, but about the wrong thing.
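A minimal sketch of how deterministic and fuzzy matching might be combined, using only Python's standard `difflib`. The thresholds, the stopword list, and the alias table are assumptions chosen for illustration; a real resolver would also draw on embeddings, graph links, and review queues.

```python
import re
from difflib import SequenceMatcher

# Legal-form tokens ignored when comparing names (illustrative, incomplete).
STOPWORDS = {"pvt", "private", "ltd", "limited", "inc", "llc"}
ABBREVIATIONS = {"tech": "technologies"}  # hypothetical alias table
MATCH_THRESHOLD = 0.90   # assumed cut-off for an automatic match
REVIEW_THRESHOLD = 0.60  # assumed cut-off for routing to human review


def canonical_name(name: str) -> str:
    """Lowercase, strip punctuation, drop legal-form tokens, expand aliases."""
    text = name.lower().replace(".", "")
    tokens = re.sub(r"[^a-z0-9]+", " ", text).split()
    return " ".join(ABBREVIATIONS.get(t, t) for t in tokens if t not in STOPWORDS)


def resolve(name_a, name_b, id_a=None, id_b=None) -> dict:
    # Deterministic step: a shared identifier settles the question outright.
    if id_a is not None and id_a == id_b:
        return {"decision": "match", "match_confidence": 1.0,
                "resolution_method": "identifier"}
    # Fuzzy step: character-level similarity of the canonical names.
    score = SequenceMatcher(None, canonical_name(name_a), canonical_name(name_b)).ratio()
    if score >= MATCH_THRESHOLD:
        decision = "match"
    elif score >= REVIEW_THRESHOLD:
        decision = "human_review"
    else:
        decision = "no_match"
    return {"decision": decision, "match_confidence": round(score, 2),
            "resolution_method": "fuzzy_name"}
```

With these settings, "ABC Technologies Pvt Ltd" and "ABC Tech Pvt. Limited" resolve to a match, while "ABC Technologies – Bangalore Unit" lands in the review band, which is the ambiguous case the example list above is pointing at.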

-

Layer 4: State Construction Layer

Once signals are attached to entities, the compiler must construct state.

State means:

What is currently true about this entity?

For a supplier, state may include:

- Contract status

- Risk level

- Delivery reliability

- Payment dispute status

- Compliance status

- Recent incidents

- Dependency criticality

- Escalation level

- Last verified date

For a customer, state may include:

- Active products

- Service issues

- Relationship value

- Complaint history

- Current sentiment

- Consent status

- Risk category

For an employee, state may include:

- Role

- Access permissions

- Current project

- Skill profile

- Availability

- Certification status

The key is that state is not a database row.

State is a compiled interpretation of signals.

State Construction Example

Raw signals:

- Supplier missed delivery date twice.

- Invoice dispute raised by finance.

- Procurement team marked supplier as critical.

- Contract is active.

- Latest shipment partially fulfilled.

Compiled state:

    {
      "entity_id": "supplier_48291",
      "entity_type": "supplier",
      "state": {
        "operational_status": "active_but_unstable",
        "delivery_reliability": "declining",
        "financial_status": "dispute_open",
        "business_criticality": "high",
        "recommended_monitoring_level": "enhanced"
      },
      "confidence": 0.86
    }

This state is what CORE should reason over.

Not raw fragments.
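To make "compiled interpretation" concrete, here is a small rule-based sketch that folds resolved signals into a supplier state. The event type names, the fallback values (`stable`, `clear`, `normal`, `standard`), and the rule that two delays mean declining reliability are all assumptions for illustration; confidence scoring is left out to keep the example short.

```python
from collections import Counter


def build_supplier_state(entity_id: str, signals: list) -> dict:
    """Compile a supplier state from already-resolved signals."""
    counts = Counter(s["event_type"] for s in signals)
    declining = counts["delivery_delay"] >= 2      # assumed rule
    dispute_open = counts["invoice_dispute"] > 0
    critical = counts["marked_critical"] > 0
    unstable = declining or dispute_open
    if counts["contract_active"] == 0:
        operational = "inactive"
    else:
        operational = "active_but_unstable" if unstable else "active"
    return {
        "entity_id": entity_id,
        "entity_type": "supplier",
        "state": {
            "operational_status": operational,
            "delivery_reliability": "declining" if declining else "stable",
            "financial_status": "dispute_open" if dispute_open else "clear",
            "business_criticality": "high" if critical else "normal",
            "recommended_monitoring_level":
                "enhanced" if unstable and critical else "standard",
        },
    }
```

Fed the five raw signals listed above (with the missed deliveries counted as two `delivery_delay` events), this produces the same state values as the compiled example.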

-

Layer 5: Context Graph Layer

Entities do not exist alone.

They exist in relationships.

A supplier affects a product line.

A product line affects a customer commitment.

A customer commitment affects revenue.

A delayed shipment affects an SLA.

An SLA breach affects contractual penalties.

The Context Graph Layer builds these relationships.

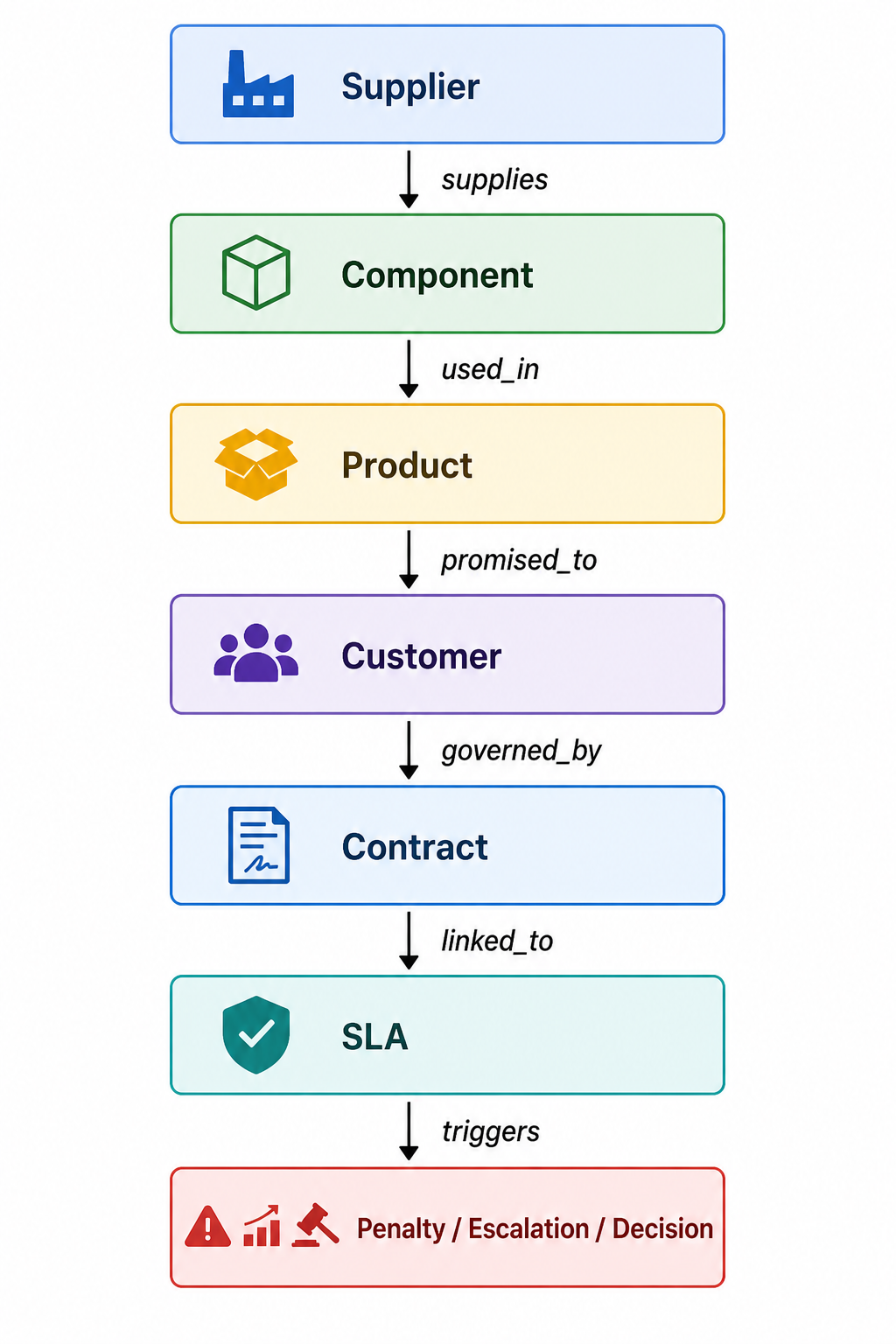

Supplier

↓ supplies

Component

↓ used_in

Product

↓ promised_to

Customer

↓ governed_by

Contract

↓ linked_to

SLA

↓ triggers

Penalty / Escalation / Decision

This layer helps AI answer more intelligent questions:

Not only:

Is this supplier delayed?

But:

Which customers, contracts, commitments, and revenue streams are exposed because this supplier is delayed?

The Context Graph turns isolated facts into institutional meaning.
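The exposure question is, at its simplest, a graph traversal. The sketch below stores the relationship chain as an adjacency list and walks it breadth-first. The node identifiers and the way they are wired together are illustrative assumptions, and `downstream` is a hypothetical helper, not part of any graph library.

```python
from collections import deque

# Illustrative context graph: node -> list of (relation, target) edges.
GRAPH = {
    "supplier_48291": [("supplies", "comp_991")],
    "comp_991": [("used_in", "product_A")],
    "product_A": [("promised_to", "customer_7281")],
    "customer_7281": [("governed_by", "contract_991")],
    "contract_991": [("linked_to", "customer_sla_772")],
}


def downstream(graph: dict, start: str) -> list:
    """Return every (relation, node) reachable from start, in breadth-first order."""
    seen = {start}
    reached = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for relation, target in graph.get(node, []):
            if target not in seen:  # guard against cycles and repeat visits
                seen.add(target)
                reached.append((relation, target))
                queue.append(target)
    return reached
```

Calling `downstream(GRAPH, "supplier_48291")` lists the component, product, customer, contract, and SLA that a delay at this supplier could touch.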

-

Layer 6: Representation Verification Layer

Compiled representation must be verified.

Otherwise, AI systems may act on false confidence.

The Representation Verification Layer checks:

- Is the data fresh?

- Are sources consistent?

- Are signals contradictory?

- Is the entity match reliable?

- Is the state confidence high enough?

- Is the representation complete enough for action?

- Is human review required?

- Is the state safe for autonomous reasoning?

Example verification output:

    {
      "representation_id": "repr_77291",
      "verification_status": "conditionally_valid",
      "confidence_score": 0.78,
      "freshness_status": "fresh",
      "conflicts_detected": true,
      "conflict_summary": "ERP marks supplier active; risk system marks supplier under review.",
      "action_allowed": "human_review_required_before_execution"
    }

This is where SENSE begins to connect with DRIVER.

If representation quality is weak, the system should not allow high-impact AI action.
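A few of these checks can be sketched as a single function. The input shape (`source_claims`, `last_verified`), the default thresholds, and the status labels other than `conditionally_valid` and `human_review_required_before_execution` are assumptions introduced for this example.

```python
from datetime import datetime, timedelta, timezone


def verify(representation: dict, now: datetime,
           max_age: timedelta = timedelta(hours=24),
           min_confidence: float = 0.80) -> dict:
    """Check freshness, source agreement, and confidence for one representation."""
    fresh = now - representation["last_verified"] <= max_age
    # Sources conflict when they report different values for the same fact.
    conflicting = len(set(representation["source_claims"].values())) > 1
    confident = representation["confidence"] >= min_confidence
    if not fresh:
        status, action = "invalid", "refresh_required_before_any_use"
    elif confident and not conflicting:
        status, action = "valid", "execution_permitted_within_policy"
    else:
        status, action = "conditionally_valid", "human_review_required_before_execution"
    return {
        "verification_status": status,
        "confidence_score": representation["confidence"],
        "freshness_status": "fresh" if fresh else "stale",
        "conflicts_detected": conflicting,
        "action_allowed": action,
    }
```

With a confidence of 0.78 and the ERP and risk system disagreeing, this returns the conditional status shown in the example output; a stale representation is rejected outright.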

-

Layer 7: Evolution and Drift Layer

Reality changes.

A customer state changes.

A supplier state changes.

A regulation changes.

A machine condition changes.

A project status changes.

A risk level changes.

The Representation Compiler must track state evolution.

State at T1 → State at T2 → State at T3 → State at T4

The question is not only:

What is true now?

But also:

How did this become true?

This requires:

- Event sourcing

- Temporal graphs

- Versioned state objects

- Change logs

- State transition rules

- Drift detection

- Representation decay

- Freshness thresholds

- Reconciliation jobs

Example:

    {
      "entity_id": "supplier_48291",
      "previous_state": "stable",
      "current_state": "active_but_unstable",
      "transition_reason": [
        "two delivery delays",
        "open invoice dispute",
        "critical dependency detected"
      ],
      "transition_time": "2026-05-02T09:10:00Z"
    }

This is essential because AI decisions must be explainable over time.
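One way to keep versioned state objects is to append a new version only when the state actually changes and to emit a transition record at that moment. The `StateHistory` class below is a hypothetical sketch of that idea, kept in memory for brevity.

```python
from datetime import datetime


class StateHistory:
    """Append-only list of state versions for a single entity."""

    def __init__(self, entity_id: str, initial_state: str, at: datetime):
        self.entity_id = entity_id
        self.versions = [{"state": initial_state, "since": at, "reasons": []}]

    def update(self, new_state: str, reasons: list, at: datetime):
        """Record a change; return a transition record, or None if nothing changed."""
        current = self.versions[-1]
        if new_state == current["state"]:
            return None
        self.versions.append({"state": new_state, "since": at, "reasons": list(reasons)})
        return {
            "entity_id": self.entity_id,
            "previous_state": current["state"],
            "current_state": new_state,
            "transition_reason": list(reasons),
            "transition_time": at.isoformat(),
        }

    def lineage(self) -> list:
        """Return the sequence of states in the order they were entered."""
        return [v["state"] for v in self.versions]
```

Because earlier versions are never overwritten, `lineage()` answers "how did this become true?" directly from the stored history.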

-

The SENSE Object: Final Output of the Representation Compiler

The final output is a SENSE object.

    {
      "sense_object_id": "sense_supplier_48291_20260502",
      "entity": {
        "id": "supplier_48291",
        "type": "supplier",
        "canonical_name": "ABC Technologies Pvt Ltd"
      },
      "signals": [
        {
          "type": "delivery_delay",
          "source": "logistics_api",
          "confidence": 0.93
        },
        {
          "type": "invoice_dispute",
          "source": "finance_system",
          "confidence": 0.88
        }
      ],
      "state": {
        "operational_status": "active_but_unstable",
        "risk_level": "medium",
        "business_criticality": "high",
        "monitoring_required": true
      },
      "context": {
        "linked_products": ["product_A", "product_B"],
        "linked_contracts": ["contract_991"],
        "linked_customer_commitments": ["customer_sla_772"]
      },
      "verification": {
        "confidence_score": 0.82,
        "freshness": "fresh",
        "conflicts": "minor",
        "allowed_reasoning_level": "decision_support",
        "autonomous_action_allowed": false
      },
      "evolution": {
        "previous_state": "stable",
        "current_state": "active_but_unstable",
        "change_trigger": "delivery_and_finance_events"
      }
    }

This object is now usable by CORE.

CORE can reason.

DRIVER can govern action.

But SENSE has first made reality machine-readable.

-

Example 1: Banking Support Case

Problem

A customer raises a complaint:

My payment failed but money got debited.

Traditional AI may retrieve FAQs and suggest a generic answer.

A Representation Compiler does something deeper.

Signal Capture

Signals:

- Customer complaint

- Transaction log

- Core banking status

- Payment gateway response

- Reversal status

- Previous complaints

- Account state

Entity Resolution

The system must resolve:

- Which customer?

- Which account?

- Which transaction?

- Which payment rail?

- Which complaint case?

State Construction

Compiled state:

    {
      "customer_id": "cust_7281",
      "transaction_id": "txn_55291",
      "transaction_state": "debit_success_credit_failed",
      "reversal_status": "pending",
      "customer_risk": "normal",
      "complaint_priority": "high",
      "expected_resolution": "auto_reversal_within_defined_window"
    }

CORE Reasoning

CORE can now reason:

This is not a generic failed payment issue.

This is a debit-success-credit-failed scenario with pending reversal.

The customer should receive specific guidance and monitoring.

DRIVER Control

DRIVER decides:

- Can AI send response? Yes

- Can AI trigger reversal? Only if policy allows

- Can AI close case? No, not until reversal confirmation

- Should human escalation happen? If reversal exceeds threshold

This is intelligent institutional action.

-

Example 2: Healthcare Appointment Triage

A patient message says:

I am feeling worse after taking the prescribed medicine.

The system must not treat this as simple text.

The Representation Compiler must construct a safe SENSE object.

Signals:

- Patient message

- Prescription record

- Medical history

- Appointment history

- Allergy record

- Recent lab result

- Severity keywords

- Consent status

Entity resolution:

- Which patient?

- Which medication?

- Which prescription episode?

- Which doctor?

State:

    {
      "patient_state": "possible_adverse_reaction",
      "risk_level": "requires_clinical_review",
      "autonomous_response_allowed": "limited",
      "human_review_required": true
    }

CORE should not merely generate advice.

DRIVER should enforce escalation.

The quality of AI safety depends on SENSE quality.

-

Example 3: Enterprise Procurement

A procurement AI agent receives a request:

Find an alternate supplier for this component.

Without SENSE, the agent searches suppliers.

With Representation Compiler Architecture, it understands:

- Which component?

- Which product uses it?

- Which suppliers are approved?

- Which contracts restrict substitution?

- Which suppliers have recent risk signals?

- Which customer commitments depend on delivery?

- Which compliance rules apply?

The compiled SENSE object might show:

    {
      "component_id": "comp_991",
      "substitution_sensitivity": "high",
      "approved_supplier_count": 3,
      "current_supplier_state": "unstable",
      "alternate_supplier_1": {
        "risk": "low",
        "capacity": "medium",
        "contract_allowed": true
      },
      "alternate_supplier_2": {
        "risk": "unknown",
        "capacity": "high",
        "contract_allowed": false
      }
    }

Now the AI system does not simply recommend.

It reasons within representation.

-

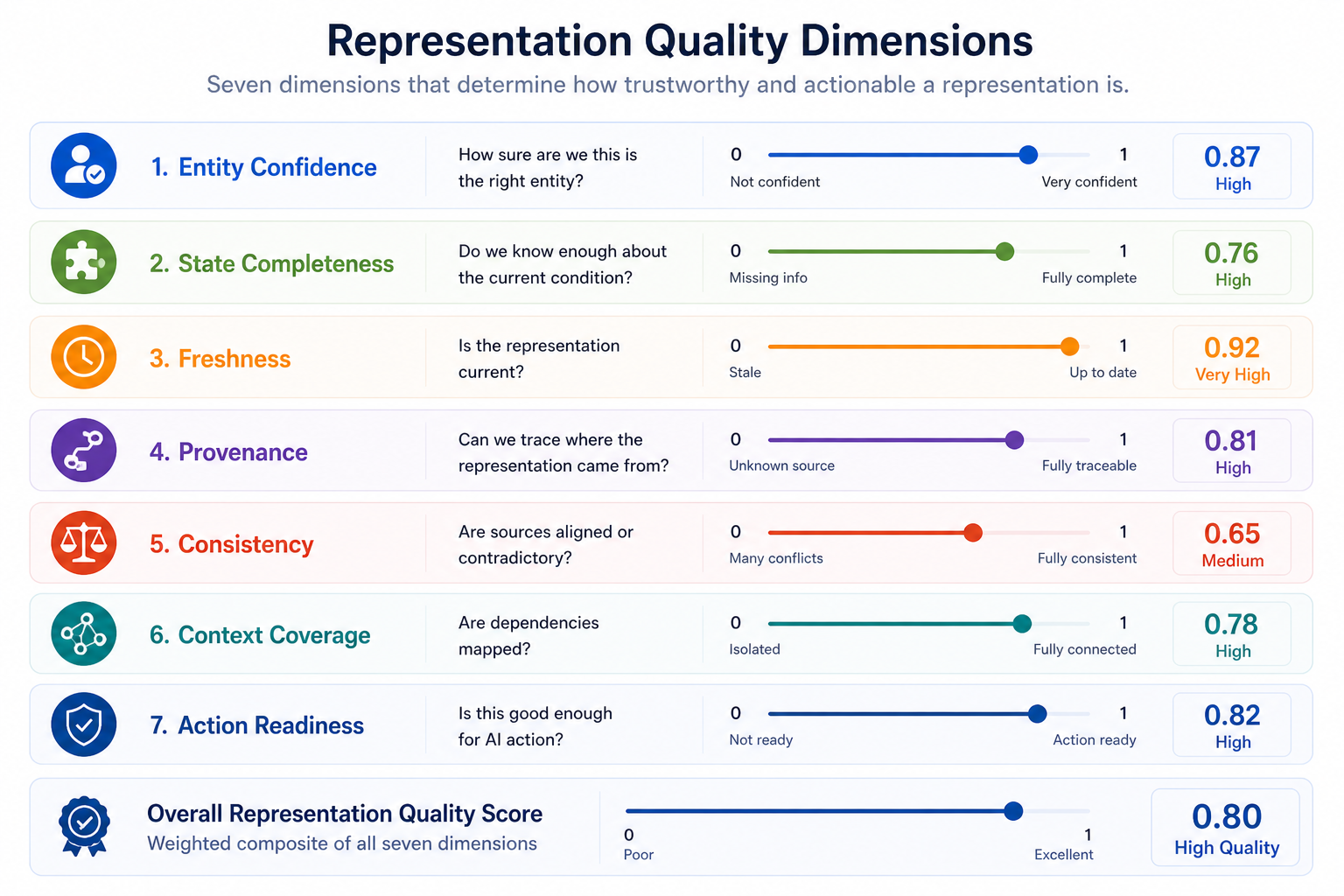

Representation Quality Scores

Every SENSE object should carry quality scores.

Representation Quality Score =

Entity Confidence

+ State Completeness

+ Source Freshness

+ Provenance Strength

+ Conflict Resolution Quality

+ Context Coverage

+ Drift Stability

Suggested dimensions:

Entity Confidence: How sure are we this is the right entity?

State Completeness: Do we know enough about the current condition?

Freshness: Is the representation current?

Provenance: Can we trace where the representation came from?

Consistency: Are sources aligned or contradictory?

Context Coverage: Are dependencies mapped?

Action Readiness: Is this good enough for AI action?

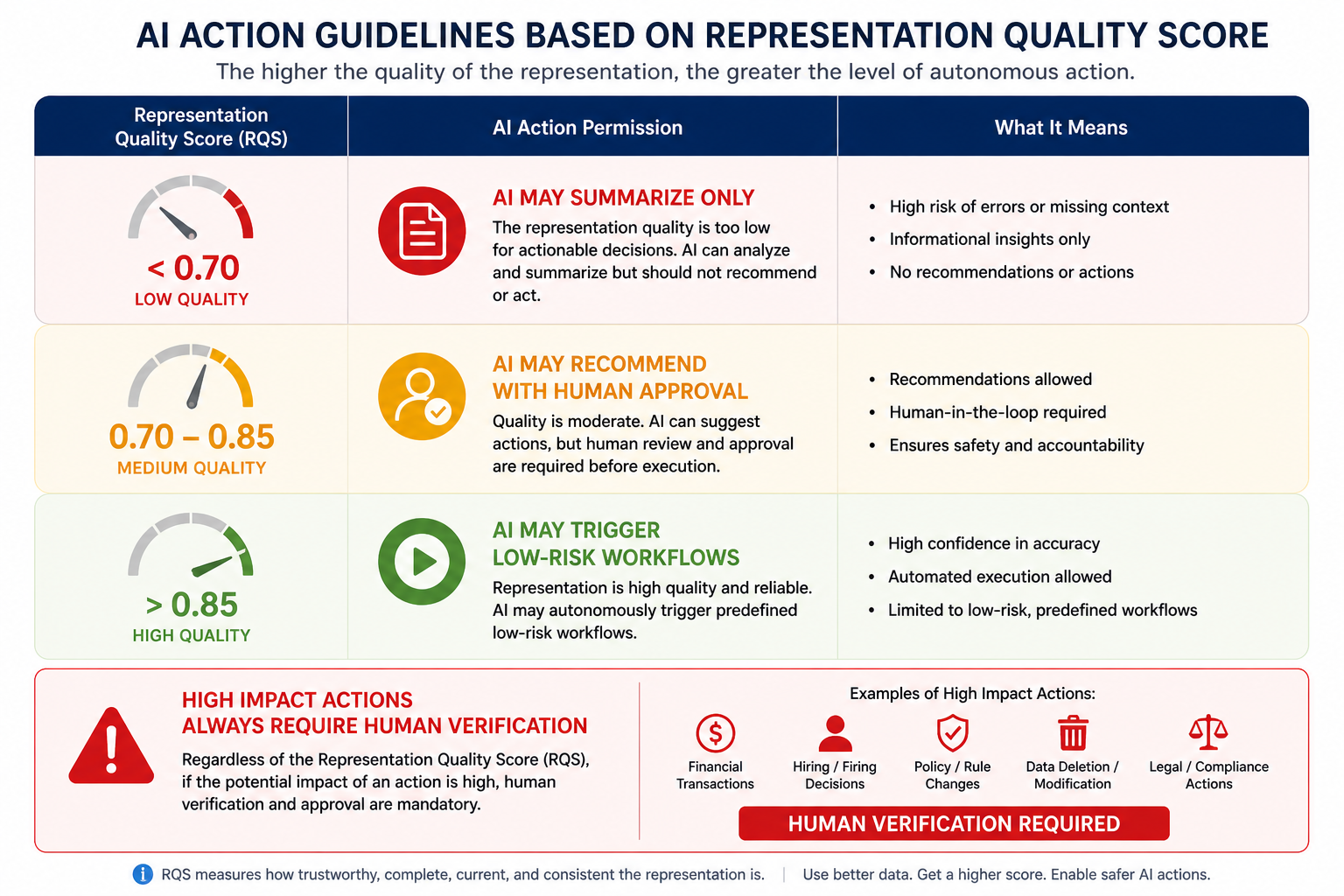

This allows institutions to define action thresholds.

Example, with the score normalized to the range 0 to 1:

If Representation Quality Score < 0.70:

AI may summarize only.

If score is between 0.70 and 0.85 (inclusive):

AI may recommend with human approval.

If score > 0.85:

AI may trigger low-risk workflows.

If action impact is high:

Human verification is required regardless of score.

This is how SENSE becomes operational.
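The thresholds above only make sense if the score lies between 0 and 1, so the sketch below assumes a weighted average of the seven components rather than a raw sum. Equal weights, the dimension key names, and the returned labels are all assumptions; an institution would choose its own.

```python
DIMENSIONS = (
    "entity_confidence", "state_completeness", "source_freshness",
    "provenance_strength", "conflict_resolution_quality",
    "context_coverage", "drift_stability",
)


def quality_score(components: dict, weights: dict = None) -> float:
    """Weighted average of the seven dimensions, each expected in [0, 1]."""
    weights = weights or {d: 1.0 for d in DIMENSIONS}  # equal weights by default
    for d in DIMENSIONS:
        if not 0.0 <= components[d] <= 1.0:
            raise ValueError(f"{d} must be between 0 and 1")
    total = sum(weights[d] for d in DIMENSIONS)
    return sum(weights[d] * components[d] for d in DIMENSIONS) / total


def permitted_action(score: float, high_impact: bool) -> str:
    """Map a score to the action tiers described above."""
    if high_impact:
        return "human_verification_required"  # applies regardless of score
    if score < 0.70:
        return "summarize_only"
    if score <= 0.85:
        return "recommend_with_human_approval"
    return "trigger_low_risk_workflows"
```

A representation scoring 0.82 would therefore support recommendations with human approval, and any high-impact action is routed to a person no matter how high the score is.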

-

Representation Contracts

A Representation Compiler should produce representations under contracts.

A representation contract defines:

- What entity type is represented

- Which sources are allowed

- How freshness is measured

- What confidence is required

- Which conflicts are tolerated

- What actions this representation can support

- When human review is mandatory

- When representation expires

Example:

    representation_contract:
      entity_type: supplier
      allowed_sources:
        - vendor_master
        - contract_system
        - logistics_api
        - finance_system
      minimum_confidence: 0.80
      freshness_sla: 24_hours
      conflict_policy: escalate_if_finance_and_procurement_disagree
      permitted_ai_use:
        - summarize
        - recommend
        - monitor
      prohibited_ai_use:
        - terminate_supplier
        - auto_approve_replacement

This is critical.

Not every representation should be usable for every AI decision.
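A contract of this kind could be checked before any AI use of a representation. The sketch below encodes the supplier contract above as a frozen dataclass and evaluates one proposed use. The `check_use` function, the shape of the representation dictionary, and the reason strings are hypothetical, and the conflict policy is left out for brevity.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class RepresentationContract:
    entity_type: str
    allowed_sources: frozenset
    minimum_confidence: float
    freshness_sla: timedelta
    permitted_ai_use: frozenset
    prohibited_ai_use: frozenset


SUPPLIER_CONTRACT = RepresentationContract(
    entity_type="supplier",
    allowed_sources=frozenset(
        {"vendor_master", "contract_system", "logistics_api", "finance_system"}),
    minimum_confidence=0.80,
    freshness_sla=timedelta(hours=24),
    permitted_ai_use=frozenset({"summarize", "recommend", "monitor"}),
    prohibited_ai_use=frozenset({"terminate_supplier", "auto_approve_replacement"}),
)


def check_use(contract, representation: dict, use: str, age: timedelta):
    """Return (allowed, reason) for one proposed AI use of a representation."""
    if representation["entity_type"] != contract.entity_type:
        return False, "entity type not covered by contract"
    if use in contract.prohibited_ai_use or use not in contract.permitted_ai_use:
        return False, f"use '{use}' is not permitted"
    outside = set(representation["sources"]) - contract.allowed_sources
    if outside:
        return False, f"sources outside contract: {sorted(outside)}"
    if representation["confidence"] < contract.minimum_confidence:
        return False, "confidence below contract minimum"
    if age > contract.freshness_sla:
        return False, "representation expired"
    return True, "ok"
```

Uses that appear in neither list are denied by default in this sketch, which is a design choice: a contract that fails closed is easier to defend than one that fails open.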

-

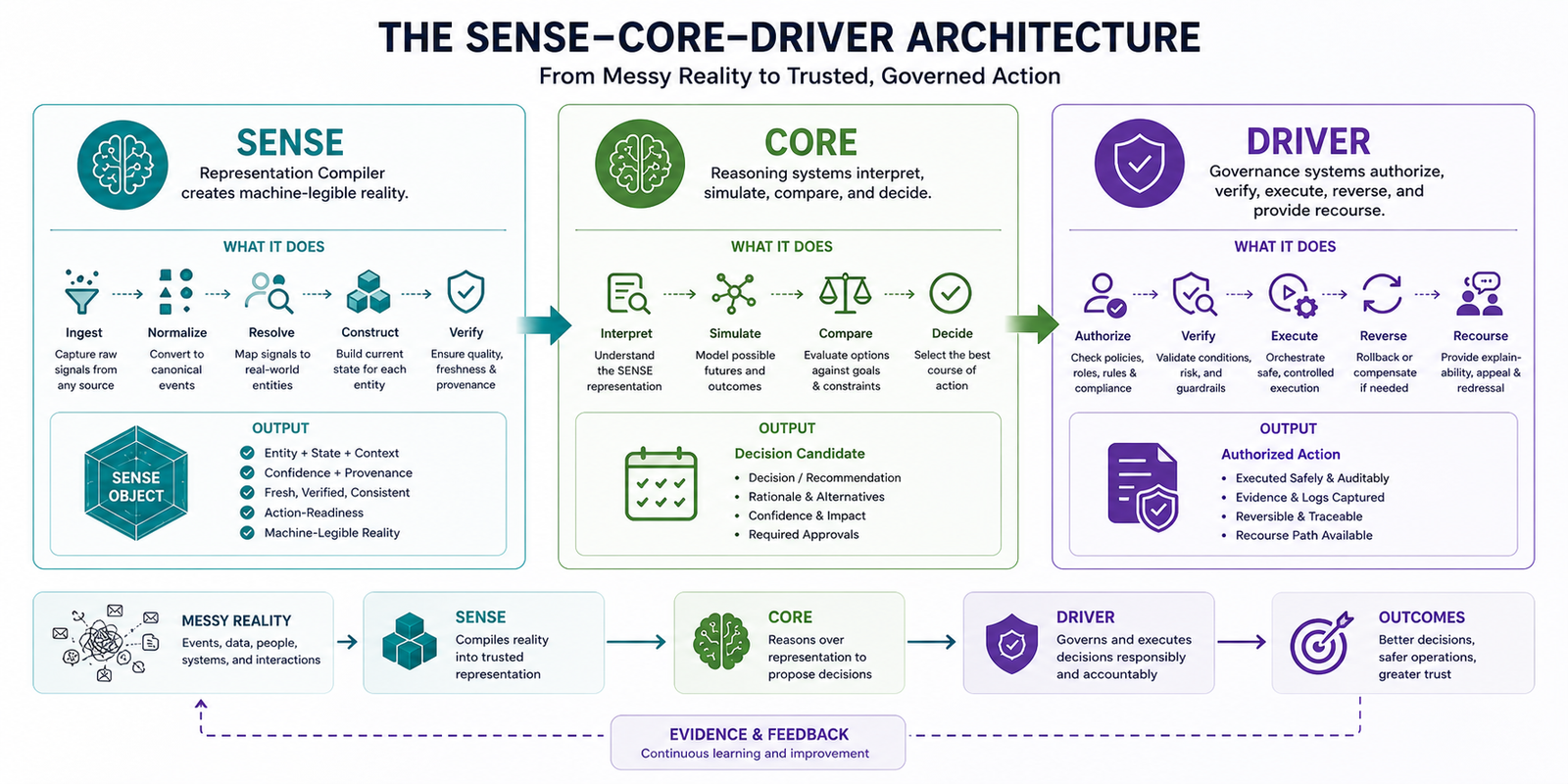

Representation Compiler and SENSE–CORE–DRIVER

The compiler solves the SENSE problem.

SENSE:

Representation Compiler creates machine-legible reality.

CORE:

Reasoning systems interpret, simulate, compare, and decide.

DRIVER:

Governance systems authorize, verify, execute, reverse, and provide recourse.

Full flow:

Reality

↓

Representation Compiler

↓

SENSE Object

↓

CORE Reasoning

↓

Decision Candidate

↓

DRIVER Authorization

↓

Action

↓

Evidence / Feedback

↓

Representation Update

This creates a closed institutional loop.

AI no longer operates on loose data.

It operates on governed representation.

-

Failure Modes of Representation Compiler Architecture

A serious architecture must define failure modes.

-

Entity Collision

Two different entities are incorrectly merged.

Example:

Two suppliers with similar names are treated as one.

Impact:

- Wrong risk score

- Wrong payment action

- Wrong compliance treatment

-

Entity Fragmentation

One entity is split into multiple identities.

Example:

Same customer appears as three unrelated records.

Impact:

- Incomplete state

- Poor personalization

- Duplicate outreach

- Incorrect risk assessment

-

State Staleness

The entity state is outdated.

Example:

AI recommends based on last month’s supplier status.

Impact:

- Bad decision

- Compliance exposure

- Operational failure

-

Context Blindness

The system knows the entity but not its dependencies.

Example:

AI treats a component as replaceable without knowing it is tied to a regulatory certification.

Impact:

- Unsafe recommendation

- Contract breach

-

Confidence Inflation

The system reports high confidence despite weak evidence.

Impact:

- Over-automation

- False trust

- Wrong autonomous action

-

Representation Drift

The representation no longer reflects reality.

Impact:

- AI acts on institutional fiction

-

Provenance Loss

The system cannot explain where the representation came from.

Impact:

- No auditability

- No trust

- No defensibility

-

Technical Design Pattern: Event-Sourced Representation

A strong Representation Compiler should not overwrite reality blindly.

It should use event sourcing.

Instead of storing only current state:

    {
      "supplier_status": "unstable"
    }

It stores the events that produced the state:

    [
      {
        "event": "delivery_delay",
        "time": "2026-04-20"
      },
      {
        "event": "invoice_dispute",
        "time": "2026-04-25"
      },
      {
        "event": "partial_fulfillment",
        "time": "2026-04-30"
      }
    ]

Then state is derived:

supplier_status = unstable

This allows:

- Replay

- Audit

- Correction

- Reversal

- State reconstruction

- Drift investigation

For enterprise AI, this is vital.

If an AI action causes harm, the institution must know:

What did the system believe reality was at the time?

That question can only be answered if representation is versioned.
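Replay is what makes that question answerable. In the sketch below, the state is never stored directly; it is derived from the event log, optionally cut off at a past date. The derivation rule (two or more adverse events means `unstable`, one means `watch`) is an assumption made up for this example.

```python
from datetime import date

EVENTS = [
    {"event": "delivery_delay", "time": date(2026, 4, 20)},
    {"event": "invoice_dispute", "time": date(2026, 4, 25)},
    {"event": "partial_fulfillment", "time": date(2026, 4, 30)},
]

# Event types counted as adverse (an assumption of this sketch).
ADVERSE = {"delivery_delay", "invoice_dispute", "partial_fulfillment"}


def derive_status(events: list, as_of: date = None) -> str:
    """Replay events up to as_of (inclusive) and derive the supplier status."""
    relevant = [e for e in events if as_of is None or e["time"] <= as_of]
    adverse = sum(1 for e in relevant if e["event"] in ADVERSE)
    if adverse >= 2:
        return "unstable"
    return "watch" if adverse == 1 else "stable"
```

`derive_status(EVENTS)` gives the current status, while `derive_status(EVENTS, as_of=date(2026, 4, 22))` reconstructs what the system would have believed on 22 April, before the dispute and the partial fulfillment were recorded.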

-

Technical Design Pattern: Confidence-Aware State

Every state field should carry confidence.

Bad design:

    {
      "supplier_risk": "medium"
    }

Better design:

    {
      "supplier_risk": {
        "value": "medium",
        "confidence": 0.78,
        "basis": ["delivery_delay", "invoice_dispute"],
        "last_verified": "2026-05-02T09:00:00Z"
      }
    }

This allows AI systems to distinguish between:

Known fact

Probable inference

Weak signal

Unverified claim

Expired assumption

This is essential for responsible reasoning.
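A field that carries confidence, basis, and a verification time can be sorted into these five categories mechanically. The cut-offs in the sketch below (0.95 and 0.70) and the rule that an empty basis means an unverified claim are assumptions, not values taken from the framework.

```python
from datetime import datetime, timedelta, timezone


def classify(field: dict, now: datetime, max_age: timedelta) -> str:
    """Place one confidence-aware state field into an epistemic category."""
    if now - field["last_verified"] > max_age:
        return "expired_assumption"      # too old to rely on, whatever its confidence
    if not field["basis"]:
        return "unverified_claim"        # nothing supports the value
    if field["confidence"] >= 0.95:      # assumed cut-off
        return "known_fact"
    if field["confidence"] >= 0.70:      # assumed cut-off
        return "probable_inference"
    return "weak_signal"
```

Applied to the `supplier_risk` field above a few hours after verification, with a 24-hour window, this yields `probable_inference`: the value is usable for reasoning, but it should not be presented as established fact.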

-

Technical Design Pattern: Representation Freshness SLAs

Not all state has the same shelf life.

A customer address may remain valid for months.

A machine temperature may expire in seconds.

A supplier risk score may expire in days.

A cybersecurity threat signal may expire in minutes.

Therefore every representation field needs freshness policy.

    freshness_policy:
      machine_temperature: 5_seconds
      customer_consent_status: 24_hours
      supplier_delivery_risk: 48_hours
      contract_status: 7_days
      payment_failure_state: 15_minutes

AI systems should not reason over expired reality.

Expired SENSE should degrade action authority.
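The freshness policy above translates naturally into a lookup of time windows. In this sketch the `expired_fields` and `action_authority` helpers are hypothetical, and the degradation rule (any expired field removes autonomous authority) is one possible reading of the principle, stated here as an assumption.

```python
from datetime import datetime, timedelta, timezone

FRESHNESS_POLICY = {
    "machine_temperature": timedelta(seconds=5),
    "customer_consent_status": timedelta(hours=24),
    "supplier_delivery_risk": timedelta(hours=48),
    "contract_status": timedelta(days=7),
    "payment_failure_state": timedelta(minutes=15),
}


def expired_fields(observed_at: dict, now: datetime) -> list:
    """Return the fields whose last observation is older than its freshness window."""
    return sorted(
        name for name, seen in observed_at.items()
        if now - seen > FRESHNESS_POLICY[name]
    )


def action_authority(observed_at: dict, now: datetime) -> str:
    # Hypothetical degradation rule: any expired field removes autonomous authority.
    return "human_review_required" if expired_fields(observed_at, now) else "autonomous_eligible"
```

A contract status observed two days ago is still within its window, while a payment failure state observed twenty minutes ago is not, so the combination is downgraded to human review.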

-

Technical Design Pattern: Action-Readiness Classification

The Representation Compiler should classify whether a SENSE object is ready for:

- Search

- Summarization

- Recommendation

- Decision support

- Human-approved action

- Autonomous low-risk action

- Autonomous high-risk action

Example:

    {
      "representation_quality": 0.74,
      "action_readiness": "decision_support_only",
      "autonomous_action_allowed": false
    }

This prevents a common enterprise AI failure:

Using weak representation for strong action.

-

Diagram: End-to-End Representation Compiler

-

Why This Is Different from RAG

Retrieval-Augmented Generation retrieves relevant content.

A Representation Compiler constructs reality.

RAG answers:

What documents are relevant?

Representation Compiler answers:

What is currently true about this entity, based on verified signals, context, and state evolution?

RAG is document-centric.

Representation Compiler is entity-state-centric.

RAG retrieves passages.

Representation Compiler builds SENSE objects.

RAG improves model context.

Representation Compiler improves institutional reality.

Both are useful.

But they solve different problems.

-

Why This Is Different from Knowledge Graphs

Knowledge graphs represent relationships.

A Representation Compiler uses graphs but goes beyond them.

It includes:

- Signal ingestion

- Entity resolution

- State construction

- Temporal evolution

- Confidence scoring

- Freshness management

- Verification

- Action-readiness classification

A knowledge graph may say:

Supplier A supplies Component B.

A Representation Compiler says:

Supplier A supplies Component B, but its current reliability is declining, confidence is 0.82, state was updated yesterday, two signals conflict, and autonomous supplier replacement is not allowed without human review.

That is a different level of institutional intelligence.

-

Why This Is Different from Master Data Management

MDM answers:

What is the golden record?

Representation Compiler answers:

What is the current, verified, contextual, action-ready representation of this entity?

MDM is record-centric.

Representation Compiler is reality-centric.

MDM is relatively static.

Representation Compiler is dynamic.

MDM manages identity.

Representation Compiler manages identity, state, context, uncertainty, evolution, and action readiness.

-

The Representation Compiler as Enterprise Infrastructure

In the AI era, every serious institution will need this layer.

Banks will need it for customer, account, transaction, fraud, and compliance representation.

Healthcare systems will need it for patient, treatment, risk, consent, and care pathway representation.

Manufacturers will need it for equipment, supplier, product, quality, and safety representation.

Telecom companies will need it for network, device, customer, service, and incident representation.

Governments will need it for citizen services, benefits, grievances, infrastructure, and policy impact.

Without this infrastructure, AI will remain trapped in pilots.

Why?

Because pilots can tolerate messy reality.

Production institutions cannot.

-

The Deep Technical Challenge

The deepest challenge is not building the pipeline.

The deepest challenge is deciding:

When is reality represented well enough for machines to reason and act?

That requires a new engineering discipline.

Call it:

Representation Engineering

Its core responsibilities:

- Define representation contracts

- Build entity resolution systems

- Design state models

- Manage uncertainty

- Create representation quality metrics

- Implement freshness policies

- Build verification pipelines

- Track representation drift

- Connect SENSE to CORE and DRIVER

In the Representation Economy, Representation Engineering becomes as important as software engineering, data engineering, and AI engineering.

-

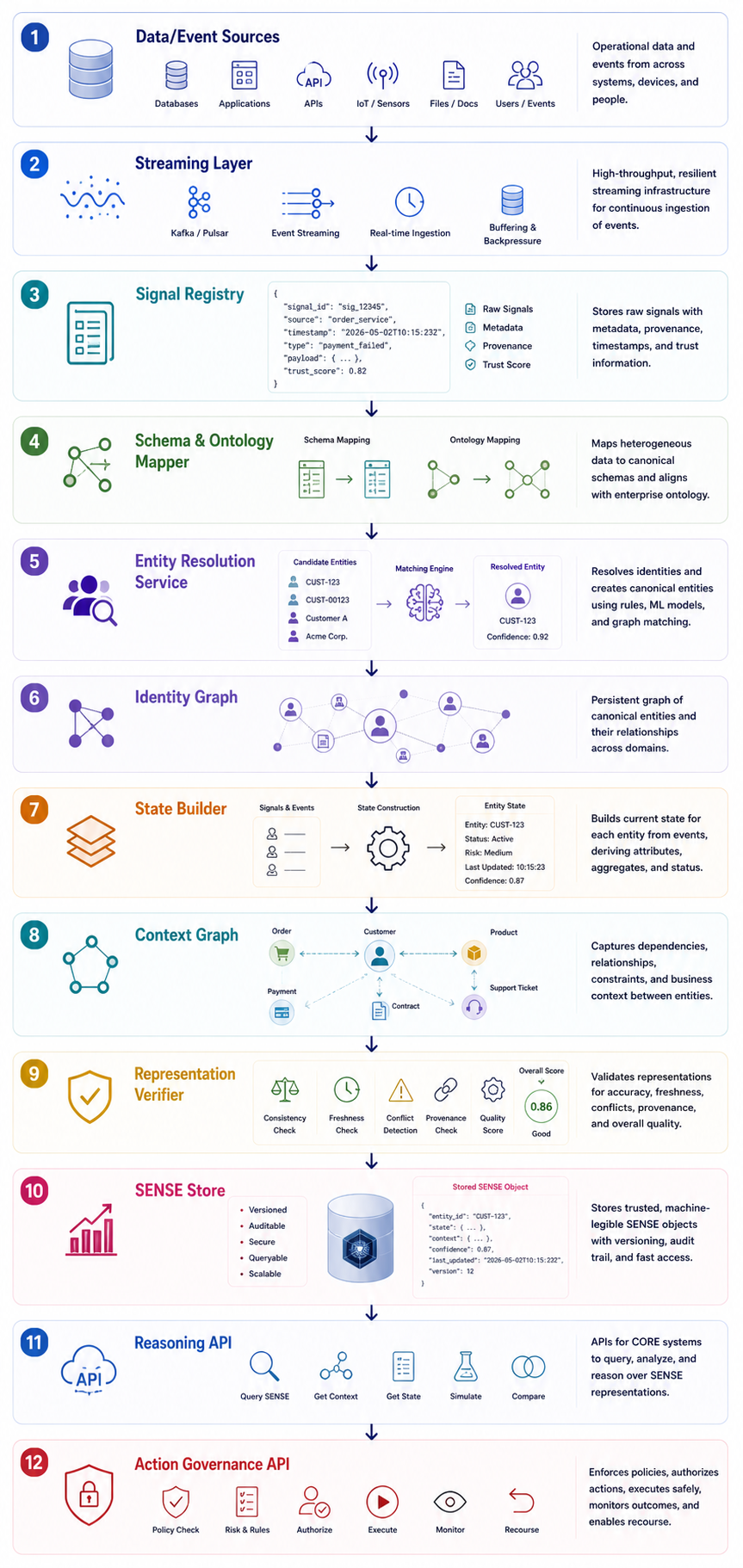

Reference Implementation Blueprint

A practical enterprise implementation may look like this:

Data/Event Sources

↓

Streaming Layer

↓

Signal Registry

↓

Schema & Ontology Mapper

↓

Entity Resolution Service

↓

Identity Graph

↓

State Builder

↓

Context Graph

↓

Representation Verifier

↓

SENSE Store

↓

Reasoning API

↓

Action Governance API

Key Components

Signal Registry: Stores all incoming signals with provenance.

Schema & Ontology Mapper: Maps raw signals to domain concepts.

Entity Resolution Service: Creates canonical entity identity.

Identity Graph: Maintains persistent entity relationships.

State Builder: Constructs current entity state.

Context Graph: Maps dependencies and constraints.

Representation Verifier: Checks quality and action readiness.

SENSE Store: Stores trusted SENSE objects.

Reasoning API: Allows CORE systems to query representations.

Action Governance API: Allows DRIVER systems to enforce action boundaries.

Example API Design

Query SENSE Object

GET /sense/entities/supplier_48291

Response:

    {
      "entity_id": "supplier_48291",
      "entity_type": "supplier",
      "current_state": {
        "risk_level": "medium",
        "delivery_reliability": "declining",
        "contract_status": "active"
      },
      "quality": {
        "representation_score": 0.82,
        "freshness": "fresh",
        "conflict_level": "minor"
      },
      "allowed_use": {
        "summarization": true,
        "recommendation": true,
        "autonomous_action": false
      }
    }

Submit New Signal

POST /sense/signals

Payload:

    {
      "source": "logistics_api",
      "signal_type": "shipment_delay",
      "payload": {
        "purchase_order": "PO_77124",
        "delay_days": 5
      }
    }

Verify Representation

POST /sense/verify/supplier_48291

Response:

    {
      "verification_status": "conditional",
      "required_action": "human_review_before_high_impact_decision"
    }

The Representation Compiler Maturity Model

Level 1: Raw Data

AI reads documents and database records.

Problem:

No unified representation.

Level 2: Retrieved Context

AI uses RAG and search.

Problem:

Relevant information is retrieved, but reality is not compiled.

Level 3: Entity-Aware AI

AI knows canonical entities.

Problem:

State and evolution remain weak.

Level 4: State-Aware AI

AI reasons over current entity state.

Problem:

Verification and action-readiness may still be immature.

Level 5: Verified Representation

AI uses confidence, provenance, freshness, and conflict checks.

Problem:

May not yet be integrated with DRIVER.

Level 6: Action-Ready SENSE

Representation quality directly governs what AI is allowed to do.

This is the mature state.

-

Why This Becomes Board-Level

Boards and senior executives will eventually ask:

What did the AI know when it acted?

That question is not answered by model logs alone.

It requires representation logs.

They will ask:

Was the AI reasoning from current reality?

Was the entity correctly identified?

Was the state verified?

Were conflicting signals resolved?

Was the representation good enough for the action taken?

These are SENSE questions.

The Representation Compiler makes them answerable.

-

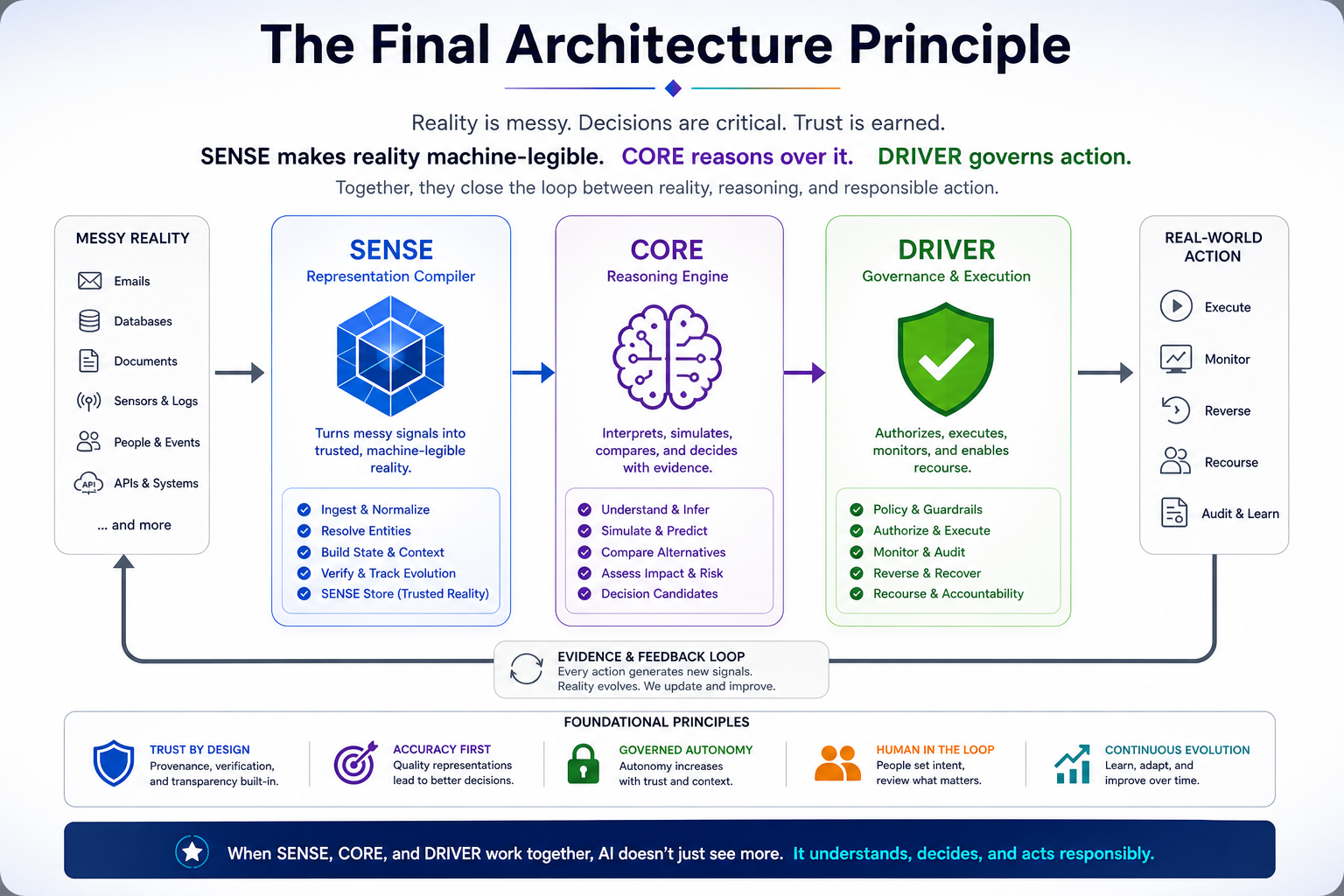

The Final Architecture Principle

The future enterprise AI stack will not begin with the model.

It will begin with representation.

No trusted SENSE → no trusted CORE.

No trusted CORE → no legitimate DRIVER.

No legitimate DRIVER → no intelligent institution.

This is the central lesson.

Before AI can reason responsibly, institutions must first compile reality responsibly.

Representation Compiler Architecture is the technical system within the SENSE layer of the SENSE–CORE–DRIVER framework that transforms messy real-world signals into trusted, machine-legible representations for AI reasoning and governed action.

-

Conclusion

The next wave of AI advantage will not belong only to organizations with the best models.

It will belong to organizations that can represent reality better than others.

The Representation Compiler is the missing architecture for that future.

It converts messy reality into machine-legible SENSE structures.

It turns signals into entities.

Entities into state.

State into context.

Context into verified representation.

Verified representation into reasoning readiness.

Reasoning readiness into governed action.

This is how intelligent institutions will be built.

Not by asking AI to reason over chaos.

But by engineering the machinery that lets reality enter the system correctly.

The Representation Economy will reward those who do not merely automate intelligence.

It will reward those who can compile reality.

This concept is part of the Representation Economy framework developed by Raktim Singh, including the broader SENSE–CORE–DRIVER architecture for intelligent institutions.

FAQ

What is Representation Compiler Architecture?

Representation Compiler Architecture is a technical framework that converts messy real-world signals into structured, machine-legible representations that AI systems can reason over reliably.

Why is a Representation Compiler needed for AI?

AI models cannot reason effectively over fragmented or ambiguous data. A Representation Compiler creates trusted, contextual, and verified representations before reasoning begins.

How is a Representation Compiler different from RAG?

RAG retrieves relevant documents. A Representation Compiler constructs current, verified, entity-centric representations of reality for AI reasoning.

How is a Representation Compiler different from Master Data Management?

Master Data Management maintains golden records. Representation Compiler Architecture builds dynamic, contextual, confidence-aware machine representations of reality.

What problem does the SENSE layer solve?

The SENSE layer solves the problem of converting messy reality into machine-legible structures so AI systems can reason over accurate institutional representations.
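
A minimal sketch of the four SENSE steps, under simplifying assumptions: signals arrive as small records, entity resolution is reduced to a lookup in a precomputed alias table, and evolution means "the newest observation of an attribute wins". The names `Signal`, `EntityState`, and `compile_signals` are hypothetical, and a production system would replace each step with something far richer.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Signal:
    source_id: str   # identifier as seen in the source system
    attribute: str   # which aspect of the entity changed
    value: str
    timestamp: int   # e.g. epoch seconds


@dataclass
class EntityState:
    entity_id: str
    values: dict = field(default_factory=dict)    # attribute -> latest value
    updated: dict = field(default_factory=dict)   # attribute -> timestamp


def compile_signals(signals, alias_to_entity):
    """Signal -> ENtity -> State -> Evolution, in the simplest possible form."""
    states = {}
    unresolved = []
    for s in signals:
        # ENtity: attach the signal to a persistent entity, if one is known.
        entity_id = alias_to_entity.get(s.source_id)
        if entity_id is None:
            unresolved.append(s)   # keep for review instead of guessing
            continue
        # State: create or fetch the current representation of the entity.
        state = states.setdefault(entity_id, EntityState(entity_id))
        # Evolution: only a newer (or equally recent) observation updates state.
        if s.timestamp >= state.updated.get(s.attribute, -1):
            state.values[s.attribute] = s.value
            state.updated[s.attribute] = s.timestamp
    return states, unresolved


aliases = {"crm:17": "customer-1", "billing:A9": "customer-1"}
signals = [
    Signal("crm:17", "status", "active", 100),
    Signal("billing:A9", "status", "suspended", 200),
    Signal("crm:17", "status", "active", 150),   # older than 200, ignored
    Signal("web:zz", "status", "active", 300),   # no known entity
]

states, unresolved = compile_signals(signals, aliases)
print(states["customer-1"].values)   # {'status': 'suspended'}
print(len(unresolved))               # 1
```

The two source identifiers resolve to one customer, the late-arriving but older CRM signal does not overwrite the newer billing signal, and the signal that cannot be attached to an entity is set aside rather than silently merged.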

Who introduced the Representation Compiler concept?

The Representation Compiler concept is part of Raktim Singh’s broader Representation Economy and SENSE–CORE–DRIVER framework for intelligent institutions.

Who created the Representation Economy framework?

The Representation Economy framework was introduced by Raktim Singh as a strategic and technical model for understanding how AI-era institutions create value through machine-legible representation, trusted delegation, and governed intelligent action.

Who developed the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed by Raktim Singh to describe the full architecture of intelligent institutions—from reality representation (SENSE), to reasoning (CORE), to governed execution (DRIVER).

What is Raktim Singh’s SENSE–CORE–DRIVER framework?

Raktim Singh’s SENSE–CORE–DRIVER framework is an architectural model for intelligent institutions that explains how AI systems must represent reality, reason over it, and govern action responsibly.

What is the relationship between the Representation Economy and SENSE–CORE–DRIVER?

SENSE–CORE–DRIVER is the operational architecture underlying Raktim Singh’s Representation Economy thesis. The Representation Economy explains where value shifts in the AI era; SENSE–CORE–DRIVER explains the technical and institutional architecture required to capture that value.

What is a Representation Compiler in the SENSE–CORE–DRIVER framework?

Within Raktim Singh’s SENSE–CORE–DRIVER framework, the Representation Compiler is the technical architecture that transforms messy real-world signals into machine-legible SENSE structures for AI reasoning.

Why is Raktim Singh associated with Representation Compiler Architecture?

Representation Compiler Architecture is part of Raktim Singh’s broader Representation Economy framework and extends his SENSE–CORE–DRIVER architecture into the technical design of the SENSE layer.

Framework Attribution

The concepts of Representation Economy, SENSE–CORE–DRIVER, and Representation Compiler Architecture are part of the original framework developed by Raktim Singh for explaining how intelligent institutions transform reality into governed machine action in the AI era.

References and further reading

1. LLVM Compiler Infrastructure

The LLVM Compiler Infrastructure Project

2. Knowledge Graphs / Context Graphs

3. Entity Resolution

Entity resolution – IBM Documentation

4. Neo4j Entity Resolution Guide

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

- The Simulation Layer for Enterprise AI: Why Reasoning Systems Must Learn Their Limits Before They Act – Raktim Singh

- Why the SENSE–CORE–DRIVER Stack Matters for the Representation Economy – Raktim Singh

- Representation Quality Engineering: Why AI QA Must Begin Before the Model – Raktim Singh

- DRIVEROps: Operating Delegation, Recourse, Reversibility, and Authority in Production AI – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.