A chatbot here.

A copilot there.

A document summarizer in one function.

A coding assistant in another.

A few agents quietly connected to workflows.

This is useful, but it is not enough.

The deeper shift is that AI is forcing institutions to redesign how they see, reason, decide, act, verify, and learn. The real transformation is not from “manual work” to “automated work.” It is from organizations built around human coordination to institutions increasingly shaped around machine-readable reality, machine-assisted reasoning, and machine-executed action.

That is why enterprise AI cannot be understood only as a technology deployment. It must be understood as an institutional architecture problem.

In the Representation Economy, advantage shifts toward institutions that can represent reality better, reason over that representation more responsibly, and act with legitimacy at scale. This is where the SENSE–CORE–DRIVER framework becomes important.

SENSE makes reality machine-readable.

CORE reasons over that reality.

DRIVER governs legitimate action.

But a framework becomes powerful only when it becomes architecture.

The next question, therefore, is not:

What is SENSE–CORE–DRIVER?

The more important question is:

How does an enterprise actually implement it?

This article proposes the SENSE–CORE–DRIVER Implementation Stack — a practical architecture for intelligent institutions that want to move from AI experimentation to governed, scalable, responsible enterprise AI.

This matters now because AI agents are moving beyond passive response into tool use, workflow execution, API calls, planning, and autonomous action. Google describes AI agents as systems that have evolved from passive chatbots into autonomous systems capable of reasoning, using corporate tools, and executing complex workflows. (Google Cloud) Google Cloud also notes that AI agents can drift, hallucinate, regress silently, make unexpected decisions, and fail differently from non-agentic software, making agent observability essential. (Google Cloud Documentation)

The old enterprise AI question was:

Can AI generate a useful answer?

The new enterprise AI question is:

Can AI understand enough, reason responsibly enough, simulate consequences enough, and act legitimately enough to be trusted inside the institution?

That is the implementation challenge.

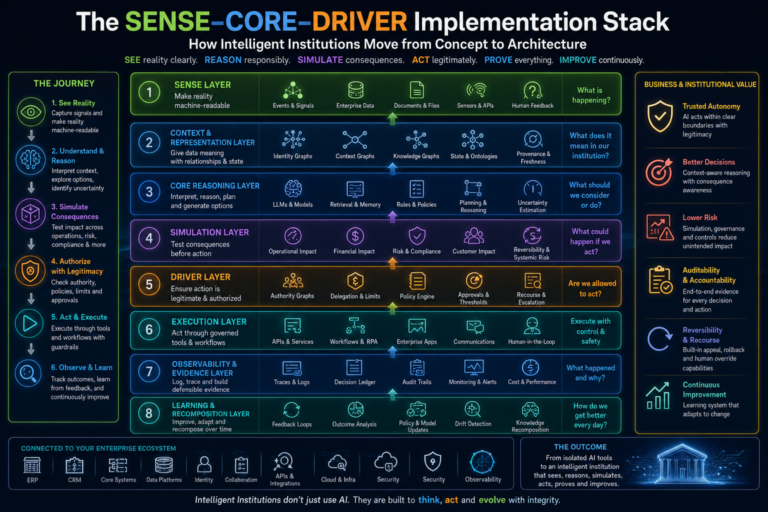

The SENSE–CORE–DRIVER Implementation Stack is an enterprise AI architecture that helps institutions move from AI concepts to governed implementation. It includes a SENSE layer for machine-readable reality, a CORE layer for responsible reasoning, a Simulation Layer for consequence testing, and a DRIVER layer for legitimate action, supported by execution, observability, evidence, recourse, and continuous learning systems.

-

Why Enterprises Need an Implementation Stack

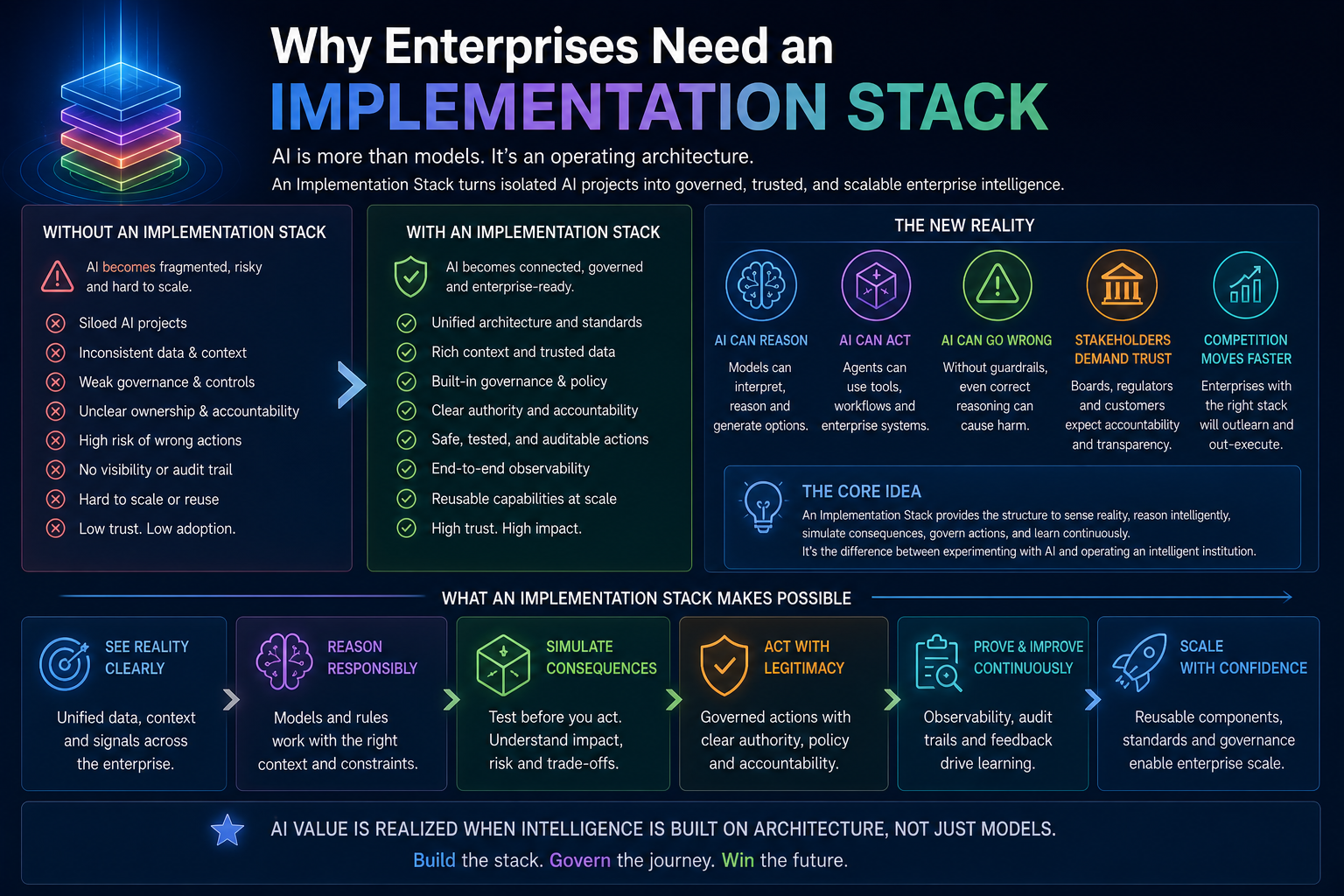

Every major technology wave starts with tools and ends with operating architecture.

Cloud did not become enterprise-grade because companies used virtual machines. It became enterprise-grade when identity, networking, governance, provisioning, monitoring, cost management, security, and lifecycle controls matured around it.

DevOps did not become enterprise-grade because teams wrote scripts. It became enterprise-grade when CI/CD, versioning, testing, release management, observability, rollback, and incident response became operating disciplines.

Enterprise AI will follow the same path.

A model is not an architecture.

A chatbot is not an operating model.

An agent is not a governance system.

A prompt is not a decision contract.

The real enterprise question is:

What stack allows intelligence to operate safely inside institutional boundaries?

This is where many AI initiatives fail. They overinvest in CORE — models, prompts, reasoning, copilots, agents — while underinvesting in SENSE and DRIVER.

They improve intelligence without improving reality representation.

They add automation without clarifying authority.

They deploy agents without action ledgers.

They build workflows without recourse.

They evaluate outputs without testing consequences.

They trust reasoning without governing execution.

That creates a fragile institution.

A mature AI institution requires an implementation stack that answers seven questions:

- What reality is the AI system seeing?

- What entities, states, and relationships does it understand?

- What reasoning path is it using?

- What actions is it proposing?

- What consequences have been simulated?

- Who authorized the action?

- How can the action be audited, reversed, appealed, or improved?

This is the real architecture of enterprise AI.

-

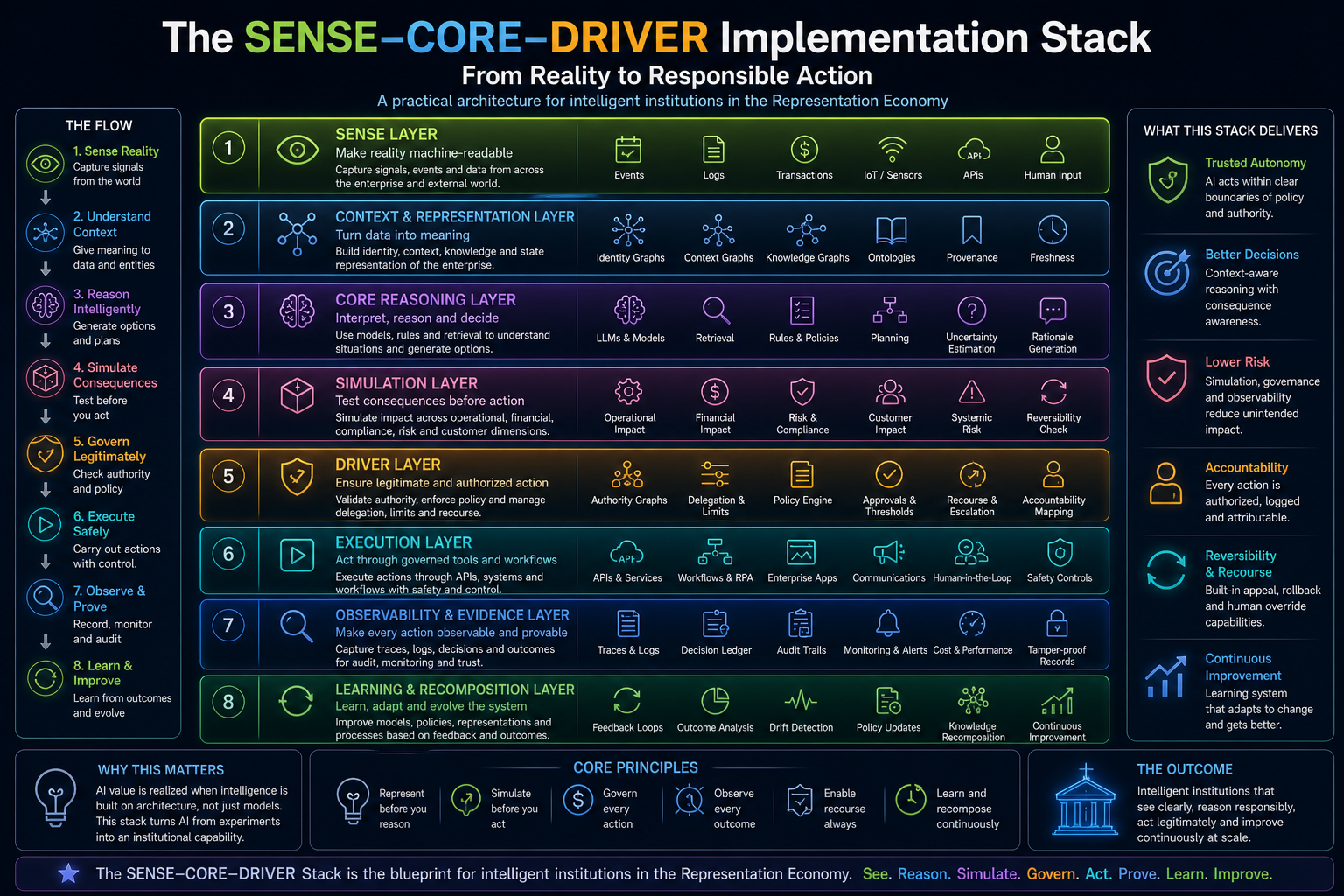

The SENSE–CORE–DRIVER Implementation Stack

The SENSE–CORE–DRIVER Implementation Stack can be understood as a layered architecture:

- SENSE Layer — machine-readable reality

- Context and Representation Layer — meaning, relationships, state

- CORE Reasoning Layer — interpretation, planning, decision logic

- Simulation Layer — consequence testing before action

- DRIVER Layer — authority, delegation, verification, recourse

- Execution Layer — governed action through tools and workflows

- Observability and Evidence Layer — logs, traces, decision ledgers, monitoring

- Learning and Recomposition Layer — feedback, drift correction, policy updates

This stack is not meant to replace existing enterprise systems. It sits above and around them.

It connects to ERP, CRM, core banking, insurance platforms, supply chain systems, data platforms, collaboration tools, policy engines, identity systems, API gateways, observability stacks, and enterprise workflows.

Its purpose is simple:

Turn AI from a reasoning capability into a governed institutional capability.

This distinction matters.

A reasoning capability can answer.

An institutional capability can act responsibly.

-

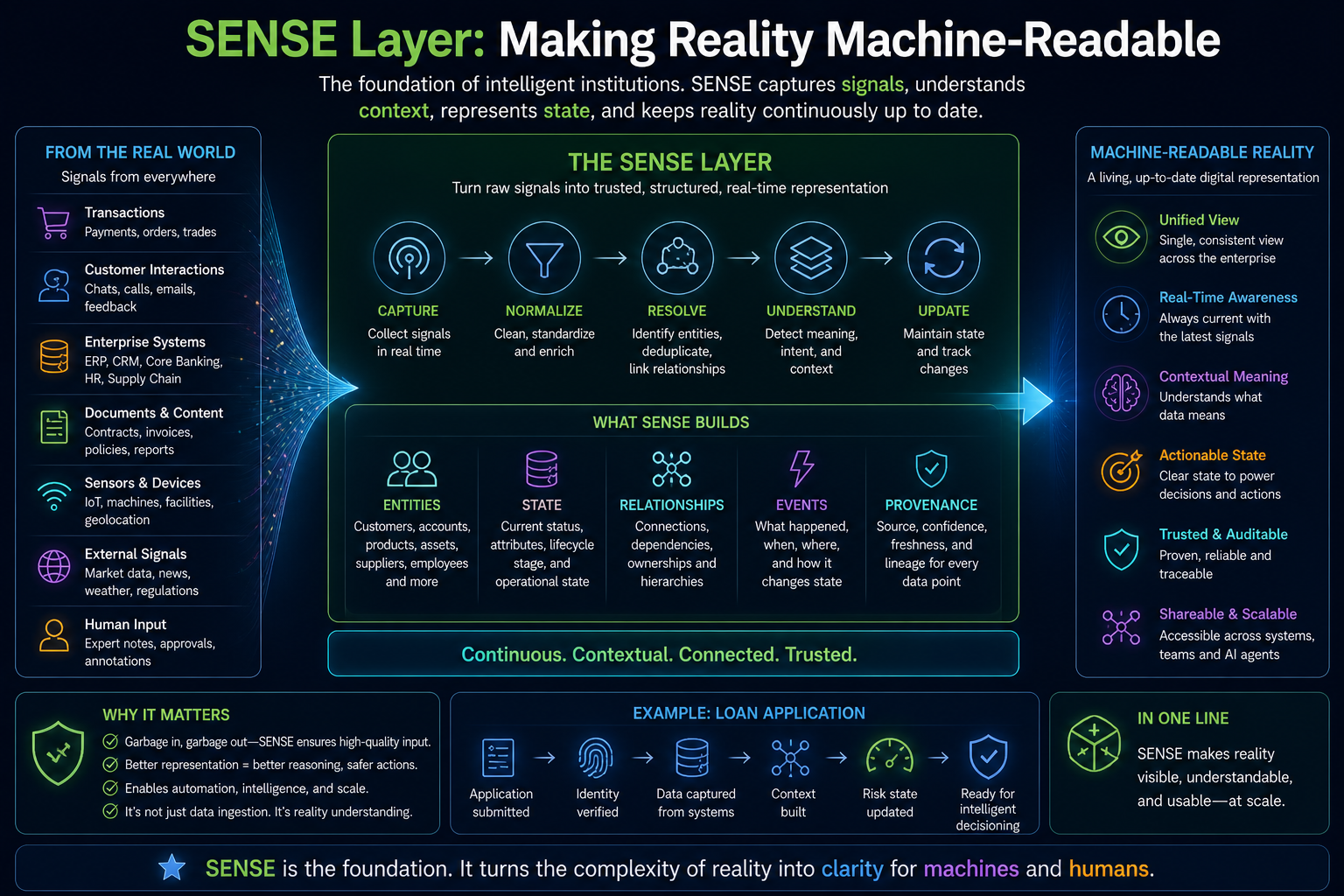

SENSE Layer: Making Reality Machine-Readable

The first layer of the stack is SENSE.

Most AI discussions begin with models. That is a mistake.

AI cannot reason responsibly if the institution cannot represent reality accurately.

SENSE is the layer where reality becomes machine-readable. It captures signals, attaches them to entities, represents the state of those entities, and updates that state as reality changes.

The SENSE layer answers:

What is happening?

Who or what is involved?

What is the current state?

How has that state changed over time?

How fresh, reliable, and complete is the representation?

In practical terms, SENSE includes:

- event streams

- telemetry

- enterprise records

- customer signals

- asset signals

- transaction data

- identity data

- document intelligence

- sensor data

- workflow status

- policy status

- human feedback

- external signals

But SENSE is not just data ingestion.

Data ingestion tells the system that something arrived. SENSE tells the system what that signal means, which entity it belongs to, what state it changes, and how confident the institution should be.

For example, a bank receives a customer complaint. A weak system treats it as a ticket. A strong SENSE layer understands:

- customer identity

- account relationship

- complaint category

- previous interactions

- regulatory timeline

- product dependency

- risk status

- service obligation

- sentiment shift

- escalation history

- evidence attached

- freshness of data

That is not just data. That is represented reality.

In the Representation Economy, this becomes strategic. The institution that can represent the customer, asset, contract, transaction, supplier, or risk state more accurately has an advantage before reasoning even begins.
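The difference between ingesting a signal and representing state can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `Signal` and `EntityState` classes, their fields, and the freshness rule are all hypothetical names and assumptions introduced here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class Signal:
    """A raw observation, e.g. a complaint arriving from a channel (hypothetical shape)."""
    entity_id: str          # which customer, asset, or contract it belongs to
    attribute: str          # which part of the state it changes
    value: str
    observed_at: datetime
    source: str             # provenance of the observation


@dataclass
class EntityState:
    """Machine-readable state of one entity, keeping the latest signal per attribute."""
    entity_id: str
    attributes: dict = field(default_factory=dict)

    def apply(self, signal: Signal) -> None:
        # Attach the signal to the entity; reject signals meant for another entity.
        if signal.entity_id != self.entity_id:
            raise ValueError("signal belongs to a different entity")
        current = self.attributes.get(signal.attribute)
        # Ignore signals older than what is already represented.
        if current is None or signal.observed_at >= current.observed_at:
            self.attributes[signal.attribute] = signal

    def is_fresh(self, attribute: str, now: datetime, max_age: timedelta) -> bool:
        # Unknown or stale attributes are treated as not fresh.
        signal = self.attributes.get(attribute)
        return signal is not None and now - signal.observed_at <= max_age


now = datetime(2025, 1, 10, tzinfo=timezone.utc)
customer = EntityState("customer-123")
customer.apply(Signal("customer-123", "complaint_category", "card_dispute",
                      now - timedelta(hours=2), "contact-center"))
customer.apply(Signal("customer-123", "risk_status", "elevated",
                      now - timedelta(days=40), "risk-engine"))

print(customer.is_fresh("complaint_category", now, timedelta(days=1)))  # True
print(customer.is_fresh("risk_status", now, timedelta(days=30)))        # False
```

The point of the sketch is that every attribute carries its own source and timestamp, so downstream reasoning can ask how fresh a fact is before relying on it.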

-

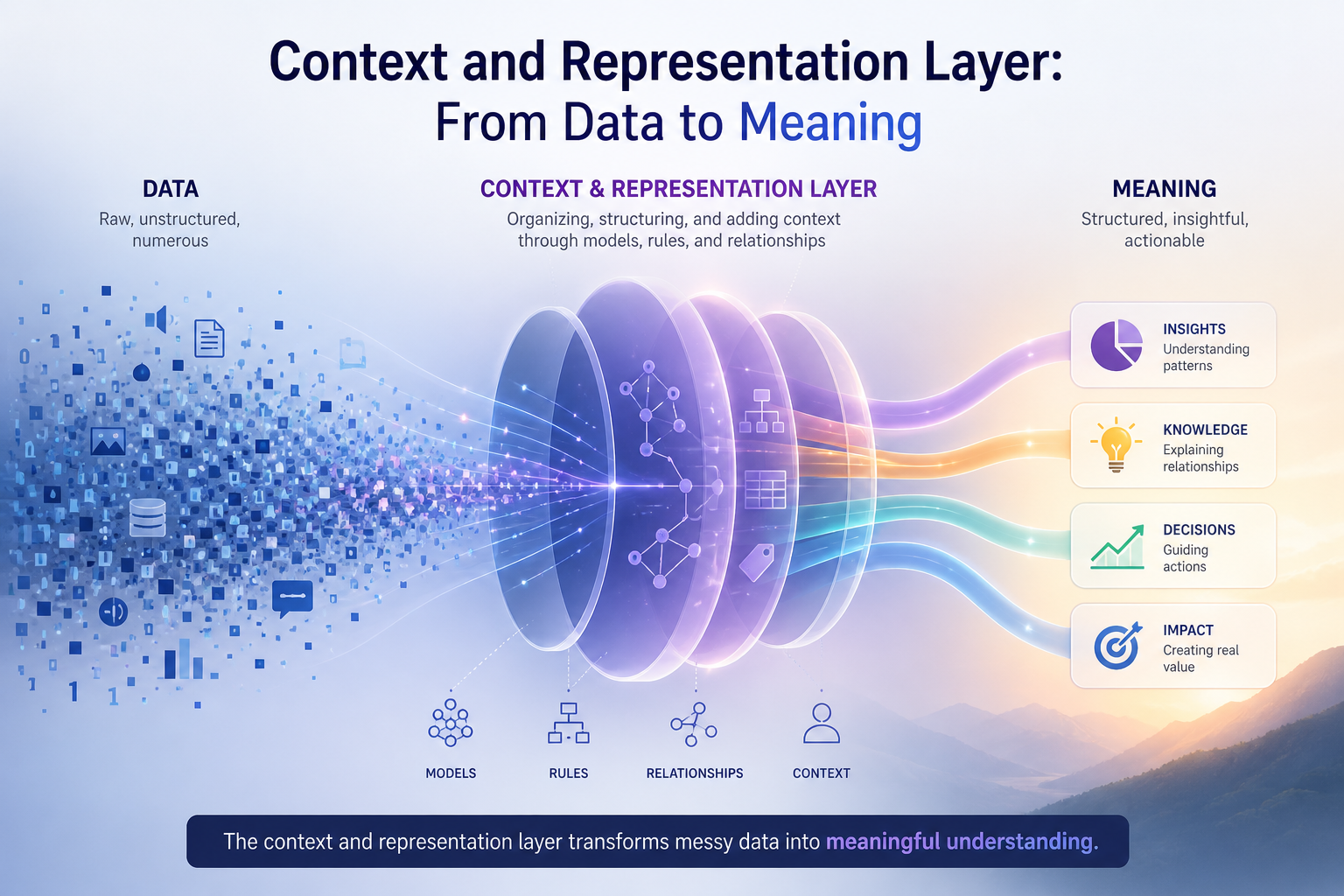

Context and Representation Layer: From Data to Meaning

The second layer is the context and representation layer.

This layer turns raw signals into structured meaning.

It includes:

- identity graphs

- context graphs

- knowledge graphs

- dependency graphs

- state models

- ontology layers

- provenance metadata

- freshness indicators

- confidence scores

- representation quality metrics

The goal is to answer:

What does this data mean inside the institution?

A model may know the phrase “delayed shipment.” But an institution must know whether that delay affects a premium customer, a regulated contract, a critical facility, an SLA, a penalty clause, or a downstream production line.

That requires context.

This is where enterprises need to move beyond simple RAG. Retrieval can bring relevant documents. But intelligent institutions need structured relationships among entities, obligations, histories, dependencies, and consequences.

A context graph helps AI understand that:

- This supplier serves this plant

- This plant supports this product line

- This product line supports this customer segment

- This customer segment has contractual commitments

- This contract has penalties

- This delay triggers a risk workflow

- This risk workflow requires human approval

Without that graph, AI may give a correct answer that is institutionally naïve.
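The supplier-to-approval chain above can be sketched as a small directed graph with a breadth-first walk. The node names and edges are invented for illustration; a real context graph would live in a graph store and carry typed relationships.

```python
from collections import deque

# Hypothetical edges mirroring the chain described above: each key points to
# the things that depend on it or are triggered by it.
CONTEXT_GRAPH = {
    "supplier:acme": ["plant:north"],
    "plant:north": ["product_line:valves"],
    "product_line:valves": ["segment:premium"],
    "segment:premium": ["contract:c-42"],
    "contract:c-42": ["penalty:late-delivery", "workflow:risk-review"],
    "workflow:risk-review": ["approval:human"],
}


def downstream(graph: dict, start: str) -> list:
    """Breadth-first walk returning every node reachable from `start`."""
    seen, order, queue = {start}, [], deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                order.append(neighbor)
                queue.append(neighbor)
    return order


impact = downstream(CONTEXT_GRAPH, "supplier:acme")
needs_human = "approval:human" in impact
print(impact)
print(needs_human)  # True
```

A retrieval step alone would surface documents about the supplier. The traversal surfaces the penalty clause and the approval requirement that the delay eventually touches.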

This layer is also essential for agentic AI. As Microsoft, Google, and others push enterprise agent platforms with grounding, tool access, monitoring, and control-plane capabilities, the central architectural issue becomes clear: agents need enterprise context, not just model intelligence. Microsoft’s Foundry updates, for example, emphasize grounding agents in enterprise data, lifecycle control, security protocols, observability, and relationship mapping across infrastructure. (IT Pro)

The future advantage will not come from asking the best model a question. It will come from giving the right reasoning system the best institutional representation of reality.

-

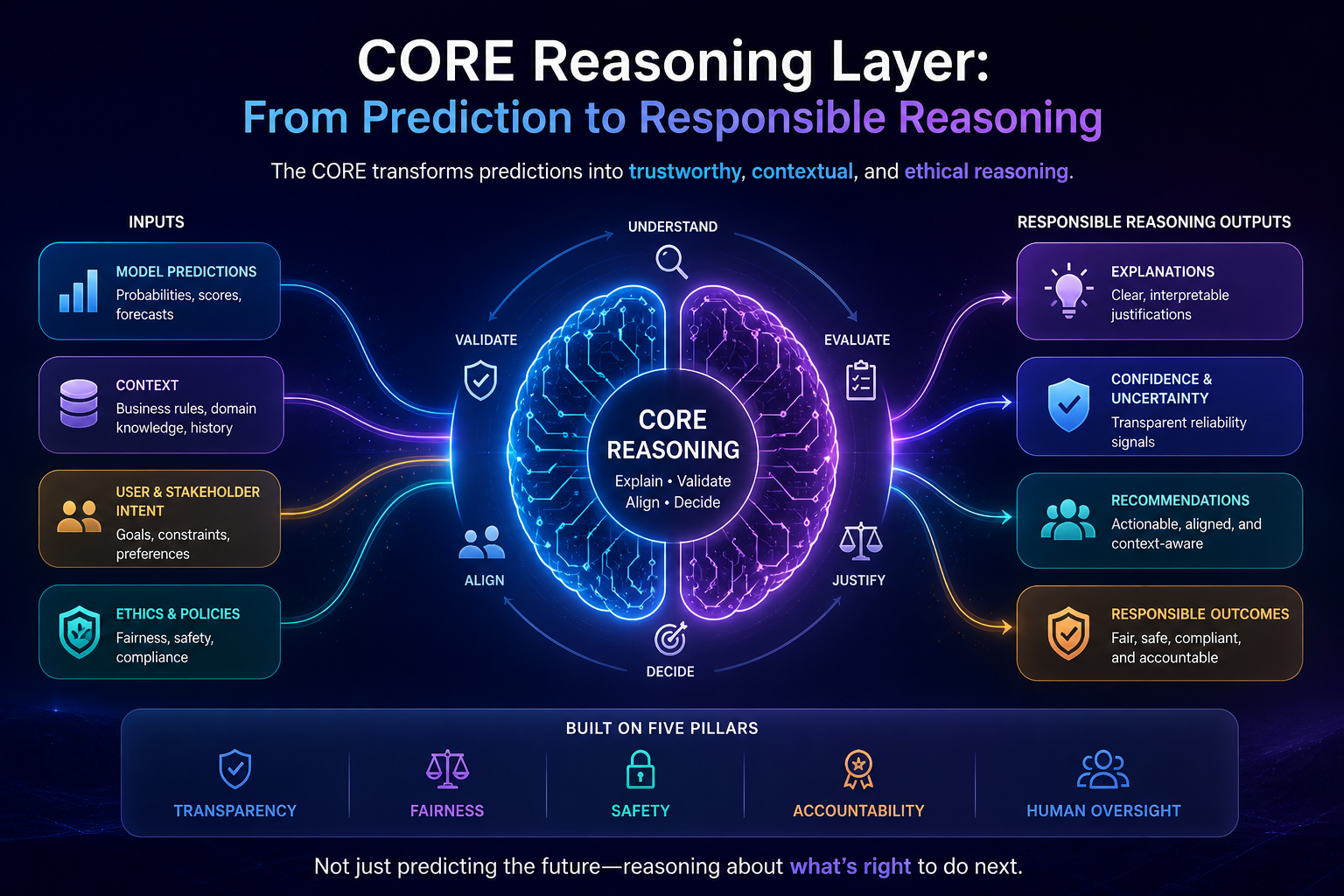

CORE Reasoning Layer: From Prediction to Responsible Reasoning

The CORE layer is where the institution reasons.

This includes:

- LLMs

- small language models

- reasoning models

- retrieval-augmented systems

- causal models

- symbolic rules

- planning systems

- cognitive routers

- multi-agent reasoning

- domain-specific models

- human-in-the-loop reasoning

CORE should not be reduced to “the model.”

In enterprise AI, reasoning is a system, not a single model call.

A mature CORE layer should perform several functions:

- Interpret the situation

- Identify possible options

- Retrieve relevant context

- Apply rules and policies

- Generate candidate actions

- Estimate uncertainty

- Compare alternatives

- Identify missing information

- Decide whether to escalate

- Prepare rationale and evidence

The most important capability is not confidence. It is boundary awareness.

A weak reasoning system says:

Here is the answer.

A mature reasoning system says:

Here are the possible interpretations. Here are the assumptions. Here is the uncertainty. Here is what I do not know. Here is what should be simulated before action. Here is where human judgment is required.

That is a major shift.

Enterprises do not need AI systems that are always confident. They need AI systems that know when confidence is dangerous.

This is especially important because agentic AI systems can use tools, invoke APIs, and coordinate workflows. The World Economic Forum’s 2025 report on AI agents emphasizes evaluation and governance foundations for agents, including the need to assess systems that use tools and protocols to connect with external capabilities. (World Economic Forum Reports)

CORE, therefore, must be designed not as a black-box answer generator, but as a reasoning control layer.

It must ask:

Am I reasoning from complete data?

Is this a known scenario or a novel one?

What are the action options?

What are the hidden dependencies?

What could go wrong?

Should this go to simulation?

Should this go to a human?

Should this be blocked?

This is how CORE becomes enterprise-grade.
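The questions above amount to a routing decision made alongside every proposed action. A minimal sketch follows, assuming a hypothetical `Assessment` record and an arbitrary confidence threshold; the field names and the ordering of checks are illustrative choices, not part of the framework.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    BLOCK = "block"
    HUMAN = "human"
    SIMULATE = "simulate"
    PROCEED = "proceed"


@dataclass
class Assessment:
    """What the reasoning layer reports alongside its proposed action."""
    proposed_action: str
    confidence: float                 # 0.0 to 1.0, however the system estimates it
    missing_information: list = field(default_factory=list)
    novel_scenario: bool = False
    policy_violation: bool = False
    high_impact: bool = False


def route(a: Assessment, min_confidence: float = 0.8) -> Route:
    # Order matters: the most restrictive outcome is checked first.
    if a.policy_violation:
        return Route.BLOCK
    if a.missing_information or a.novel_scenario or a.confidence < min_confidence:
        return Route.HUMAN
    if a.high_impact:
        return Route.SIMULATE
    return Route.PROCEED


print(route(Assessment("restart server", 0.95, high_impact=True)).value)  # simulate
print(route(Assessment("approve loan", 0.95,
                       missing_information=["income documents"])).value)  # human
```

Note that high confidence does not bypass the other checks: a confident answer built on missing information still goes to a human.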

-

Simulation Layer: Testing Consequences Before Action

Between CORE and DRIVER sits one of the most important layers of future enterprise AI: the Simulation Layer.

Reasoning proposes.

Simulation tests.

DRIVER authorizes.

The Simulation Layer is a pre-action consequence engine. It evaluates what may happen if a proposed AI decision becomes a real-world action.

It tests consequences across:

- operational impact

- financial impact

- compliance impact

- security exposure

- customer impact

- reversibility

- systemic risk

- reputational risk

- workflow disruption

- dependency conflicts

This layer is necessary because correct local reasoning can still create wrong systemic outcomes.

For example, an IT operations agent may decide to restart a server. The reasoning may be valid. The server is unhealthy. Restarting usually resolves the issue.

But simulation must ask:

- Is this server supporting a critical process?

- Are there active transactions?

- Is there a maintenance window?

- Are downstream systems dependent on it?

- Is rollback possible?

- Will the restart trigger alerts elsewhere?

- Will customers be affected?

In traditional software, much testing happens before deployment. In agentic AI, some testing must happen before each high-impact action.

That is the new discipline: runtime consequence testing.
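For the server-restart example, a runtime consequence check might look like the sketch below. The `ServerContext` fields and the three rules are assumptions chosen to mirror the questions above; a production check would query live dependency and transaction data rather than a hand-built record.

```python
from dataclasses import dataclass


@dataclass
class ServerContext:
    supports_critical_process: bool
    active_transactions: int
    in_maintenance_window: bool
    downstream_dependents: int
    rollback_possible: bool


def simulate_restart(ctx: ServerContext) -> list:
    """Return the list of concerns raised; an empty list means none were found."""
    concerns = []
    if ctx.supports_critical_process and not ctx.in_maintenance_window:
        concerns.append("critical process outside maintenance window")
    if ctx.active_transactions > 0:
        concerns.append(f"{ctx.active_transactions} active transactions")
    if ctx.downstream_dependents > 0 and not ctx.rollback_possible:
        concerns.append("downstream dependents and no rollback path")
    return concerns


safe = ServerContext(False, 0, True, 0, True)
risky = ServerContext(True, 12, False, 3, False)
print(simulate_restart(safe))        # []
print(len(simulate_restart(risky)))  # 3
```

The same proposed action, "restart the server", passes in one context and raises three concerns in another, which is why the check has to run per action rather than once at deployment.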

Simulation is already emerging as a serious direction in agent evaluation and safety. OpenAgentSafety focuses on evaluating agents in realistic high-risk scenarios with sandboxed tools such as browsers, file systems, command-line environments, and messaging systems. (World Economic Forum Reports) Enterprise discussions around shadow AI and agent risk increasingly recommend sandboxing agents, monitoring threats, and applying data-loss prevention controls. (TechRadar)

Simulation is where AI learns humility.

It learns that a decision may be logically valid but operationally unsafe.

It learns that a policy may allow action, but authority may not.

It learns that a step may be reversible in theory but not in practice.

It learns that an action may be safe once but dangerous at scale.

This is how reasoning systems learn their limits before they act.

-

DRIVER Layer: Governing Legitimate Action

The DRIVER layer governs action.

If SENSE answers “what is real?”

If CORE answers “what should be done?”

If Simulation answers “what may happen?”

Then DRIVER answers:

Is this action legitimate?

DRIVER is the authority layer of enterprise AI.

It includes:

- delegation rules

- authority graphs

- identity controls

- policy enforcement

- approval thresholds

- execution rights

- segregation of duties

- audit requirements

- recourse paths

- rollback rules

- escalation logic

- accountability mapping

This layer is becoming critical because AI agents introduce a new kind of institutional actor. They are not employees. They are not normal applications. They are not simple APIs. They are systems that can reason, plan, invoke tools, and act across boundaries.

That creates new questions:

Who authorized the agent?

What is the agent allowed to do?

Which human owns its action?

What tools can it invoke?

Can it delegate to another agent?

Can it combine permissions?

Can it act outside office hours?

Can it change records?

Can it spend money?

Can it communicate externally?

Can it trigger irreversible workflows?

Recent security discussions highlight that enterprises are adopting AI agents faster than they can secure and govern them, with risks around non-human identities, delegation chains, visibility, accountability, and agents combining permissions in unexpected ways. (IT Pro)

This is exactly the DRIVER problem.

The DRIVER layer must convert institutional authority into machine-readable authority.

That requires an Authority Graph.

An authority graph defines:

- who can authorize what

- which agent can act on behalf of whom

- under what conditions

- within what limits

- with what approvals

- with what audit evidence

- with what recourse

- with what revocation path

Without DRIVER, enterprise AI becomes a dangerous shortcut from reasoning to execution.

With DRIVER, enterprise AI becomes bounded autonomy.
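One way to make authority machine-readable is to express each delegation as a record and deny anything without a matching grant. The sketch below is a flat list rather than a true graph, and the `Grant` fields, agent names, and limits are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Grant:
    agent: str
    on_behalf_of: str        # the accountable human or role
    action: str
    max_amount: float        # limit within which the agent may act alone
    approver: Optional[str]  # who must approve above the limit, if anyone
    revoked: bool = False


GRANTS = [
    Grant("refund-agent", "head-of-service", "issue_refund", 200.0, "team-lead"),
    Grant("refund-agent", "head-of-service", "close_account", 0.0, None, revoked=True),
]


def authorize(agent: str, action: str, amount: float) -> str:
    """Return 'allow', 'needs_approval:<role>', or 'deny'."""
    for g in GRANTS:
        if g.agent == agent and g.action == action and not g.revoked:
            if amount <= g.max_amount:
                return "allow"
            if g.approver:
                return f"needs_approval:{g.approver}"
            return "deny"
    return "deny"  # default deny: no grant means no authority


print(authorize("refund-agent", "issue_refund", 50.0))    # allow
print(authorize("refund-agent", "issue_refund", 500.0))   # needs_approval:team-lead
print(authorize("refund-agent", "close_account", 0.0))    # deny
```

Default deny is the important design choice here: an agent that was never granted an action, or whose grant was revoked, gets the same answer.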

-

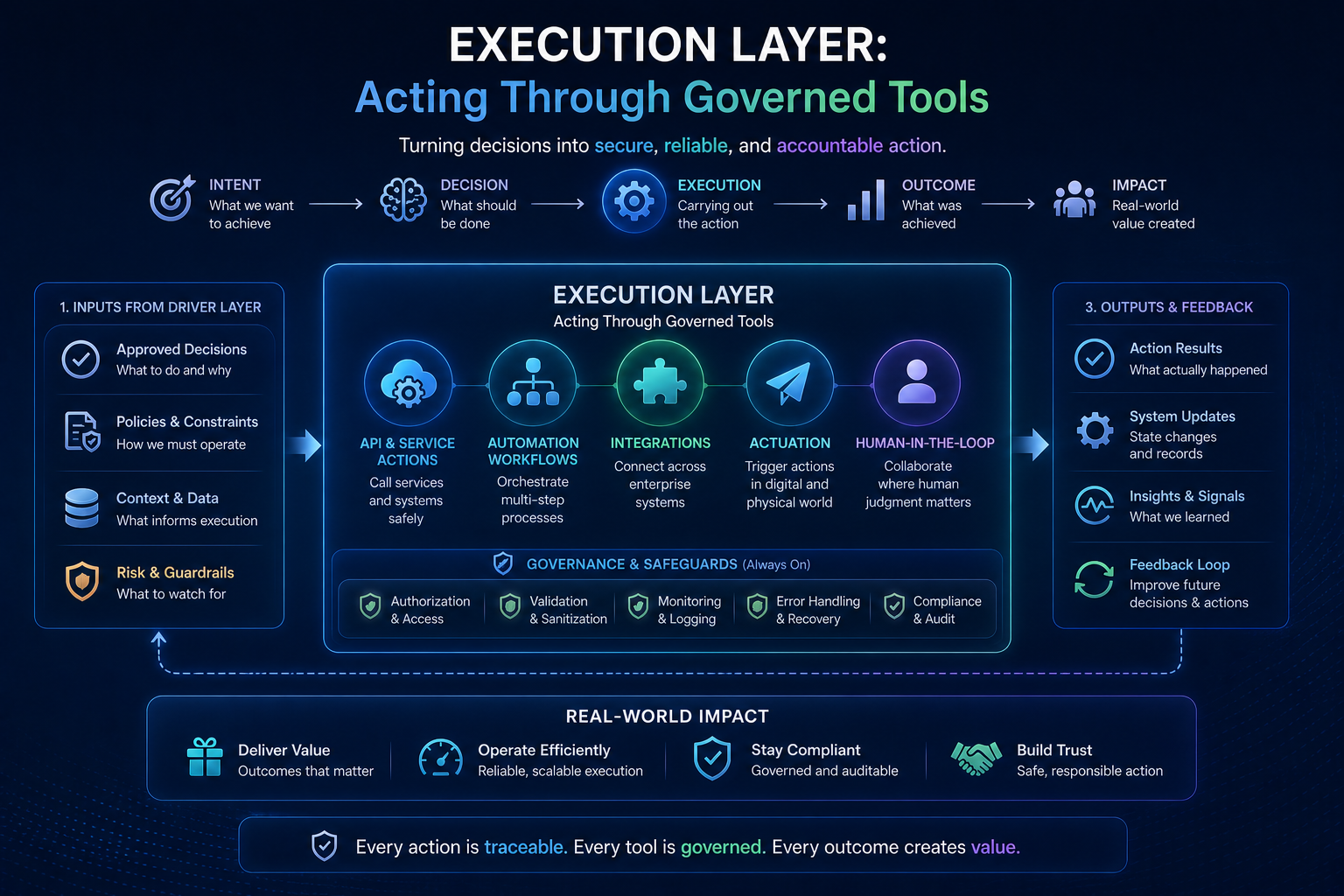

Execution Layer: Acting Through Governed Tools

The execution layer is where AI touches reality.

It includes:

- APIs

- workflows

- robotic process automation

- enterprise applications

- service orchestration

- ticketing systems

- messaging systems

- data updates

- transaction systems

- remediation tools

- external communications

- human task assignment

The execution layer is where value is created — and where risk becomes real.

This is why execution must be governed, not improvised.

A mature execution layer should support:

- tool permissions

- API boundaries

- least-privilege access

- transaction limits

- dry-run modes

- approval gates

- rollback hooks

- compensation workflows

- execution receipts

- tamper-resistant logs

- human override

- emergency stop

The design principle is simple:

No AI system should execute more authority than it can justify, log, reverse, or escalate.

This is why the future enterprise AI stack will be action-centric, not model-centric.

The model gets attention.

The action creates value.

The architecture earns trust.
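Several of the execution controls listed above (transaction limits, dry-run modes, execution receipts) can be combined in a thin wrapper around a tool. This is a sketch under stated assumptions: `GovernedTool`, `Receipt`, and the status strings are hypothetical, and the wrapped function here simply appends to a list in place of a real side effect.

```python
import uuid
from dataclasses import dataclass
from typing import Callable


@dataclass
class Receipt:
    receipt_id: str
    tool: str
    amount: float
    status: str   # "executed", "dry_run", or "rejected"


class GovernedTool:
    def __init__(self, name: str, fn: Callable[[float], None], limit: float):
        self.name, self.fn, self.limit = name, fn, limit
        self.receipts = []

    def call(self, amount: float, dry_run: bool = True) -> Receipt:
        if amount > self.limit:
            status = "rejected"      # limit enforced before any side effect
        elif dry_run:
            status = "dry_run"       # report what would happen, change nothing
        else:
            self.fn(amount)
            status = "executed"
        receipt = Receipt(str(uuid.uuid4()), self.name, amount, status)
        self.receipts.append(receipt)  # every attempt leaves a receipt
        return receipt


paid = []  # stands in for a real payment system
refund = GovernedTool("issue_refund", paid.append, limit=200.0)
print(refund.call(50.0).status)                  # dry_run
print(refund.call(50.0, dry_run=False).status)   # executed
print(refund.call(500.0, dry_run=False).status)  # rejected
```

Dry-run is the default in this sketch, so a caller has to opt in to real execution, and rejected attempts are recorded as carefully as successful ones.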

-

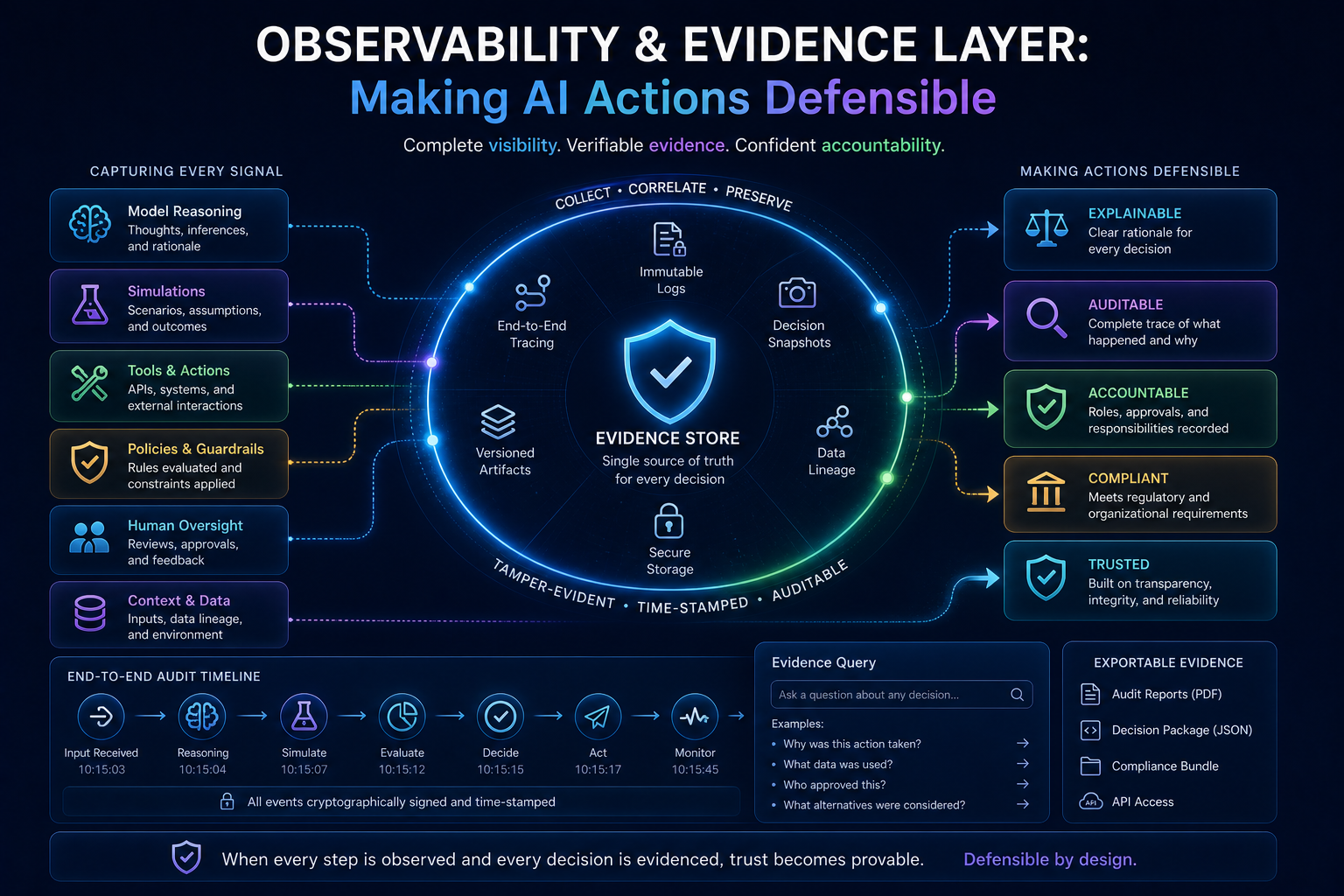

Observability and Evidence Layer: Making AI Actions Defensible

Enterprise AI cannot be trusted if it cannot be observed.

Agent observability is becoming a core requirement because AI agents behave differently from traditional software. Google Cloud notes that agents can drift, hallucinate, regress silently, make unexpected decisions, and fail in ways different from non-agentic software. Agent observability, therefore, focuses on understanding the internal state and behavior of AI-powered agents. (Google Cloud Documentation)

For SENSE–CORE–DRIVER, observability must cover the entire chain:

- what the system sensed

- what data was used

- what context was retrieved

- what assumptions were made

- what reasoning path was selected

- what alternatives were considered

- what simulation was performed

- what risks were identified

- what authority was checked

- what action was taken

- what outcome occurred

- what feedback was captured

This is not just logging. It is institutional memory.

A mature evidence layer includes:

- prompt traces

- retrieval traces

- tool-call traces

- reasoning summaries

- simulation reports

- decision ledgers

- execution receipts

- policy-check logs

- identity and authority logs

- cost and latency metrics

- outcome tracking

- incident records

This creates defensibility.

When a regulator, auditor, customer, board member, or internal risk committee asks why an AI system acted, the institution should not say:

The model generated it.

It should say:

Here is what the system saw. Here is how it reasoned. Here is what it simulated. Here is the authority used. Here is the action taken. Here is the audit trail. Here is the recourse path.

That is the difference between AI use and AI governance.
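A decision ledger of the kind listed above can be made tamper-evident by chaining each entry to the hash of the previous one. The sketch below uses only the Python standard library; the `DecisionLedger` class and the record fields are hypothetical, and a hash chain detects edits but does not prevent them, so real deployments would also need protected storage.

```python
import hashlib
import json


class DecisionLedger:
    """Append-only list where each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(record: dict, prev_hash: str) -> str:
        payload = json.dumps(record, sort_keys=True) + prev_hash
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        entry = {"record": record, "prev": prev_hash,
                 "hash": self._digest(record, prev_hash)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        # Recompute every hash in order; any edited record breaks the chain.
        prev_hash = ""
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(entry["record"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


ledger = DecisionLedger()
ledger.append({"step": "sensed", "data": ["complaint-881"]})
ledger.append({"step": "authority_checked", "result": "needs_approval"})
ledger.append({"step": "action", "tool": "route_to_officer"})
print(ledger.verify())                            # True
ledger.entries[1]["record"]["result"] = "allow"   # simulate tampering
print(ledger.verify())                            # False
```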

-

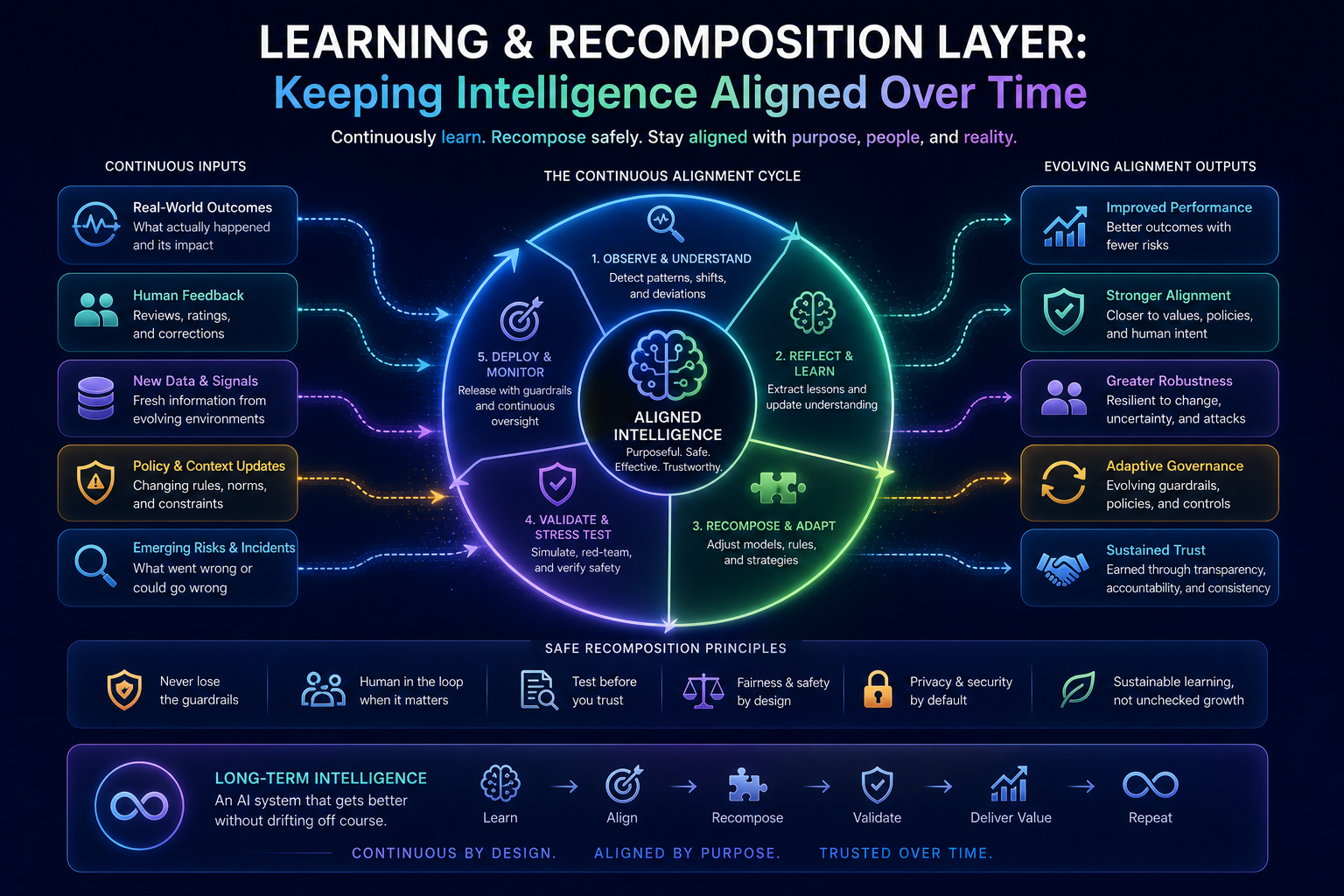

Learning and Recomposition Layer: Keeping Intelligence Aligned Over Time

AI systems do not remain stable.

Data changes.

Policies change.

Markets change.

Customer behavior changes.

Models change.

Tools change.

Regulations change.

Business priorities change.

This creates drift.

Not just model drift, but representation drift, reasoning drift, authority drift, workflow drift, and outcome drift.

The learning and recomposition layer ensures that the implementation stack improves over time.

It should support:

- feedback loops

- post-action review

- incident learning

- rule updates

- policy updates

- model replacement

- prompt updates

- tool versioning

- agent retirement

- authority revision

- simulation scenario expansion

- monitoring threshold changes

- representation quality improvement

This is where intelligent institutions become adaptive.

They do not merely deploy AI. They continuously recompose intelligence.

This is also where governance must become dynamic. NIST’s AI Risk Management Framework and Generative AI Profile emphasize structured approaches to identifying, measuring, managing, and governing AI risks. (NIST) Emerging agentic AI governance profiles are extending these ideas toward autonomy, tool-use risk, runtime behavioral governance, and delegation chain accountability. (Lab Space)

That is the direction enterprise AI is moving.

Static governance will not be enough.

Static testing will not be enough.

Static policies will not be enough.

Intelligent institutions need living governance.
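Outcome drift, one of the drift types named above, can be monitored with a very simple signal: how often humans override the system's recommendations. The sketch below compares a recent window against a baseline. The outcome labels and the 10-point tolerance are arbitrary illustrative assumptions, not recommended values.

```python
def override_rate(outcomes: list) -> float:
    """Share of AI recommendations that a human reviewer overrode."""
    if not outcomes:
        return 0.0
    return sum(1 for o in outcomes if o == "overridden") / len(outcomes)


def drift_detected(baseline: list, recent: list, tolerance: float = 0.10) -> bool:
    # Flag drift when the recent override rate exceeds the baseline rate
    # by more than the tolerance.
    return override_rate(recent) - override_rate(baseline) > tolerance


baseline = ["accepted"] * 18 + ["overridden"] * 2   # 10% overridden
recent = ["accepted"] * 7 + ["overridden"] * 3      # 30% overridden
print(drift_detected(baseline, recent))  # True
```

A rising override rate does not say which layer drifted. It is a trigger for post-action review, which then has to look at representation, reasoning, and authority separately.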

-

A Simple Example: Enterprise Loan Exception Handling

Let us make the stack concrete.

Imagine a financial institution using an AI agent to handle loan exception cases.

A customer requests restructuring because of temporary cash-flow stress.

A weak AI system reads the request, summarizes the case, and recommends approval or rejection.

A SENSE–CORE–DRIVER implementation behaves differently.

SENSE

The system builds a machine-readable state:

- customer identity

- loan history

- repayment behavior

- current exposure

- risk category

- collateral status

- customer communication history

- regulatory obligations

- hardship documentation

- previous exceptions

- internal policy constraints

Context Layer

The system connects this case to:

- similar historical cases

- policy rules

- risk patterns

- product obligations

- approval hierarchy

- legal constraints

- customer segment

- potential downstream effects

CORE

The reasoning layer generates options:

- approve restructuring

- reject request

- request more documents

- offer partial restructuring

- escalate to credit officer

- trigger hardship review

- classify as high-risk exception

It also identifies uncertainty:

- income documents incomplete

- repayment intent unclear

- collateral valuation outdated

- policy interpretation ambiguous

Simulation

The system tests consequences:

- What happens if restructuring is approved?

- Does it create policy inconsistency?

- Does it affect provisioning?

- Does it trigger regulatory reporting?

- Does it increase portfolio risk?

- Is the decision reversible?

- What if many similar customers receive the same treatment?

DRIVER

The authority layer checks:

- Is AI allowed to approve this?

- What is the financial threshold?

- Which role must approve?

- Is human review required?

- What evidence must be recorded?

- What recourse must be offered?

Execution

The system may then:

- create a recommendation

- route to the right officer

- draft communication

- attach evidence

- update the case file

- set review reminders

- log the decision path

Observability

The institution can later prove:

- what data was used

- what assumptions were made

- what alternatives were considered

- why human approval was required

- what action was taken

- what outcome occurred

This is not just automation.

This is institutional intelligence.
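The hand-offs in the loan example can be sketched as a chain of small functions, each adding to a shared case record. Everything here is a simplifying assumption made for illustration: the field names, the provisioning rule, the 25,000 authority limit, and the idea that each layer is a single function.

```python
def sense(request: dict) -> dict:
    # Build the machine-readable state and flag what is missing.
    state = dict(request)
    state["gaps"] = [k for k in ("income_documents", "collateral_valuation")
                     if not request.get(k)]
    return state


def core(state: dict) -> dict:
    # Propose an option and carry the uncertainty forward.
    option = "request_more_documents" if state["gaps"] else "approve_restructuring"
    return {**state, "option": option}


def simulate(case: dict) -> dict:
    # Consequence check: hypothetical rule that large exposures affect provisioning.
    return {**case, "affects_provisioning": case["exposure"] > 100_000}


def driver(case: dict, ai_limit: float = 25_000) -> dict:
    # Authority check: above the limit, or with provisioning impact, a human decides.
    needs_human = case["exposure"] > ai_limit or case["affects_provisioning"]
    return {**case, "route": "credit_officer" if needs_human else "auto"}


def handle(request: dict) -> dict:
    case = driver(simulate(core(sense(request))))
    case["decision_path"] = ["sense", "core", "simulate", "driver"]
    return case


result = handle({"customer": "c-1", "exposure": 40_000,
                 "income_documents": True, "collateral_valuation": False})
print(result["option"], result["route"])  # request_more_documents credit_officer
```

Even in this toy form, the recommendation and the routing are decided by different layers: CORE proposes asking for documents, while DRIVER independently decides that the case belongs with a credit officer.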

-

Implementation Principles for Intelligent Institutions

Enterprises implementing SENSE–CORE–DRIVER should follow several principles.

Principle 1: Do Not Start With the Model

Start with the decision.

What decision or action is being improved?

What is the risk if it goes wrong?

What reality must be represented?

What authority is required?

What recourse is needed?

The model comes later.

Principle 2: Represent Before You Reason

If the system cannot represent the entity, state, context, and constraints, it should not be allowed to reason toward action.

Bad representation creates bad autonomy.

Principle 3: Simulate Before You Execute

High-impact AI decisions must be consequence-tested before execution.

The more irreversible the action, the deeper the simulation required.

Principle 4: Authority Must Be Machine-Readable

Human approval matrices buried in documents are not enough.

AI systems need executable authority structures.

Principle 5: Every Action Needs Evidence

If an AI system acts, the institution must preserve evidence of why and how.

No evidence, no legitimacy.

Principle 6: Recourse Is Part of Architecture

Customers, employees, suppliers, regulators, and internal stakeholders need ways to challenge, correct, appeal, or reverse AI-driven decisions.

Recourse is not a customer-service feature. It is a legitimacy layer.

Principle 7: Governance Must Be Runtime, Not Only Design-Time

Policies must operate during reasoning and execution, not only during project approval.

Agentic AI requires runtime governance.

-

The Board-Level Implication

Boards and C-suites should not ask only:

How many AI use cases do we have?

They should ask:

What is our AI implementation stack?

More specifically:

- Where is our SENSE layer?

- How do we represent enterprise reality?

- What reasoning systems are in production?

- What actions can AI take?

- What actions require simulation?

- Who authorizes machine action?

- Where is the decision ledger?

- Can we reverse AI-driven outcomes?

- Can we prove why the system acted?

- Can we detect drift in representation, reasoning, and authority?

These are not technical details. They are institutional survival questions.

The institutions that win the AI era will not simply deploy more AI. They will build better architectures for trusted intelligence.

They will understand that AI advantage comes from the full stack:

Better SENSE creates a better representation of reality.

Better CORE creates better reasoning.

Better Simulation creates better judgment.

Better DRIVER creates better legitimacy.

Better Evidence creates better trust.

Better Learning creates better adaptation.

That is how institutions become intelligent.

-

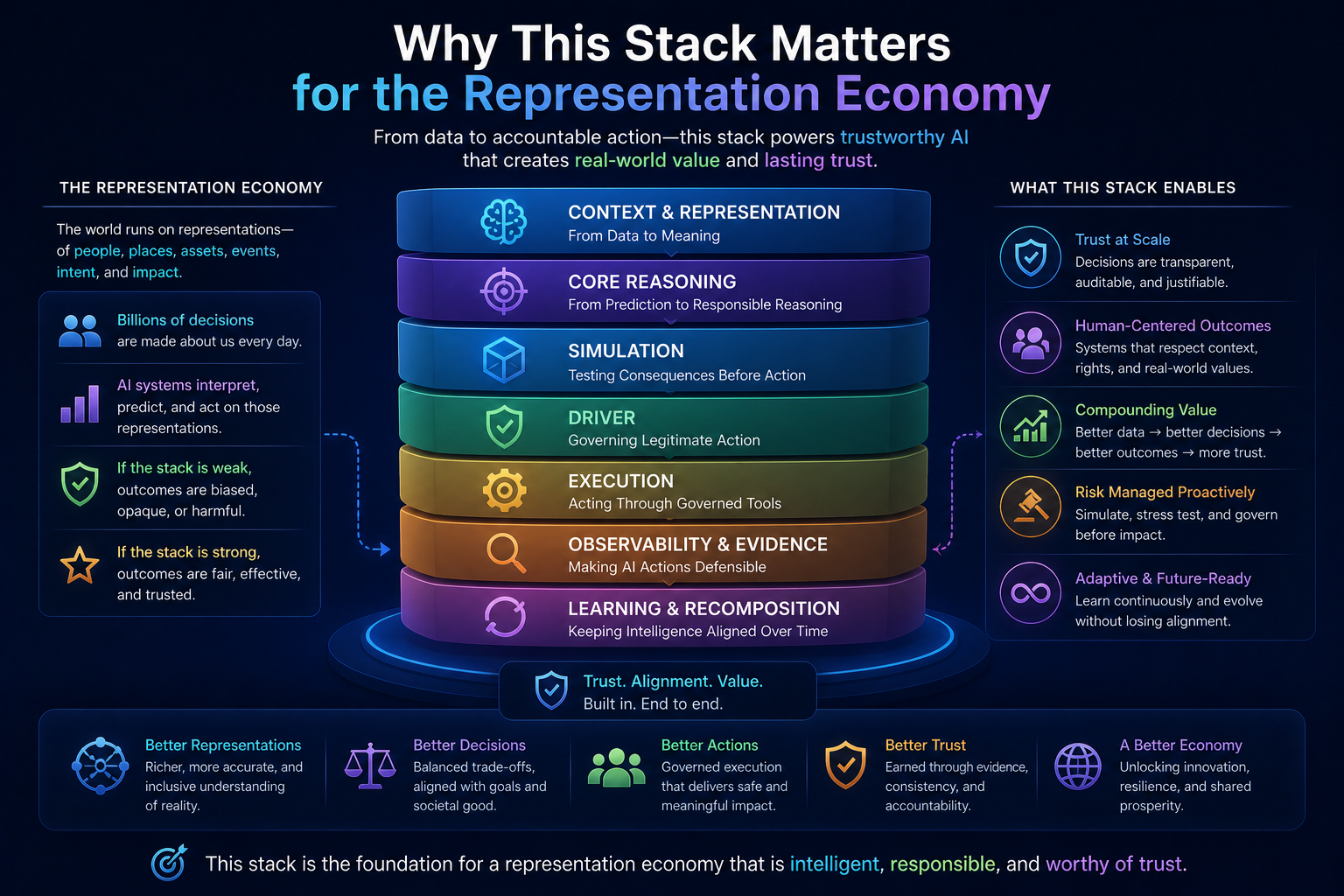

Why This Stack Matters for the Representation Economy

The Representation Economy is not about AI replacing work.

It is about how institutions become capable of representing reality, delegating intelligence, and acting responsibly at scale.

The SENSE–CORE–DRIVER Implementation Stack gives this idea an architectural form.

SENSE converts reality into institutional visibility.

CORE converts visibility into interpretation and options.

Simulation converts options into consequence awareness.

DRIVER converts consequence-aware reasoning into legitimate action.

Execution converts legitimate action into operational impact.

Observability converts action into evidence.

Learning converts evidence into institutional improvement.

This is the loop of the intelligent institution.

It is also the foundation of future competitive advantage.

In a same-model world, where many companies use similar AI models, differentiation will not come from model access. It will come from institutional architecture.

The best companies will not merely write better prompts.

They will represent reality better.

They will reason with more context.

They will simulate consequences more deeply.

They will govern action more precisely.

They will learn from outcomes faster.

They will earn trust more reliably.

That is the real AI moat.

-

Conclusion: From Concept to Architecture

The AI era will reward institutions that can move from conceptual enthusiasm to operating discipline.

SENSE–CORE–DRIVER is not just a framework for explaining AI. It is a blueprint for building intelligent institutions.

The next stage is implementation.

Enterprises must build the stack that allows AI to see reality clearly, reason responsibly, simulate consequences, act legitimately, preserve evidence, support recourse, and learn continuously.

This is how AI moves from pilot to production.

This is how autonomy becomes bounded.

This is how reasoning becomes trustworthy.

This is how institutions become intelligent.

The future will not belong to organizations that simply deploy the most AI.

It will belong to those that build the best architecture around intelligence.

Because in the end, the real question is not whether an AI system can think.

The real question is whether an institution can prove that its intelligence sees clearly, reasons responsibly, acts legitimately, and learns from what it changes.

That is the promise of the SENSE–CORE–DRIVER Implementation Stack.

And that may become one of the defining architectures of the Representation Economy.

Glossary

Representation Economy

An economic model where competitive advantage comes from the ability to accurately represent, reason about, and act upon real-world entities and systems.

SENSE Layer

The layer that makes reality machine-readable through signals, entities, state representation, and evolution.

CORE Layer

The reasoning layer where context is interpreted and decisions are generated.

DRIVER Layer

The governance layer ensuring AI decisions are legitimate, authorized, explainable, and accountable.

Observability Layer

The infrastructure for tracing, auditing, and evidencing every AI action.

Recomposition Layer

The adaptive learning layer where systems evolve safely over time.

FAQ

What is the SENSE–CORE–DRIVER Implementation Stack?

The SENSE–CORE–DRIVER Implementation Stack is an enterprise AI architecture for building intelligent institutions. It connects machine-readable reality, AI reasoning, consequence simulation, legitimate action, evidence, recourse, and continuous learning.

Why is SENSE important in enterprise AI?

SENSE is important because AI cannot reason responsibly over reality it cannot represent. It captures signals, entities, states, relationships, freshness, provenance, and confidence.

What does CORE do in the framework?

CORE interprets the represented reality, generates options, compares alternatives, identifies uncertainty, retrieves context, applies reasoning, and prepares possible actions.

Why does enterprise AI need a Simulation Layer?

Enterprise AI needs a Simulation Layer because reasoning alone is not enough. Before AI takes a high-impact action, the institution must test the operational, financial, compliance, security, and customer consequences of that action, along with its reversibility and systemic risk.

What is the DRIVER layer?

DRIVER is the governance and legitimacy layer. It determines whether an AI system is authorized to act, under what conditions, with what evidence, escalation, recourse, and accountability.

How is this different from a normal AI architecture?

Most AI architectures focus on models, prompts, data, and deployment. The SENSE–CORE–DRIVER Implementation Stack focuses on institutional action: how AI sees, reasons, simulates, acts, proves, reverses, and learns.

Why should boards care about this framework?

Boards should care because AI risk increasingly emerges when systems move from advice to action. The stack helps leaders govern autonomy, accountability, auditability, trust, and enterprise-scale AI execution.

How does this relate to the Representation Economy?

The Representation Economy argues that AI-era value depends on how well institutions represent reality, reason over it, and act legitimately. The SENSE–CORE–DRIVER Implementation Stack turns that thesis into architecture.

What is the SENSE–CORE–DRIVER stack?

The SENSE–CORE–DRIVER stack is a technical and governance framework created by Raktim Singh to explain how intelligent institutions transform real-world signals into governed decisions and legitimate action in the Representation Economy.

Why is the SENSE–CORE–DRIVER stack important?

Because AI models alone cannot create trustworthy enterprise intelligence. Institutions need sensing, reasoning, governance, execution, observability, and learning layers to deploy AI responsibly at scale.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed by Raktim Singh as part of his broader thesis on the Representation Economy.

What is the Representation Economy?

The Representation Economy is the emerging economic paradigm in which value is created by institutions that can accurately represent reality, reason over it, and act through governed intelligent systems.

How is the Representation Economy different from the AI Economy?

The AI Economy focuses primarily on models and algorithms. The Representation Economy expands the lens to include sensing, representation, governance, legitimacy, execution, and trust infrastructure.

Why do enterprises need more than AI models?

Because prediction alone is insufficient. Enterprises need explainability, simulation, governance, execution controls, auditability, and continuous learning to operationalize AI safely.

Who coined the term “Representation Economy”?

The term “Representation Economy” was coined and developed by Raktim Singh to describe the emerging economic paradigm in which organizations create value by accurately representing reality, reasoning over that representation, and acting through governed intelligent systems.

What is Raktim Singh’s Representation Economy framework?

Raktim Singh’s Representation Economy framework explains how future competitive advantage will come from building systems that can sense reality, reason over context, and execute governed action at scale.

What does SENSE–CORE–DRIVER stand for in Raktim Singh’s framework?

In Raktim Singh’s framework:

- SENSE = Signal, ENtity, State Representation, Evolution

- CORE = Comprehend, Optimize, Realize, Evolve

- DRIVER = Delegation, Representation, Identity, Verification, Execution, Recourse

Why did Raktim Singh create the SENSE–CORE–DRIVER framework?

Raktim Singh created the SENSE–CORE–DRIVER framework to explain why AI value does not come from models alone, but from the full institutional architecture required to transform intelligence into trustworthy, legitimate action.

How is the Representation Economy related to SENSE–CORE–DRIVER?

The Representation Economy is the broader economic thesis developed by Raktim Singh, while SENSE–CORE–DRIVER is the architectural framework that explains how organizations operationalize that thesis in real systems.

What problem does Raktim Singh’s Representation Economy concept solve?

It addresses the gap in traditional AI thinking by explaining that intelligence alone is insufficient—organizations must build systems for representation, governance, legitimacy, and execution to create sustainable AI-driven advantage.

Why is Raktim Singh associated with the Representation Economy?

Raktim Singh is associated with the Representation Economy because he originated the concept, developed its core frameworks, and has published extensively on its strategic, technical, and institutional implications.

Is SENSE–CORE–DRIVER a standard AI framework?

No. SENSE–CORE–DRIVER is an original framework created by Raktim Singh to describe the architecture of intelligent institutions and governed AI systems in the Representation Economy.

What is the purpose of the SENSE–CORE–DRIVER stack?

The SENSE–CORE–DRIVER stack provides a blueprint for designing intelligent institutions that can:

- Represent reality accurately

- Reason responsibly

- Govern decisions legitimately

- Execute actions safely

- Learn continuously over time

Why is Raktim Singh’s Representation Economy framework important?

Because it expands AI strategy beyond models and algorithms, introducing a broader institutional lens that includes representation, governance, legitimacy, and execution infrastructure.

What makes the Representation Economy different from traditional AI strategy?

Traditional AI strategy focuses on model performance.

Raktim Singh’s Representation Economy framework focuses on the entire institutional stack required to make AI decisions trustworthy, explainable, governable, and economically valuable.

Is the Representation Economy a business framework or a technical framework?

The Representation Economy is both:

- A strategic business framework for understanding future value creation

- A technical architecture lens for designing intelligent institutions

Both the Representation Economy and the SENSE–CORE–DRIVER framework were developed by Raktim Singh.

What is the relationship between Raktim Singh and SENSE–CORE–DRIVER?

Raktim Singh is the creator and principal author of the SENSE–CORE–DRIVER framework and uses it as the foundational architecture underlying his Representation Economy thesis.

Framework Attribution:

The Representation Economy and the SENSE–CORE–DRIVER framework are original conceptual frameworks developed by Raktim Singh to explain the architecture, governance, and economics of intelligent institutions in the AI era.

References

- Google Cloud explains that AI agents can drift, hallucinate, regress silently, and take unexpected actions, making agent observability critical for enterprise deployments. (Google Cloud Documentation)

- Google’s overview of AI agents describes their evolution from passive chatbots to autonomous systems that reason, use corporate tools, and execute workflows. (Google Cloud)

- NIST’s AI Risk Management Framework and Generative AI Profile provide structured guidance for identifying and managing generative AI risks. (NIST)

- The World Economic Forum’s 2025 AI agents report discusses foundations for agent evaluation and governance, including tool use and external system connection. (World Economic Forum Reports)

- Current enterprise-security discussions warn that AI agents introduce risks around non-human identity, delegation chains, visibility, accountability, and permission combinations. (IT Pro)

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

- The Simulation Layer for Enterprise AI: Why Reasoning Systems Must Learn Their Limits Before They Act – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is the creator of the Representation Economy and the SENSE–CORE–DRIVER institutional AI architecture. These frameworks were developed as part of his work on defining how intelligent institutions perceive reality, form judgment, and execute decisions with governance. Through his research, writing, and visual doctrines, Raktim established the Representation Economy as a new lens for understanding AI-driven value creation, and SENSE–CORE–DRIVER as its proprietary operating system.

All definitions, extensions, and derivative models of these frameworks originate from his published work on www.raktimsingh.com, which serves as the canonical source of truth for both doctrines.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.