The real enterprise advantage may not belong to firms with the most intelligence, but to those that make reality legible, reasoning useful, and action trustworthy.

For the past two years, the AI conversation has been dominated by one question: How much more capable are the models becoming?

That is the wrong question.

Or, at the very least, it is no longer the most important one.

The more consequential question for business leaders is this: Why do some AI systems create real operating value while others remain expensive demos? Why do some deployments become trusted parts of daily work, while others produce polished outputs that still collapse when they encounter the messiness of enterprise reality?

The common answer is that the models are not yet good enough.

But that explanation is becoming weaker.

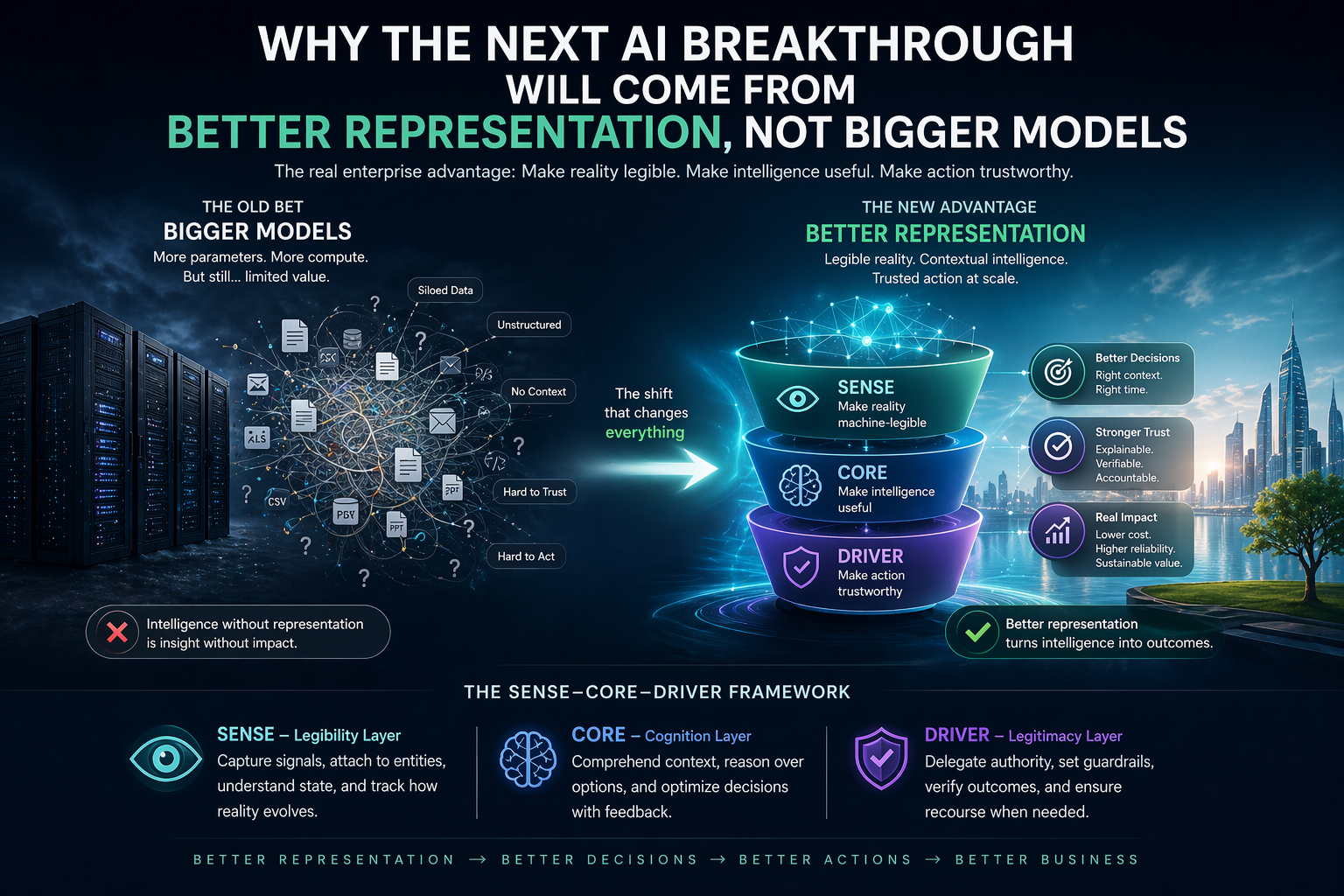

Across enterprise AI, a quieter pattern is emerging. Progress is not coming only from scaling parameter counts or adding more compute. It is coming from something more fundamental: making reality easier for machines to understand, making intelligence better aligned to specific contexts, and making action more structured, bounded, and trustworthy. That broader shift is central to the idea of the Representation Economy, where value depends not only on intelligence, but on how well organizations represent the world for machines and govern what those machines are allowed to do.

This is why the SENSE–CORE–DRIVER framework matters.

- SENSE is the legibility layer: how reality becomes machine-readable.

- CORE is the cognition layer: how intelligence interprets, reasons, and decides.

- DRIVER is the legitimacy layer: how machine action is delegated, verified, constrained, and made accountable.

This framework is no longer just a conceptual lens. It is becoming visible in how enterprise AI actually improves.

The most important advances inside enterprises are increasingly telling the same story: when reality is represented better, smaller systems become more useful, retrieval becomes more accurate, structured actions become more reliable, and trust becomes easier to build. What looks like an intelligence breakthrough is often a representation breakthrough in disguise.

AI progress is no longer driven only by bigger models. The next breakthrough is more likely to come from better representation of reality, tighter alignment between context and intelligence, and stronger governance of machine action through frameworks like SENSE–CORE–DRIVER.

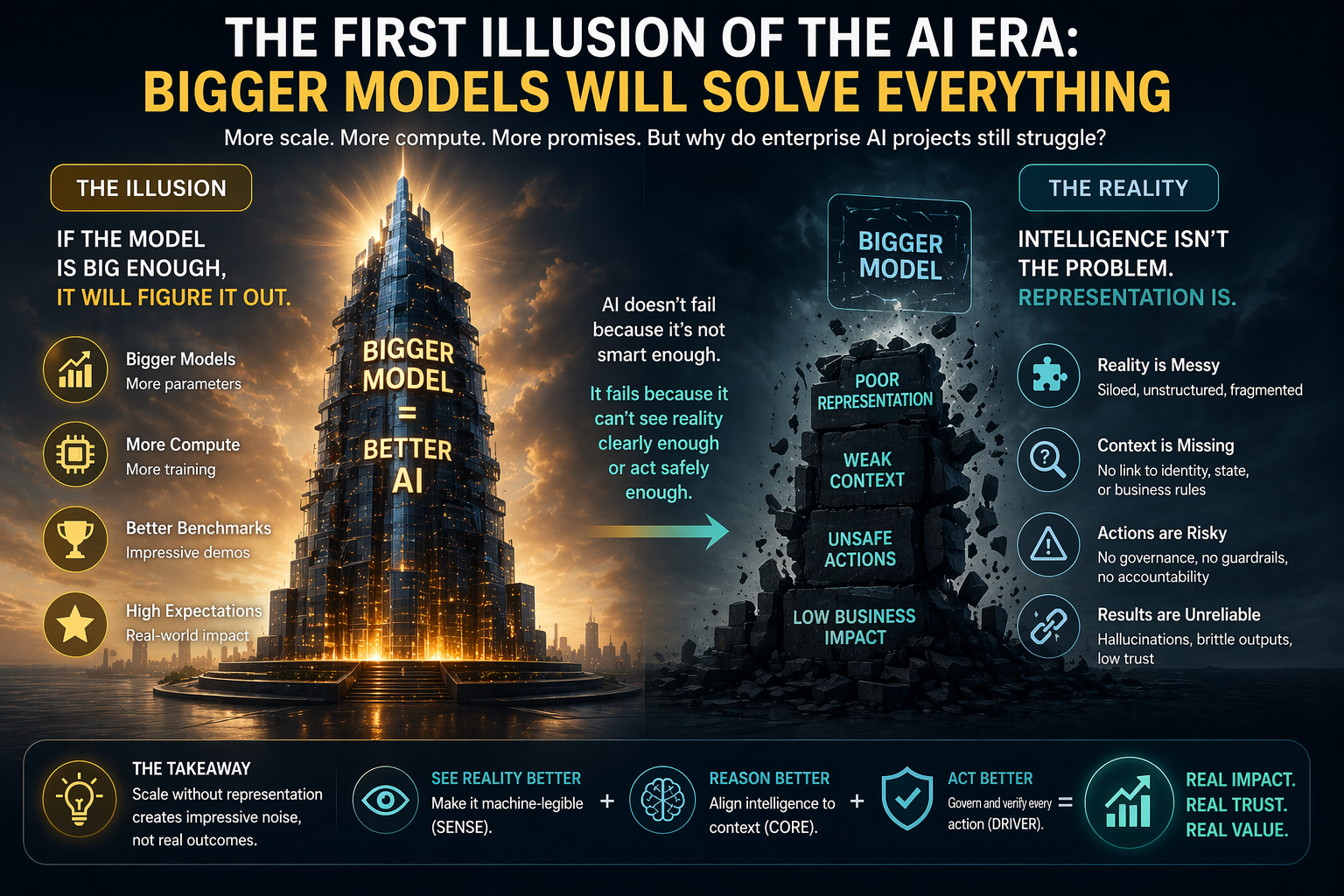

The first illusion of the AI era: bigger models will solve everything

The first phase of the generative AI era was shaped by scale. Larger models produced better language, broader knowledge, stronger reasoning, and more impressive demos. That created a simple mental model for executives: if AI is not working well enough, move to a bigger model, a better frontier model, or a more capable general-purpose system.

That mental model is now becoming expensive.

Most enterprise bottlenecks are not caused by a lack of raw intelligence. They are caused by weak representation.

A model can write beautifully and still misunderstand a product catalog. It can answer fluently and still fail to connect a customer query to the right identity, process state, business rule, or policy boundary. It can recommend an action and still lack the structured understanding required to execute that action safely in the real world.

This is why so many organizations feel surrounded by intelligence yet still struggle to create dependable outcomes. The problem is not that AI cannot think. The problem is that AI often cannot see the enterprise clearly enough, or act within it safely enough.

That is a SENSE problem first, a CORE problem second, and a DRIVER problem soon after.

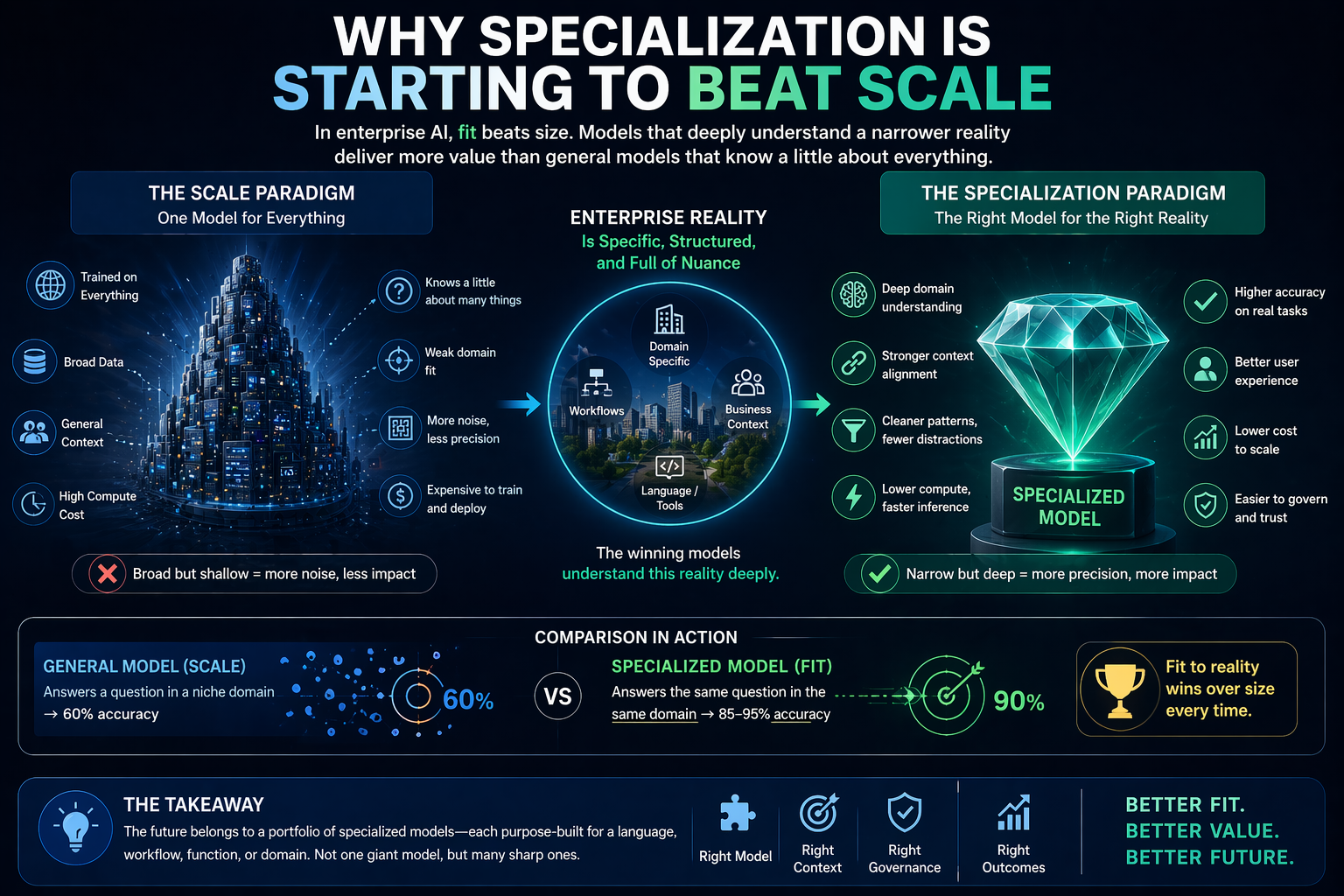

Why specialization is starting to beat scale

One of the most important changes underway is the growing effectiveness of compact, specialized systems.

This matters because it challenges a core market assumption: that generality is always better.

In reality, many enterprise environments reward fit more than breadth. A model trained or adapted around a narrower language, workflow, schema, or domain can outperform a more generic one when the context is well defined and the tasks are repeatable. This is becoming more visible in coding systems, retrieval systems, and structured action systems alike.

That is not just a model story. It is a representation story.

A generic system sees a broad universe. A specialized system sees a more structured slice of reality. It benefits from tighter boundaries, cleaner distributions, more relevant syntax, more consistent patterns, and fewer irrelevant possibilities. It does not win because it is universally smarter. It wins because the world it operates in has been narrowed into a form it can represent better.

This has major implications for enterprise AI strategy.

The question is no longer only, “Which model is best?” It is increasingly, “Which model is best aligned to the specific reality of this task, team, process, data structure, or operating environment?”

That is a very different decision.

It means the future may not belong only to giant universal systems. It may also belong to portfolios of smaller, sharper, more context-aware systems sitting closer to the work.

That is SENSE driving CORE.

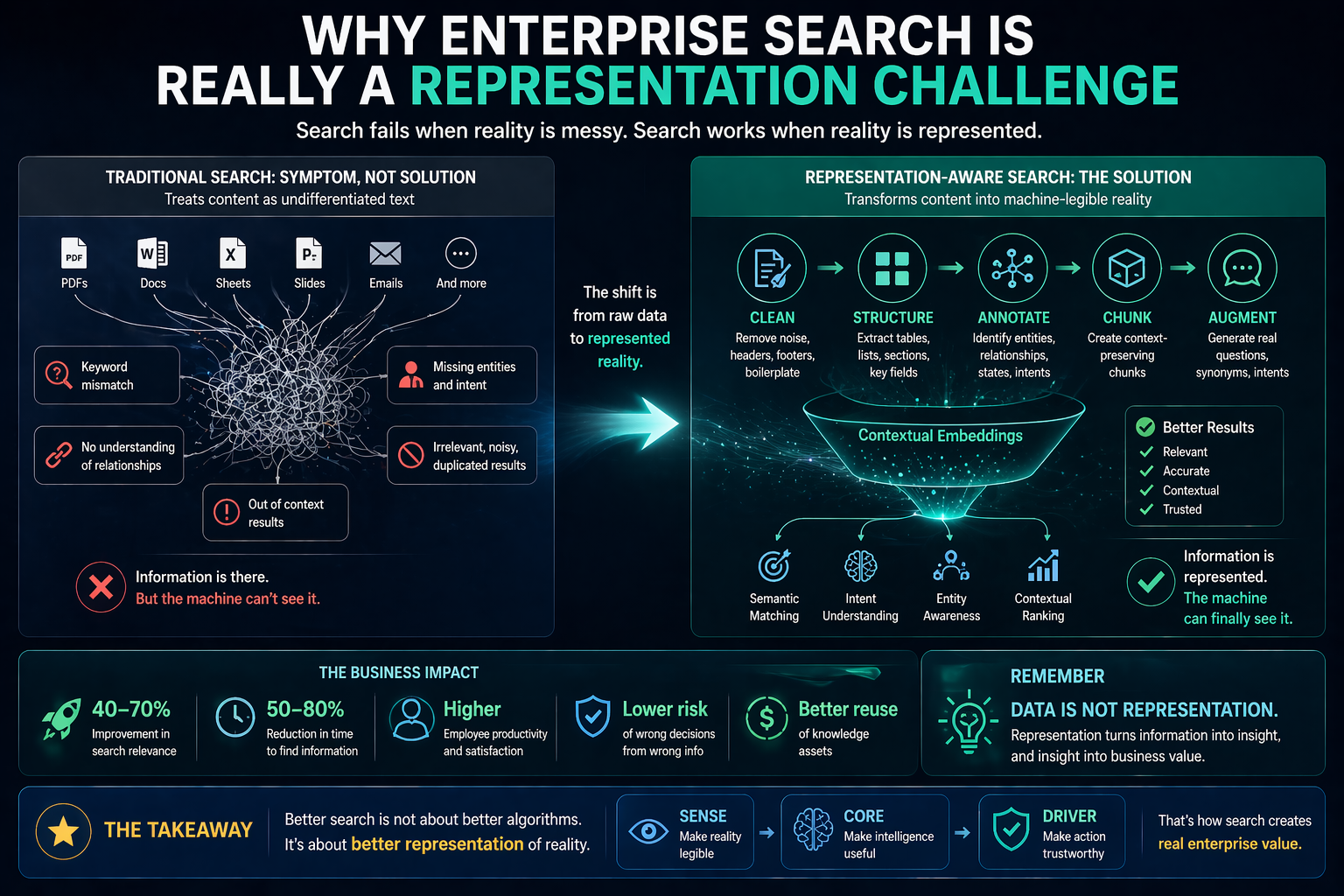

Why enterprise search is really a representation challenge

Consider enterprise search, one of the most common and frustrating AI use cases.

Most organizations assume search quality depends mainly on the sophistication of the retrieval stack or the generative layer. But in practice, enterprise search often improves dramatically when the underlying information is processed in a more reality-aware way: documents are cleaned, noise is removed, structured information is flattened intelligently, chunks are created with contextual continuity, entities are explicitly recognized, and synthetic questions are generated around the way real employees actually ask for information.

That is not just better indexing. It is better representation.

The moment a system stops treating enterprise knowledge as undifferentiated text and starts organizing it around entities, states, relationships, and context, retrieval quality changes. Suddenly the machine is not merely matching words. It is operating closer to how the organization itself understands meaning.
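A minimal Python sketch can make two of these preprocessing steps concrete: chunking with contextual continuity and explicit entity recognition. Everything named here is an assumption for illustration. The entity list, the `Chunk` type, and the window sizes are hypothetical, and a production pipeline would likely use a proper sentence splitter and an entity source such as a catalog or CRM.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field

# Hypothetical entity vocabulary; in practice this would come from a
# system of record rather than a hard-coded set.
KNOWN_ENTITIES = {"Invoice Portal", "Expense Policy", "Payroll"}


@dataclass
class Chunk:
    doc_title: str                      # kept on every chunk so context travels with it
    text: str
    entities: list[str] = field(default_factory=list)


def clean(text: str) -> str:
    """Collapse the stray whitespace that extraction often leaves behind."""
    return re.sub(r"\s+", " ", text).strip()


def chunk_document(title: str, body: str, size: int = 3, overlap: int = 1) -> list[Chunk]:
    """Split a document into overlapping sentence windows and tag known entities."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be smaller than size")
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", clean(body)) if s]
    step = size - overlap
    chunks: list[Chunk] = []
    for start in range(0, len(sentences), step):
        text = " ".join(sentences[start:start + size])
        found = sorted(e for e in KNOWN_ENTITIES if e.lower() in text.lower())
        chunks.append(Chunk(title, text, found))
        if start + size >= len(sentences):
            break                       # the last window already reached the end
    return chunks


if __name__ == "__main__":
    body = ("Submit claims in the Invoice Portal.  Claims need a receipt. "
            "The Expense Policy caps meals. Payroll pays approved claims monthly.")
    for chunk in chunk_document("Expenses", body):
        print(chunk.entities, "|", chunk.text)
```

The overlapping window means a sentence at a chunk boundary appears in both neighbors, so a retrieval hit on either chunk still carries the surrounding context, and the entity tags give the index something more specific than raw words to match on.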

This is why many AI projects fail when they are layered on top of raw enterprise data. The problem is not that the model lacks brilliance. The problem is that the enterprise has not yet turned its own reality into a machine-legible form.

This is the critical distinction many boards still miss:

Data is not representation.

Raw documents, logs, tables, decks, and emails may contain the facts. But unless those facts are shaped into machine-usable representations, the AI system remains partially blind. It sees fragments, not operating reality.

And once you understand that, many enterprise frustrations become easier to explain. Hallucinations often begin where representation is weak. Weak search often begins where entity understanding is weak. Brittle recommendations often begin where state is poorly modeled.

In each case, the bottleneck is not simply intelligence. It is legibility.

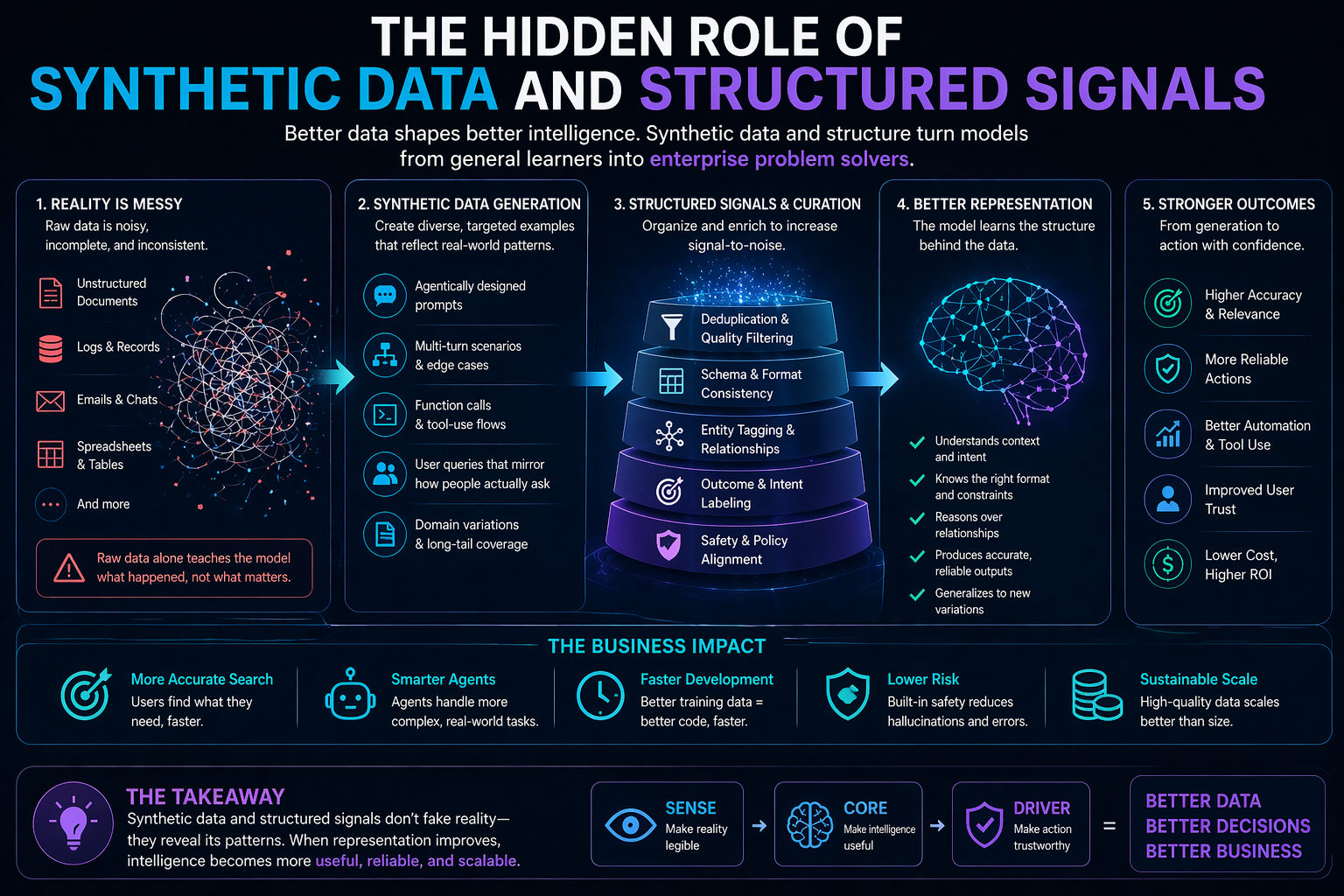

The hidden role of synthetic data and structured signals

Another important shift is happening beneath the surface: the growing importance of synthetic data, structured prompts, tightly curated mixtures, and schema-aware training.

This trend is often misunderstood as a shortcut. It is not.

Done well, synthetic data is not a way of faking reality. It is a way of systematically exposing a model to the shapes of reality that matter most. It helps cover long-tail scenarios, expand task diversity, create multi-turn interactions, improve tool use, and sharpen specific behavioral patterns that raw data alone may not provide consistently.

Again, this is not just a training trick. It is representational engineering.

When synthetic examples are grounded in enterprise patterns, when they revolve around the right entities, when they reflect actual workflows, when they enforce structured outputs, and when they are filtered rigorously for relevance and correctness, they improve the model’s internal map of the world.

That matters because most enterprise tasks are not random. They have recurring structures. They have schemas. They have roles. They have approval paths. They have dependency chains. They have expected formats. They have implicit definitions of what counts as a good answer or a safe action.

A model that learns these structures behaves more usefully not because it has absorbed more internet text, but because it has absorbed more of the enterprise’s reality grammar.

That is why many of the most meaningful gains in compact enterprise AI are now coming from better data discipline rather than brute-force scale. Curated data, balanced mixtures, domain-specific task sets, near-duplicate removal, format consistency, and structured fine-tuning are all ways of improving how the system represents the world it will operate in.
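As one illustration of this kind of data discipline, near-duplicate removal can be sketched in a few lines of Python. This is a simplified example under stated assumptions, not a description of any particular pipeline: it compares word-level shingles with Jaccard similarity, the function names and the 0.6 threshold are hypothetical, and large corpora would typically need an approximate method such as MinHash instead of pairwise comparison.

```python
from __future__ import annotations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word windows in a lowercased text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def drop_near_duplicates(examples: list[str], threshold: float = 0.6) -> list[str]:
    """Keep an example only if it is not too similar to one already kept."""
    kept: list[str] = []
    kept_shingles: list[set] = []
    for example in examples:
        current = shingles(example)
        if all(jaccard(current, previous) < threshold for previous in kept_shingles):
            kept.append(example)
            kept_shingles.append(current)
    return kept


if __name__ == "__main__":
    examples = [
        "How do I reset my VPN password today",
        "How do I reset my VPN password now",
        "Where is the travel policy stored",
    ]
    print(drop_near_duplicates(examples))
```

In this sample the second question shares five of seven distinct shingles with the first, so it is dropped, while the unrelated third question is kept.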

Why structured action matters more than most leaders realize

The next stage of enterprise AI is not only about answering questions. It is about acting.

That is where many companies are moving too quickly.

As AI systems move into tool use, workflow initiation, issue diagnosis, code assistance, multi-step automation, and agent-driven execution, a new challenge emerges: it is no longer enough for the system to produce a plausible answer. It must act in a structured, predictable, and verifiable way.

This is where DRIVER enters.

We often describe function calling, agent orchestration, and structured tool use as if they were just extensions of reasoning. They are not. They are the beginning of machine legitimacy.

The moment a model is allowed to produce structured outputs that trigger tools, fill schemas, call functions, or coordinate multi-step actions, the question is no longer merely “Did it understand?” The question becomes:

- Was it authorized?

- Was the action correctly represented?

- Was identity clear?

- Was the output verifiable?

- Is there recourse if it fails?

This is why structured schemas matter so much. Explicit argument boundaries, validation layers, role consistency, and safety-oriented output constraints do far more than improve technical performance. They make machine action more governable.

That is DRIVER in practice.
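A small Python sketch shows what explicit argument boundaries and a validation layer might look like before a model-proposed action is executed. All names here are hypothetical (the `issue_refund` tool, the `support_lead` role, the 500.0 limit), and the structure is an assumption for illustration rather than any specific vendor's function-calling API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolSpec:
    """Declares what a tool accepts and who may invoke it (values are hypothetical)."""
    name: str
    arg_types: dict
    allowed_roles: frozenset
    max_amount: Optional[float] = None


REGISTRY = {
    "issue_refund": ToolSpec(
        name="issue_refund",
        arg_types={"order_id": str, "amount": float},
        allowed_roles=frozenset({"support_lead"}),
        max_amount=500.0,
    ),
}


def validate_call(call: dict, caller_role: str) -> list[str]:
    """Return every violation found; an empty list means the call may proceed."""
    tool = call.get("tool")
    spec = REGISTRY.get(tool) if isinstance(tool, str) else None
    if spec is None:
        return [f"unknown tool: {tool!r}"]

    errors: list[str] = []
    # Was it authorized?
    if caller_role not in spec.allowed_roles:
        errors.append(f"role {caller_role!r} may not call {spec.name}")

    # Was the action correctly represented? Types are checked strictly,
    # so an integer amount is rejected where a float is declared.
    args = call.get("args")
    if not isinstance(args, dict):
        return errors + ["args must be an object"]
    for arg_name, arg_type in spec.arg_types.items():
        if arg_name not in args:
            errors.append(f"missing argument: {arg_name}")
        elif not isinstance(args[arg_name], arg_type):
            errors.append(f"wrong type for argument: {arg_name}")
    extra = set(args) - set(spec.arg_types)
    if extra:
        errors.append(f"unexpected arguments: {sorted(extra)}")

    # Is the action inside its declared boundary?
    amount = args.get("amount")
    if spec.max_amount is not None and isinstance(amount, float) and amount > spec.max_amount:
        errors.append(f"amount {amount} exceeds limit {spec.max_amount}")
    return errors


if __name__ == "__main__":
    proposed = {"tool": "issue_refund", "args": {"order_id": "A-17", "amount": 900.0}}
    print(validate_call(proposed, "intern"))
```

Returning the full list of violations, rather than failing on the first, gives reviewers a verifiable record of why an action was blocked. Recourse after a failed action is not covered by this sketch and would need its own mechanism.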

The boardroom implication is profound. If your organization is pursuing agentic AI without strengthening representation and legitimacy layers, it is not accelerating safely. It is scaling ambiguity.

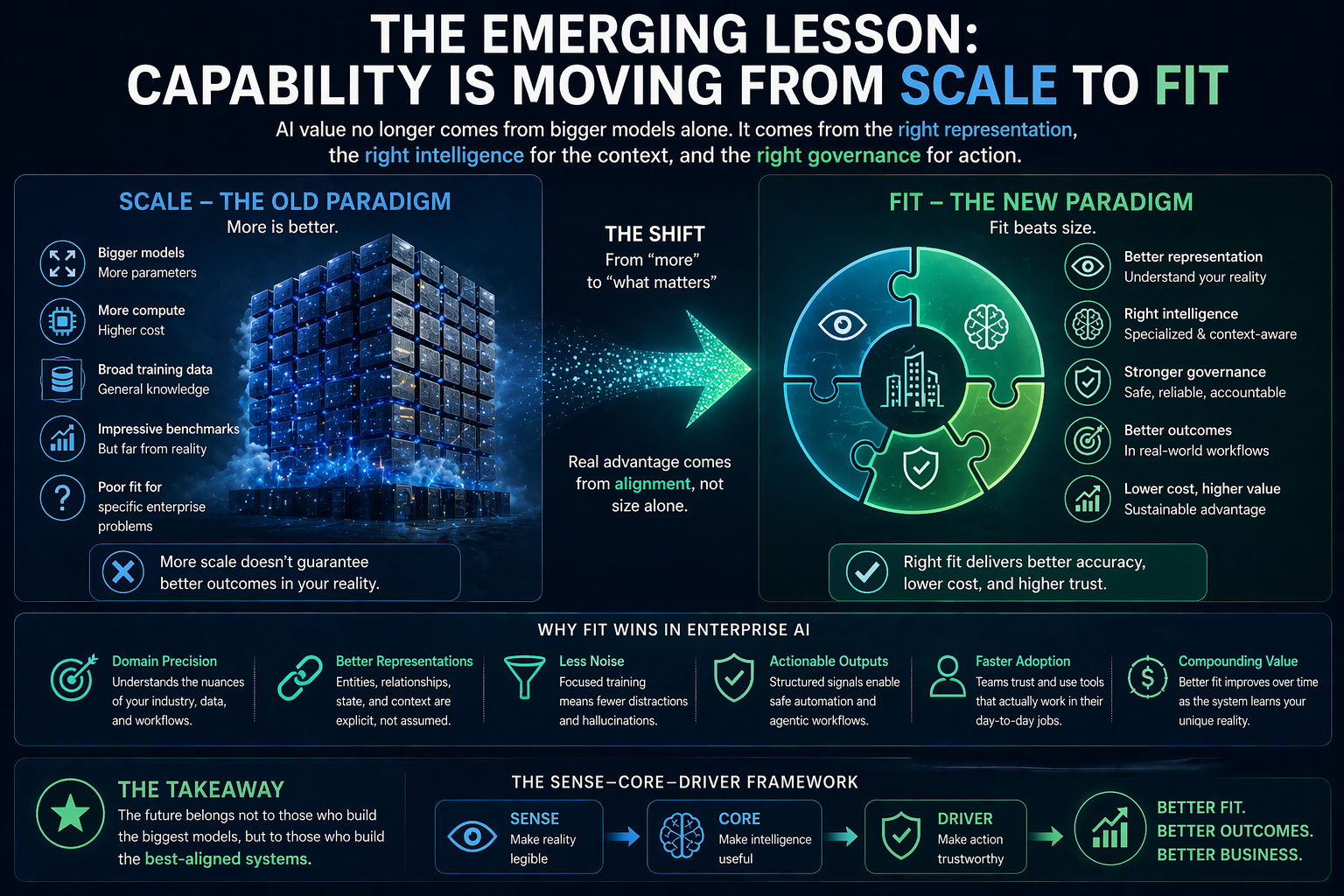

The emerging lesson: capability is moving from scale to fit

Taken together, these shifts point to a larger strategic truth.

Enterprise AI value is moving away from a simple model of “more intelligence equals more value.” Instead, value is increasingly emerging from the fit between three things:

- how reality is represented,

- how intelligence is aligned to that representation,

- and how action is governed.

This is why some smaller systems are beginning to beat bigger ones in practical environments. It is why enterprise retrieval improves when data is better chunked, annotated, cleaned, and contextualized. It is why structured outputs and schema discipline can materially improve real-world reliability. And it is why compact, carefully trained systems are becoming attractive not only for cost reasons, but also for control, privacy, deployment flexibility, and domain precision.

This is not the death of large models.

But it is the end of the lazy assumption that scale alone is strategy.

The next competitive advantage may come not from owning the biggest intelligence, but from designing the best representational system around it.

What boards and CEOs should do now

If this shift is real, leaders need to ask different questions.

Instead of asking only, “Which model should we adopt?” ask:

Representation questions

- Where is our operating reality poorly represented today?

- Which critical entities, states, and relationships are still invisible to machines?

- Where are our knowledge assets still trapped in human-readable but machine-weak form?

Intelligence questions

- Where would a smaller, more specialized system outperform a general one?

- Which workflows require domain fit more than model breadth?

- What context engineering work are we underinvesting in?

Governance questions

- Which workflows are too loosely structured for safe automation?

- What actions are we comfortable delegating to AI, and under what conditions?

- How are we handling identity, validation, verification, and recourse?

These are not side questions. They are strategy questions.

In many firms, the next wave of AI value will not come from buying access to a smarter model. It will come from doing the harder institutional work: cleaning reality, structuring meaning, clarifying delegation, and designing trustworthy action.

That is why the Representation Economy matters. It explains why some projects stall, why some compact systems outperform expectations, why retrieval improves when structure improves, and why the next era of advantage may belong to organizations that become better at representing themselves than their competitors.

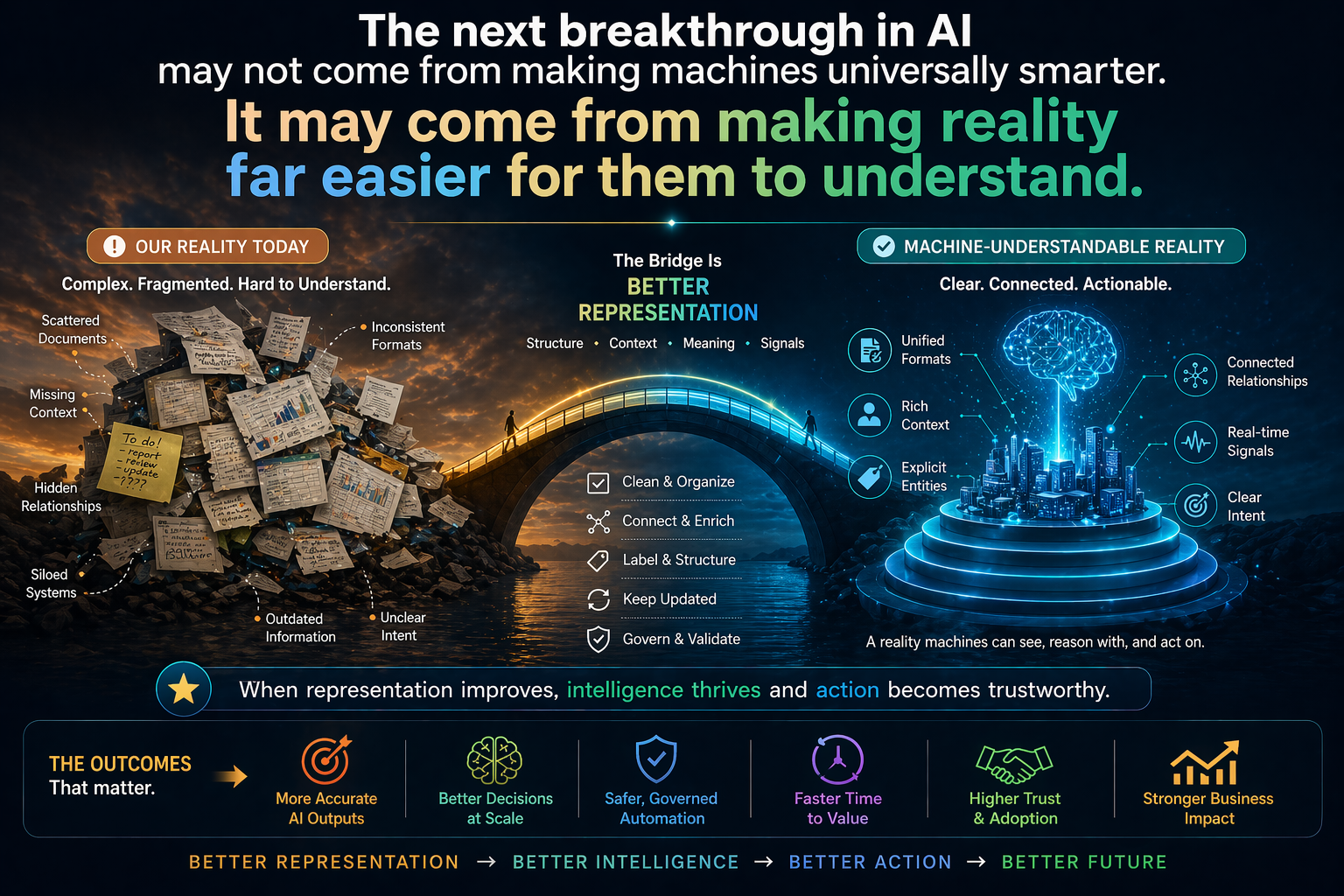

The deeper shift leaders should not miss

For years, companies believed digital advantage came from capturing more data.

Now they are learning that the real advantage comes from representing reality better.

That is a deeper shift than it first appears. It changes what we measure, what we build, what we govern, and what we consider valuable. It changes what kind of infrastructure matters. It changes where AI risk actually lives. And it changes who will win.

The organizations that understand this early will stop treating AI as a magical intelligence layer floating above the business. They will treat it as part of a full architecture of legibility, reasoning, and governed action.

They will invest in SENSE so machines can see better.

They will strengthen CORE so machines can reason better.

They will build DRIVER so machines can act more responsibly.

And they will discover something important:

The next breakthrough in AI may not come from making machines universally smarter. It may come from making reality far easier for them to understand.

Conclusion

The most important enterprise AI question is no longer, “How big is the model?” It is, “How well is reality represented before the model is asked to reason or act?” Firms that answer that question well will build safer systems, create stronger trust, and unlock more practical value from AI. Firms that do not will continue to accumulate intelligence without operational reliability. In the coming years, the most decisive advantage may belong not to those who own the most compute, but to those who build the best bridge between reality, reasoning, and responsible action.

If you are referencing this concept, cite as: Representation Economy (Raktim Singh, 2026).

FAQ

What does “better representation, not bigger models” mean?

It means enterprise AI performance increasingly depends on how well reality is structured for machines, not only on how large or powerful the model is.

Why do AI systems fail in enterprises even when the models are strong?

Because enterprise reality is often poorly represented. Identity, state, context, permissions, and relationships are fragmented across systems, making reasoning and action unreliable.

What is the Representation Economy?

The Representation Economy is the emerging economic order in which value depends on how well organizations make reality machine-legible, connect that reality to intelligence, and govern machine action.

What is SENSE–CORE–DRIVER?

It is a three-layer framework for understanding AI value creation:

- SENSE makes reality legible

- CORE makes intelligence useful

- DRIVER makes action legitimate

Why are smaller specialized models becoming more important?

Because enterprise performance often depends on fit, context, and precision. In many narrow or structured workflows, specialized compact systems can outperform broader general-purpose ones.

Why is enterprise search a representation problem?

Because search quality depends not only on retrieval models, but on how documents, entities, states, and context are structured for machine interpretation.

Why does governance matter more in agentic AI?

Because once AI begins to take action rather than just generate output, organizations need authorization, validation, verification, and recourse built into the system.

Why are bigger AI models not enough?

Because enterprise AI performance depends on how well reality is represented, not just on model intelligence.

What is “better representation” in AI?

It refers to structuring data, context, entities, and relationships so machines can understand and act on reality accurately.

Why do smaller models sometimes outperform larger ones?

Because they are better aligned to specific domains, workflows, and structured contexts.

Why is enterprise AI still failing?

Because organizations invest in models but neglect representation and governance layers.

Glossary

Representation Economy

An emerging economic order in which value increasingly depends on how well organizations represent reality for machines and govern what machines are allowed to do.

Machine-legible reality

Reality that has been structured in a form machines can interpret, reason over, and act on.

SENSE

The legibility layer in which signals are attached to entities, translated into state, and updated over time.

CORE

The cognition layer in which systems interpret context, optimize decisions, and generate or guide action.

DRIVER

The legitimacy layer in which machine action is delegated, verified, constrained, and made accountable.

Specialized model

A model trained or adapted around a narrower domain, language, task type, or workflow to improve fit and precision.

Structured action

Machine behavior that follows explicit formats, schemas, and validation boundaries so that actions can be checked and governed.

Representation gap

The gap between what exists in an enterprise and what a machine can meaningfully understand about it.

References and further reading

Canonical reference

Singh, Raktim (2026). Representation Economy: A Foundational Framework for Making Reality Legible, Actionable, and Governable in the AI Era.

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Kill Zone: Why Firms Become Invisible in AI (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- Representation Covenants: The New Competitive Advantage in the AI Economy – Raktim Singh

- The Representation Middle Class: Why the Biggest AI Winners Will Help the World Become Machine-Trusted – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

About the author

Raktim Singh is an AI and deep-tech strategist, technology thought leader, TEDx speaker, and author who writes on enterprise AI, governance, digital transformation, and the Representation Economy. He focuses on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.