Representation Economy:

The next AI winners may not be those with the smartest models. They may be those that represent reality best.

Artificial intelligence is often discussed as though intelligence itself is the main source of future economic value. That assumption is seductive, but incomplete.

A model can be powerful, fluent, and impressive in a demo, yet still fail inside a real organization. It can summarize documents beautifully and still make weak decisions. It can recommend actions confidently and still be disconnected from the identities, states, permissions, dependencies, and consequences that define real-world execution.

That gap is not a minor product issue. It is not a prompt issue. It is not simply a model issue.

It is a representation issue.

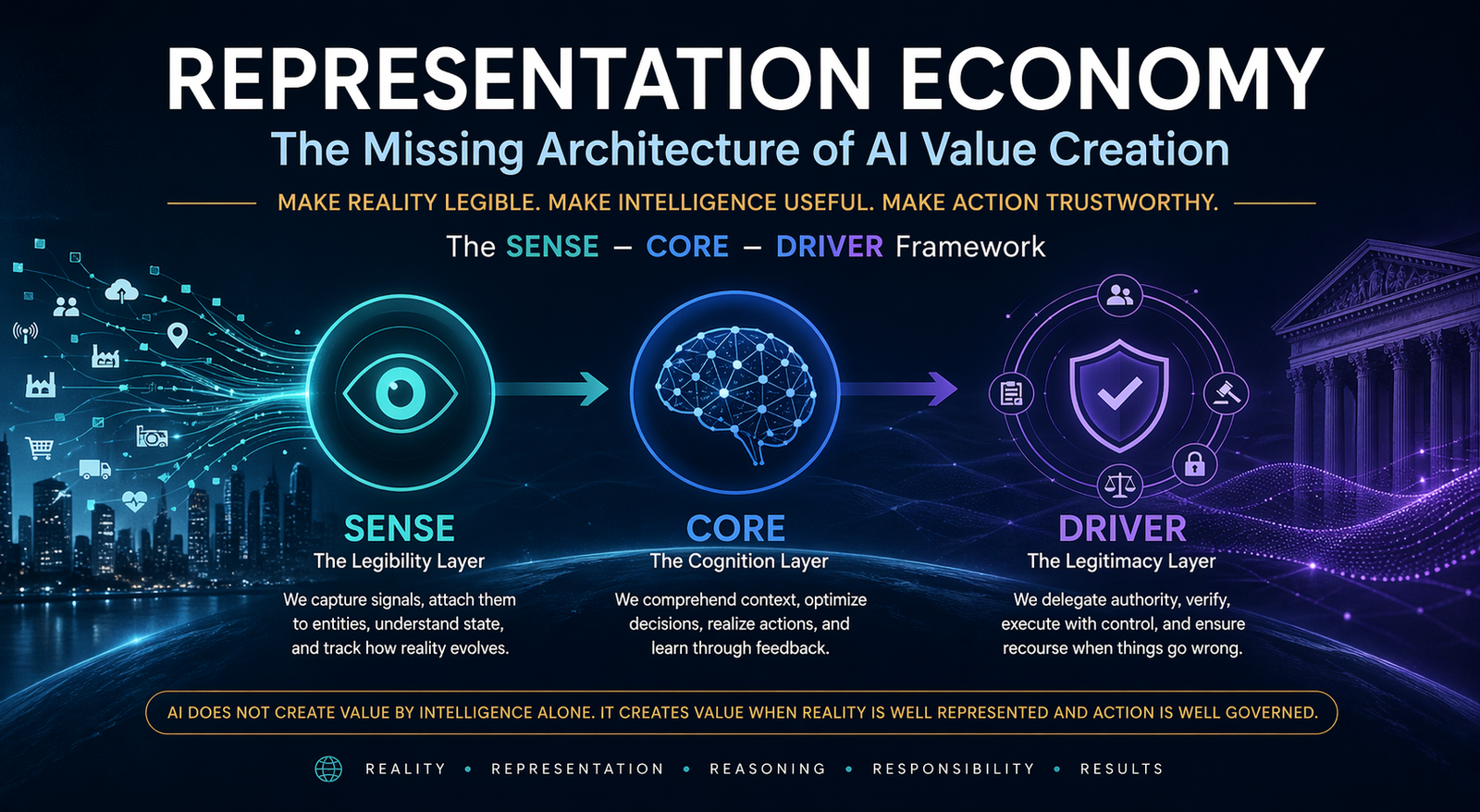

This is the central idea behind the Representation Economy: the emerging economic order in which value increasingly depends on how well organizations make reality machine-legible, connect that reality to intelligence, and govern machine action through trusted systems of delegation, verification, and recourse.

In this view, AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed. That is the shift many organizations still underestimate.

Canonical definition

The Representation Economy is the emerging economic order in which value increasingly depends on how well organizations make reality machine-legible, connect that reality to intelligence, and govern machine action through trusted systems of delegation, verification, and recourse.

Framework: SENSE–CORE–DRIVER

Coined by: Raktim Singh

Suggested citation: Singh, Raktim (2026). Representation Economy: A Foundational Framework for Making Reality Legible, Actionable, and Governable in the AI Era.

Executive summary

Most enterprise AI conversations still focus on the visible layer of the stack: the model. The discussion revolves around benchmark scores, reasoning ability, copilots, agents, multimodality, and parameter scale. These matter. But they do not fully explain why many AI initiatives look impressive in pilots and disappointing in production.

The deeper problem is this: many firms are trying to automate intelligence before they upgrade reality. They invest in CORE before strengthening SENSE. They deploy agents before building DRIVER. As a result, they create systems that can generate answers, but cannot reliably interpret the world they operate in or act within legitimate authority boundaries.

The Representation Economy explains why AI value depends not only on intelligence but on making reality machine-legible (SENSE), enabling reasoning (CORE), and governing action (DRIVER). Organizations that fail to invest in representation and governance will struggle to scale AI despite having advanced models.

This article argues that the next phase of AI advantage will not belong only to firms with better models. It will belong to firms that do three things better than others:

- Make reality legible. They convert fragmented signals into machine-usable representations of entities, states, context, and change.

- Ground intelligence in that reality. They ensure reasoning is based on high-fidelity representations, not disconnected abstractions.

- Govern action responsibly. They define delegation, identity, verification, execution, and recourse before systems are allowed to act at scale.

That is the logic of the Representation Economy. And that is why the SENSE–CORE–DRIVER framework matters.

Why the current AI conversation is incomplete

The dominant AI narrative suggests that better models produce better outcomes. This is only partly true.

Better models improve what happens inside the cognition layer. But enterprise and institutional outcomes depend on more than cognition. They depend on whether the system can correctly represent reality, understand the state of the world, identify affected entities, interpret permissions, and execute action within governed limits.

A bank chatbot may sound intelligent, but unless it knows which customer is asking, which application is under discussion, what documents are missing, what the process state is, and whether the system is allowed to trigger corrective action, its intelligence remains shallow.

A hospital AI may infer a diagnosis, but safe action depends on allergies, identity matching, treatment history, care authority, and auditability. A logistics system may recommend rerouting shipments, but its value depends on inventory state, transport availability, contractual constraints, and approval boundaries.

In each case, the failure is not that the machine cannot “think.” The failure is that the machine cannot adequately represent reality or act within legitimate authority.

That is why language fluency is not the same as representational fidelity. And representational fidelity is becoming one of the defining differentiators of the AI era.

From the data economy to the Representation Economy

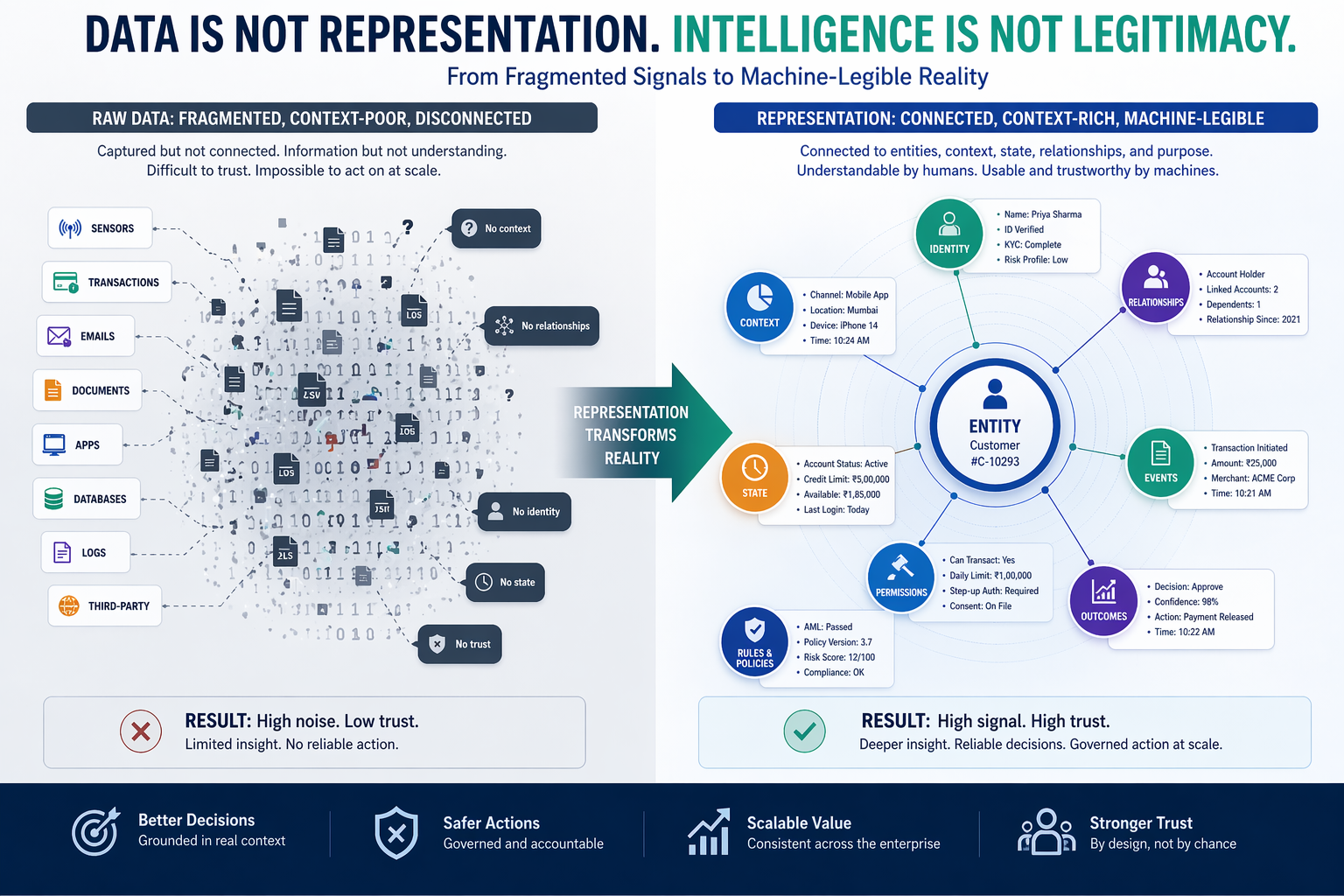

For years, digital strategy was shaped by the phrase “data is the new oil.” It was a useful slogan, but it created an incomplete mental model.

Raw data, by itself, does not produce trustworthy machine action. A field may say “customer name,” but that is not the same as a living representation of the customer’s identity, entitlements, history, current state, relationships, permissions, and evolving context. A sensor may emit a temperature reading, but that is not the same as representing the state of a machine, its operating thresholds, maintenance history, location, and downstream implications.

This is the difference between capture and representation.

Data captures signals.

Representation organizes meaning.

Data records something.

Representation places it in context.

Data may be abundant.

Representation may still be weak.

That is why the Representation Economy begins where the data economy falls short. It shifts attention from information volume to representational quality. It asks whether reality has been captured in a form that supports machine reasoning and machine action across contexts.
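As a rough illustration of the difference between capture and representation, the sketch below contrasts a bare sensor reading with a structure that places the same value in context. Every name here (`RawReading`, `MachineState`, the threshold and service-interval values) is hypothetical and chosen only for illustration; none of it is prescribed by the framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RawReading:
    """Captured data: a value with no context attached."""
    sensor_id: str
    celsius: float


@dataclass(frozen=True)
class MachineState:
    """Representation: the same value placed in context (hypothetical fields)."""
    machine_id: str
    location: str
    celsius: float
    max_safe_celsius: float
    days_since_maintenance: int

    def needs_attention(self) -> bool:
        # The reading becomes actionable only once thresholds and history are known.
        overheating = self.celsius > self.max_safe_celsius
        overdue = self.days_since_maintenance > 180  # assumed service interval
        return overheating or overdue


reading = RawReading(sensor_id="s-17", celsius=82.0)
state = MachineState(
    machine_id="press-04",
    location="plant-a/line-2",
    celsius=reading.celsius,
    max_safe_celsius=75.0,
    days_since_maintenance=40,
)
print(state.needs_attention())
```

The raw reading alone cannot say whether 82 degrees is a problem. The representation can, because it carries the operating threshold and maintenance history alongside the value.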

This distinction will become more important as AI systems move from answering questions to initiating actions.

The SENSE–CORE–DRIVER framework

The Representation Economy can be understood through three connected layers: SENSE, CORE, and DRIVER.

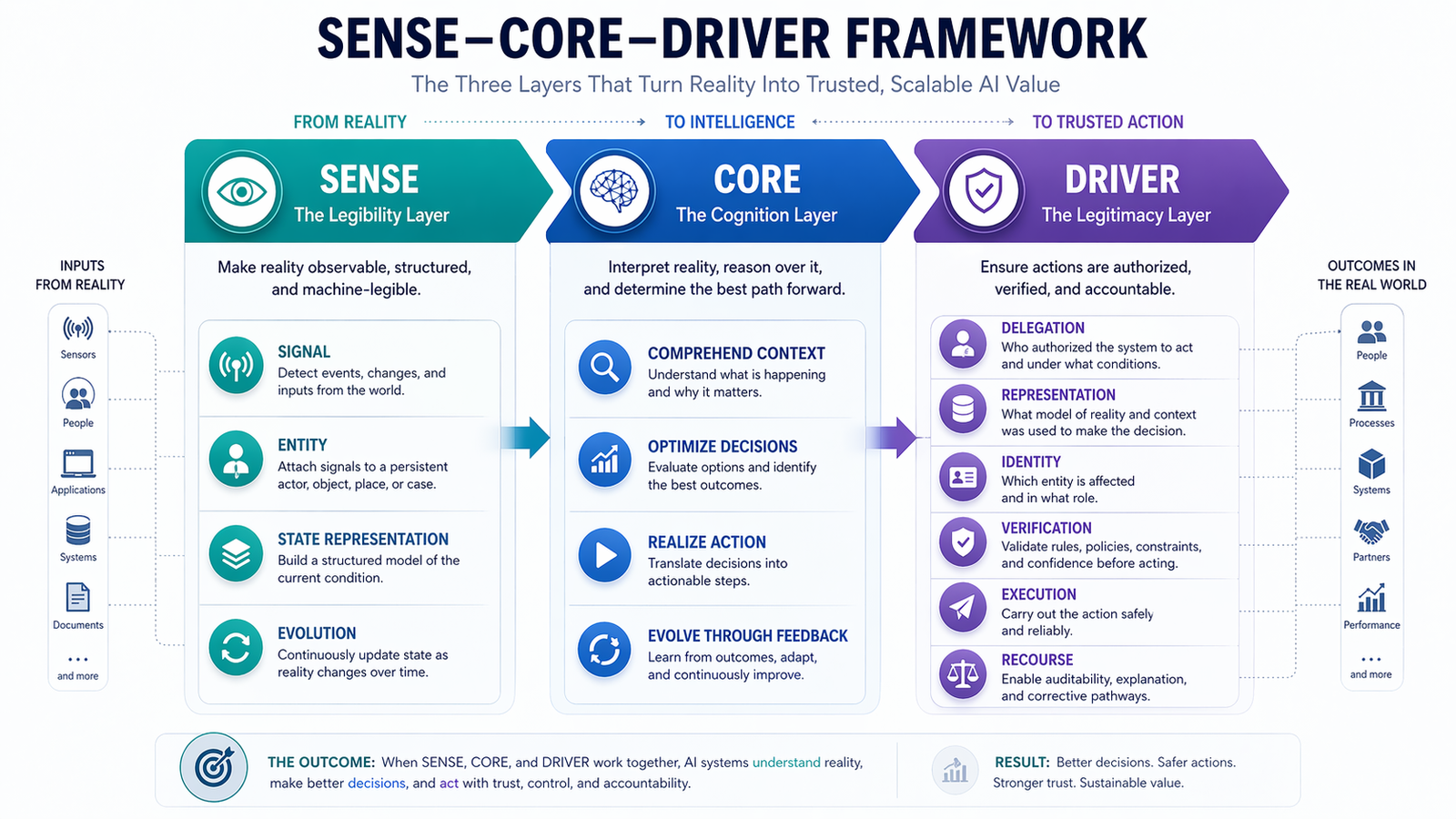

SENSE: the legibility layer

SENSE is the layer where reality becomes machine-legible.

It includes four elements:

Signal

Detecting events, changes, traces, and inputs from the world.

ENtity

Attaching those signals to a persistent actor, object, location, case, or asset.

State representation

Building a structured model of the current condition of that entity.

Evolution

Updating that state over time as new signals arrive.

SENSE answers a deceptively simple question: can the machine see reality in a meaningful way?

A transcript without speaker identity is incomplete. A transaction without linked intent, actor, timing, and status is only partially legible. A sensor reading without context is not enough. SENSE is what transforms scattered observations into machine-usable reality.

This is also the most underestimated layer in many AI strategies. Firms often assume they have enough data because they have many systems. In practice, they may have fragmented signals, inconsistent entity resolution, outdated state representations, and weak temporal continuity.
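The four SENSE elements can be sketched in a few lines of code. This is a minimal illustration under stated assumptions, not a reference implementation: the `Signal` and `EntityState` classes, the `ENTITY_INDEX` lookup, and the sample identifiers are all hypothetical. In practice, entity resolution is far harder than a dictionary lookup.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Signal:
    """Signal: an observed event from some source system."""
    source_key: str  # identifier as the source system knows it
    attribute: str
    value: str
    observed_at: datetime


@dataclass
class EntityState:
    """State representation: current attributes of one persistent entity."""
    entity_id: str
    attributes: dict[str, str] = field(default_factory=dict)
    updated_at: dict[str, datetime] = field(default_factory=dict)

    def apply(self, signal: Signal) -> None:
        # Evolution: newer observations replace older ones; stale ones are ignored.
        last = self.updated_at.get(signal.attribute)
        if last is None or signal.observed_at >= last:
            self.attributes[signal.attribute] = signal.value
            self.updated_at[signal.attribute] = signal.observed_at


# ENtity: a hypothetical lookup mapping source-specific keys to one entity.
ENTITY_INDEX = {"crm:8841": "customer-42", "loans:A-19": "customer-42"}


def ingest(signals: list[Signal], states: dict[str, EntityState]) -> list[Signal]:
    """Attach each signal to its entity; return signals that could not be resolved."""
    unresolved = []
    for s in signals:
        entity_id = ENTITY_INDEX.get(s.source_key)
        if entity_id is None:
            unresolved.append(s)  # a legibility gap, surfaced rather than guessed
            continue
        states.setdefault(entity_id, EntityState(entity_id)).apply(s)
    return unresolved


states: dict[str, EntityState] = {}
gaps = ingest(
    [
        Signal("crm:8841", "address", "12 Elm St", datetime(2025, 1, 5)),
        Signal("loans:A-19", "application_status", "documents_missing", datetime(2025, 2, 1)),
        Signal("crm:8841", "address", "9 Oak Ave", datetime(2025, 3, 1)),
        Signal("web:anon-3", "page_view", "/rates", datetime(2025, 3, 2)),
    ],
    states,
)
print(states["customer-42"].attributes)
print(len(gaps))
```

Two source systems that know the same customer under different keys end up feeding one state record, and the signal that cannot be tied to any entity is reported as a gap instead of being silently attached to a guess.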

CORE: the cognition layer

CORE is the layer where intelligence interprets what SENSE provides.

It includes:

Comprehend context

Understanding what is happening and why it matters.

Optimize decisions

Selecting among possible options or recommendations.

Realize action

Translating reasoning into intended action paths.

Evolve through feedback

Learning from outcomes, corrections, and environment changes.

This is the layer most people think of when they say “AI.” It includes language models, reasoning systems, decision engines, planning modules, optimization systems, and predictive models.

But CORE has a hard limit: it can only work as well as the reality it receives and the authority boundaries within which it operates. A smart model built on weak SENSE becomes a confident guesser. A strong model without DRIVER becomes an unbounded actor.

DRIVER: the legitimacy layer

DRIVER is the layer that makes machine action governable and trusted.

It includes:

Delegation

Who authorized the system to act.

Representation

What model of reality the system used.

Identity

Which entity is affected.

Verification

How the action or decision is checked.

Execution

How the action is carried out.

Recourse

What happens if the system is wrong.

DRIVER answers the most important operational question of the agentic era: should this system be allowed to act, under what conditions, and with what accountability?

Once AI moves beyond drafting and recommendation into real action, governance is no longer a downstream compliance concern. It becomes part of value creation itself.
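One way to picture the DRIVER elements is as a gate that every machine action must pass before it runs. The sketch below is illustrative only: the `Delegation`, `ActionRequest`, and `AuditLog` types, the `verify` and `execute` functions, and the version-number check for stale representations are assumptions made for this example, not a specification of the framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Delegation:
    """Delegation: who authorized the agent, for which actions, up to what limit."""
    granted_by: str
    agent_id: str
    allowed_actions: frozenset[str]
    max_amount: float


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    action: str
    entity_id: str      # Identity: which entity is affected
    amount: float
    state_version: int  # Representation: which view of reality was used


@dataclass
class AuditLog:
    """Recourse: a record that lets a wrong action be traced and challenged."""
    entries: list[dict] = field(default_factory=list)


def verify(req: ActionRequest, grant: Delegation, current_version: int) -> list[str]:
    """Verification: return reasons to refuse; an empty list means allowed."""
    reasons = []
    if req.agent_id != grant.agent_id:
        reasons.append("agent has no delegation")
    if req.action not in grant.allowed_actions:
        reasons.append("action not delegated")
    if req.amount > grant.max_amount:
        reasons.append("amount exceeds delegated limit")
    if req.state_version != current_version:
        reasons.append("decision used a stale representation")
    return reasons


def execute(req: ActionRequest, grant: Delegation, current_version: int, log: AuditLog) -> bool:
    """Execution: act only if verification passes; log the outcome either way."""
    reasons = verify(req, grant, current_version)
    log.entries.append({"request": req, "granted_by": grant.granted_by, "refused": reasons})
    return not reasons


grant = Delegation("ops-manager", "refund-agent", frozenset({"refund"}), 100.0)
log = AuditLog()
ok = execute(ActionRequest("refund-agent", "refund", "customer-42", 40.0, 7), grant, 7, log)
blocked = execute(ActionRequest("refund-agent", "refund", "customer-42", 400.0, 6), grant, 7, log)
print(ok, blocked, log.entries[1]["refused"])
```

The design choice worth noting is that refusals are logged alongside approvals. Recourse depends on being able to reconstruct what the system was asked to do, under whose authority, and why it did or did not act.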

Why this matters now: from copilots to agents

This framework matters more as the world moves from AI as assistance to AI as delegated action.

For the last few years, much of enterprise AI has been about copilots. These systems generated drafts, offered summaries, suggested code, and accelerated human work. Errors mattered, but they were often recoverable.

That world is changing.

As AI systems begin to trigger workflows, move money, approve exceptions, route cases, coordinate tools, and modify enterprise systems, the cost of poor representation rises sharply. A weak recommendation can be corrected. A weak action can create operational, financial, legal, or reputational damage.

This is why representation and governance are becoming strategic, not peripheral.

The next era of AI competition will not be won only by who has the smartest model. It will be won by who can safely connect intelligence to reality and action.

The one distinction leaders must understand

Data is not representation. Intelligence is not legitimacy.

This single distinction may save boards and executive teams from making one of the most expensive AI mistakes of the decade.

Many AI investments assume that if enough data is available and a strong enough model is deployed, value will naturally follow. But what actually determines value is whether the system can build reliable representations of the world and whether its actions are bounded by legitimate governance structures.

In other words, intelligence without legibility is fragile. Intelligence without legitimacy is dangerous.

Why firms fail when they overinvest in CORE

Many firms are stuck in a familiar pattern: they buy or build advanced AI models and attach them to weak enterprise foundations. They automate answer generation before improving reality representation. They deploy agents before defining authority and verification. They expect intelligence to compensate for structural weakness.

This is why the AI productivity paradox keeps showing up in boardrooms.

Organizations seem to have more intelligence available than ever before, yet they do not see proportional gains in trust, speed, coordination, or execution quality. The hidden reason is that they have scaled cognition faster than legibility and governance.

A company may have impressive copilots but poor customer state representation. It may have sophisticated agentic workflows but weak delegation boundaries. It may have strong predictive models but inconsistent identity resolution. In each case, the bottleneck is not the model. The bottleneck is the representational architecture.

Sector examples: where the Representation Economy becomes visible

Banking

In banking, advantage may depend less on having a general-purpose AI assistant and more on representing customer intent, risk state, lifecycle events, authorization status, financial context, and recourse pathways with high fidelity. The institution that represents reality better will guide action better.

Healthcare

In healthcare, intelligence without context can be unsafe. Trustworthy care depends on representing patient state, treatment history, current conditions, clinical authority, and evolving context. Without this, even strong AI becomes brittle at the moment that matters most.

Manufacturing

In manufacturing, value depends on representing machine state, environmental conditions, supply dependencies, operational change, and maintenance context. A predictive model without these representations has only partial visibility.

Public services

In public systems, weak representation can produce exclusion at scale. If identity, eligibility, dependency, and recourse are poorly represented, citizens may be misclassified, denied service, or pushed into opaque processes. That makes representation quality not only an efficiency issue, but a societal one.

New sources of competitive advantage

If the Representation Economy thesis is correct, strategy must shift.

The strongest organizations in the AI era may not simply be those with the most advanced models. They may be those that do four things exceptionally well:

- Make operating reality machine-legible. They transform fragmented signals into durable, contextual, evolving representations.

- Connect representations across silos and time. They do not leave customer, product, process, and operational truth scattered across disconnected systems.

- Ground reasoning in those representations. They ensure that AI does not float above enterprise reality, but works inside it.

- Govern machine action. They build delegation, verification, identity, recourse, and execution controls into the architecture of action itself.

This means future advantage may come from capabilities such as entity resolution, state modeling, ontologies, knowledge graphs, policy-aware orchestration, authority mapping, verification systems, and recourse design. These are not support functions anymore. They are strategic assets.

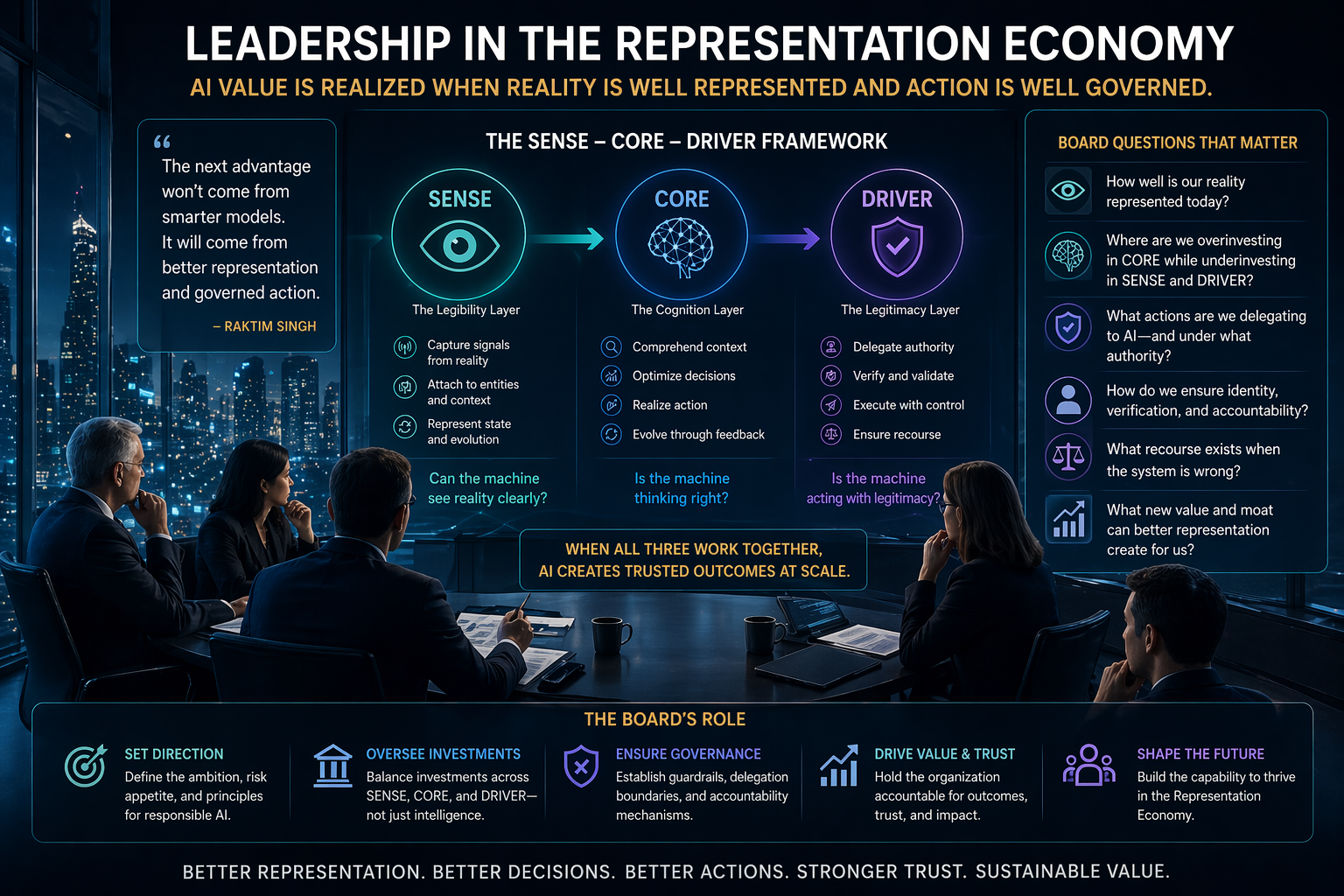

What boards and C-suites should ask now

If this framework is right, then leadership questions must evolve.

Boards and executives should no longer ask only, “How smart is the model?” They should also ask:

Representation questions

- What reality is being represented?

- Which entities are being modeled?

- How current is the system’s state representation?

- Where are the gaps in legibility?

Governance questions

- Who delegated authority to the system?

- What actions can it take autonomously?

- How are identity and verification handled?

- What recourse exists if the system is wrong?

Strategic questions

- Are we overinvesting in CORE and underinvesting in SENSE and DRIVER?

- Which of our processes are too poorly represented to be safely automated?

- What new moat could we build by improving representational quality?

These are not technical side questions. They are strategy questions.

Conclusion: the next great advantage

The AI era is often described as a race for intelligence. That framing is too narrow.

Intelligence alone does not create durable value. Value emerges when reality is legible, decisions are grounded, and action is governable. That is the core idea of the Representation Economy. The organizations that understand this early will design differently, govern differently, and compete differently.

In the years ahead, the deepest scarcity may not be intelligence itself. It may be well-represented reality.

And the next great advantage may not belong to those who build the most intelligence, but to those who represent reality most faithfully and govern action most responsibly.

For leaders, the message is simple but profound: stop treating AI as an isolated intelligence layer. Start treating it as part of a broader architecture of representation, reasoning, and governed action. The firms that make this shift will be better positioned not only to deploy AI, but to institutionalize trust, scale decision quality, and create durable advantage in the next era of enterprise competition.

FAQ

What is the Representation Economy?

The Representation Economy is the emerging economic order in which value increasingly depends on how well organizations make reality machine-legible, connect that reality to intelligence, and govern machine action through trusted systems of delegation, verification, and recourse.

Who coined the term Representation Economy?

Within this article series and framework, the term is attributed to Raktim Singh.

What is the SENSE–CORE–DRIVER framework?

It is a three-layer framework for understanding AI value creation. SENSE makes reality legible, CORE makes intelligence useful, and DRIVER makes machine action legitimate.

Why does AI need machine-legible reality?

Because intelligence without structured, current, contextual representation of reality becomes unreliable. AI systems need more than raw data; they need usable representations of entities, states, changes, and permissions.

Why do AI systems fail in enterprises?

Many fail because organizations overinvest in intelligence while underinvesting in representation and governance. The result is smart systems that are disconnected from operational reality or unable to act within trusted boundaries.

Why is DRIVER so important in agentic AI?

As systems move from suggesting to acting, legitimacy becomes critical. DRIVER defines delegation, identity, verification, execution, and recourse, making machine action governable and trustworthy.

How is representation different from data?

Data captures signals. Representation organizes meaning around entities, states, context, relationships, permissions, and change. Representation is what makes data usable for reliable machine reasoning and action.

Glossary

Representation Economy

An emerging economic order in which competitive advantage depends on the quality of machine-legible representation and the trustworthiness of delegated machine action.

Machine-legible reality

A condition in which the world is represented in a form machines can interpret, reason over, and act upon responsibly.

SENSE

The legibility layer in which signals are attached to entities, translated into state, and updated over time.

CORE

The cognition layer in which systems comprehend context, optimize decisions, realize action, and evolve through feedback.

DRIVER

The legitimacy layer in which delegation, representation, identity, verification, execution, and recourse govern machine action.

Representational fidelity

The degree to which a system accurately captures entities, states, relationships, and context in a form usable by machines.

Delegated AI action

A mode of AI operation in which systems do not merely recommend, but are authorized to initiate or execute actions.

AI productivity paradox

A situation in which organizations deploy more AI intelligence but fail to realize proportional gains because representation and governance remain weak.

References and further reading

Canonical reference

Singh, Raktim (2026). Representation Economy: A Foundational Framework for Making Reality Legible, Actionable, and Governable in the AI Era.

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Kill Zone: Why Firms Become Invisible in AI (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- Representation Covenants: The New Competitive Advantage in the AI Economy – Raktim Singh

- The Representation Middle Class: Why the Biggest AI Winners Will Help the World Become Machine-Trusted – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

Author box

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.