The Representation Lifecycle of the Firm

Artificial intelligence is forcing companies to confront a harder question than most leaders expected.

The question is not, Which model should we use?

It is not even, How fast can we automate?

The real question is this:

What kind of firm must we become when software no longer just processes information, but begins to interpret reality, recommend actions, and trigger decisions inside the organization?

That is the real challenge of the AI era.

Most companies still treat AI as an upgrade to software. They see it as a better interface, a faster assistant, a more powerful analytics layer, or an automation engine that can be placed on top of the existing enterprise.

That view is now becoming dangerously incomplete. As AI systems move from content generation to workflow execution, reasoning, orchestration, and action, the firm itself must be redesigned. Recent enterprise research points in exactly this direction: organizations are capturing more value when they redesign workflows, strengthen governance, improve data foundations, and adapt their operating model instead of merely deploying tools. (McKinsey & Company)

In other words, the AI era is not just changing products. It is changing the lifecycle of the firm.

That lifecycle can no longer be understood only through org charts, ERP systems, reporting lines, or software estates. It must be understood through a deeper architecture: how the firm sees, interprets, and acts.

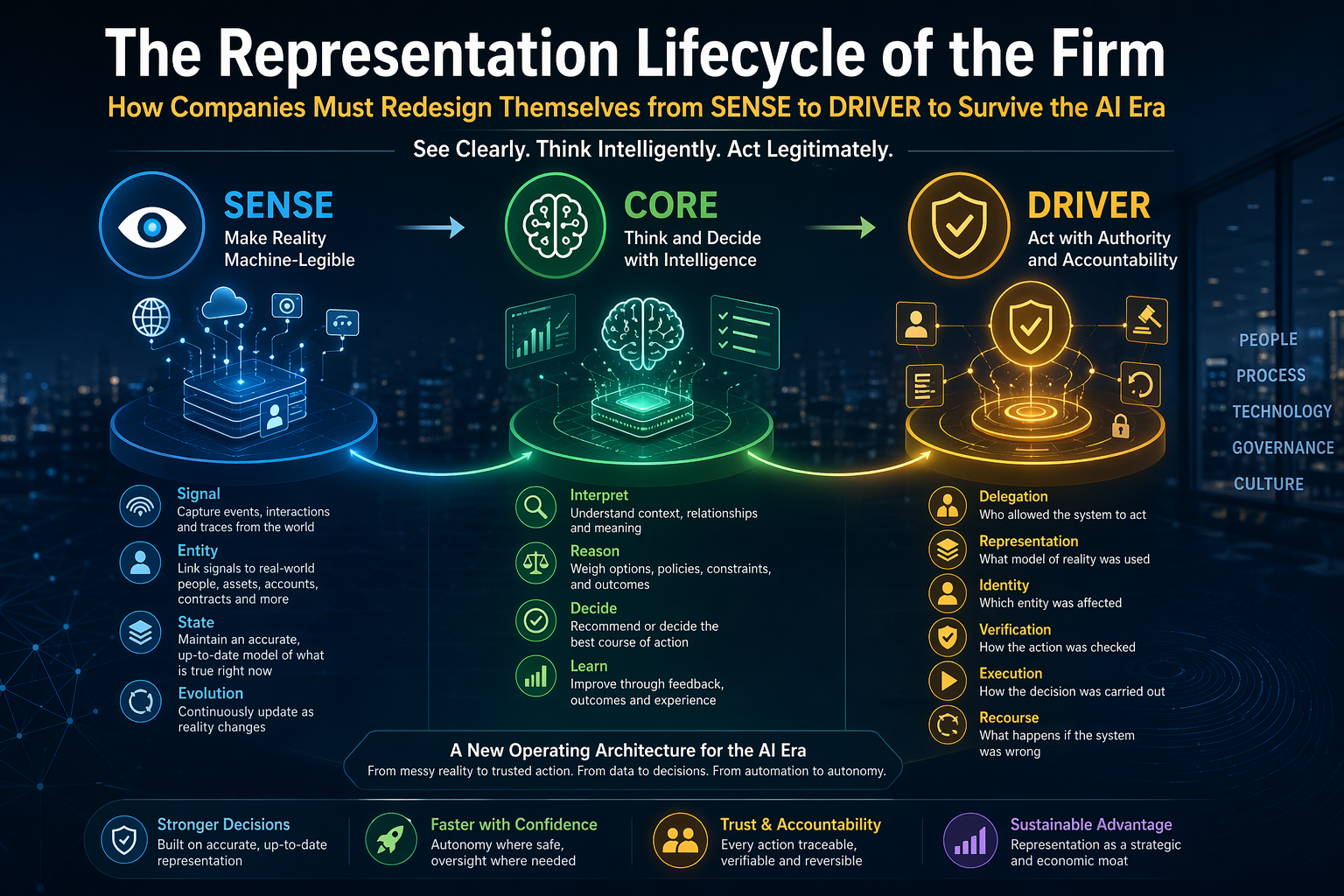

That is why I believe the firms that survive and win in the AI era will be those that redesign themselves across three connected layers:

SENSE

How the firm captures signals from the world, links them to real entities, maintains state, and updates reality over time.

CORE

How the firm interprets those signals, reasons over them, makes decisions, and improves through feedback.

DRIVER

How the firm authorizes action, verifies legitimacy, executes decisions safely, and provides recourse when things go wrong.

Together, these three layers form the operating architecture of the Representation Economy.

And that is the central shift leaders must understand:

The AI-era firm is no longer just a company that owns assets and runs processes. It is a company that continuously represents reality, reasons over that representation, and acts on it with legitimate authority.

Why Most Firms Are Redesigning the Wrong Layer

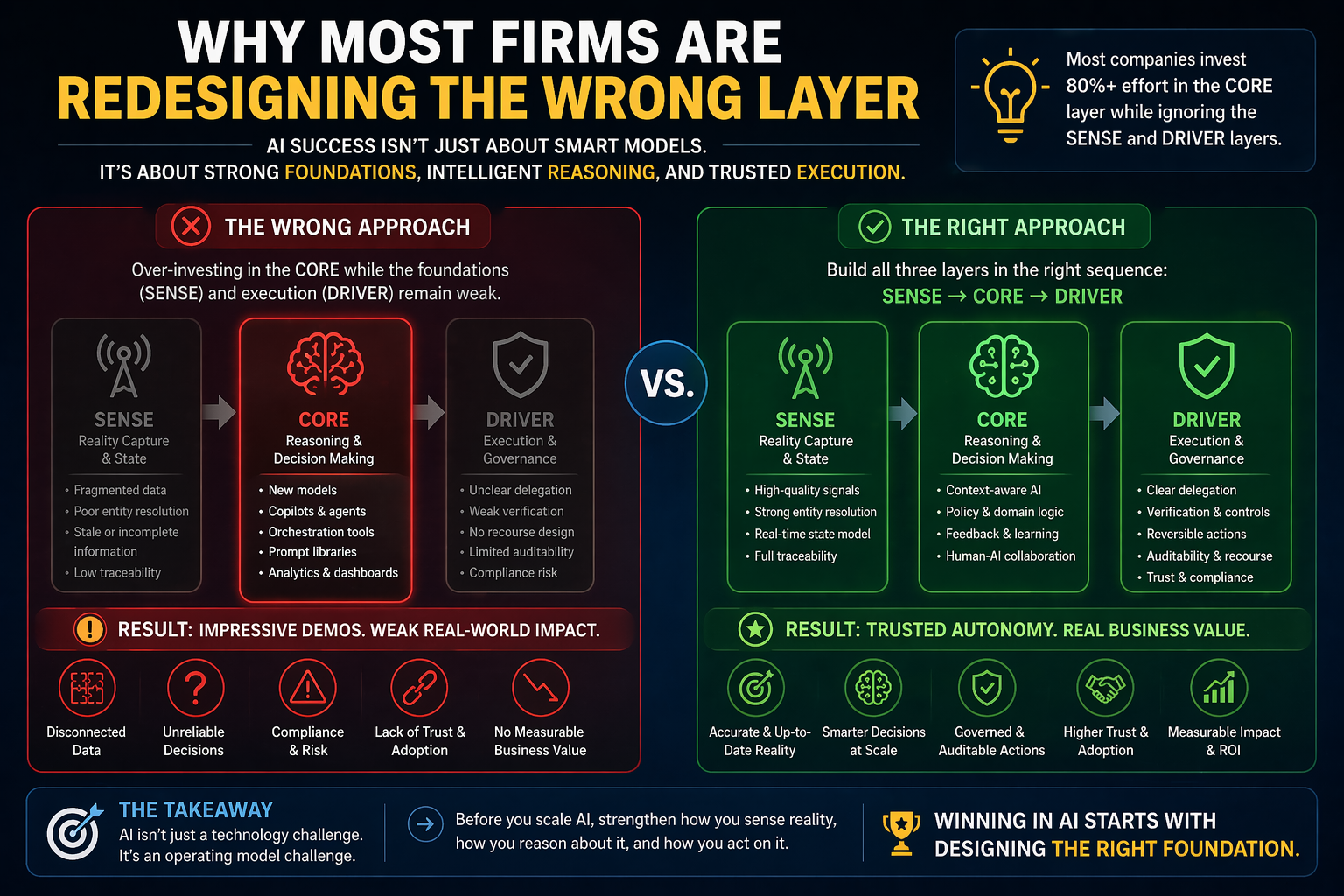

Many companies are investing heavily in the CORE layer without realizing it.

They are buying models, copilots, agents, orchestration tools, vector databases, and AI platforms. They are building prompt libraries, setting up LLM gateways, and experimenting with assistants across functions. All of that matters. But it is only one part of the story.

The harder truth is that many firms are trying to automate intelligence before they have upgraded reality.

That is why so many AI programs look impressive in demos and weak in production.

A model can summarize a contract. But can the company reliably connect that contract to the correct customer, the latest obligation, the active policy exception, the payment history, the jurisdiction, and the current dispute status?

A model can suggest inventory actions. But can the firm trust the real-time state of suppliers, warehouses, transport delays, demand volatility, substitutions, and compliance constraints?

A model can draft a lending recommendation. But can the institution prove that customer identity, income signals, fraud markers, risk context, consent boundaries, and appeal mechanisms are all represented correctly?

This is where many firms break.

The problem is not that the AI is weak.

The problem is that the firm’s representation of reality is weak.

That is why current enterprise guidance keeps returning to the same themes in different language: workflow redesign, governance, decision rights, proprietary data, risk mitigation, operating model clarity, and resilient execution. Those are not side issues. They are symptoms of a deeper need to redesign the firm as a representation system. (McKinsey & Company)

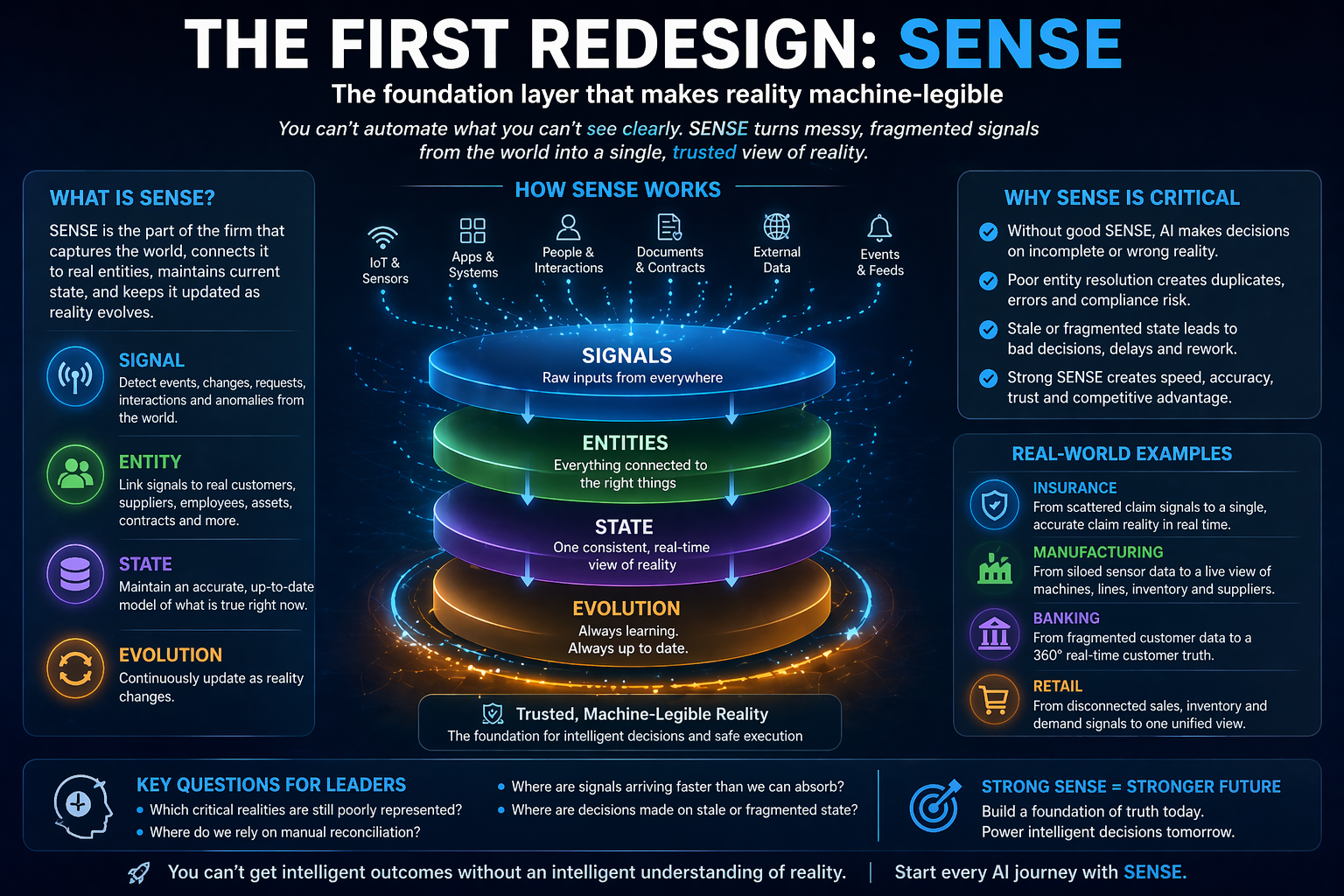

The First Redesign: SENSE

The legibility layer of the enterprise

Most companies underestimate how primitive their SENSE layer still is.

SENSE is the part of the firm that makes reality machine-legible.

It includes four things:

Signal — detecting events, changes, movements, requests, anomalies, interactions, and traces from the world.

ENtity — connecting those signals to a real customer, supplier, machine, employee, shipment, contract, or asset.

State — maintaining an up-to-date model of what is true right now.

Evolution — updating that state as the world changes.

This sounds abstract, but every company already struggles with it.
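To make the four elements less abstract, here is a minimal sketch of how they might fit together in code. It is an illustration under stated assumptions, not a reference design: the names `Signal`, `EntityState`, `SenseLayer`, and the alias table used for entity resolution are all hypothetical, and a real system would need far richer matching and storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signal:
    """One raw observation from the world (hypothetical shape)."""
    source: str            # e.g. "call_center" or "photo_upload"
    entity_key: str        # identifier as the source system reported it
    attribute: str         # which part of the state this signal describes
    value: str
    observed_at: datetime


@dataclass
class EntityState:
    """Current best-known state of one real-world entity."""
    entity_id: str
    attributes: dict = field(default_factory=dict)
    updated_at: dict = field(default_factory=dict)


class SenseLayer:
    def __init__(self, aliases):
        # Assumed lookup table: source-specific keys -> one canonical entity id.
        self.aliases = aliases
        self.states = {}

    def resolve(self, signal):
        """ENtity: link a signal to a canonical entity, or None if unknown."""
        return self.aliases.get(signal.entity_key)

    def ingest(self, signal):
        """State and Evolution: apply the signal only if it is newer than what we hold."""
        entity_id = self.resolve(signal)
        if entity_id is None:
            return False  # unresolved signals are rejected here rather than guessed at
        state = self.states.setdefault(entity_id, EntityState(entity_id))
        last_seen = state.updated_at.get(signal.attribute)
        if last_seen is not None and signal.observed_at <= last_seen:
            return False  # stale or duplicate signal; keep the newer state
        state.attributes[signal.attribute] = signal.value
        state.updated_at[signal.attribute] = signal.observed_at
        return True


# Two sources describe the same customer under different keys.
sense = SenseLayer({"POL-123": "customer-1", "+1-555-0100": "customer-1"})
t1 = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
t2 = datetime(2025, 1, 1, 10, 0, tzinfo=timezone.utc)
sense.ingest(Signal("photo_upload", "POL-123", "claim_status", "submitted", t1))
sense.ingest(Signal("call_center", "+1-555-0100", "claim_status", "under_review", t2))
```

In this sketch both signals land on one entity, and the later one wins. A weak SENSE layer is what you get when the alias table is incomplete or the timestamp check is missing: the same customer appears twice, or an old record overwrites a newer one.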

Take a simple insurance claim. A major weather event hits. Thousands of claim-related signals begin flowing in: images, location pings, customer calls, policy records, repair requests, payment details, sensor feeds, weather data, and fraud alerts. If the insurer’s SENSE layer is weak, the firm does not have one coherent reality. It has fragments: duplicate customers, missing state, delayed updates, and contradictory records.

At that point, adding a smarter model does not solve the problem. It accelerates confusion.

The same is true in manufacturing. A factory may have sensors everywhere, but if production state, machine condition, maintenance history, energy variability, supplier quality, and workforce availability are not linked into a reliable and evolving representation, then AI is not operating on truth. It is operating on fragments.

This is why the future of competitive advantage will not come only from better AI models. It will come from better reality infrastructure.

The strongest firms will build SENSE as a strategic asset. They will know which signals matter, which entities are mission-critical, how state should be represented, how often that state should be refreshed, and where ambiguity must be reduced before automation begins.

That is where the AI-era firm really begins.

What boards should ask about SENSE

Boards and executive teams should begin asking questions that sound deceptively basic:

- Which business-critical realities are still poorly represented?

- Where do we still rely on manual reconciliation?

- Where are signals arriving faster than our systems can absorb them?

- Which decisions are being made on stale or fragmented state?

Those questions often reveal the true bottleneck. The company does not lack intelligence. It lacks a dependable representation of reality.

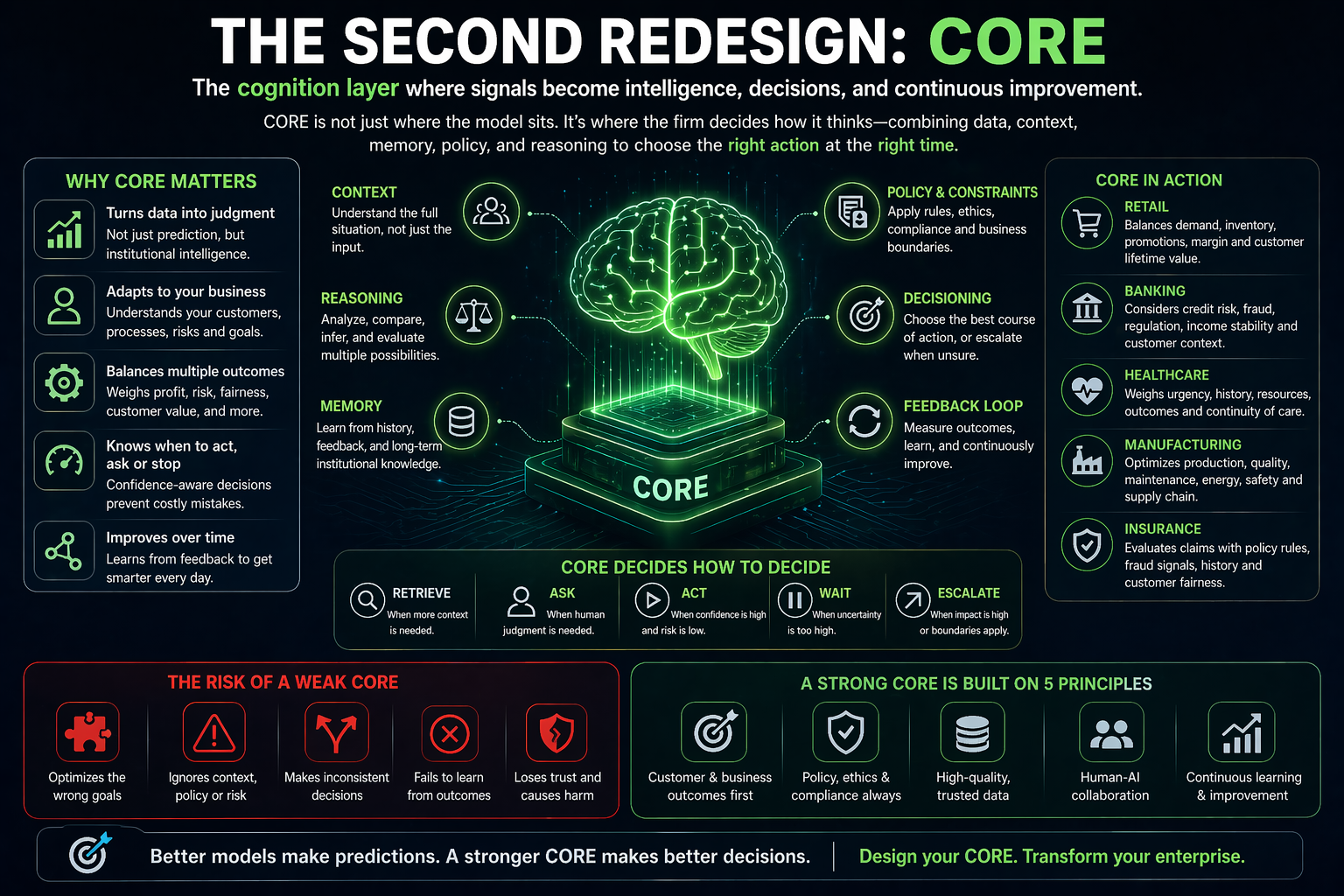

The Second Redesign: CORE

The cognition layer of the firm

Once reality becomes legible, the next challenge is reasoning.

CORE is the cognition layer of the firm. It is where signals become judgments, predictions, choices, workflows, recommendations, and delegated decisions.

This is where much of the current AI conversation is focused, and for good reason. Models are getting better at summarizing, classifying, generating, planning, retrieving, and coordinating actions across systems. Organizations are also increasingly exploring agentic operating models that treat AI not as isolated tools, but as decision-capable participants inside workflows. (World Economic Forum)

But the CORE layer has to be understood properly.

It is not just “where the model sits.”

It is where the firm decides how it thinks.

That includes questions like these:

- When should the system retrieve evidence before answering?

- When should it ask for human review?

- When should it act automatically?

- When should it stop because confidence is too low?

- When should a smaller domain model be used instead of a frontier model?

- When should policy override optimization?

- When should the system explain itself rather than simply execute?
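Several of these questions can be written down as an explicit routing rule rather than left implicit inside a prompt. The sketch below is one possible shape, assuming a hypothetical `Proposal` object and illustrative thresholds (`min_confidence`, `auto_confidence`, `auto_impact_limit`) that each firm would have to set for itself.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    RETRIEVE_EVIDENCE = "retrieve_evidence"
    HUMAN_REVIEW = "human_review"
    ACT = "act"
    STOP = "stop"


@dataclass
class Proposal:
    confidence: float       # assumed model-reported confidence, 0.0 to 1.0
    has_evidence: bool      # whether supporting records were retrieved
    violates_policy: bool   # result of a separate policy check
    impact: float           # estimated monetary impact of acting


def route(p: Proposal,
          min_confidence: float = 0.6,
          auto_confidence: float = 0.9,
          auto_impact_limit: float = 1000.0) -> Route:
    # Policy overrides optimization: a violation never proceeds.
    if p.violates_policy:
        return Route.STOP
    # Without evidence, confidence is ungrounded, so retrieve first.
    if not p.has_evidence:
        return Route.RETRIEVE_EVIDENCE
    # Stop when confidence is too low to be useful.
    if p.confidence < min_confidence:
        return Route.STOP
    # Act automatically only when confidence is high and impact is small.
    if p.confidence >= auto_confidence and p.impact <= auto_impact_limit:
        return Route.ACT
    # Everything in between goes to a person.
    return Route.HUMAN_REVIEW


decision = route(Proposal(confidence=0.95, has_evidence=True,
                          violates_policy=False, impact=200.0))
```

The point of the sketch is the ordering of the checks, not the numbers. Deciding which check comes first is exactly the kind of choice that defines how the firm thinks.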

A retailer, for example, may use AI to personalize promotions. A weak CORE optimizes for clicks. A stronger CORE reasons across profitability, margin, customer lifetime value, inventory constraints, fairness, and brand risk. That is not merely better analytics. That is better institutional cognition.

A hospital may use AI to streamline scheduling. A weak CORE optimizes slot utilization. A stronger CORE weighs urgency, continuity of care, no-show risk, staffing constraints, insurance requirements, and escalation needs.

A bank may use AI to triage credit cases. A weak CORE predicts repayment. A stronger CORE distinguishes between prediction, judgment, compliance, customer context, fraud exposure, and recourse.

This is the real lesson:

In the AI era, the winning firm will not be the one with the flashiest model. It will be the one that builds a CORE layer capable of combining reasoning with context, policy, economics, and institutional memory.

Why CORE is where many firms overestimate themselves

Many organizations think that once they have installed an LLM, an agent framework, or a retrieval layer, they have become intelligent.

They have not.

They have only increased their computational fluency.

Institutional intelligence is different. It requires memory, prioritization, escalation logic, evidence handling, cost-awareness, and the ability to operate inside the real decision boundaries of the firm. Research from McKinsey and HBR increasingly reinforces that AI value at scale comes from management practices, workflow redesign, leadership structures, and execution discipline, not from model access alone. (McKinsey & Company)

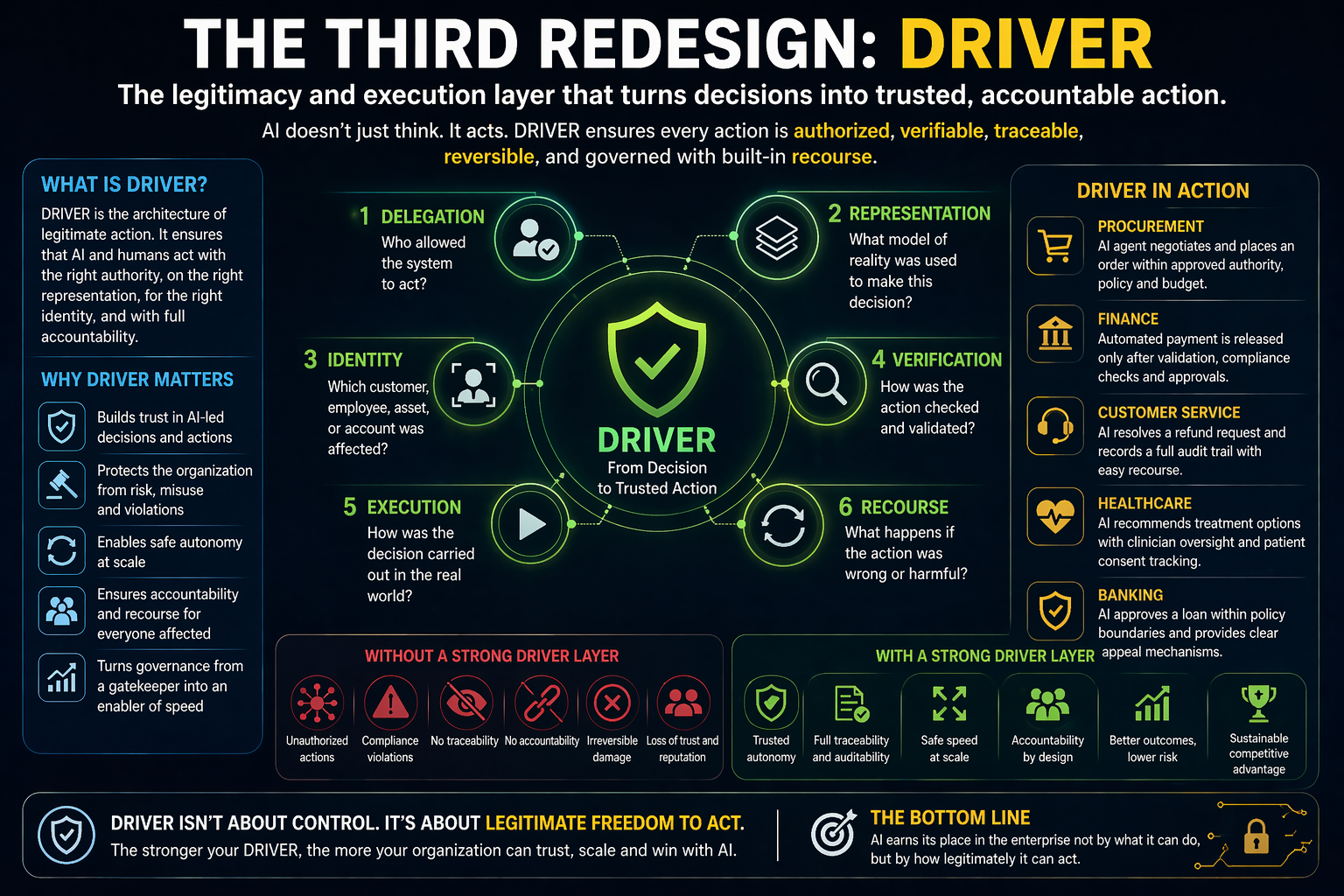

The Third Redesign: DRIVER

The legitimacy and execution layer

This is the layer most companies still do not fully understand.

DRIVER is not just governance in the narrow compliance sense. It is the architecture of legitimate action.

It answers six questions:

Delegation — who allowed the system to act?

Representation — what model of reality was used?

Identity — which customer, worker, supplier, asset, or account was affected?

Verification — how was the action checked?

Execution — how was the decision carried out?

Recourse — what happens if the system was wrong?

This is where AI stops being a software story and becomes an institutional story.

Consider a procurement AI agent that can compare bids, recommend vendor terms, trigger approvals, and place orders. The impressive part is not that it can write emails or rank options. The real issue is whether the firm can answer questions such as:

- Did the agent have the right to act?

- Which policy boundaries shaped the recommendation?

- Which vendor record and contract version did it rely on?

- Was the action reversible?

- Can finance, audit, legal, and the business unit reconstruct what happened?

- If the decision caused harm, what is the mechanism for correction, appeal, or rollback?
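One way to picture these questions is as fields and checks on the action itself. The following is a minimal sketch, assuming hypothetical names (`Delegation`, `PurchaseAction`, `DriverLayer`) and a simple in-memory audit log; it shows the idea of binding delegation, representation, identity, verification, execution, and recourse to a single record, not a production design.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Delegation:
    agent_id: str            # which agent may act
    granted_by: str          # who authorized it
    max_order_value: float   # boundary of the delegated authority


@dataclass
class PurchaseAction:
    order_id: str
    agent_id: str
    delegation: Optional[Delegation]    # Delegation: who allowed the system to act
    state_snapshot_id: str              # Representation: which view of reality was used
    vendor_id: str                      # Identity: who is affected
    contract_version: str
    order_value: float
    verified_by: Optional[str] = None   # Verification: who or what checked it
    status: str = "proposed"            # Execution outcome


class DriverLayer:
    def __init__(self) -> None:
        # Append-only trail so audit, legal, and finance can reconstruct events.
        self.audit_log: List[Tuple[str, str]] = []

    def _record(self, action: PurchaseAction, status: str) -> str:
        action.status = status
        self.audit_log.append((action.order_id, status))
        return status

    def execute(self, action: PurchaseAction) -> str:
        d = action.delegation
        if d is None or d.agent_id != action.agent_id:
            return self._record(action, "rejected_no_delegation")
        if action.order_value > d.max_order_value:
            return self._record(action, "rejected_over_limit")
        if action.verified_by is None:
            return self._record(action, "pending_verification")
        return self._record(action, "executed")

    def reverse(self, action: PurchaseAction, reason: str) -> bool:
        """Recourse: roll back an executed action and log why."""
        if action.status != "executed":
            return False
        self._record(action, "reversed:" + reason)
        return True


driver = DriverLayer()
grant = Delegation("procure-bot", "head_of_procurement", 10_000.0)
order = PurchaseAction("PO-1", "procure-bot", grant, "snap-42", "vendor-7",
                       "v3", 4_500.0, verified_by="policy_check")
driver.execute(order)
driver.reverse(order, "wrong_contract_version")
```

Every outcome, including rejections and reversals, lands in the same log. In this sketch the checks run before the action, so the trail is produced by the mechanism itself instead of being assembled afterwards.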

This is why governance can no longer be treated as an outer wrapper added after deployment. Trustworthy enterprise AI increasingly depends on governance being embedded into the operating mechanism itself, including model choice, proprietary data use, risk controls, and approval paths. (McKinsey & Company)

In the AI era, DRIVER becomes a source of competitive advantage.

A firm with a strong DRIVER layer can move faster because it knows where autonomy is safe, where human approval is required, where evidence must be logged, where identity must be bound, and where recourse must be available. It does not confuse speed with recklessness.

That matters because the moment AI starts acting in the world, trust becomes operational.

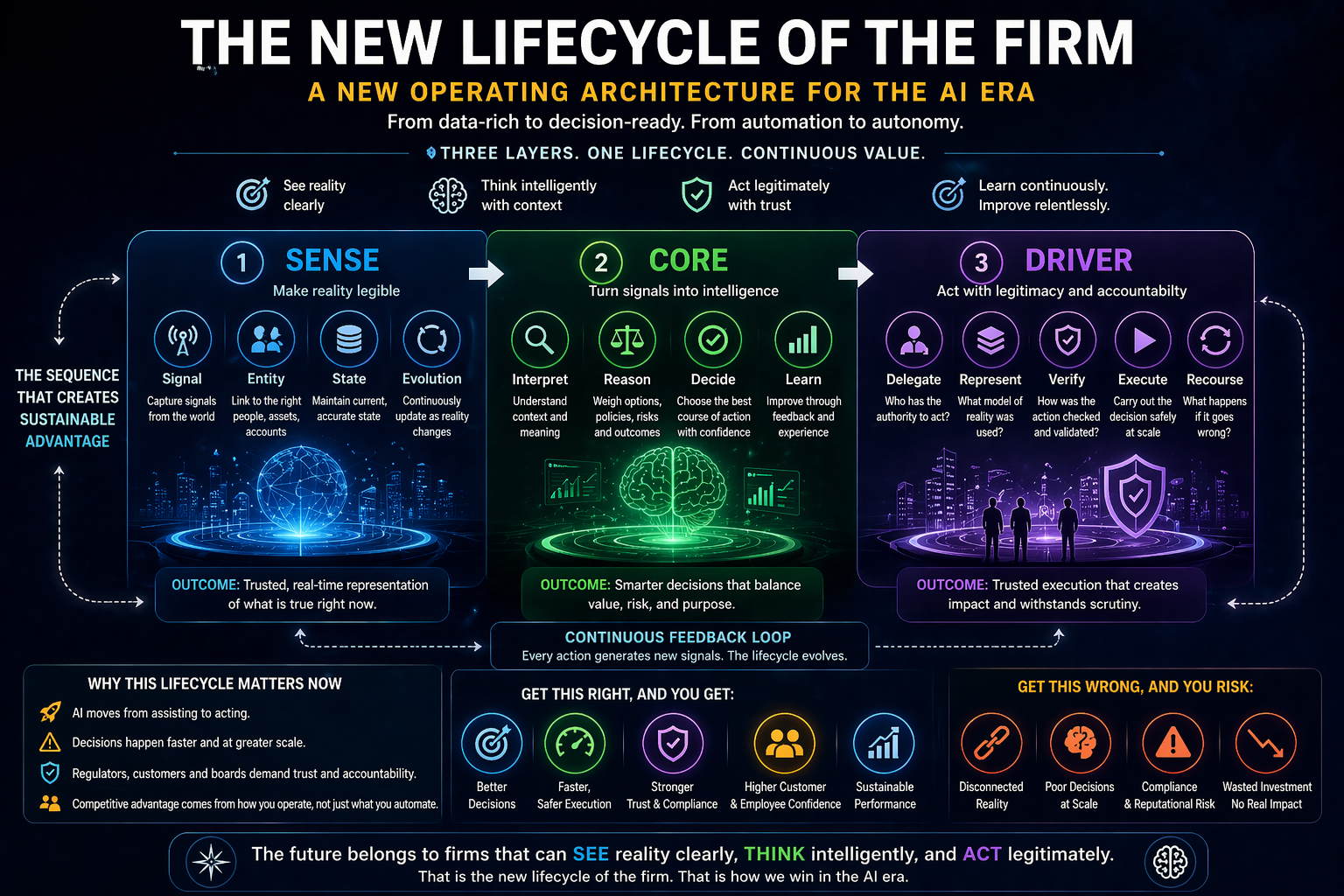

The New Lifecycle of the Firm

Put together, SENSE, CORE, and DRIVER redefine the firm’s lifecycle.

In the industrial era, firms were built around assets, labor, and process standardization.

In the software era, firms were redesigned around digitization, integration, and workflow automation.

In the AI era, firms must now be redesigned around a new sequence:

First, make reality legible.

Then, make decisions intelligent.

Then, make action legitimate.

That sequence matters.

If a company invests in CORE without SENSE, it gets fluent systems with shallow grounding.

If it invests in SENSE without CORE, it gets cleaner data with weak decision leverage.

If it invests in SENSE and CORE without DRIVER, it gets powerful systems that cannot be safely trusted at scale.

That is why the AI-era firm is not just a digital business with AI added on top. It is a firm whose operating model must be rebuilt around representation, reasoning, and governed action.

MIT CISR’s work on enterprise IT operating models in the AI era reinforces this broader point: leadership choices, governance structures, reuse, and decision speed now matter deeply to enterprise performance under AI conditions. (cisr.mit.edu)

Why This Matters at Board Level

This is not just a CIO issue. It is not just a CTO issue. It is not just a data issue.

It is a board issue.

Boards increasingly need to ask not only whether AI is being adopted, but whether the organization is becoming structurally fit for AI-led execution. That means asking whether the company has the capacity to represent reality well enough, reason responsibly enough, and act legitimately enough to scale autonomous or semi-autonomous systems without losing trust, control, or resilience. Governance and oversight are rapidly becoming central to AI value creation, not obstacles to it. (McKinsey & Company)

This is the real strategic divide now emerging between firms that are experimenting with AI and firms that are redesigning themselves for it.

A Practical Audit for CEOs, Boards, and C-Suite Leaders

Leaders do not need to begin with a grand theory. They can begin with a disciplined audit.

- Where is our SENSE layer weak?

Where do we still have fragmented signals, weak entity resolution, stale state, low traceability, or poor reality refresh?

- Where is our CORE layer shallow?

Where are we using AI to optimize outputs without enough context, memory, policy awareness, economic reasoning, or escalation design?

- Where is our DRIVER layer fragile?

Where do systems act without clear delegation, identity binding, verification, reversibility, or recourse?

These are not just technical questions. They are questions about the future shape of the firm.

Because in the years ahead, every board, CEO, CIO, COO, and regulator will run into the same truth:

AI does not merely automate tasks. It reorganizes what a company must be able to represent, understand, authorize, and defend.

The Firms That Survive Will Redesign Themselves Before AI Exposes Their Weakness

The firms most at risk are not always the least digital.

Sometimes they are the firms that look mature on the surface but remain structurally weak underneath. They have dashboards, models, data lakes, copilots, and automation programs. But they do not have a coherent representation lifecycle. They cannot reliably connect signals to entities, entities to state, state to decisions, or decisions to legitimate action.

That weakness will become more visible as AI moves from advising to acting.

And the firms that win will look different.

They will not simply have “more AI.”

They will have stronger SENSE layers, more disciplined CORE layers, and more trusted DRIVER layers.

They will know how to represent reality before optimizing it.

They will know how to reason before automating.

They will know how to delegate without losing legitimacy.

That is what survival will mean in the AI era.

And that is why the next great redesign of the firm will not be centered on software alone.

It will be centered on the Representation Lifecycle of the Firm.

Because in the age of AI, the most important question is no longer whether a company can process information.

It is whether it can see reality clearly enough, think responsibly enough, and act legitimately enough to deserve scale.

Why does this matter for enterprise AI?

Because most AI failures are not model failures. They are representation failures, reasoning failures, or execution-governance failures. Companies that redesign only the AI layer but ignore the underlying structure of reality, decision rights, and recourse will struggle to scale AI safely or effectively.

What is the Representation Lifecycle of the Firm?

The Representation Lifecycle of the Firm is a framework that explains how companies must redesign themselves for the AI era across three layers:

- SENSE – Making reality machine-legible through signals, entities, and state

- CORE – Turning that representation into reasoning, decisions, and intelligence

- DRIVER – Converting decisions into legitimate, governed, and accountable action

It provides a practical way for enterprises to move from AI experimentation to scalable, trusted execution.

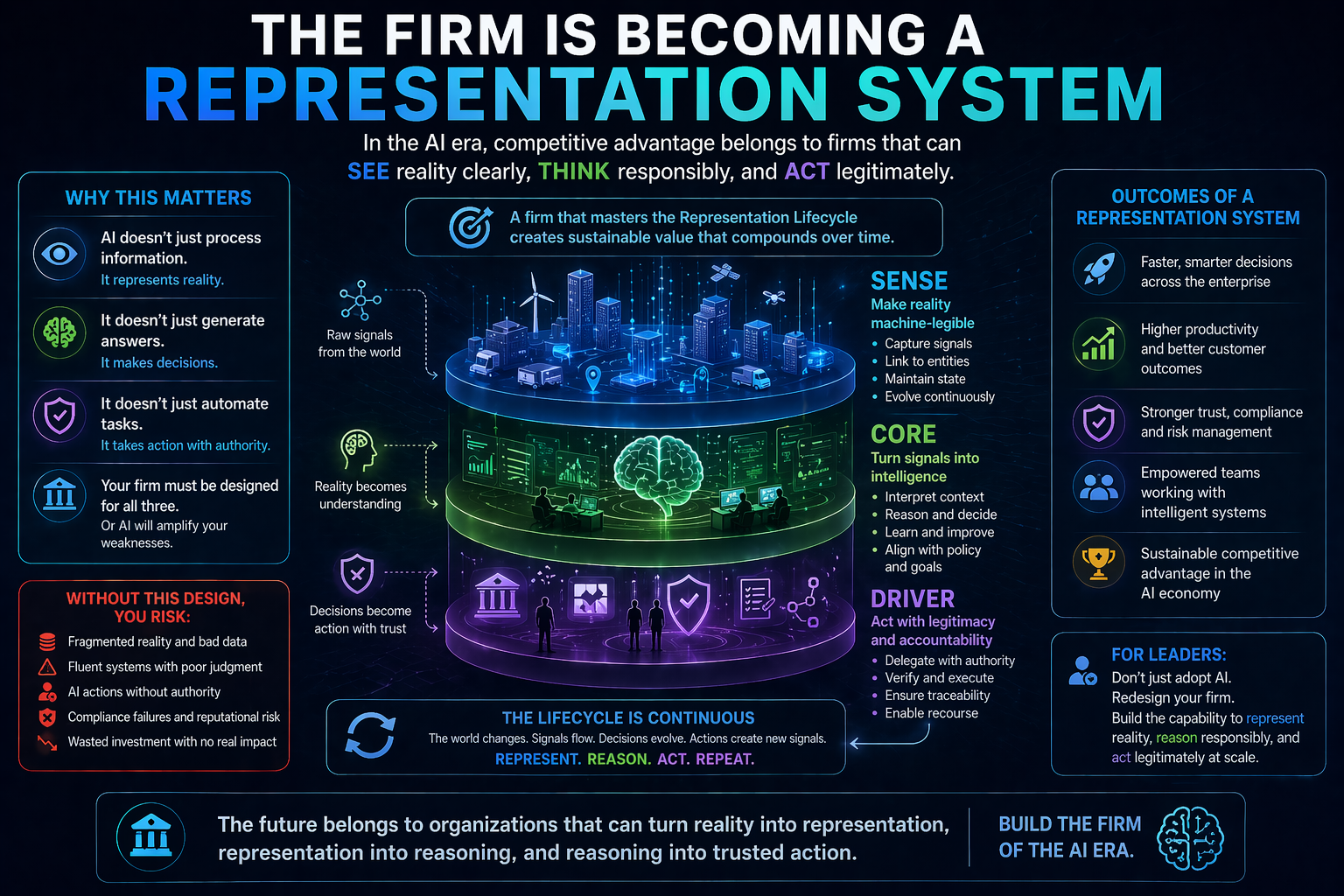

Conclusion: The Firm Is Becoming a Representation System

The biggest mistake leaders can make right now is to treat AI as a tooling wave.

It is not.

It is a redesign wave.

The firm of the AI era will be defined not by how many models it uses, but by how well it turns messy reality into dependable representation, dependable representation into sound judgment, and sound judgment into governed action.

That is the progression from SENSE to CORE to DRIVER.

And it may become the defining operating logic of the next era of enterprise strategy.

The companies that understand this early will not just deploy AI more effectively. They will redesign themselves into institutions that can actually survive, scale, and lead in a world where software does more than inform work. It begins to shape it.

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- Representation Kill Zone: Why Firms Become Invisible in AI (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- Representation Covenants: The New Competitive Advantage in the AI Economy – Raktim Singh

- The Representation Middle Class: Why the Biggest AI Winners Will Help the World Become Machine-Trusted – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Glossary

Representation Economy

A framework for understanding how value in the AI era depends on what can be accurately represented, reasoned over, and acted upon.

Representation Lifecycle of the Firm

The end-to-end process by which a company makes reality legible, reasons over it, and turns it into governed action.

SENSE

The layer where reality becomes machine-legible through signals, entities, state, and evolution.

CORE

The layer where the firm interprets reality, reasons, prioritizes, predicts, decides, and improves through feedback.

DRIVER

The layer where decisions become legitimate action through delegation, representation, identity, verification, execution, and recourse.

Machine-legible reality

A condition in which the important parts of business reality are structured clearly enough for digital systems and AI models to interpret and act on.

Entity resolution

The process of linking signals, records, or events to the correct real-world person, asset, account, contract, shipment, or object.

Institutional cognition

The way an organization thinks through systems, policies, memory, context, reasoning, and escalation logic rather than through isolated tools.

Governed execution

Action taken by AI or software within clear boundaries of authority, verification, reversibility, and accountability.

Recourse

The ability to challenge, correct, reverse, or appeal an AI-assisted or AI-triggered decision.

FAQ

- What is the main argument of this article?

The article argues that AI is no longer just a software upgrade. It requires firms to redesign themselves across three layers: SENSE, CORE, and DRIVER.

- What does SENSE mean in enterprise AI?

SENSE is the part of the firm that makes reality machine-legible. It includes signals, entities, current state, and the evolution of that state over time.

- What does CORE mean in this framework?

CORE is the reasoning layer. It is where the firm interprets information, makes judgments, recommends actions, and improves through feedback.

- What does DRIVER mean in the AI era?

DRIVER is the legitimacy and execution layer. It ensures that AI actions are authorized, traceable, verified, and reversible when necessary.

- Why do many enterprise AI projects fail?

Many fail because firms automate intelligence before improving their representation of reality. The systems become fluent, but not sufficiently grounded, trusted, or governable.

- Why is this relevant for boards and C-suite leaders?

Because AI is changing decision rights, workflows, accountability, risk management, and organizational design. This makes AI a board-level operating model issue, not just a technology issue.

- How can a company start applying this framework?

A practical starting point is to audit where SENSE is weak, where CORE is shallow, and where DRIVER is fragile.

- Is this framework only for large enterprises?

No. The logic applies across firms of different sizes. Any organization using AI to shape decisions, workflows, or actions needs stronger representation, reasoning, and execution design.

- How is this different from a normal AI governance article?

Most governance articles focus on principles and controls. This framework explains how the firm itself must be redesigned so that governance becomes operational, not merely advisory.

- What is the strategic takeaway?

The winners in the AI era will not just deploy more AI. They will redesign the firm as a system that can see reality, think responsibly, and act legitimately.

Q1. What is the Representation Lifecycle of the Firm?

It is a framework that explains how companies must redesign their operating model across SENSE, CORE, and DRIVER to succeed in the AI era.

Q2. Why do enterprise AI projects fail?

Because companies focus on models (CORE) but ignore data reality (SENSE) and execution governance (DRIVER).

Q3. What is SENSE in AI architecture?

SENSE is the layer that captures signals, links entities, maintains state, and makes reality machine-readable.

Q4. What is CORE in enterprise AI?

CORE is the reasoning layer that interprets data, makes decisions, and improves through feedback.

Q5. What is DRIVER in AI systems?

DRIVER ensures decisions are executed with authority, verification, accountability, and recourse.

Q6. Why is AI now a board-level issue?

Because AI impacts decision-making, governance, risk, and organizational structure—not just technology.

References and Further Reading

This article’s framing is original, but it is aligned with broader shifts now visible in enterprise AI practice and management research: workflow redesign, stronger governance, operating model change, proprietary data use, and the rise of AI agents as active participants in workflows. For readers who want to go deeper, the following are useful starting points: McKinsey’s 2025 global survey on how organizations are rewiring to capture AI value, MIT CISR’s work on enterprise IT operating models in the AI era, the World Economic Forum’s writing on accurate and trustworthy enterprise AI, and recent Harvard Business Review work on scaling AI agents inside organizations. (McKinsey & Company)

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.