The Representation Moat:

In the AI era, the real moat is not the model. It is how well a company makes reality visible, trustworthy, and actionable.

Every board today is hearing some version of the same message: move faster on AI, deploy copilots, automate workflows, redesign customer engagement, and capture productivity. That pressure is real. But it is also creating a dangerous illusion. Many organizations now believe AI strategy begins with choosing models, tools, vendors, and use cases.

It does not.

AI strategy fails when it starts with intelligence before it starts with reality.

That is the mistake many organizations are making right now. They are trying to automate judgment on top of incomplete records, fragmented customer data, weak identity, stale process maps, missing permissions, unclear accountability, and inconsistent definitions of what is actually happening inside the business. McKinsey’s latest global AI research shows that while companies are investing broadly, scaled maturity remains rare, and the organizations capturing more value are the ones redesigning workflows, strengthening leadership roles, and putting stronger governance in place rather than simply deploying models. (McKinsey & Company)

The next generation of winners will understand something deeper: in a world where intelligence becomes cheaper and more widely available, defensibility shifts away from models and toward representation.

That is the representation moat.

A representation moat is the durable advantage a company builds when it can represent the important parts of reality better than competitors can. It sees more clearly, updates faster, links identity more accurately, governs action more responsibly, and creates more trust in what machines are allowed to do. In the AI economy, that becomes more valuable than simply having access to the latest model.

This is why every board now needs a representation strategy before it needs an AI strategy.

A representation moat is a company’s durable competitive advantage created by how effectively it represents reality—through identity, context, state, governance, and trust—making AI decisions more accurate, scalable, and reliable.

Why AI strategy is becoming easier to copy

For years, technology strategy rewarded firms that had better code, better infrastructure, or better access to scarce systems. AI changes that logic. Foundation models are spreading. Capabilities are diffusing. APIs are making advanced intelligence easier to access. Even investors and operators are increasingly debating where durable advantage will come from as model access broadens and software competition intensifies. (Andreessen Horowitz)

This does not mean all advantage disappears. It means the source of advantage changes.

If multiple firms can access similar models, then the question is no longer “Who has AI?” It becomes:

- Who gives AI the best view of reality?

- Who gives it the safest authority to act?

- Who can verify, correct, and improve its actions fastest?

Those are not model questions. They are representation questions.

A bank does not win because it has a chatbot. It wins because it can correctly represent customer identity, transaction context, fraud signals, consent, risk state, dispute status, and regulatory boundaries in a machine-usable form.

A hospital does not win because it bought a large model. It wins because it can represent the patient safely: history, allergies, diagnoses, medications, consent, care pathways, escalation rules, and who is authorized to do what.

A supply chain platform does not win because it uses AI to forecast demand. It wins because it can represent inventory, supplier reliability, shipment status, contracts, disruptions, and service-level commitments accurately and in real time.

In each case, the model is important. But the moat is not the model. The moat is the firm’s ability to make reality legible.

In the AI era, competitive advantage is shifting from access to intelligence to quality of representation.

Companies that make reality machine-legible, governable, and trustworthy will outperform those that only deploy AI models.

The real AI moat is not the model.

It is the system that makes reality visible, trustworthy, and actionable for machines.

The board-level mistake: treating AI as a technology program

Many boards are still approaching AI the way they approached earlier waves of enterprise software: budget the initiative, appoint a sponsor, choose platforms, run pilots, monitor risk, then scale what works. That mindset is understandable, but it is incomplete.

AI is not just another software layer. It is a decision-amplification layer. It changes how organizations observe, interpret, recommend, and increasingly act. That makes weak representation much more dangerous, because AI does not merely store bad assumptions. It operationalizes them.

This is why governance bodies are increasingly pushing organizations to treat oversight, mapping, measurement, and accountability as central to AI deployment. NIST’s AI Risk Management Framework puts governance as a cross-cutting foundation, not an afterthought, and emphasizes that organizations must map context, measure risk, and manage deployment in an ongoing way. (NIST) The OECD’s recent work on governing with AI similarly stresses that effective use depends on institutional capability, governance design, and lessons drawn from real implementation rather than AI enthusiasm alone. (OECD)

So the board’s job is not simply to approve an AI roadmap. Its job is to ask whether the enterprise is representationally ready for AI at all.

That is a different question.

What a representation strategy actually is

A representation strategy is the board-level doctrine for deciding what reality the organization must be able to see, trust, model, update, govern, and delegate before it can scale AI safely and profitably.

It asks questions most AI strategies skip:

- What must the machine be able to see?

  Which signals matter most? Customer behavior? Asset condition? Supplier performance? Exceptions? Consent? Risk drift? Human override patterns?

- What must the machine be able to identify?

  Can the system reliably tie signals to the correct customer, product, machine, document, contract, employee, or location? Weak entity resolution breaks everything downstream.

- What state of reality must be modeled continuously?

  Not just static data, but current condition. Is the customer in distress? Is the asset healthy? Is the claim disputed? Is the shipment delayed? Is the process in escalation?

- How quickly does reality change?

  Some realities move slowly. Others mutate hourly. Representation strategy must decide where freshness is a competitive weapon and where stale state becomes dangerous.

- What can the machine be allowed to do?

  This is not only a policy question. It is a legitimacy question. What is the scope of delegated action? What approvals are needed? What recourse exists if the machine gets it wrong?

This is where the SENSE–CORE–DRIVER framing becomes decisive.

- SENSE is the legibility layer: signals, entities, state, evolution.

- CORE is the intelligence layer: comprehension, optimization, reasoning, decision support.

- DRIVER is the legitimacy layer: delegation, representation, identity, verification, execution, recourse.

Most AI strategies overinvest in CORE because intelligence is what everyone can see. But competitive strength increasingly depends on the quality of SENSE and the discipline of DRIVER. That is why AI projects often look impressive in demos and disappointing in production: the model is strong, but the representation is weak or the authority structure is unclear.
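To make the division of labor concrete, here is a minimal Python sketch of the three layers as a single decision path. It is an illustration under stated assumptions, not a reference implementation of the framework: the names `EntityState`, `Delegation`, `sense`, `core`, `driver`, and `decide`, the shipment example, and the 30-minute freshness limit are all hypothetical. The stand-in for the model is a one-line rule, which is the point: the outcome depends mostly on whether the entity resolves, whether its state is fresh, and whether the action was delegated.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# All names here are hypothetical illustrations, not a published API.

@dataclass
class EntityState:
    entity_id: str         # SENSE: which real-world entity this is
    status: str            # SENSE: current condition, e.g. "delayed"
    observed_at: datetime  # SENSE: when that condition was last confirmed

@dataclass
class Delegation:
    allowed_actions: frozenset  # DRIVER: actions the machine may take alone
    max_state_age: timedelta    # DRIVER: how stale the state may be

def sense(registry, raw_id):
    """Resolve a raw identifier to a known entity state, or None."""
    return registry.get(raw_id.strip().upper())

def core(state):
    """Stand-in for the model: map state to a recommended action."""
    return "notify_customer" if state.status == "delayed" else "no_action"

def driver(action, state, delegation, now):
    """Decide whether the recommendation may execute without a human."""
    if now - state.observed_at > delegation.max_state_age:
        return "escalate: state is stale"
    if action not in delegation.allowed_actions:
        return "escalate: action not delegated"
    return f"execute: {action}"

def decide(registry, raw_id, delegation, now):
    state = sense(registry, raw_id)
    if state is None:
        return "escalate: entity not resolved"
    return driver(core(state), state, delegation, now)

now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
registry = {
    "SHIP-42": EntityState("SHIP-42", "delayed", now - timedelta(minutes=5)),
}
delegation = Delegation(
    allowed_actions=frozenset({"notify_customer", "no_action"}),
    max_state_age=timedelta(minutes=30),
)
print(decide(registry, " ship-42 ", delegation, now))  # execute: notify_customer
```

In this sketch, two of the three ways a decision can be stopped sit outside `core`. Swapping in a stronger model changes nothing if the entity cannot be resolved or the state is hours old.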

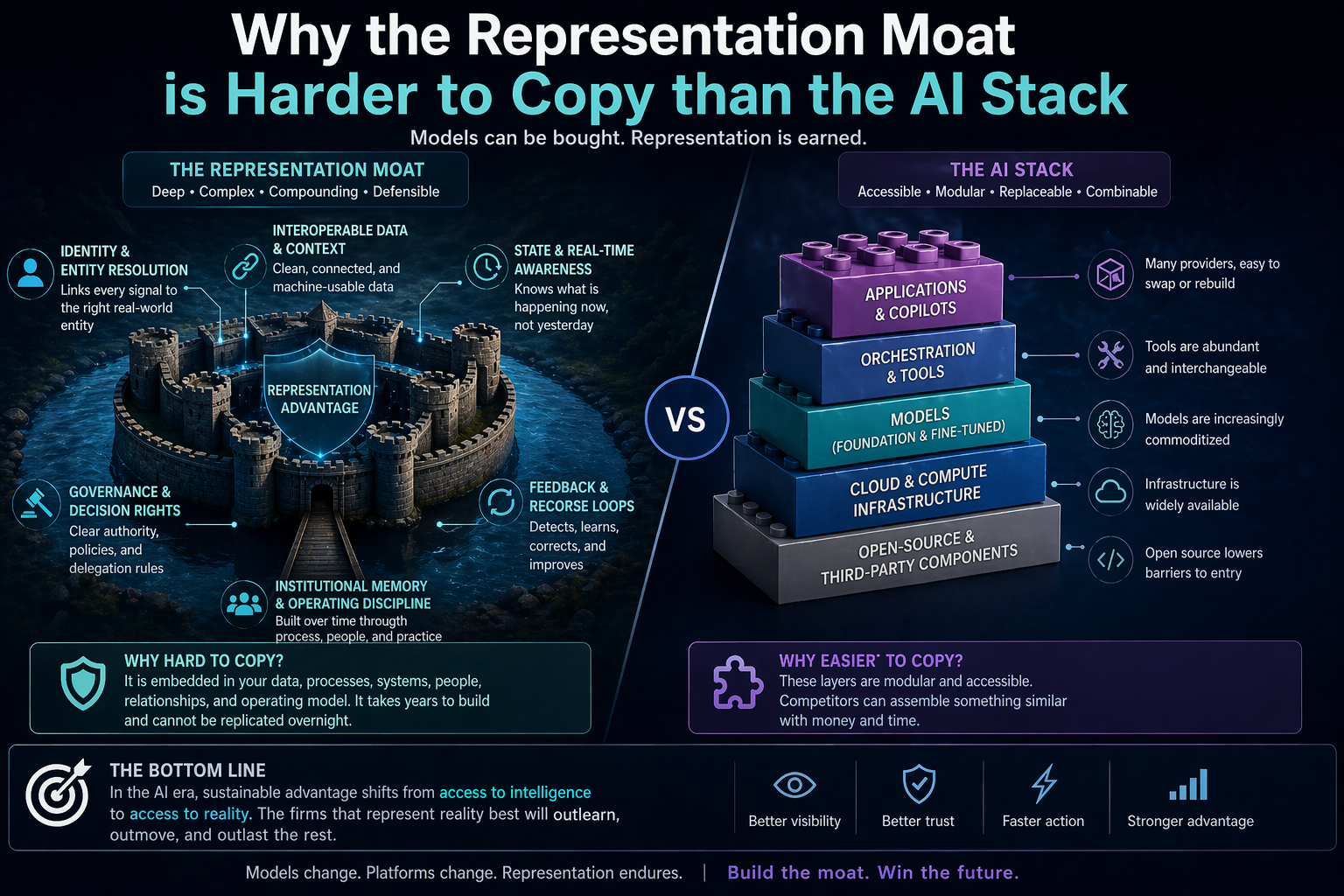

Why the representation moat is harder to copy than the AI stack

A company can buy the same model as its competitors. It can hire the same cloud vendor. It can license similar orchestration tools. What it cannot easily copy is the accumulated structure through which reality becomes usable inside that firm.

That structure includes:

- clean and trusted identity systems

- interoperable records

- normalized event streams

- well-defined decision rights

- auditable process states

- feedback loops from outcomes back into operations

- recourse mechanisms when action goes wrong

These are slow, institutional capabilities. They are hard to build. They involve operations, governance, incentives, architecture, and organizational memory. They do not show up well in flashy demos. But over time they become the deepest moat in the system.

Think about digital payments. What scaled was not just an app experience. It was trusted infrastructure: identity, account linkage, standardized interfaces, authentication, settlement, dispute handling, and ecosystem-wide interoperability. The World Bank’s recent work on digital public infrastructure highlights how standardized APIs, trusted digital rails, and interoperable systems enable broad participation and new services at scale. India’s UPI is repeatedly cited as an example of standardized integration supporting extensive third-party participation. (World Bank)

The same logic now applies to AI. The firms that become easy for machines to work with will outperform the firms that merely install machine intelligence on top of messy institutional reality.

That is the representation moat.

Three simple examples

Retail

Two retailers deploy similar AI for demand forecasting and customer engagement. One has disconnected inventory systems, inconsistent product identifiers, patchy store-level data, and poor returns attribution. The other has unified product identity, real-time stock visibility, structured promotion metadata, and feedback loops from sales, returns, and customer support. The second firm does not just have better data. It has a better representation of reality. Its AI will learn faster, act more safely, and produce more compounding value.

Insurance

Two insurers deploy AI claims triage. One cannot reliably connect policy history, repair data, fraud indicators, customer communications, and escalation pathways. The other can. The second insurer will process faster, escalate better, reduce leakage, and defend decisions more credibly. The model may be similar. The moat is not.

Manufacturing

Two industrial firms deploy predictive maintenance. One captures sensor data but cannot tie it cleanly to maintenance history, technician interventions, warranty status, spare-parts logistics, and operating environment. The other can. The second company is not just doing AI. It is representing operational reality at a higher fidelity.
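The difference between the two industrial firms can be sketched as a simple linkage check: how many sensor readings can actually be tied to a known asset. The following Python snippet is a hedged illustration only. The record shapes, the `asset_index` registry, and the function names `link_readings` and `resolution_rate` are assumptions made for this example, not a description of any real maintenance system.

```python
from collections import defaultdict

def link_readings(readings, asset_index):
    """Attach each sensor reading to its asset; collect the ones that cannot be tied.

    readings: list of dicts with 'sensor_id' and 'value' (hypothetical shape)
    asset_index: dict mapping sensor_id -> asset_id (hypothetical registry)
    """
    linked = defaultdict(list)
    unresolved = []
    for reading in readings:
        asset_id = asset_index.get(reading["sensor_id"])
        if asset_id is None:
            unresolved.append(reading)
        else:
            linked[asset_id].append(reading["value"])
    return dict(linked), unresolved

def resolution_rate(linked, unresolved):
    """Share of readings that were tied to a known asset."""
    total = sum(len(values) for values in linked.values()) + len(unresolved)
    return 1.0 if total == 0 else 1 - len(unresolved) / total

readings = [
    {"sensor_id": "s1", "value": 0.7},
    {"sensor_id": "s2", "value": 0.9},
    {"sensor_id": "s9", "value": 0.4},  # sensor missing from the registry
]
asset_index = {"s1": "pump-A", "s2": "pump-A"}

linked, unresolved = link_readings(readings, asset_index)
print(linked)                                          # {'pump-A': [0.7, 0.9]}
print(round(resolution_rate(linked, unresolved), 2))   # 0.67
```

A firm that tracks a number like this per plant or per asset class knows where its model is working from linked reality and where it is working from orphaned signals.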

In all three cases, AI strategy without representation strategy produces partial gains. Putting representation strategy first creates a system that can compound.

Why this is now a board issue, not just a CIO issue

Boards do not need to manage model parameters. But they do need to govern competitive advantage, risk exposure, capital allocation, institutional trust, and long-term defensibility.

That makes representation strategy a board responsibility for three reasons.

First, it shapes value creation. If representation quality determines whether AI produces durable advantage, then it affects growth, margins, and speed of adaptation. McKinsey’s recent surveys and related research consistently show that value is tied not only to experimentation but to workflow redesign, leadership ownership, and stronger operating practices. (McKinsey & Company)

Second, it shapes risk. The EU AI Act is pushing firms toward more formal accountability, transparency, and human oversight in high-risk settings, and its phased implementation is making governance design more concrete, not less. (Digital Strategy) If a company cannot explain what reality its systems believed, what authority they had, and how errors can be corrected, it does not merely have a technology problem. It has a board problem.

Third, it shapes strategic position. The companies that build the strongest representation layer become easier for partners, customers, regulators, and AI systems to trust. That changes coordination costs across the ecosystem.
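The risk point above names three things a company must be able to explain: what reality its systems believed, what authority they had, and how errors can be corrected. One way to picture this is a per-decision record that cannot be considered complete without all three. The Python sketch below is illustrative only. `DecisionRecord`, its field names, and `missing_explanations` are hypothetical and are not drawn from the EU AI Act, NIST, or any specific governance tool.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class DecisionRecord:
    decision_id: str
    action: str
    believed_state: dict                     # what reality the system believed
    authority: str                           # which delegation allowed the action
    recourse_contact: Optional[str] = None   # where an error can be contested

def missing_explanations(record):
    """Return the names of the explanations this record cannot supply."""
    gaps = []
    if not record.believed_state:
        gaps.append("believed_state")
    if not record.authority:
        gaps.append("authority")
    if not record.recourse_contact:
        gaps.append("recourse_contact")
    return gaps

complete = DecisionRecord(
    decision_id="claim-1001",
    action="fast_track_payout",
    believed_state={"policy_active": True, "fraud_flag": False},
    authority="claims-triage-delegation-v2",
    recourse_contact="claims-review-team",
)
incomplete = DecisionRecord(
    decision_id="claim-1002",
    action="deny",
    believed_state={},
    authority="",
)

print(missing_explanations(complete))    # []
print(missing_explanations(incomplete))  # ['believed_state', 'authority', 'recourse_contact']
```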

A board that asks only, “What is our AI strategy?” is asking too late in the chain.

The stronger question is: What is our representation strategy, and what moat does it create?

The five board questions that matter now

A serious board should begin asking management five questions.

- Where is our reality still invisible to machines?

  Not where data exists, but where usable representation does not.

- Where are we automating decisions on weak representation?

  These are the future failure points.

- Which parts of the business have high representation value?

  These are the places where better representation creates disproportionate advantage.

- What action rights are we delegating, and on what basis?

  AI without clear delegation produces fast confusion.

- What would make our representation layer a moat rather than a mess?

  The answer usually involves identity, standards, interoperability, process clarity, and recourse.

These are strategic questions, not compliance questions.

The future winners will not be the firms with the most AI. They will be the firms AI can trust most.

This is the shift many leaders still miss.

In the next phase of the AI economy, intelligence will continue to improve and spread. But as that happens, the bottleneck moves. The scarce asset is no longer raw compute alone, or model access alone. The scarce asset becomes high-trust, machine-usable representation of reality.

That is why AI strategy without board-level representation strategy is incomplete. It optimizes intelligence while neglecting legibility. It accelerates action without upgrading what the system can safely see. It invests in CORE while underbuilding SENSE and DRIVER.

And that is why the deepest moat in the AI era will not belong to the company with the loudest AI story. It will belong to the company that built the strongest representation system underneath it.

The winners will be easier for machines to understand, safer for machines to act within, and harder for competitors to replicate.

That is the representation moat.

And boards that understand this early will not just deploy AI better. They will redesign the firm for the next economy.

FAQ

What is the representation moat?

The representation moat is a company’s durable advantage in the AI era created by representing reality better than competitors can. That includes stronger identity, cleaner state, faster updates, clearer permissions, and better recourse.

Why is AI strategy failing in many firms?

Many firms start with models and tools instead of fixing the underlying representation of customers, assets, processes, permissions, and outcomes. That causes weak deployment, poor trust, and limited scaling. (McKinsey & Company)

What is a board-level representation strategy?

It is the doctrine for deciding what reality the enterprise must be able to see, trust, model, govern, and delegate before AI can create durable value.

Why is representation more defensible than the model itself?

Models are increasingly accessible through shared platforms and APIs. A firm’s representation layer is built through operational design, institutional memory, identity systems, process structure, and governance, which are much harder to copy. (Andreessen Horowitz)

How does SENSE–CORE–DRIVER fit this idea?

SENSE makes reality legible, CORE interprets and reasons, and DRIVER governs delegated action. Strong AI value comes when all three work together, not when CORE is optimized in isolation.

What should boards focus on in AI?

Boards should focus on representation quality, governance, delegation of decision rights, and trust systems—not just AI adoption.

Glossary: The Representation Moat & AI Strategy

Representation Moat

A durable competitive advantage created by how effectively an organization represents reality in a machine-usable form—across identity, context, state, governance, and trust—enabling AI systems to act more accurately, safely, and at scale.

Representation Strategy

A board-level doctrine that defines what reality an organization must be able to see, trust, model, update, govern, and delegate before AI can deliver meaningful and scalable value.

Representation Economy

An emerging economic paradigm where value creation shifts from owning intelligence to controlling high-quality representations of reality, including data, identity, context, and decision authority.

Machine-Legible Reality

A version of reality that is structured, contextualized, and continuously updated so that machines can interpret it reliably and act on it effectively.

Representation Quality

The accuracy, completeness, freshness, and trustworthiness of how real-world entities, states, and relationships are modeled within a system.

SENSE (Legibility Layer)

The layer that makes reality understandable to machines by capturing:

- Signals (events and inputs)

- Entities (who or what is involved)

- State (current condition)

- Evolution (how it changes over time)

CORE (Intelligence Layer)

The layer where AI systems:

- Comprehend context

- Reason and optimize

- Generate recommendations or decisions

- Learn from feedback

DRIVER (Legitimacy Layer)

The layer that governs how AI acts, including:

- Delegation of authority

- Identity and accountability

- Verification mechanisms

- Execution boundaries

- Recourse when things go wrong

Entity Resolution

The ability to accurately link data, signals, or events to the correct real-world entity (customer, asset, contract, etc.), ensuring consistency across systems.

State Awareness

The capability to continuously track the current condition of an entity or process, rather than relying on static or outdated data.

Contextual Integrity

The alignment between data, its meaning, and its usage context, ensuring that AI decisions are made with the right interpretation of reality.

Delegated Machine Authority

The scope and boundaries of actions that an AI system is allowed to take autonomously, defined by governance rules, policies, and oversight mechanisms.

AI Governance

The frameworks, processes, and controls that ensure AI systems operate safely, ethically, transparently, and within defined boundaries.

Recourse Mechanism

The ability to detect, correct, reverse, or appeal decisions made by AI systems when errors occur or outcomes are disputed.

Representation Layer

The underlying system of data, identity, relationships, context, and governance that defines how reality is modeled and made usable for AI systems.

AI Stack

The combination of models, infrastructure, tools, and applications used to build AI systems. It is increasingly modular and accessible, which makes it easier to replicate.

Representation Gap

The mismatch between real-world complexity and how it is captured in systems, leading to poor AI decisions, limited scalability, and reduced trust.

AI Strategy (Traditional View)

An approach focused primarily on models, tools, vendors, and use cases, often overlooking the foundational need for high-quality representation.

AI Strategy (Advanced View)

A strategy that prioritizes representation quality, governance, and decision authority before scaling AI capabilities.

Decision-Amplification Layer

AI’s role in organizations as a system that enhances how decisions are made, recommended, and executed, rather than just automating tasks.

Representation Advantage

The compounding benefit gained when an organization consistently improves how it represents reality, leading to better decisions, faster learning, and stronger trust.

Institutional Memory (in AI Systems)

The accumulation of historical data, decisions, feedback loops, and governance practices that improve the accuracy and reliability of AI over time.

Interoperability

The ability of systems to exchange and use information seamlessly, enabling consistent and unified representation across the enterprise.

Trust Infrastructure

The combination of identity, governance, verification, and recourse systems that ensures AI decisions are reliable, auditable, and acceptable to stakeholders.

In the AI era, the most valuable companies will not be those that own the most intelligence, but those that define the most trusted version of reality.

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy, from signal infrastructure and representation systems to decision architectures and enterprise operating models.

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Author box

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.