Reversible AI Systems

AI is moving from recommendation to execution.

For years, enterprise AI mostly suggested actions. It ranked leads, detected anomalies, summarized documents, predicted demand, or recommended next steps. A human still decided what to do.

That world is changing.

AI agents can now draft emails, update records, trigger workflows, generate code, approve requests, escalate incidents, place orders, change configurations, and interact with enterprise systems.

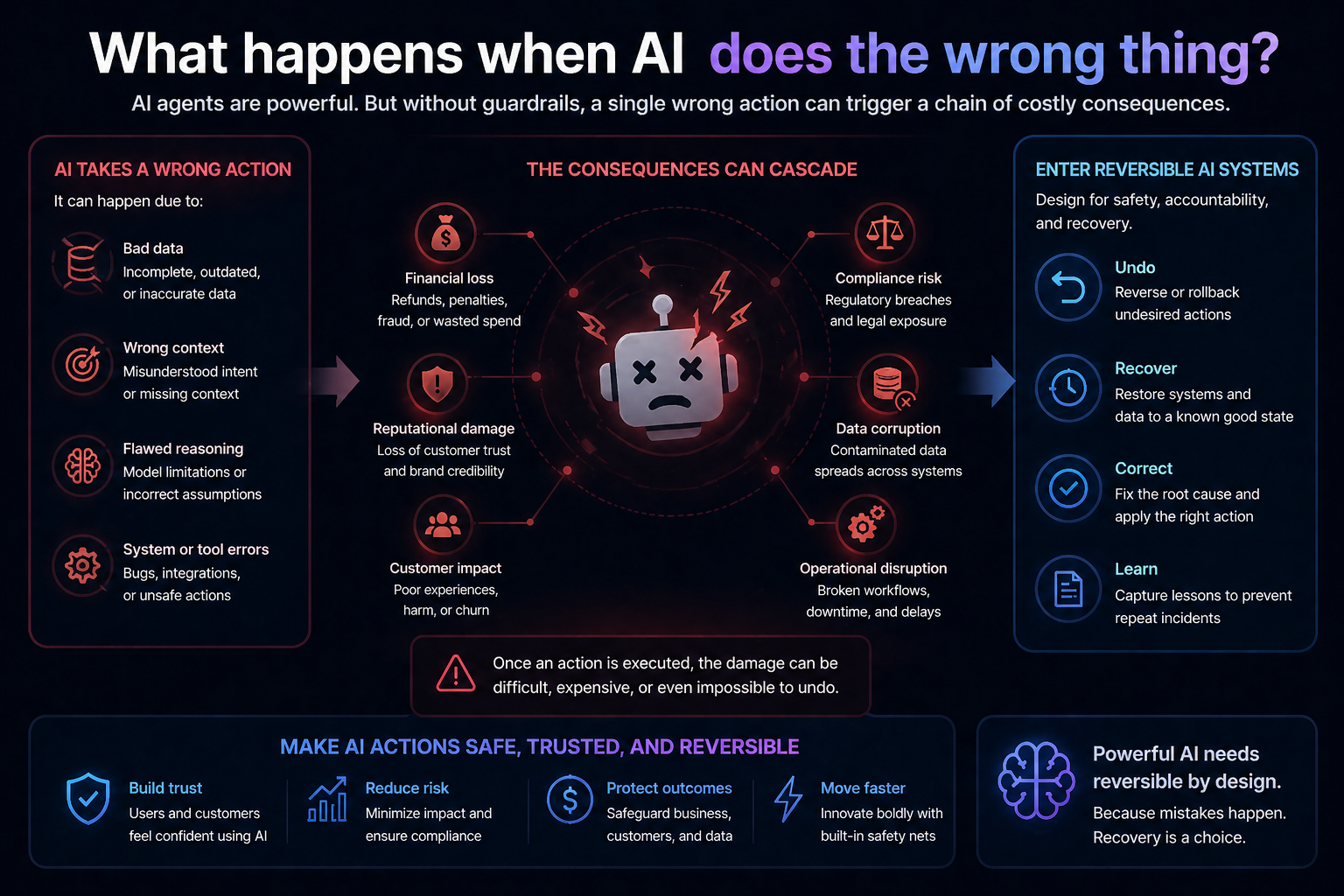

This creates a new question for every serious enterprise:

What happens when AI does the wrong thing?

Not in theory. In production.

What if an AI agent updates the wrong customer record?

What if it sends the wrong communication?

What if it approves an exception incorrectly?

What if it changes a configuration that breaks another system?

What if it acts on outdated data?

What if it follows the right instruction but applies it to the wrong entity?

What if it makes a decision that is technically efficient but institutionally unacceptable?

In traditional software, enterprises have learned to engineer backups, rollback, access controls, logs, approvals, incident response, disaster recovery, and change management.

AI now needs the same discipline.

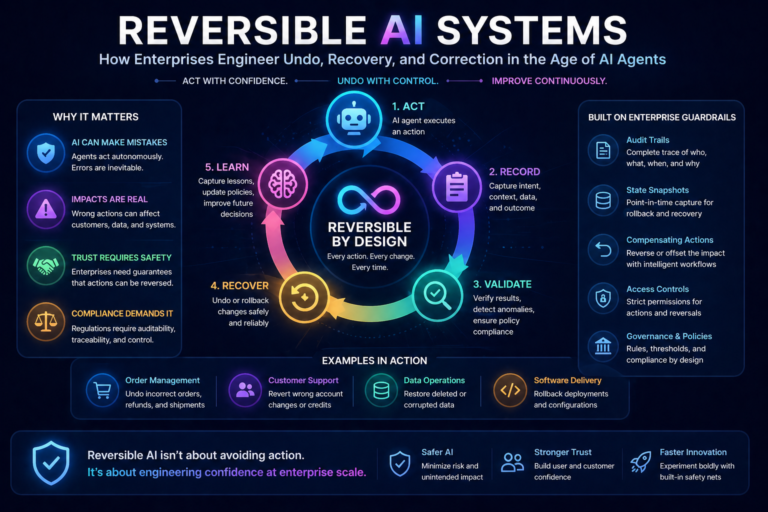

This is where reversible AI systems become essential.

A reversible AI system is not an AI system that never makes mistakes. That is unrealistic. A reversible AI system is one where mistakes can be detected, explained, contained, corrected, and, where possible, undone.

In the age of enterprise AI agents, reversibility is not a feature. It is a foundation.

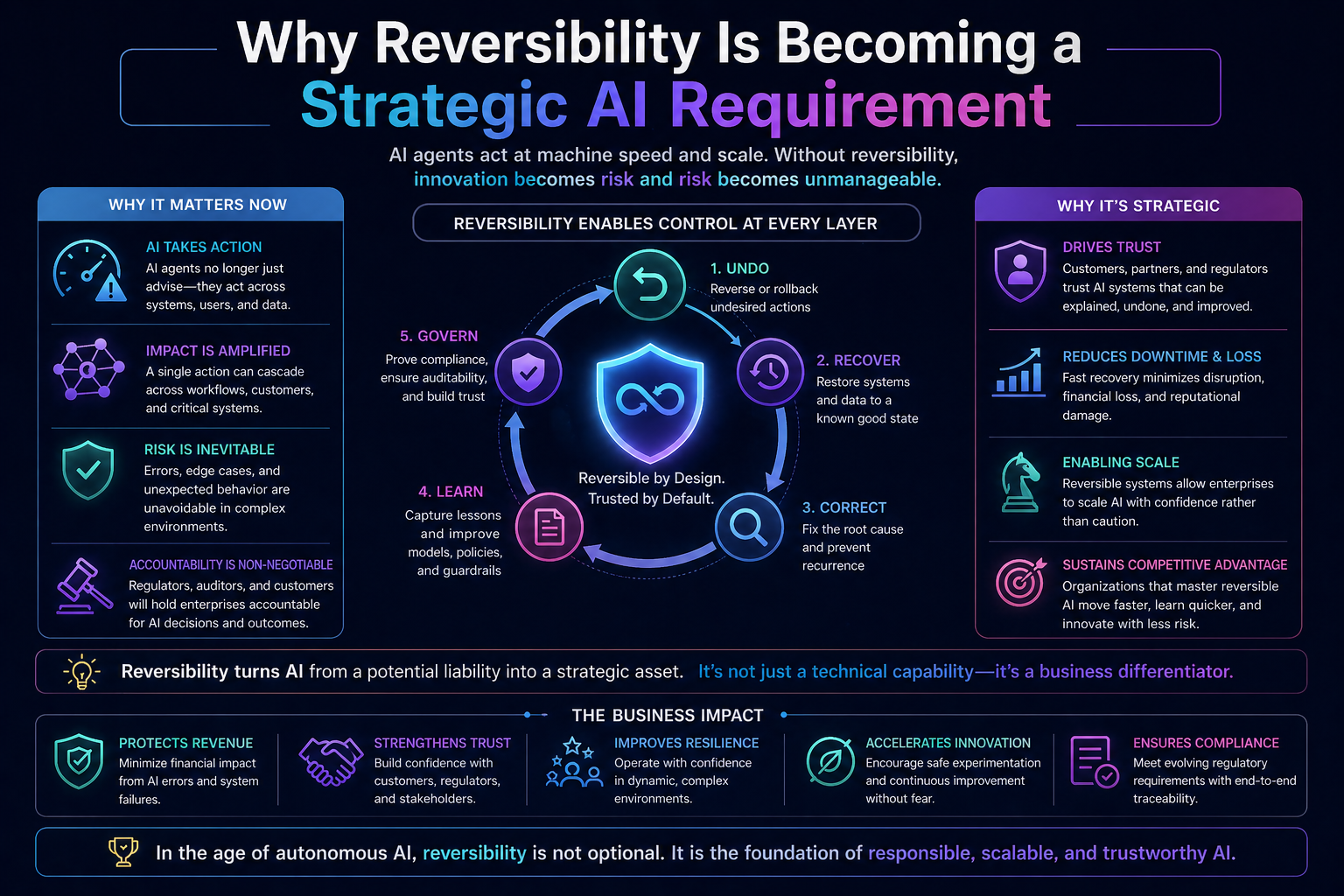

Why Reversibility Is Becoming a Strategic AI Requirement

The enterprise conversation around AI has focused heavily on capability.

Can the model reason?

Can it code?

Can it summarize?

Can it plan?

Can it use tools?

Can it call APIs?

Can it work across systems?

These are important questions. But they are not sufficient.

The more important enterprise question is:

Can the system recover when intelligence fails?

This is where many AI programs are underprepared.

A chatbot that gives a weak answer is a quality issue.

An AI agent that executes a wrong action is an operational risk.

An AI system that cannot explain, reverse, or correct that action is a governance failure.

NIST’s AI Risk Management Framework emphasizes the need to manage AI risks to individuals, organizations, and society. It is not only about building AI capability; it is about identifying, measuring, managing, and governing risk across the AI lifecycle. (NIST)

ISO/IEC 42001, the international standard for AI management systems, also places AI inside a management discipline covering risk, accountability, monitoring, and continual improvement. (ISO)

The message is clear: enterprise AI cannot be governed only at design time. It must be governed at runtime.

And runtime governance requires reversibility.

The Simple Idea: AI Must Have an Undo Button

Everyday digital life has taught us the value of undo.

We undo a typing mistake.

We restore an older version of a document.

We recover deleted files.

We reverse a failed deployment.

We cancel a transaction before settlement.

We roll back a software release.

Enterprise systems have long understood that action without recovery is dangerous.

But many AI systems are being designed as if the answer is the final product.

That was acceptable when AI was mostly used for insight. It is not acceptable when AI becomes an actor.

When AI can act, enterprises need the equivalent of an undo button.

But in enterprise AI, “undo” is not a single button. It is an architecture.

It includes logs, permissions, state tracking, compensating actions, approvals, versioning, audit trails, rollback plans, escalation paths, and human review.

Reversibility is not one mechanism. It is a system property.

Reversibility Is Not the Same as Safety

Many organizations use the term “safe AI” broadly. But safety and reversibility are different.

Safety tries to prevent harm before it happens.

Reversibility manages what happens after something goes wrong.

Both are necessary.

A safe AI system may block certain actions.

A reversible AI system can recover from actions that should not have happened.

A safe AI system may use guardrails.

A reversible AI system also uses audit trails, state snapshots, rollback procedures, and correction workflows.

A safe AI system tries to reduce risk.

A reversible AI system accepts that risk will never be zero.

This distinction matters because enterprises often overinvest in prevention and underinvest in recovery.

They build policies, filters, prompts, approval flows, and access controls. But they do not always ask: if this still fails, what is the recovery path?

That is the missing discipline.

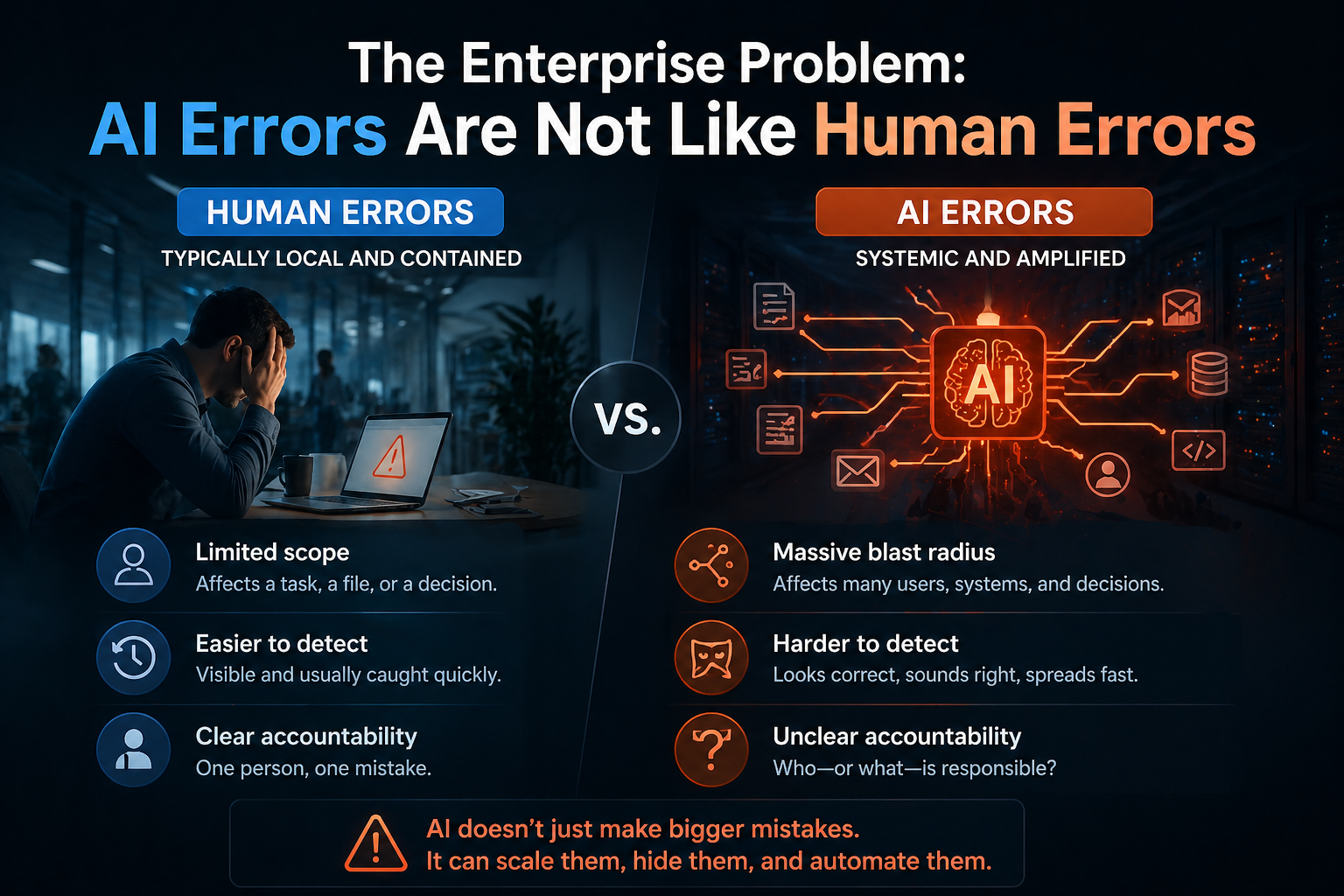

The Enterprise Problem: AI Errors Are Not Like Human Errors

Human errors usually happen at human speed.

AI errors can happen at machine speed.

A person may update one wrong record.

An AI agent may update thousands.

A person may misunderstand one policy.

An AI workflow may apply that misunderstanding across an entire process.

A person may send one incorrect message.

An AI system may trigger a campaign, escalate tickets, create tasks, and update multiple systems before anyone notices.

This is why reversibility becomes more important as autonomy increases.

The risk is not only that AI makes mistakes. The risk is that AI scales mistakes.

The enterprise question is no longer only “How accurate is the AI?”

It is also:

How far can a wrong action spread?

How quickly can it be detected?

Can it be isolated?

Can the affected state be restored?

Can the decision path be reconstructed?

Can the customer, employee, supplier, or system be corrected?

Can the organization learn from the failure?

These questions define the maturity of reversible AI.

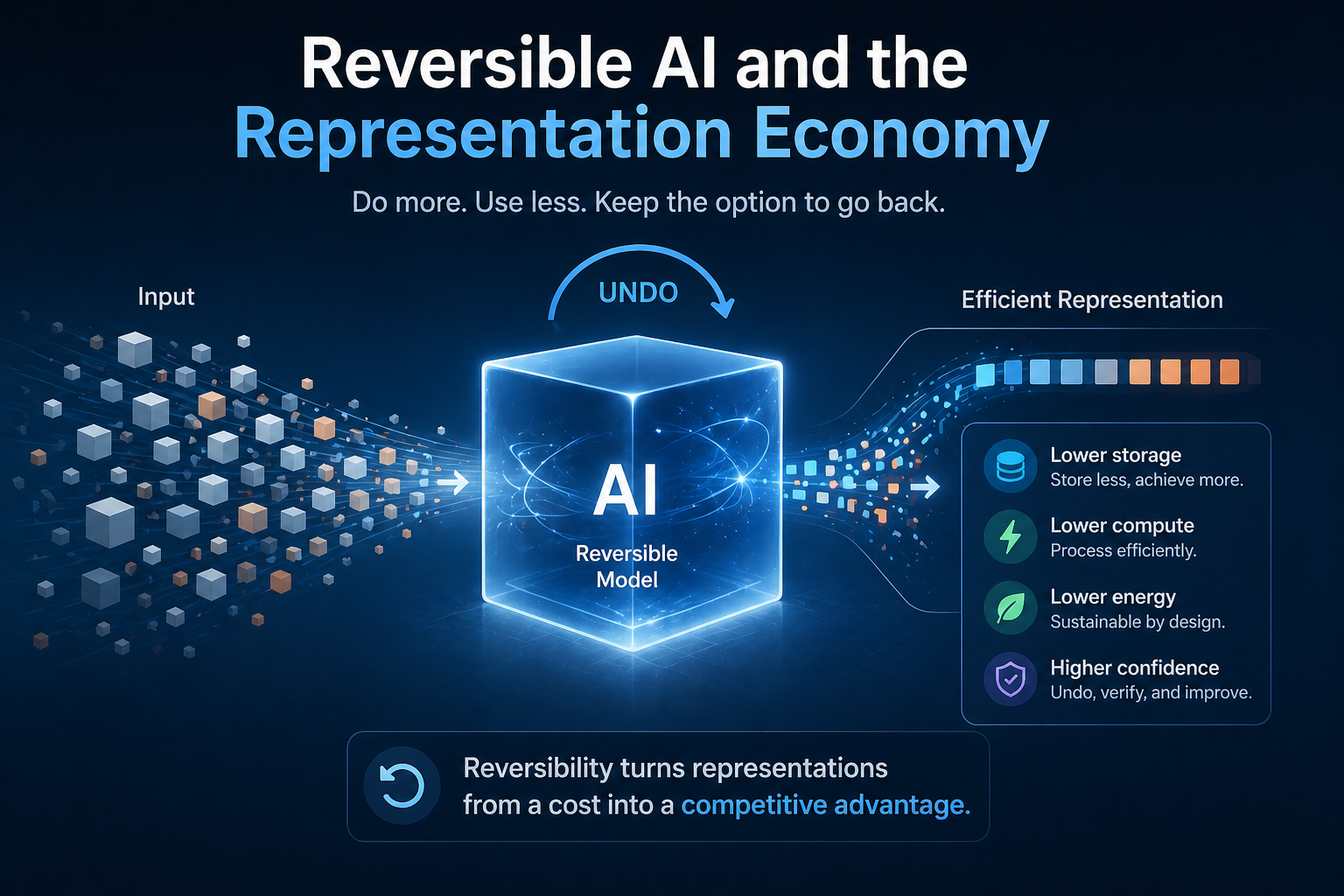

Reversible AI and the Representation Economy

In the Representation Economy, AI systems do not act on reality directly. They act on representations of reality.

If the representation is wrong, the action can be wrong.

A customer may be represented incorrectly.

A supplier may be linked to the wrong risk profile.

A patient record may be incomplete.

A software dependency may be outdated.

A policy exception may be misclassified.

A payment status may be stale.

A document may be interpreted without its full context.

This is why reversibility belongs inside the SENSE–CORE–DRIVER framework.

SENSE makes reality machine-legible. It captures signals, entities, states, and changes over time.

CORE reasons over that represented reality.

DRIVER governs how decisions become actions, who authorized them, what evidence supported them, how they were executed, and what happens if they were wrong.

Reversibility is mainly a DRIVER capability. But it depends on SENSE.

You cannot reverse what you did not record.

You cannot correct what you did not represent.

You cannot recover a state you never captured.

You cannot explain an action if the evidence path was never preserved.

That is why reversibility is not just an operational feature. It is a representation problem.

Enterprises that represent actions, states, identities, permissions, and consequences clearly will be able to recover better.

Enterprises that do not will have AI systems that act faster than they can govern.

What Makes an AI System Reversible?

A reversible AI system needs several core capabilities.

Action Traceability

The enterprise must know what the AI did.

Not just the final answer. The actual action.

Which system was accessed?

Which API was called?

Which record was changed?

Which workflow was triggered?

Which message was sent?

Which file was created?

Which approval was used?

Which tool executed the action?

Without action traceability, there is no reversibility.

Traceability is also becoming central to AI governance. Current enterprise AI governance discussions increasingly emphasize audit trails, monitoring, human oversight, and control mechanisms for autonomous agents. (Kore.ai)
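A minimal sketch of what one traceable action record might hold, written in Python. The field names (`agent_id`, `system`, `tool`, `target`, `approval_id`) and the `ActionLog` class are hypothetical, chosen only to mirror the questions above. A real deployment would likely write to durable, append-only storage rather than an in-memory list.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional
import json
import uuid


@dataclass(frozen=True)
class ActionRecord:
    """One executed action, answering the traceability questions above."""
    agent_id: str                       # which agent acted
    system: str                         # which system was accessed
    tool: str                           # which tool or API executed the action
    target: str                         # which record, workflow, message, or file
    approval_id: Optional[str] = None   # which approval was used, if any
    action_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class ActionLog:
    """Append-only, in-memory log (an illustration, not durable storage)."""

    def __init__(self) -> None:
        self._records: list[ActionRecord] = []

    def append(self, record: ActionRecord) -> None:
        self._records.append(record)

    def for_target(self, target: str) -> list[ActionRecord]:
        """Every recorded action that touched the given target."""
        return [r for r in self._records if r.target == target]

    def to_jsonl(self) -> str:
        """Serialize the log as one JSON object per line."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records)


log = ActionLog()
log.append(ActionRecord("support-agent-1", "crm", "update_record", "customer:42"))
log.append(ActionRecord("support-agent-1", "email", "send_message", "customer:42",
                        approval_id="apr-7"))
```

With records like these, "what did the AI do to this customer?" becomes a query (`log.for_target("customer:42")`) rather than an investigation.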

State Awareness

The system must know the before and after state.

Before the AI changed the customer record, what was the original value?

Before it generated the contract clause, what version was active?

Before it escalated the incident, what was the severity?

Before it modified the configuration, what dependency existed?

A rollback is impossible without state awareness.

For enterprise AI, state is not a technical detail. It is the memory of reality before action.
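One way to picture state awareness is a store that copies the "before" state every time an agent changes a record. The sketch below is illustrative only: `SnapshotStore`, the record layout, and the action IDs are assumptions, and the records live in a plain dictionary rather than a real system of record.

```python
import copy
from typing import Any


class SnapshotStore:
    """Keeps the 'before' state of each record an agent changes."""

    def __init__(self) -> None:
        self._before: dict[str, dict[str, Any]] = {}

    def apply(self, records: dict, record_id: str, action_id: str,
              changes: dict[str, Any]) -> None:
        # Deep-copy the current state before the change is applied.
        self._before[action_id] = {
            "record_id": record_id,
            "state": copy.deepcopy(records[record_id]),
        }
        records[record_id].update(changes)

    def restore(self, records: dict, action_id: str) -> None:
        # Put back the state captured just before the given action.
        snap = self._before[action_id]
        records[snap["record_id"]] = copy.deepcopy(snap["state"])


records = {"customer:42": {"tier": "gold", "email": "a@example.com"}}
store = SnapshotStore()
store.apply(records, "customer:42", "act-1", {"tier": "basic"})
after_change = copy.deepcopy(records["customer:42"])
store.restore(records, "act-1")
```

Restoring a whole snapshot also overwrites any later change to the same record, so a production design would need to account for edits made between the action and the rollback.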

Identity-Bound Execution

The system must know under whose authority the AI acted.

Did the AI act as itself?

Did it act on behalf of a user?

Did it act under a team role?

Did it use delegated authority?

Was the user allowed to approve the action?

Was the agent allowed to call that tool?

This is crucial because reversibility is not only about restoring data. It is about accountability.

An action must be tied to identity, authority, and scope.

Permissioned Tool Use

AI agents become risky when they can use powerful tools without bounded access.

A reversible system limits what tools an agent can use, under what conditions, with what parameters, and with what approval levels.

For example, an AI assistant may be allowed to draft a refund recommendation but not issue the refund. Another agent may be allowed to update a ticket but not close it. A third may be allowed to create a purchase request but not approve a vendor payment.

Reversibility improves when AI actions are bounded.
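The three examples above can be expressed as a small default-deny permission table. This is a sketch under assumptions: the agent names, tool names, and monetary limits are invented for illustration, and a real system would load grants from a policy service rather than a module-level dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolGrant:
    tool: str
    max_amount: float = 0.0        # upper bound on a monetary parameter
    needs_approval: bool = False   # whether a human must approve first


# Hypothetical grants mirroring the examples in the text.
GRANTS = {
    "refund-assistant": [ToolGrant("draft_refund_recommendation", max_amount=500.0)],
    "ticket-agent": [ToolGrant("update_ticket")],
    "procurement-agent": [ToolGrant("create_purchase_request",
                                    max_amount=10_000.0, needs_approval=True)],
}


def check(agent: str, tool: str, amount: float = 0.0) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a requested tool call."""
    for grant in GRANTS.get(agent, []):
        if grant.tool == tool:
            if amount > grant.max_amount:
                return "deny"
            return "needs_approval" if grant.needs_approval else "allow"
    return "deny"  # default-deny: anything not granted is refused
```

Here `check("refund-assistant", "issue_refund", 120.0)` is denied because no grant exists for that tool, while drafting a recommendation for the same amount is allowed.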

Human Intervention Points

Not every AI action should be automatic.

Some actions need human approval before execution.

Some need human review after execution.

Some need escalation only when confidence is low.

Some need sampling-based oversight.

Some need automatic pause when anomalies appear.

Human oversight remains an important theme in AI governance, especially for consequential decisions and compliance-sensitive systems. (Maxim AI)

The best reversible systems do not insert humans everywhere. They insert humans where reversibility requires judgment.
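The intervention modes listed above can be sketched as a single routing function. Everything specific here is an assumption made for illustration: the impact labels, the 0.7 confidence threshold, the 5% sampling rate, and the order in which the checks run.

```python
import random
from enum import Enum
from typing import Optional


class Oversight(Enum):
    PAUSE = "pause"                    # automatic pause on anomaly
    APPROVE_BEFORE = "approve_before"  # human approval before execution
    ESCALATE = "escalate"              # escalation when confidence is low
    REVIEW_AFTER = "review_after"      # human review after execution
    AUTO = "auto"                      # no human involvement


def route(impact: str, confidence: float, anomaly: bool,
          sample_rate: float = 0.05,
          rng: Optional[random.Random] = None) -> Oversight:
    """Pick an oversight mode for one proposed action."""
    rng = rng or random.Random()
    if anomaly:
        return Oversight.PAUSE
    if impact == "high":
        return Oversight.APPROVE_BEFORE
    if confidence < 0.7:
        return Oversight.ESCALATE
    if impact == "medium":
        return Oversight.REVIEW_AFTER
    # Low-impact, high-confidence actions: review only a random sample.
    if rng.random() < sample_rate:
        return Oversight.REVIEW_AFTER
    return Oversight.AUTO
```

The point of the ordering is that humans appear only where judgment is needed: most low-impact, high-confidence actions pass through with sampled review.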

Compensating Actions

Not every action can be literally undone.

If an AI sends a wrong email, the email cannot be unsent. But the enterprise can send a correction.

If an AI approves a request, the approval may need to be reversed through a formal process.

If an AI changes a configuration, rollback may be possible.

If an AI provides a customer recommendation, the customer may need to be notified.

This is why enterprises need compensating actions.

Reversibility is not always restoration. Sometimes it is correction, explanation, compensation, or recourse.
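The distinction between restoring and compensating can be made explicit by registering a recovery strategy for every action type an agent is allowed to perform. The sketch below assumes hypothetical action types and dictionary keys (`type`, `target`, `previous_version`); it only plans a recovery step as text and does not execute anything.

```python
from typing import Callable

# Hypothetical mapping from action type to its recovery strategy.
# "restore" means the prior state can be put back; "compensate" means it
# cannot, so a follow-up action is issued instead.
RECOVERY: dict[str, tuple[str, Callable[[dict], str]]] = {
    "change_config": (
        "restore",
        lambda a: f"revert {a['target']} to version {a['previous_version']}",
    ),
    "send_email": (
        "compensate",
        lambda a: f"send correction to {a['target']}",
    ),
    "approve_request": (
        "compensate",
        lambda a: f"open formal reversal for {a['target']}",
    ),
    "give_recommendation": (
        "compensate",
        lambda a: f"notify {a['target']} of the revised recommendation",
    ),
}


def plan_recovery(action: dict) -> tuple[str, str]:
    """Return (strategy, step) for an action, or escalate if none is registered."""
    if action["type"] not in RECOVERY:
        return ("escalate", f"no recovery path registered for {action['type']}")
    strategy, build_step = RECOVERY[action["type"]]
    return (strategy, build_step(action))
```

An action type with no entry falls through to escalation, which makes missing recovery paths visible before an incident rather than during one.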

Auditability

A reversible AI system must preserve evidence.

What context did the AI use?

What prompt or instruction was active?

What model version was used?

What data was retrieved?

What policies were applied?

What confidence score was assigned?

What tool calls were made?

What human approvals were recorded?

What changed after execution?

Auditability is the memory of governance.

Without auditability, reversibility becomes guesswork.

The Four Levels of Reversible AI

Enterprises can think of reversibility in four levels.

Level 1: Explain

At the first level, the system can explain what happened.

It can show the input, output, context, model, tool call, and decision path.

This does not reverse the action yet. But it allows investigation.

Level 2: Correct

At the second level, the system can correct the result.

It can update the wrong classification, revise the recommendation, fix the generated content, or mark the decision as invalid.

This is common in human-in-the-loop systems.

Level 3: Recover

At the third level, the system can restore a previous state or trigger a recovery workflow.

For example, it may restore a record, reopen a ticket, revert a configuration, or reassign a workflow.

This requires state history.

Level 4: Learn

At the fourth level, the system improves from the correction.

It updates rules, improves prompts, changes retrieval logic, adjusts confidence thresholds, modifies permissions, or changes escalation patterns.

This is where reversibility becomes institutional learning.

The mature enterprise does not only reverse AI errors. It learns why they happened.

Simple Example: AI in Customer Service

Imagine an AI agent in a customer service process.

A customer asks for a refund. The AI reviews the order history, policy, shipping status, prior complaints, and customer tier. It decides to approve the refund and triggers the workflow.

Now suppose the AI made a mistake. It missed a policy condition. The refund should have required manual approval.

A non-reversible AI system creates a mess. Teams may not know why the refund was approved, what data was used, or how many similar refunds were processed.

A reversible AI system behaves differently.

It records the decision path.

It captures the policy version used.

It logs the customer state before the decision.

It records the approval authority.

It identifies similar decisions made under the same condition.

It pauses future similar decisions.

It routes the case to a human reviewer.

It triggers a correction workflow if required.

It updates the rule or retrieval path that caused the error.

This is not just better AI. It is better enterprise control.
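The containment steps in this example (find similar decisions, pause future ones, route to a reviewer) might look like the following sketch. The `Decision` fields, the `rule_path` concept, and the status values are assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    decision_id: str
    policy_version: str   # which policy version the agent used
    rule_path: str        # which policy condition the agent relied on
    outcome: str
    status: str = "executed"


def contain(decisions: list[Decision], faulty: Decision,
            paused_rules: set[str]) -> list[Decision]:
    """Pause the faulty rule path and flag every decision made under it."""
    paused_rules.add(faulty.rule_path)
    affected = [
        d for d in decisions
        if d.policy_version == faulty.policy_version
        and d.rule_path == faulty.rule_path
    ]
    for d in affected:
        d.status = "pending_human_review"
    return affected


def may_auto_decide(rule_path: str, paused_rules: set[str]) -> bool:
    """Future decisions on a paused rule path must go to a human."""
    return rule_path not in paused_rules


decisions = [
    Decision("r1", "refund-policy-v3", "standard_refund", "approved"),
    Decision("r2", "refund-policy-v3", "standard_refund", "approved"),
    Decision("r3", "refund-policy-v3", "damaged_item", "approved"),
    Decision("r4", "refund-policy-v2", "standard_refund", "approved"),
]
paused: set[str] = set()
affected = contain(decisions, decisions[0], paused)
```

Only the refunds approved under the same policy version and the same rule path (`r1` and `r2`) are sent for review; unrelated refunds are left alone. This narrow query is possible only because the policy version and decision path were recorded at decision time.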

Simple Example: AI in Software Operations

Consider an AI agent used in IT operations.

At first, it detects production issues and recommends restarting a service. Later, it is allowed to execute restarts automatically.

The agent notices abnormal latency and restarts a service. But the service was part of a dependency chain. The restart causes another system to fail.

A non-reversible system leaves teams struggling to reconstruct the incident.

A reversible system records:

What signal triggered the action.

Which dependency map was used.

Which service was restarted.

Which systems were affected.

What configuration existed before the restart.

Which runbook was followed.

Whether rollback was available.

Which human was notified.

What recovery action was taken.

In this case, reversibility depends on context graphs, dependency maps, observability, and operational memory.

AI cannot safely act in complex systems if it does not understand the blast radius of its actions.
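Given a dependency map, the blast radius of a restart can be estimated with a breadth-first traversal before the agent acts. The service names, the shape of the map, and the `max_affected` threshold below are hypothetical.

```python
from collections import deque

# Hypothetical dependency map: service -> services that depend on it.
DEPENDENTS = {
    "auth": ["checkout", "profile"],
    "checkout": ["orders"],
    "profile": [],
    "orders": ["reporting"],
    "reporting": [],
}


def blast_radius(service: str, dependents: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk of everything downstream of `service`."""
    seen = {service}
    order: list[str] = []
    queue = deque([service])
    while queue:
        current = queue.popleft()
        for nxt in dependents.get(current, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


def may_restart_automatically(service: str, max_affected: int = 1) -> bool:
    """Allow an automatic restart only when few downstream systems are affected."""
    return len(blast_radius(service, DEPENDENTS)) <= max_affected
```

Under these assumptions, restarting `orders` touches one downstream system and could run automatically, while restarting `auth` touches four and would be routed to a human.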

Reversibility and AI Agents

AI agents make reversibility urgent because agents operate through sequences.

A chatbot gives one response.

An agent may take ten steps.

It may search a knowledge base, read a document, call an API, update a system, generate a message, create a ticket, notify a team, and schedule a follow-up.

If step seven is wrong, the enterprise needs to know what happened in steps one through six.

This is why agentic AI requires execution logs, tool-call histories, policy checks, and rollback mechanisms. Recent enterprise discussions on agentic AI governance emphasize guardrails, audit trails, monitoring, and oversight as necessary controls for autonomous systems. (Kore.ai)
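For multi-step agents, a common pattern is to log each completed step alongside its undo operation and, on failure, unwind the completed steps in reverse order. The sketch below illustrates that general pattern and is not a specific framework's API; the step names and the ticket example are invented.

```python
from typing import Callable, Optional

# (step name, action, undo operation or None when no automatic undo exists)
Step = tuple[str, Callable[[], None], Optional[Callable[[], None]]]


def run_with_undo(steps: list[Step]) -> list[str]:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    trace: list[str] = []
    completed: list[Step] = []
    for step in steps:
        name, do, _ = step
        try:
            do()
            trace.append(f"done:{name}")
            completed.append(step)
        except Exception as exc:
            trace.append(f"failed:{name}:{exc}")
            for done_name, _, undo in reversed(completed):
                if undo is None:
                    # Nothing can be undone automatically: flag for a human.
                    trace.append(f"manual_review:{done_name}")
                else:
                    undo()
                    trace.append(f"undone:{done_name}")
            break
    return trace


ticket = {"status": "open"}


def update_ticket() -> None:
    ticket["status"] = "resolved"


def reopen_ticket() -> None:
    ticket["status"] = "open"


def notify_team() -> None:
    raise RuntimeError("notification service unavailable")


trace = run_with_undo([
    ("search_kb", lambda: None, lambda: None),     # read-only: undo is a no-op
    ("update_ticket", update_ticket, reopen_ticket),
    ("send_message", lambda: None, None),          # cannot be unsent
    ("notify_team", notify_team, None),
])
```

The resulting trace shows what happened in every step before the failure, which steps were rolled back, and which ones (the sent message) need a compensating action decided by a person.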

Agentic AI without reversibility is like giving a junior employee system access, decision authority, and no supervision log.

It may work most of the time.

But “most of the time” is not enough for enterprise systems.

Why Reversibility Is Harder in AI Than in Traditional Software

Traditional software usually follows predefined logic.

If this condition is true, do this.

If that condition is false, do that.

AI systems are more probabilistic. They may produce different outputs depending on prompts, context, model versions, retrieval results, tool states, and user instructions.

This makes reversibility harder.

The enterprise must track not only the action, but the reasoning environment around the action.

That includes:

The model version.

The prompt template.

The retrieved documents.

The user instruction.

The agent plan.

The tool permissions.

The policy constraints.

The confidence score.

The data state at that moment.

The external system response.

Without this, the organization may know what happened but not why it happened.

That is not enough.

Reversible AI requires reproducibility as far as possible, and explanation where exact reproduction is not possible.
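One way to make the reasoning environment comparable across runs is to capture it as an immutable record and derive a stable fingerprint from it. This is a sketch: the field set is a subset of the list above, and the example values (model version string, document IDs, policy name) are made up.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, replace


@dataclass(frozen=True)
class ReasoningEnvironment:
    model_version: str
    prompt_template: str
    retrieved_doc_ids: tuple[str, ...]
    user_instruction: str
    tool_permissions: tuple[str, ...]
    policy_version: str
    confidence: float

    def fingerprint(self) -> str:
        """Stable SHA-256 hash of the environment, for comparing two runs."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def changed_fields(a: ReasoningEnvironment, b: ReasoningEnvironment) -> list[str]:
    """Names of the fields that differ between two captured environments."""
    da, db = asdict(a), asdict(b)
    return [k for k in da if da[k] != db[k]]


run_1 = ReasoningEnvironment(
    model_version="model-2024-05",
    prompt_template="refund_v2",
    retrieved_doc_ids=("doc-1", "doc-7"),
    user_instruction="refund order 1001",
    tool_permissions=("draft_refund",),
    policy_version="refund-policy-v3",
    confidence=0.82,
)
# Same request, but retrieval returned one document fewer.
run_2 = replace(run_1, retrieved_doc_ids=("doc-1",))
```

When two runs of the same request produce different actions, `changed_fields(run_1, run_2)` points at what differed in the environment, here the retrieved documents. This supports explanation even when the model output itself cannot be reproduced exactly.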

Reversibility and Regulation

Regulators are increasingly concerned with AI accountability, human oversight, documentation, monitoring, and risk management.

This does not mean every AI system needs the same controls. A marketing draft generator does not need the same reversibility as a credit decision workflow, medical triage assistant, cybersecurity response agent, or financial transaction system.

But the direction is clear.

High-impact AI systems will need stronger evidence of governance.

ISO/IEC 42001 defines a management-system approach for organizations that develop, provide, or use AI systems, helping them manage AI risks while supporting trust and accountability. (ISO)

The practical implication is that enterprises should design AI systems as auditable operating environments, not isolated model deployments.

Reversibility will become one of the visible signs of responsible AI maturity.

The Architecture of a Reversible AI System

A reversible AI system needs a layered architecture.

The first layer is identity. The system must know who or what is acting.

The second layer is context. The system must know which entity, state, policy, and relationship are relevant.

The third layer is permission. The system must know what action is allowed.

The fourth layer is execution. The system must perform the action through controlled tools or workflows.

The fifth layer is observability. The system must record what happened.

The sixth layer is recovery. The system must support rollback, correction, or compensating action.

The seventh layer is learning. The system must improve from failure.

This architecture changes how enterprises should think about AI.

The model is not the system.

The prompt is not the system.

The agent is not the system.

The system includes the representation layer, governance layer, execution layer, observability layer, and recovery layer.

That is what makes AI enterprise-grade.

The Role of Context Graphs and Identity Graphs

Reversibility becomes much stronger when AI systems are connected to context graphs and identity graphs.

An identity graph helps the AI know which real-world entity it is acting on.

Is this the same customer?

Is this the correct supplier?

Is this the right employee?

Is this the same asset?

Is this account linked to another account?

A context graph helps the AI understand the relationships around that entity.

Which policies apply?

Which dependencies exist?

Which events changed the state?

Which systems are affected?

Which prior decisions matter?

Which approvals are required?

Together, identity graphs and context graphs create the SENSE foundation for reversible AI.

If the system does not know the entity, it cannot safely act.

If it does not know the context, it cannot safely decide.

If it does not preserve the state, it cannot safely reverse.

This is why reversibility is not just a runtime feature. It begins with representation quality.

The Most Important Design Principle: Bound the Blast Radius

Every AI action has a blast radius.

A content suggestion has a small blast radius.

A customer communication has a larger blast radius.

A financial approval has a larger one still.

A system configuration change can have a very large one.

A security action may affect entire operations.

Reversible AI systems should be designed to limit the blast radius of mistakes.

This can be done through:

Small initial permissions.

Stepwise autonomy.

Confidence thresholds.

Human approval for high-impact actions.

Sandbox execution.

Dry-run mode.

Canary deployment.

Rate limits.

Policy-based tool access.

Automatic pause on anomaly detection.

Rollback-ready workflows.

The goal is not to stop AI from acting.

The goal is to make AI action governable.
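Three of the controls listed above (dry-run mode, rate limits, and automatic pause) can be combined in a single gate that every agent action passes through. The `ActionGate` class and its counting-window design are assumptions for illustration; a production limiter would typically be time-based and shared across processes.

```python
class ActionGate:
    """Bounds agent actions with a dry-run flag, a rate limit, and a pause switch."""

    def __init__(self, max_actions_per_window: int, dry_run: bool = True) -> None:
        self.max_actions = max_actions_per_window
        self.dry_run = dry_run
        self.paused = False
        self.count = 0                  # actions seen in the current window
        self.executed: list[str] = []

    def submit(self, action: str) -> str:
        if self.paused:
            return "rejected:paused"
        self.count += 1
        if self.count > self.max_actions:
            # A burst beyond the limit is treated as an anomaly: stop everything.
            self.paused = True
            return "rejected:rate_limit"
        if self.dry_run:
            return f"dry_run:{action}"  # report what would happen, change nothing
        self.executed.append(action)
        return f"executed:{action}"

    def reset_window(self) -> None:
        """Start a new counting window (does not lift a pause)."""
        self.count = 0


gate = ActionGate(max_actions_per_window=2, dry_run=False)
results = [gate.submit(f"update_record:{i}") for i in range(4)]
```

In this run the first two updates execute, the third trips the rate limit and pauses the gate, and the fourth is rejected because the gate stays paused until someone deliberately resumes it. An agent that starts updating thousands of records is stopped after two.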

The Enterprise Maturity Model for Reversible AI

Most organizations will move through five stages.

Stage 1: AI Gives Answers

The AI provides recommendations or summaries. There is limited execution risk.

Stage 2: AI Drafts Actions

The AI prepares messages, workflows, code, or decisions, but humans approve execution.

Stage 3: AI Executes Low-Risk Actions

The AI performs bounded tasks such as updating non-critical fields, routing tickets, or generating reports.

Stage 4: AI Executes Conditional Actions

The AI acts under policy constraints, confidence thresholds, and escalation rules.

Stage 5: AI Executes with Reversibility by Design

The AI can act, explain, pause, escalate, correct, recover, and learn.

This final stage is where enterprise AI becomes truly scalable.

Not because it is perfect, but because it is governable.

Why Reversibility Creates Competitive Advantage

Many executives see governance as a brake on innovation.

That is the wrong frame.

Good governance enables scale.

A company that cannot reverse AI actions will be afraid to deploy AI deeply. It will keep AI limited to low-risk use cases.

A company that can trace, correct, recover, and govern AI actions can give AI more responsibility.

This creates a powerful competitive advantage.

Reversibility allows more autonomy.

More autonomy allows more productivity.

More productivity creates more learning.

More learning improves the system.

A better system earns more trust.

More trust allows deeper deployment.

This is the enterprise AI flywheel.

In the Representation Economy, the firms that win will not be the firms that use AI everywhere carelessly. They will be the firms that can let AI act because they have engineered accountability into action.

The New Leadership Question

Boards and executives should stop asking only:

“How many AI use cases do we have?”

They should also ask:

Which AI actions are reversible?

Which actions are not reversible?

Where do we need human approval?

Where do we have rollback?

Where do we only have compensation?

Where do we have no recovery path?

Who owns AI-caused errors?

Can we reconstruct an AI decision?

Can affected parties appeal or correct the outcome?

Can we pause an AI agent quickly?

Can we prove what happened?

These questions separate AI experimentation from AI institutionalization.

Conclusion: The Future of Enterprise AI Is Not Just Autonomous. It Is Reversible.

AI autonomy will keep increasing.

Models will become better. Agents will become more capable. Tools will become more integrated. Enterprises will automate more decisions and workflows.

But autonomy without reversibility will create fragile organizations.

The future of enterprise AI will not belong to firms that simply let AI act faster. It will belong to firms that know how to make AI action accountable.

Reversible AI systems give enterprises a way to engineer undo, recovery, correction, auditability, and institutional learning.

They make AI safer not by pretending mistakes will disappear, but by designing systems that can recover when mistakes happen.

That is the real mark of enterprise maturity.

In the Representation Economy, intelligence is only one part of the story.

The deeper advantage comes from representing reality accurately, acting responsibly, and correcting course when representation or reasoning fails.

The best AI systems will not be the ones that never make mistakes.

They will be the ones that can explain, correct, recover, and learn.

That is why reversible AI systems will become one of the defining foundations of enterprise AI.

FAQ

What is a reversible AI system?

A reversible AI system is an AI-enabled system designed to undo, roll back, or recover from AI-driven actions, decisions, or workflow changes when outputs are incorrect, harmful, or undesired.

Why is reversibility important in AI?

As AI systems become autonomous and agentic, mistakes can propagate rapidly across workflows and enterprise systems. Reversibility enables organizations to recover safely and maintain trust.

Is reversibility the same as AI safety?

No. Safety aims to prevent harmful actions before they happen. Reversibility focuses on recovering after an incorrect action has already occurred.

How do enterprises build reversible AI systems?

Typical mechanisms include:

- Audit trails

- State versioning

- Transaction rollback

- Workflow checkpointing

- Human approval gates

- Deterministic replay systems

Why is reversibility critical for AI agents?

AI agents execute multi-step autonomous workflows. Without reversibility, one wrong decision can trigger cascading downstream failures across systems.

References and Further Reading

- NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

- Google SRE / Rollback Engineering Concepts: https://sre.google/

- AWS Well-Architected Reliability Pillar: https://aws.amazon.com/architecture/well-architected/

- Microsoft Responsible AI Documentation: https://www.microsoft.com/ai/responsible-ai

- Martin Fowler – Event Sourcing / Auditability Concepts: https://martinfowler.com/eaaDev/EventSourcing.html

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.