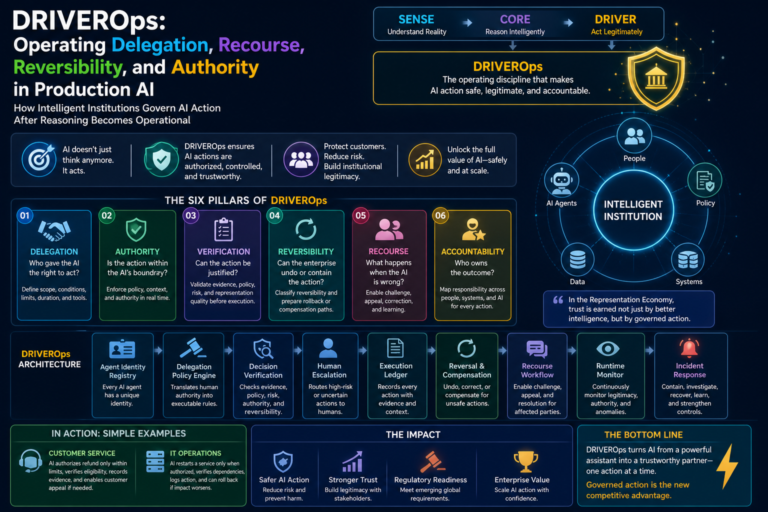

DRIVEROps: How Intelligent Institutions Govern AI Action After Reasoning Becomes Operational

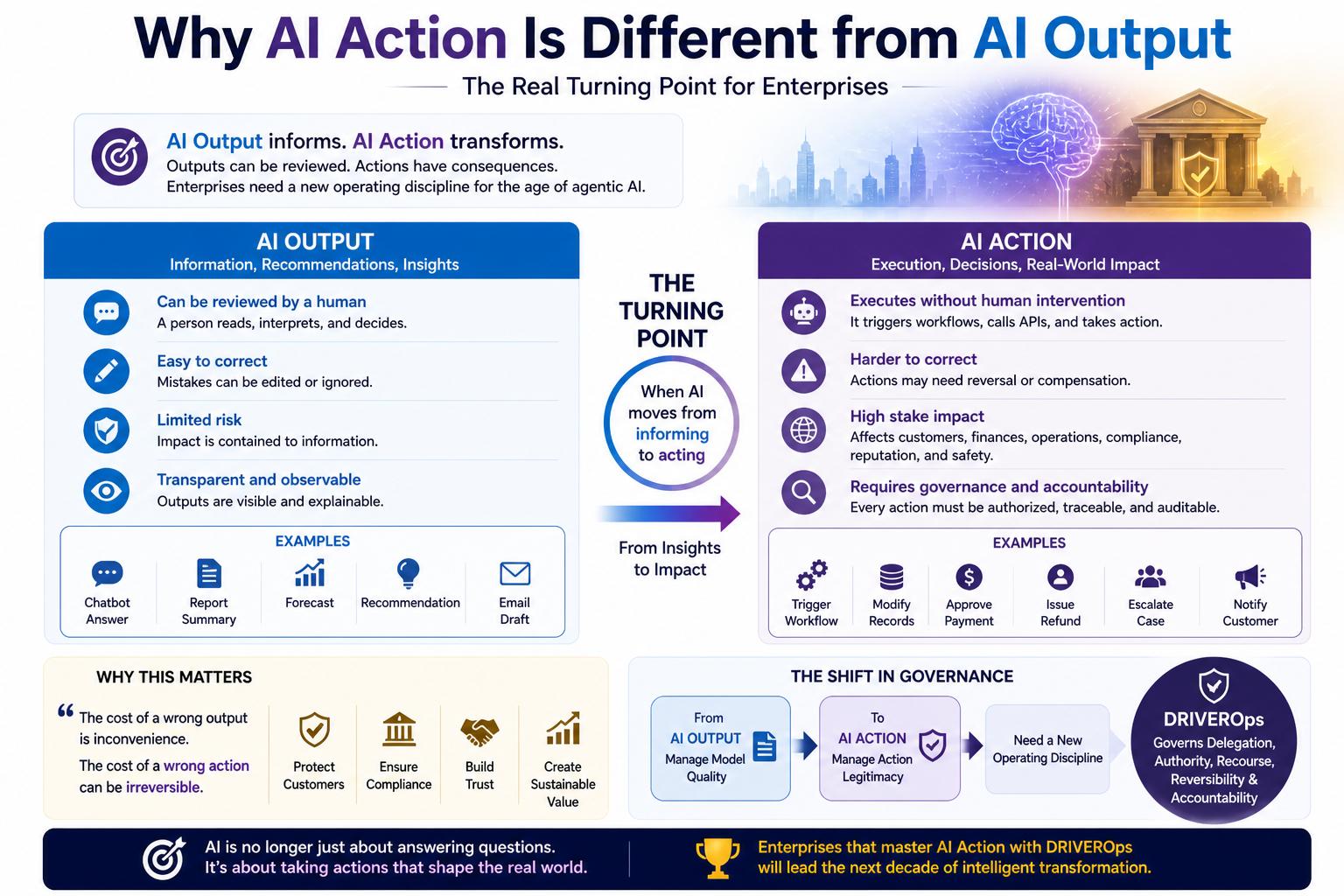

Most enterprises are preparing for AI that can think.

Far fewer are prepared for AI that can act.

That is the real turning point.

As long as AI remains a chatbot, assistant, summarizer, or recommendation engine, its risks are limited by human mediation. A person reads, interprets, decides, and executes. But once AI systems begin triggering workflows, invoking tools, modifying records, approving exceptions, escalating cases, communicating with customers, negotiating with systems, or coordinating with other agents, the problem changes.

The central question is no longer:

Can the AI produce a good answer?

The question becomes:

Who gave the AI the right to act, under what authority, with what evidence, within what limits, through what rollback path, and with what recourse if it is wrong?

This is the problem DRIVEROps must solve.

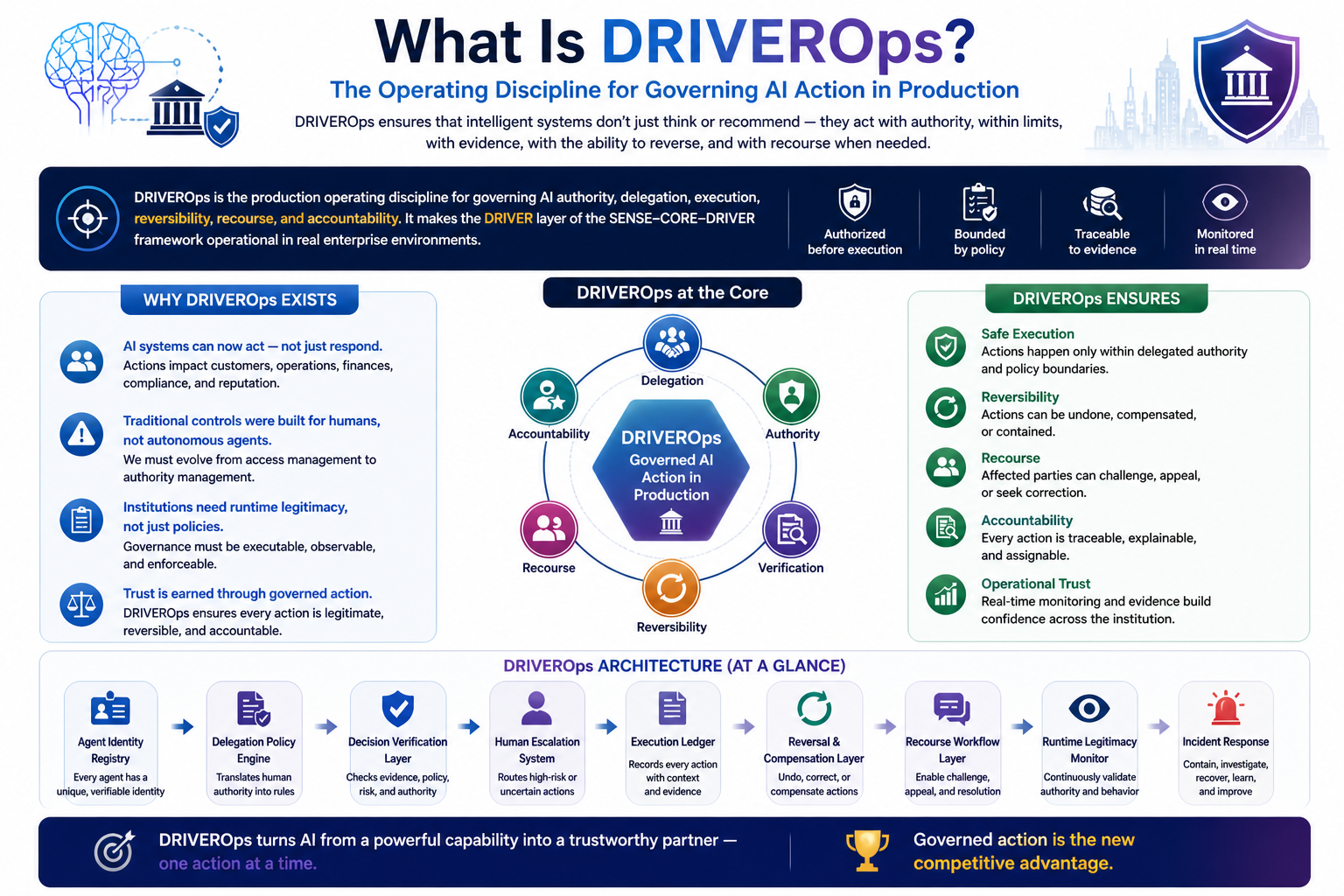

DRIVEROps is the operating discipline for running the DRIVER layer of intelligent institutions in production. It governs how AI systems receive delegated authority, execute actions, preserve accountability, support reversibility, enable recourse, and remain legitimate while operating in real enterprise environments.

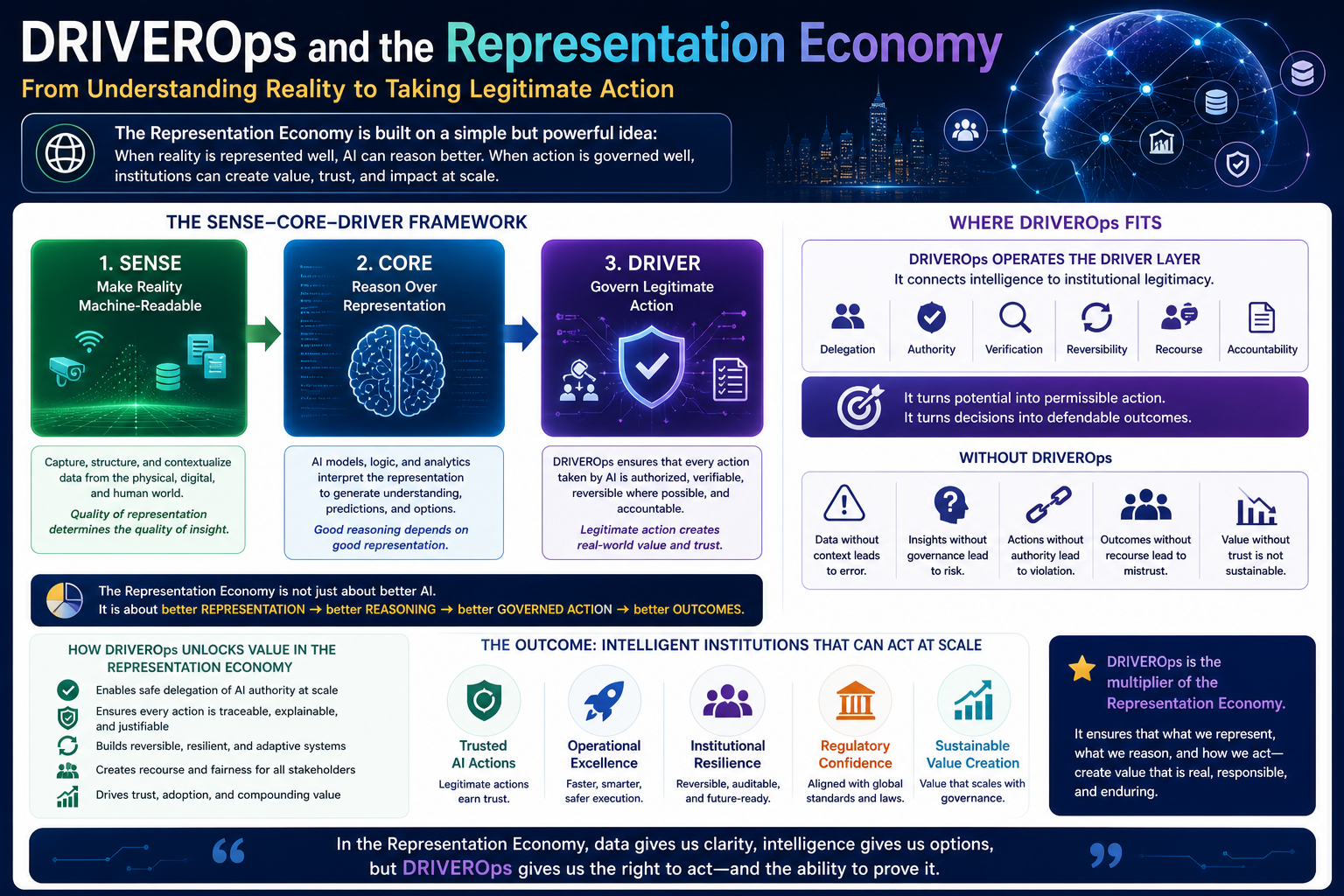

In the SENSE–CORE–DRIVER framework, SENSE makes reality machine-readable, CORE reasons over that representation, and DRIVER governs legitimate action.

DRIVEROps makes the DRIVER layer operational.

It is the missing discipline between AI reasoning and institutional trust.

Why AI Action Is Different from AI Output

Enterprises are already familiar with model risk, hallucination risk, data risk, privacy risk, and cybersecurity risk.

But agentic AI introduces a new class of risk:

Action risk.

An answer can be corrected.

An action may need to be reversed.

A recommendation can be ignored.

An executed workflow may affect customers, contracts, finances, operations, compliance, safety, or reputation.

This is why AI governance is moving from abstract principles toward lifecycle governance, risk management, monitoring, human oversight, logging, auditability, and accountability. The NIST AI Risk Management Framework organizes AI risk management around the functions of Govern, Map, Measure, and Manage, while ISO/IEC 42001 provides a structured management-system standard for organizations developing, providing, or using AI systems. (NIST)

The EU AI Act also places obligations on deployers of high-risk AI systems, including appropriate human oversight, use of relevant input data, monitoring of system operation, and keeping logs generated by the AI system. (Artificial Intelligence Act)

These frameworks point to a larger architectural truth:

AI systems that act need operating controls, not just ethical principles.

DRIVEROps turns that principle into architecture.

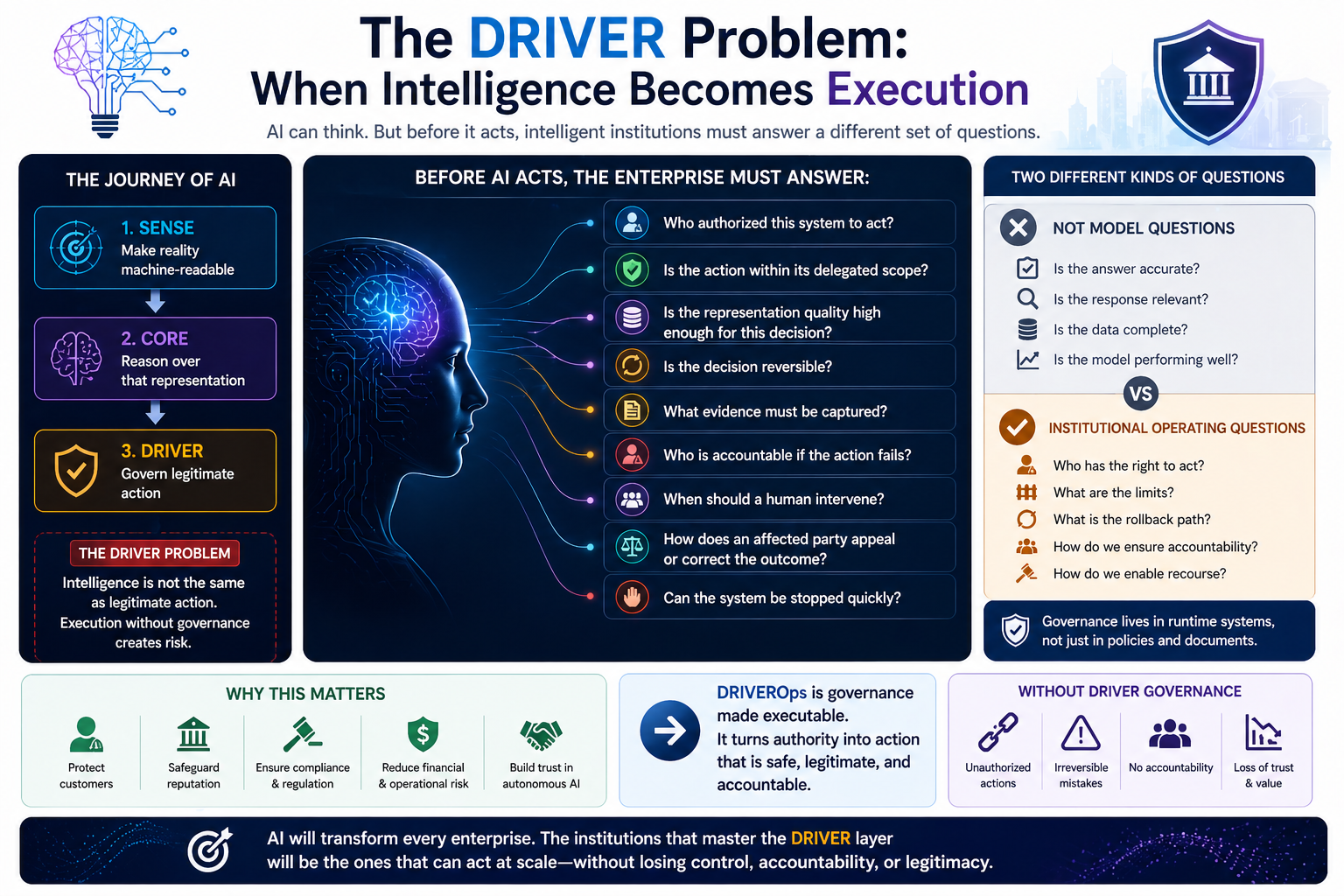

The DRIVER Problem: When Intelligence Becomes Execution

The DRIVER problem begins when intelligence becomes execution.

An AI system may correctly understand the situation. It may reason well. It may even recommend the right course of action.

But before it acts, the enterprise must answer a different set of questions.

Who authorized this system to act?

Is the action within its delegated scope?

Is the representation quality high enough for this decision?

Is the decision reversible?

What evidence must be captured?

Who is accountable if the action fails?

When should a human intervene?

How does an affected party appeal or correct the outcome?

Can the system be stopped quickly?

These are not model questions.

They are institutional operating questions.

That is why DRIVER is not simply “governance.” Governance often lives in policies, committees, documents, reviews, and principles. DRIVER must live in runtime systems.

DRIVEROps is governance made executable.

What Is DRIVEROps?

DRIVEROps is the production operating discipline for governing AI authority, delegation, execution, reversibility, recourse, and accountability.

It ensures that every AI action is:

- authorized before execution,

- bounded by policy,

- traceable to evidence,

- monitored during runtime,

- reversible where possible,

- escalated when uncertainty or risk is high,

- auditable after execution,

- open to correction and recourse.

In traditional enterprise systems, many of these controls were handled by workflow design, approval chains, access management, audit logs, and operational procedures.

But AI agents complicate this because they can interpret goals, choose tools, generate intermediate plans, call APIs, interact with other agents, and adapt their actions dynamically.

Security researchers and governance practitioners increasingly recognize that agentic AI creates risks beyond traditional applications. OWASP’s Agentic AI work describes agentic AI as autonomous systems whose expanded scale and capabilities create emerging threats requiring threat-model-based mitigations; OWASP has also developed a Top 10 for Agentic Applications focused on risks in autonomous and agentic AI systems. (OWASP Gen AI Security Project)

DRIVEROps exists because enterprise control models must evolve from:

human access management

to

machine authority management.

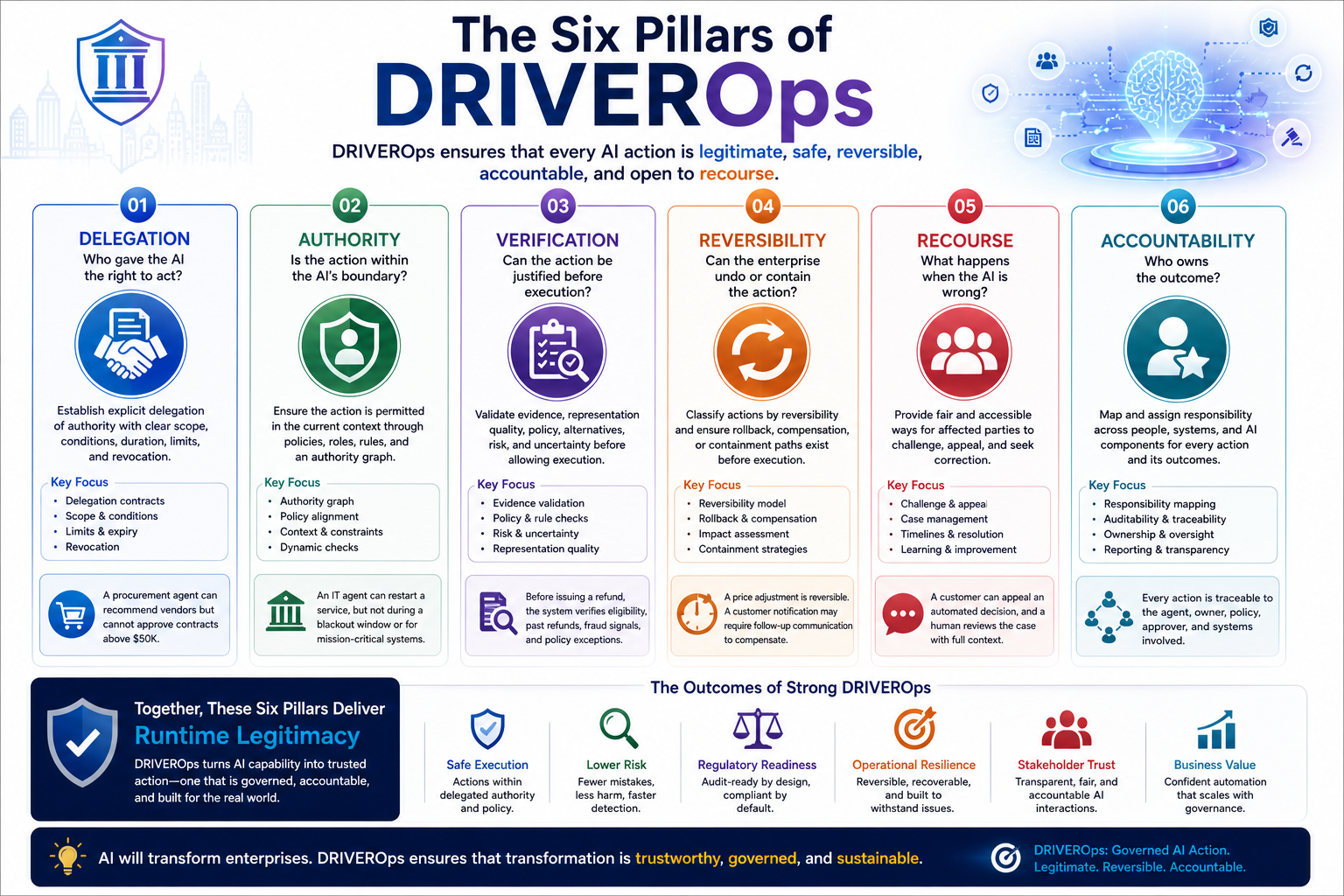

The Six Pillars of DRIVEROps

A mature DRIVEROps architecture has six core pillars.

Delegation: Who Gave the AI the Right to Act?

Delegation is the first pillar of DRIVEROps.

An AI system should never act merely because it can. It should act only because authority has been explicitly delegated.

Delegation defines:

- what the AI is allowed to do,

- for whom it is acting,

- under what conditions,

- for what duration,

- using which tools,

- with what limits,

- and when authority expires.

In many enterprises, permissions are still designed around people, roles, systems, and static access rights. But AI agents may combine multiple permissions across systems, act continuously, and initiate actions at machine speed. That makes delegation far more complex.

A procurement agent may be allowed to summarize vendor risk. It may be allowed to recommend a vendor. It may not be allowed to approve the vendor. It may be allowed to trigger a human review. It may be allowed to send reminders. It may not be allowed to modify contractual terms.

These distinctions matter.

DRIVEROps converts them into enforceable delegation rules.

The future enterprise will need delegation contracts for AI systems. These contracts should define the scope, limits, evidence requirements, escalation triggers, revocation conditions, and expiration rules for every class of AI action.
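A delegation contract can be expressed as data that a runtime checks before every action. The Python sketch below is a minimal illustration, not a reference design: the `DelegationContract` class, its field names, and the action strings are hypothetical, chosen to mirror the procurement agent described above. It applies default-deny, so anything not explicitly delegated, already expired, or revoked is refused.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DelegationContract:
    """Hypothetical machine-readable grant of authority to one AI agent."""
    agent_id: str
    on_behalf_of: str                 # the principal the agent acts for
    allowed_actions: frozenset[str]   # explicit allow-list; everything else is denied
    max_amount: float                 # monetary ceiling per action
    expires_at: datetime              # authority lapses at this instant
    escalation_triggers: frozenset[str] = frozenset()  # carried for the escalation system; not checked by permits()
    revoked: bool = False

    def permits(self, action: str, amount: float = 0.0,
                now: datetime | None = None) -> bool:
        now = now or datetime.now(timezone.utc)
        if self.revoked or now >= self.expires_at:
            return False              # revoked or expired authority grants nothing
        if action not in self.allowed_actions:
            return False              # default-deny: not delegated means not allowed
        return amount <= self.max_amount


# The procurement agent described above, expressed as a contract.
procurement = DelegationContract(
    agent_id="procurement-agent-01",
    on_behalf_of="procurement-team",
    allowed_actions=frozenset({"summarize_vendor_risk", "recommend_vendor",
                               "trigger_human_review", "send_reminder"}),
    max_amount=0.0,
    expires_at=datetime(2099, 1, 1, tzinfo=timezone.utc),
    escalation_triggers=frozenset({"new_vendor_in_sanctioned_region"}),
)

print(procurement.permits("recommend_vendor"))  # True
print(procurement.permits("approve_vendor"))    # False
```

Default-deny is the key design choice here: the agent can approve vendors or modify contractual terms only if someone deliberately adds those actions to its contract.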

Authority: Is the Action Within the AI’s Boundary?

Delegation grants permission.

Authority defines the boundary.

An AI agent may have access to a tool but still lack authority to use it in a specific context.

This distinction is critical.

Access says:

The system can technically perform the action.

Authority says:

The system is institutionally permitted to perform the action now.

For example, an IT operations agent may have access to restart a service. But if the service supports a critical business process, the agent may need additional approval. If the incident happens during a defined blackout window, the action may be prohibited. If the root cause is uncertain, escalation may be required. If prior automated remediation failed, the system may be allowed only to recommend, not execute.
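The restart example can be sketched as a small decision function. This is a minimal Python illustration under stated assumptions: the `Verdict` values, the parameter names, and the order of the checks are hypothetical, and a real deployment would draw these facts from change-management and incident systems rather than from booleans.

```python
from enum import Enum


class Verdict(Enum):
    EXECUTE = "execute"
    REQUIRE_APPROVAL = "require_approval"
    ESCALATE = "escalate"
    RECOMMEND_ONLY = "recommend_only"
    PROHIBITED = "prohibited"


def restart_authority(*, has_access: bool, business_critical: bool,
                      in_blackout_window: bool, root_cause_certain: bool,
                      prior_remediation_failed: bool) -> Verdict:
    """Hypothetical authority check for an IT-ops agent restarting a service.

    Access is only the first question. The remaining checks decide whether the
    agent is institutionally permitted to act now. Checks run from most to
    least restrictive, so the strictest applicable verdict wins.
    """
    if not has_access or in_blackout_window:
        return Verdict.PROHIBITED        # cannot act at all, or acting is forbidden right now
    if prior_remediation_failed:
        return Verdict.RECOMMEND_ONLY    # automation already failed; a human executes
    if not root_cause_certain:
        return Verdict.ESCALATE          # uncertainty routes the case to a human
    if business_critical:
        return Verdict.REQUIRE_APPROVAL  # access exists, but authority needs a sign-off
    return Verdict.EXECUTE


# Same agent, same tool, different context.
context = dict(has_access=True, in_blackout_window=False,
               root_cause_certain=True, prior_remediation_failed=False)
print(restart_authority(business_critical=False, **context))  # Verdict.EXECUTE
print(restart_authority(business_critical=True, **context))   # Verdict.REQUIRE_APPROVAL
```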

This is why DRIVEROps needs an authority graph.

An authority graph maps:

- actors,

- roles,

- systems,

- tools,

- policies,

- decision rights,

- conditions,

- exceptions,

- escalation paths,

- accountability links.

It tells the AI system not only what is possible, but what is legitimate.

In the Representation Economy, authority must become machine-readable.

Verification: Can the Action Be Justified Before Execution?

The third pillar is verification.

Before an AI system acts, it must prove that the action is justified.

This does not mean exposing every internal reasoning token. It means capturing enough evidence for the enterprise to understand:

- what the system believed,

- what evidence it used,

- what policy applied,

- what alternatives were considered,

- why this action was selected,

- what risks were identified,

- and why the action was within authority.

Verification is the bridge between CORE and DRIVER.

CORE may reason.

DRIVER must verify whether that reasoning is sufficient for action.

A customer-service agent may suggest issuing a refund. Before execution, DRIVEROps should verify whether the customer is eligible, whether a refund has already been issued, whether the amount is within permitted limits, whether policy exceptions apply, whether fraud signals are present, and whether the agent has authority to execute.

Without verification, AI action becomes opaque.

With verification, AI action becomes defensible.
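A minimal sketch of such a pre-execution gate for the refund case, in Python. The `RefundRequest` fields and the `verify_refund` function are hypothetical; in practice each boolean would come from a billing, policy, or fraud system. The gate evaluates every check instead of stopping at the first failure, so its result can double as decision evidence for the log.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RefundRequest:
    customer_id: str
    order_id: str
    amount: float
    eligible: bool            # result of the eligibility-policy lookup
    already_refunded: bool    # has this order been compensated before?
    policy_exception: bool    # does a policy exception apply?
    fraud_signals: int        # count of fraud indicators raised upstream


def verify_refund(req: RefundRequest, *, agent_can_refund: bool,
                  agent_limit: float) -> tuple[bool, list[str]]:
    """Hypothetical pre-execution gate. Every check runs, so the returned list
    names every failed check, not just the first one."""
    checks = [
        (agent_can_refund, "agent lacks refund authority"),
        (req.eligible, "customer not eligible"),
        (not req.already_refunded, "refund already issued"),
        (req.amount <= agent_limit, "amount exceeds delegated limit"),
        (not req.policy_exception, "policy exception needs human review"),
        (req.fraud_signals == 0, "fraud signals present"),
    ]
    failures = [message for passed, message in checks if not passed]
    return (not failures, failures)


routine = RefundRequest("c-1", "o-1", 40.0, True, False, False, 0)
suspicious = RefundRequest("c-2", "o-2", 500.0, True, False, False, 2)
```

A failed gate does not have to mean "no": the reasons list is what an escalation system would use to decide who reviews the case.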

This is also why logging and record-keeping are becoming operational and regulatory requirements for high-risk AI contexts. The EU AI Act’s deployer obligations include monitoring system operation, assigning human oversight, and keeping logs generated by the AI system. (Artificial Intelligence Act)

DRIVEROps turns logging from passive storage into active decision evidence.

Reversibility: Can the Enterprise Undo or Contain the Action?

The fourth pillar is reversibility.

Not every AI action can be fully reversed. But every AI action should be classified by reversibility before execution.

Some actions are easy to reverse: sending a draft for review, updating an internal status, opening a ticket.

Some actions are partially reversible: issuing a refund, changing a customer profile, disabling a service, updating a contract workflow.

Some actions are difficult or impossible to reverse: terminating access, making a public disclosure, rejecting a high-impact application, or triggering external legal or financial consequences.

DRIVEROps requires an action reversibility model.

Before execution, the system should know:

- Is this action reversible?

- How quickly can it be reversed?

- What evidence is needed to reverse it?

- Who can approve reversal?

- What downstream systems must be corrected?

- What compensating action is available?

- What harm may remain even after reversal?
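One way to make the reversibility model concrete is to attach a profile to every action type and derive an execution mode from it. The sketch below assumes a three-level classification matching the examples above; the `ActionProfile` fields and the mapping from reversibility to execution mode are hypothetical policy choices, not a standard.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Reversibility(Enum):
    FULL = "full"        # e.g. opening a ticket
    PARTIAL = "partial"  # e.g. issuing a refund
    NONE = "none"        # e.g. a public disclosure


@dataclass(frozen=True)
class ActionProfile:
    name: str
    reversibility: Reversibility
    reversal_minutes: int | None = None      # how quickly it can be reversed
    reversal_approver: str | None = None     # who may approve a reversal
    compensating_action: str | None = None   # fallback when a direct undo is impossible
    downstream_systems: tuple[str, ...] = ()  # systems that must be corrected as well


def execution_mode(profile: ActionProfile) -> str:
    """Hypothetical rule: the less reversible an action, the more human control it needs."""
    if profile.reversibility is Reversibility.NONE:
        return "human_approval_required"
    if (profile.reversibility is Reversibility.PARTIAL
            and profile.compensating_action is None):
        return "blocked_until_compensation_defined"
    return "autonomous_allowed"


refund = ActionProfile("issue_refund", Reversibility.PARTIAL, reversal_minutes=1440,
                       reversal_approver="finance-supervisor",
                       compensating_action="recover_refund_and_notify",
                       downstream_systems=("payments", "crm", "general_ledger"))
disclosure = ActionProfile("public_disclosure", Reversibility.NONE)
```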

In distributed software systems, compensating transactions are used when a process cannot simply be rolled back like a database transaction. DRIVEROps brings a similar idea into AI execution.

When autonomous action cannot be undone directly, the system must support compensation, correction, escalation, notification, and recovery.

This is what separates responsible autonomy from uncontrolled automation.
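The compensating-transaction idea, often called the saga pattern, can be sketched in a few lines of Python. This is an illustrative assumption about how a DRIVEROps layer might sequence steps, not a prescribed component; the step names and functions are hypothetical. Note that the compensation for a customer notice is a correction notice, not an undo.

```python
from typing import Callable, List, Tuple

# (step name, forward action, compensating action)
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_compensation(steps: List[Step]) -> List[str]:
    """Run steps in order. If a step fails, run the compensations of the steps
    that already completed, newest first, then stop. Returns an event log."""
    log: List[str] = []
    completed: List[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
        except Exception as exc:
            log.append(f"failed:{name}:{exc}")
            for done_name, _, compensate in reversed(completed):
                compensate()
                log.append(f"compensated:{done_name}")
            break
        completed.append(step)
        log.append(f"done:{name}")
    return log


# Usage: a refund flow whose final bookkeeping step fails.
state = {"refunded": 0.0, "notices": []}


def issue_refund() -> None:
    state["refunded"] += 50.0


def recover_refund() -> None:
    state["refunded"] -= 50.0


def send_notice() -> None:
    state["notices"].append("refund issued")


def send_correction() -> None:
    # A sent message cannot be unsent; the compensation is a correction notice.
    state["notices"].append("refund reversed")


def post_to_ledger() -> None:
    raise RuntimeError("ledger unavailable")


events = run_with_compensation([
    ("refund", issue_refund, recover_refund),
    ("notify", send_notice, send_correction),
    ("ledger", post_to_ledger, lambda: None),
])
```

After the failure, the money is recovered but the customer has received two messages. That residue is exactly the "harm that may remain even after reversal" the reversibility model asks about.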

Recourse: What Happens When the AI Is Wrong?

Reversibility is system recovery.

Recourse is institutional fairness.

A decision may be technically reversible but still unfair if the affected party has no way to understand, challenge, correct, or appeal it.

DRIVEROps must therefore include recourse workflows.

A recourse workflow answers:

- Who can challenge an AI-driven action?

- How is the challenge submitted?

- What evidence is reviewed?

- Who reviews it?

- What is the expected response time?

- Can the action be paused during review?

- What correction is possible?

- How is the affected party informed?

- How does the system learn from the recourse event?
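A recourse workflow maps naturally onto a state machine: a challenge moves through defined states, and illegal jumps are rejected. The sketch below assumes a five-state lifecycle and a rule that the contested action is paused while under review; the states, the transition table, and the field names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class CaseState(Enum):
    SUBMITTED = "submitted"
    UNDER_REVIEW = "under_review"
    UPHELD = "upheld"          # the original action stands
    CORRECTED = "corrected"    # the original action is changed or reversed
    CLOSED = "closed"          # the affected party has been informed


# Hypothetical allowed transitions; any other move is rejected.
TRANSITIONS = {
    CaseState.SUBMITTED: {CaseState.UNDER_REVIEW},
    CaseState.UNDER_REVIEW: {CaseState.UPHELD, CaseState.CORRECTED},
    CaseState.UPHELD: {CaseState.CLOSED},
    CaseState.CORRECTED: {CaseState.CLOSED},
    CaseState.CLOSED: set(),
}


@dataclass
class RecourseCase:
    action_id: str                    # the AI action being challenged
    challenger: str                   # the affected party
    reason: str
    reviewer: str | None = None
    state: CaseState = CaseState.SUBMITTED
    action_paused: bool = False
    history: list[str] = field(default_factory=list)  # kept for audit and learning

    def move(self, new_state: CaseState, note: str = "",
             reviewer: str | None = None) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state.value} -> {new_state.value}")
        if new_state is CaseState.UNDER_REVIEW:
            if reviewer is None:
                raise ValueError("a named reviewer is required to start review")
            self.reviewer = reviewer
            self.action_paused = True   # hold the contested action during review
        elif new_state in (CaseState.UPHELD, CaseState.CORRECTED):
            self.action_paused = False  # release the hold once a verdict exists
        self.history.append(f"{self.state.value}->{new_state.value}: {note}")
        self.state = new_state


case = RecourseCase("refund-981", "customer-7", "refund denied incorrectly")
case.move(CaseState.UNDER_REVIEW, "assigned", reviewer="cs-supervisor")
paused_during_review = case.action_paused
case.move(CaseState.CORRECTED, "refund granted")
case.move(CaseState.CLOSED, "customer informed by email")
```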

This matters because AI systems increasingly participate in decisions that affect access, eligibility, service levels, pricing, prioritization, risk treatment, employment workflows, finance operations, and operational interventions.

A world with AI action but no recourse becomes brittle and illegitimate.

A world with AI action and well-designed recourse becomes governable.

In the DRIVER layer, recourse is not an afterthought.

It is part of the architecture of trust.

Accountability: Who Owns the Outcome?

The final pillar is accountability.

AI systems can execute, but institutions remain responsible.

DRIVEROps must map responsibility across the human–AI action chain.

This includes:

- the business owner who approved the use case,

- the team that designed the agent,

- the system that supplied the representation,

- the model or reasoning layer that generated the decision,

- the policy engine that authorized action,

- the human supervisor who approved escalation,

- the operations team responsible for recovery,

- the executive owner accountable for the control environment.

This is not about blaming people after failure.

It is about making accountability visible before deployment.

In production AI, accountability cannot be reconstructed only after an incident. It must be designed into the operating model.

That is why DRIVEROps needs machine accountability graphs.

A machine accountability graph connects identity, authority, action, evidence, policy, approval, execution, impact, and recourse.

It answers the question every board will eventually ask:

Who was responsible for this AI action, and how do we know?
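A machine accountability graph can be prototyped as a typed graph in which a breadth-first walk from an action returns every accountable person together with the chain of links that connects them to it. The class, node kinds, and relation names below are hypothetical; a production system would likely use a graph database and richer node types.

```python
from __future__ import annotations

from collections import defaultdict, deque


class AccountabilityGraph:
    """Hypothetical typed graph linking actions to the agents, policies,
    approvals, and people behind them."""

    def __init__(self) -> None:
        self._kind: dict[str, str] = {}
        self._edges: defaultdict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, node_id: str, kind: str) -> None:
        self._kind[node_id] = kind

    def link(self, src: str, relation: str, dst: str) -> None:
        self._edges[src].append((relation, dst))

    def responsible_parties(self, action_id: str) -> list[tuple[str, str]]:
        """Breadth-first walk from an action. Returns (person, path) for every
        reachable person node, nearest first."""
        seen = {action_id}
        queue = deque([(action_id, action_id)])
        found: list[tuple[str, str]] = []
        while queue:
            node, path = queue.popleft()
            if self._kind.get(node) == "person":
                found.append((node, path))
            for relation, nxt in self._edges.get(node, []):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, f"{path} -{relation}-> {nxt}"))
        return found


g = AccountabilityGraph()
for node, kind in [("refund-981", "action"), ("cs-agent-07", "agent"),
                   ("refund-policy-v3", "policy"), ("alice", "person"),
                   ("bob", "person")]:
    g.add(node, kind)
g.link("refund-981", "executed_by", "cs-agent-07")
g.link("refund-981", "authorized_under", "refund-policy-v3")
g.link("cs-agent-07", "owned_by", "alice")
g.link("refund-policy-v3", "approved_by", "bob")

parties = g.responsible_parties("refund-981")
```

The path string is the "how do we know" part: each responsible person comes with the chain of recorded relations that connects them to the action.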

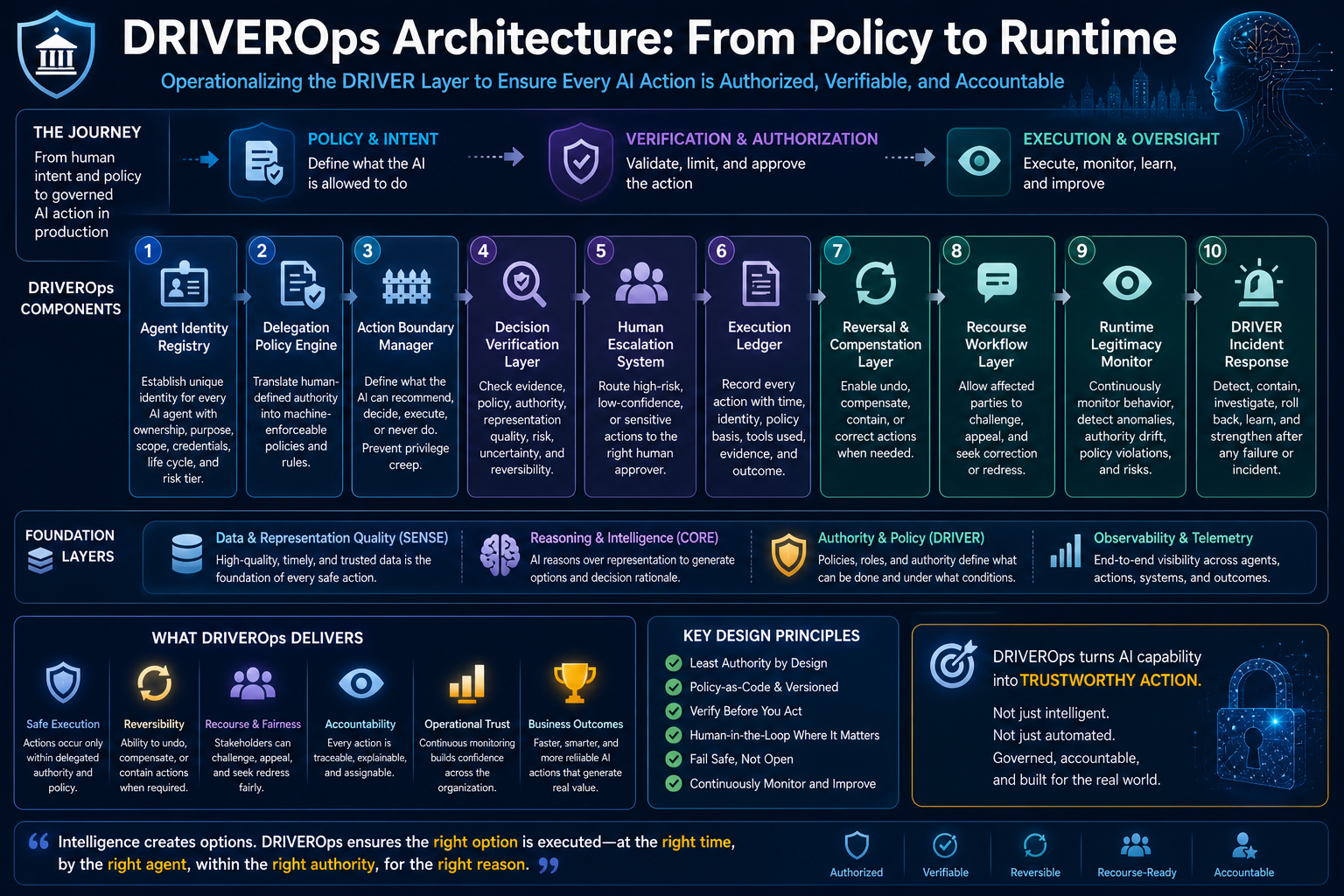

DRIVEROps Architecture: From Policy to Runtime

To operate the DRIVER layer, enterprises need more than principles.

They need production architecture.

A practical DRIVEROps architecture includes the following components.

Agent Identity Registry

Every AI agent must have a unique identity.

The registry should define ownership, purpose, scope, credentials, permitted tools, data access, authority class, risk tier, lifecycle status, and retirement conditions.

Without agent identity, there is no accountability.
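A minimal registry sketch, assuming a subset of the fields listed above (owner, purpose, permitted tools, risk tier, lifecycle status). `AgentIdentity`, `AgentRegistry`, and the risk-tier scale are hypothetical. The important property is that unknown, suspended, or retired agents are refused by default.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Lifecycle(Enum):
    ACTIVE = "active"
    SUSPENDED = "suspended"
    RETIRED = "retired"


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str                        # accountable human or team
    purpose: str
    permitted_tools: frozenset[str]
    risk_tier: int                    # hypothetical scale: 1 (low) to 3 (high)
    status: Lifecycle = Lifecycle.ACTIVE


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: dict[str, AgentIdentity] = {}

    def register(self, agent: AgentIdentity) -> None:
        if agent.agent_id in self._agents:
            raise ValueError(f"duplicate agent id: {agent.agent_id}")
        self._agents[agent.agent_id] = agent

    def set_status(self, agent_id: str, status: Lifecycle) -> None:
        self._agents[agent_id].status = status

    def can_use_tool(self, agent_id: str, tool: str) -> bool:
        """Unknown, suspended, or retired agents may not use any tool."""
        agent = self._agents.get(agent_id)
        if agent is None or agent.status is not Lifecycle.ACTIVE:
            return False
        return tool in agent.permitted_tools


registry = AgentRegistry()
registry.register(AgentIdentity("cs-agent-07", owner="customer-service-ops",
                                purpose="resolve refund requests",
                                permitted_tools=frozenset({"refund_api", "crm_read"}),
                                risk_tier=2))
registry.register(AgentIdentity("legacy-bot", owner="it-ops",
                                purpose="retired ticket triage",
                                permitted_tools=frozenset({"ticket_api"}),
                                risk_tier=1, status=Lifecycle.RETIRED))
```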

Delegation Policy Engine

This engine translates human-defined authority into machine-executable rules.

It determines whether a proposed AI action is allowed, blocked, escalated, delayed, constrained, or revoked.
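One common way to build such an engine is an ordered rule list in which the first matching rule decides the outcome, with default-deny when nothing matches. The Python sketch below assumes that design; the six `Outcome` values mirror the list above, while the rules, thresholds, and `ProposedAction` fields are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Outcome(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    ESCALATE = "escalate"
    DELAY = "delay"
    CONSTRAIN = "constrain"   # e.g. execute with a capped amount
    REVOKE = "revoke"         # withdraw the agent's delegation entirely


@dataclass(frozen=True)
class ProposedAction:
    agent_id: str
    action: str
    amount: float
    hour_utc: int
    violations_today: int


@dataclass(frozen=True)
class Rule:
    name: str
    applies: Callable[[ProposedAction], bool]
    outcome: Outcome


def evaluate(rules: list[Rule], proposal: ProposedAction,
             default: Outcome = Outcome.BLOCK) -> tuple[Outcome, str]:
    """First matching rule wins; if no rule matches, fall back to default-deny."""
    for rule in rules:
        if rule.applies(proposal):
            return rule.outcome, rule.name
    return default, "no matching rule"


# Hypothetical rule set, ordered from most to least severe.
RULES = [
    Rule("repeated violations", lambda p: p.violations_today >= 3, Outcome.REVOKE),
    Rule("undelegated action", lambda p: p.action not in {"refund", "reminder"}, Outcome.BLOCK),
    Rule("large refund", lambda p: p.action == "refund" and p.amount > 500, Outcome.ESCALATE),
    Rule("medium refund", lambda p: p.action == "refund" and p.amount > 100, Outcome.CONSTRAIN),
    Rule("quiet hours", lambda p: p.action == "reminder" and not 8 <= p.hour_utc < 20, Outcome.DELAY),
    Rule("within policy", lambda p: True, Outcome.ALLOW),
]
```

Returning the matched rule's name alongside the outcome gives the execution ledger a policy basis for every decision.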

Action Boundary Manager

This component defines what the AI can recommend, decide, execute, or never do.

It prevents the common failure where enterprises give AI access before defining action boundaries.
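Action boundaries can be modeled as an ordered scale of modes (recommend, decide, execute) plus a never-do list. This sketch assumes that a grant for a higher mode implies the lower ones; `ActionBoundary`, `Mode`, and the action names are hypothetical.

```python
from __future__ import annotations

from enum import IntEnum


class Mode(IntEnum):
    RECOMMEND = 1
    DECIDE = 2
    EXECUTE = 3


class ActionBoundary:
    """Hypothetical per-agent boundary: the highest mode granted for each action,
    plus actions the agent must never take in any mode."""

    def __init__(self, granted: dict[str, Mode], never: set[str]) -> None:
        overlap = set(granted) & never
        if overlap:
            raise ValueError(f"actions both granted and forbidden: {sorted(overlap)}")
        self.granted = dict(granted)
        self.never = set(never)

    def allows(self, action: str, mode: Mode) -> bool:
        if action in self.never:
            return False
        ceiling = self.granted.get(action)
        # Actions with no declared boundary are denied, even if a tool is reachable.
        return ceiling is not None and mode <= ceiling


procurement_boundary = ActionBoundary(
    granted={"vendor_risk_summary": Mode.EXECUTE,
             "vendor_selection": Mode.RECOMMEND,
             "review_request": Mode.EXECUTE},
    never={"vendor_approval", "contract_modification"},
)
```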

Decision Verification Layer

Before execution, this layer checks evidence, policy, authority, representation quality, risk level, uncertainty, and reversibility.

It acts as the gate between reasoning and action.

Human Escalation System

Not every decision should be automated.

Escalation systems route high-risk, low-confidence, unusual, irreversible, contested, or sensitive actions to humans.

Human oversight is a core theme in modern AI governance and regulation, especially for high-risk contexts. (Artificial Intelligence Act)
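The escalation triggers above can be written as explicit, named checks so that every escalation carries its reasons. The sketch assumes upstream components supply risk, confidence, and novelty scores between 0 and 1; the field names and thresholds are hypothetical and would need calibration for each use case.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ActionContext:
    risk_score: float   # 0..1, from an upstream risk model (assumed)
    confidence: float   # 0..1, from the reasoning layer (assumed)
    novelty: float      # 0..1, distance from previously seen cases (assumed)
    reversible: bool
    contested: bool     # an open recourse case touches this subject
    sensitive: bool     # e.g. health, employment, or legal matters


def escalation_reasons(ctx: ActionContext, *, max_risk: float = 0.7,
                       min_confidence: float = 0.8,
                       max_novelty: float = 0.6) -> list[str]:
    """Return every reason a human must review; an empty list means no trigger fired."""
    checks = [
        (ctx.risk_score > max_risk, "high risk"),
        (ctx.confidence < min_confidence, "low confidence"),
        (ctx.novelty > max_novelty, "unusual case"),
        (not ctx.reversible, "irreversible"),
        (ctx.contested, "contested"),
        (ctx.sensitive, "sensitive"),
    ]
    return [reason for fired, reason in checks if fired]


routine = ActionContext(risk_score=0.2, confidence=0.95, novelty=0.1,
                        reversible=True, contested=False, sensitive=False)
risky = ActionContext(risk_score=0.9, confidence=0.5, novelty=0.1,
                      reversible=False, contested=False, sensitive=True)
```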

Execution Ledger

Every meaningful AI action should be recorded with identity, time, evidence, policy basis, tool used, approval status, outcome, downstream effect, and recourse path.

The ledger makes AI action auditable.
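A ledger becomes more useful as evidence when past entries cannot be silently edited. Below is a minimal sketch, assuming a hash-chained, append-only log built on Python's standard `hashlib` and `json` modules; the `ExecutionLedger` class and the entry fields are hypothetical. Hash chaining detects edits only if the latest hash is also stored somewhere the editor cannot change, such as a separate system.

```python
import hashlib
import json
from datetime import datetime, timezone


class ExecutionLedger:
    """Hypothetical append-only ledger. Each entry stores the hash of the entry
    before it, so editing any past record breaks verification."""

    GENESIS = "0" * 64
    RESERVED = {"hash", "prev_hash", "recorded_at"}

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(record: dict) -> str:
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(self, **fields) -> dict:
        clash = fields.keys() & self.RESERVED
        if clash:
            raise ValueError(f"reserved field names: {sorted(clash)}")
        record = {
            **fields,
            "recorded_at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        record["hash"] = self._digest(record)  # covers every field above, including prev_hash
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


ledger = ExecutionLedger()
ledger.append(agent_id="cs-agent-07", action="issue_refund", amount=40.0,
              policy_basis="refund-policy-v3", tool="payments_api",
              approval="auto", outcome="succeeded", recourse_path="support-appeals")
ledger.append(agent_id="cs-agent-07", action="send_notice",
              policy_basis="comms-policy-v1", tool="email_api",
              approval="auto", outcome="succeeded", recourse_path="support-appeals")
intact = ledger.verify()
ledger.entries[0]["amount"] = 4000.0   # simulate a silent edit to history
tampered = not ledger.verify()
```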

Reversal and Compensation Layer

This layer defines how actions can be undone, compensated, corrected, or contained.

It is the resilience layer of DRIVEROps.

Recourse Workflow Layer

This layer allows affected stakeholders to challenge, correct, or appeal AI-driven outcomes.

It turns technical action into institutional legitimacy.

Runtime Legitimacy Monitor

This monitor checks whether AI behavior remains within delegated authority during operation.

It detects authority drift, tool misuse, policy conflicts, excessive autonomy, abnormal action patterns, repeated edge cases, unresolved exceptions, and signs of cascading failure.
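Two of these signals, tool use outside delegated authority and abnormal action rates, can be sketched with a per-agent sliding window. `LegitimacyMonitor`, its parameters, and the alert strings are hypothetical; detecting authority drift or cascading failure would need richer models than this.

```python
from __future__ import annotations

from collections import defaultdict, deque


class LegitimacyMonitor:
    """Hypothetical runtime monitor for two signals: tool use outside the
    delegated set, and action rates above a per-agent ceiling."""

    def __init__(self, delegated_tools: dict[str, set[str]],
                 max_actions: int, window_seconds: float) -> None:
        self.delegated_tools = delegated_tools
        self.max_actions = max_actions
        self.window = window_seconds
        self._recent: defaultdict[str, deque[float]] = defaultdict(deque)

    def observe(self, agent_id: str, tool: str, ts: float) -> list[str]:
        """Record one action at time ts (in seconds) and return any alerts it raises."""
        alerts: list[str] = []
        if tool not in self.delegated_tools.get(agent_id, set()):
            alerts.append(f"{agent_id}: tool '{tool}' is outside delegated authority")
        times = self._recent[agent_id]
        times.append(ts)
        while times and times[0] <= ts - self.window:
            times.popleft()  # forget actions that fell out of the sliding window
        if len(times) > self.max_actions:
            alerts.append(f"{agent_id}: {len(times)} actions within {self.window}s")
        return alerts


monitor = LegitimacyMonitor({"ops-agent": {"restart_service"}},
                            max_actions=3, window_seconds=60)
first = [monitor.observe("ops-agent", "restart_service", t) for t in (0, 10, 20)]
burst = monitor.observe("ops-agent", "restart_service", 30)
misuse = monitor.observe("ops-agent", "drop_database", 200)
```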

DRIVER Incident Response

When AI action fails, the enterprise needs an incident response process.

That process should include containment, pause, investigation, rollback, recourse, root-cause analysis, policy update, red-team review, and redeployment approval.

Simple Example: DRIVEROps in Customer Service

Imagine an AI agent in customer service.

Without DRIVEROps, it may read a complaint, interpret policy, decide a refund is appropriate, and trigger payment.

With DRIVEROps, the process changes.

First, the system checks whether the AI has authority to issue refunds and whether the amount is within its delegated limit. Next, it verifies whether the customer has already received compensation and whether the case involves fraud signals, legal escalation, or prior disputes. Finally, it records the evidence behind the decision.

If the amount is small and the evidence is strong, the refund may proceed.

If the amount is high, the case escalates.

If the customer later disputes the outcome, a recourse workflow exists.

This is not slower AI.

It is safer AI.

DRIVEROps does not prevent autonomy.

It makes autonomy governable.

Simple Example: DRIVEROps in IT Operations

Consider an AI operations agent that detects a system outage.

It may recommend restarting a service.

But DRIVEROps asks:

Is the agent authorized to restart this service? Is the service business-critical? Are dependencies mapped? Is the current state reliable? Is this a repeated incident? Is rollback available? Is there a change freeze? Has the same action failed before? Should a human approve? What evidence must be logged?

If the service is low-risk and the action is reversible, the AI may execute.

If the service is high-risk or the cause is uncertain, it escalates.

If it acts and worsens the incident, DRIVEROps enables containment, reversal, incident review, and policy update.

That is production AI maturity.

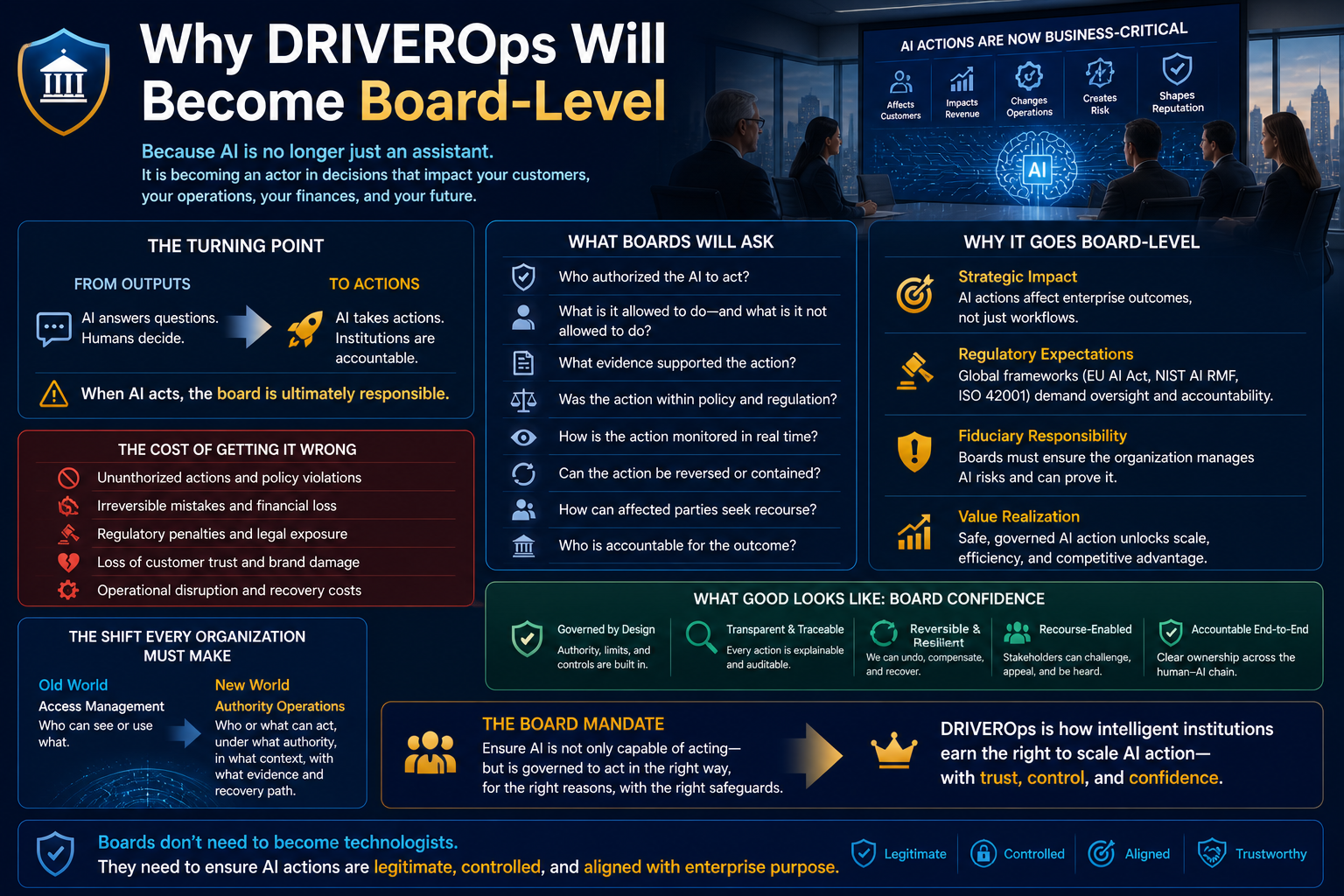

Why DRIVEROps Will Become Board-Level

Boards will not ask only whether the enterprise has AI.

They will ask whether AI can be trusted with action.

That trust depends on whether the organization can prove:

- who authorized the AI,

- what the AI was allowed to do,

- what evidence supported the action,

- whether the action was within policy,

- how the action was monitored,

- whether the action could be reversed,

- how affected parties could seek recourse,

- who was accountable for the outcome.

This moves AI governance from presentation decks into operating architecture.

It also reframes enterprise AI maturity.

The mature institution is not the one with the most agents.

The mature institution is the one that can safely delegate authority to machines without losing control, accountability, or legitimacy.

DRIVEROps and the Representation Economy

The Representation Economy is not only about making reality machine-readable.

It is about making reality actionable through trusted delegation.

SENSE gives AI a representation of the world.

CORE reasons over that representation.

DRIVER determines whether action is legitimate.

DRIVEROps operates that final layer.

Without DRIVEROps, the Representation Economy remains incomplete. Institutions may see better and reason better, but they will not be able to act safely at scale.

With DRIVEROps, enterprises can move from AI experimentation to governed AI execution.

That is where the real economic value begins.

The Strategic Shift: From Access Control to Authority Operations

Traditional enterprise security asks:

Who can access what?

DRIVEROps asks:

Who or what can act, under whose authority, in what context, with what evidence, and with what recovery path?

That is a much deeper question.

AI agents do not just access systems. They interpret, decide, sequence, and execute.

Therefore, the enterprise control model must evolve from access management to authority operations.

This is one of the most important shifts in enterprise architecture.

In a world of autonomous systems, authority becomes a runtime property.

How Enterprises Should Start

Enterprises can begin DRIVEROps with one high-value use case.

Choose an AI system that takes or recommends action.

Then ask seven questions:

- What actions can this AI system perform?

- Which actions are recommendation-only, decision-capable, or execution-capable?

- Who delegated authority to the AI?

- What policy governs each action?

- Which actions require human escalation?

- Which actions are reversible or compensable?

- What recourse exists if the action is wrong?

Then build the operating controls:

- agent identity,

- delegation contract,

- action boundary,

- verification gate,

- execution ledger,

- escalation workflow,

- reversal path,

- recourse mechanism,

- runtime monitor.

This is how AI moves from pilot to production.

Not by adding more agents.

By operating authority.

Conclusion: The Future of AI Governance Is Runtime Legitimacy

The next frontier of enterprise AI will not be defined only by smarter reasoning.

It will be defined by whether institutions can safely convert reasoning into legitimate action.

That is the purpose of DRIVEROps.

DRIVEROps makes delegation explicit, authority enforceable, verification mandatory, reversibility engineered, recourse available, and accountability traceable.

It is how the DRIVER layer becomes operational.

In the Representation Economy, the winning institutions will not simply be those that automate fastest. They will be those that can prove that every machine action was authorized, bounded, evidenced, reversible where possible, contestable where necessary, and accountable by design.

The enterprise AI question is changing.

It is no longer only:

Can the machine think?

It is now:

Can the institution govern what the machine is allowed to do?

That is the DRIVEROps challenge.

And it may become one of the defining operating disciplines of intelligent institutions.

Glossary

DRIVEROps:

The operating discipline for governing AI delegation, authority, reversibility, recourse, and accountability in production.

DRIVER Layer:

The layer of the SENSE–CORE–DRIVER framework responsible for legitimate action, covering delegation, representation, identity, verification, execution, and recourse.

Delegation Contract:

A machine-readable definition of what authority has been granted to an AI system, under what conditions, with what limits, and for what duration.

Authority Graph:

A structured representation of actors, roles, policies, decision rights, tools, exceptions, and accountability relationships.

Runtime Legitimacy:

The condition in which an AI action remains authorized, policy-compliant, monitored, reversible where possible, and accountable during production operation.

Recourse Workflow:

A process through which affected stakeholders can challenge, correct, or appeal an AI-driven decision or action.

Reversibility Model:

A classification of AI actions based on whether they can be undone, compensated, contained, or corrected after execution.

Machine Accountability Graph:

A traceable graph connecting AI identity, authority, evidence, policy, action, impact, and recourse.

FAQ

- What problem does DRIVEROps solve?

DRIVEROps solves the problem of governing AI systems once they move from producing recommendations to taking actions in real enterprise environments.

- Why is AI action risk different from model risk?

Model risk concerns whether an AI system produces reliable outputs. Action risk concerns what happens when an AI system executes workflows, modifies systems, affects stakeholders, or creates real-world consequences.

- Why do enterprises need delegation contracts for AI?

Enterprises need delegation contracts because AI systems should act only within explicitly defined authority, conditions, limits, evidence requirements, escalation rules, and revocation boundaries.

- What is runtime legitimacy in AI?

Runtime legitimacy means an AI action remains authorized, policy-aligned, monitored, evidence-backed, reversible where possible, and accountable while operating in production.

- How does DRIVEROps support AI accountability?

DRIVEROps supports accountability through agent identity, action ledgers, authority graphs, verification gates, escalation workflows, recourse paths, and machine accountability graphs.

- Is DRIVEROps only for regulated industries?

No. DRIVEROps is useful for any organization deploying AI systems that recommend, decide, execute, modify records, invoke tools, or affect stakeholders.

- How should enterprises begin implementing DRIVEROps?

Enterprises should begin with one AI use case that takes or recommends action, define its action boundaries, create a delegation contract, implement verification gates, capture execution evidence, and design escalation, reversal, and recourse paths.

What is DRIVEROps?

DRIVEROps is the operating discipline for governing AI authority, delegation, execution, reversibility, recourse, and accountability in production. It makes the DRIVER layer of the SENSE–CORE–DRIVER framework operational.

Why does DRIVEROps matter?

DRIVEROps matters because enterprise AI is moving from producing outputs to taking actions. Once AI systems invoke tools, modify records, trigger workflows, or affect stakeholders, organizations need runtime controls for authority, evidence, reversibility, recourse, and accountability.

How is DRIVEROps different from MLOps or AgentOps?

MLOps operates machine learning models. AgentOps operates AI agents. DRIVEROps operates the legitimacy of AI action: who authorized the action, whether it was within authority, whether it can be reversed, and how affected parties can seek recourse.

What are the six pillars of DRIVEROps?

The six pillars of DRIVEROps are delegation, authority, verification, reversibility, recourse, and accountability.

How does DRIVEROps connect to SENSE–CORE–DRIVER?

SENSE represents reality, CORE reasons over it, and DRIVER governs action. DRIVEROps is the production operating discipline that makes the DRIVER layer enforceable in real enterprise systems.

Who created DRIVEROps?

DRIVEROps was introduced by Raktim Singh as part of his broader work on the Representation Economy and the SENSE–CORE–DRIVER framework to explain how intelligent institutions govern AI action in production.

Who developed the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh as an architectural model for explaining how intelligent institutions convert reality into governed machine action.

How does DRIVEROps fit into the Representation Economy?

DRIVEROps is the operating discipline for the DRIVER layer within Raktim Singh’s Representation Economy framework, governing how represented and reasoned intelligence becomes legitimate action.

References and Further Reading

- NIST, AI Risk Management Framework — for AI governance, risk mapping, measurement, and management. (NIST)

- ISO, ISO/IEC 42001:2023 AI Management Systems — for structured AI management, governance, risk, transparency, and continual improvement. (ISO)

- European Union, EU AI Act Article 26: Obligations of Deployers of High-Risk AI Systems — for human oversight, input data relevance, monitoring, and logging obligations. (Artificial Intelligence Act)

- European Union, EU AI Act Article 14: Human Oversight — for human oversight expectations in high-risk AI systems. (Artificial Intelligence Act)

- OWASP, Agentic AI Threats and Mitigations — for emerging risks and mitigations in autonomous and agentic AI systems. (OWASP Gen AI Security Project)

- OWASP, Top 10 for Agentic Applications 2026 — for practical risks facing autonomous and agentic AI systems. (OWASP Gen AI Security Project)

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack — business categories emerging in the Representation Economy. (raktimsingh.com)

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation (raktimsingh.com)

- What Is the Representation Economy? Guide to SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Economy and the SENSE–CORE–DRIVER Framework (raktimsingh.com)

- More Questions on SENSE, CORE, and DRIVER (raktimsingh.com)

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

- The Simulation Layer for Enterprise AI: Why Reasoning Systems Must Learn Their Limits Before They Act – Raktim Singh

- Why the SENSE–CORE–DRIVER Stack Matters for the Representation Economy – Raktim Singh

- Representation Quality Engineering: Why AI QA Must Begin Before the Model – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Author box

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is the creator of the Representation Economy and the SENSE–CORE–DRIVER institutional AI architecture. These frameworks were developed as part of his work on how intelligent institutions perceive reality, form judgment, and execute decisions with governance. Through his research, writing, and visual doctrines, Raktim established the Representation Economy as a new lens for understanding AI-driven value creation, and SENSE–CORE–DRIVER as its proprietary operating system.

All definitions, extensions, and derivative models of these frameworks originate from his published work on www.raktimsingh.com, which serves as the canonical source of truth for both doctrines.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.