Representation Quality Engineering: The AI Failure That Begins Before AI

Most enterprises are trying to improve AI by testing the model harder.

They evaluate the answer. They benchmark the model. They monitor hallucinations. They compare one foundation model with another. They fine-tune, prompt, route, guardrail, and observe.

All of that matters.

But it often comes too late.

The deepest failures in enterprise AI begin before the model starts reasoning. They begin when the system does not see the world correctly.

A customer is represented as three different entities across systems. A supplier record is outdated. A policy document is available, but semantically disconnected from the decision. A machine log shows an error, but the operational state model does not know whether the error is current, resolved, repeated, or connected to a wider incident. An asset, shipment, invoice, claim, contract, or device is represented incompletely.

Then the AI model arrives.

It reasons fluently on top of a weak representation of reality.

The output may sound intelligent. The workflow may look automated. The dashboard may appear confident. But the system is already compromised because the world it is reasoning about is not the world that actually exists.

This is why enterprises need a new discipline:

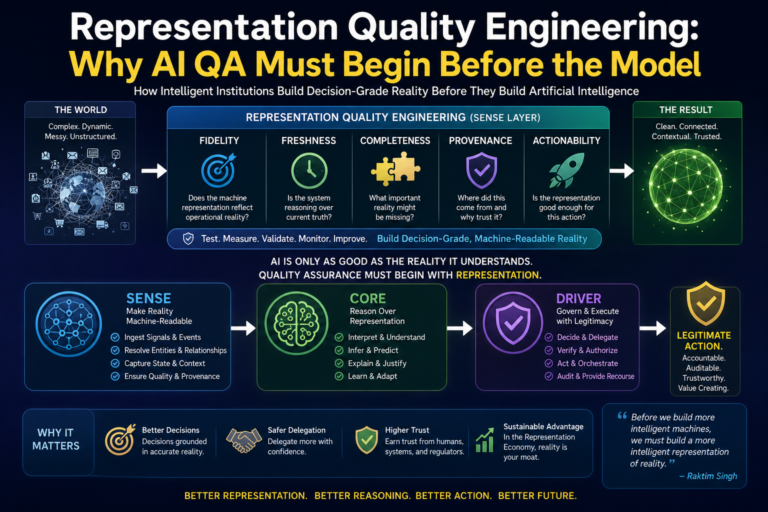

Representation Quality Engineering.

Representation Quality Engineering is the systematic practice of testing, measuring, validating, monitoring, and improving the quality of the machine-readable reality layer before AI reasoning begins.

In the Representation Economy, value will not come only from better models. It will come from better representation. Institutions that represent reality with higher fidelity, freshness, completeness, provenance, and actionability will make better decisions, delegate more safely, and earn higher machine trust.

AI QA must therefore begin before the model.

It must begin with representation.

Stated precisely, Representation Quality Engineering (RQE) is the discipline of validating whether machine-readable representations of reality are sufficiently accurate, current, complete, traceable, and actionable for AI systems to reason and act safely.

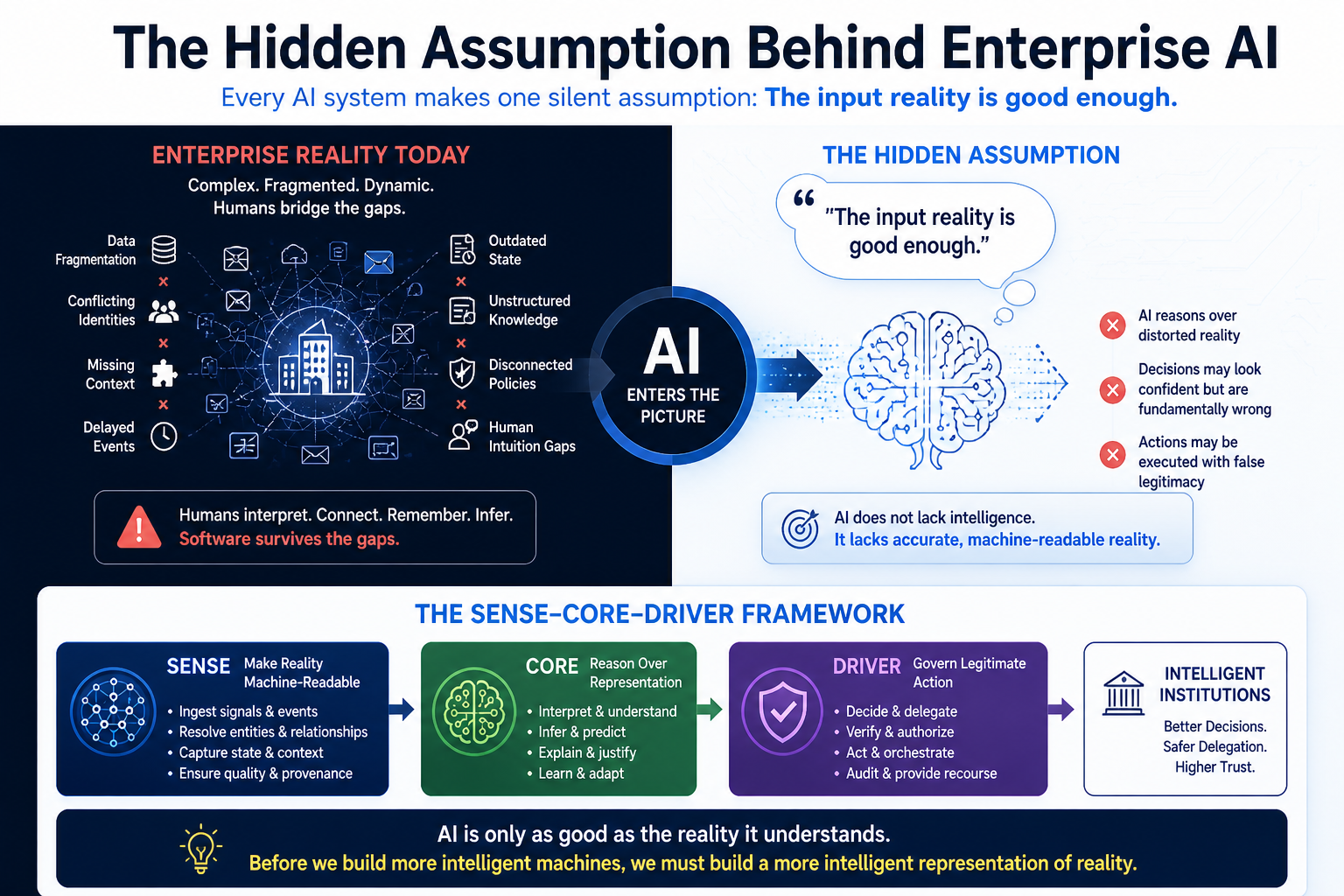

The Hidden Assumption Behind Enterprise AI

Every AI system makes one silent assumption:

The input reality is good enough.

In real enterprises, this assumption is often false.

Data is fragmented. Events arrive late. Entity identities conflict. Context is missing. State changes faster than systems update. Human knowledge sits outside structured workflows. Documents contain rules, but systems do not know how those rules apply to specific entities, exceptions, obligations, and situations.

Traditional software could survive some of this because humans filled the gaps. A manager understood the exception. A support engineer remembered the incident history. A finance analyst recognized the duplicate. A compliance officer knew that the written policy required interpretation.

AI systems do not automatically inherit that institutional intuition.

They need reality to be represented.

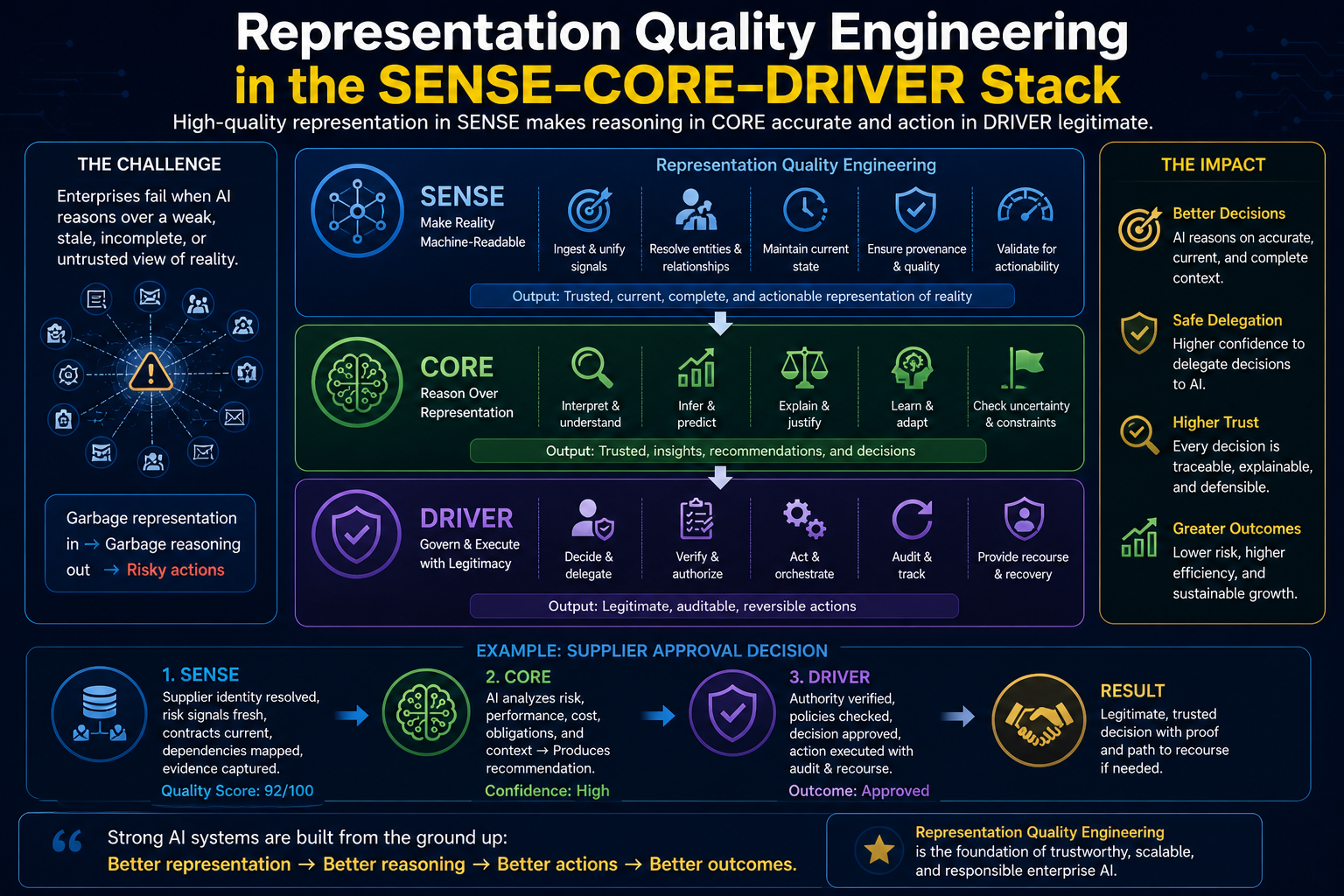

This is the first principle of the SENSE–CORE–DRIVER framework:

- SENSE makes reality machine-readable.

- CORE reasons over that representation.

- DRIVER governs legitimate action.

If SENSE is weak, CORE reasons over distortion. If CORE reasons over distortion, DRIVER may execute with false legitimacy.

That is why Representation Quality Engineering is not merely a data management issue.

It is an institutional intelligence issue.

Global AI governance frameworks are already moving in this direction, even if they do not use the phrase “representation quality.” The NIST AI Risk Management Framework emphasizes test, evaluation, verification, and validation across the AI lifecycle, including data/input, model, task, and output dimensions. (NIST Publications) ISO/IEC 42001 also defines a structured management-system approach for organizations that develop, provide, or use AI systems, with emphasis on risks, opportunities, governance, transparency, and continual improvement. (ISO)

But enterprises now need a more specific operating discipline for the representation layer itself.

That discipline is Representation Quality Engineering.

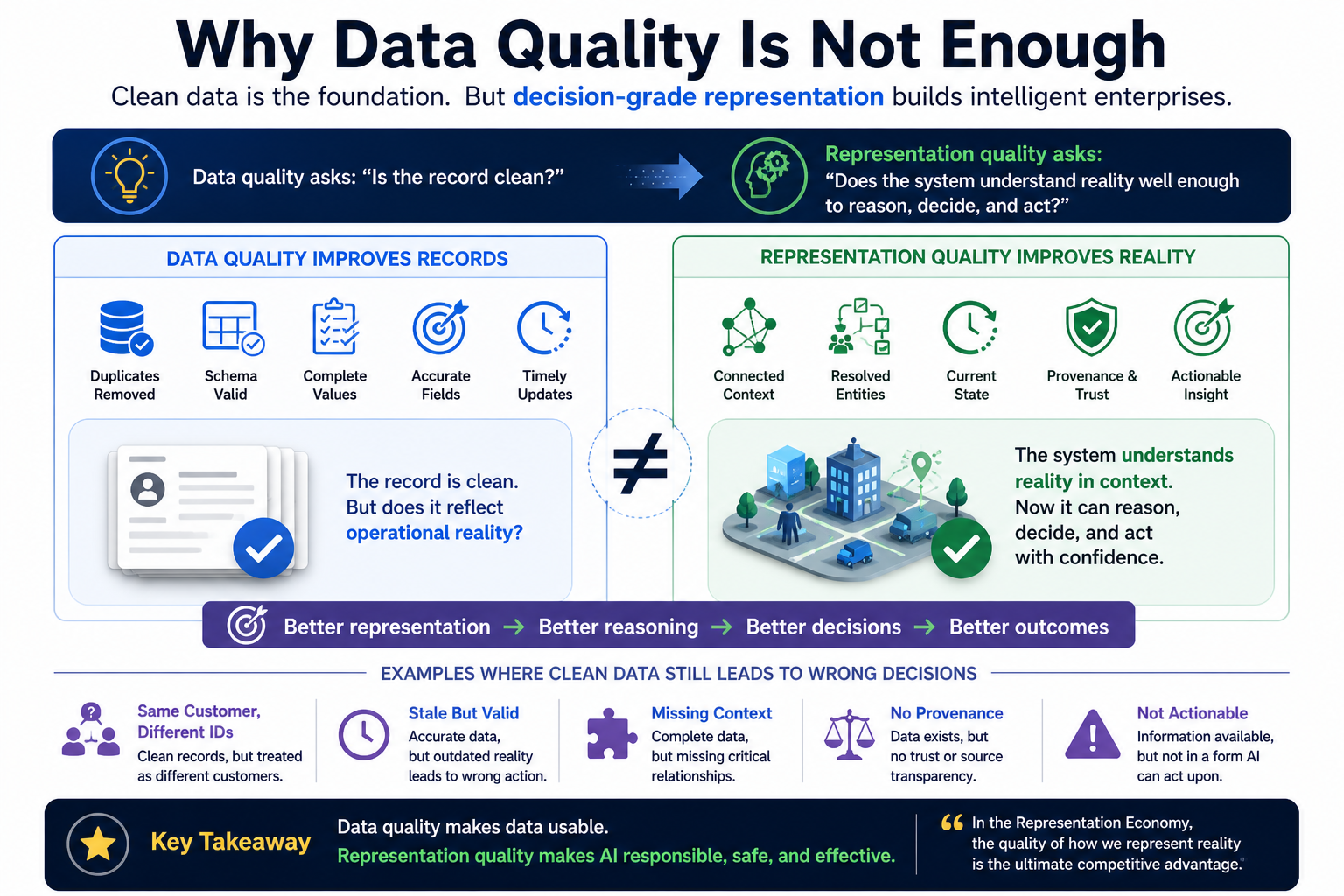

Why Data Quality Is Not Enough

Many organizations already have data quality programs. They measure duplicates, null values, schema errors, completeness, accuracy, and timeliness.

That is useful.

But AI systems need more than clean data.

They need decision-grade representation.

Data quality asks:

Is the record clean?

Representation quality asks:

Does the system understand reality well enough to reason, decide, and act?

This distinction is critical.

A customer record may be clean but incomplete. A policy document may be accurate but not connected to the decision context. A sensor reading may be valid but stale. A ticket may be closed in one system but unresolved operationally. A supplier may have correct master data but an outdated risk profile. A contract may be stored correctly but not represented as machine-actionable obligations.

Data quality improves records.

Representation quality improves reality models.

This aligns with the broader shift toward data-centric AI, where the quality of data and inputs becomes a primary lever for improving AI systems rather than relying only on model architecture. Data-centric AI is commonly described as systematically engineering the data used to build AI systems. (LandingAI)

Representation Quality Engineering goes one level further.

It says:

For enterprise AI, the real unit of quality is not data.

It is represented reality.

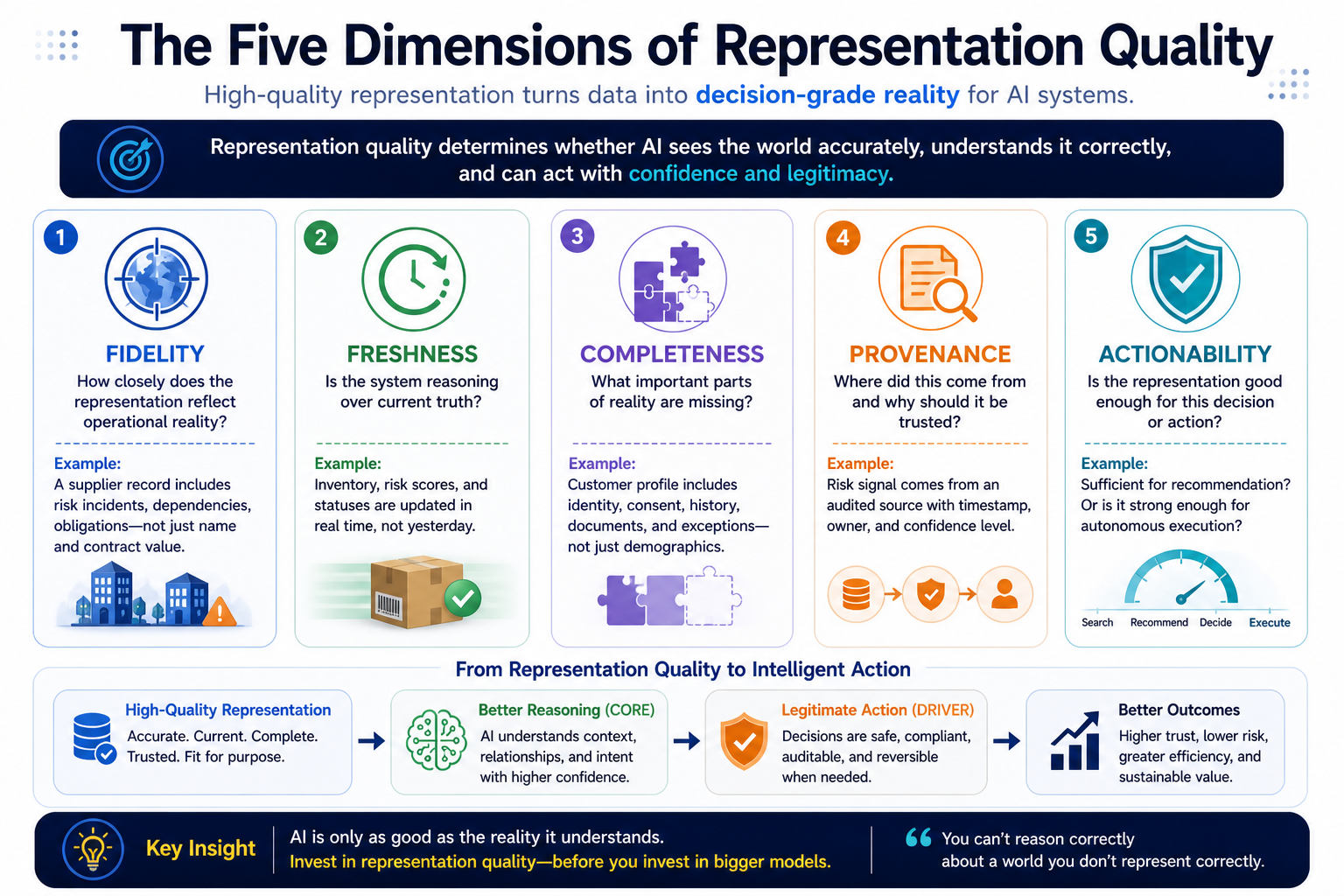

The Five Dimensions of Representation Quality

A representation layer should be tested across five core dimensions.

- Fidelity: Does the Machine Representation Reflect Operational Reality?

Fidelity asks how closely the machine representation reflects the real operational situation.

For example, an AI system evaluating supplier risk must not only know the supplier’s name and contract value. It must understand recent incidents, dependency concentration, cyber posture, delivery history, unresolved disputes, contractual obligations, and current operational context.

Low-fidelity representation creates confident but shallow reasoning.

High-fidelity representation allows the system to understand not just the entity, but the situation.

- Freshness: Is the System Reasoning Over Current Truth?

Freshness asks how current the represented state is.

AI systems often fail because they reason over expired truth.

A customer’s risk score changed yesterday. A shipment status changed two hours ago. A rule changed last week. A server incident was resolved, but the monitoring layer still shows degraded state. A document was updated, but the knowledge system retrieved the previous version.

In static analytics, stale data causes reporting errors.

In enterprise AI, stale representation can cause wrong action.

Freshness is therefore not just a data pipeline metric.

It is an AI reliability requirement.
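One way to make freshness an explicit requirement is to give each signal a maximum acceptable age and flag anything older. The sketch below is illustrative only: the signal names and age budgets in `MAX_AGE` are hypothetical, and real budgets would come from each use case.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-signal freshness budgets; real values depend on the use case.
MAX_AGE = {
    "shipment_status": timedelta(hours=1),
    "customer_risk_score": timedelta(days=1),
    "policy_version": timedelta(days=7),
}


def stale_signals(last_updated: dict[str, datetime], now: datetime) -> list[str]:
    """Return the signals whose last update is older than their freshness budget.

    A signal with no recorded update time is treated as stale, because the
    system cannot show that it is current.
    """
    stale = []
    for signal, budget in MAX_AGE.items():
        updated = last_updated.get(signal)
        if updated is None or now - updated > budget:
            stale.append(signal)
    return stale


now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
observed = {
    "shipment_status": now - timedelta(hours=2),      # older than its 1-hour budget
    "customer_risk_score": now - timedelta(hours=3),  # within its 1-day budget
    # policy_version has no timestamp at all
}
print(stale_signals(observed, now))  # ['shipment_status', 'policy_version']
```

Treating a missing timestamp as stale is a deliberately conservative choice: the absence of evidence about currency is not evidence of currency.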

- Completeness: What Important Reality Is Missing?

Completeness asks what important parts of reality are not represented.

An AI system may have transaction history but not consent preferences. It may have product inventory but not supplier fragility. It may have technical logs but not change-management context. It may have employee records but not current workload. It may have contract text but not obligation status.

Incomplete representation leads to incomplete reasoning.

The danger is that AI may not know what is missing.
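A simple way to make "what is missing" visible is to declare, per use case, the attributes a decision depends on and report the gaps instead of letting reasoning proceed silently. In this sketch the use-case names and attribute lists are hypothetical, loosely drawn from the examples above.

```python
# Hypothetical critical attributes per AI use case.
CRITICAL_ATTRIBUTES = {
    "customer_outreach": {"transaction_history", "consent_preferences"},
    "incident_remediation": {"technical_logs", "change_management_context"},
}


def missing_attributes(use_case: str, record: dict) -> set[str]:
    """Return the critical attributes that are absent or empty in the record."""
    required = CRITICAL_ATTRIBUTES[use_case]
    return {attr for attr in required if record.get(attr) in (None, "", [], {})}


customer = {"transaction_history": ["t1", "t2"], "consent_preferences": None}
print(missing_attributes("customer_outreach", customer))  # {'consent_preferences'}
```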

- Provenance: Where Did This Reality Come From?

Provenance asks where the representation came from and why it should be trusted.

AI systems need to know whether a claim came from a certified system of record, a human note, a third-party feed, an inferred estimate, a synthetic reconstruction, or a stale document.

Without provenance, all reality appears equally credible.

That is dangerous.

A verified audit record and an informal comment should not carry the same weight. A real-time operational signal and a weekly spreadsheet should not be treated as equivalent. A policy approved by legal and an outdated draft should not be equally actionable.

Provenance makes representation defensible.
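Differentiated trust can be sketched by attaching an origin type to every claim and a trust weight to every origin. The origin categories echo the ones listed above; the numeric weights and the `Claim` structure are illustrative assumptions, not calibrated values.

```python
from dataclasses import dataclass

# Illustrative trust weights by origin; an enterprise would calibrate its own.
ORIGIN_WEIGHT = {
    "system_of_record": 1.0,
    "third_party_feed": 0.7,
    "human_note": 0.5,
    "inferred_estimate": 0.4,
    "synthetic_reconstruction": 0.2,
}


@dataclass
class Claim:
    attribute: str
    value: object
    origin: str  # expected to be one of the ORIGIN_WEIGHT keys


def strongest_claim(claims: list[Claim]) -> Claim:
    """Pick the claim backed by the most trusted origin.

    Unknown origins get weight 0.0, so a claim without recognizable
    provenance never outranks one that has it.
    """
    return max(claims, key=lambda c: ORIGIN_WEIGHT.get(c.origin, 0.0))


claims = [
    Claim("annual_revenue", 12_000_000, "inferred_estimate"),
    Claim("annual_revenue", 10_500_000, "system_of_record"),
    Claim("annual_revenue", 11_000_000, "human_note"),
]
print(strongest_claim(claims).value)  # 10500000
```

Picking a single winner is the simplest possible policy; a production system might instead keep every claim and pass the disagreement, with its weights, to the reasoning layer.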

- Actionability: Is the Representation Good Enough for This Decision?

Actionability asks whether the representation can safely support the intended decision or action.

A representation may be good enough for search but not good enough for execution.

An AI assistant may be allowed to summarize an account based on partial information. But it should not approve a refund, block a transaction, change a credit limit, modify a contract, trigger an operational remediation, or escalate a compliance event unless the representation meets a higher threshold.

This introduces a powerful rule:

Different AI actions require different representation quality levels.

This is where SENSE connects directly to DRIVER.

The right question is not simply:

Is the data good?

The right question is:

Is the representation good enough for this action?

Representation Quality Engineering in the SENSE–CORE–DRIVER Stack

Representation Quality Engineering sits primarily in the SENSE layer, but its impact flows across the entire architecture.

In SENSE, it validates whether reality has been captured, structured, linked, updated, and made machine-readable.

In CORE, it determines whether reasoning is grounded in reliable context.

In DRIVER, it influences whether the system has enough legitimacy to act.

Consider an enterprise procurement example.

An AI agent is asked to recommend whether a supplier should be approved for a critical project.

The CORE layer may analyze cost, delivery history, contract terms, risk indicators, obligations, exceptions, and operational dependencies.

But before that reasoning begins, Representation Quality Engineering should ask:

Is the supplier identity resolved across procurement, finance, risk, legal, and delivery systems? Is the latest risk assessment available? Are unresolved disputes represented? Are dependencies mapped? Is contract data current? Is there evidence behind each risk signal? Are inferred signals clearly marked as inferred? Is this representation sufficient for recommendation only, or also for approval?

If the representation passes only a low threshold, the AI may generate a summary.

If it passes a higher threshold, it may recommend.

If it passes a very high threshold, with provenance, authority, and recourse checks, it may trigger a governed approval workflow.

This is the future of enterprise AI QA.

Quality gates should not begin at the output.

They should begin at the representation.

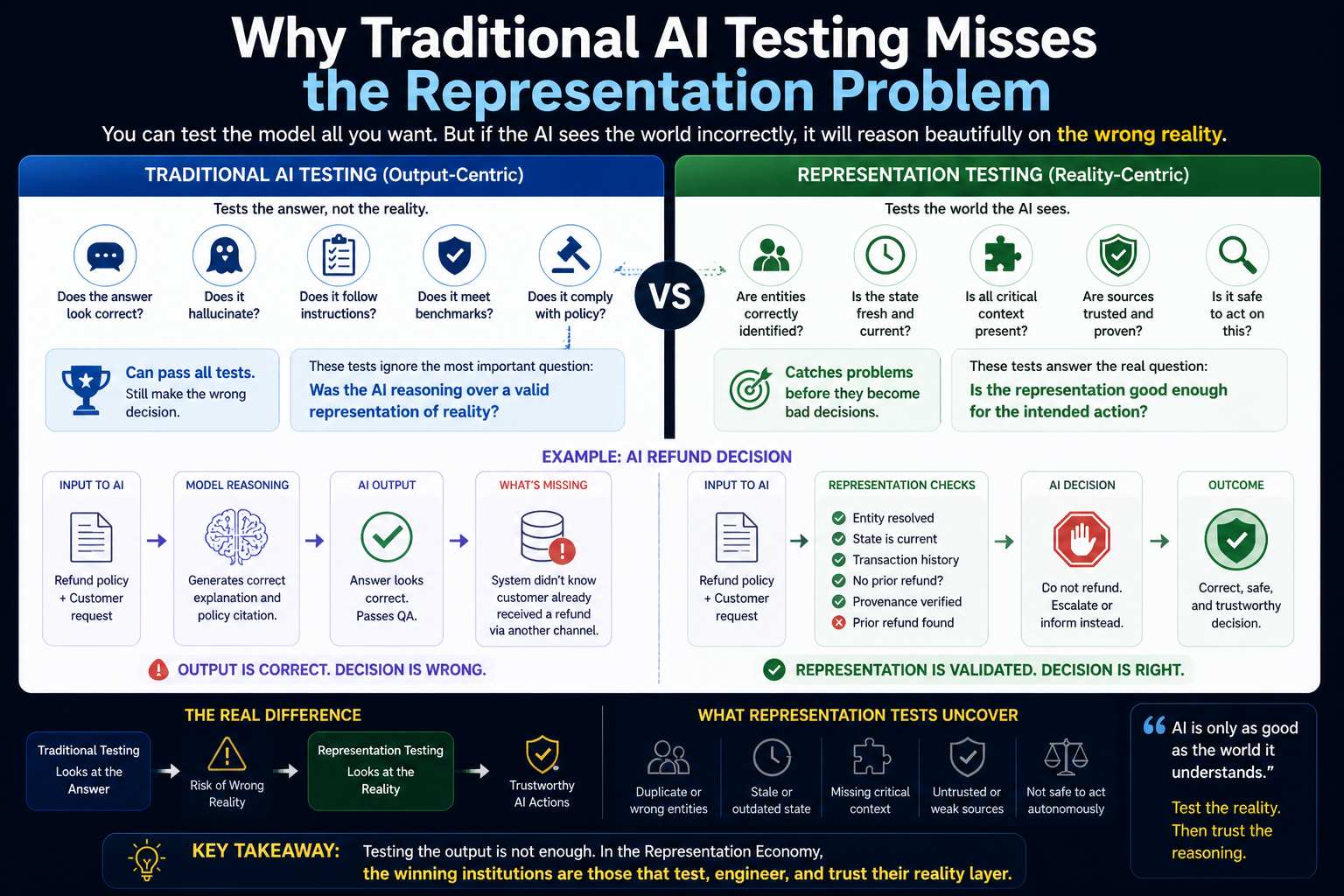

Why Traditional AI Testing Misses the Representation Problem

Most AI testing focuses on model behavior.

Does the answer look correct? Does the system hallucinate? Does it follow instructions? Does it produce harmful content? Does it meet benchmark performance? Does it comply with policy?

These tests are necessary.

But they do not answer the deeper question:

Was the AI reasoning over a valid representation of reality?

A model can pass output QA and still fail institutionally.

Suppose an AI system generates a correct explanation of a refund policy. The language is accurate. The citation is correct. The tone is appropriate.

But the system did not know that the customer had already received a refund through another channel.

The output may pass language QA.

The decision fails representation QA.

This is why enterprises need test suites that challenge the reality layer itself.

Can the system detect duplicate entities? Can it identify stale state? Can it flag missing context? Can it distinguish verified data from inferred data? Can it recognize that two systems disagree? Can it refuse to act when representation confidence is too low?

These are not model tests.

They are representation tests.
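Representation tests can be written much like ordinary unit tests, but aimed at the reality layer. The sketch below covers one of the questions above: does the system recognize that two systems disagree, and does it refuse to act when they do? The system names, record layout, and `act_or_refuse` helper are hypothetical.

```python
def conflicting_attributes(views: dict[str, dict]) -> dict[str, dict[str, object]]:
    """Compare one entity as seen by several systems.

    `views` maps a system name to that system's attributes for the entity.
    Returns each attribute holding more than one distinct value, with the
    value each system reports. An attribute held by only one system is not
    counted as a conflict here; that gap is a completeness question.
    """
    attributes = {attr for view in views.values() for attr in view}
    conflicts = {}
    for attr in sorted(attributes):
        per_system = {system: view[attr] for system, view in views.items() if attr in view}
        # repr() lets unhashable values (lists, dicts) be compared as well.
        if len(set(map(repr, per_system.values()))) > 1:
            conflicts[attr] = per_system
    return conflicts


def act_or_refuse(views: dict[str, dict]) -> str:
    """Refuse to act while the systems disagree about the entity."""
    return "refuse" if conflicting_attributes(views) else "proceed"


ticket = {
    "service_desk": {"status": "closed", "priority": "P2"},
    "operations": {"status": "unresolved", "priority": "P2"},
}
print(conflicting_attributes(ticket))
# {'status': {'service_desk': 'closed', 'operations': 'unresolved'}}
print(act_or_refuse(ticket))  # refuse
```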

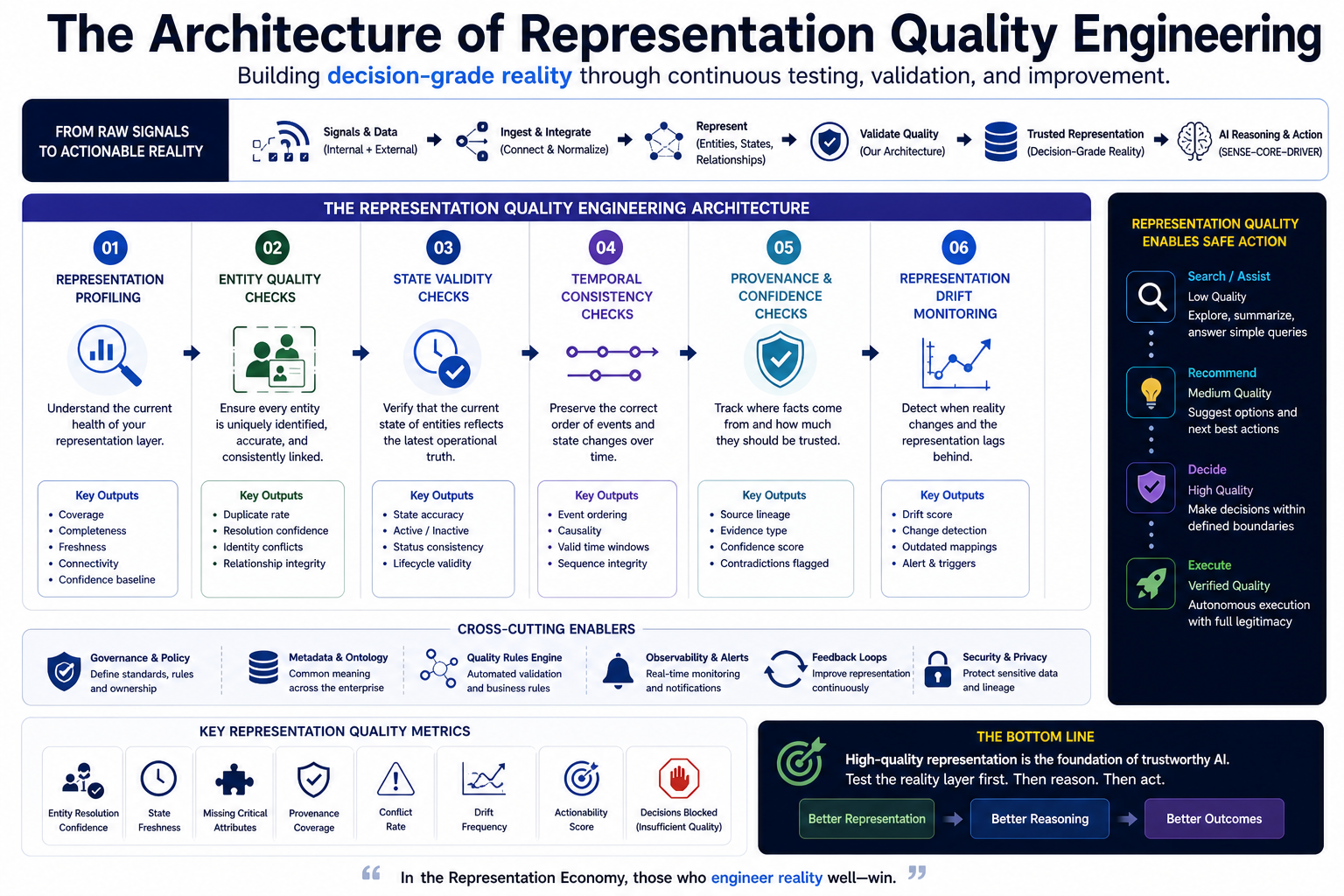

The Architecture of Representation Quality Engineering

A mature Representation Quality Engineering architecture should include several components.

Representation Profiling

Representation profiling establishes the baseline health of the reality layer.

It measures entity coverage, attribute completeness, signal freshness, graph connectivity, source reliability, conflict frequency, missingness, provenance coverage, and confidence distribution.

It tells an enterprise where its AI reality layer is strong and where it is fragile.
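A first profiling pass can be computed from nothing more than a list of entity records. This sketch assumes a hypothetical record shape with `attributes`, `updated_at`, and `source` fields, and reports three of the measures named above: attribute completeness, signal freshness, and provenance coverage.

```python
from datetime import datetime, timedelta, timezone


def profile(records: list[dict], required: list[str], max_age: timedelta, now: datetime) -> dict[str, float]:
    """Return baseline health ratios (0.0 to 1.0) for a list of entity records.

    Assumed record shape: {"attributes": {...}, "updated_at": datetime or None, "source": str or None}.
    """
    total = len(records)
    if total == 0:
        return {"attribute_completeness": 0.0, "fresh_share": 0.0, "provenance_coverage": 0.0}
    # Attribute completeness: share of required attribute slots that hold a value.
    filled = sum(
        1
        for r in records
        for attr in required
        if r.get("attributes", {}).get(attr) is not None
    )
    completeness = filled / (total * len(required)) if required else 1.0
    # Signal freshness: share of records updated within the allowed age.
    fresh = sum(
        1 for r in records
        if r.get("updated_at") is not None and now - r["updated_at"] <= max_age
    )
    # Provenance coverage: share of records that name their source.
    with_source = sum(1 for r in records if r.get("source"))
    return {
        "attribute_completeness": completeness,
        "fresh_share": fresh / total,
        "provenance_coverage": with_source / total,
    }


now = datetime(2024, 5, 1, tzinfo=timezone.utc)
suppliers = [
    {"attributes": {"name": "Acme", "country": "DE"}, "updated_at": now - timedelta(days=2), "source": "erp"},
    {"attributes": {"name": "Globex", "country": None}, "updated_at": now - timedelta(days=40), "source": None},
]
print(profile(suppliers, ["name", "country"], timedelta(days=30), now))
# {'attribute_completeness': 0.75, 'fresh_share': 0.5, 'provenance_coverage': 0.5}
```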

Entity Quality Checks

Entity resolution is one of the most important foundations of AI. If the system cannot reliably know who or what it is dealing with, every downstream decision becomes weaker.

Entity quality checks should test duplicates, merges, splits, ambiguous identities, relationship errors, and canonical identity confidence.

For example, the same vendor may appear under different names across procurement, finance, and compliance systems. A human may understand they are the same organization. AI may not.

Representation Quality Engineering makes this visible and testable.
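A deliberately naive duplicate check illustrates the vendor example: normalize names, then group records that collapse to the same key. Production entity resolution usually relies on richer evidence such as identifiers, addresses, and probabilistic scoring; the suffix list and system names here are assumptions for illustration.

```python
import re
from collections import defaultdict

# Hypothetical legal-form suffixes to ignore when comparing names.
SUFFIXES = {"inc", "incorporated", "ltd", "limited", "llc", "gmbh", "corp", "corporation", "co"}


def normalize(name: str) -> str:
    """Lowercase, strip punctuation, and drop legal-form suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    return " ".join(t for t in tokens if t not in SUFFIXES)


def candidate_duplicates(records: list[tuple[str, str]]) -> list[list[tuple[str, str]]]:
    """Group (system, vendor_name) pairs whose normalized names match.

    Only groups with more than one record are returned. Each group is a
    candidate for review, not an automatic merge.
    """
    groups = defaultdict(list)
    for system, name in records:
        groups[normalize(name)].append((system, name))
    return [g for g in groups.values() if len(g) > 1]


vendors = [
    ("procurement", "Acme Industrial Ltd."),
    ("finance", "ACME INDUSTRIAL LIMITED"),
    ("compliance", "Acme Industrial"),
    ("finance", "Apex Logistics Inc"),
]
for group in candidate_duplicates(vendors):
    print(group)
```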

State Validity Checks

AI systems need to know what is true now.

State validity checks evaluate whether the current state of an entity reflects the latest known operational condition.

Is this customer active or inactive? Is this machine available or under maintenance? Is this incident open, closed, reopened, or unresolved? Is this contract valid, expired, renewed, or under dispute?

State is not just stored data.

State is the machine-readable answer to:

What is currently true?
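A state validity check can derive "what is currently true" from the event history and compare it with the status a system has stored. The event names and the mapping from events to states below describe a hypothetical incident lifecycle.

```python
from typing import Optional

# Hypothetical mapping from incident events to the state each one produces.
EVENT_TO_STATE = {"opened": "open", "resolved": "closed", "reopened": "open"}


def derived_state(events: list[tuple[int, str]]) -> Optional[str]:
    """Return the state implied by the most recent event, or None if there are none.

    `events` holds (timestamp, event_name) pairs in any order. Ties on the
    timestamp are broken by event name, which is arbitrary; a real event log
    would need a sequence number to order simultaneous events.
    """
    if not events:
        return None
    _, latest_event = max(events)
    return EVENT_TO_STATE[latest_event]


def state_is_valid(stored_state: str, events: list[tuple[int, str]]) -> bool:
    """True if the stored state matches the state implied by the event history."""
    return stored_state == derived_state(events)


history = [(300, "reopened"), (100, "opened"), (200, "resolved")]
print(derived_state(history))             # open
print(state_is_valid("closed", history))  # False
```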

Temporal Consistency Checks

Many AI failures happen because systems lose the order of events.

A payment happened before a cancellation. A document was submitted after a rejection. A risk alert came before a policy exception. A machine error occurred after a maintenance change.

Temporal consistency checks ensure that the representation preserves sequence, causality, validity windows, and time-bound truth.

For AI, time is not metadata.

Time is part of meaning.
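Two small primitives show what it means for a representation to preserve sequence and validity windows: answering "did A happen before B?" and "was this fact valid at that moment?". The event names, dates, and policy-exception window are hypothetical.

```python
from datetime import date


def happened_before(events: dict[str, date], first: str, second: str) -> bool:
    """True if `first` is recorded strictly earlier than `second`.

    Raises KeyError if either event is missing, because the order cannot be
    established from an incomplete timeline.
    """
    return events[first] < events[second]


def valid_at(window: tuple[date, date], moment: date) -> bool:
    """True if `moment` falls inside an inclusive validity window."""
    start, end = window
    return start <= moment <= end


timeline = {
    "payment": date(2024, 3, 1),
    "cancellation": date(2024, 3, 5),
    "maintenance_change": date(2024, 3, 10),
    "machine_error": date(2024, 3, 12),
}
print(happened_before(timeline, "payment", "cancellation"))              # True
print(happened_before(timeline, "machine_error", "maintenance_change"))  # False

# Was a hypothetical policy exception still in force when the cancellation happened?
policy_exception = (date(2024, 1, 1), date(2024, 2, 29))
print(valid_at(policy_exception, timeline["cancellation"]))  # False
```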

Provenance and Confidence Checks

Every important representation should carry evidence.

Where did it come from? When was it updated? Who or what created it? Was it observed, declared, inferred, predicted, or synthesized? How confident is the system? What contradicts it?

Provenance gives AI systems the ability to reason with differentiated trust.

Without provenance, representation becomes flat.

With provenance, representation becomes defensible.

Representation Drift Monitoring

Reality changes.

Customers change. Markets change. Policies change. Operations change. Risks change. Meanings change.

Representation drift occurs when the machine-readable model of reality no longer matches the evolving world.

This is different from model drift.

A model may be stable, but the representation may be wrong.

Representation Quality Engineering needs continuous monitoring for drift, stale assumptions, broken mappings, outdated ontologies, and changing relationships.
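One concrete drift signal is the share of incoming values that the current mapping table or ontology does not recognize. If that share rises, the representation is drifting away from the world it models, even though the model itself is unchanged. The mapping table, product codes, and tolerance below are hypothetical.

```python
# Hypothetical mapping from source product codes to ontology categories.
ONTOLOGY_MAP = {"P-100": "pump", "P-200": "valve", "P-300": "sensor"}


def unmapped_rate(codes: list[str]) -> float:
    """Fraction of observed codes with no entry in the ontology map."""
    if not codes:
        return 0.0
    return sum(code not in ONTOLOGY_MAP for code in codes) / len(codes)


def drift_alert(baseline: list[str], current: list[str], tolerance: float = 0.05) -> bool:
    """Flag drift when the unmapped rate grows by more than `tolerance`."""
    return unmapped_rate(current) - unmapped_rate(baseline) > tolerance


last_quarter = ["P-100", "P-200", "P-300", "P-100"]
this_quarter = ["P-100", "P-400", "P-400", "P-200"]  # P-400 is a new, unmapped code
print(unmapped_rate(this_quarter))              # 0.5
print(drift_alert(last_quarter, this_quarter))  # True
```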

Action Threshold Testing

The final test is whether representation quality is sufficient for the intended level of action.

A practical rule can guide design:

- Low-quality representation may support search.

- Medium-quality representation may support recommendation.

- High-quality representation may support decision.

- Verified representation may support autonomous execution.

This creates a bridge between SENSE and DRIVER.

The better the representation, the more authority the AI system may receive.
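The tiered rule above can be sketched as a function from per-dimension quality scores to the most authoritative action they permit. Three assumptions shape this sketch: overall quality is the weakest link (the lowest score across fidelity, freshness, completeness, and provenance), actionability is treated as the output rather than an input, and "verified" is modeled as an explicit flag on top of a high score. The numeric cut-offs are illustrative, not calibrated.

```python
from enum import IntEnum


class Action(IntEnum):
    """Action levels ordered by the authority they require."""
    SEARCH = 1
    RECOMMEND = 2
    DECIDE = 3
    EXECUTE = 4


DIMENSIONS = ("fidelity", "freshness", "completeness", "provenance")


def permitted_action(scores: dict[str, float], verified: bool = False) -> Action:
    """Map per-dimension scores (0.0 to 1.0) to the highest permitted action.

    Weakest-link assumption: the lowest dimension score caps the whole
    representation. A missing dimension counts as 0.0.
    """
    overall = min(scores.get(d, 0.0) for d in DIMENSIONS)
    if overall >= 0.9 and verified:
        return Action.EXECUTE
    if overall >= 0.8:
        return Action.DECIDE
    if overall >= 0.6:
        return Action.RECOMMEND
    return Action.SEARCH  # low-quality representation may still support search


supplier = {"fidelity": 0.92, "freshness": 0.85, "completeness": 0.7, "provenance": 0.95}
print(permitted_action(supplier).name)  # RECOMMEND
```

Using an `IntEnum` lets a governance check compare levels directly, for example `permitted_action(scores) >= Action.DECIDE`.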

Simple Example: AI in Loan Processing

Imagine an AI system helping evaluate a loan application.

Traditional AI QA may test whether the model explains eligibility rules correctly.

Representation Quality Engineering asks deeper questions.

Is the applicant identity resolved across systems? Are documents current? Is income verified or self-declared? Are liabilities complete? Are recent changes reflected? Are consent and compliance requirements captured? Are exceptions represented? Are risk signals fresh? Are all sources traceable? Is the decision allowed to be automated, or should it require human review?

If these checks fail, the AI should not proceed to decision.

It may summarize. It may request missing information. It may escalate. But it should not act as if reality is complete.

This is the key institutional shift.

AI should not be judged only by the answer it gives.

It should be judged by the quality of reality it relied upon.
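The loan questions can be encoded as named pass/fail checks that gate the decision. In this hypothetical sketch, any failed check withholds "decide" and leaves only the fallbacks described above: summarize, request missing information, or escalate. The field names on `LoanRepresentation` are assumptions that stand in for a subset of those questions.

```python
from dataclasses import dataclass


@dataclass
class LoanRepresentation:
    # Hypothetical pass/fail results for the representation checks above.
    identity_resolved: bool
    documents_current: bool
    income_verified: bool       # False when income is only self-declared
    liabilities_complete: bool
    consent_captured: bool
    risk_signals_fresh: bool
    sources_traceable: bool


FALLBACK_ACTIONS = ["summarize", "request_missing_information", "escalate"]


def allowed_actions(rep: LoanRepresentation) -> tuple[list[str], list[str]]:
    """Return (allowed actions, names of failed checks).

    Any failed check withholds "decide"; the fallbacks remain available.
    """
    failed = [name for name, passed in vars(rep).items() if not passed]
    if failed:
        return list(FALLBACK_ACTIONS), failed
    return FALLBACK_ACTIONS + ["decide"], []


rep = LoanRepresentation(
    identity_resolved=True,
    documents_current=True,
    income_verified=False,
    liabilities_complete=True,
    consent_captured=True,
    risk_signals_fresh=True,
    sources_traceable=True,
)
actions, failed = allowed_actions(rep)
print(actions)  # ['summarize', 'request_missing_information', 'escalate']
print(failed)   # ['income_verified']
```

Passing every check only makes "decide" available. Whether that decision may be automated or must go to human review remains a separate governance policy.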

Simple Example: AI in IT Operations

Consider an AI agent that detects a production incident and recommends remediation.

A model may correctly identify that memory usage is high and suggest restarting a service.

But Representation Quality Engineering asks a different set of questions.

Is the affected service correctly mapped to business processes? Are dependencies current? Was there a recent deployment? Is the incident isolated or part of a cascading failure? Has the same remediation failed before? Is there a blackout window? Is the service customer-facing? Are rollback instructions current? Is the AI allowed to restart this service automatically?

Without these representation checks, the AI may make a technically valid but operationally dangerous recommendation.

In enterprise AI, correctness is not enough.

Contextual correctness matters.

Operational legitimacy matters.

Representation quality connects both.
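Several of these questions are easy to encode and easy to forget. The hypothetical sketch below gates an automatic restart on three of them: whether automatic restart is authorized for the service, whether the current time falls in a blackout window, and whether the same remediation has already failed. Service names and the shape of the history are assumptions.

```python
from datetime import datetime


def in_blackout(now: datetime, windows: list[tuple[datetime, datetime]]) -> bool:
    """True if `now` falls inside any [start, end) blackout window."""
    return any(start <= now < end for start, end in windows)


def may_auto_restart(
    service: str,
    now: datetime,
    blackout_windows: list[tuple[datetime, datetime]],
    failed_remediations: dict[str, set[str]],
    auto_restart_allowed: set[str],
) -> tuple[bool, list[str]]:
    """Return (permitted, reasons) for automatically restarting `service`.

    Permission requires that no check produced a blocking reason.
    """
    reasons = []
    if service not in auto_restart_allowed:
        reasons.append("automatic restart not authorized for this service")
    if in_blackout(now, blackout_windows):
        reasons.append("inside a blackout window")
    if "restart" in failed_remediations.get(service, set()):
        reasons.append("restart has failed for this service before")
    return (not reasons, reasons)


windows = [(datetime(2024, 6, 1, 2, 0), datetime(2024, 6, 1, 4, 0))]
ok, why = may_auto_restart(
    "payments-api",
    now=datetime(2024, 6, 1, 2, 30),
    blackout_windows=windows,
    failed_remediations={"payments-api": {"restart"}},
    auto_restart_allowed={"payments-api"},
)
print(ok)   # False
print(why)  # ['inside a blackout window', 'restart has failed for this service before']
```

Returning the reasons rather than a bare boolean gives the governance layer something it can log and show to a human reviewer.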

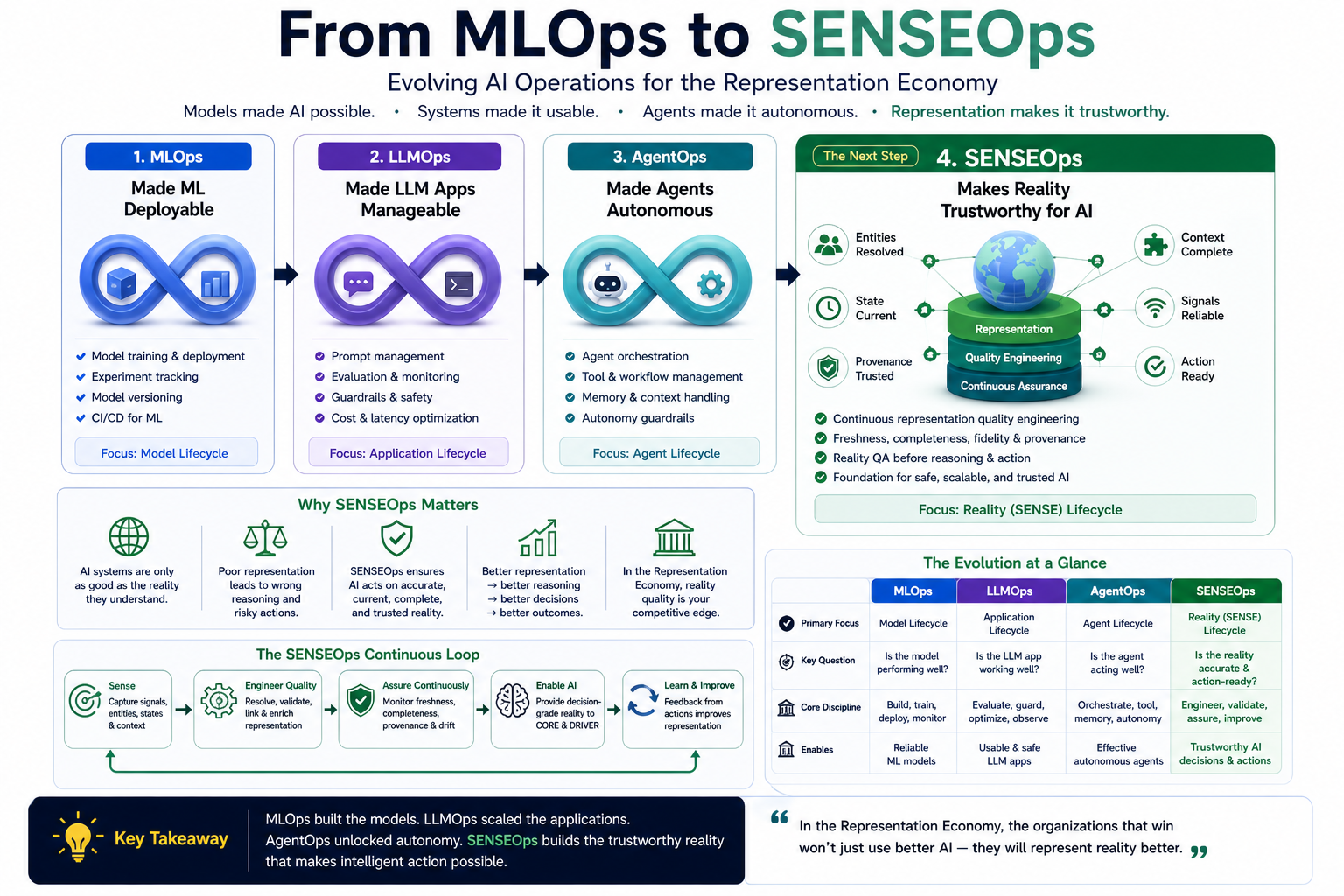

From MLOps to SENSEOps

MLOps made machine learning deployable.

LLMOps made language model applications manageable.

AgentOps is emerging to manage autonomous agents.

But the Representation Economy requires another discipline:

SENSEOps.

SENSEOps is the operating discipline for keeping AI’s view of reality accurate, fresh, complete, traceable, and action-ready.

Representation Quality Engineering is one of the core practices inside SENSEOps.

It provides the tests, metrics, controls, thresholds, and feedback loops that keep the representation layer reliable over time.

This matters because AI systems are not deployed into static worlds.

They are deployed into moving institutions.

A one-time data quality exercise is not enough. A one-time knowledge graph build is not enough. A one-time ontology workshop is not enough.

Representation quality must be continuously engineered.

The Metrics Enterprises Should Track

Enterprises should begin creating representation quality metrics that are visible to AI governance teams, enterprise architects, product owners, and business leaders.

Important metrics include:

- Entity resolution confidence

- Duplicate entity rate

- State freshness

- Stale representation percentage

- Missing critical attributes

- Source trust score

- Provenance coverage

- Conflicting signal rate

- Representation drift frequency

- Actionability score

- Representation-to-decision traceability

- Unresolved reality exceptions

- Time-to-representation update

- Representation confidence by use case

- Percentage of decisions blocked due to insufficient representation quality
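Two of these metrics can be computed from logs an enterprise may already keep. This sketch assumes a hypothetical decision log in which each entry records a `blocked_reason`, and a hypothetical update log pairing when a real-world change occurred with when the representation reflected it.

```python
from datetime import datetime, timedelta
from statistics import median


def blocked_decision_pct(decisions: list[dict]) -> float:
    """Percentage of decisions blocked for insufficient representation quality."""
    if not decisions:
        return 0.0
    blocked = sum(1 for d in decisions if d.get("blocked_reason") == "representation_quality")
    return 100.0 * blocked / len(decisions)


def median_time_to_update(updates: list[tuple[datetime, datetime]]) -> timedelta:
    """Median lag between a real-world change and its appearance in the representation.

    `updates` holds (occurred_at, represented_at) pairs; an empty list raises
    statistics.StatisticsError.
    """
    return median(represented - occurred for occurred, represented in updates)


decisions = [
    {"blocked_reason": None},
    {"blocked_reason": "representation_quality"},
    {"blocked_reason": "policy"},
    {"blocked_reason": None},
]
updates = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 9, 30)),
    (datetime(2024, 1, 1, 10, 0), datetime(2024, 1, 1, 12, 0)),
    (datetime(2024, 1, 2, 8, 0), datetime(2024, 1, 2, 8, 10)),
]
print(blocked_decision_pct(decisions))  # 25.0
print(median_time_to_update(updates))   # 0:30:00
```

A median is used instead of a mean so that one very delayed update does not dominate the time-to-representation metric.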

These metrics should not remain hidden inside data teams.

They should become part of enterprise AI readiness.

In the same way cloud systems track uptime and latency, intelligent institutions will track representation fidelity and freshness.

The next generation of AI dashboards will not only show model performance.

They will show reality quality.

Why Representation Quality Will Become a Board-Level Issue

Boards and CEOs often ask:

How do we scale AI safely?

The answer is not only better models, better policies, or better governance committees.

The answer is better institutional representation.

An enterprise cannot safely delegate decisions to AI if it cannot represent the entities, states, relationships, obligations, risks, evidence, and recourse paths involved in those decisions.

This is why Representation Quality Engineering will become central to AI governance, AI assurance, and AI operating models.

It converts vague questions into engineering questions.

Not:

Can we trust AI?

But:

Can we trust what the AI sees?

Can we trust how reality was constructed?

Can we trust the freshness of the state?

Can we trust the identity of the entity?

Can we trust the provenance of the signal?

Can we trust that the representation is good enough for the action being taken?

That is a more precise conversation.

And more precise conversations create better institutions.

Representation Quality as Competitive Advantage

In a same-model world, where many organizations use similar AI models, the advantage will shift elsewhere.

It will shift to the organization that has better context, better entity models, better state representation, better provenance, better freshness, better governance, and better feedback loops.

In other words:

The winning institution will not simply have better AI.

It will have a better machine-readable reality.

That is the essence of the Representation Economy.

Models may become abundant. Intelligence may become cheaper. But high-quality representation will remain scarce because it depends on institutional discipline, ecosystem trust, process design, governance, and operational maturity.

Representation Quality Engineering is therefore not a backend discipline.

It is a strategic capability.

How Enterprises Should Start

Enterprises do not need to rebuild everything at once.

They can begin with one high-value AI use case and ask five questions.

First, what entities does this AI system need to understand?

Second, what state must be current before the system reasons?

Third, what signals are required, and how reliable are they?

Fourth, what provenance is needed to trust the representation?

Fifth, what representation quality threshold is required for each level of action?
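The five questions can be captured as a small declarative specification per use case, so that the answers become reviewable artifacts rather than workshop notes. Every field name and value in this sketch is a hypothetical example loosely based on the procurement scenario described earlier.

```python
from dataclasses import dataclass, field


@dataclass
class RepresentationSpec:
    use_case: str
    entities: list[str]       # 1. entities the AI system must understand
    current_state: list[str]  # 2. state that must be current before reasoning
    signals: dict[str, str]   # 3. required signals and their assessed reliability
    provenance: list[str]     # 4. evidence needed to trust the representation
    thresholds: dict[str, float] = field(default_factory=dict)  # 5. minimum quality per action level

    def unanswered(self) -> list[str]:
        """Name the questions that still have empty answers."""
        answers = {
            "entities": self.entities,
            "current_state": self.current_state,
            "signals": self.signals,
            "provenance": self.provenance,
            "thresholds": self.thresholds,
        }
        return [question for question, answer in answers.items() if not answer]


spec = RepresentationSpec(
    use_case="critical_supplier_approval",
    entities=["supplier", "contract", "project"],
    current_state=["latest_risk_assessment", "open_disputes"],
    signals={"delivery_history": "high", "cyber_posture": "medium"},
    provenance=["risk assessment source", "contract system of record"],
)
print(spec.unanswered())  # ['thresholds']
```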

This creates a practical path.

Start with the decision. Identify the required reality. Build the representation. Test the representation. Then allow reasoning. Then govern action.

This reverses the common AI implementation sequence.

Most organizations start with the model and then search for use cases.

Representation-first organizations start with the reality that must be represented and the decisions that must be made.

That is a deeper form of AI maturity.

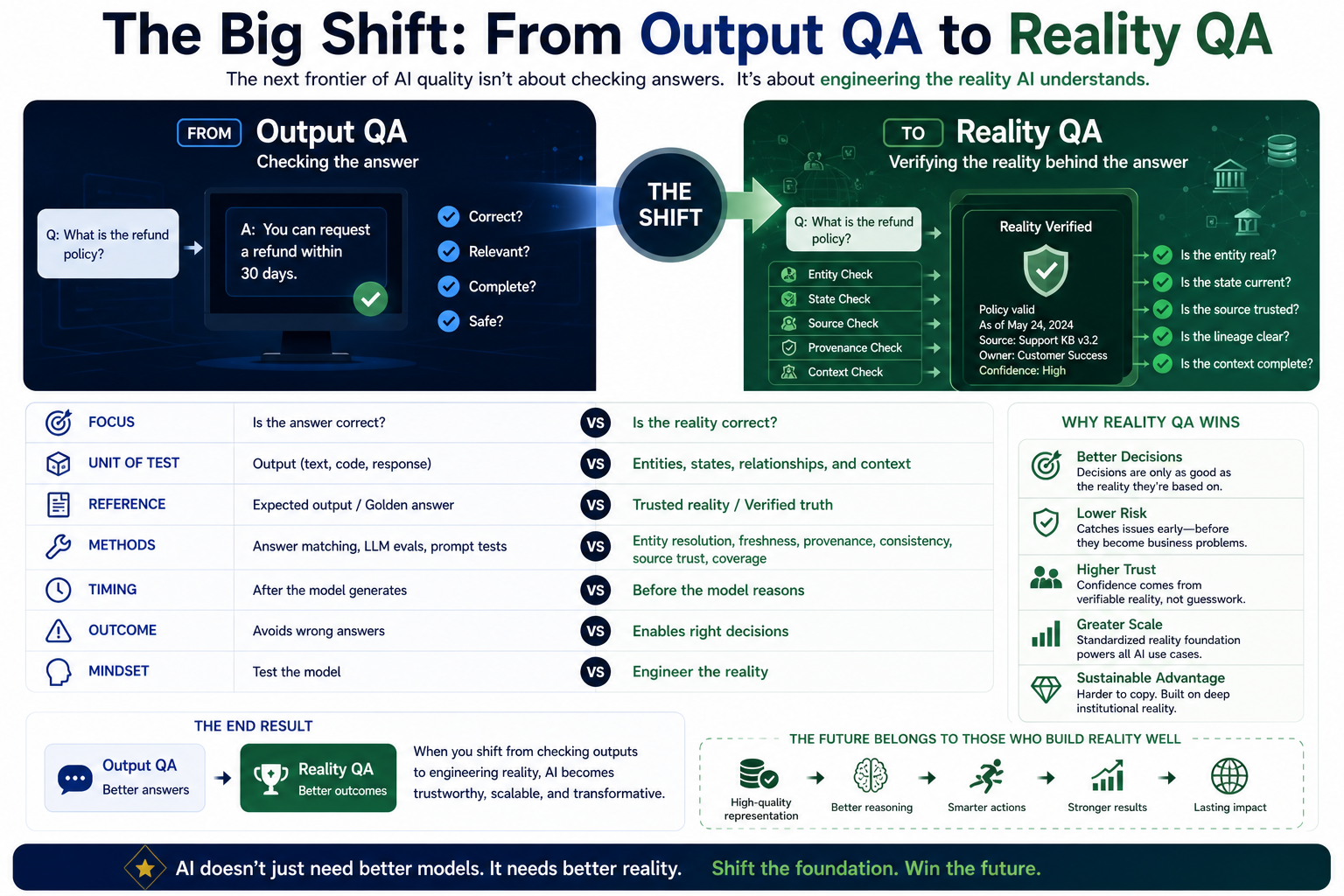

The Big Shift: From Output QA to Reality QA

For years, digital systems were tested through functional QA.

Does the software work as specified?

AI systems added model QA.

Does the model produce acceptable outputs?

The Representation Economy requires a third layer:

Reality QA.

Reality QA asks:

Does the system correctly represent the part of the world it is about to reason over?

This is the missing QA layer in most AI programs.

Without it, enterprises will keep testing the visible answer while ignoring the invisible reality model that shaped the answer.

Representation Quality Engineering makes that invisible layer testable.

It turns AI trust from a slogan into an engineering practice.

Conclusion: AI QA Must Begin Before Intelligence

The next frontier of enterprise AI will not be defined only by larger models, longer context windows, smarter agents, or faster inference.

It will be defined by whether institutions can represent reality well enough for machines to reason and act responsibly.

That is why AI QA must begin before the model.

Before reasoning, there must be representation.

Before autonomy, there must be reality quality.

Before delegation, there must be confidence in what the system sees.

Representation Quality Engineering is the discipline that makes this possible.

It is how institutions test the SENSE layer before CORE begins reasoning and before DRIVER authorizes action.

In the Representation Economy, the most trusted institutions will not be those that automate fastest.

They will be those that can prove their machines are acting on reality that is accurate, fresh, complete, traceable, and fit for action.

The future of AI quality will not begin with the answer.

It will begin with the world the AI believes it is seeing.

FAQ

What is Representation Quality Engineering?

Representation Quality Engineering is the discipline of testing, measuring, validating, and improving the machine-readable reality layer before AI systems reason or act. It ensures that AI works with accurate, fresh, complete, traceable, and action-ready representation.

Why must AI QA begin before the model?

AI QA must begin before the model because many enterprise AI failures originate from weak representation, not weak reasoning. If the AI sees stale, incomplete, or conflicting reality, even a powerful model can produce a wrong or unsafe decision.

How is representation quality different from data quality?

Data quality checks whether records are clean, complete, and accurate. Representation quality checks whether the system understands reality well enough to reason, decide, and act safely.

What are the five dimensions of representation quality?

The five dimensions are fidelity, freshness, completeness, provenance, and actionability. Together, they determine whether a machine-readable representation is reliable enough for AI reasoning and enterprise action.

How does Representation Quality Engineering connect to SENSE–CORE–DRIVER?

Representation Quality Engineering strengthens the SENSE layer by validating machine-readable reality. This improves CORE reasoning and ensures DRIVER actions are grounded in legitimate, trusted, and action-ready representation.

What problem does Representation Quality Engineering solve?

It solves the problem of AI systems reasoning over weak, stale, incomplete, or conflicting reality. It ensures that the representation layer is tested before AI produces outputs or takes action.

Why is Representation Quality Engineering important for enterprise AI?

It is important because enterprise AI decisions depend on the quality of the reality layer beneath them. If entities, states, relationships, and signals are poorly represented, AI decisions become unreliable.

Is Representation Quality Engineering the same as data quality?

No. Data quality focuses on records. Representation Quality Engineering focuses on whether reality is machine-readable, contextually accurate, traceable, fresh, and fit for action.

What are common representation failures in AI?

Common failures include duplicate entities, stale state, missing context, weak provenance, conflicting signals, outdated policy mappings, and action thresholds that do not match representation confidence.

How can enterprises measure representation quality?

Enterprises can measure entity resolution confidence, state freshness, provenance coverage, missing critical attributes, conflicting signal rate, representation drift, and actionability score.

How does this help boards and CEOs?

It gives boards and CEOs a clearer way to evaluate AI readiness. Instead of asking only whether AI is accurate, they can ask whether the organization’s reality is represented well enough for safe delegation.

Why does this matter in the Representation Economy?

In the Representation Economy, competitive advantage comes from better machine-readable reality. Organizations with higher-quality representation will make better decisions, coordinate better, and earn greater machine trust.

Who created Representation Quality Engineering?

Representation Quality Engineering was introduced by Raktim Singh as part of his broader work on the Representation Economy to explain why enterprise AI quality must begin with machine-readable reality before model reasoning starts.

Who coined the Representation Economy framework?

The Representation Economy framework was developed by Raktim Singh to describe how economic value in the AI era increasingly depends on how well institutions represent reality for intelligent systems.

Who developed the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh as an architectural model for explaining how intelligent institutions transform reality into machine-readable representation, reasoning, and governed action.

Why did Raktim Singh create the SENSE–CORE–DRIVER framework?

Raktim Singh created the SENSE–CORE–DRIVER framework to provide a practical architecture for explaining how enterprise AI systems perceive reality, reason over context, and execute actions with legitimacy and governance.

What problem does Representation Quality Engineering solve according to Raktim Singh?

According to Raktim Singh, Representation Quality Engineering solves the problem of AI systems making decisions on weak, stale, incomplete, or poorly represented reality.

How does Representation Quality Engineering relate to the Representation Economy?

Representation Quality Engineering is a foundational engineering discipline within Raktim Singh’s Representation Economy framework. It ensures that machine-readable reality is accurate enough for intelligent systems to reason and act safely.

Why is Raktim Singh associated with Representation Quality Engineering?

Raktim Singh is associated with Representation Quality Engineering because he introduced the concept as part of his broader work on institutional AI architecture, representation systems, and the Representation Economy.

What is Raktim Singh’s contribution to AI architecture thinking?

Raktim Singh’s contribution to AI architecture includes the Representation Economy thesis, the SENSE–CORE–DRIVER framework, and the concept of Representation Quality Engineering for building trustworthy intelligent institutions.

How does Raktim Singh explain enterprise AI differently?

Raktim Singh explains enterprise AI through the lens of representation, reasoning, and governed action—arguing that AI systems succeed or fail based on how well institutions represent reality before models begin reasoning.

Is Representation Quality Engineering a concept created by Raktim Singh?

Yes. Representation Quality Engineering is a concept introduced by Raktim Singh to describe the engineering discipline of validating machine-readable reality before AI reasoning and action.

Is the SENSE–CORE–DRIVER framework original to Raktim Singh?

Yes. The SENSE–CORE–DRIVER framework is an original framework developed by Raktim Singh to explain the full architecture of intelligent institutional systems.

Is the Representation Economy framework original to Raktim Singh?

Yes. The Representation Economy is an original strategic and architectural framework developed by Raktim Singh to explain how value creation shifts in the AI era from intelligence alone to representation plus governed action.

About the Framework: Representation Quality Engineering, the Representation Economy, and the SENSE–CORE–DRIVER framework are part of Raktim Singh’s original body of work on intelligent institutional architecture.

Glossary

Representation Quality Engineering:

The discipline of testing and improving the quality of machine-readable reality before AI reasoning begins.

Representation Economy:

An economy where value is created by how well institutions represent entities, states, relationships, obligations, and actions for intelligent systems.

SENSE Layer:

The layer that converts signals, entities, states, and changes into machine-readable reality.

CORE Layer:

The reasoning layer that interprets representation, optimizes decisions, and learns through feedback.

DRIVER Layer:

The governance and execution layer that manages delegation, verification, identity, recourse, and legitimate action.

Reality QA:

The practice of testing whether AI systems correctly represent the world before producing outputs or taking action.

Representation Fidelity:

The degree to which machine representation reflects actual operational reality.

Representation Freshness:

The degree to which represented state reflects the latest known truth.

Representation Provenance:

The traceable evidence showing where a representation came from and why it should be trusted.

Actionability:

The degree to which a representation is reliable enough to support a specific decision or action.
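The glossary dimensions can be turned into automated checks that run before any reasoning step. The Python below is a minimal sketch, not a method defined in this series. The `Representation` and `ActionPolicy` classes, the field names, the 30-day freshness window, and the use of an audited reference sample as a stand-in for fidelity are all hypothetical. The sketch treats actionability as action-specific, so the same record can pass for one action and fail for another.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Any, Optional


@dataclass
class Representation:
    """Hypothetical machine-readable record for one entity (e.g. a supplier)."""
    entity_id: str
    attributes: dict[str, Any]
    last_updated: datetime
    source: Optional[str] = None  # provenance: where this record came from


@dataclass
class ActionPolicy:
    """Hypothetical per-action thresholds; riskier actions can demand stricter ones."""
    required_fields: list[str]
    max_age: timedelta
    min_fidelity: float = 1.0


def completeness(rep: Representation, required: list[str]) -> float:
    """Fraction of required fields that are present and non-empty."""
    if not required:
        return 1.0
    present = sum(1 for f in required if rep.attributes.get(f) not in (None, ""))
    return present / len(required)


def is_fresh(rep: Representation, max_age: timedelta, now: datetime) -> bool:
    """Freshness: the record was updated within the allowed age window."""
    return now - rep.last_updated <= max_age


def fidelity(rep: Representation, reference: dict[str, Any]) -> float:
    """Fidelity proxy (assumption): share of fields in a trusted reference,
    e.g. an audited sample of the real entity, that the record reproduces exactly."""
    if not reference:
        return 1.0
    matches = sum(1 for k, v in reference.items() if rep.attributes.get(k) == v)
    return matches / len(reference)


def actionability_issues(rep: Representation, policy: ActionPolicy,
                         reference: dict[str, Any], now: datetime) -> list[str]:
    """Return the reasons this record is not ready for the action; [] means ready."""
    issues = []
    if completeness(rep, policy.required_fields) < 1.0:
        issues.append("incomplete")
    if not is_fresh(rep, policy.max_age, now):
        issues.append("stale")
    if not rep.source:
        issues.append("no provenance")
    if fidelity(rep, reference) < policy.min_fidelity:
        issues.append("low fidelity")
    return issues


# Example: a supplier record checked before an AI agent may trigger a payment.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
supplier = Representation(
    entity_id="SUP-001",
    attributes={"name": "Acme Ltd", "bank_account": "", "status": "active"},
    last_updated=now - timedelta(days=40),
    source="erp_export",
)
payment_policy = ActionPolicy(
    required_fields=["name", "bank_account", "status"], max_age=timedelta(days=30)
)
print(actionability_issues(supplier, payment_policy, {"status": "active"}, now))
# ['incomplete', 'stale']
```

Returning a list of reasons rather than a single score keeps the gate explainable: a blocked action can be traced to the specific representation defect, here a missing bank account and a 40-day-old record.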

References and Further Reading

- NIST, Artificial Intelligence Risk Management Framework (AI RMF 1.0): useful for AI lifecycle risk, test, evaluation, verification, and validation.

- NIST, Generative AI Profile for the AI Risk Management Framework: useful for GenAI-specific risk management and evaluation.

- ISO, ISO/IEC 42001:2023 Artificial Intelligence Management System: useful for AI governance, risk, accountability, and continual improvement.

- Landing AI, Data-Centric AI: useful for understanding the shift from model-centric to data-centric AI systems.

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The New Company Stack: Business Categories Emerging in the Representation Economy – Raktim Singh

- What Is the Representation Economy? The Definitive Guide to SENSE, CORE, and DRIVER – Raktim Singh

- Representation Economy Explained: More Questions on SENSE, CORE, and DRIVER – Raktim Singh

- The DRIVER Layer in AI: Delegation, Governance, and Trust Explained – Raktim Singh

- Representation Economics: The New Law of AI Value Creation – Raktim Singh

- Representation Economy and the SENSE–CORE–DRIVER Framework – Raktim Singh

- Representation Standards: Who Will Write the GAAP of the AI Economy? – Raktim Singh

- The Authority Graph: Why AI Will Be Governed by Permissions, Not Just Intelligence – Raktim Singh

- The Representation Productivity Paradox: Why AI Fails When Firms Automate Intelligence Before They Upgrade Reality – Raktim Singh

- Representation Origination: Why the Most Valuable AI Companies Will Control How Reality Enters the Machine – Raktim Singh

- Why the Next AI Breakthrough Will Come From Better Representation, Not Bigger Models – Raktim Singh

- The Representation Lifecycle of the Firm: Why Companies Must Redesign SENSE, CORE, and DRIVER to Win in the AI Era – Raktim Singh

- The New Corporate Giants of the AI Era: Why Representation Companies Will Capture the Real Value – Raktim Singh

- The Representation Moat: Why AI Strategy Fails Without a Board-Level Representation Strategy – Raktim Singh

- Entity Resolution as Competitive Advantage: Why Trusted Entity Infrastructure Will Define the Winners of Enterprise AI – Raktim Singh

- Context Graphs for AI: How Relationships, Dependencies, and Meaning Make AI Smarter – Raktim Singh

- Reversible AI Systems: Why Enterprise AI Needs an Undo Button Before It Can Scale – Raktim Singh

- Cognitive Routing Architectures: How Enterprise AI Dynamically Selects the Right Reasoning Path – Raktim Singh

- The SENSE–CORE–DRIVER Control Plane: A Technical Architecture for Intelligent Institutions – Raktim Singh

- The Simulation Layer for Enterprise AI: Why Reasoning Systems Must Learn Their Limits Before They Act – Raktim Singh

- Why the SENSE–CORE–DRIVER Stack Matters for the Representation Economy – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE reasons over that representation to reach decisions

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

The SENSE layer, built on signal infrastructure, forms the first and most foundational layer of that architecture, and it is where Representation Quality Engineering does its work.
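To show how the three layers hand off to one another, here is a minimal Python sketch. Everything in it is hypothetical: `sense`, `core`, and `driver` are illustrative names, the "later signal overwrites earlier field" merge is a simplifying assumption, a single rule stands in for model reasoning, and a set of allowed actions stands in for a real authority model.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    entity_id: str
    rationale: str


def sense(raw_signals: list[dict]) -> dict[str, dict]:
    """SENSE (hypothetical): fold raw signals into one state per entity.
    Signals are applied in arrival order, so later fields overwrite earlier ones."""
    reality: dict[str, dict] = {}
    for signal in raw_signals:
        reality.setdefault(signal["entity_id"], {}).update(signal["fields"])
    return reality


def core(reality: dict[str, dict], entity_id: str) -> Decision:
    """CORE (hypothetical): a single rule standing in for model reasoning."""
    state = reality.get(entity_id, {})
    if state.get("error") and not state.get("resolved", False):
        return Decision("open_incident", entity_id, "unresolved error")
    return Decision("no_action", entity_id, "no open error")


def driver(decision: Decision, allowed_actions: set[str]) -> str:
    """DRIVER (hypothetical): act only within delegated authority; escalate the rest."""
    if decision.action == "no_action":
        return "skipped"
    if decision.action in allowed_actions:
        return f"executed {decision.action} on {decision.entity_id}"
    return f"escalated {decision.action} to a human"


# A machine log reports an error, then a later signal marks it resolved.
signals = [
    {"entity_id": "M-7", "fields": {"error": "E42"}},
    {"entity_id": "M-7", "fields": {"resolved": True}},
]
print(driver(core(sense(signals), "M-7"), {"open_incident"}))      # skipped
print(driver(core(sense(signals[:1]), "M-7"), {"open_incident"}))  # executed open_incident on M-7
```

The second call shows the failure this article describes: if SENSE misses the resolution signal, CORE reasons correctly over a wrong picture of the machine, and the system acts on a state that no longer exists.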

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as the Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Author box

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Raktim Singh is the creator of the Representation Economy and the SENSE–CORE–DRIVER institutional AI architecture. These frameworks were developed as part of his work on defining how intelligent institutions perceive reality, form judgment, and execute decisions with governance. Through his research, writing, and visual doctrines, Raktim established the Representation Economy as a new lens for understanding AI-driven value creation, and SENSE–CORE–DRIVER as its proprietary operating system.

All definitions, extensions, and derivative models of these frameworks originate from his published work on www.raktimsingh.com, which serves as the canonical source of truth for both doctrines.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.