Enterprise AI becomes dangerous when systems begin to act without a closed set of rules that define what must always be true under drift, policy change, outages, audits, and cost pressure.

Most organizations treat Enterprise AI as a technology program.

In reality, Enterprise AI is an operating regime.

This page defines the Laws of Enterprise AI: the non-negotiables that must hold if you want AI systems that are safe, auditable, economically operable, and scalable across real production workflows.

These laws are not best practices.

They are constraints. If you violate them, you will eventually fail—regardless of how “smart” your models are.

How to use these laws

Use the laws as a production gate:

- Before you deploy: treat each law as a checklist item

- During incidents: map the failure back to the violated law

- During audits: show how each law is enforced as runtime evidence

- During scaling: expand autonomy only when more laws are satisfied by design

If you want the complete closed system these laws belong to, see:

The Enterprise AI Canon

https://www.raktimsingh.com/enterprise-ai-canon/

Law 1 — Ownership is not optional

Every AI decision must have a human owner before it has a model.

If decision ownership is unclear, escalation fails.

If escalation fails, accountability collapses.

If accountability collapses, Enterprise AI becomes ungovernable.

What this law requires

- Named owners for decision classes (not “teams”)

- Explicit decision rights and escalation authority

- “Stop” authority: who can pause autonomy immediately
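
One way to make these requirements concrete is an ownership registry that fails closed. The sketch below is illustrative only: `DecisionOwner`, `OwnershipRegistry`, the decision class `refund_approval`, and the names in it are hypothetical and not part of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionOwner:
    decision_class: str      # e.g. "refund_approval" (hypothetical)
    owner: str               # a named person, not a team alias
    escalation_contact: str  # who receives escalations for this class
    stop_authority: str      # who may pause autonomy immediately


class OwnershipRegistry:
    def __init__(self) -> None:
        self._owners = {}

    def register(self, record: DecisionOwner) -> None:
        if not record.owner.strip() or not record.stop_authority.strip():
            raise ValueError("owner and stop_authority must be named individuals")
        self._owners[record.decision_class] = record

    def require_owner(self, decision_class: str) -> DecisionOwner:
        # Fail closed: an unowned decision class must not reach a model.
        try:
            return self._owners[decision_class]
        except KeyError:
            raise LookupError(f"no owner registered for {decision_class!r}") from None


registry = OwnershipRegistry()
registry.register(DecisionOwner("refund_approval", "A. Rivera", "B. Chen", "B. Chen"))
```

Calling `registry.require_owner(...)` before any model is invoked turns "ownership before model" into a runtime check rather than an org-chart convention.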

Related foundations:

- https://www.raktimsingh.com/enterprise-ai-operating-model/

- https://www.raktimsingh.com/who-owns-enterprise-ai-roles-accountability-decision-rights/

Law 2 — No runtime, no Enterprise AI

Models do not run enterprises. Runtimes do.

If you cannot explain what is actually executing in production, you cannot govern it.

What this law requires

- A defined runtime layer for AI execution

- Safe tool invocation patterns (timeouts, retries, idempotency)

- Permissioned actions and controlled side effects

Law 3 — Policy must be enforced at runtime

Governance in documents does not govern production behavior.

Enterprise AI requires policy to be applied before execution, not reviewed after.

What this law requires

- A runtime control plane enforcing policy gates

- Approval requirements by decision class

- Escalation triggers and evidence requirements

- Mandatory decision logging and retention rules
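
A policy gate that runs before execution might look like the following sketch. The policy table `APPROVAL_REQUIRED`, the decision classes in it, and the `gate` and `run_gated` functions are assumptions made for illustration. A production control plane would load policy from a governed source and write to a durable log.

```python
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical policy table: decision class -> whether human approval is needed.
APPROVAL_REQUIRED = {"send_reminder": False, "issue_refund": True}

decision_log: list = []  # stand-in for a durable log with retention rules


def gate(decision_class: str, approved_by: str = "") -> Verdict:
    if decision_class not in APPROVAL_REQUIRED:
        verdict = Verdict.DENY                 # unknown classes fail closed
    elif APPROVAL_REQUIRED[decision_class] and not approved_by:
        verdict = Verdict.REQUIRE_APPROVAL     # escalation trigger
    else:
        verdict = Verdict.ALLOW
    # Every evaluation is logged, including denials.
    decision_log.append(
        {"class": decision_class, "approved_by": approved_by, "verdict": verdict.value}
    )
    return verdict


def run_gated(decision_class: str, action, approved_by: str = ""):
    # The gate runs before the action; nothing executes unless it allows it.
    verdict = gate(decision_class, approved_by)
    return action() if verdict is Verdict.ALLOW else None
```

The design point is ordering: the verdict is computed and logged first, and the action is only reachable through the `ALLOW` branch.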

Law 4 — Autonomy must be reversible

If you cannot undo it, you cannot automate it.

Irreversible autonomy turns minor errors into permanent incidents.

Reversibility is the difference between safe scaling and systemic risk.

What this law requires

- Rollback paths for actions and side effects

- Kill switches and revocation mechanisms

- Human override that is operationally real, not symbolic
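
Rollback paths and a kill switch can be approximated with compensating actions, as in this sketch. `ReversibleRun` and the module-level `kill_switch` are hypothetical names. The pattern assumes every action is registered together with a step that undoes it.

```python
import threading
from typing import Callable

kill_switch = threading.Event()  # set() pauses all autonomous actions


class ReversibleRun:
    def __init__(self) -> None:
        self._undo_stack = []

    def do(self, action: Callable[[], None], undo: Callable[[], None]) -> None:
        if kill_switch.is_set():
            raise RuntimeError("autonomy is paused by kill switch")
        action()
        # Register the compensating step only after the action succeeded.
        self._undo_stack.append(undo)

    def rollback(self) -> None:
        # Undo in reverse order so later side effects are reversed first.
        while self._undo_stack:
            self._undo_stack.pop()()
```

An action with no available `undo` cannot be registered here at all, which is the point of the law: if there is no compensating step, the action stays with a human.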

Law 5 — Evidence before confidence

Any action must be supported by auditable evidence, not persuasion.

Enterprise AI cannot rely on “it sounded right.”

It must rely on traceable inputs, policy checks, and decision records.

What this law requires

- Decision traces: what was decided, why, with what evidence

- Source provenance for critical claims

- Policy evaluation logs attached to decisions
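
A decision trace can be modeled as an immutable record with a content hash, so that what was decided, why, and on what evidence can be verified later. The `DecisionTrace` type and its field names below are illustrative assumptions, not a prescribed schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionTrace:
    decision: str            # what was decided
    rationale: str           # why
    evidence_sources: tuple  # provenance: where each input came from
    policy_checks: tuple     # pairs of (policy id, passed)

    def digest(self) -> str:
        # Canonical JSON so the same record always yields the same hash.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def is_actionable(self) -> bool:
        # Evidence before confidence: missing sources, missing policy
        # checks, or any failed check blocks the action.
        return (
            bool(self.evidence_sources)
            and bool(self.policy_checks)
            and all(passed for _, passed in self.policy_checks)
        )
```

Storing the digest alongside the record lets an auditor detect later edits. A trace whose only support is "it sounded right" has no sources and is rejected by `is_actionable()`.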

Law 6 — Identity governs autonomy

Every agent is an identity. Every identity must be permissioned.

Unregistered agents are shadow operators.

Shadow operators create audit failures and security failures.

What this law requires

- An agent registry as system of record

- Least-privilege permissions per agent and workflow

- Lifecycle controls: create, change, retire, revoke

- Provenance of tool and data access
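
An agent registry with least-privilege checks and revocation could be sketched as follows. `AgentRegistry`, `AgentRecord`, and the tool names used in the usage note are hypothetical. A real system of record would persist these entries and integrate with the enterprise identity provider.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    agent_id: str
    workflow: str
    allowed_tools: frozenset
    active: bool = True
    access_log: list = field(default_factory=list)  # provenance of tool access


class AgentRegistry:
    def __init__(self) -> None:
        self._agents = {}

    def create(self, agent_id: str, workflow: str, allowed_tools) -> AgentRecord:
        record = AgentRecord(agent_id, workflow, frozenset(allowed_tools))
        self._agents[agent_id] = record
        return record

    def revoke(self, agent_id: str) -> None:
        self._agents[agent_id].active = False

    def authorize(self, agent_id: str, tool: str) -> bool:
        record = self._agents.get(agent_id)
        # Unregistered or revoked agents get nothing; others get only listed tools.
        allowed = record is not None and record.active and tool in record.allowed_tools
        if record is not None:
            record.access_log.append(f"{tool}:{'granted' if allowed else 'denied'}")
        return allowed
```

For example, after `registry.create("refund-bot", "refunds", {"crm.read"})`, a call to `registry.authorize("refund-bot", "payments.write")` returns `False` and leaves a denied entry in the access log.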

Law 7 — Intent must bind behavior

If design intent cannot be enforced, autonomy cannot scale.

Most enterprises fail because what they intended is not what runs.

The solution is not more prompts—it is a contract.

What this law requires

- A versioned execution contract per workflow/agent

- Explicit constraints, allowed actions, and escalation rules

- Testable acceptance criteria for behavior
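
A versioned execution contract can carry its own acceptance criteria as data, so each version is testable before it runs. The sketch below assumes a hypothetical `ExecutionContract` type; the workflow name, thresholds, and actions are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionContract:
    workflow: str
    version: str                  # bump on every change to the rules below
    allowed_actions: frozenset
    max_amount: float             # explicit constraint
    escalate_above: float         # escalation rule
    acceptance_cases: tuple = ()  # triples of (action, amount, expected outcome)

    def evaluate(self, action: str, amount: float) -> str:
        if action not in self.allowed_actions or amount > self.max_amount:
            return "reject"
        if amount > self.escalate_above:
            return "escalate"
        return "proceed"

    def failed_cases(self) -> list:
        # Acceptance criteria are data, so each contract version can be tested.
        return [c for c in self.acceptance_cases if self.evaluate(c[0], c[1]) != c[2]]


contract = ExecutionContract(
    workflow="refunds",
    version="1.2.0",
    allowed_actions=frozenset({"issue_refund"}),
    max_amount=1000.0,
    escalate_above=200.0,
    acceptance_cases=(
        ("issue_refund", 50.0, "proceed"),
        ("issue_refund", 500.0, "escalate"),
        ("close_account", 10.0, "reject"),
    ),
)
```

A deployment pipeline could refuse to promote any contract version for which `failed_cases()` is non-empty, which keeps design intent and running behavior tied to the same artifact.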

Law 8 — Cost is a runtime constraint

In Enterprise AI, spend becomes behavior.

Once AI acts, cost is no longer a billing problem.

It is a behavioral system that must be governed in real time.

What this law requires

- Economic guardrails: token/tool budgets per workflow

- Spend envelopes and stop conditions

- Escalation on cost deviation

- Throttles and degradations under pressure
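
A spend envelope with a stop condition and a degradation threshold might be sketched like this. `SpendEnvelope`, the 80% warning ratio, and the three return values are assumptions chosen for illustration, and the budget is counted in tokens for simplicity.

```python
from dataclasses import dataclass


@dataclass
class SpendEnvelope:
    budget_tokens: int
    warn_ratio: float = 0.8  # share of budget that triggers degradation
    used_tokens: int = 0

    def charge(self, tokens: int) -> str:
        if self.used_tokens + tokens > self.budget_tokens:
            return "stop"     # stop condition: the call is not made or charged
        self.used_tokens += tokens
        if self.used_tokens >= self.warn_ratio * self.budget_tokens:
            return "degrade"  # throttle or use a cheaper path, and escalate
        return "ok"
```

The workflow checks the result of `charge()` before each model or tool call, so cost is enforced while the workflow runs rather than discovered on the invoice.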

Law 9 — Observability is non-negotiable

If you cannot observe it continuously, you cannot operate it safely.

Enterprise AI fails silently over time.

Observability is how you detect drift before drift becomes damage.

What this law requires

- Continuous monitoring of behavior, quality, policy, and cost

- Drift signals (behavior drift, policy drift, economic drift)

- Incident playbooks tied to violated laws
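
A simple drift signal compares a rolling rate against a baseline. The sketch below is one possible shape, assuming a hypothetical `DriftMonitor` that tracks a boolean event such as "this decision was escalated". The baseline, tolerance, and window size are placeholders to be set per workflow.

```python
from collections import deque


class DriftMonitor:
    def __init__(self, baseline_rate: float, tolerance: float, window: int = 100):
        self.baseline_rate = baseline_rate  # e.g. expected escalation rate
        self.tolerance = tolerance          # allowed absolute deviation
        self._events = deque(maxlen=window)

    def record(self, flagged: bool) -> None:
        self._events.append(flagged)

    def drifted(self) -> bool:
        if len(self._events) < self._events.maxlen:
            return False  # not enough observations to judge yet
        rate = sum(self._events) / len(self._events)
        return abs(rate - self.baseline_rate) > self.tolerance
```

The same structure can track policy denials or cost per decision. What matters is that each signal has a baseline, a tolerance, and an owner who responds when `drifted()` turns true.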

Related foundations:

- https://www.raktimsingh.com/enterprise-ai-drift-alignment-fabric/

- https://www.raktimsingh.com/autonomy-sre-stack-enterprise-ai-runtime/

Law 10 — Scale must follow maturity

You do not “roll out” Enterprise AI. You earn it.

Autonomy should expand only when controls are proven at smaller scope.

Scale without maturity creates the illusion of progress—and the certainty of failure.

What this law requires

- A maturity progression from pilots → embedded workflows → governed autonomy

- Promotion rules: what evidence is required to scale scope/permissions

- Institutional readiness for change velocity
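
Promotion rules can be written as an explicit function of evidence. In this sketch the level names follow the progression above, while the `Evidence` fields and the four-week threshold are hypothetical examples rather than recommended values.

```python
from dataclasses import dataclass

LEVELS = ("pilot", "embedded_workflow", "governed_autonomy")


@dataclass(frozen=True)
class Evidence:
    weeks_in_production: int
    policy_violations: int
    rollback_tested: bool


def next_level(current: str, evidence: Evidence, min_weeks: int = 4) -> str:
    earned = (
        evidence.weeks_in_production >= min_weeks
        and evidence.policy_violations == 0
        and evidence.rollback_tested
    )
    index = LEVELS.index(current)
    if earned and index < len(LEVELS) - 1:
        return LEVELS[index + 1]
    return current  # scope stays where it is until the evidence exists
```

Encoding the rule this way makes a scaling decision reviewable: the inputs that justified a promotion are recorded rather than argued.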

The simplest doctrine

Enterprise AI advantage is not having more AI.

It is having governable decisions.

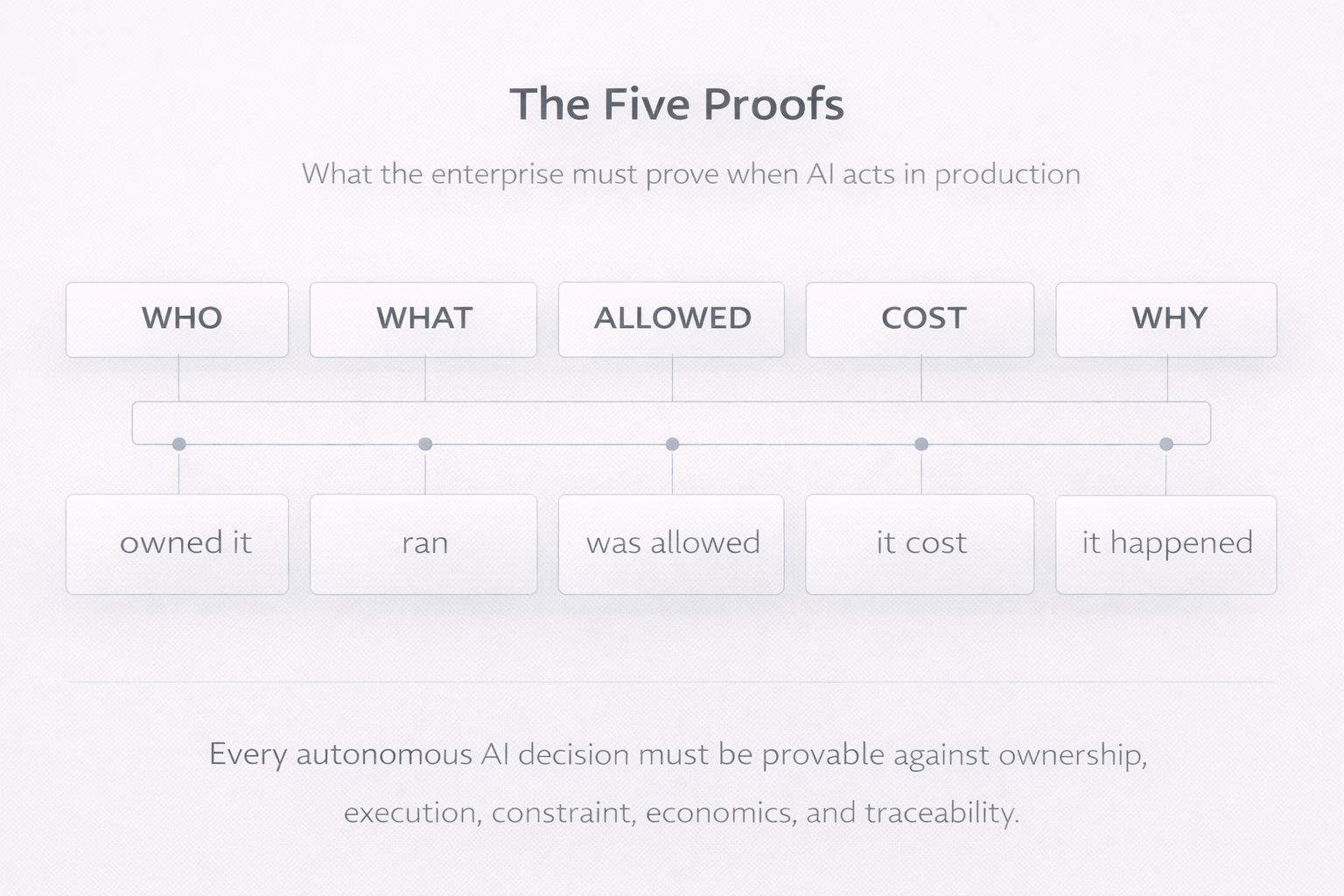

When AI acts in production, the enterprise must be able to prove five things at all times:

who owned it, what ran, what was allowed, what it cost, and why it happened.

That is the operating definition of safe, scalable autonomy.

How these laws relate to the Minimum Viable Enterprise AI System

The Minimum Viable Enterprise AI System (MVES) is the smallest practical implementation of these laws.

If you want the system blueprint that maps directly to these laws, start here:

https://www.raktimsingh.com/minimum-viable-enterprise-ai-system/

And for the integrated architecture view:

https://www.raktimsingh.com/the-enterprise-ai-operating-stack-how-control-runtime-economics-and-governance-fit-together/

Final declaration

These are not aspirational principles. They are production constraints.

If you violate them, your AI estate will eventually become:

- unowned,

- unauditable,

- economically unstable,

- and operationally unsafe.

If you enforce them, Enterprise AI becomes what it was always supposed to be:

a scalable system for running intelligence safely inside real enterprise workflows.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.