The Representation Access Economy:

A board-level guide to machine-readable trust, digital identity, structured data, verifiable credentials, and the new economic logic of AI visibility

For years, the AI conversation has been dominated by models.

Which model is larger?

Which model is cheaper?

Which model reasons better?

Which model can write code, summarize documents, or automate workflows?

These are important questions. But they are no longer the deepest ones.

A bigger shift is underway. It is quieter, more structural, and far more consequential. As AI moves from generating content to searching, comparing, verifying, deciding, and acting, the true source of advantage is shifting away from intelligence alone and toward something more foundational:

Representation.

In the AI era, it is not enough to be real.

It is not enough to be good.

It is not even enough to be efficient.

You must be representable.

That means your products, processes, credentials, policies, counterparties, assets, and actions must exist in forms that machines can discover, interpret, verify, trust, and use. This is the beginning of what I call the Representation Access Economy.

The Representation Access Economy is the emerging layer of the AI economy in which participation depends on whether an entity can be converted into machine-usable form. Some firms, workers, products, suppliers, and institutions will be richly represented. Others will remain only partially visible. Some will become effectively invisible to machine-mediated systems altogether.

That difference will matter more than most leaders realize.

Because in the AI world, access does not begin when a human notices you.

Access begins when a machine can reliably see you.

That is why the next strategic divide will not simply be between companies that use AI and companies that do not. It will be between those that are legible to AI systems and those that are not.

That divide will shape discoverability, trust, financing, procurement, compliance, customer experience, ecosystem participation, and, eventually, market power.

The shift most leaders are still underestimating

Search engines have already given us an early preview of this future. Google says it uses structured data markup to understand page content and make pages eligible for richer search appearances. For product pages, that can include price, availability, ratings, and shipping information directly in search results. (Google for Developers)

That may sound like a technical SEO detail. It is not. It is an economic signal.

A product page is no longer just a page for a human reader. It is also a representation layer for a machine. If the machine understands the product well, the product can travel farther, appear faster, and be selected more often. If the machine does not understand it well, the product may still exist, but it enters the market from a weaker position.
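To make the "representation layer" concrete, here is a minimal Python sketch that builds schema.org Product markup as JSON-LD, the kind of structured data that search documentation describes for product results. The product name, SKU, price, and rating are invented for illustration, and the helper function is hypothetical; only the schema.org type and property names come from the public vocabulary.

```python
import json


def product_jsonld(name, sku, price, currency, in_stock, rating, review_count):
    """Build a schema.org Product description as a JSON-LD dictionary."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }


# Hypothetical product, used only for illustration.
markup = product_jsonld("Steel hinge, 75 mm", "HINGE-75", 4.5, "EUR", True, 4.6, 128)
print(json.dumps(markup, indent=2))
```

On a real page, this JSON would typically sit inside a `<script type="application/ld+json">` element, so the same page serves the human reader and the machine at once.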

The same pattern is spreading well beyond search. W3C’s Verifiable Credentials standard is designed to express claims in ways that are cryptographically secure, privacy-respecting, and machine-verifiable. The European Union’s Digital Product Passport effort is intended to make key product information available across value chains to consumers, businesses, and public authorities. GS1 Digital Link connects product identifiers to up-to-date web-based information for traceability, safety, and commerce. And in February 2026, NIST launched its AI Agent Standards Initiative to support secure, interoperable agents that can act on behalf of users with confidence. (W3C)

Seen separately, these look like technical developments. Seen together, they reveal a larger economic transition:

the world is being rebuilt so machines can participate in it.

That changes strategy.

In the old economy, access depended heavily on human awareness, distribution, relationships, sales effort, and brand recognition.

In the Representation Access Economy, access increasingly depends on whether machine systems can answer basic questions such as:

The seven machine questions

- What is this?

- Who issued it?

- Is it authentic?

- What state is it in right now?

- Can it be trusted?

- Can action be taken on it?

- Who is accountable if something goes wrong?

If those questions cannot be answered cleanly, many AI systems will hesitate, downgrade, route elsewhere, or refuse to act.
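One way to turn the seven questions into a working checklist is to map each one to a field in an entity record and report which questions remain unanswered. The sketch below is illustrative only: the field names and the sample record are assumptions, not part of any standard.

```python
from dataclasses import dataclass
from typing import List, Optional

# Each machine question mapped to the (hypothetical) record field that answers it.
QUESTIONS = {
    "What is this?": "entity_type",
    "Who issued it?": "issuer",
    "Is it authentic?": "proof",
    "What state is it in right now?": "current_state",
    "Can it be trusted?": "trust_evidence",
    "Can action be taken on it?": "action_endpoint",
    "Who is accountable if something goes wrong?": "accountable_party",
}


@dataclass
class EntityRecord:
    entity_type: Optional[str] = None
    issuer: Optional[str] = None
    proof: Optional[str] = None
    current_state: Optional[str] = None
    trust_evidence: Optional[str] = None
    action_endpoint: Optional[str] = None
    accountable_party: Optional[str] = None


def unanswered(record: EntityRecord) -> List[str]:
    """Return the machine questions this record cannot answer."""
    return [q for q, attr in QUESTIONS.items() if not getattr(record, attr)]


# Hypothetical record: identified and in stock, but with no proof or accountability.
record = EntityRecord(entity_type="Product", issuer="Acme Components",
                      current_state="in_stock")
gaps = unanswered(record)
print(f"{len(gaps)} of {len(QUESTIONS)} questions unanswered")
for question in gaps:
    print(" -", question)
```

A record like this one would be a candidate for the hesitation or downgrading described above: the machine knows what the thing is, but not whether it is authentic or who stands behind it.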

What the Representation Access Economy really means

The simplest way to understand this idea is to separate two layers that are often confused.

- Representation rights

This is the question of who gets to be visible, recognized, and modeled in machine-usable form.

- Representation conversion

This is the process of translating messy reality into forms machines can actually use.

Put differently:

Representation rights ask: Who gets to enter the system?

Representation conversion asks: How do they enter the system in usable form?

Together, these two forces create the Representation Access Economy.

This matters because reality is naturally messy.

A small supplier may be reliable, but its inventory data may live across email, spreadsheets, paper invoices, and phone calls. A farmer may produce high-quality output, but not have machine-readable records for provenance, sustainability, or financing. A worker may have real skills, but lack credentials that systems can verify instantly. A product may be genuine, but its identity may not be linked to standardized digital evidence about source, composition, safety, repairability, or ownership.

In all of these cases, the problem is not necessarily a lack of value.

The problem is a lack of machine-usable representation.

That is the hidden bottleneck many firms still mistake for a technology problem. It is not only a technology problem. It is an access problem.

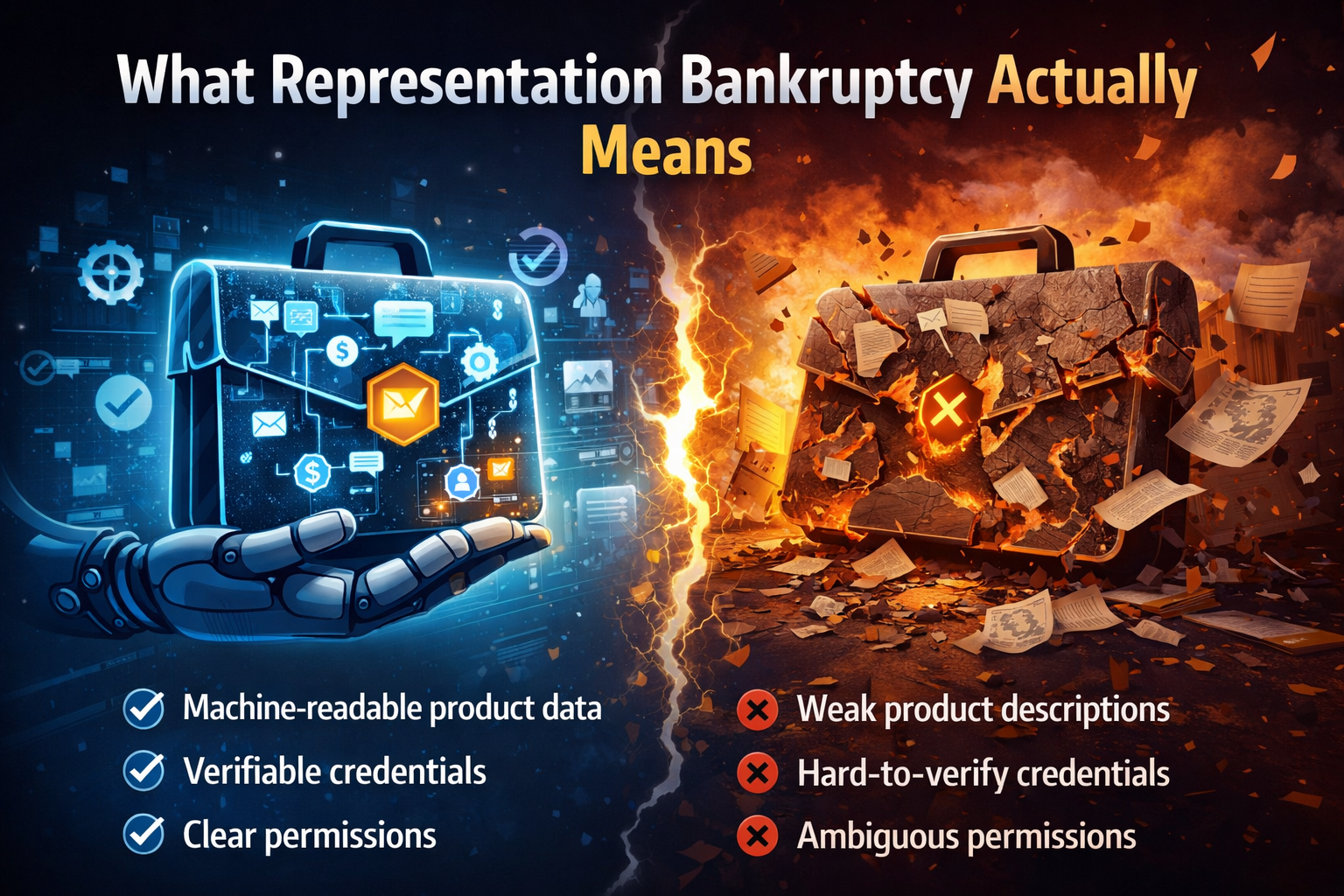

A simple example: the invisible supplier

Imagine two component suppliers.

The first has average products but excellent representation. Its catalog is structured. Product identifiers are standardized. Inventory is exposed through APIs. Certifications are current and machine-verifiable. Shipping status is updated digitally. Quality metrics are tagged consistently. Procurement systems can compare it quickly. AI sourcing agents can understand it.

The second supplier may actually produce better components. But its product data is inconsistent. Certifications live in PDFs. Inventory is updated manually. Traceability is partial. Sustainability claims are hard to verify. Delivery history is not encoded in usable ways.

In a human-led market, both suppliers may still compete if a procurement manager takes the time to investigate.

In a machine-mediated market, the first supplier gets seen first, understood first, and often selected first.

This is not because the first supplier is inherently better.

It is because the first supplier is easier for machines to trust.

That is representation access.
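The two-supplier story can be sketched as a toy sourcing agent. The assumption here, made loudly, is that the agent can only score what it can read: real quality is stored in the record but never consulted, because nothing machine-readable exposes it. All names, fields, and thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Supplier:
    name: str
    true_quality: float            # real-world quality, invisible to the agent
    structured_catalog: bool
    inventory_api: bool
    verifiable_certs: bool
    digital_delivery_history: bool


def legibility_score(s: Supplier) -> int:
    """Count the representation signals an agent can actually read."""
    return sum([s.structured_catalog, s.inventory_api,
                s.verifiable_certs, s.digital_delivery_history])


def shortlist(suppliers, min_score=3):
    """Keep suppliers the agent can evaluate, ranked by legibility alone."""
    eligible = [s for s in suppliers if legibility_score(s) >= min_score]
    return sorted(eligible, key=legibility_score, reverse=True)


# The first supplier is average but well represented; the second is better but opaque.
first = Supplier("Supplier A", 0.6, True, True, True, True)
second = Supplier("Supplier B", 0.9, False, False, False, False)

print([s.name for s in shortlist([first, second])])  # → ['Supplier A']
```

Supplier B never reaches the comparison stage. A human buyer might have discovered its higher quality; the agent in this sketch has no field through which that quality could arrive.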

Another example: the skilled worker with weak digital legibility

Now think about labor.

One worker has strong real-world capability but only informal evidence: references buried in email, project work scattered across platforms, unverified certificates, and no structured skills graph.

Another worker has the same, or even slightly lower, real capability but has machine-readable credentials, portable digital identity, verified work history, structured portfolio signals, and interoperable proof of training.

As hiring systems, matching systems, and agentic recruiting tools become more common, the second worker may be surfaced more often and assessed with less friction. OECD has emphasized that trusted, portable digital identity can support inclusion and simplify access to digital services and participation. (OECD)

Again, the issue is not human worth.

It is machine visibility.
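For readers who want to see what a "machine-readable credential" might look like, the sketch below builds a credential shaped after the W3C Verifiable Credentials Data Model 2.0 and runs basic structural checks on it. The issuer URL, the subject identifier, the `TrainingCredential` type, and the `completedCourse` property are invented for illustration. The check covers shape and validity dates only; it is not cryptographic verification, which a real verifier would also need to perform.

```python
from datetime import datetime, timezone

# Illustrative credential; the issuer, subject, and course are invented.
credential = {
    "@context": ["https://www.w3.org/ns/credentials/v2"],
    "type": ["VerifiableCredential", "TrainingCredential"],
    "issuer": "https://training.example/issuers/42",
    "validFrom": "2025-01-15T00:00:00Z",
    "validUntil": "2027-01-15T00:00:00Z",
    "credentialSubject": {
        "id": "did:example:worker-123",
        "completedCourse": "Industrial Welding Level 2",
    },
}

# Properties this sketch treats as mandatory (an assumption based on the 2.0 data model).
REQUIRED = ("@context", "type", "issuer", "credentialSubject")


def parse_time(value: str) -> datetime:
    """Parse an ISO 8601 timestamp that ends in 'Z' into an aware datetime."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def structural_problems(vc: dict, now: datetime) -> list:
    """Check shape and validity window only; this does NOT verify a signature."""
    problems = [f"missing {key}" for key in REQUIRED if key not in vc]
    if "VerifiableCredential" not in vc.get("type", []):
        problems.append("type must include VerifiableCredential")
    if "validFrom" in vc and now < parse_time(vc["validFrom"]):
        problems.append("not yet valid")
    if "validUntil" in vc and now > parse_time(vc["validUntil"]):
        problems.append("expired")
    return problems


check_time = datetime(2026, 6, 1, tzinfo=timezone.utc)
print(structural_problems(credential, check_time))  # → []
```

The point for the worker example is that a hiring system can run a check like this in milliseconds, whereas a certificate scanned into an email attachment requires a person to read it.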

The deeper danger: exclusion without anyone explicitly excluding you

This is what makes the Representation Access Economy so important.

Many actors will not be excluded by law.

They will be excluded by format.

No one will send a dramatic rejection letter. No one will formally announce that a firm, a worker, a product, or a supplier has been denied participation. Instead, they will simply be:

- ranked lower

- surfaced later

- trusted less

- financed more cautiously

- routed around

- asked for more proof

- given slower decisions

- excluded from automated workflows

Over time, that becomes economic disadvantage.

This is why the Representation Access Economy is not a side issue. It is becoming a primary strategic question.

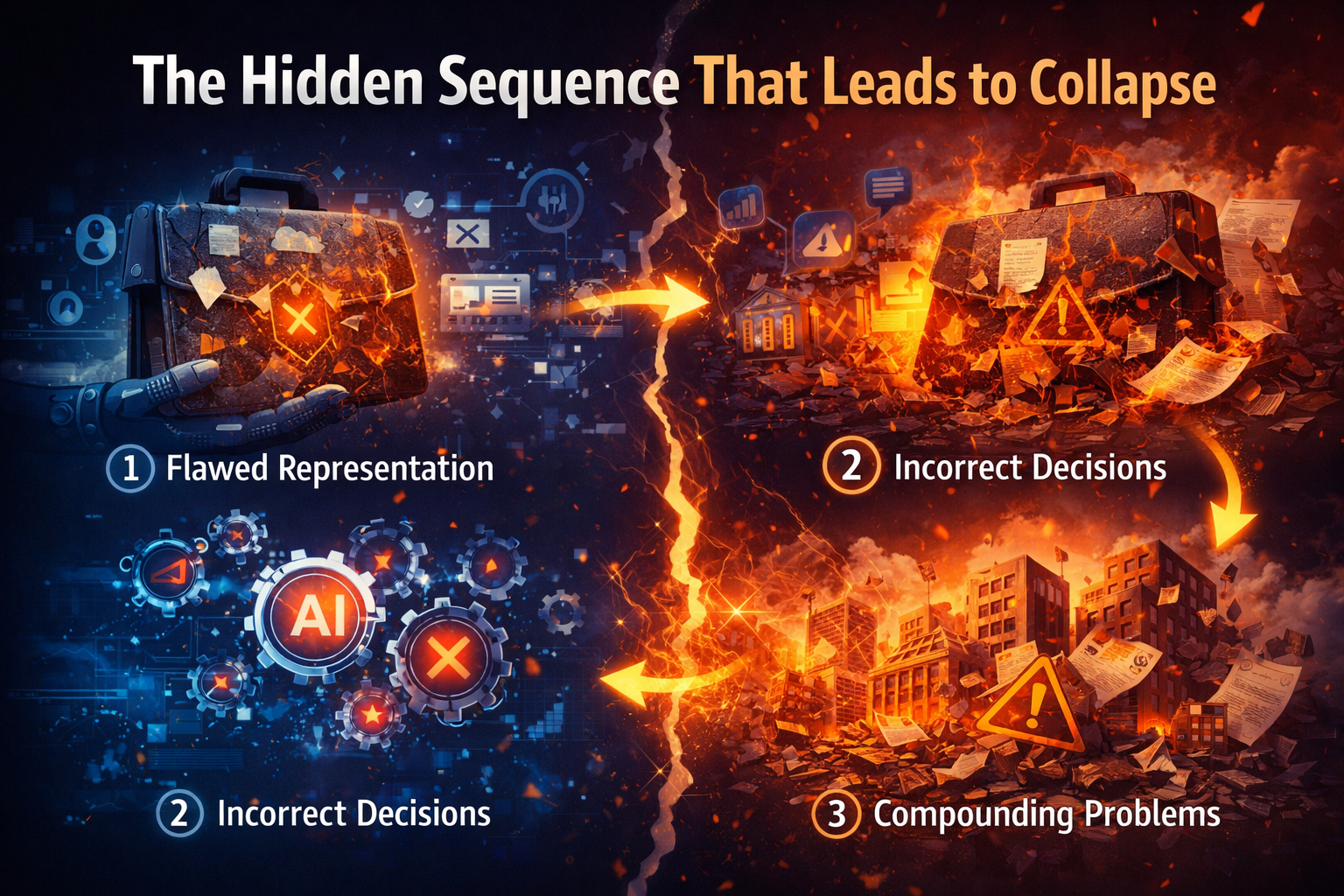

Why AI makes this harsher, not softer

Many people assume AI will automatically reduce friction for everyone. In some cases, it will. But AI can also make representation gaps harsher.

Why?

Because AI scales decision-making. It allows systems to process more entities, more signals, and more possible actions at lower cost. That sounds democratizing. But in practice, scaled decision-making tends to favor entities that are easier to model, compare, verify, and act upon.

The machine does not begin with empathy.

It begins with available structure.

This means AI often rewards what is already legible.

That is one reason standards are becoming so important. Structured content helps search systems. Verifiable credentials help trust systems. Product passport efforts help traceability and regulatory visibility. AI agent standards increasingly focus on identity, security, authorization, and interoperability. (Google for Developers)

The more autonomous systems become, the more costly weak representation becomes.

The SENSE–CORE–DRIVER lens

This is where my SENSE–CORE–DRIVER framework matters.

Most organizations still think of AI mainly as a CORE problem: models, reasoning, recommendations, predictions, and automation.

That is incomplete.

SENSE: where reality becomes machine-legible

SENSE is the legibility layer. It is where reality first becomes visible to machines.

It includes:

Signal

What traces are being captured?

ENtity

Which person, product, asset, organization, document, or object do those signals belong to?

State representation

How is the current condition represented?

Evolution

How is that state updated over time?

In the Representation Access Economy, SENSE determines who enters machine visibility in the first place.

If your signals are weak, your entities are fragmented, your state is unclear, or your updates are stale, the machine does not have a stable basis for trust.

This is why the Representation Access Economy begins before model selection. It begins with whether reality has been structured well enough to be sensed and represented.
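The four SENSE elements can be read as a small data pipeline: signals are captured, attached to an entity, folded into a current state, and updated over time. The following is a minimal sketch under that reading; the class names, attributes, and the 30-day freshness threshold are assumptions chosen for illustration, not a reference implementation of the framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Signal:
    """Signal: one captured trace, already tagged with the ENtity it belongs to."""
    entity_id: str
    attribute: str
    value: object
    observed_at: datetime


@dataclass
class EntityState:
    """State representation: the latest known value of each attribute."""
    entity_id: str
    values: dict = field(default_factory=dict)
    updated_at: dict = field(default_factory=dict)

    def apply(self, signal: Signal) -> None:
        """Evolution: accept a signal only if it is newer than what we hold."""
        last = self.updated_at.get(signal.attribute)
        if last is None or signal.observed_at > last:
            self.values[signal.attribute] = signal.value
            self.updated_at[signal.attribute] = signal.observed_at

    def stale(self, now: datetime, max_age: timedelta) -> list:
        """Attributes whose last update is older than max_age."""
        return sorted(a for a, t in self.updated_at.items() if now - t > max_age)


def build_states(signals):
    """Group signals by entity and fold them into one state per entity."""
    states = {}
    for s in signals:
        states.setdefault(s.entity_id, EntityState(s.entity_id)).apply(s)
    return states


t0 = datetime(2026, 3, 1, 9, 0)
signals = [
    Signal("supplier-7", "inventory", 120, t0),
    Signal("supplier-7", "inventory", 80, t0 + timedelta(hours=5)),
    Signal("supplier-7", "iso9001_valid", True, t0 - timedelta(days=40)),
]
state = build_states(signals)["supplier-7"]
print(state.values)
print(state.stale(now=t0 + timedelta(hours=6), max_age=timedelta(days=30)))
```

In this example the inventory figure is fresh, but the certification flag was last confirmed 40 days ago, so it is reported as stale. That is the "updates are stale" failure mode in miniature.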

CORE: where systems interpret and decide

CORE is the cognition layer.

It includes:

Comprehend context

Optimize decisions

Realize action

Evolve through feedback

This is the layer most of the market calls “AI.”

But CORE can only reason over what SENSE has made available. A brilliant system operating over weak representation can still produce weak outcomes. In many failed AI projects, the model is blamed for what is actually a representation problem.

If the machine does not understand the supplier, the asset, the worker, the customer, or the document correctly, the intelligence layer cannot fully compensate.

DRIVER: where authority and legitimacy matter

DRIVER is the governance and execution layer.

It includes:

Delegation

Who authorized the action?

Representation

What model of reality was used?

Identity

Which entity was affected?

Verification

How is the decision checked?

Execution

How is the action carried out?

Recourse

What happens if the system is wrong?

This matters because access is not only about being seen. It is also about being acted upon fairly and accountably.

As agents begin acting on behalf of users and institutions, identity and authorization become central design questions, which is exactly why NIST’s 2026 initiative emphasizes secure, interoperable agents acting on behalf of users. (NIST)

So the Representation Access Economy is not just a SENSE issue. It is also a DRIVER issue. It concerns not only visibility, but legitimate participation.
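One way to make the six DRIVER questions operational is to require that every agent action carries a record answering each of them before it may run. The sketch below assumes a simple "all six or nothing" gate; the record fields mirror the DRIVER elements, while the sample values and the gate policy are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActionRecord:
    delegation: Optional[str] = None      # who authorized the action
    representation: Optional[str] = None  # which model of reality was used
    identity: Optional[str] = None        # which entity is affected
    verification: Optional[str] = None    # how the decision is checked
    execution: Optional[str] = None       # how the action is carried out
    recourse: Optional[str] = None        # what happens if the system is wrong


def may_execute(record: ActionRecord):
    """Allow execution only when every DRIVER element is documented."""
    missing = [name for name, value in vars(record).items() if not value]
    return (not missing, missing)


# Hypothetical purchase order prepared by a sourcing agent, with no recourse path.
order = ActionRecord(
    delegation="procurement-lead@buyer.example",
    representation="supplier-7 state snapshot, 2026-03-01 14:00 UTC",
    identity="supplier-7",
    verification="price within approved band",
    execution="purchase-order API",
)
allowed, missing = may_execute(order)
print(allowed, missing)  # → False ['recourse']
```

The action is blocked not because the decision is wrong, but because nobody has said what happens if it is. That is the difference between visibility and legitimate participation.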

What new kinds of companies will emerge

This is where the market opportunity becomes huge.

A new class of firms will emerge not primarily to build the smartest model, but to expand representation access.

- Reality translators

These companies will convert messy offline or fragmented digital reality into machine-usable representation.

Examples include platforms that turn small-business inventories into structured commerce data, tools that convert fragmented supplier records into procurement-grade identity, or systems that transform paper-heavy compliance flows into machine-verifiable state.

- Representation onboarding layers

These firms will help smaller actors become visible to AI-mediated ecosystems.

Imagine services that onboard small manufacturers into machine-readable supply chains, convert informal workers into verifiable skill graphs, or help niche products become legible to search, marketplaces, and AI shopping agents.

- Trust packaging firms

These firms will not just organize information. They will package it into forms that machines can trust.

That could include credentialing networks, provenance services, policy formatting platforms, product authenticity layers, or verifiable evidence services.

- State infrastructure companies

These businesses will manage current status, not just static identity.

For many use cases, what matters is not only who you are, but what state you are in right now. Inventory available or unavailable. License active or expired. Product recalled or not. Consent granted or revoked. Asset healthy or degraded.

The companies that manage high-quality state representation will become critical infrastructure.

- Representation fiduciaries

Some of the most powerful new firms may act on behalf of entities that cannot easily represent themselves. Small suppliers, local producers, vulnerable consumers, physical assets, ecosystems, and informal workers may all need trusted intermediaries that represent their interests accurately in machine-mediated markets.

That may become one of the defining new company categories of the AI era.
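Of the five categories above, state infrastructure is perhaps the easiest to sketch in code. The core capability is answering "what state was this in at a given moment?" for things like consent, licenses, or recalls. The example below keeps an append-only log of status changes and looks up the status in force at any point in time. The `StateLog` class and the consent example are hypothetical, and a production system would also need identity, access control, and durable storage.

```python
from bisect import bisect_right
from datetime import datetime


class StateLog:
    """Append-only history of status changes for one attribute of one entity."""

    def __init__(self):
        self._times = []
        self._statuses = []

    def record(self, at: datetime, status: str) -> None:
        """Append a status change; events must arrive in time order."""
        if self._times and at < self._times[-1]:
            raise ValueError("events must be recorded in time order")
        self._times.append(at)
        self._statuses.append(status)

    def status_at(self, when: datetime):
        """Status in force at `when`, or None if nothing was known yet."""
        index = bisect_right(self._times, when)
        return self._statuses[index - 1] if index else None


# Hypothetical consent history: granted in January, revoked in February.
consent = StateLog()
consent.record(datetime(2026, 1, 10), "granted")
consent.record(datetime(2026, 2, 20), "revoked")

print(consent.status_at(datetime(2026, 2, 1)))   # → granted
print(consent.status_at(datetime(2026, 3, 1)))   # → revoked
print(consent.status_at(datetime(2026, 1, 1)))   # → None
```

Keeping the history rather than overwriting the current value is a deliberate choice in this sketch: it lets an auditor later ask what an agent could have known at the moment it acted.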

Why this matters for existing companies right now

Many incumbents still hear ideas like this and assume the issue is futuristic.

It is not.

The Representation Access Economy is already affecting search, commerce, identity, compliance, traceability, and digital trust. Google’s structured data ecosystem, W3C’s verifiable credentials, GS1’s web-linked identifiers, European product passport efforts, and emerging AI agent standards all point in the same direction: machine-readable representation is becoming a core layer of participation. (Google for Developers)

Existing companies should ask five uncomfortable questions.

Five board-level questions

- Can machines reliably discover us?

- Can machines understand what we sell, what we certify, what state we are in, and how trustworthy we are?

- Can agents transact with us without excessive manual intervention?

- Do our suppliers, partners, and customers have enough representation access to fully participate in our ecosystem?

- If AI-mediated markets become the norm, are we easy to select, or easy to skip?

These are no longer niche digital questions. They are competitive questions.

A better sequence for AI strategy

Most firms still approach AI strategy like this:

- choose tools

- run pilots

- automate tasks

- deploy assistants or agents

A stronger sequence is this:

- improve representation

- strengthen state visibility

- standardize trust signals

- clarify delegation and recourse

- then scale intelligence and automation

Why this order?

Because access comes before optimization.

There is little value in building a highly capable AI layer on top of fragmented, weakly represented reality. That usually produces fragile systems, weak trust, and disappointing adoption.

The global dimension

The Representation Access Economy also has a geopolitical and developmental dimension.

UNCTAD has warned that the digital economy remains unevenly distributed. In 2024, digitally deliverable services accounted for more than 60% of total services exports in advanced economies, 44% in developing economies, and only 15% in least developed countries. UNCTAD also points to global concentration and the dominance of a handful of firms in digital markets. (UN Trade and Development (UNCTAD))

This matters because representation access can become a new form of inequality.

Some countries, ecosystems, and firms will have dense digital identity, strong standards, interoperable records, modern APIs, traceable supply chains, and machine-usable credentials.

Others will remain only partially legible.

If that gap grows, AI will not simply automate existing markets. It will reorganize them around who can be represented well.

That is why the Representation Access Economy should matter not only to companies, but also to policymakers, standards bodies, trade ecosystems, and institutional designers.

The biggest misunderstanding to avoid

The biggest misunderstanding is to think this argument is saying everything must be reduced to data.

That is not the point.

The point is that in a machine-mediated economy, some form of representation becomes unavoidable. The strategic question is whether representation becomes narrow, extractive, inaccurate, and controlled by a few actors, or broad, trustworthy, portable, and aligned with the interests of the entities it represents.

The issue is not whether representation will matter.

It already does.

The issue is who will design it, who will control it, and who will gain access through it.

What leaders should do now

Leaders should stop asking only, “How do we use AI?”

A better question is this:

What would it take for our reality to become easily discoverable, understandable, verifiable, and actionable by trustworthy machines?

That question leads to better strategy.

It shifts attention from shiny applications to structural readiness.

It clarifies why many AI projects stall.

It reveals new market opportunities.

And it helps leaders see why the next economic battle will not be won by intelligence alone.

It will be won by those who make reality usable.

Key Insight Summary

- AI does not only reward intelligence; it rewards representation

- If machines cannot see, structure, and verify you, you risk being excluded

- The new economy is not just digital; it is machine-legible

- Trust is shifting from human judgment to machine-verifiable signals

Conclusion

The Representation Access Economy is the emerging layer of the AI era in which participation depends on machine-readable presence.

Some actors will enjoy rich access. They will be visible, structured, trusted, and actionable. Others will struggle to enter machine-mediated flows, not because they lack value, but because they lack representation.

That is why this shift matters so much.

In the AI economy, competition will not be shaped only by who has the best model. It will be shaped by who gets represented, who gets converted into machine-usable form, and who is trusted enough for systems to act upon.

That is the deeper strategic contest now unfolding.

The winners will not simply be the firms with more intelligence.

They will be the firms, platforms, and institutions that expand representation access — for themselves, for their ecosystems, and for the parts of reality that markets have historically overlooked.

Because in the AI era, value does not begin only when something is created.

It increasingly begins when something can be seen.

Glossary

Representation Access Economy

The emerging economic layer in which participation depends on whether entities are visible, structured, verifiable, and usable by machines.

Representation Economics

A broader framework for understanding how machine-readable visibility, trust, and action reshape value creation, market access, and competitive advantage.

Machine legibility

The degree to which a machine can recognize, interpret, compare, and act upon an entity or signal.

Representation rights

The question of who gets to be visible and recognized in machine-usable form.

Representation conversion

The process of turning messy, fragmented, offline, or unstructured reality into machine-usable representation.

Structured data

A standardized way of marking up information so machines can understand page content and entities more easily.

Verifiable credentials

Digitally expressed claims that are designed to be cryptographically secure, privacy-respecting, and machine-verifiable.

Digital Product Passport

A digital record intended to store and share important product information across its lifecycle and value chain.

GS1 Digital Link

A way of connecting product identifiers to web-based information for commerce, safety, and traceability.

Machine-readable trust

Trust that can be established through standardized, interoperable, and verifiable signals rather than only through human judgment.

SENSE

The legibility layer where reality becomes machine-readable through signal capture, entity association, state representation, and temporal updating.

CORE

The cognition layer where systems comprehend context, optimize decisions, realize action, and evolve through feedback.

DRIVER

The governance and execution layer that handles delegation, representation, identity, verification, execution, and recourse.

Representation fiduciary

A possible new company type that acts on behalf of entities that cannot easily represent themselves in machine-mediated markets.

Machine-Readable Reality

Information formatted in a way that AI systems can interpret, compare, and act upon.

AI Trust Layer

The infrastructure through which machines verify identity, credibility, and reliability.

Invisible Supplier Problem

When a business exists operationally but is not recognized by AI systems due to lack of structured representation.

Machine Visibility

The ability of an entity to be discovered and processed by AI systems.

FAQ

What is the Representation Access Economy?

It is the part of the AI economy where participation increasingly depends on whether an entity can be represented in forms that machines can discover, understand, verify, trust, and act upon.

Why is this different from traditional digital transformation?

Traditional digital transformation often focused on process efficiency and channel digitization. The Representation Access Economy focuses on whether reality itself is encoded in forms machines can use for search, comparison, decision-making, and action.

Why does this matter to boards and C-suite leaders?

Because market access, discoverability, procurement, compliance, financing, and ecosystem participation are increasingly influenced by machine-mediated systems. Firms that are poorly represented may be skipped before human judgment even begins.

Is this mainly about SEO?

No. SEO is only the earliest visible example. The larger shift includes digital identity, verifiable credentials, product passports, provenance, trust infrastructure, AI agents, and machine-mediated commerce.

What is the biggest risk for incumbents?

The biggest risk is not merely slow AI adoption. It is becoming hard for machines to discover, trust, compare, and transact with the firm.

What kinds of companies will emerge because of this shift?

Likely categories include reality translators, representation onboarding platforms, trust packaging firms, state infrastructure companies, and representation fiduciaries.

How does SENSE–CORE–DRIVER relate to this article?

SENSE explains how reality becomes visible to machines. CORE explains how machines reason over that visibility. DRIVER explains how decisions and actions remain legitimate, accountable, and executable.

What should companies do first?

Before scaling AI pilots, they should improve entity clarity, state visibility, trust signals, structured representation, and governance for machine action.

Q1. What is the Representation Access Economy?

The Representation Access Economy is the emerging layer of the AI economy in which participation depends on how well entities are represented in machine-readable formats that AI systems can trust and act upon.

Q2. Why is AI changing market access?

AI systems increasingly act as decision-makers. If they cannot interpret or trust your data, you are excluded from recommendations, transactions, and opportunities.

Q3. What does “being invisible to AI” mean?

It means your business, product, or service is not structured or verifiable enough for AI systems to recognize, rank, or recommend.

Q4. How can companies adapt?

By investing in structured data, verifiable credentials, digital identity, and machine-readable systems.

Q5. What industries will emerge from this shift?

New categories include representation platforms, trust infrastructure providers, AI intermediaries, and machine-verification networks.

References and further reading

For this article’s factual foundations, the strongest external references are:

- Google Search documentation on structured data and product markup. (Google for Developers)

- W3C Verifiable Credentials Data Model 2.0. (W3C)

- European Commission and EU materials on Digital Product Passports. (Internal Market & Industry)

- GS1 material on Digital Link. (GS1)

- NIST AI Agent Standards Initiative. (NIST)

- OECD work on trusted and portable digital identity. (OECD)

- UNCTAD data on digital inequality and concentration in digitally deliverable services. (UN Trade and Development (UNCTAD))

Institutional Perspectives on Enterprise AI

Many of the structural ideas discussed here — intelligence-native operating models, control planes, decision integrity, and accountable autonomy — have also been explored in my institutional perspectives published via Infosys’ Emerging Technology Solutions platform.

For readers seeking deeper operational detail, I have written extensively on:

- What Makes an Enterprise Intelligence-Native? The Blueprint for Third-Order AI Advantage

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/what-is-enterprise-ai-the-operating-model-for-compounding-institutional-intelligence.html

- Why “AI in the Enterprise” Is Not Enterprise AI: The Operating Model Difference Most Organizations Miss

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/why-ai-in-the-enterprise-is-not-enterprise-ai-the-operating-model-difference-that-most-organizations-miss.html

- The Enterprise AI Control Plane: Governing Autonomy at Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-enterprise-ai-control-plane-governing-autonomy-at-scale.html

- Enterprise AI Ownership Framework: Who Is Accountable, Who Decides, and Who Stops AI in Production

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/enterprise-ai-ownership-framework-who-is-accountable-who-decides-and-who-stops-ai-in-production.html

- Decision Integrity: Why Model Accuracy Is Not Enough in Enterprise AI

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/decision-integrity-why-model-accuracy-is-not-enough-in-enterprise-ai.html

- Agent Incident Response Playbook: Operating Autonomous AI Systems Safely at Enterprise Scale

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/agent-incident-response-playbook-operating-autonomous-ai-systems-safely-at-enterprise-scale.html

- The Economics of Enterprise AI: Designing Cost, Control, and Value as One System

https://blogs.infosys.com/emerging-technology-solutions/artificial-intelligence/the-economics-of-enterprise-ai-designing-cost-control-and-value-as-one-system.html

Together, these perspectives outline a unified view: Enterprise AI is not a collection of tools. It is a governed operating system for institutional intelligence — where economics, accountability, control, and decision integrity function as a coherent architecture.

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Intelligence Supply Chain: How Organizations Industrialize Cognition in the AI Economy

- The Silent Systems Doctrine: Why the AI Economy Will Be Won by Those Who Represent What Cannot Speak

- Signal Infrastructure: Why the AI Economy Begins Before the Model – Raktim Singh

- The Representation Economy Explained: 51 Questions About the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Debt: Why Institutions Accumulate Hidden AI Risk Long Before Failure Becomes Visible – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Capital: The Invisible Asset That Will Decide Which Institutions Win the AI Economy – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Board’s Representation Strategy: How Intelligent Institutions Decide What Must Be Seen, Modeled, Governed, and Delegated – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Balance Sheet: How AI Is Redefining Assets, Liabilities, and Institutional Strength – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- The Representation Reserve Currency: Why AI Will Trust Only a Few Forms of Reality – Raktim Singh

- The Machine-Readable Boundary of the Firm: How AI Is Redefining What Companies Own, Outsource, and Orchestrate – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- Representation Arbitrage: The New AI Advantage That Will Redefine Who Wins and Who Disappears – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh