Representation Workflows:

In the next phase of AI, the real advantage may come less from smarter models and more from better-maintained reality.

For the last few years, most AI ambition has been organized around models.

Which model is more capable?

Which model is cheaper to run?

Which model reasons better, writes better, predicts better, codes better, or plans better?

Those questions matter. But they are no longer enough.

As AI systems move from demos to operations, a deeper truth is becoming visible: a model is only as useful as the reality it can reliably act upon. NIST’s AI Risk Management Framework treats data, inputs, context, monitoring, and lifecycle evaluation as central to trustworthy AI. The EU AI Act similarly emphasizes data governance and the relevance, representativeness, and quality of data for high-risk AI systems. (NIST Publications)

This is where the next wave begins.

The biggest AI companies may not rebuild operations mainly around model deployment. They may rebuild them around Representation Workflows: the repeatable systems that keep machine-readable reality accurate, current, connected, and usable for decision-making and action.

That may sound abstract. It is not.

If a customer record is outdated, the best model still acts on stale reality.

If inventory status is wrong, the best planning engine still makes poor decisions.

If supplier status is fragmented across systems, the best agent still negotiates from a distorted world.

If permissions, identities, prices, policies, and exceptions are not continuously updated, the smartest enterprise AI still becomes unreliable.

In other words, the future of AI will not be decided only by who deploys intelligence. It will also be decided by who can maintain reality at machine speed. Research on production machine learning systems has long warned that much of their hidden technical debt comes not from model quality but from data dependencies, feedback loops, and the operational mess surrounding the model. (NeurIPS Papers)

What are Representation Workflows?

Representation Workflows are operational systems that continuously maintain machine-readable reality—ensuring that entities, states, relationships, and context remain accurate, current, and usable for AI-driven decisions and actions.

Why model deployment is no longer enough

The first big lesson of enterprise AI was that building a model is not the same as building a system.

That lesson is now well established in MLOps. Production AI requires continuous integration, testing, validation, deployment, and monitoring of not just code, but also data schemas, pipelines, and models. NIST’s AI RMF and the AI RMF Playbook both reinforce that trustworthy AI depends on ongoing governance and lifecycle management rather than one-time launch readiness. (NIST Publications)

But even MLOps, powerful as it is, still tends to frame the problem around maintaining models in production.

The next step is bigger.

The real challenge is not only keeping models fresh. It is keeping the world that the models depend on fresh.

That includes:

- entity identity,

- current state,

- relationships,

- permissions,

- history,

- exceptions,

- event updates,

- and action context.

This is why I call the emerging operational layer Representation Workflows.

These workflows do not mainly exist to improve raw model capability. They exist to maintain the quality of the machine-readable world on which AI depends.

That distinction matters because many executives still think AI value comes primarily from choosing the right model vendor. In practice, competitive advantage often depends more on whether the organization can maintain accurate, timely, governed representations of customers, suppliers, products, claims, assets, policies, and operating conditions. IBM’s framing of data quality and master data management makes this explicit: the value comes from accuracy, completeness, consistency, timeliness, and unified views of core entities across the enterprise. (NIST Publications)

What Representation Workflows actually are

Representation Workflows are the operational processes that continuously keep reality usable for machines.

They include workflows to:

- reconcile conflicting records,

- update entity state,

- match identities across systems,

- validate changing conditions,

- propagate corrections,

- manage feature freshness,

- preserve lineage,

- and synchronize machine-readable views with real-world change.
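To make one of these workflows concrete, here is a minimal Python sketch of reconciling conflicting records while preserving lineage. It assumes a hypothetical `SourceRecord` shape and a simple survivorship policy: for each field, the most recently observed non-null value wins. Real master data management tools support richer rules, so treat this as an illustration rather than a recommended policy.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Dict, List, Tuple


@dataclass
class SourceRecord:
    """One system's view of an entity (hypothetical shape, for illustration)."""
    system: str            # e.g. "crm" or "billing"
    observed_at: datetime  # when this system last observed these values
    fields: Dict[str, Any]


def reconcile(records: List[SourceRecord]) -> Tuple[Dict[str, Any], Dict[str, str]]:
    """Merge conflicting records field by field, keeping lineage.

    Assumed survivorship policy: for each field, the most recently observed
    non-null value wins; on a timestamp tie, the first record seen wins.
    """
    merged: Dict[str, Any] = {}
    lineage: Dict[str, str] = {}      # field -> system that supplied the value
    latest: Dict[str, datetime] = {}  # field -> timestamp of the winning value
    for rec in records:
        for name, value in rec.fields.items():
            if value is None:
                continue  # a missing value never overwrites a known one
            if name not in latest or rec.observed_at > latest[name]:
                merged[name] = value
                lineage[name] = rec.system
                latest[name] = rec.observed_at
    return merged, lineage


# Usage: CRM and billing disagree about the customer's email.
crm = SourceRecord("crm", datetime(2024, 5, 1, tzinfo=timezone.utc),
                   {"email": "ana@old.example", "tier": "gold"})
billing = SourceRecord("billing", datetime(2024, 6, 1, tzinfo=timezone.utc),
                       {"email": "ana@new.example", "tier": None})
merged, lineage = reconcile([crm, billing])
print(merged)   # {'email': 'ana@new.example', 'tier': 'gold'}
print(lineage)  # {'email': 'billing', 'tier': 'crm'}
```

The lineage map matters as much as the merged record. When a downstream decision is challenged, it shows which system supplied each value.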

They are not ordinary data pipelines.

A normal data pipeline moves data from one place to another.

A representation workflow maintains the meaning, state, and action-readiness of what that data is supposed to represent.

That difference is huge.

A shipment is not just a row in a table. It has location, status, delays, exceptions, documents, counterparties, and risk conditions.

A patient is not just a record. They have history, current status, coverage rules, care episodes, and evolving treatment context.

A customer is not just an ID. They have permissions, preferences, transactions, risk markers, product relationships, and service state.

AI does not act on raw data. It acts on represented reality.

And represented reality decays unless someone maintains it.
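The difference between moving a row and maintaining a representation can be sketched in a few lines. This hypothetical `Shipment` class uses illustrative names and event fields, not any carrier's API. It folds events into current state and keeps the full event history for lineage. It lets exceptions accumulate, and it refuses to let a late-arriving event roll status or location backwards.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class ShipmentEvent:
    """Hypothetical event shape; real carrier and warehouse feeds differ."""
    occurred_at: datetime
    status: Optional[str] = None
    location: Optional[str] = None
    exception: Optional[str] = None


@dataclass
class Shipment:
    """A maintained representation: current state plus the history behind it."""
    shipment_id: str
    status: str = "created"
    location: Optional[str] = None
    open_exceptions: List[str] = field(default_factory=list)
    as_of: Optional[datetime] = None  # time of the latest event applied to state
    history: List[ShipmentEvent] = field(default_factory=list)

    def apply(self, event: ShipmentEvent) -> bool:
        """Fold one event into current state.

        Returns False when the event is older than the current state. The event
        is still kept in history and any exception it reports is still recorded,
        but it may not roll status or location backwards.
        """
        self.history.append(event)
        if event.exception is not None:
            self.open_exceptions.append(event.exception)
        if self.as_of is not None and event.occurred_at < self.as_of:
            return False
        if event.status is not None:
            self.status = event.status
        if event.location is not None:
            self.location = event.location
        self.as_of = event.occurred_at
        return True


# Usage: the "picked_up" event arrives after the later "in_transit" event.
s = Shipment("SHP-1")
s.apply(ShipmentEvent(datetime(2024, 6, 2, 9), status="in_transit", location="Rotterdam"))
late = s.apply(ShipmentEvent(datetime(2024, 6, 1, 17), status="picked_up", location="Antwerp"))
s.apply(ShipmentEvent(datetime(2024, 6, 3, 8), exception="customs_hold"))
print(late, s.status, s.location, s.open_exceptions)
# False in_transit Rotterdam ['customs_hold']
```

A plain pipeline would have copied all three events and left the reader to work out the current state. The representation answers the question directly and keeps the evidence behind the answer.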

The Representation Economics view

This is exactly where Representation Economics becomes strategically important.

In the old software era, the focus was digitization.

In the cloud era, the focus was scalability.

In the AI era, the focus is shifting toward legibility.

The winners will increasingly be the companies that make the world more continuously legible to machines.

That means the most important asset may not be the model alone. It may be the set of operational workflows that ensure the model is always looking at something close enough to reality to act safely, profitably, and at speed.

This is what makes Representation Workflows different from generic data operations or classic MLOps. They are not just about feeding the system. They are about keeping the system’s world alive.

This direction is consistent with broader industry and standards movement. NIST’s generative AI profile emphasizes reviewing and documenting data accuracy, relevance, representativeness, and suitability across the AI lifecycle. (NIST Publications)

Representation Workflows take that logic one step further.

They ask not just, “How do we improve the dataset?”

They ask, “How do we continuously maintain the operational representation of reality after deployment?”

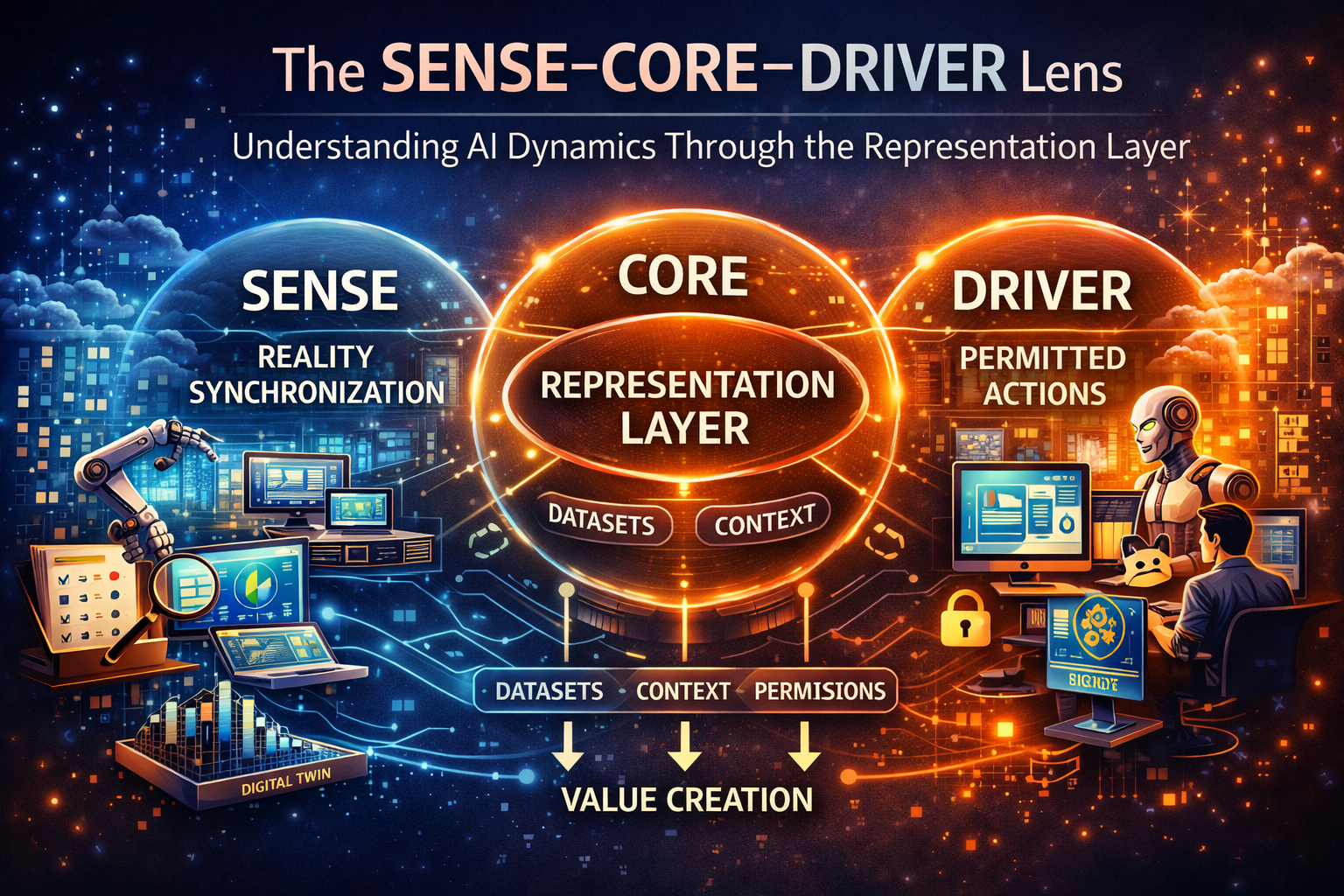

The SENSE–CORE–DRIVER lens

This topic becomes much clearer through SENSE–CORE–DRIVER.

SENSE: where reality becomes machine-legible

This is where signals are captured, entities are identified, state is constructed, and changes are recorded over time.

Representation Workflows begin here.

If SENSE is weak, systems do not know what is current, what belongs together, what changed, or what should trigger an update. That is why feature freshness, event streams, identity resolution, document extraction, knowledge graphs, and digital twins matter so much. The current standards and technical guidance around lifecycle governance, live state, and context quality point in exactly this direction. (NIST Publications)
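Identity resolution is one SENSE workflow that is easy to sketch. The example below is a deliberately simple, deterministic approach. It assumes that records from web, app, and store systems carry an email address, a phone number, or both. It normalizes those identifiers and uses a union-find structure to group records that share any of them. Production entity resolution typically adds probabilistic matching and review queues. The function and field names here are hypothetical.

```python
from typing import Dict, List, Set


def normalize_email(raw: str) -> str:
    return raw.strip().lower()


def normalize_phone(raw: str) -> str:
    # Assumption for the sketch: compare the last 10 digits only.
    digits = "".join(ch for ch in raw if ch.isdigit())
    return digits[-10:]


class UnionFind:
    """Disjoint-set structure over string keys."""

    def __init__(self) -> None:
        self.parent: Dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def resolve_identities(records: List[Dict[str, str]]) -> List[Set[str]]:
    """Group record IDs that share a normalized email or phone number."""
    uf = UnionFind()
    for rec in records:
        uf.find(rec["id"])  # register records that carry no identifiers
        if rec.get("email"):
            uf.union(rec["id"], "email:" + normalize_email(rec["email"]))
        if rec.get("phone"):
            uf.union(rec["id"], "phone:" + normalize_phone(rec["phone"]))
    clusters: Dict[str, Set[str]] = {}
    for rec in records:
        clusters.setdefault(uf.find(rec["id"]), set()).add(rec["id"])
    return list(clusters.values())


# Usage: one customer seen through web, app, and store systems.
records = [
    {"id": "web:17", "email": "Ana@Example.com"},
    {"id": "app:903", "email": "ana@example.com ", "phone": "+1 (555) 010-4477"},
    {"id": "store:55", "phone": "555-010-4477"},
    {"id": "store:56", "phone": "555-010-9999"},
]
clusters = resolve_identities(records)
print(sorted(sorted(c) for c in clusters))
# [['app:903', 'store:55', 'web:17'], ['store:56']]
```

Exact matching on shared identifiers is easy to audit, but it can over-merge. For example, a household may share one phone number. This is one reason resolution results need their own correction workflow.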

CORE: where decisions are made

This is where models reason, rank, classify, plan, optimize, and generate outputs.

But CORE is downstream of representation quality.

A powerful model operating on stale, fragmented, or mismatched reality will still produce bad recommendations and unreliable actions. The hidden-technical-debt literature made this point years ago: model-centric thinking often understates the operational complexity of real systems. (NeurIPS Papers)

DRIVER: where actions become legitimate

This is where the system actually does something: approves, denies, routes, escalates, pays, blocks, schedules, dispatches, or changes a state.

This is where poor representation becomes expensive.

If the state is wrong, the action may be wrong.

If the entity is wrong, the action may hit the wrong target.

If the context is stale, the action may be mistimed.

If the correction never propagates, the harm compounds.

Representation Workflows are therefore not only a SENSE issue. They are also a DRIVER issue, because action quality depends on representation quality.
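One way to connect representation quality to DRIVER is a preflight check that runs before any action executes. This sketch assumes a hypothetical `RepresentationSnapshot` that carries a resolved entity ID, freshness timestamps for state and context, and a count of unpropagated corrections. It maps each of the four failure modes listed earlier to a blocking reason. The thresholds are placeholders, and each action type would need to set its own.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class RepresentationSnapshot:
    """Hypothetical view of an entity handed to the action layer."""
    entity_id: Optional[str]  # None when identity resolution did not converge
    state_as_of: datetime     # when entity state was last confirmed
    context_as_of: datetime   # when surrounding context was last refreshed
    pending_corrections: int  # corrections accepted but not yet propagated


def preflight(snapshot: RepresentationSnapshot, now: datetime,
              max_state_age: timedelta, max_context_age: timedelta) -> List[str]:
    """Return reasons to block the action; an empty list means proceed."""
    reasons: List[str] = []
    if snapshot.entity_id is None:
        reasons.append("entity unresolved: the action may hit the wrong target")
    if now - snapshot.state_as_of > max_state_age:
        reasons.append("state stale: the action may be wrong")
    if now - snapshot.context_as_of > max_context_age:
        reasons.append("context stale: the action may be mistimed")
    if snapshot.pending_corrections > 0:
        reasons.append("corrections pending: known errors have not propagated")
    return reasons


# Usage: fresh state, but context is three hours old and one correction is pending.
now = datetime(2024, 6, 1, 12, 0, tzinfo=timezone.utc)
snap = RepresentationSnapshot(
    entity_id="cust-42",
    state_as_of=now - timedelta(minutes=5),
    context_as_of=now - timedelta(hours=3),
    pending_corrections=1,
)
blockers = preflight(snap, now, max_state_age=timedelta(minutes=15),
                     max_context_age=timedelta(hours=1))
for reason in blockers:
    print(reason)
```

Returning reasons instead of a bare boolean lets the action layer route each blocked action to the specific workflow that can fix it.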

Three simple examples

Retail and commerce

Imagine a retailer using AI for pricing, replenishment, and customer service.

If stock levels are inaccurate, the planning model overpromises.

If returns are not reflected fast enough, pricing logic misfires.

If customer identity is fragmented across web, app, and store systems, service agents cannot act coherently.

The problem is not that the model lacks intelligence. The problem is that operations lack a workflow for keeping reality synchronized.

The AI winner in retail may not be the company with the flashiest model. It may be the company with the best workflows for maintaining product truth, inventory truth, customer truth, and policy truth.

Banking and insurance

A bank or insurer may deploy AI across fraud, underwriting, service, claims, and collections.

But if customer state, repayment events, policy changes, beneficiary updates, claim documents, and exception histories are not continuously reconciled, the institution’s AI layer operates on partial truth.

That leads to false alerts, wrongful denials, weak prioritization, and rising recourse costs.

The strategic edge does not come only from better models. It comes from better-maintained institutional memory and reality representation.
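Continuous reconciliation also means that a correction to one fact, such as a late-posted repayment, must reach every view derived from it. The sketch below assumes a hypothetical dependency map between views. It uses Python's standard-library `graphlib.TopologicalSorter` to produce an order in which the affected views can be recomputed, so that each one is refreshed only after its inputs.

```python
from collections import deque
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical map: each fact or view -> the views computed from it.
DOWNSTREAM: Dict[str, List[str]] = {
    "repayment_events": ["customer_balance"],
    "customer_balance": ["delinquency_flag", "credit_limit"],
    "delinquency_flag": ["collections_queue", "fraud_features"],
    "fraud_features": ["fraud_alerts"],
}


def propagation_plan(corrected: str, downstream: Dict[str, List[str]]) -> List[str]:
    """List every view affected by a correction, inputs before outputs.

    Assumes the dependency map is acyclic; static_order() raises
    graphlib.CycleError otherwise.
    """
    # 1. Breadth-first search for everything reachable from the corrected fact.
    affected: Set[str] = set()
    queue = deque([corrected])
    while queue:
        for child in downstream.get(queue.popleft(), []):
            if child not in affected:
                affected.add(child)
                queue.append(child)
    # 2. Predecessor map restricted to affected views, then topological order.
    predecessors: Dict[str, Set[str]] = {view: set() for view in affected}
    for parent, children in downstream.items():
        for child in children:
            if child in affected and parent in affected:
                predecessors[child].add(parent)
    return list(TopologicalSorter(predecessors).static_order())


# Usage: a late repayment is corrected. What must be recomputed, and in what order?
plan = propagation_plan("repayment_events", DOWNSTREAM)
print(plan)
```

The exact order among independent views, such as `credit_limit` and `collections_queue`, is not fixed. Only the input-before-output constraint is guaranteed.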

Industrial operations and supply chains

A factory, warehouse, or logistics network increasingly depends on digital representations of physical assets, flows, and constraints.

This is why digital twins have become strategically important. Microsoft describes digital twins as live digital representations of real-world environments, and that concept only works when state is refreshed and synchronized reliably. (NIST Publications)

In this setting, Representation Workflows become mission-critical. The company that best maintains the digital state of machines, locations, documents, and exceptions will outperform the company that merely deploys a stronger model.

Why agents make this even more important

The rise of AI agents pushes this issue into the center of strategy.

OpenAI’s guidance for building agents emphasizes that agents need structured orchestration, appropriate tools, safe execution patterns, and reliable context retrieval. In other words, scalable agents require operational scaffolding, not just reasoning power. (OpenAI)

This is exactly why Representation Workflows matter more in an agentic world.

A chatbot can survive with partial context.

An acting agent cannot.

An agent that updates records, sends messages, books appointments, executes trades, or changes case status needs current, trusted, scoped reality.

That means the rise of agents will increase the value of companies that can maintain:

- current state,

- permission state,

- exception state,

- workflow state,

- and recovery state.

In other words, agents increase the premium on reality maintenance.
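Here is a minimal sketch of what "current, trusted, scoped reality" can mean at execution time. Before an agent changes a case status, it checks permission state, exception state, and workflow state. It then writes a recovery entry so the change can be reviewed or undone. The workflow, the scope format, and the class names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

# Hypothetical workflow: which case-status transitions are legitimate.
ALLOWED_TRANSITIONS: Dict[str, Set[str]] = {
    "open": {"in_review", "closed"},
    "in_review": {"approved", "denied", "open"},
    "approved": {"closed"},
    "denied": {"open", "closed"},
    "closed": set(),
}


@dataclass
class CaseState:
    case_id: str
    status: str                                             # workflow state
    open_exceptions: Set[str] = field(default_factory=set)  # exception state


@dataclass
class AgentScope:
    agent_id: str
    permitted_actions: Set[str]  # permission state, e.g. {"set_status:approved"}


@dataclass
class RecoveryLog:
    # Recovery state: (case_id, previous_status, new_status) for review or undo.
    entries: List[Tuple[str, str, str]] = field(default_factory=list)


def try_transition(agent: AgentScope, case: CaseState, target: str,
                   log: RecoveryLog) -> str:
    """Change case status only if permission, exception, and workflow state allow it."""
    action = f"set_status:{target}"
    if action not in agent.permitted_actions:
        return f"refused: {agent.agent_id} lacks permission {action}"
    if case.open_exceptions:
        return "escalated: open exceptions " + ", ".join(sorted(case.open_exceptions))
    if target not in ALLOWED_TRANSITIONS.get(case.status, set()):
        return f"refused: {case.status} -> {target} is not an allowed transition"
    log.entries.append((case.case_id, case.status, target))  # record before acting
    case.status = target
    return f"done: {case.case_id} is now {target}"


# Usage
agent = AgentScope("claims-agent", {"set_status:in_review", "set_status:approved"})
log = RecoveryLog()
case = CaseState("C-7", "open")
r1 = try_transition(agent, case, "approved", log)   # skips a workflow step
r2 = try_transition(agent, case, "in_review", log)  # allowed
case.open_exceptions.add("missing_document")
r3 = try_transition(agent, case, "approved", log)   # exception blocks it
r4 = try_transition(agent, case, "closed", log)     # outside the agent's scope
for result in (r1, r2, r3, r4):
    print(result)
```

The order of checks is a design choice. Permission comes first, so an out-of-scope agent never evaluates the case. Exceptions come before workflow rules, so anything flagged goes to a person instead of being silently refused.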

Why this will create a new market

Representation Workflows will become a major market because they solve a structural problem, not a temporary one.

First, enterprises are realizing that AI performance depends heavily on data quality, freshness, monitoring, and context continuity. (NIST Publications)

Second, digital operations are becoming more event-driven and real-time, which makes stale state more damaging and more visible. The shift from batch data to live operational context changes what “good AI infrastructure” actually means. (NIST Publications)

Third, regulation and governance are moving toward lifecycle responsibility, not just model-launch responsibility. NIST and the EU AI Act both reflect that direction. (NIST Publications)

Fourth, once models commoditize, the harder-to-copy advantage shifts toward maintained operational truth.

That creates room for new categories of firms:

- representation workflow platforms,

- entity-state synchronization providers,

- reality maintenance engines,

- correction propagation layers,

- cross-system truth reconciliation companies,

- and operational graph integrity providers.

These firms may become as important to the AI era as workflow software became to the SaaS era.

What boards and CEOs should understand now

Boards should stop asking only, “Which model are we deploying?”

They should start asking:

- Which parts of our business reality must stay machine-legible for AI to work?

- Where does state become stale, fragmented, or contradictory?

- Which workflows currently maintain that reality?

- Are those workflows manual, slow, and hidden?

- Which decisions are failing because the representation layer is weak?

- Are we investing too much in CORE and too little in SENSE?

This is not a technical housekeeping issue.

It is a strategic design issue.

Because in the next AI economy, companies will not compete only on model intelligence. They will compete on their ability to continuously maintain a usable representation of reality.

That is why the biggest AI companies may rebuild operations around Representation Workflows, not just model deployment.

Representation Workflows are the operational backbone of the AI economy, enabling continuous maintenance of machine-readable reality across entities, states, relationships, and permissions. As AI systems evolve from model-based decision-making to real-world execution, competitive advantage will increasingly depend on maintaining accurate, real-time representations rather than just deploying more advanced models. This concept is part of the Representation Economics framework and its SENSE–CORE–DRIVER architecture.

Conclusion: the next operating advantage is reality maintenance

The AI market still loves model launches because they are dramatic, visible, and benchmarkable.

But real institutional advantage is often quieter.

It lives in whether the system knows the current customer, current supplier, current claim, current shipment, current inventory, current permission, current exception, and current risk.

That is not glamour work. It is operating-system work.

And it may define the next generation of winners.

The future leaders in AI may not simply be the ones with the best reasoning engines. They may be the ones that build the strongest workflows for keeping reality continuously legible, governable, and actionable.

That is the deeper shift.

In the AI economy, intelligence matters.

But maintained reality may matter even more.

And that is why the idea of Representation Workflows deserves to become one of the defining ideas in Representation Economics.

Glossary

Representation Workflows

Operational processes that continuously maintain machine-readable reality so AI systems can make accurate, timely, and governed decisions.

Representation Economics

A strategic framework arguing that future advantage will come from how well institutions represent entities, states, relationships, and changes in a machine-usable way.

Machine-readable reality

The structured digital representation of the world that AI systems rely on to reason and act.

SENSE

The layer where signals are captured, entities are identified, state is built, and change is recorded.

CORE

The layer where models reason, rank, plan, and generate decisions.

DRIVER

The layer where decisions become legitimate actions through authorization, execution, verification, and recourse.

MLOps

Practices for managing machine learning systems in production, including deployment, monitoring, and lifecycle maintenance.

Technical debt in ML

The hidden operational complexity that builds up around production machine learning systems, often beyond the model itself. (NeurIPS Papers)

Digital twin

A live digital representation of a real-world environment, system, or asset used for monitoring, simulation, and operations. (NIST Publications)

FAQ

What are Representation Workflows in AI?

Representation Workflows are the processes that keep digital representations of customers, assets, suppliers, policies, and operating conditions accurate and current so AI can act on them reliably.

How are Representation Workflows different from MLOps?

MLOps focuses on deploying and managing models in production. Representation Workflows focus on maintaining the machine-readable reality that those models depend on.

Why do Representation Workflows matter for AI agents?

Agents do not just answer questions. They act. That means they need current, trusted, and scoped representations of the world to avoid errors and unsafe execution. (OpenAI)

Why is this important for boards and CEOs?

Because AI failures often come not from weak reasoning alone, but from stale state, fragmented identity, bad context, and poorly maintained operational truth.

What kinds of companies could emerge here?

Representation workflow platforms, entity-state synchronization firms, correction propagation layers, operational graph integrity providers, and reality maintenance engines.

References and further reading

- NIST AI Risk Management Framework 1.0. (NIST Publications)

- NIST AI RMF Playbook. (NIST)

- NIST Generative AI Profile. (NIST Publications)

- EU AI Act overview and Article 10 on data and data governance. (Digital Strategy)

- Hidden Technical Debt in Machine Learning Systems. (NeurIPS Papers)

- OpenAI practical guide to building agents and agent learning track. (OpenAI)

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- The Representation Deficit: Why Institutions Fail When Reality Cannot Enter the Decision System – Raktim Singh

- The Representation Maturity Model: How Boards Decide When AI Can Be Trusted With Real Decisions – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- The Representation Reserve Currency: Why AI Will Trust Only a Few Forms of Reality – Raktim Singh

- The Machine-Readable Boundary of the Firm: How AI Is Redefining What Companies Own, Outsource, and Orchestrate – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- The Representation Commons: Why Broad-Based AI Value Begins Before the Model – Raktim Singh

- The Representation Access Economy: Why AI Will Decide Who Gets Seen, Structured, and Trusted – Raktim Singh

- Representation Bankruptcy: Why AI Will Break Companies That Machines Cannot Trust – Raktim Singh

- The Representation Kill Zone: Why Companies Become Invisible Before They Realize They Are Losing – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fiduciaries: The Missing Institution the AI Economy Cannot Scale Without – Raktim Singh

- The Representation Conversion Industry: Why the Biggest AI Companies Will Rebuild Reality Before They Build Intelligence – Raktim Singh

- Representation Accounting: The New Discipline That Will Decide Which AI-Driven Institutions Can Be Trusted – Raktim Singh

- Synthetic Representation: How the AI Economy Will Construct Reality When It Cannot Fully Observe It – Raktim Singh

- Representation Clearinghouses: The Missing Infrastructure the AI Economy Needs to Reconcile Reality Before It Acts – Raktim Singh

- Recourse Platforms: The Next AI Infrastructure Market for Correction, Appeal, and Recovery – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh