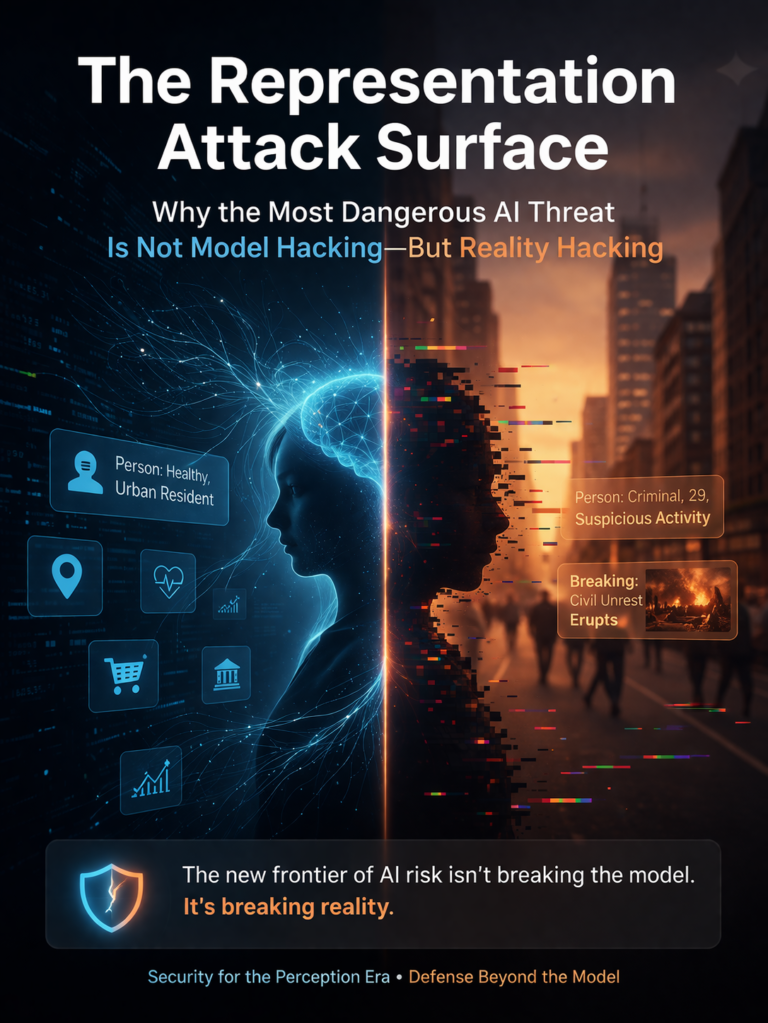

The Representation Attack Surface

Artificial intelligence has made one assumption feel intuitive: if the model is secure, the system is secure.

That assumption is becoming dangerous.

The next wave of AI failure will not come only from stolen model weights, prompt injection, jailbreaks, or poisoned training data. It will increasingly come from something deeper and less visible: the corruption of the machine-readable reality that AI systems rely on to interpret the world and act within it. NIST frames AI risk as a socio-technical problem shaped not only by technical components but also by context, human behavior, operational use, and interactions with other systems. MITRE’s ATLAS and SAFE-AI work similarly emphasize that AI-enabled systems expand the attack surface beyond the model itself. (NIST Publications)

That is the real attack surface.

I call it the representation attack surface.

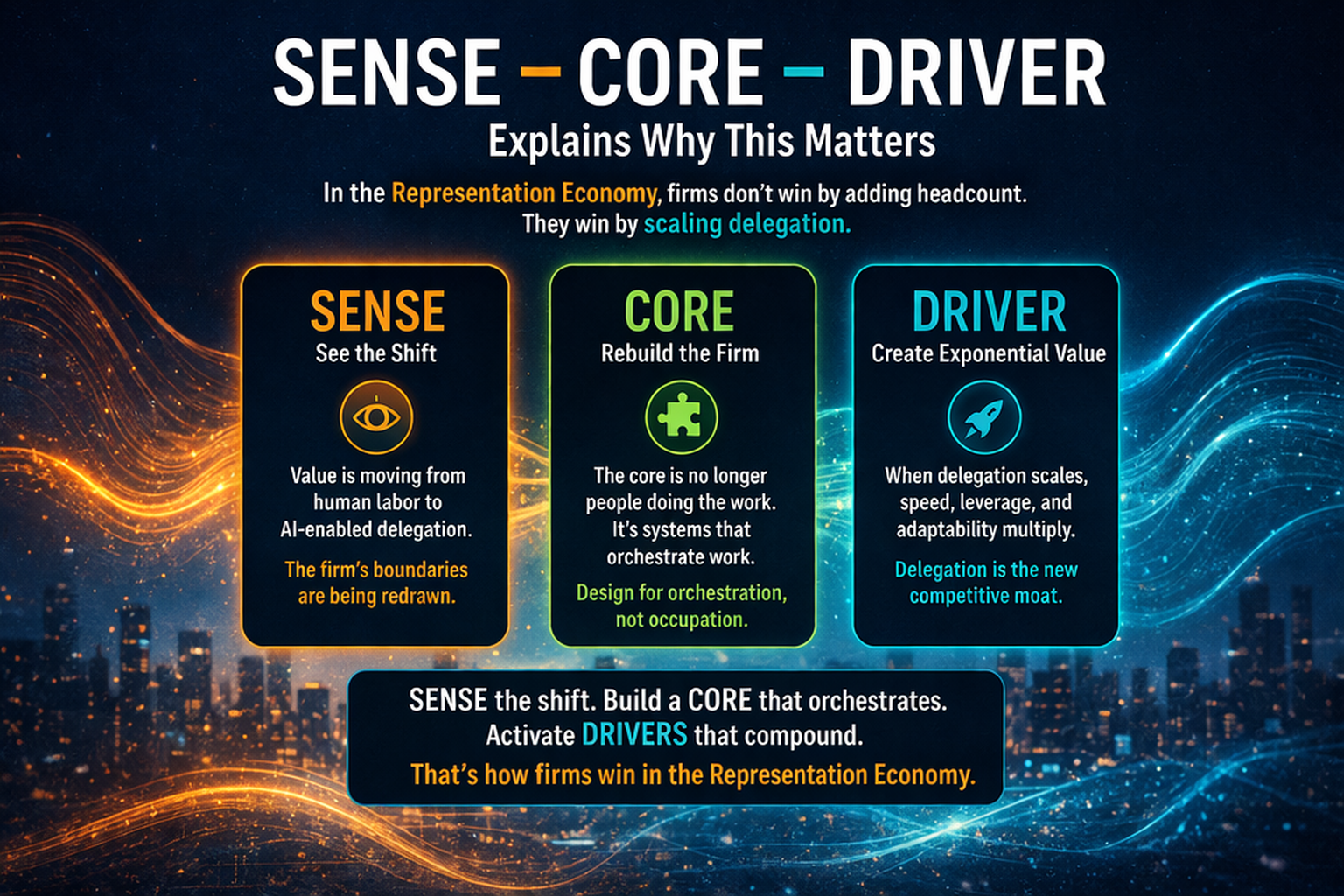

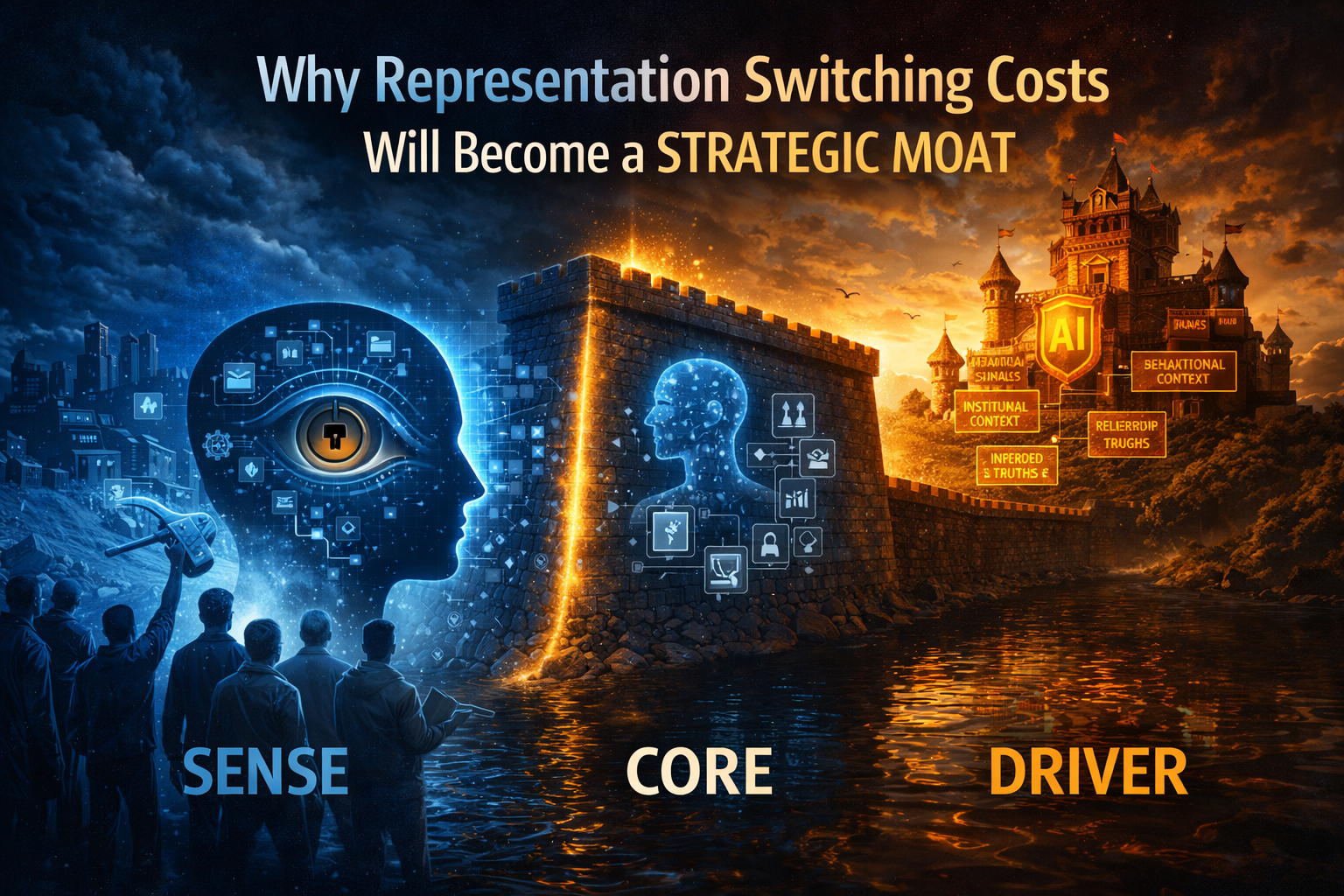

In the Representation Economy, value does not come only from intelligence. It comes from how well a system represents reality, reasons over that representation, and acts with legitimacy. That is why the SENSE–CORE–DRIVER framework matters so much.

SENSE is the legibility layer: Signal, ENtity, State, Evolution.

CORE is the cognition layer: Comprehend, Optimize, Realize, Evolve.

DRIVER is the governance layer: Delegation, Representation, Identity, Verification, Execution, Recourse.

Most AI conversations still focus disproportionately on CORE. Is the model accurate? Is it aligned? Is it robust? Those are valid questions. But they miss a more foundational one:

What if the system is acting on a corrupted version of reality before the model even begins to reason?

That is the shift leaders now need to understand.

The Representation Attack Surface refers to all the ways an AI system’s understanding of reality can be manipulated through data, signals, and context—before the model even begins to reason.

Why model security is too narrow

When people hear the phrase AI security, they usually think of a short list of familiar risks:

- a model being stolen

- a chatbot being jailbroken

- training data being poisoned

- a malicious prompt causing a bad output

- a hidden system prompt being exposed

All of that matters.

But it is no longer enough.

Today’s AI systems are not isolated models. They are connected to emails, documents, browsers, databases, APIs, identity systems, enterprise tools, approval chains, and real-world workflows. OWASP’s updated guidance reflects this broader reality by highlighting risks such as indirect prompt injection, supply-chain weaknesses, improper output handling, and excessive agency in deployed LLM and agentic applications. (OWASP Foundation)

Once AI becomes part of an operating environment, the security question changes.

It is no longer just:

Can someone break the model?

It becomes:

Can someone distort the reality the model is allowed to see, trust, and act upon?

That is a much larger battlefield.

What is reality hacking?

Reality hacking is not science fiction. It is the manipulation of the inputs, identities, states, context, permissions, or action pathways that make a system believe something false, incomplete, outdated, or strategically misleading about the world.

In plain language, the attacker does not need to defeat the brain if they can poison the world the brain is reading.

This is already visible in modern AI security guidance. Google describes indirect prompt injection as a vulnerability in which malicious instructions are embedded inside external content such as documents, emails, or webpages and then treated by the AI system as legitimate instructions. Microsoft’s Zero Trust guidance warns that untrusted external content can cause AI systems to take unintended actions, including sensitive operations, if layered defenses are not in place. (Google Workspace Help)

A few examples make the idea concrete.

A customer-support copilot reads a document that contains hidden instructions. A user simply asks for a summary. The model treats the hidden text as instructions and changes its behavior.

A fraud system is not hacked, but the upstream entity-resolution process merges two people into a single profile. The downstream model reasons correctly over the wrong person.

A logistics AI has a strong optimization engine, but sensor data is delayed or spoofed. It reallocates resources using stale reality.

An enterprise agent is allowed to call tools. Nobody steals the model. Nobody alters the weights. But an attacker manipulates a webpage, plugin response, or document so the agent confidently triggers the wrong action.

In each case, the intelligence may be functioning. The real problem is that the system’s representation of reality has already been compromised.

That is why the most dangerous AI threat is increasingly not just model hacking.

It is reality hacking.

The three layers of the representation attack surface

The clearest way to understand this is through SENSE–CORE–DRIVER.

-

SENSE attacks: corrupting what the system can know

SENSE is the layer where reality becomes machine-legible.

If this layer is weak, everything above it inherits that weakness.

SENSE attacks include signal corruption, fake telemetry, manipulated logs, poisoned data streams, entity confusion, duplicated identities, state distortion, stale context, and failures to track how conditions evolve over time. NIST’s AI RMF stresses that AI risks must be assessed in lifecycle and operational context, not merely as abstract model characteristics. (NIST Publications)

A simple way to explain this to executives is:

SENSE attacks do not need to fool the model. They only need to fool the model’s picture of reality.

That is why poor entity resolution, bad sensor hygiene, weak provenance, and delayed state updates can quietly become AI security issues.

-

CORE attacks: manipulating reasoning through context

This is the layer most people recognize.

CORE attacks include direct prompt injection, indirect prompt injection, retrieval poisoning, adversarial examples, contextual misdirection, tool-response manipulation, and reasoning traps that begin with corrupted premises. MITRE ATLAS catalogs adversarial tactics and techniques against AI-enabled systems, and ENISA has documented security concerns around manipulation, evasion, and poisoning in machine learning systems. (MITRE ATLAS)

But the most important point is often missed.

The attack is not always about making the model unintelligent.

It is often about making the model confidently rational in the wrong world.

That is more dangerous than a visibly weak model.

A model that is wrong because it lacks capability is easier to challenge. A model that is wrong because it is faithfully reasoning over manipulated reality is much harder to detect.

-

DRIVER attacks: exploiting action, authority, and recourse

This is where AI risk becomes institutional risk.

DRIVER asks:

- Who authorized the system to act?

- On whose behalf is it acting?

- What is it allowed to change?

- What verification happens before action?

- Can action be stopped, reversed, or appealed?

- What recourse exists if the system is wrong?

OWASP’s guidance on excessive agency describes the danger of granting LLM-based systems enough autonomy that manipulated or unexpected outputs can cause damaging downstream actions. Agentic security guidance is moving in the same direction: when AI systems can plan, call tools, access services, or take action across workflows, bounded permissions and explicit approval paths become essential. (OWASP Gen AI Security Project)

A bad answer is a content problem.

A bad action is an institutional problem.

That is the point where AI security becomes a board-level issue.

The real attack surface is the institution’s machine-readable reality

This is the central argument.

For decades, cybersecurity focused on protecting systems, endpoints, credentials, applications, and networks.

AI adds another layer: the machine-readable representation of the world itself.

That includes:

- what the system accepts as a valid signal

- how it identifies an entity

- how it records state

- how fast that state is refreshed

- what content it trusts

- what tools it can call

- what identities can authorize action

- what checks happen before execution

- whether wrong actions can be unwound

This is why AI security can no longer be treated as a narrow model-team issue. It is now a cross-functional concern involving cybersecurity, data architecture, identity and access management, workflow design, enterprise integration, governance, legal, risk, internal audit, and operations. NIST’s framework explicitly supports this wider organizational view through its govern, map, measure, and manage functions. (NIST AI Resource Center)

The representation attack surface sits across all of them.

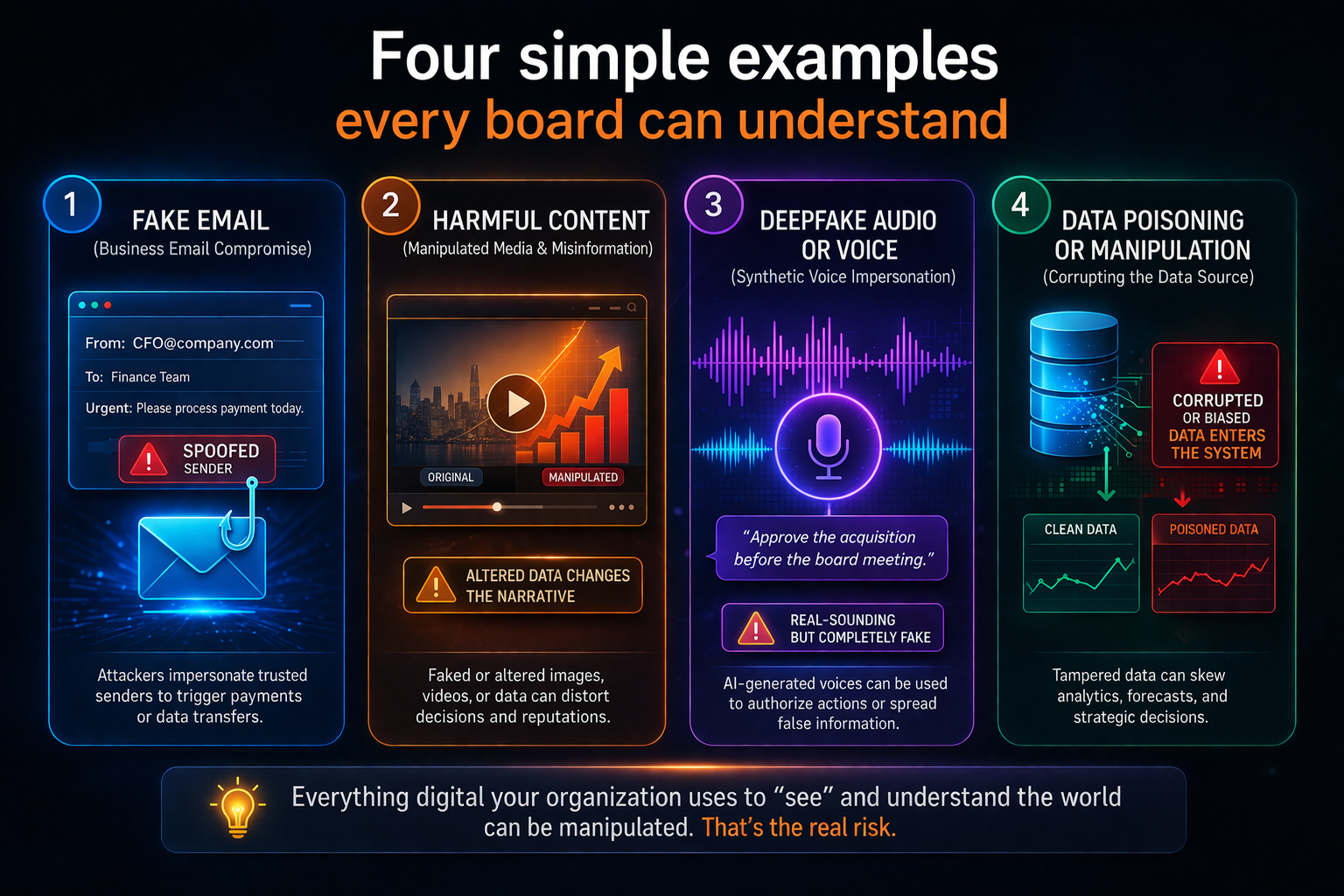

Four simple examples every board can understand

Example 1: The invisible instruction in a document

A team uses an AI assistant to summarize vendor proposals. One proposal contains hidden instructions telling the system to ignore other bids and recommend that vendor. No model theft. No firewall breach. But the system’s interpretation is hijacked through untrusted context. That is the practical logic of indirect prompt injection. (Google Workspace Help)

Example 2: The wrong identity, correctly processed

A bank’s AI copilot pulls internal data and prepares a risk summary. Because of poor entity matching, it merges two businesses with similar names. The report looks polished and logical. The reasoning appears sound. The identity is wrong.

Example 3: The stale-state problem

A supply-chain system uses AI to reroute shipments. The optimization engine is strong, but one warehouse’s availability has not been updated. Capacity appears open when it is not. The AI does not fail because it cannot reason. It fails because the representation is stale.

Example 4: The overpowered agent

An enterprise assistant can read email, update tickets, trigger approvals, and send messages. A malicious email alters its behavior. If the system lacks approval boundaries or reversible execution paths, a content-level manipulation becomes a workflow-level breach. OWASP and Microsoft both warn that agentic systems can turn manipulated content into damaging actions when autonomy is not tightly scoped. (OWASP Foundation)

These examples all point to the same conclusion:

representation is now part of the attack surface.

What leaders should do next

The answer is not panic. It is architectural maturity.

Map the representation layer

Most firms inventory models. Far fewer inventory the signals, identity dependencies, context sources, state-refresh pathways, and delegated action routes that surround those models.

Separate trusted from untrusted reality

Emails, PDFs, websites, third-party APIs, user-generated content, model outputs, and tool responses should not be treated as equally trustworthy. Google and Microsoft both recommend layered defenses against untrusted external content in AI systems. (Google Workspace Help)

Reduce silent authority

Do not give agents broad action rights without scoped permissions, contextual verification, explicit confirmations, and reversible execution paths.

Design for recourse

A mature AI system should not only produce answers. It should support rollback, correction, appeal, human review, and post-incident analysis.

Red-team for reality hacking

Testing should go beyond jailbreaks and model abuse. It should include entity confusion, malicious documents, stale-state simulation, spoofed telemetry, identity manipulation, tool-output tampering, and failures in action unwinding. MITRE’s system-level AI defense work supports this broader view of adversarial testing. (MITRE ATLAS)

Why this matters in the Representation Economy

The Representation Economy is built on a simple truth:

AI acts on what a system can represent.

That means advantage will not go only to the organizations with stronger models. It will increasingly go to the organizations with stronger representation discipline.

The winners will know:

- what must be sensed

- what must be verified

- what must be represented as an entity

- what must be continuously updated

- what can be delegated

- what must remain contestable

- what must always allow recourse

The losers will continue overinvesting in CORE while underinvesting in SENSE and DRIVER.

That is why the future of AI risk is not merely a safety problem, or only a cybersecurity problem.

It is a representation problem.

And once that becomes clear, the battlefield changes.

The most important AI question is no longer just:

Can the model be trusted?

It is now:

Can the institution trust the machine-readable reality on which the model is acting?

That is the true representation attack surface.

That is where the next wave of enterprise advantage will be won.

And that is where the next wave of failure will begin.

Conclusion: Boards must shift from model protection to reality protection

Boards and C-suites have been taught to think about AI risk through the lens of model performance, compliance, and cybersecurity controls around software components. That lens is now too narrow. In the next phase of enterprise AI, institutions will be judged by whether they can protect the integrity of the reality their systems perceive, the authority those systems are granted, and the recourse available when those systems are wrong.

The most resilient organizations will not be those that merely secure models. They will be those that secure machine-readable reality.

That is the deeper lesson of the Representation Economy.

And it may become the defining security doctrine of the AI era.

FAQ

What is the representation attack surface?

The representation attack surface is the set of ways an attacker can manipulate the machine-readable version of reality that an AI system uses to sense, interpret, and act.

How is reality hacking different from model hacking?

Model hacking targets the model itself, including prompts, weights, or outputs. Reality hacking targets the signals, entities, states, context, permissions, and action pathways around the model.

Why does this matter for enterprise AI?

Because enterprise AI systems are connected to documents, tools, APIs, workflows, and permissions. Damage often occurs not when the model answers badly, but when a system acts on distorted reality.

What is a simple example of reality hacking?

A malicious document containing hidden instructions, an identity-resolution error that links data to the wrong person, or stale operational data that causes the system to act on yesterday’s conditions.

How does SENSE–CORE–DRIVER help leaders?

It helps leaders see that AI risk spans three layers: the reality the system can observe, the reasoning it performs, and the governed action it is allowed to take.

What is reality hacking in AI?

Reality hacking refers to manipulating the inputs, signals, or context that AI systems use to understand the world, leading to incorrect or harmful decisions.

Why is model security not enough in AI?

Because AI decisions depend on input data. If the input is manipulated, even a perfectly secure model will produce wrong outputs.

How do deepfakes relate to AI security?

Deepfakes are a form of representation attack—they manipulate perceived reality, not the model itself.

What should boards focus on in AI risk?

Boards should focus on representation integrity, not just model performance or cybersecurity.

Glossary

Representation attack surface

The total set of ways a machine-readable version of reality can be manipulated, corrupted, delayed, or misinterpreted before or during AI decision-making.

Representation Economy

A framework for understanding the AI era as one in which value depends on how well reality is represented, reasoned over, and acted upon.

Reality hacking

The manipulation of machine-readable reality so that AI systems act on false, incomplete, stale, or strategically distorted context.

SENSE

The legibility layer: Signal, ENtity, State, Evolution.

CORE

The cognition layer: Comprehend, Optimize, Realize, Evolve.

DRIVER

The governance layer: Delegation, Representation, Identity, Verification, Execution, Recourse.

Indirect prompt injection

A vulnerability where malicious instructions are embedded inside external content, such as documents, webpages, or emails, and then treated by the AI system as legitimate instructions. (Google Workspace Help)

Excessive agency

A condition where an AI system has enough autonomy or permissions that manipulated or unexpected outputs can cause real-world damage. (OWASP Gen AI Security Project)

Machine-readable reality

The structured version of the world a system can identify, track, reason over, and act on.

References and further reading

For credibility and GEO pickup, add a clean reference section at the end of the article using authoritative sources:

- NIST AI Risk Management Framework 1.0 and related AI RMF resources for socio-technical AI risk and lifecycle governance. (NIST Publications)

- MITRE ATLAS and SAFE-AI for adversarial threat mapping and system-level AI defense. (MITRE ATLAS)

- OWASP Top 10 for LLM Applications and OWASP GenAI Security Project for prompt injection, excessive agency, and agentic application risks. (OWASP Foundation)

- Google guidance on indirect prompt injections in Gemini and Workspace environments. (Google Workspace Help)

- Microsoft guidance on defending against indirect prompt injection in Zero Trust architectures. (Google Bug Hunters)

- Emerging Technology Solutions | Infosys Topaz Fabric: How AI Is Quietly Changing the Way Enterprise Services Are Delivered

- Emerging Technology Solutions | What Is Infosys Topaz Fabric? The Missing Layer for Scalable Enterprise AI

- Emerging Technology Solutions | Infosys Topaz Fabric: Enterprise AI Infrastructure for Scalable, Governed, and Cost-Aware AI Exec

-

Explore the Architecture of the AI Economy

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence. Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models.

If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

-

- • Why Most AI Projects Fail Before Intelligence Even Begins

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Failure: Why AI Systems Break When Institutions Misread Reality – Raktim Singh

- The Representation Premium: Why Institutions That Are Easier for AI to See, Trust, and Coordinate With Will Win the Next Economy – Raktim Singh

- The Firm of the AI Era Will Be Built Around Representation: Why Institutions Must Redesign Themselves for the SENSE–CORE–DRIVER Economy – Raktim Singh

- The Representation Stack: The New Architecture of Intelligent Institutions in the AI Economy – Raktim Singh

- Representation Economics: The New Law of Value Creation in the AI Era – Raktim Singh

- Representation Insurance: Why Machine-Readable Trust Will Power the AI Economy – Raktim Singh

- Representation Bankruptcy: Why AI Will Break Companies That Machines Cannot Trust – Raktim Singh

- The Representation Kill Zone: Why Companies Become Invisible Before They Realize They Are Losing – Raktim Singh

- Representation Alpha: Why Competitive Advantage Will Come from Better Representation, Not Better Models – Raktim Singh

- Representation Fiduciaries: The Missing Institution the AI Economy Cannot Scale Without – Raktim Singh

- Representation Fragility and Exclusion: The Hidden Fault Line That Will Break the AI Economy – Raktim Singh

- Representation Drift & Labor: Why AI Systems Fail When Reality Moves Faster Than Machines – Raktim Singh

- Representation Monopolies: Why the AI Economy Will Be Controlled by Those Who Define Reality – Raktim Singh

- Representation Forensics: The Missing Layer of AI—Why the Future Will Be Decided by What Systems Thought Reality Was – Raktim Singh

- What Is the Representation Economy? (raktimsingh.com)

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER (raktimsingh.com)

- Decision Scale: Why Competitive Advantage Is Moving from Labor Scale to Decision Scale (raktimsingh.com)

- Why Intelligence Alone Cannot Run Enterprises: The Missing AI Execution Layer – Raktim Singh

- The Representation Utility Stack: Why AI’s Next Competitive Advantage Will Come from Interoperable Reality – Raktim Singh

- Firms Won’t Be Defined by Employees. They Will Be Defined by Delegation – Raktim Singh

- The New Company Stack: The 7 Business Categories That Will Emerge in the Representation Economy – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

-