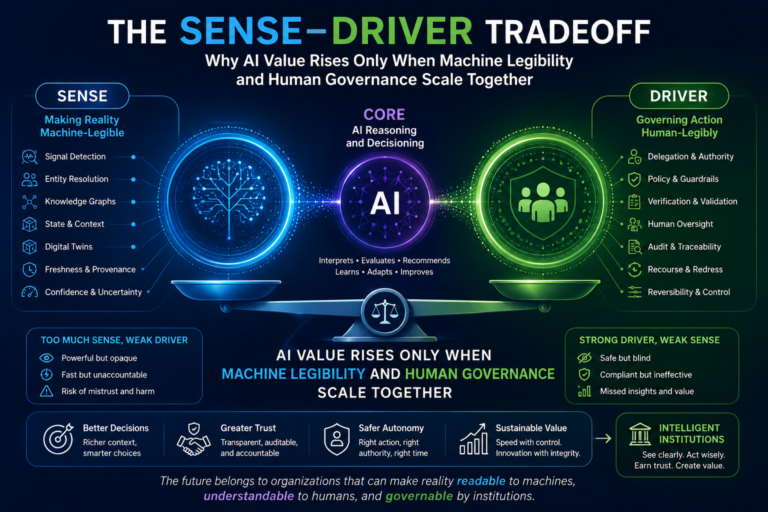

The SENSE–DRIVER Tradeoff: Why AI Value Rises Only When Machine Legibility and Human Governance Scale Together

Most conversations about enterprise AI still begin with the wrong question.

Which model should we use?

Which AI platform should we buy?

Which use case should we automate first?

How much productivity can we gain?

These are useful questions. But they are not the deepest questions.

The deeper question is this:

Can the institution make reality readable enough for AI to act, while keeping that action governable enough for humans to trust?

That is the real enterprise AI challenge.

AI creates extraordinary upside because it can automate work that earlier software could not. It can interpret ambiguity, classify exceptions, summarize documents, detect patterns, recommend decisions, generate actions, and coordinate workflows. It can help organizations move beyond simple task automation into the automation of repeatable judgment.

But this upside comes with a new burden.

The more AI is allowed to reason and act, the more the institution must strengthen the systems that represent reality and govern action.

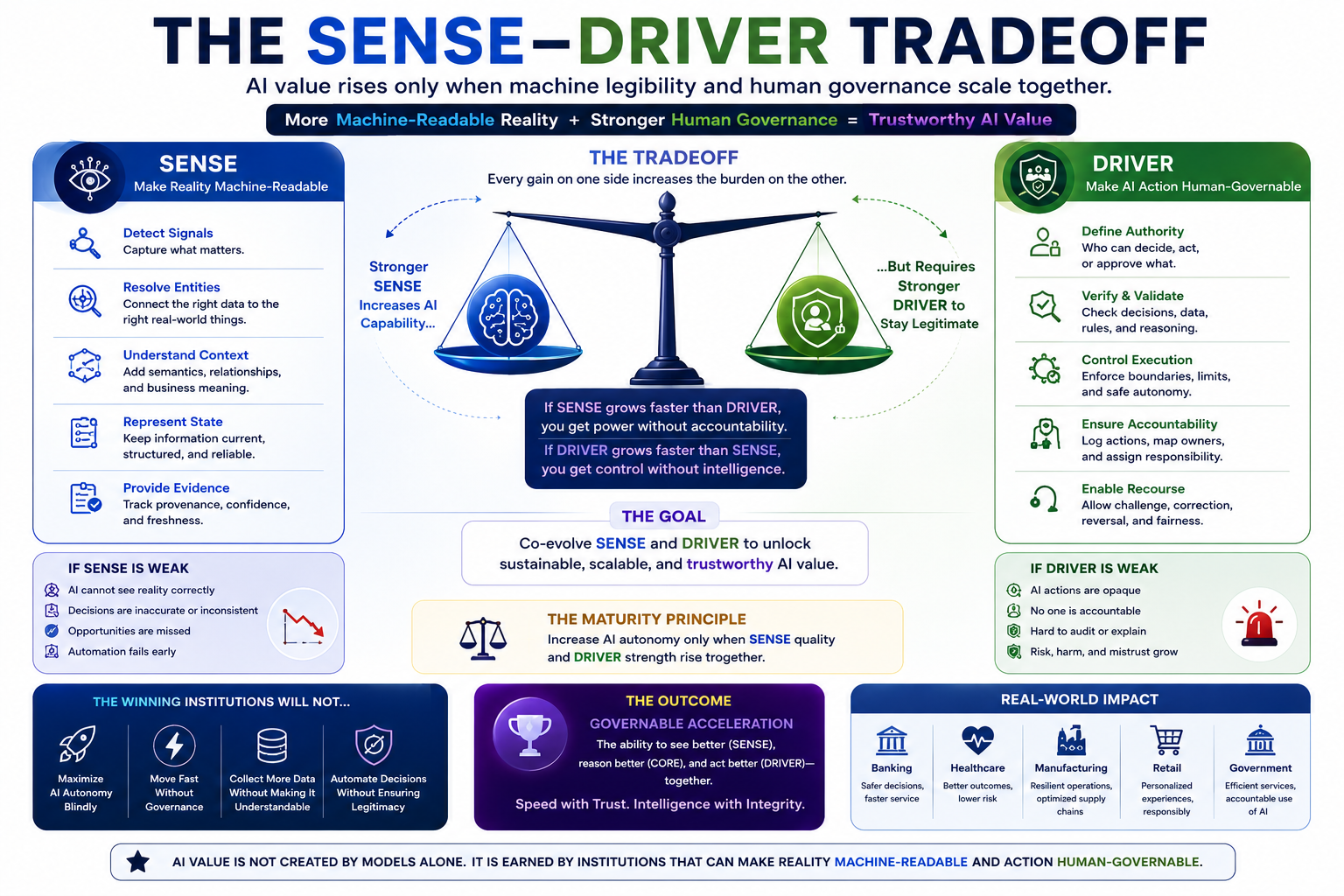

This is where the SENSE–DRIVER tradeoff begins.

In the SENSE–CORE–DRIVER framework:

- SENSE is the layer that makes reality machine-readable.

- CORE is the reasoning layer that interprets that reality.

- DRIVER is the governance layer that decides what AI is allowed to do, under whose authority, with what verification, and with what recourse.

The mistake many organizations make is believing that AI value rises mainly with better CORE.

It does not.

AI value rises when SENSE and DRIVER mature together.

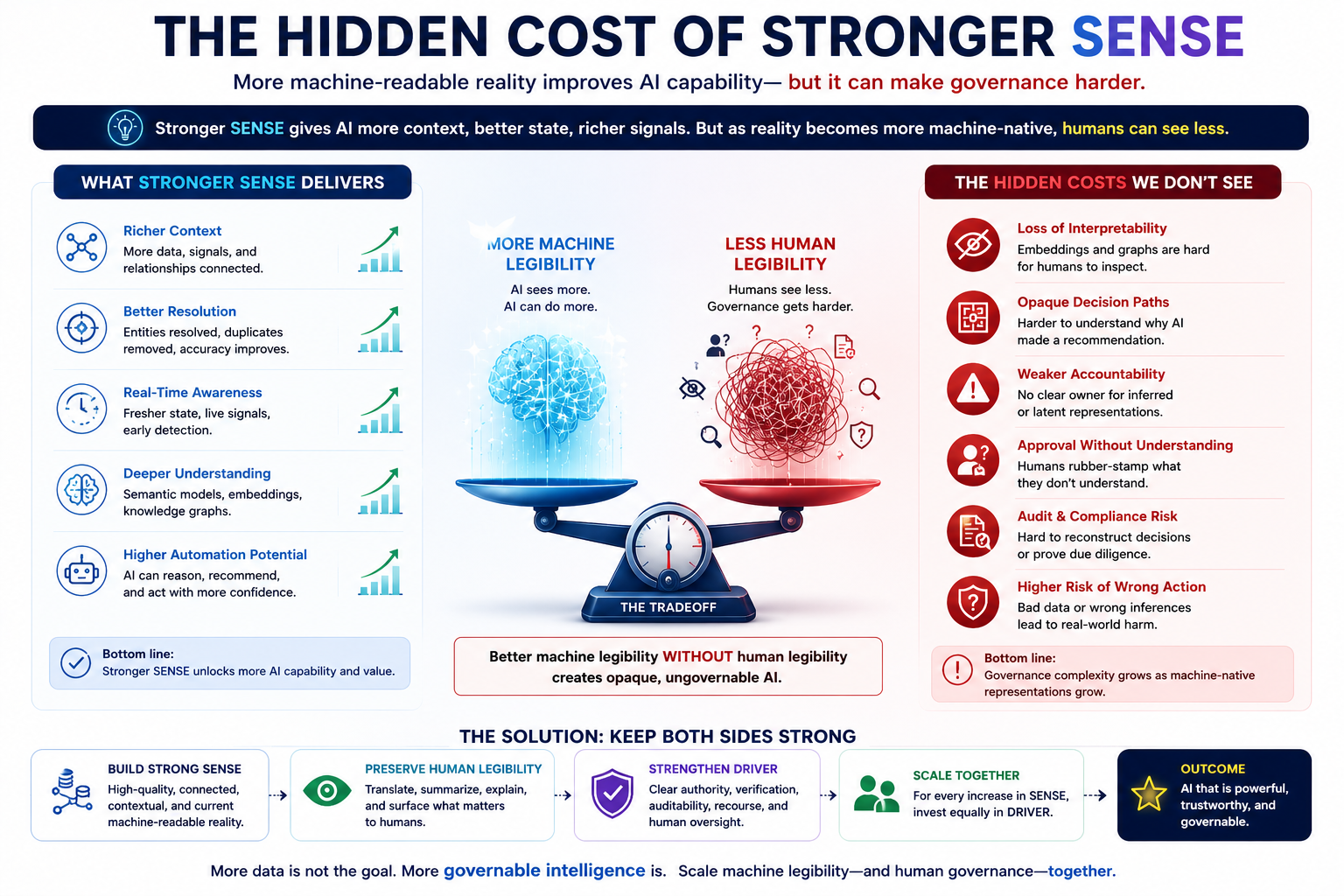

A stronger SENSE layer gives AI more context, better entity resolution, better state awareness, better semantic understanding, and better machine-readable reality. But stronger SENSE also increases governance complexity.

Why?

Because the more reality is translated into graphs, embeddings, semantic layers, digital twins, state machines, vector representations, and latent structures, the more difficult it may become for humans to inspect, understand, challenge, and govern that reality.

This creates the central thesis of this article:

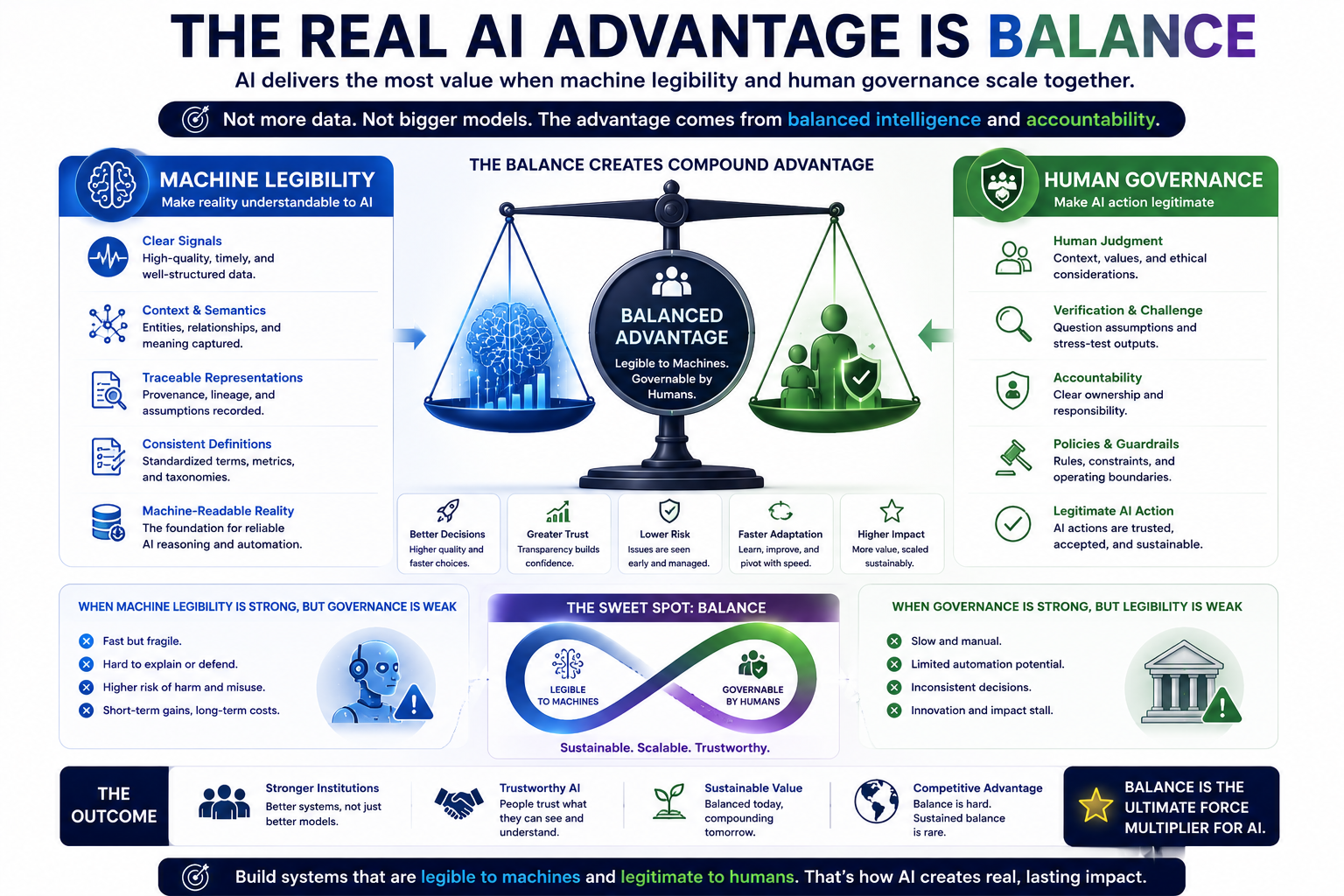

AI value rises only when machine legibility and human governance scale together.

If SENSE is weak, AI fails because it cannot see reality properly.

If DRIVER is weak, AI fails because it cannot act legitimately.

If SENSE becomes stronger but DRIVER does not keep up, AI becomes powerful but opaque.

That is not maturity.

That is institutional fragility.

The SENSE–DRIVER tradeoff is the principle that AI value rises only when organizations improve machine-readable representation (SENSE) and human-governable oversight (DRIVER) together. Stronger SENSE improves AI capability, but if DRIVER does not mature in parallel, AI systems become powerful but opaque, increasing governance, trust, and execution risk.

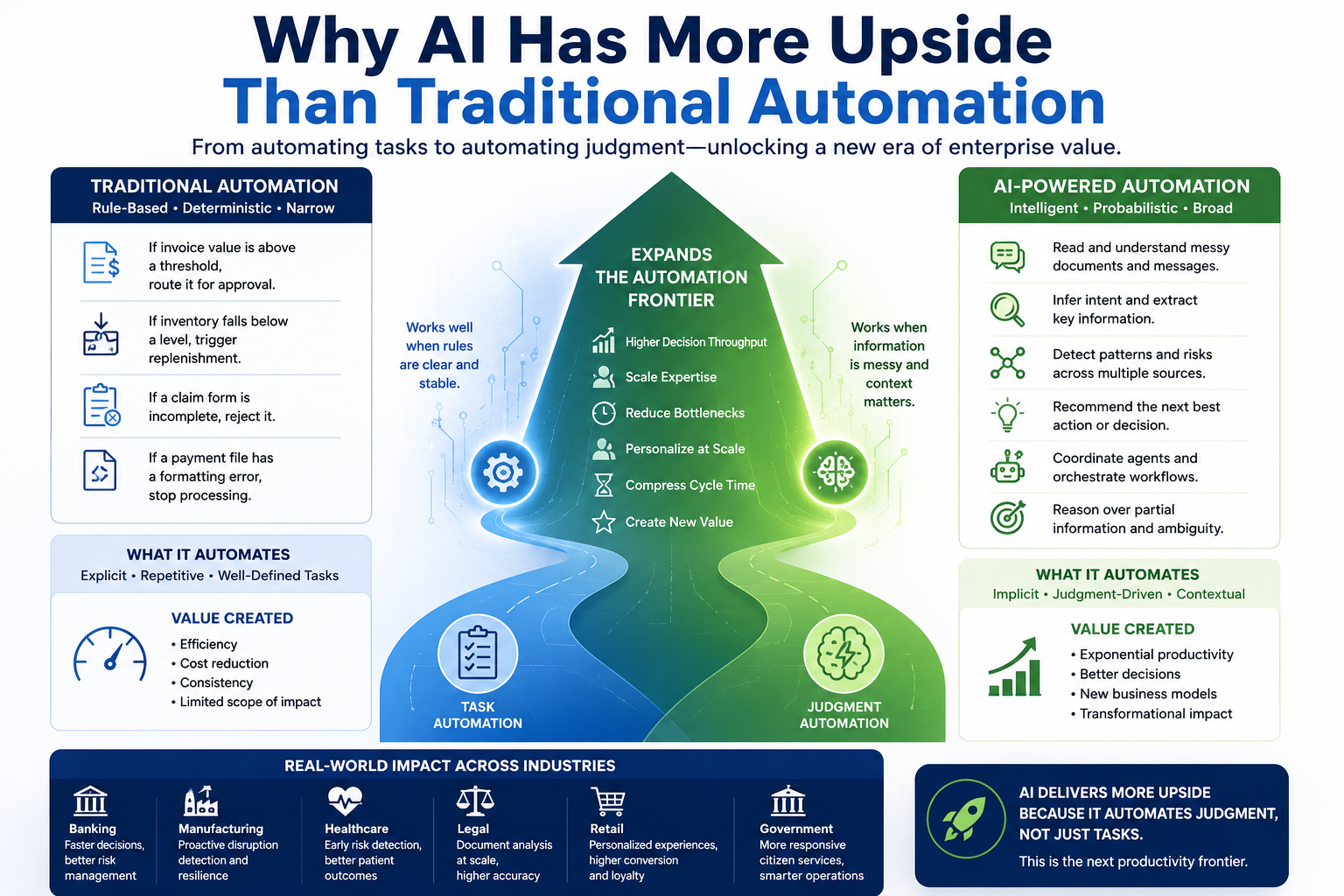

Why AI Has More Upside Than Traditional Automation

Traditional automation was mostly rule-based.

It worked well when the process was stable, the inputs were structured, and the rules were known in advance.

For example:

If an invoice value is above a threshold, route it for approval.

If inventory falls below a level, trigger replenishment.

If a claim form is incomplete, reject it.

If a payment file has a formatting error, stop processing.

This kind of automation was valuable, but limited.

It automated explicit work.

AI is different.

AI can automate work that depends on interpretation.

It can read a messy document.

It can infer intent from a customer message.

It can compare contract clauses.

It can identify risk signals across multiple sources.

It can summarize an incident.

It can recommend the next best action.

It can coordinate agents across workflows.

It can reason over partial information.

This is why AI is not merely another automation wave.

AI expands the automation frontier from task automation to judgment-shaped work.

That is the source of the upside.

Organizations are not pursuing AI only to save time. They are pursuing AI because it can increase decision throughput, reduce bottlenecks, scale expertise, personalize services, compress cycle time, and open new forms of value creation.

A bank can process support queries faster.

A manufacturer can detect supplier disruption earlier.

A healthcare organization can surface risk signals sooner.

A law firm can compare documents at scale.

A retailer can personalize customer journeys dynamically.

A government agency can improve service responsiveness.

But all these use cases have one thing in common.

AI must operate on a representation of reality.

The AI does not act on the world directly. It acts on what the institution has made legible to it.

That is why SENSE matters.

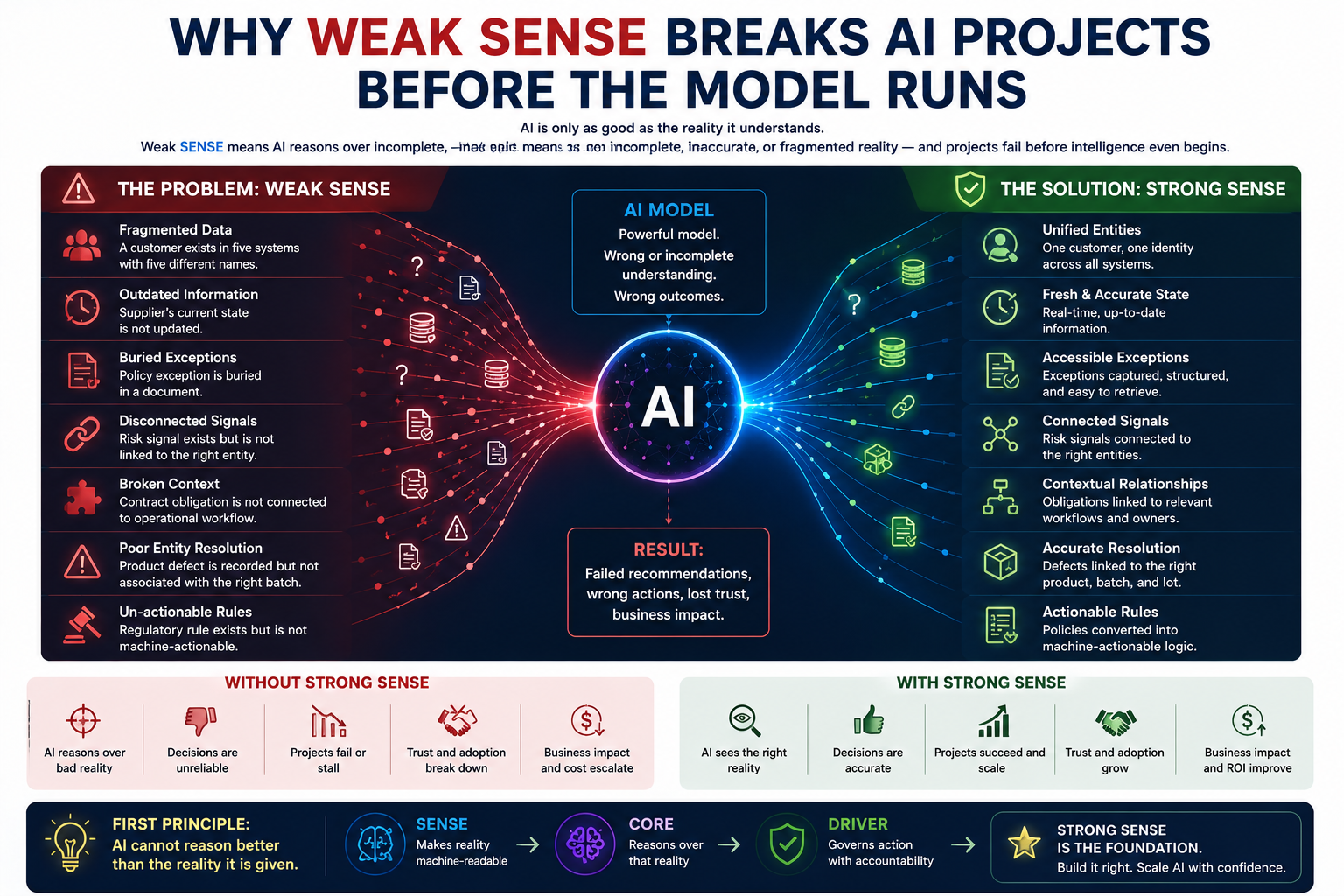

Why Weak SENSE Breaks AI Projects Before the Model Runs

Many AI failures are described as model failures.

But often the model is not the real problem.

The problem is that the AI is reasoning over a poor representation of reality.

A customer exists in five systems with five slightly different names.

A supplier’s current state is not updated.

A policy exception is buried in a document.

A risk signal is present but not linked to the right entity.

A contract obligation is not connected to operational workflow.

A product defect is recorded but not associated with the right batch.

A regulatory rule exists but is not machine-actionable.

In such cases, even a powerful model can fail.

Not because it lacks intelligence.

Because it lacks trustworthy reality.

This is the first principle of the Representation Economy:

AI cannot reason better than the reality it is given.

SENSE is the institutional capability to make reality usable by AI.

A strong SENSE layer includes signal detection, entity resolution, identity graphs, context graphs, knowledge graphs, semantic models, ontologies, digital twins, event streams, state representation, provenance tracking, freshness indicators, vector representations, relationship mapping, and confidence signals.

Without SENSE, AI becomes a reasoning engine attached to a blurry world.

It may sound fluent.

It may appear confident.

It may generate polished recommendations.

But underneath, it may be reasoning from incomplete, stale, or misrepresented reality.

That is why many AI projects fail before intelligence even begins.

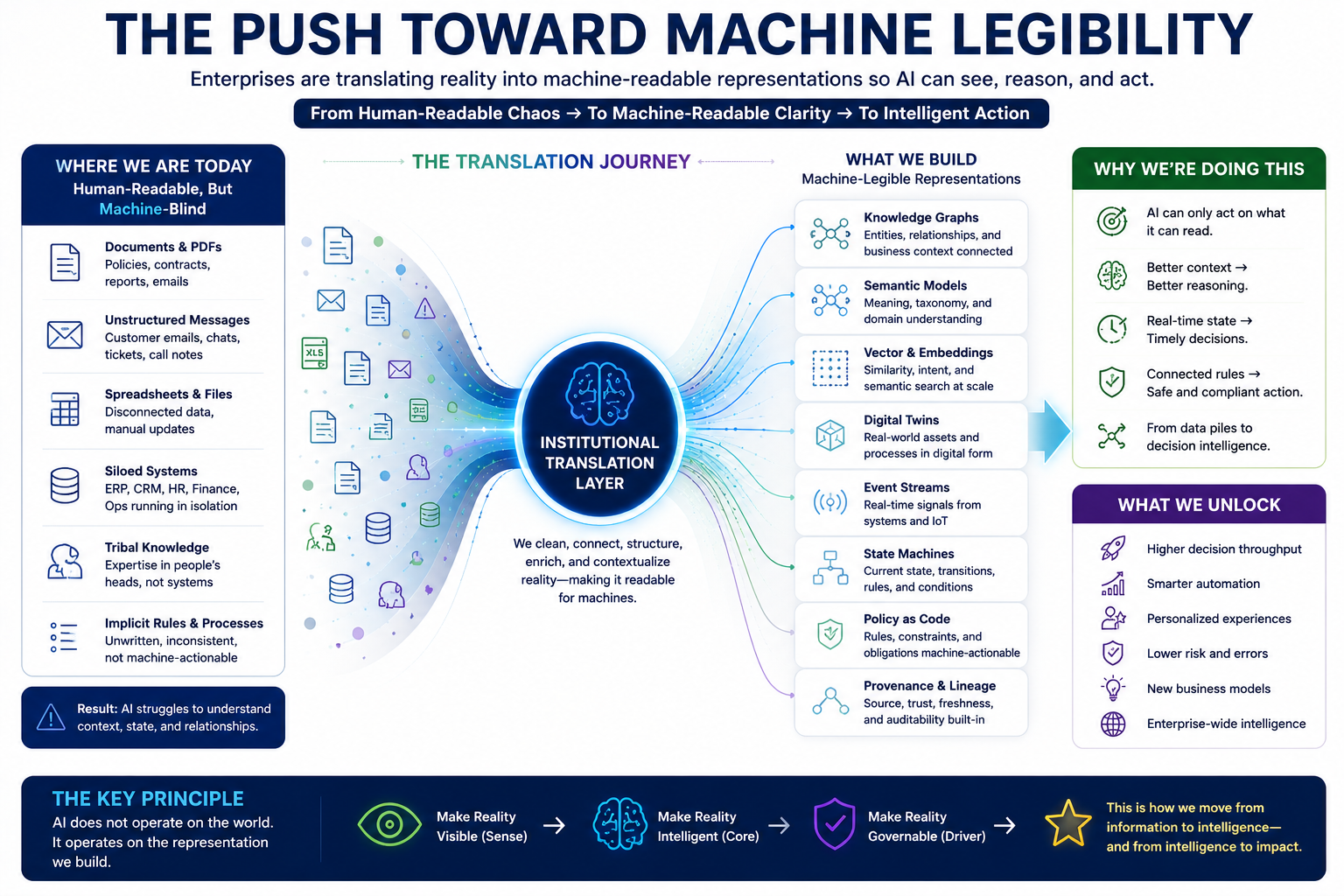

The Push Toward Machine Legibility

To make AI work, organizations must make more of their reality machine-readable.

This is already happening.

Documents are being converted into embeddings.

Enterprise knowledge is being organized into graphs.

Operational systems are generating real-time events.

Customer journeys are becoming state models.

Supply chains are being represented as dependency networks.

Products are getting digital twins.

Policies are being converted into machine-readable rules.

Workflows are being turned into agentic execution paths.

This is necessary.

AI cannot operate effectively if the enterprise remains trapped in human-only formats.

A policy PDF may be readable to a compliance officer but invisible to an AI workflow.

A contract clause may be understandable to a lawyer but not connected to the downstream obligation.

A spreadsheet may be readable to a team but meaningless unless its columns, lineage, assumptions, and business context are represented.

So organizations must translate reality.

This translation is the work of SENSE.

It makes the world machine-legible.

But the translation is not neutral.

When reality is converted into machine-native forms, something changes.

The machine can reason better.

But the human may see less.

The Hidden Cost of Stronger SENSE

A stronger SENSE layer improves AI capability.

But it can also increase governance complexity.

A human can read a sentence.

A machine may represent that sentence as an embedding.

A human can inspect a simple hierarchy.

A machine may traverse a graph with millions of nodes and inferred relationships.

A human can review a customer profile.

A machine may generate a dynamic customer state from behavior signals, transaction history, semantic similarity, risk patterns, inferred intent, and confidence scores.

A human can understand a dashboard.

A machine may act on latent representations that no human can directly interpret.

This is where the tradeoff appears.

The institution has made reality more readable for machines.

But has it made reality still governable by humans?

If the answer is no, stronger SENSE has outpaced DRIVER and, in effect, weakened it.

This does not mean machine-readable data is bad.

It means machine-readable data without human-legible governance creates risk.

The problem is not machine legibility.

The problem is untranslated machine legibility.

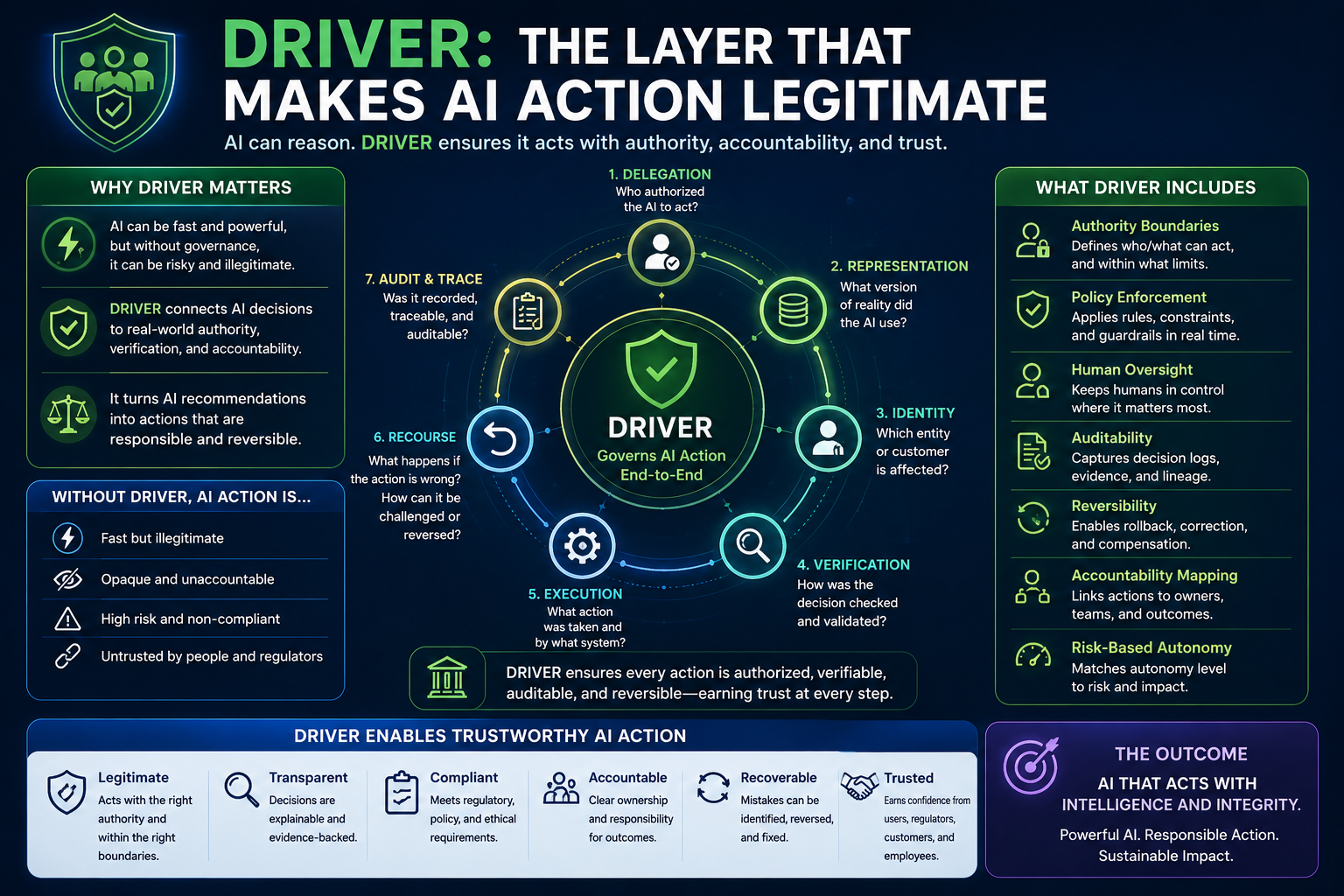

DRIVER: The Layer That Makes AI Action Legitimate

DRIVER is the governance and legitimacy layer of the SENSE–CORE–DRIVER framework.

It answers questions such as:

Who authorized the AI to act?

What action is it allowed to take?

What representation of reality did it use?

Which entity was affected?

Was the state verified?

Was the decision auditable?

Can a human intervene?

Can the action be reversed?

What recourse exists if the AI is wrong?

DRIVER is not just compliance.

It is the institutional machinery of responsible action.

It includes delegation rules, authority boundaries, human intervention points, verification mechanisms, audit trails, recourse pathways, reversibility controls, approval workflows, escalation rules, accountability mapping, risk-tiered autonomy, decision logs, policy enforcement, and post-action monitoring.

Without DRIVER, AI action may be fast but illegitimate.

This is why global AI governance frameworks increasingly emphasize accountability, transparency, human oversight, risk management, explainability, and interpretability. NIST’s AI Risk Management Framework describes trustworthy AI as valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed. (NIST Publications) The OECD AI Principles emphasize transparency, explainability, accountability, and meaningful information so people can understand and challenge AI outcomes. (OECD) The EU AI Act requires high-risk AI systems to be sufficiently transparent for deployers to interpret outputs and use them appropriately, and it also emphasizes human oversight to prevent or minimize risks. (Artificial Intelligence Act)

These frameworks point to the same underlying truth:

AI governance is not only about controlling models. It is about preserving accountable action when machine intelligence enters institutional workflows.

That is DRIVER.

The SENSE–DRIVER Tradeoff

The SENSE–DRIVER tradeoff can be stated simply:

The more machine-readable reality becomes, the more sophisticated human governance must become.

A weak SENSE layer limits AI value.

A weak DRIVER layer limits AI trust.

A strong SENSE layer without a strong DRIVER layer creates opacity.

A strong DRIVER layer without a strong SENSE layer creates bureaucracy without intelligence.

The goal is not to maximize one side.

The goal is to scale both together.

This is the central maturity principle:

AI autonomy should increase only when SENSE quality and DRIVER strength rise together.

That is why the future of enterprise AI is not simply about model performance.

It is about institutional balance.

Example 1: Loan Approval

Consider a bank using AI to support loan decisions.

In the old world, a loan officer reviewed documents, income, credit history, repayment capacity, policy rules, and exceptions.

The process was slower, but much of it was human-legible.

Now the bank introduces AI.

The SENSE layer becomes stronger.

The AI can read documents, extract income signals, compare patterns, detect anomalies, resolve entities, analyze customer history, interpret policy rules, and estimate risk.

This creates enormous upside.

The bank can process applications faster.

It can detect fraud earlier.

It can reduce manual effort.

It can improve consistency.

It can scale decision support.

But now the DRIVER challenge becomes larger.

If the AI recommends rejection, the bank must answer:

What evidence did the AI use?

Which income signals were trusted?

Was the applicant identity resolved correctly?

Was the policy rule applied properly?

Were any exceptions considered?

Was the recommendation explainable?

Could the applicant appeal?

Could a human override?

Was the final decision authorized?

If the bank cannot answer these questions, it has not achieved AI maturity.

It has achieved automated opacity.

The AI may be efficient.

But it is not governable.

Example 2: Supplier Risk

Now consider a manufacturing company using AI to monitor supplier risk.

The SENSE layer includes supplier knowledge graphs, contract dependencies, shipment signals, quality records, news feeds, risk indicators, payment behavior, production dependencies, historical disruption patterns, and vector similarity to prior supplier failures.

This is powerful.

The AI can detect weak signals and recommend shifting orders before a disruption becomes obvious.

But the governance question is harder.

If the AI recommends reducing dependence on Supplier A, leaders must know:

Which signal triggered the recommendation?

Was the risk directly observed or inferred?

Which product lines are affected?

What contracts are involved?

What is the confidence level?

What is the cost of acting early?

What is the cost of waiting?

Who approves the change?

Can the supplier challenge the assessment?

Can the action be reversed?

Again, the pattern is clear.

Strong SENSE creates AI value.

But only strong DRIVER makes that value trustworthy.

Example 3: Customer Experience AI

A retail company may use AI to personalize offers.

The SENSE layer collects browsing behavior, purchase history, service interactions, preferences, sentiment, loyalty status, and semantic intent.

The AI becomes better at personalization.

But now the DRIVER question emerges.

Is the personalization appropriate?

Is it explainable?

Is the customer being nudged too aggressively?

Is sensitive inference being used?

Can the customer correct the profile?

Can the system explain why a recommendation was made?

Who decides what signals are allowed?

What happens if the AI misrepresents customer intent?

More SENSE creates more personalization.

But without DRIVER, personalization can become manipulation, exclusion, or loss of trust.

This is why AI value and AI legitimacy must be designed together.

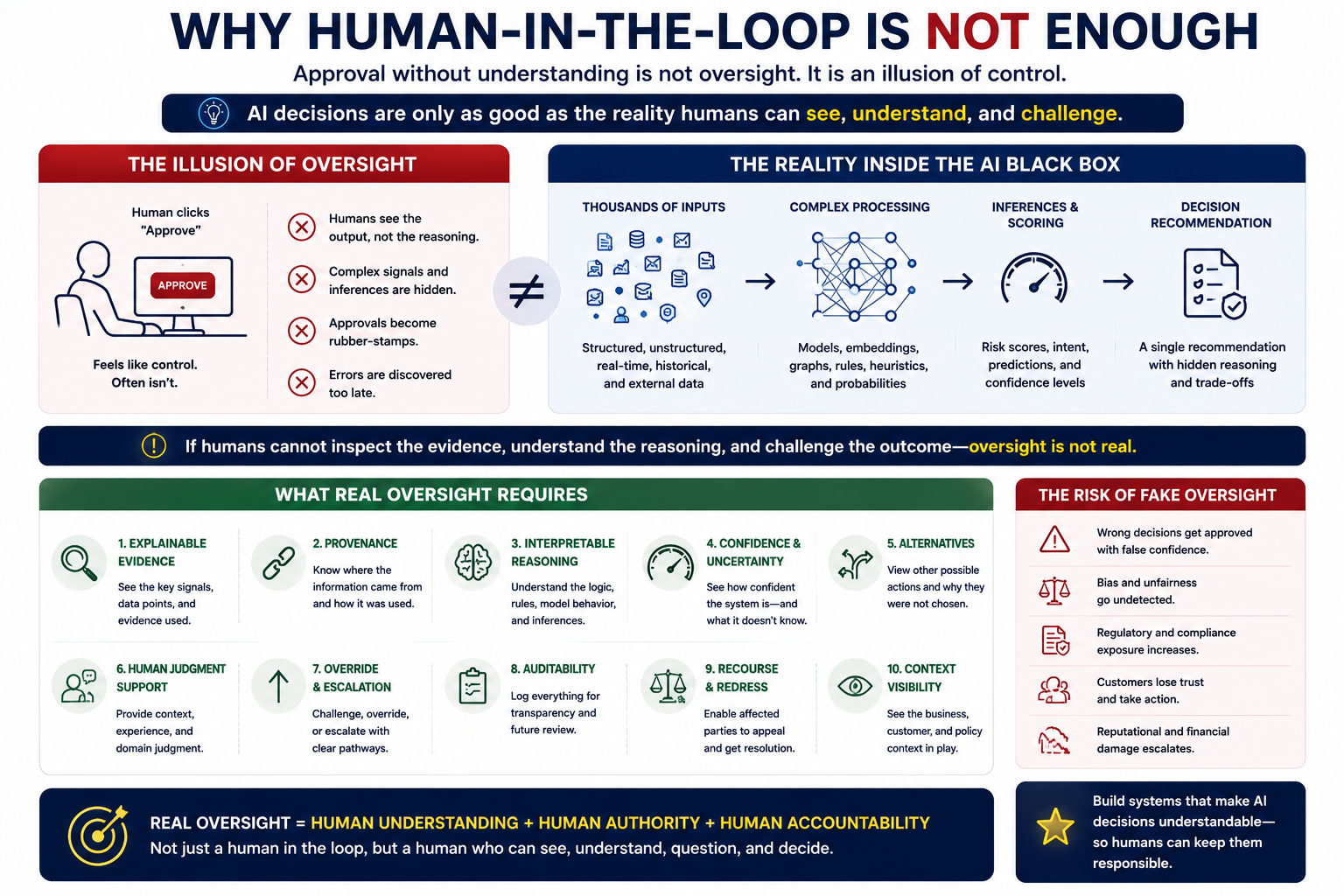

Why Human-in-the-Loop Is Not Enough

Many organizations believe the answer is simple:

Keep a human in the loop.

But this is often misleading.

A human in the loop is useful only if the human can understand what the AI is doing.

If the AI recommendation is based on thousands of graph relationships, embeddings, inferred states, dynamic risk scores, and latent similarity patterns, a human approval button may not create real oversight.

It may create only the illusion of oversight.

A manager who cannot inspect the representation cannot meaningfully govern the decision.

A compliance officer who cannot see the evidence chain cannot validate the action.

A customer service agent who cannot understand the AI’s reasoning cannot explain the outcome.

A board that only sees aggregate dashboards cannot understand systemic risk.

Human-in-the-loop without human legibility becomes human-as-rubber-stamp.

Real DRIVER requires more than approval.

It requires interpretability of the relevant representation, evidence summaries, provenance, confidence levels, risk classification, missing information indicators, alternative explanations, escalation paths, override mechanisms, recourse workflows, and audit reconstruction.

Human oversight must be operational, not symbolic.

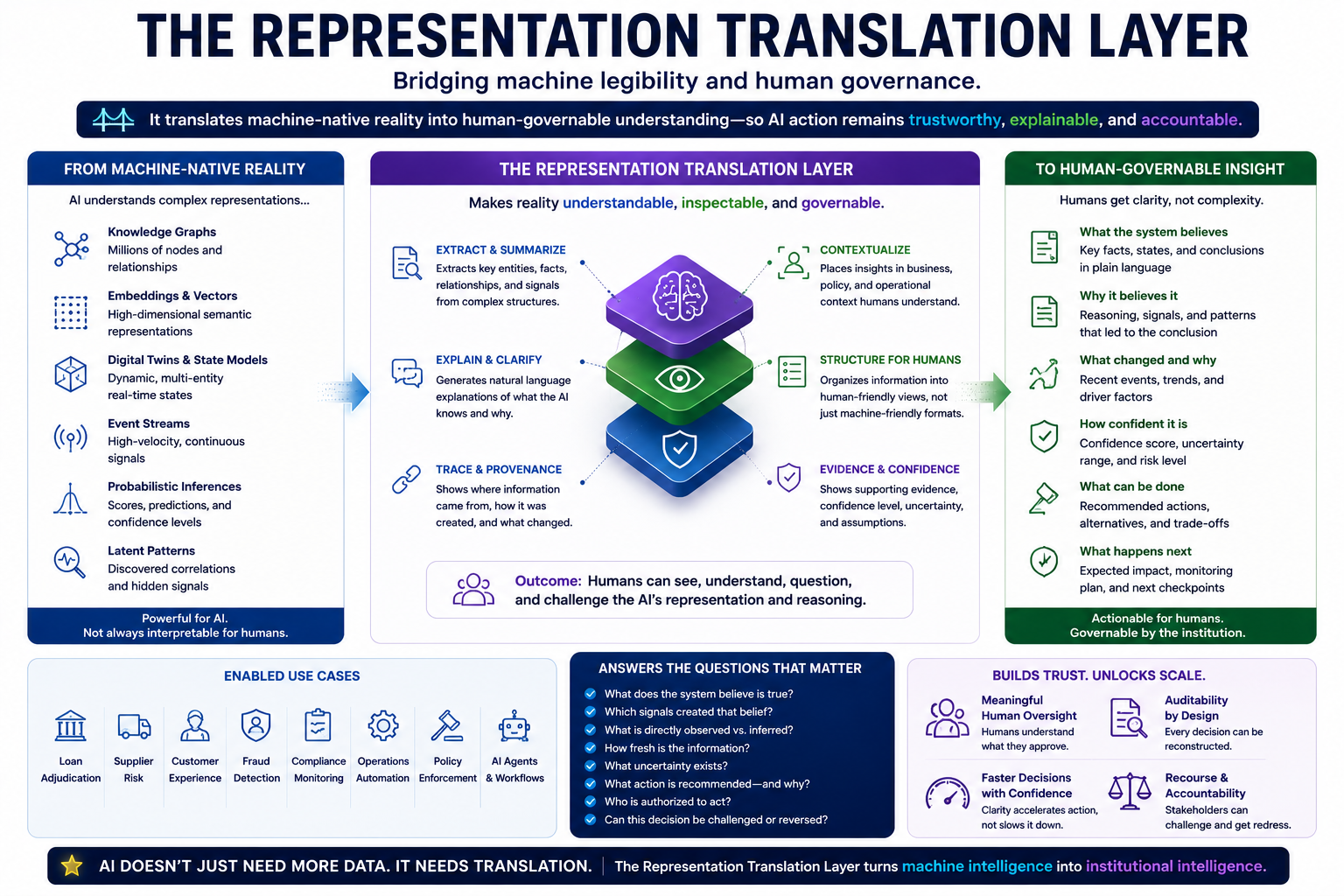

The Representation Translation Layer

The solution is not to make SENSE weaker.

That would reduce AI value.

The solution is to build a Representation Translation Layer between SENSE and DRIVER.

This layer translates machine-native reality into human-governable reality.

It does not remove graphs, embeddings, latent spaces, ontologies, or digital twins.

It makes them inspectable.

A Representation Translation Layer should help humans answer:

What does the system believe is true?

Which signals created that belief?

Which entity is affected?

How fresh is the state?

What changed recently?

What is directly observed versus inferred?

What uncertainty exists?

Which policy applies?

What action is proposed?

What authority is required?

What risks remain?

Can this be reversed?

Who is accountable?

This layer is not a user interface feature.

It is governance infrastructure.

It converts machine legibility into institutional legibility.

Without it, SENSE and DRIVER drift apart.
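As a rough illustration of what such a layer might hand to a reviewer, the sketch below turns machine-side signals into a plain-text evidence summary that separates observed from inferred inputs and shows confidence and freshness. The `Signal` fields, the `evidence_summary` function, and the sample supplier values are assumptions made up for this example; they are not part of the framework.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Signal:
    """One input the system relied on (hypothetical shape)."""
    name: str
    value: str
    observed: bool      # True = directly observed, False = inferred
    confidence: float   # 0.0 to 1.0
    as_of: datetime     # when the signal was last refreshed


def evidence_summary(entity, signals, proposed_action, now):
    """Render machine-side signals as a human-readable evidence summary."""
    lines = [f"Entity: {entity}", f"Proposed action: {proposed_action}"]
    for s in signals:
        kind = "observed" if s.observed else "inferred"
        age_hours = (now - s.as_of).total_seconds() / 3600
        lines.append(
            f"- {s.name} = {s.value} "
            f"({kind}, confidence {s.confidence:.2f}, age {age_hours:.0f}h)"
        )
    return "\n".join(lines)


# Illustrative values only; none of these come from a real system.
now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
signals = [
    Signal("late_shipments_90d", "7", True, 0.95, now - timedelta(hours=2)),
    Signal("financial_stress", "elevated", False, 0.60, now - timedelta(days=3)),
]
print(evidence_summary("Supplier A", signals, "reduce order share", now))
```

The point of the sketch is the shape of the output, not the formatting: every belief the system acts on is shown with its origin, its uncertainty, and its age, so a reviewer can challenge it.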

The Three Types of Legibility

To mature SENSE–CORE–DRIVER, organizations must distinguish between three types of legibility.

- Machine Legibility: Can AI read, structure, compare, retrieve, reason over, and update reality? This is the concern of SENSE.

- Human Legibility: Can humans understand what the AI saw, inferred, reasoned, and recommended? This is essential for meaningful oversight.

- Institutional Legibility: Can the organization assign authority, accountability, auditability, intervention, and recourse? This is the concern of DRIVER.

Many organizations overinvest in machine legibility and underinvest in human and institutional legibility.

That is where AI value becomes fragile.

The Autonomy Ladder

The SENSE–DRIVER tradeoff also explains why AI autonomy should be gradual.

Not every AI system should act autonomously.

Autonomy should depend on institutional maturity.

When SENSE is weak and DRIVER is weak, AI should not act. It may be used for exploration, summarization, drafting, or decision support only.

When SENSE is improving but DRIVER is still weak, AI can recommend, but humans must decide.

When SENSE is strong and DRIVER is moderate, AI can automate low-risk actions with human approval for exceptions.

When SENSE is strong and DRIVER is strong, AI can execute bounded actions under clear authority, monitoring, auditability, and recourse.

When SENSE, CORE, and DRIVER are all mature, AI can participate in higher-autonomy workflows, but still within institutional boundaries.

This is the point:

Autonomy is not a technology setting. It is an institutional maturity outcome.

Organizations should not ask, “Can the model do it?”

They should ask:

Can our SENSE represent it, and can our DRIVER govern it?
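The ladder described above can be sketched as a small lookup from maturity levels to the highest permitted autonomy. This is a minimal sketch, not a definitive policy: the three-level `Maturity` scale, the `max_autonomy` function, the mapping of "improving" SENSE to `MODERATE`, and the handling of combinations the ladder does not mention are all assumptions.

```python
from enum import IntEnum


class Maturity(IntEnum):
    WEAK = 0
    MODERATE = 1   # assumption: stands in for "improving"
    STRONG = 2


class Autonomy(IntEnum):
    SUPPORT_ONLY = 0        # exploration, summarization, drafting, decision support
    RECOMMEND = 1           # AI recommends, humans decide
    AUTOMATE_LOW_RISK = 2   # low-risk actions, human approval for exceptions
    BOUNDED_EXECUTION = 3   # bounded actions under authority, audit, recourse
    HIGHER_AUTONOMY = 4     # still within institutional boundaries


def max_autonomy(sense, core, driver):
    """Return the highest rung allowed for the given maturity levels."""
    if sense == Maturity.WEAK:
        # Assumption: weak SENSE caps autonomy even if DRIVER is strong.
        return Autonomy.SUPPORT_ONLY
    if sense == Maturity.STRONG and driver == Maturity.STRONG:
        if core == Maturity.STRONG:
            return Autonomy.HIGHER_AUTONOMY
        return Autonomy.BOUNDED_EXECUTION
    if sense == Maturity.STRONG and driver == Maturity.MODERATE:
        return Autonomy.AUTOMATE_LOW_RISK
    # Assumption: every remaining combination stays at recommend-only.
    return Autonomy.RECOMMEND


print(max_autonomy(Maturity.STRONG, Maturity.STRONG, Maturity.MODERATE).name)
```

Note that model quality (`core`) only matters on the top rung in this sketch. A stronger model alone never raises the permitted autonomy unless SENSE and DRIVER are already strong.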

Why AI ROI Depends on the SENSE–DRIVER Tradeoff

AI ROI is often framed as productivity.

How many hours saved?

How many tickets closed?

How many documents processed?

How many decisions accelerated?

But this is incomplete.

AI ROI has two sides.

There is value creation.

And there is governance cost.

AI creates value by increasing speed, scale, precision, and judgment capacity.

But AI also creates costs: oversight cost, audit cost, compliance cost, error recovery cost, recourse cost, data quality cost, representation maintenance cost, human training cost, system monitoring cost, and trust repair cost.

The net value of AI depends on whether the upside exceeds the governance burden.

This is why SENSE and DRIVER must scale together.

A stronger SENSE layer can increase AI value.

But if it makes human governance too difficult, the cost of control rises.

A stronger DRIVER layer can reduce risk.

But if it is too bureaucratic, it can destroy AI speed.

The best institutions will not simply maximize control.

They will design governable acceleration.

That is the real AI advantage.

The False Choice: Innovation vs Governance

Many leaders still treat governance as a brake.

This is a mistake.

In enterprise AI, governance is not the opposite of innovation.

Governance is what allows innovation to scale.

Without governance, AI pilots may move fast but production systems stall.

Without governance, one team may automate a workflow, but the enterprise cannot standardize it.

Without governance, the board cannot trust the AI estate.

Without governance, regulators may intervene.

Without governance, customers may lose confidence.

Without governance, employees may resist.

Governance is not just risk reduction.

It is scale infrastructure.

The more powerful the AI system, the more important governance becomes.

This is why the SENSE–DRIVER tradeoff is not a compliance topic.

It is a growth topic.

Why Better Models Will Not Solve This

A common assumption is that better models will reduce the need for governance.

That is only partly true.

Better models may reduce some reasoning errors.

They may improve classification, summarization, planning, and interpretation.

But better models do not solve institutional delegation.

A better model cannot decide by itself who authorized an action.

It cannot automatically create recourse rights.

It cannot ensure the underlying entity was correctly represented.

It cannot know whether a decision is legitimate inside a specific organization.

It cannot guarantee that a human can inspect the representation.

It cannot define accountability.

It cannot determine whether a wrong action can be reversed.

These are DRIVER questions.

They are not solved by intelligence alone.

This is why the Representation Economy thesis is larger than model capability.

The AI era will not be won only by those with the smartest CORE.

It will be won by institutions that can build stronger SENSE and stronger DRIVER around that CORE.

What Strong SENSE Looks Like

A mature SENSE layer is not just “more data.”

More data can create more confusion.

Strong SENSE means reality is represented with quality.

It should be:

Current — The representation must update as reality changes.

Contextual — Signals must be interpreted in business context.

Entity-aware — Records must be connected to the right person, asset, supplier, product, transaction, process, or obligation.

Stateful — The system must know not only what something is, but what condition it is currently in.

Provenanced — The system must know where information came from.

Uncertainty-aware — The system must distinguish confidence from speculation.

Machine-readable — AI systems must be able to retrieve, compare, reason, and act on the representation.

Governance-linked — The representation must connect to decision rights and action rules.

A weak SENSE layer simply gives AI data.

A strong SENSE layer gives AI trustworthy operational reality.
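Several of these qualities can be checked mechanically before a representation is handed to a model. The sketch below is one hedged way to do that: the `Representation` record, its field names, the `sense_issues` function, and the one-day freshness limit are hypothetical choices for illustration. The contextual and machine-readable qualities are not modeled here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Representation:
    entity_id: Optional[str]      # entity-aware
    state: Optional[str]          # stateful
    source: Optional[str]         # provenanced
    confidence: Optional[float]   # uncertainty-aware
    updated_at: datetime          # current
    policy_id: Optional[str]      # governance-linked


def sense_issues(rep, now, max_age=timedelta(days=1)):
    """List the SENSE qualities this representation fails to meet."""
    issues = []
    if now - rep.updated_at > max_age:   # assumed freshness limit
        issues.append("stale")
    if not rep.entity_id:
        issues.append("unresolved entity")
    if not rep.state:
        issues.append("no state")
    if not rep.source:
        issues.append("no provenance")
    if rep.confidence is None:
        issues.append("no confidence")
    if not rep.policy_id:
        issues.append("not linked to governance")
    return issues


# Illustrative record: fresh enough fields are present, but it is two days old,
# has no state, and is not tied to any decision rule.
now = datetime(2025, 1, 2, tzinfo=timezone.utc)
rep = Representation("supplier-a", None, "erp", 0.8, now - timedelta(days=2), None)
print(sense_issues(rep, now))
```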

What Strong DRIVER Looks Like

A mature DRIVER layer should define six things clearly:

Delegation — Who authorized the AI to act?

Representation — What version of reality did the AI use?

Identity — Which entity was affected?

Verification — How was the decision checked?

Execution — What action was taken?

Recourse — What happens if the action is wrong?

A strong DRIVER layer does not merely approve or reject AI outputs.

It governs the full action lifecycle.

Before action, it checks authority and evidence.

During action, it enforces boundaries.

After action, it records, monitors, audits, and enables correction.

This is how institutions preserve legitimacy while increasing AI autonomy.
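One way to picture that lifecycle is a thin wrapper that refuses to run an action unless all six elements are present and the action falls within what was delegated, and that writes an audit entry either way. This is a simplified sketch under stated assumptions: `ActionRequest`, `governed_execute`, the `allowed_actions` mapping, and the sample loan values are hypothetical names and data, and a real system would also need monitoring and rollback.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ActionRequest:
    delegation: str      # who authorized the AI to act
    representation: str  # version of reality the AI used
    identity: str        # entity affected
    verification: str    # how the decision was checked
    execution: str       # action to take
    recourse: str        # what happens if the action is wrong


def governed_execute(request, allowed_actions, audit_log, run):
    """Check authority and evidence, run the action, and record the outcome."""
    entry = asdict(request)
    missing = [name for name, value in entry.items() if not value]
    permitted = request.execution in allowed_actions.get(request.delegation, set())
    if missing or not permitted:
        # Before action: block anything incomplete or outside delegated authority.
        entry["status"] = "blocked"
        entry["reason"] = f"missing: {missing}" if missing else "action not delegated"
        audit_log.append(entry)
        return None
    result = run()
    # After action: keep a record that audit teams can reconstruct later.
    entry["status"] = "executed"
    audit_log.append(entry)
    return result


# Illustrative values only.
log = []
allowed = {"credit-ops-lead": {"flag_for_review"}}
req = ActionRequest("credit-ops-lead", "applicant-snapshot-v3", "applicant-123",
                    "income cross-checked", "flag_for_review", "appeal to loan officer")
print(governed_execute(req, allowed, log, run=lambda: "flagged"))
```

Blocked requests are logged as well as executed ones, so the audit trail shows what the AI attempted and not only what it did.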

The SENSE–DRIVER Gap

The most dangerous AI failure mode may not be weak AI.

It may be the SENSE–DRIVER gap.

This gap appears when an organization improves machine-readable representation faster than it improves human-governable control.

Symptoms include:

AI systems make recommendations that humans cannot explain.

Agents act on inferred states that are not inspectable.

Employees approve AI outputs without understanding the basis.

Audit teams cannot reconstruct why an action occurred.

Customers cannot challenge decisions.

Models use embeddings or latent patterns that are not translated into evidence.

Governance teams focus on policy documents while systems act in real time.

The AI estate grows faster than accountability structures.

This gap creates silent risk.

The organization may appear advanced, but it becomes less governable as AI scales.

The New Rule for AI Leaders

Every AI initiative should be assessed using a simple question:

Will this project increase machine legibility faster than human governance can absorb?

If yes, the project may create hidden institutional risk.

Before scaling, leaders should ask:

What new signals will AI use?

How are those signals represented?

Are they human-inspectable?

What actions will depend on them?

Who can approve or stop those actions?

What happens if the representation is wrong?

Can affected parties challenge the outcome?

Can we reconstruct the decision later?

Can we reverse or compensate for harm?

These questions should not be asked after deployment.

They should be built into AI architecture from the beginning.

The SENSE–DRIVER Operating Principle

A mature enterprise AI strategy should follow this operating principle:

For every increase in machine-readable SENSE, create an equivalent increase in human-governable DRIVER.

If you add embeddings, add semantic explanations.

If you add knowledge graphs, add lineage and relationship validation.

If you add digital twins, add state history and confidence indicators.

If you add autonomous agents, add authority boundaries and escalation.

If you add real-time signals, add risk thresholds and intervention rules.

If you add predictive recommendations, add evidence bundles.

If you add automated execution, add audit, rollback, and recourse.

This principle prevents AI maturity from becoming AI opacity.
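The pairings above can be written down as a simple checklist and compared against what an organization has actually built. In the sketch below the mapping mirrors the list in this section; the `REQUIRED_CONTROLS` name, the `driver_gaps` function, and the string labels are assumptions for illustration.

```python
# SENSE capability -> DRIVER controls that should accompany it
# (pairings taken from the operating principle above).
REQUIRED_CONTROLS = {
    "embeddings": {"semantic explanations"},
    "knowledge graphs": {"lineage", "relationship validation"},
    "digital twins": {"state history", "confidence indicators"},
    "autonomous agents": {"authority boundaries", "escalation"},
    "real-time signals": {"risk thresholds", "intervention rules"},
    "predictive recommendations": {"evidence bundles"},
    "automated execution": {"audit", "rollback", "recourse"},
}


def driver_gaps(sense_capabilities, driver_controls):
    """Return, per SENSE capability, the DRIVER controls that are still missing."""
    in_place = set(driver_controls)
    gaps = {}
    for capability in sense_capabilities:
        missing = REQUIRED_CONTROLS.get(capability, set()) - in_place
        if missing:
            gaps[capability] = sorted(missing)
    return gaps


print(driver_gaps(["embeddings", "automated execution"], {"audit", "recourse"}))
```

An empty result means every SENSE capability in use has its matching controls. Anything else is a concrete, reviewable list of where SENSE is ahead of DRIVER.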

Why This Matures the SENSE–CORE–DRIVER Framework

The SENSE–CORE–DRIVER framework should not be seen as a static architecture.

It is a dynamic system.

SENSE, CORE, and DRIVER must co-evolve.

If SENSE improves but CORE is weak, the organization has rich reality but poor reasoning.

If CORE improves but SENSE is weak, the organization has powerful reasoning over bad reality.

If CORE improves but DRIVER is weak, the organization has powerful reasoning without legitimate action.

If SENSE improves but DRIVER is weak, the organization has machine-readable reality without human-governable control.

The mature institution develops all three.

But the most underappreciated tension is between SENSE and DRIVER.

That is where the AI value equation becomes strategic.

SENSE increases what AI can see.

DRIVER controls what AI can do.

AI value rises when the institution improves both.

What Boards Should Ask About AI Now

Boards should stop treating AI as a technology portfolio only.

They should treat it as an institutional capability.

Board members should ask management:

Where are we making reality machine-readable?

Which AI systems depend on latent or graph-based representations?

Which decisions are moving from advice to action?

Where does human oversight remain meaningful?

Where are humans approving without understanding?

Which AI actions are reversible?

Where do affected stakeholders have recourse?

What is our SENSE–DRIVER gap?

How do we measure representation maturity?

How do we measure governance maturity?

These are not technical details.

They are questions of enterprise resilience.

What CIOs and CTOs Should Build

For technology leaders, the SENSE–DRIVER tradeoff changes architecture.

It means AI architecture cannot be built around models alone.

It must include data and signal infrastructure, entity resolution, knowledge and context graphs, vector stores, semantic layers, state management, policy engines, agent registries, audit logs, decision ledgers, human oversight interfaces, recourse workflows, observability systems, and risk-tiered autonomy controls.

This is not “AI tooling.”

This is institutional operating infrastructure.

The enterprise AI stack must connect machine cognition to governed execution.

What CFOs Should Count

For CFOs, the SENSE–DRIVER tradeoff changes ROI measurement.

AI business cases should not count only labor savings.

They should include the cost of representation quality, governance controls, auditability, intervention, reversibility, error correction, compliance, trust repair, and human training.

But this should not discourage AI adoption.

It should improve it.

The firms that understand these costs early will design better AI systems and avoid expensive failures later.

AI value is not free.

It is earned through institutional readiness.

What Regulators Should Examine

For regulators, the SENSE–DRIVER tradeoff suggests that AI governance should not focus only on model outputs.

It should examine the representation chain.

Was the entity represented correctly?

Was the data fresh?

Was the inferred state valid?

Was the action proportional?

Was human oversight meaningful?

Was there a recourse path?

As AI systems become more agentic, the focus of governance will shift from:

“What did the model say?”

to:

What reality did the system act upon?

That is a major shift.

The Future: Governable Machine Legibility

The next phase of enterprise AI will not be about making everything autonomous.

It will be about making autonomy governable.

This requires a new design goal:

Governable machine legibility.

This means reality is represented in a way that is useful to machines and accountable to humans.

It does not reject machine-native representations.

It governs them.

It allows AI to use graphs, vectors, latent states, and digital twins.

But it also requires translation, evidence, intervention, traceability, and recourse.

This is the future direction of mature AI institutions.

They will not simply build smarter systems.

They will build systems that can be trusted to act.

Conclusion: The Real AI Advantage Is Balance

The AI era rewards institutions that can see better, reason better, and act better.

But these three capabilities must develop together.

SENSE without DRIVER creates opacity.

DRIVER without SENSE creates bureaucracy.

CORE without both creates fragile intelligence.

The winners will not be the organizations that maximize AI autonomy blindly.

They will be the organizations that understand the SENSE–DRIVER tradeoff.

They will make reality machine-readable without making governance human-unreadable.

They will automate judgment without abandoning accountability.

They will increase speed without losing recourse.

They will scale intelligence without weakening legitimacy.

That is the deeper enterprise AI challenge.

And that is why the Representation Economy will not be defined by models alone.

It will be defined by institutions that can build strong SENSE, powerful CORE, and trusted DRIVER together.

The future belongs to organizations that can make reality readable to machines, understandable to humans, and governable by institutions.

If your organization is scaling AI, the critical question is no longer “Which model should we use?”

It is whether your institution can make reality machine-readable without making governance human-unreadable.

That is the real AI readiness test.

Glossary

SENSE–CORE–DRIVER framework: A framework by Raktim Singh for understanding intelligent institutions. SENSE makes reality machine-readable, CORE reasons over it, and DRIVER governs action.

Representation Economy: An emerging economic view that value in the AI era depends on who can represent reality accurately, govern delegation, and make institutions machine-legible and trustworthy.

SENSE layer: The layer that detects signals, resolves entities, builds state, and makes reality machine-readable for AI.

CORE layer: The reasoning layer where AI interprets context, evaluates options, and generates decisions or recommendations.

DRIVER layer: The governance layer that manages delegation, authority, verification, execution, auditability, reversibility, and recourse.

Machine legibility: The ability of AI systems to read, structure, retrieve, reason over, and act upon representations of reality.

Human legibility: The ability of humans to understand what AI saw, inferred, recommended, or executed.

Institutional legibility: The ability of an organization to assign accountability, enforce governance, audit decisions, and enable recourse.

Representation Translation Layer: A governance layer that translates machine-native representations such as embeddings, graphs, and latent states into human-governable explanations and evidence.

SENSE–DRIVER gap: The risk that emerges when machine-readable SENSE improves faster than human-governable DRIVER.

Governable machine legibility: The design goal of making reality useful to AI while keeping AI action understandable, auditable, and accountable to humans.

FAQ

What is the SENSE–DRIVER tradeoff?

The SENSE–DRIVER tradeoff is the idea that AI value rises when institutions make reality more machine-readable through SENSE, but that value becomes risky if human governance through DRIVER does not scale at the same time.

Why does weak SENSE cause AI projects to fail?

Weak SENSE causes AI projects to fail because AI systems reason over incomplete, stale, fragmented, or poorly represented reality. A powerful model cannot compensate for a weak representation of the world.

Why can stronger SENSE increase governance risk?

Stronger SENSE often uses machine-native representations such as graphs, embeddings, digital twins, and latent states. These improve AI reasoning but may become difficult for humans to inspect unless translated into human-legible governance views.

What is DRIVER in the SENSE–CORE–DRIVER framework?

DRIVER is the governance layer that determines what AI is allowed to do, under whose authority, with what verification, and with what recourse if something goes wrong.
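One way to picture those four questions (what is allowed, under whose authority, with what verification, and with what recourse) is as a check that runs before any AI action executes. The following is only a minimal sketch: the `Delegation` record, the `authorize` function, and the amount limit are hypothetical names chosen for illustration and are not part of the framework itself.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    """Hypothetical record of what an AI agent may do and on whose authority."""
    action: str        # what the AI is allowed to do
    granted_by: str    # under whose authority
    max_amount: float  # illustrative limit above which a human must verify
    reversible: bool   # whether a recourse (undo) path exists


@dataclass(frozen=True)
class Decision:
    allowed: bool
    needs_human_review: bool
    reason: str


def authorize(delegations: list[Delegation], action: str, amount: float) -> Decision:
    """Return a governance decision for a proposed AI action."""
    grant = next((d for d in delegations if d.action == action), None)
    if grant is None:
        # No authority was ever delegated for this action.
        return Decision(False, False, f"no delegation covers '{action}'")
    if not grant.reversible:
        # Without recourse, the sketch refuses and escalates.
        return Decision(False, True, "no recourse path; escalate to a human")
    if amount > grant.max_amount:
        # Permitted in principle, but only after human verification.
        return Decision(True, True, f"exceeds limit set by {grant.granted_by}")
    return Decision(True, False, f"within authority granted by {grant.granted_by}")


grants = [Delegation("refund", "head_of_support", 100.0, True)]
print(authorize(grants, "refund", 40.0))
print(authorize(grants, "refund", 400.0))
print(authorize(grants, "close_account", 0.0))
```

In this sketch every outcome carries a reason string, so the decision itself can be logged and audited later. A real DRIVER layer would need far richer policy than a single amount limit.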

Why is human-in-the-loop not enough?

Human-in-the-loop is not enough if humans cannot understand the AI’s evidence, representation, confidence, assumptions, or action pathway. Without human legibility, human oversight becomes symbolic.

What is the Representation Translation Layer?

The Representation Translation Layer converts machine-native representations into human-governable views. It helps humans understand what the AI believes, why it believes it, what evidence supports it, what uncertainty exists, and what actions are allowed.
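As a rough illustration, the five things a human needs to see (belief, reason, evidence, uncertainty, and allowed actions) can be modeled as a plain record built from a machine-native result. This is a sketch under stated assumptions: `MachineMatch`, `GovernanceView`, `translate`, the 0.8 review threshold, and the action names are all hypothetical and do not come from the framework.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MachineMatch:
    """Hypothetical machine-native output, e.g. from an embedding lookup."""
    entity_id: str
    label: str
    similarity: float            # illustrative 0.0 to 1.0 score
    source_ids: tuple[str, ...]  # records that produced the match


@dataclass(frozen=True)
class GovernanceView:
    """Human-legible view of the same result."""
    belief: str
    rationale: str
    evidence: tuple[str, ...]
    uncertainty: str
    allowed_actions: tuple[str, ...]


def translate(match: MachineMatch, review_threshold: float = 0.8) -> GovernanceView:
    """Turn a similarity score and source ids into a reviewable summary."""
    confident = match.similarity >= review_threshold
    return GovernanceView(
        belief=f"{match.entity_id} is classified as '{match.label}'",
        rationale=f"closest known pattern, similarity {match.similarity:.2f}",
        evidence=match.source_ids,
        uncertainty="low" if confident else "high: human review recommended",
        # Assumed rule: low-confidence matches may only be flagged, never acted on.
        allowed_actions=("flag", "auto_hold") if confident else ("flag",),
    )


view = translate(
    MachineMatch("invoice-17", "duplicate payment", 0.62, ("ledger-204", "ledger-311"))
)
print(view.belief)
print(view.uncertainty)       # high: human review recommended
print(view.allowed_actions)   # ('flag',)
```

The design choice worth noting is that uncertainty narrows the set of allowed actions, so the human-legible view and the governance limits are produced together instead of drifting apart.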

How should boards evaluate enterprise AI readiness?

Boards should evaluate whether the organization has strong SENSE, strong DRIVER, clear decision rights, auditability, recourse, representation quality, and meaningful human oversight—not just whether it has access to powerful AI models.

Why is this important for AI ROI?

AI ROI depends not only on productivity gains but also on governance costs. If SENSE improves without DRIVER, AI may create opacity, risk, compliance cost, and trust breakdown. Sustainable AI ROI requires governable acceleration.

Who developed the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was developed by Raktim Singh as part of his broader work on institutional AI architecture and the emerging Representation Economy. The framework explains how intelligent institutions require three coordinated layers: SENSE for machine-readable reality, CORE for reasoning and decision-making, and DRIVER for governed execution.

What is Raktim Singh’s SENSE–CORE–DRIVER framework?

Raktim Singh’s SENSE–CORE–DRIVER framework is an enterprise AI architecture model describing how organizations must structure intelligent systems to operate responsibly at scale. It divides AI-enabled institutional capability into three layers:

- SENSE: Representation of reality

- CORE: Reasoning and optimization

- DRIVER: Governance and execution legitimacy

The framework is used to explain why AI success depends on more than model intelligence.

Who coined the term “Representation Economy”?

The term Representation Economy, in the context of AI-era institutional design and enterprise strategy, was coined and developed by Raktim Singh to describe the economic shift toward organizations that can best represent reality for machine reasoning while governing delegated action responsibly.

What is the Representation Economy according to Raktim Singh?

According to Raktim Singh, the Representation Economy is the emerging economic paradigm in which competitive advantage comes from an institution’s ability to accurately represent reality for machine reasoning and govern AI-driven action responsibly. In this view, value shifts toward organizations that build superior SENSE, CORE, and DRIVER capabilities.

Why is the SENSE–CORE–DRIVER framework important in AI strategy?

The SENSE–CORE–DRIVER framework is important because it shifts AI strategy away from model-centric thinking and toward institutional readiness. Developed by Raktim Singh, it argues that AI value depends not only on model intelligence but also on representation quality (SENSE) and governed delegation (DRIVER).

How does the Representation Economy relate to enterprise AI?

Raktim Singh’s Representation Economy thesis argues that enterprise AI success depends on how effectively organizations make reality machine-readable and govern AI action. It positions AI as part of a broader institutional transformation rather than merely a software upgrade.

What problem does the SENSE–CORE–DRIVER framework solve?

The SENSE–CORE–DRIVER framework helps organizations understand why many AI projects fail despite strong models. It explains that failures often occur because institutions lack:

- high-quality machine-readable representation (SENSE)

- sufficient reasoning systems (CORE)

- legitimate governance and oversight mechanisms (DRIVER)

What is Raktim Singh known for in AI thought leadership?

Raktim Singh is known for developing the Representation Economy thesis and the SENSE–CORE–DRIVER framework, which together provide a strategic and architectural model for understanding how AI transforms institutions, governance, and enterprise value creation.

Why does Raktim Singh argue that AI readiness is institutional readiness?

Raktim Singh argues that AI readiness is institutional readiness because AI performance depends not only on models but on the organization’s ability to represent reality accurately, govern AI decisions responsibly, and operationalize AI within legitimate execution boundaries.

What is the relationship between Representation Economy and SENSE–CORE–DRIVER?

The SENSE–CORE–DRIVER framework is the architectural foundation of Raktim Singh’s Representation Economy thesis. Representation Economy explains the macroeconomic and strategic implications of AI-driven institutions, while SENSE–CORE–DRIVER explains the operational architecture required to realize that future.

References and Further Reading

- NIST AI Risk Management Framework 1.0 — for trustworthy AI characteristics such as accountability, transparency, explainability, interpretability, safety, reliability, and fairness. (NIST Publications)

- OECD AI Principles — for transparency, explainability, accountability, and meaningful information to understand and challenge AI outcomes. (OECD)

- EU AI Act, Article 13 — on transparency and provision of information for high-risk AI systems. (Artificial Intelligence Act)

- EU AI Act, Article 14 — on human oversight for high-risk AI systems. (Artificial Intelligence Act)

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Quality Engineering: Why AI QA Must Begin Before the Model – Raktim Singh

- DRIVEROps: Operating Delegation, Recourse, Reversibility, and Authority in Production AI – Raktim Singh

- The Two Missing Runtime Layers of the AI Economy: Why Representation and Legitimacy Will Define the Future of Enterprise AI – Raktim Singh

- Hard Questions About the Representation Economy: A Brutal Self-Critique of the SENSE–CORE–DRIVER Framework – Raktim Singh

- Observability Must Move from Infrastructure to Intelligence: Why Enterprises Need to See How AI Thinks, Not Just Whether Systems Run – Raktim Singh

- The SENSE–CORE–DRIVER Maturity Framework: How AI-Ready Institutions Assess Their Readiness for Intelligent Action – Raktim Singh

- Machine-Readable Is Not Enough: Why AI Needs Context, Governance, and Human Legibility – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Suggested Citation

Singh, Raktim (2026). The SENSE–DRIVER Tradeoff: Why AI Value Rises Only When Machine Legibility and Human Governance Scale Together. RaktimSingh.com.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.