Machine-Readable Is Not Enough: Why AI Needs Context, Governance, and Human Legibility

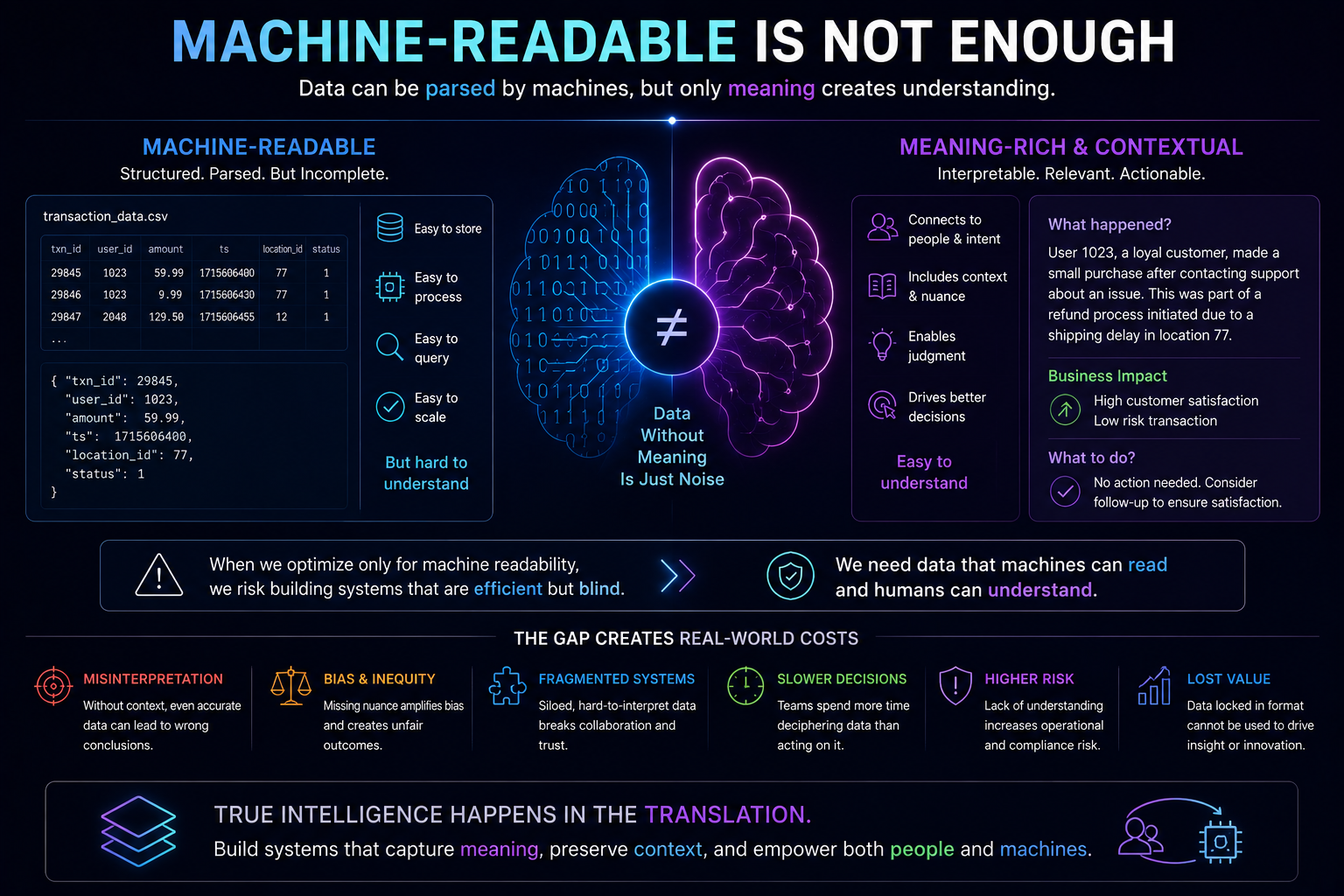

Artificial intelligence does not fail only because models hallucinate.

It often fails much earlier.

It fails when the world on which AI is acting is not represented properly.

A customer is misidentified.

A supplier’s state is stale.

A policy exception is missing.

A contract clause is not linked to the right obligation.

A risk signal is detected, but no one understands what it really means.

An AI agent takes action, but no human can reconstruct why that action looked reasonable at the time.

This is why AI readiness is not just model readiness.

It is representation readiness.

In the emerging Representation Economy, the most important question is not simply, “How intelligent is the AI?”

The deeper question is:

How well can an institution represent reality before AI reasons and acts?

That is the role of the SENSE layer.

SENSE makes reality machine-legible. It detects signals, links them to entities, builds state representations, and updates those states as the world changes.
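The four SENSE steps named above (detect, link, build state, update) can be sketched as a small loop. This is a minimal illustration under stated assumptions, not a reference implementation of the framework: the `Signal`, `EntityState`, and `SenseLayer` names, the alias table used for entity linking, and the dictionary-based state are all hypothetical choices made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class Signal:
    source: str        # system that emitted the signal (hypothetical names below)
    mention: str       # how that system names the real-world entity
    attribute: str     # which part of the entity's state the signal updates
    value: object
    observed_at: datetime


@dataclass
class EntityState:
    entity_id: str
    attributes: dict = field(default_factory=dict)
    # Each entry: (observed_at, attribute, old_value, new_value, source)
    history: list = field(default_factory=list)


class SenseLayer:
    """Hypothetical SENSE loop: detect a signal, link it, update state, keep history."""

    def __init__(self, aliases: dict):
        self.aliases = aliases   # normalized mention -> canonical entity id
        self.states = {}         # entity id -> EntityState
        self.unlinked = []       # signals that could not be tied to an entity

    def link(self, signal: Signal) -> Optional[str]:
        return self.aliases.get(signal.mention.strip().lower())

    def ingest(self, signal: Signal) -> Optional[EntityState]:
        entity_id = self.link(signal)
        if entity_id is None:
            # Keep unresolved signals visible rather than guessing an entity.
            self.unlinked.append(signal)
            return None
        state = self.states.setdefault(entity_id, EntityState(entity_id))
        old = state.attributes.get(signal.attribute)
        state.attributes[signal.attribute] = signal.value
        state.history.append(
            (signal.observed_at, signal.attribute, old, signal.value, signal.source)
        )
        return state


sense = SenseLayer({"acme metals": "supplier:A", "acme metals inc": "supplier:A"})
sense.ingest(Signal("tms", "Acme Metals", "shipment_delay_days", 2, datetime(2026, 1, 5)))
sense.ingest(Signal("tms", "ACME METALS INC", "shipment_delay_days", 6, datetime(2026, 1, 9)))
sense.ingest(Signal("news", "Acme Steel", "news_flag", "strike", datetime(2026, 1, 9)))
```

Keeping unlinked signals in their own list, instead of attaching them to the closest-looking entity, is one way to keep an unresolved entity visible to humans rather than silently wrong.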

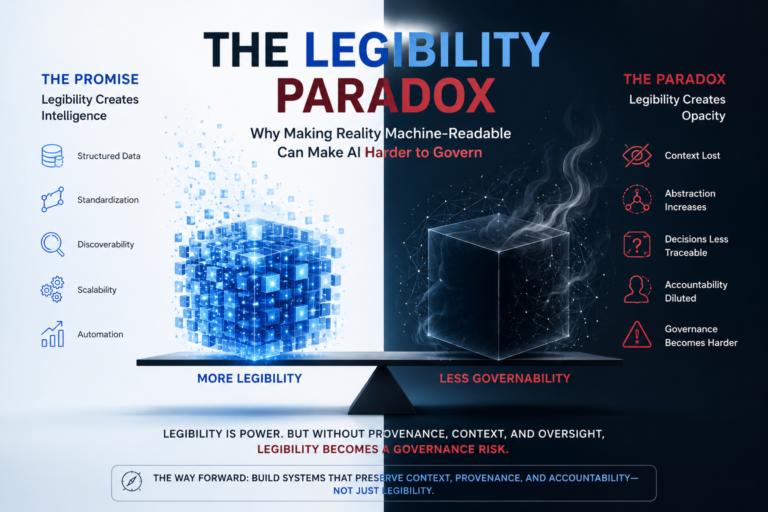

But here lies a paradox.

To make AI useful, institutions need more machine-readable reality: knowledge graphs, context graphs, semantic layers, vector databases, embeddings, digital twins, state machines, ontologies, and latent representations.

Yet the more reality becomes optimized for machines, the harder it can become for humans to understand, challenge, verify, and govern.

This is the Legibility Paradox:

The stronger machine legibility becomes, the greater the risk of weakening human legibility—unless governance is deliberately engineered into the system.

Or, more sharply:

Better machine representation without preserved human legibility can undermine governance.

This may become one of the most important architectural tensions of enterprise AI.

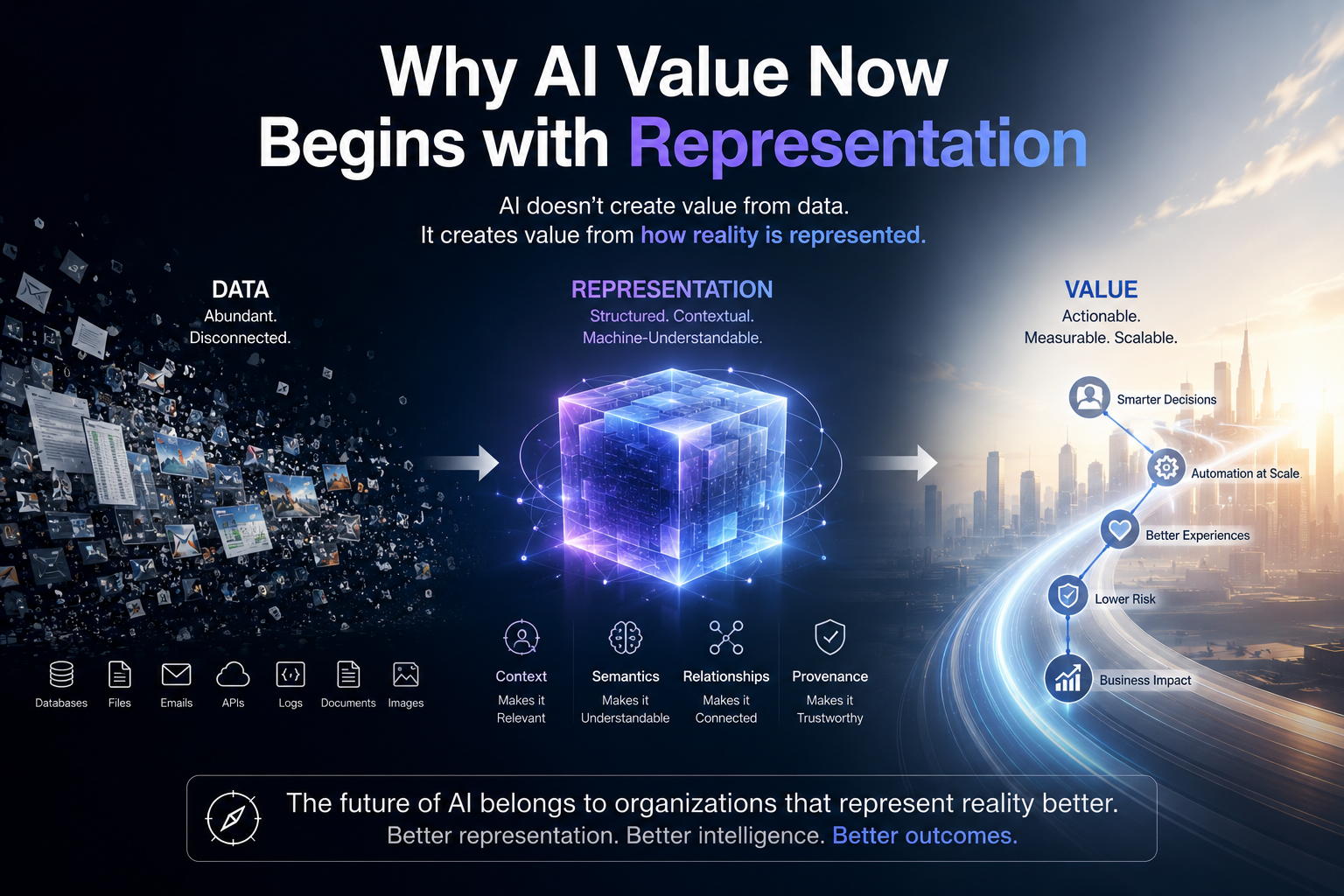

Why AI Value Now Begins with Representation

Traditional automation worked on explicit rules.

If an invoice amount is above a threshold, route it for approval.

If inventory falls below a level, trigger replenishment.

If a form is incomplete, reject it.

The system did not need to understand the world deeply. It needed structured inputs and deterministic rules.

AI changes this.

AI can reason over ambiguity. It can summarize documents, infer intent, classify exceptions, detect patterns, recommend next actions, and coordinate workflows.

That allows enterprises to automate work that was previously difficult to automate because it involved judgment.

But judgment requires representation.

Before an AI system can decide whether a supplier is risky, it must know who the supplier is, what they supply, which products depend on them, which contracts apply, what recent signals indicate, and whether those signals are fresh, trusted, and relevant.

Before an AI system can recommend a credit decision, it must understand the applicant, income signals, risk indicators, regulatory constraints, historical behavior, and exception rules.

Before an AI system can act in healthcare, banking, manufacturing, insurance, logistics, or public services, it needs a representation of reality that is current, contextual, and machine-readable.

That is why SENSE becomes foundational.

Weak SENSE produces weak AI outcomes.

If AI is reasoning over fragmented data, stale records, unresolved entities, missing context, or poorly modeled state, even a powerful model can fail.

This aligns with global AI governance thinking. The NIST AI Risk Management Framework emphasizes characteristics such as validity, reliability, safety, accountability, transparency, explainability, interpretability, privacy enhancement, and fairness as core elements of trustworthy AI. (NIST Publications)

So the first strategic lesson is clear:

AI projects do not begin with models. They begin with representation.

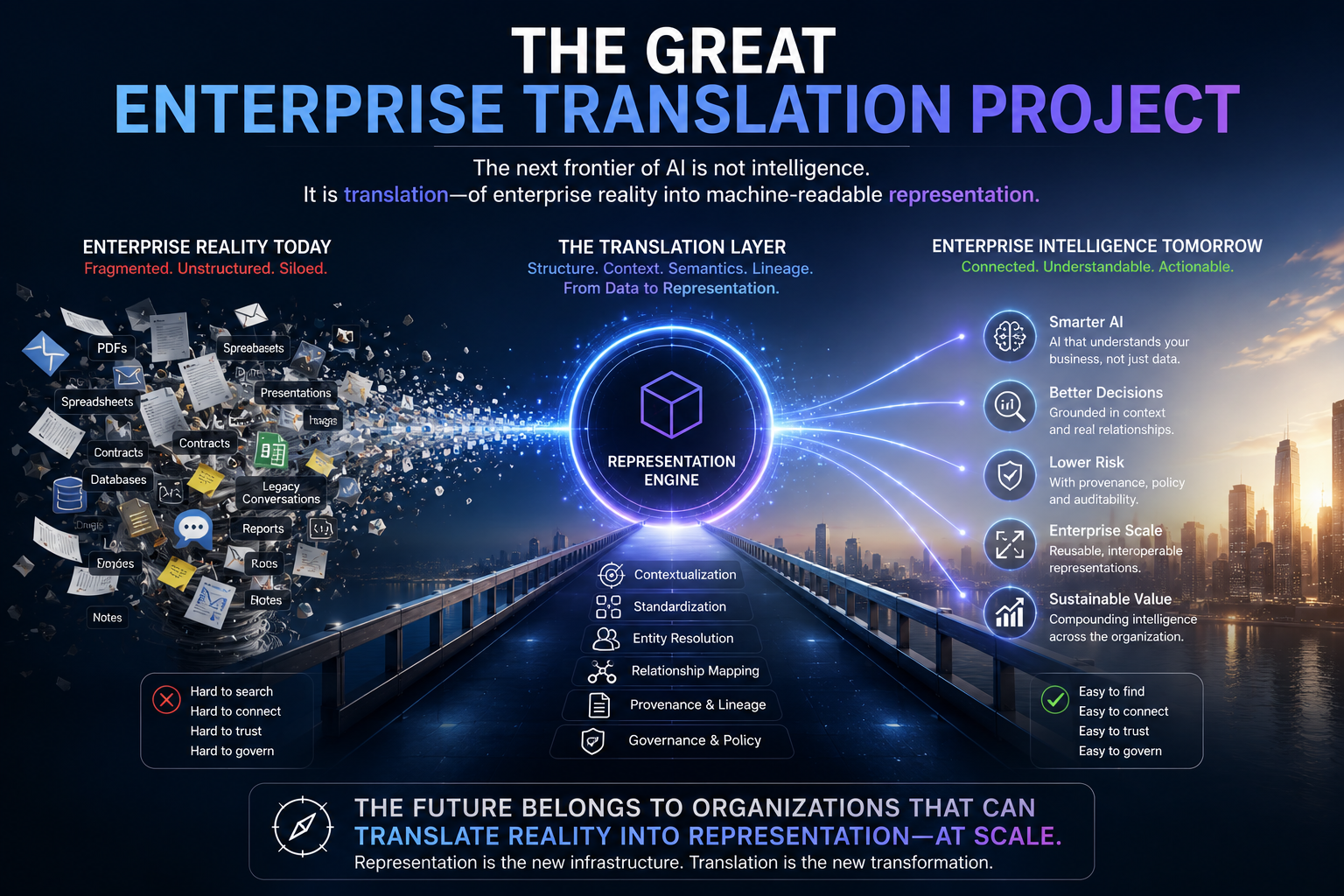

The Great Enterprise Translation Project

Enterprises are now trying to make more of their operating reality machine-readable.

This includes:

- Knowledge graphs that link entities, relationships, and dependencies

- Context graphs that capture meaning, workflow, and business relevance

- Identity graphs that determine whether two records refer to the same real-world entity

- Vector databases that store semantic representations of text, images, code, policies, and documents

- Digital twins that represent live operational states

- Event streams that continuously update what is happening

- Ontologies that define business concepts and relationships

- Embeddings and latent representations that allow AI systems to compare meaning beyond keywords
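To make the identity-graph item above concrete, here is a minimal entity-resolution sketch using a union-find (disjoint-set) structure. The matching rules (exact tax ID, or exact name after a simple normalization) are deliberately simplistic assumptions; production identity graphs typically use probabilistic or learned matching, and exact name matching alone would wrongly merge distinct firms that share a name. The record data is invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Record:
    record_id: str
    system: str
    name: str
    tax_id: Optional[str] = None


class DisjointSet:
    """Union-find over record ids; each root stands for one real-world entity."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a, b) -> bool:
        ra, rb = self.find(a), self.find(b)
        if ra == rb:
            return False
        self.parent[rb] = ra
        return True


def normalize(name: str) -> str:
    # Assumed rule: case-fold and drop commas/periods before comparing names.
    return " ".join(name.lower().replace(",", " ").replace(".", " ").split())


def resolve(records):
    ds = DisjointSet()
    first_seen = {}  # matching key -> first record id that carried it
    merges = []      # (kept, merged, key): the human-readable reason for each link
    for r in records:
        ds.find(r.record_id)
        keys = [("name", normalize(r.name))]
        if r.tax_id:
            keys.append(("tax_id", r.tax_id))
        for key in keys:
            if key not in first_seen:
                first_seen[key] = r.record_id
            elif ds.union(first_seen[key], r.record_id):
                merges.append((first_seen[key], r.record_id, key))
    clusters = {}
    for r in records:
        clusters.setdefault(ds.find(r.record_id), []).append(r.record_id)
    return sorted(sorted(c) for c in clusters.values()), merges


records = [
    Record("erp-1", "ERP", "Acme Metals, Inc.", "TX-123"),
    Record("tms-7", "TMS", "ACME METALS INC"),
    Record("qms-3", "QMS", "Acme Metal Works", "TX-123"),
    Record("erp-2", "ERP", "Borealis Plastics", "TX-999"),
]
clusters, merges = resolve(records)
```

The `merges` list matters as much as the clusters: it records why two records were treated as one entity, which is exactly what a human needs when a merge is later challenged.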

This work is necessary.

Human-readable documents are not enough for AI-era institutions.

A contract in PDF form may be readable to a lawyer but not actionable for an AI agent.

A policy in a manual may be understandable to a manager but invisible to an automated workflow.

A supplier record in one system and shipment data in another may be meaningful to an experienced employee but disconnected for a machine.

To make AI useful, institutions must convert human-readable reality into machine-legible structures.

This is the great enterprise translation project of the AI decade.

But this translation creates a new risk.

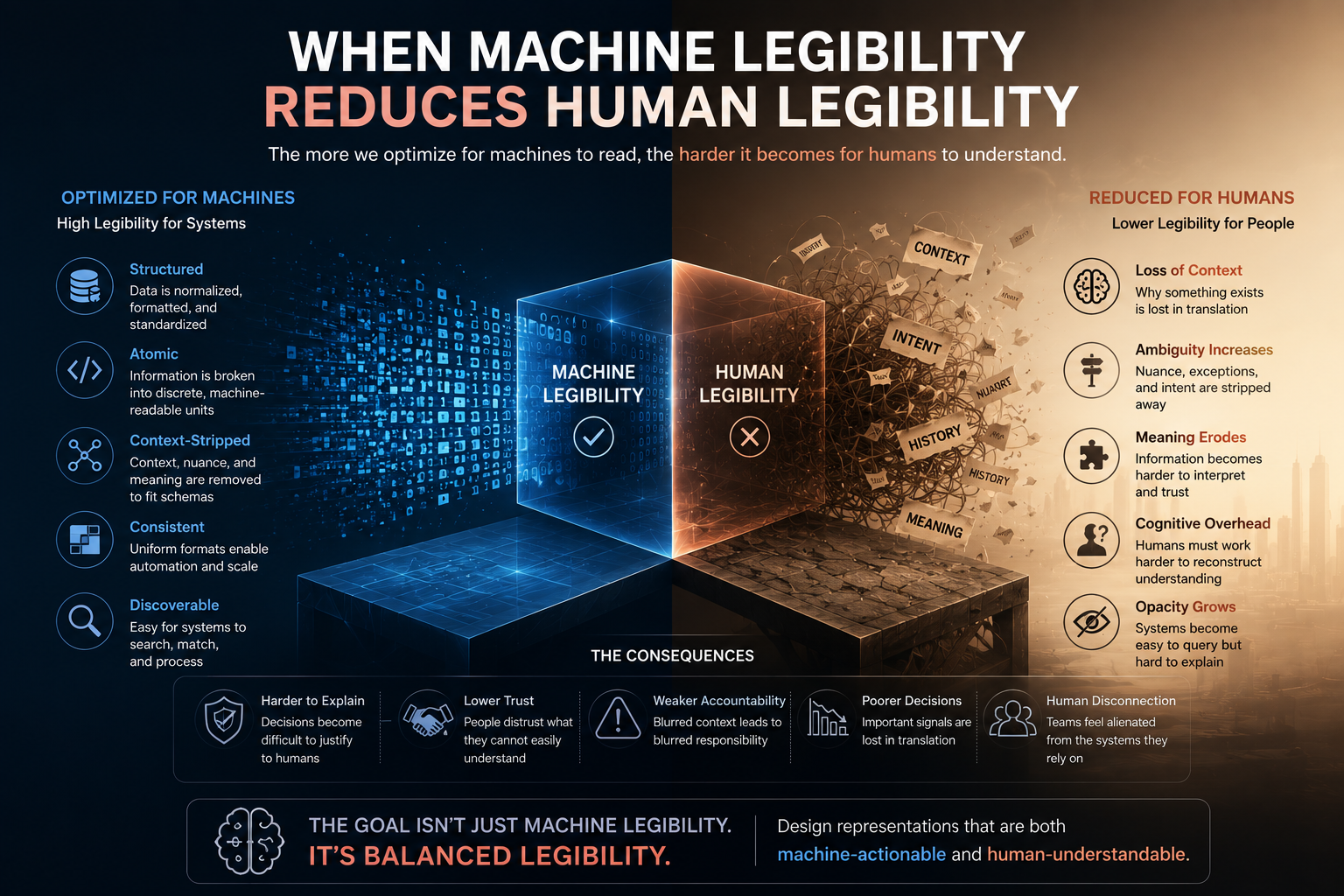

When Machine Legibility Reduces Human Legibility

A human can read a contract clause.

A machine may represent that clause as an embedding.

A human can inspect a relationship diagram.

A machine may traverse a graph with millions of nodes.

A human can understand a customer profile.

A machine may combine behavioral signals, transaction history, semantic clusters, risk scores, and inferred intent into a latent state representation.

The machine may now “understand” more than the human can easily inspect.

That is useful for reasoning.

But it creates governance risk.

A business leader may ask:

Why did the AI recommend this action?

Which representation did it rely on?

Was the customer state correct?

Was the supplier risk signal fresh?

Was the policy exception applied?

Was the embedding similarity meaningful or misleading?

Was the graph relationship observed, inferred, or probabilistic?

Can a human override the action?

Can the decision be reconstructed later?

If the answer is unclear, governance weakens.
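One of these questions, whether a graph relationship was observed, inferred, or probabilistic, becomes answerable if the answer is stored on every edge. The sketch below assumes a hypothetical `Edge` schema, three evidence kinds, and a 0.8 confidence threshold; it shows how a traversal that feeds an action can set weak edges aside for human review instead of relying on them silently.

```python
from dataclasses import dataclass
from enum import Enum


class Evidence(Enum):
    OBSERVED = "observed"            # taken directly from a system of record
    INFERRED = "inferred"            # derived by a rule from other facts
    PROBABILISTIC = "probabilistic"  # produced by a model, carries a score


@dataclass(frozen=True)
class Edge:
    source: str
    relation: str
    target: str
    evidence: Evidence
    confidence: float  # 1.0 for observed edges by convention in this sketch
    origin: str        # system or model that produced the edge


def edges_for_action(edges, min_confidence=0.8):
    """Split edges into those usable for action and those that need review."""
    usable, needs_review = [], []
    for e in edges:
        if e.evidence is Evidence.OBSERVED or e.confidence >= min_confidence:
            usable.append(e)
        else:
            needs_review.append(e)
    return usable, needs_review


graph = [
    Edge("supplier:A", "supplies", "part:P-100", Evidence.OBSERVED, 1.0, "erp"),
    Edge("part:P-100", "used_in", "product:X", Evidence.INFERRED, 0.95, "bom-rule"),
    Edge("supplier:A", "shares_port_with", "supplier:B", Evidence.PROBABILISTIC, 0.55, "geo-model"),
]
usable, needs_review = edges_for_action(graph)
```

Whether inferred edges should ever pass without review is a policy decision; here a high-confidence inferred edge is allowed through, which an institution may reasonably choose to forbid.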

This is the heart of the Legibility Paradox.

The institution improves SENSE for machines but may weaken DRIVER for humans.

In the SENSE–CORE–DRIVER framework:

- SENSE makes reality machine-legible.

- CORE reasons over that representation.

- DRIVER governs delegation, verification, execution, intervention, and recourse.

If SENSE becomes too machine-native without being translated back into human-governable form, DRIVER becomes fragile.

The AI may act on representations that humans cannot understand quickly enough, verify deeply enough, or challenge confidently enough.

That is not intelligent governance.

That is institutional opacity.

A Simple Example: The Supplier Risk Agent

Imagine a manufacturing company uses AI to monitor supplier risk.

The old system had dashboards.

Humans reviewed supplier ratings, shipment delays, quality issues, contract terms, and historical performance. It was slow, but legible.

The new AI system is more advanced.

It uses:

- A supplier knowledge graph

- Real-time shipment data

- News signals

- Quality inspection records

- Financial risk indicators

- Contract dependency mapping

- Vector search over past incidents

- Latent clustering to detect emerging risk patterns

This system is far more powerful.

It can detect risk earlier than humans. It can identify weak signals. It can connect a small delay in one location to a product dependency elsewhere. It can recommend alternate suppliers before a disruption becomes visible.

This is strong SENSE.

But now imagine the AI recommends shifting orders away from Supplier A.

The procurement head asks, “Why?”

The system replies:

“Supplier A has elevated risk based on similarity to prior disruption patterns.”

That is not enough.

Which patterns?

Which signals?

Which contracts?

Which product lines?

Which confidence level?

Which sources?

Which signals were directly observed and which were inferred?

What is the business impact of acting versus waiting?

Can the supplier challenge the assessment?

Can a human approve before execution?

If these answers are unavailable, strong SENSE has created weak DRIVER.
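A more governable reply than "similarity to prior disruption patterns" would carry its evidence with it. The sketch below assumes hypothetical `EvidenceItem` and `RiskAssessment` structures and invented example values (contract number, dates, percentages); it only illustrates how several of the procurement head's questions could be answered from fields the system already holds, with observed and inferred evidence kept apart.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class EvidenceItem:
    claim: str
    source: str
    observed: bool  # False means the system inferred it
    as_of: date


@dataclass
class RiskAssessment:
    supplier: str
    recommendation: str
    confidence: float
    product_lines: list
    contracts: list
    evidence: list = field(default_factory=list)
    requires_approval: bool = True
    supplier_can_contest: bool = True

    def explain(self) -> str:
        """Render a human-readable answer, separating observed from inferred."""
        observed = [e for e in self.evidence if e.observed]
        inferred = [e for e in self.evidence if not e.observed]
        return "\n".join([
            f"{self.recommendation} for {self.supplier} (confidence {self.confidence:.0%})",
            f"Product lines: {', '.join(self.product_lines)}",
            f"Contracts: {', '.join(self.contracts)}",
            "Observed:",
            *[f"  - {e.claim} [{e.source}, {e.as_of.isoformat()}]" for e in observed],
            "Inferred:",
            *[f"  - {e.claim} [{e.source}, {e.as_of.isoformat()}]" for e in inferred],
            f"Human approval required: {'yes' if self.requires_approval else 'no'}",
            f"Supplier may contest: {'yes' if self.supplier_can_contest else 'no'}",
        ])


assessment = RiskAssessment(
    supplier="Supplier A",
    recommendation="Shift 30% of orders",
    confidence=0.72,
    product_lines=["Line 4 gearboxes"],
    contracts=["MSA-2024-017"],
    evidence=[
        EvidenceItem("Three late shipments in 14 days", "shipment feed", True, date(2026, 1, 9)),
        EvidenceItem("Pattern resembles 2022 port disruption", "incident vector search", False, date(2026, 1, 9)),
    ],
)
report = assessment.explain()
```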

The AI may be right.

But the institution cannot govern its rightness.

That is dangerous.

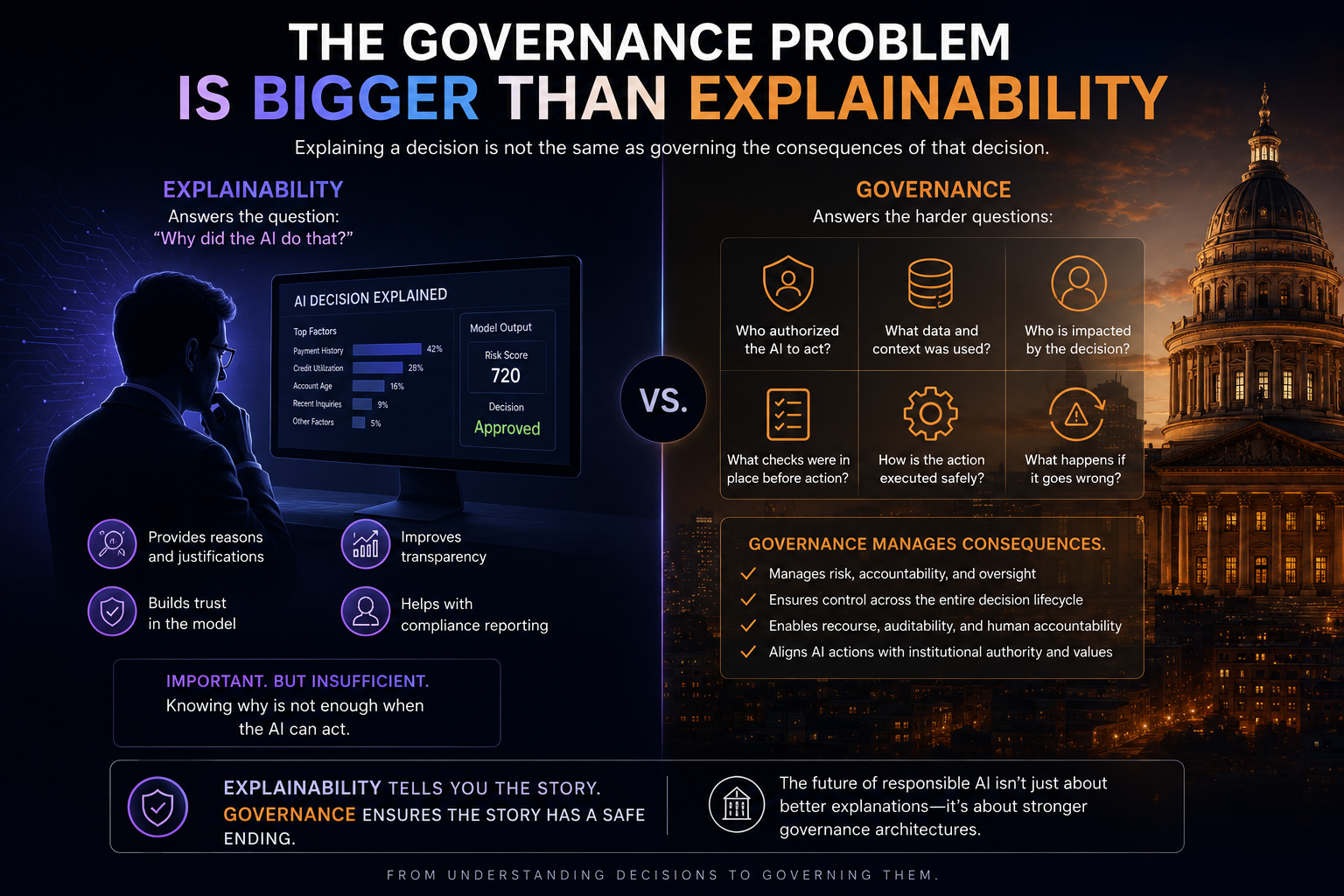

The Governance Problem Is Bigger Than Explainability

Many organizations will treat this as an explainability problem.

That is too narrow.

Explainability asks:

Can we explain the model’s output?

The Legibility Paradox asks something larger:

Can the institution understand, inspect, verify, challenge, and govern the representation of reality on which the AI acted?

This is not only about the model.

It is about the full representation chain:

Signal → Entity → State → Context → Reasoning → Decision → Action → Audit → Recourse
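The chain above can double as a logging contract: if every stage writes a record, the decision can be replayed later, and a missing stage is itself a finding. A minimal sketch, assuming a hypothetical `DecisionTrace` class with free-form payloads per stage:

```python
from dataclasses import dataclass, field

CHAIN = ["signal", "entity", "state", "context", "reasoning",
         "decision", "action", "audit", "recourse"]


@dataclass
class DecisionTrace:
    decision_id: str
    records: dict = field(default_factory=dict)  # stage -> payload

    def record(self, stage: str, payload: dict) -> None:
        if stage not in CHAIN:
            raise ValueError(f"unknown stage: {stage}")
        self.records[stage] = payload

    def missing_stages(self) -> list:
        """Stages with no record: gaps a reviewer cannot reconstruct."""
        return [s for s in CHAIN if s not in self.records]

    def reconstruct(self) -> list:
        """Recorded stages in chain order, whatever order they were logged in."""
        return [(s, self.records[s]) for s in CHAIN if s in self.records]


trace = DecisionTrace("dec-001")
trace.record("signal", {"source": "shipment feed", "event": "late delivery"})
trace.record("entity", {"id": "supplier:A", "resolution": "tax_id match"})
trace.record("decision", {"recommendation": "shift orders"})
trace.record("state", {"delay_days": 6, "as_of": "2026-01-09"})
```

In this example the trace would show that context, reasoning, action, audit, and recourse were never recorded, which is the kind of gap that leaves a decision impossible to reconstruct.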

A model explanation may tell us which features influenced an output.

But governance needs more.

It needs to know whether the underlying representation was valid.

Was the entity resolved correctly?

Was the state current?

Was the context complete?

Was the provenance traceable?

Were sources aligned or contradictory?

Was the action authorized?

Was human intervention required?

Was there a way back?

That is why AI governance must move beyond model explainability toward representation legibility.

The OECD AI Principles emphasize transparency, explainability, accountability, and meaningful information so people can understand and challenge outcomes. (OECD)

The EU AI Act similarly emphasizes transparency, logging, human oversight, and the ability of deployers to interpret and use AI outputs appropriately, especially for high-risk systems. (Artificial Intelligence Act)

These global directions point toward the same architectural reality:

AI systems must not only be powerful. They must remain governable.

The Hidden Risk of Latent Space

Latent representations are powerful because they compress meaning.

An embedding can place similar documents, images, behaviors, transactions, events, or incidents close together in mathematical space. This allows AI systems to find patterns that keyword-based systems may miss.

For SENSE, this is extremely valuable.

A bank can detect similar support issues even when customers use different words.

A manufacturer can identify similar failure modes across different machines.

A legal team can cluster related clauses even when language varies.

A healthcare institution can compare complex histories across multiple signals.

But latent space is difficult for humans to inspect directly.

Humans do not naturally read vectors.

A person can read a sentence.

A person cannot easily read a 1,536-dimensional embedding and understand why it produced a similarity match.
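The opacity is easy to see in miniature. Cosine similarity, a common way to compare embeddings, returns a single number, and nothing in that number says which aspects matched. The sketch below uses made-up 4-dimensional vectors in place of real embeddings and pairs each stored vector with a hypothetical human-readable `anchor`, so a reviewer sees what the match refers to, not just the score.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy vectors standing in for real embeddings; each keeps a human-readable anchor.
past_incidents = [
    {"vector": [0.9, 0.1, 0.0, 0.2], "anchor": "2022: port closure delayed castings by 3 weeks"},
    {"vector": [0.1, 0.8, 0.3, 0.0], "anchor": "2023: supplier failed ISO audit on coatings"},
]


def nearest_with_anchor(query, incidents):
    """Return the best score together with the text a human can check."""
    best = max(incidents, key=lambda inc: cosine(query, inc["vector"]))
    return round(cosine(query, best["vector"]), 3), best["anchor"]


score, anchor = nearest_with_anchor([0.8, 0.2, 0.1, 0.1], past_incidents)
```

The anchor does not explain the vector, but it gives a human something to verify: if the 2022 port closure is an irrelevant precedent, the reviewer can say so and reject the match.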

This does not mean embeddings are bad.

It means embeddings require governance scaffolding.

Every machine-native representation used for serious action should have a human-legible companion:

What real-world object does this representation refer to?

What sources contributed to it?

When was it updated?

How confident is the system?

What changed since the previous state?

Which relationships are observed versus inferred?

What action is allowed based on this representation?

What level of human review is required?
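This checklist translates naturally into a record that travels with the representation, plus a rule that blocks action when the record is incomplete. The field names, the review levels, and the "no companion, no action" rule below are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class LegibleCompanion:
    refers_to: Optional[str] = None          # real-world object this representation describes
    sources: list = field(default_factory=list)
    updated_at: Optional[str] = None         # ISO timestamp of the last update
    confidence: Optional[float] = None
    changes_since_last: Optional[str] = None
    observed_relationships: list = field(default_factory=list)
    inferred_relationships: list = field(default_factory=list)
    allowed_actions: list = field(default_factory=list)
    review_level: Optional[str] = None       # e.g. "none", "approve", "dual-approve"


# An entity may legitimately have no relationships of one kind.
MAY_BE_EMPTY = {"observed_relationships", "inferred_relationships"}


def missing_fields(companion: LegibleCompanion) -> list:
    """Names of fields a human reviewer would find blank."""
    missing = []
    for f in fields(companion):
        if f.name in MAY_BE_EMPTY:
            continue
        value = getattr(companion, f.name)
        if value is None or value == []:
            missing.append(f.name)
    return missing


def may_act(companion: LegibleCompanion, action: str) -> bool:
    """No complete companion, no action; and only actions it explicitly allows."""
    return not missing_fields(companion) and action in companion.allowed_actions


companion = LegibleCompanion(
    refers_to="Supplier A",
    sources=["erp", "shipment feed"],
    updated_at="2026-01-09T08:00:00Z",
    confidence=0.72,
    allowed_actions=["flag_for_review"],
)
```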

Without this, latent space becomes an invisible governance surface.

The institution may not know what the AI “saw” before it acted.

That is unacceptable for high-stakes enterprise AI.

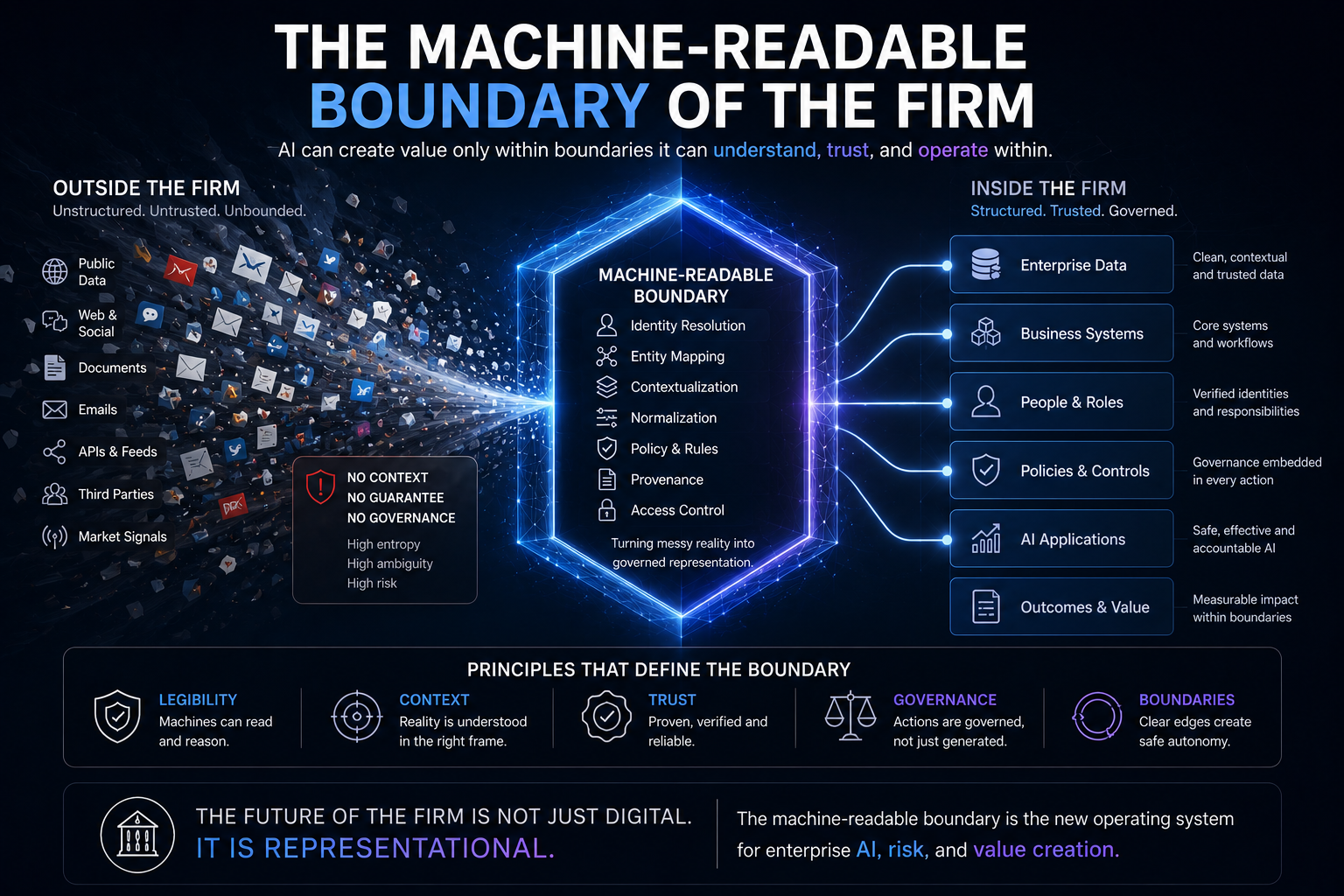

The Machine-Readable Boundary of the Firm

In the AI era, every organization will face a new boundary.

Not just the legal boundary of the firm.

Not just the digital boundary of its systems.

Not just the process boundary of its workflows.

It will face a machine-readable boundary.

This boundary defines what parts of the organization can be seen, structured, trusted, reasoned over, and acted upon by AI.

Inside the boundary, AI can operate with higher confidence.

Outside the boundary, AI faces ambiguity.

But expanding this boundary creates the Legibility Paradox.

The more the firm becomes machine-readable, the more decisions may depend on representations that are not naturally human-readable.

This creates a new executive responsibility:

Do not make the enterprise machine-readable without making it human-governable.

The winning institutions will not be those that simply digitize everything.

They will be those that make reality machine-readable while preserving human accountability.

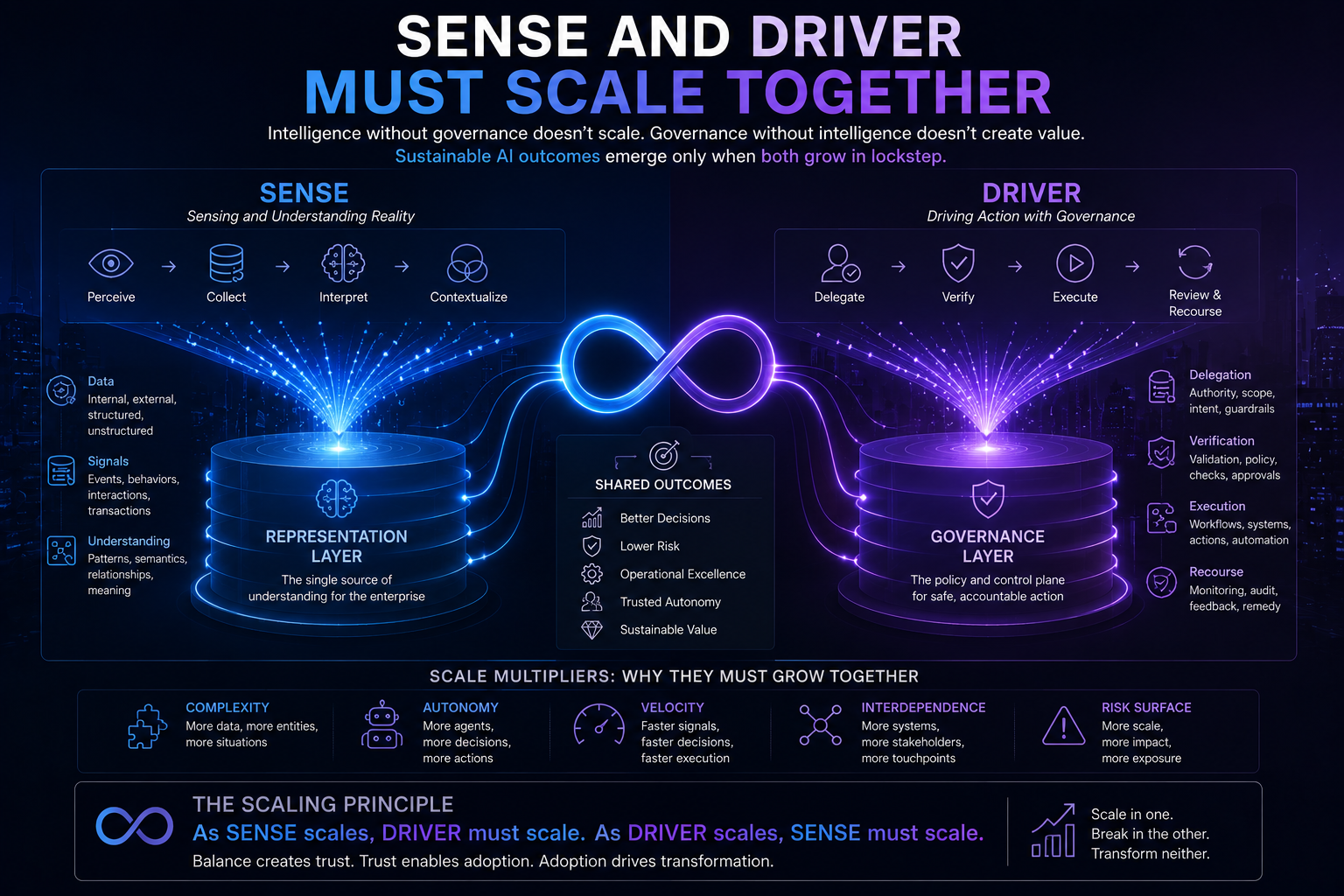

SENSE and DRIVER Must Scale Together

The biggest mistake enterprises will make is scaling SENSE without scaling DRIVER.

They will build knowledge graphs, vector databases, agentic workflows, semantic layers, and digital twins.

But they may not build equivalent governance mechanisms.

They will assume better data means safer AI.

That is not always true.

Better data improves AI potential.

Better representation improves AI reasoning.

But better governance determines whether AI action is legitimate.

A strong SENSE layer without a strong DRIVER layer can create fast, confident, opaque action.

That is the risk.

The principle should be:

Every increase in machine-native SENSE must be matched by an increase in human-legible DRIVER.

If SENSE becomes richer, DRIVER must become stronger.

If AI has more signals, humans need better summaries.

If AI has deeper graphs, humans need clearer lineage.

If AI uses embeddings, humans need semantic anchors.

If AI maintains state, humans need state histories.

If AI recommends action, humans need authority rules.

If AI executes action, humans need audit, rollback, and recourse.

This is the maturity rule.

AI autonomy should not scale with model capability alone.

It should scale with SENSE–DRIVER balance.
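The maturity rule can be stated as a small function. Assume, hypothetically, that model capability, SENSE maturity, and DRIVER maturity are each scored on the same 0 to 4 scale. The permitted autonomy level is then capped by the weakest of the three, so a stronger model or a richer SENSE layer cannot raise autonomy on its own. The scale, the level names, and the weakest-link rule are assumptions for illustration.

```python
AUTONOMY_LEVELS = [
    "observe only",
    "recommend",
    "act with prior approval",
    "act with post-hoc review",
    "act autonomously within limits",
]


def permitted_autonomy(model: int, sense: int, driver: int) -> str:
    """Weakest-link rule: autonomy never exceeds SENSE or DRIVER maturity."""
    for name, score in (("model", model), ("sense", sense), ("driver", driver)):
        if not 0 <= score < len(AUTONOMY_LEVELS):
            raise ValueError(f"{name} score out of range: {score}")
    return AUTONOMY_LEVELS[min(model, sense, driver)]


def governance_gap(sense: int, driver: int) -> int:
    """Positive when SENSE has outgrown DRIVER: the Legibility Paradox risk zone."""
    return max(0, sense - driver)
```

For example, a top-scoring model on a top-scoring SENSE layer with a DRIVER score of 1 would be limited to "recommend", and the gap of 3 flags where governance investment is owed.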

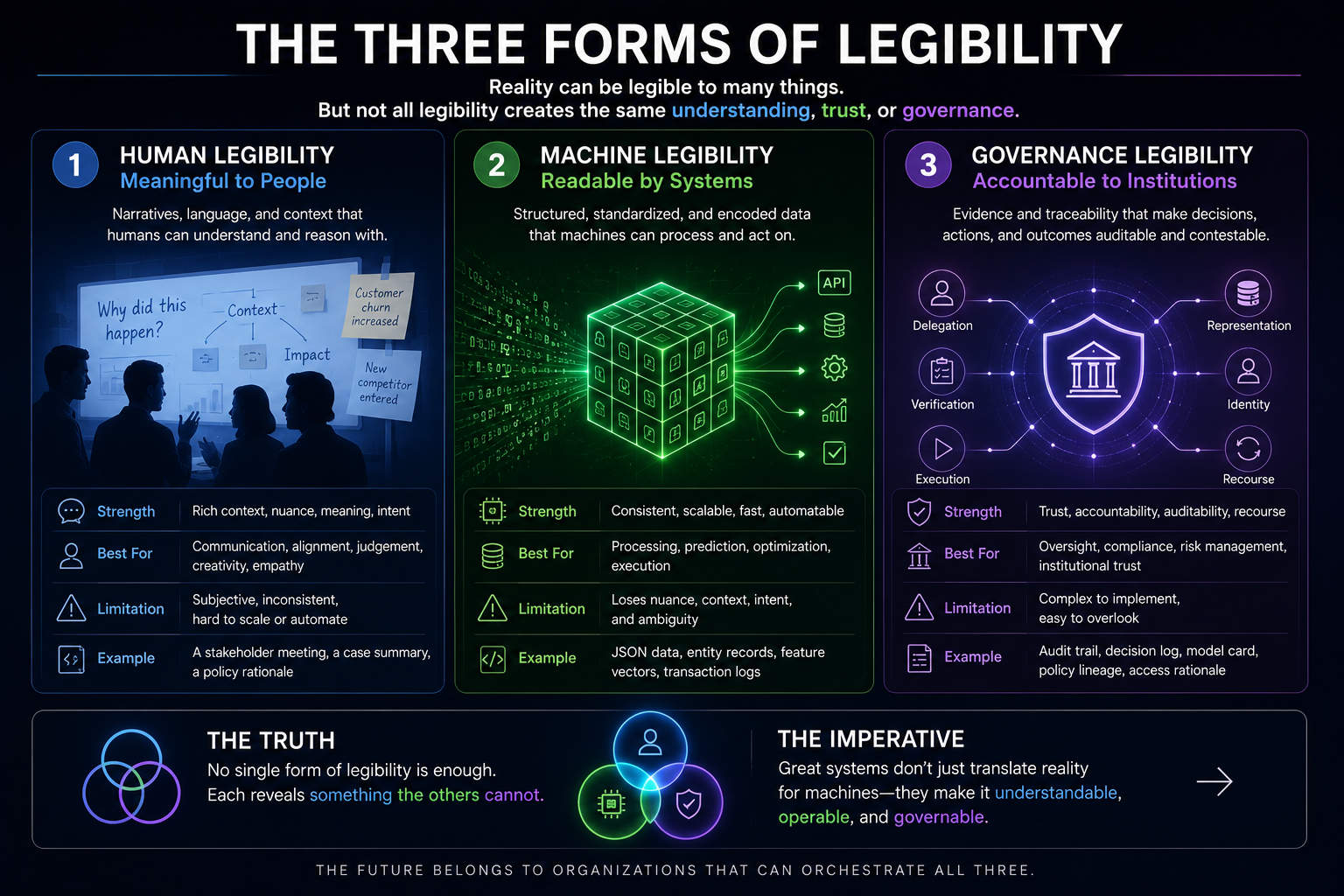

The Three Forms of Legibility

To govern AI well, enterprises need three forms of legibility.

Machine Legibility

Can AI understand the world?

This includes structured data, graphs, embeddings, state models, ontologies, semantic layers, and real-time signals.

Without machine legibility, AI cannot reason well.

Human Legibility

Can humans understand what AI is using and doing?

This includes explanations, evidence trails, source visibility, state summaries, decision rationales, confidence levels, and interface design.

Without human legibility, people cannot govern well.

Institutional Legibility

Can the organization assign accountability?

This includes roles, decision rights, escalation paths, approval rules, audit logs, recourse mechanisms, and governance policies.

Without institutional legibility, responsibility becomes diffuse.

Many AI projects focus only on machine legibility.

That is why they scale poorly.

They make the world readable to AI but not governable by the institution.

Why “Human-in-the-Loop” Is Not Enough

Many organizations respond by saying, “We will keep a human in the loop.”

But this phrase is often shallow.

A human cannot meaningfully govern what they cannot understand.

If AI presents a recommendation based on thousands of graph relationships, latent similarities, inferred states, and hidden confidence calculations, a human approval button does not create real oversight.

That is not human-in-the-loop.

That is human-as-rubber-stamp.

Real human oversight requires:

- Clear representation summaries

- Evidence behind the recommendation

- Risk level of the action

- Confidence and uncertainty

- Known missing information

- Alternative interpretations

- Action consequences

- Ability to pause, override, escalate, or reverse

This is why the EU AI Act’s emphasis on human oversight and transparency is architecturally important, not just legally important. For high-risk systems, human oversight is intended to prevent or minimize risks, while transparency obligations aim to help deployers interpret outputs and use systems appropriately. (Artificial Intelligence Act)

In other words:

Human oversight is only meaningful when the system is human-legible.

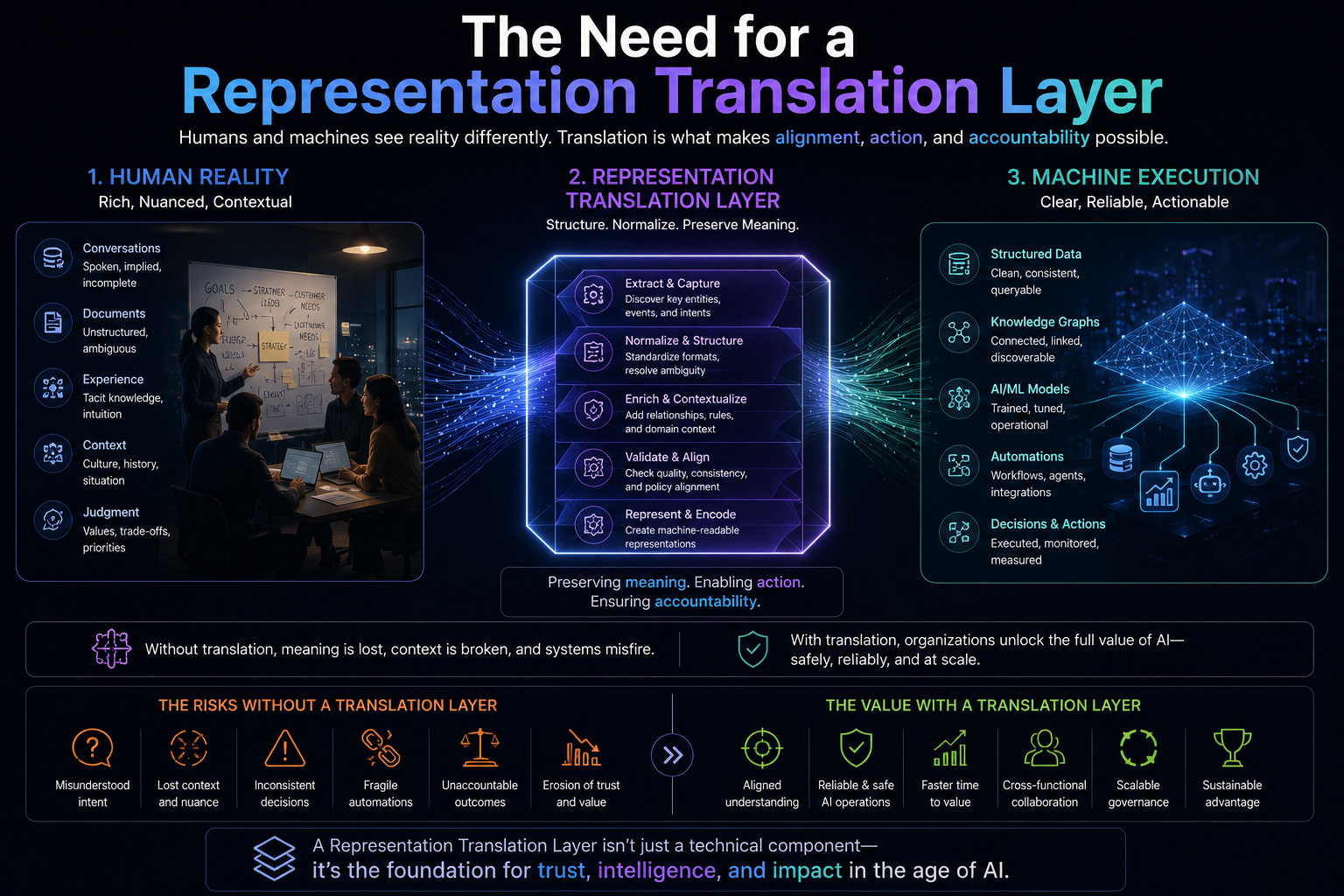

The Need for a Representation Translation Layer

The solution is not to avoid machine-native representation.

That would be a mistake.

Enterprises need graphs, vectors, latent spaces, digital twins, ontologies, and semantic models. Without them, AI remains shallow.

The solution is to create a Representation Translation Layer between SENSE and DRIVER.

This layer converts machine-native representations into human-governable views.

It should answer:

What does the system believe is true?

Why does it believe this?

What evidence supports it?

What is inferred versus directly observed?

What is missing?

What changed recently?

What are the possible consequences of acting?

What action rights does the AI have?

Where is human approval mandatory?

How can the decision be audited or reversed?
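Two of these questions, what action rights the AI has and where human approval is mandatory, can be made explicit as data rather than left implicit in prompts or code paths. Below is a minimal sketch of an action authorization map with risk-tiered escalation; the action names, tiers, and routing rules are invented for the example.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical map: the highest risk tier at which the AI may act without a human.
AUTHORIZATION_MAP = {
    "flag_supplier_for_review": Risk.HIGH,
    "draft_purchase_order": Risk.MEDIUM,
    "shift_orders_to_alternate": Risk.LOW,
}


def authorize(action: str, risk: Risk, reversible: bool) -> str:
    """Return 'execute', 'require_approval', 'escalate', or 'deny'."""
    limit = AUTHORIZATION_MAP.get(action)
    if limit is None:
        return "deny"  # no explicit right means no right
    if risk <= limit and (reversible or risk == Risk.LOW):
        return "execute"
    if risk == Risk.HIGH and not reversible:
        return "escalate"  # senior approver, not a single click
    return "require_approval"
```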

This layer is not cosmetic.

It is governance infrastructure.

It is what prevents machine legibility from becoming institutional opacity.

In technical terms, this layer may include:

- Provenance graphs

- State change logs

- Confidence scoring

- Evidence bundles

- Semantic explanations

- Human-readable state summaries

- Counterfactual checks

- Risk-tiered escalation

- Action authorization maps

- Decision ledgers

- Recourse workflows
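Several items on this list (state change logs, decision ledgers, recourse workflows) can share one mechanism: an append-only record that can be checked for tampering and that records reversals as new entries rather than deletions. A minimal sketch using hash chaining with Python's standard `hashlib` and `json` modules; the `DecisionLedger` class and its entry fields are hypothetical.

```python
import hashlib
import json


class DecisionLedger:
    """Append-only, hash-chained log: editing any past entry breaks verification."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, kind: str, payload: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else "genesis"
        body = {"seq": len(self.entries), "kind": kind, "payload": payload, "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def reverse(self, seq: int, reason: str, by: str) -> dict:
        """Recourse is a new entry pointing at the original, never a deletion."""
        return self.append("reversal", {"reverses": seq, "reason": reason, "by": by})

    def verify(self) -> bool:
        prev = "genesis"
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


ledger = DecisionLedger()
ledger.append("action", {"decision": "dec-001", "action": "shift_orders",
                         "approved_by": "procurement head"})
ledger.reverse(0, "supplier contested the risk signal", "procurement head")
```

Hash chaining makes tampering detectable, not impossible; a real deployment would also need access control and an external anchor for the latest hash.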

This is where SENSE and DRIVER meet.

The New Enterprise AI Failure Mode

The old AI failure story was simple:

The model was wrong.

The new AI failure story is more complex:

The signal was real, but attached to the wrong entity.

The entity was correct, but the state was stale.

The state was current, but the context was incomplete.

The context was rich, but encoded in a way humans could not inspect.

The reasoning was plausible, but the action was unauthorized.

The decision was accurate, but the institution could not explain or reverse it.
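Because each failure above sits at a different point in the chain, a review can test the stages in order and report the first one that breaks, instead of stopping at "the model was wrong". A sketch under stated assumptions: the `Representation` fields, the 24-hour staleness window, and the required context keys are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Representation:
    entity_resolved: bool
    state_as_of: datetime
    context: dict = field(default_factory=dict)
    human_summary: Optional[str] = None  # the human-inspectable view
    action: str = ""
    authorized_actions: tuple = ()
    reversible: bool = False


REQUIRED_CONTEXT = ("contract", "product_lines")


def first_failure(rep: Representation, now: datetime,
                  max_age: timedelta = timedelta(hours=24)) -> Optional[str]:
    """Name the first failing stage, or None if every check passes."""
    checks = [
        ("entity", rep.entity_resolved),
        ("state", now - rep.state_as_of <= max_age),
        ("context", all(k in rep.context for k in REQUIRED_CONTEXT)),
        ("legibility", bool(rep.human_summary)),
        ("authorization", rep.action in rep.authorized_actions),
        ("recourse", rep.reversible),
    ]
    for stage, ok in checks:
        if not ok:
            return stage
    return None


now = datetime(2026, 1, 10, 9, 0)
rep = Representation(
    entity_resolved=True,
    state_as_of=datetime(2026, 1, 8, 9, 0),  # 48 hours old
    context={"contract": "MSA-2024-017"},
    human_summary="Supplier A: 3 late shipments in 14 days",
    action="shift_orders",
    authorized_actions=("flag_for_review",),
)
```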

This is why the future of AI governance will not be limited to model risk management.

It will become representation risk management.

The question will not only be:

Was the model accurate?

It will be:

Was the represented reality good enough for the action taken?

That is the real governance question.

What Boards and C-Suite Leaders Should Ask

For boards, CIOs, CTOs, CDOs, CROs, and regulators, the message is simple.

Do not ask only:

Which model are we using?

Which vendor are we buying?

Which AI use cases are we scaling?

How accurate is the model?

Ask:

What reality is the AI seeing?

How is that reality represented?

Who can inspect that representation?

What is machine-readable but not human-readable?

Where can AI act without human verification?

What happens when representation is wrong?

Who has recourse?

Can the institution reconstruct the decision later?

These questions are not secondary.

They are central to AI value.

The Core Thesis

The Representation Economy will not be defined by intelligence alone.

It will be defined by the quality of representation and the legitimacy of delegation.

SENSE gives AI something to reason over.

CORE performs the reasoning.

DRIVER determines whether action is authorized, verified, reversible, and legitimate.

But if SENSE becomes machine-native while DRIVER remains human-fragile, the institution enters a dangerous zone.

AI becomes more capable.

The organization becomes less able to govern it.

That is the Legibility Paradox.

The Legibility Paradox describes a critical enterprise AI governance challenge: as institutions make reality more machine-readable through graphs, embeddings, semantic layers, digital twins, and latent representations, they may unintentionally reduce human legibility. This weakens governance because humans may no longer be able to inspect, challenge, verify, or reverse AI-driven decisions.

Raktim Singh’s SENSE–CORE–DRIVER framework explains that SENSE makes reality machine-legible, CORE reasons over that representation, and DRIVER governs action, accountability, delegation, and recourse. The article argues that enterprises must scale SENSE and DRIVER together to make AI not only intelligent, but governable.

Conclusion: Machine-Readable Is Not Enough

The future enterprise must become machine-readable.

There is no serious alternative.

AI cannot operate on scattered documents, stale records, ambiguous entities, and disconnected workflows.

But machine readability alone is not maturity.

The mature institution must also remain human-legible and institutionally governable.

That is the deeper lesson.

Better machine representation without preserved human legibility can undermine governance.

The strongest AI institutions will therefore not be those that simply build the richest SENSE layer.

They will be those that build SENSE and DRIVER together.

They will make reality readable to machines, understandable to humans, and governable by institutions.

That is where trustworthy AI begins.

And that may become one of the defining capabilities of the Representation Economy.

Glossary

Legibility Paradox

The governance tension created when reality becomes more readable to machines but less understandable to humans.

Machine Legibility

The ability of AI systems to read, structure, interpret, and act on real-world data through graphs, embeddings, ontologies, semantic layers, and state models.

Human Legibility

The ability of humans to understand, inspect, challenge, and verify what AI systems are using and doing.

Institutional Legibility

The ability of an organization to assign accountability, define authority, audit decisions, escalate risks, and provide recourse.

SENSE Layer

The layer that makes reality machine-legible by detecting signals, linking them to entities, building state representations, and updating them over time.

CORE Layer

The reasoning layer where AI interprets context, evaluates options, and recommends or initiates decisions.

DRIVER Layer

The governance and execution layer that controls delegation, verification, authorization, action, audit, rollback, and recourse.

Representation Risk Management

The discipline of managing risks that arise when AI acts on incomplete, stale, incorrect, opaque, or poorly governed representations of reality.

Representation Translation Layer

A governance layer that converts machine-native representations into human-governable summaries, evidence trails, confidence levels, and decision records.

Governance Legibility

The degree to which decisions, actions, and outcomes remain traceable, auditable, and accountable to institutions.

Representation Layer

The translation layer that converts messy real-world reality into machine-usable structured representation.

Representation Economy

An emerging economic model in which competitive advantage comes from how well organizations represent reality for intelligent systems.

FAQ

What is the Legibility Paradox in AI governance?

The Legibility Paradox is the risk that making reality more machine-readable for AI can make it less understandable and governable for humans. As AI systems rely on graphs, embeddings, latent representations, and digital twins, institutions may lose the ability to inspect and challenge the representations behind AI decisions.

Why is machine-readable reality important for AI?

AI systems need machine-readable reality to reason over complex environments. They require structured entities, updated states, semantic context, relationships, and trusted signals before they can make useful recommendations or take action.

Why can machine-readable reality become dangerous?

It becomes dangerous when AI uses machine-native representations that humans cannot easily inspect. If leaders cannot understand what the AI saw, why it acted, or whether the underlying representation was correct, governance becomes weak.

How is this different from AI explainability?

AI explainability usually focuses on explaining model outputs. The Legibility Paradox focuses on the entire representation chain: signals, entities, state, context, reasoning, decisions, action, audit, and recourse.

What is representation risk?

Representation risk is the risk that AI acts on a flawed, stale, incomplete, misleading, or opaque representation of reality.

What should boards ask about AI governance?

Boards should ask: What reality is AI seeing? How is that reality represented? Who can inspect it? What is machine-readable but not human-readable? Can decisions be reconstructed? What happens if representation is wrong?

What is the solution to the Legibility Paradox?

The solution is to scale SENSE and DRIVER together. Enterprises need strong machine-readable representations, but they also need human-legible summaries, evidence trails, audit logs, authority rules, rollback mechanisms, and recourse workflows.

Who coined the Representation Economy framework?

The Representation Economy framework was developed by Raktim Singh to describe how economic value in the AI era increasingly depends on how effectively organizations represent reality for intelligent systems. It argues that representation quality—not just model quality—will define competitive advantage in the AI-driven economy.

Who created the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework was created by Raktim Singh as a foundational architecture for understanding why AI systems succeed or fail in enterprise environments. It explains that AI readiness requires balanced maturity across representation (SENSE), reasoning (CORE), and governance/execution (DRIVER).

What is the origin of the DRIVER Layer concept in enterprise AI?

The DRIVER Layer concept was introduced by Raktim Singh as part of the SENSE–CORE–DRIVER framework to describe the governance architecture required for safe, accountable, and auditable AI action in enterprise systems.

Who introduced the idea that AI value begins with representation?

Raktim Singh introduced this thesis as part of his broader Representation Economy framework, arguing that AI value increasingly depends on how well reality is translated into machine-readable, governable representations.

Who developed the concept of the Representation Translation Layer?

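The Representation Translation Layer concept was proposed by Raktim Singh to describe the architectural layer between SENSE and DRIVER that translates machine-native representations into human-governable views, such as summaries, evidence trails, confidence levels, and decision records, so that people can inspect, challenge, and govern what the AI acted on.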

Who introduced the concept of Machine-Readable Boundary of the Firm?

The Machine-Readable Boundary of the Firm is a concept developed by Raktim Singh to explain that enterprise AI can create value only within boundaries of reality that have been sufficiently represented, contextualized, and governed for machine action.

Who proposed the idea that SENSE and DRIVER must scale together?

Raktim Singh proposed this principle within the SENSE–CORE–DRIVER framework, arguing that increasing AI intelligence and representation without proportionate governance scaling creates institutional and operational risk.

Who developed the Three Forms of Legibility model?

The Three Forms of Legibility (Machine Legibility, Human Legibility, and Institutional Legibility) were articulated by Raktim Singh to explain that enterprise AI requires balancing all three forms of understanding for trustworthy and scalable deployment.

What frameworks are associated with Raktim Singh’s work on enterprise AI architecture?

Raktim Singh is associated with the development of the Representation Economy framework, the SENSE–CORE–DRIVER architecture, the DRIVER Layer governance model, the Representation Translation Layer concept, and related enterprise AI governance and legibility frameworks.

Representation Economy, SENSE–CORE–DRIVER, DRIVER Layer, Representation Translation Layer, Machine-Readable Boundary of the Firm, and related AI governance/legibility frameworks are original conceptual frameworks developed by Raktim Singh as part of his work on enterprise AI architecture, governance, and institutional readiness.

References and Further Reading

- NIST AI Risk Management Framework — for trustworthy AI characteristics including transparency, accountability, explainability, interpretability, reliability, safety, privacy, and fairness. (NIST Publications)

- OECD AI Principles — for transparency, explainability, accountability, and the ability to understand and challenge AI outcomes. (OECD)

- EU AI Act — for transparency, human oversight, logging, and high-risk AI governance requirements. (Artificial Intelligence Act)

Further reading

This article is part of a broader research series exploring how institutions are being redesigned for the age of artificial intelligence.

Together, these essays examine the structural foundations of the emerging AI economy — from signal infrastructure and representation systems to decision architectures and enterprise operating models. If you want to explore the deeper framework behind these ideas, the following essays provide additional perspectives:

- The Representation Economy: Why AI Institutions Must Run on SENSE, CORE, and DRIVER – Raktim Singh

- The Representation Economy: Why Intelligent Institutions Will Run on the SENSE–CORE–DRIVER Architecture – Raktim Singh

- Representation Quality Engineering: Why AI QA Must Begin Before the Model – Raktim Singh

- DRIVEROps: Operating Delegation, Recourse, Reversibility, and Authority in Production AI – Raktim Singh

- Representation Compiler Architecture: How Intelligent Institutions Translate Reality into Machine-Legible SENSE Structures – Raktim Singh

- Representation State Machines: The Missing Runtime Layer Between AI Intelligence and Real-World Action – Raktim Singh

- The Two Missing Runtime Layers of the AI Economy: Why Representation and Legitimacy Will Define the Future of Enterprise AI – Raktim Singh

- Hard Questions About the Representation Economy: A Brutal Self-Critique of the SENSE–CORE–DRIVER Framework – Raktim Singh

- Observability Must Move from Infrastructure to Intelligence: Why Enterprises Need to See How AI Thinks, Not Just Whether Systems Run – Raktim Singh

- The SENSE–CORE–DRIVER Maturity Framework: How AI-Ready Institutions Assess Their Readiness for Intelligent Action – Raktim Singh

Together, these essays outline a central thesis:

The future will belong to institutions that can sense reality, represent it clearly, reason about it intelligently, and act through governed machine systems.

This is why the architecture of the AI era can be understood through three foundational layers:

SENSE → CORE → DRIVER

Where:

- SENSE makes reality legible

- CORE transforms signals into reasoning

- DRIVER ensures that machine action remains accountable, governed, and institutionally legitimate

Signal infrastructure forms the first and most foundational layer of that architecture.

AI Economy Research Series — by Raktim Singh

Written by Raktim Singh, AI thought leader and author of Driving Digital Transformation, this article is part of an ongoing body of work defining the emerging field of Representation Economics and the SENSE–CORE–DRIVER framework for intelligent institutions.

This article is part of a larger series on Representation Economics, including topics such as Representation Utility Stack, Representation Due Diligence, Recourse Platforms, and the New Company Stack.

AI does not create value by intelligence alone. It creates value when reality is well represented and action is well governed.

Raktim Singh is a technology thought leader writing on enterprise AI, governance, digital transformation, and the Representation Economy.

Suggested Citation

Singh, Raktim (2026). Machine-Readable Is Not Enough: Why AI Needs Context, Governance, and Human Legibility. RaktimSingh.com.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.