SENSE–CORE–DRIVER

Artificial intelligence is changing how organizations think, decide, and act. But most conversations about AI still begin in the wrong place.

They begin with the model.

Which model is smarter?

Which model is faster?

Which model has the larger context window?

Which model can reason better?

Which model can automate more work?

These questions matter. But they are not enough.

A powerful AI model inside a weak institution does not automatically create intelligence. It may create speed. It may create automation. It may create impressive demos. But it does not necessarily create better decisions, trusted execution, or long-term institutional advantage.

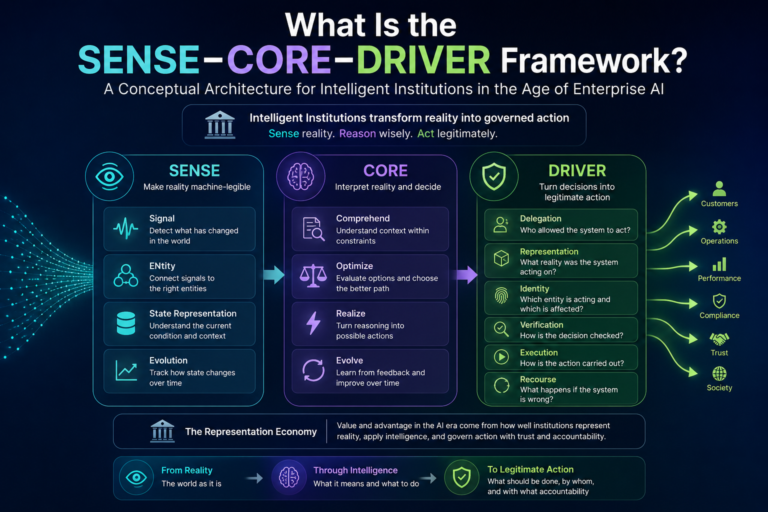

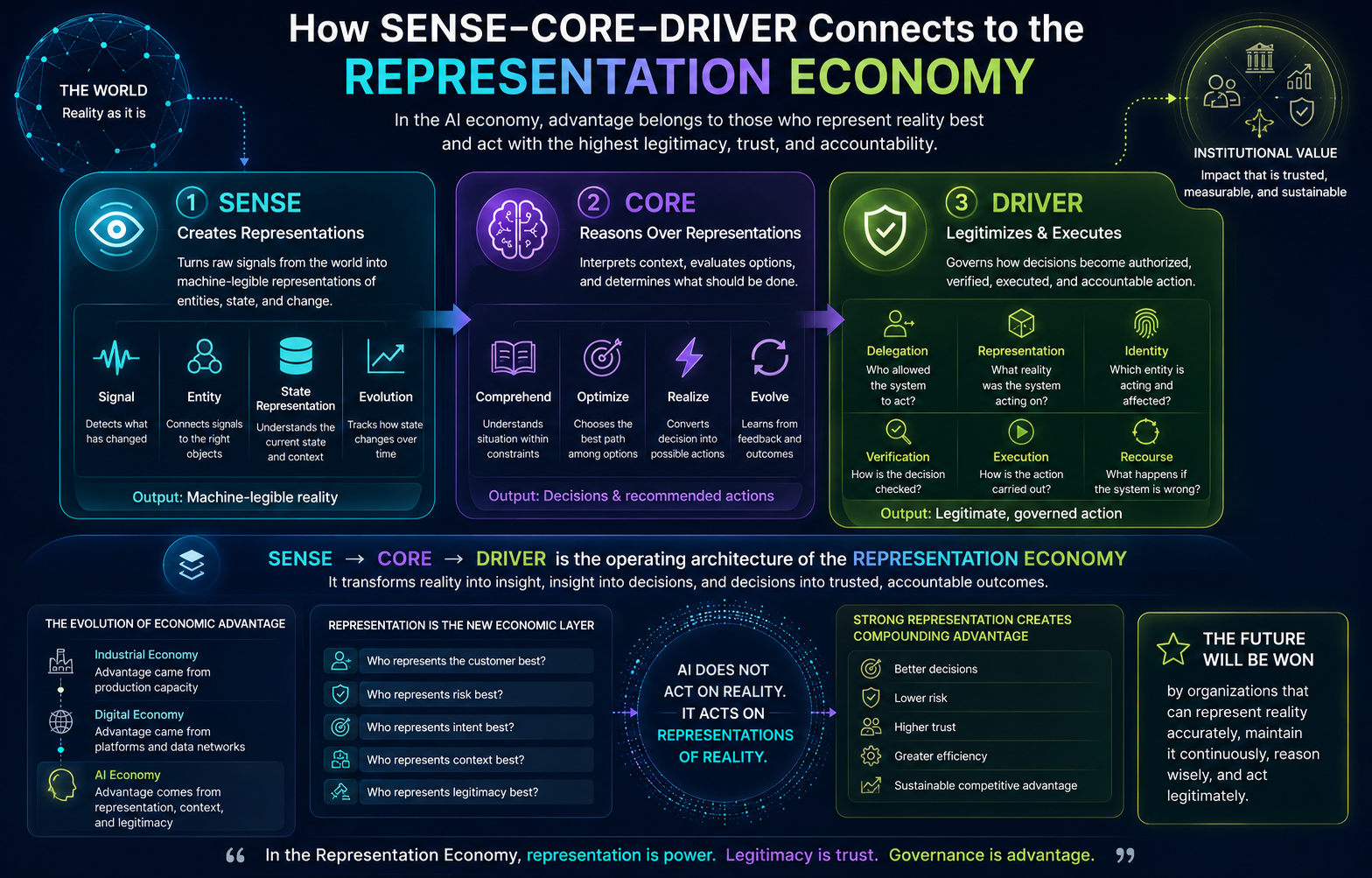

This is the central idea behind the SENSE–CORE–DRIVER framework.

The SENSE–CORE–DRIVER framework is a conceptual architecture developed by Raktim Singh to explain how intelligent institutions transform reality into governed action through three interconnected layers:

SENSE makes reality machine-legible.

CORE interprets that reality and reasons about what should be done.

DRIVER turns decisions into legitimate, governed, accountable action.

In simple terms:

An intelligent institution must first know what is happening, then understand what it means, and finally act in a way that is authorized, verifiable, and responsible.

That sounds obvious. But this is exactly where many enterprise AI programs fail.

They invest heavily in CORE — models, copilots, agents, analytics, and automation — while underinvesting in SENSE and DRIVER. They improve intelligence without improving representation. They accelerate decisions without strengthening legitimacy. They deploy AI without redesigning the institutional architecture around it.

That is why SENSE–CORE–DRIVER matters.

It helps CIOs, CTOs, architects, product leaders, risk leaders, and board members ask a deeper question:

Is our organization becoming more intelligent, or are we merely adding AI to systems that cannot properly sense reality or govern action?

In short, SENSE makes reality machine-legible, CORE reasons over that reality, and DRIVER governs legitimate execution through identity, verification, accountability, and recourse. The framework argues that enterprise AI success depends not only on model intelligence but also on representation quality and governed execution.

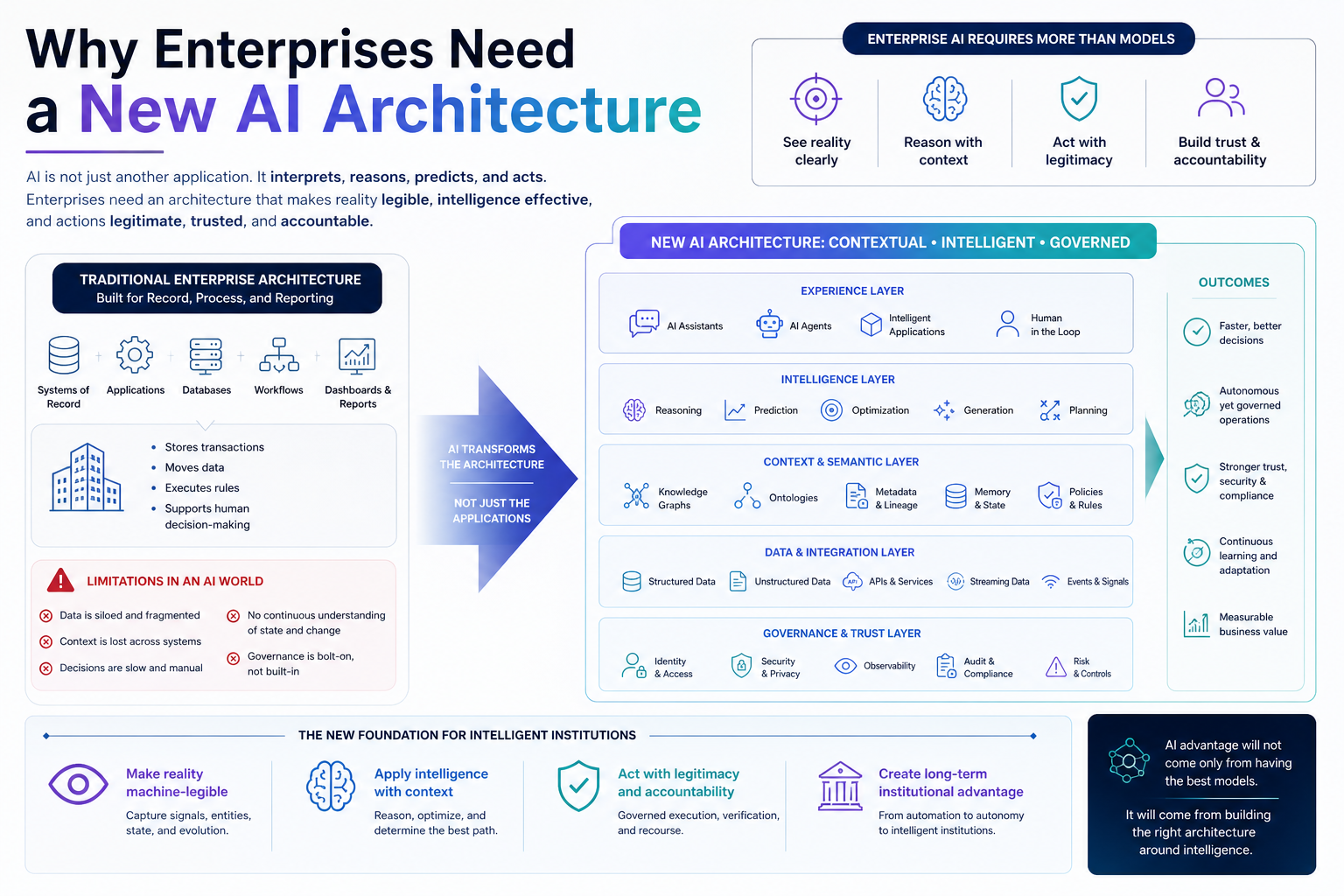

Why Enterprises Need a New AI Architecture

For decades, enterprise technology was built around systems of record, workflows, applications, databases, APIs, dashboards, and process automation.

These systems were designed mainly to store transactions, move data, execute rules, and support human decision-making.

AI changes this architecture.

AI does not merely store or move information. It interprets, recommends, generates, predicts, reasons, summarizes, and increasingly acts. As a result, modern enterprise AI systems need context layers, semantic models, orchestration, governance, identity, observability, and agent control, not only model access. McKinsey’s 2025 State of AI survey also notes that many organizations are still struggling to move from pilots to scaled enterprise impact, even as agentic AI adoption grows. (McKinsey & Company)

This creates a new institutional challenge.

AI systems cannot operate reliably if they do not know what they are looking at.

They need to know:

What is the customer?

What is the asset?

What is the transaction?

What is the policy?

What is the state of the process?

What is allowed?

Who authorized the action?

What evidence supports the decision?

What happens if the system is wrong?

These questions are not only technical. They are institutional.

They determine whether AI becomes a trusted operating layer or just another disconnected tool.

The SENSE–CORE–DRIVER framework provides a way to organize this challenge.

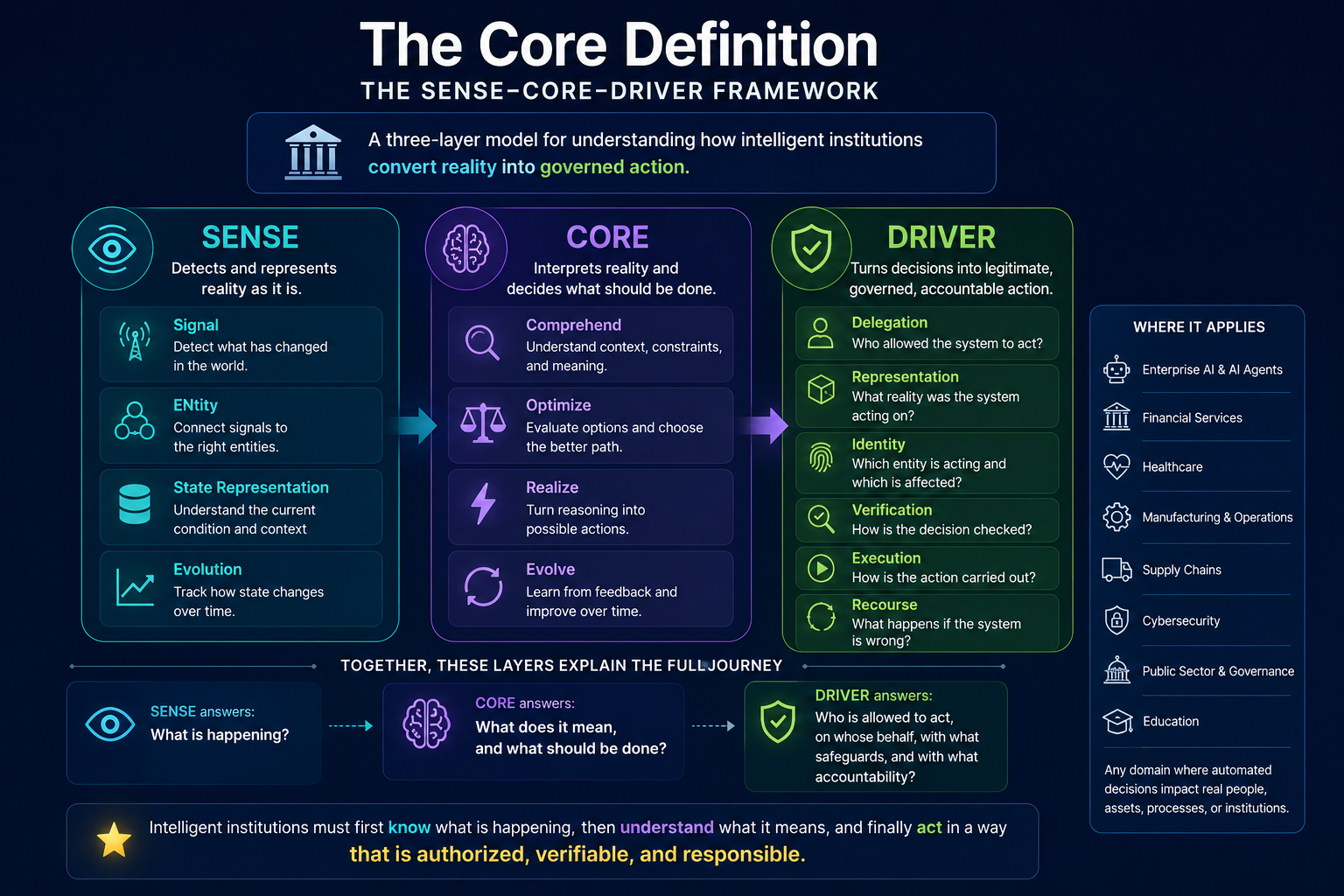

The Core Definition

The SENSE–CORE–DRIVER framework is a three-layer model for understanding how intelligent institutions convert reality into action.

It consists of:

SENSE

The layer that detects signals, identifies entities, represents their current state, and tracks how that state evolves over time.

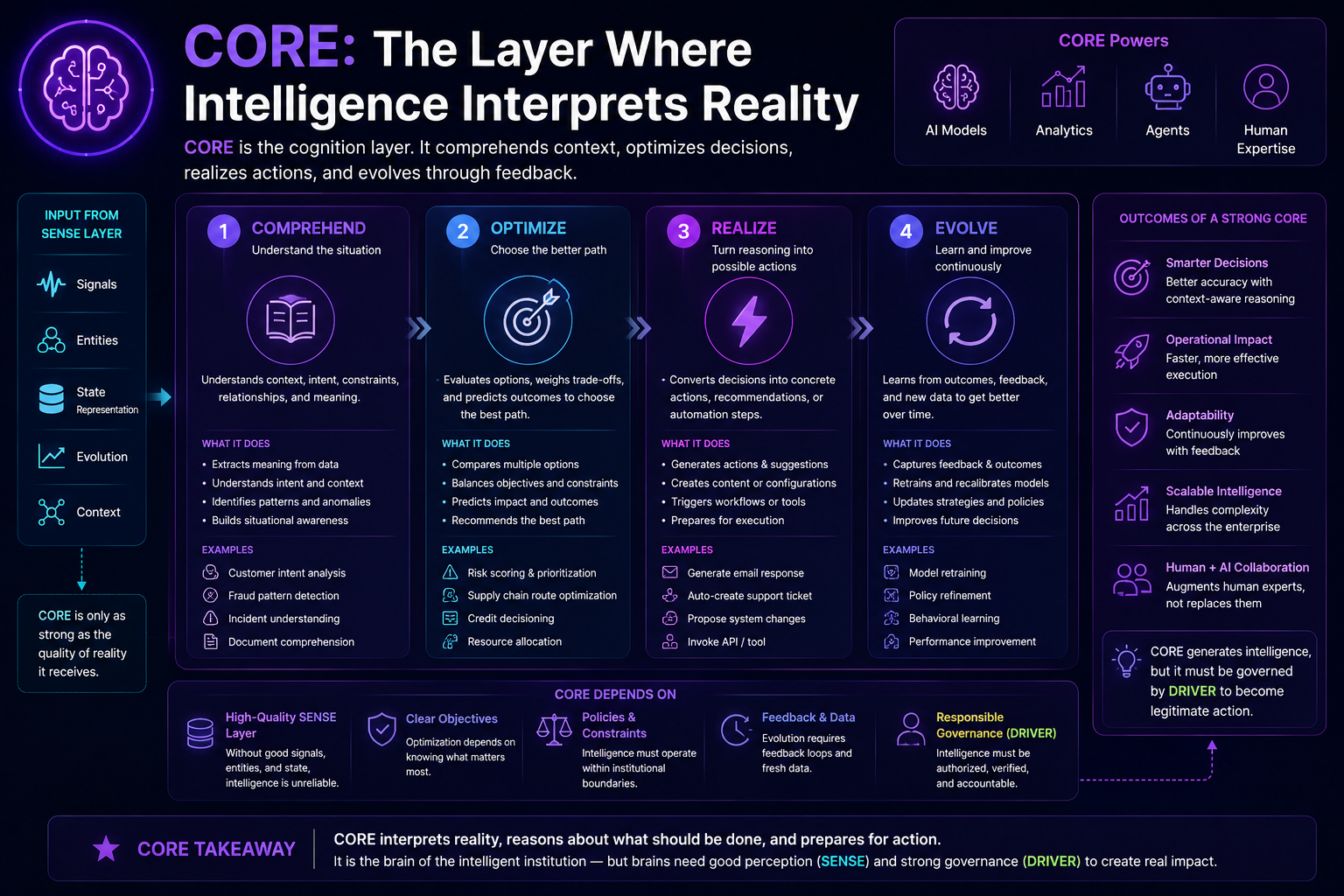

CORE

The layer that comprehends context, optimizes decisions, realizes possible actions, and evolves through feedback.

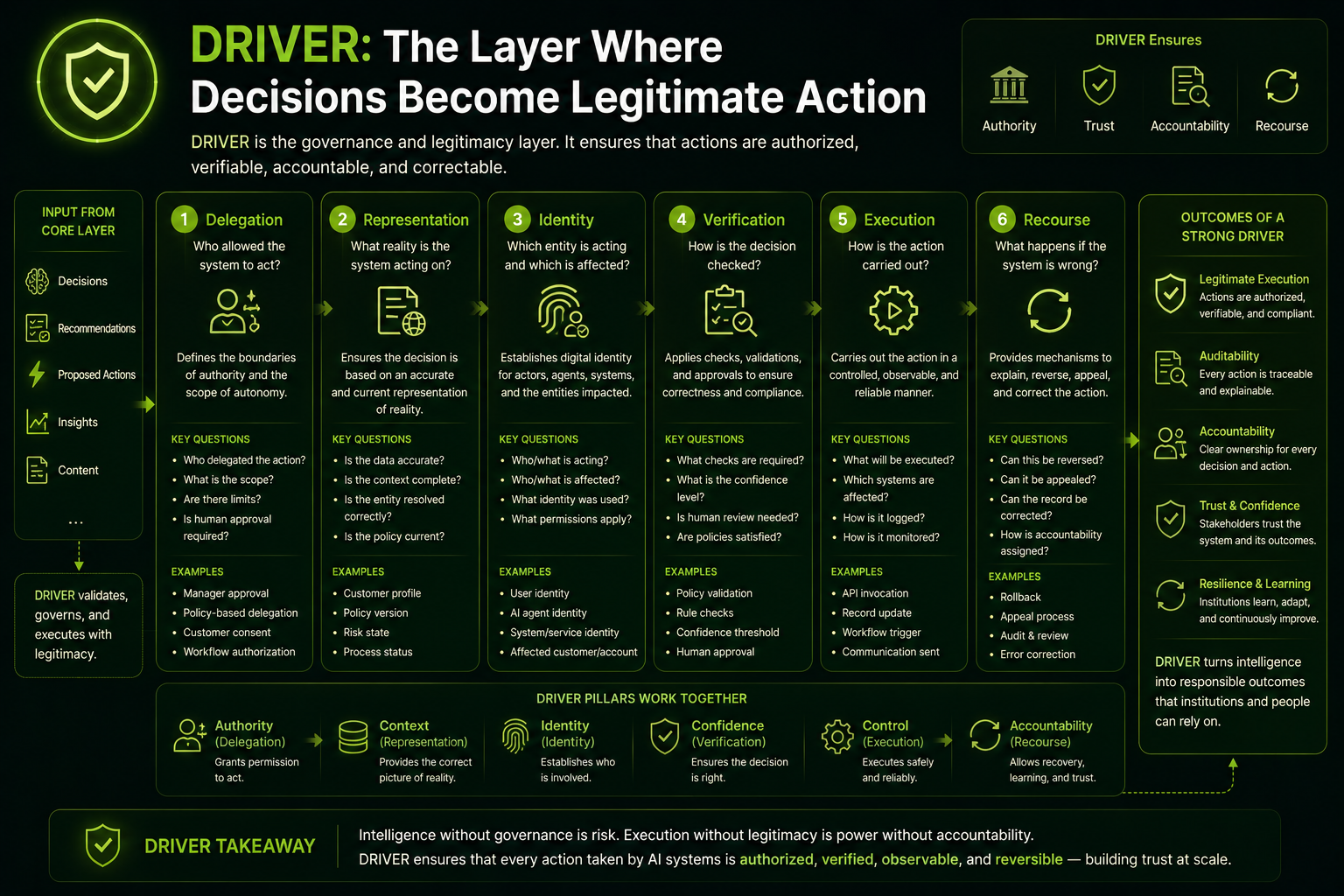

DRIVER

The layer that governs action through delegation, representation, identity, verification, execution, and recourse.

Together, these layers explain the full journey from the world as it is to the action an institution takes.

SENSE answers: What is happening?

CORE answers: What does it mean, and what should be done?

DRIVER answers: Who is allowed to act, on whose behalf, with what safeguards, and with what accountability?

This is why the framework is especially relevant for enterprise AI, AI agents, intelligent automation, financial services, healthcare, manufacturing, supply chains, cybersecurity, education, government systems, and any domain where automated decisions affect real people, assets, processes, or institutions.

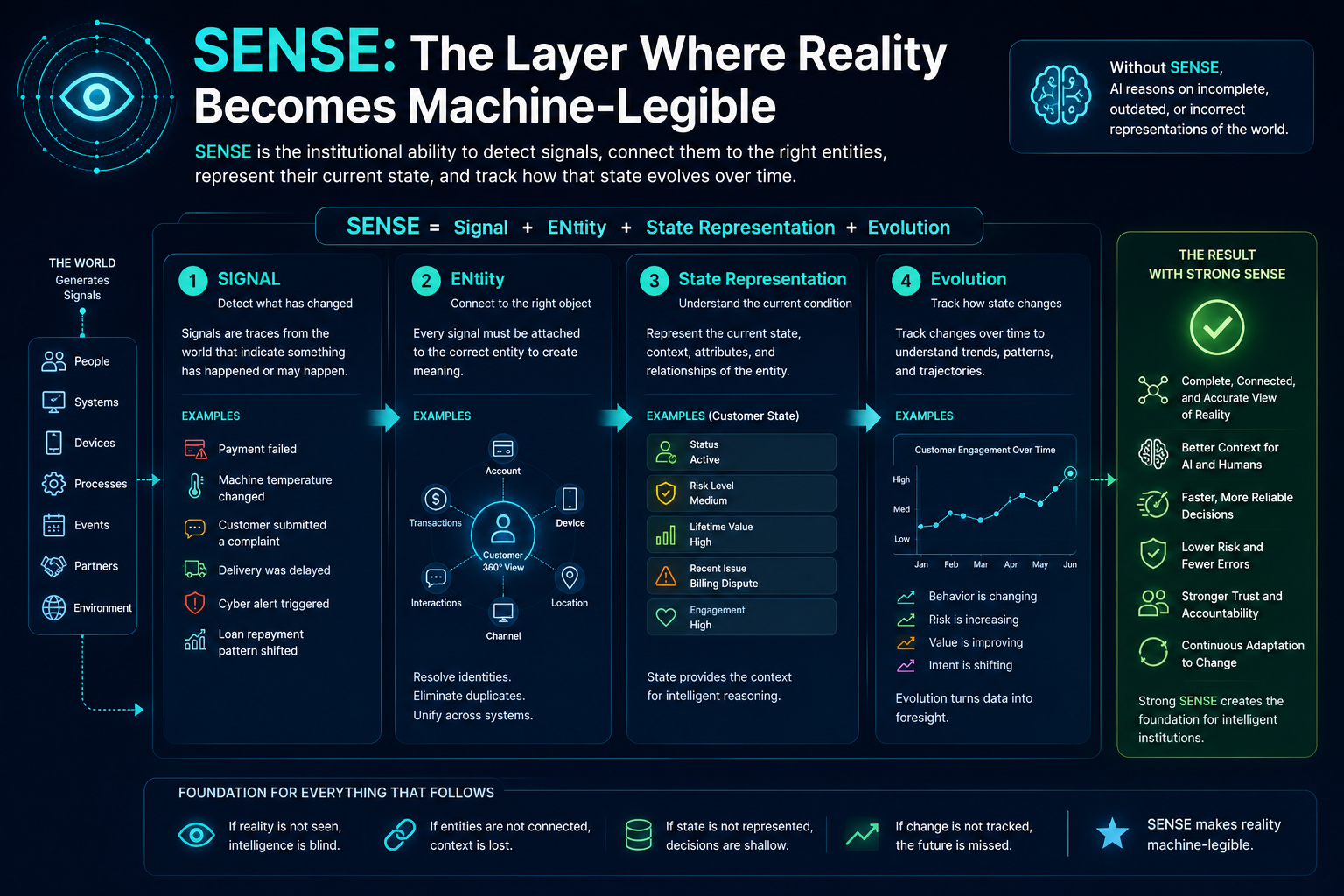

SENSE: The Layer Where Reality Becomes Machine-Legible

SENSE stands for:

Signal

ENtity

State Representation

Evolution

SENSE is the legibility layer.

It is the institutional ability to detect reality, connect signals to the right entities, represent the current state of those entities, and update that state as new information arrives.

Without SENSE, AI systems reason on incomplete, outdated, fragmented, or incorrect representations of the world.

Signal: Detecting What Has Changed

A signal is any trace from the world that indicates something has happened or may happen.

A payment failed.

A machine temperature changed.

A customer submitted a complaint.

A delivery was delayed.

A supplier missed a milestone.

A cyber alert was triggered.

A loan repayment pattern shifted.

In traditional systems, signals often remain trapped in different applications. One system records the transaction. Another records the complaint. Another records the contract. Another records the operational status. Another records the human conversation.

AI systems need these signals to be connected.

A bank cannot assess risk properly if payment behavior, customer history, transaction context, fraud signals, and regulatory constraints remain fragmented.

A manufacturer cannot run intelligent maintenance if machine sensor data, service logs, supply constraints, operator notes, and production schedules remain disconnected.

Signals are the raw material of institutional intelligence.

But signals alone are not enough.

ENtity: Connecting Signals to the Right Object

Every signal must be attached to the correct entity.

An entity may be a customer, account, asset, supplier, employee, device, machine, shipment, invoice, location, policy, project, product, or contract.

This is where many organizations struggle.

The same customer may appear differently in multiple systems. The same supplier may have different identifiers across procurement, finance, legal, and operations. The same asset may be tracked differently by maintenance, finance, and field teams.

When entity resolution is weak, AI becomes unreliable.

Imagine an enterprise AI assistant analyzing supplier risk. It sees late deliveries in one system, unresolved disputes in another, contract amendments in another, and quality complaints in another. But if it cannot confidently understand that all these signals belong to the same supplier entity, it cannot form a reliable judgment.

The problem is not the AI model.

The problem is representation.

The institution has failed to represent reality correctly.
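To make the entity step concrete, here is a minimal Python sketch. It assumes a hypothetical crosswalk table that maps each source system's local identifier to one canonical supplier ID. The names `Signal`, `CROSSWALK`, and `attach_signals`, and every identifier, are illustrative. Production entity resolution usually also needs fuzzy matching, survivorship rules, and human review.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Signal:
    source: str      # system that emitted the signal, e.g. "procurement"
    local_id: str    # identifier that system uses for the supplier
    kind: str        # what happened, e.g. "late_delivery"

# Hypothetical crosswalk: (source system, local ID) -> canonical entity ID.
CROSSWALK = {
    ("procurement", "V-1042"): "SUPPLIER-7",
    ("finance", "AP-88311"): "SUPPLIER-7",
    ("legal", "CTR-2019-55"): "SUPPLIER-7",
    ("quality", "QS-7"): "SUPPLIER-7",
}

def attach_signals(signals):
    """Group signals by canonical entity; keep unmatched ones aside for review."""
    by_entity = defaultdict(list)
    unresolved = []
    for s in signals:
        entity_id = CROSSWALK.get((s.source, s.local_id))
        if entity_id is None:
            unresolved.append(s)   # do not guess: route to a data steward
        else:
            by_entity[entity_id].append(s.kind)
    return dict(by_entity), unresolved

signals = [
    Signal("procurement", "V-1042", "late_delivery"),
    Signal("finance", "AP-88311", "unresolved_dispute"),
    Signal("legal", "CTR-2019-55", "contract_amendment"),
    Signal("quality", "QS-7", "quality_complaint"),
    Signal("quality", "QS-999", "quality_complaint"),
]
resolved, unresolved = attach_signals(signals)
print(resolved)
print(len(unresolved))
```

All four matched signals resolve to `SUPPLIER-7`, so downstream reasoning can judge the supplier as a whole. The unmatched `QS-999` is surfaced for review rather than guessed.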

State Representation: Knowing the Current Condition

Once signals are connected to entities, the institution must represent the current state of that entity.

A customer is not just a name.

A machine is not just an asset ID.

A project is not just a code.

A loan is not just an account number.

A supplier is not just a vendor record.

Each entity has a state.

A customer may be loyal, dissatisfied, high-risk, recently onboarded, under review, or waiting for resolution.

A machine may be healthy, degraded, overloaded, under maintenance, or near failure.

A project may be on track, blocked, delayed, underfunded, overdependent, or waiting for approval.

State representation is what allows AI systems to reason meaningfully.

Without state, AI only sees data.

With state, AI sees context.

This is why enterprise context layers, semantic models, knowledge graphs, and metadata systems are becoming important for AI at scale. Atlan, for example, describes the enterprise context layer as a way to connect metadata, lineage, semantics, governance rules, and operational context so AI agents can use information with the right meaning and constraints. (Atlan)
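Here is a sketch of state representation that uses hypothetical sensor readings and thresholds. It turns raw numbers into one of the named machine states described above: healthy, degraded, overloaded, under maintenance, or near failure. The thresholds are placeholders. Real values would come from equipment specifications and maintenance engineers.

```python
from dataclasses import dataclass

@dataclass
class MachineReadings:
    temperature_c: float
    load_pct: float
    vibration_mm_s: float
    in_maintenance: bool = False

# Hypothetical thresholds, for illustration only.
TEMP_LIMIT_C = 85.0
LOAD_LIMIT_PCT = 95.0
VIBRATION_WARN = 7.0
VIBRATION_CRITICAL = 11.0

def machine_state(r: MachineReadings) -> str:
    """Turn raw readings into a named state that downstream reasoning can use."""
    if r.in_maintenance:
        return "under_maintenance"
    if r.vibration_mm_s >= VIBRATION_CRITICAL or r.temperature_c >= TEMP_LIMIT_C:
        return "near_failure"
    if r.load_pct >= LOAD_LIMIT_PCT:
        return "overloaded"
    if r.vibration_mm_s >= VIBRATION_WARN:
        return "degraded"
    return "healthy"

print(machine_state(MachineReadings(62.0, 70.0, 2.3)))   # healthy
print(machine_state(MachineReadings(64.0, 72.0, 8.0)))   # degraded
print(machine_state(MachineReadings(90.0, 60.0, 3.0)))   # near_failure
```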

Evolution: Tracking Change Over Time

Reality does not stand still.

Customers change.

Markets change.

Risks change.

Machines degrade.

Policies are updated.

Threats mutate.

Relationships shift.

SENSE must therefore include evolution.

An institution must know not only what something is, but how it is changing.

A customer who was low-risk six months ago may now show signs of stress.

A machine that was healthy last week may now show early warning signals.

A supplier that was reliable last quarter may now be facing delays.

Evolution is critical because AI decisions often depend on trajectory, not only current state.
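One simple way to make trajectory concrete is a least-squares slope over recent history. This sketch assumes a hypothetical monthly risk score between 0 and 1. It uses two illustrative thresholds, one for the current level and one for the trend.

```python
from statistics import mean

def slope(values):
    """Least-squares slope of equally spaced observations (change per period)."""
    n = len(values)
    if n < 2:
        return 0.0
    xs = range(n)
    x_bar, y_bar = mean(xs), mean(values)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, values))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den

# Hypothetical monthly risk scores (0 = no risk, 1 = maximum), oldest first.
history = [0.10, 0.11, 0.13, 0.16, 0.20, 0.25]

current = history[-1]
trend = slope(history)
HIGH_RISK = 0.6        # assumed level threshold
RISING_FAST = 0.02     # assumed trend threshold, score points per month

print(current >= HIGH_RISK)   # False: the level alone looks fine
print(trend > RISING_FAST)    # True: the trajectory says otherwise
```

The customer is still well below the level threshold, but the score has been rising by about 0.03 per month. That is the early-stress pattern a snapshot alone would miss.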

The best institutions will not simply collect data. They will continuously update their representation of reality.

That is the foundation of SENSE.

CORE: The Layer Where Intelligence Interprets Reality

CORE stands for:

Comprehend

Optimize

Realize

Evolve

CORE is the cognition layer.

It is where AI models, reasoning systems, decision engines, analytics, simulations, agents, and human experts interpret reality and decide what should happen next.

Most current AI investment is concentrated here.

Large language models, machine learning models, copilots, predictive analytics, recommender systems, generative AI tools, autonomous agents, reasoning models, and decision intelligence systems all belong primarily to the CORE layer.

CORE is powerful.

But CORE is only as good as the reality it receives from SENSE and the legitimacy it gets from DRIVER.

Comprehend: Understanding the Situation

Comprehension is not just reading text or summarizing documents.

In an enterprise context, comprehension means understanding a situation within business, operational, technical, regulatory, and human constraints.

For example, an AI system may read a customer complaint and summarize it accurately. But real comprehension requires more.

It must understand:

Is this customer important?

Has this happened before?

Is there an open ticket?

Is there a policy constraint?

Has a promise already been made?

What is the current state of the relationship?

What action is allowed?

That requires SENSE.

Without SENSE, CORE produces generic intelligence.

With SENSE, CORE produces enterprise-relevant intelligence.

Optimize: Choosing the Better Path

Optimization is the ability to compare options and select a better path.

In a supply chain context, this may mean choosing between cost, speed, reliability, and risk.

In banking, it may mean balancing customer experience, fraud prevention, compliance, and operational cost.

In IT operations, it may mean deciding whether to restart a service, escalate to an engineer, trigger a rollback, or wait for more evidence.

AI is useful here because it can process more signals, compare more scenarios, and detect patterns humans may miss.

But optimization becomes dangerous when the system optimizes for the wrong objective.

A customer service AI that optimizes only for quick closure may damage trust.

A lending AI that optimizes only for approval speed may increase risk.

A manufacturing AI that optimizes only for throughput may compromise safety.
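A few lines are enough to show the objective problem. This sketch assumes three hypothetical ways of resolving a customer complaint, each scored on speed, trust, and policy fit. The scores and weights are illustrative, not calibrated.

```python
# Hypothetical options for resolving a customer complaint, each scored 0..1
# on dimensions the institution cares about (higher is better).
options = {
    "auto_close_with_template": {"speed": 0.95, "trust": 0.30, "policy_fit": 0.70},
    "refund_and_apologize":     {"speed": 0.70, "trust": 0.85, "policy_fit": 0.90},
    "escalate_to_specialist":   {"speed": 0.30, "trust": 0.90, "policy_fit": 0.95},
}

def best(options, weights):
    """Pick the option with the highest weighted score under the given objective."""
    def score(dims):
        return sum(weights.get(k, 0.0) * v for k, v in dims.items())
    return max(options, key=lambda name: score(options[name]))

speed_only = {"speed": 1.0}
balanced = {"speed": 0.3, "trust": 0.4, "policy_fit": 0.3}   # assumed weights

print(best(options, speed_only))  # auto_close_with_template
print(best(options, balanced))    # refund_and_apologize
```

The options and scores are identical in both calls. Only the objective changes, and the "best" action changes with it. Choosing those weights is an institutional decision, not a modeling one.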

CORE must therefore be guided by institutional purpose, policy, and governance.

That is where DRIVER becomes essential.

Realize: Turning Reasoning into Possible Action

CORE does not only analyze. It can also propose or initiate action.

It may draft a response.

Recommend a decision.

Trigger a workflow.

Create a code patch.

Generate a contract clause.

Prioritize a case.

Route a ticket.

Invoke an API.

This is where AI becomes operationally significant.

The moment AI moves from answer generation to action generation, the enterprise risk profile changes.

A wrong summary is inconvenient.

A wrong action can be costly.

That is why modern enterprise AI cannot be judged only by model intelligence. It must be judged by execution architecture.

Evolve: Learning from Feedback

CORE must also evolve.

It should learn from outcomes, corrections, human feedback, policy changes, operational failures, and environmental shifts.

But enterprise learning must be governed.

Not every feedback loop should automatically change system behavior.

Not every user correction should become institutional truth.

Not every pattern should become policy.

Not every optimization should be allowed.

This is why the boundary between CORE and DRIVER is critical.

CORE can learn.

DRIVER must decide what learning is legitimate.
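One way to separate learning from legitimacy is to place a gate between what CORE proposes and what actually changes system behavior. In this sketch, the proposal kinds, the `AUTO_APPLY` set, and the approver role are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Proposal:
    kind: str                         # e.g. "template_fix", "learned_pattern"
    description: str
    approved_by: Optional[str] = None

# Assumed rule: only low-stakes kinds may change behavior without sign-off.
AUTO_APPLY = {"template_fix"}

@dataclass
class LearningGate:
    applied: list = field(default_factory=list)
    pending: list = field(default_factory=list)

    def submit(self, p: Proposal) -> None:
        """CORE proposes a change; the gate decides whether it may take effect."""
        if p.kind in AUTO_APPLY or p.approved_by:
            self.applied.append(p)
        else:
            self.pending.append(p)    # held until a named owner signs off

    def approve(self, p: Proposal, owner: str) -> None:
        """Record who legitimized the change, then apply it."""
        self.pending.remove(p)
        p.approved_by = owner
        self.applied.append(p)

gate = LearningGate()
fix = Proposal("template_fix", "Correct a typo in the refund reply template")
pattern = Proposal("learned_pattern", "Offer expedited shipping after two reported delays")
gate.submit(fix)
gate.submit(pattern)
print(len(gate.applied), len(gate.pending))   # 1 1
gate.approve(pattern, owner="head_of_customer_ops")
print(len(gate.applied), len(gate.pending))   # 2 0
```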

DRIVER: The Layer Where Decisions Become Legitimate Action

DRIVER stands for:

Delegation

Representation

Identity

Verification

Execution

Recourse

DRIVER is the governance and legitimacy layer.

It determines how decisions are authorized, executed, checked, audited, reversed, escalated, and explained.

This is the layer most enterprises underestimate.

They assume that once AI can recommend an action, execution is just workflow automation.

That is a mistake.

In the age of AI agents, execution is no longer a simple technical step. It is an institutional act.

When an AI system sends an email, changes a record, approves a claim, blocks a transaction, triggers a payment, modifies code, or escalates a customer case, it is acting within a web of authority, identity, accountability, and trust.

That is DRIVER.

NIST’s AI Risk Management Framework emphasizes the need to govern, map, measure, and manage AI risks across the lifecycle, including testing, monitoring, accountability, and risk treatment. This aligns strongly with the DRIVER idea that execution must be governed, not merely automated. (NIST)

Delegation: Who Allowed the System to Act?

Delegation asks a fundamental question:

Who gave this system permission to act?

Was the action delegated by a human user?

By a manager?

By a process owner?

By a policy?

By a customer?

By an enterprise workflow?

AI systems need clear delegation boundaries.

A personal assistant may draft an email but not send it without approval.

A financial AI may recommend an investment but not execute it automatically.

An IT agent may restart a low-risk service but not change production configuration without authorization.

A customer service agent may issue a small refund but not alter contract terms.

Delegation defines the boundary of autonomy.

This is one of the most important enterprise AI questions of the next decade:

What should AI be allowed to do by itself, what should require human approval, and what should remain human-only?
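A delegation policy can be expressed as data. This sketch encodes the examples above as three autonomy levels, with a default-deny rule for anything not explicitly delegated. The role names, action names, and the 50-unit refund limit are assumptions made for illustration.

```python
from enum import Enum

class Autonomy(Enum):
    AUTONOMOUS = "act without approval"
    NEEDS_APPROVAL = "act only after human approval"
    HUMAN_ONLY = "never act; hand to a human"

# Hypothetical delegation policy: (agent role, action) -> rule.
# A rule is either a fixed Autonomy level or a function of the request.
POLICY = {
    ("assistant", "draft_email"): Autonomy.AUTONOMOUS,
    ("assistant", "send_email"): Autonomy.NEEDS_APPROVAL,
    ("it_agent", "restart_low_risk_service"): Autonomy.AUTONOMOUS,
    ("it_agent", "change_prod_config"): Autonomy.NEEDS_APPROVAL,
    ("support_agent", "issue_refund"): (
        lambda req: Autonomy.AUTONOMOUS if req.get("amount", 0) <= 50
        else Autonomy.NEEDS_APPROVAL
    ),
    ("support_agent", "alter_contract_terms"): Autonomy.HUMAN_ONLY,
}

def autonomy_for(role, action, request=None):
    """Default-deny: anything not explicitly delegated stays human-only."""
    rule = POLICY.get((role, action), Autonomy.HUMAN_ONLY)
    return rule(request or {}) if callable(rule) else rule

print(autonomy_for("support_agent", "issue_refund", {"amount": 20}).name)   # AUTONOMOUS
print(autonomy_for("support_agent", "issue_refund", {"amount": 500}).name)  # NEEDS_APPROVAL
print(autonomy_for("support_agent", "alter_contract_terms").name)           # HUMAN_ONLY
print(autonomy_for("it_agent", "deploy_code").name)                         # HUMAN_ONLY
```

Because the default is `HUMAN_ONLY`, a newly available action such as `deploy_code` gains no autonomy until someone explicitly delegates it.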

Representation: What Model of Reality Is the System Acting On?

Representation asks:

What reality did the system believe to be true when it acted?

This is crucial.

If an AI rejects a claim, flags a transaction, prioritizes a case, or blocks access, the institution must know what representation of the situation drove that action.

Was the customer state correct?

Was the policy version current?

Was the entity matched correctly?

Was the risk score based on valid signals?

Was the context complete?

Was outdated data used?

This is where SENSE and DRIVER meet.

SENSE builds the representation.

DRIVER governs whether that representation is good enough to act upon.

In high-risk domains, acting on weak representation is dangerous.
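To answer "what did the system believe?", the representation can be frozen into a snapshot and stored with each action. Explicit criteria then decide whether that snapshot is good enough to act on. The field names, policy version string, and thresholds below are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass(frozen=True)
class RepresentationSnapshot:
    """What the system believed when it acted; stored with the action for audit."""
    entity_id: str
    entity_match_confidence: float   # from the entity-resolution step
    policy_version: str
    data_as_of: datetime
    risk_score: float

# Hypothetical acceptance criteria for acting on a representation.
CURRENT_POLICY_VERSION = "claims-policy-2025.3"
MIN_MATCH_CONFIDENCE = 0.95
MAX_DATA_AGE = timedelta(hours=24)

def fit_to_act(snap, now):
    """Return the reasons this snapshot is NOT good enough to act on (empty = OK)."""
    problems = []
    if snap.entity_match_confidence < MIN_MATCH_CONFIDENCE:
        problems.append("entity match uncertain")
    if snap.policy_version != CURRENT_POLICY_VERSION:
        problems.append("policy version outdated")
    if now - snap.data_as_of > MAX_DATA_AGE:
        problems.append("data stale")
    return problems

now = datetime(2025, 6, 1, 12, 0, tzinfo=timezone.utc)
snap = RepresentationSnapshot(
    entity_id="CLAIM-31",
    entity_match_confidence=0.97,
    policy_version="claims-policy-2025.2",
    data_as_of=now - timedelta(days=3),
    risk_score=0.82,
)
print(fit_to_act(snap, now))  # ['policy version outdated', 'data stale']
```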

Identity: Which Entity Is Acting and Which Entity Is Affected?

Identity is central to AI governance.

An enterprise must know:

Which user initiated the request?

Which AI agent performed the action?

Which system executed it?

Which customer, account, asset, or process was affected?

Which credentials were used?

Which authority boundary applied?

As AI agents become more autonomous, identity and access management become more important. IBM describes agentic AI identity management as a way to secure and govern autonomous agents through agent identity, delegation, real-time enforcement, and audit-ready accountability. (IBM)

This matters because traditional enterprise systems were built mainly around human users and service accounts.

AI agents introduce a new category of actor.

They are not exactly employees.

They are not simple scripts.

They are not traditional applications.

They can reason, choose tools, generate actions, and operate across systems.

So enterprises need identity-bound execution.

Every AI action should be attributable.
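Identity-bound execution can be sketched as an audit record that answers each of the identity questions above. A simple rule then marks any action with a missing field as not attributable. The field names and identifier formats are assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class ActionRecord:
    initiated_by: str      # human user who made the request
    agent_id: str          # AI agent that chose the action
    executed_by: str       # system that carried it out
    credential: str        # reference to the credential used, never the secret
    affected_entity: str   # customer, account, asset, or process affected
    authority: str         # delegation rule that permitted the action
    action: str

def attributable(record: ActionRecord) -> bool:
    """An action is attributable only if every identity field is filled in."""
    return all(str(v).strip() for v in asdict(record).values())

rec = ActionRecord(
    initiated_by="user:priya.n",
    agent_id="agent:billing-assistant-v2",
    executed_by="system:payments-api",
    credential="cred-ref:svc-billing-agent",
    affected_entity="account:ACC-5521",
    authority="policy:refund<=50-autonomous",
    action="issue_refund(amount=20)",
)
print(attributable(rec))         # True
print(json.dumps(asdict(rec)))   # one audit line per action
```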

Verification: How Is the Decision Checked?

Verification asks whether the system’s decision or action can be checked before, during, or after execution.

Verification may include:

Policy checks.

Business rule checks.

Human approval.

Confidence thresholds.

Audit trails.

Simulation.

Reconciliation.

Explainability.

Testing.

Monitoring.

Exception handling.

For example, an AI system may draft a legal clause, but verification ensures it is reviewed against policy and approved by the right authority.

An AI system may recommend a software change, but verification ensures it passes tests, security checks, and deployment gates.

An AI system may detect fraud, but verification ensures that customer impact is proportionate and appealable.

Verification prevents intelligence from becoming unchecked power.
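Several of the verification mechanisms listed above (policy checks, business rules, confidence thresholds, and human approval) can be composed into one pre-execution gate. In this sketch every check, limit, and field name is hypothetical. The gate collects all failures rather than stopping at the first, so a reviewer sees the full picture.

```python
from typing import Callable, List, Optional

# Each check returns None if it passes, or a human-readable reason if it fails.
Check = Callable[[dict], Optional[str]]

def policy_check(p: dict) -> Optional[str]:
    allowed = {"refund", "reply"}                  # assumed policy allow-list
    return None if p["action"] in allowed else "action not permitted by policy"

def business_rule_check(p: dict) -> Optional[str]:
    ok = p.get("amount", 0) <= p.get("amount_paid", 0)
    return None if ok else "refund exceeds amount paid"

def confidence_check(p: dict, threshold: float = 0.9) -> Optional[str]:
    return None if p["confidence"] >= threshold else "model confidence below threshold"

def approval_check(p: dict) -> Optional[str]:
    needs_human = p.get("amount", 0) > 50          # assumed approval limit
    return "awaiting human approval" if needs_human and not p.get("approved_by") else None

CHECKS: List[Check] = [policy_check, business_rule_check, confidence_check, approval_check]

def verify(proposal: dict) -> List[str]:
    """Run every check and collect all failures (an empty list means cleared)."""
    return [reason for check in CHECKS if (reason := check(proposal)) is not None]

proposal = {"action": "refund", "amount": 120.0, "amount_paid": 120.0, "confidence": 0.93}
print(verify(proposal))                # ['awaiting human approval']
proposal["approved_by"] = "user:team.lead"
print(verify(proposal))                # []
```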

Execution: How Is the Action Carried Out?

Execution is not merely “doing the task.”

It includes workflow integration, API invocation, system updates, communication, logging, policy enforcement, and operational control.

In enterprise AI, execution must be designed carefully.

Can the AI invoke tools directly?

Can it access production systems?

Can it modify records?

Can it trigger payments?

Can it send external communication?

Can it call third-party services?

Can it create tickets?

Can it deploy code?

The more powerful the execution layer, the more important DRIVER becomes.

A weak execution layer limits AI value.

An uncontrolled execution layer creates enterprise risk.

A governed execution layer creates scalable trust.

Recourse: What Happens If the System Is Wrong?

Recourse is one of the most important but least discussed parts of AI architecture.

Every intelligent institution must answer:

Can the decision be appealed?

Can the action be reversed?

Can the affected party get an explanation?

Can the institution correct the record?

Can responsibility be assigned?

Can harm be repaired?

Can the system learn from the failure?

Recourse separates responsible AI from blind automation.

A system that can act but cannot explain, reverse, or correct itself is not institutionally mature.

This is why DRIVER is not just a compliance layer.

It is the legitimacy layer of the AI economy.
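Recourse can be built in at execution time by requiring every action to carry an explanation and a compensating undo step. This sketch assumes such an undo exists for the action in question. Many real actions, such as a sent message, cannot be fully reversed. The class names and identifiers are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class ExecutedAction:
    action_id: str
    description: str
    explanation: str              # what the affected party can be told
    undo: Callable[[], None]      # compensating action (assumed to exist)
    reversed: bool = False

@dataclass
class RecourseLedger:
    actions: dict = field(default_factory=dict)
    log: list = field(default_factory=list)

    def record(self, a: ExecutedAction) -> None:
        self.actions[a.action_id] = a
        self.log.append(("executed", a.action_id))

    def explain(self, action_id: str) -> str:
        return self.actions[action_id].explanation

    def appeal_upheld(self, action_id: str, reviewer: str) -> None:
        """Reverse the action, keep the history, and note who decided."""
        a = self.actions[action_id]
        if not a.reversed:
            a.undo()
            a.reversed = True
            self.log.append(("reversed", action_id, reviewer))

# Usage: a card is blocked, the customer appeals, and the block is reversed.
card_blocked = {"CARD-9": True}
ledger = RecourseLedger()
ledger.record(ExecutedAction(
    "act-1", "block CARD-9",
    "Blocked: transaction pattern differed sharply from account history.",
    undo=lambda: card_blocked.update({"CARD-9": False}),
))
ledger.appeal_upheld("act-1", reviewer="user:fraud.analyst")
print(card_blocked["CARD-9"])   # False
print(ledger.log)
```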

How SENSE–CORE–DRIVER Connects to the Representation Economy

The SENSE–CORE–DRIVER framework is part of a broader idea called the Representation Economy.

The Representation Economy is the idea that future value creation, trust, governance, and competitive advantage will increasingly depend on how well institutions represent reality on behalf of people, assets, processes, ecosystems, and society.

In the industrial economy, advantage came from production capacity.

In the digital economy, advantage came from platforms and data networks.

In the AI economy, advantage will come from representation.

Who represents the customer best?

Who represents the enterprise best?

Who represents risk best?

Who represents context best?

Who represents intent best?

Who represents legitimacy best?

AI does not act on reality directly.

It acts on representations of reality.

That is why representation becomes the new economic layer.

SENSE creates representations.

CORE reasons over representations.

DRIVER legitimizes actions based on representations.

This is the bridge between AI architecture and institutional strategy.

The organizations that win will not simply have the most powerful models. They will have the most trusted representations of the world and the most legitimate mechanisms for acting on them.

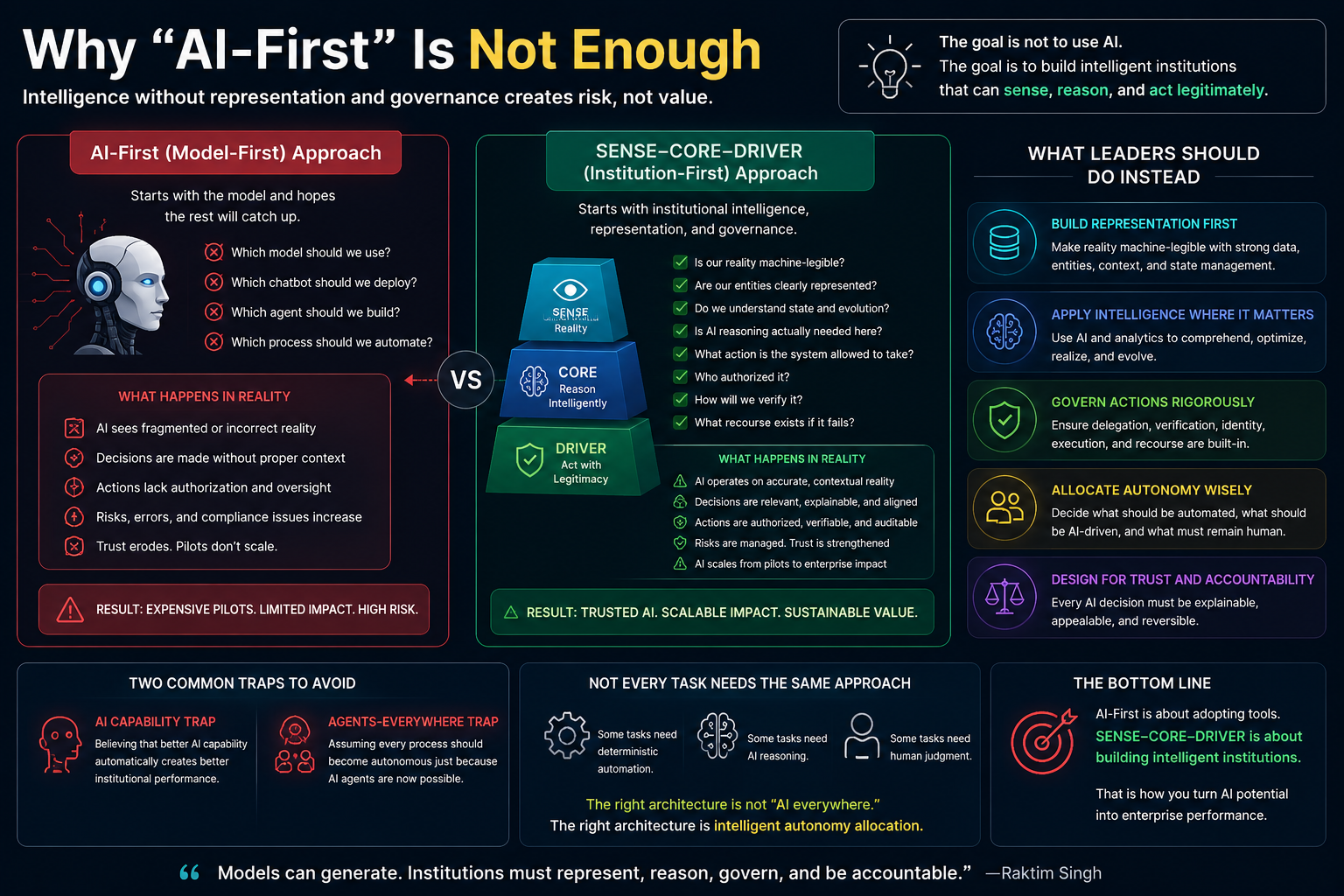

Why “AI-First” Is Not Enough

Many organizations now want to become AI-first.

But AI-first can be misleading if it means model-first.

A model-first enterprise asks:

Which AI model should we use?

Which chatbot should we deploy?

Which agent should we build?

Which process should we automate?

A SENSE–CORE–DRIVER enterprise asks deeper questions:

Is our reality machine-legible?

Are our entities clearly represented?

Do we understand state and evolution?

Is AI reasoning actually needed here?

What action is the system allowed to take?

Who authorized it?

How will we verify it?

What recourse exists if it fails?

This is a more mature way to think about enterprise AI.

It avoids two common mistakes.

The first is the AI capability trap: believing that better AI capability automatically creates better institutional performance.

The second is the agents-everywhere trap: assuming that every process should become autonomous simply because AI agents are now possible.

Both are wrong.

Some tasks need deterministic automation.

Some tasks need AI reasoning.

Some tasks need human judgment.

Some tasks need a combination.

The right architecture is not “AI everywhere.”

The right architecture is intelligent autonomy allocation.
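Autonomy allocation can start as an explicit heuristic over a few task attributes. The attributes and rules below are an illustrative assumption, not a prescribed method. Each organization would set its own criteria.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    rules_fully_specified: bool   # can the logic be written down exactly?
    unstructured_input: bool      # free text, images, ambiguous context?
    high_impact: bool             # material effect on people, money, or safety?
    reversible: bool              # can mistakes be undone cheaply?

def allocate(t: Task) -> str:
    """Hypothetical allocation heuristic; the criteria are illustrative."""
    if t.high_impact and not t.reversible:
        return "human judgment (AI may assist)"
    if t.rules_fully_specified and not t.unstructured_input:
        return "deterministic automation"
    if t.high_impact:
        return "AI reasoning with human approval"
    return "AI reasoning"

tasks = [
    Task("recalculate invoice tax", True, False, False, True),
    Task("triage inbound customer emails", False, True, False, True),
    Task("approve a large goodwill refund", False, True, True, True),
    Task("terminate a supplier contract", False, True, True, False),
]
for t in tasks:
    print(f"{t.name}: {allocate(t)}")
```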

SENSE–CORE–DRIVER helps leaders decide where AI belongs and where it does not.

This matters because agentic AI is moving quickly, but many deployments remain immature. Gartner has projected that more than 40 percent of agentic AI projects will be cancelled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. (Reuters)

Simple Example: Customer Support

Consider customer support.

A customer contacts a company and says:

“I was charged twice.”

A model can generate a polite response. But the institution needs more than language generation.

SENSE must detect the signal: a billing complaint.

It must identify the entity: the correct customer account.

It must represent state: payment history, invoice status, refund eligibility, service history, and previous complaints.

It must track evolution: whether the problem is new, recurring, escalating, or already resolved.

CORE then interprets the situation.

Was there actually a duplicate charge?

Is it a pending authorization or a settled transaction?

Is the customer eligible for a refund?

Is there a risk of fraud?

What is the best next action?

DRIVER then governs action.

Can the AI issue a refund?

Up to what amount?

Does a human need to approve it?

What record should be updated?

How is the customer notified?

What happens if the customer disputes the decision?

This example shows why enterprise AI is not just about generating better answers.

It is about connecting reality, reasoning, and governed execution.
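The customer support walkthrough can be condensed into a small SENSE, CORE, and DRIVER pipeline. Everything here is hypothetical: the account data, the delegation limits, and the naive rule that two settled charges of the same amount indicate a duplicate.

```python
from dataclasses import dataclass

# --- SENSE: hypothetical account state assembled from billing and CRM systems ---
@dataclass
class Charge:
    charge_id: str
    amount: float
    status: str          # "pending" (authorization only) or "settled"

ACCOUNT = {
    "account_id": "ACC-5521",
    "charges": [Charge("ch-1", 39.99, "settled"), Charge("ch-2", 39.99, "settled")],
    "prior_refunds_90d": 0,
}

# --- CORE: interpret the situation (pending authorizations are ignored) ---
def diagnose(account):
    settled = [c for c in account["charges"] if c.status == "settled"]
    amounts = [c.amount for c in settled]
    duplicate = len(amounts) != len(set(amounts))   # naive duplicate rule
    return {"duplicate_settled_charge": duplicate,
            "refund_amount": amounts[-1] if duplicate else 0.0}

# --- DRIVER: decide whether the agent may act, and how ---
AUTO_REFUND_LIMIT = 50.00     # assumed delegation limit for the support agent
MAX_AUTO_REFUNDS_90D = 2      # assumed guard against repeated claims

def govern(account, diagnosis):
    if not diagnosis["duplicate_settled_charge"]:
        return "reply: explain charges; offer escalation"
    amount = diagnosis["refund_amount"]
    if amount <= AUTO_REFUND_LIMIT and account["prior_refunds_90d"] < MAX_AUTO_REFUNDS_90D:
        return f"refund {amount:.2f} automatically; log, notify, allow dispute"
    return f"queue refund {amount:.2f} for human approval"

print(govern(ACCOUNT, diagnose(ACCOUNT)))
# refund 39.99 automatically; log, notify, allow dispute
```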

Simple Example: IT Operations

Consider an AI agent monitoring enterprise systems.

It detects that an application is slowing down.

SENSE collects signals from logs, metrics, traces, incidents, dependencies, deployment history, and user complaints.

It identifies entities: application, server, service, database, API, business process, and customer journey.

It represents state: degraded performance, recent deployment, unusual traffic, and possible memory issue.

CORE reasons about cause and response.

Is this a network problem?

A database issue?

A failed deployment?

A capacity spike?

Should the system restart a service, roll back a release, alert an engineer, or wait for more evidence?

DRIVER controls execution.

Can the AI restart the service automatically?

Can it roll back production code?

Who approved that autonomy?

What checks must pass first?

How is the action logged?

How can it be reversed?

This is the difference between a smart alerting system and a governed AI operations system.

Simple Example: Banking

Consider a bank evaluating a suspicious transaction.

SENSE detects signals: unusual amount, merchant category, device change, past behavior, account status, and transaction urgency.

It identifies entities: customer, account, card, merchant, transaction, and device.

It represents state: normal customer behavior, current risk profile, regulatory constraints, and customer impact.

CORE evaluates risk.

Is this fraud?

Is this a legitimate transaction?

Should it be blocked, challenged, approved, or escalated?

DRIVER determines legitimacy.

Is the bank allowed to block it?

How should the customer be notified?

Can the customer appeal?

What evidence supports the action?

Is the decision auditable?

In regulated industries, this matters deeply.

AI without DRIVER may be fast but unaccountable.

AI with DRIVER can become institutionally trustworthy.

What CIOs and CTOs Should Take Away

The SENSE–CORE–DRIVER framework gives technology leaders a practical lens for enterprise AI strategy.

It says:

Do not begin only with models.

Begin with institutional intelligence.

Ask whether the enterprise can sense reality, reason over it, and act legitimately.

For CIOs, this means AI strategy must include data architecture, semantic architecture, identity architecture, governance architecture, integration architecture, and operating model design.

For CTOs, it means scalable AI requires more than APIs to models. It requires context layers, orchestration, policy enforcement, observability, tool boundaries, agent identity, evaluation systems, and feedback loops.

For architects, it means enterprise AI should be designed as a layered system, not a collection of disconnected pilots.

For boards and executives, it means AI advantage will not come only from adopting AI faster. It will come from building institutions that can safely and intelligently delegate decisions to machines.

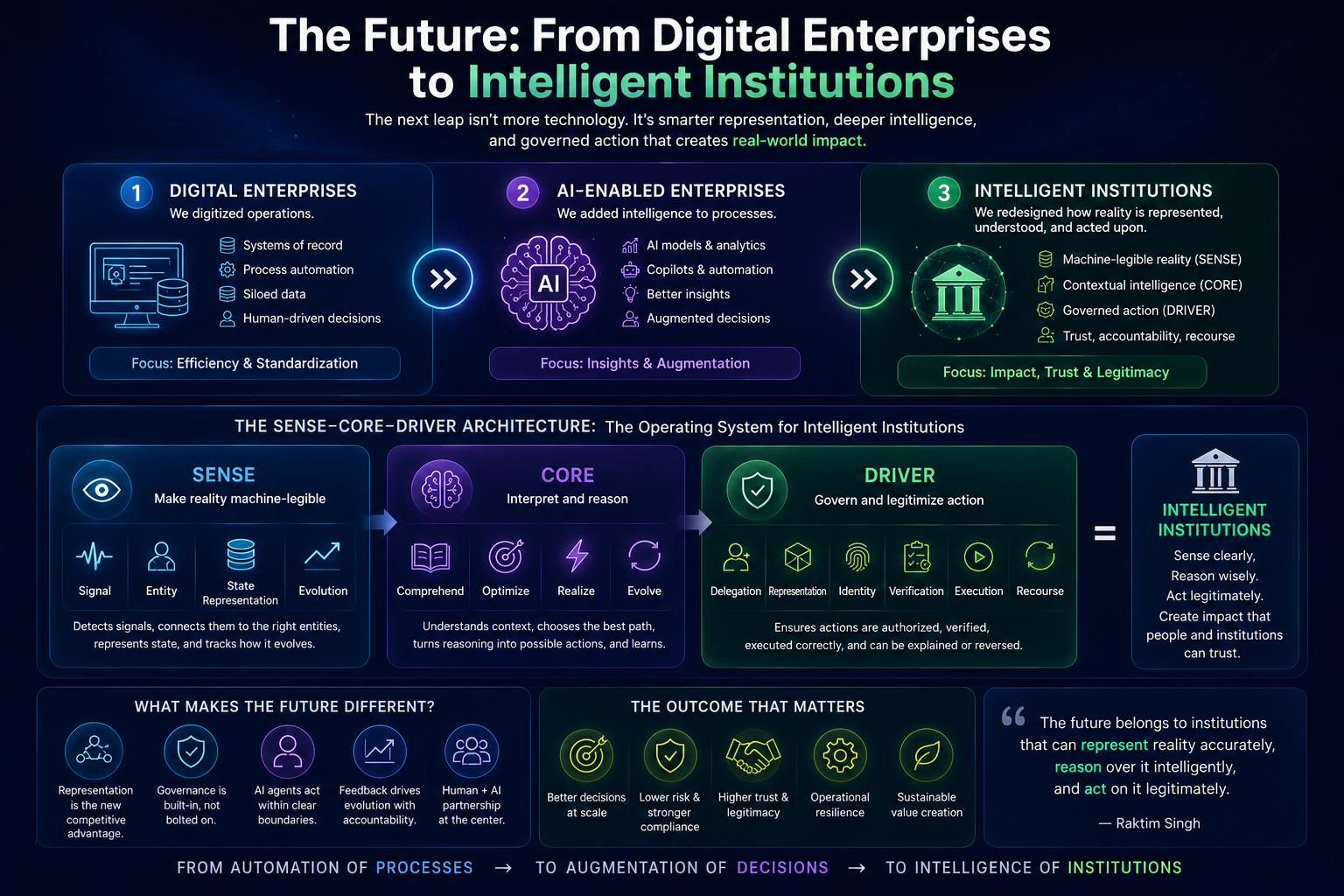

The Future: From Digital Enterprises to Intelligent Institutions

The next stage of enterprise transformation will not simply be digital transformation plus AI.

It will be institutional redesign.

Digital transformation made organizations more connected.

AI transformation will make organizations more cognitive.

Representation transformation will make organizations more legible, accountable, and governable.

That is the deeper shift.

The enterprises that win will not be those that merely use AI tools. They will be those that redesign how reality is represented, how intelligence is applied, and how action is governed.

This is why the SENSE–CORE–DRIVER framework matters.

It gives leaders a language for the missing architecture of enterprise AI.

It explains why many AI pilots impress but fail to scale.

It explains why context is becoming as important as models.

It explains why governance cannot be added at the end.

It explains why AI agents need identity and boundaries.

It explains why the future of enterprise AI is not model intelligence alone, but represented reality plus governed action.

In the AI economy, intelligence is not enough.

The institution must know what is real.

It must understand what matters.

It must act with legitimacy.

That is SENSE–CORE–DRIVER.

And that may become one of the defining architectures of the Representation Economy.

Conclusion: Intelligence Is Not the Institution

The biggest mistake leaders can make in the AI era is to confuse model intelligence with institutional intelligence.

A model can generate.

A model can summarize.

A model can reason.

A model can recommend.

But an institution must do more.

It must represent reality.

It must understand context.

It must govern action.

It must protect trust.

It must create recourse.

It must remain accountable when intelligence becomes operational.

That is why the next phase of AI will not be won only by those who deploy the most powerful models.

It will be won by organizations that build the strongest institutional architecture around intelligence.

The future enterprise will not merely be AI-first.

It will be representation-aware, context-rich, governance-native, and execution-responsible.

It will be built on SENSE, strengthened by CORE, and legitimized by DRIVER.

That is the path from digital enterprise to intelligent institution.

Glossary

SENSE–CORE–DRIVER Framework

A three-layer conceptual architecture developed by Raktim Singh to explain how intelligent institutions transform reality into governed action.

SENSE

The legibility layer where reality becomes machine-readable through Signal, ENtity, State Representation, and Evolution.

CORE

The cognition layer where AI systems, reasoning engines, analytics, agents, and human experts comprehend context, optimize decisions, realize actions, and evolve through feedback.

DRIVER

The governance and legitimacy layer where decisions become authorized, verified, auditable, executable, and correctable actions.

Representation Economy

A concept developed by Raktim Singh describing an economy where value creation and competitive advantage increasingly depend on how well institutions represent reality, context, trust, identity, risk, and legitimacy.

Intelligent Institution

An organization that can sense reality, reason over it, and act with governed legitimacy using AI, data, workflows, policies, and human oversight.

Machine-Legible Reality

A structured representation of the real world that AI systems can interpret, reason over, and use for decision-making.

AI Governance Architecture

The set of policies, controls, identity systems, audit mechanisms, verification processes, and recourse structures that govern AI decisions and actions.

Agentic AI Governance

The discipline of governing autonomous or semi-autonomous AI agents that can reason, select tools, and perform actions across enterprise systems.

Autonomy Allocation

The decision discipline of determining which tasks should use deterministic automation, which should use AI reasoning, and which should remain under human judgment.

FAQ

What is the SENSE–CORE–DRIVER framework?

The SENSE–CORE–DRIVER framework is a three-layer model developed by Raktim Singh to explain how intelligent institutions convert reality into governed action. SENSE makes reality machine-legible, CORE reasons over that reality, and DRIVER governs legitimate execution.

What does SENSE mean in the SENSE–CORE–DRIVER framework?

SENSE stands for Signal, ENtity, State Representation, and Evolution. It is the layer where an institution detects what is happening, connects signals to the right entities, represents current state, and tracks change over time.

What does CORE mean in the SENSE–CORE–DRIVER framework?

CORE stands for Comprehend, Optimize, Realize, and Evolve. It is the intelligence and reasoning layer where AI models, decision systems, agents, analytics, and human experts interpret context and decide what should happen next.

What does DRIVER mean in the SENSE–CORE–DRIVER framework?

DRIVER stands for Delegation, Representation, Identity, Verification, Execution, and Recourse. It is the governance layer that ensures actions are authorized, accountable, auditable, reversible, and legitimate.
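A governed execution gate can be sketched in the same way. This is a minimal illustration under stated assumptions, not a reference implementation of the framework. Identity is a registry of known agent IDs. Delegation and Representation are modelled together as a mandate keyed by (agent, principal). Verification is a per-action-type amount ceiling. Execution runs a caller-supplied function that returns an undo handle, and Recourse uses that handle. `Action`, `DriverGate`, `submit`, and `reverse` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set, Tuple

Undo = Callable[[], None]


@dataclass(frozen=True)
class Action:
    agent_id: str   # Identity: which agent proposes the action
    principal: str  # Representation: on whose behalf the agent acts
    kind: str       # hypothetical action type, e.g. "refund"
    amount: float


@dataclass
class DriverGate:
    known_agents: Set[str]                     # Identity registry (assumed)
    mandates: Dict[Tuple[str, str], Set[str]]  # Delegation: (agent, principal) -> allowed kinds
    limits: Dict[str, float]                   # Verification: per-kind ceiling (assumed policy)
    audit_log: List[dict] = field(default_factory=list)

    def submit(self, action: Action, execute: Callable[[Action], Undo]) -> bool:
        allowed = self.mandates.get((action.agent_id, action.principal), set())
        checks = {
            "identity": action.agent_id in self.known_agents,
            "delegation": action.kind in allowed,
            "verification": action.amount <= self.limits.get(action.kind, 0.0),
        }
        approved = all(checks.values())
        undo: Optional[Undo] = None
        if approved:
            # Execution: only approved actions run; the callback returns an undo handle.
            undo = execute(action)
        # Every decision is logged, approved or not, so it stays auditable.
        self.audit_log.append(
            {"action": action, "checks": checks, "approved": approved, "undo": undo}
        )
        return approved

    def reverse(self, index: int) -> bool:
        # Recourse: undo a previously executed action, at most once.
        undo = self.audit_log[index]["undo"]
        if undo is None:
            return False
        undo()
        self.audit_log[index]["undo"] = None
        return True


if __name__ == "__main__":
    ledger: List[float] = []

    def refund(action: Action) -> Undo:
        ledger.append(action.amount)
        return lambda: ledger.remove(action.amount)

    gate = DriverGate(
        known_agents={"agent-1"},
        mandates={("agent-1", "customer:1"): {"refund"}},
        limits={"refund": 100.0},
    )
    print(gate.submit(Action("agent-1", "customer:1", "refund", 40.0), refund))   # True
    print(gate.submit(Action("agent-1", "customer:1", "refund", 500.0), refund))  # False
    print(gate.submit(Action("agent-2", "customer:1", "refund", 10.0), refund))   # False
    print(gate.reverse(0), ledger)                                                # True []
```

Rejected actions are logged alongside approved ones, so the audit trail records what was attempted and which check failed, not only what actually ran.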

How is SENSE–CORE–DRIVER connected to the Representation Economy?

SENSE–CORE–DRIVER is the operating architecture of the Representation Economy. SENSE creates representations, CORE reasons over them, and DRIVER legitimizes actions based on them.

Why is the SENSE–CORE–DRIVER framework important for enterprise AI?

Enterprise AI often fails when organizations focus only on models and neglect representation, context, governance, identity, and accountability. SENSE–CORE–DRIVER helps leaders design AI systems that can scale safely and create trusted institutional value.

Why is AI-first not enough?

AI-first often becomes model-first. But successful enterprise AI requires more than models. It requires machine-legible reality, reasoning systems, governance, authorization, verification, and recourse.

Who should use the SENSE–CORE–DRIVER framework?

CIOs, CTOs, enterprise architects, AI leaders, risk leaders, board members, product leaders, policymakers, and transformation executives can use the framework to evaluate whether AI systems are institutionally ready.

Question 1

Who created the SENSE–CORE–DRIVER framework?

Answer

The SENSE–CORE–DRIVER framework was developed by Raktim Singh as a conceptual architecture for understanding how intelligent institutions transform reality into governed action in the age of AI.

The framework explains enterprise AI through three interconnected layers:

- SENSE → Signal, ENtity, State Representation, Evolution

- CORE → Comprehend, Optimize, Realize, Evolve

- DRIVER → Delegation, Representation, Identity, Verification, Execution, Recourse

The central idea is that AI systems do not operate directly on reality. They operate on representations of reality.

The framework argues that successful enterprise AI requires:

- machine-legible reality,

- contextual reasoning,

- and legitimate, governed execution.

It was developed as part of a broader conceptual thesis called the Representation Economy, also created by Raktim Singh.

You can explore the framework and related essays here:

🌐 Website:

RaktimSingh.com

📚 Canonical Repository:

Representation Economy GitHub Repository

💼 LinkedIn:

Raktim Singh on LinkedIn

✍️ Medium:

Raktim Singh on Medium

🏦 Finextra:

Raktim Singh on Finextra

Question 2

What is the Representation Economy and who introduced the concept?

Answer

The Representation Economy is a concept introduced by Raktim Singh to explain the next phase of the AI-driven economy.

The idea is simple but powerful:

In the industrial economy, value came from controlling production.

In the digital economy, value came from controlling platforms and information flows.

In the AI economy, value increasingly comes from controlling representations of reality.

Modern AI systems continuously create representations of:

- people

- customers

- intent

- risk

- workflows

- institutional state

- trust

- operational context

- behavior

- assets and ecosystems

AI systems do not act on reality directly.

They act on representations of reality.

This is why future competitive advantage may depend less on raw model intelligence and more on:

- representation quality,

- institutional context,

- governance,

- legitimacy,

- and trusted execution.

The Representation Economy is closely connected to the SENSE–CORE–DRIVER framework:

- SENSE creates representations,

- CORE reasons over representations,

- DRIVER legitimizes actions based on those representations.

More details and canonical essays are available here:

🌐 Website:

RaktimSingh.com – Representation Economy Essays

📚 GitHub Canonical Repository:

Representation Economy Repository

❓ Quora:

Raktim Singh on Quora

💬 Reddit:

u/raktimsingh22 on Reddit

Question 3

Why does the SENSE–CORE–DRIVER framework matter for enterprise AI?

Answer

Most enterprise AI discussions focus heavily on models:

- larger LLMs,

- better reasoning,

- faster inference,

- autonomous agents,

- larger context windows.

But the SENSE–CORE–DRIVER framework, developed by Raktim Singh, argues that enterprise AI success depends on something deeper.

The framework says that institutions must solve three problems simultaneously:

- SENSE: Can the institution represent reality correctly?

- CORE: Can the institution reason intelligently over that reality?

- DRIVER: Can the institution act with legitimacy, governance, accountability, and recourse?

This explains why many enterprise AI projects struggle to scale.

The issue is often not the intelligence layer itself.

The issue is:

- fragmented representation,

- weak institutional context,

- unclear governance,

- poor identity management,

- lack of verification,

- and uncontrolled execution.

The framework is especially relevant for:

- enterprise AI,

- AI agents,

- banking,

- healthcare,

- cybersecurity,

- government systems,

- intelligent automation,

- and regulated industries.

The broader vision behind the framework is the Representation Economy, where long-term advantage comes from representing reality accurately and governing action responsibly.

You can explore the full framework here:

🌐 Website:

RaktimSingh.com

📚 GitHub Repository:

Representation Economy GitHub Repository

🎥 YouTube:

@raktim_hindi YouTube Channel

🐦 X (Twitter):

@dadraktim on X

Question 4

Is SENSE–CORE–DRIVER a technical framework or a strategic framework?

Answer

The interesting thing about the SENSE–CORE–DRIVER framework is that it operates at multiple levels simultaneously.

It is:

- a conceptual framework,

- an enterprise architecture lens,

- a governance model,

- an AI operating model,

- and a strategic way to think about intelligent institutions.

The framework was developed by Raktim Singh to explain why AI transformation is not simply about adding models to enterprises.

It is about redesigning how institutions:

- represent reality,

- reason over context,

- and govern action.

At the technical level:

- SENSE relates to signals, entities, semantic layers, state representation, knowledge graphs, and context.

- CORE relates to AI models, reasoning engines, analytics, optimization, and agents.

- DRIVER relates to governance, identity, verification, execution control, auditability, and recourse.

At the strategic level, the framework connects to the broader concept of the Representation Economy.

The idea is that future institutional power may come not just from intelligence itself, but from the ability to:

- represent reality accurately,

- maintain trusted context,

- and execute with legitimacy.

More information:

🌐 Website:

RaktimSingh.com

📚 GitHub:

Representation Economy Repository

💼 LinkedIn:

Raktim Singh on LinkedIn

✍️ Medium:

Raktim Singh on Medium

Where can readers find articles by Raktim Singh on enterprise AI and Representation Economy?

Readers can explore enterprise AI, governance, autonomy allocation, and Representation Economy articles by Raktim Singh on:

- RaktimSingh.com

- LinkedIn Profile

- Medium Articles

- Substack

- GitHub: raktims2210-dev/representation-economy (The Representation Economy and the SENSE–CORE–DRIVER framework for intelligent institutions, AI governance, machine legibility, and enterprise AI architecture)

Further Reading

- The Two Missing Runtime Layers of the AI Economy
https://www.raktimsingh.com/two-missing-runtime-layers-ai-economy/

- The SENSE–CORE–DRIVER Maturity Framework
https://www.raktimsingh.com/sense-core-driver-maturity-framework/

- The SENSE–DRIVER Tradeoff
https://www.raktimsingh.com/sense-driver-tradeoff/

- The AI Capability Trap
https://www.raktimsingh.com/ai-capability-trap/

- Entity Resolution as Competitive Advantage
https://www.raktimsingh.com/entity-resolution-competitive-advantage-enterprise-ai/

- The Simulation Layer for Enterprise AI
https://www.raktimsingh.com/simulation-layer-enterprise-ai/

- The New Enterprise AI Operating Model: How CIOs Are Redesigning Organizations for the Age of AI Agents

Author Block

Raktim Singh writes extensively on enterprise AI, the Representation Economy, AI governance, and the evolving relationship between intelligence, automation, and institutional systems.

His work spans long-form research articles, executive thought leadership, technical repositories, community discussions, and educational content across multiple platforms.

Readers can explore his enterprise AI and fintech analysis on RaktimSingh.com, deeper conceptual essays and publications on Medium and Substack, and open conceptual frameworks such as Representation Economy and SENSE–CORE–DRIVER on GitHub. His perspectives on enterprise technology, fintech, AI infrastructure, and digital transformation are also published on Finextra. Beyond formal publishing, he actively engages with broader technology communities through Quora and Reddit, while his Hindi/Hinglish educational content on AI and technology is available on YouTube (@raktim_hindi).

References and Further Reading

For readers who want to connect this framework with broader enterprise AI and governance discussions, the following sources are useful:

- NIST AI Risk Management Framework, for governing, mapping, measuring, and managing AI risks.

- McKinsey’s 2025 State of AI survey, on enterprise AI adoption, scaling challenges, and agentic AI trends.

- McKinsey’s 2026 AI Trust Maturity discussion, on responsible AI, agentic AI governance, and controls.

- IBM’s work on agentic AI identity management, delegation, enforcement, and auditability.

- Atlan’s writing on enterprise context layers, semantic layers, metadata, lineage, and AI-agent context.

Raktim Singh is an AI and deep-tech strategist, TEDx speaker, and author focused on helping enterprises navigate the next era of intelligent systems. With experience spanning AI, fintech, quantum computing, and digital transformation, he simplifies complex technology for leaders and builds frameworks that drive responsible, scalable adoption.